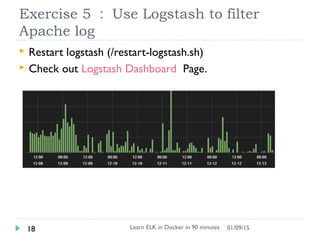

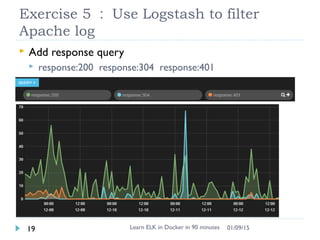

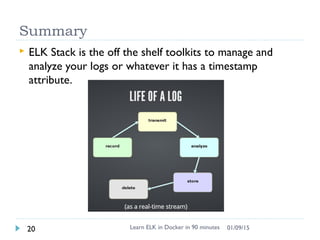

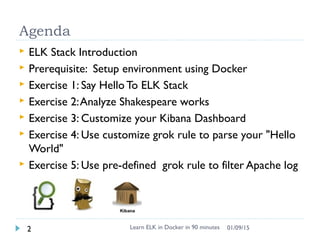

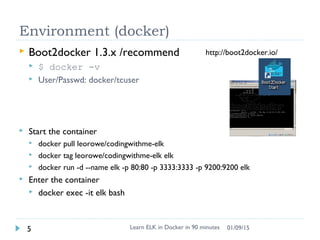

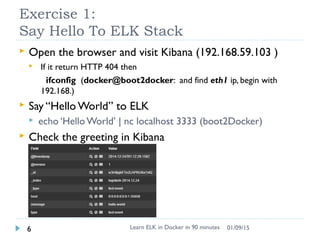

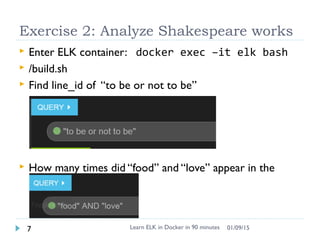

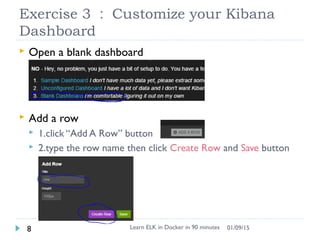

This document outlines exercises for learning the ELK stack in Docker. The stack combines Elasticsearch for data storage, Logstash for collecting and parsing logs, and Kibana for visualization. The exercises cover setting up the environment, sending a test message to ELK, analyzing Shakespeare's works, customizing the Kibana dashboard, parsing logs with grok filters, and filtering Apache logs with Logstash.

![Learn ELK in Docker, slide 11](https://image.slidesharecdn.com/learnelkindocker-150109005647-conversion-gate02/85/Learn-ELK-in-docker-11-320.jpg)

Exercise 4: Use a custom grok filter to parse your "Hello World"

Add a grok filter to /logstash.conf:

    input { tcp { port => 3333 type => "text event" } }
    filter {
      grok { match => ['message', '%{WORD:greetings}%{SPACE}%{WORD:name}'] }
    }
    output { elasticsearch { host => localhost } }

Learn ELK in Docker in 90 minutes, slide 11, 01/09/15
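To see what the grok expression in Exercise 4 extracts, here is a minimal Python sketch that expands the same expression into a regular expression and applies it to "Hello World". It is not Logstash code: `GROK_PATTERNS`, `grok_to_regex`, and `parse` are hypothetical names, and the `WORD` and `SPACE` definitions are assumed to mirror the stock grok patterns (`\b\w+\b` and `\s*`).

```python
import re

# Assumed stand-ins for the two stock grok patterns used on the slide.
GROK_PATTERNS = {
    "WORD": r"\b\w+\b",
    "SPACE": r"\s*",
}

# The expression from the slide's grok filter.
EXPRESSION = "%{WORD:greetings}%{SPACE}%{WORD:name}"


def grok_to_regex(expression):
    """Expand %{NAME} and %{NAME:field} references into a compiled regex."""
    def expand(match):
        pattern = GROK_PATTERNS[match.group(1)]
        field = match.group(2)
        # A named reference becomes a named group; an unnamed one is not captured.
        return f"(?P<{field}>{pattern})" if field else f"(?:{pattern})"

    return re.compile(re.sub(r"%\{(\w+)(?::(\w+))?\}", expand, expression))


def parse(expression, message):
    """Return the captured fields as a dict, or None when nothing matches."""
    found = grok_to_regex(expression).search(message)
    return found.groupdict() if found else None


if __name__ == "__main__":
    print(parse(EXPRESSION, "Hello World"))
```

With this sketch, "Hello World" yields a `greetings` field and a `name` field, which is what the filter should add to the event before it reaches Elasticsearch. A message with a single word does not match, since the expression needs two separate words.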

![Learn ELK in Docker, slide 17](https://image.slidesharecdn.com/learnelkindocker-150109005647-conversion-gate02/85/Learn-ELK-in-docker-17-320.jpg)

Add a filter to deal with Apache logs:

    filter {
      if [type] == 'apache-log' {
        grok {
          match => ['message', '%{COMMONAPACHELOG:message}']
        }
        date {
          match => ['timestamp', 'dd/MMM/yyyy:HH:mm:ss Z']
        }
        mutate {
          convert => { "response" => "integer" }
          convert => { "bytes" => "integer" }
        }
      }
    }
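The three stages of the Apache filter (grok, date, mutate) can be illustrated with a rough Python sketch. This is an approximation under stated assumptions, not Logstash itself: `COMMON_LOG` is a simplified regex standing in for the `COMMONAPACHELOG` pattern, `process` and `SAMPLE` are hypothetical names, and the sample line is the well-known example from the Apache documentation.

```python
import re
from datetime import datetime

# Simplified approximation of COMMONAPACHELOG (assumption, not the real pattern).
COMMON_LOG = re.compile(
    r'(?P<clientip>\S+) (?P<ident>\S+) (?P<auth>\S+) '
    r'\[(?P<timestamp>[^\]]+)\] '
    r'"(?P<verb>\w+) (?P<request>\S+)(?: HTTP/(?P<httpversion>[\d.]+))?" '
    r'(?P<response>\d{3}) (?:(?P<bytes>\d+)|-)'
)

SAMPLE = ('127.0.0.1 - frank [10/Oct/2000:13:55:36 -0700] '
          '"GET /apache_pb.gif HTTP/1.0" 200 2326')


def process(event):
    """Mimic the grok, date, and mutate stages for events of type 'apache-log'."""
    if event.get("type") != "apache-log":
        return event  # the conditional in the filter skips other event types

    found = COMMON_LOG.match(event["message"])
    if found is None:
        # Logstash tags unparsed events this way; existing tags are ignored here.
        return {**event, "tags": ["_grokparsefailure"]}

    out = dict(event)
    # grok stage: add every captured field; a "-" byte count leaves no field.
    out.update({k: v for k, v in found.groupdict().items() if v is not None})
    # date stage: 'dd/MMM/yyyy:HH:mm:ss Z' corresponds to this strptime format.
    out["@timestamp"] = datetime.strptime(out["timestamp"], "%d/%b/%Y:%H:%M:%S %z")
    # mutate stage: convert the numeric fields from strings to integers.
    for field in ("response", "bytes"):
        if field in out:
            out[field] = int(out[field])
    return out


if __name__ == "__main__":
    print(process({"type": "apache-log", "message": SAMPLE}))
```

Converting `response` and `bytes` to integers matters because Elasticsearch would otherwise index them as strings, which rules out numeric aggregations such as sums or range queries in Kibana.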