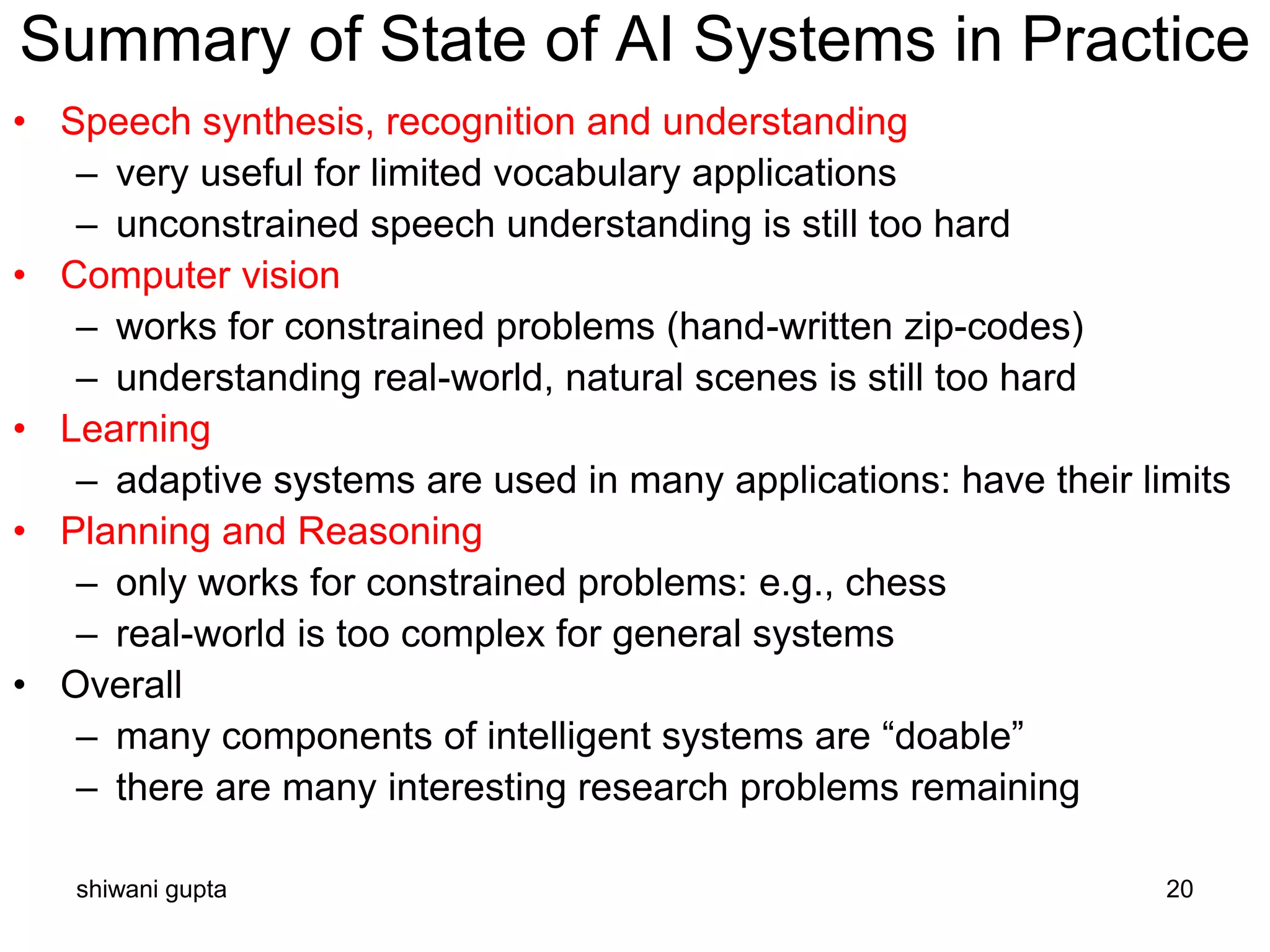

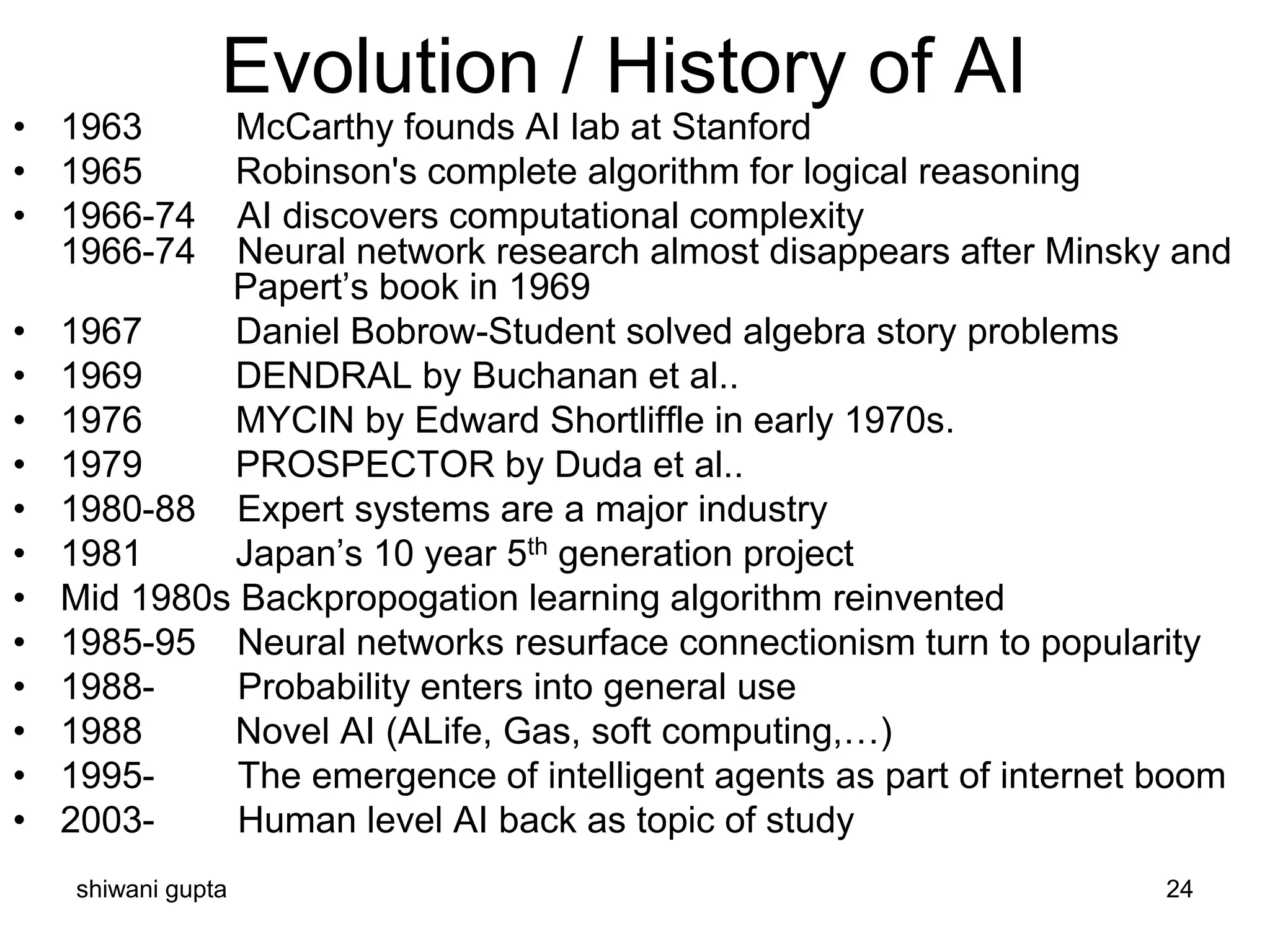

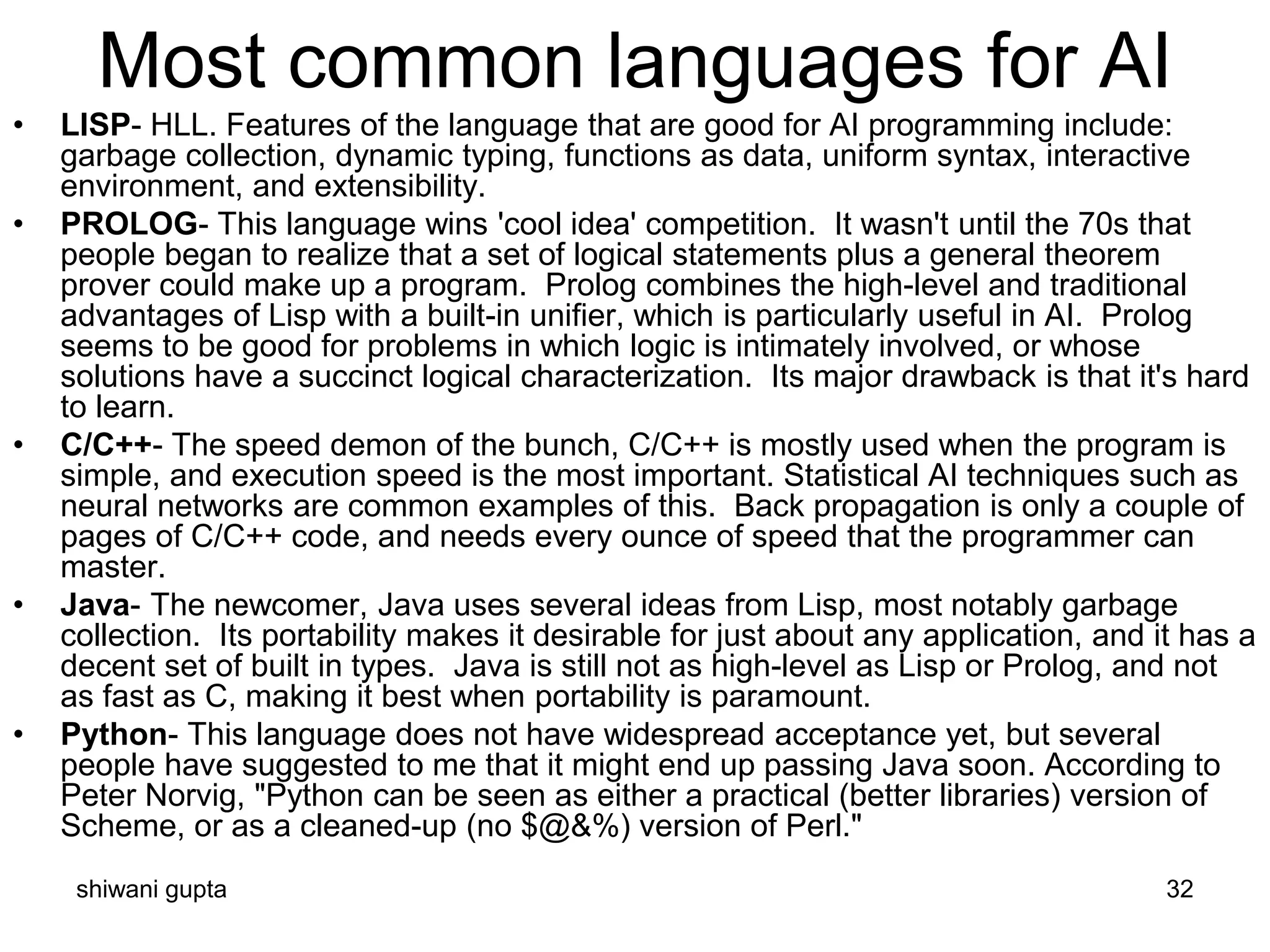

The document outlines the objectives, course outcomes, and learning outcomes of a course on artificial intelligence. The objectives are to conceptualize the ideas and techniques behind intelligent systems, to understand the mechanisms of intelligent thought and action, and to understand advanced representation and search techniques. The course outcomes are to develop an understanding of the building blocks of AI, to choose appropriate problem-solving methods, to analyze the strengths and weaknesses of AI approaches, and to design models for reasoning under uncertainty. The learning outcomes cover knowledge, intellectual skills, practical skills, and transferable skills in artificial intelligence.

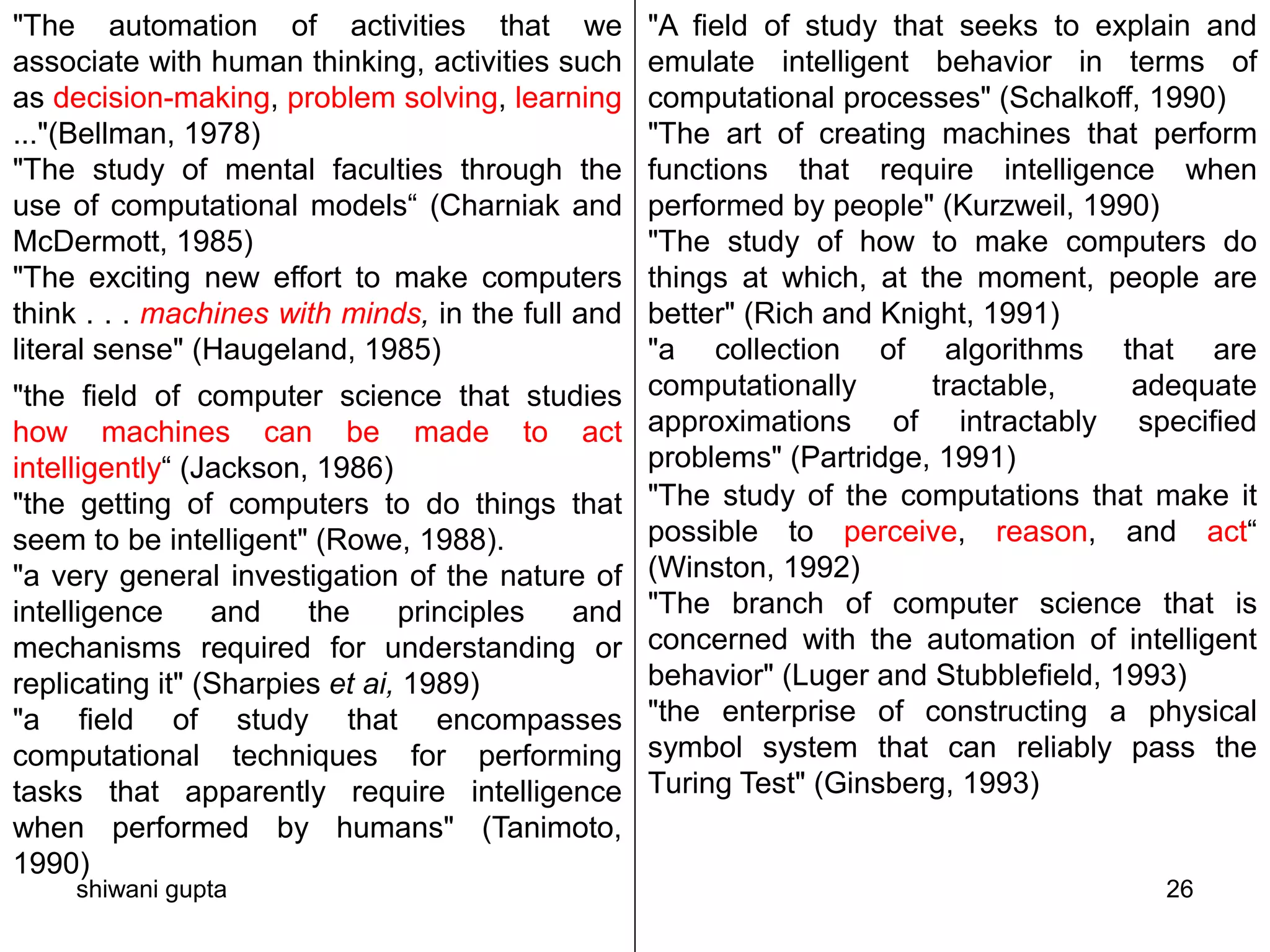

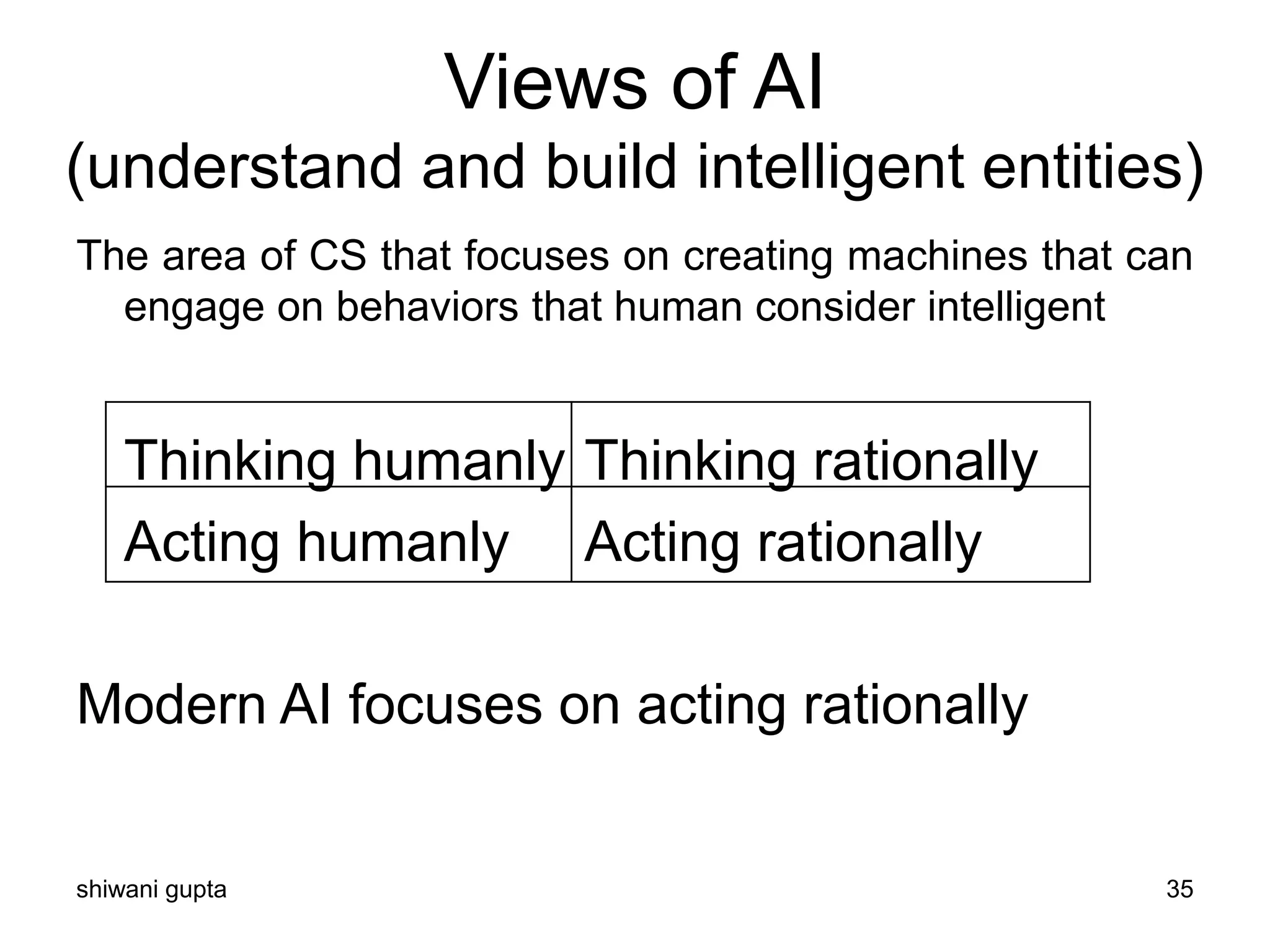

![Systems that think like humans: cognitive modeling](https://image.slidesharecdn.com/introductiontoai-200319111425/75/Introduction-to-ai-36-2048.jpg)

- Humans as observed from ‘inside’
- How do we know how humans think?
  - Introspection vs. psychological experiments
- Cognitive Science
- “The exciting new effort to make computers think … machines with minds in the full and literal sense” (Haugeland)
- “[The automation of] activities that we associate with human thinking, activities such as decision-making, problem solving, learning …” (Bellman)