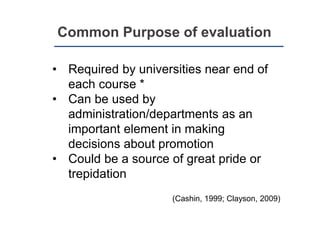

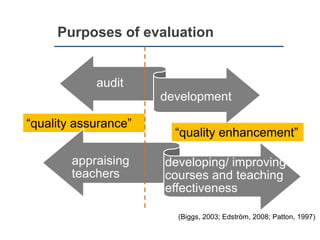

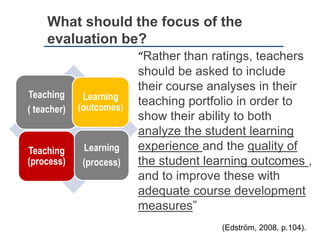

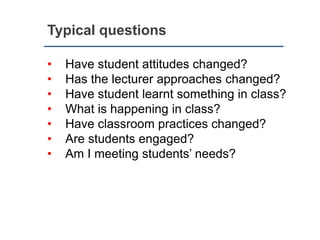

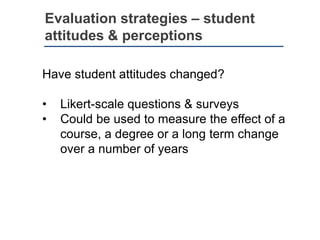

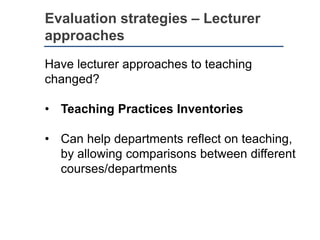

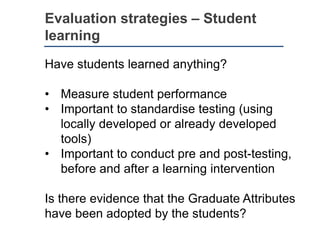

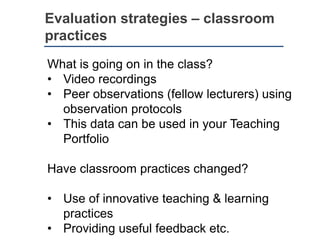

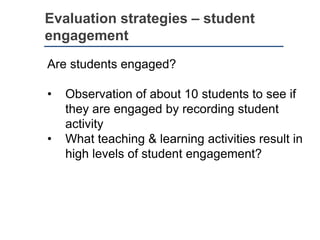

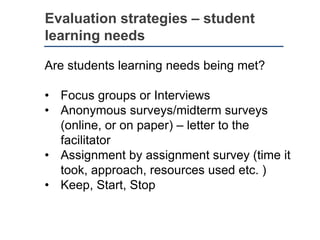

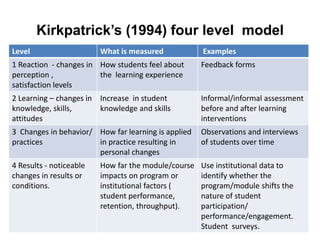

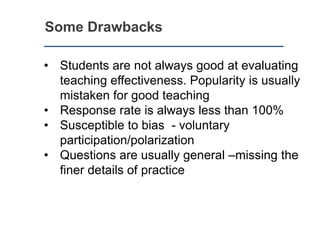

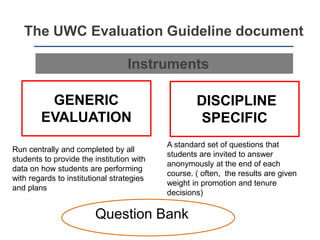

This document discusses evaluating coursework from a student perspective. It outlines common purposes of evaluation, such as quality assurance and improving teaching, and argues that evaluation should focus on both the teaching (and teacher) and the learning (and its outcomes). Strategies mentioned include surveys, observations, interviews, and pre-/post-testing. Drawbacks include low response rates and the fact that students do not always assess teaching accurately. The document advocates combining quantitative and qualitative methods, such as those in Kirkpatrick's four-level model, to gain a holistic view of a course's effectiveness and of opportunities for improvement in both student learning and teaching practice.