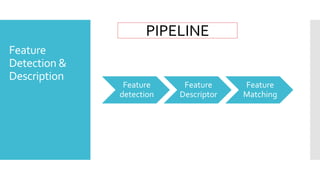

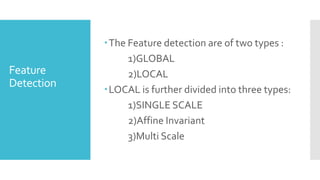

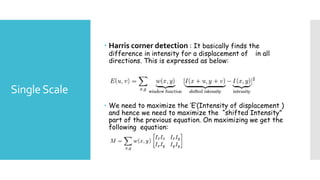

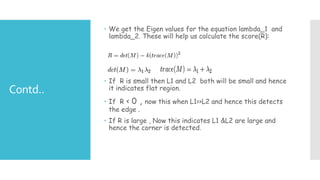

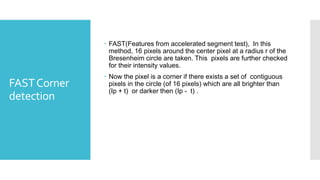

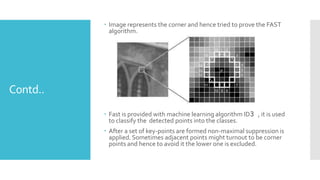

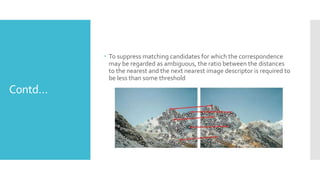

The document covers image feature detection, description, and matching. It contrasts global and local feature representations, then describes Harris corner detection, SIFT, SURF, and LBP, each of which identifies or describes image features by a different mechanism. It also covers feature matching strategies, including brute-force and FLANN-based matching, which establish correspondences between features extracted from different images.
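
To make the brute-force matching idea concrete, here is a minimal sketch in plain Python. It assumes descriptors are already available as equal-length lists of floats and compares them with Euclidean distance; the function name `brute_force_match` and the toy descriptor values are hypothetical and chosen only for illustration, not taken from the document.

```python
import math


def brute_force_match(query, train):
    """Match each query descriptor to its nearest train descriptor.

    Every query descriptor is compared against every train descriptor
    (exhaustive search), using Euclidean distance as the similarity measure.
    Returns a list of (query_index, train_index, distance) tuples.
    """
    matches = []
    for qi, q in enumerate(query):
        best_index, best_dist = -1, math.inf
        for ti, t in enumerate(train):
            dist = math.dist(q, t)  # Euclidean distance (Python 3.8+)
            if dist < best_dist:
                best_index, best_dist = ti, dist
        matches.append((qi, best_index, best_dist))
    return matches


# Toy 2-D descriptors (illustrative values, not real SIFT/SURF output).
query = [[0.0, 0.0], [1.0, 1.0]]
train = [[0.9, 1.1], [0.1, -0.1], [5.0, 5.0]]

matches = brute_force_match(query, train)
for qi, ti, dist in matches:
    print(f"query {qi} -> train {ti} (distance {dist:.3f})")
```

The cost grows with the product of the two descriptor counts, which is why approximate nearest-neighbour methods such as FLANN are typically preferred for large descriptor sets: they replace the exhaustive inner loop with an index lookup.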