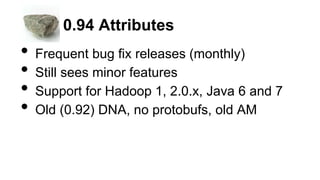

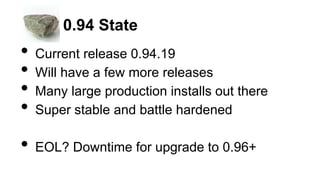

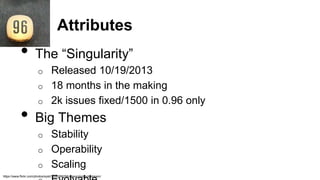

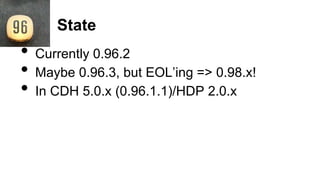

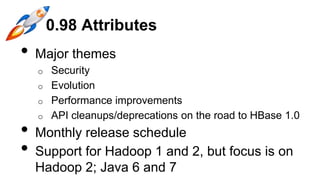

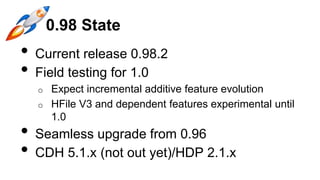

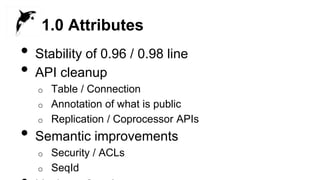

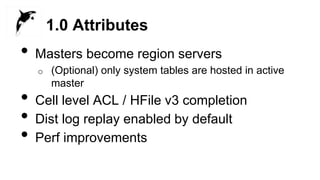

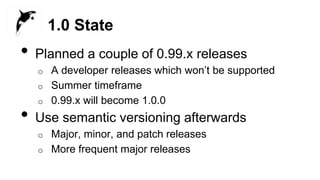

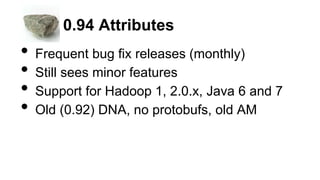

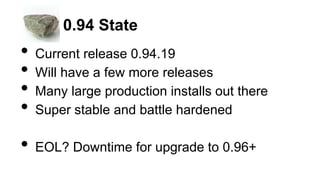

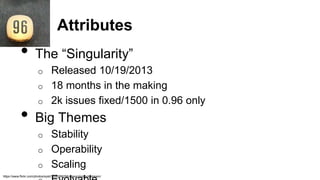

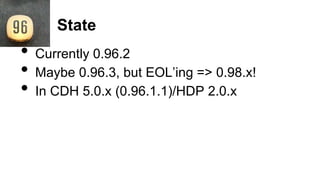

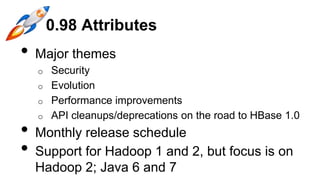

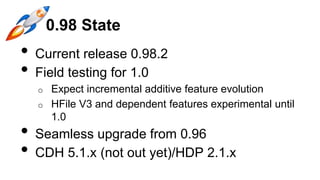

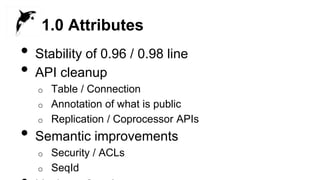

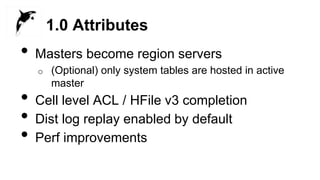

The document outlines the state of the HBase release branches. Version 0.94 is characterized by frequent bug-fix releases and stability, while security and performance improvements are the major themes of versions 0.98 and 1.0. It also details the anticipated end-of-life transitions and the planned release schedule for upcoming versions, with an emphasis on user upgrade paths.