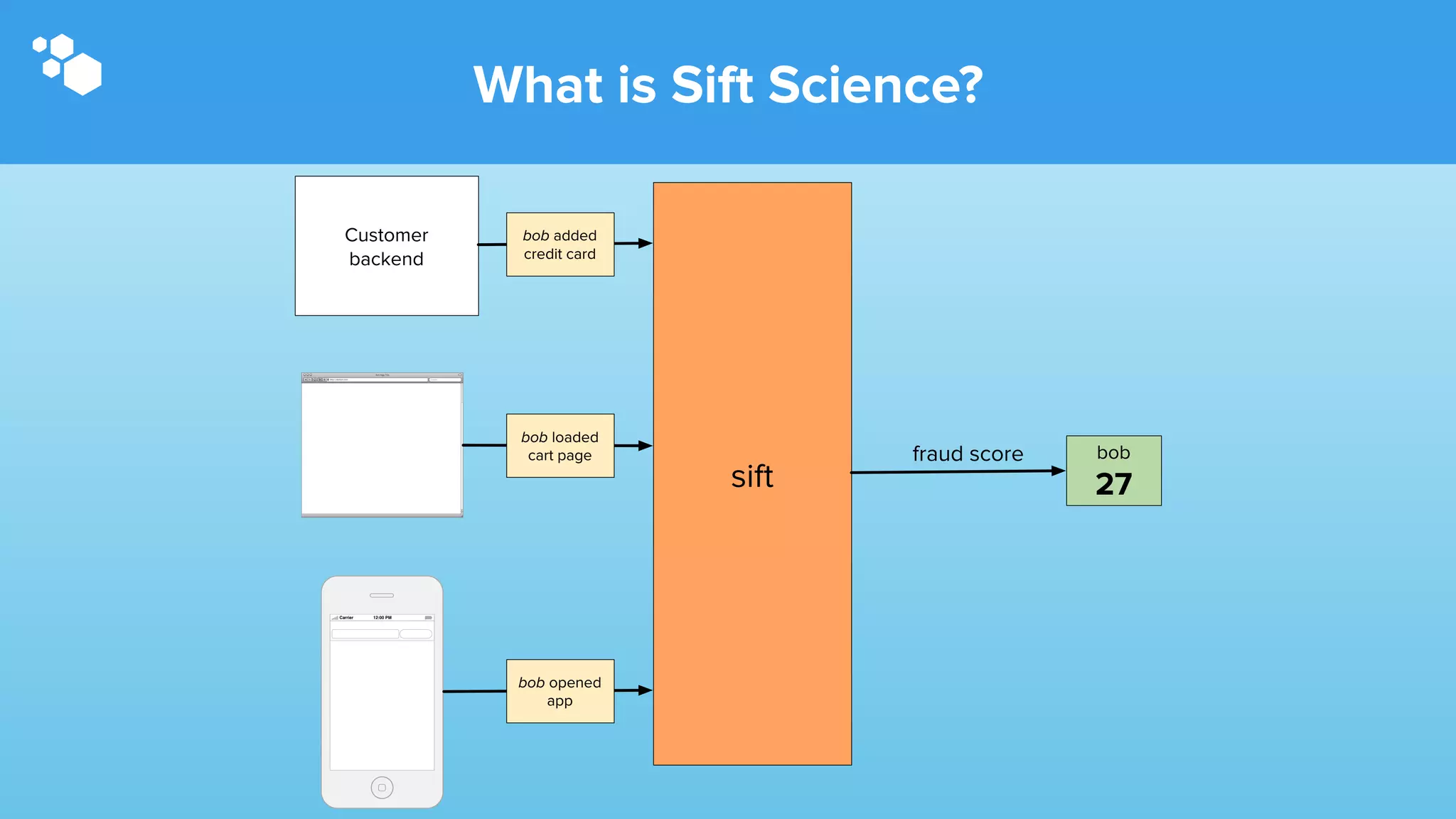

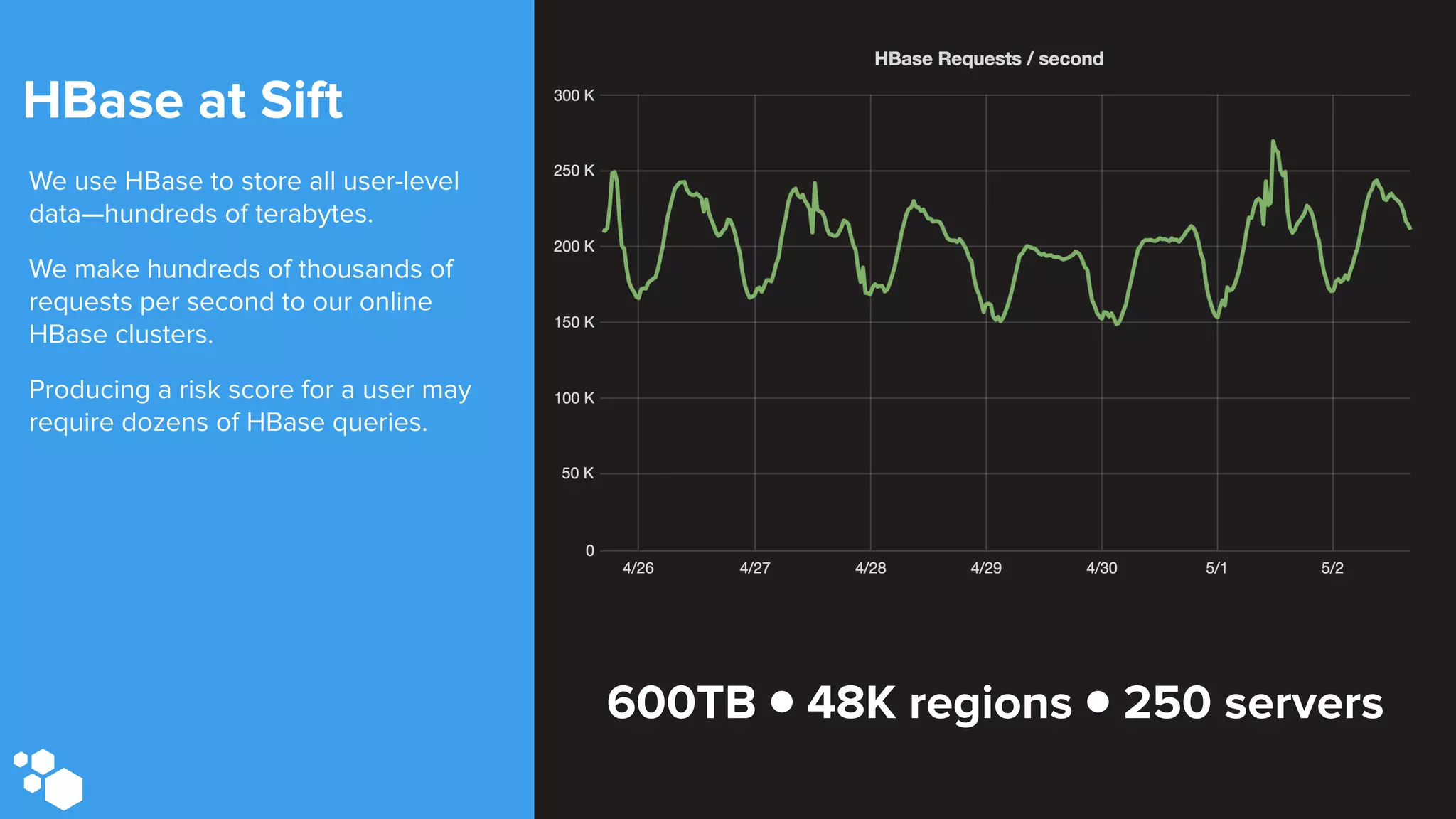

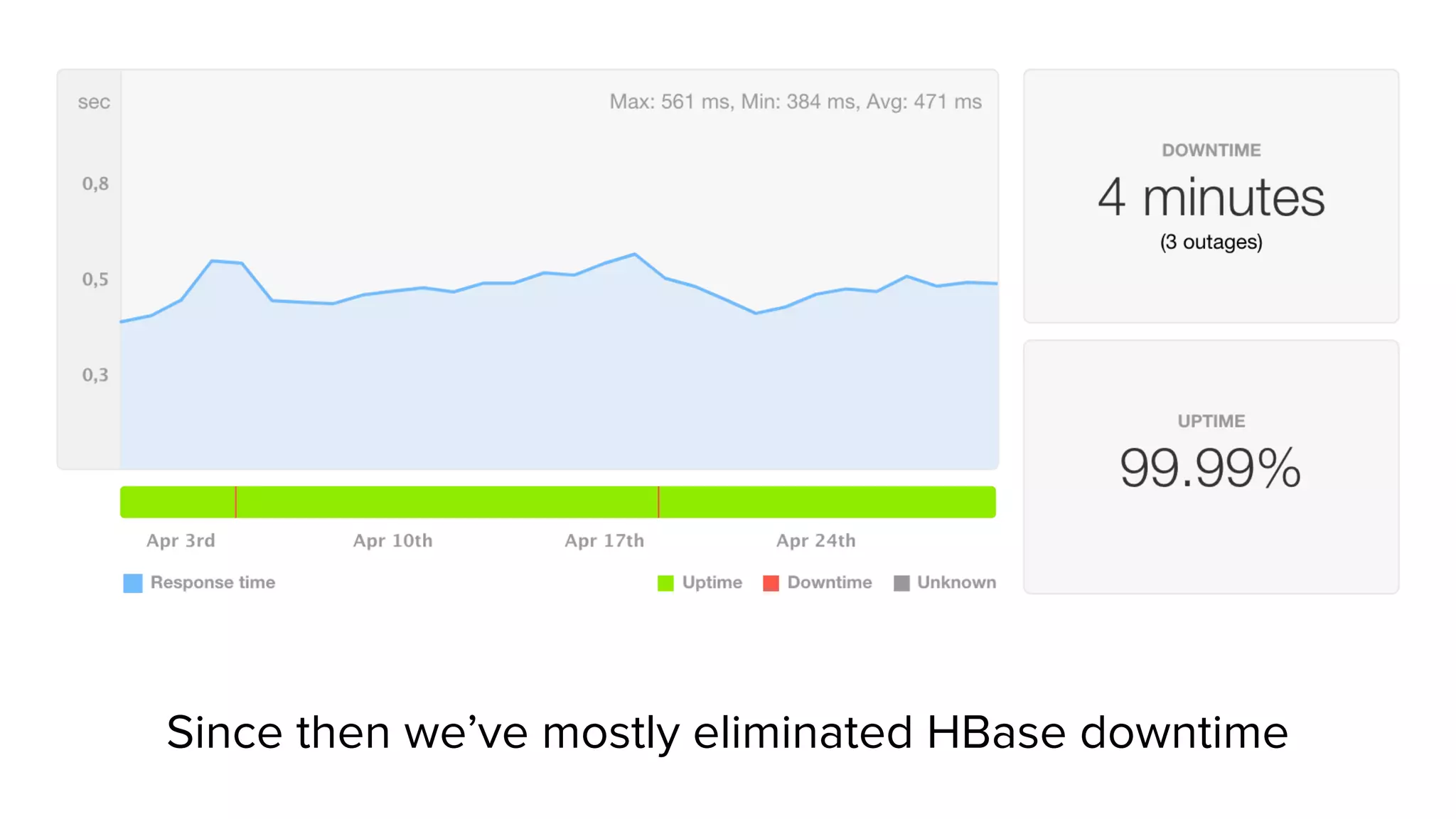

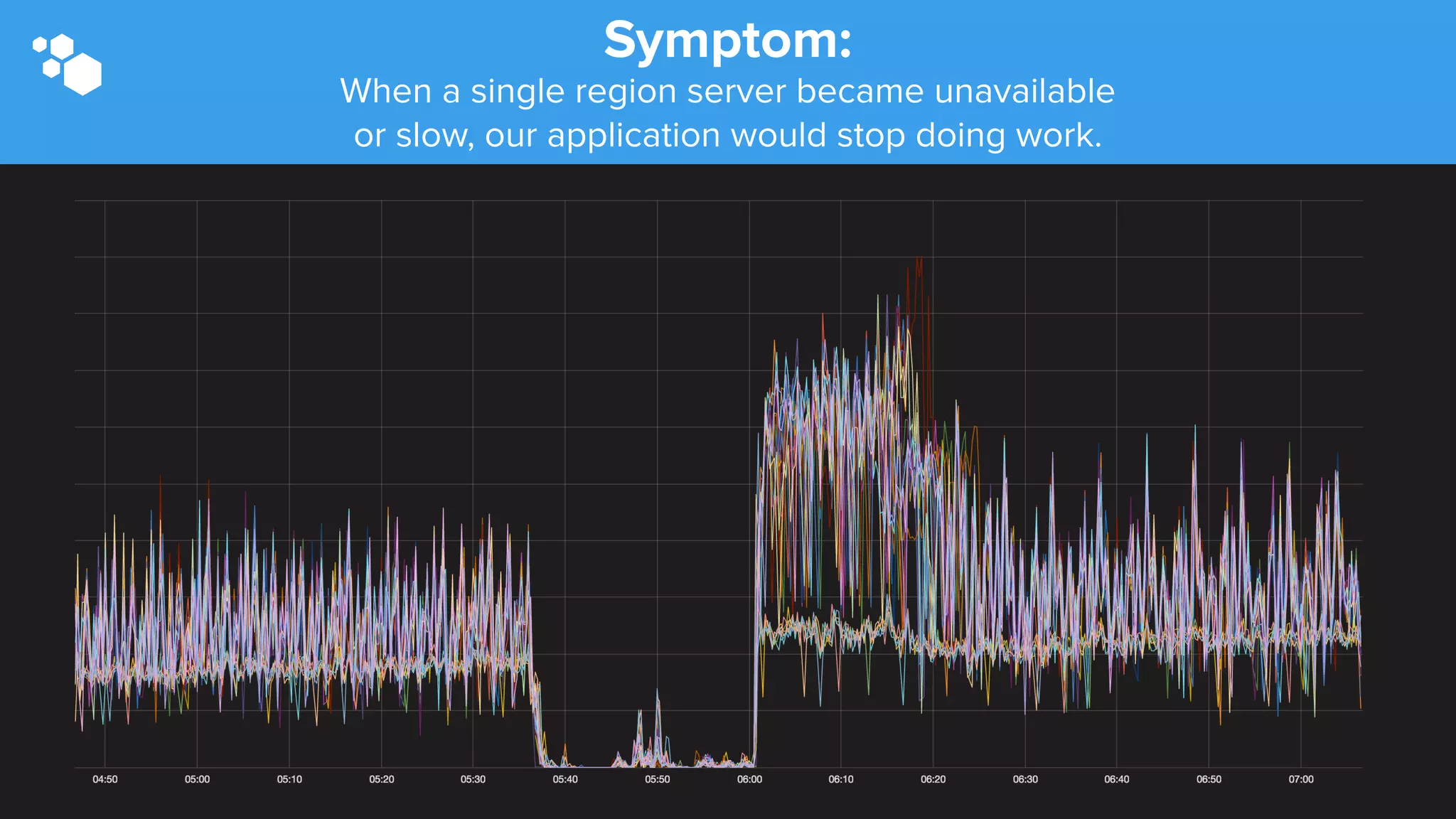

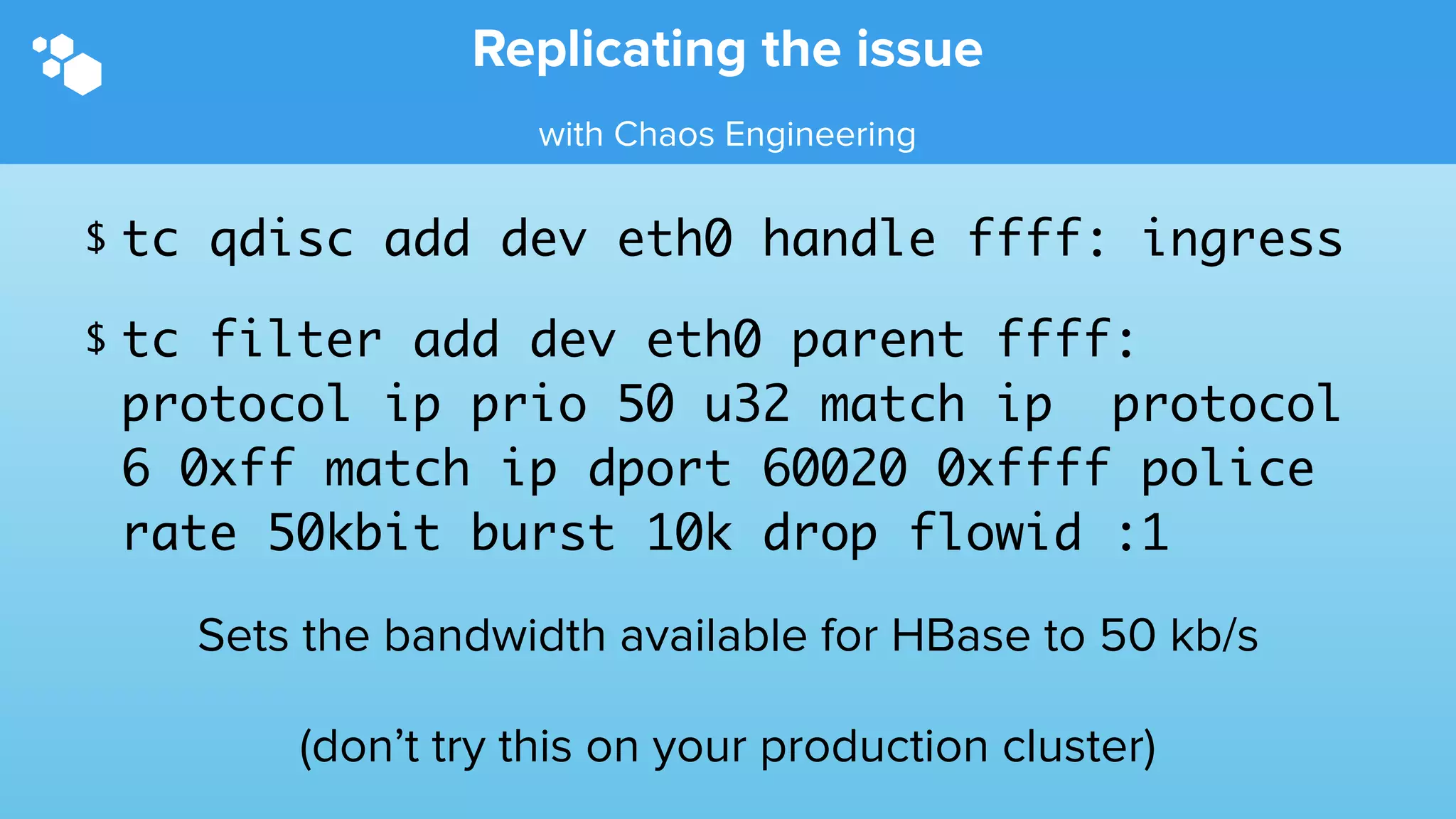

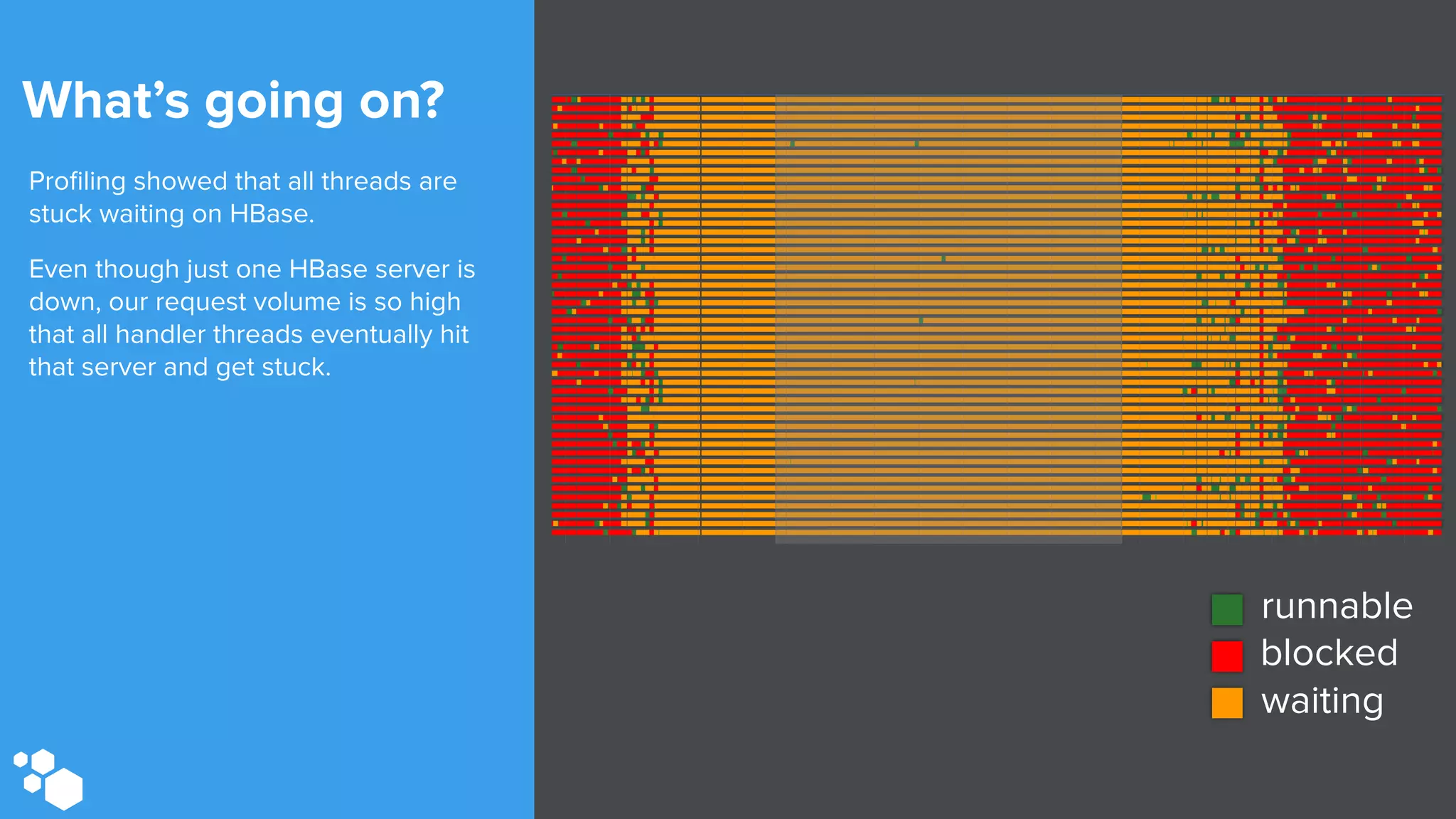

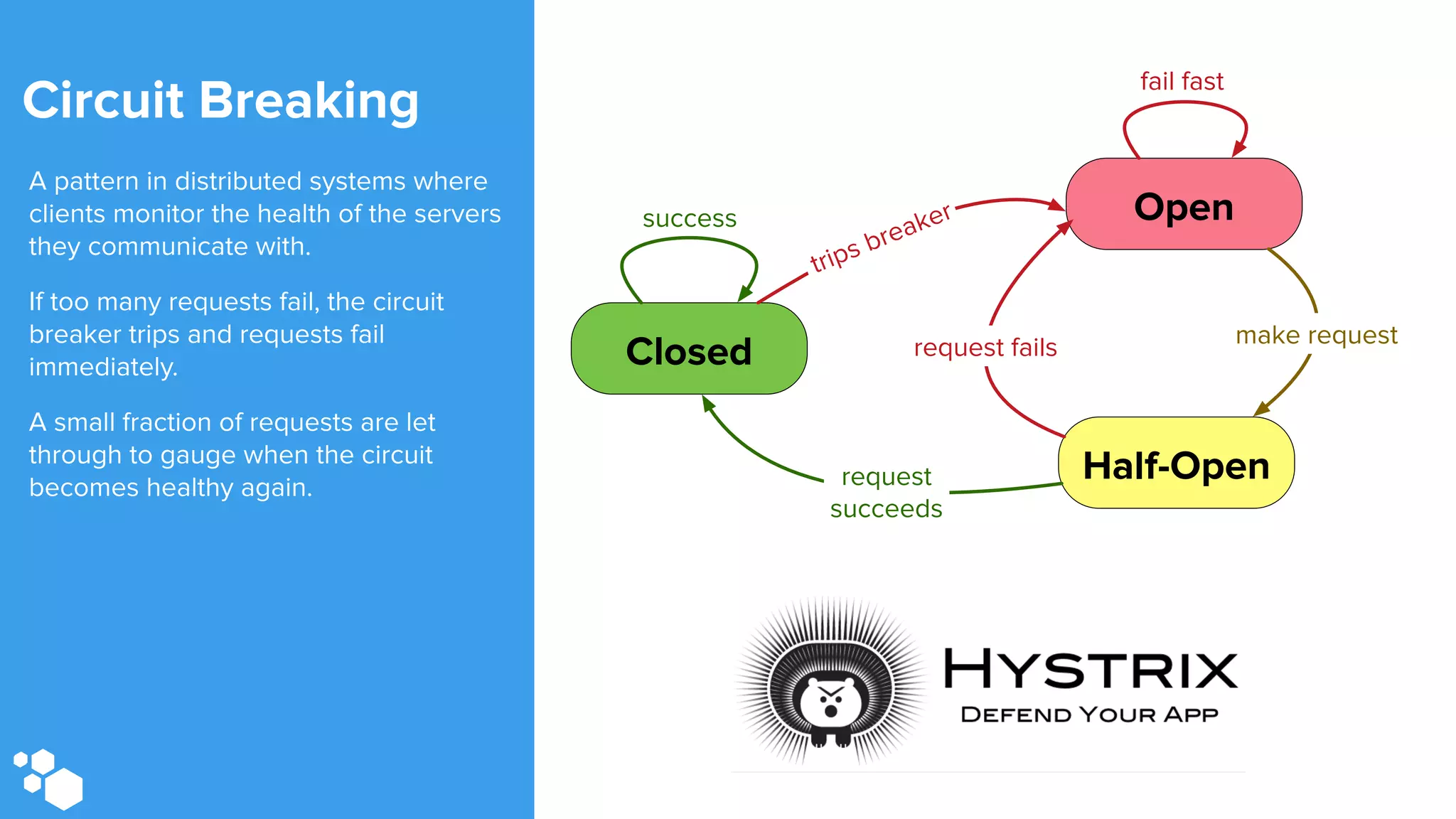

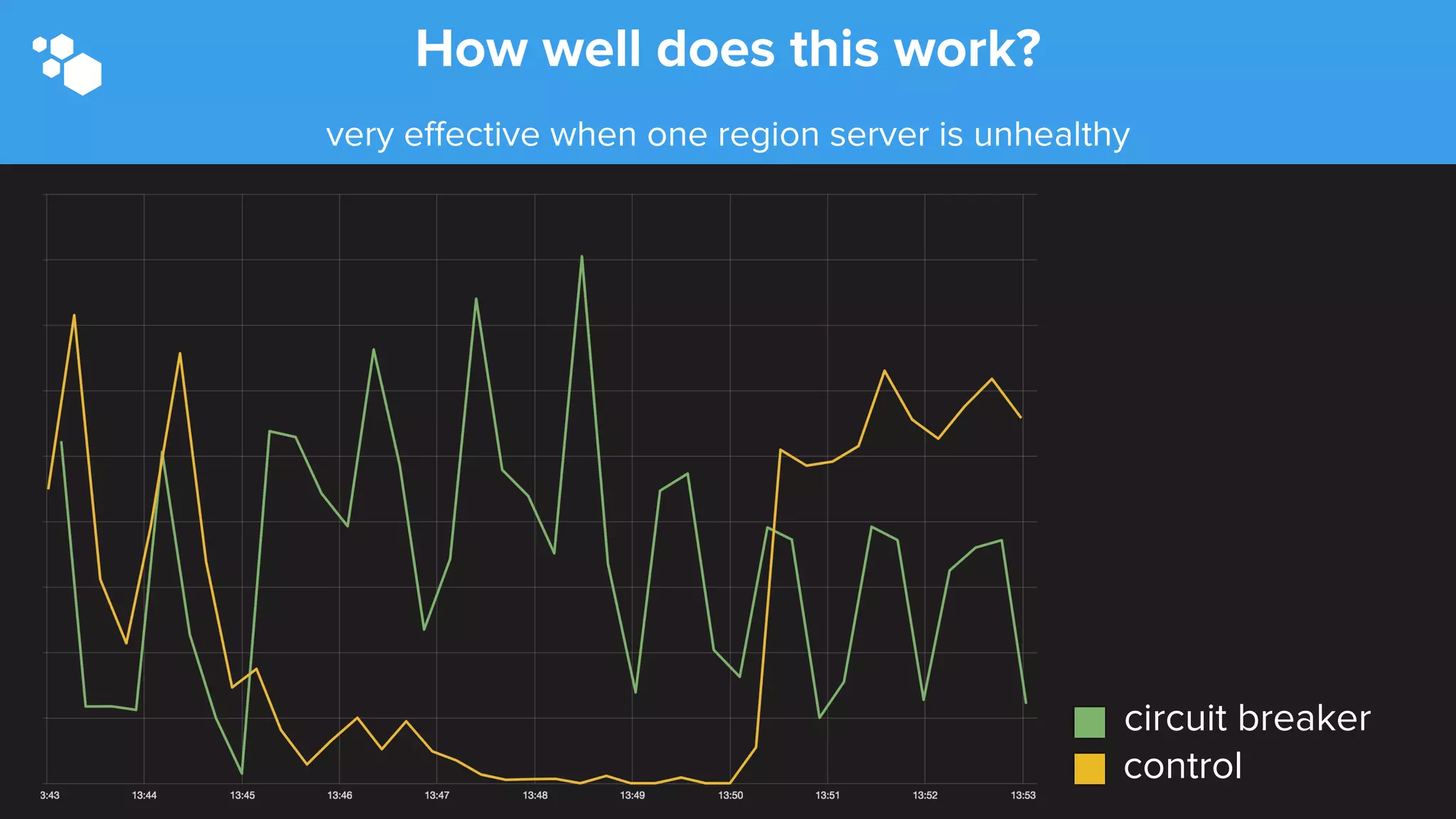

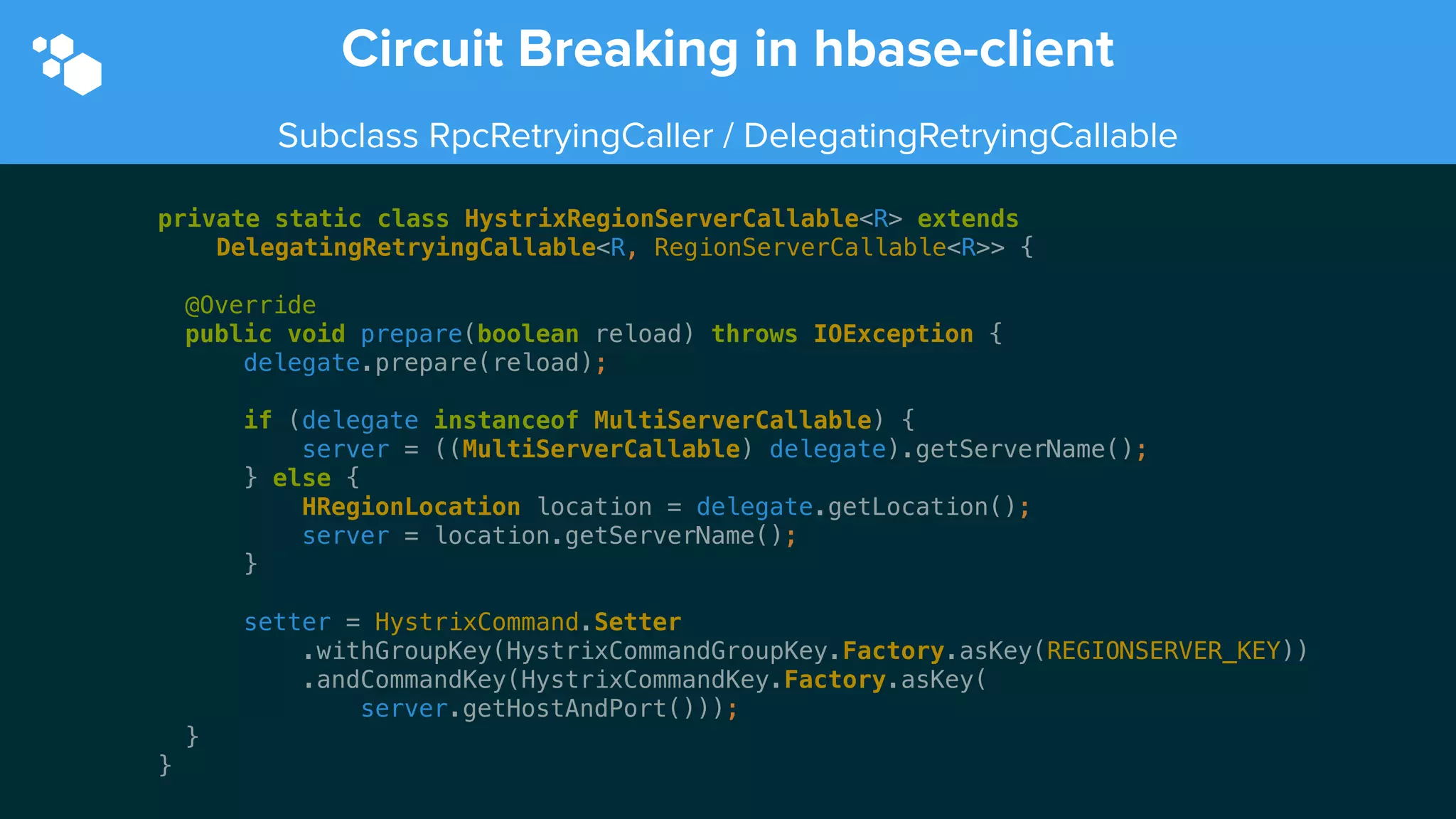

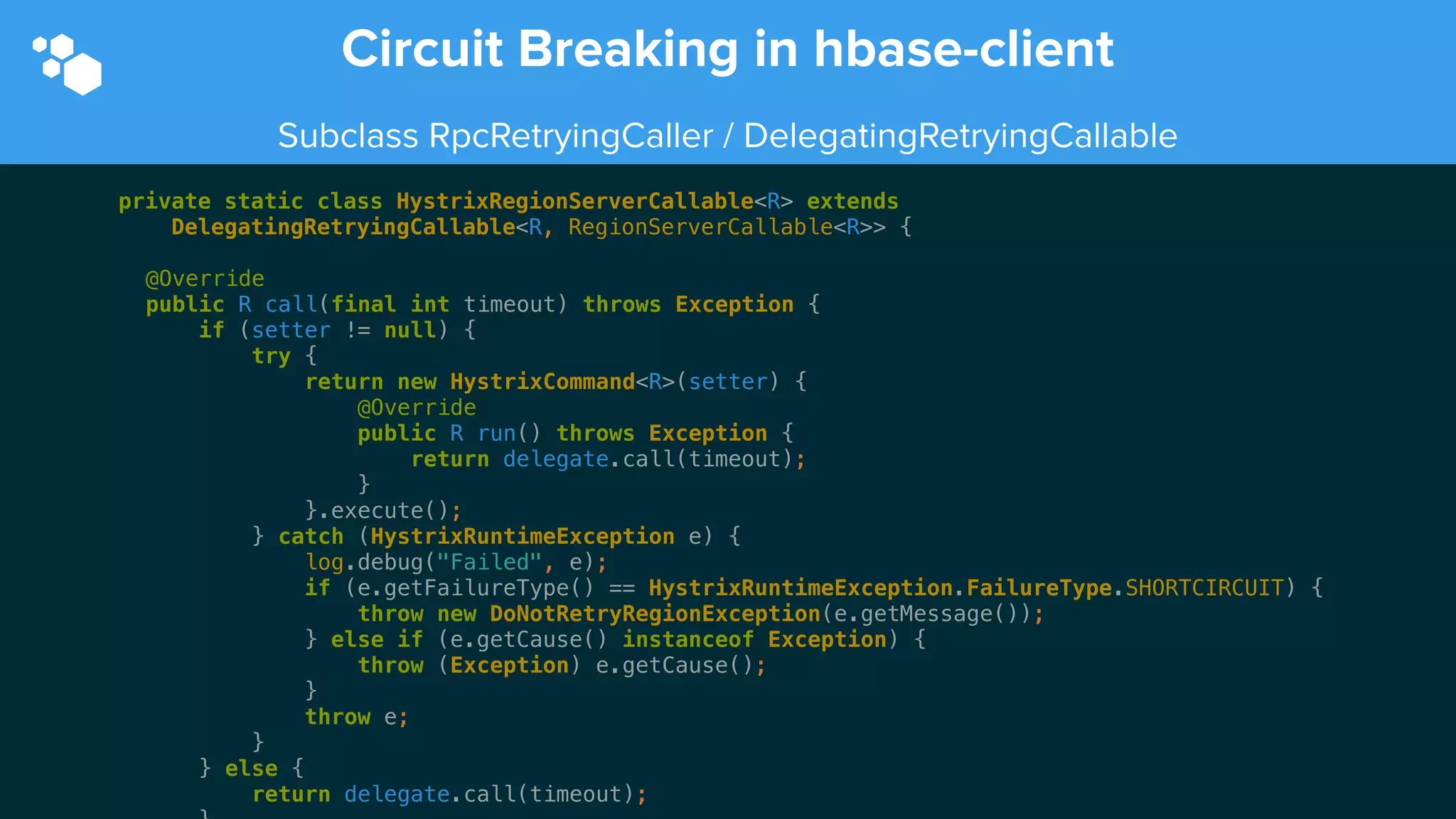

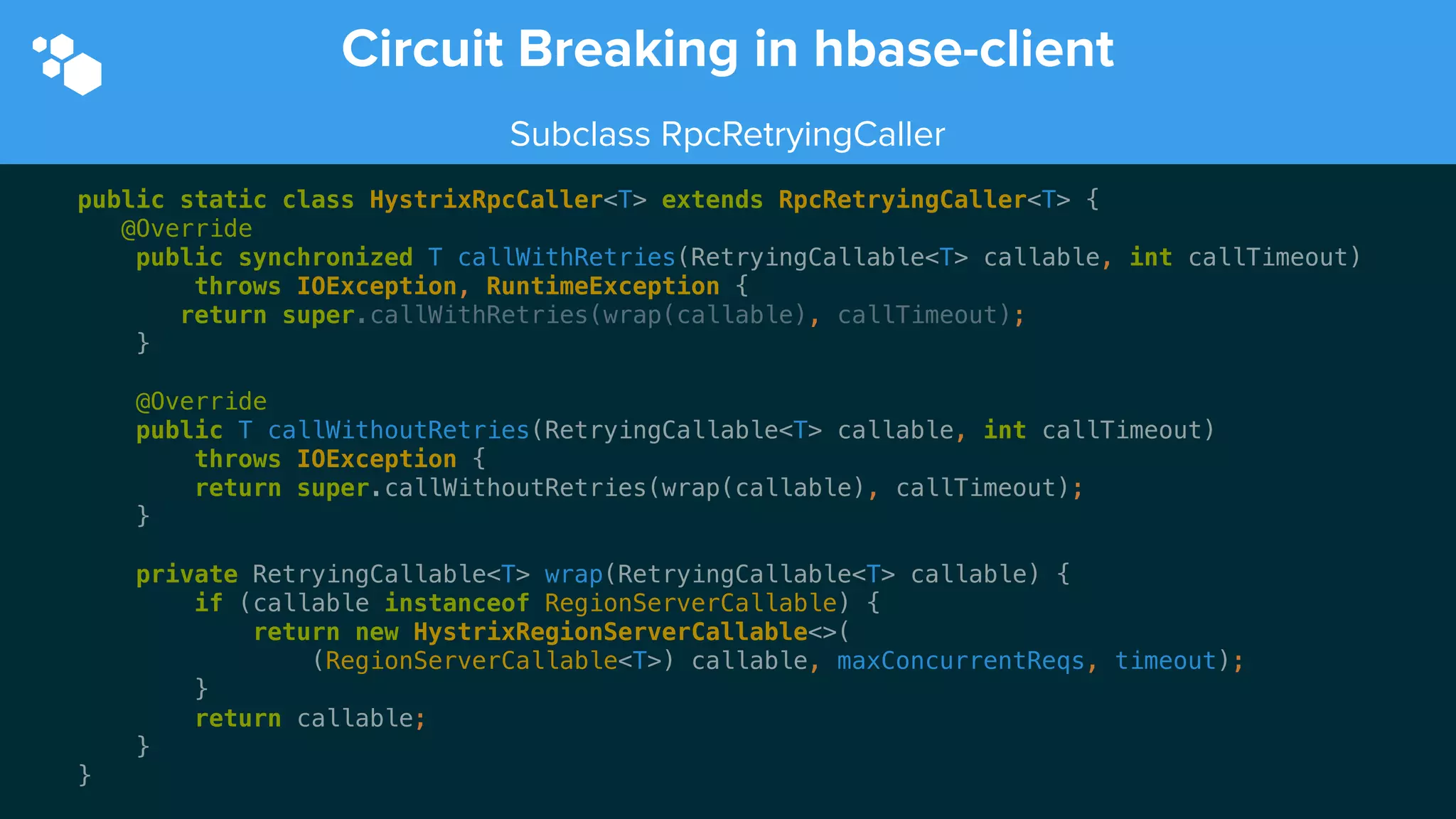

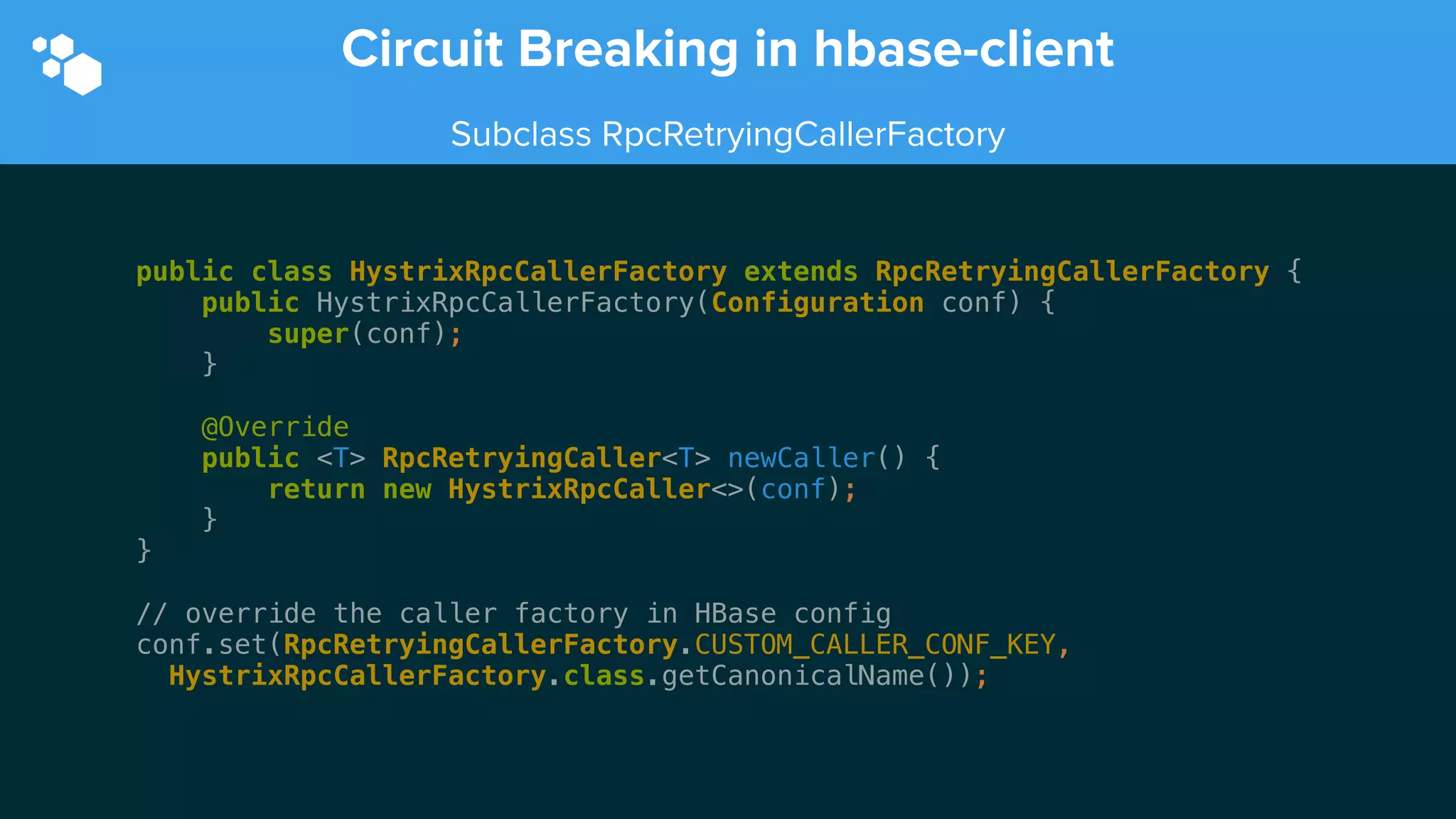

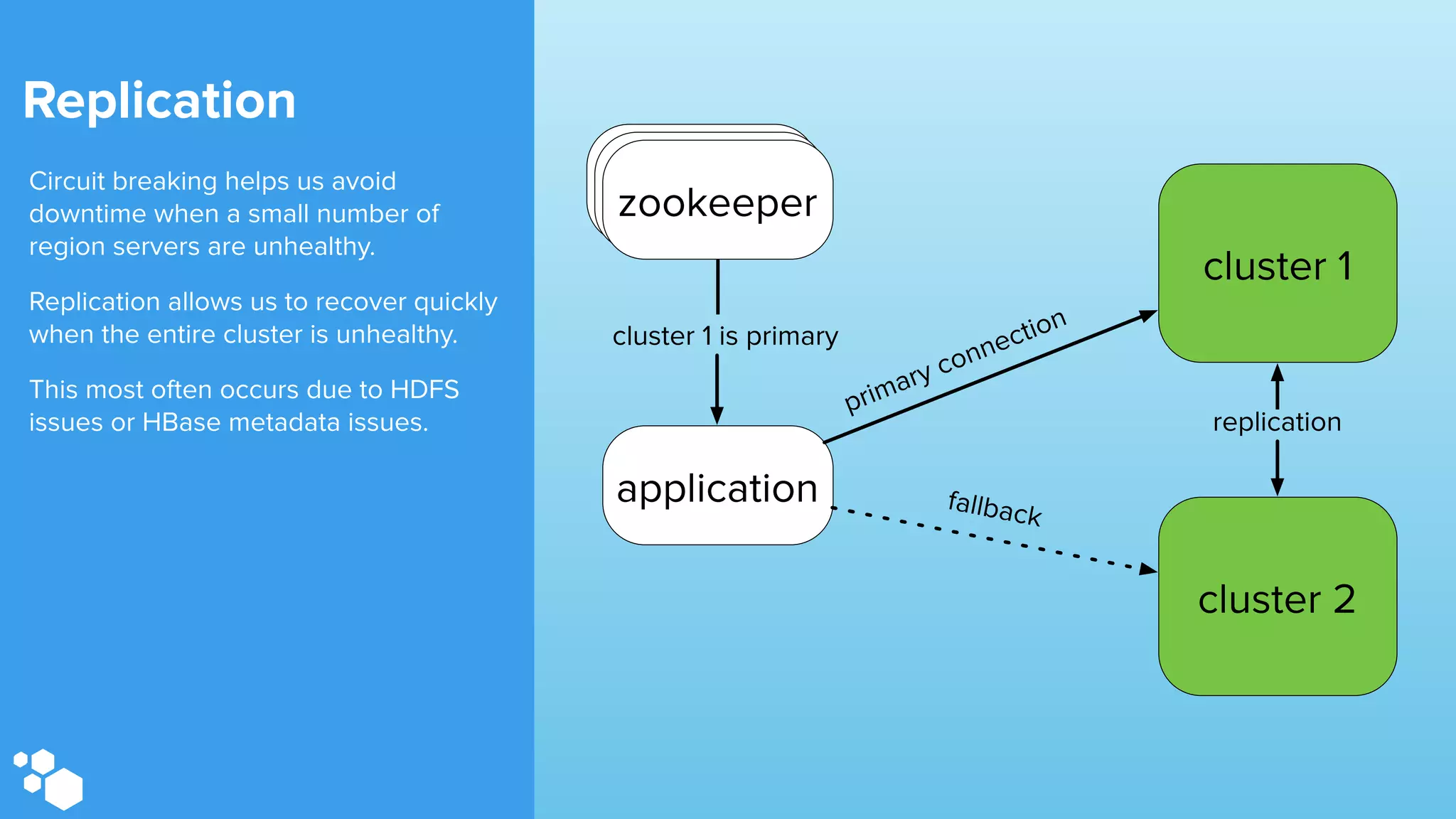

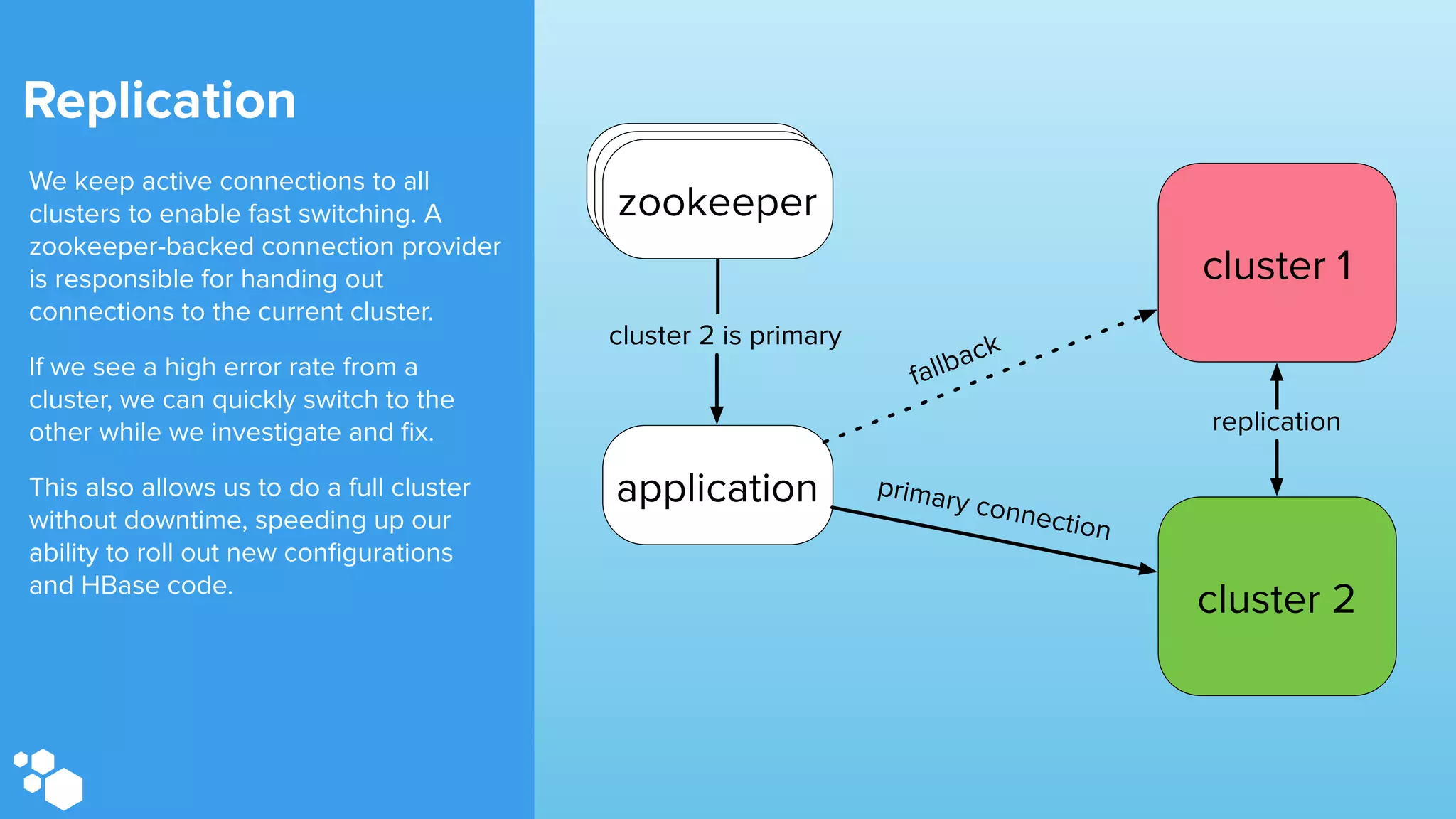

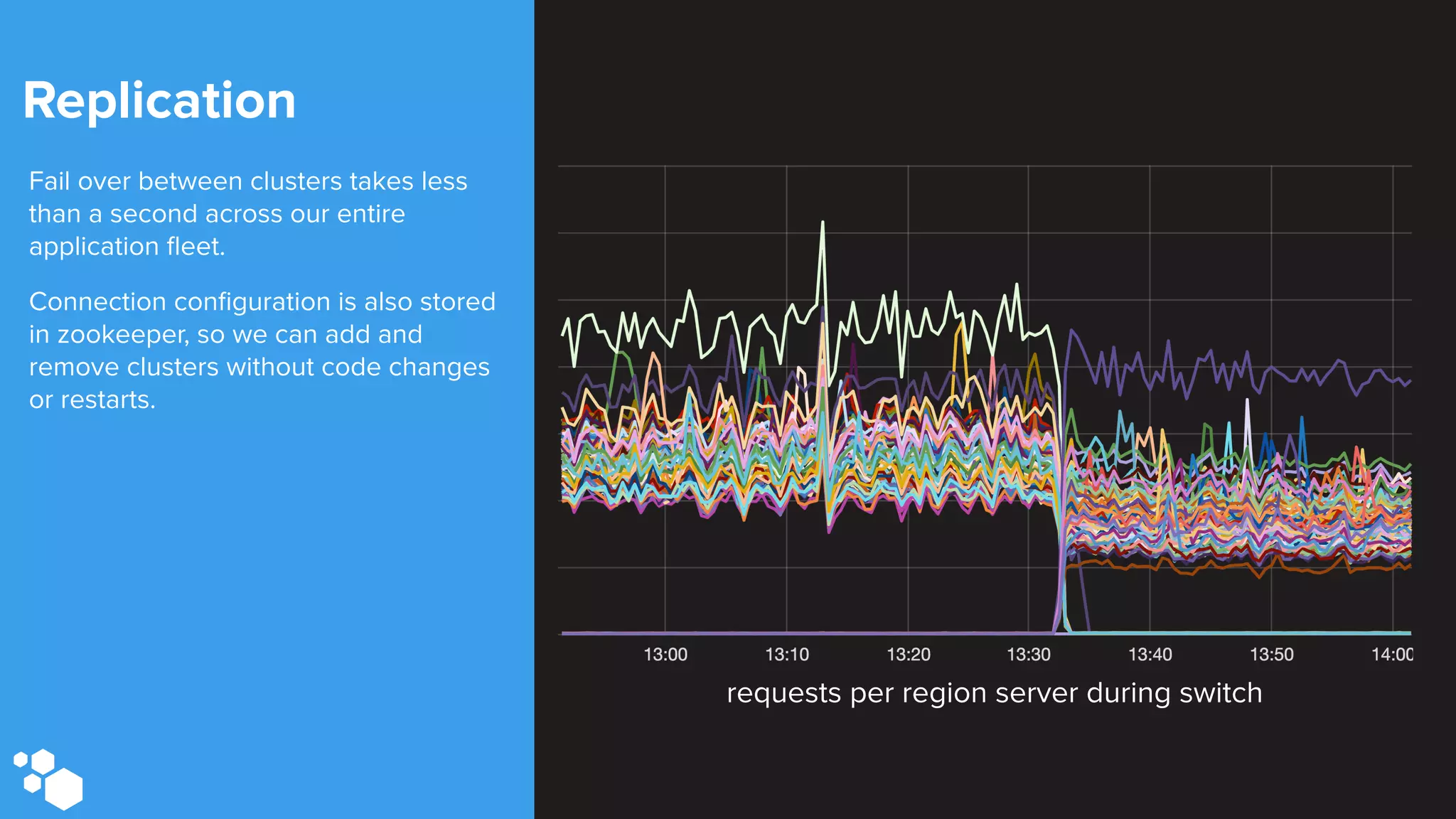

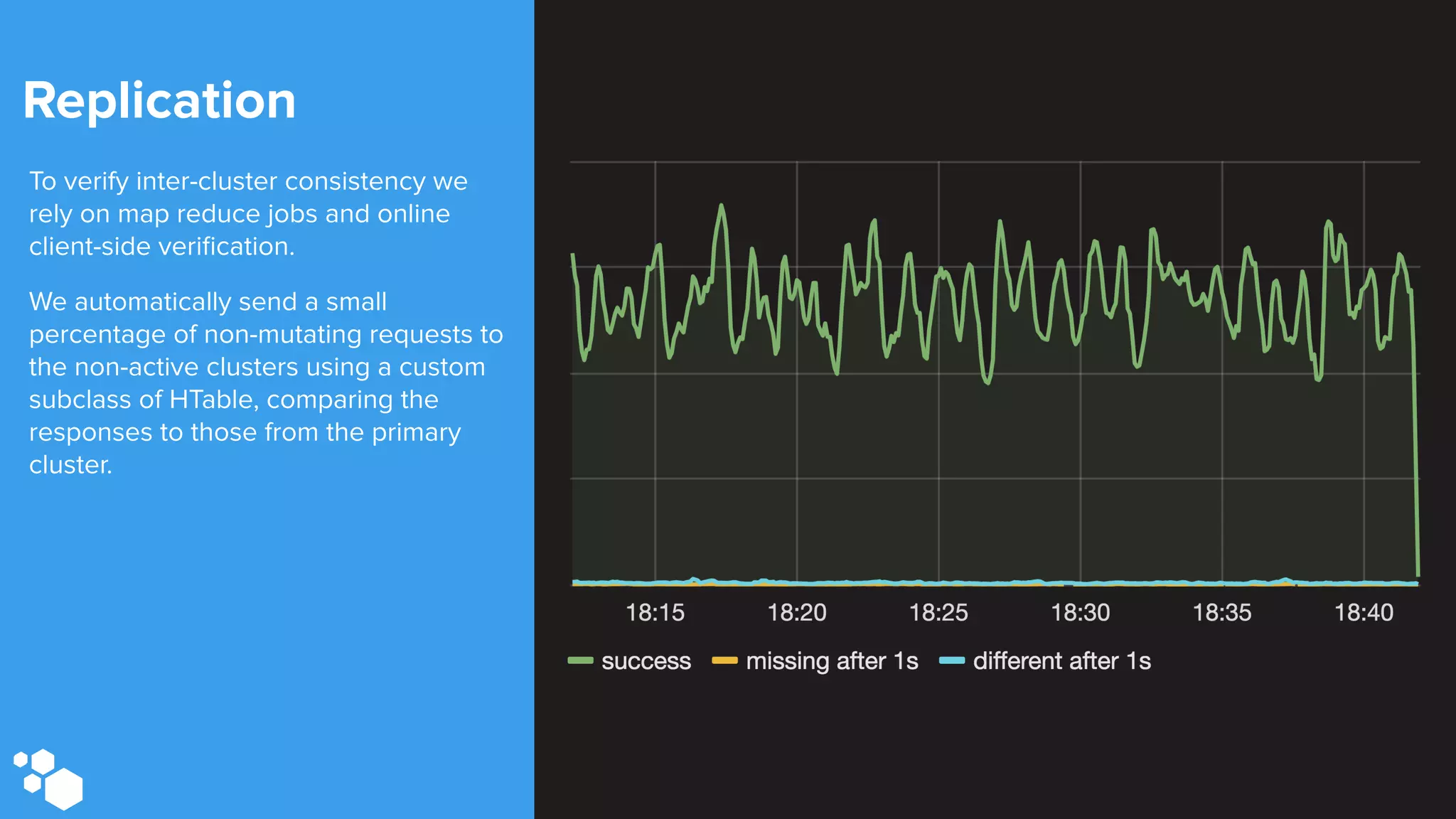

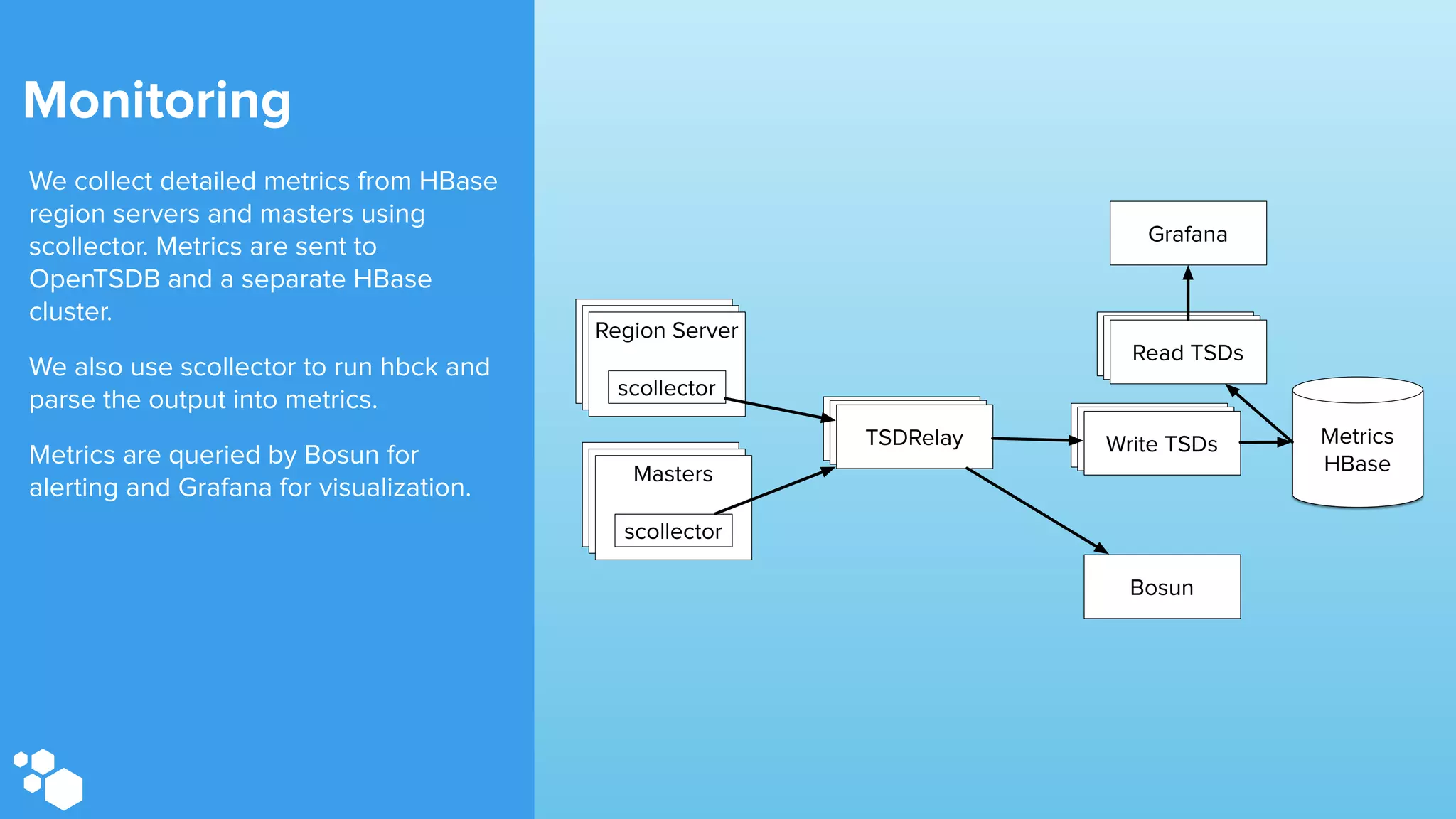

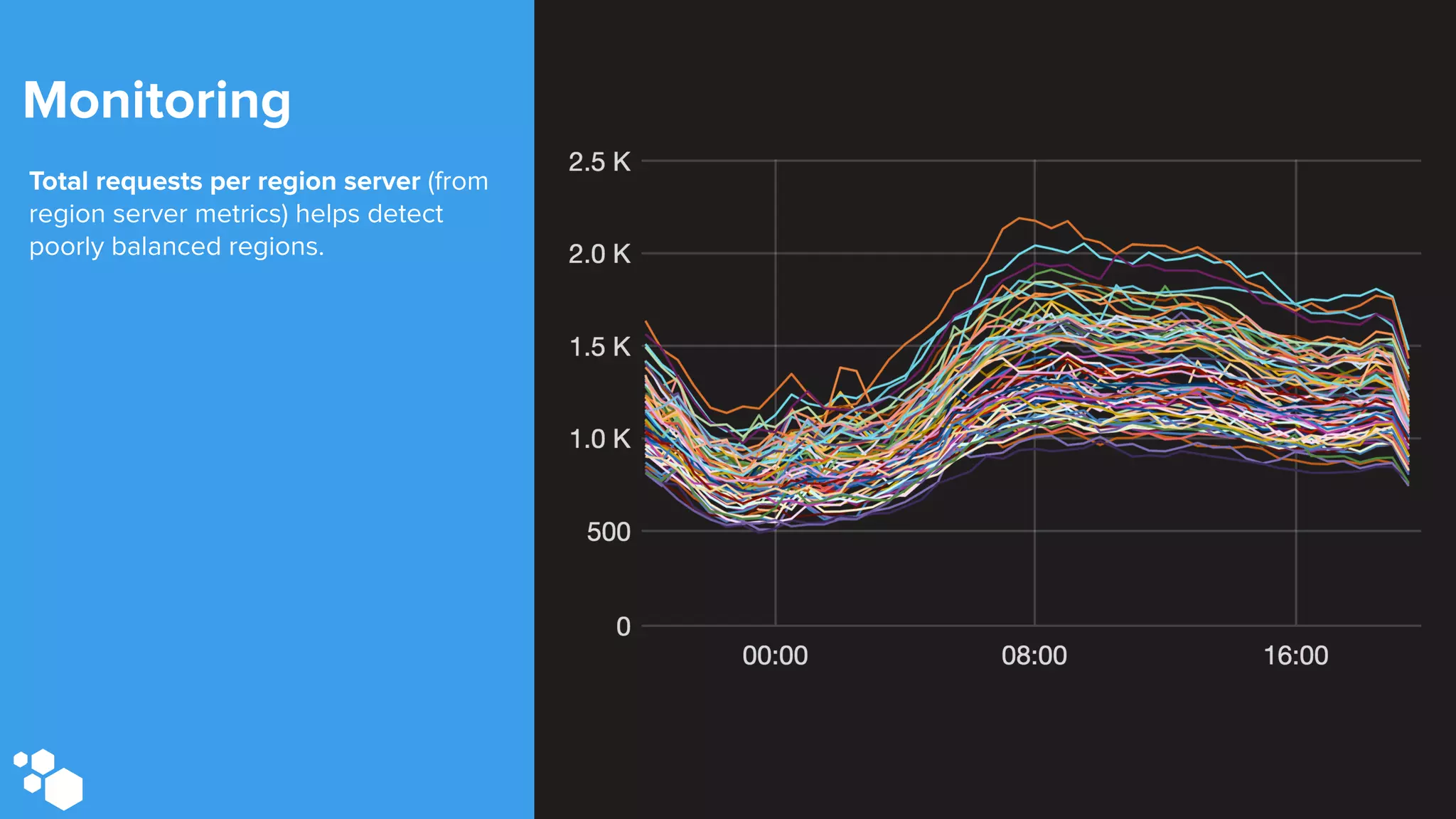

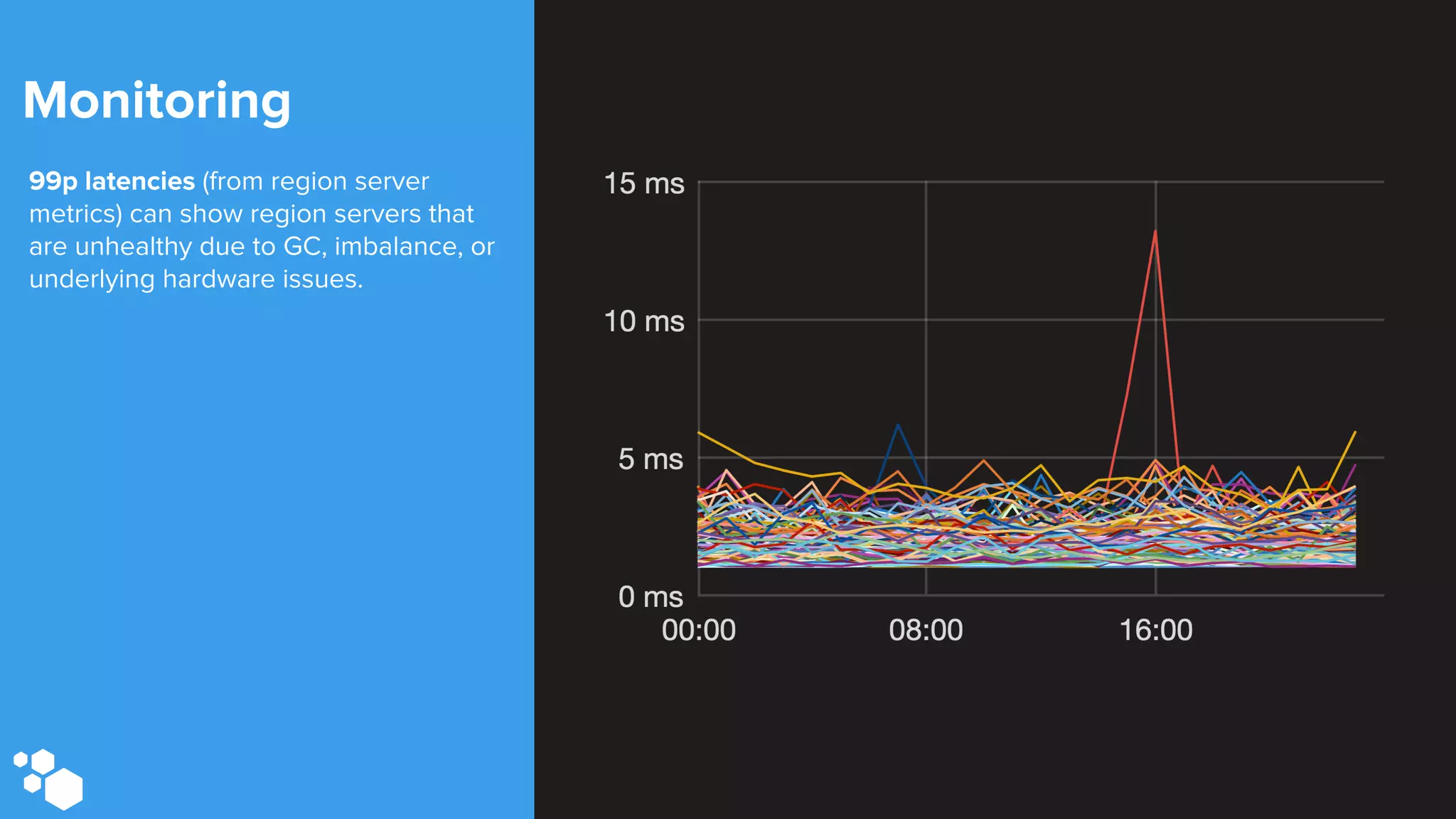

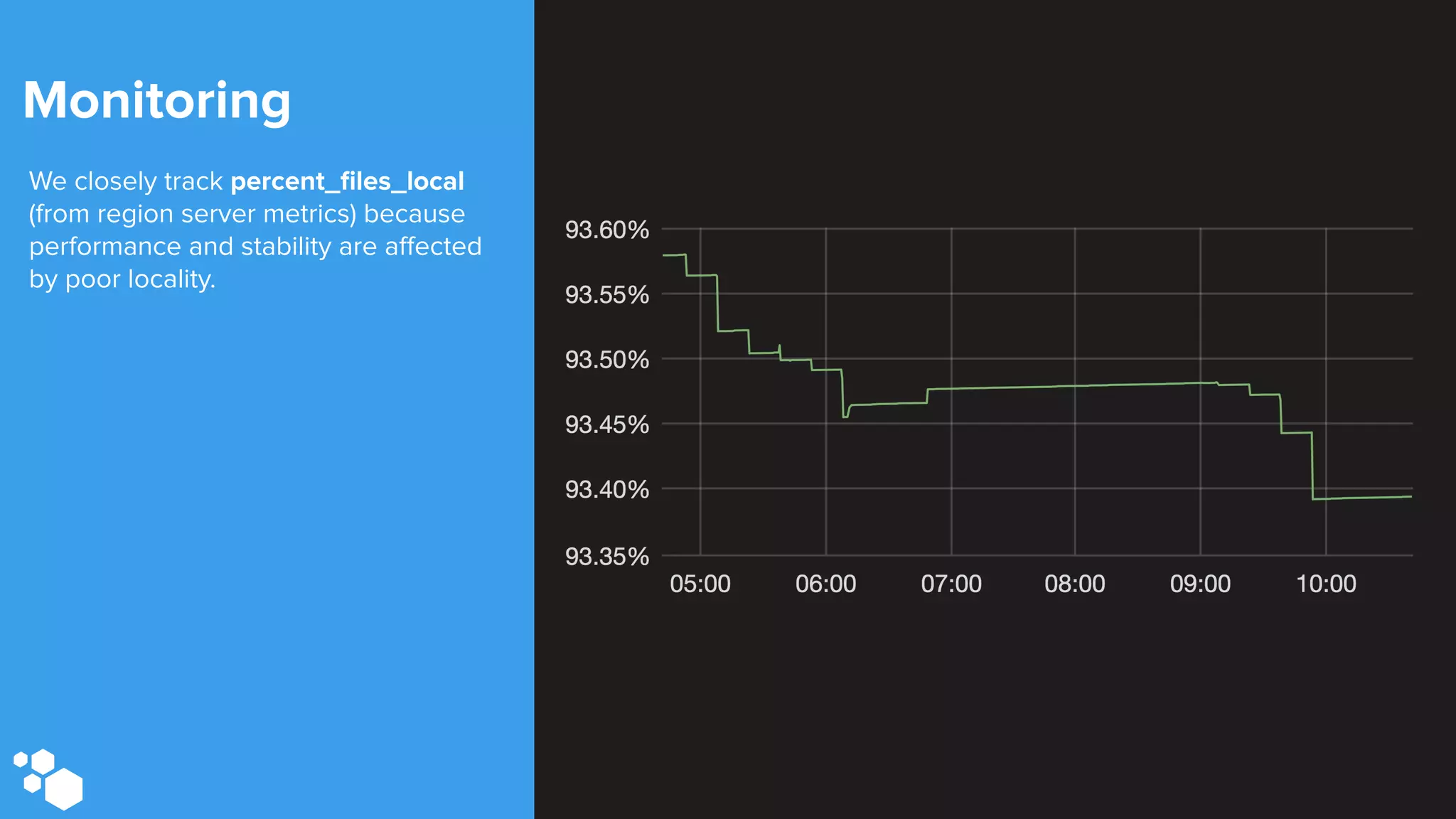

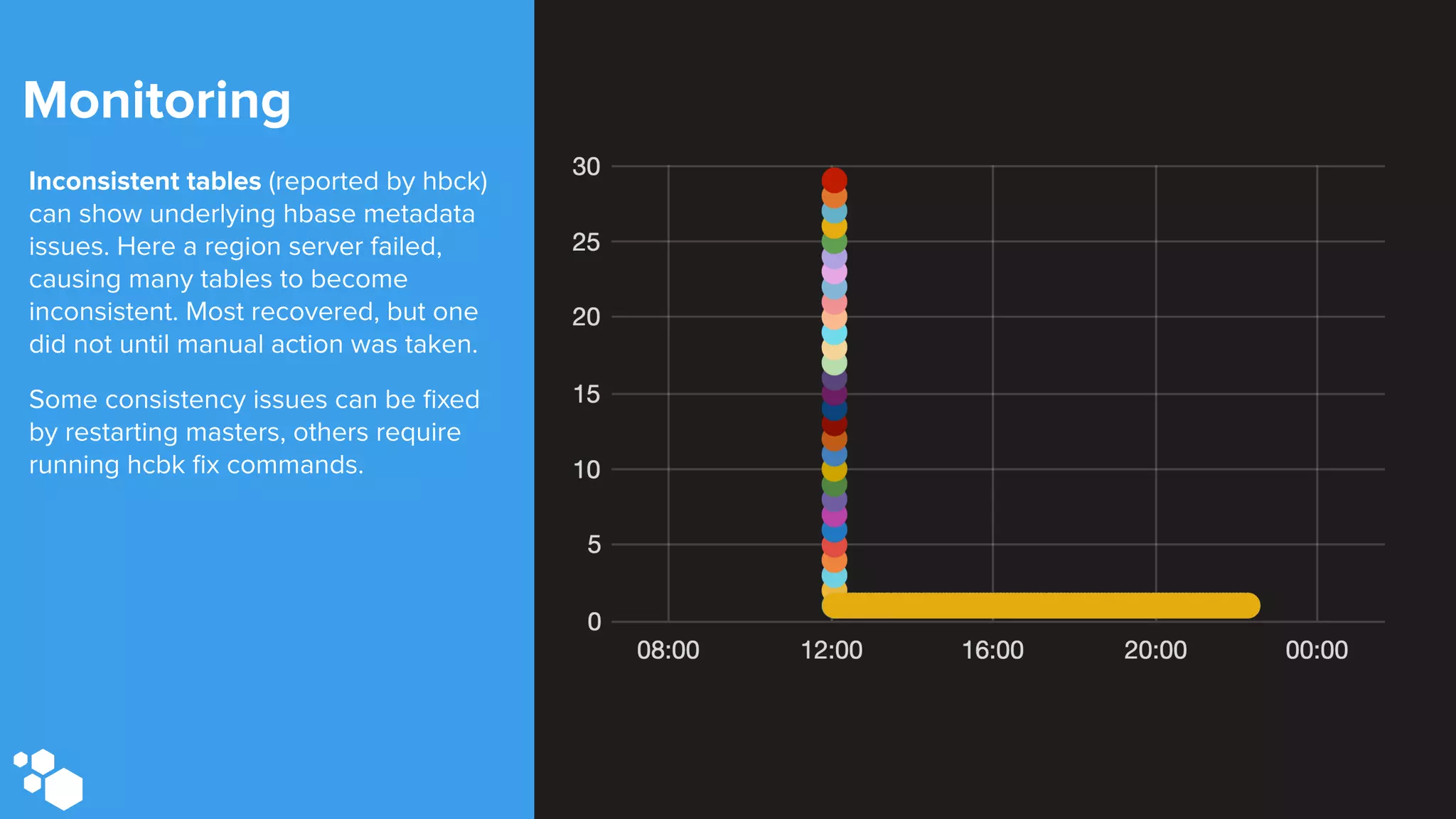

Sift Science uses real-time machine learning to help businesses combat online fraud, and it relies heavily on HBase to manage the large volumes of user data behind its risk scoring. The company prioritizes reliability by using circuit breaking and replication to minimize downtime and to recover quickly from failures. Monitoring is crucial: the metrics it collects are used to verify system health, detect issues early, and guide future improvements, such as automating recovery procedures and adding cross-datacenter replication.
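The summary mentions circuit breaking without describing how it works. The following is a minimal, single-threaded sketch of the general circuit-breaker pattern, not Sift Science's actual implementation. All names in it (`CircuitBreaker`, `CircuitOpenError`, `failure_threshold`, `reset_timeout`) are hypothetical. After a run of consecutive failures, such as timeouts from an unhealthy HBase region server, the breaker "opens" and rejects calls immediately instead of letting requests pile up. Once a cool-down period has passed, it lets a trial call through ("half-open"). A success closes the breaker again. A failure reopens it.

```python
import time
from typing import Callable, Optional


class CircuitOpenError(Exception):
    """Raised when a call is rejected because the breaker is open."""


class CircuitBreaker:
    """Hypothetical single-threaded circuit breaker (illustrative only)."""

    def __init__(self, failure_threshold: int = 5, reset_timeout: float = 30.0,
                 clock: Callable[[], float] = time.monotonic):
        self.failure_threshold = failure_threshold  # consecutive failures before opening
        self.reset_timeout = reset_timeout          # seconds to wait before a trial call
        self._clock = clock                         # injectable for testing
        self._failures = 0
        self._opened_at: Optional[float] = None     # None means the breaker is closed

    @property
    def state(self) -> str:
        if self._opened_at is None:
            return "closed"
        if self._clock() - self._opened_at >= self.reset_timeout:
            return "half_open"  # cool-down elapsed: allow a trial call
        return "open"

    def call(self, fn, *args, **kwargs):
        state = self.state
        if state == "open":
            # Fail fast instead of waiting on a backend that is known to be unhealthy.
            raise CircuitOpenError("circuit open; failing fast")
        try:
            result = fn(*args, **kwargs)
        except Exception:
            self._record_failure(state)
            raise
        self._record_success()
        return result

    def _record_failure(self, state: str) -> None:
        self._failures += 1
        # A failed trial call, or too many consecutive failures, (re)opens the breaker.
        if state == "half_open" or self._failures >= self.failure_threshold:
            self._opened_at = self._clock()

    def _record_success(self) -> None:
        # Any success resets the failure count and closes the breaker.
        self._failures = 0
        self._opened_at = None
```

In practice, a client would wrap each storage read, for example `breaker.call(read_user_row, user_id)`, where `read_user_row` is a hypothetical HBase lookup. When the breaker is open, the caller can fall back to a degraded response instead of blocking. A production version would also need thread safety and would likely emit the breaker's state as a monitoring metric.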