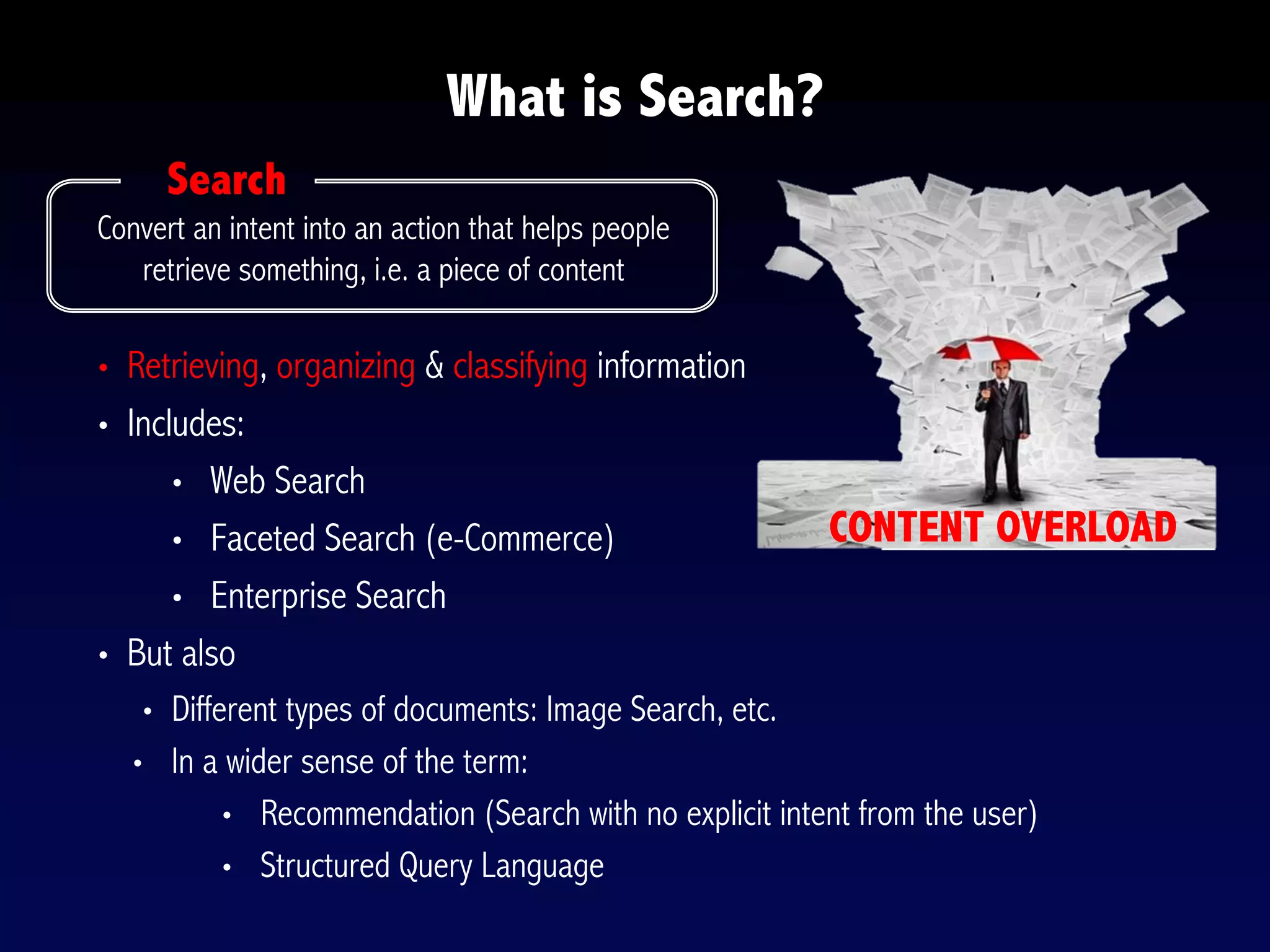

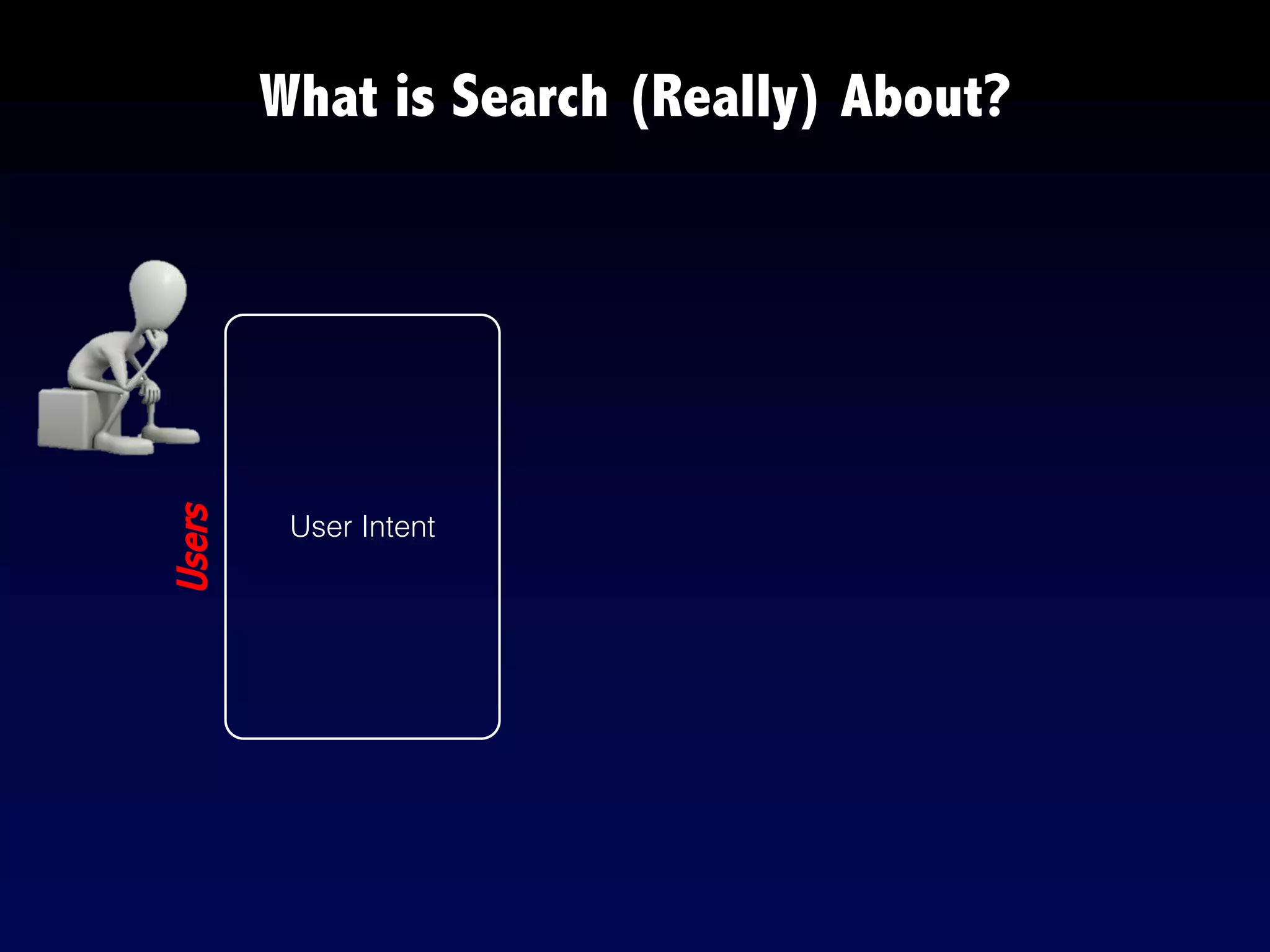

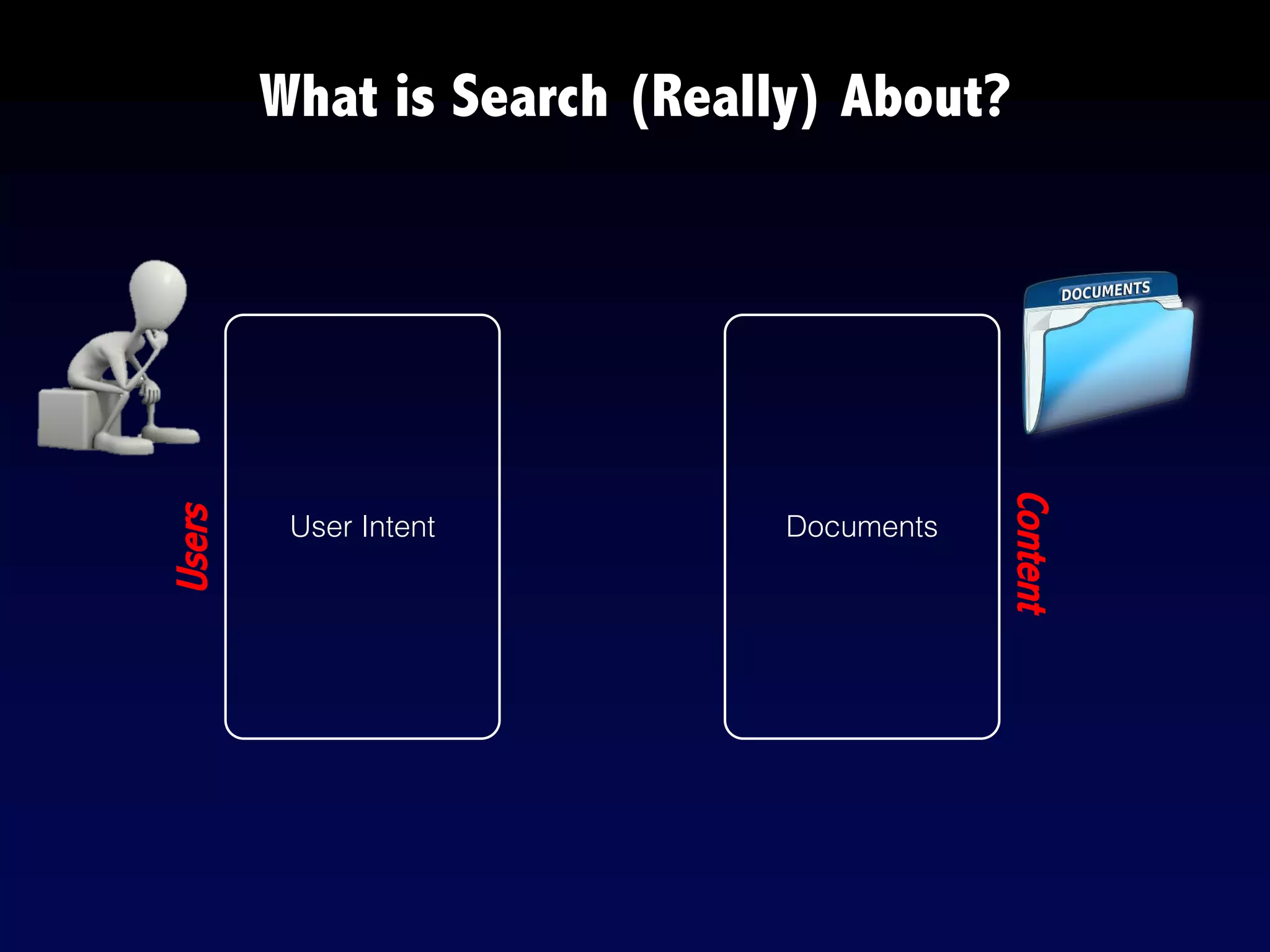

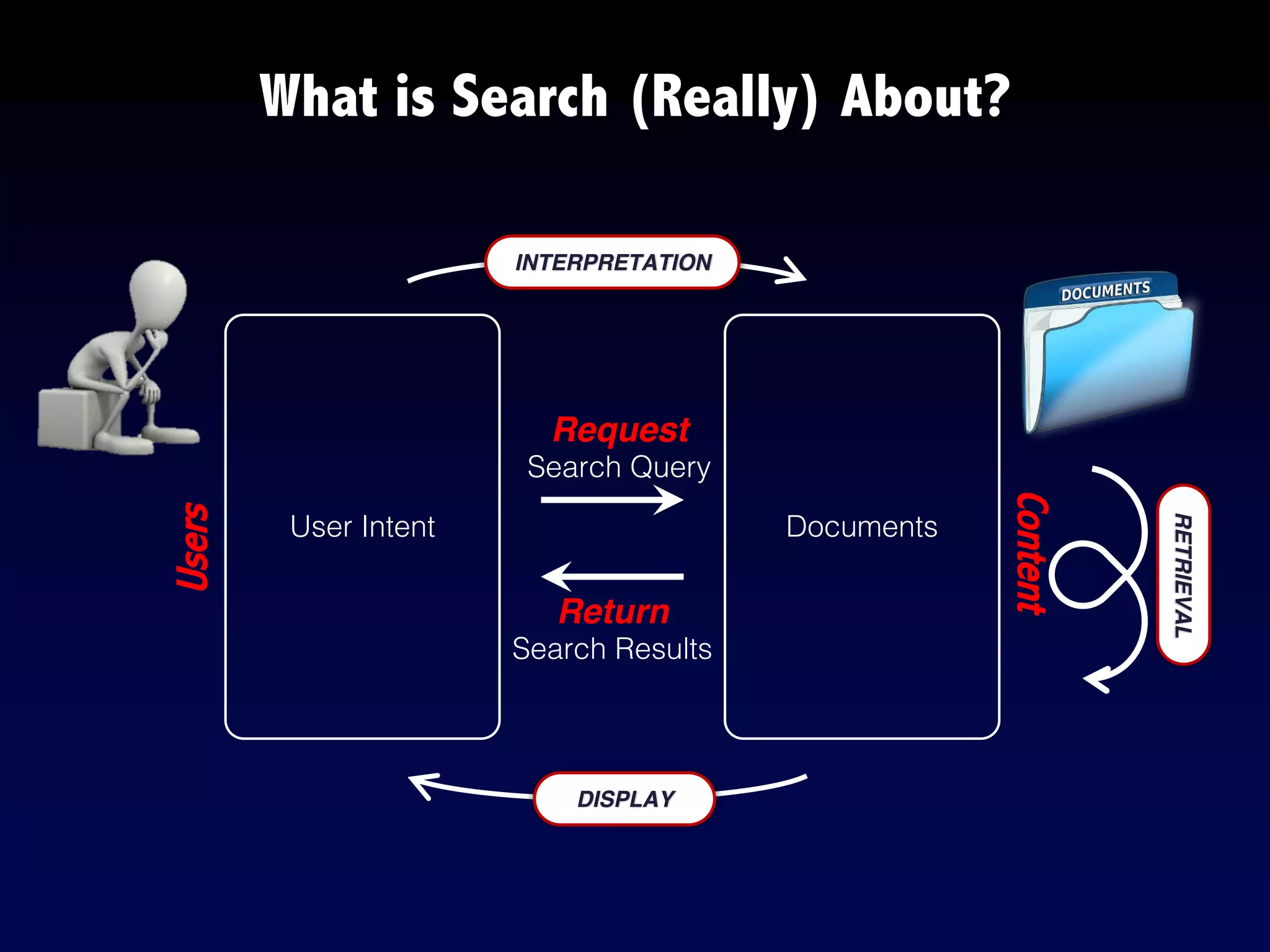

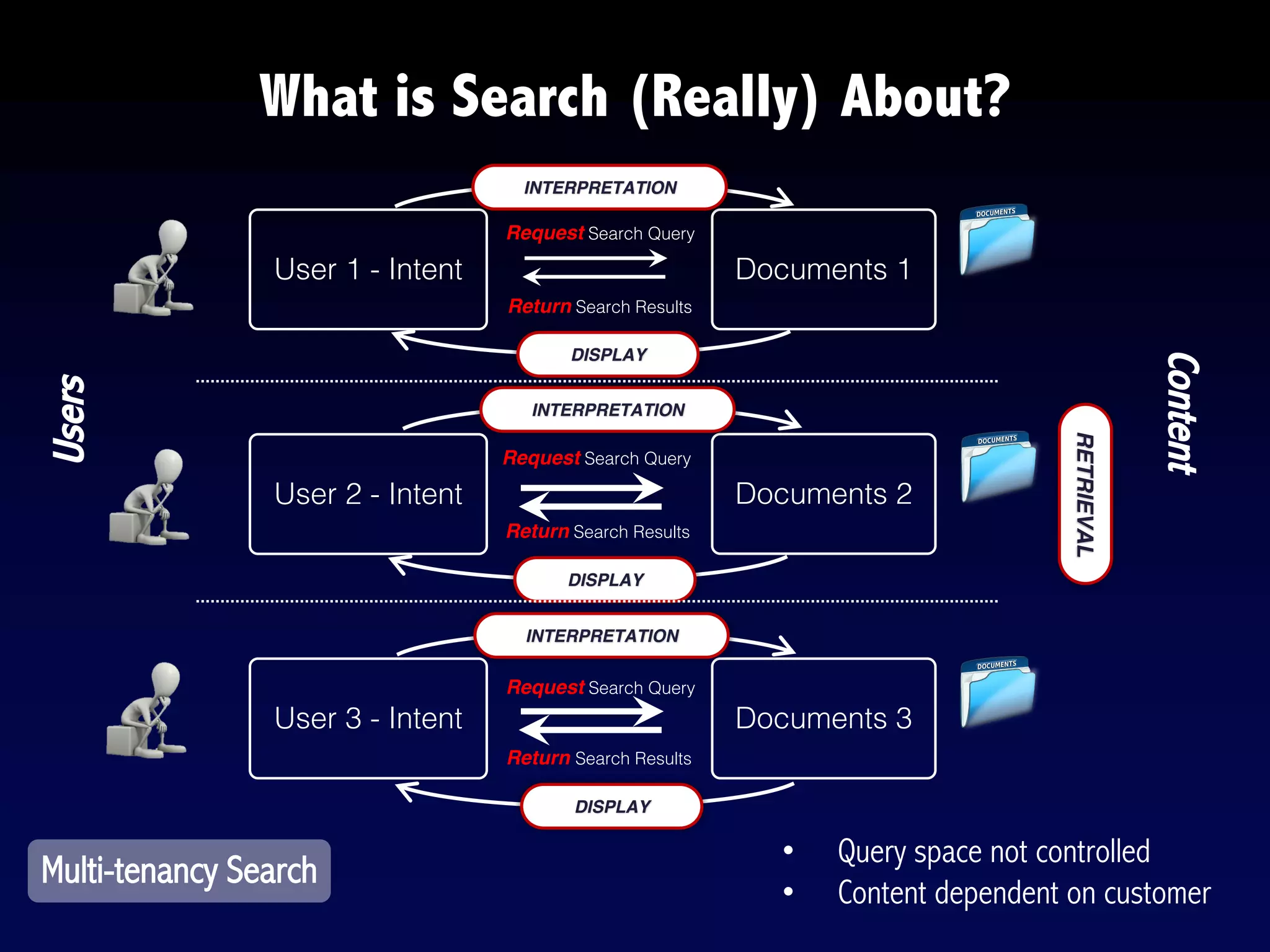

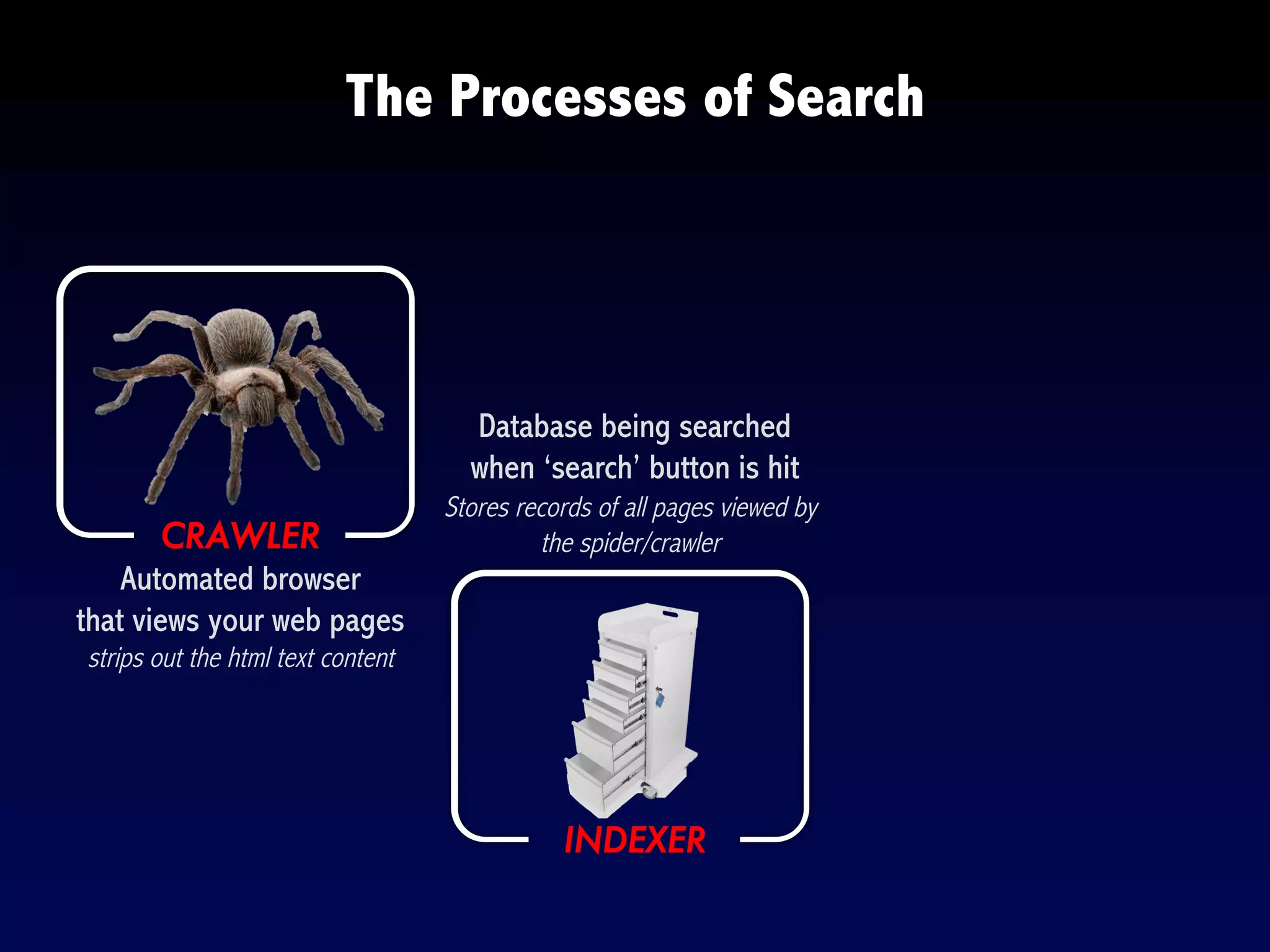

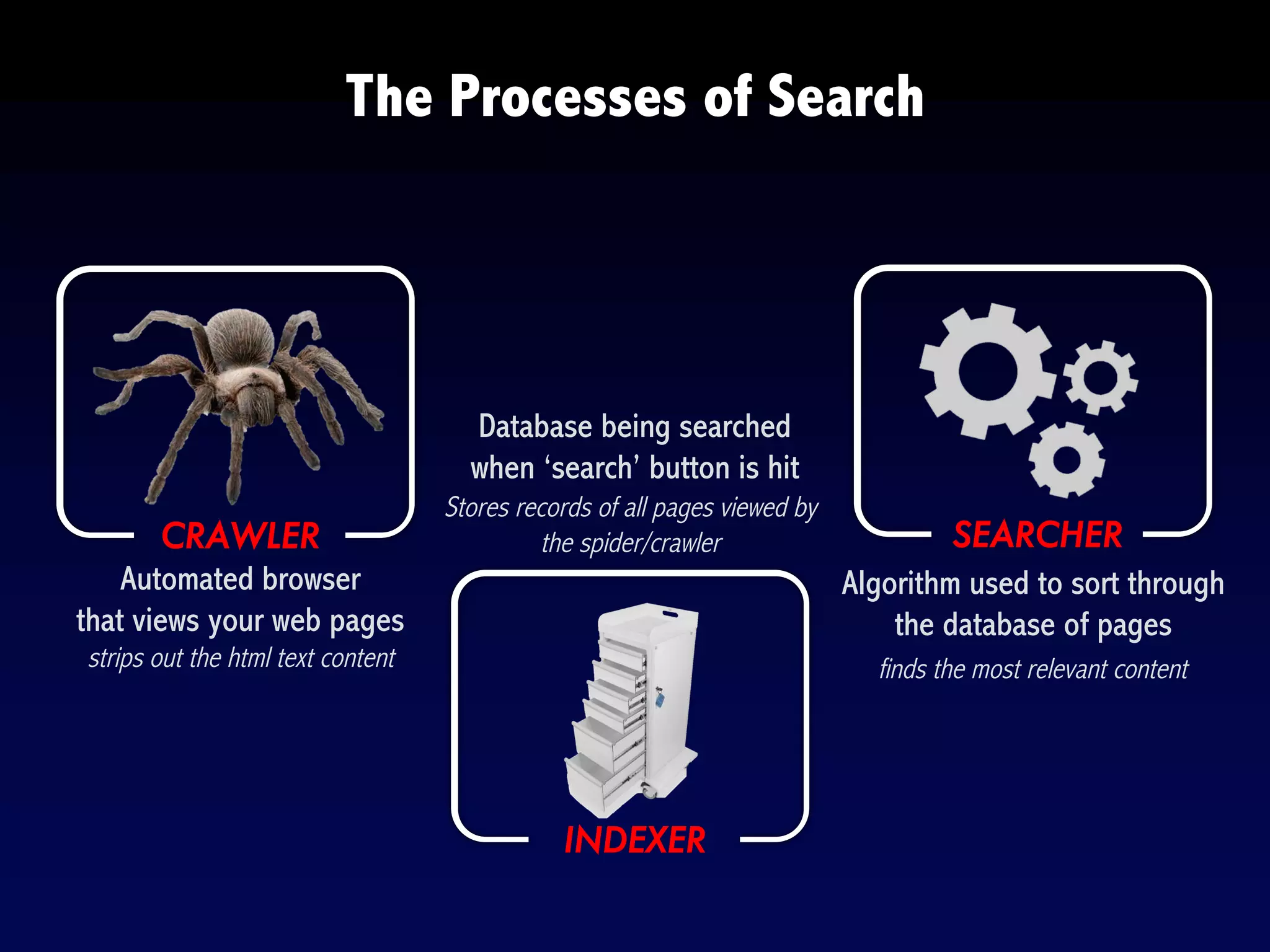

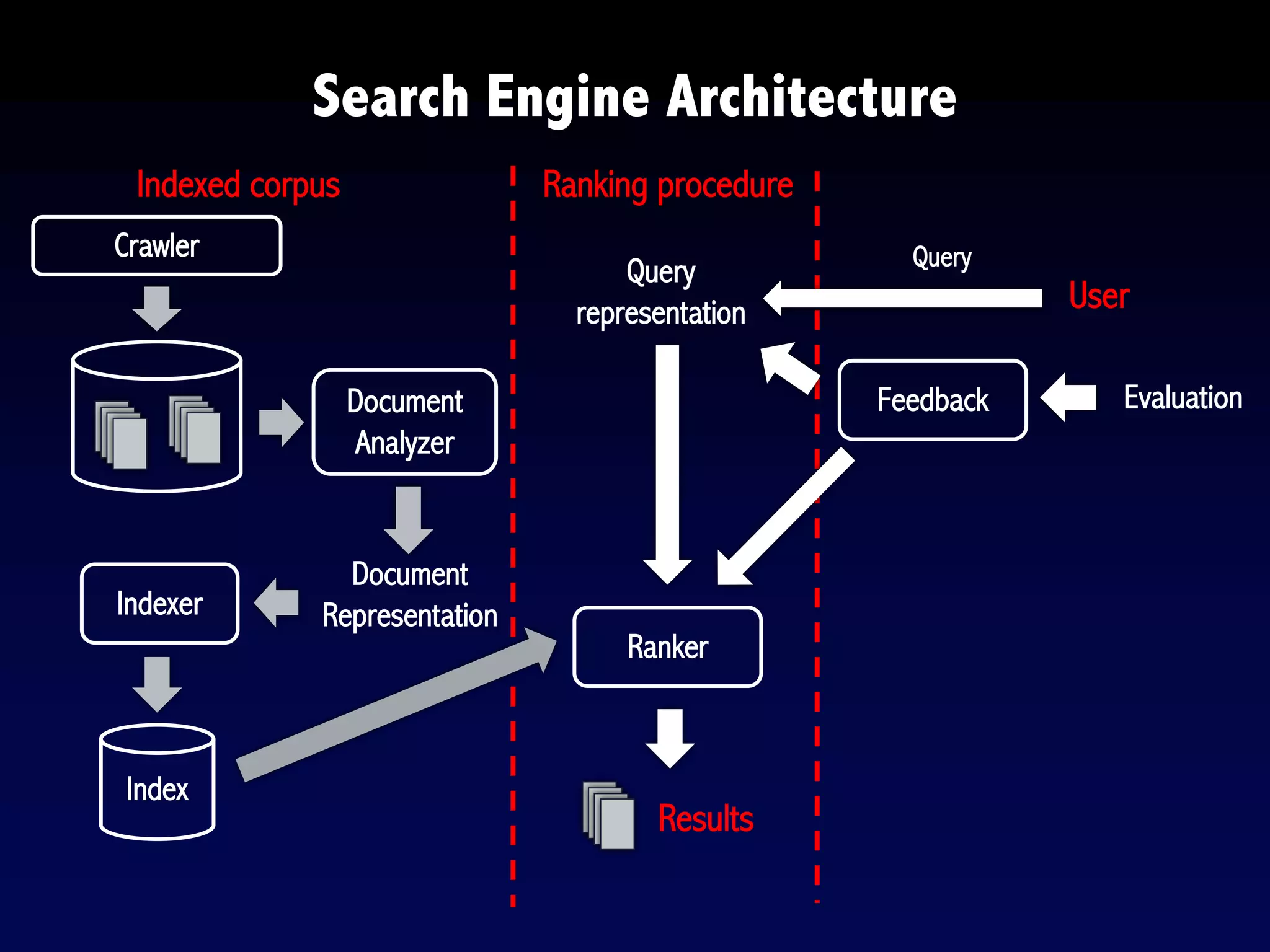

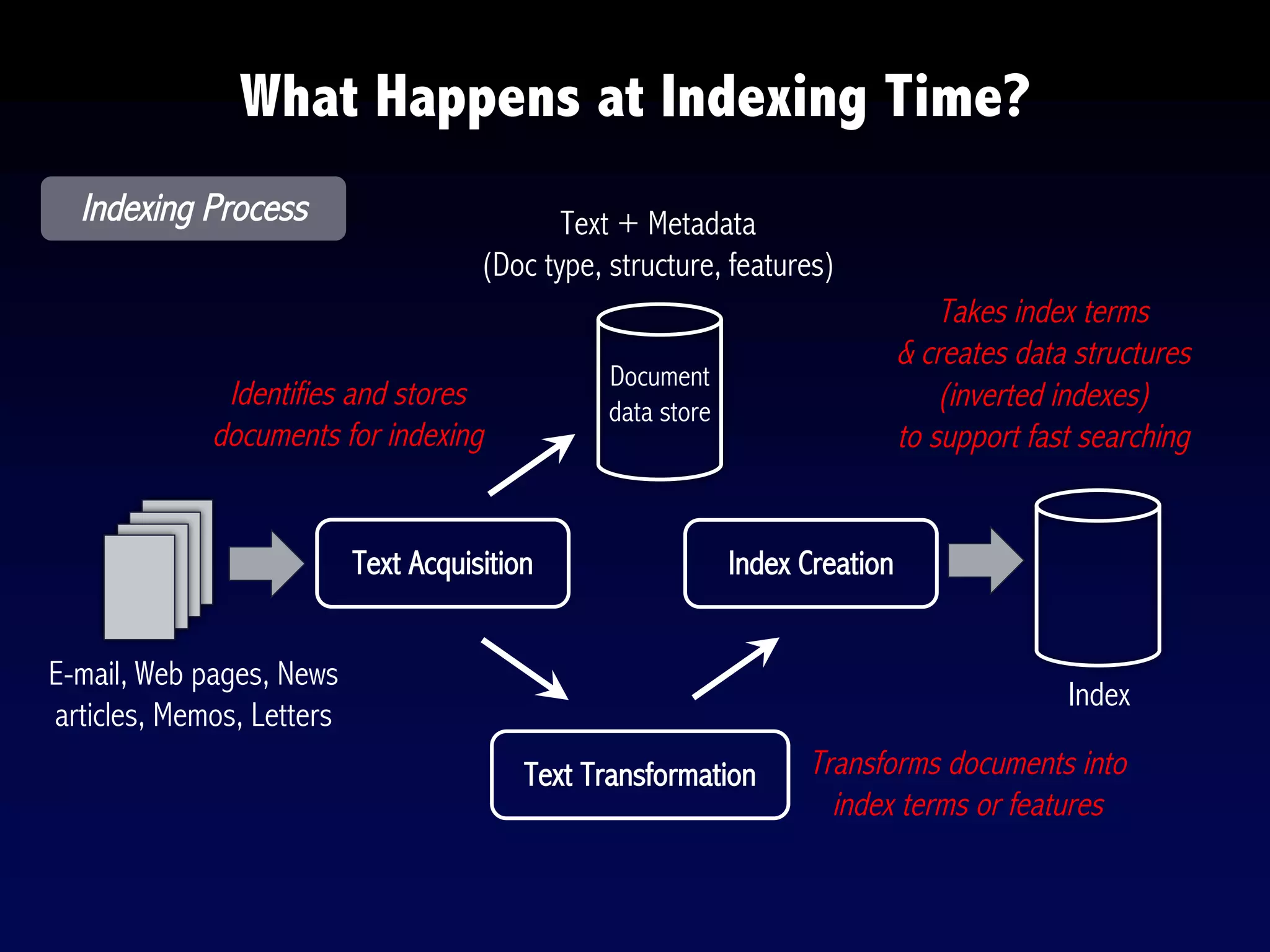

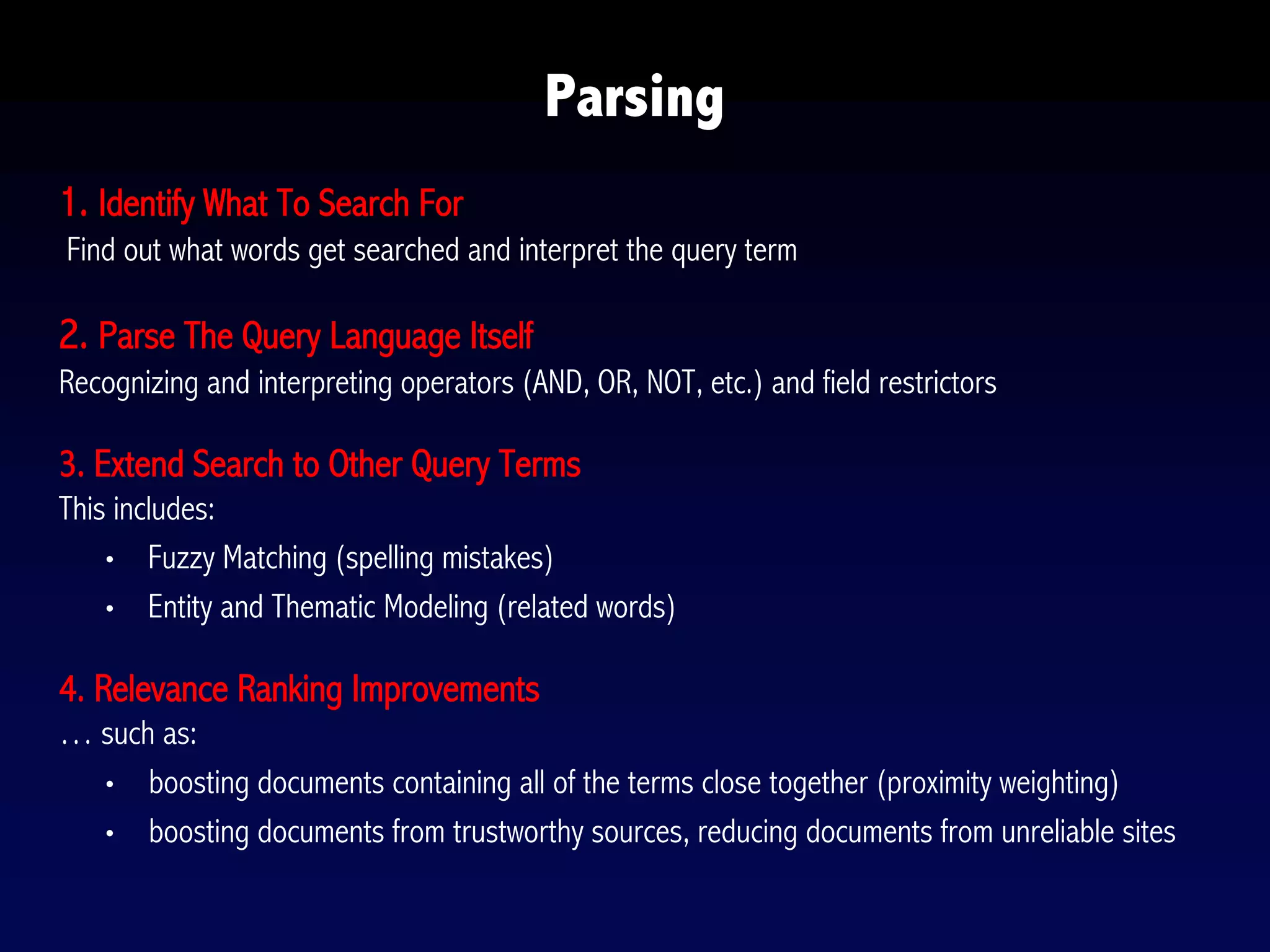

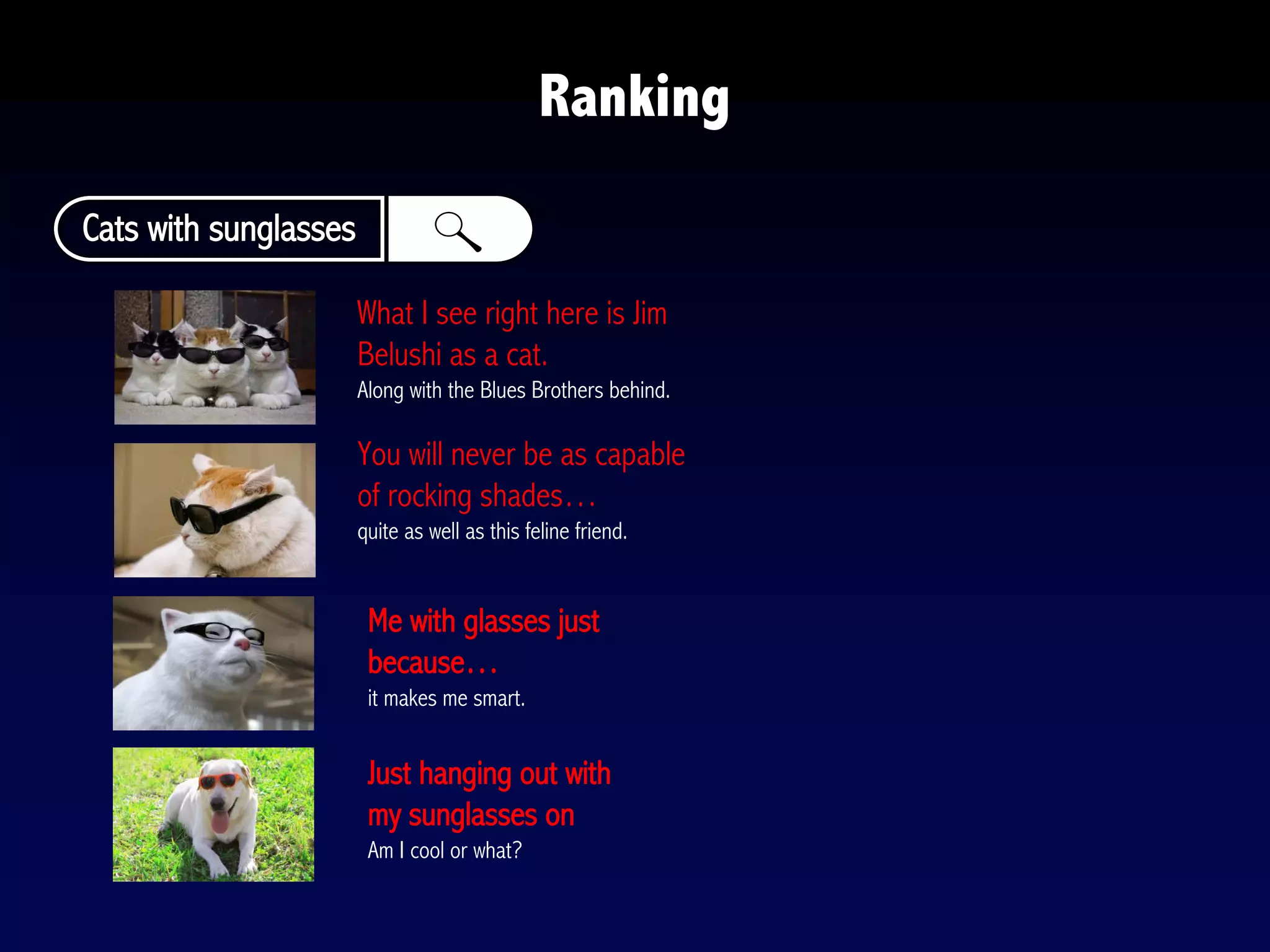

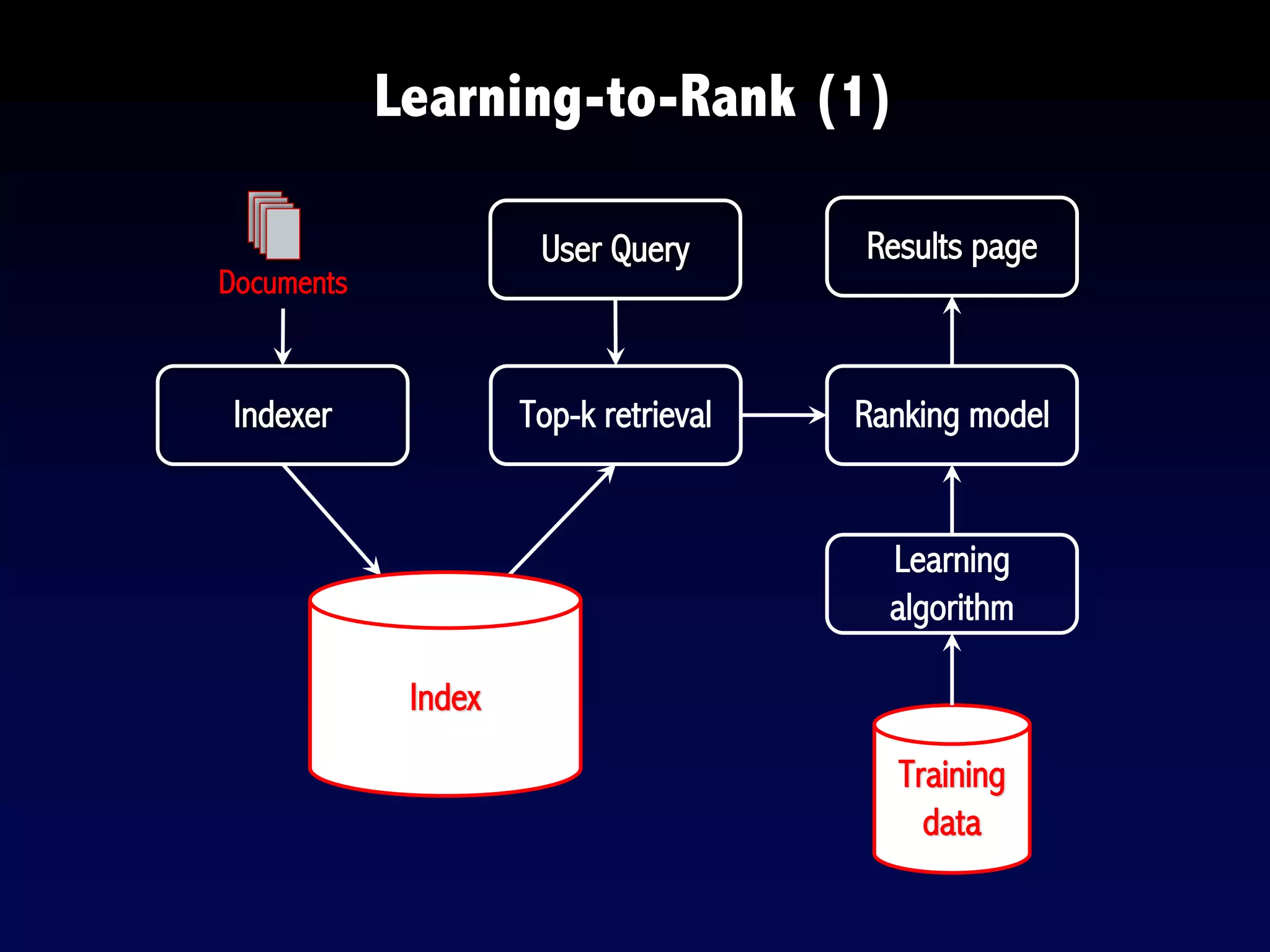

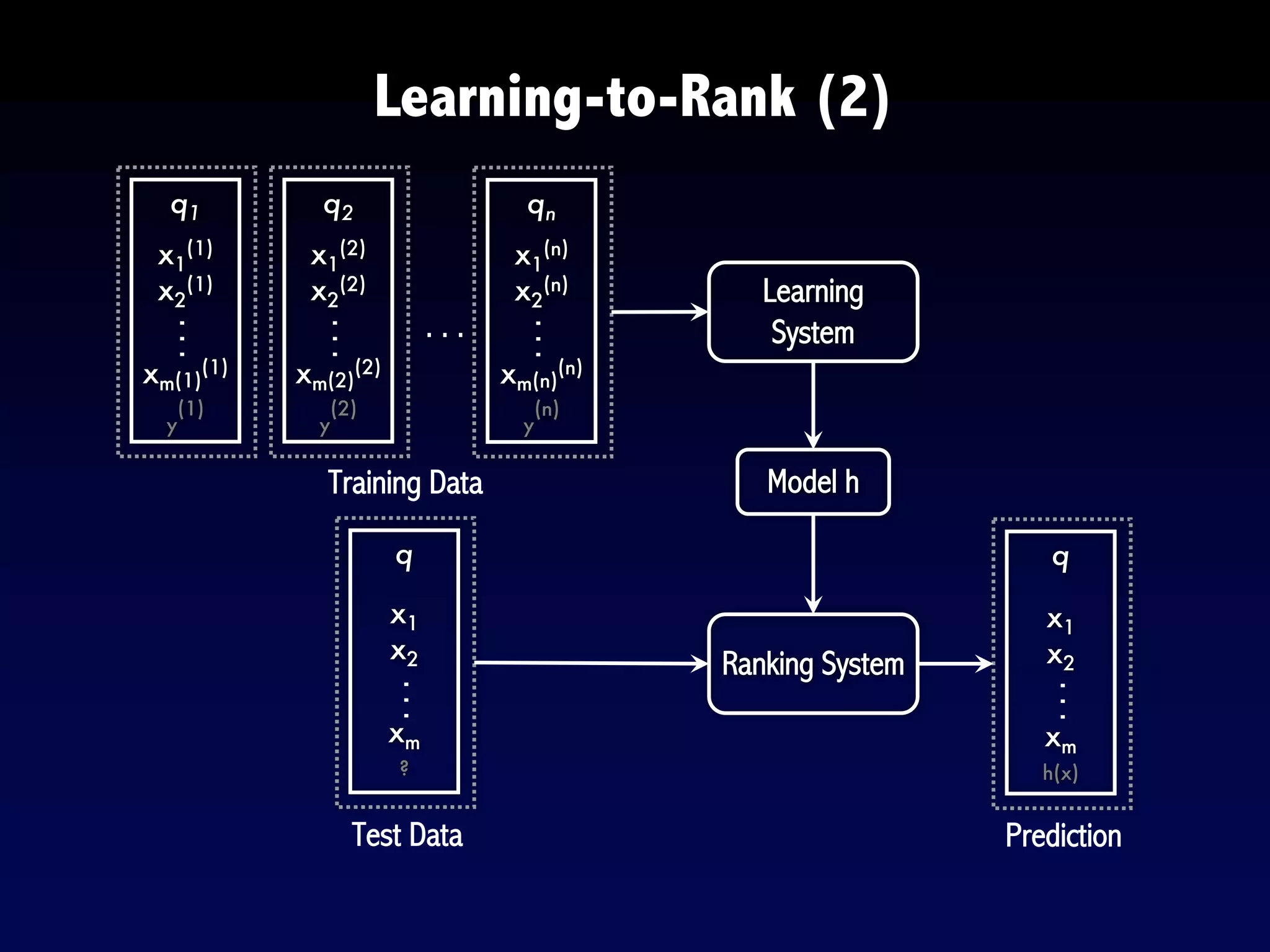

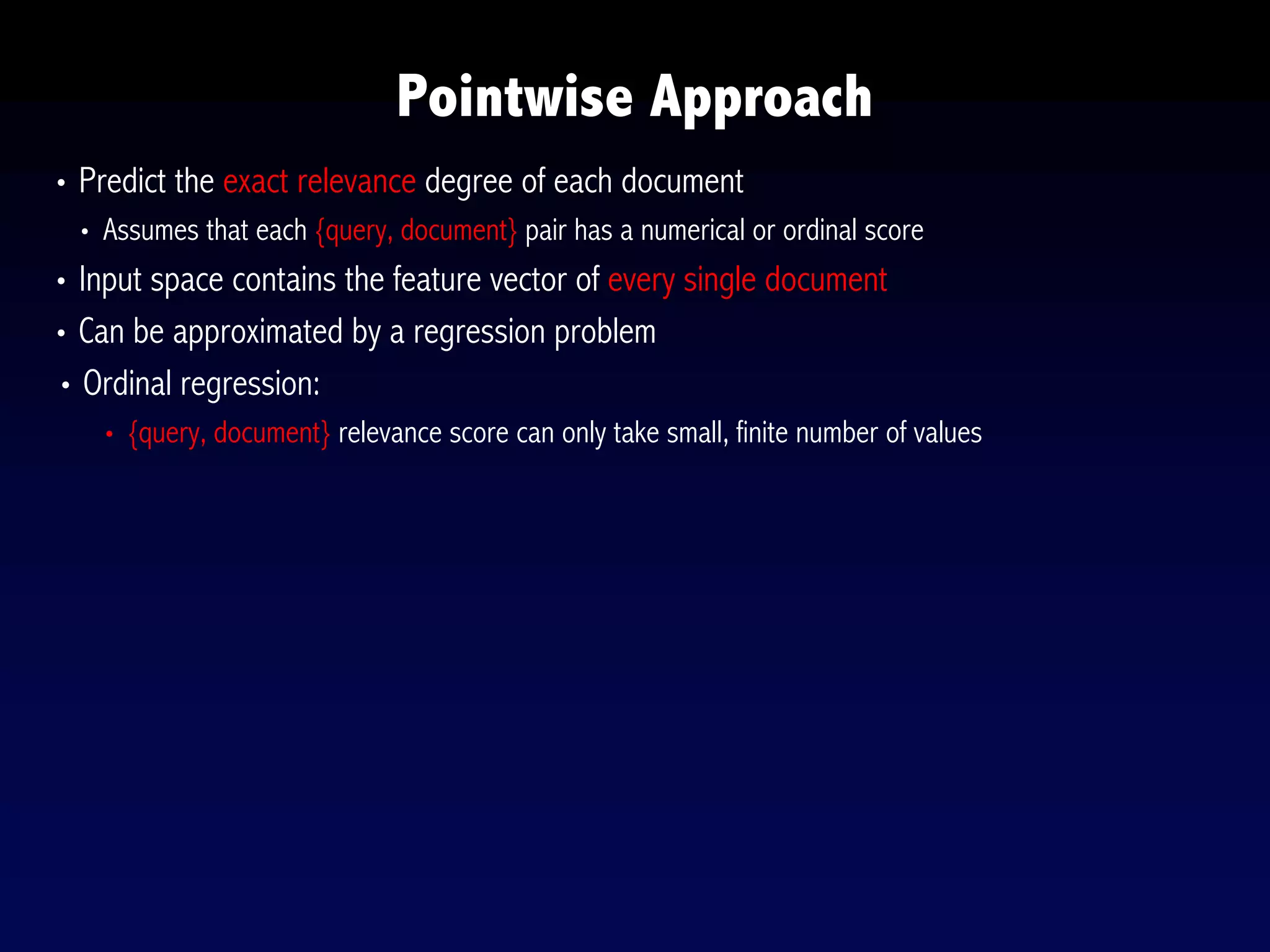

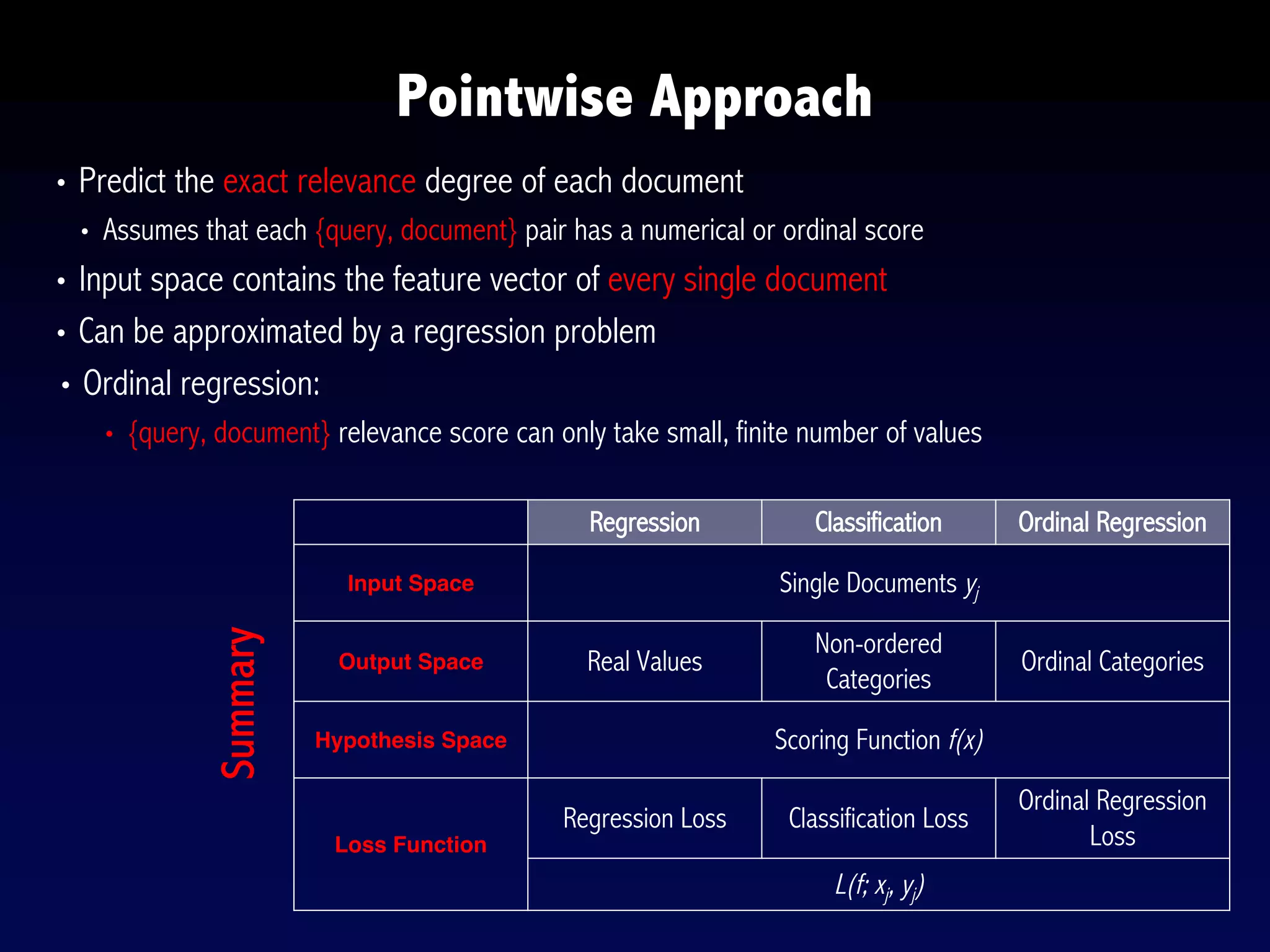

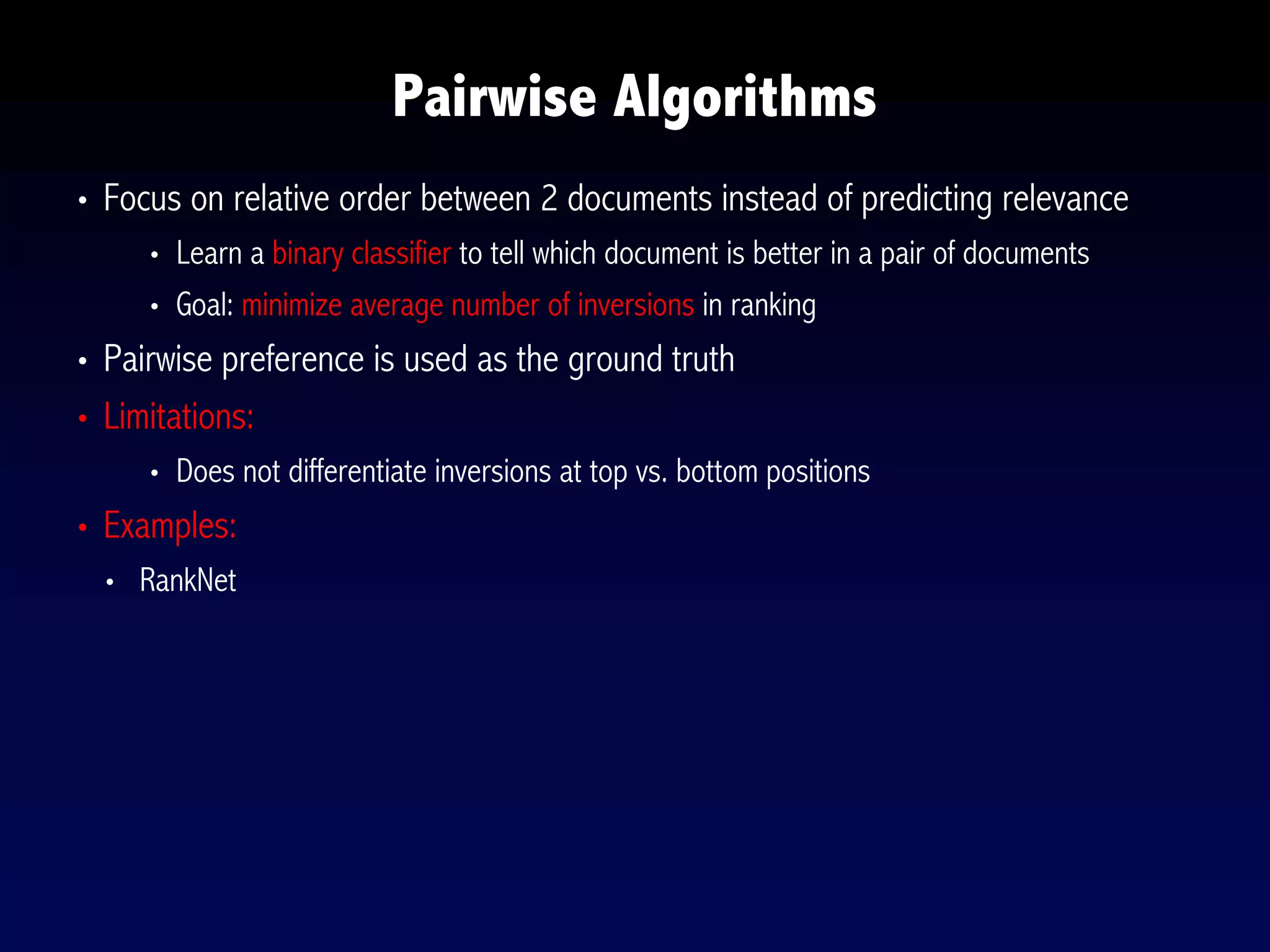

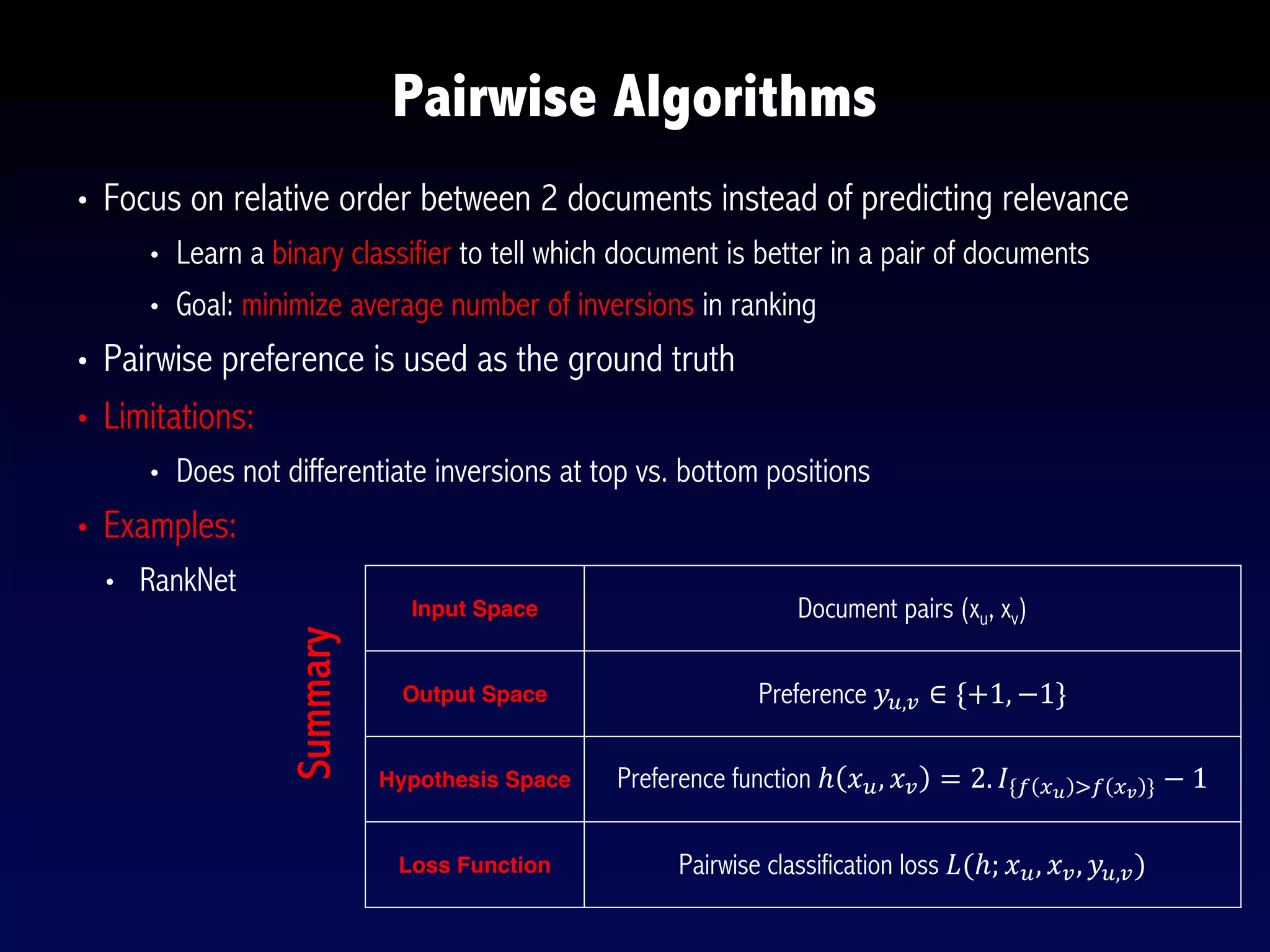

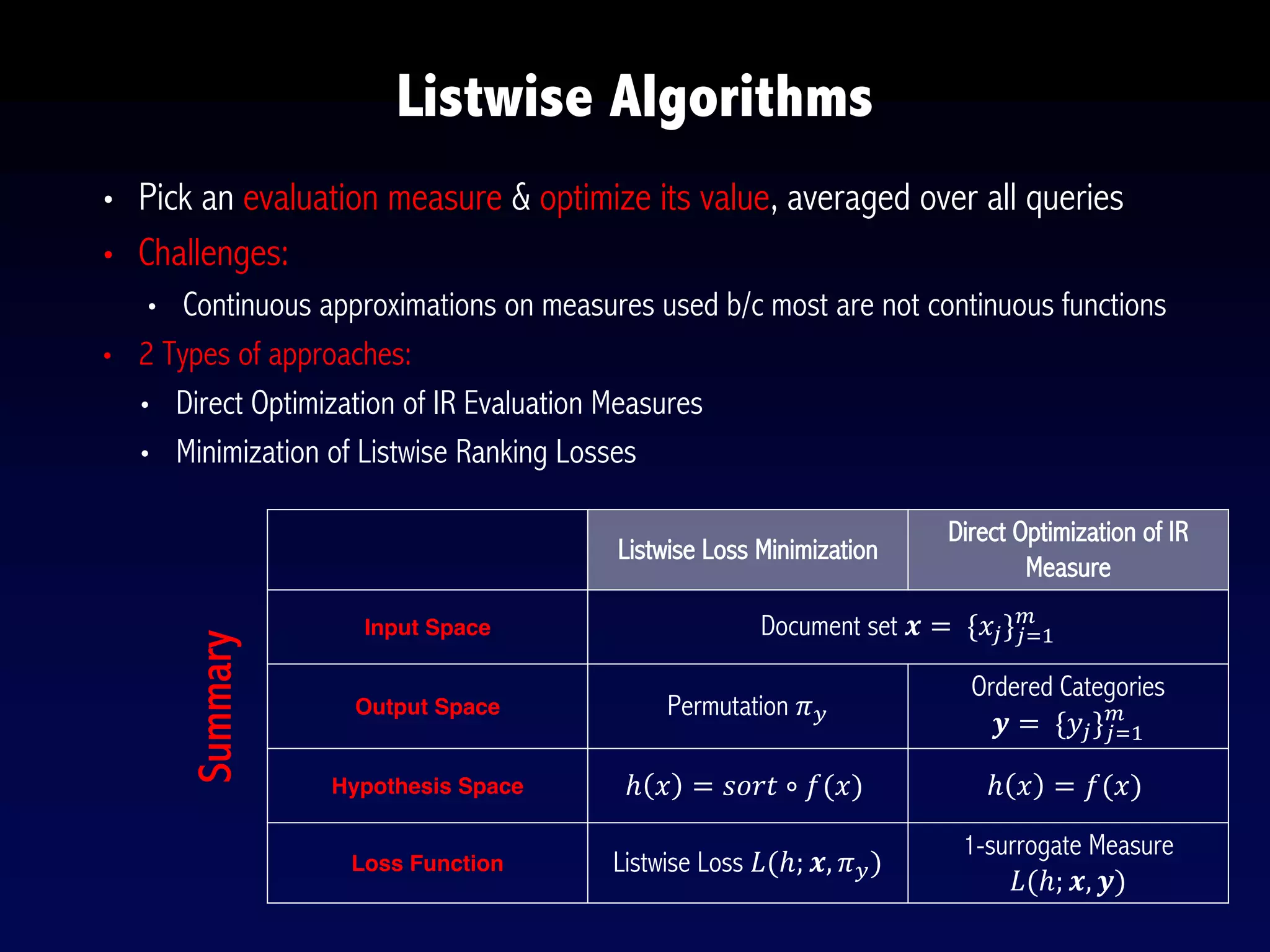

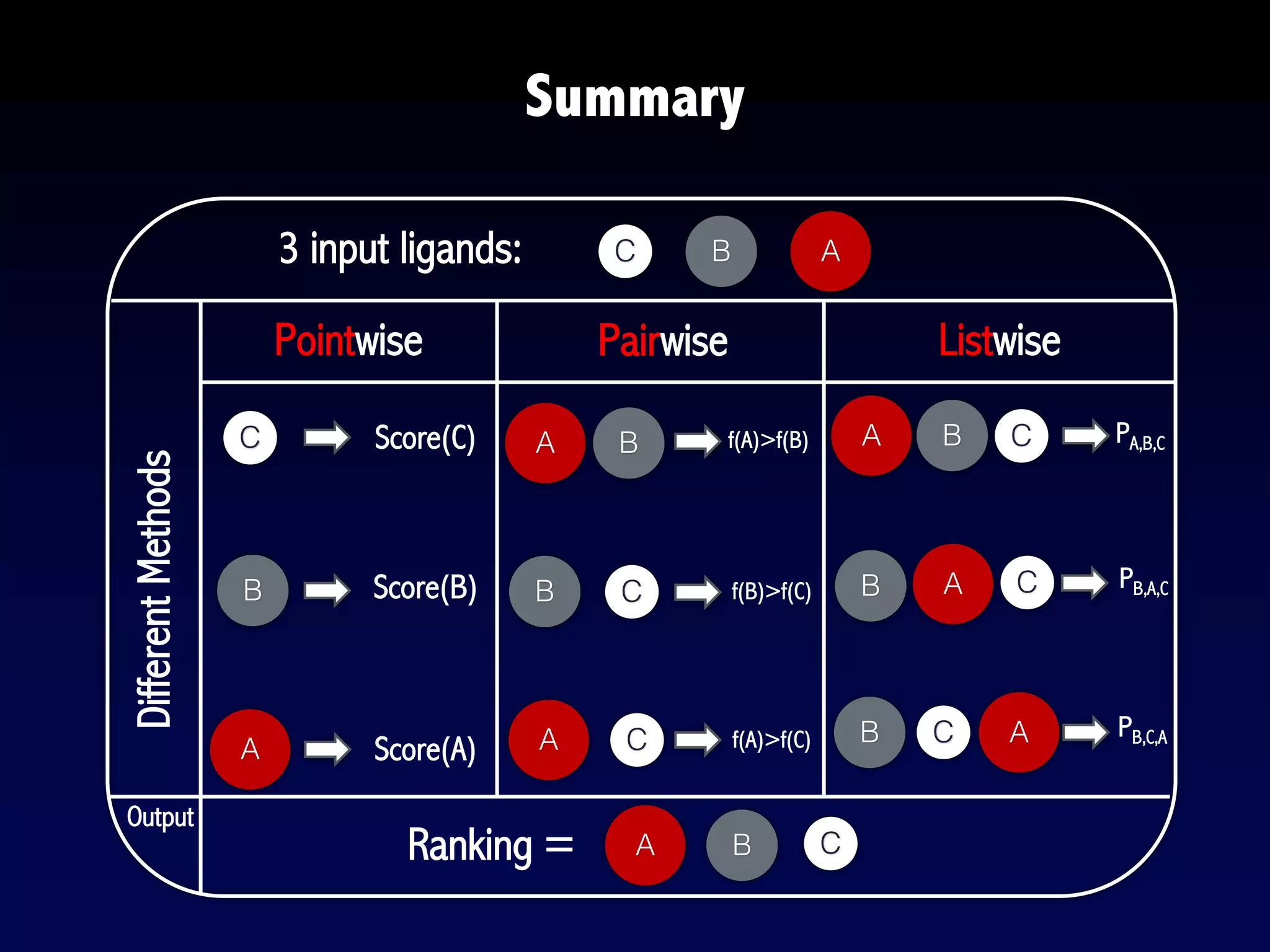

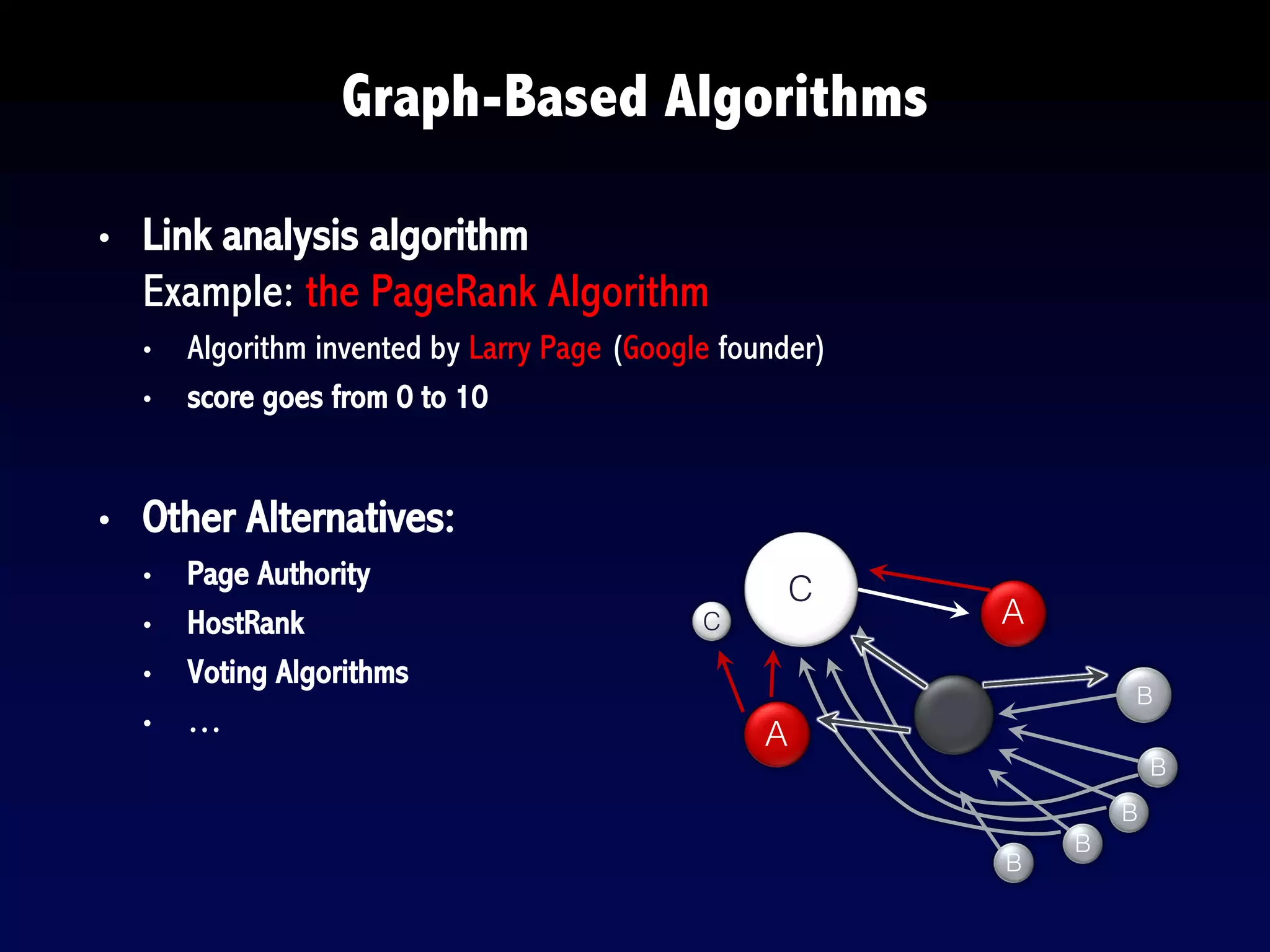

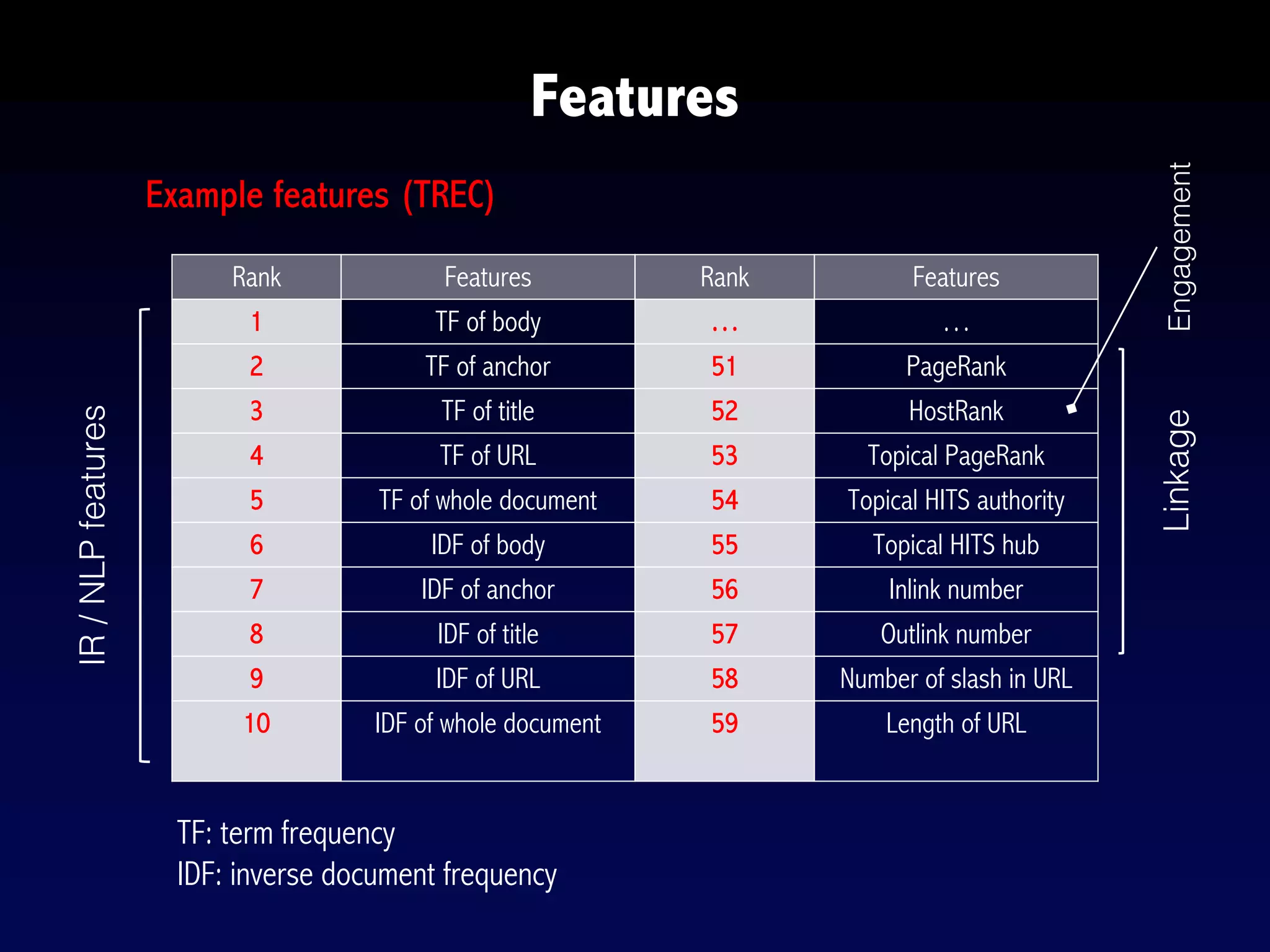

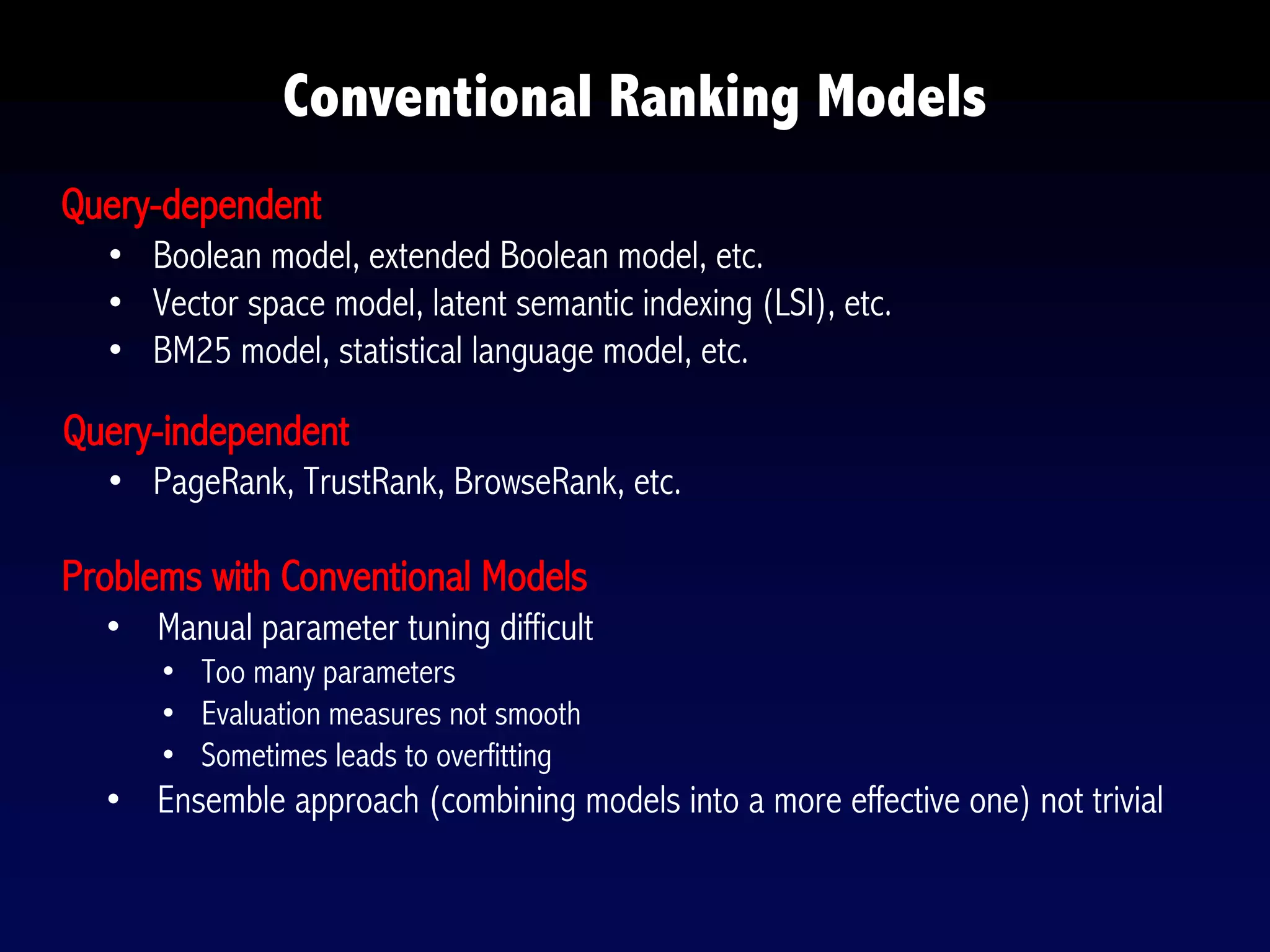

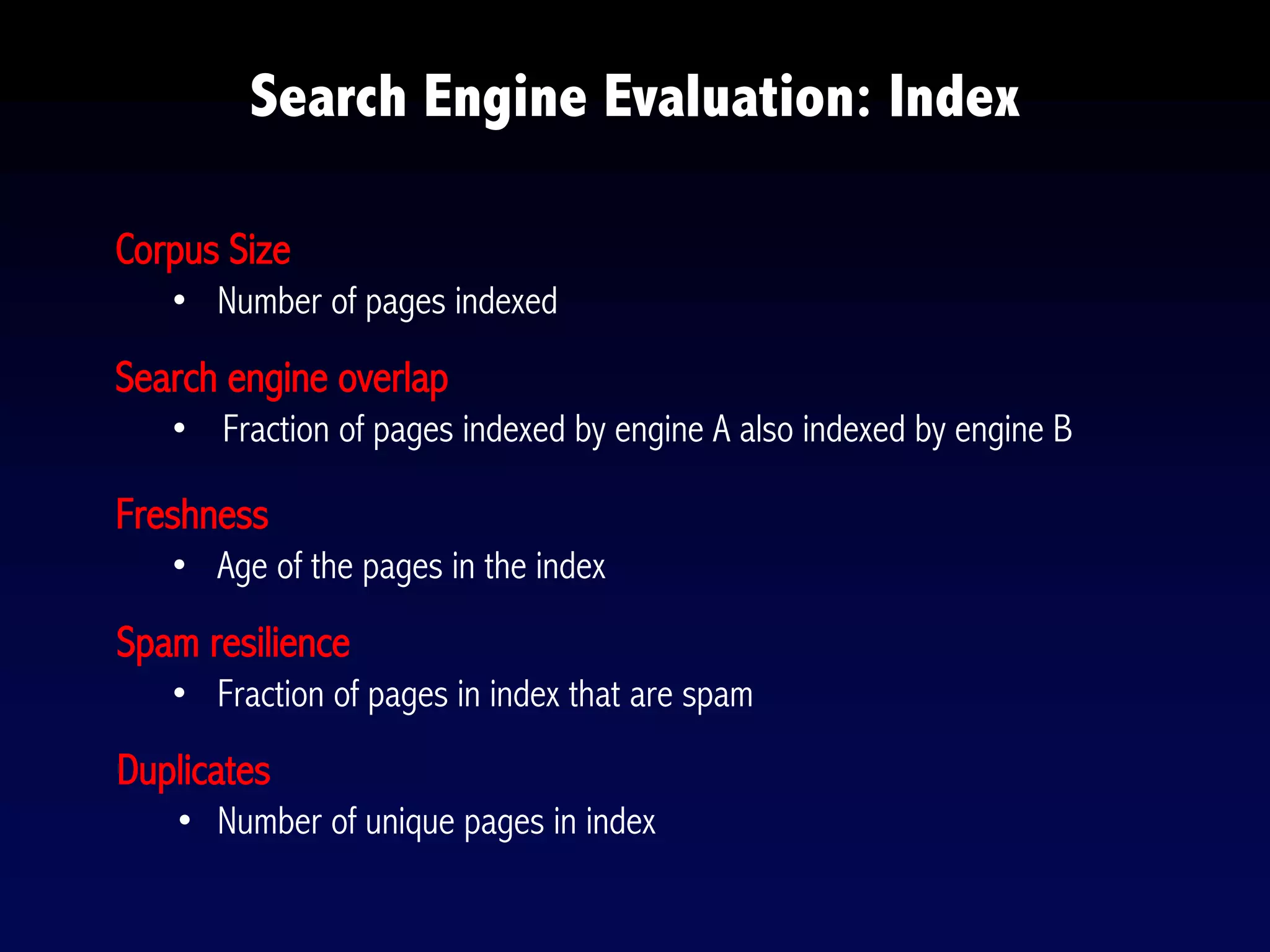

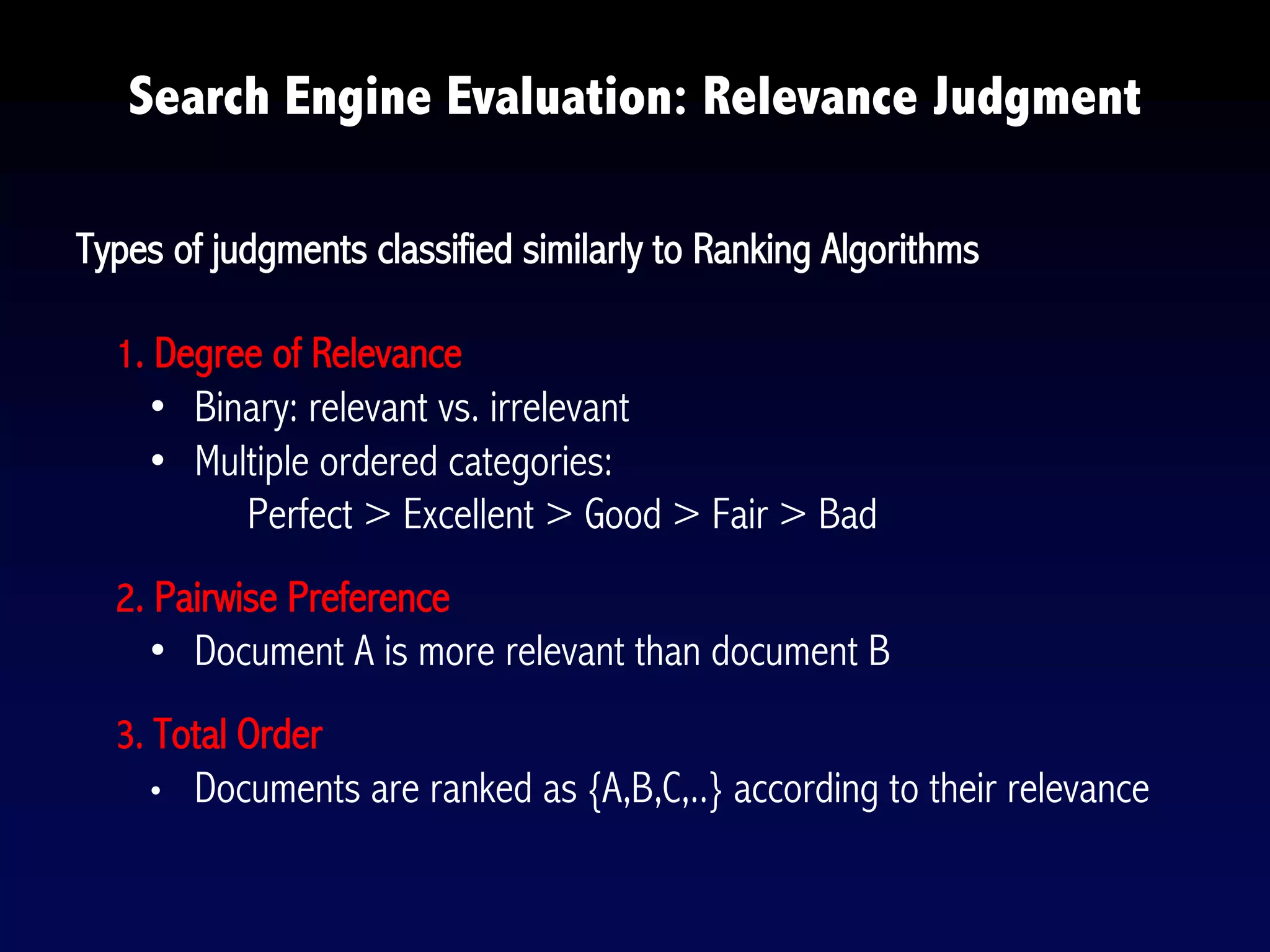

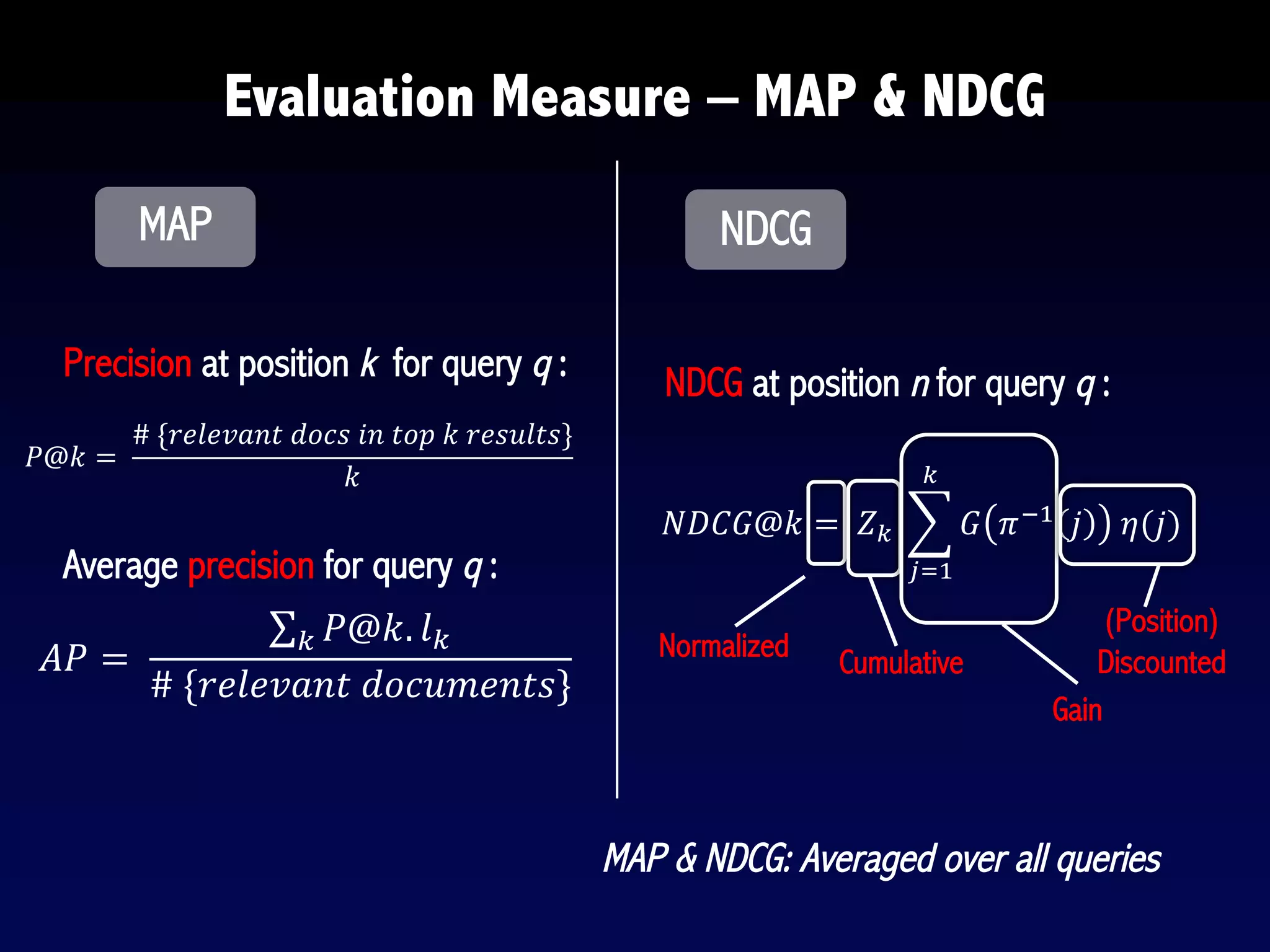

The document provides an overview of search engines and search algorithms. It discusses (1) the key concepts of search, including user intent, queries, documents, and results; (2) technical aspects such as indexing, ranking, and learning algorithms; and (3) current and future challenges for search. The learning algorithms covered are the pointwise, pairwise, and listwise approaches to ranking. The goal of a search engine is to accurately match user intent with relevant documents from a large corpus.
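The three learning approaches named above differ mainly in what the loss function looks at: a single document, a pair of documents, or the whole result list for a query. The following is a minimal sketch of that difference, not the method used in the slides. The function names, the squared-error choice for the pointwise loss, the logistic pair loss (in the style of RankNet), and the softmax cross-entropy (in the style of ListNet's top-one formulation) are all assumptions chosen for illustration.

```python
import math


def pointwise_loss(scores, labels):
    """Pointwise: treat each document independently (here, mean squared error)."""
    return sum((s - y) ** 2 for s, y in zip(scores, labels)) / len(scores)


def pairwise_loss(scores, labels):
    """Pairwise: penalize every pair where the more relevant document
    is not scored sufficiently higher (mean logistic loss over such pairs)."""
    losses = [
        math.log1p(math.exp(-(scores[i] - scores[j])))
        for i in range(len(scores))
        for j in range(len(scores))
        if labels[i] > labels[j]
    ]
    return sum(losses) / len(losses) if losses else 0.0


def softmax(xs):
    """Numerically stable softmax over a list of floats."""
    m = max(xs)
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]


def listwise_loss(scores, labels):
    """Listwise: compare the whole predicted distribution over the list
    with the one induced by the labels (softmax cross-entropy)."""
    target = softmax(labels)
    predicted = softmax(scores)
    return -sum(t * math.log(p) for t, p in zip(target, predicted))


if __name__ == "__main__":
    # Hypothetical relevance labels for three documents returned for one query.
    labels = [2.0, 1.0, 0.0]
    good_scores = [2.0, 1.0, 0.0]  # ranks documents in the labeled order
    bad_scores = [0.0, 1.0, 2.0]   # ranks documents in reverse order

    for name, loss in [
        ("pointwise", pointwise_loss),
        ("pairwise", pairwise_loss),
        ("listwise", listwise_loss),
    ]:
        print(name, loss(good_scores, labels), loss(bad_scores, labels))
```

In this toy setup each loss is lower for the correctly ordered scores than for the reversed ones. The pairwise and listwise losses depend only on how documents score relative to one another within a query, whereas the pointwise loss also penalizes scores that are ordered correctly but sit far from the label values.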