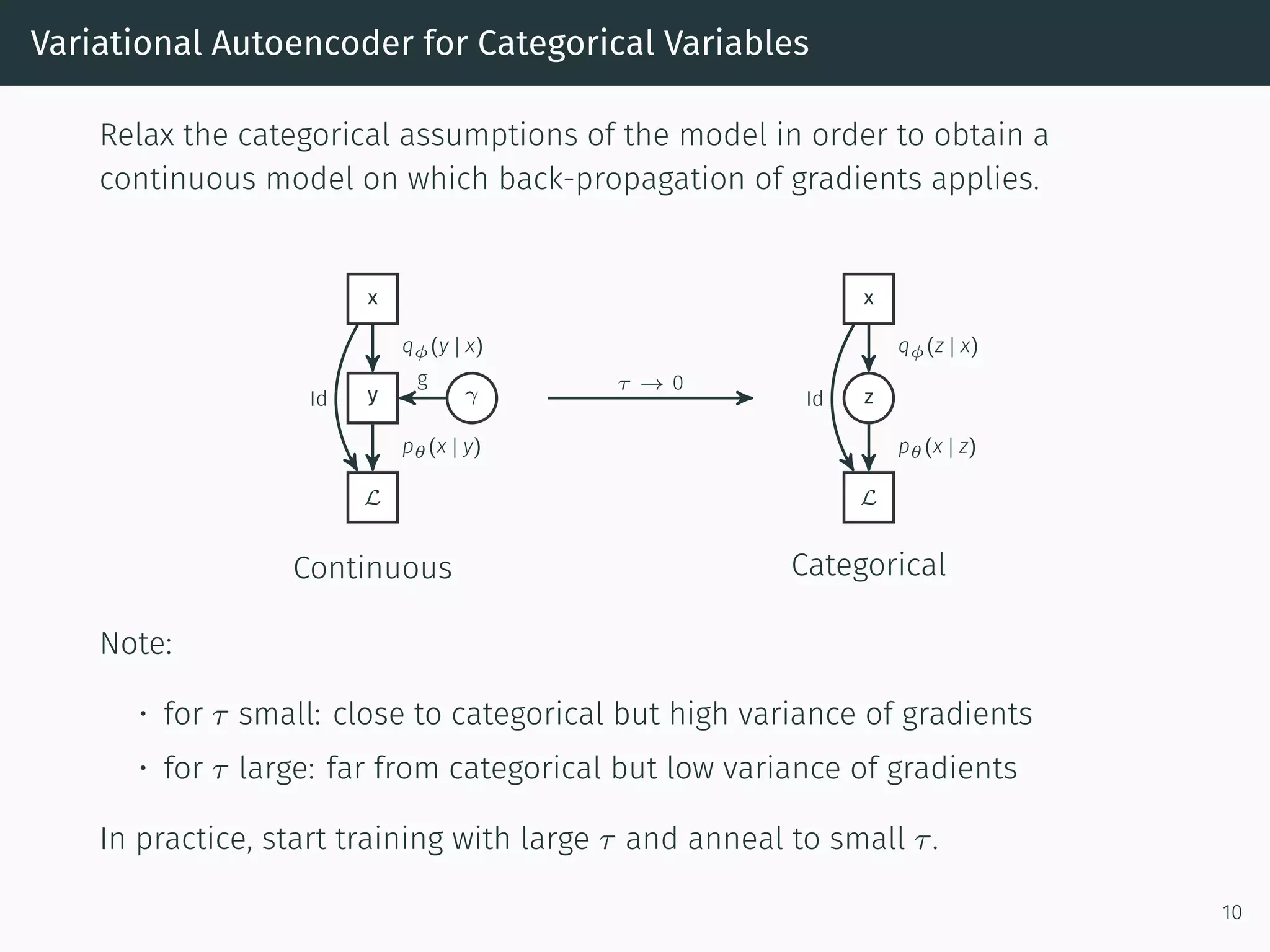

This document discusses applying deep learning techniques like variational autoencoders to cyber security and anomaly detection in network traffic. It notes that while deep learning has made progress in related areas, modeling categorical network flow data poses unique challenges. It proposes using variational inference with a Gumbel softmax relaxation to train a generative model on network flows in an unsupervised manner. The trained model could then be used for tasks like anomaly detection based on the model's predictions or a sample's reconstruction error.

![Application

Train variational autoencoder to obtain parameters ˆθ, ˆϕ.

Possible approaches for anomaly detection:

• Use qˆϕ(z | x) : x → z for input to a machine learning anomaly detector

• Use pˆθ : x → p ∈ [0, 1] to identify rare events

• Use reconstruction error

x

q ˆϕ

−→ z

p ˆθ

−→ x

as anomaly detector

Other approaches are possible...

11](https://image.slidesharecdn.com/deeplearningforcybersecurity-170321133815/75/Deep-Learning-for-Cyber-Security-12-2048.jpg)