The document discusses the normal forms used to eliminate anomalies from a database design. In summary:

1) Normalization is a method of restructuring tables to remove redundant data and the update, insertion, and deletion anomalies that redundancy causes, so that the database stays in a consistent state.

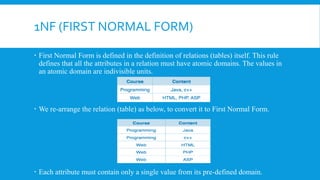
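
To make the update anomaly concrete, here is a minimal Python sketch over a hypothetical denormalized `enrollments` table in which each course's instructor is repeated on every enrollment row. The data and the helper `conflicting_values` are illustrative assumptions, not part of the original material. The helper reports any value of one column that ends up paired with more than one value of another column.

```python
from collections import defaultdict

# Hypothetical denormalized table: the instructor of each course is repeated
# on every enrollment row, so the same fact is stored several times.
enrollments = [
    {"student": "Ana", "course": "DB101", "instructor": "Kim"},
    {"student": "Ben", "course": "DB101", "instructor": "Kim"},
    {"student": "Cai", "course": "OS201", "instructor": "Lee"},
]

def conflicting_values(rows, determinant, dependent):
    """Hypothetical helper: map each determinant value seen with more than
    one dependent value to the set of values it was seen with."""
    seen = defaultdict(set)
    for row in rows:
        seen[row[determinant]].add(row[dependent])
    return {key: vals for key, vals in seen.items() if len(vals) > 1}

# Update anomaly: changing the instructor on only one DB101 row leaves the
# table contradicting itself about who teaches DB101.
enrollments[0]["instructor"] = "Park"
print(conflicting_values(enrollments, "course", "instructor"))
```

The same table also exhibits the other two anomalies: a new course cannot be recorded until some student enrolls in it (insertion), and deleting Cai's only row also erases the fact that Lee teaches OS201 (deletion).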

2) First normal form (1NF) requires that every attribute of a relation holds a single, atomic value, with no lists, repeating groups, or multi-valued fields.

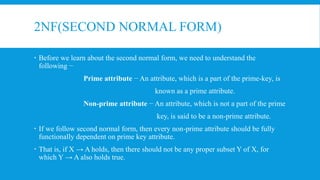

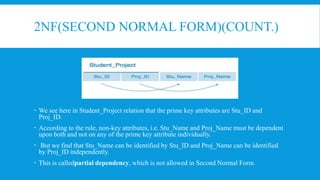
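
A minimal sketch of bringing a relation into 1NF, assuming a hypothetical `customers` table whose `phones` field holds a list. The helper `to_1nf` is also hypothetical; it emits one row per phone number so that every field holds a single value.

```python
# Hypothetical unnormalized rows: "phones" holds several values in one field,
# which violates 1NF's requirement of atomic attribute values.
customers = [
    {"id": 1, "name": "Ana", "phones": ["555-0100", "555-0101"]},
    {"id": 2, "name": "Ben", "phones": ["555-0200"]},
]

def to_1nf(rows, multi_attr, single_name):
    """Hypothetical helper: split a multi-valued attribute into one row per
    value. Rows whose list is empty produce no output rows."""
    flat = []
    for row in rows:
        base = {k: v for k, v in row.items() if k != multi_attr}
        for value in row[multi_attr]:
            flat.append({**base, single_name: value})
    return flat

for row in to_1nf(customers, "phones", "phone"):
    print(row)
```

After flattening, `id` alone no longer identifies a row; the key becomes (`id`, `phone`), and `name` is repeated for each phone. Because `name` depends on only part of that composite key, this repetition is exactly the kind of partial dependency that 2NF removes.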

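3) Second normal form (2NF) requires that the relation is already in 1NF and that every non-key attribute is fully functionally dependent on the whole primary key. In other words, there are no partial dependencies: no non-key attribute depends on only part of a composite key.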

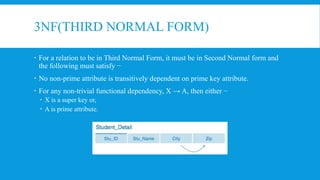

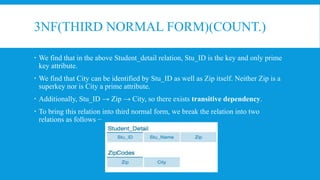
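
The sketch below illustrates a partial dependency using a hypothetical `grades` table keyed on (`student`, `course`), where `title` depends on `course` alone. The helpers `fd_holds` and `project` are illustrative assumptions. Note that checking a functional dependency against sample rows can only disprove it; in a real design, dependencies come from the meaning of the data, not from whichever rows happen to exist.

```python
# Hypothetical table keyed on (student, course). "title" depends only on
# "course" (a partial dependency), while "grade" needs the whole key.
grades = [
    {"student": "Ana", "course": "DB101", "title": "Databases", "grade": "A"},
    {"student": "Ben", "course": "DB101", "title": "Databases", "grade": "B"},
    {"student": "Ana", "course": "OS201", "title": "Operating Systems", "grade": "A"},
]

def fd_holds(rows, lhs, rhs):
    """Hypothetical helper: return False if two rows agree on lhs but differ
    on rhs, otherwise True."""
    seen = {}
    for row in rows:
        key = tuple(row[a] for a in lhs)
        value = tuple(row[a] for a in rhs)
        if seen.setdefault(key, value) != value:
            return False
    return True

def project(rows, attrs):
    """Hypothetical helper: keep only attrs, dropping duplicate rows while
    preserving first-seen order."""
    result, seen = [], set()
    for row in rows:
        values = tuple(row[a] for a in attrs)
        if values not in seen:
            seen.add(values)
            result.append(dict(zip(attrs, values)))
    return result

# course -> title holds on the sample, and course is only part of the key.
print(fd_holds(grades, ["course"], ["title"]))  # True
print(fd_holds(grades, ["course"], ["grade"]))  # False

# 2NF decomposition: move the partially dependent attribute to its own table.
enrollments = project(grades, ["student", "course", "grade"])
courses = project(grades, ["course", "title"])
print(courses)
```

Each course title is now stored once in `courses`, while `enrollments` keeps only the attributes that need the full (`student`, `course`) key.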

4) Third normal form (3NF) requires that the relation is already in 2NF and that no non-key attribute is transitively dependent on the primary key. That is, no non-key attribute depends on another non-key attribute.
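
As a sketch of removing a transitive dependency, consider a hypothetical `employees` table where `emp_id → dept_id` and `dept_id → dept_name`, so `dept_name` depends on the key only through `dept_id`. Splitting out a department table removes the dependency. The helpers `project` and `join` are hypothetical and exist only to show that, on this sample, the decomposition loses no information.

```python
# Hypothetical table: emp_id -> dept_id -> dept_name, so dept_name depends on
# the key emp_id only transitively, through the non-key attribute dept_id.
employees = [
    {"emp_id": 1, "name": "Ana", "dept_id": 10, "dept_name": "Sales"},
    {"emp_id": 2, "name": "Ben", "dept_id": 10, "dept_name": "Sales"},
    {"emp_id": 3, "name": "Cai", "dept_id": 20, "dept_name": "Research"},
]

def project(rows, attrs):
    """Hypothetical helper: keep only attrs, dropping duplicate rows while
    preserving first-seen order."""
    result, seen = [], set()
    for row in rows:
        values = tuple(row[a] for a in attrs)
        if values not in seen:
            seen.add(values)
            result.append(dict(zip(attrs, values)))
    return result

def join(left, right, on):
    """Hypothetical helper: nested-loop equi-join on one shared attribute."""
    return [{**lrow, **rrow} for lrow in left for rrow in right if lrow[on] == rrow[on]]

# 3NF decomposition: dept_name moves to a table keyed by dept_id.
staff = project(employees, ["emp_id", "name", "dept_id"])
departments = project(employees, ["dept_id", "dept_name"])

# Rejoining on dept_id reproduces the original rows, so nothing was lost.
print(join(staff, departments, "dept_id") == employees)  # True
```

The split is lossless because the shared attribute `dept_id` is the key of the new department table, so each employee row joins with exactly one department, and renaming a department now means changing a single row.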