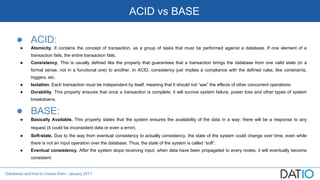

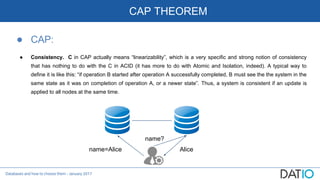

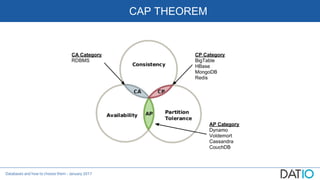

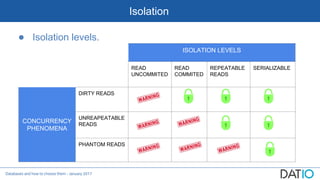

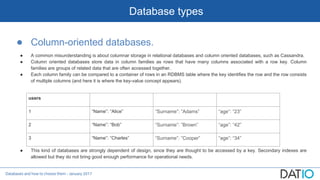

The document discusses four types of databases: relational, column-oriented, document-oriented, and graph. It explains key concepts such as ACID vs. BASE, the CAP theorem, isolation levels, indexes, and sharding, and it describes and compares each database type.