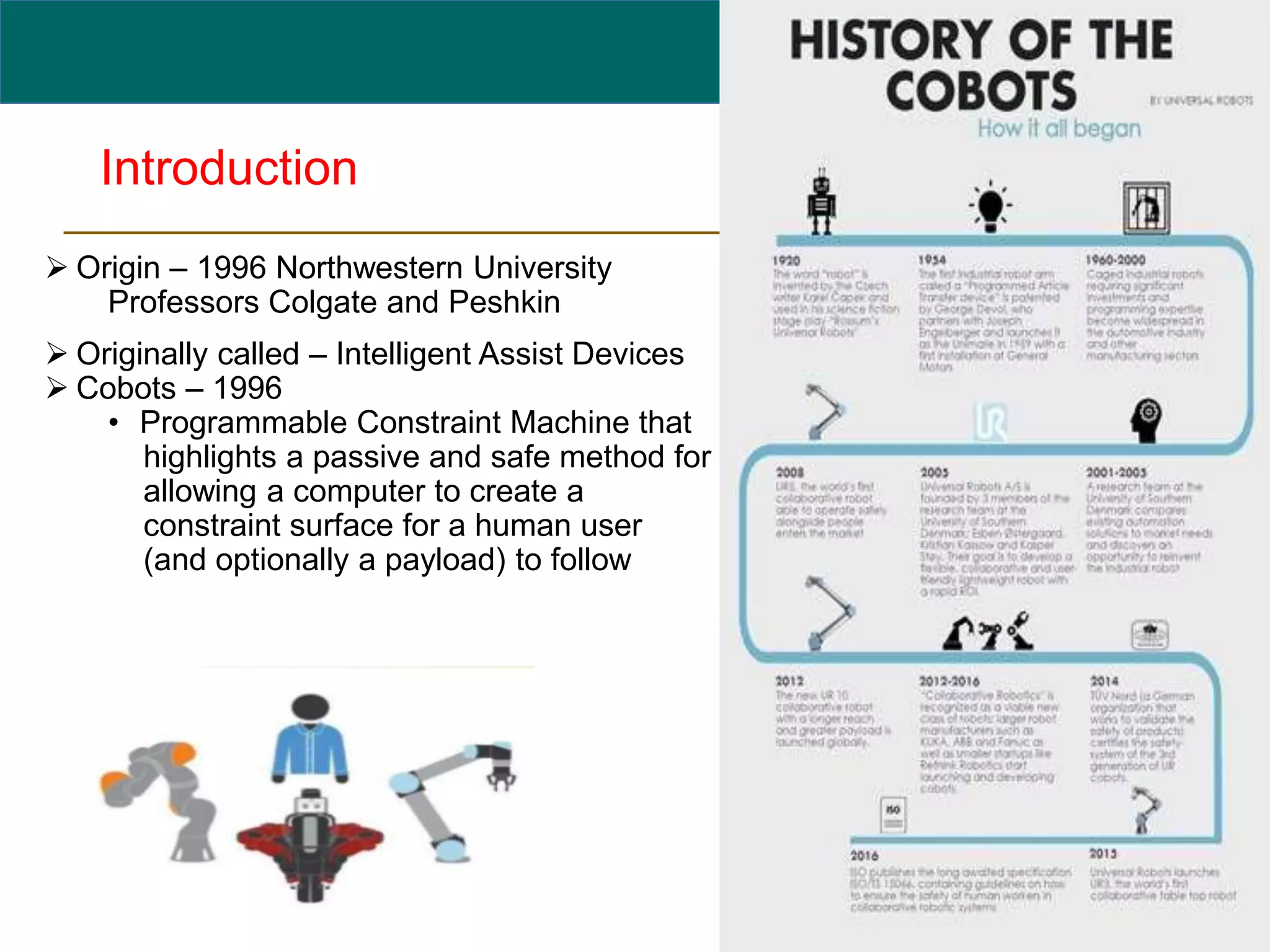

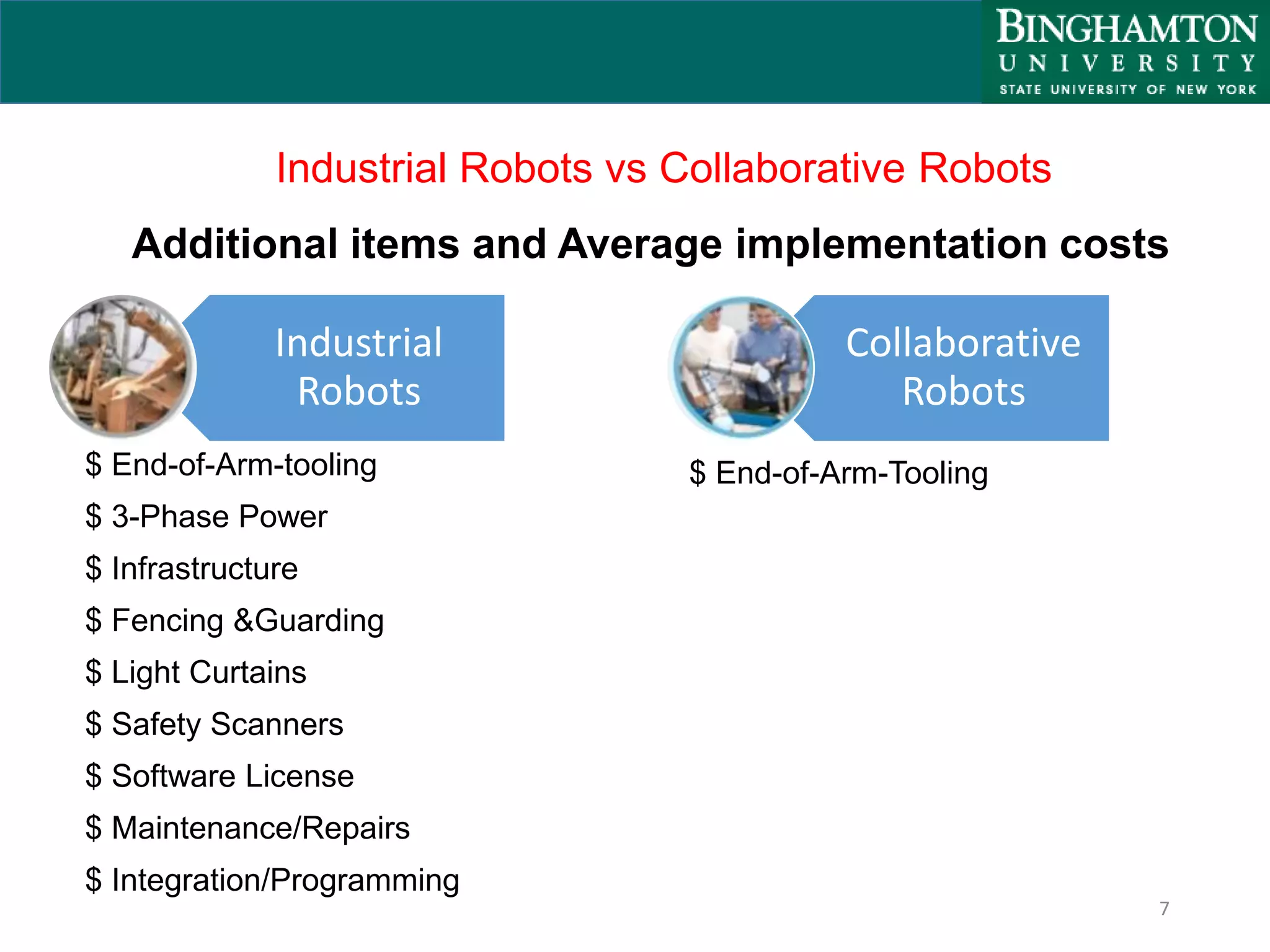

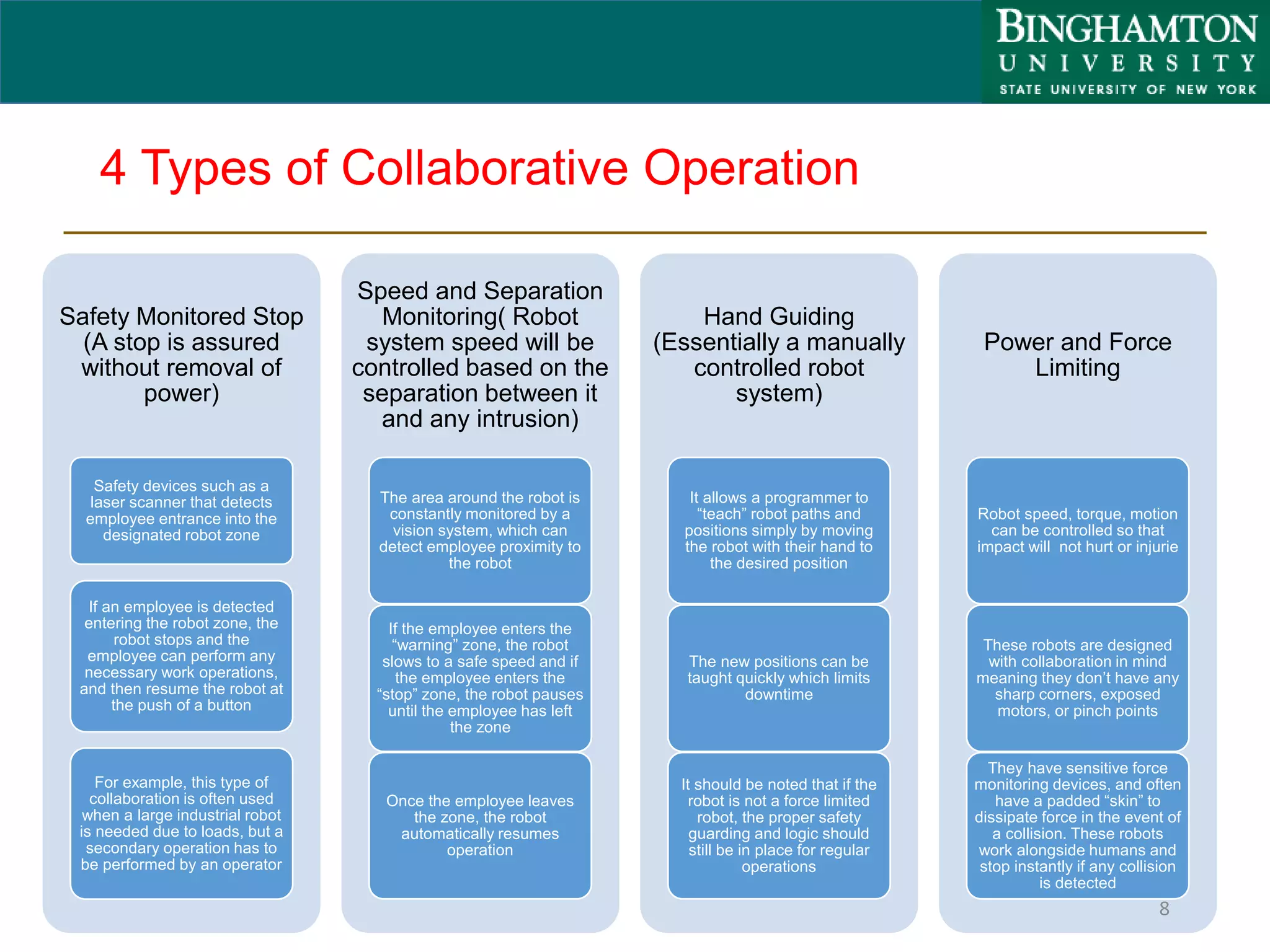

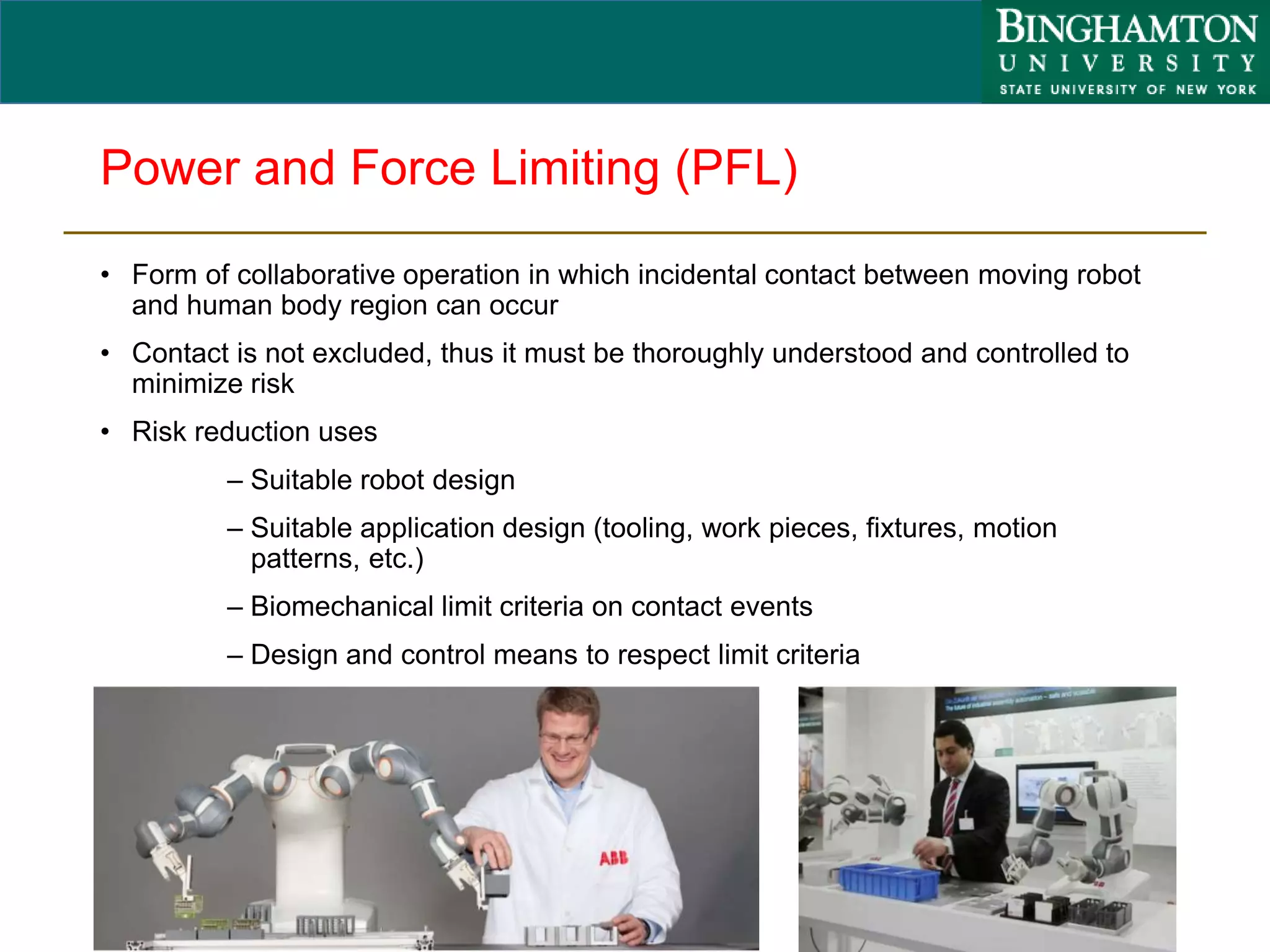

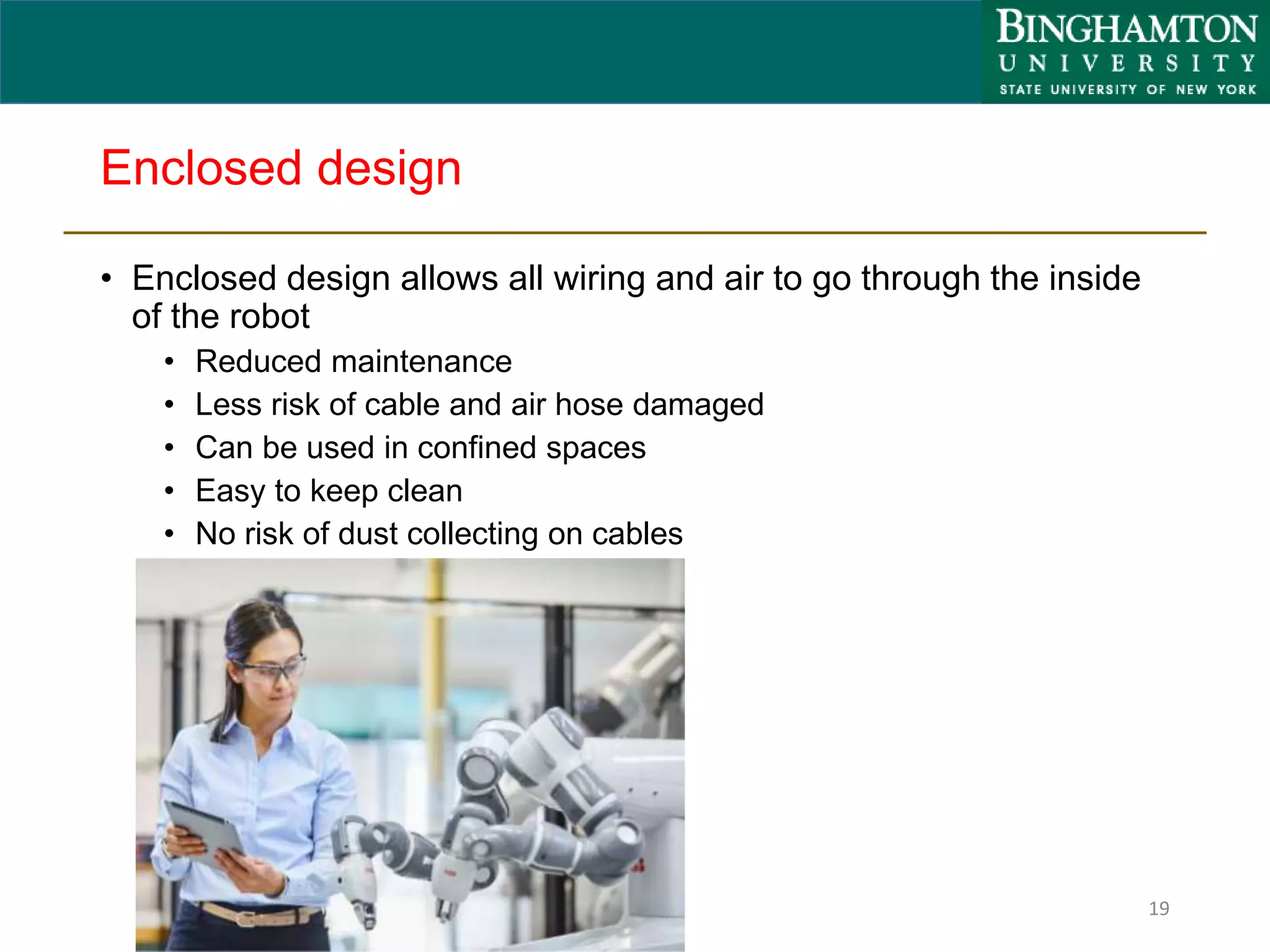

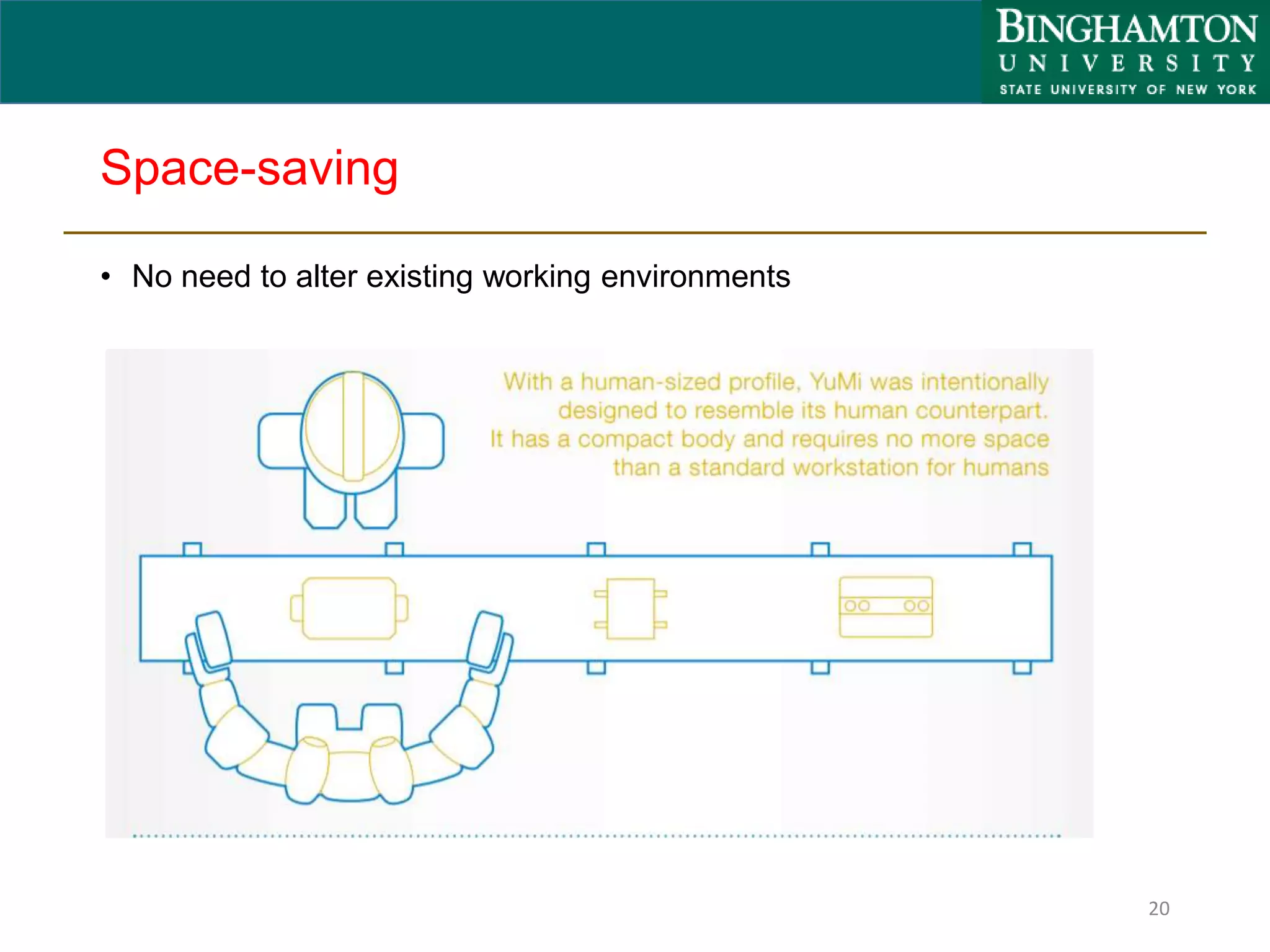

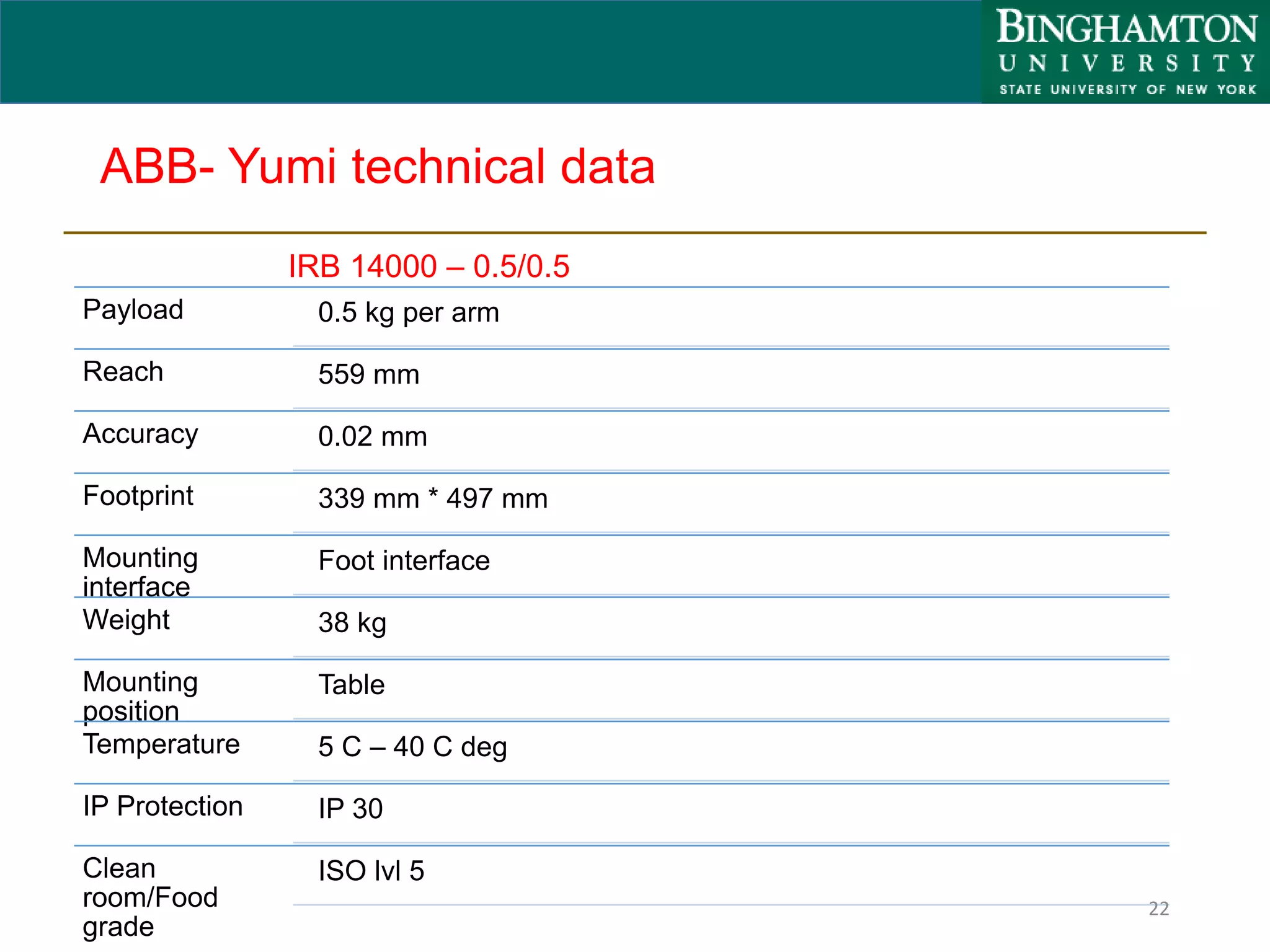

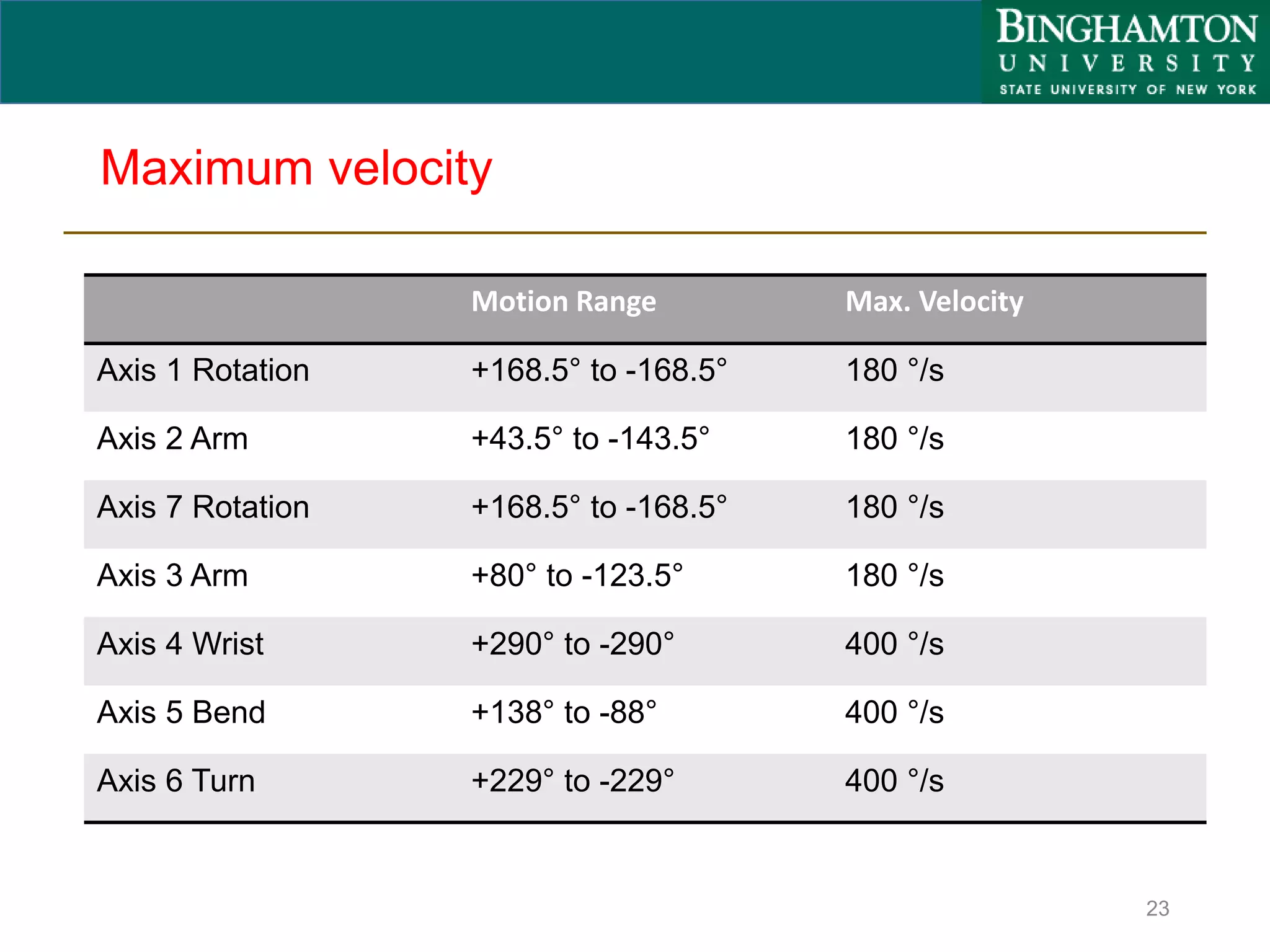

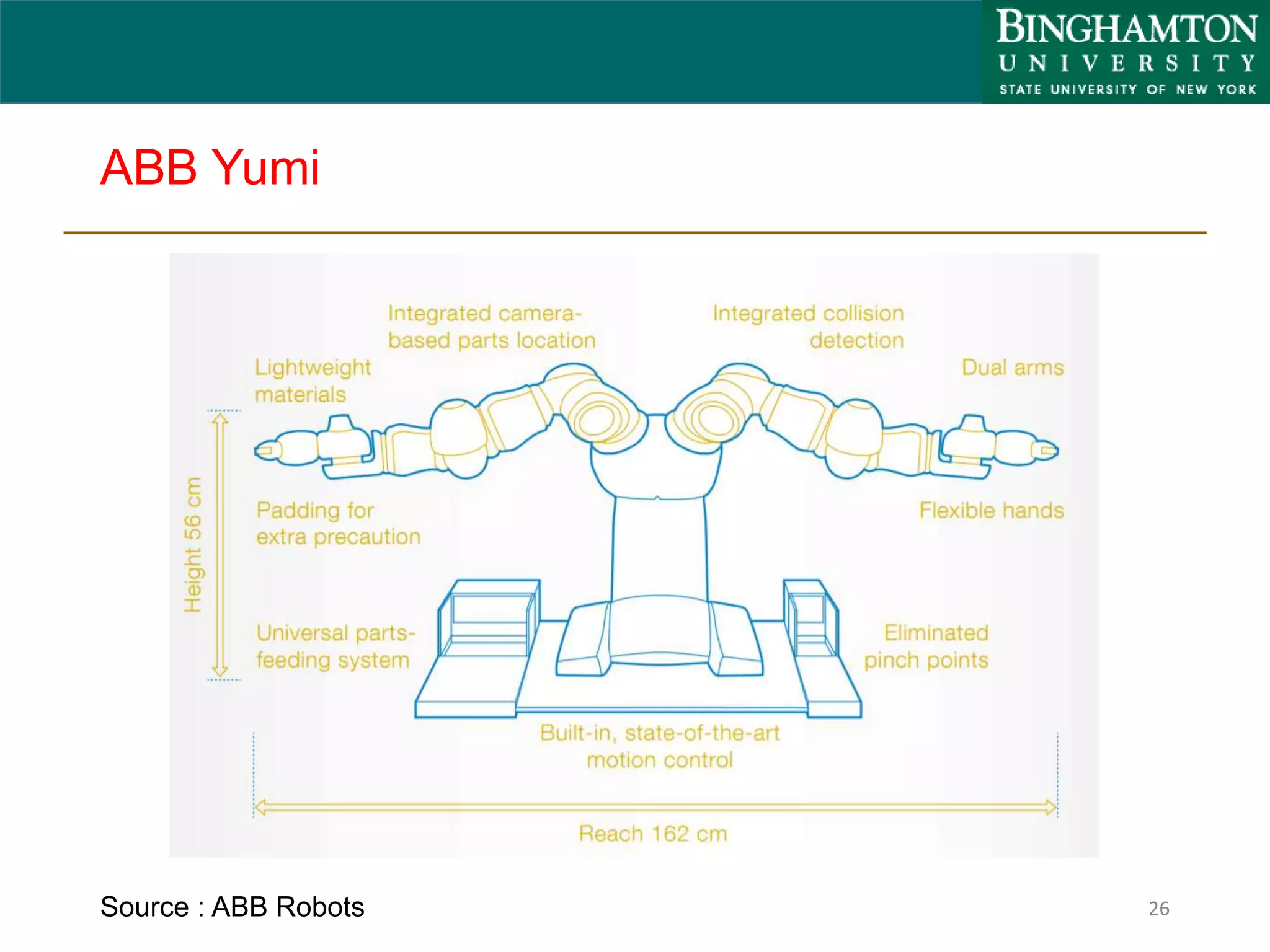

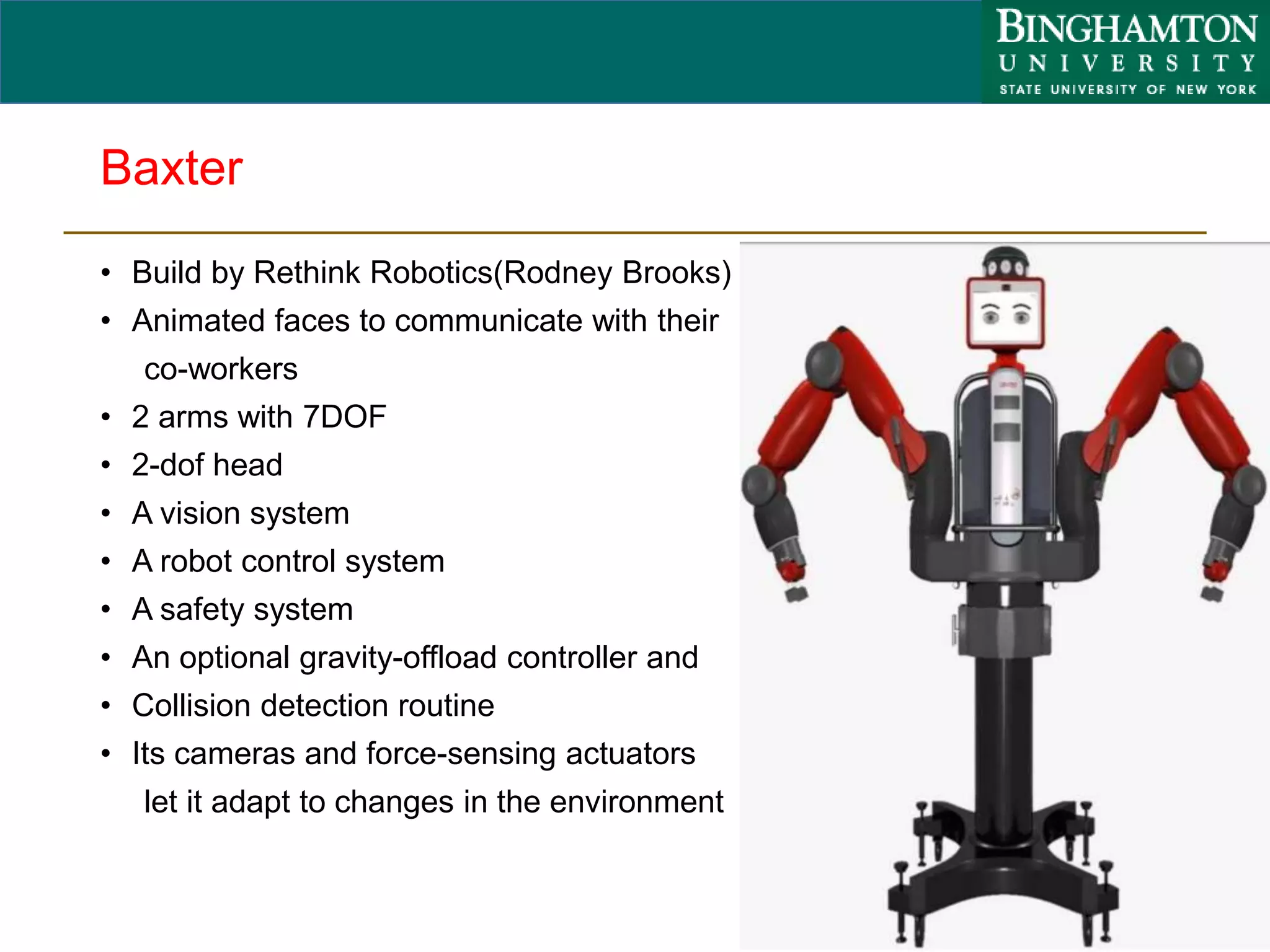

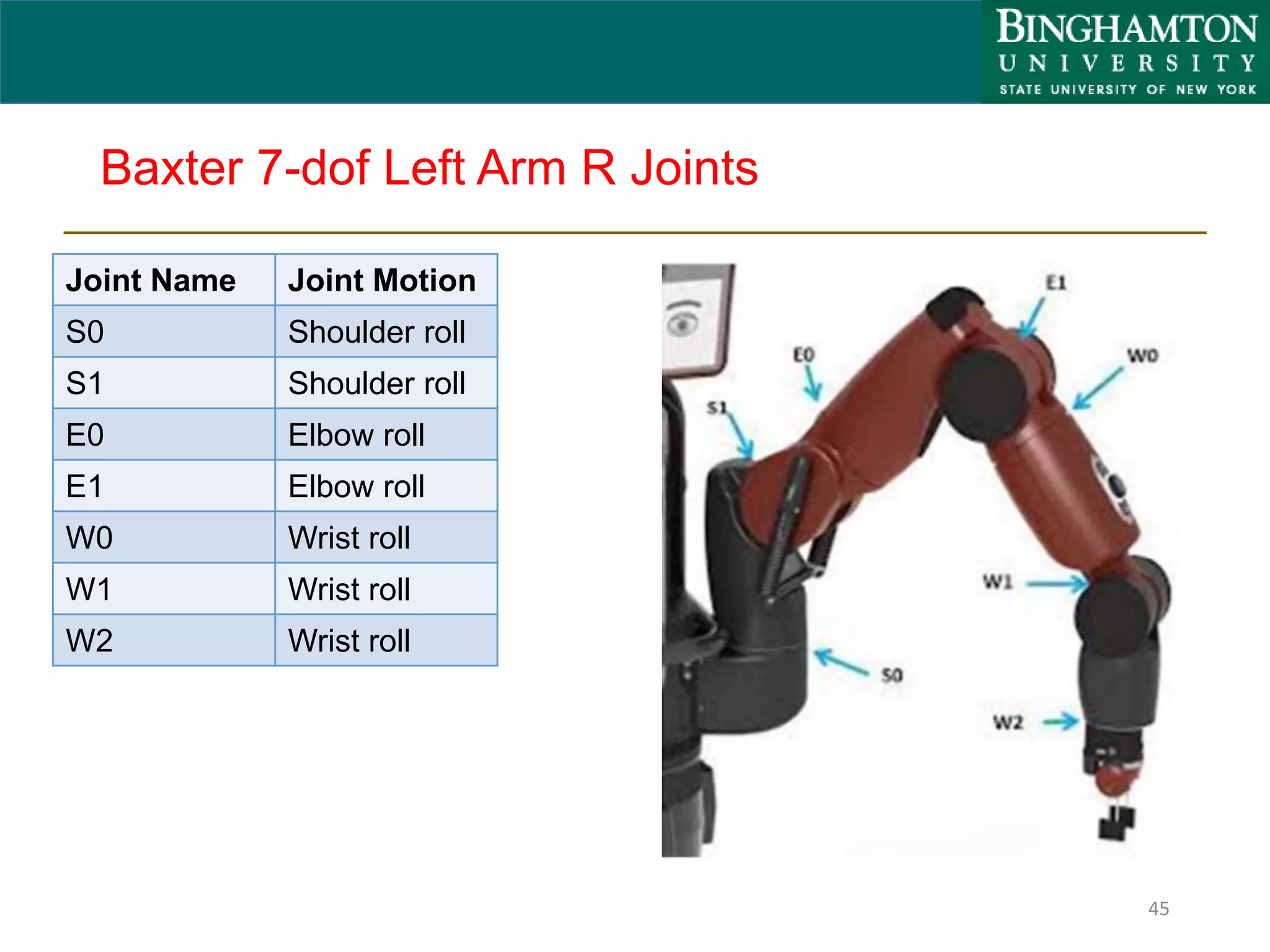

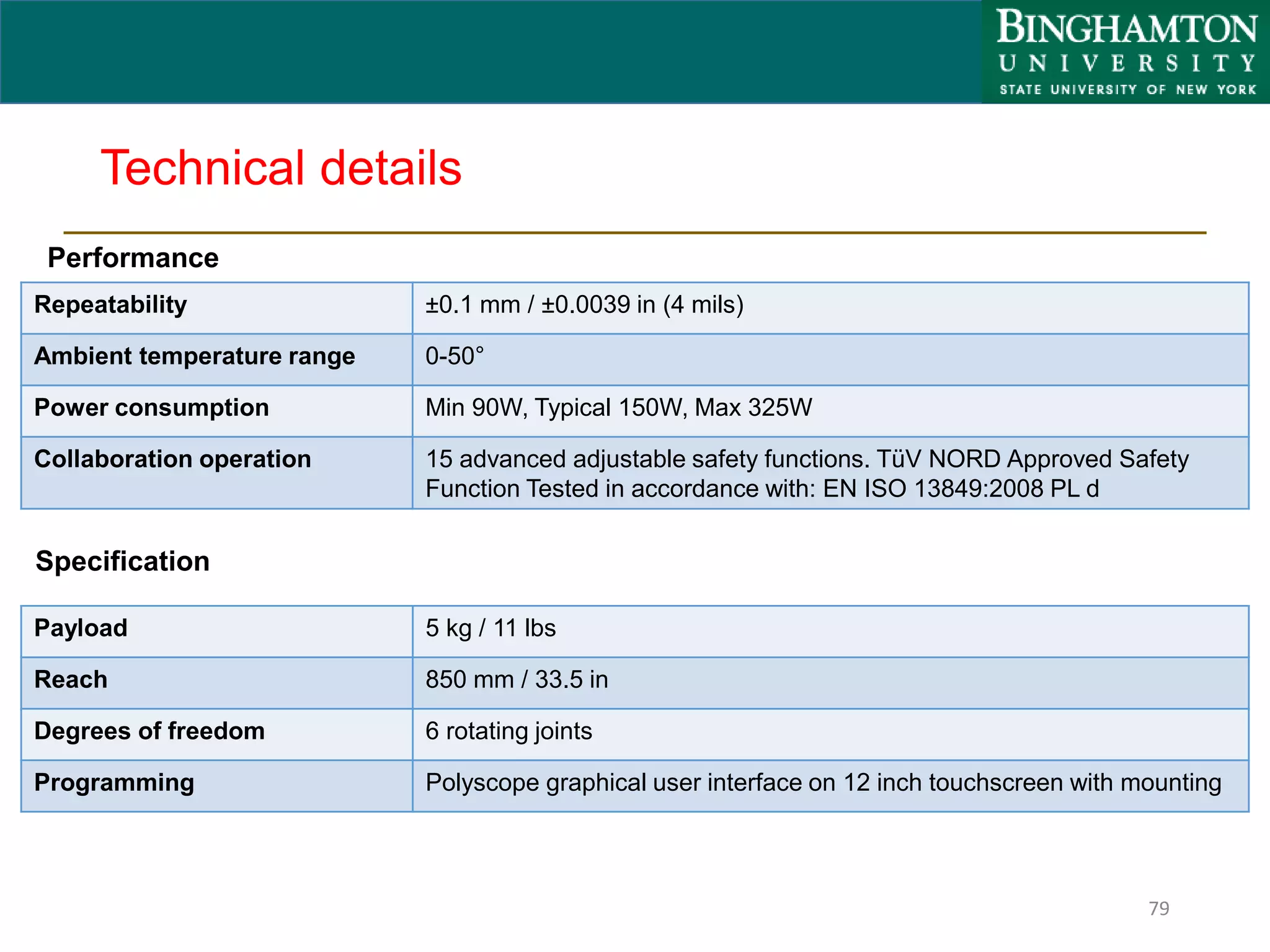

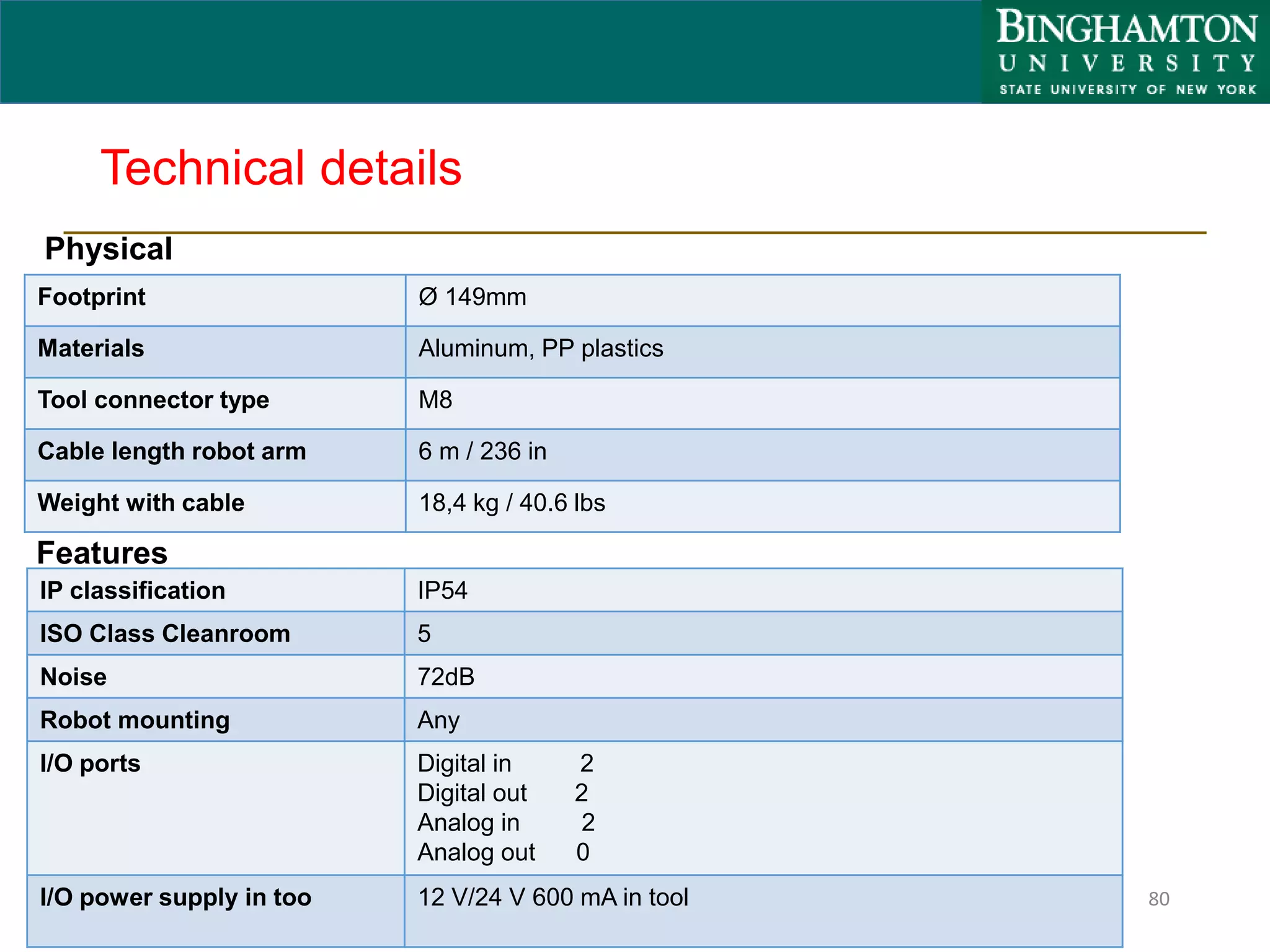

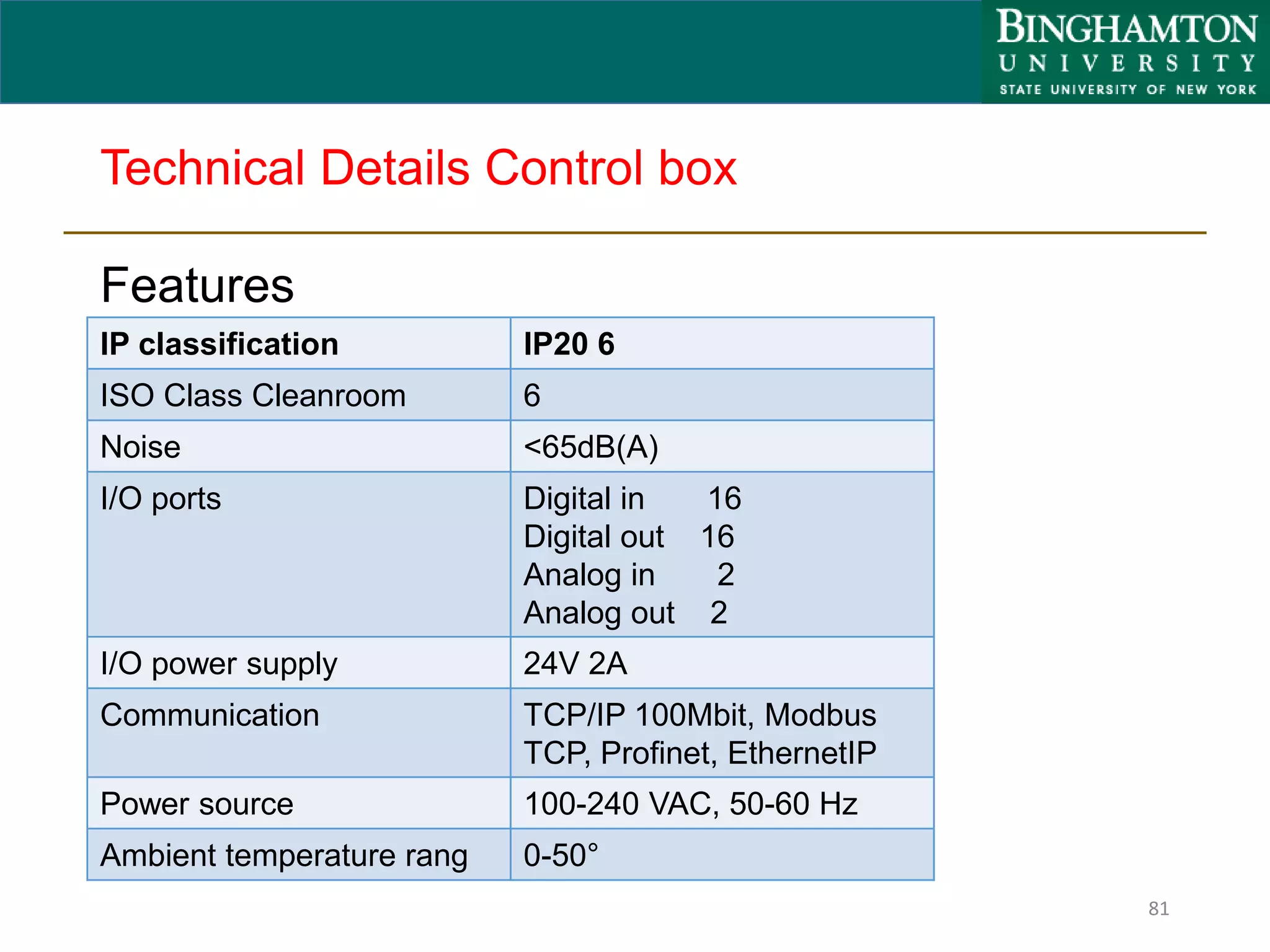

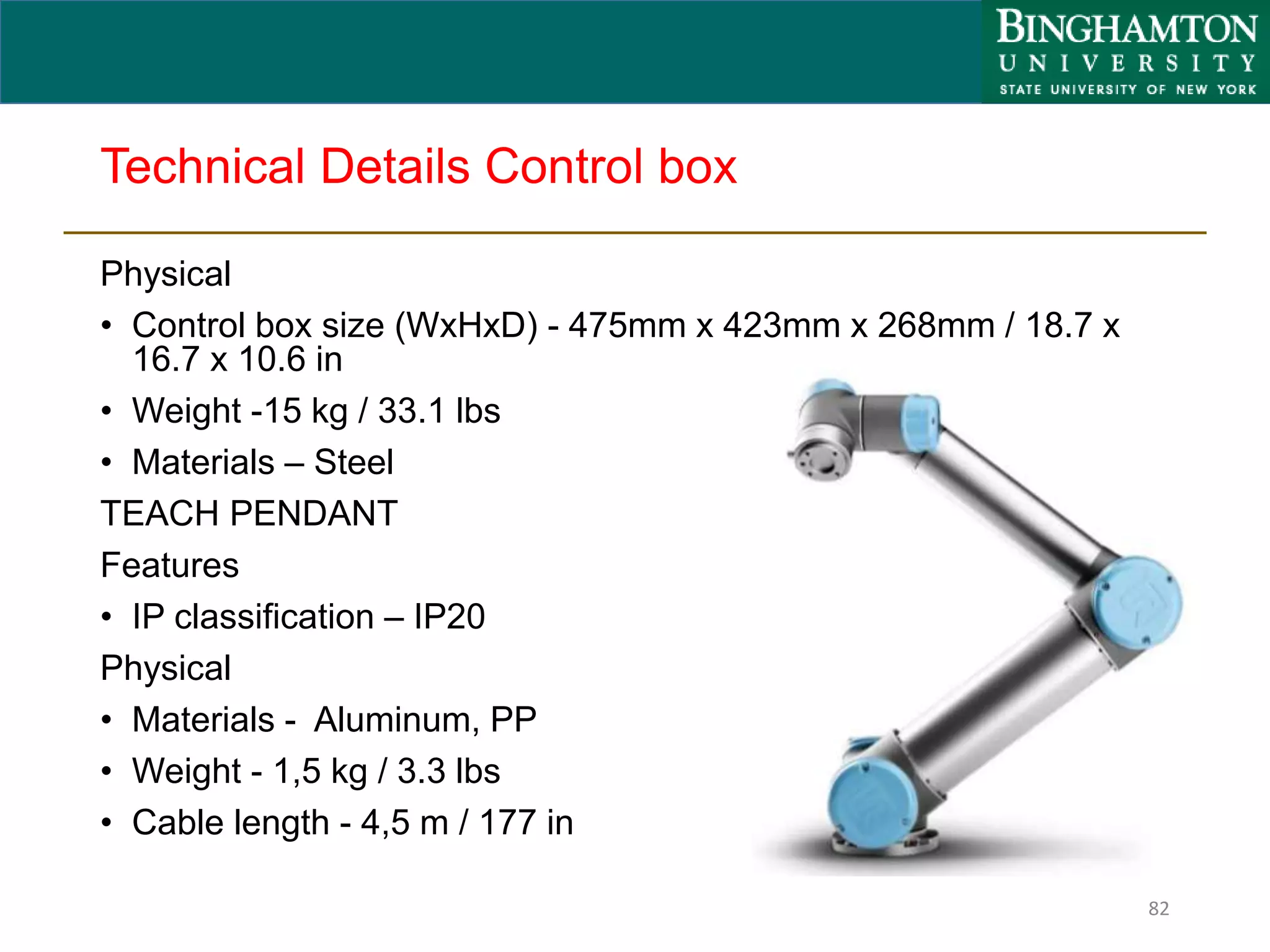

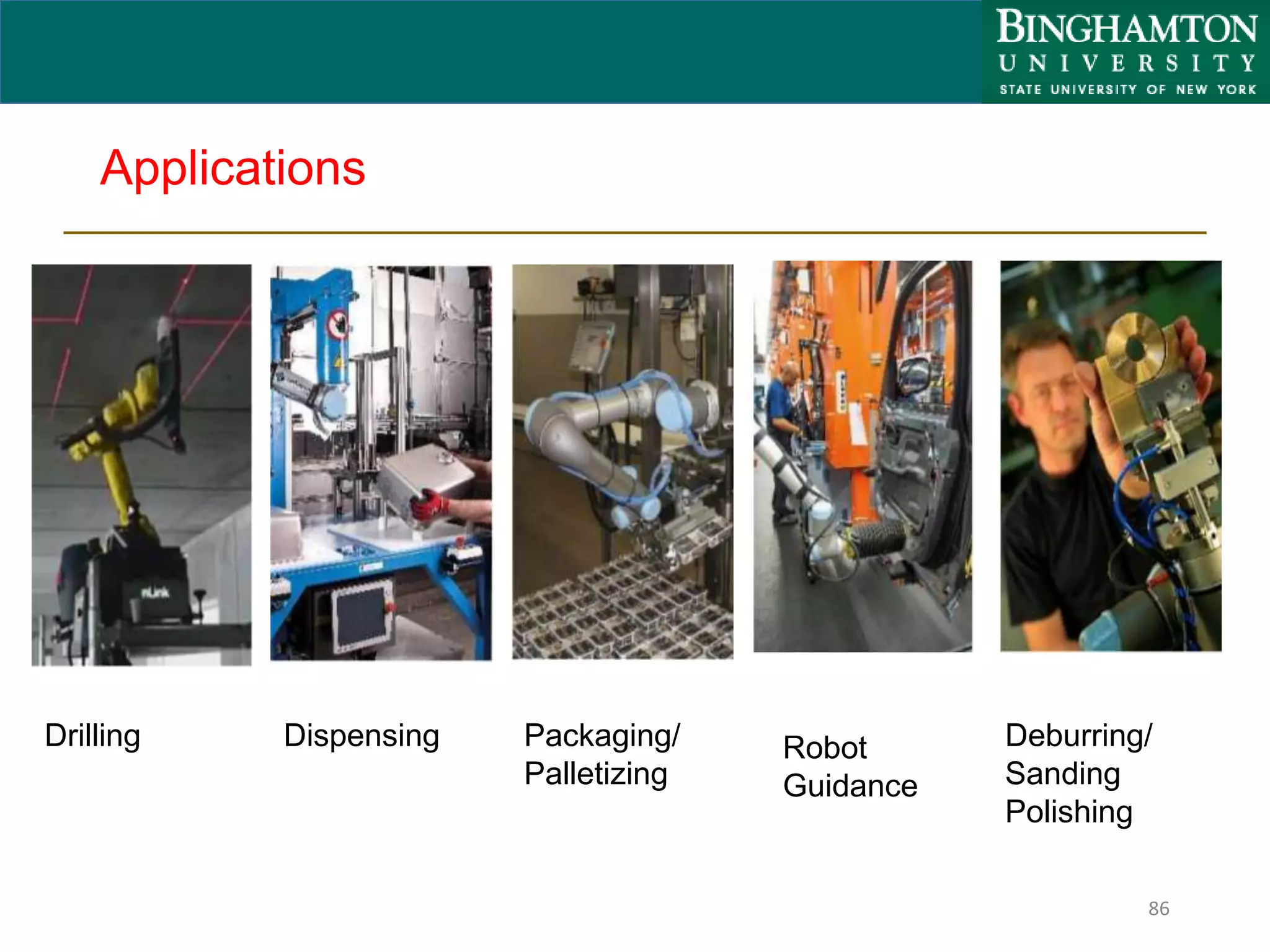

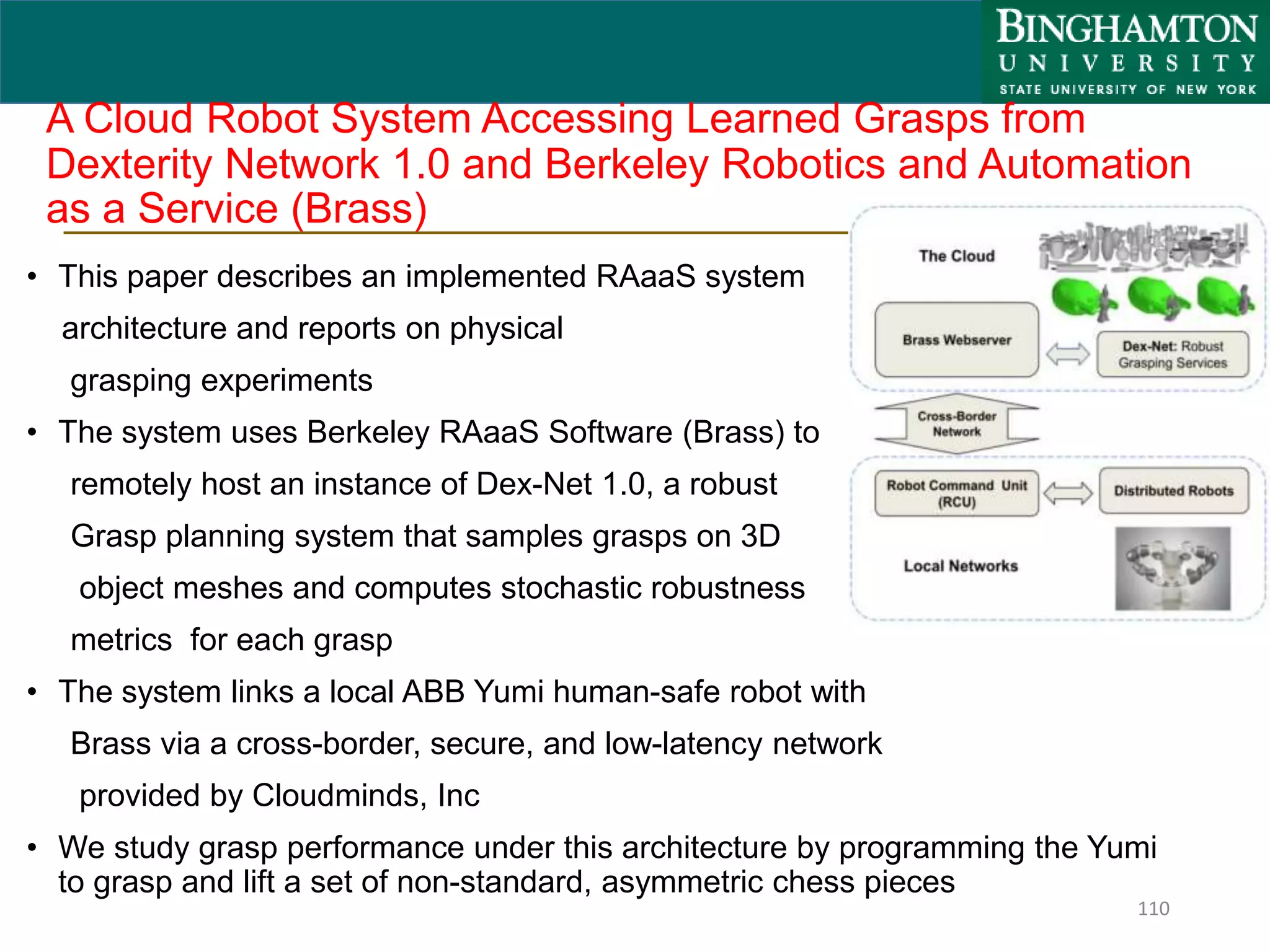

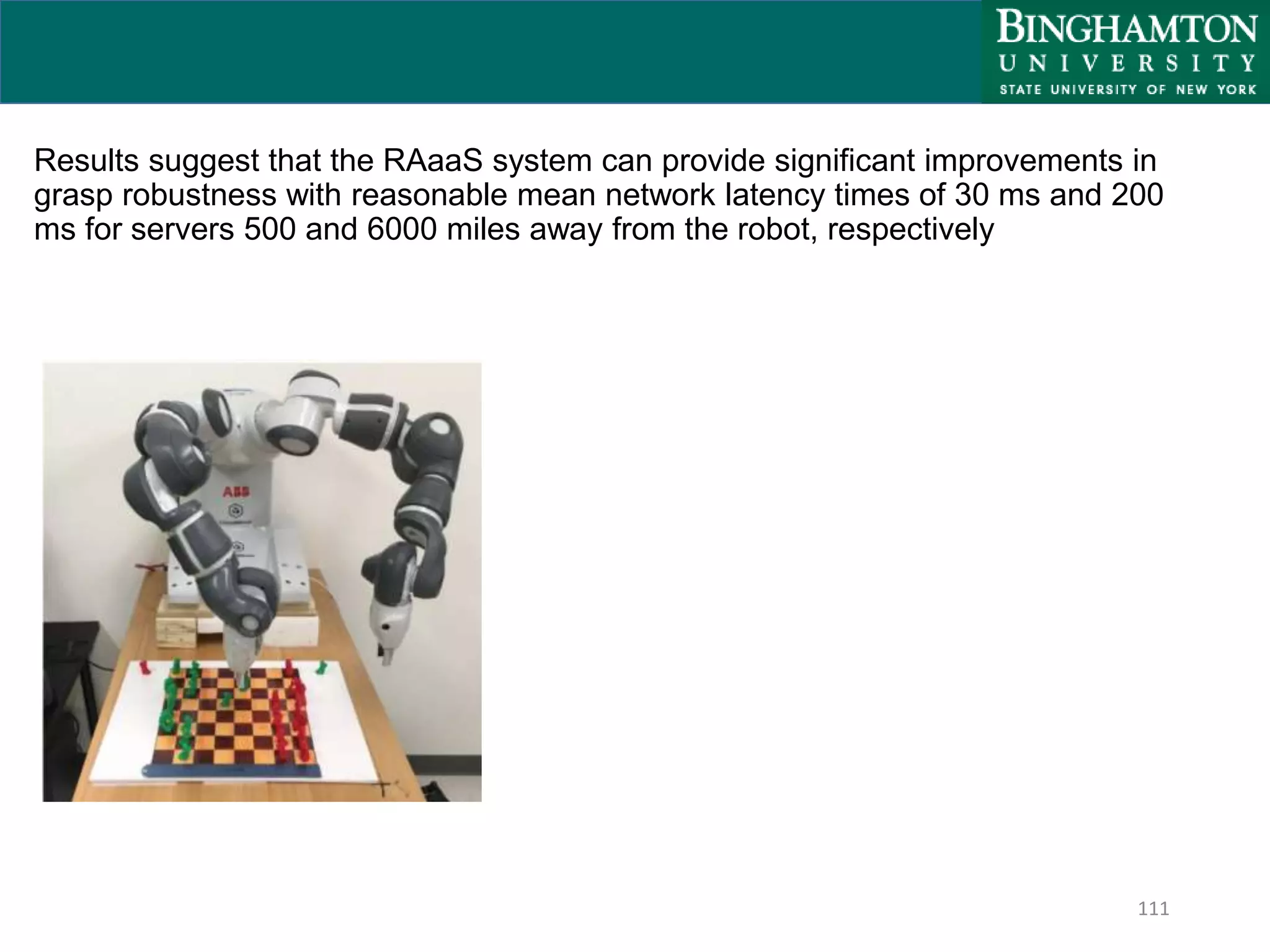

Collaborative robots, or cobots, are designed to work safely alongside humans in a shared workspace. This presentation discusses several cobots, including ABB YuMi, Baxter, and UR5. It covers their key features (such as safety mechanisms and ease of use), their applications in assembly and material handling, and their benefits for customers. It also presents several collaborative operation modes. One example is power and force limiting, in which contact between the robot and a human may occur because the robot's force and power are limited to levels considered safe.
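Power and force limiting can be illustrated with a simplified spring-contact model. It is loosely in the spirit of the energy-transfer approach used for collaborative robots (for example, in ISO/TS 15066). In this model, the moving robot and the human body region are treated as two masses combined into a reduced mass `mu`. The contact is treated as a spring of stiffness `k`. Setting the kinetic energy `0.5 * mu * v**2` equal to the spring energy `0.5 * F**2 / k` gives the highest relative speed that keeps the contact force at or below a permitted limit `F`. This is a sketch under stated assumptions, not a certified safety calculation. The function names and every numeric value below are hypothetical placeholders, not values taken from any standard or from the presentation.

```python
import math


def reduced_mass(m_human_kg: float, m_robot_kg: float) -> float:
    """Two-body reduced mass: mu = (1/m_H + 1/m_R)^-1 (hypothetical helper)."""
    return 1.0 / (1.0 / m_human_kg + 1.0 / m_robot_kg)


def max_relative_speed(f_max_n: float, k_n_per_m: float, mu_kg: float) -> float:
    """Speed at which kinetic energy 0.5*mu*v^2 equals the spring energy
    0.5*F^2/k stored when the contact force reaches f_max.
    Solving for v gives v = F / sqrt(mu * k)."""
    return f_max_n / math.sqrt(mu_kg * k_n_per_m)


def protective_stop_required(measured_force_n: float, f_max_n: float) -> bool:
    """Hypothetical PFL monitor: request a stop once measured force exceeds the limit."""
    return measured_force_n > f_max_n


if __name__ == "__main__":
    # Placeholder values for illustration only, NOT limits from ISO/TS 15066.
    mu = reduced_mass(m_human_kg=40.0, m_robot_kg=10.0)
    v_max = max_relative_speed(f_max_n=140.0, k_n_per_m=10_000.0, mu_kg=mu)
    print(f"reduced mass = {mu:.2f} kg, max relative speed = {v_max:.3f} m/s")
    print("stop?", protective_stop_required(measured_force_n=150.0, f_max_n=140.0))
```

In a real controller, the force limit and stiffness would depend on the body region that could be contacted. The speed bound would then be one input to trajectory planning, while the force monitor acts as a runtime backstop.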