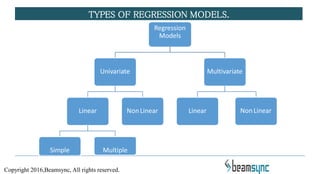

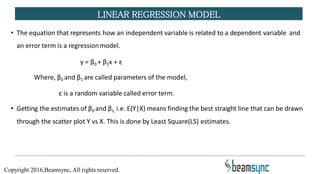

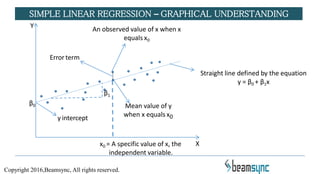

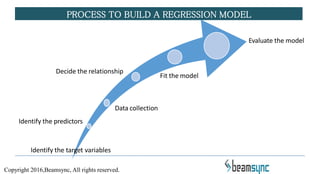

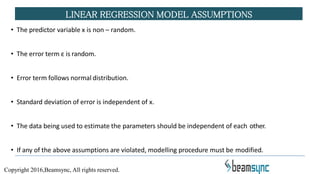

The document covers predictive modeling techniques using R tools: regression analysis (linear and logistic), cluster analysis, and time series analysis. It presents regression analysis as a statistical tool for modeling the relationship between variables and making predictions. It also explains how a simple linear regression model is built and which assumptions it rests on. Finally, it outlines the model-building process and practical applications in business analytics.
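Simple linear regression models a response as $y = \beta_0 + \beta_1 x + \varepsilon$. The least-squares estimates have a closed form, so they can be computed directly. The sketch below does this in plain Python rather than R, to stay language-neutral. The function names and the advertising/sales data are hypothetical. In R, the same fit would typically be obtained with `lm(sales ~ ad_spend)`.

```python
def fit_simple_linear_regression(x, y):
    """Return (intercept, slope) of the ordinary least squares line y = b0 + b1*x."""
    if len(x) != len(y) or len(x) < 2:
        raise ValueError("x and y must have the same length (at least 2 points)")
    n = len(x)
    x_mean = sum(x) / n
    y_mean = sum(y) / n
    # Slope = sum of cross-deviations / sum of squared x-deviations
    sxy = sum((xi - x_mean) * (yi - y_mean) for xi, yi in zip(x, y))
    sxx = sum((xi - x_mean) ** 2 for xi in x)
    if sxx == 0:
        raise ValueError("x must not be constant")
    slope = sxy / sxx
    # The least-squares line always passes through the point of means
    intercept = y_mean - slope * x_mean
    return intercept, slope


def predict(intercept, slope, x_new):
    """Predicted response for a new x value."""
    return intercept + slope * x_new


def residuals(x, y, intercept, slope):
    """Observed minus fitted values; these are what one inspects when checking model assumptions."""
    return [yi - predict(intercept, slope, xi) for xi, yi in zip(x, y)]


# Hypothetical data: advertising spend (x) vs. sales (y)
ad_spend = [1.0, 2.0, 3.0, 4.0, 5.0]
sales = [3.0, 5.0, 7.0, 9.0, 11.0]

b0, b1 = fit_simple_linear_regression(ad_spend, sales)
print(b0, b1)  # 1.0 2.0
print(predict(b0, b1, 6.0))  # 13.0
```

The toy data lie exactly on $y = 1 + 2x$, so every residual is zero. With real data, the residuals are plotted to check the usual assumptions: linearity, constant variance, independence, and approximate normality of the errors.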