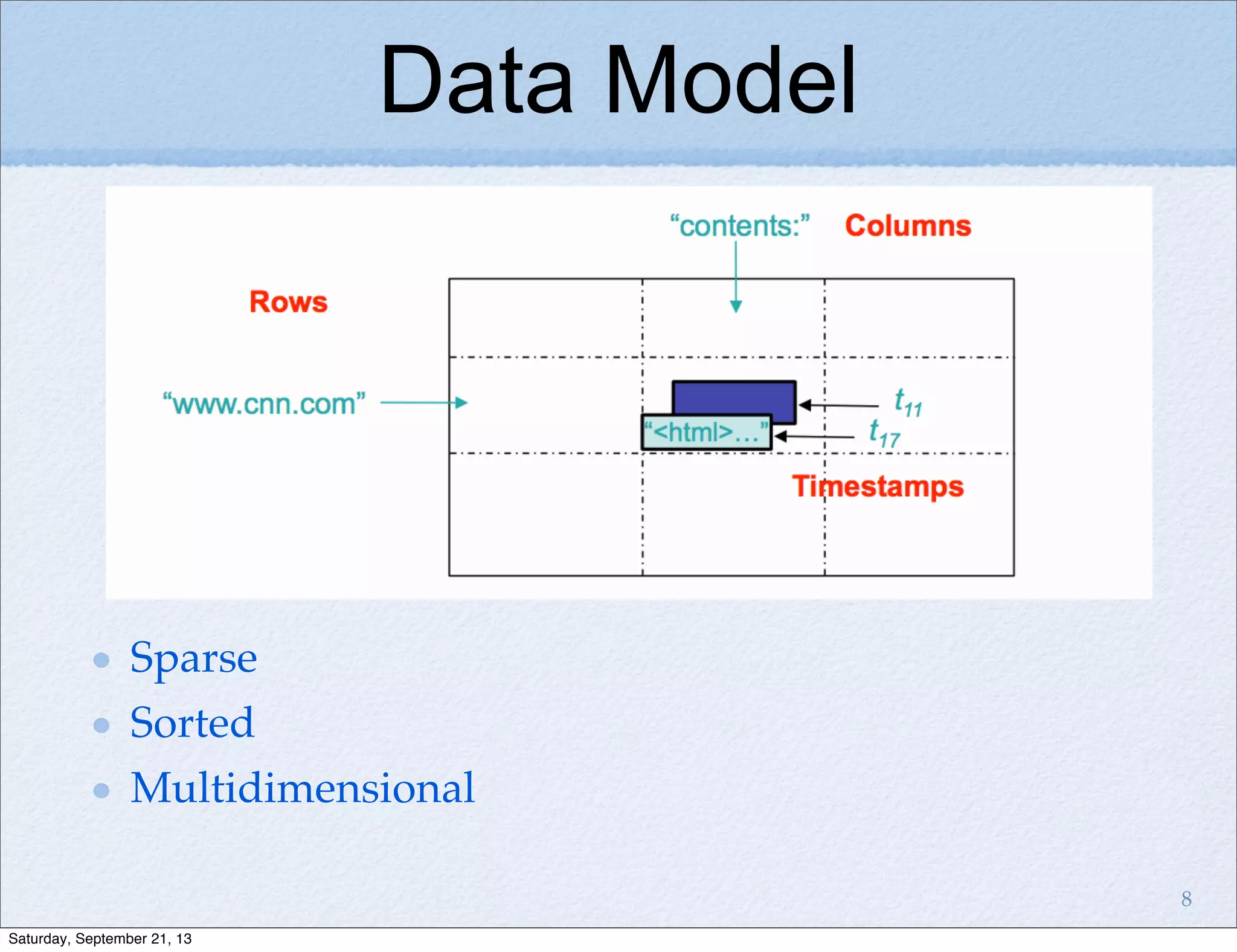

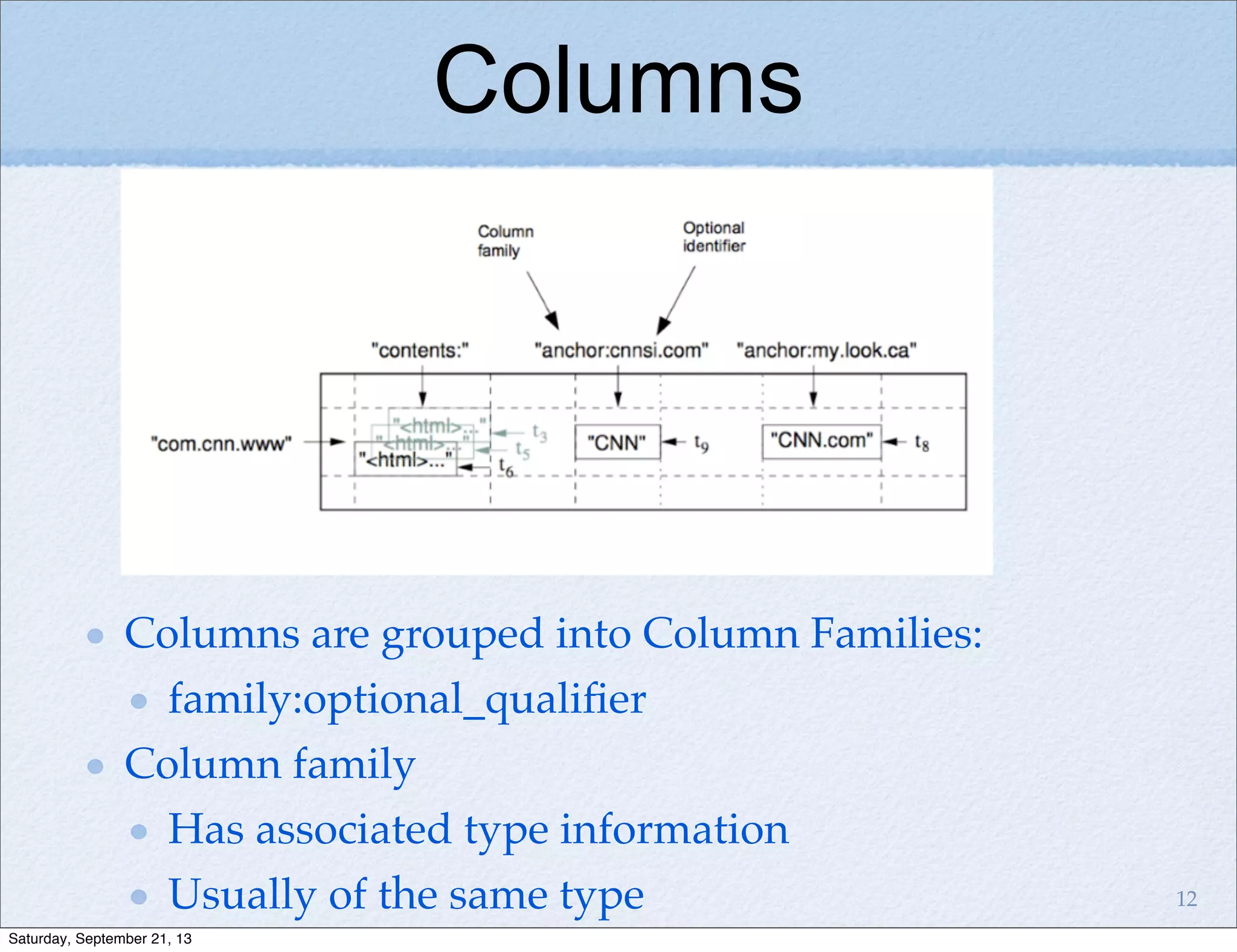

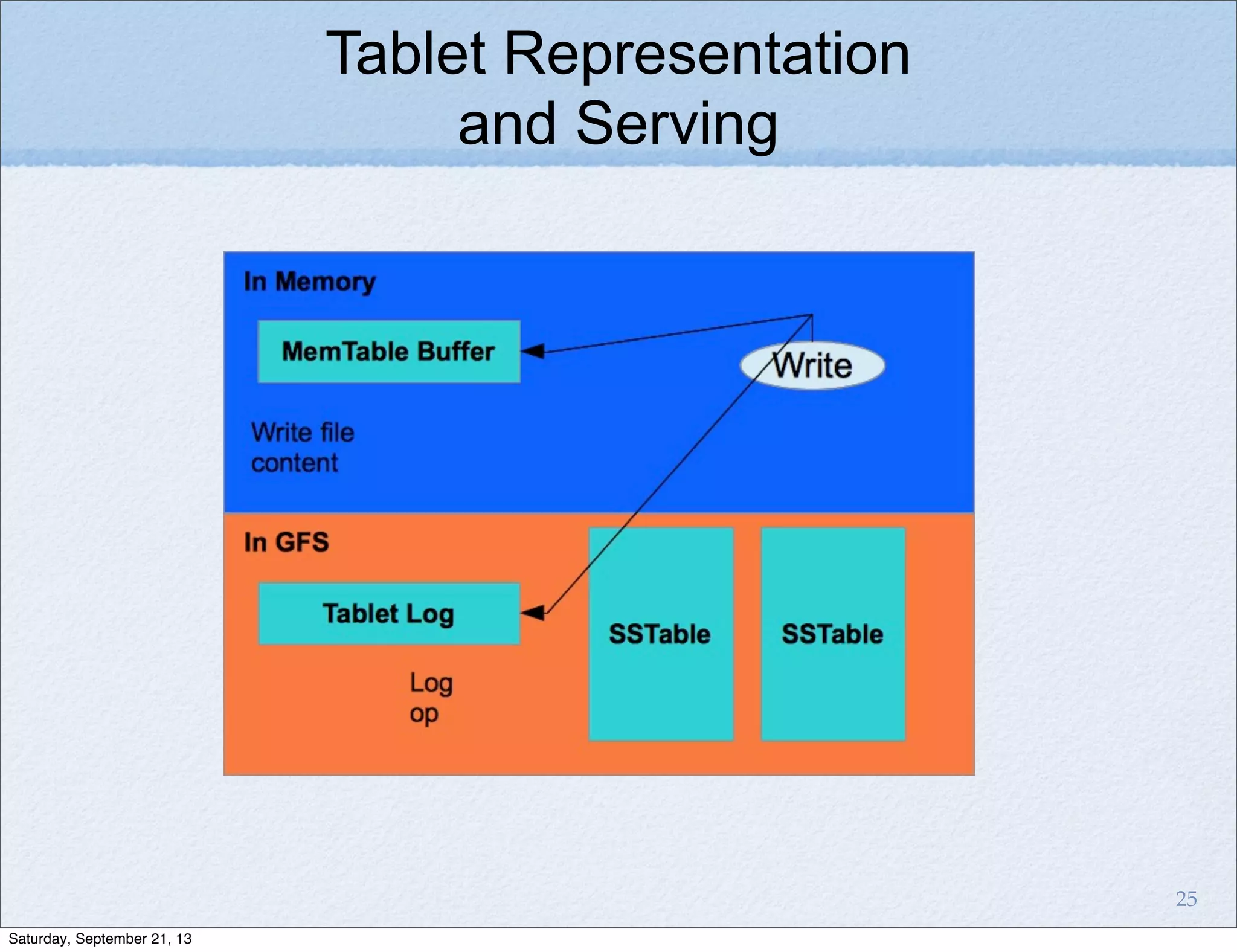

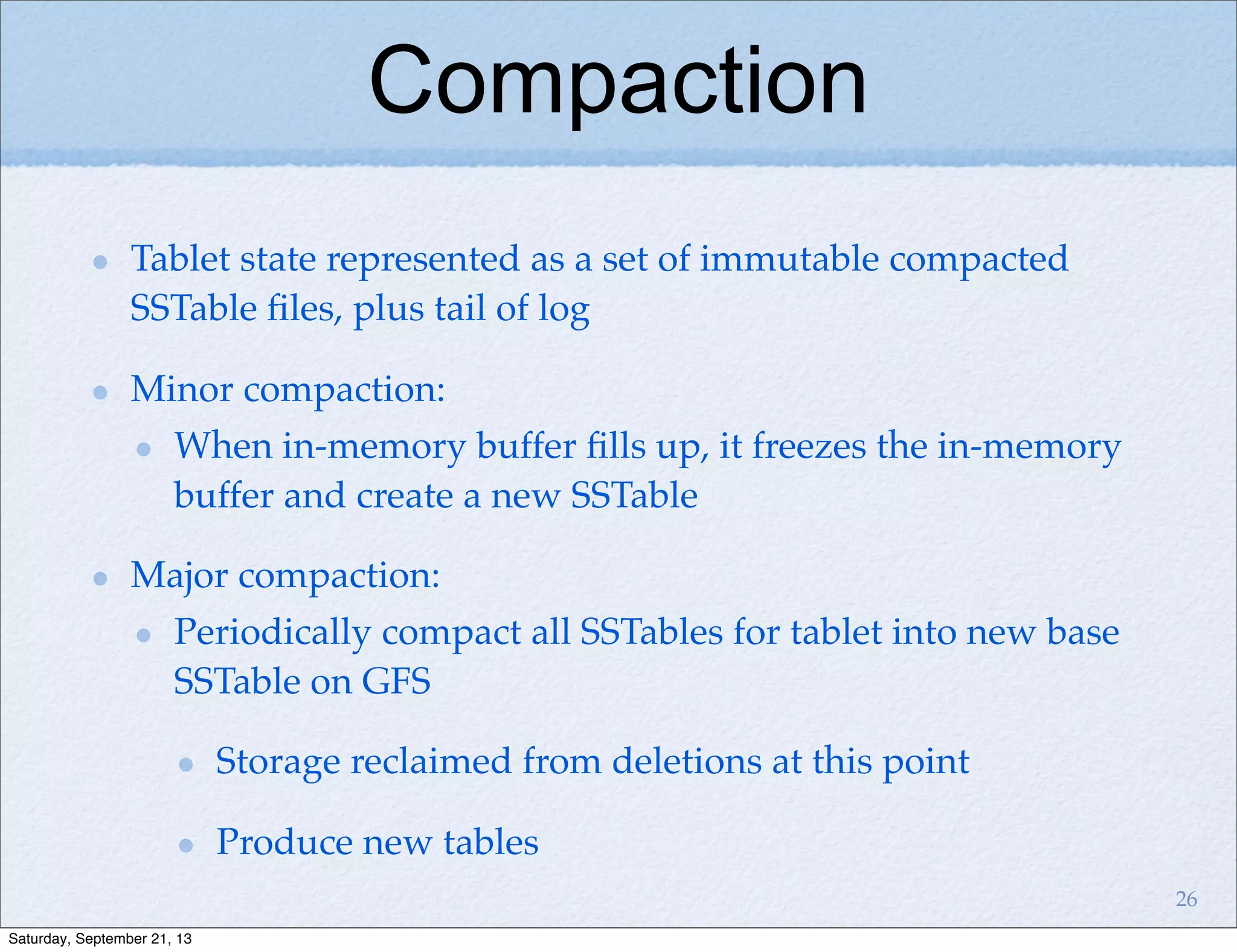

Bigtable is a distributed storage system for structured data, designed to be scalable, high-performance, and highly available. It models data as a sparse, multidimensional sorted map that is partitioned across many servers. Bigtable supports asynchronous updates to different pieces of data at very high read and write rates, as well as efficient scans across large datasets. At Google, it has been used for applications such as analytics, Earth imagery, and personalized search.
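
To make the "sparse, multidimensional sorted map" idea concrete, here is a minimal single-process sketch in Python. It is not Bigtable's implementation or API: the class name `ToySortedMap`, its methods, and the choice of (row key, column key, timestamp) as the map's dimensions are assumptions made for illustration. It shows only two properties mentioned above: cells that were never written take no space (sparseness), and rows are kept in key order so a range of rows can be scanned efficiently.

```python
import bisect
from typing import Dict, Iterator, List, Optional, Tuple


class ToySortedMap:
    """Hypothetical in-memory model: (row, column, timestamp) -> bytes."""

    def __init__(self) -> None:
        # Row keys kept in sorted order so range scans can use binary search.
        self._row_keys: List[str] = []
        # row -> column -> timestamp -> value; only written cells are stored.
        self._rows: Dict[str, Dict[str, Dict[int, bytes]]] = {}

    def put(self, row: str, column: str, timestamp: int, value: bytes) -> None:
        if row not in self._rows:
            bisect.insort(self._row_keys, row)
            self._rows[row] = {}
        self._rows[row].setdefault(column, {})[timestamp] = value

    def get(self, row: str, column: str) -> Optional[bytes]:
        """Return the most recent version of a cell, or None if absent."""
        versions = self._rows.get(row, {}).get(column)
        if not versions:
            return None
        return versions[max(versions)]

    def scan(self, start_row: str, end_row: str) -> Iterator[Tuple[str, str, bytes]]:
        """Yield (row, column, latest value) for start_row <= row < end_row."""
        lo = bisect.bisect_left(self._row_keys, start_row)
        hi = bisect.bisect_left(self._row_keys, end_row)
        for row in self._row_keys[lo:hi]:
            for column in sorted(self._rows[row]):
                versions = self._rows[row][column]
                yield row, column, versions[max(versions)]


# Illustrative usage with made-up row and column names.
table = ToySortedMap()
table.put("com.cnn.www", "contents:", 1, b"<html>v1</html>")
table.put("com.cnn.www", "contents:", 2, b"<html>v2</html>")
table.put("com.example", "contents:", 1, b"<html>example</html>")
latest = table.get("com.cnn.www", "contents:")  # newest version of the cell
com_rows = list(table.scan("com.", "com/"))     # all rows starting with "com."
```

Because rows sort lexicographically, keys that share a prefix sit next to each other, which is why a prefix scan reduces to a contiguous slice. A real deployment would additionally partition the row range across servers, which this sketch does not attempt.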