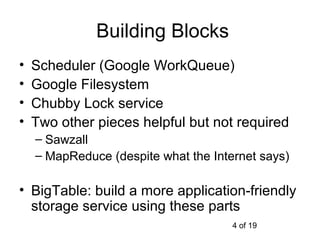

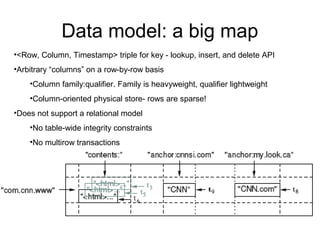

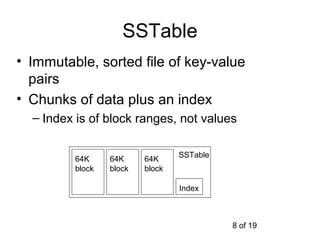

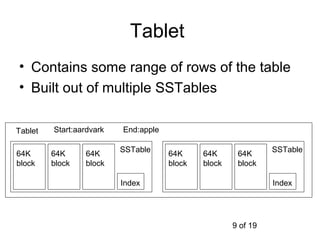

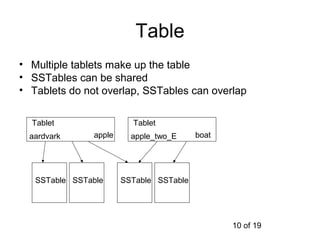

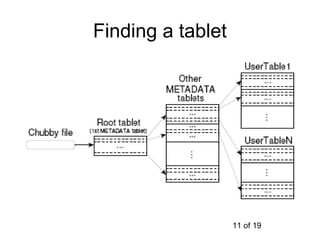

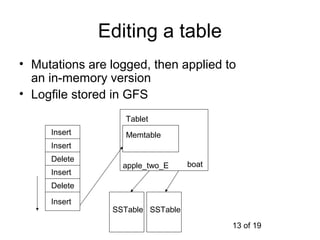

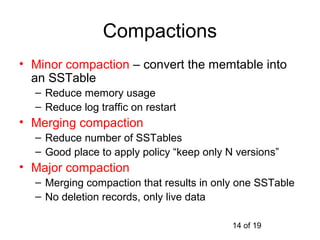

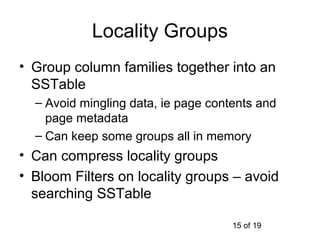

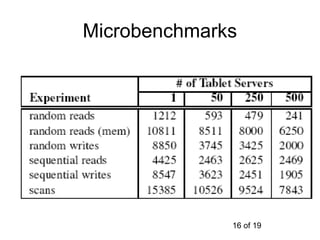

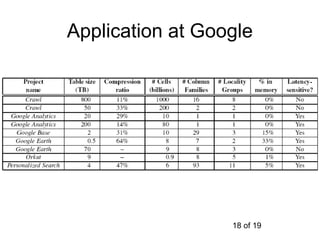

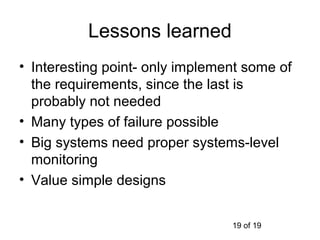

This document summarizes Google's Bigtable storage system. Bigtable stores data as a sparse, distributed, persistent, multidimensional sorted map, indexed by row key, column key, and timestamp. It is built on the Google File System (GFS) for storage and Chubby for distributed locking, and it partitions each table into tablets, contiguous ranges of rows that are spread across multiple servers. Bigtable gives clients a simple data model and interfaces for performing read and write operations on large datasets.
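To make the "sparse, multidimensional sorted map" idea concrete, here is a minimal in-memory sketch in Python. It is not Bigtable's API: the class `ToyTable` and its methods `put`, `get`, and `scan` are hypothetical names chosen for illustration, and the sketch ignores distribution, persistence, and locking entirely. It only shows how a value can be addressed by (row key, column key, timestamp) while rows stay in sorted order, which is what makes range scans and splitting into row ranges natural.

```python
import bisect


class ToyTable:
    """Hypothetical toy model of a (row, column, timestamp) -> value map.

    Assumption: everything lives in one process and in memory; the real
    system distributes row ranges (tablets) across servers.
    """

    def __init__(self):
        # row key -> column key -> list of (timestamp, value), newest first
        self._rows = {}
        # row keys kept in lexicographic order to support range scans
        self._sorted_keys = []

    def put(self, row, column, timestamp, value):
        """Store one versioned cell; only cells that are written take space."""
        if row not in self._rows:
            bisect.insort(self._sorted_keys, row)
            self._rows[row] = {}
        versions = self._rows[row].setdefault(column, [])
        versions.append((timestamp, value))
        # Keep the newest version first so reads can stop early.
        versions.sort(key=lambda tv: tv[0], reverse=True)

    def get(self, row, column, timestamp=None):
        """Return the newest value at or before `timestamp`, or None."""
        versions = self._rows.get(row, {}).get(column, [])
        for ts, value in versions:
            if timestamp is None or ts <= timestamp:
                return value
        return None

    def scan(self, start_row, end_row):
        """Yield (row key, columns) for start_row <= row key < end_row."""
        lo = bisect.bisect_left(self._sorted_keys, start_row)
        hi = bisect.bisect_left(self._sorted_keys, end_row)
        for key in self._sorted_keys[lo:hi]:
            yield key, self._rows[key]


table = ToyTable()
table.put("com.cnn.www", "contents:", 3, b"<html>v3")
table.put("com.cnn.www", "contents:", 5, b"<html>v5")
table.put("com.example", "anchor:cnnsi.com", 9, b"CNN")

latest = table.get("com.cnn.www", "contents:")        # newest version
older = table.get("com.cnn.www", "contents:", 4)      # version as of timestamp 4
missing = table.get("com.example", "contents:")       # unwritten cell
rows_in_range = [key for key, _ in table.scan("com.cnn", "com.d")]
```

In this sketch an unwritten cell simply has no entry, which is the sense in which the map is sparse. Because row keys are kept sorted, a contiguous slice of `_sorted_keys` is the toy analogue of a tablet: a row range that could, in a distributed setting, be handed to a different server.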