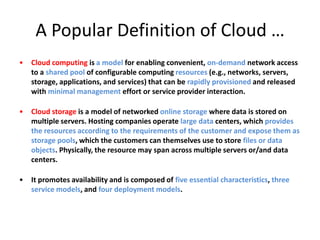

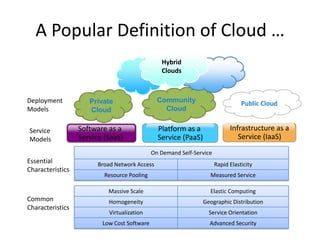

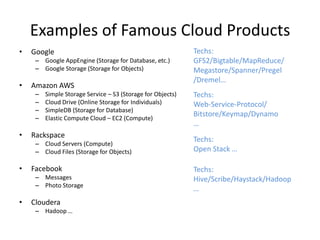

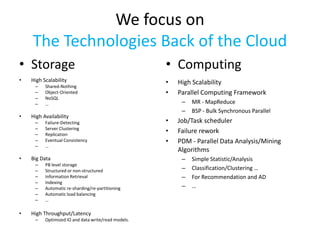

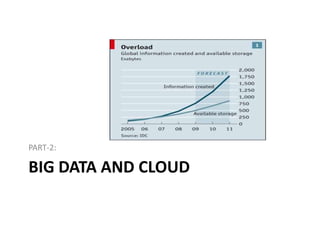

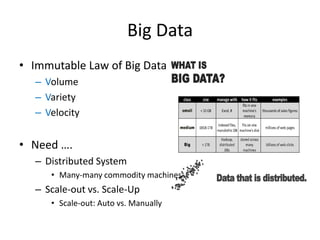

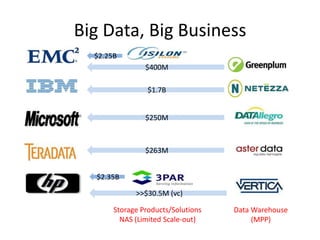

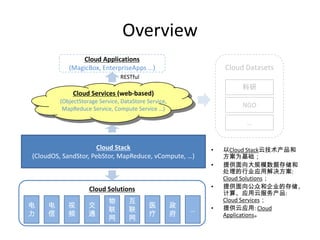

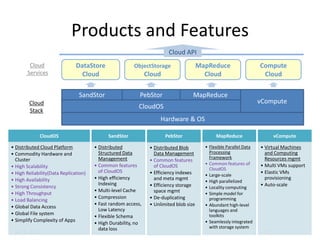

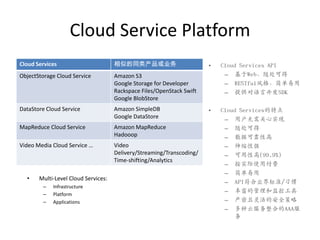

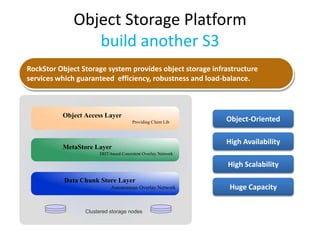

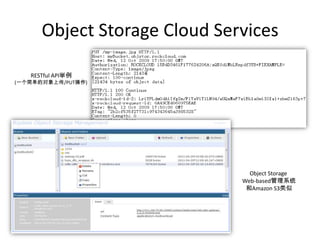

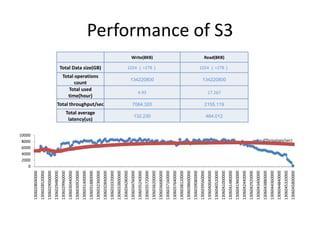

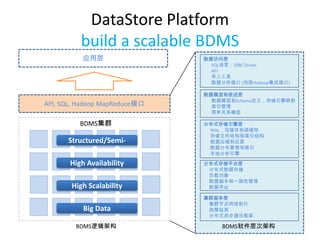

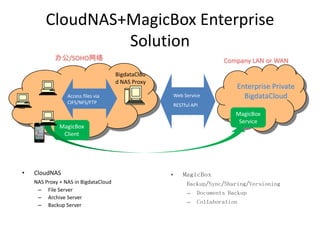

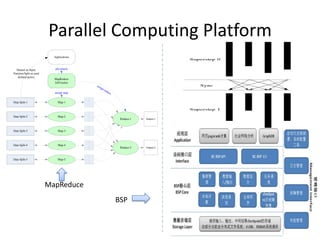

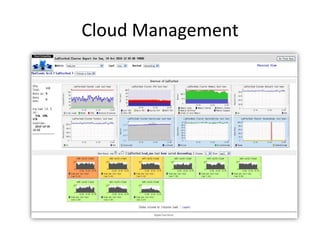

This document covers big data and cloud computing. It introduces cloud storage and computing models, then explains why big data requires distributed systems that scale out across many commodity machines to handle data of high volume, variety, and velocity. It outlines several well-known cloud products and the technologies behind them. Finally, it gives an overview of the company's focus on enterprise big data management built on cloud technologies and lists some of its cloud products and services, including data storage, object storage, MapReduce, and compute cloud services.