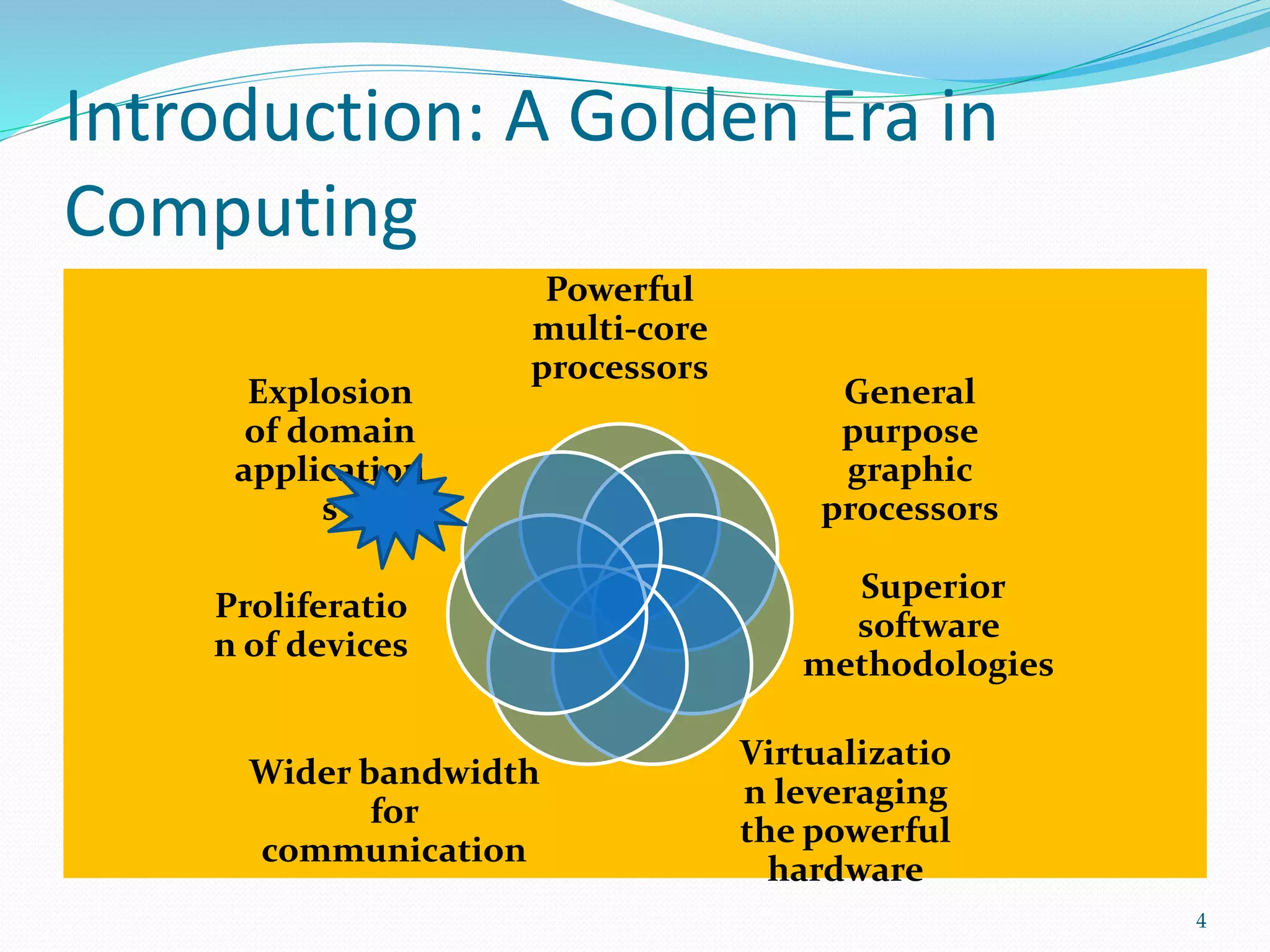

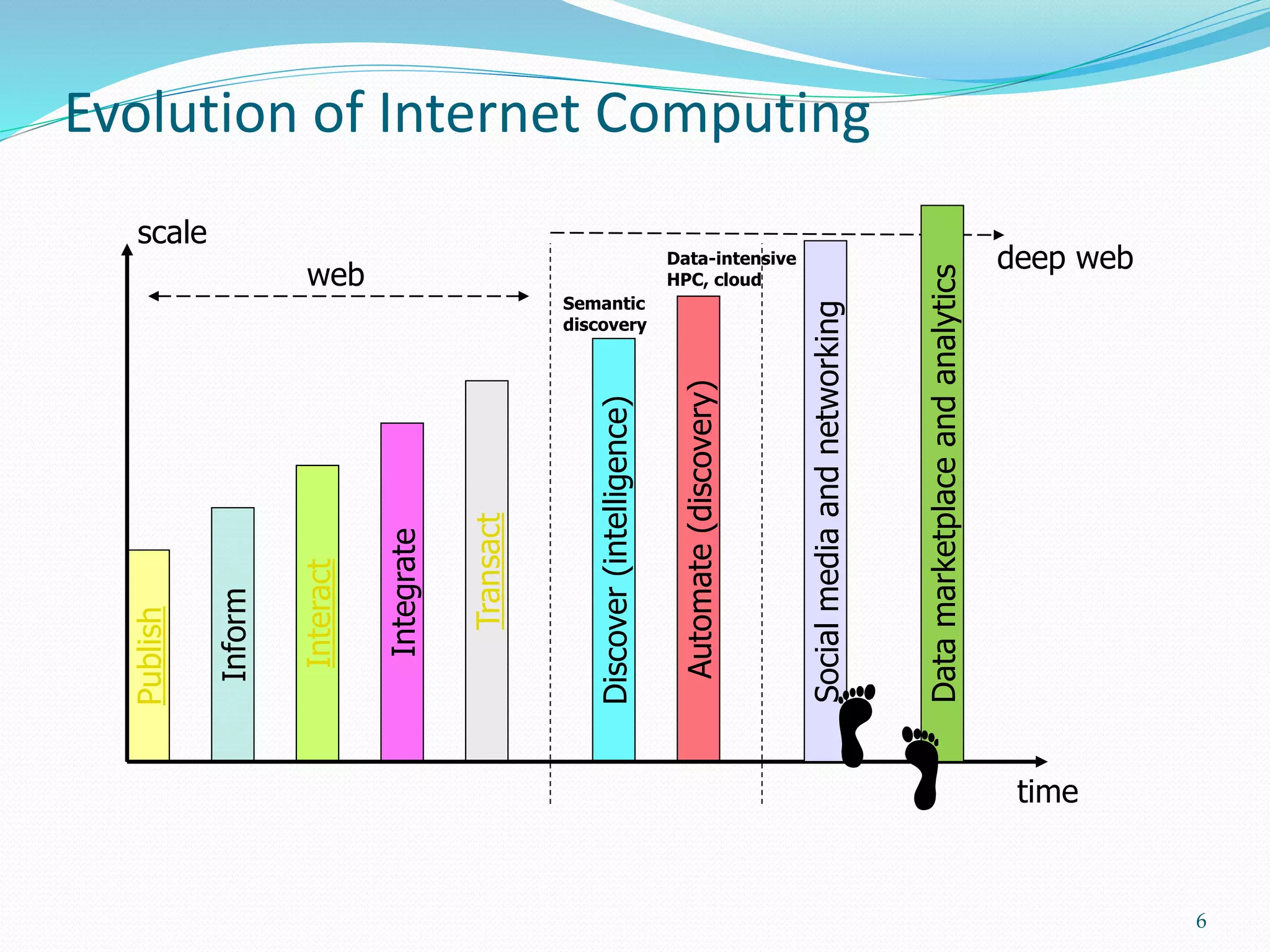

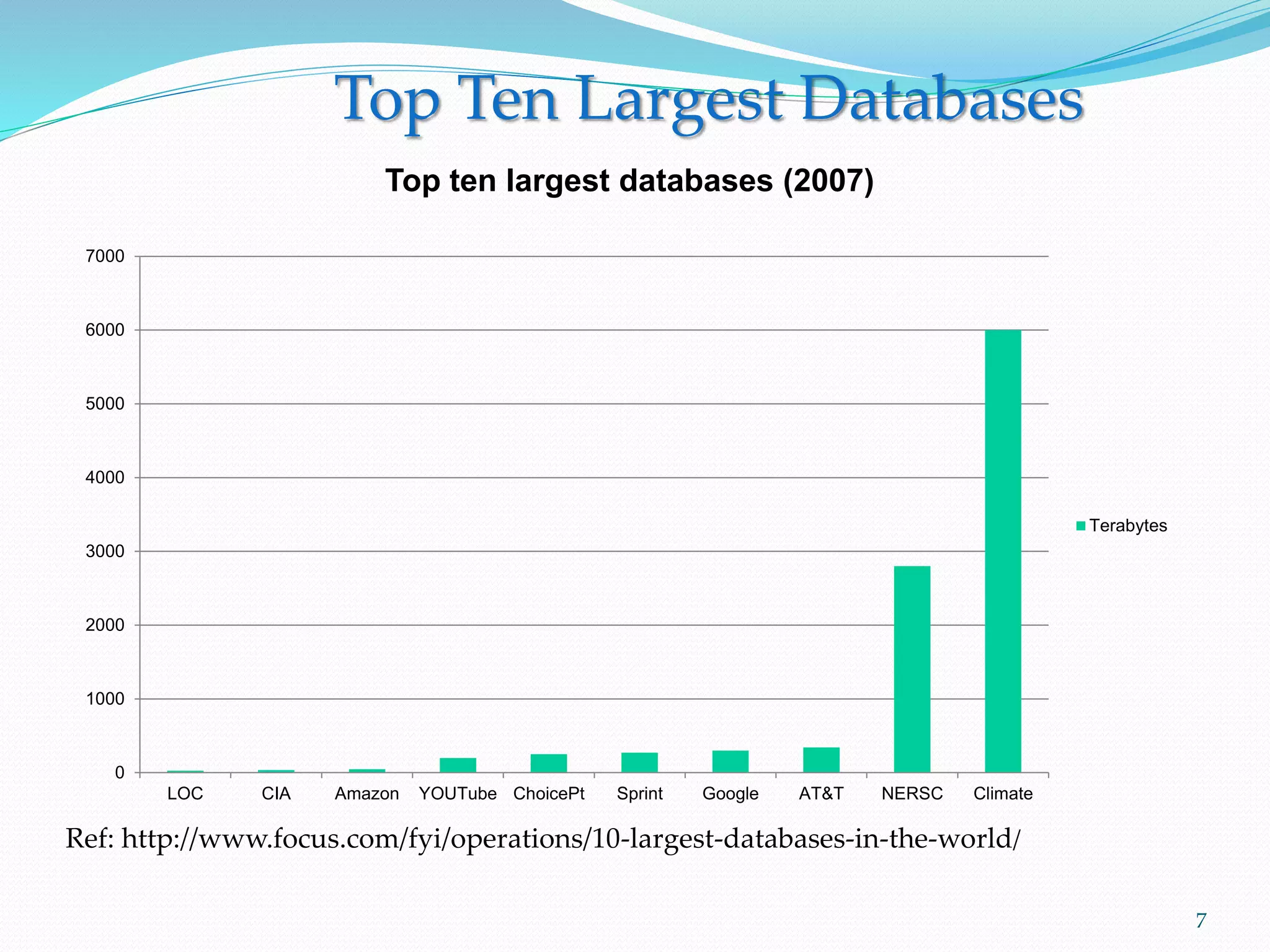

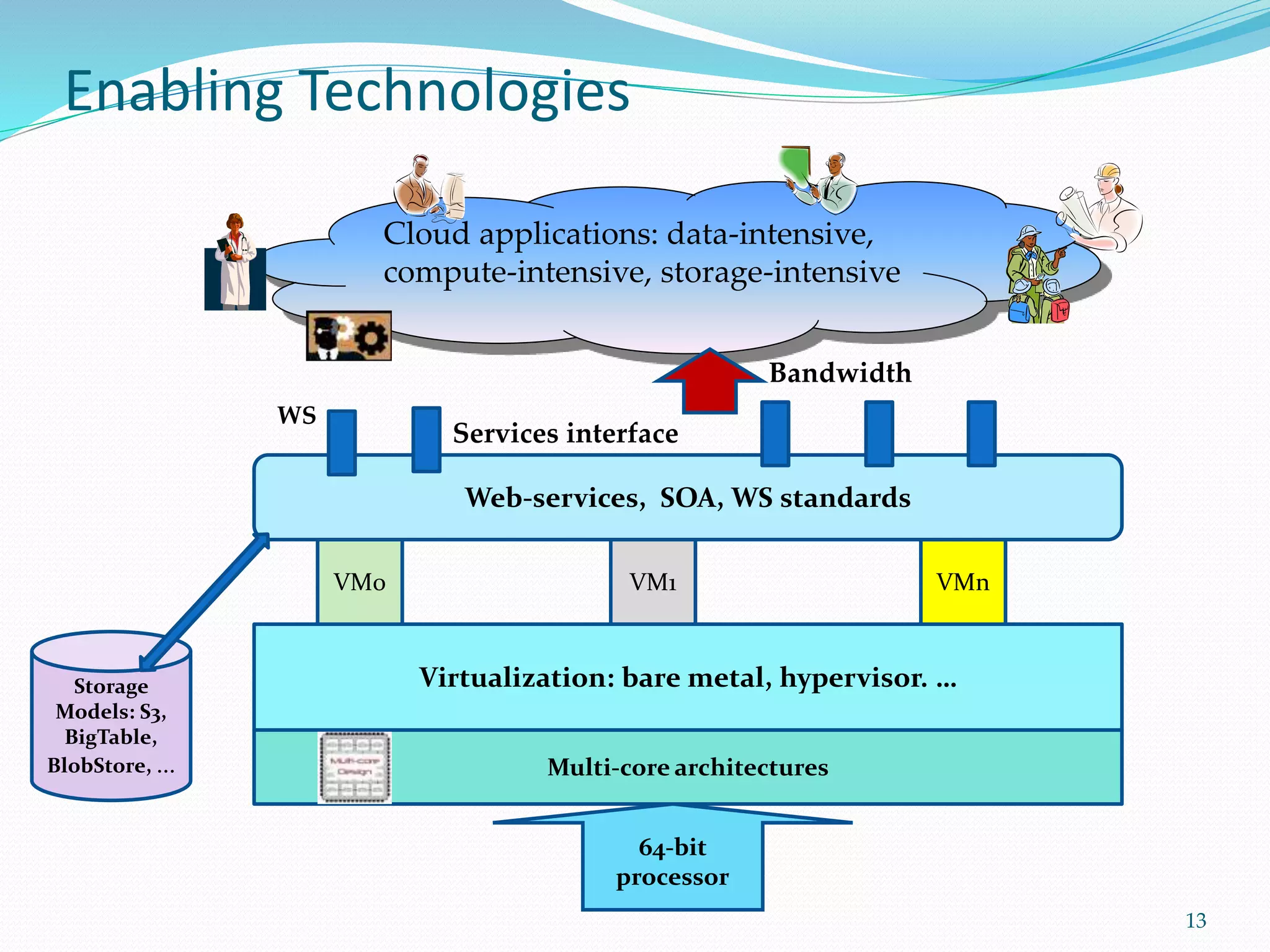

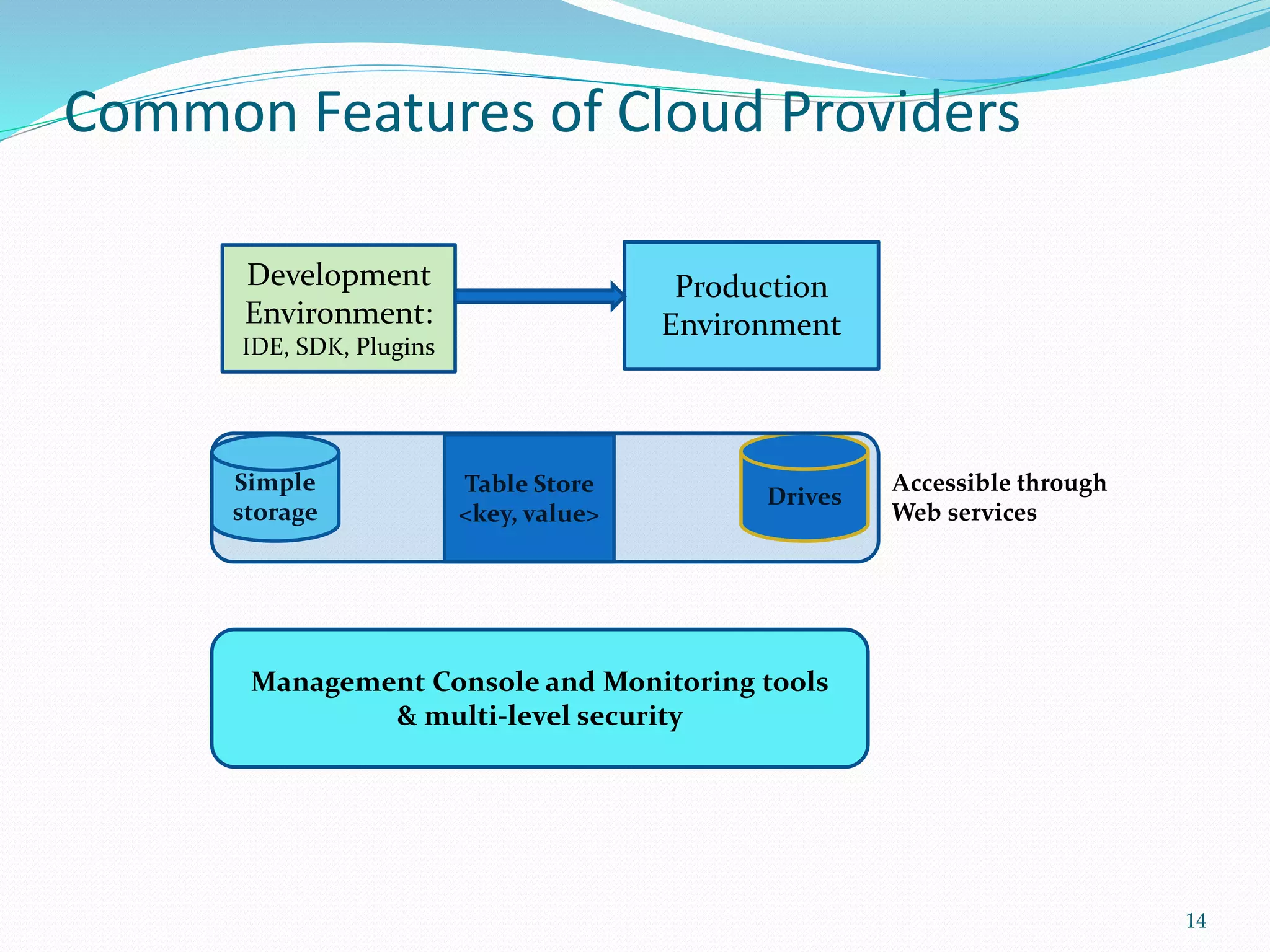

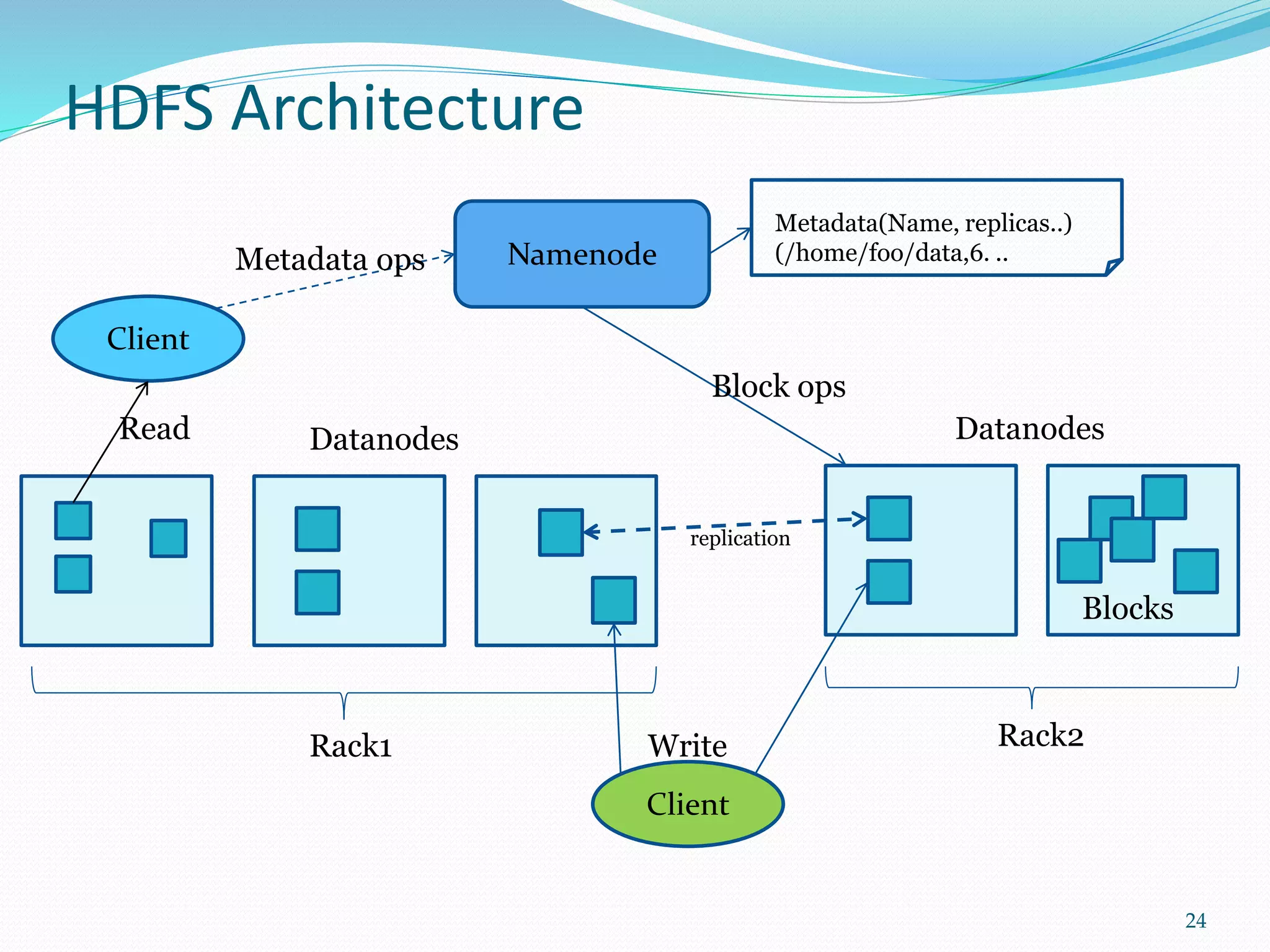

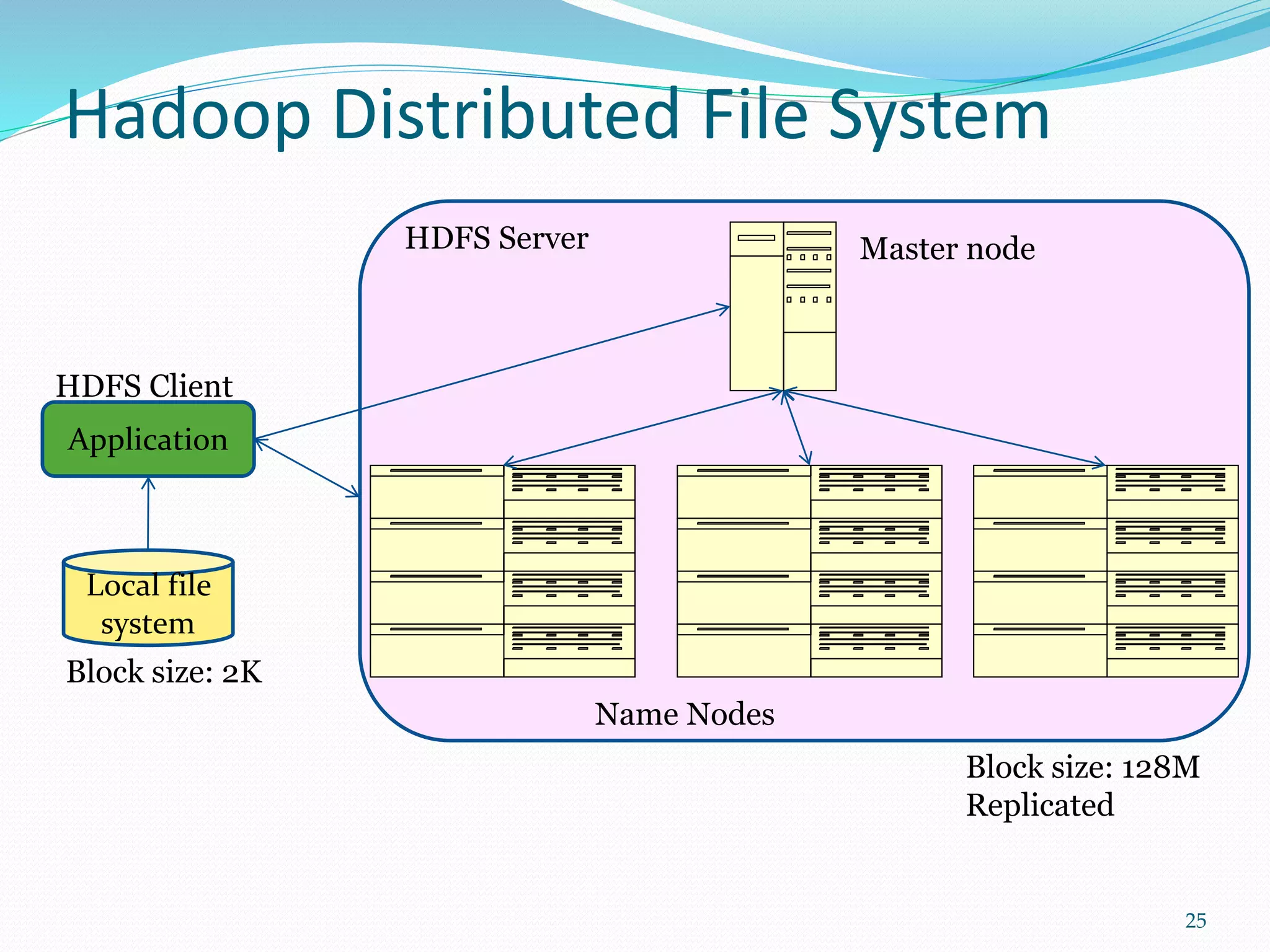

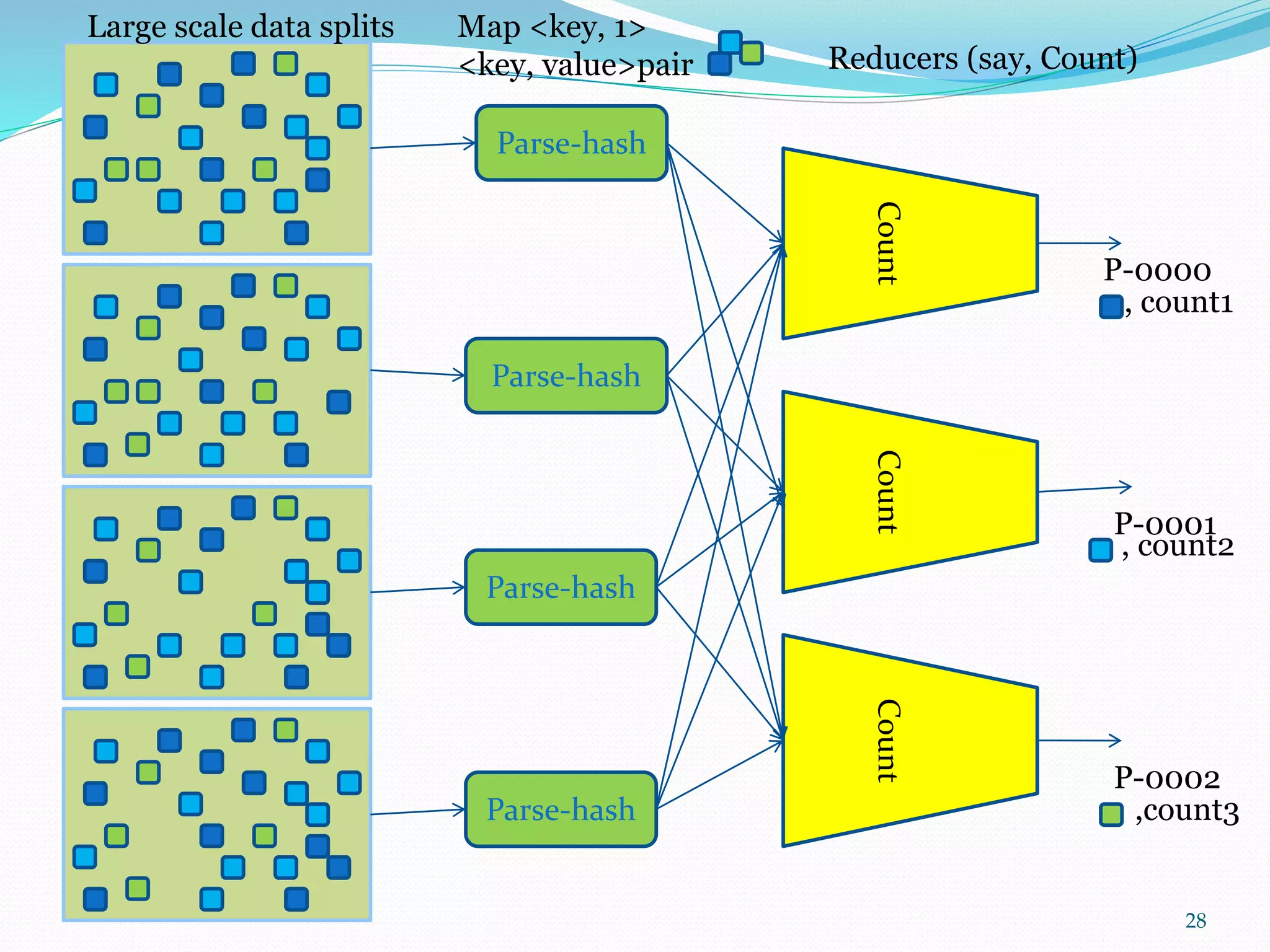

This document outlines a talk on cloud computing. It opens with an introduction to cloud concepts and underlying technologies such as virtualization and parallel computing models, then discusses cloud service models, including IaaS, PaaS, and SaaS. The outline includes demonstrations of cloud capabilities on Amazon AWS and Microsoft Azure, as well as data and computing models using MapReduce. It concludes with a case study of a real business application of the cloud and a question-and-answer session.