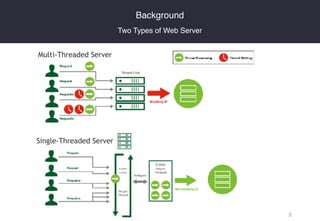

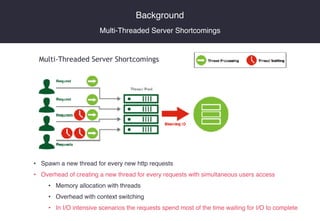

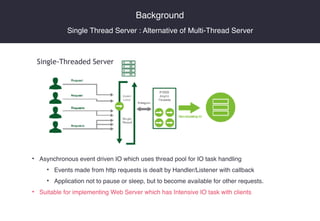

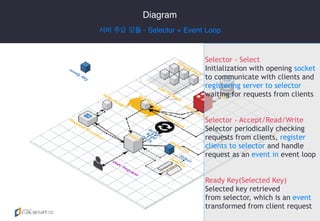

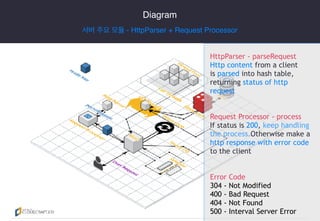

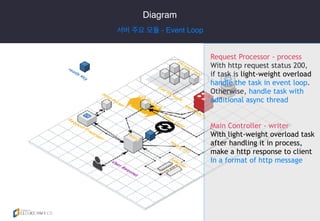

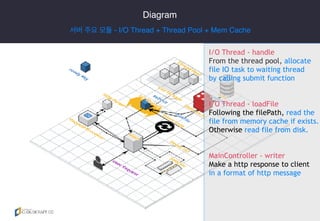

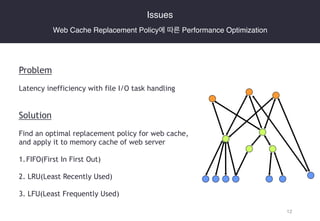

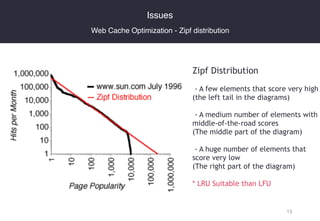

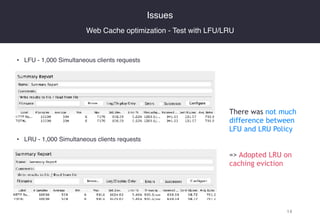

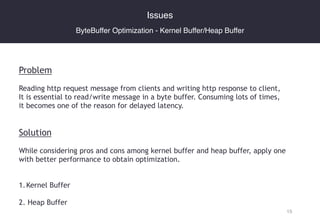

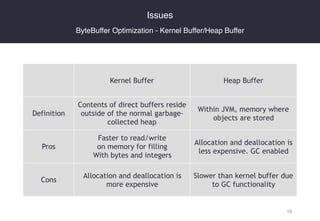

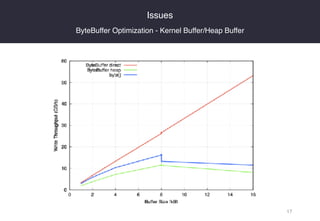

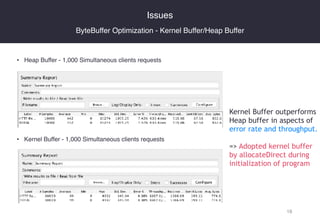

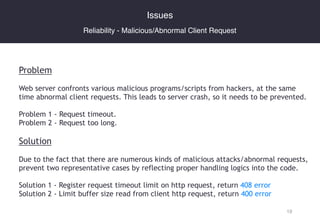

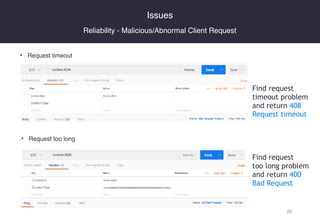

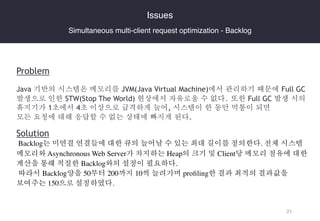

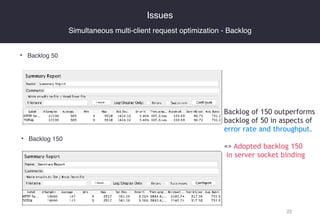

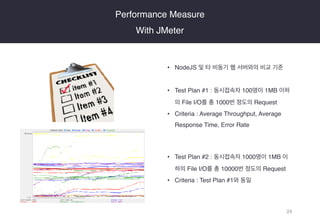

The document presents the development of a web server engine that uses an event loop to handle many simultaneous HTTP requests more efficiently than a traditional multi-threaded server. It describes the server architecture, including its key modules (the selector, the HTTP parser, and I/O handling), which are optimized for performance and hardened against malicious requests. It also emphasizes caching strategies and buffer optimizations, and demonstrates the resulting performance improvement through a series of live demos and performance tests.
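To make the architecture concrete, here is a minimal sketch of a selector-driven event loop, assuming Python and its standard `selectors` module. The source does not state the implementation language or show its code, so every name here (`EventLoopServer`, `parse_request_line`, `build_response`, `MAX_REQUEST_BYTES`) is hypothetical, and the 8 KiB request cap is an illustrative stand-in for whatever protection against malicious requests the real server uses. Caching is not shown.

```python
import selectors
import socket

# Hypothetical cap: drop connections whose request headers grow beyond this.
MAX_REQUEST_BYTES = 8192


def parse_request_line(raw: bytes):
    """Return (method, path, version) from raw request bytes, or None if malformed."""
    line = raw.split(b"\r\n", 1)[0].decode("latin-1")
    parts = line.split(" ")
    if len(parts) != 3 or not parts[2].startswith("HTTP/"):
        return None
    return tuple(parts)


def build_response(raw: bytes) -> bytes:
    """Build a tiny plain-text response for the parsed request."""
    parsed = parse_request_line(raw)
    if parsed is None:
        status, body = "400 Bad Request", b"bad request\n"
    else:
        status, body = "200 OK", f"you asked for {parsed[1]}\n".encode()
    head = f"HTTP/1.1 {status}\r\nContent-Length: {len(body)}\r\nConnection: close\r\n\r\n"
    return head.encode() + body


class EventLoopServer:
    """Single-threaded server: one selector multiplexes all sockets."""

    def __init__(self, host="127.0.0.1", port=0):
        self.sel = selectors.DefaultSelector()
        self.listener = socket.create_server((host, port))
        self.listener.setblocking(False)
        # data=None marks the listening socket; clients carry a state dict.
        self.sel.register(self.listener, selectors.EVENT_READ, data=None)
        self.port = self.listener.getsockname()[1]
        self.running = True

    def _accept(self):
        try:
            conn, _ = self.listener.accept()
        except (BlockingIOError, InterruptedError):
            return
        conn.setblocking(False)
        self.sel.register(conn, selectors.EVENT_READ, data={"inbuf": b"", "outbuf": b""})

    def _close(self, conn):
        self.sel.unregister(conn)
        conn.close()

    def _on_read(self, conn, state):
        try:
            chunk = conn.recv(4096)
        except (BlockingIOError, InterruptedError):
            return
        except ConnectionError:
            chunk = b""
        if not chunk:
            self._close(conn)  # peer closed or errored
            return
        state["inbuf"] += chunk
        if len(state["inbuf"]) > MAX_REQUEST_BYTES:
            self._close(conn)  # oversized request: refuse to buffer more
            return
        if b"\r\n\r\n" in state["inbuf"]:  # headers complete
            state["outbuf"] = build_response(state["inbuf"])
            self.sel.modify(conn, selectors.EVENT_WRITE, data=state)

    def _on_write(self, conn, state):
        try:
            sent = conn.send(state["outbuf"])
        except (BlockingIOError, InterruptedError):
            return
        except ConnectionError:
            self._close(conn)
            return
        state["outbuf"] = state["outbuf"][sent:]  # keep any unsent tail
        if not state["outbuf"]:
            self._close(conn)

    def run_once(self, timeout=0.1):
        """Wait for ready sockets and dispatch each to its handler."""
        for key, events in self.sel.select(timeout):
            if key.data is None:
                self._accept()
            elif events & selectors.EVENT_READ:
                self._on_read(key.fileobj, key.data)
            elif events & selectors.EVENT_WRITE:
                self._on_write(key.fileobj, key.data)

    def serve_forever(self):
        while self.running:
            self.run_once()
        self.listener.close()
        self.sel.close()
```

Calling `EventLoopServer(port=8080).serve_forever()` would run the loop on a single thread. Each connection costs only a small state dictionary rather than a thread, which is the usual argument for this design over a thread-per-request server; the sketch ignores request bodies, keep-alive, and caching.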