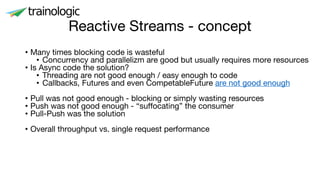

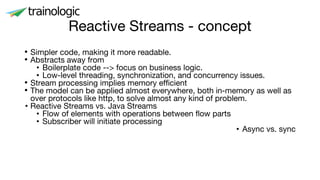

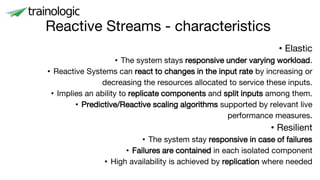

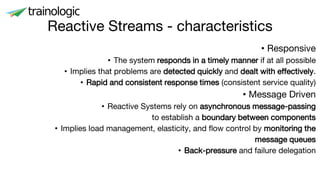

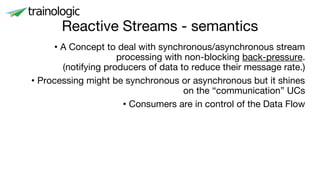

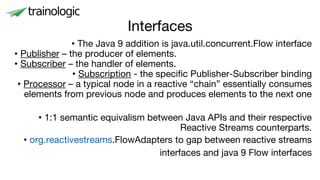

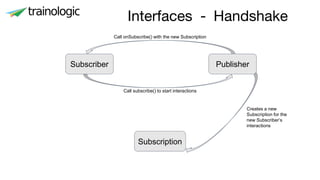

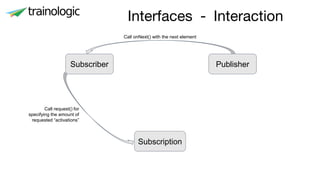

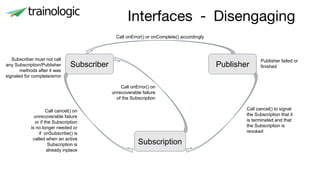

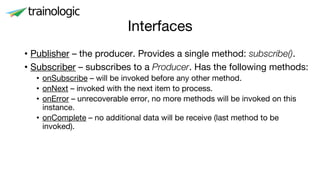

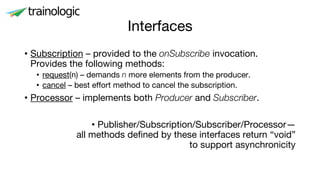

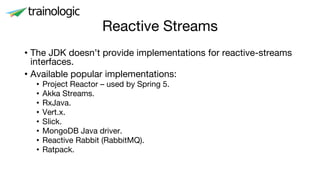

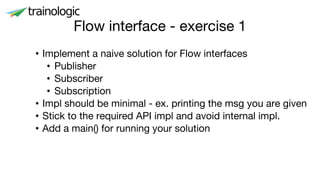

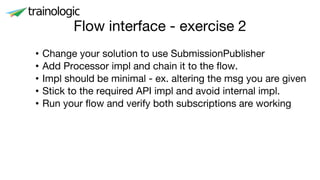

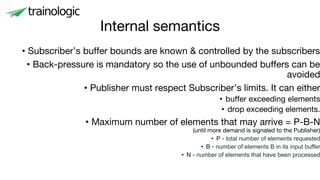

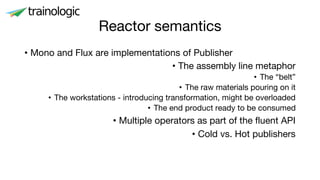

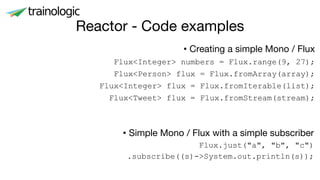

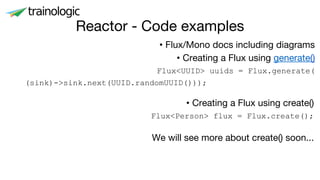

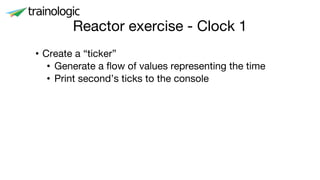

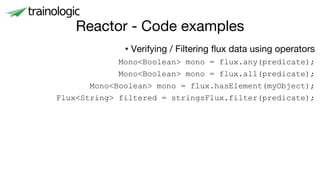

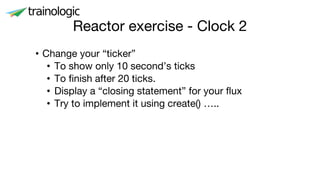

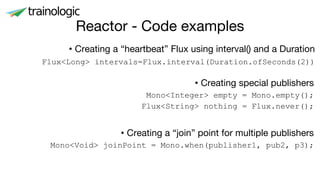

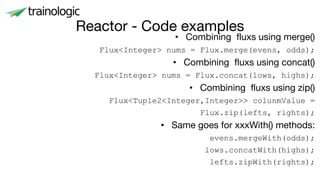

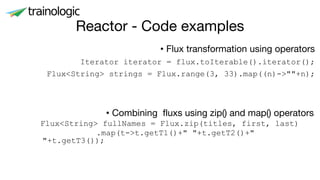

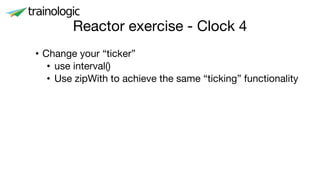

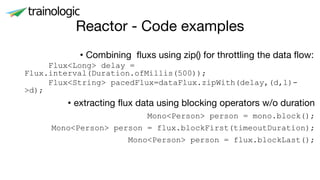

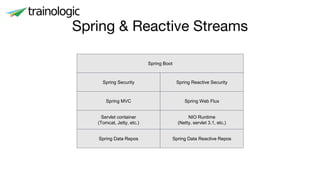

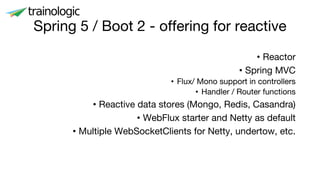

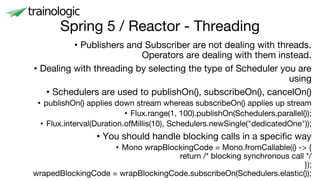

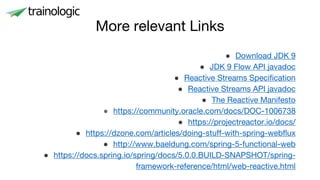

This document discusses reactive programming concepts using Java 9 and Spring Reactor. It introduces the reactive streams interfaces added in Java 9 (`Publisher`, `Subscriber`, and `Subscription`, nested in `java.util.concurrent.Flow`). It then covers the Spring Reactor types `Mono` and `Flux`, which implement the publisher side of this contract. Code examples show how to create simple reactive streams and combine them using operators. The threading model and the use of schedulers in Spring Reactor are also briefly explained.
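To make the Publisher, Subscriber, and Subscription roles concrete, here is a minimal sketch that uses only the JDK's `java.util.concurrent.Flow` API and `SubmissionPublisher`. It is not taken from the slides: the class name `FlowDemo`, the `CollectingSubscriber` helper, and the `publishAll` method are hypothetical names chosen for illustration, and the one-item-at-a-time request strategy is just one simple way to apply backpressure.

```java
import java.util.ArrayList;
import java.util.List;
import java.util.concurrent.CountDownLatch;
import java.util.concurrent.Flow;
import java.util.concurrent.SubmissionPublisher;

public class FlowDemo {

    // Hypothetical subscriber that collects items and requests them one at a time.
    static class CollectingSubscriber implements Flow.Subscriber<Integer> {
        private final List<Integer> received = new ArrayList<>();
        private final CountDownLatch done = new CountDownLatch(1);
        private Flow.Subscription subscription;

        @Override
        public void onSubscribe(Flow.Subscription subscription) {
            this.subscription = subscription;
            subscription.request(1); // signal demand for the first item
        }

        @Override
        public void onNext(Integer item) {
            received.add(item);
            subscription.request(1); // ask for the next item only after handling this one
        }

        @Override
        public void onError(Throwable throwable) {
            done.countDown(); // terminal signal: release the waiting caller
        }

        @Override
        public void onComplete() {
            done.countDown(); // terminal signal: release the waiting caller
        }

        List<Integer> await() {
            try {
                done.await(); // block until onComplete or onError has arrived
            } catch (InterruptedException e) {
                Thread.currentThread().interrupt();
                throw new IllegalStateException(e);
            }
            return received;
        }
    }

    // Publishes every item to a single subscriber and returns what it received.
    static List<Integer> publishAll(List<Integer> items) {
        CollectingSubscriber subscriber = new CollectingSubscriber();
        try (SubmissionPublisher<Integer> publisher = new SubmissionPublisher<>()) {
            publisher.subscribe(subscriber);
            items.forEach(publisher::submit);
        } // close() delivers pending items, then signals onComplete
        return subscriber.await();
    }

    public static void main(String[] args) {
        System.out.println(publishAll(List.of(1, 2, 3))); // prints [1, 2, 3]
    }
}
```

The subscriber controls the pace: nothing is delivered until it calls `request`, which is the backpressure mechanism that the reactive streams contract defines. Reactor's `Flux` and `Mono` follow the same subscribe, request, and signal sequence, so for example `Flux.just(1, 2, 3).subscribe(...)` plays the role that `SubmissionPublisher` plays in this sketch.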