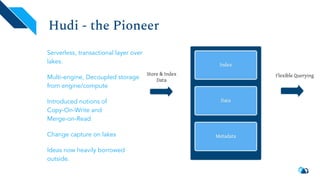

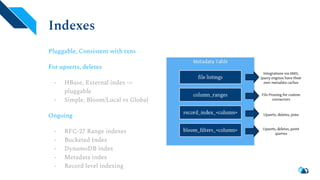

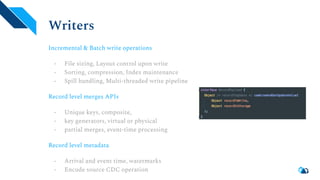

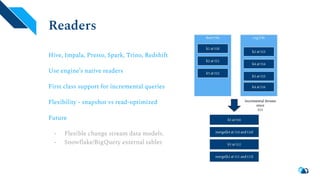

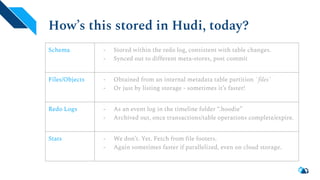

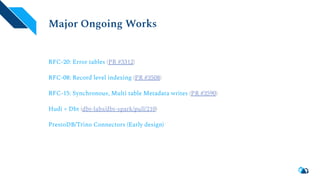

Apache Hudi is a serverless transactional layer for data lakes that supports both streaming and batch pipelines. Its components cover table metadata management, indexing, and concurrency control, and it scales on Hadoop-compatible storage systems. An active community contributes ongoing improvements and new features in areas such as indexing, schema evolution, and integration with various data processing engines.
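To make the write path a little more concrete, here is a minimal sketch of how a batch upsert into a Hudi table is typically configured from Spark. The option keys follow the Hudi Spark datasource as commonly documented and may differ between Hudi versions; the helper `hudi_upsert_options`, the table name `trips`, the column names, and the output path are all hypothetical and chosen only for illustration.

```python
from typing import Dict


def hudi_upsert_options(
    table_name: str,
    record_key: str,
    precombine_field: str,
    partition_field: str,
    table_type: str = "COPY_ON_WRITE",
) -> Dict[str, str]:
    """Build Spark datasource options for an upsert into a Hudi table.

    Hypothetical helper: it only assembles a dict of option keys and
    does not talk to Spark or Hudi itself.
    """
    if table_type not in ("COPY_ON_WRITE", "MERGE_ON_READ"):
        raise ValueError(f"unsupported table type: {table_type}")
    return {
        "hoodie.table.name": table_name,
        # Column that uniquely identifies a record.
        "hoodie.datasource.write.recordkey.field": record_key,
        # Column used to pick the latest version when keys collide.
        "hoodie.datasource.write.precombine.field": precombine_field,
        # Column used to lay out partitions on storage.
        "hoodie.datasource.write.partitionpath.field": partition_field,
        "hoodie.datasource.write.table.type": table_type,
        "hoodie.datasource.write.operation": "upsert",
    }


# Assumed example values; none of these names come from the source.
options = hudi_upsert_options("trips", "trip_id", "updated_at", "city")

# With a Spark session that has the Hudi bundle on its classpath, a batch
# upsert of a DataFrame `df` would then look roughly like:
#   df.write.format("hudi").options(**options).mode("append").save("/tmp/hudi/trips")
```

The same options are generally reused for streaming writes, which is one way the single table format serves both pipeline styles; the exact streaming setup depends on the engine and Hudi version in use.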