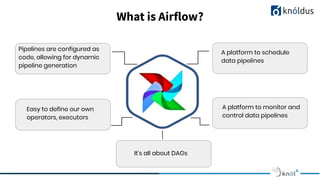

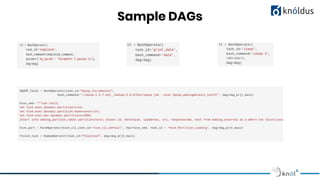

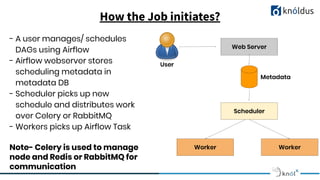

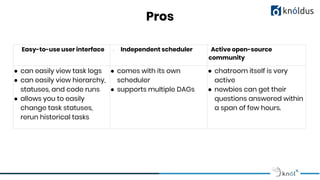

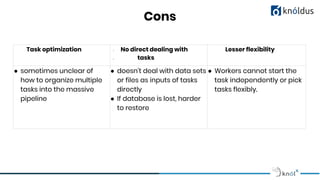

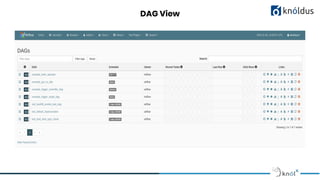

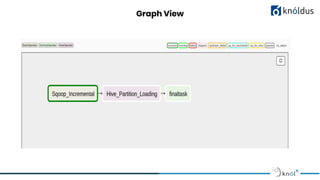

Apache Airflow is a platform for programmatically authoring, scheduling, and monitoring workflows. It was started at Airbnb in 2014 and publicly announced as an open-source project in 2015. Each workflow is defined in Python as a DAG (Directed Acyclic Graph) of tasks. Airflow offers a flexible, user-friendly web interface, supports use cases such as ETL pipelines and machine learning projects, and has a strong open-source community. Its advantages include task monitoring and extensibility, but it also has drawbacks, such as difficulty optimizing tasks and limited flexibility when handling multiple tasks.
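In Airflow, dependencies between tasks in a DAG are declared in Python, for example `extract >> transform`. The sketch below does not use Airflow itself. It uses the standard-library `graphlib` module (Python 3.9+) to show what the DAG structure gives a scheduler: a valid run order, groups of tasks that could run in parallel, and rejection of cycles. The task names and the `pipeline` dictionary are hypothetical.

```python
from graphlib import TopologicalSorter, CycleError

# Hypothetical ETL pipeline: each task maps to the set of tasks it depends on.
pipeline = {
    "extract_users": set(),
    "extract_orders": set(),
    "transform": {"extract_users", "extract_orders"},
    "load": {"transform"},
}


def run_order(graph):
    """Return one valid execution order: every task appears after its dependencies."""
    return list(TopologicalSorter(graph).static_order())


def parallel_batches(graph):
    """Group tasks into batches; tasks within a batch have no dependencies on each other."""
    sorter = TopologicalSorter(graph)
    sorter.prepare()
    batches = []
    while sorter.is_active():
        ready = sorted(sorter.get_ready())  # tasks whose dependencies are all done
        batches.append(ready)
        sorter.done(*ready)  # mark them finished so their dependents become ready
    return batches


def is_acyclic(graph):
    """A workflow is only a valid DAG if it contains no cycles."""
    try:
        TopologicalSorter(graph).prepare()  # prepare() raises CycleError on a cycle
    except CycleError:
        return False
    return True


if __name__ == "__main__":
    print(run_order(pipeline))
    print(parallel_batches(pipeline))
    # [['extract_orders', 'extract_users'], ['transform'], ['load']]
    print(is_acyclic({"a": {"b"}, "b": {"a"}}))  # False
```

The two extract tasks share a batch because neither depends on the other. Airflow's scheduler can use the same kind of information to run independent tasks concurrently, although its actual scheduling logic is far more involved than this sketch.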