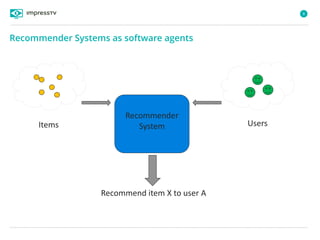

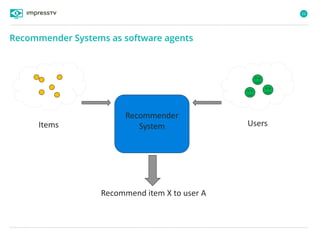

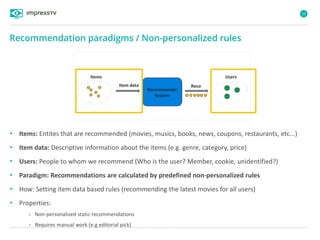

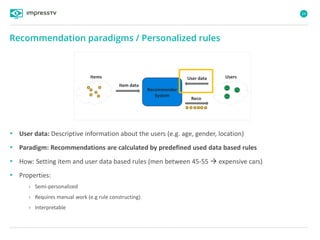

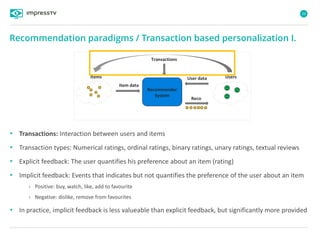

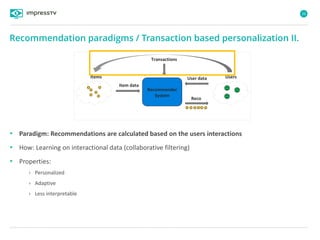

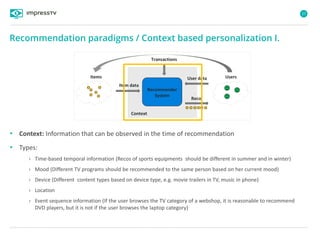

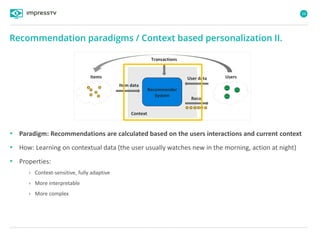

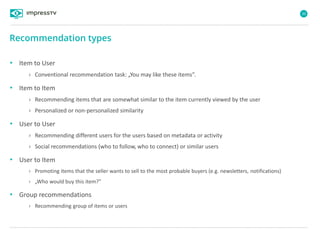

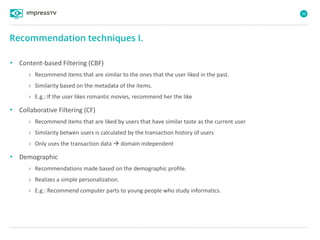

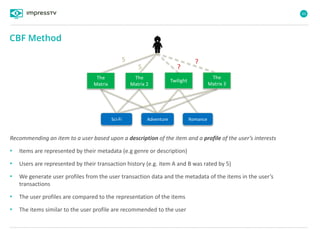

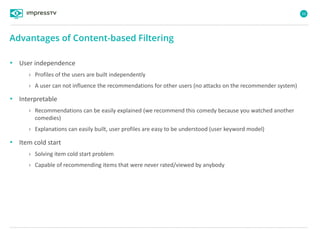

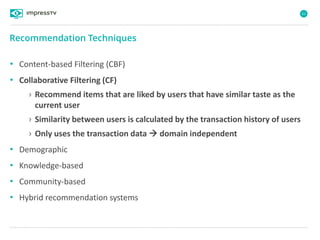

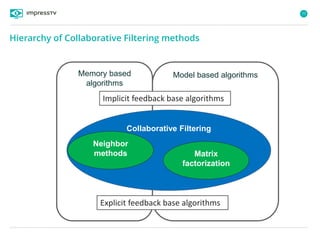

Recommender systems are software agents that infer a user's preferences from their transactions and make personalized recommendations accordingly. Recommendation paradigms include non-personalized rules, personalized rules based on user data, and transaction-based collaborative filtering, which learns from user interactions. Context-based recommender systems additionally consider information such as time, location, or device to adapt their recommendations. Common techniques include content-based filtering, which recommends items similar to those a user already liked; collaborative filtering, which draws on users with similar tastes; and demographic-based recommendation.
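To make the collaborative filtering idea concrete, here is a minimal sketch of user-based collaborative filtering in Python. The ratings data, the function names `cosine` and `recommend`, and the choice of cosine similarity are illustrative assumptions, not something taken from the slides; real systems typically use larger data, normalization, and more careful neighbor selection.

```python
from math import sqrt

# Hypothetical toy ratings (user -> {item: rating}); not from the source.
ratings = {
    "alice": {"A": 5, "B": 4, "C": 1},
    "bob": {"A": 5, "B": 5, "D": 4},
    "carol": {"A": 1, "C": 5, "E": 4},
}


def cosine(u, v):
    """Cosine similarity of two rating dicts; unrated items count as 0."""
    dot = sum(u[i] * v[i] for i in set(u) & set(v))
    norm_u = sqrt(sum(r * r for r in u.values()))
    norm_v = sqrt(sum(r * r for r in v.values()))
    if norm_u == 0 or norm_v == 0:
        return 0.0
    return dot / (norm_u * norm_v)


def recommend(user, k=2):
    """Rank items the user has not rated by similarity-weighted ratings."""
    own = ratings[user]
    scores = {}
    for other, other_ratings in ratings.items():
        if other == user:
            continue
        sim = cosine(own, other_ratings)
        for item, rating in other_ratings.items():
            if item not in own:
                # Ratings from more similar users contribute more.
                scores[item] = scores.get(item, 0.0) + sim * rating
    ranked = sorted(scores, key=scores.get, reverse=True)
    return ranked[:k]


print(recommend("alice"))
```

In this toy data, bob's ratings overlap far more with alice's than carol's do, so the item only bob rated ranks above the item only carol rated. A content-based variant would instead compare item attributes rather than users.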

![Slide 7: What are Recommender Systems? "Recommender Systems (RS) are software agents that elicit the interests and preferences of individual consumers […] and make recommendations accordingly. They have the potential to support and improve the quality of the decisions consumers make while searching for and selecting products online." Source: Xiao, Bo, and Izak Benbasat, 2007, E-commerce product recommendation agents: Use, characteristics, and impact, MIS Quarterly 31, 137-209.](https://image.slidesharecdn.com/introduction-to-recommender-systems-david-zibriczky-20141204-pub-160219114326/85/An-introduction-to-Recommender-Systems-7-320.jpg)