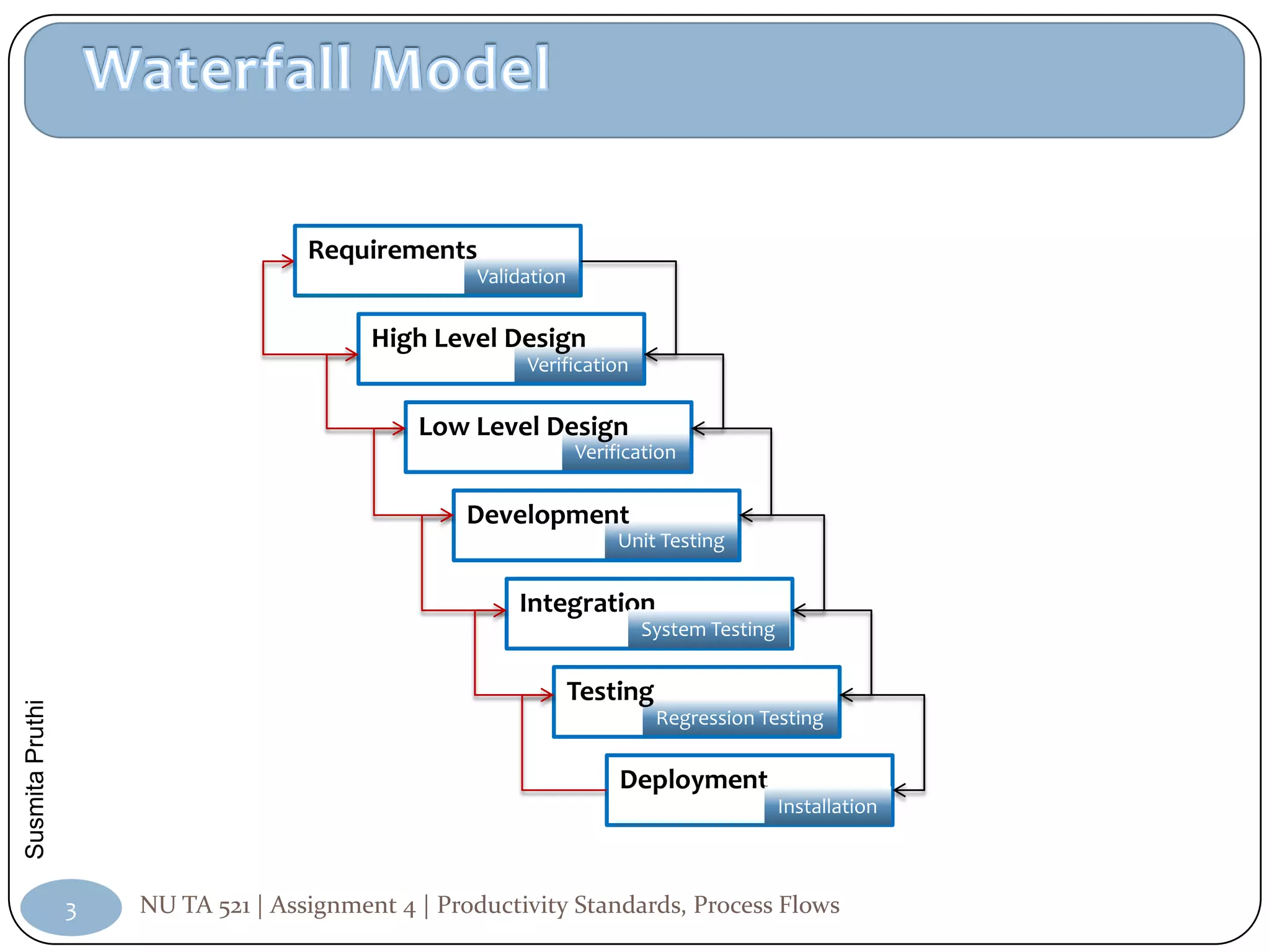

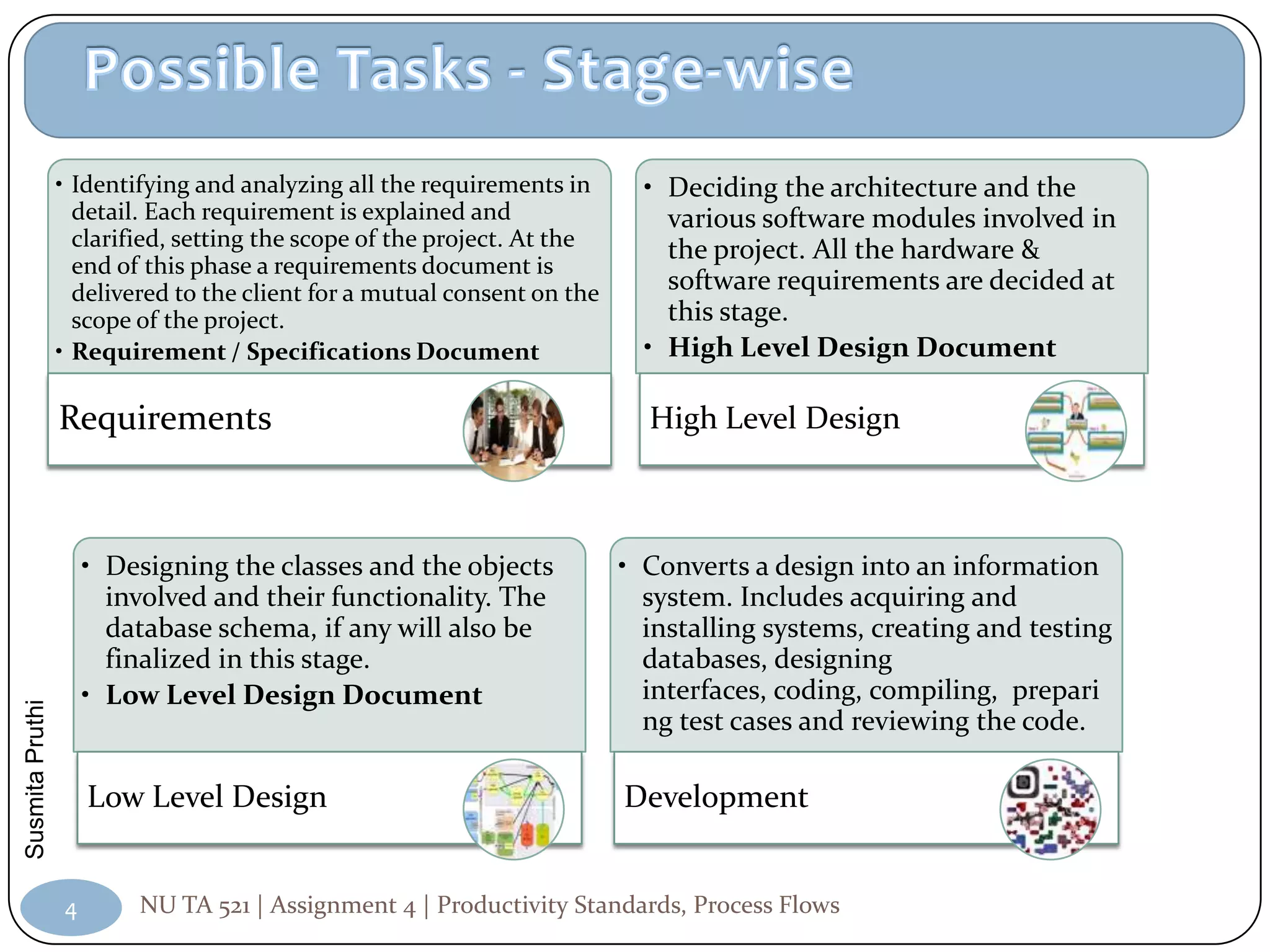

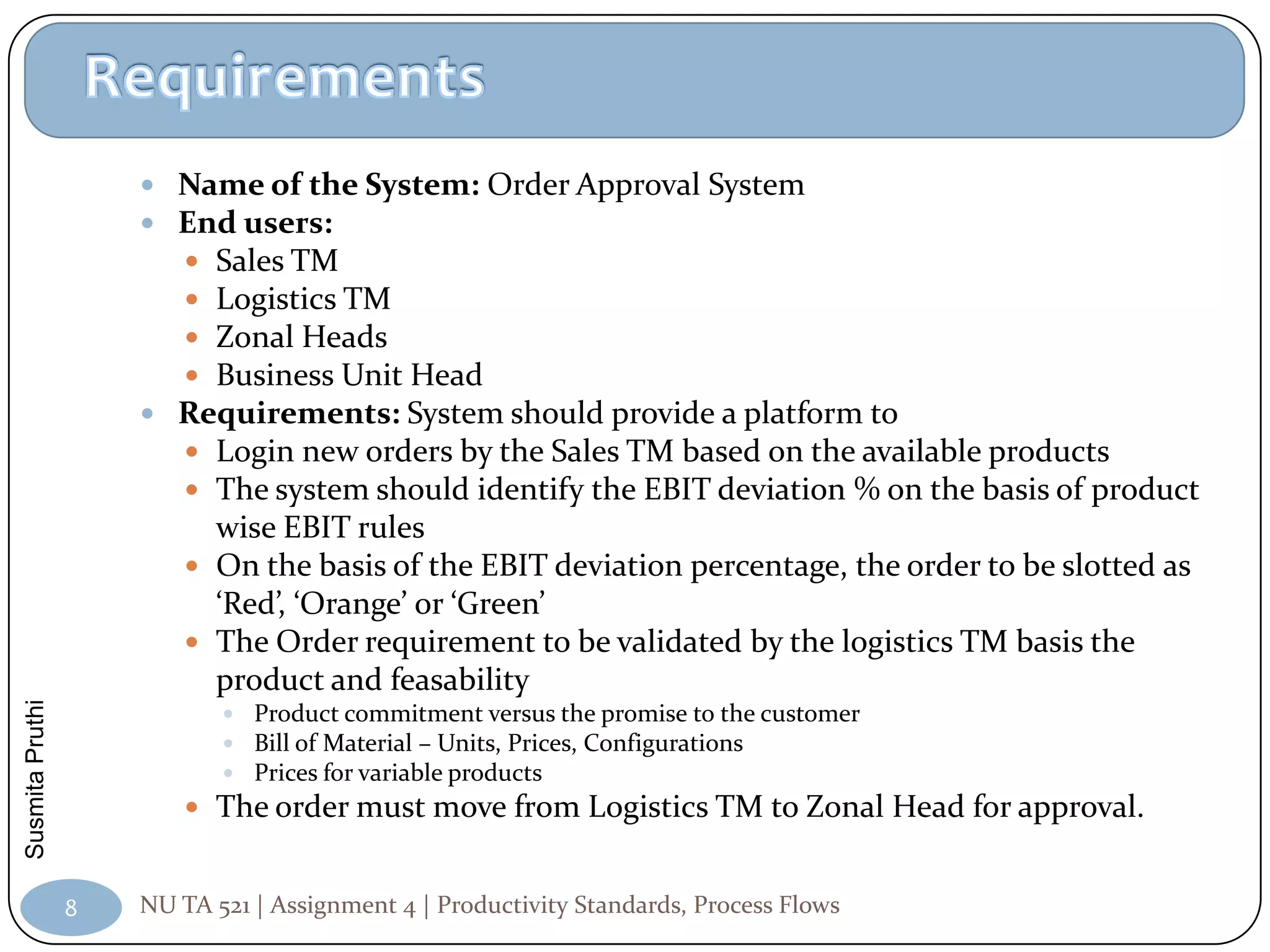

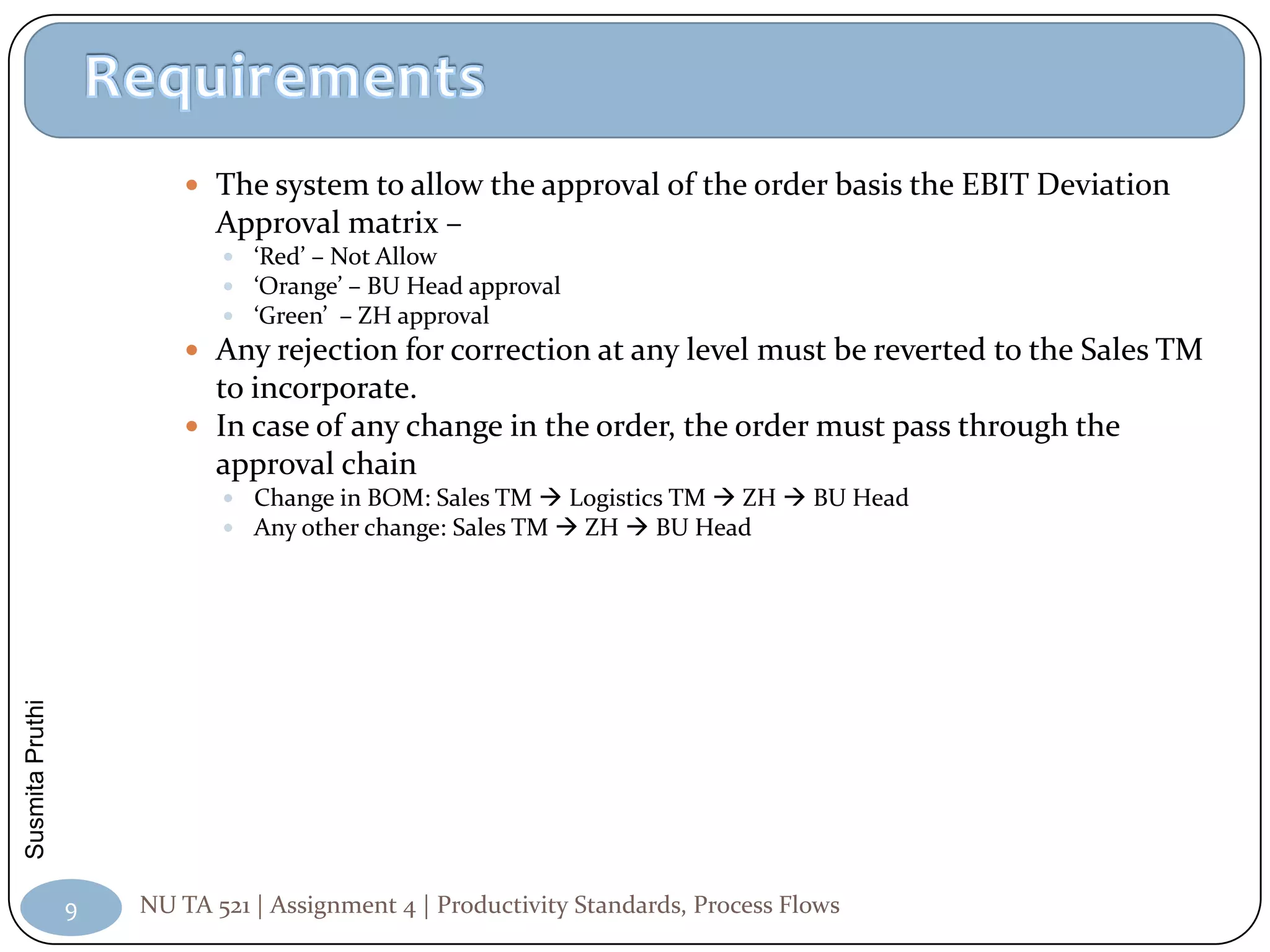

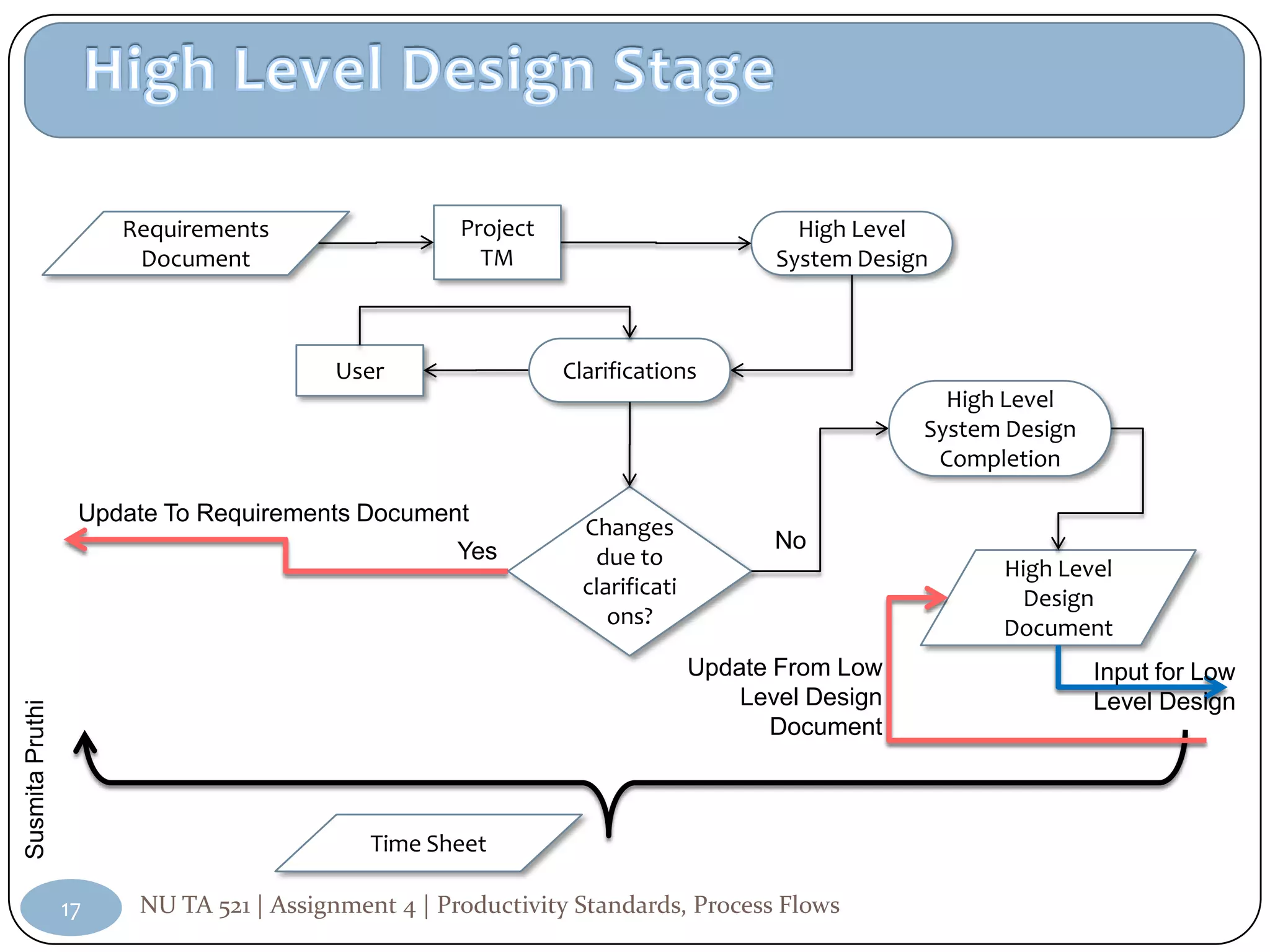

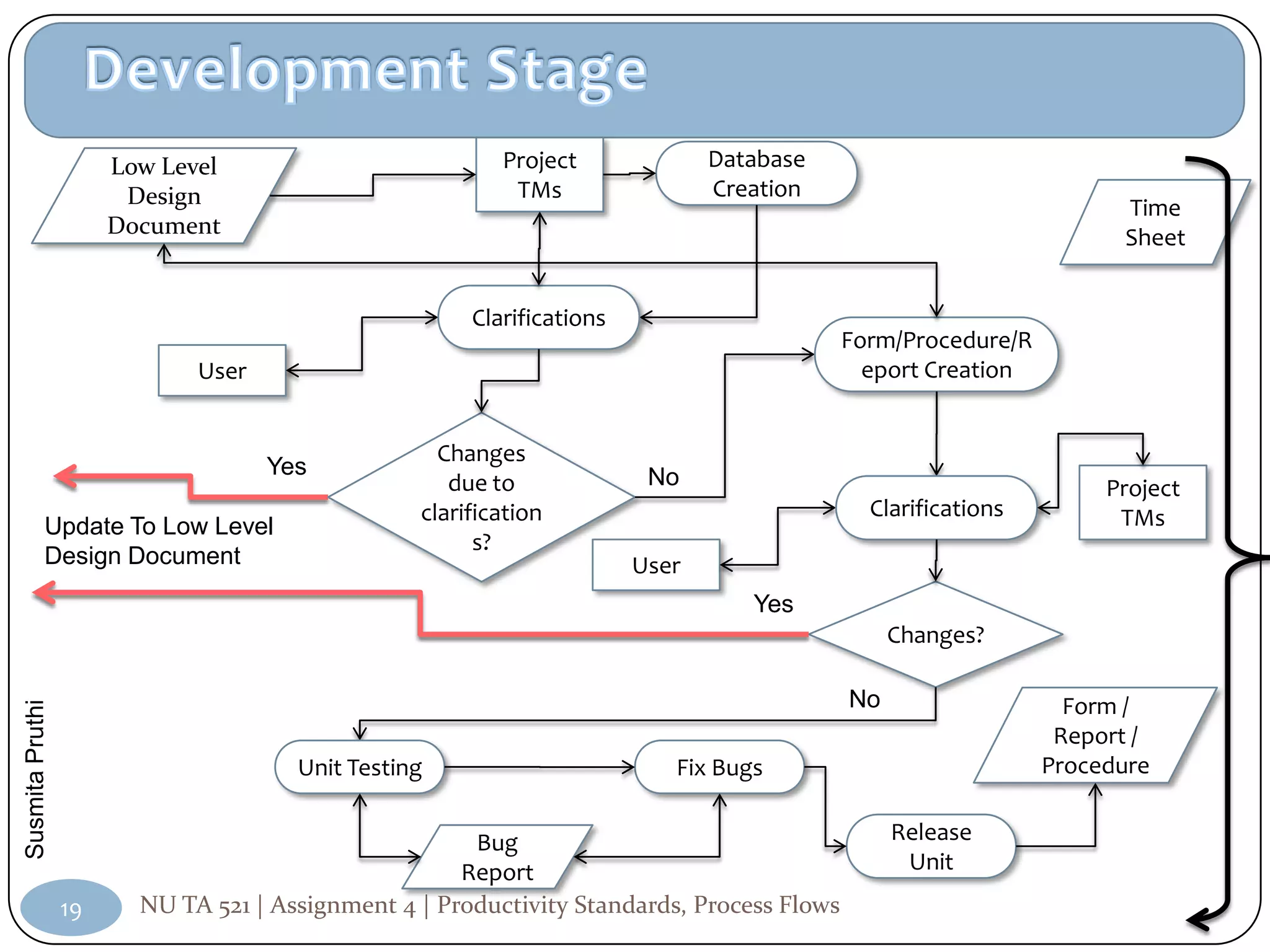

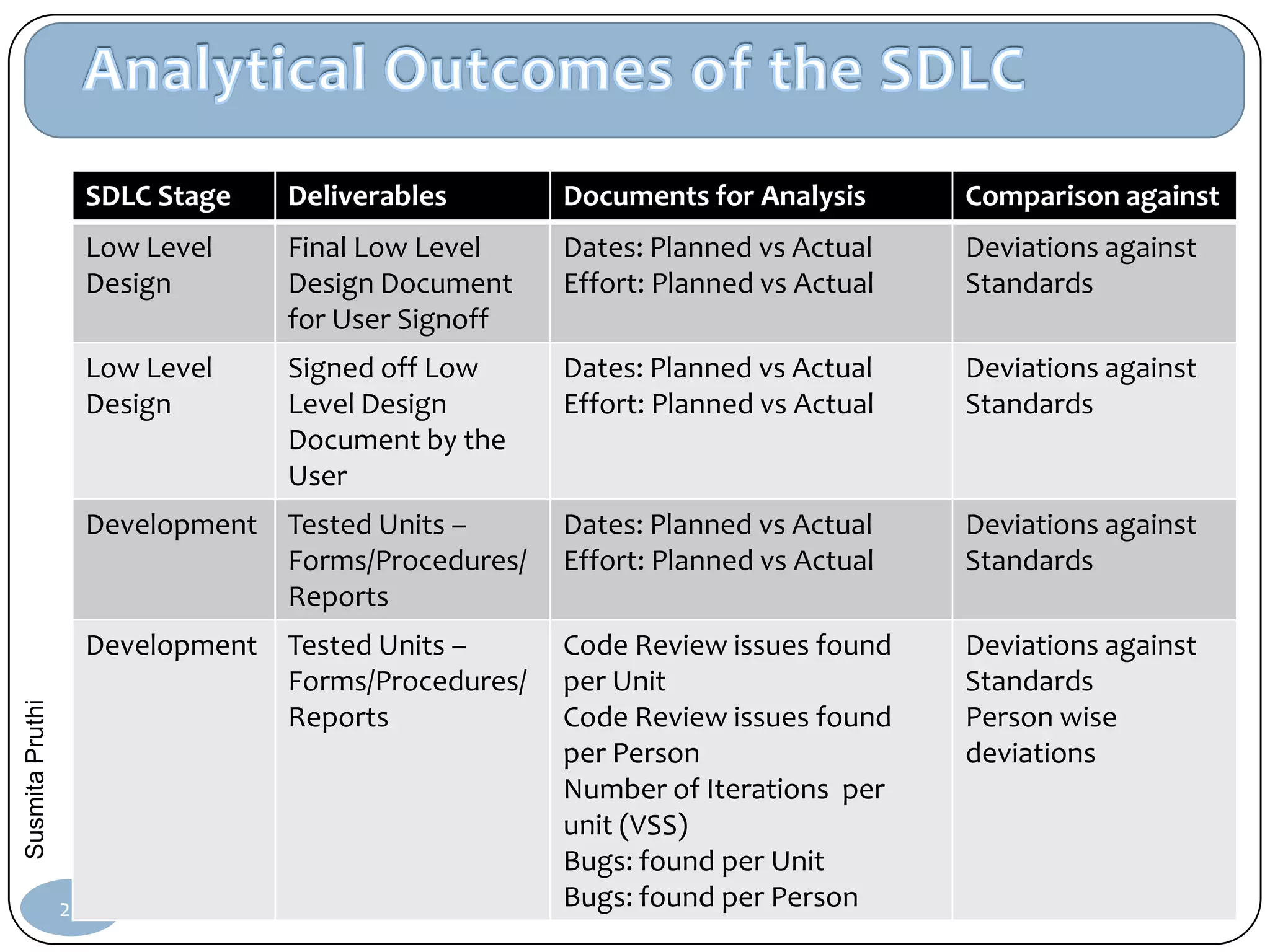

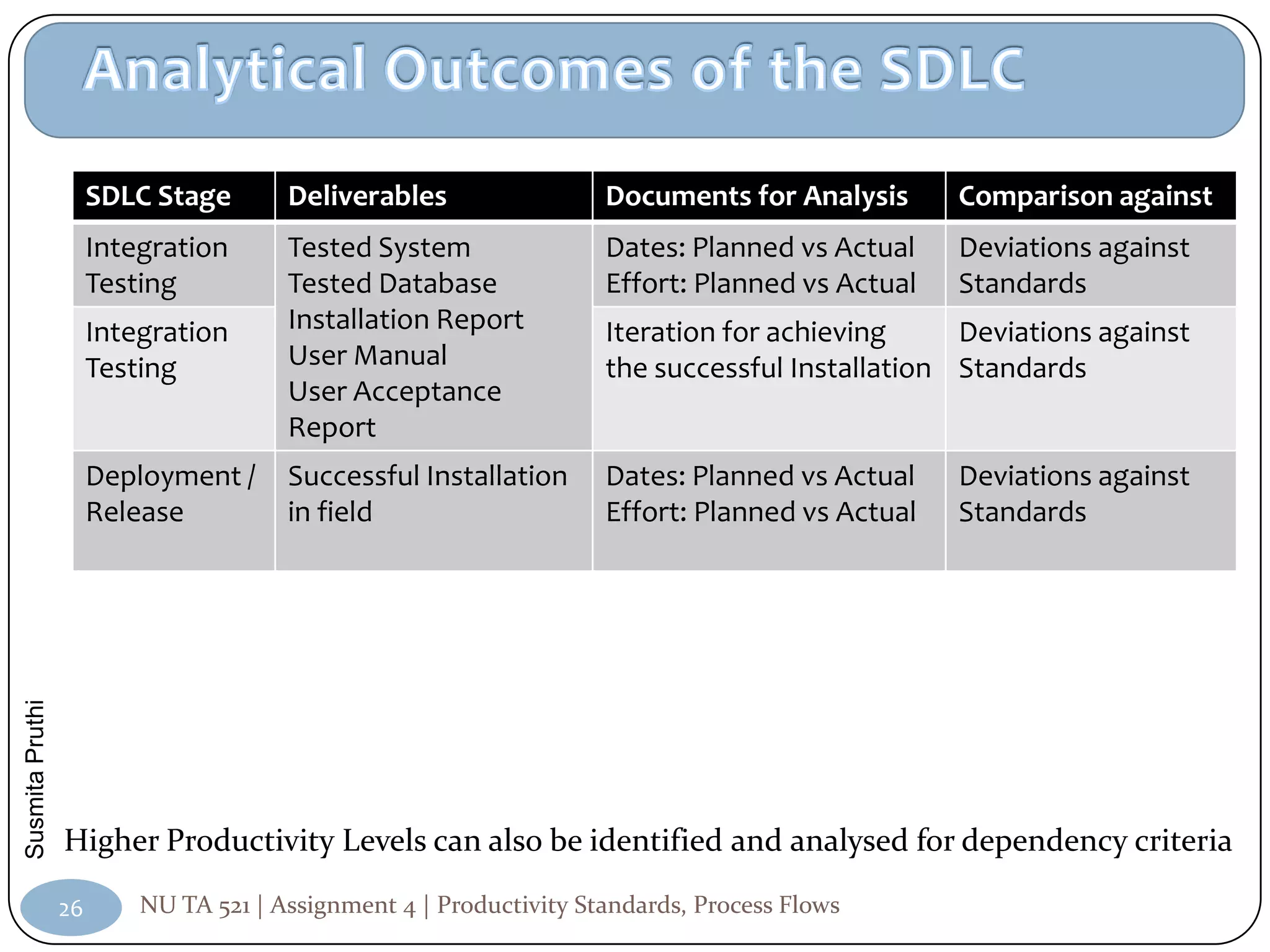

The document describes how to create a software development lifecycle (SDLC) using the waterfall model and data flow diagram (DFD) principles, with the goal of optimizing the SDLC for measurement and analysis. It instructs the reader to take the initial SDLC and add phases or stages until the analysis reaches a second level of productivity. The requirements are to create a workflow, state the assumptions, and structure the phases so that the SDLC is optimized.
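The workflow requirement can be illustrated with a small model. This is a minimal sketch only, under stated assumptions: the phase names, the data items each phase consumes and produces, and the helper `validate_workflow` are all hypothetical and do not come from the source. Each waterfall phase is treated like a DFD process with input and output data flows. The check confirms that every input is either supplied externally or produced by an earlier phase, which is the strict ordering the waterfall model implies. Each phase also carries its own list of assumptions, so they are recorded next to the stage they affect.

```python
from dataclasses import dataclass, field


@dataclass
class Phase:
    """One waterfall stage, described DFD-style by the data it consumes and produces."""
    name: str
    inputs: list[str]
    outputs: list[str]
    assumptions: list[str] = field(default_factory=list)


def validate_workflow(phases: list[Phase], external_inputs: set[str]) -> list[str]:
    """Return one message per phase input that is neither supplied externally
    nor produced by an earlier phase (a violation of strict waterfall order)."""
    available = set(external_inputs)
    problems = []
    for phase in phases:
        for item in phase.inputs:
            if item not in available:
                problems.append(f"{phase.name}: input '{item}' is not produced upstream")
        # Outputs become available only to the phases that come after this one.
        available.update(phase.outputs)
    return problems


# Hypothetical phases and data flows, chosen for illustration only.
WATERFALL = [
    Phase("Requirements", ["stakeholder needs"], ["requirements spec"],
          ["requirements are stable once signed off"]),
    Phase("Design", ["requirements spec"], ["design document"]),
    Phase("Implementation", ["design document"], ["source code"]),
    Phase("Testing", ["source code", "requirements spec"], ["test report"]),
    Phase("Deployment", ["source code", "test report"], ["released system"]),
    Phase("Maintenance", ["released system"], ["change requests"]),
]

if __name__ == "__main__":
    issues = validate_workflow(WATERFALL, {"stakeholder needs"})
    print(issues or "workflow is consistent")
```

Adding a new phase or stage then means inserting another `Phase` at the right position and re-running the check. If the new stage depends on data that nothing upstream produces, the check reports it instead of letting the gap pass silently.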