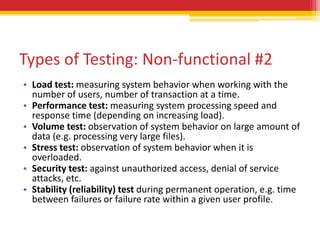

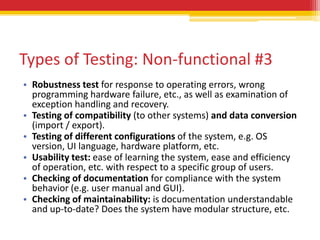

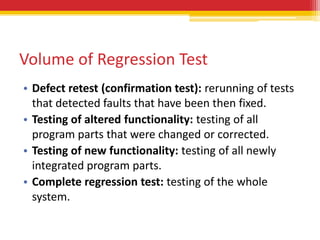

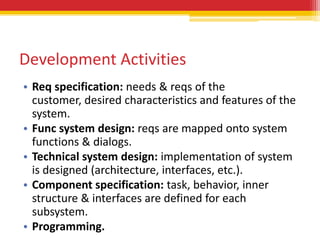

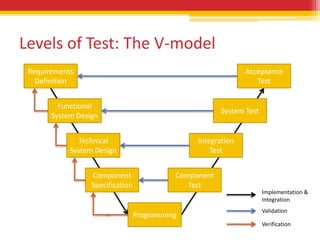

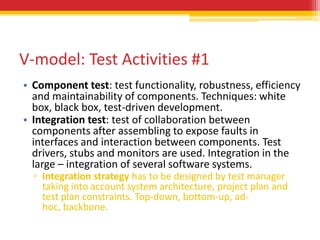

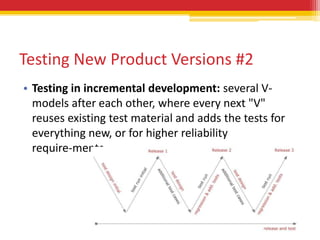

This document covers the types and levels of software testing across the software development lifecycle. It describes the V-model, which pairs each development activity with a corresponding testing activity. Component and integration testing occur at the lower levels, while system and acceptance testing occur at the higher levels. Regression testing is needed whenever a new product version is released or existing code is changed. Functional testing checks what the system does, while non-functional testing examines qualities such as performance, security and usability. Structural testing targets the internal structure of the software, and change-related testing targets modifications made between versions.

![V-model: Test Activities #2](https://image.slidesharecdn.com/softwaretestingfoundationspart2-testinginswlifecycle-121017034535-phpapp02/85/Software-Testing-Foundations-Part-2-Testing-in-Software-Lifecycle-6-320.jpg)

- System test: check if the integrated product meets the specified requirements. System is examined from the point of view of customer and future user. Environment should be as close to the operation environment as possible, but this should NOT be the customer’s operational environment.
- Acceptance test: “Formal testing with respect to user needs, requirements, and business processes conducted to determine whether or not a system satisfies the acceptance criteria and to enable the user, customers or other authorized entity to determine whether or not to accept the system.” [After IEEE 610]. Customer must be involved.

![Types of Testing: Non-functional #1](https://image.slidesharecdn.com/softwaretestingfoundationspart2-testinginswlifecycle-121017034535-phpapp02/85/Software-Testing-Foundations-Part-2-Testing-in-Software-Lifecycle-13-320.jpg)

Test how well the (partial) system carries out its functions.

[ISO 9126]: reliability, usability, efficiency, maintainability, portability.
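
To make the distinction concrete, here is a minimal sketch contrasting a functional check with a non-functional (efficiency) check on the same code. Everything in it is an assumption made for illustration: the function `sort_records`, the input size, and the 2-second time budget do not come from the slides, and a real efficiency test would take its budget from the stated requirements.

```python
import time


def sort_records(records):
    """Hypothetical function under test: returns the records in ascending order."""
    return sorted(records)


def functional_check():
    # Functional: does the function produce the correct result?
    return sort_records([3, 1, 2]) == [1, 2, 3]


def efficiency_check(n=50_000, budget_seconds=2.0):
    # Non-functional (efficiency): does the function finish within
    # an assumed time budget for an input of assumed size n?
    data = list(range(n, 0, -1))  # reverse-ordered input
    start = time.perf_counter()
    sort_records(data)
    elapsed = time.perf_counter() - start
    return elapsed <= budget_seconds


if __name__ == "__main__":
    print("functional check passed:", functional_check())
    print("efficiency check passed:", efficiency_check())
```

Both checks exercise the same function. The first asks whether the result is right, the second asks how well the function performs while producing it.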