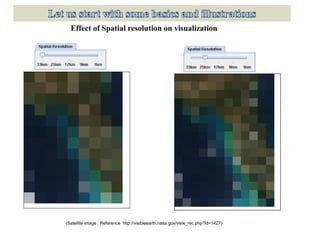

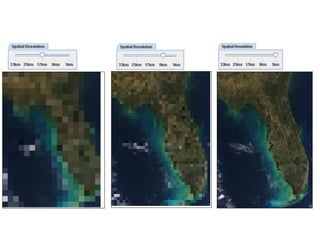

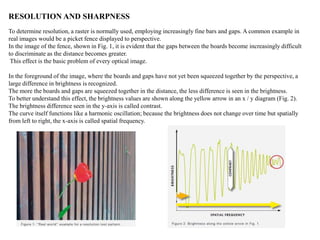

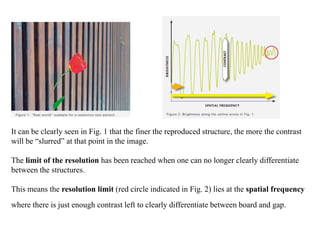

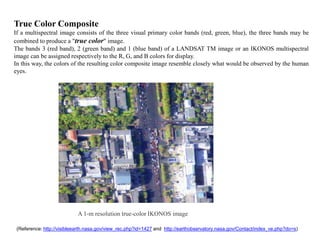

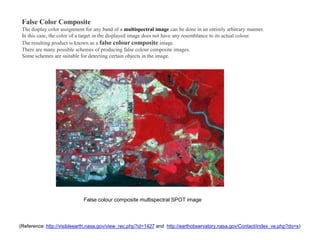

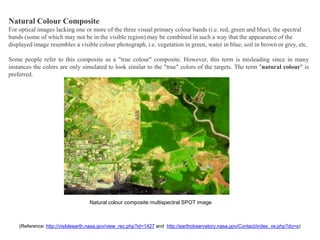

Spatial resolution is the ability of an imaging system to distinguish two closely spaced objects, or fine detail, in an image. Higher spatial resolution means finer detail can be resolved. It depends on the properties of the whole imaging system, not on pixel count alone: because color images require interpolation between sensor pixels, the detail actually resolved can be coarser than the pixel count suggests. Spatial resolution is measured differently for different media, such as film, digital cameras, and microscopes. In practice, it determines whether fine detail, such as the gaps in a fence, can still be made out as the distance to the subject increases.
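
The fence example can be made concrete with a rough calculation. The sketch below is illustrative only and rests on assumptions not stated above: a thin-lens approximation with the subject much farther away than the focal length, a sensor with a uniform pixel pitch, and the common sampling rule of thumb that a repeating pattern needs at least two pixels per period (one slat plus one gap) to be resolved. It ignores lens blur and color interpolation, both of which reduce real-world resolution further. The function names and the example numbers are hypothetical.

```python
def pixels_per_period(period_m, distance_m, focal_length_m, pixel_pitch_m):
    """Number of sensor pixels spanned by one pattern period (slat + gap).

    Assumes a thin lens and distance_m much larger than focal_length_m,
    so the size on the sensor is roughly period * focal_length / distance.
    """
    image_size_m = period_m * focal_length_m / distance_m
    return image_size_m / pixel_pitch_m


def fence_is_resolvable(period_m, distance_m, focal_length_m, pixel_pitch_m,
                        min_pixels_per_period=2.0):
    """True if the pattern is sampled with at least min_pixels_per_period.

    The default of 2 pixels per period is a sampling rule of thumb, not a
    property of any particular camera.
    """
    sampled = pixels_per_period(period_m, distance_m, focal_length_m, pixel_pitch_m)
    return sampled >= min_pixels_per_period


# Hypothetical setup: 5 cm slats with 5 cm gaps (10 cm period),
# a 50 mm lens, and 5 micrometre pixels.
PERIOD_M = 0.10
FOCAL_LENGTH_M = 0.050
PIXEL_PITCH_M = 5e-6

for distance_m in (10.0, 100.0, 1000.0):
    sampled = pixels_per_period(PERIOD_M, distance_m, FOCAL_LENGTH_M, PIXEL_PITCH_M)
    ok = fence_is_resolvable(PERIOD_M, distance_m, FOCAL_LENGTH_M, PIXEL_PITCH_M)
    print(f"{distance_m:7.0f} m: {sampled:6.1f} pixels per period, resolvable={ok}")
```

Under these assumptions the same pixel count resolves the fence easily at short range and fails at long range, which is why pixel count by itself says little about the detail that ends up in the image.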