Operant Conditioning: Reinforcement and Punishment Shape Behavior

- Operant Conditioning: A method of learning in which behavior is shaped by its consequences, namely reinforcements and punishments. We learn to perform certain behaviors more often because they lead to rewards, and to avoid other behaviors because they lead to punishment or other adverse consequences.

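- Operant Conditioning: Experiences shape our future behavior choices, even if we don’t realize it is happening. “Reinforcement” is any consequence that makes a behavior more likely to happen again; “punishment” is any consequence that makes it less likely. Remember, “positive” means something is added to the environment, and “negative” means something is taken away. For example, excusing a student from a chore because of good grades is negative reinforcement, not punishment: something unpleasant is removed, and the behavior (studying) becomes more likely.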

- Types of Reinforcement/Punishment: Keep in mind that not all rewards are physical things. Even a smile can be enough reinforcement to encourage a behavior to continue. Think of what might happen if you lost or gained each of the items listed below.

| Rational | Emotional |
| --- | --- |
| Money | Encouragement |
| Food | Attention |
| Things | Love/Affection |

- B. F. Skinner (1904-1990): An influential American psychologist, considered one of the founders of behaviorism (along with Watson and Pavlov). He identified the principles behind operant conditioning and studied the behavioral effects of reinforcement and punishment in highly controlled experiments.

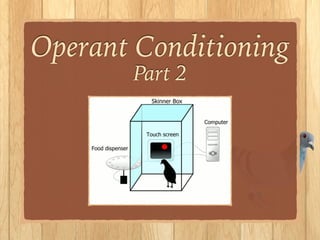

- The Skinner Box: Skinner’s operant conditioning chamber (also called a Skinner box) was designed to teach rats to press a lever. Pressing a lever is not a natural behavior for rats, so Skinner used positive and negative reinforcement to teach it.
  - Positive reinforcement: the rat was rewarded with food when it pressed the lever.
  - Negative reinforcement: the rat could turn off an electric current running through the floor by pressing the lever.

- Positive Reinforcement
  - Initially, the rat’s behavior was random. It tripped the lever by accident, and a food pellet was released.
  - The rat soon discovered that pressing the lever on purpose produced a reward.
  - Because the consequence of the behavior (the lever press) was desirable, the rat became more likely to repeat it.

- Negative Reinforcement
  - An unpleasant electric current ran through the floor of the rat’s cage.
  - At first, the rat pressed the lever by accident, which turned off the current.
  - Because pressing the lever removed something painful (an unpleasant stimulus), the rat continued to press it.

- Variable Schedule of Reinforcement: Skinner found that behaviors are performed most frequently when rewards are not given on a consistent schedule. Rewards delivered at unpredictable times cause the behavior to increase sharply. This helps explain why slot machines, which pay out unpredictably, are so addictive.

- Schedules of Reinforcement
  - Continuous schedule of reinforcement: a reward is given every time the behavior is performed. When first teaching a behavior, this schedule helps the subject learn quickly.
  - Variable reinforcement schedule: the behavior is rewarded at random (unpredictable) times. In the long run, this schedule causes the subject to perform the behavior more often and to keep performing it for longer, even after rewards stop.
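To make the difference between the two schedules concrete, here is a minimal Python sketch that only decides which responses earn a reward; it does not model how the subject’s behavior changes. As an assumption for illustration, the variable schedule rewards each response with a fixed probability (1 / `mean_ratio`), so rewards arrive after an unpredictable number of responses, much like a slot machine. The function names and the `mean_ratio` parameter are hypothetical.

```python
import random


def continuous_schedule(num_responses):
    """Continuous reinforcement: every response is rewarded."""
    return [True] * num_responses


def variable_schedule(num_responses, mean_ratio, seed=None):
    """Simplified variable schedule (hypothetical): each response is rewarded
    with probability 1 / mean_ratio, so rewards come after an unpredictable
    number of responses that averages mean_ratio."""
    if mean_ratio < 1:
        raise ValueError("mean_ratio must be at least 1")
    rng = random.Random(seed)
    return [rng.random() < 1 / mean_ratio for _ in range(num_responses)]


def responses_between_rewards(schedule):
    """Count how many responses it took to earn each reward."""
    gaps, count = [], 0
    for rewarded in schedule:
        count += 1
        if rewarded:
            gaps.append(count)
            count = 0
    return gaps


if __name__ == "__main__":
    # Continuous: one response per reward, every time.
    print(responses_between_rewards(continuous_schedule(5)))  # [1, 1, 1, 1, 1]
    # Variable: the gaps between rewards change unpredictably.
    print(responses_between_rewards(variable_schedule(40, mean_ratio=4, seed=0)))
```

Under the continuous schedule the gap is always one response; under the variable schedule the subject cannot predict which response will pay off.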

- Shaping: To achieve a desired behavior, step-by-step trials are used to guide the participant toward the end goal. Skinner noticed that the pigeons in the Skinner box were not pushing the button that released food, even by accident. How could he teach a pigeon that pressing the button would lead to a positive outcome? The answer: breaking a behavior down into small steps and giving positive reinforcement along the way makes it possible to learn more complex behaviors.

- Shaping, Step by Step
  - Step 1: give the pigeon food when it turns toward the button.
  - Step 2: give the pigeon food when it walks toward the button.
  - Step 3: give the pigeon food when it raises its head to the height of the button.
  - Step 4: give the pigeon food when it taps the button with its beak.

- Shaping: What else can we train the bird to do? “We first give the bird food when it turns slightly in the direction of the spot from any part of the cage. This increases the frequency of such behavior. We then withhold reinforcement until a slight movement is made toward the spot. This again alters the general distribution of behavior. We continue by reinforcing positions successively closer to the spot, then by reinforcing only when the head is moved slightly forward, and finally only when the beak actually makes contact with the spot. ... In this way we can build complicated operants which would never appear in the repertoire of the organism otherwise.”

- Shaping: Skinner was able to teach pigeons many complex behaviors, such as distinguishing between words and knocking over bowling pins with a miniature bowling ball. The technique did not work equally well on all animals. Raccoons, for example, treated the ball itself as food and did not cooperate in the experiment!

- Shaping in Humans: Examples
  - Learning to write: you might begin by tracing letters, then connect dots or dashes, then look at letters and copy them below, and finally write the letters from memory.
  - Learning to eat with a spoon: first pick up the spoon, then put it in the bowl, scoop the food onto the spoon, lift the spoonful out of the bowl, and finally put the spoon in your mouth. Encouragement from parents along the way can reinforce each of these movements.