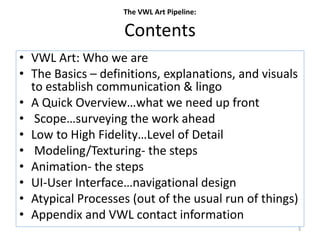

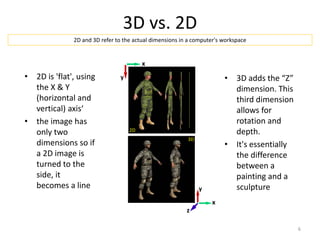

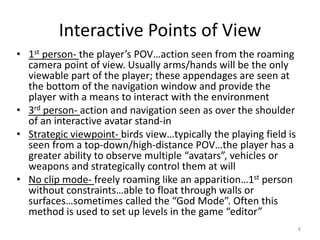

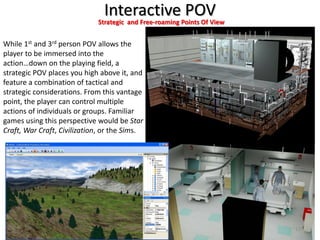

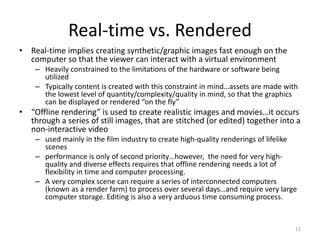

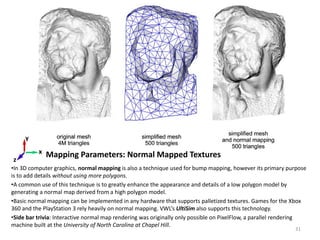

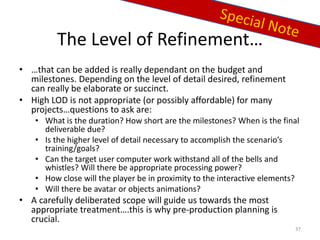

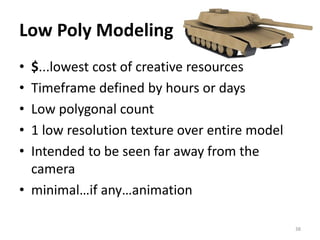

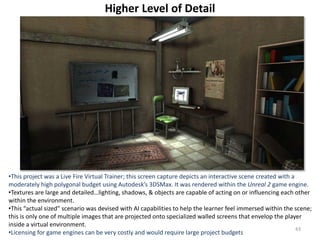

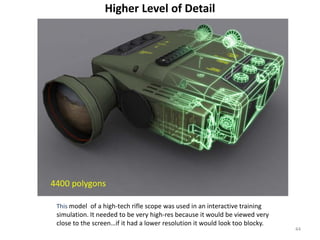

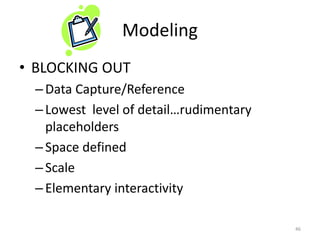

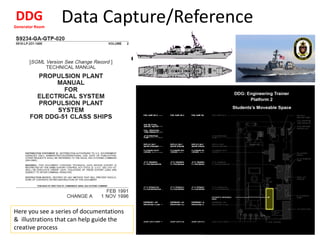

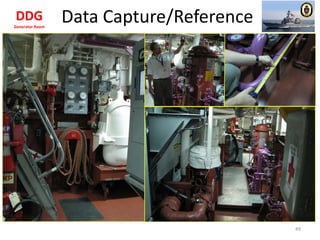

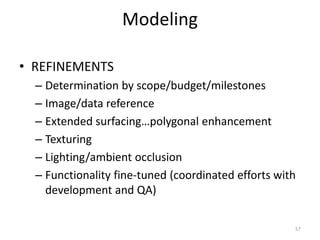

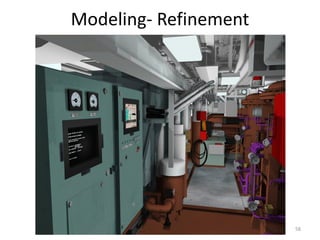

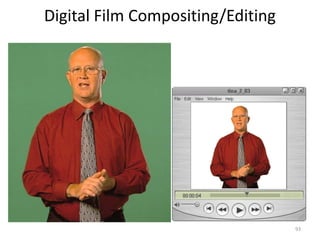

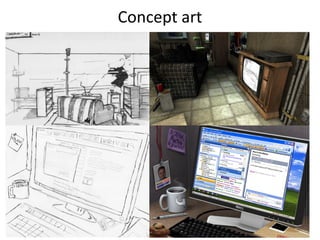

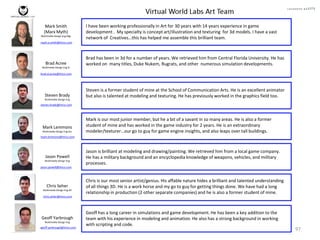

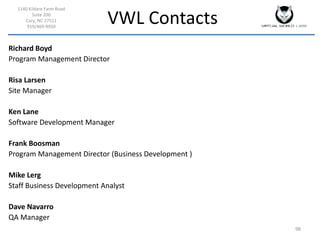

This document outlines the vwl art pipeline: the production process used to create virtual products for real-time applications, with an emphasis on efficient, precise, and flexible workflows. It covers fundamental concepts such as 3D modeling, animation, and different user interface perspectives, along with specific production requirements and constraints, including budget and security. It also describes the roles within the vwl art team and how the team collaborates with other departments to deliver effective multimedia solutions.