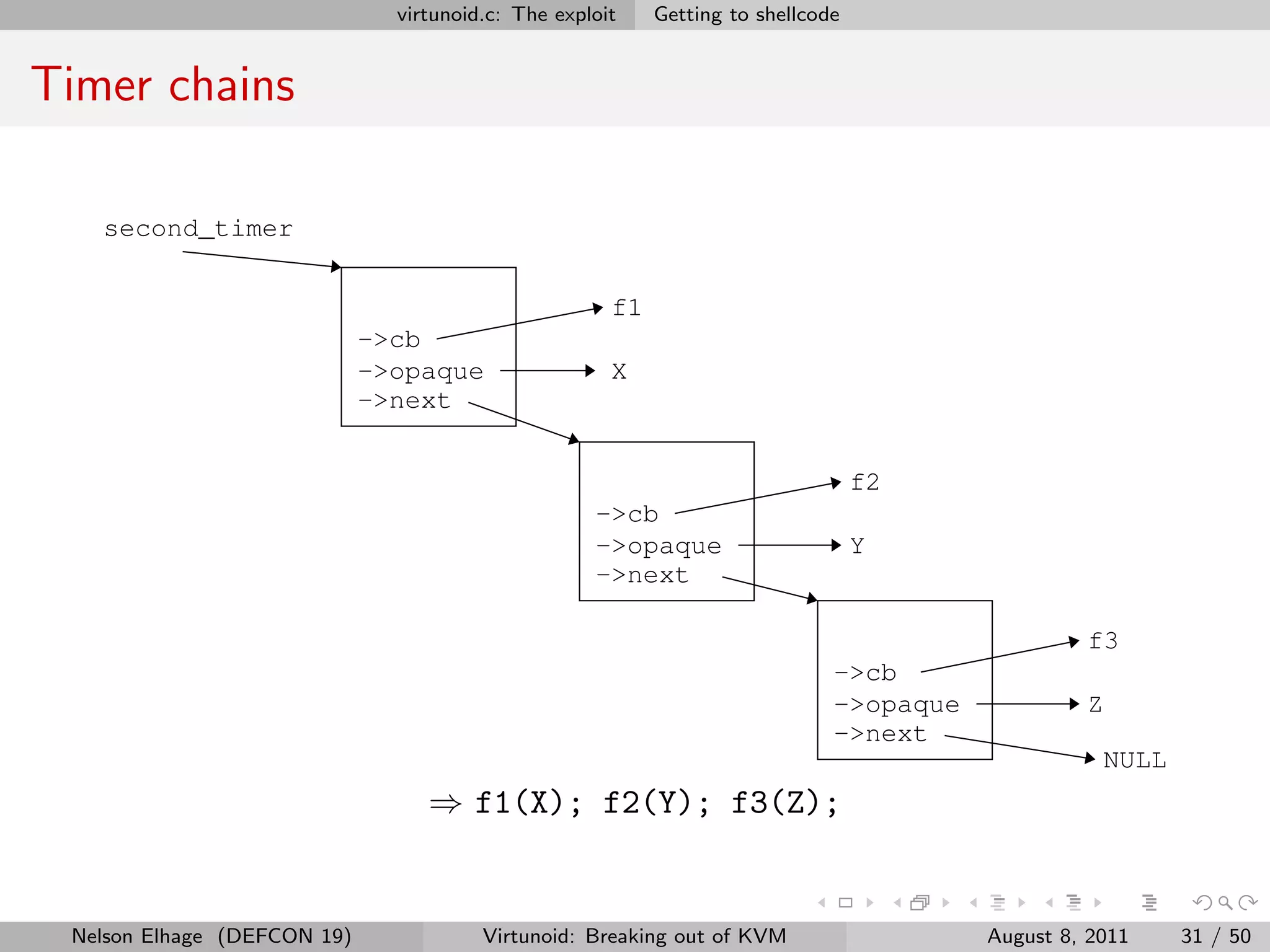

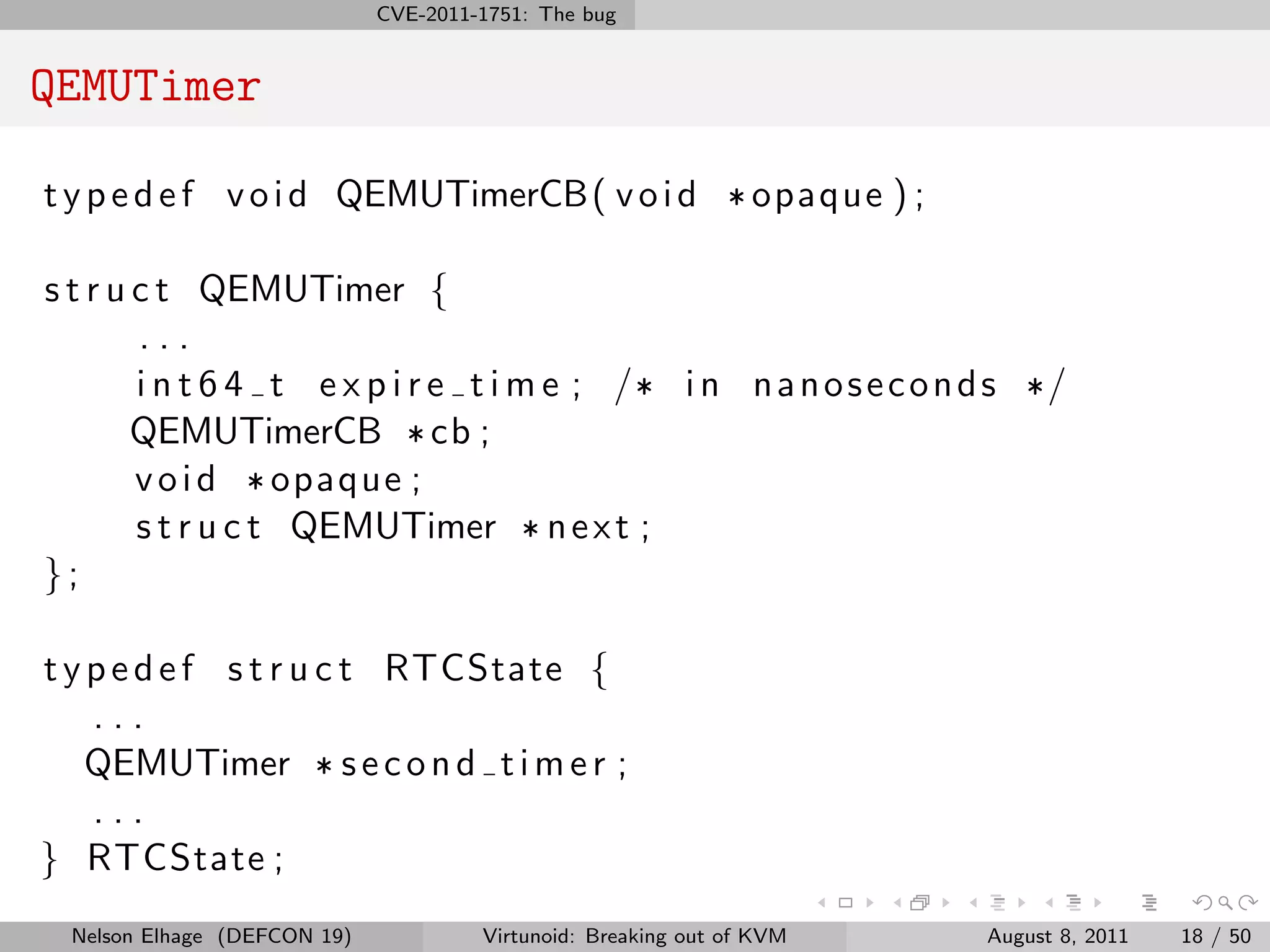

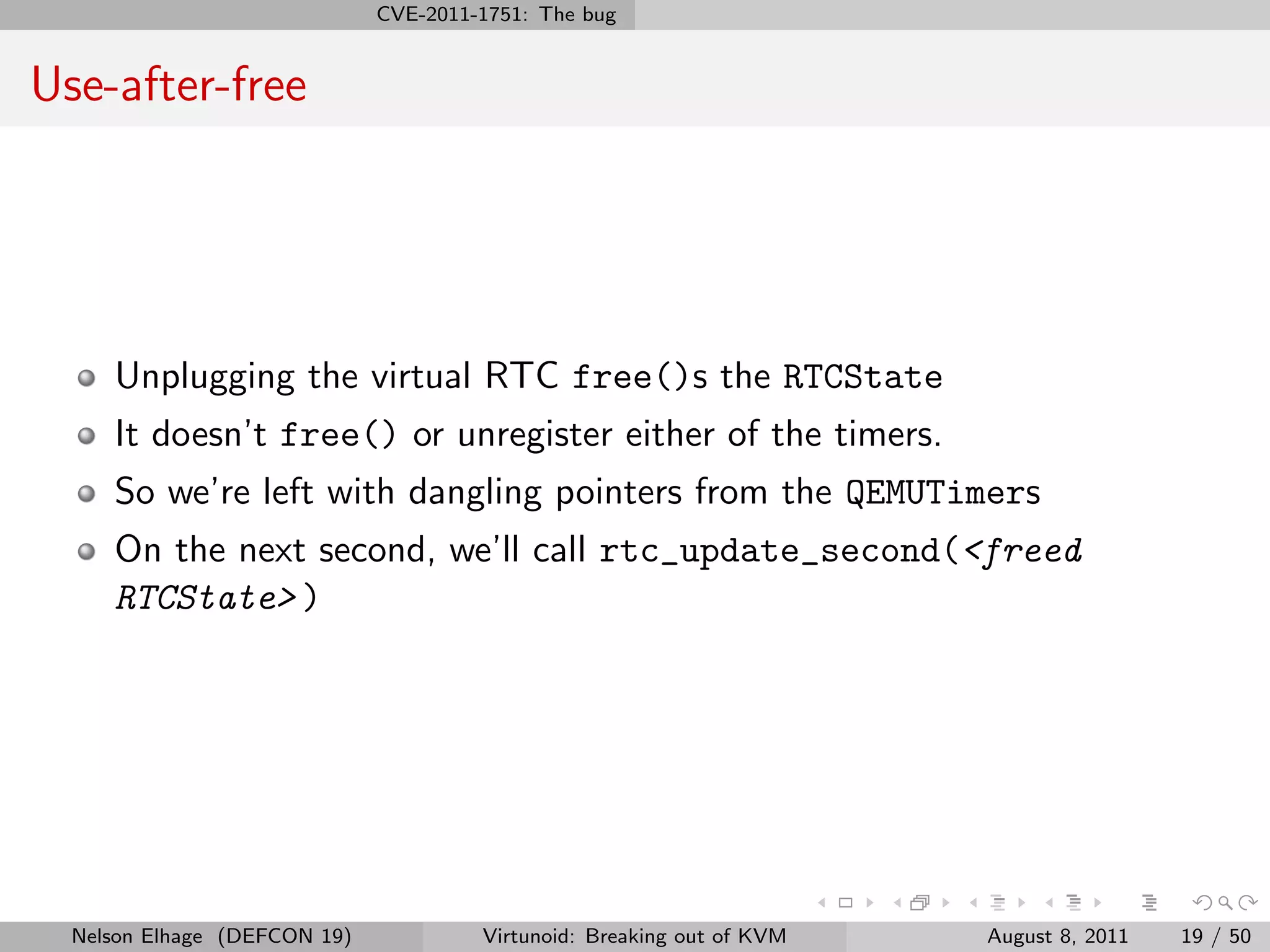

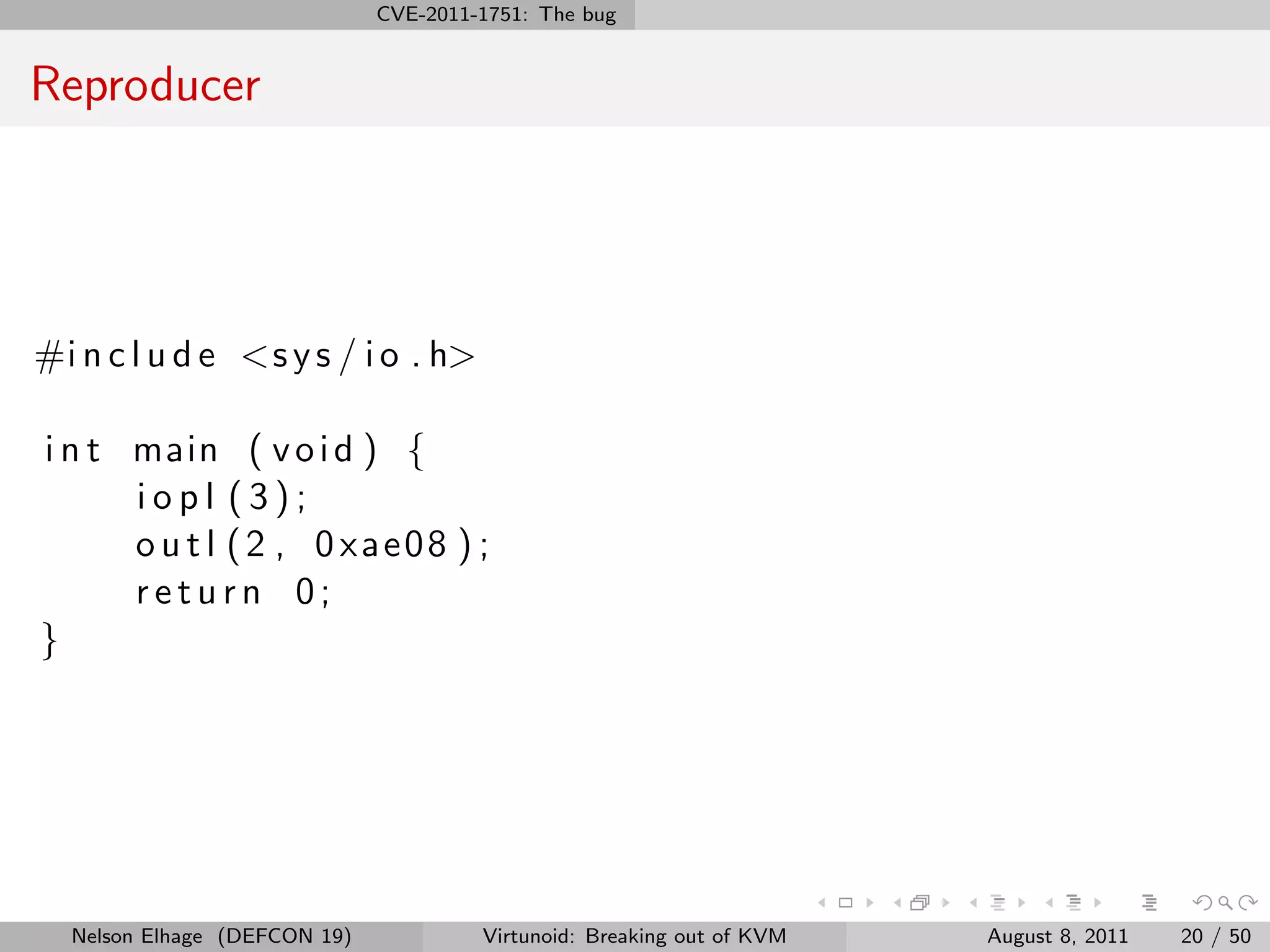

The talk covers CVE-2011-1751, a use-after-free vulnerability in QEMU-KVM's emulation of the PIIX4 southbridge chip. Hot-unplugging the emulated ISA bridge triggers a use-after-free in the emulated RTC device, which code running in the guest can exploit to achieve arbitrary code execution on the host. The talk then walks through an exploit, virtunoid.c, that uses this bug to inject shellcode and obtain a root shell on the KVM host.

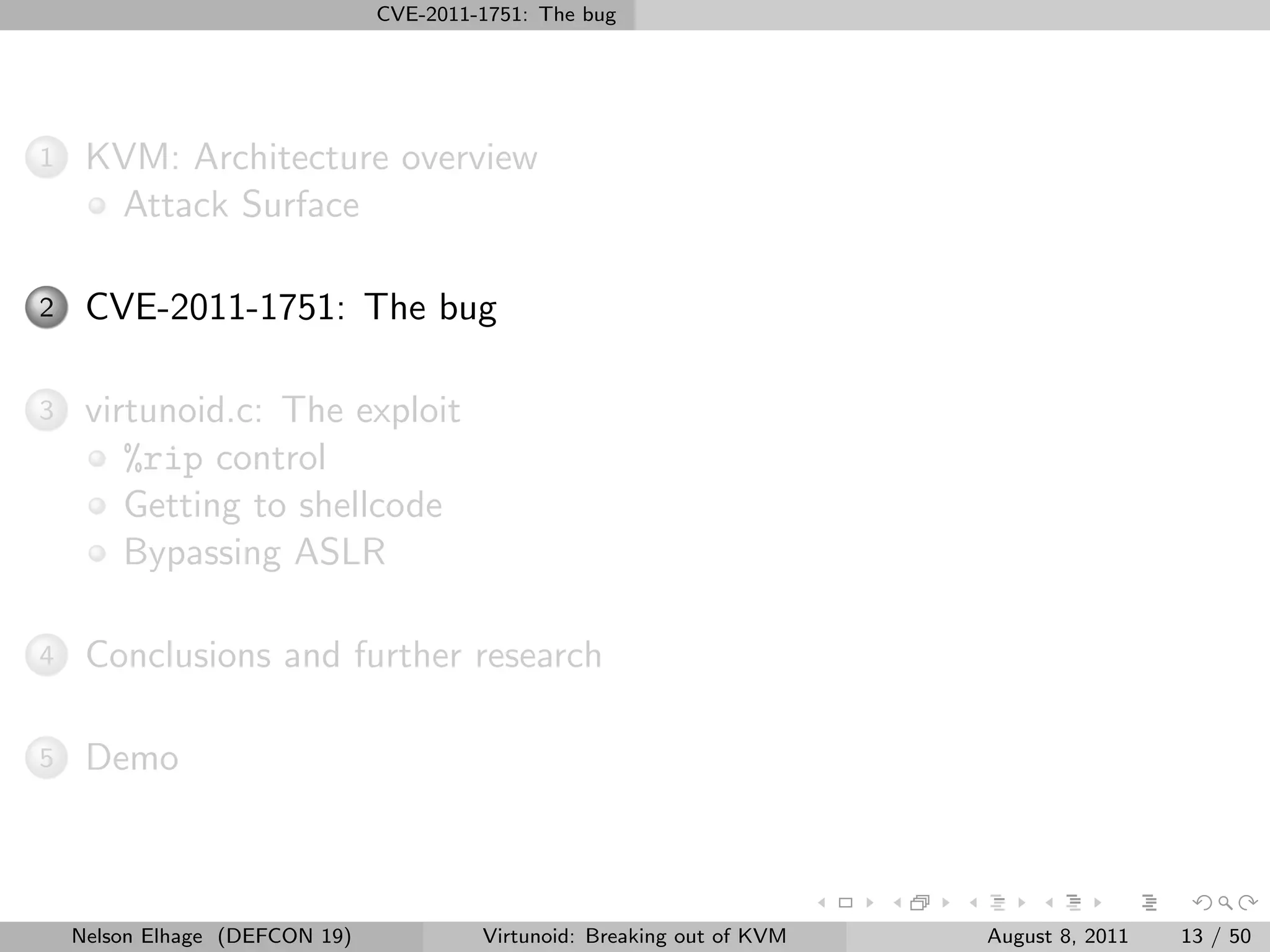

![virtunoid.c: The exploit Getting to shellcode

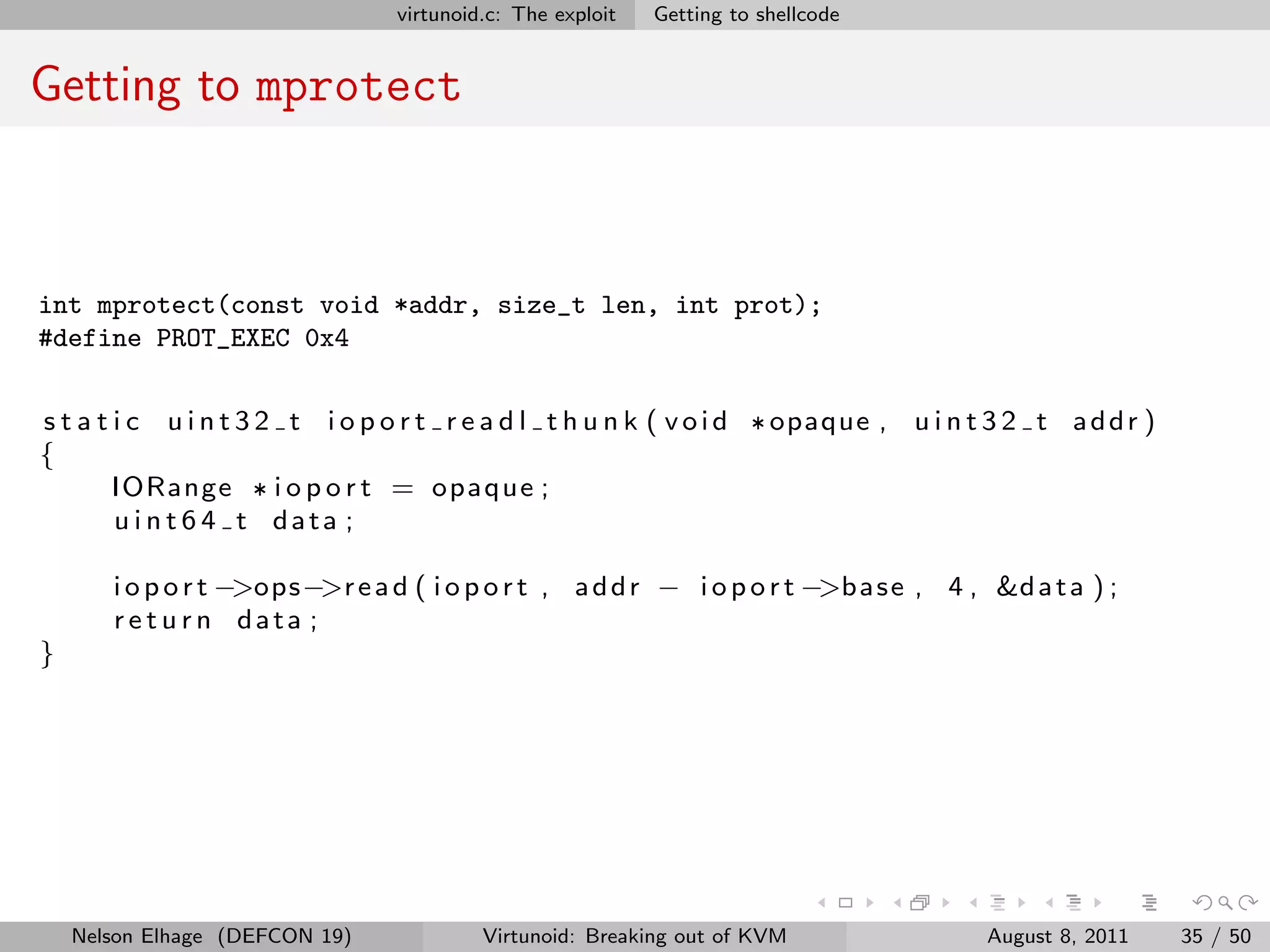

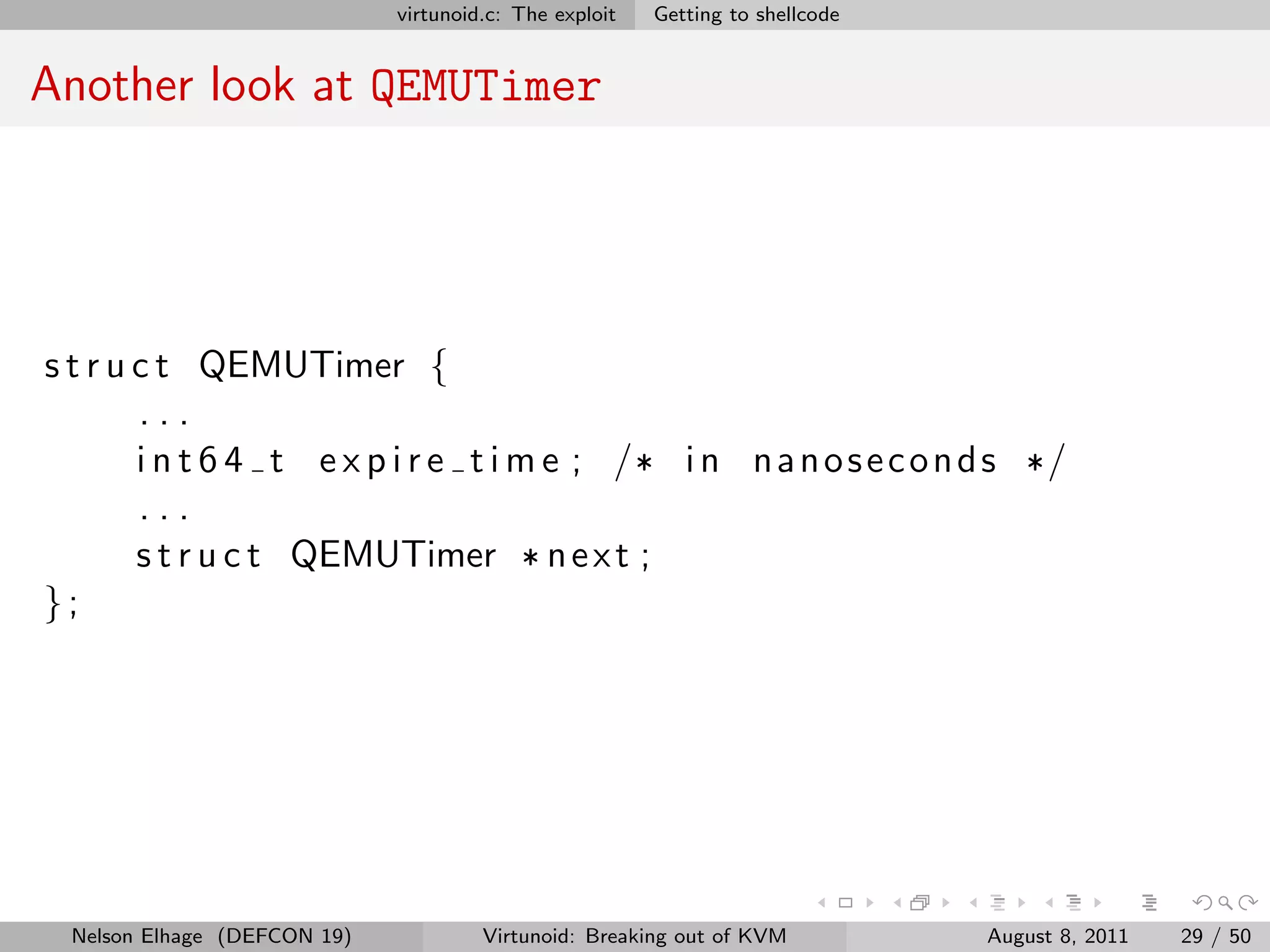

qemu_run_timers

static void qemu_run_timers(QEMUClock *clock)
{
    QEMUTimer **ptimer_head, *ts;
    int64_t current_time;

    current_time = qemu_get_clock_ns(clock);
    ptimer_head = &active_timers[clock->type];
    for (;;) {
        ts = *ptimer_head;
        if (!qemu_timer_expired_ns(ts, current_time))
            break;
        *ptimer_head = ts->next;
        ts->next = NULL;
        ts->cb(ts->opaque);
    }
}

Nelson Elhage (DEFCON 19) Virtunoid: Breaking out of KVM August 8, 2011 30 / 50](https://image.slidesharecdn.com/kvm-defcon-2011-110818165327-phpapp02/75/Virtunoid-Breaking-out-of-KVM-34-2048.jpg)