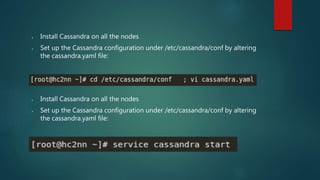

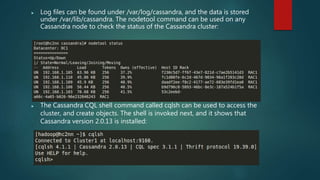

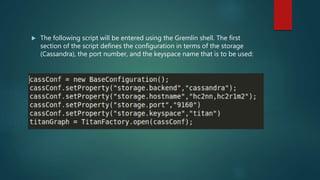

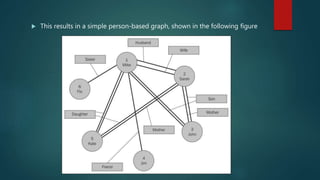

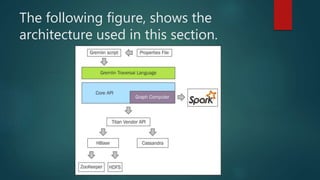

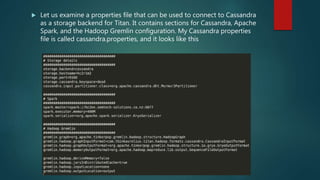

The document discusses using Spark for time-series graph analytics, covering how time series data is transformed into graphs and the algorithms that support this transformation. It compares the graph databases Titan and Neo4j, emphasizing Titan's scalability and its persistence features with Cassandra as the storage backend, and includes installation and configuration instructions. The document also details how to access Titan graphs using Spark and Gremlin scripts in order to analyze the data.
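As a rough illustration of what turning a time series into a graph can look like, the sketch below builds a natural visibility graph in plain Python. This summary does not name the algorithms the slides use, so the choice of the visibility graph, the function name `visibility_graph`, and the sample values are all assumptions made for illustration only. Each observation becomes a node, and two observations are linked when the straight line between them passes above every observation in between.

```python
from typing import List, Set, Tuple


def visibility_graph(series: List[float]) -> Set[Tuple[int, int]]:
    """Return the edges of the natural visibility graph of `series`.

    Hypothetical helper, not taken from the slides. Node i is the i-th
    observation; (i, j) is an edge when every point strictly between
    them lies below the line joining (i, series[i]) and (j, series[j]).
    """
    edges: Set[Tuple[int, int]] = set()
    n = len(series)
    for i in range(n - 1):
        for j in range(i + 1, n):
            # Height of the line from point i to point j at position k.
            visible = all(
                series[k]
                < series[i] + (series[j] - series[i]) * (k - i) / (j - i)
                for k in range(i + 1, j)
            )
            if visible:
                edges.add((i, j))
    return edges


if __name__ == "__main__":
    # Made-up sample values, purely for demonstration.
    sample = [1.0, 3.0, 2.0]
    print(sorted(visibility_graph(sample)))
```

The resulting edge list is the kind of structure that could then be loaded into a graph store and queried, though this quadratic-time version is only meant for small series and says nothing about how the slides distribute the work with Spark.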