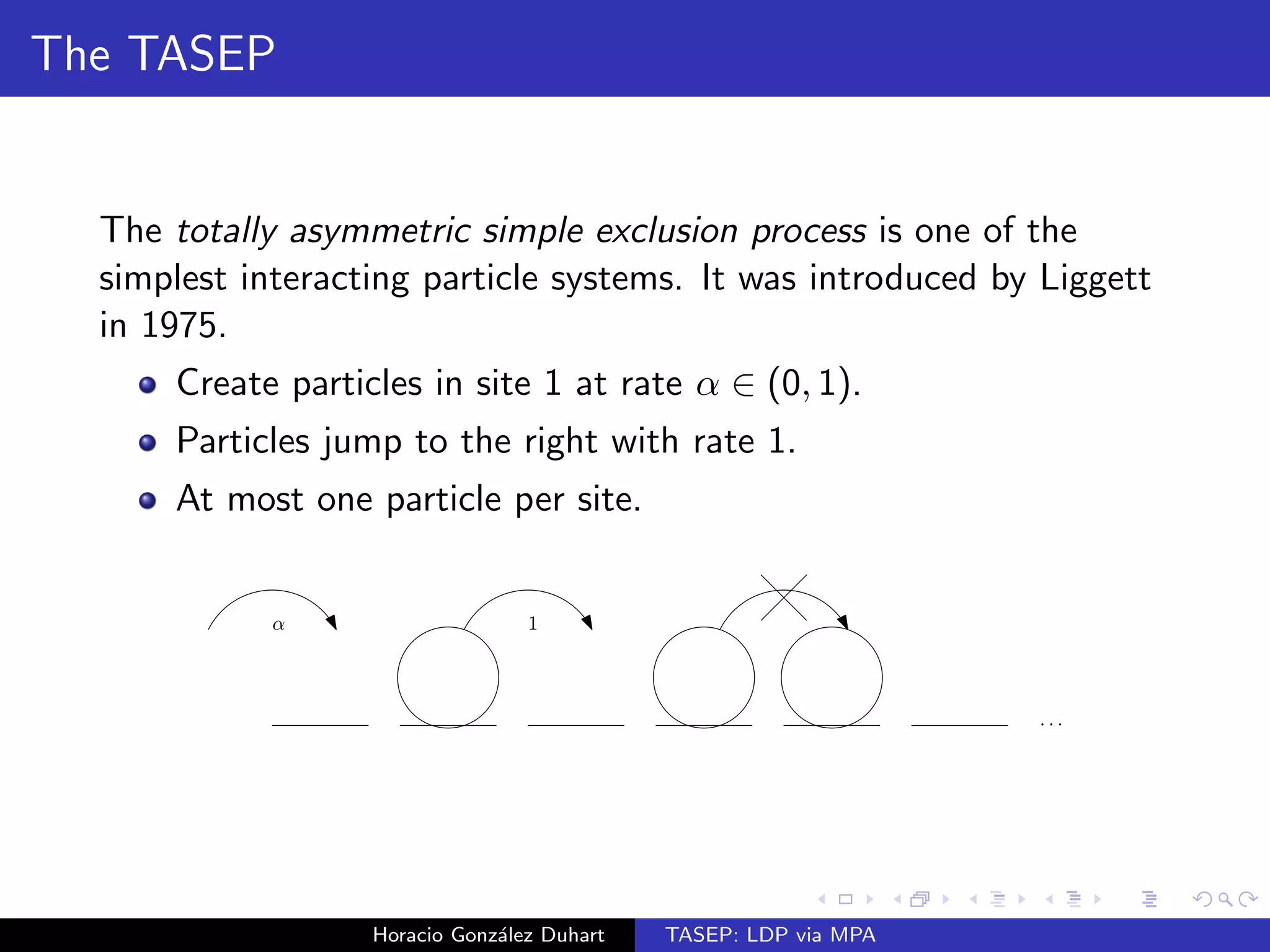

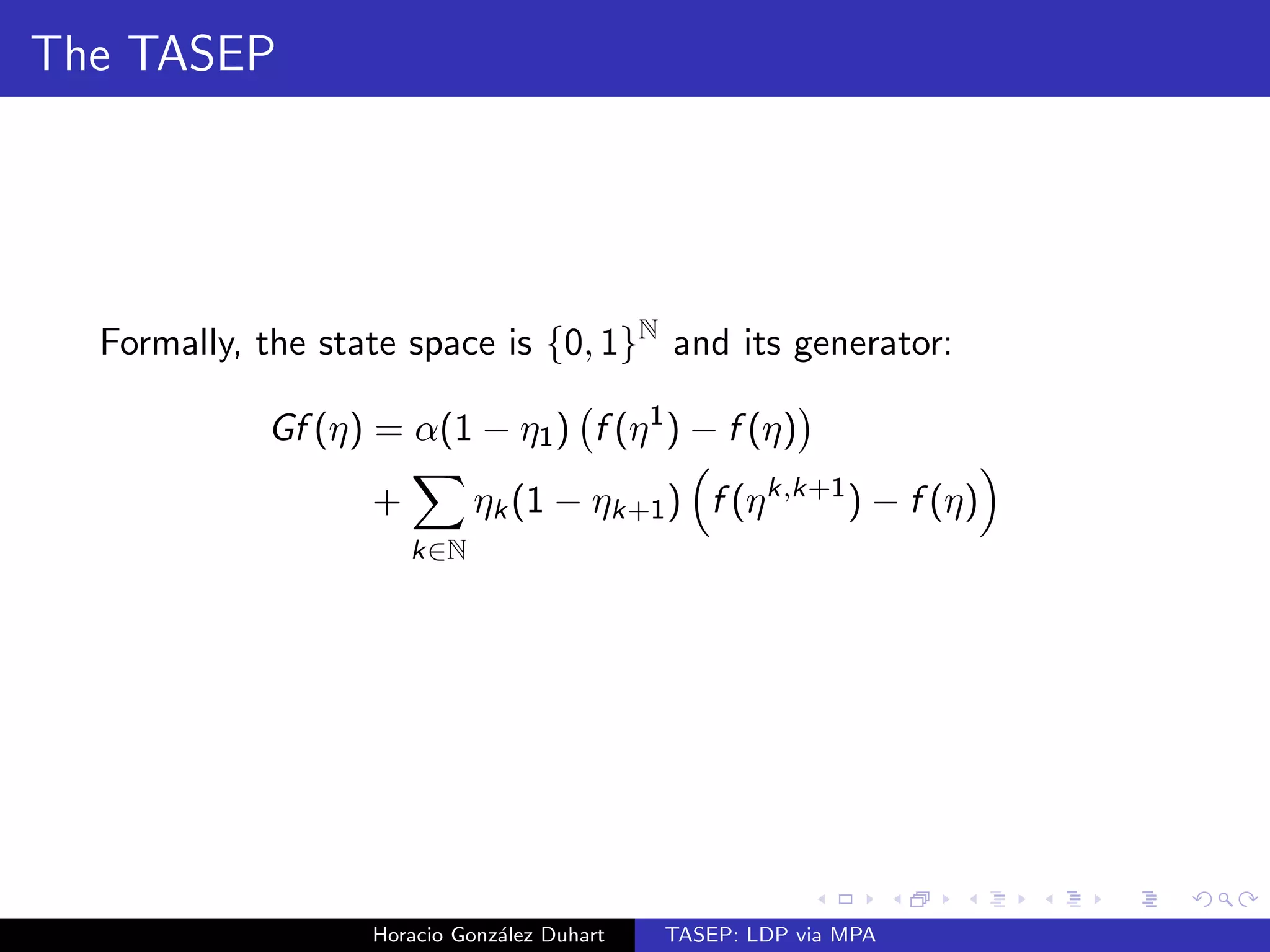

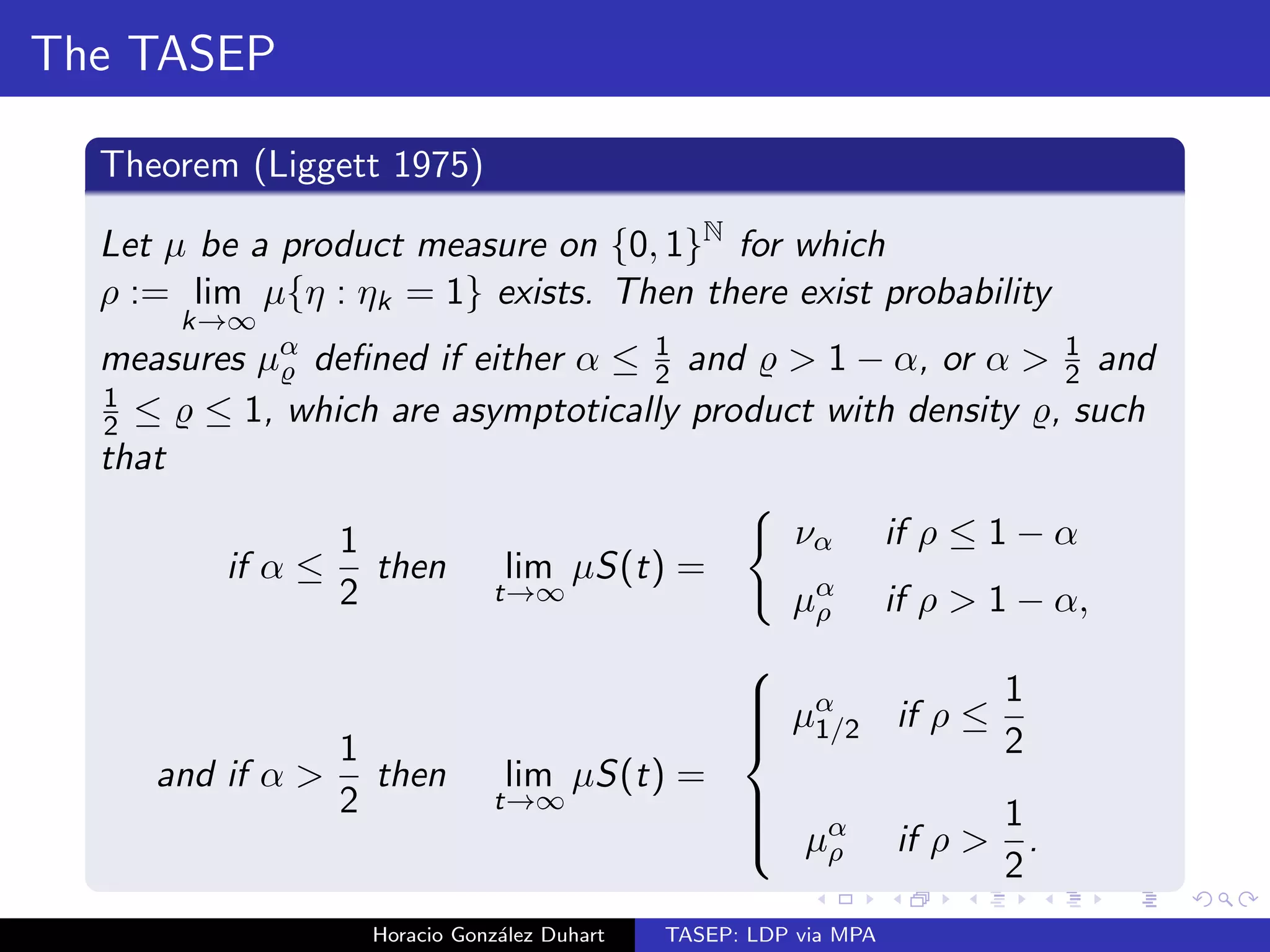

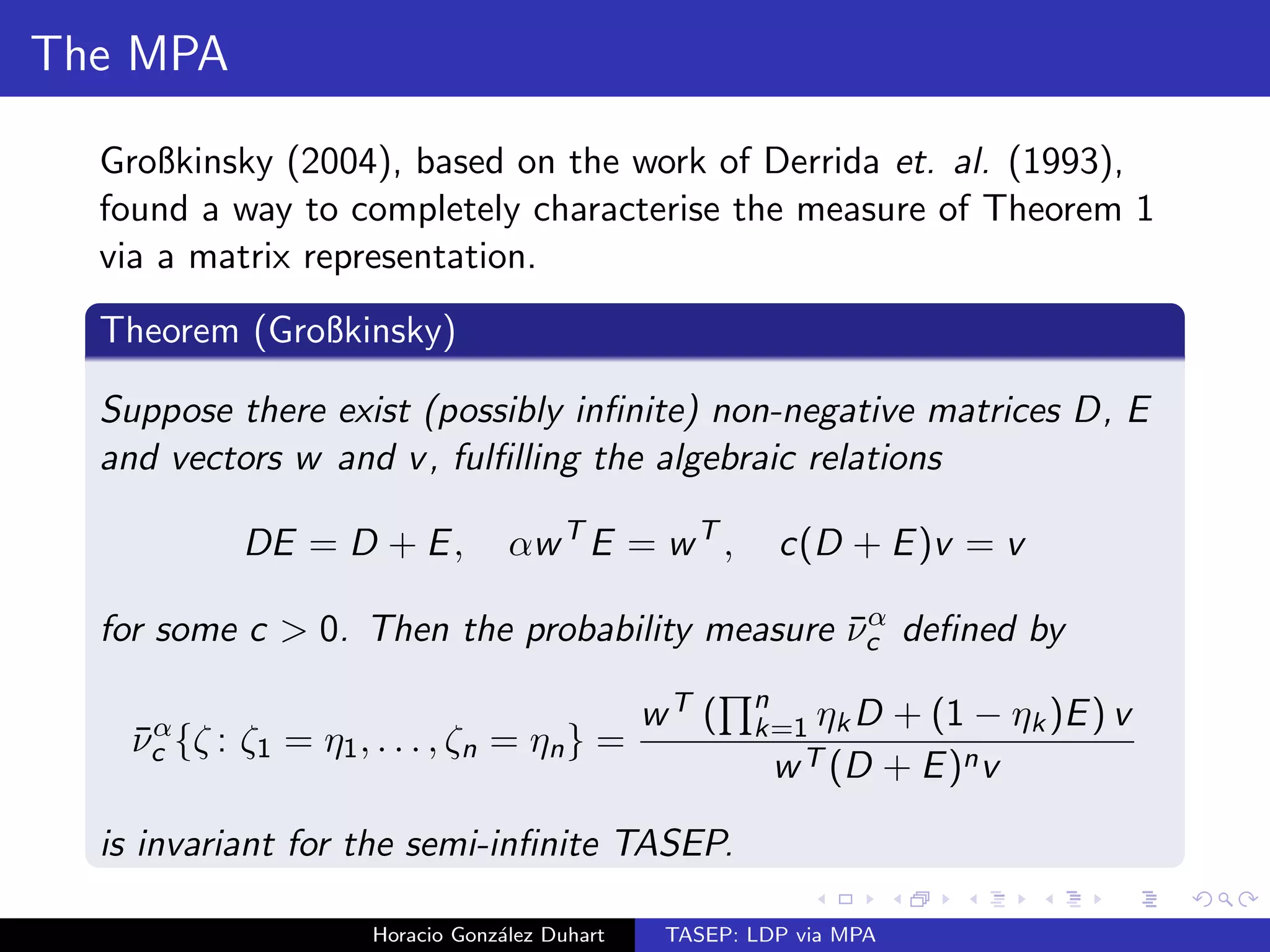

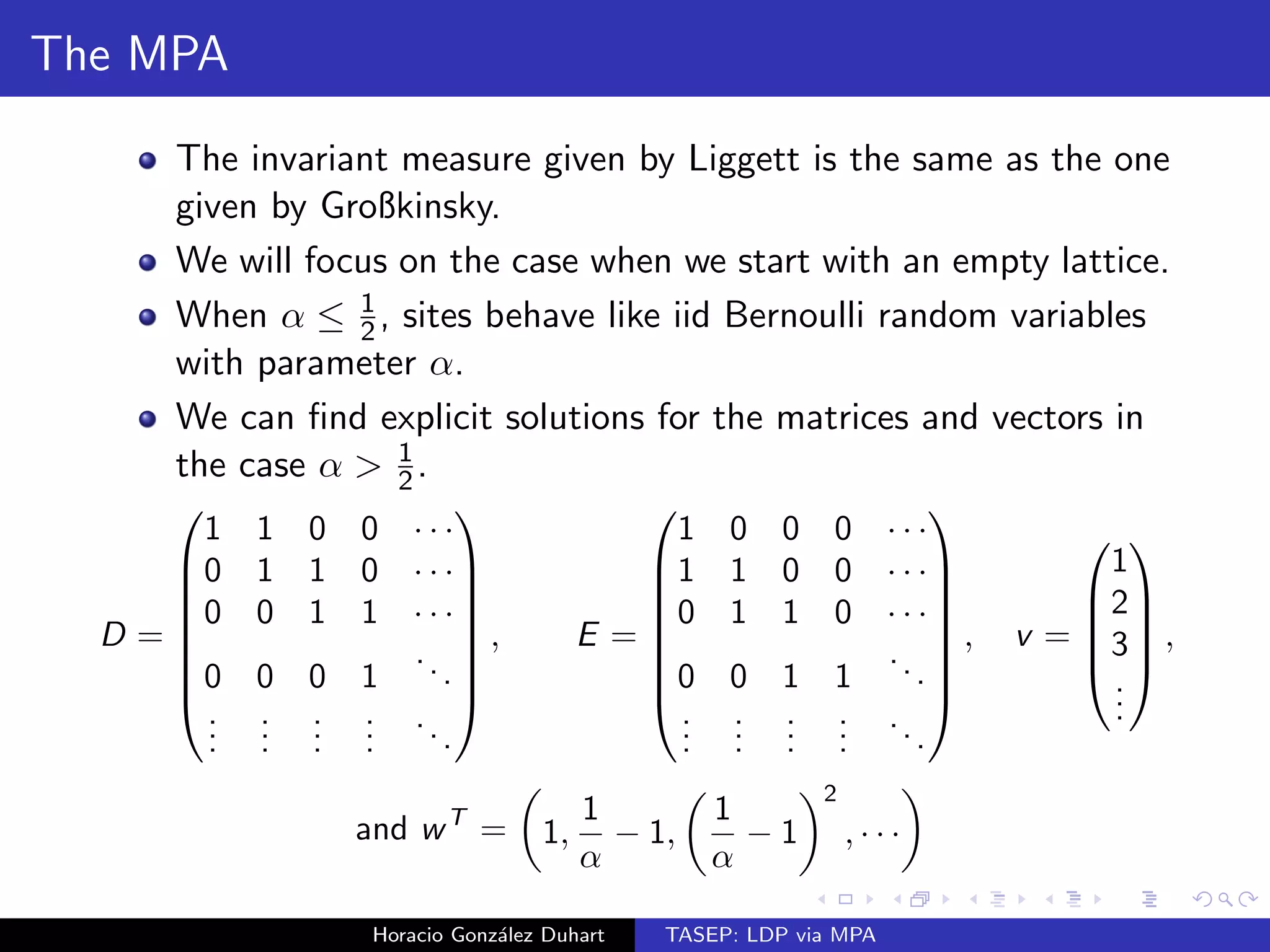

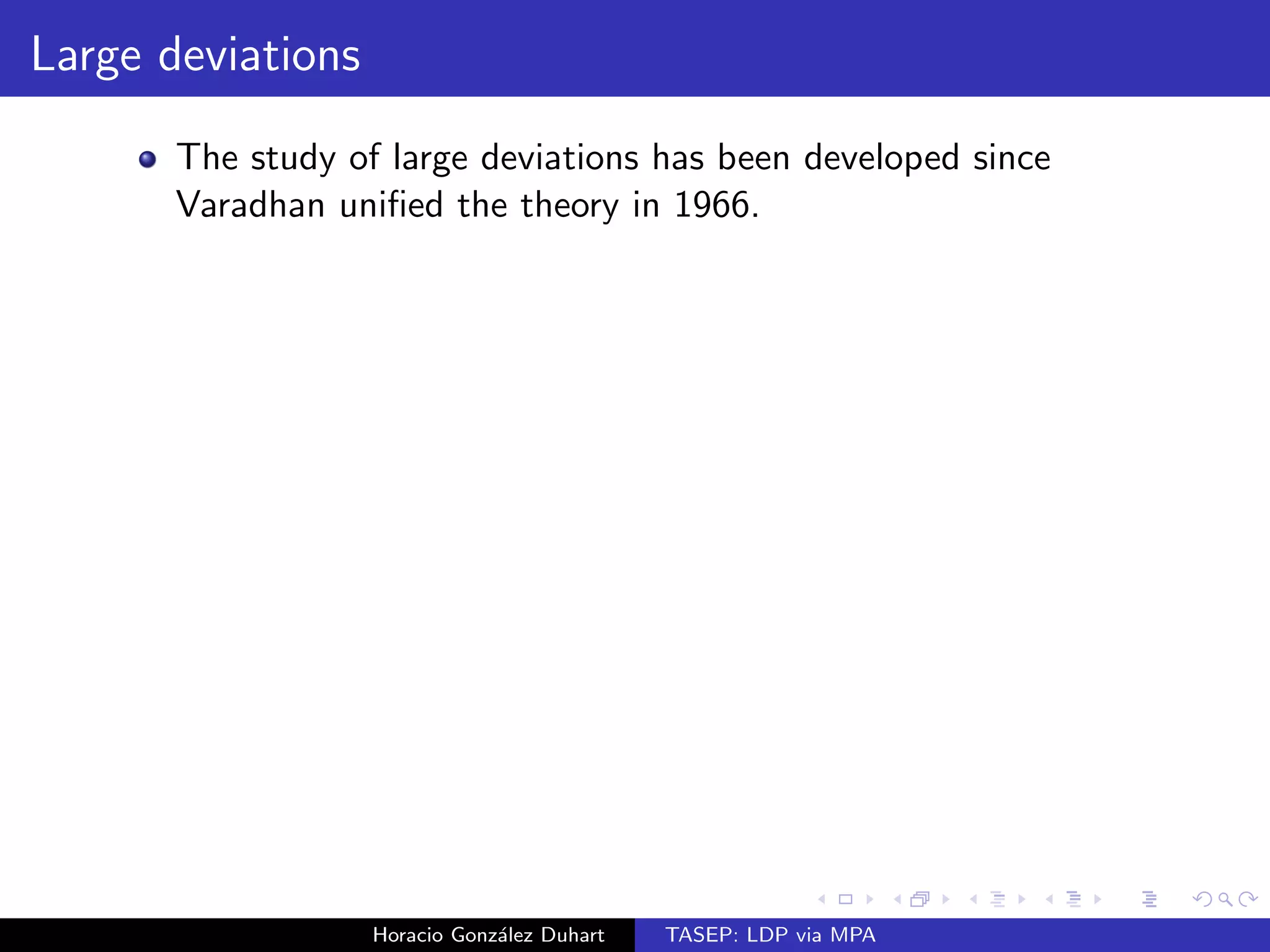

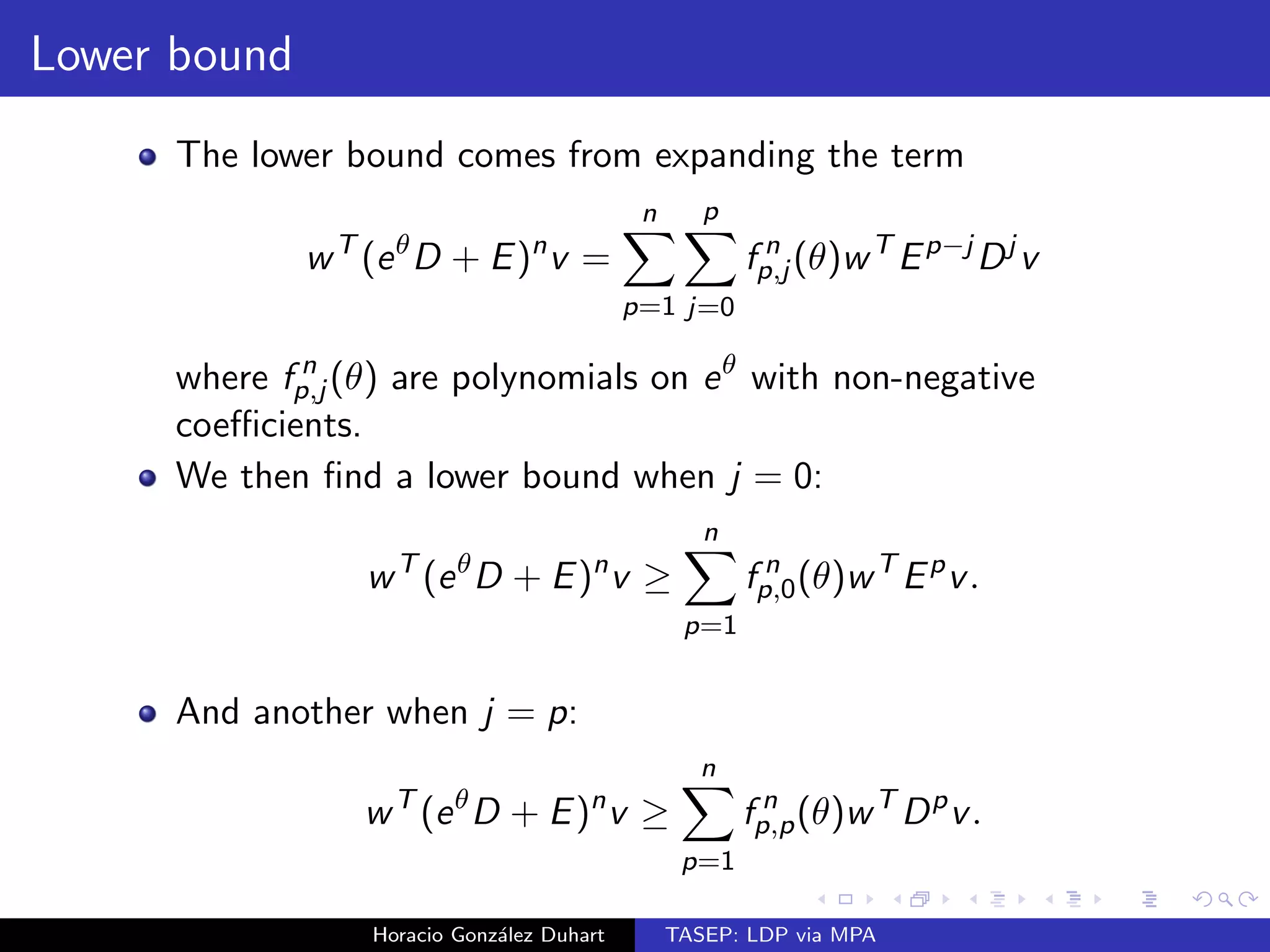

The document discusses the Totally Asymmetric Simple Exclusion Process (TASEP), a simple interacting particle system introduced in 1975, and studies its large deviations through a matrix product representation. The main result is a large deviation principle for the empirical density of a semi-infinite TASEP started from an empty lattice, with an explicit convex rate function that takes one of two forms depending on how the model parameter compares with 1/2. The results build on earlier work and use matrix analysis to characterize the invariant measures of the TASEP.

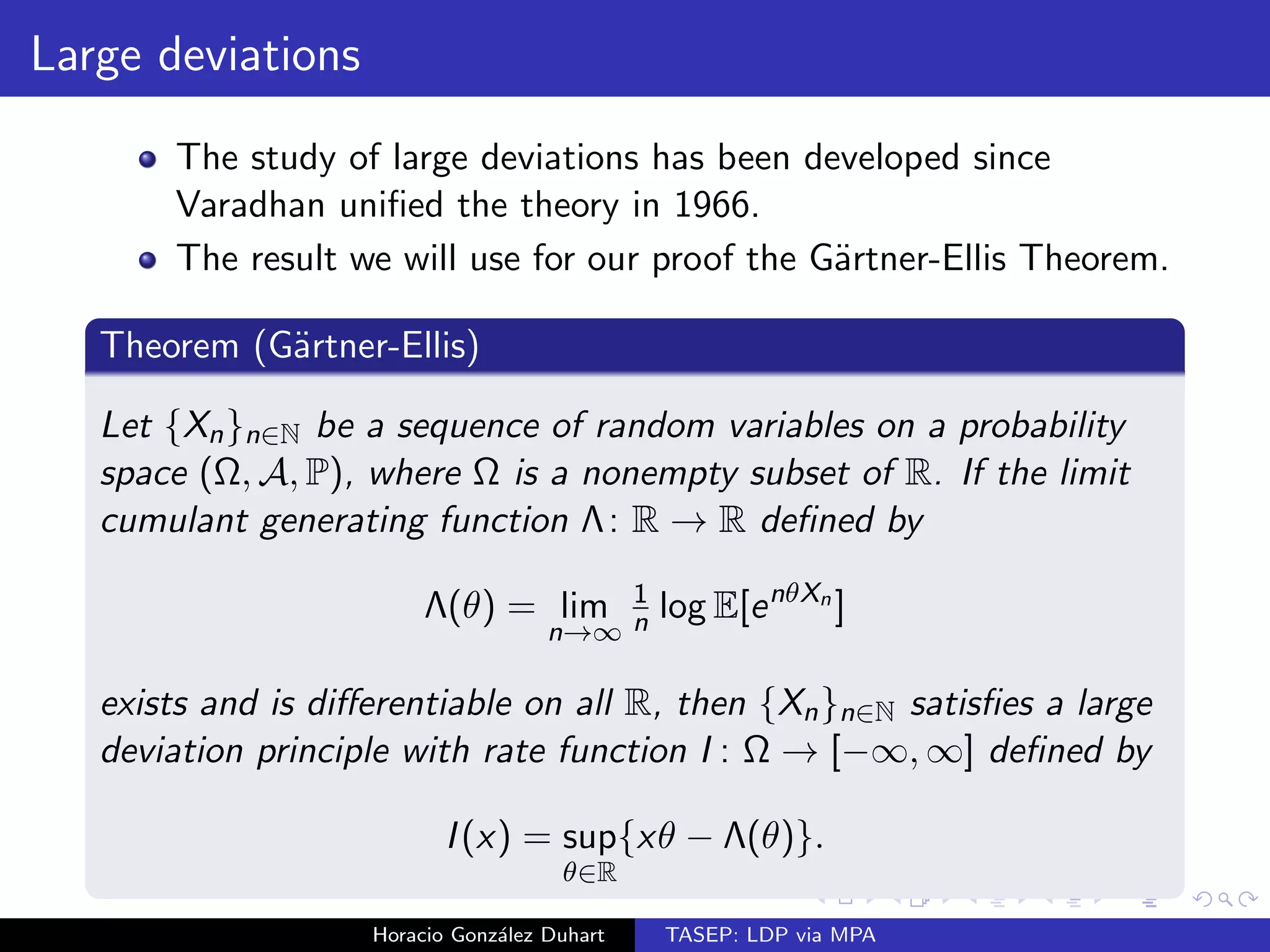

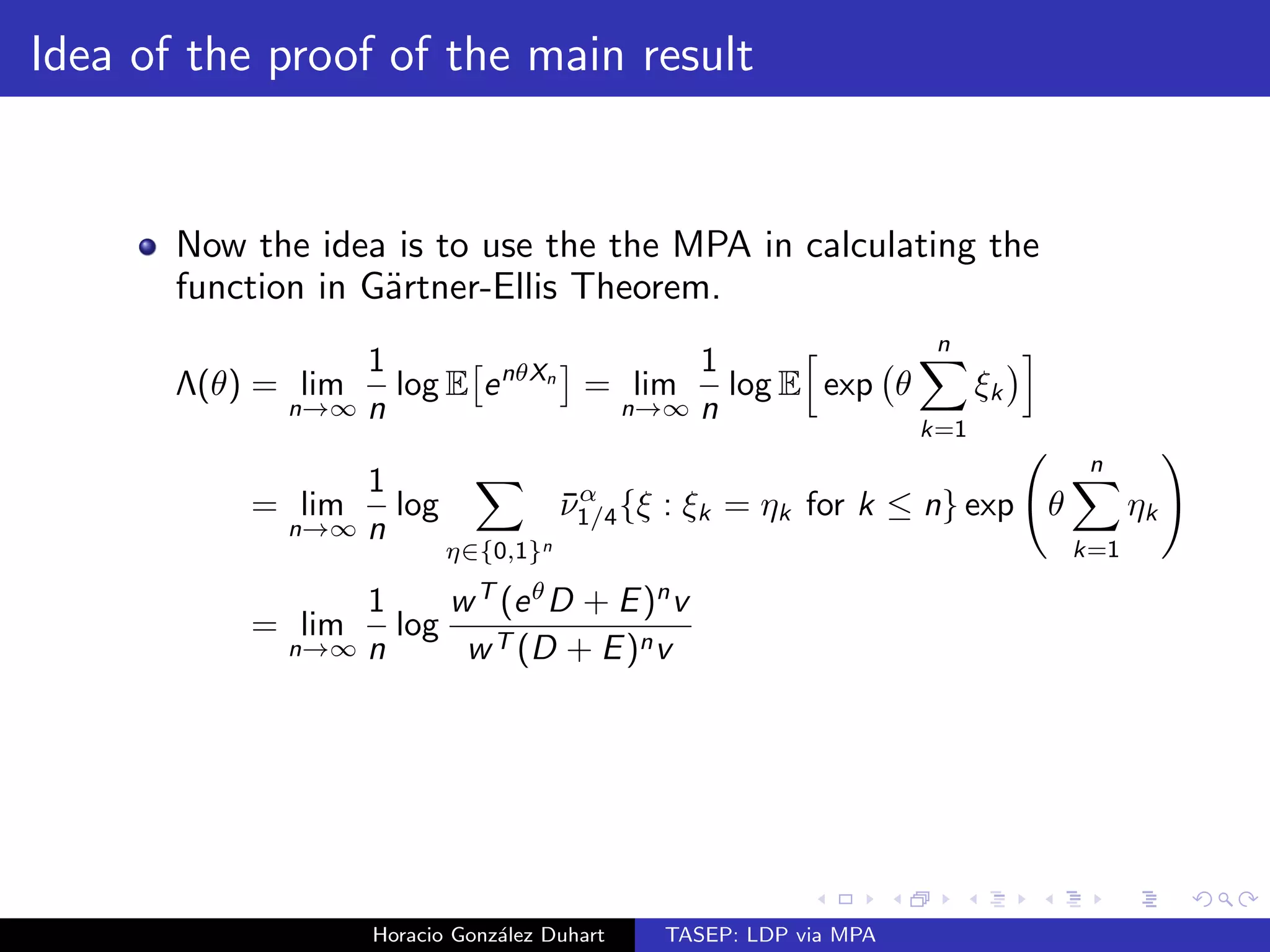

![Main result

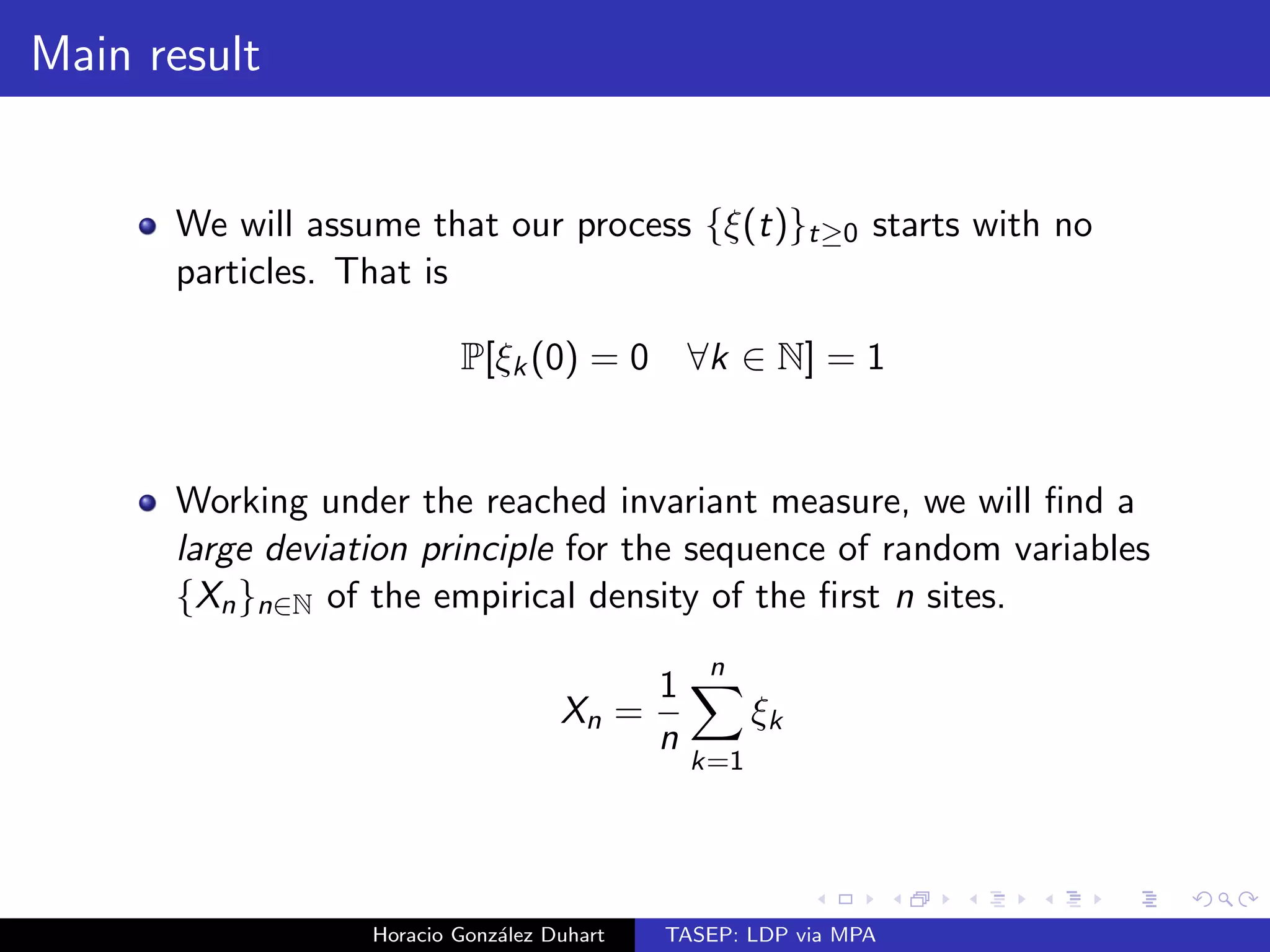

We will assume that our process f(t)gt0 starts with no

particles. That is

P[k(0) = 0 8k 2 N] = 1

Horacio Gonzalez Duhart TASEP: LDP via MPA](https://image.slidesharecdn.com/bristolshort-141119111409-conversion-gate02/75/TASEP-LDP-via-MPA-11-2048.jpg)
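To make the empty initial condition concrete, here is a minimal Python sketch of a continuous-time (Gillespie-style) simulation started with no particles. It assumes an injection rate `alpha` at the first site and unit hopping rate to the right. Because a computer cannot hold a semi-infinite lattice, it truncates the lattice at `n_sites` and lets particles leave the last site at rate 1. The truncation, the function names `simulate_tasep` and `empirical_density`, and all parameter names are assumptions made for illustration and do not come from the slides.

```python
import random


def simulate_tasep(alpha, n_sites, t_max, seed=0):
    """Simulate a truncated TASEP started from the empty lattice.

    Assumptions (not from the slides): sites 1..n_sites only, injection at
    site 1 at rate alpha > 0, hops to the right at rate 1, and exit from the
    last site at rate 1 to mimic the rest of the semi-infinite lattice.
    Returns the occupation numbers at time t_max.
    """
    rng = random.Random(seed)
    eta = [0] * n_sites  # eta[k] == 1 means site k + 1 is occupied
    t = 0.0
    while True:
        # List every move that the exclusion rule currently allows.
        moves = []
        if eta[0] == 0:
            moves.append((alpha, -1))  # -1 encodes injection at site 1
        for k in range(n_sites - 1):
            if eta[k] == 1 and eta[k + 1] == 0:
                moves.append((1.0, k))  # hop from index k to k + 1
        if eta[-1] == 1:
            moves.append((1.0, n_sites - 1))  # exit from the last site

        total = sum(rate for rate, _ in moves)
        t += rng.expovariate(total)  # exponential waiting time
        if t > t_max:
            return eta

        # Choose one move with probability proportional to its rate.
        u = rng.random() * total
        acc = 0.0
        for rate, move in moves:
            acc += rate
            if u <= acc:
                break

        if move == -1:
            eta[0] = 1
        elif move == n_sites - 1:
            eta[move] = 0
        else:
            eta[move], eta[move + 1] = 0, 1


def empirical_density(eta, n):
    """Fraction of occupied sites among the first n sites."""
    return sum(eta[:n]) / n
```

Sites close to the truncation point feel the artificial right boundary, so when estimating the density on the first `n` sites it is safer to take `n_sites` well above `n`.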

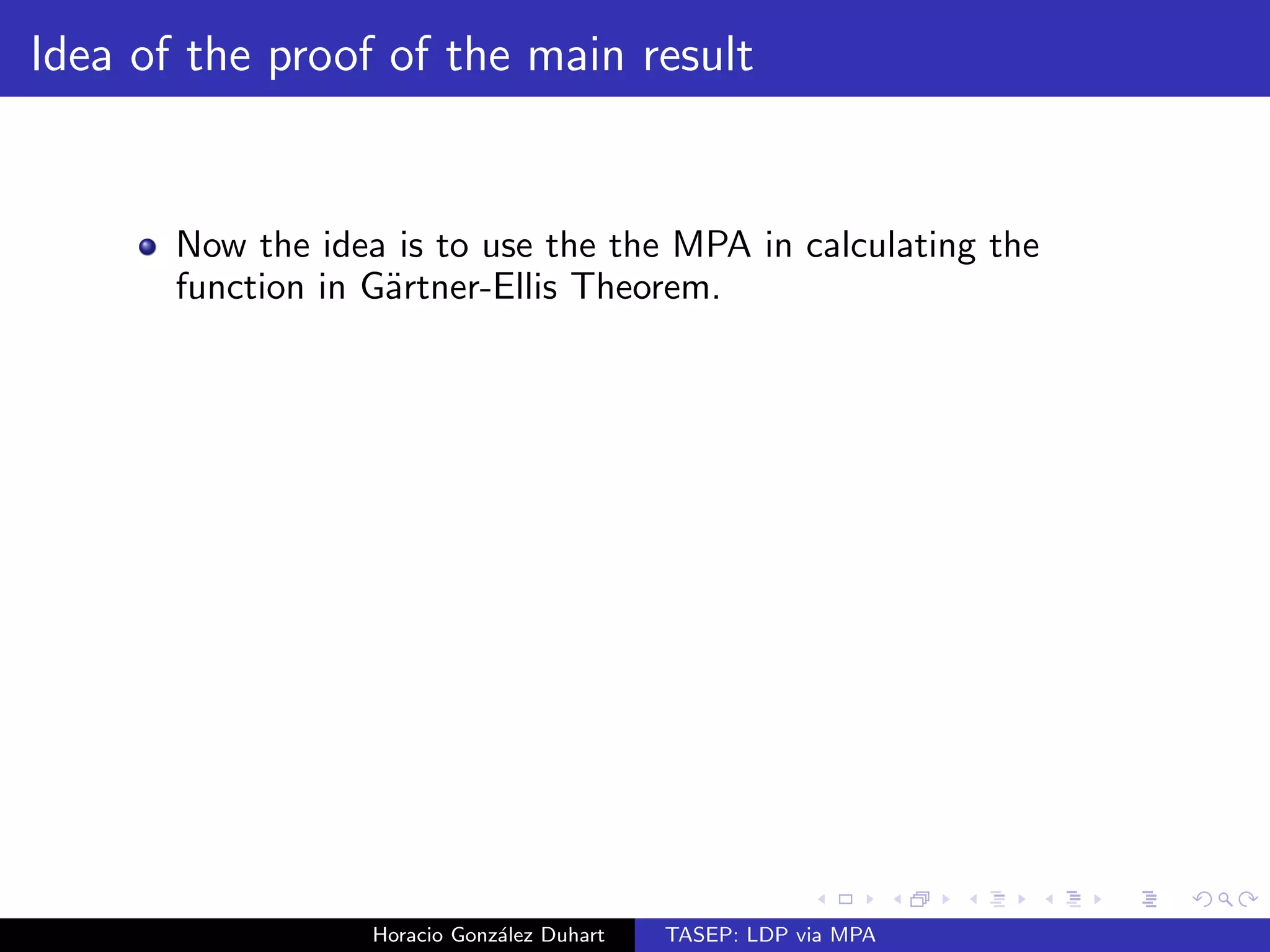

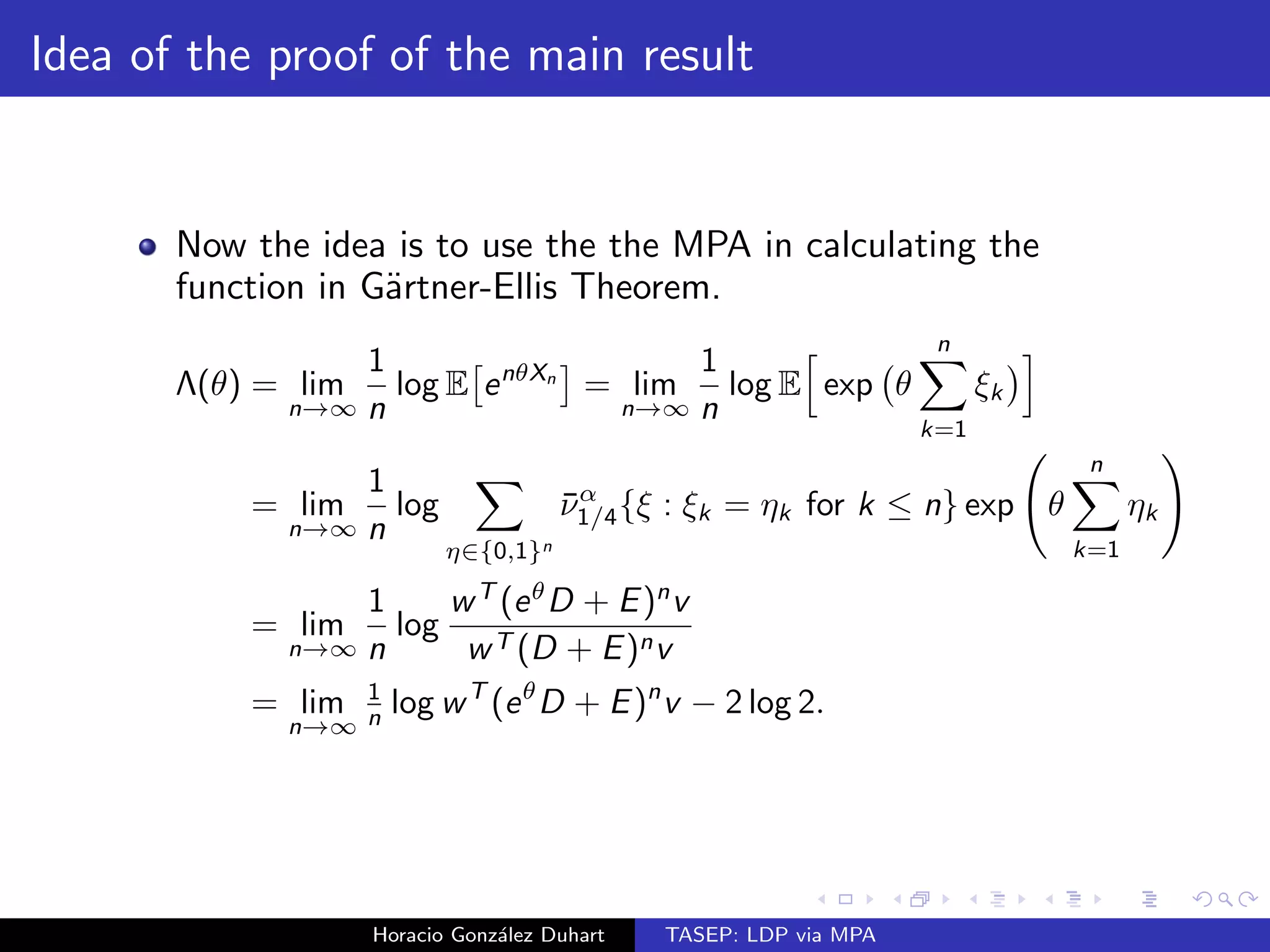

![Main result](https://image.slidesharecdn.com/bristolshort-141119111409-conversion-gate02/75/TASEP-LDP-via-MPA-12-2048.jpg)

Working under the reached invariant measure, we will

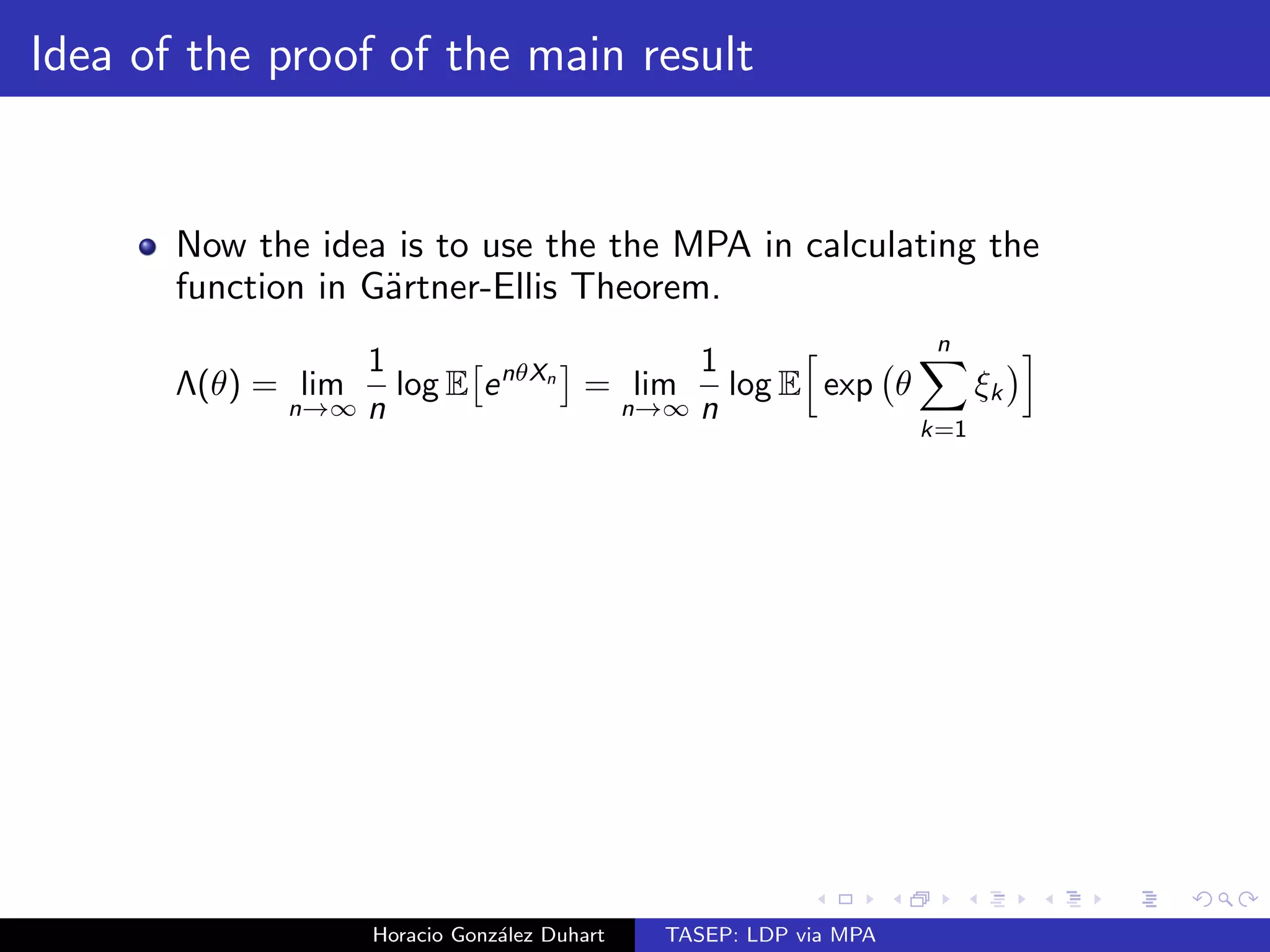

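![TASEP: LDP via MPA, slide 17](https://image.slidesharecdn.com/bristolshort-141119111409-conversion-gate02/75/TASEP-LDP-via-MPA-17-2048.jpg)

The empirical density satisfies a large deviation principle with convex rate function $I : [0,1] \to [0,\infty]$ given as follows:

(a) If $\alpha \le \frac{1}{2}$, then

$$I(x) = x \log\frac{x}{\alpha} + (1-x)\log\frac{1-x}{1-\alpha}.$$

(b) If $\alpha > \frac{1}{2}$, then

$$
I(x) =
\begin{cases}
x \log\dfrac{x}{\alpha} + (1-x)\log\dfrac{1-x}{1-\alpha} + \log\bigl(4\alpha(1-\alpha)\bigr) & \text{if } 0 \le x \le 1-\alpha, \\[1ex]
2\,\bigl[x \log x + (1-x)\log(1-x) + \log 2\bigr] & \text{if } 1-\alpha < x \le \frac{1}{2}, \\[1ex]
x \log x + (1-x)\log(1-x) + \log 2 & \text{if } \frac{1}{2} < x \le 1.
\end{cases}
$$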
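The piecewise rate function is easier to inspect numerically. The following is a minimal Python sketch of it, assuming the parameter in the two cases (written `alpha` here) lies strictly between 0 and 1 and using the convention $0 \log 0 = 0$. The helper names `xlogx`, `relative_entropy` and `rate_function` are hypothetical.

```python
import math


def xlogx(x):
    """x * log(x) with the convention 0 * log(0) = 0."""
    return 0.0 if x == 0 else x * math.log(x)


def relative_entropy(x, p):
    """x log(x/p) + (1 - x) log((1 - x)/(1 - p)) for 0 <= x <= 1, 0 < p < 1."""
    return xlogx(x) - x * math.log(p) + xlogx(1 - x) - (1 - x) * math.log(1 - p)


def rate_function(x, alpha):
    """Rate function I(x) of the main result (assumes 0 < alpha < 1)."""
    if not 0 <= x <= 1:
        raise ValueError("x must lie in [0, 1]")
    if not 0 < alpha < 1:
        raise ValueError("this sketch assumes 0 < alpha < 1")
    if alpha <= 0.5:
        # Case (a): a single formula on the whole interval.
        return relative_entropy(x, alpha)
    # Case (b): three pieces, split at x = 1 - alpha and x = 1/2.
    if x <= 1 - alpha:
        return relative_entropy(x, alpha) + math.log(4 * alpha * (1 - alpha))
    h = xlogx(x) + xlogx(1 - x) + math.log(2)
    return 2 * h if x <= 0.5 else h
```

Evaluating the sketch on a grid is a quick sanity check: the function vanishes at $x = \alpha$ in case (a) and at $x = \frac{1}{2}$ in case (b), and the three pieces of case (b) agree at the break points $x = 1-\alpha$ and $x = \frac{1}{2}$.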

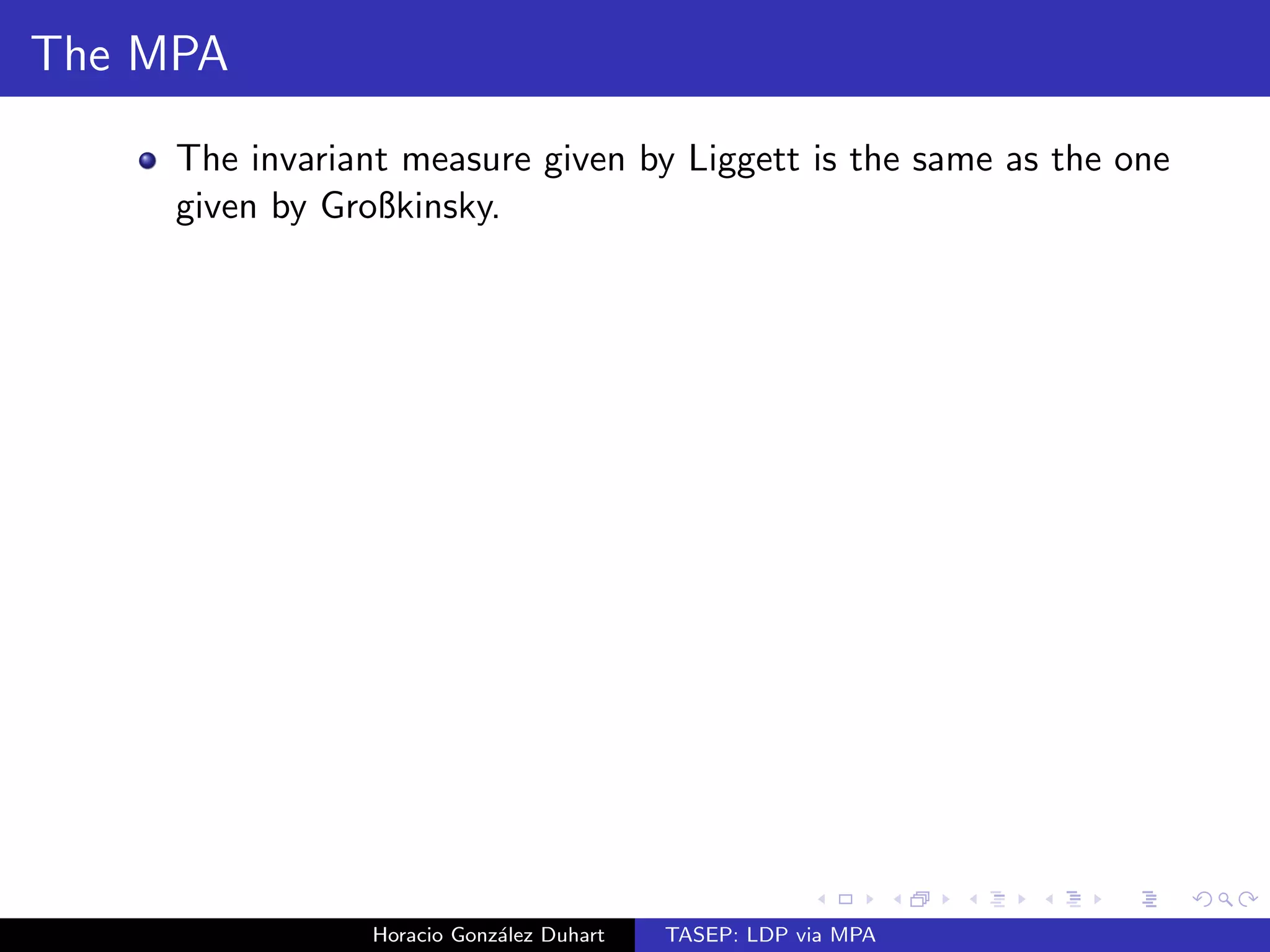

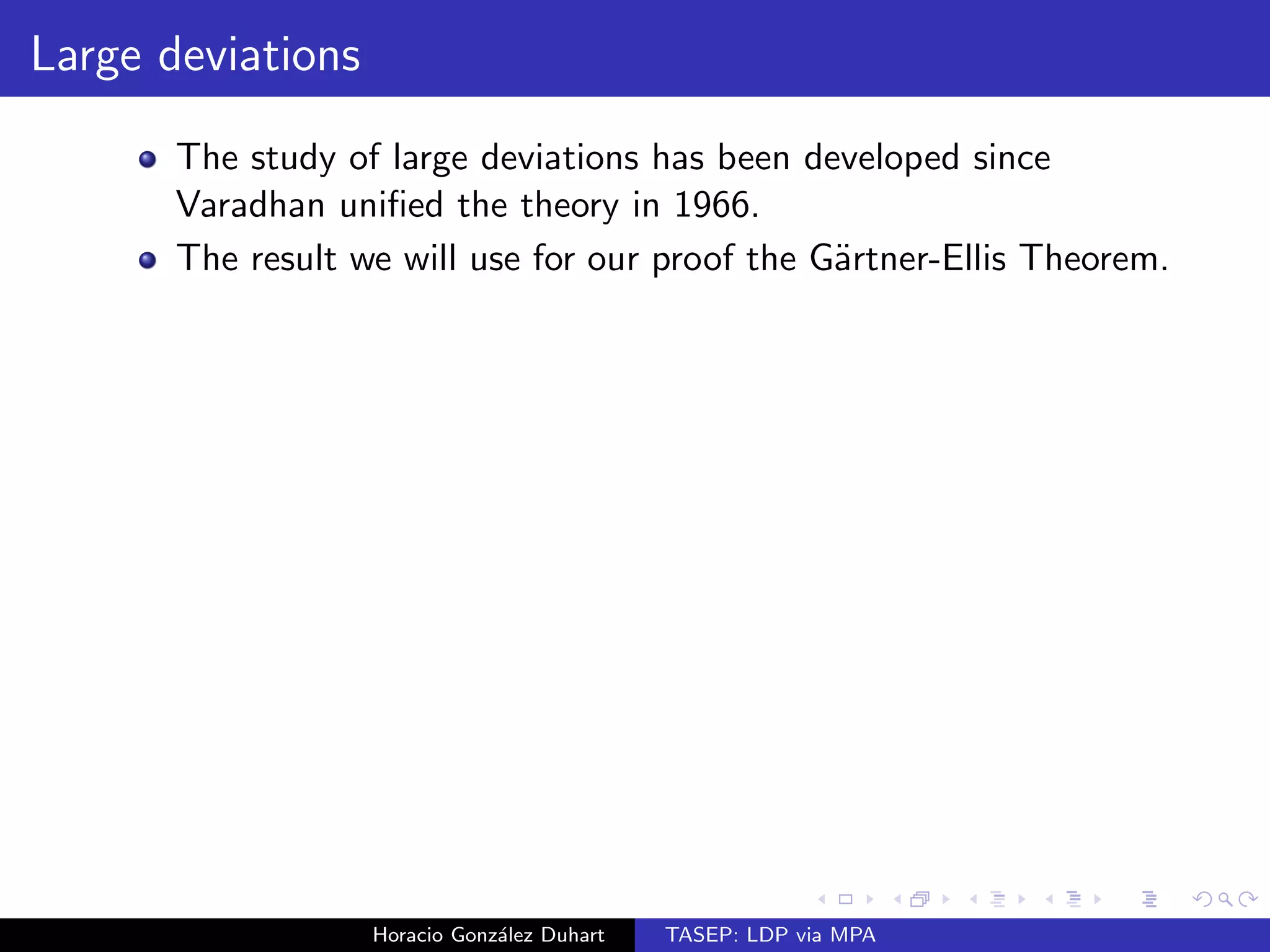

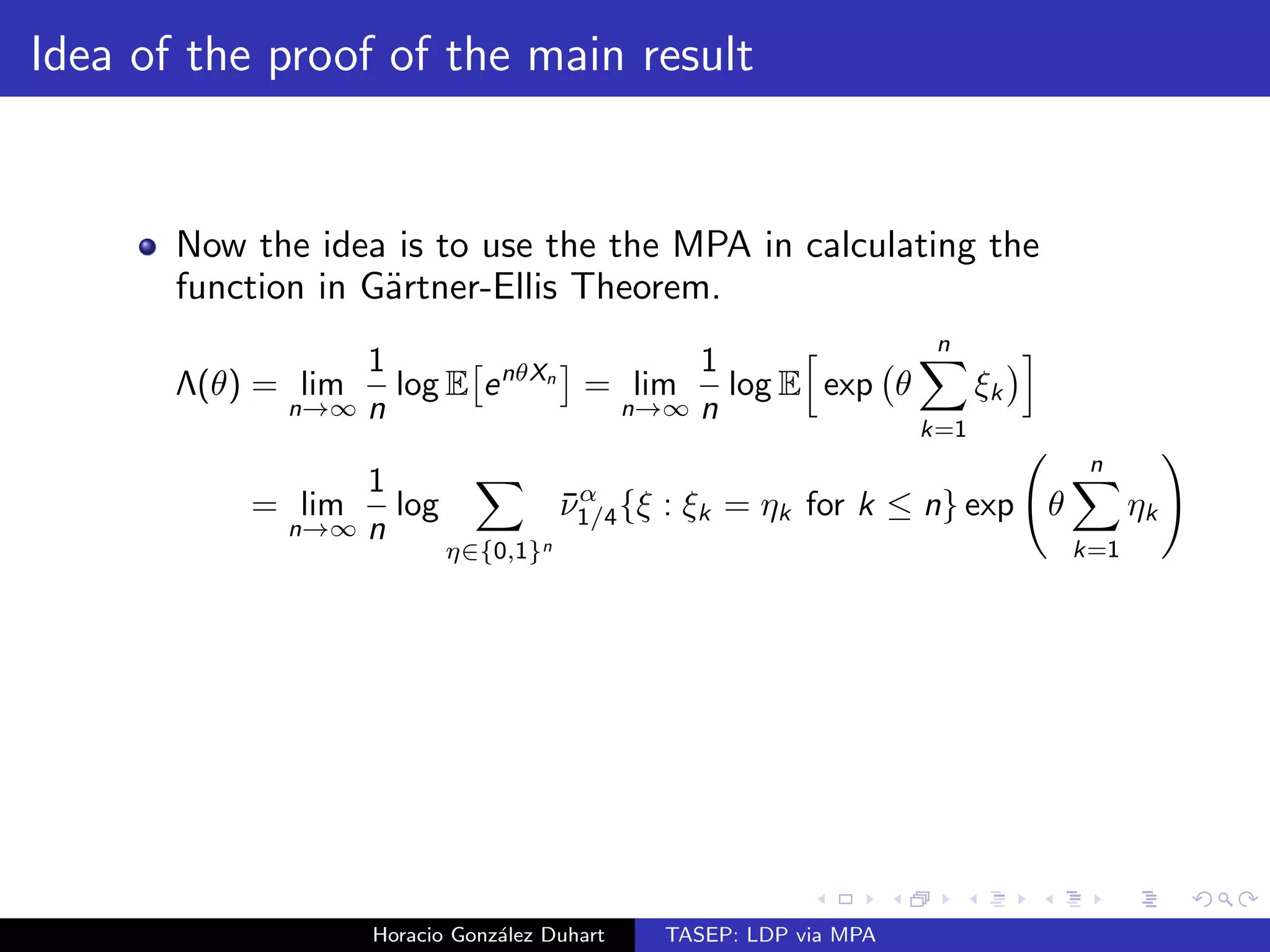

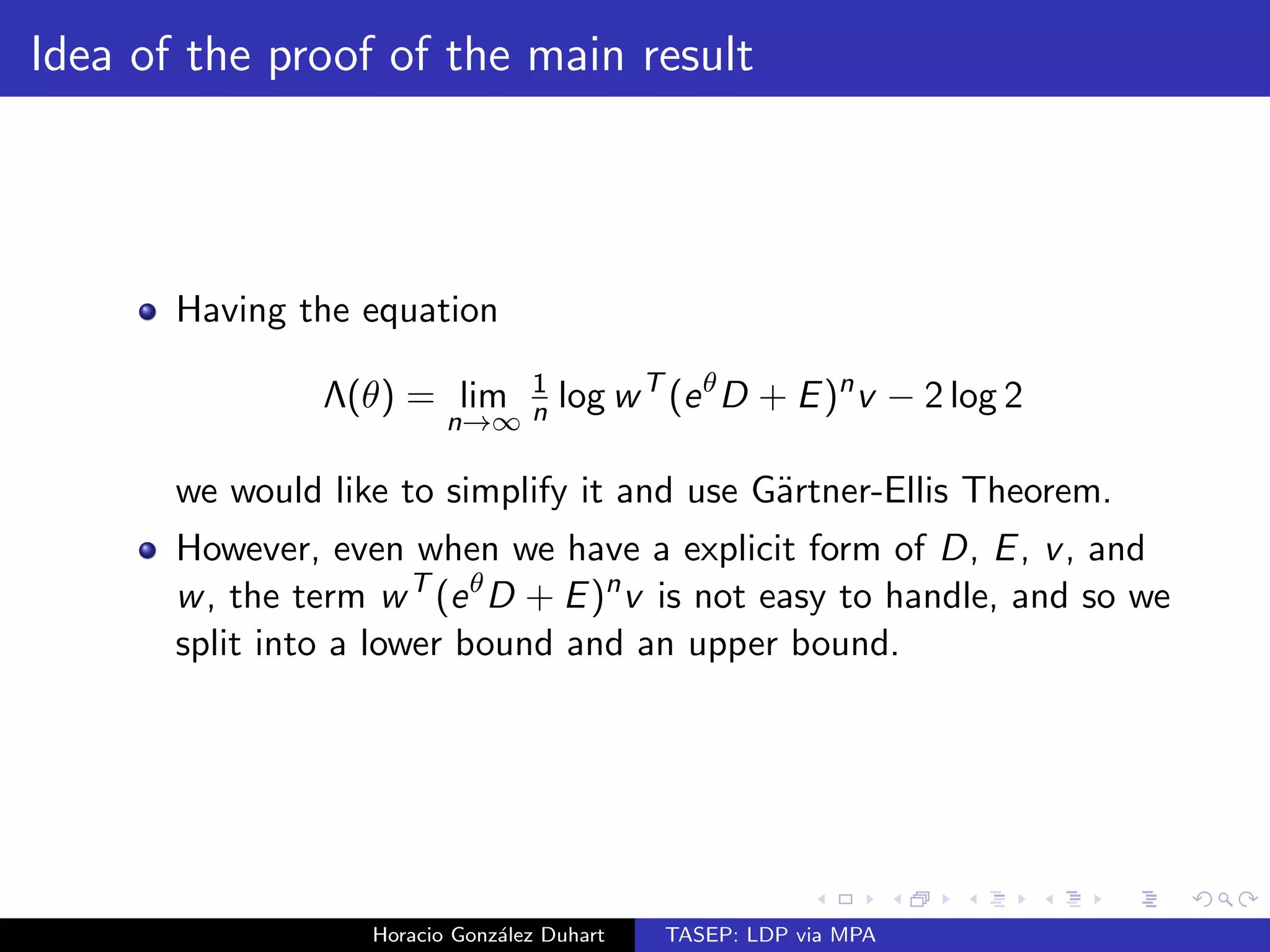

![TASEP: LDP via MPA, slide 35](https://image.slidesharecdn.com/bristolshort-141119111409-conversion-gate02/75/TASEP-LDP-via-MPA-35-2048.jpg)

A sequence of random variables $(X_n)$ satisfies an LDP with rate function $I$ if

$$P[X_n \approx x] \approx \exp\{-n I(x)\}$$

for some non-negative function $I : \mathbb{R} \to [0,\infty]$.
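The exponential decay in this informal definition can be checked on a toy case. The sketch below is not the TASEP: it takes $X_n$ to be the fraction of successes in $n$ independent coin flips with success probability `p`, a stand-in chosen because its tail probabilities are exactly computable. It compares $-\frac{1}{n}\log P[X_n \ge x]$ with the known rate function for coin flips, $x \log\frac{x}{p} + (1-x)\log\frac{1-x}{1-p}$. The tail event $X_n \ge x$ replaces the informal $X_n \approx x$, and the function names are hypothetical.

```python
import math


def log_binom_tail(n, p, x):
    """log P[S_n / n >= x] for S_n ~ Binomial(n, p), computed in log space."""
    k0 = math.ceil(n * x)
    logs = [
        math.lgamma(n + 1) - math.lgamma(k + 1) - math.lgamma(n - k + 1)
        + k * math.log(p) + (n - k) * math.log(1 - p)
        for k in range(k0, n + 1)
    ]
    m = max(logs)  # log-sum-exp to avoid underflow for large n
    return m + math.log(sum(math.exp(v - m) for v in logs))


def coin_flip_rate(x, p):
    """Rate function for the mean of Bernoulli(p) variables, 0 < x < 1."""
    return x * math.log(x / p) + (1 - x) * math.log((1 - x) / (1 - p))


# Decay rate of the tail probability versus the rate function, for x > p.
for n in (100, 1000, 10000):
    print(n, -log_binom_tail(n, 0.3, 0.5) / n, coin_flip_rate(0.5, 0.3))
```

The second column approaches the third as `n` grows. The remaining gap shrinks roughly like $\frac{\log n}{n}$, which is the kind of sub-exponential correction that the symbol $\approx$ in the definition ignores.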

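![TASEP: LDP via MPA, slide 38](https://image.slidesharecdn.com/bristolshort-141119111409-conversion-gate02/75/TASEP-LDP-via-MPA-38-2048.jpg)

A sequence of probability measures $(P_n)$ on a space $\mathcal{X}$ satisfies a large deviation principle with rate function $I$ if the following three conditions hold:

i) $I$ is a rate function (non-negative and lower semicontinuous).

ii) $\displaystyle \limsup_{n \to \infty} \frac{1}{n} \log P_n[F] \le -\inf_{x \in F} I(x)$ for all closed $F \subseteq \mathcal{X}$.

iii) $\displaystyle \liminf_{n \to \infty} \frac{1}{n} \log P_n[G] \ge -\inf_{x \in G} I(x)$ for all open $G \subseteq \mathcal{X}$.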