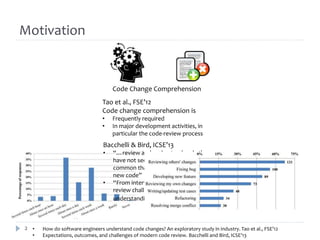

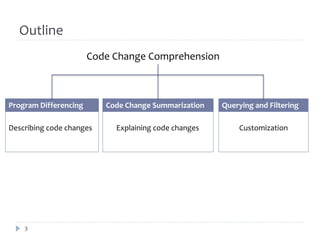

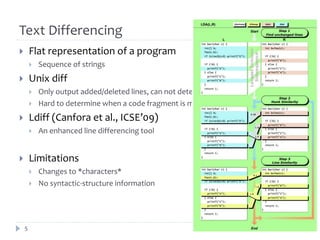

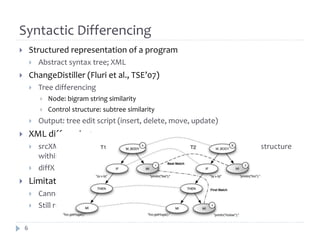

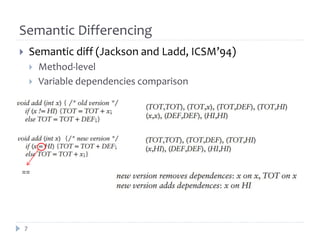

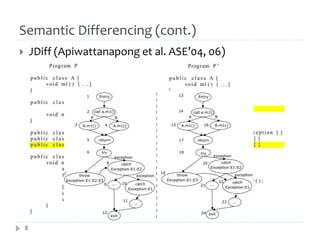

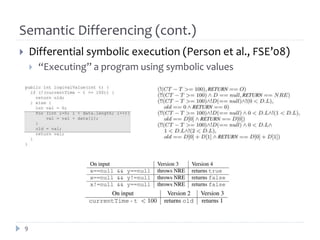

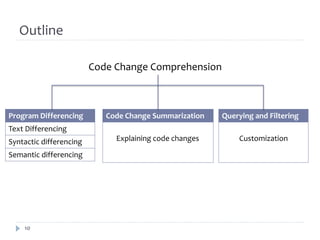

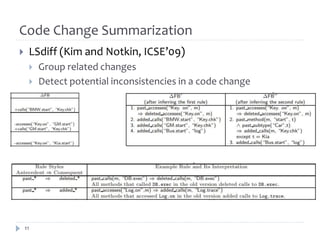

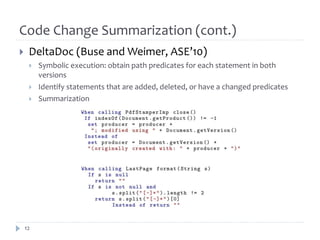

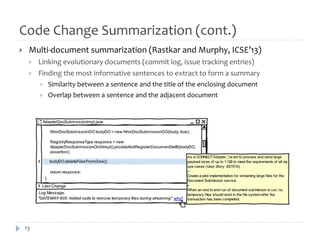

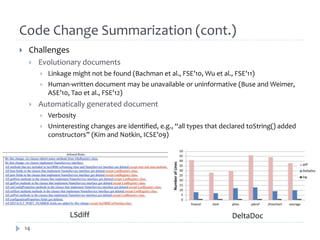

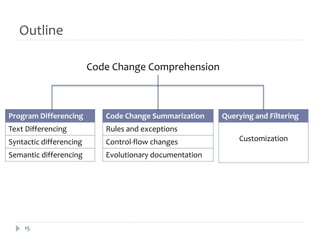

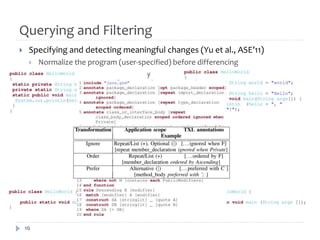

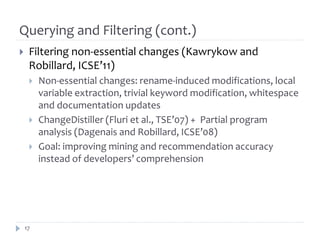

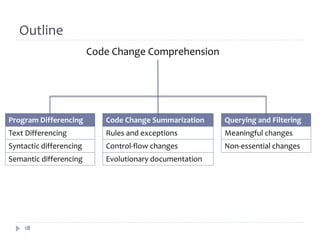

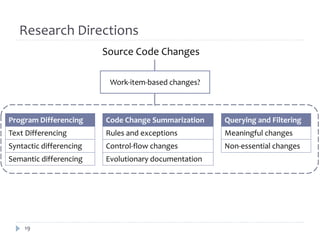

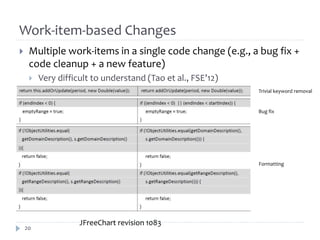

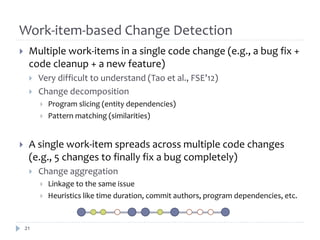

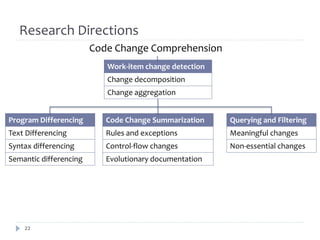

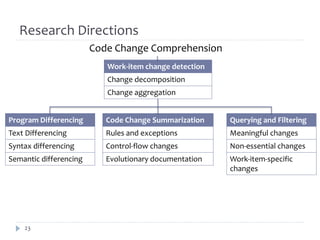

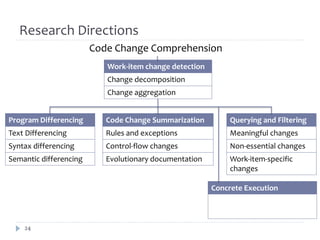

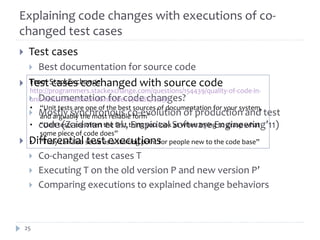

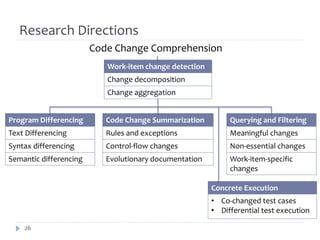

The document discusses research on helping software engineers comprehend source code changes. It outlines techniques for differencing code at the text, syntactic, and semantic levels. It also covers summarizing code changes, explaining changes through rules and control flow analysis, and leveraging related documentation. Future work opportunities include detecting work-item-specific changes, decomposing and aggregating changes, and explaining changes through differential execution of co-changed test cases.
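The slides do not say which algorithm they use for text-level differencing. As an illustration, here is a minimal sketch of a line-based diff built on a longest-common-subsequence (LCS) table, which is one common way to compute such a diff. The function name `line_diff` and the `' '`/`'-'`/`'+'` markers are illustrative choices and do not come from the slides.

```python
from typing import List, Tuple


def line_diff(old: List[str], new: List[str]) -> List[Tuple[str, str]]:
    """Line-level diff via an LCS table (illustrative sketch).

    Returns (op, line) pairs where op is ' ' (unchanged),
    '-' (deleted from old) or '+' (inserted in new).
    """
    n, m = len(old), len(new)
    # lcs[i][j] holds the LCS length of old[i:] and new[j:].
    lcs = [[0] * (m + 1) for _ in range(n + 1)]
    for i in range(n - 1, -1, -1):
        for j in range(m - 1, -1, -1):
            if old[i] == new[j]:
                lcs[i][j] = lcs[i + 1][j + 1] + 1
            else:
                lcs[i][j] = max(lcs[i + 1][j], lcs[i][j + 1])

    # Walk the table from the start to emit an edit script.
    ops: List[Tuple[str, str]] = []
    i = j = 0
    while i < n and j < m:
        if old[i] == new[j]:
            ops.append((" ", old[i]))
            i += 1
            j += 1
        elif lcs[i + 1][j] >= lcs[i][j + 1]:
            # Dropping old[i] keeps the longer common subsequence.
            ops.append(("-", old[i]))
            i += 1
        else:
            ops.append(("+", new[j]))
            j += 1
    # Whatever remains on either side is a pure deletion or insertion.
    ops.extend(("-", line) for line in old[i:])
    ops.extend(("+", line) for line in new[j:])
    return ops


if __name__ == "__main__":
    before = ["a = 1", "b = 2", "print(a + b)"]
    after = ["a = 1", "b = 3", "print(a + b)"]
    for op, line in line_diff(before, after):
        print(f"{op} {line}")
    # Prints:
    #   a = 1
    # - b = 2
    # + b = 3
    #   print(a + b)
```

A purely textual diff like this reports a renamed variable or a reformatted statement as a deletion plus an insertion, even when the program's structure or behavior is unchanged. That limitation helps explain why the research also considers syntactic and semantic differencing. For everyday line-level diffs, Python's standard `difflib` module (for example `difflib.unified_diff`) already provides this functionality.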