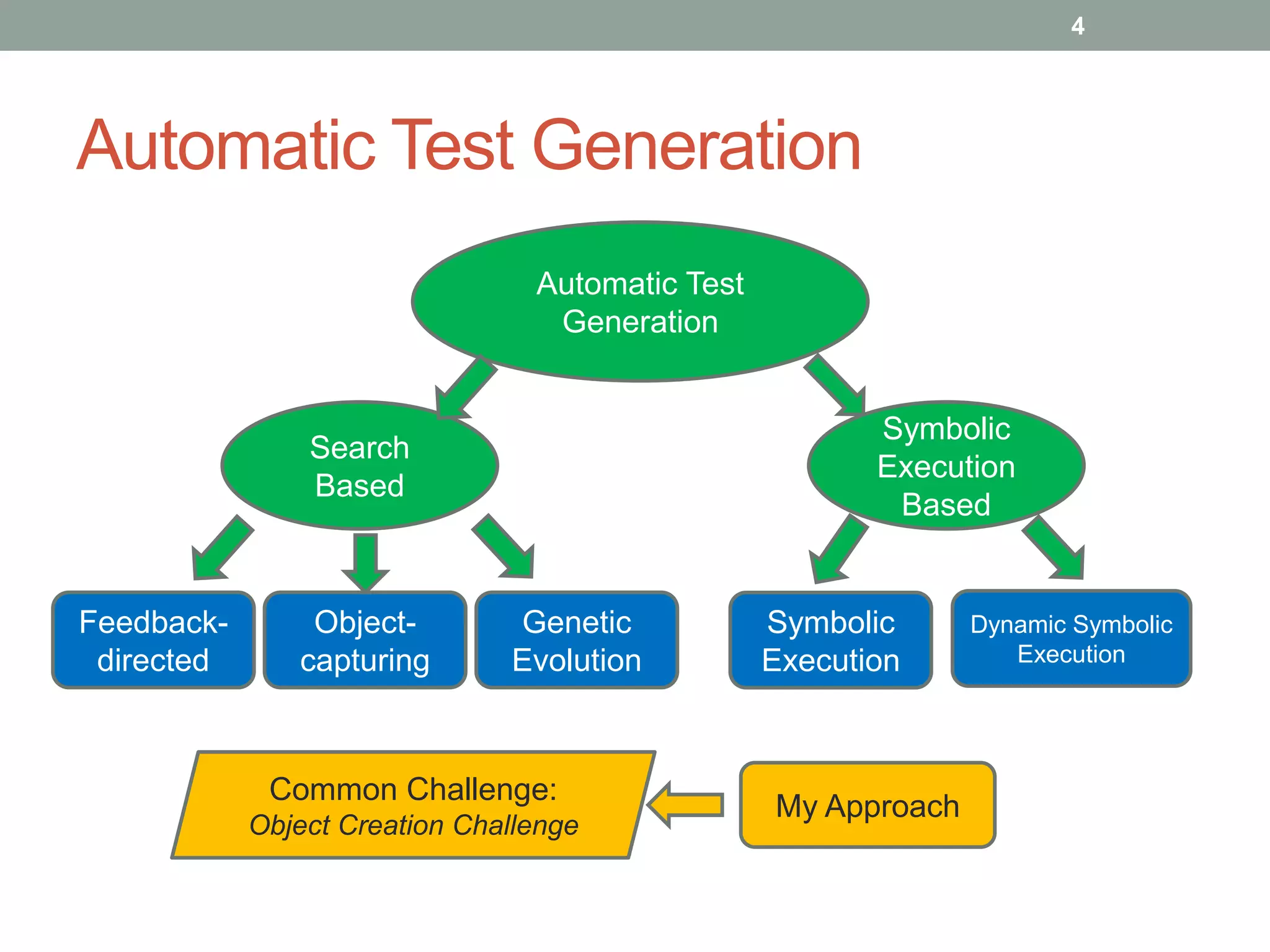

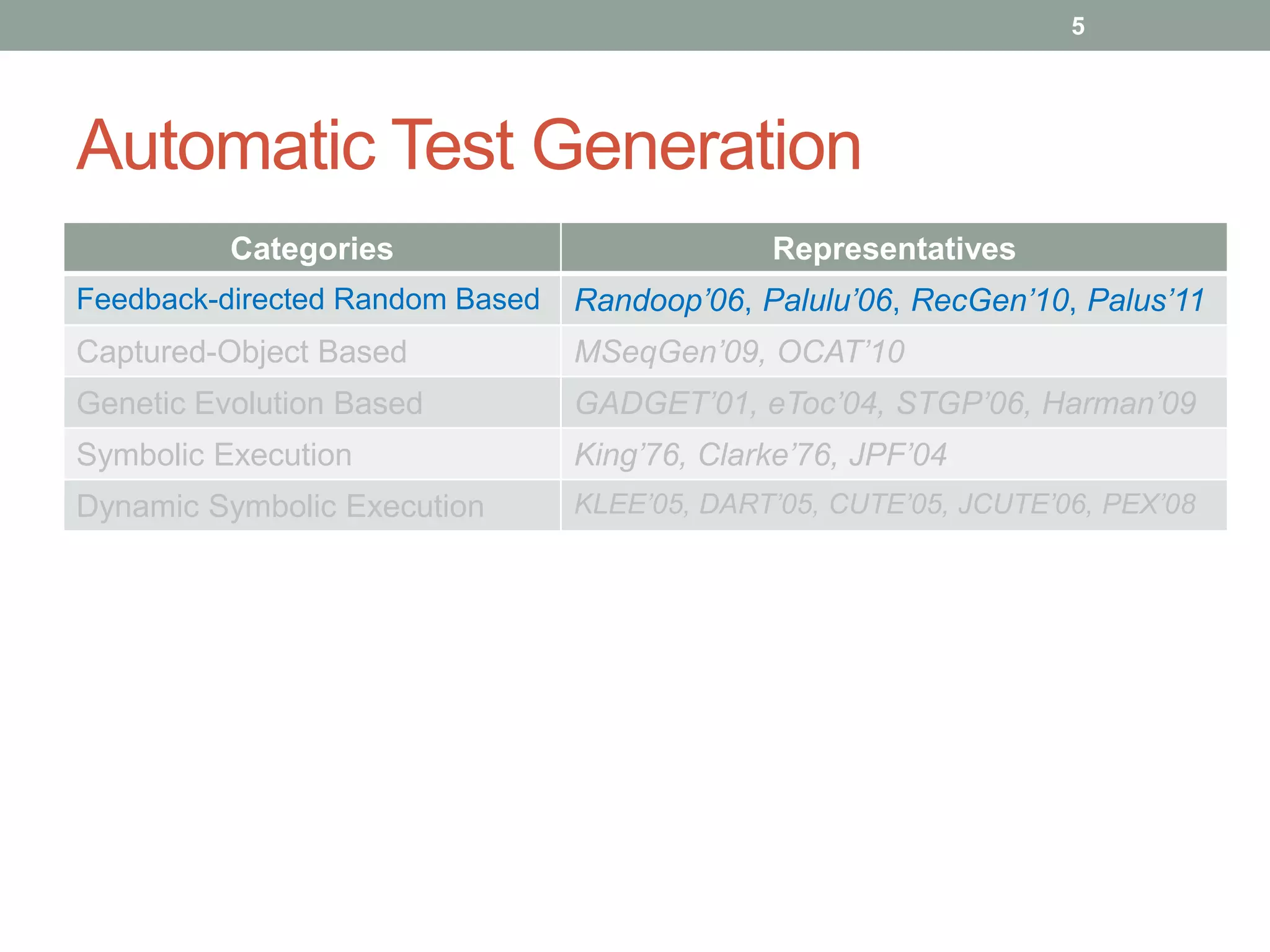

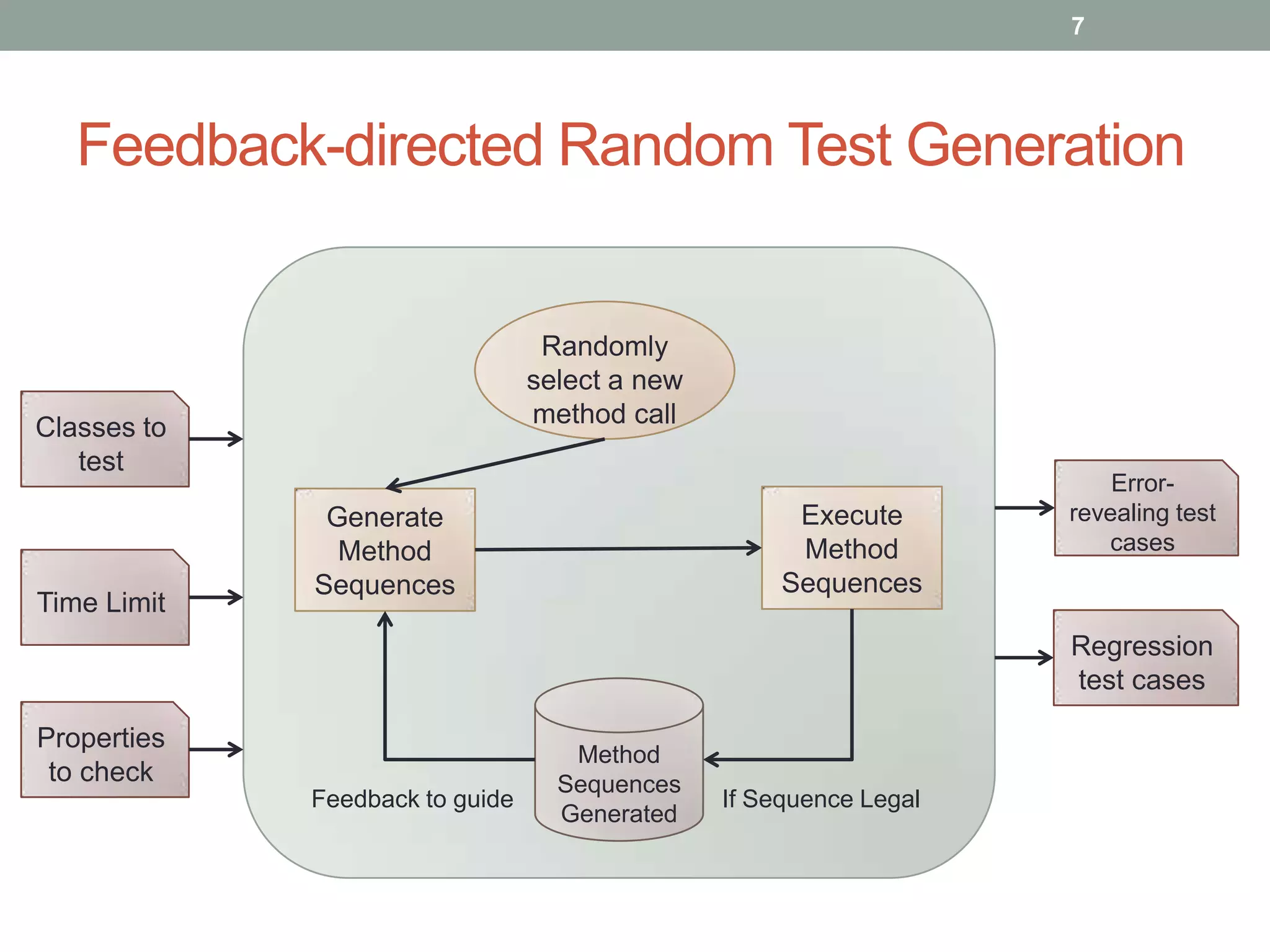

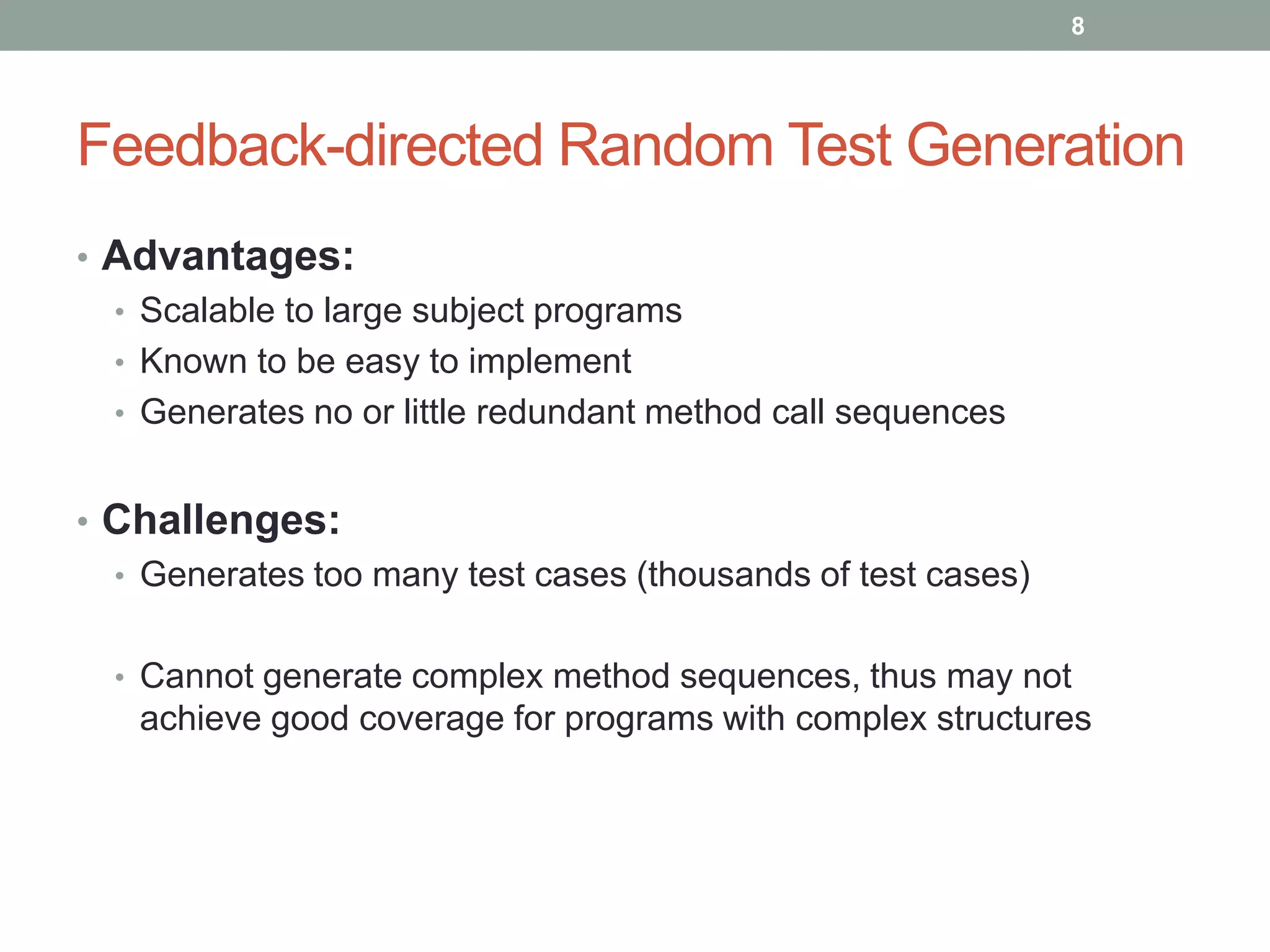

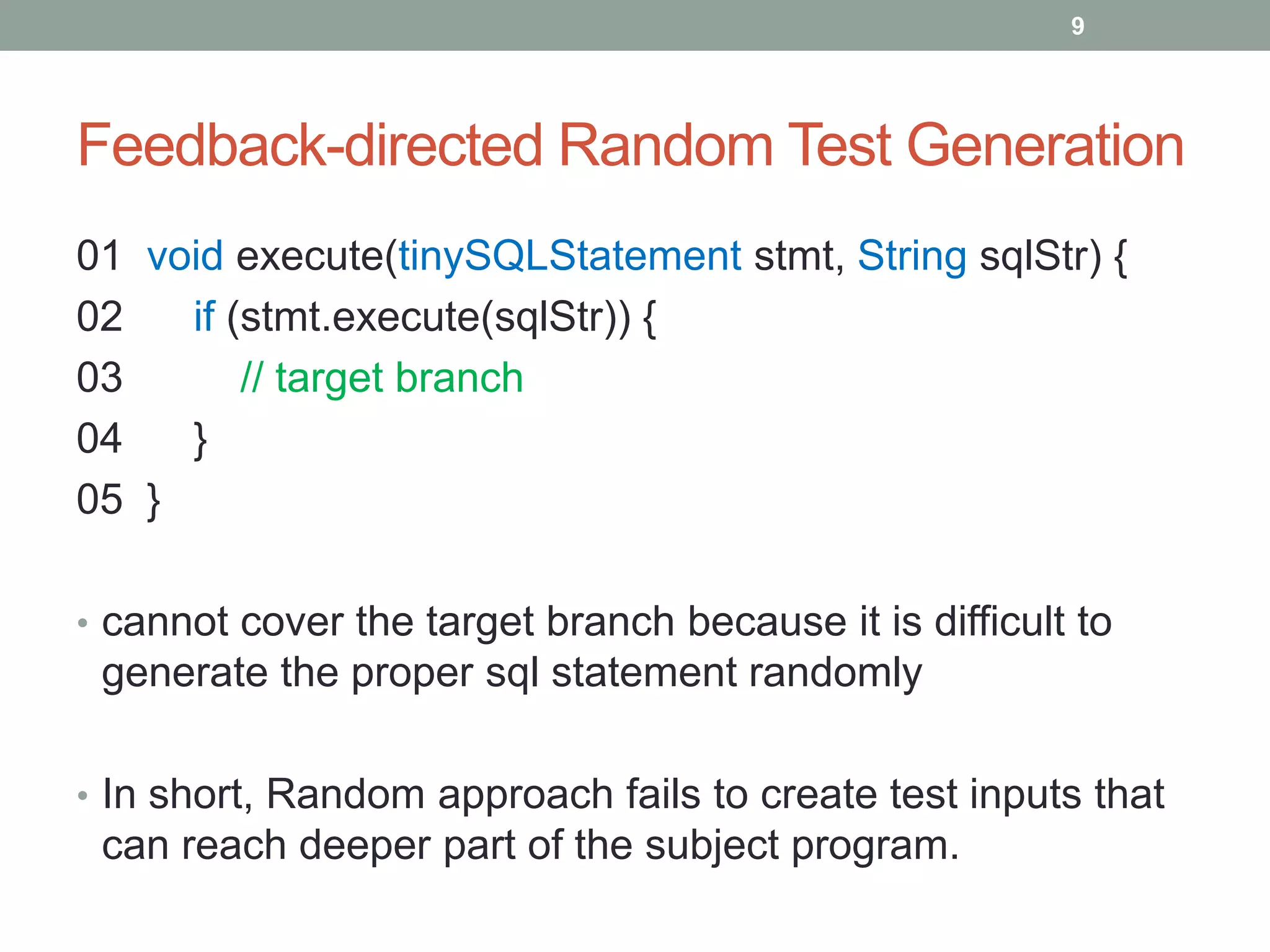

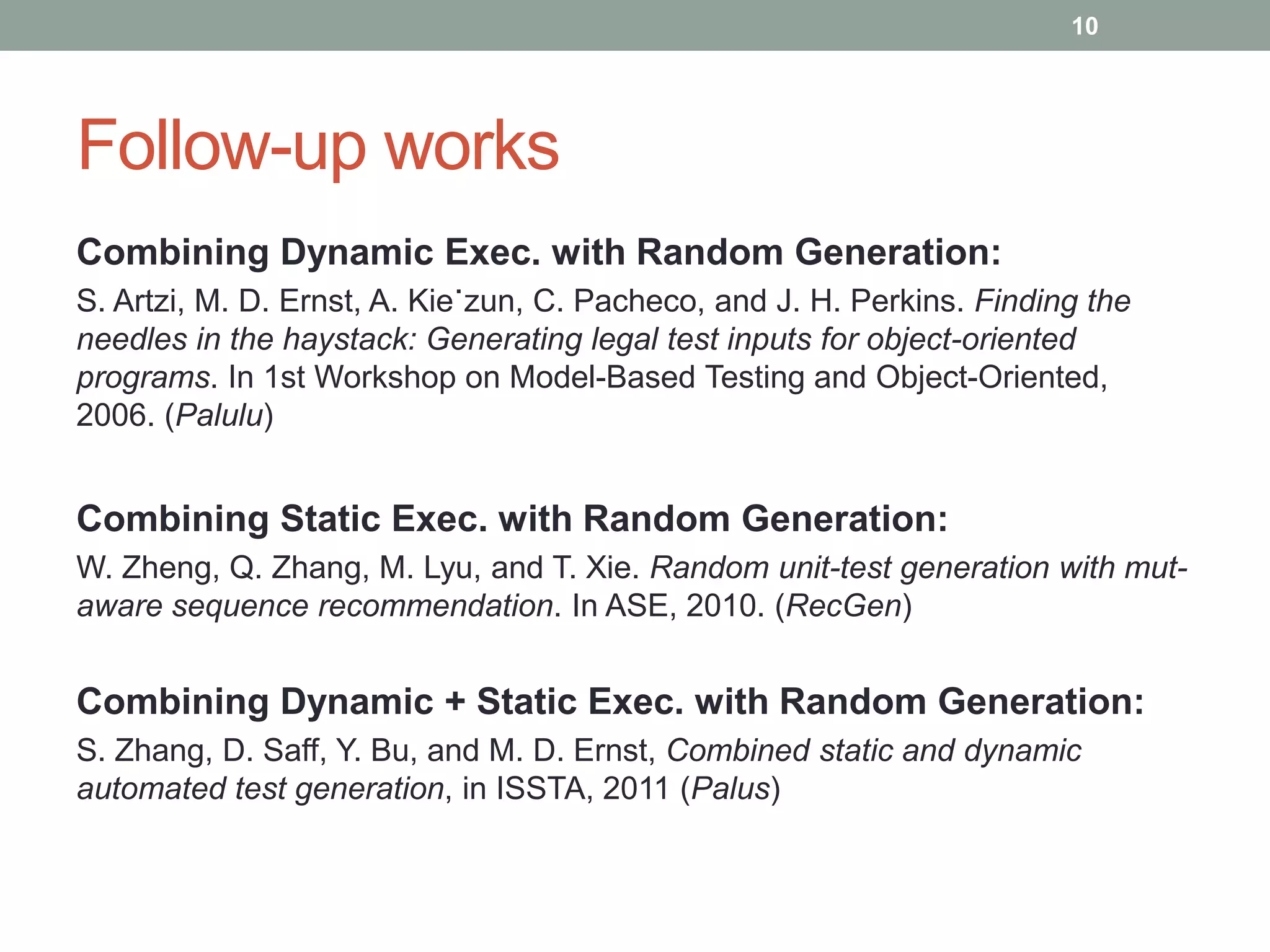

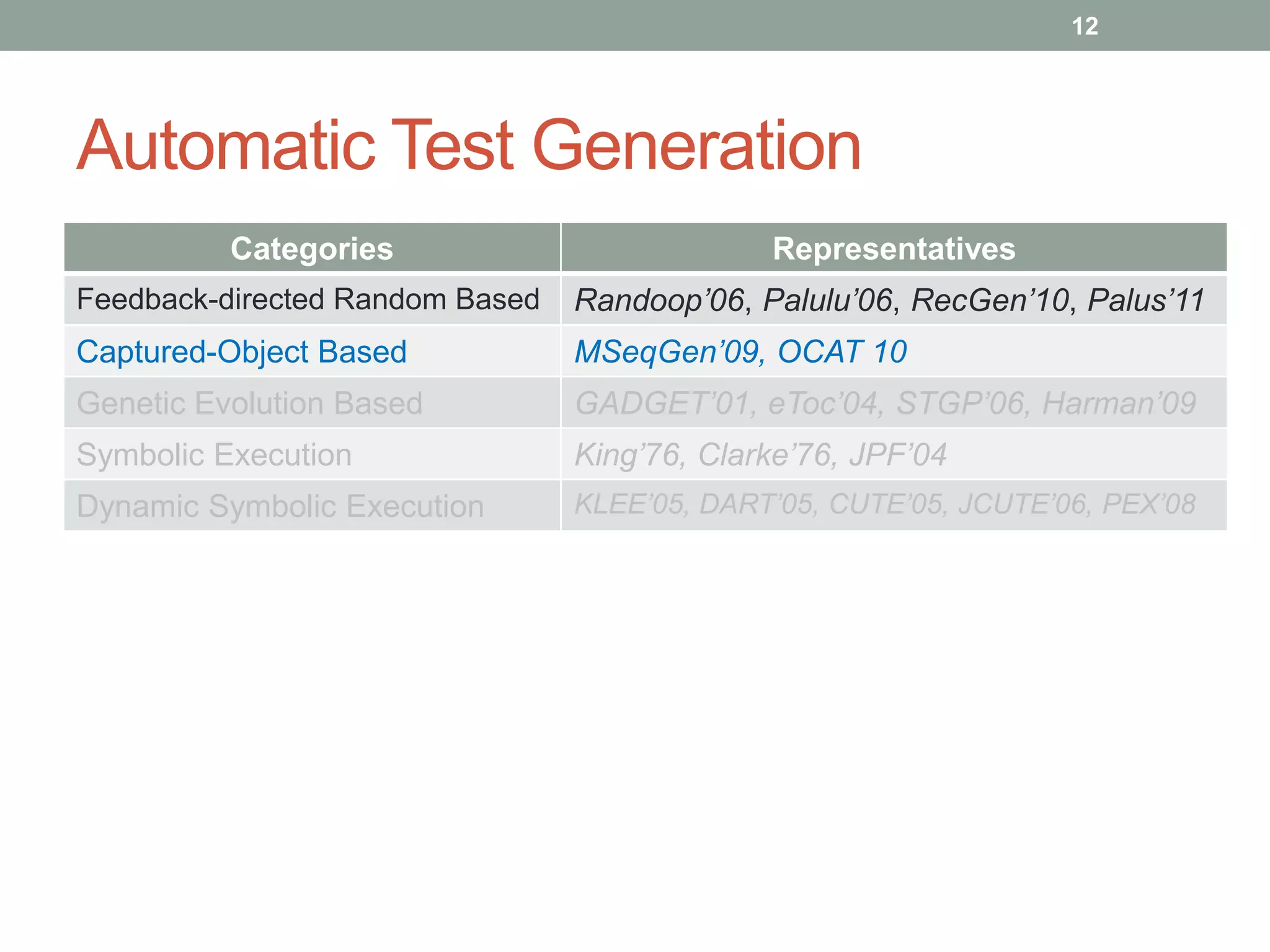

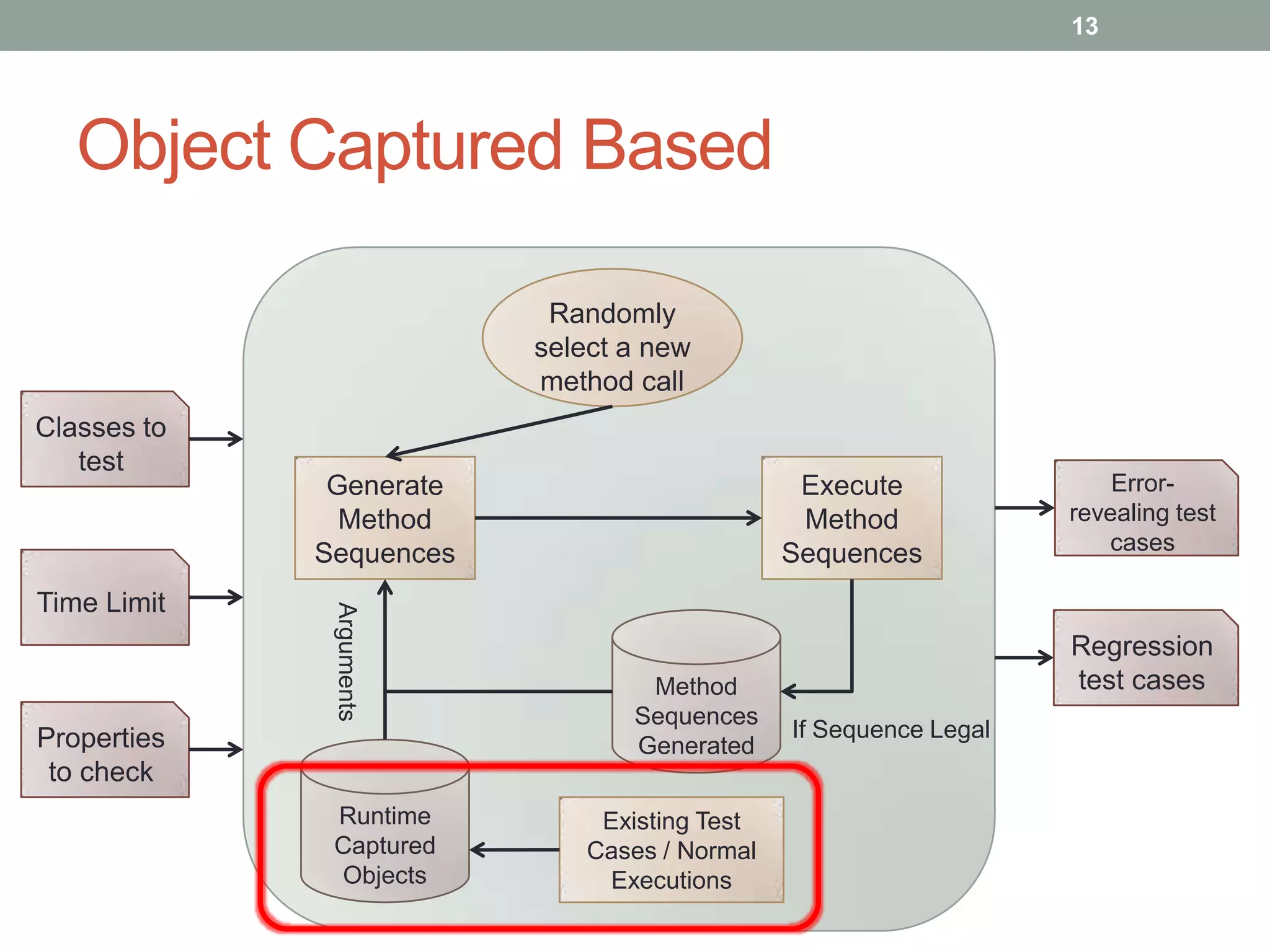

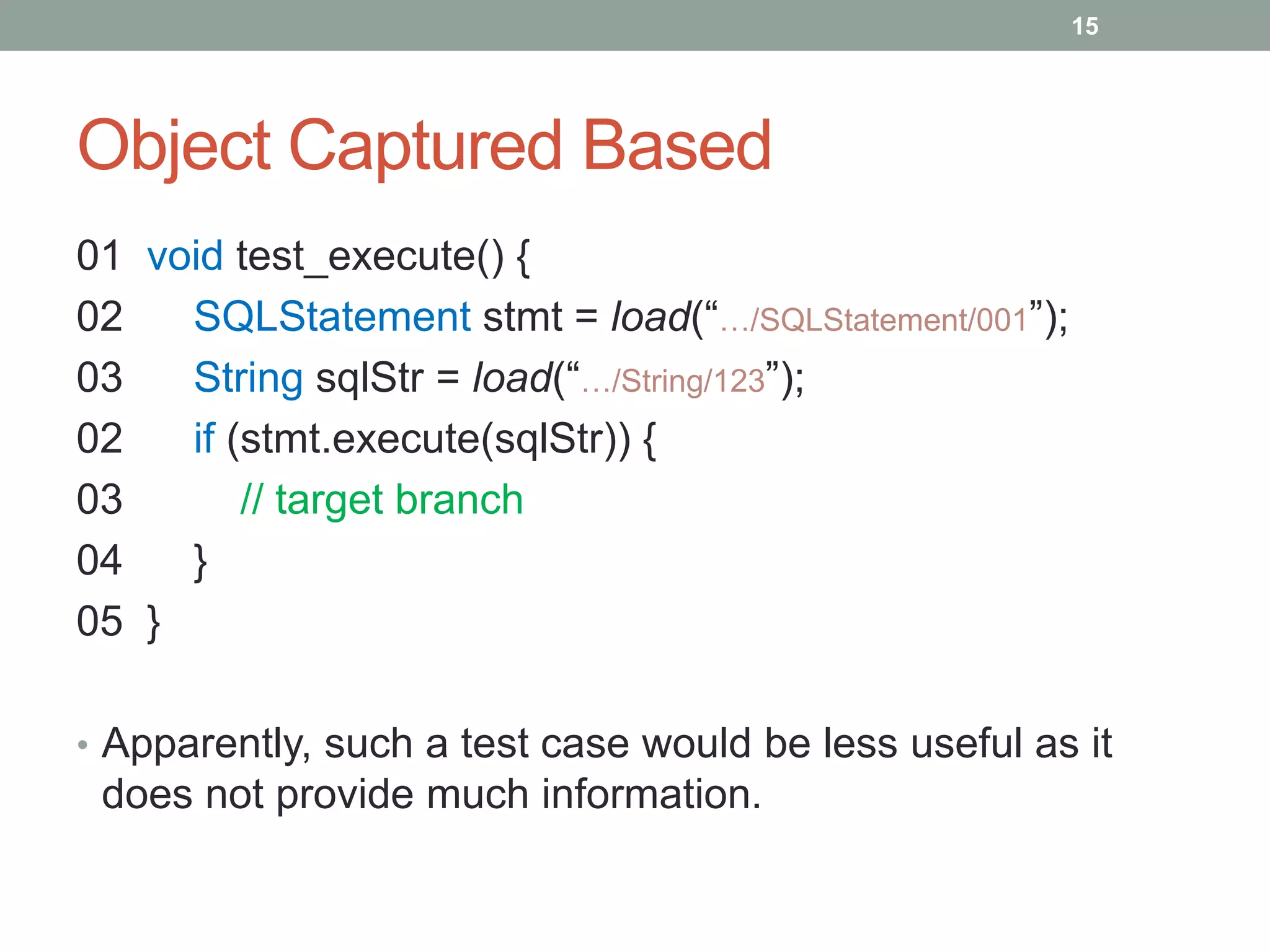

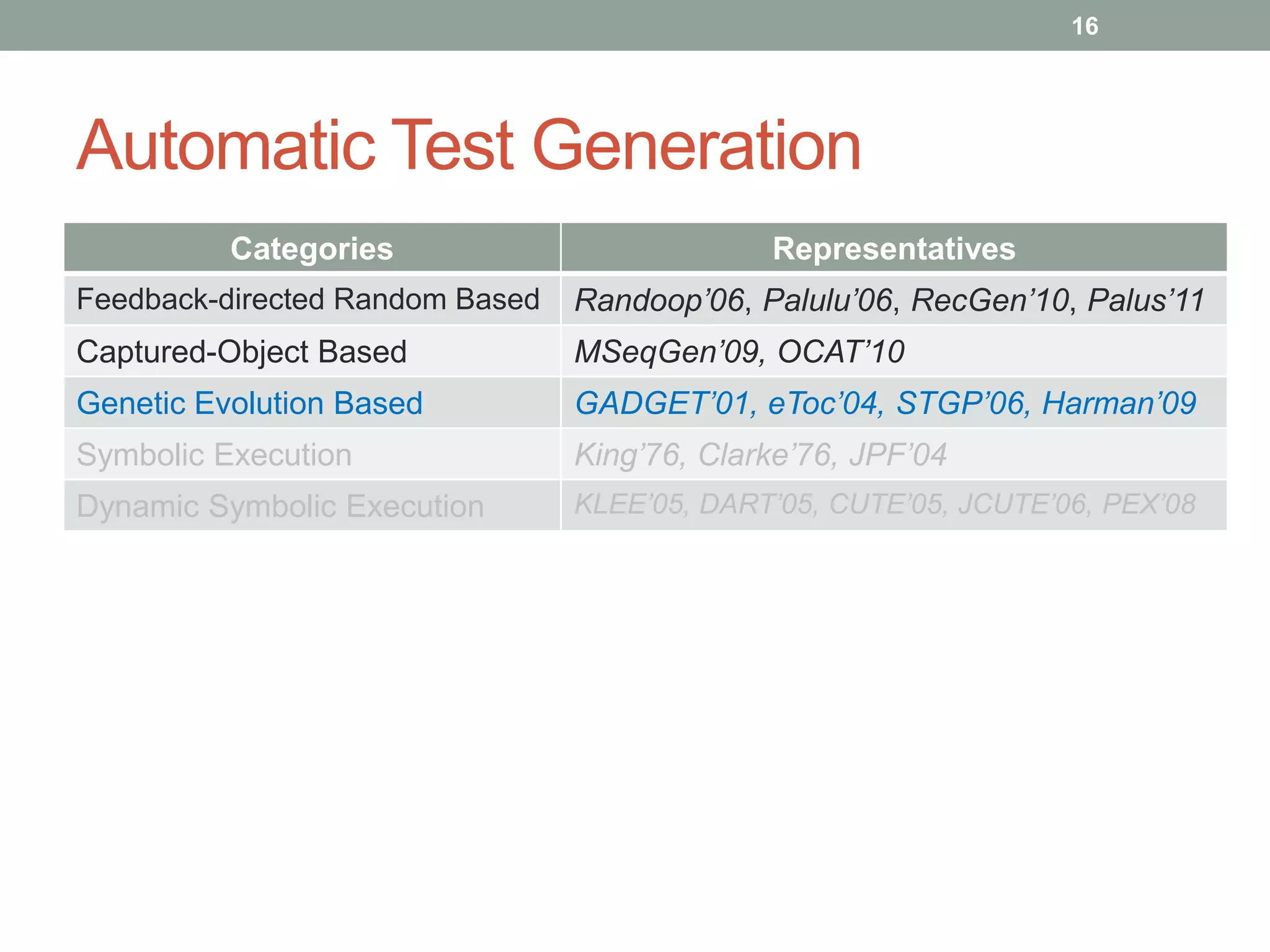

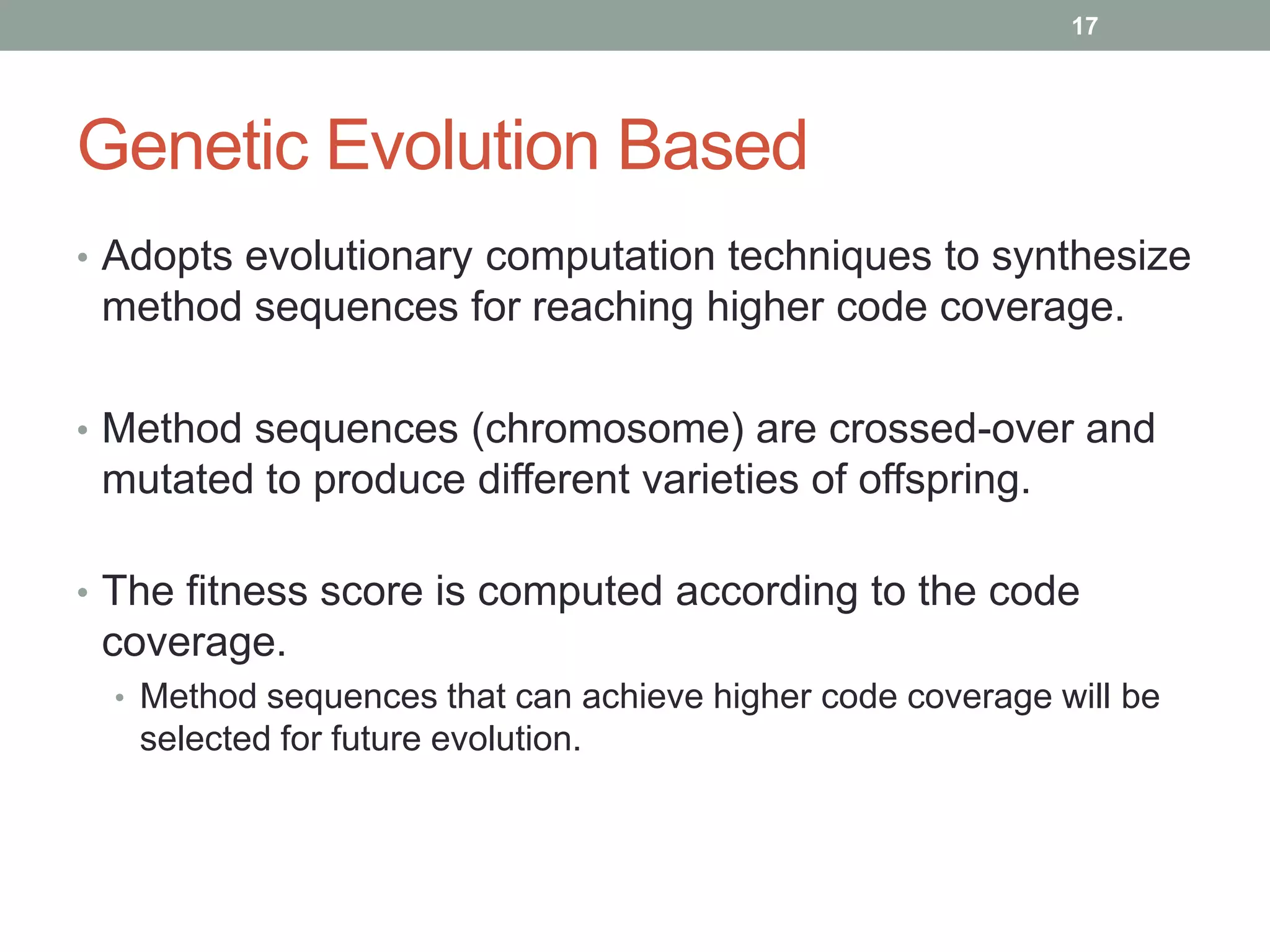

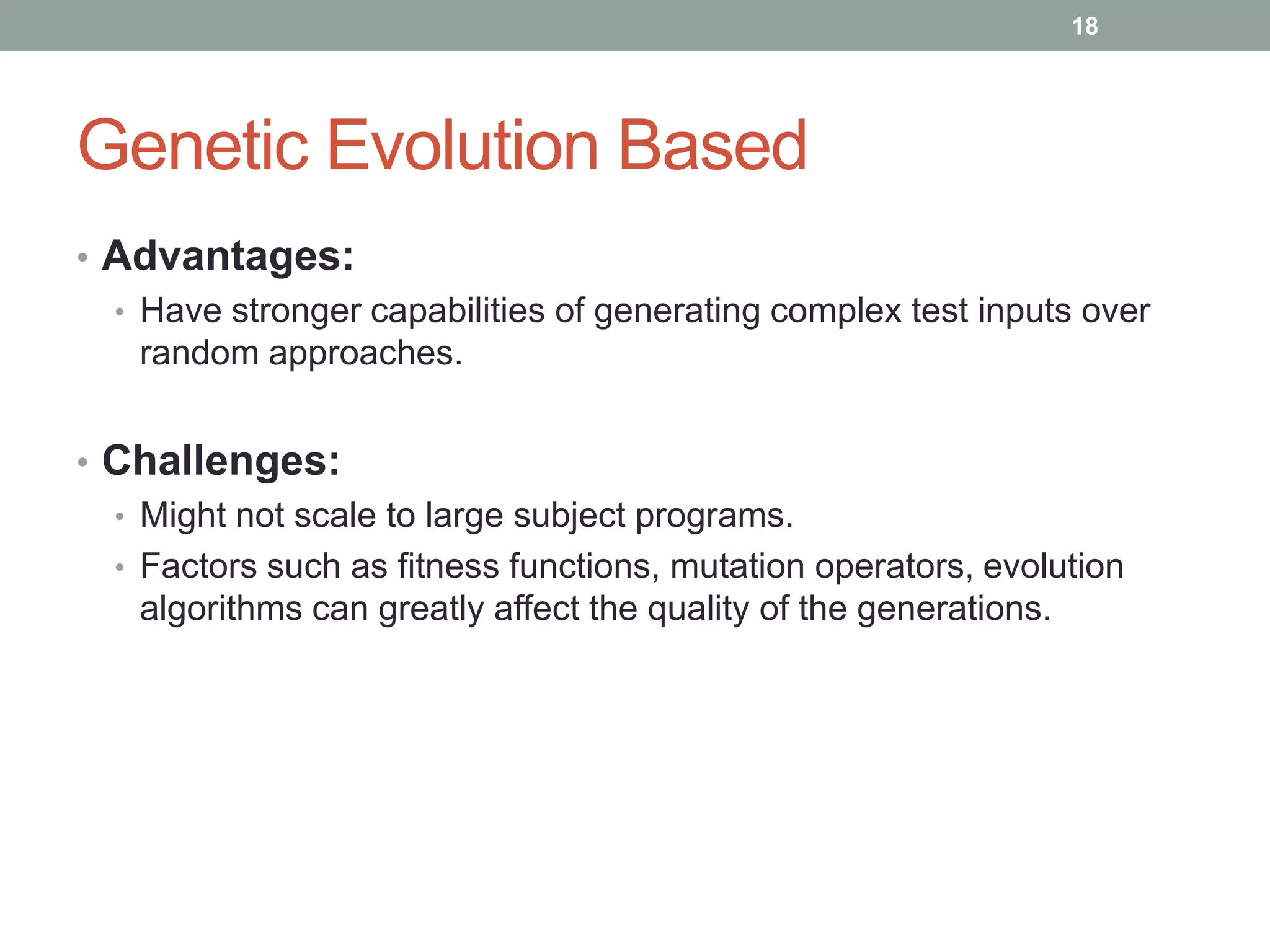

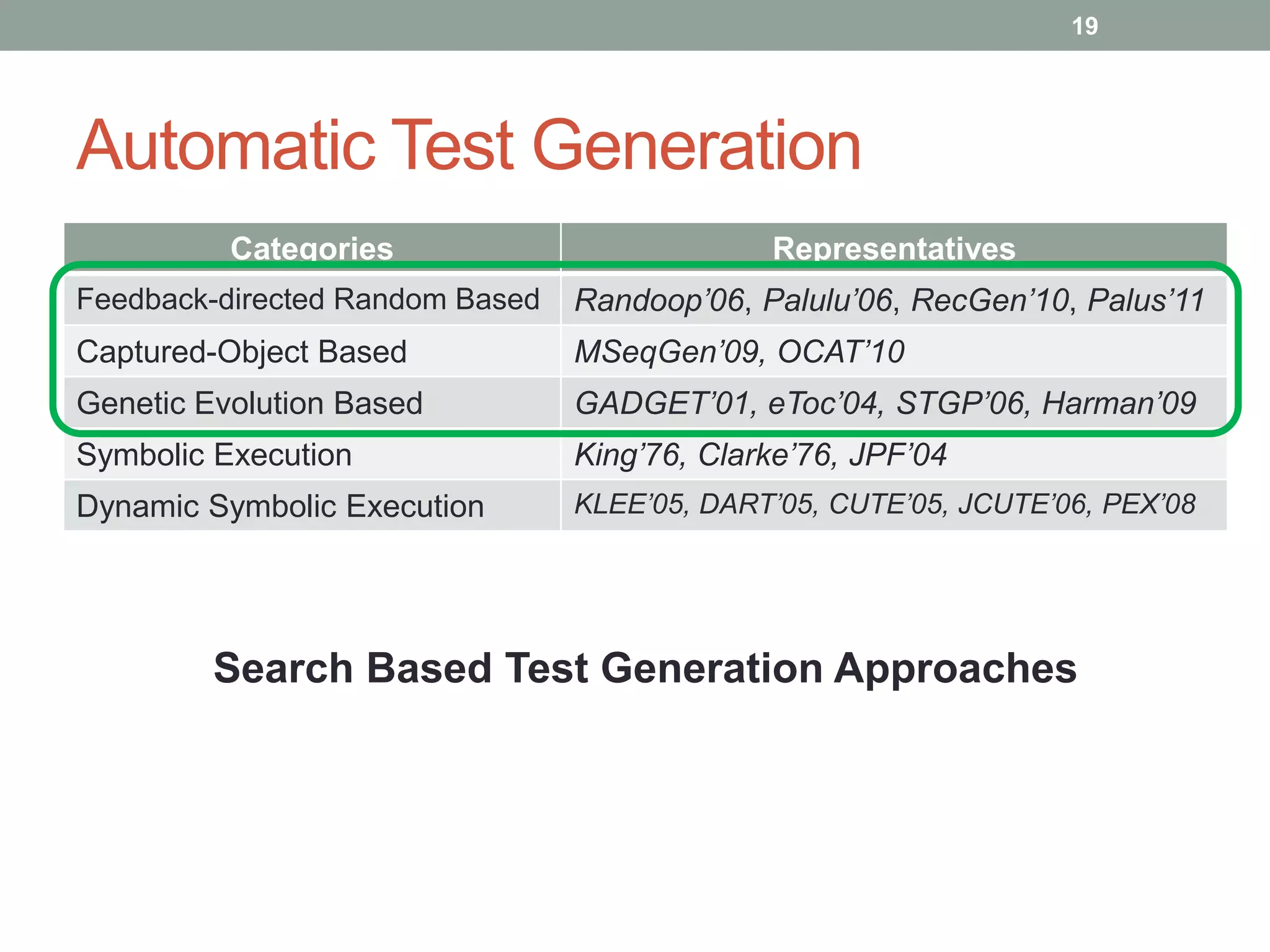

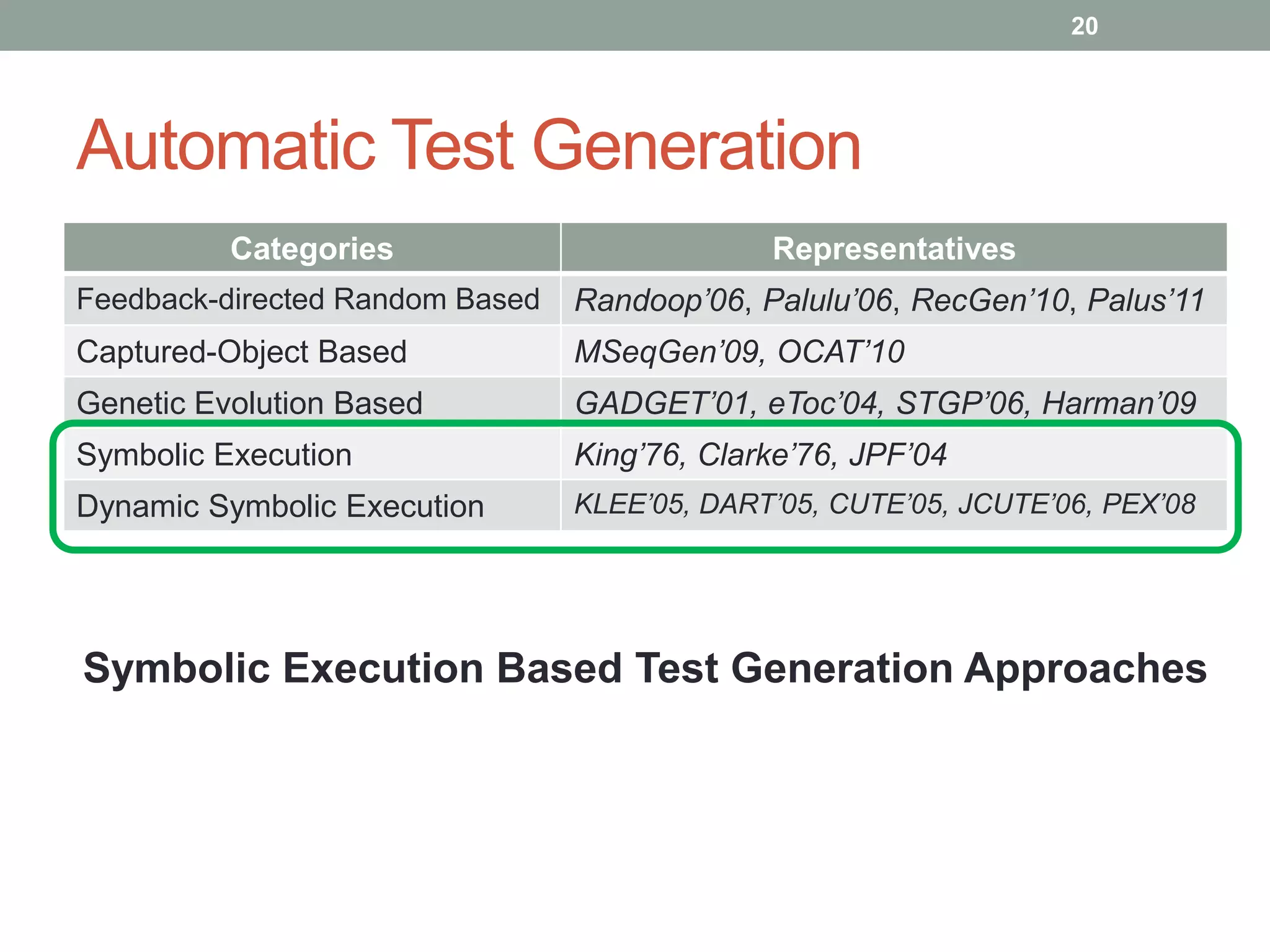

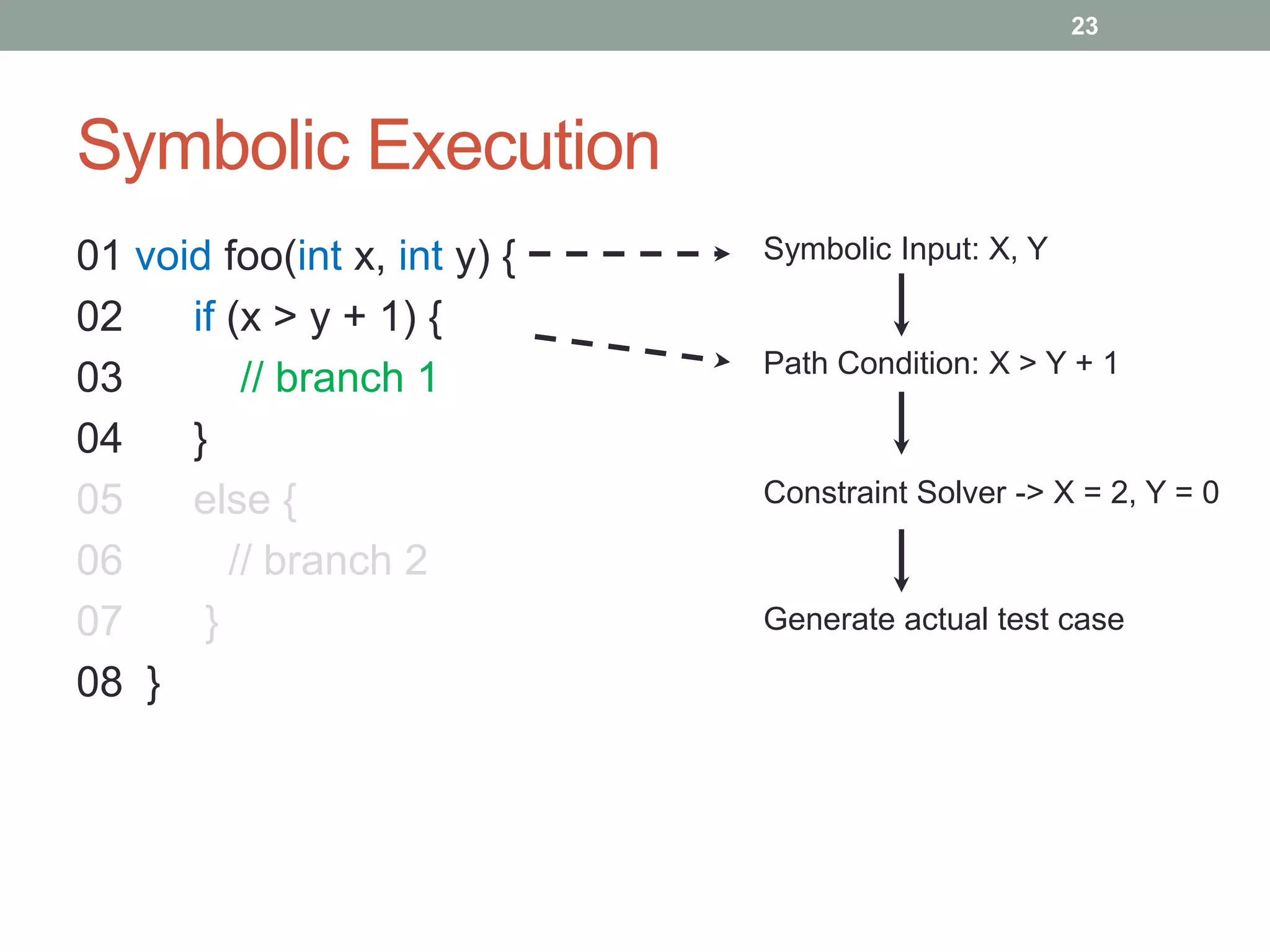

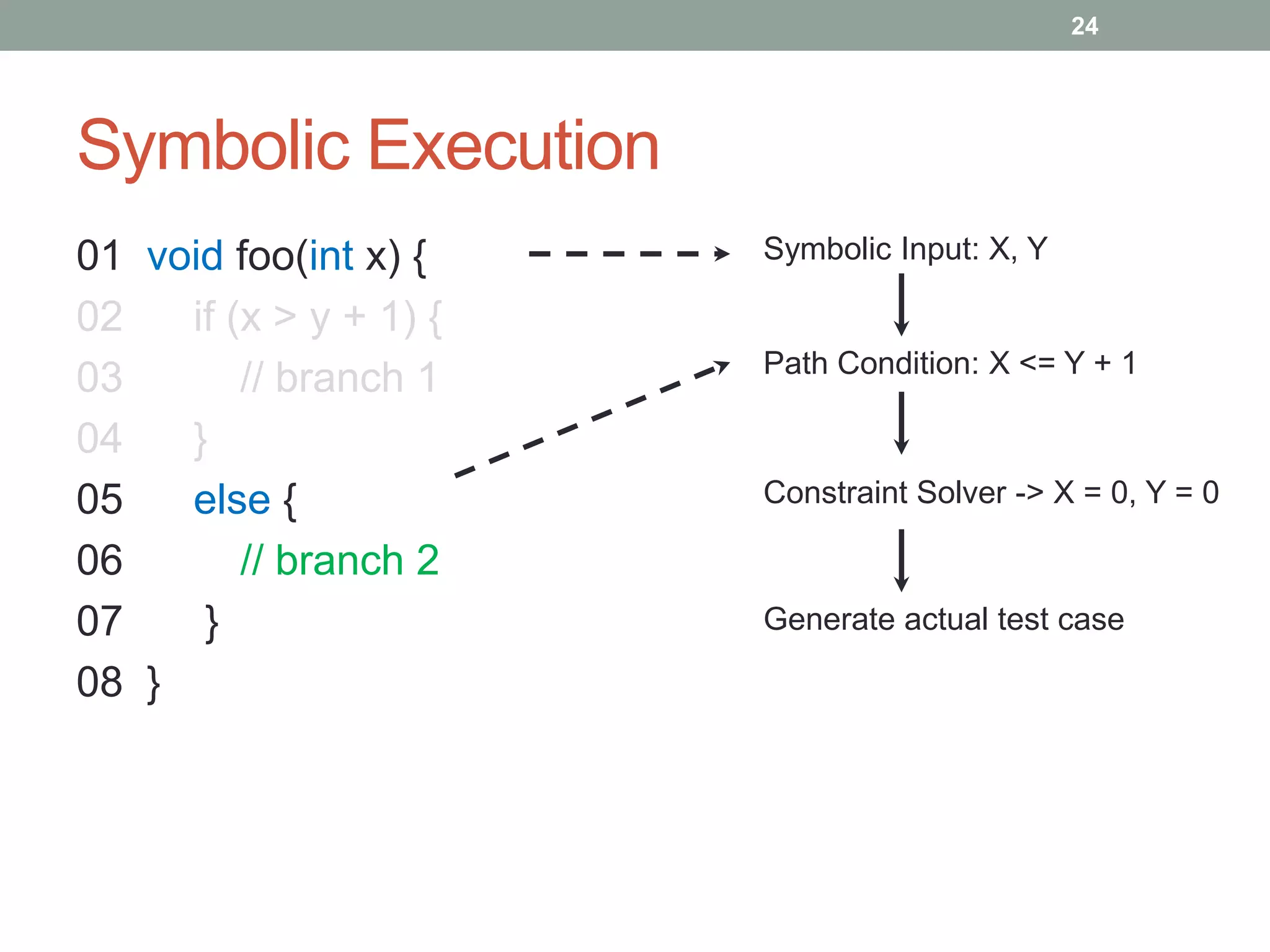

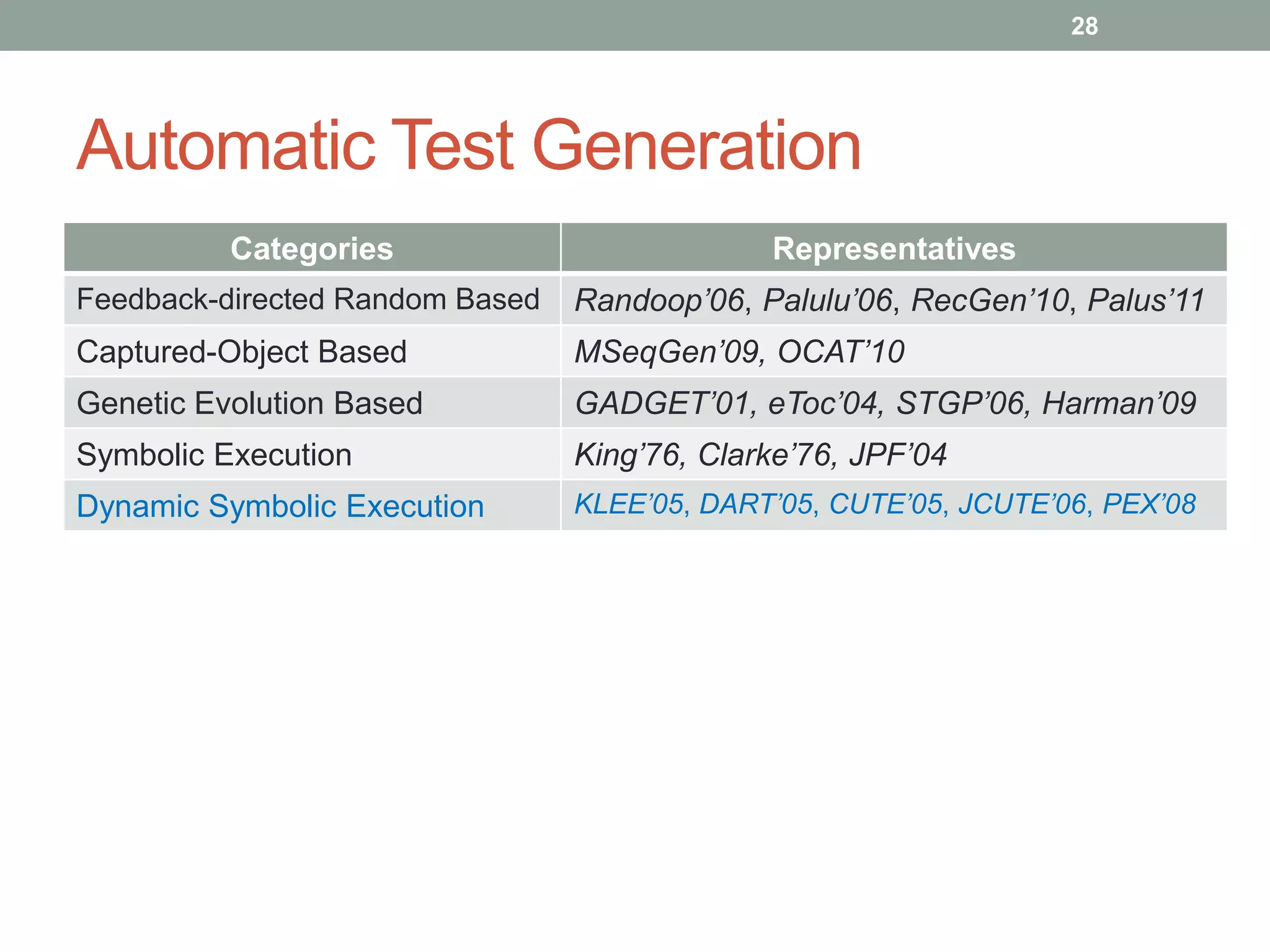

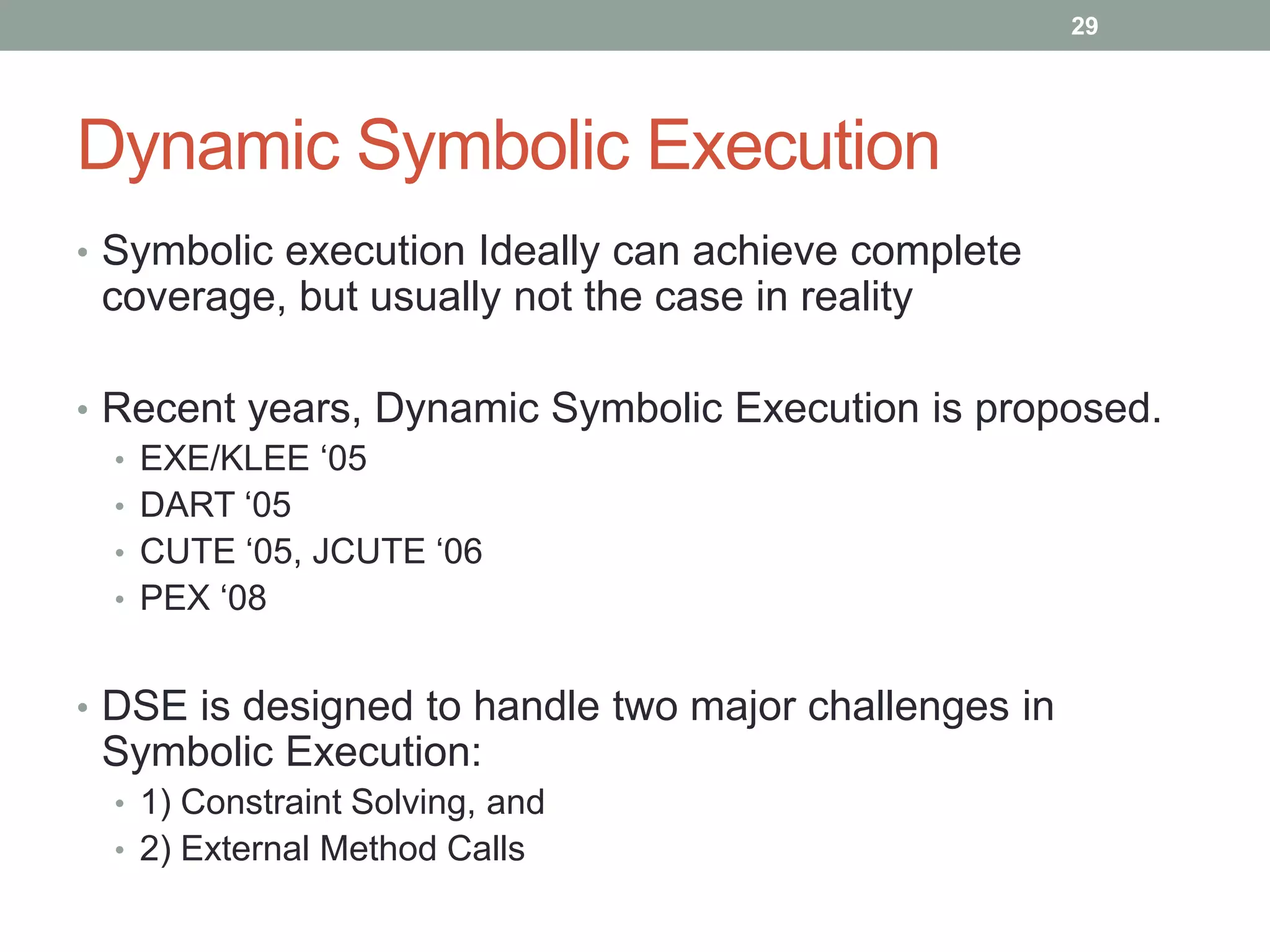

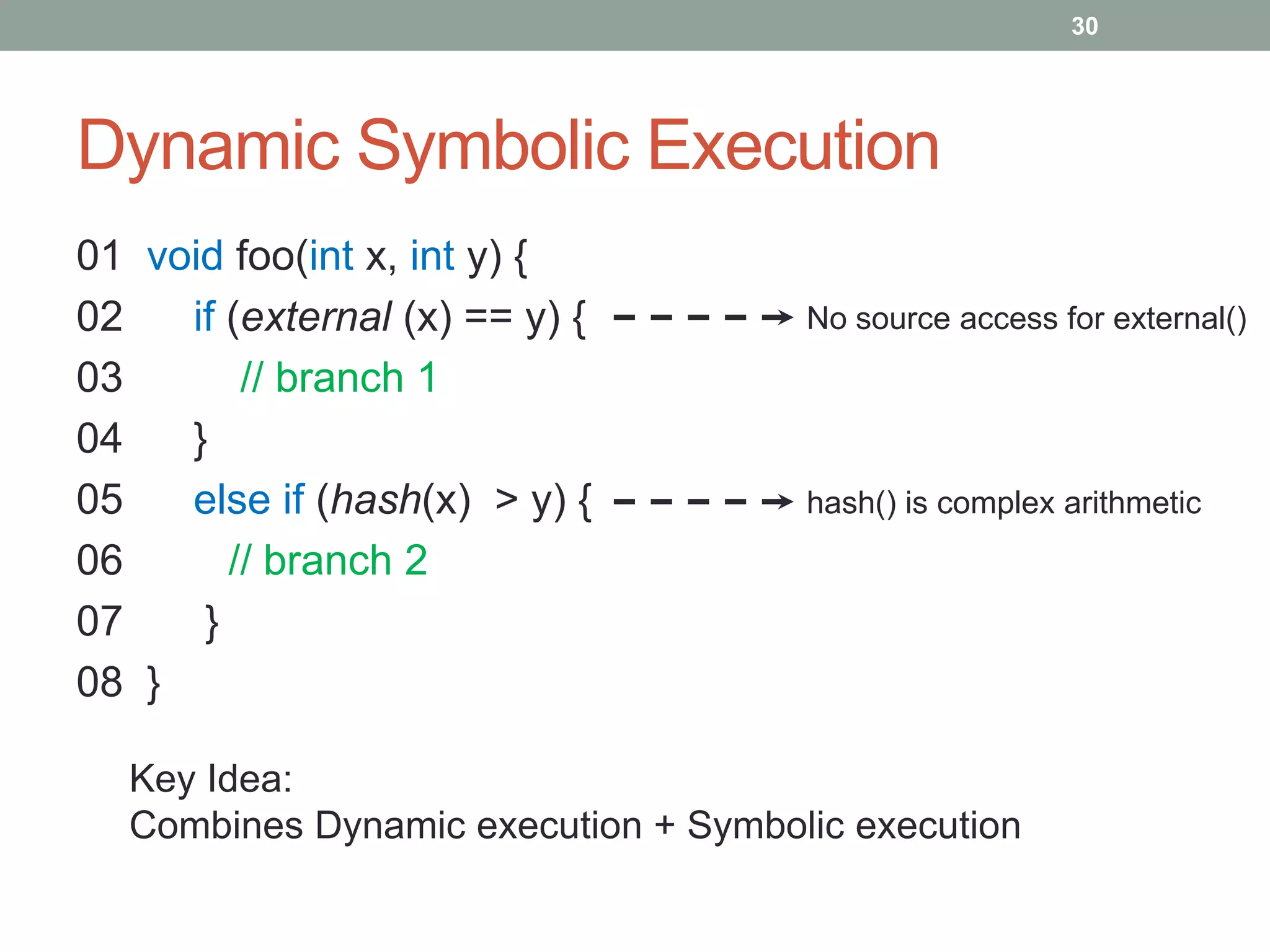

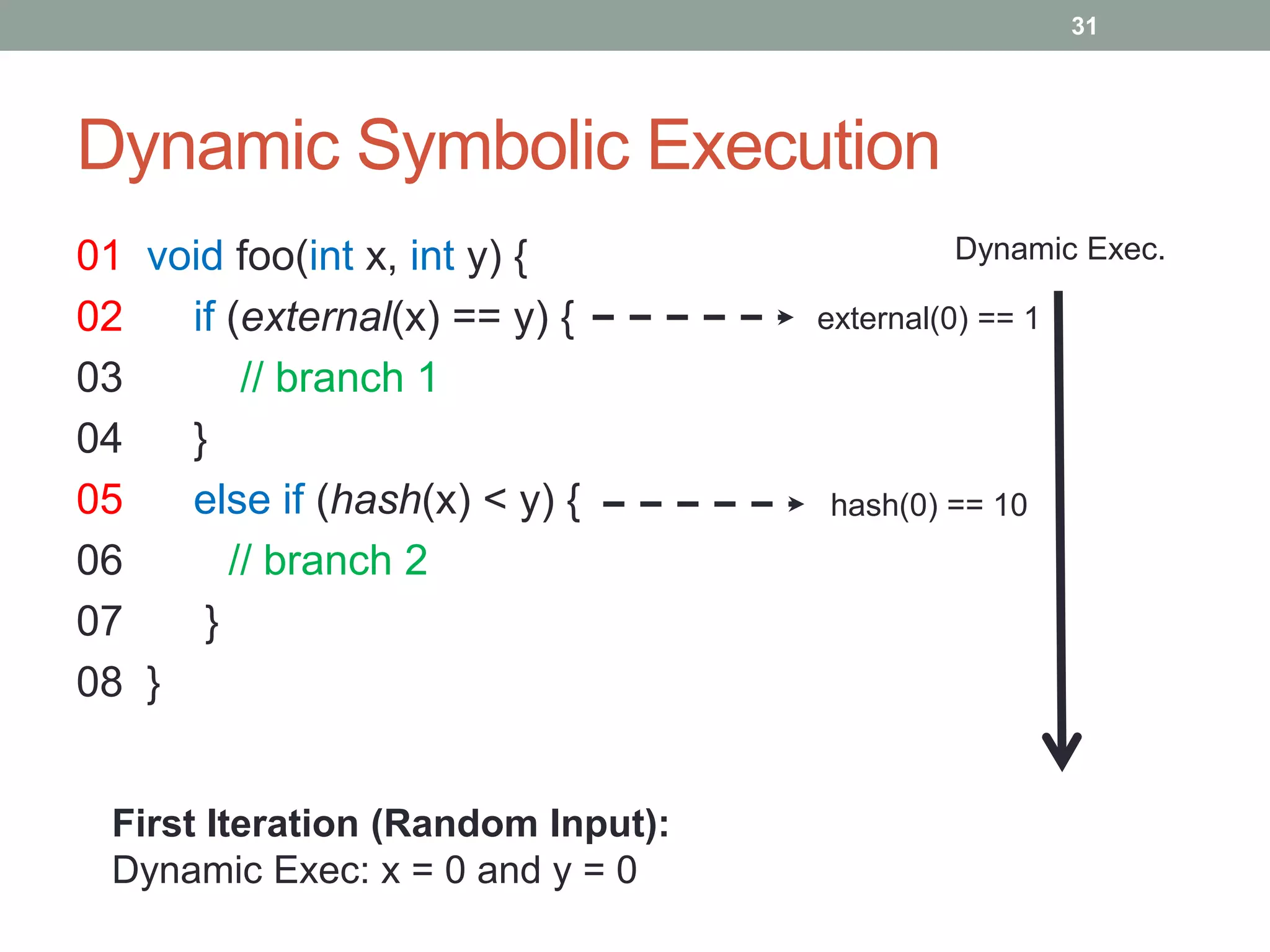

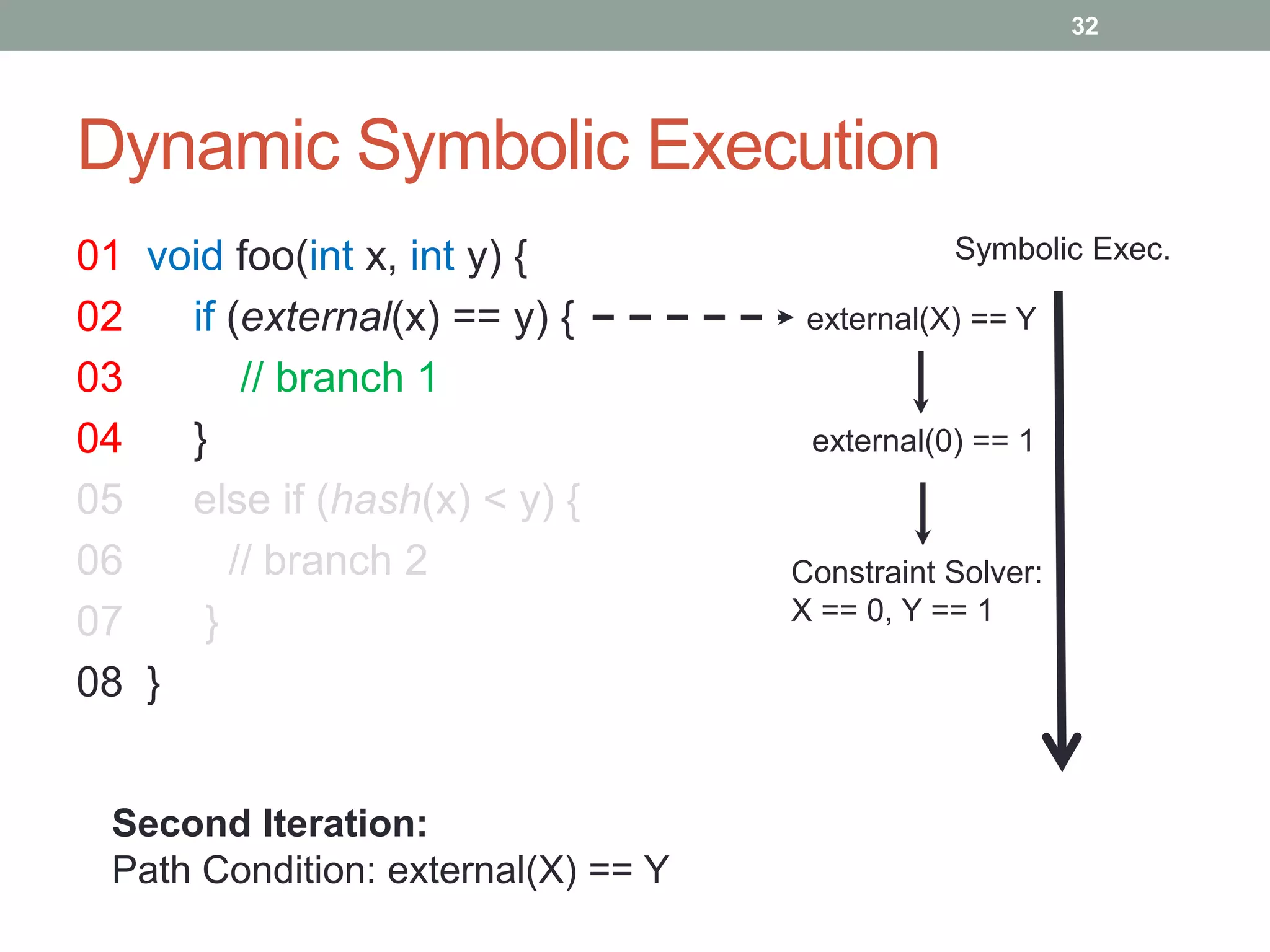

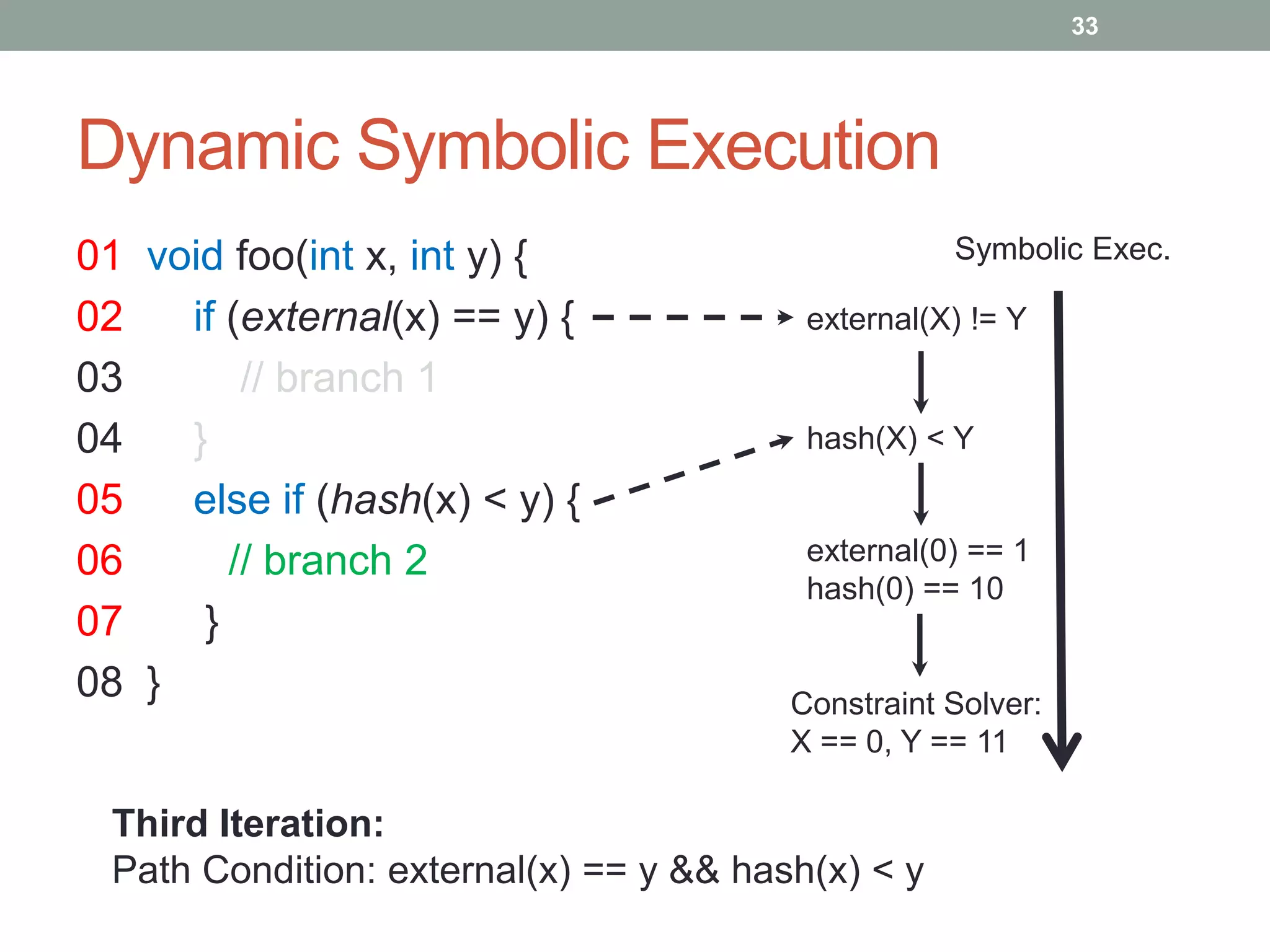

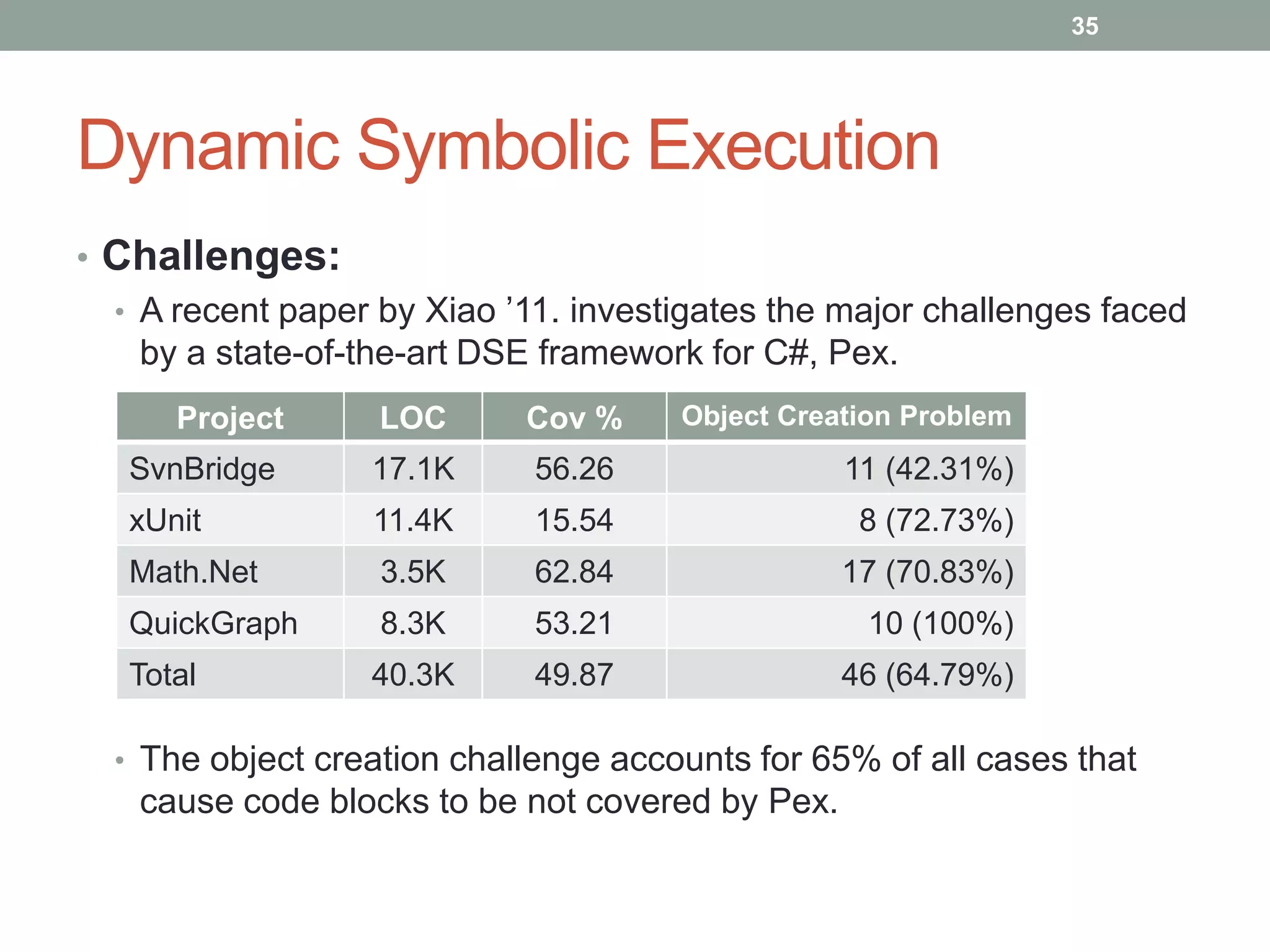

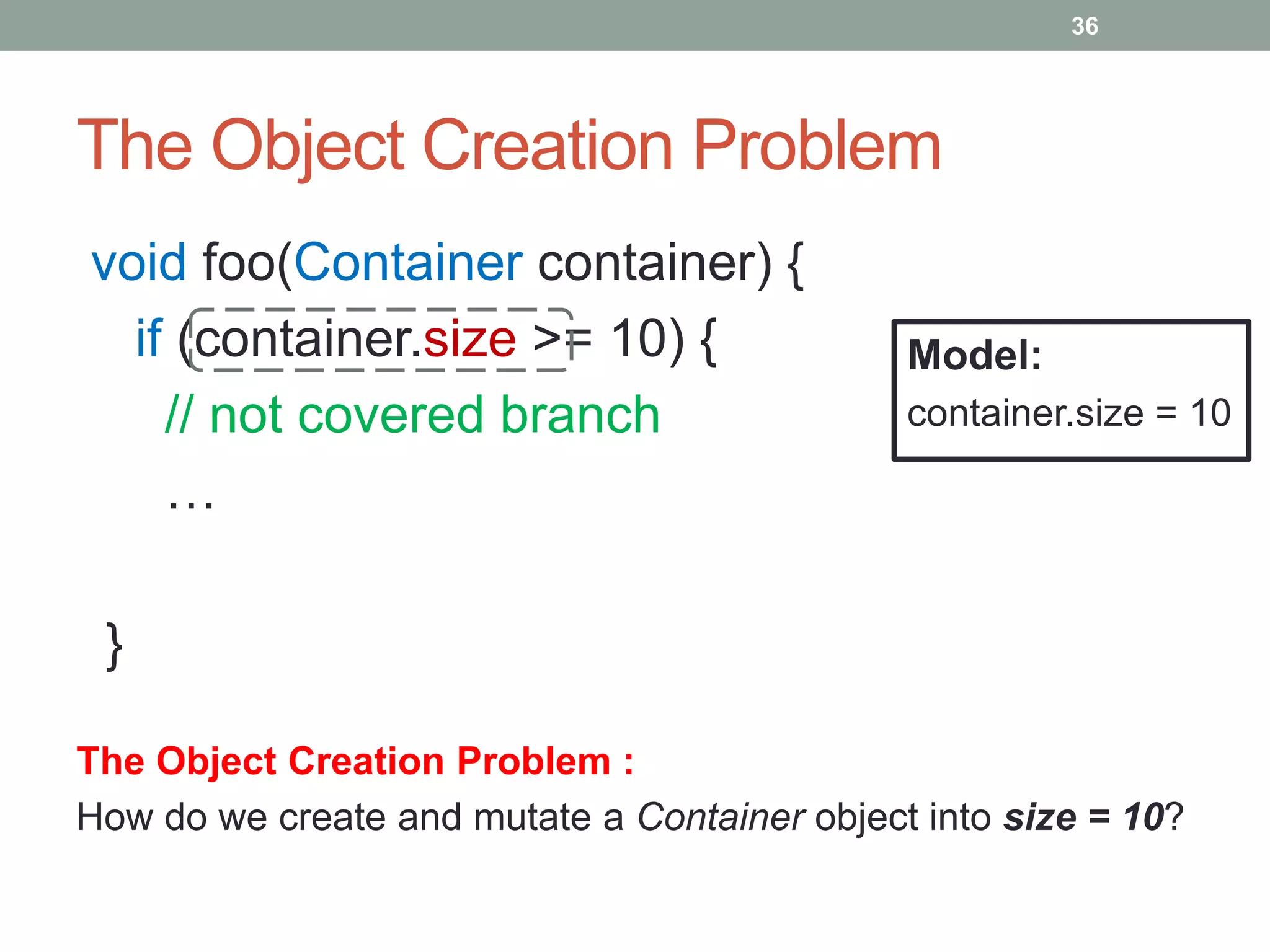

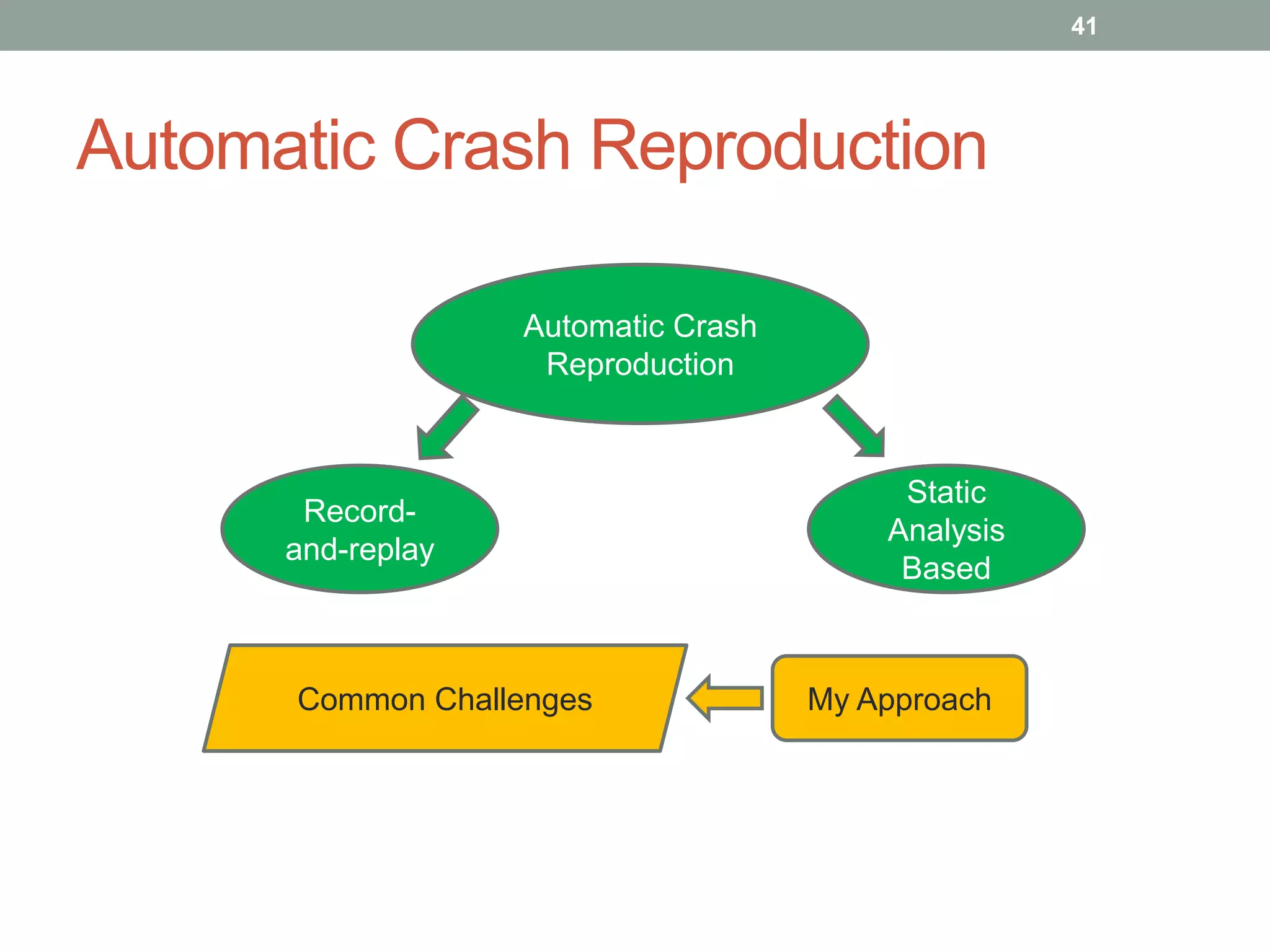

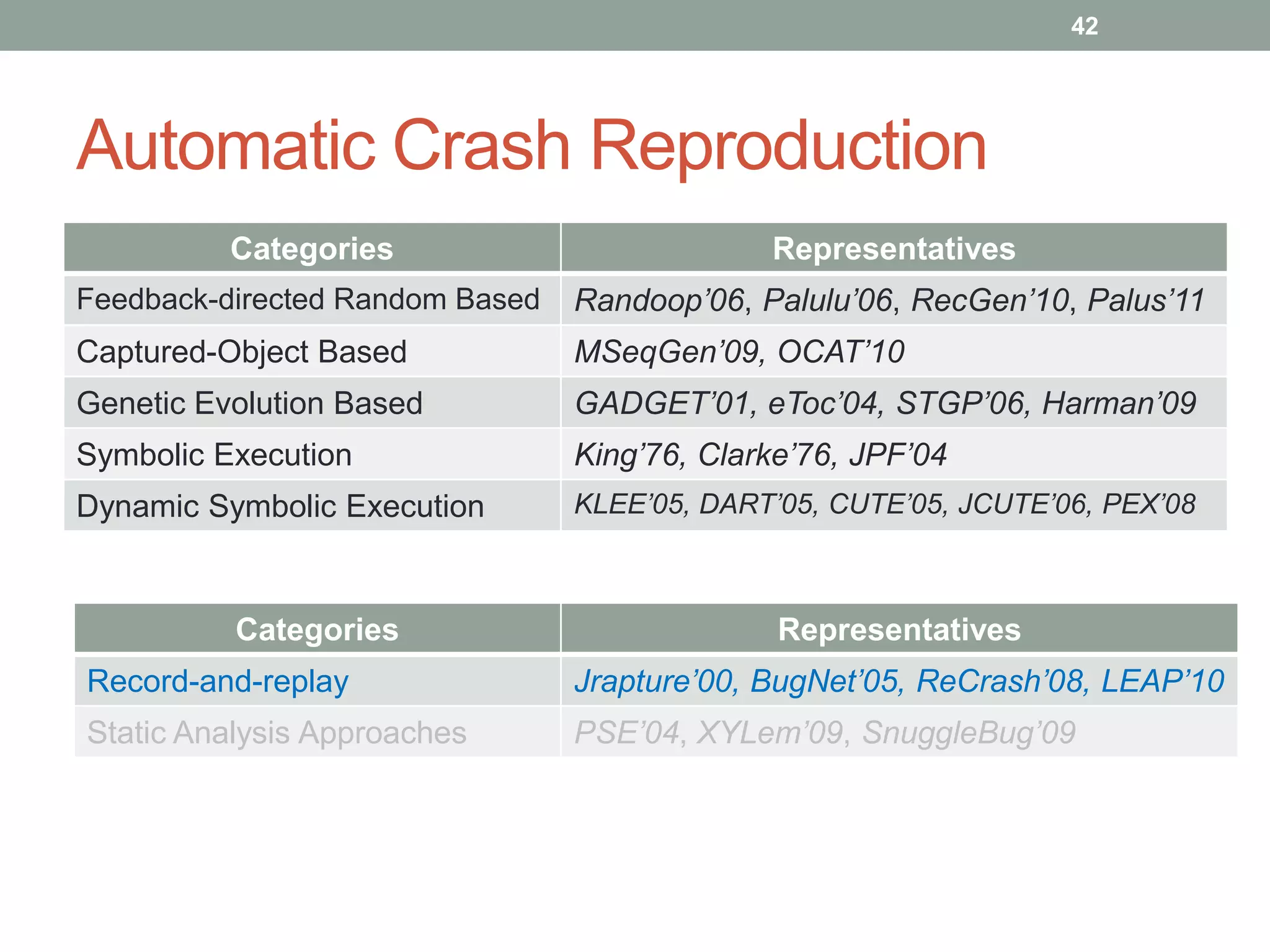

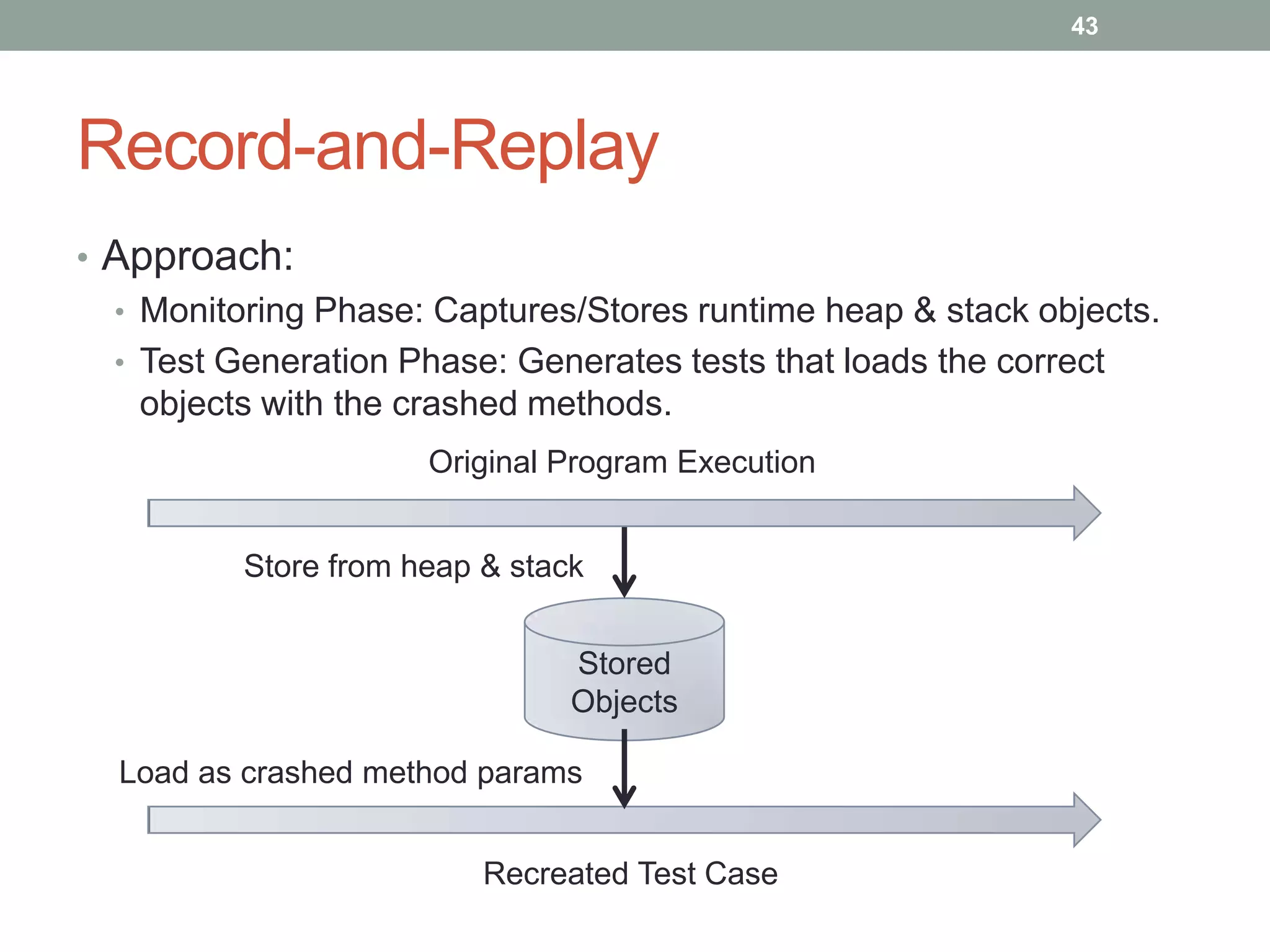

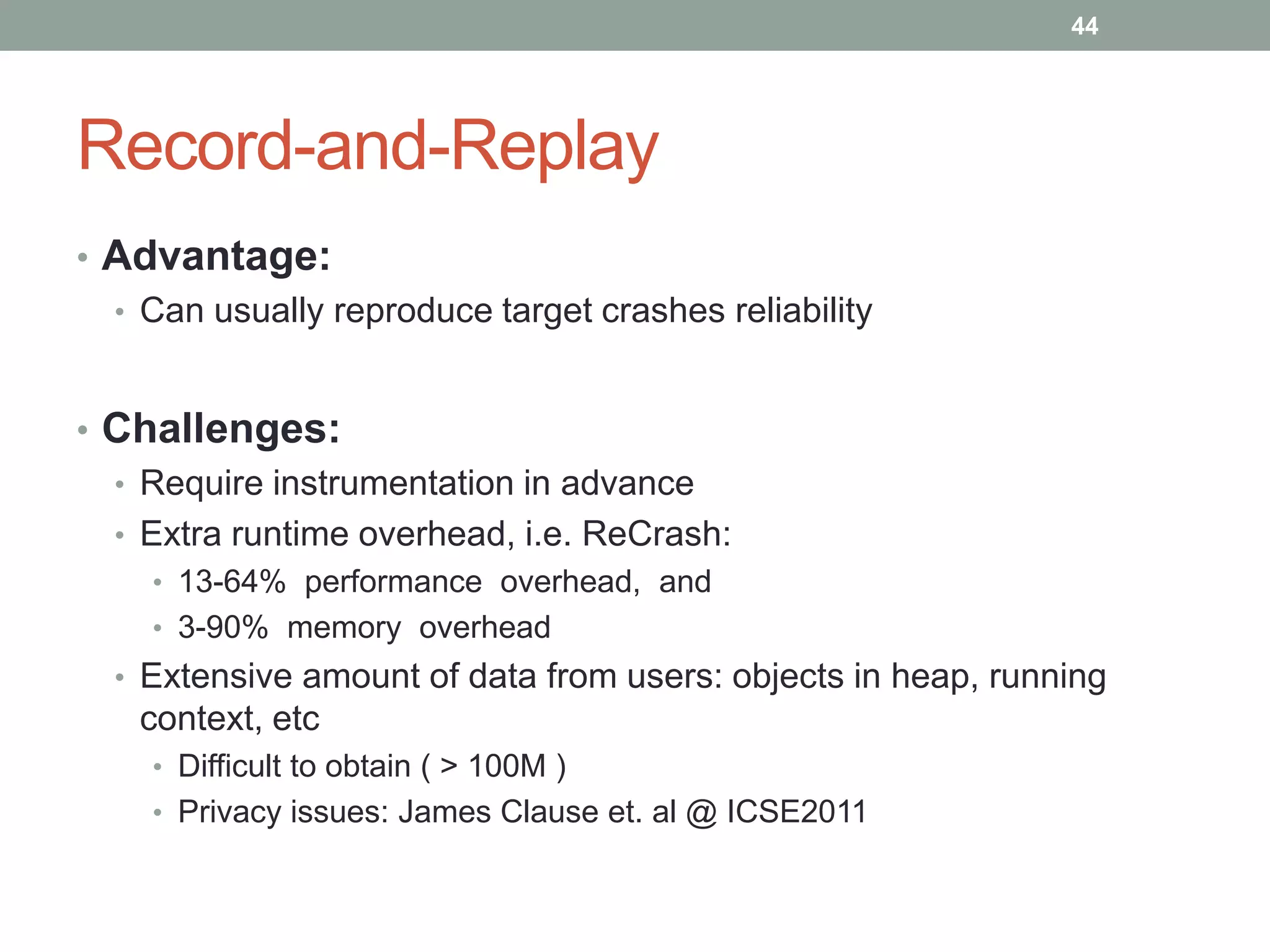

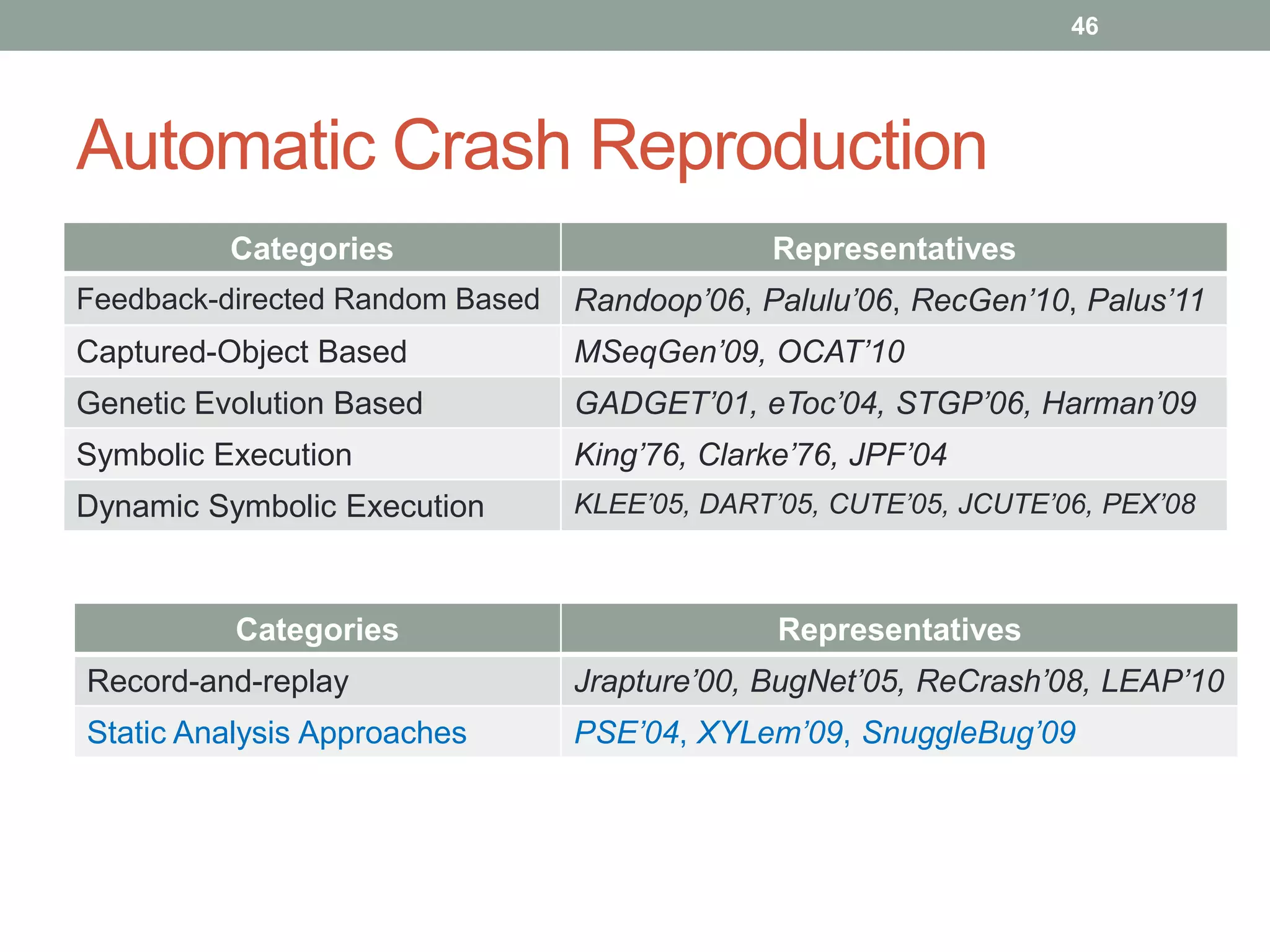

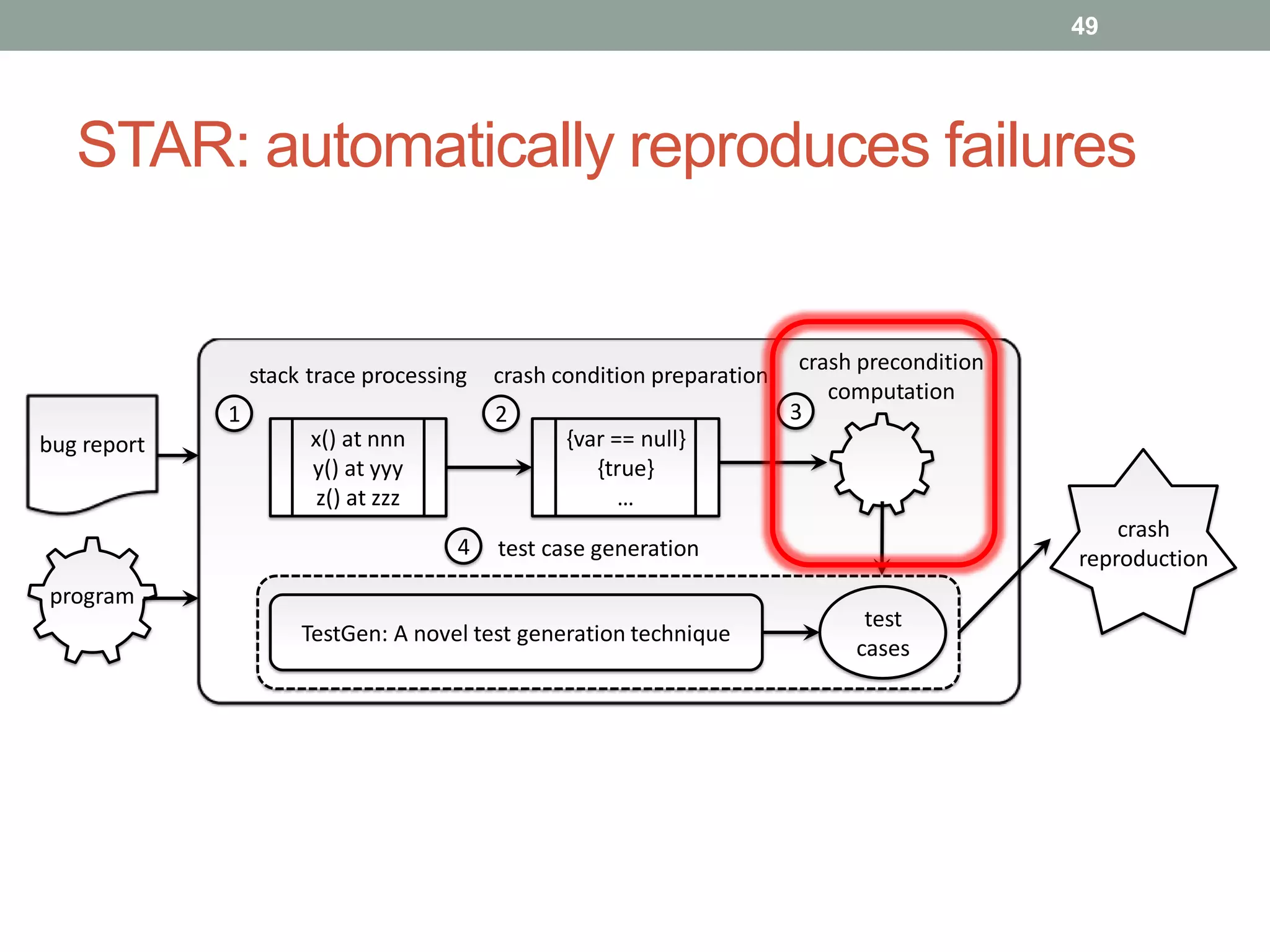

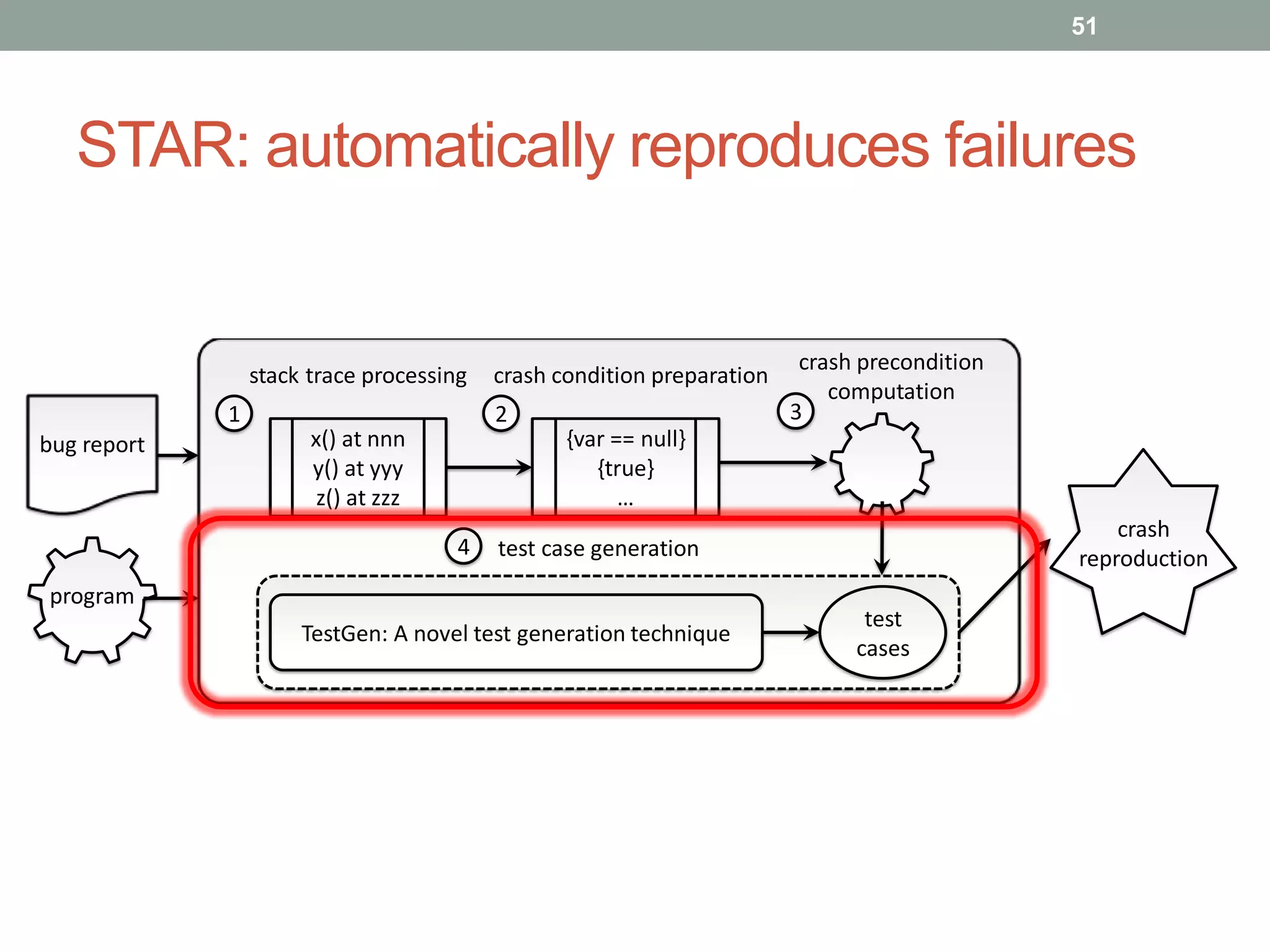

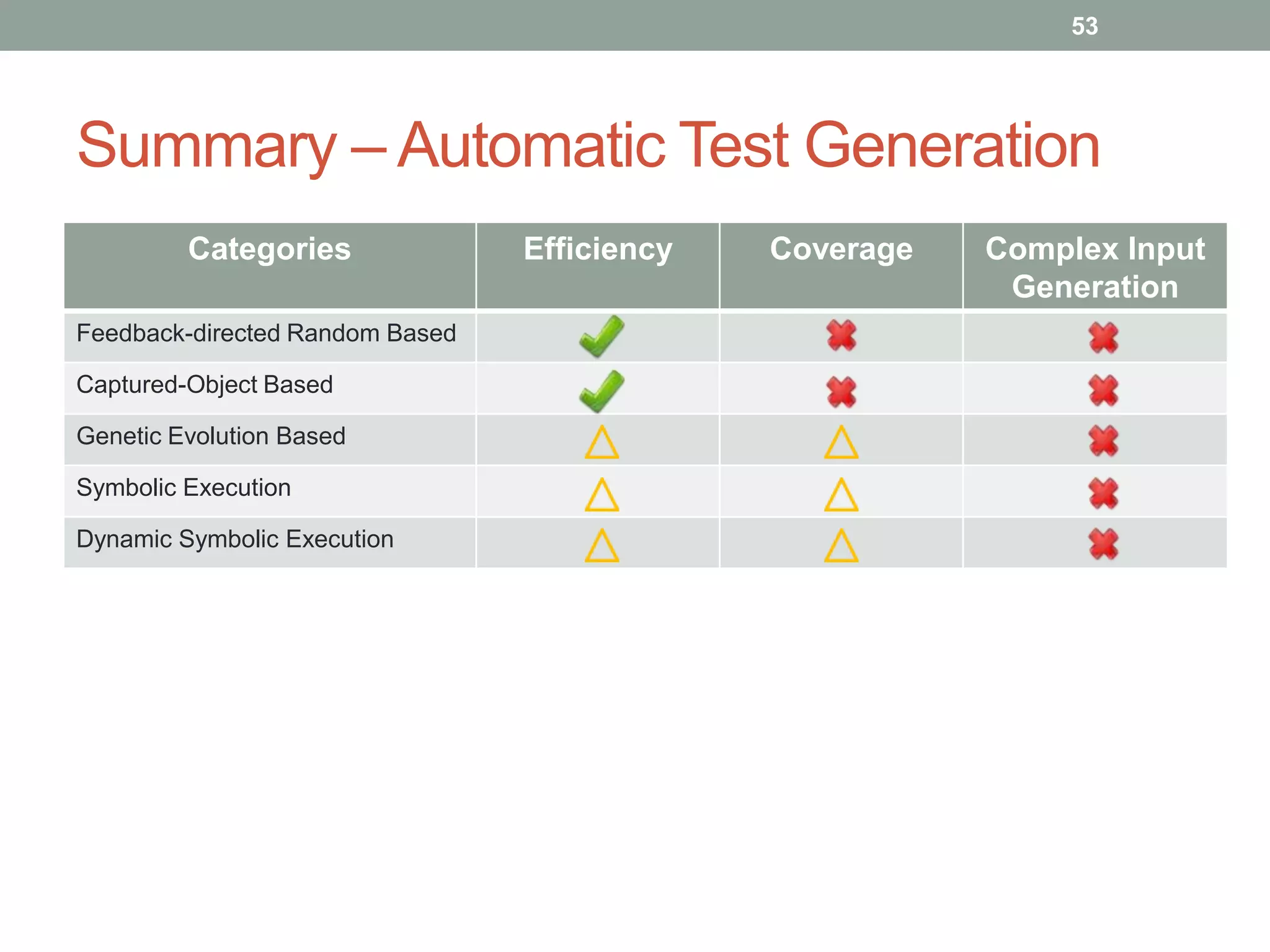

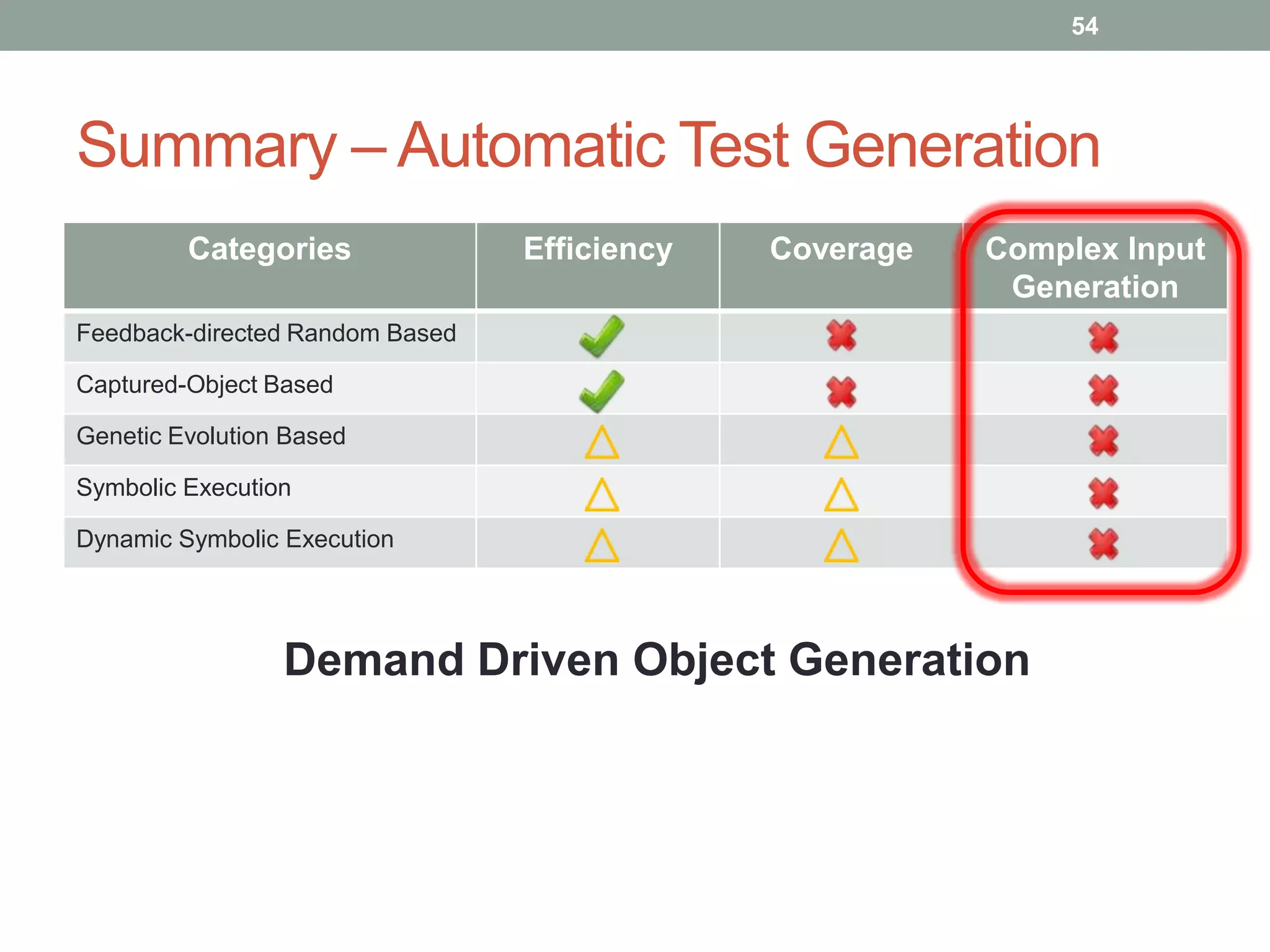

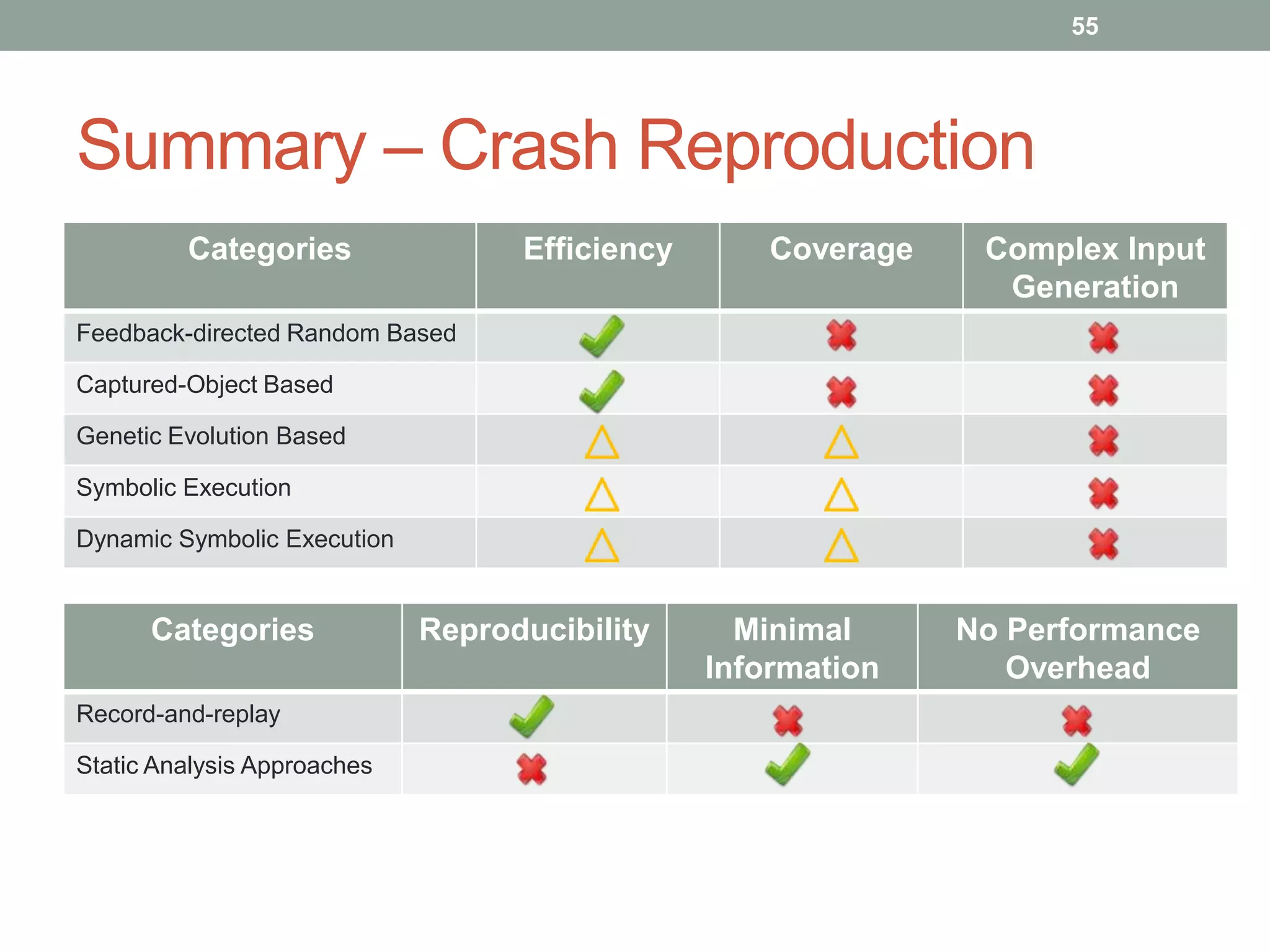

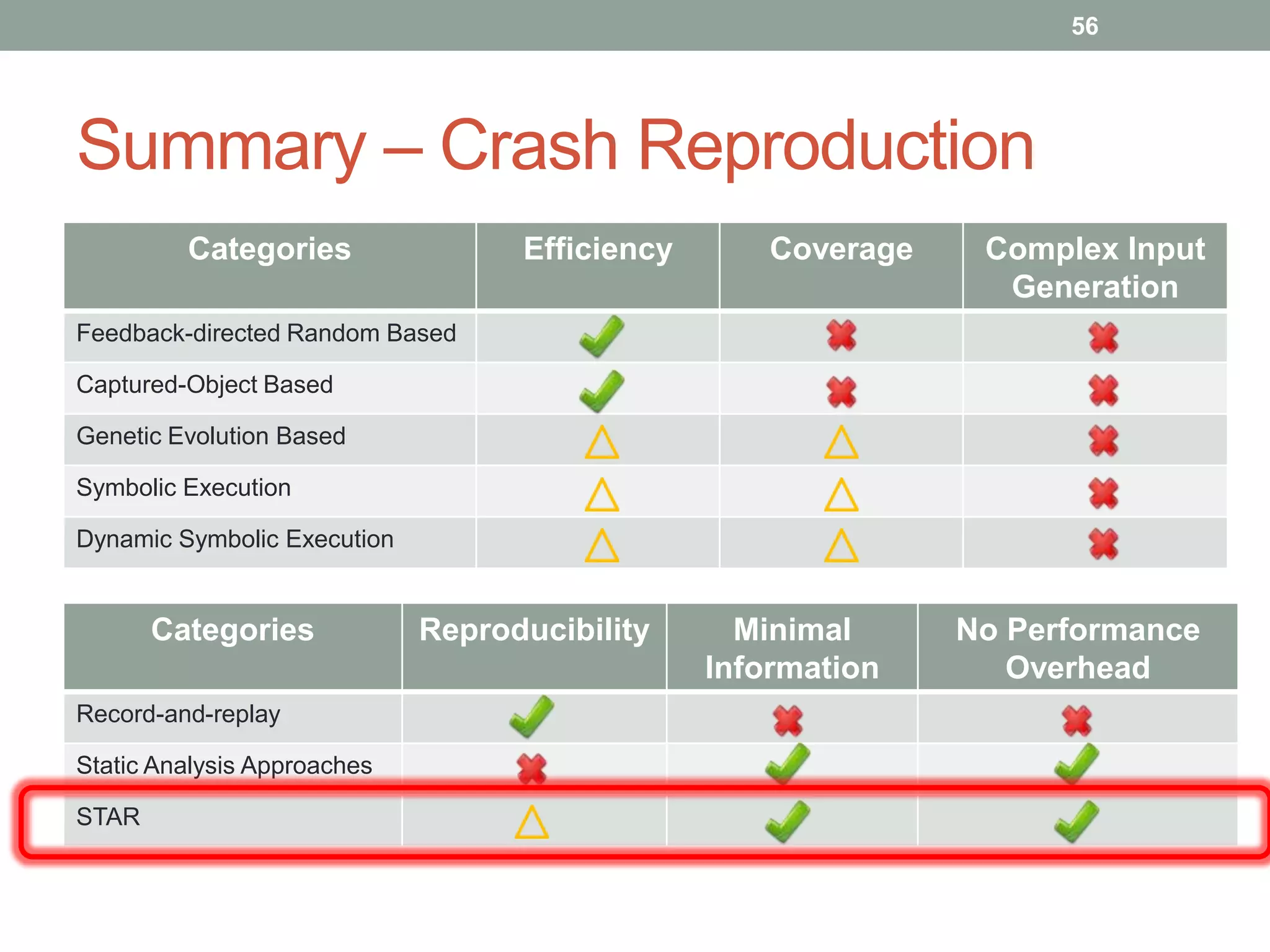

This document outlines a PhD qualifying exam on automatic test generation and crash reproduction. It surveys approaches to automatic test generation: feedback-directed random generation, captured-object-based generation, genetic-evolution-based generation, symbolic execution, and dynamic symbolic execution. It also covers approaches to automatic crash reproduction, including record-and-replay and static-analysis-based techniques. Finally, it identifies challenges common to these techniques, such as the object creation problem in automatic test generation.
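
To make the first of these approaches concrete, the following is a minimal sketch of feedback-directed random generation, not the method of any particular tool. The class under test (`BoundedStack`), the helper names (`run`, `generate`), and the feedback rule are all assumptions chosen for illustration. The generator extends previously valid call sequences with one random call, executes the result, and uses the outcome as feedback: a sequence that raises is recorded and not extended further, and a sequence that reaches a state not seen before joins the pool.

```python
import random


class BoundedStack:
    """Toy class under test (hypothetical, not taken from the source)."""

    def __init__(self, capacity):
        if capacity <= 0:
            raise ValueError("capacity must be positive")
        self.capacity = capacity
        self.items = []

    def push(self, x):
        if len(self.items) >= self.capacity:
            raise OverflowError("stack full")
        self.items.append(x)

    def pop(self):
        return self.items.pop()  # raises IndexError when the stack is empty


def run(sequence):
    """Replay a call sequence on a fresh object.

    Returns (object, exception), where exception is None if every call succeeded.
    """
    stack = BoundedStack(2)
    for name, args in sequence:
        try:
            getattr(stack, name)(*args)
        except Exception as exc:
            return stack, exc
    return stack, None


def generate(budget, seed=0):
    """Build call sequences incrementally, guided by execution feedback."""
    rng = random.Random(seed)
    pool = [[]]          # valid sequences that may still be extended
    failing = []         # (sequence, exception name) pairs; never extended
    seen_states = {()}   # object states already reached (the empty stack)
    for _ in range(budget):
        base = rng.choice(pool)
        call = rng.choice([("push", (rng.randint(0, 9),)), ("pop", ())])
        candidate = base + [call]
        stack, exc = run(candidate)
        if exc is not None:
            # Feedback 1: the new call raised, so do not build on this sequence.
            failing.append((candidate, type(exc).__name__))
            continue
        state = tuple(stack.items)
        if state not in seen_states:
            # Feedback 2: only sequences reaching a new state are worth extending.
            seen_states.add(state)
            pool.append(candidate)
    return pool, failing


if __name__ == "__main__":
    pool, failing = generate(budget=200)
    print(f"{len(pool)} reusable sequences, {len(failing)} failing sequences")
```

Here every object can be built from a single integer, so the generator never struggles to construct inputs. The object creation problem mentioned above arises when a method needs an argument in a specific state that random sequences of constructor and method calls are unlikely to produce.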