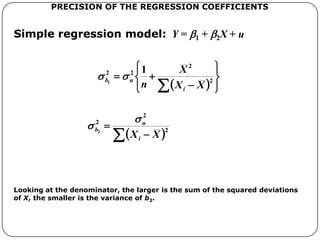

1. The document discusses the simple linear regression model and how to derive the regression coefficients using the least squares method.

2. It uses a numerical example to show how to calculate the regression coefficients b1 and b2 by minimizing the sum of squared residuals.

3. The general method is then described for a model with n observations, where the regression coefficients b1 and b2 are the values that minimize the total sum of squared residuals.