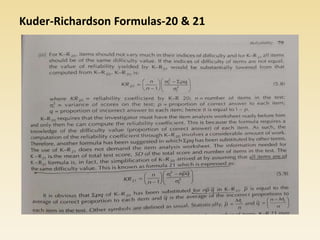

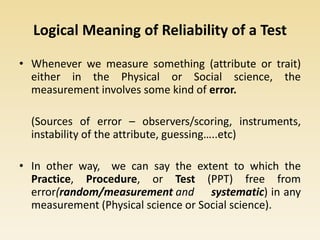

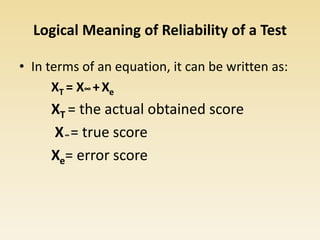

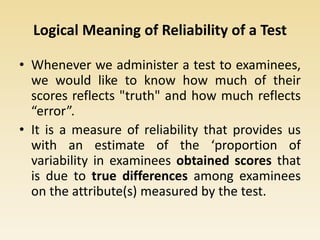

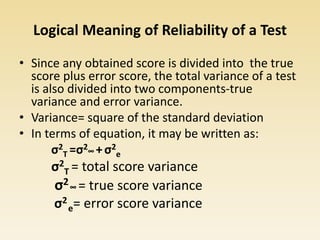

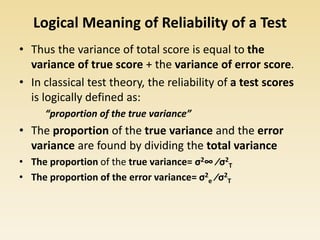

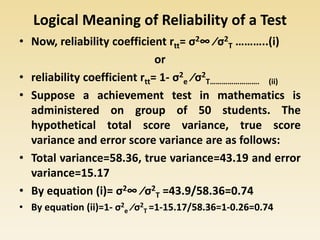

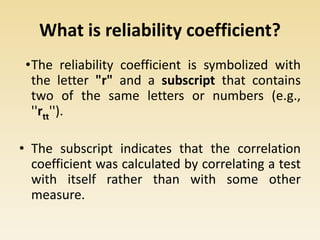

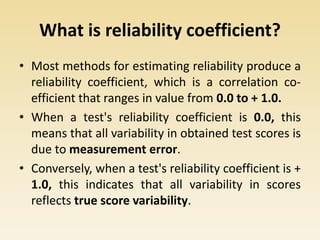

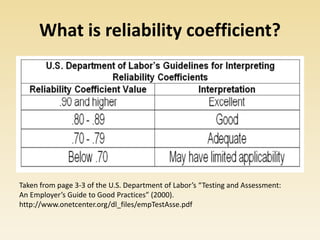

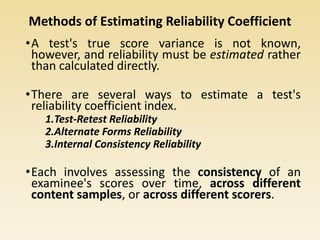

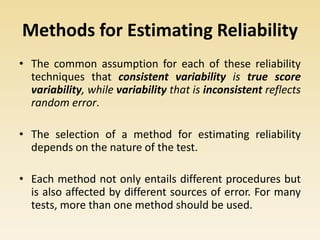

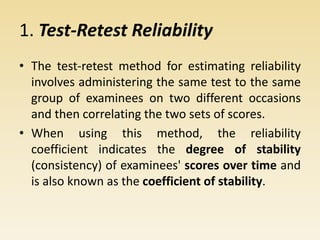

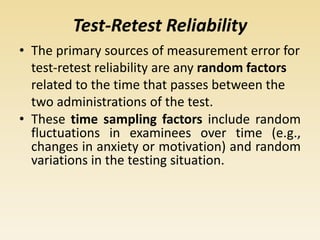

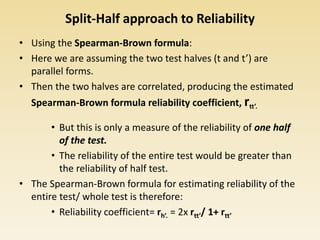

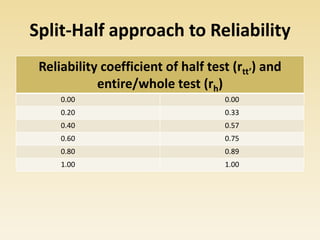

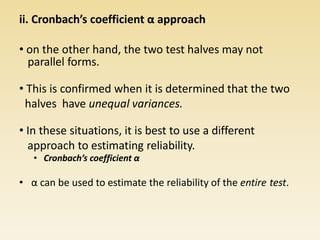

This document discusses the meaning and importance of reliability in testing. It defines reliability as the consistency or stability of test scores if the test is administered multiple times. Several methods for estimating reliability are described, including test-retest reliability, alternate forms reliability, and internal consistency estimates like split-half reliability and Cronbach's alpha. Factors that can impact reliability coefficients like test length, score range, and guessing are also covered.

![Cronbach’s coefficient α approach

Cronbach’s coefficient α= 2 [σh

2 –(σt1

2 – σt2

2 )]/σh

2

σh = variance of the entire test, h

σt1

= variance of the half test, t1

σt2

= variance of the half test, t2

• It is the case, that if the variances on both test

halves are equal, then the Spearman-Brown

formula and Cronbach’s α will produce

identical results.](https://image.slidesharecdn.com/reliabilityoftest-200504145818/85/Reliability-of-test-31-320.jpg)