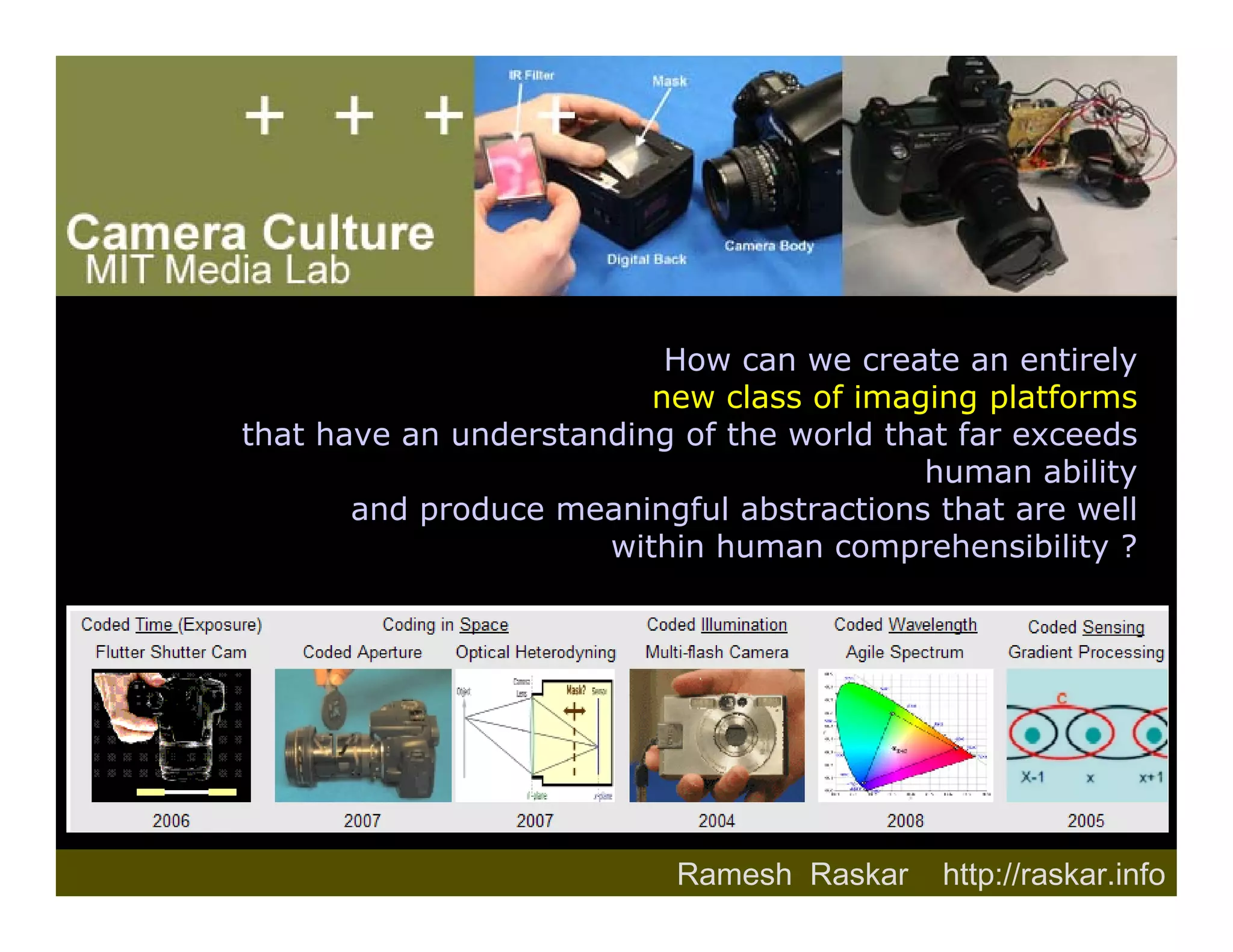

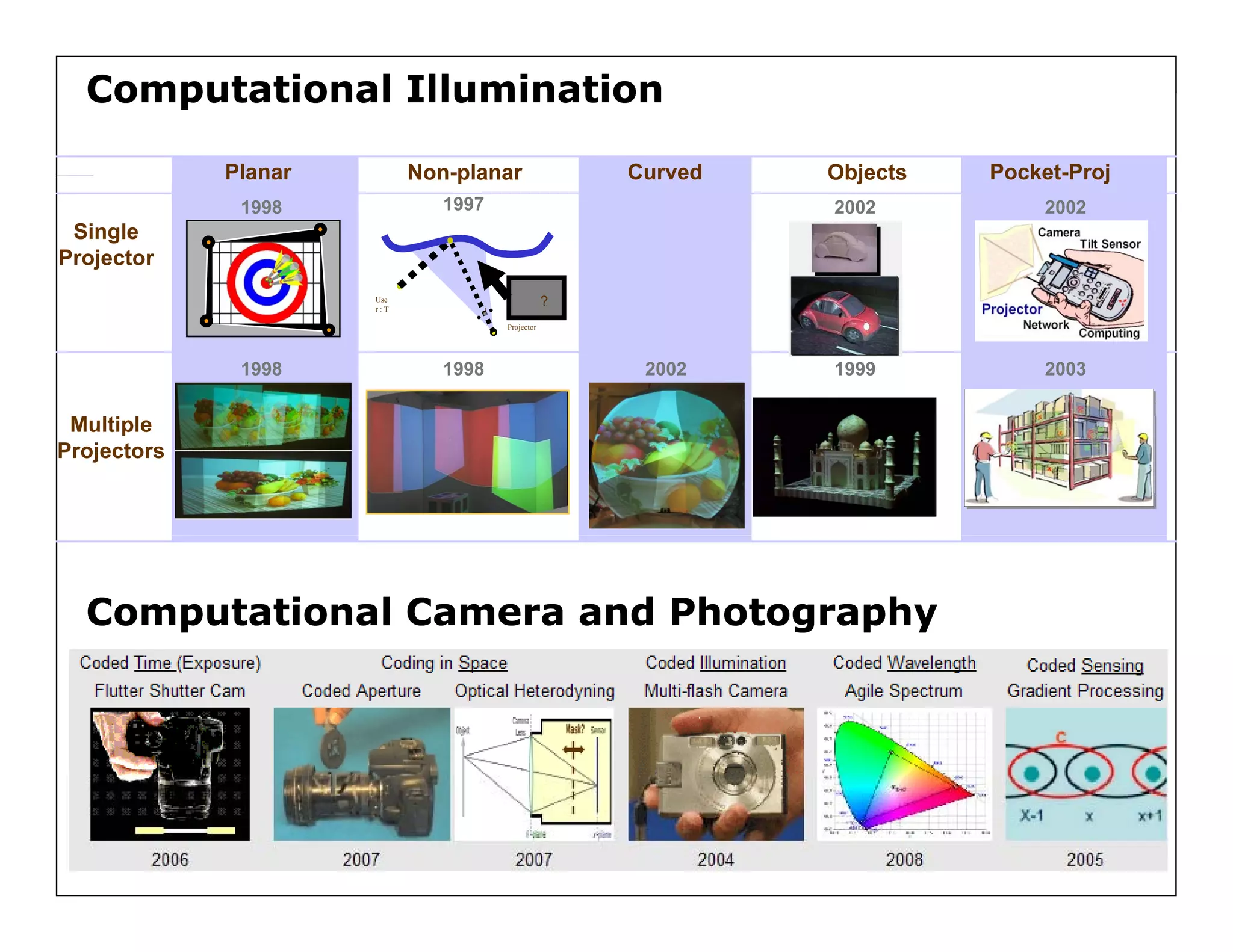

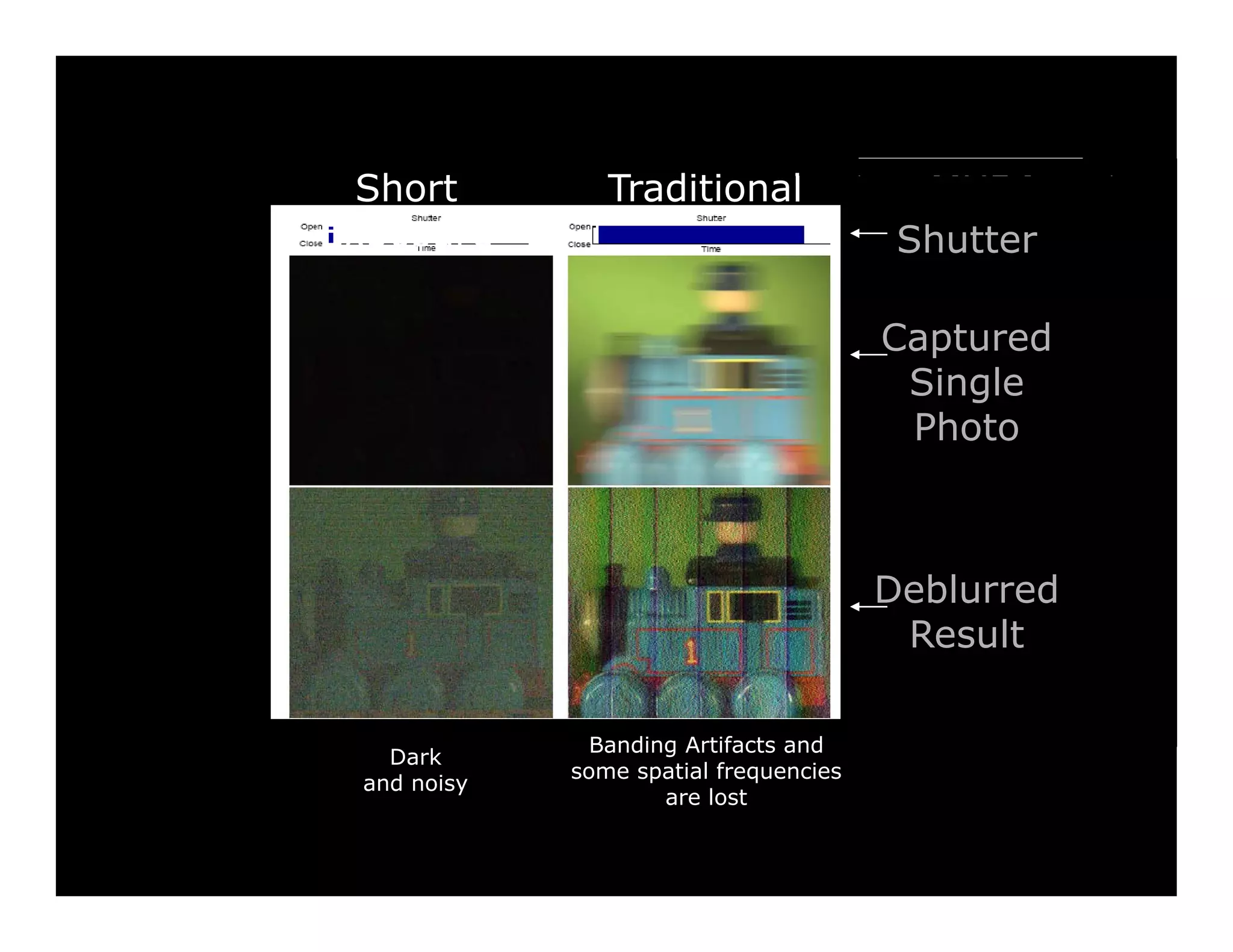

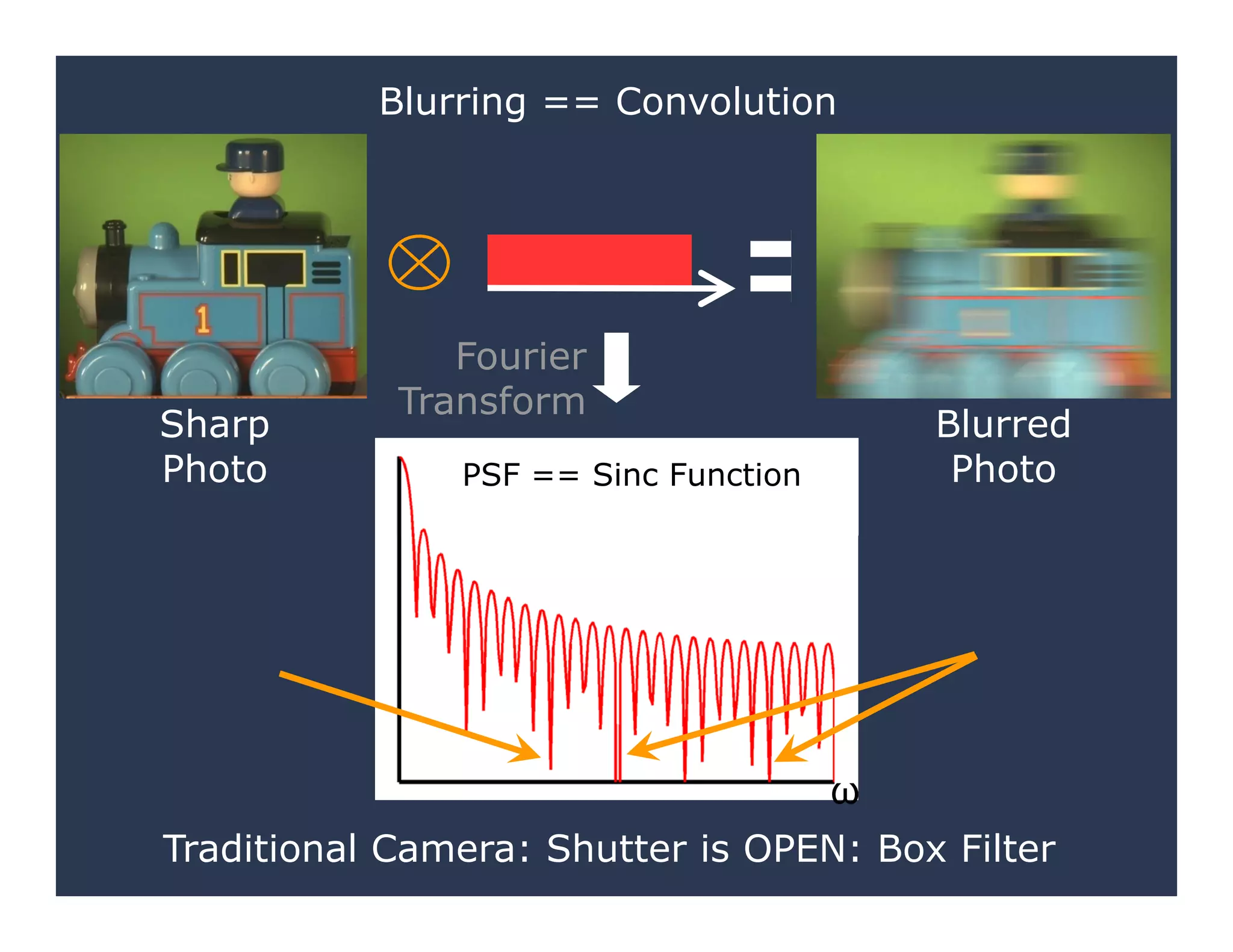

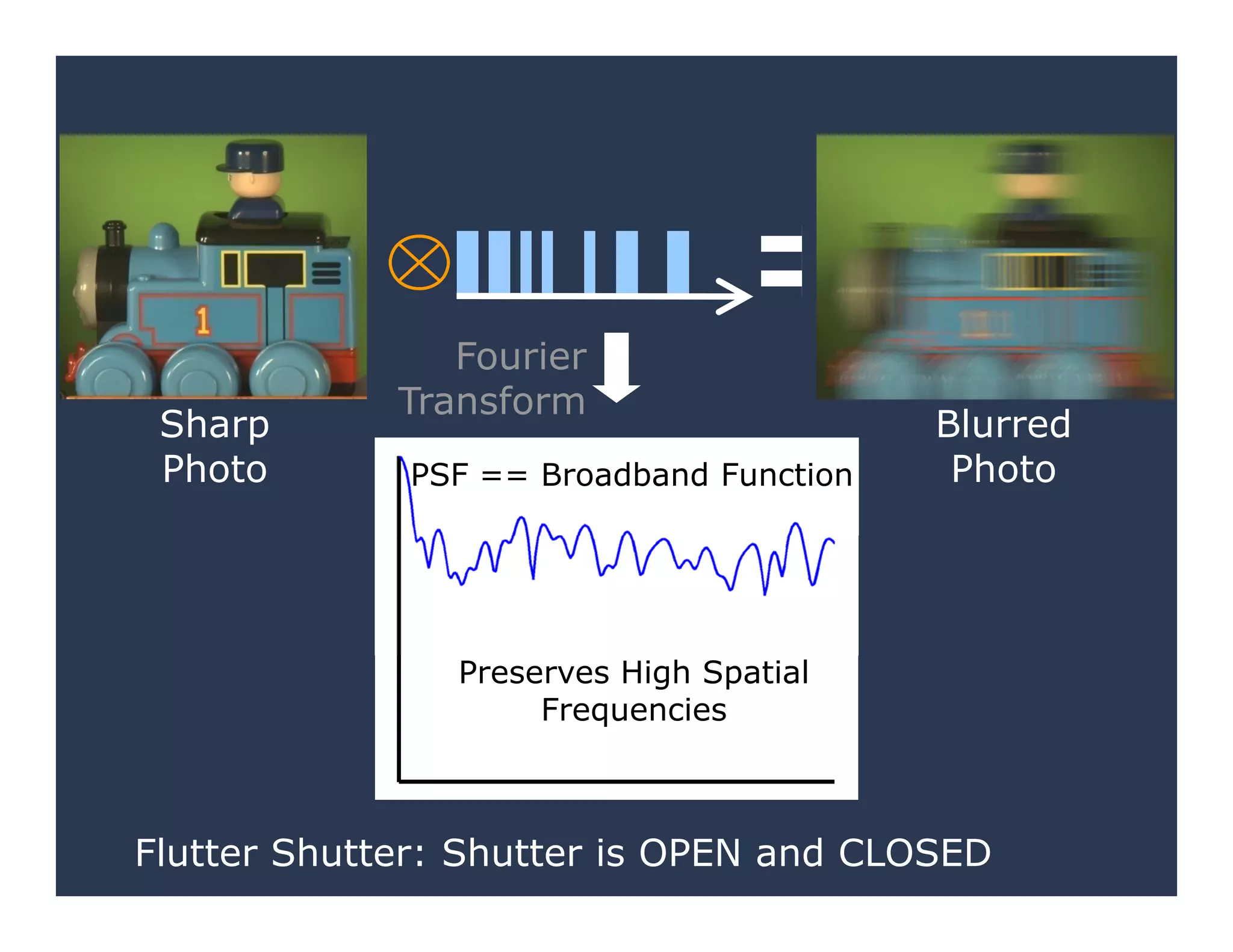

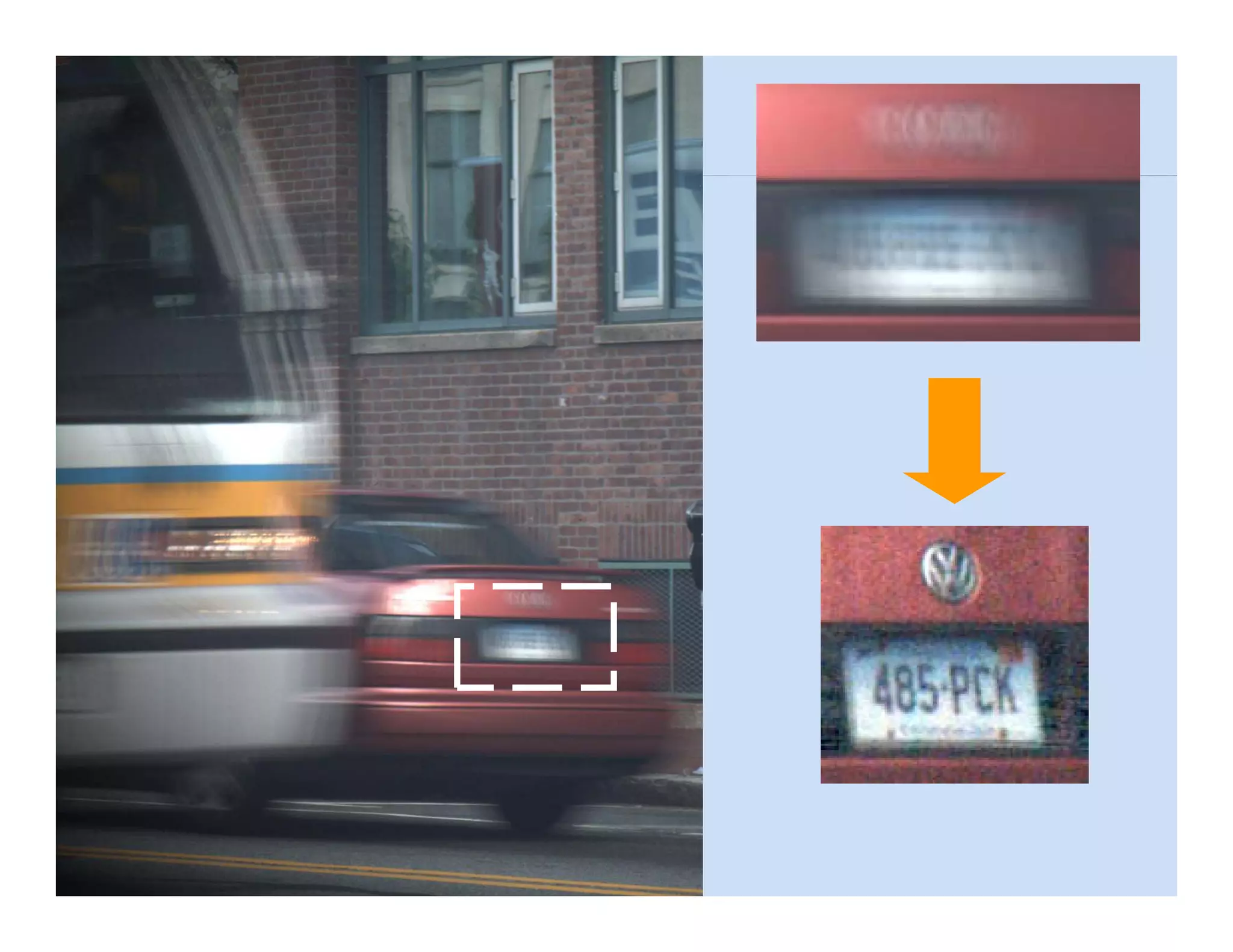

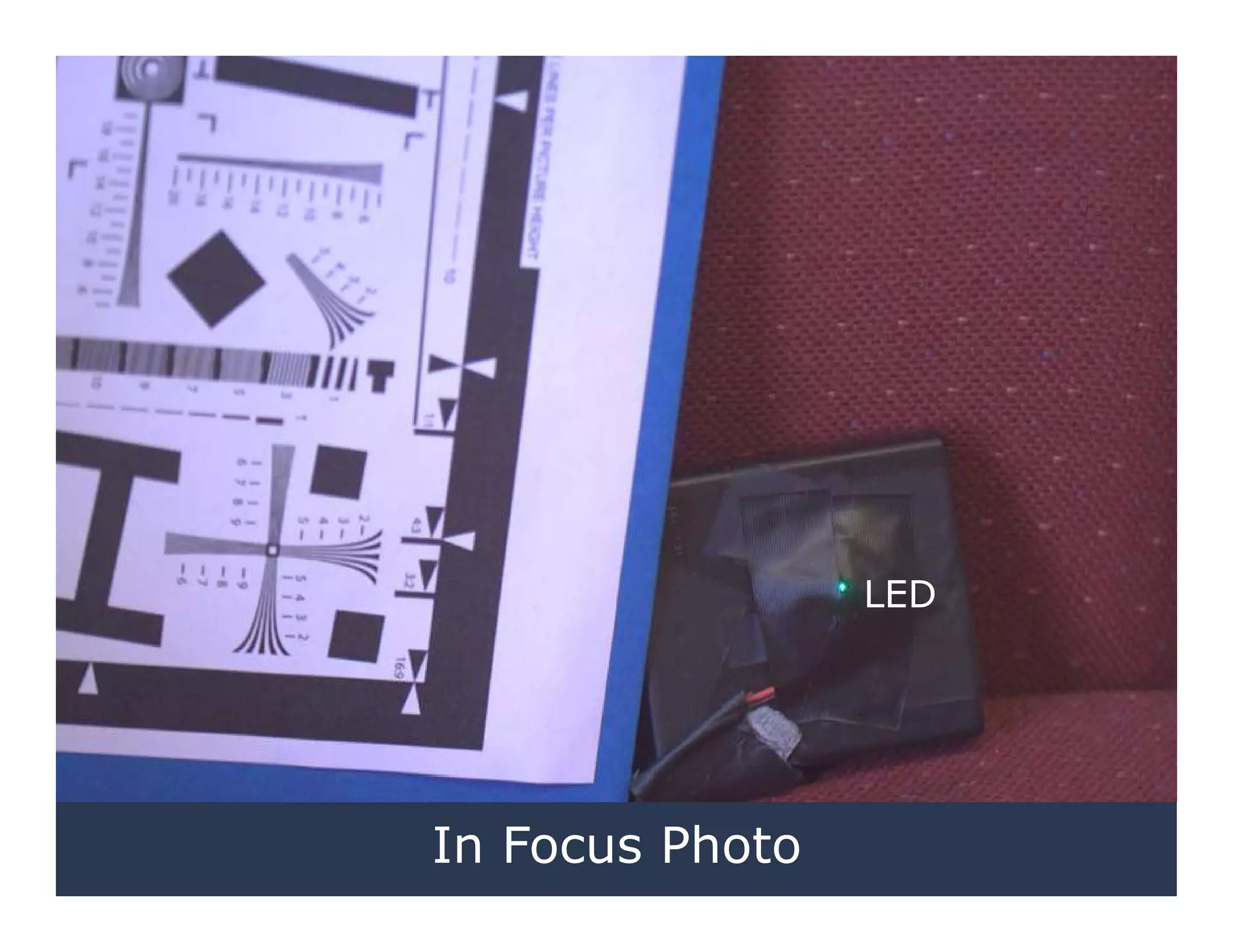

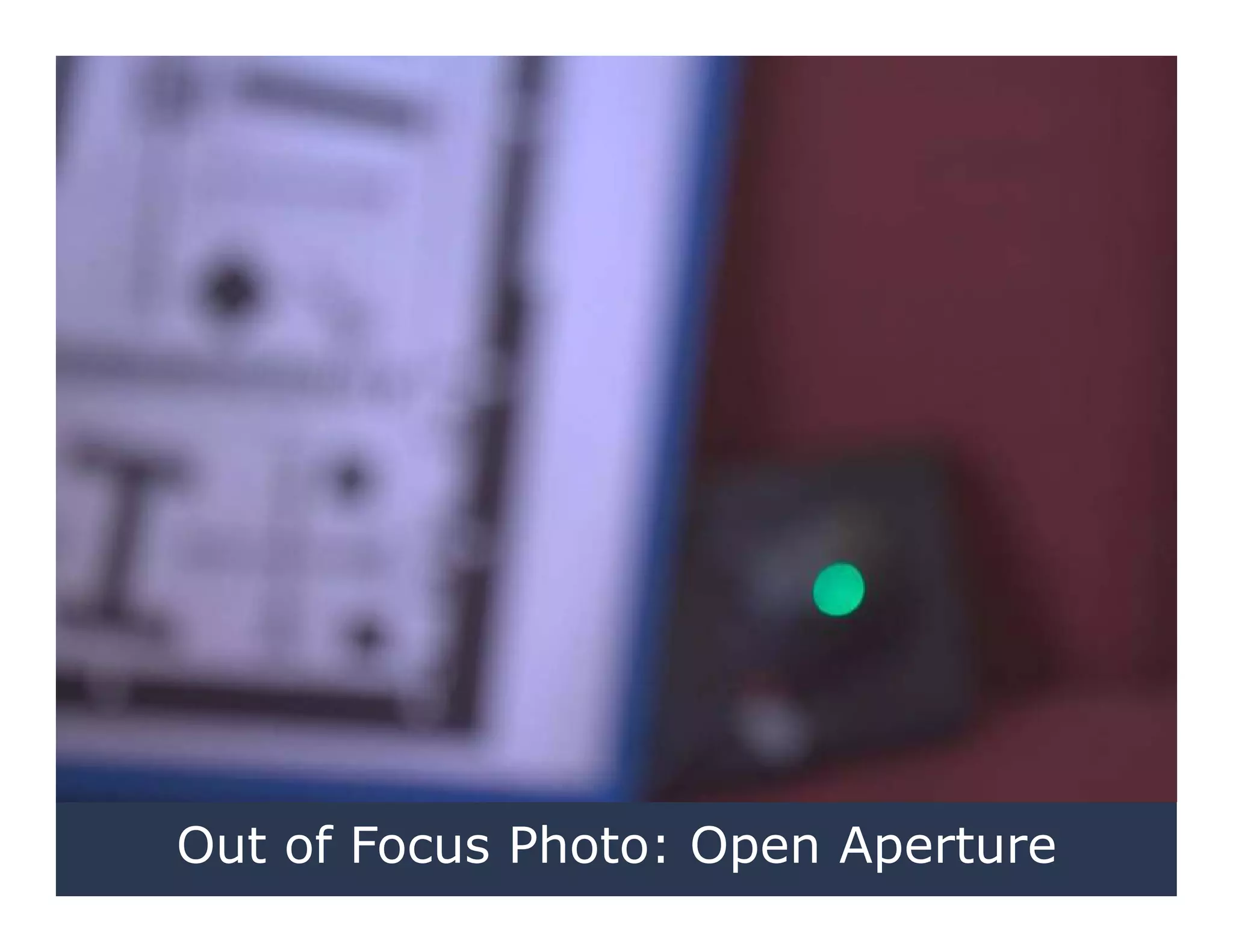

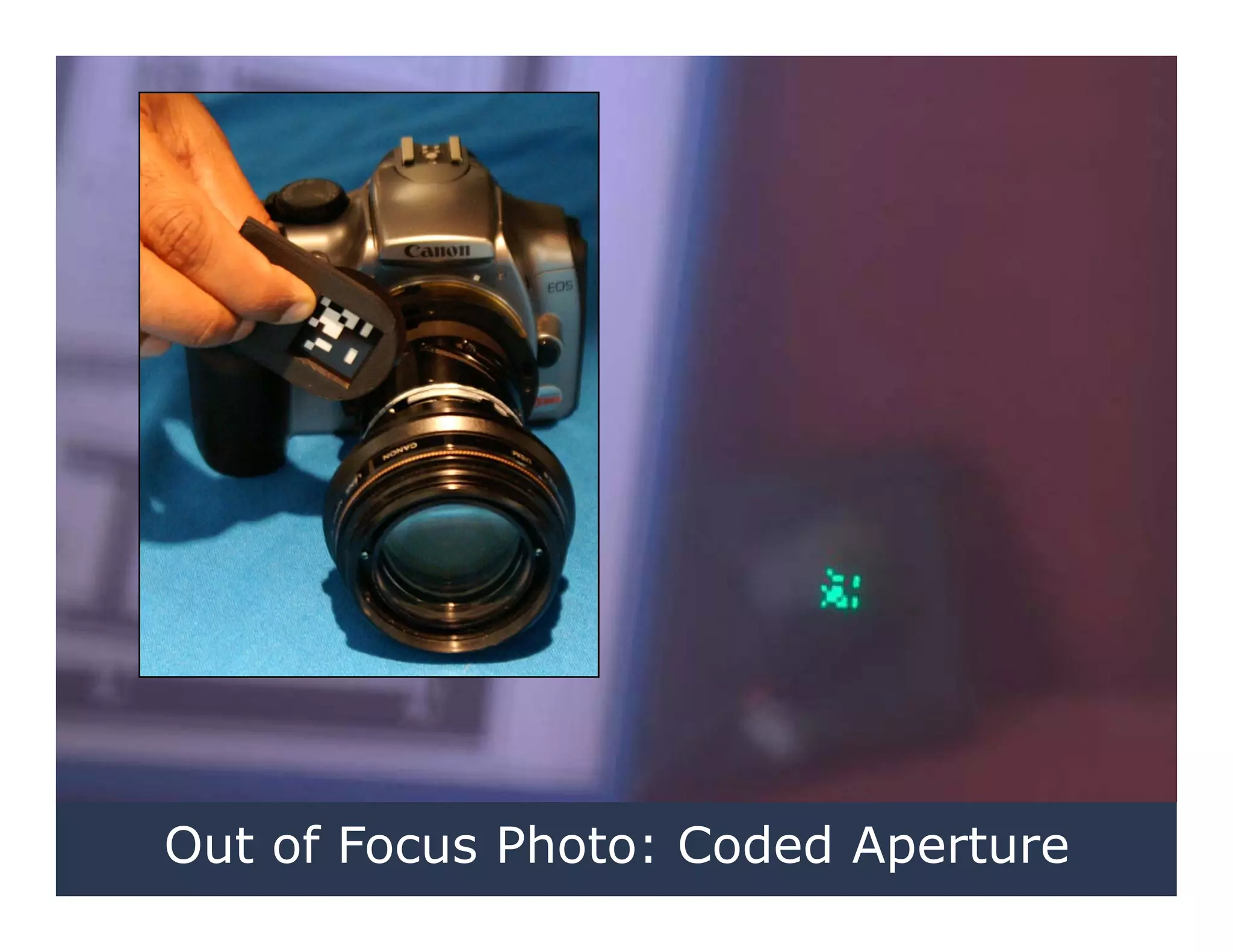

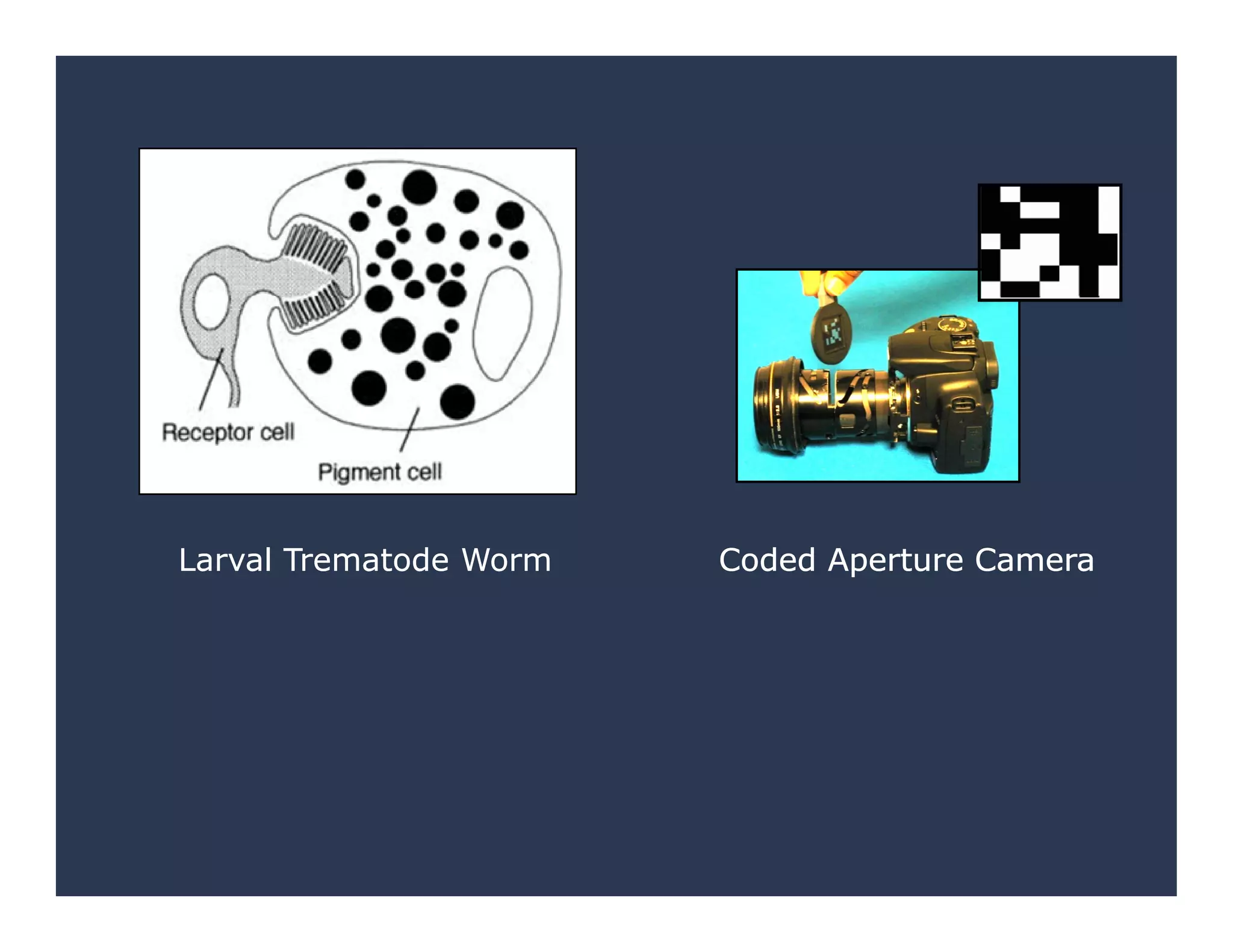

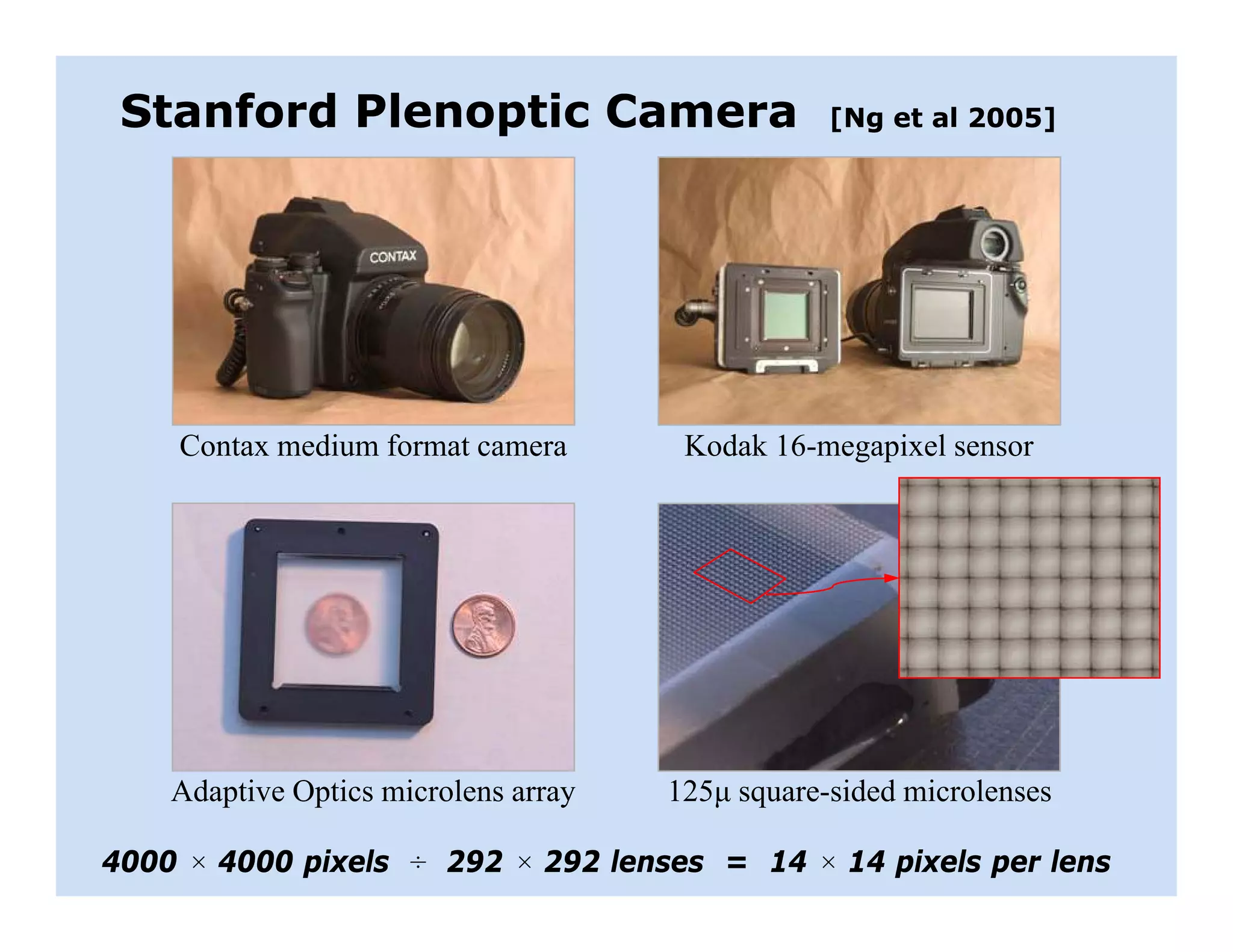

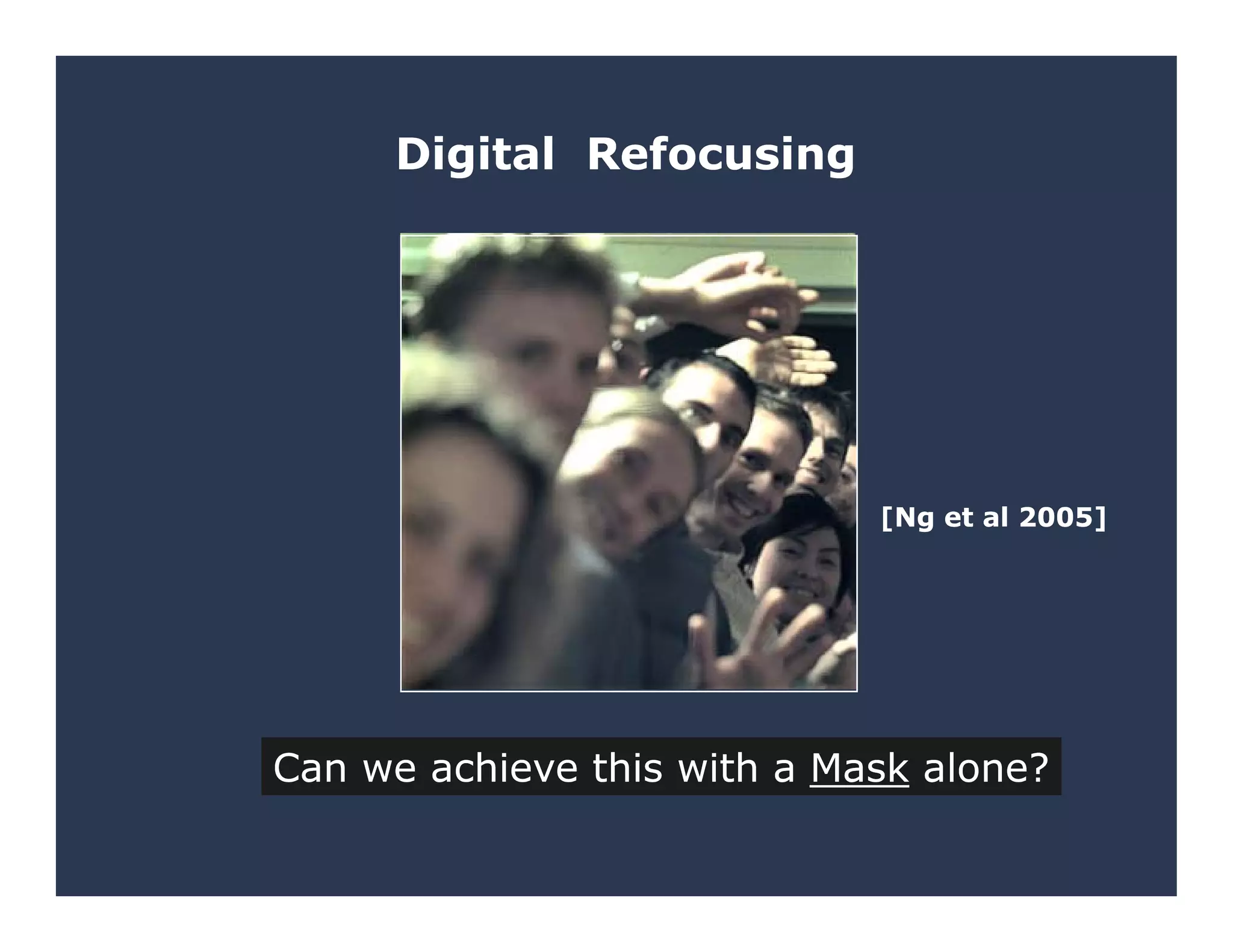

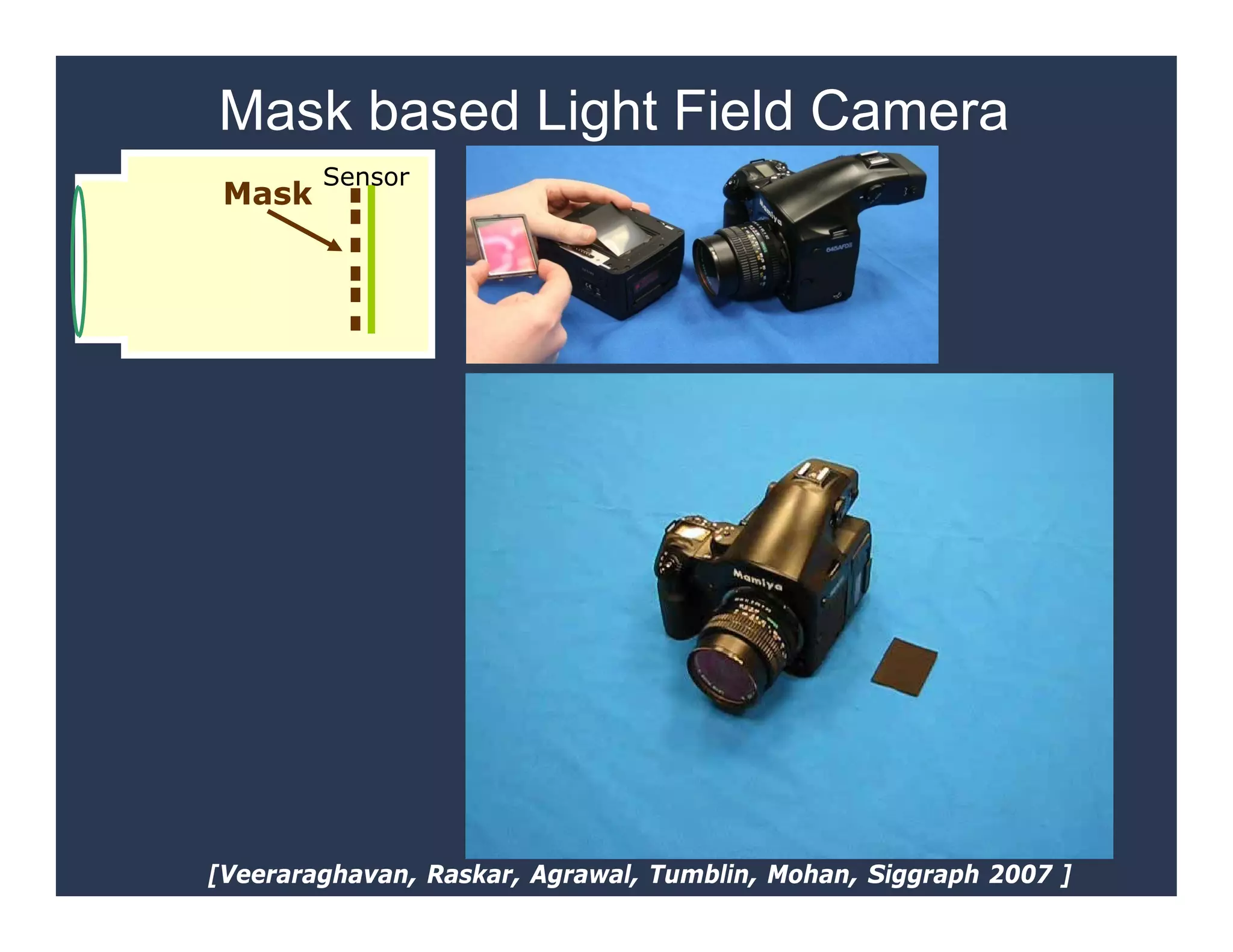

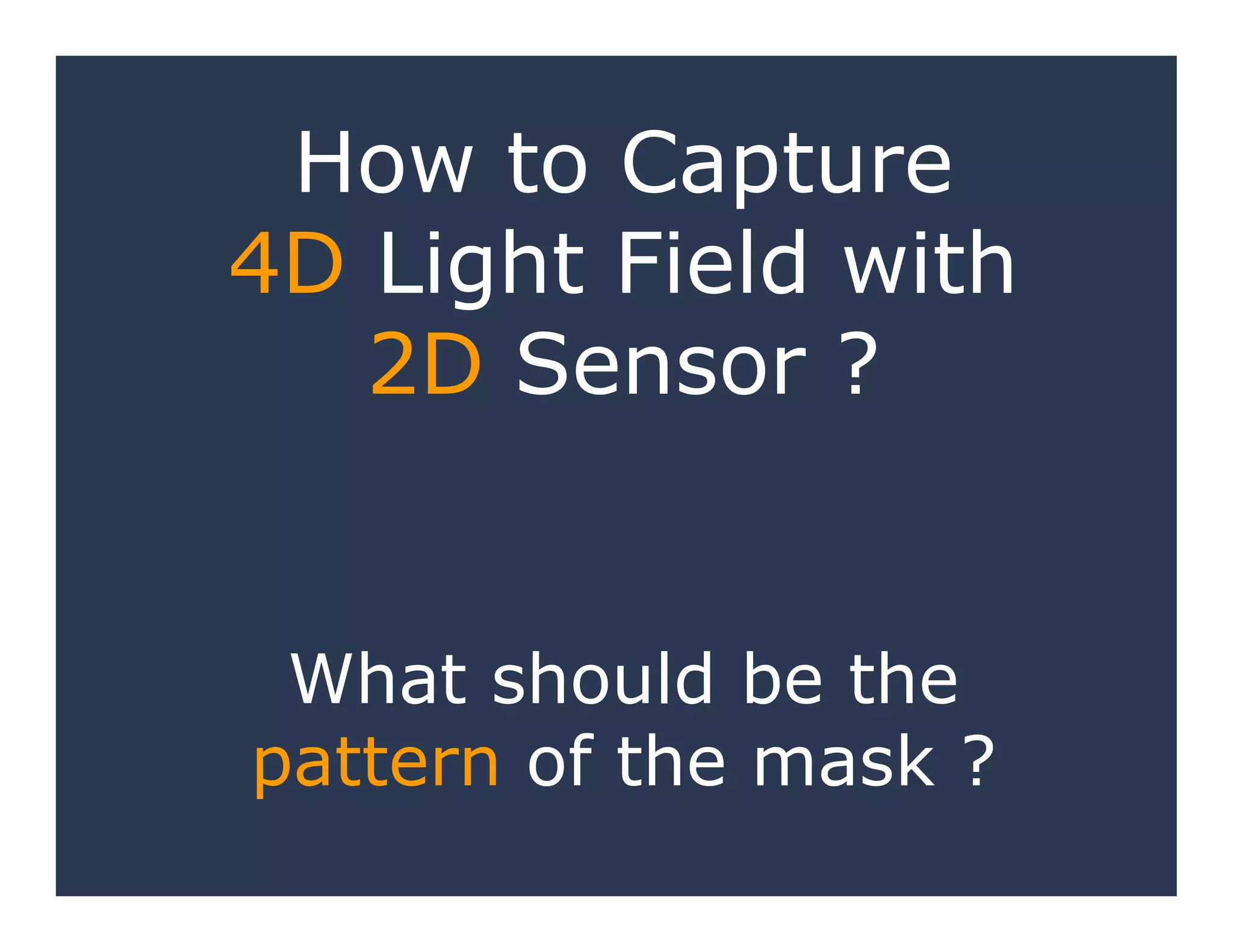

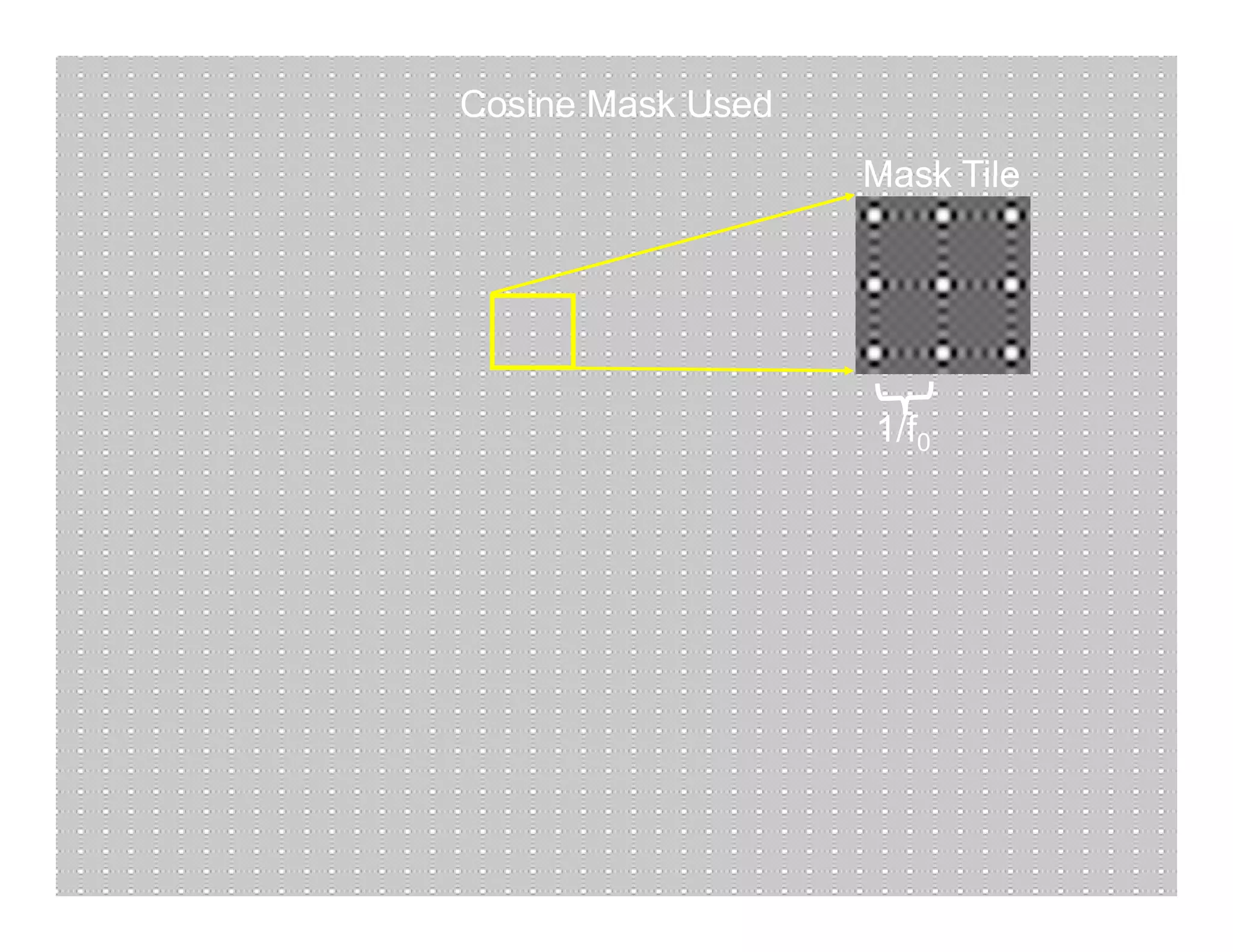

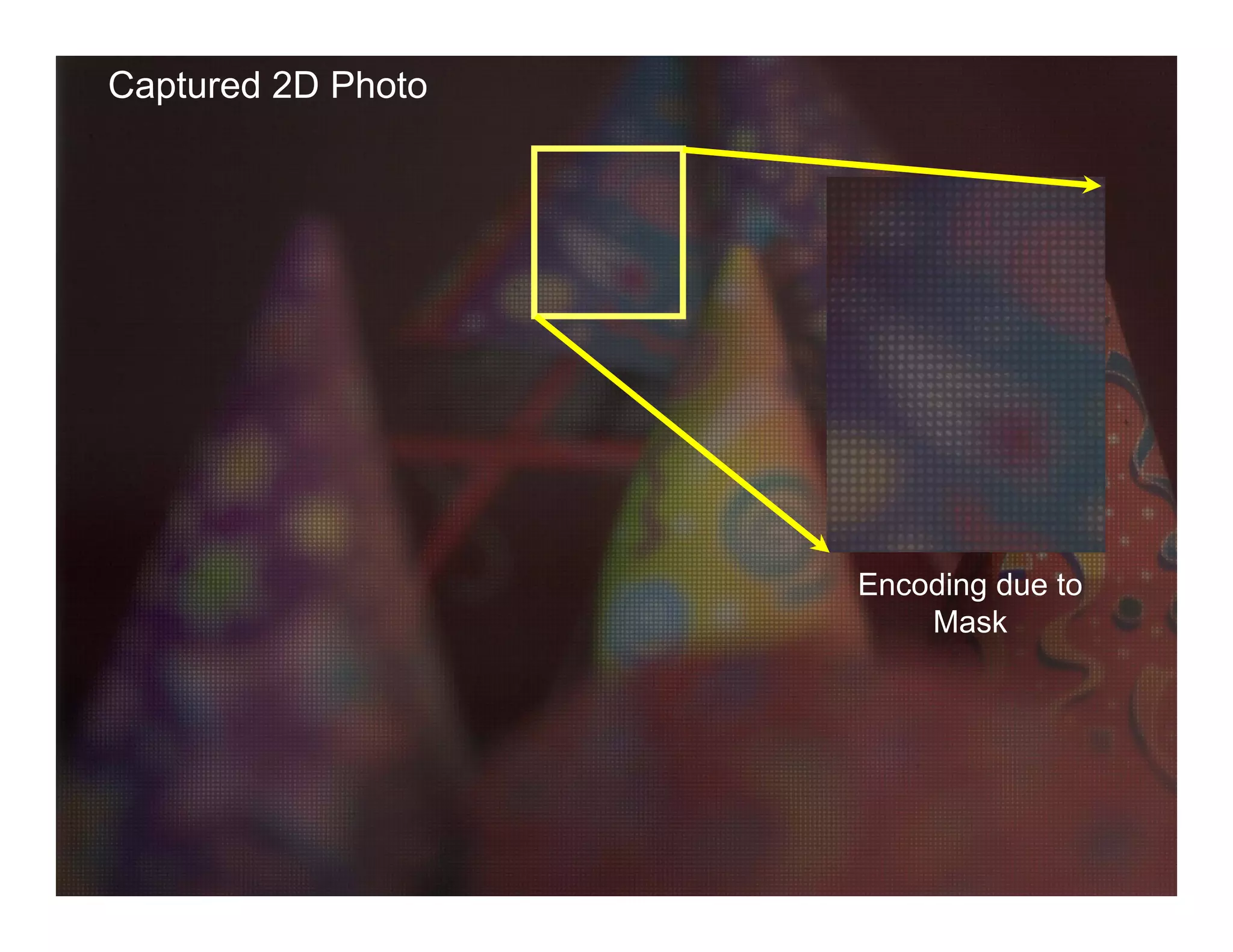

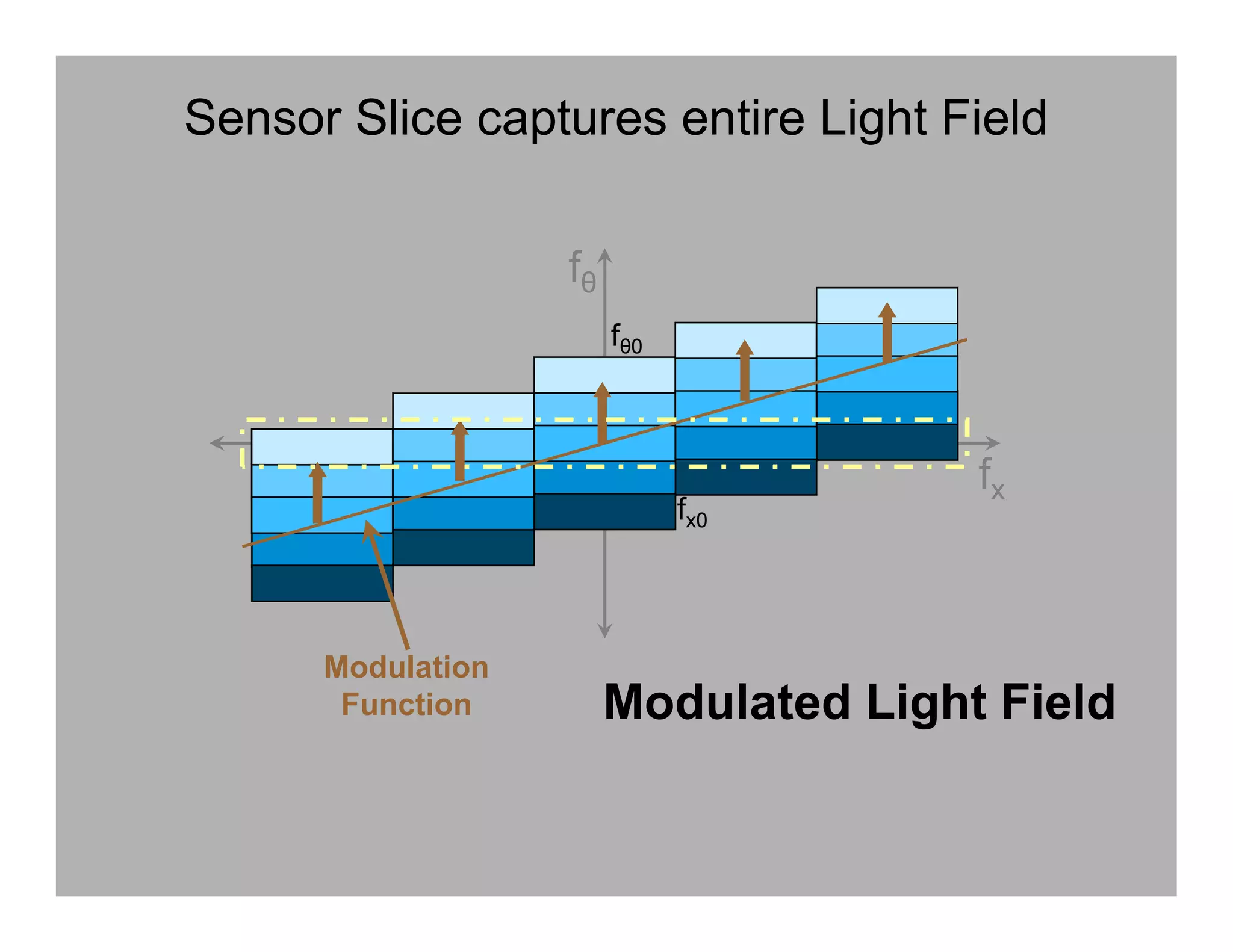

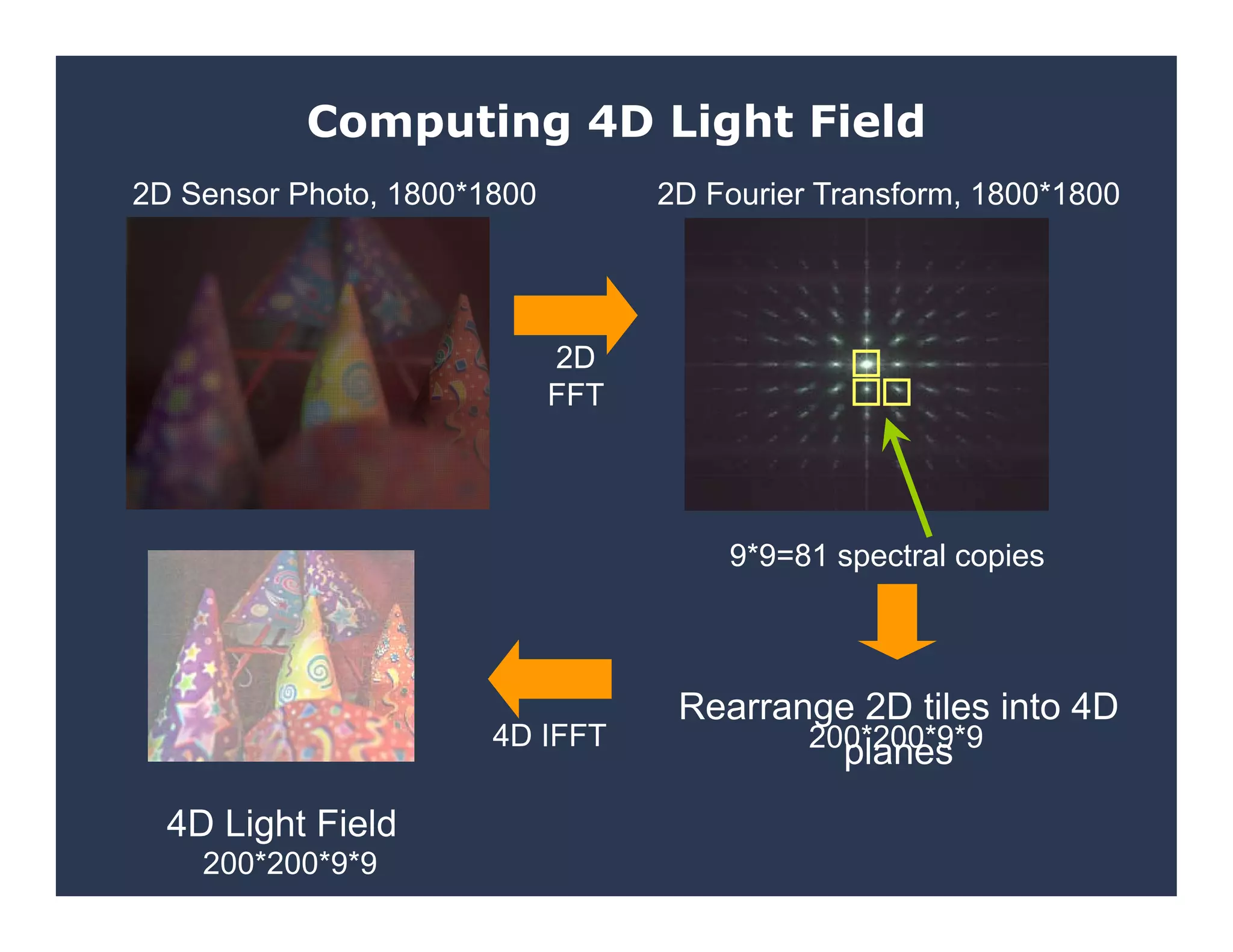

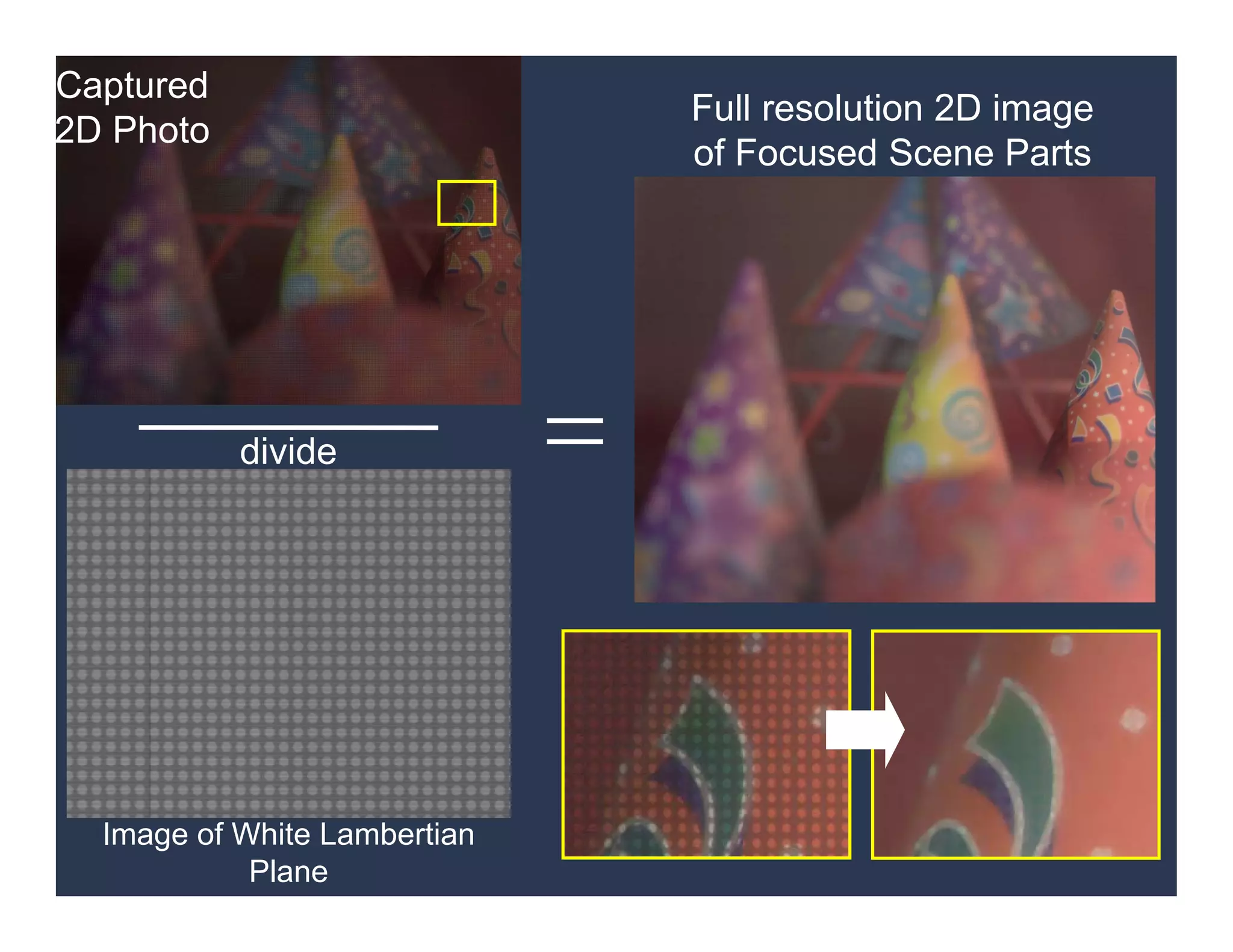

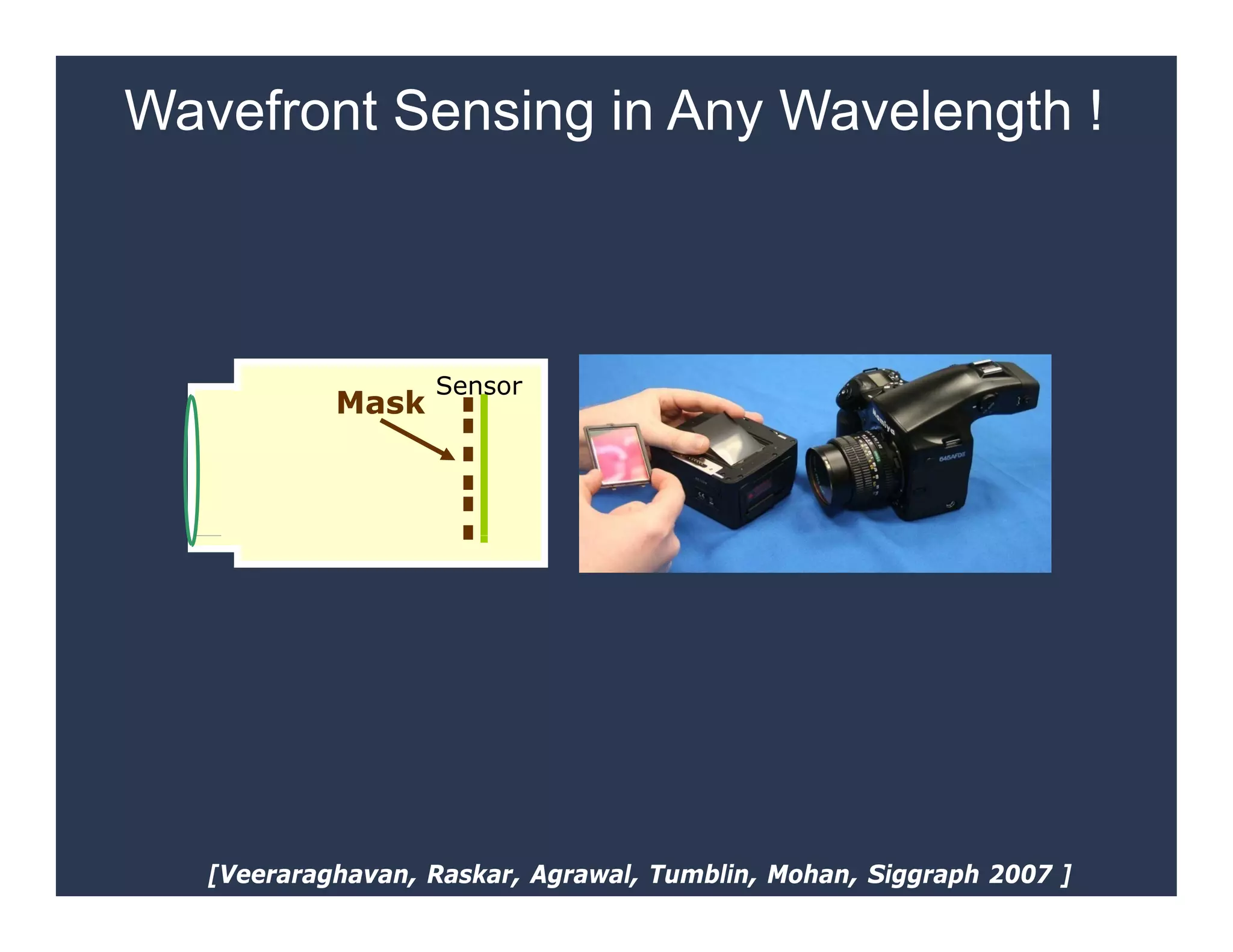

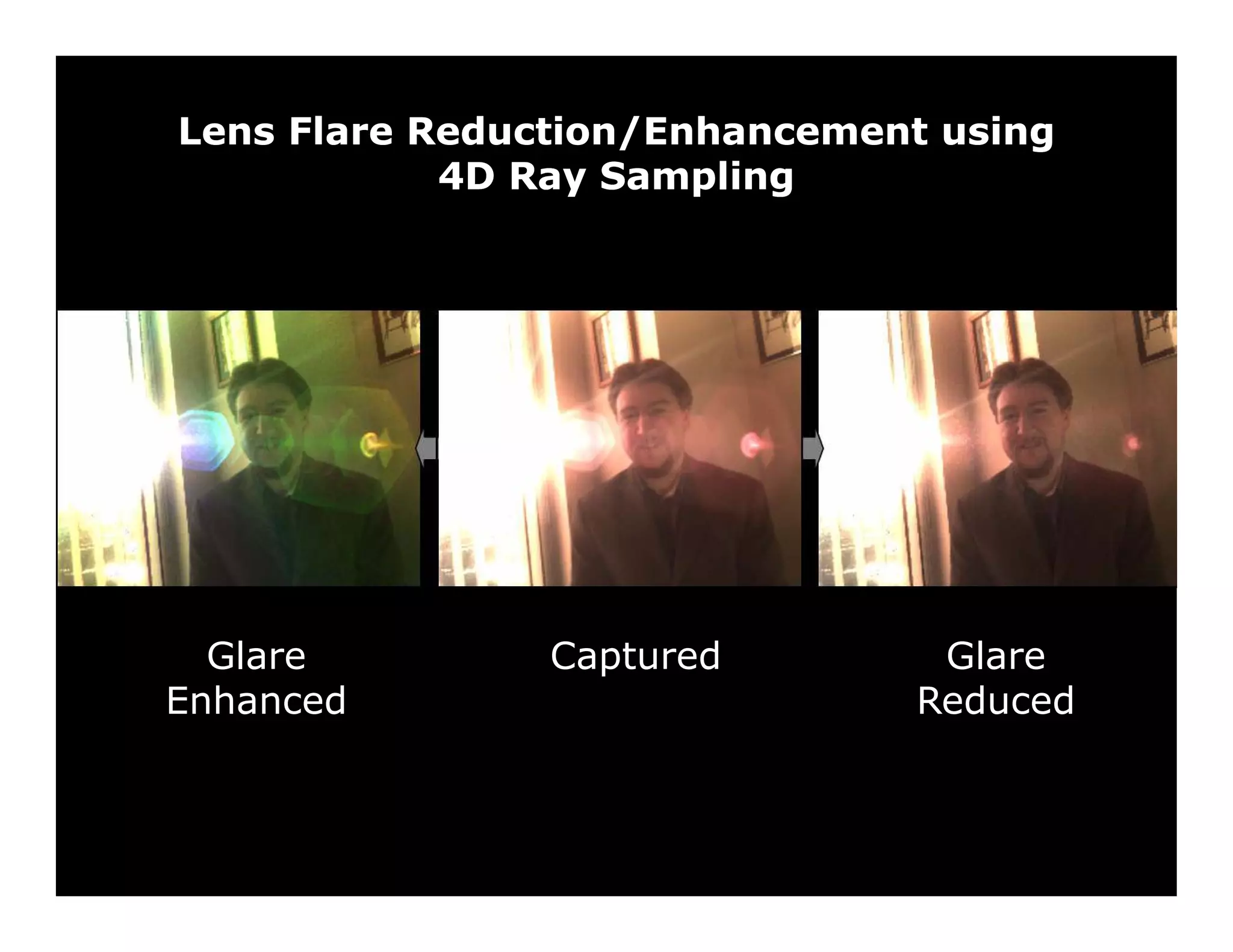

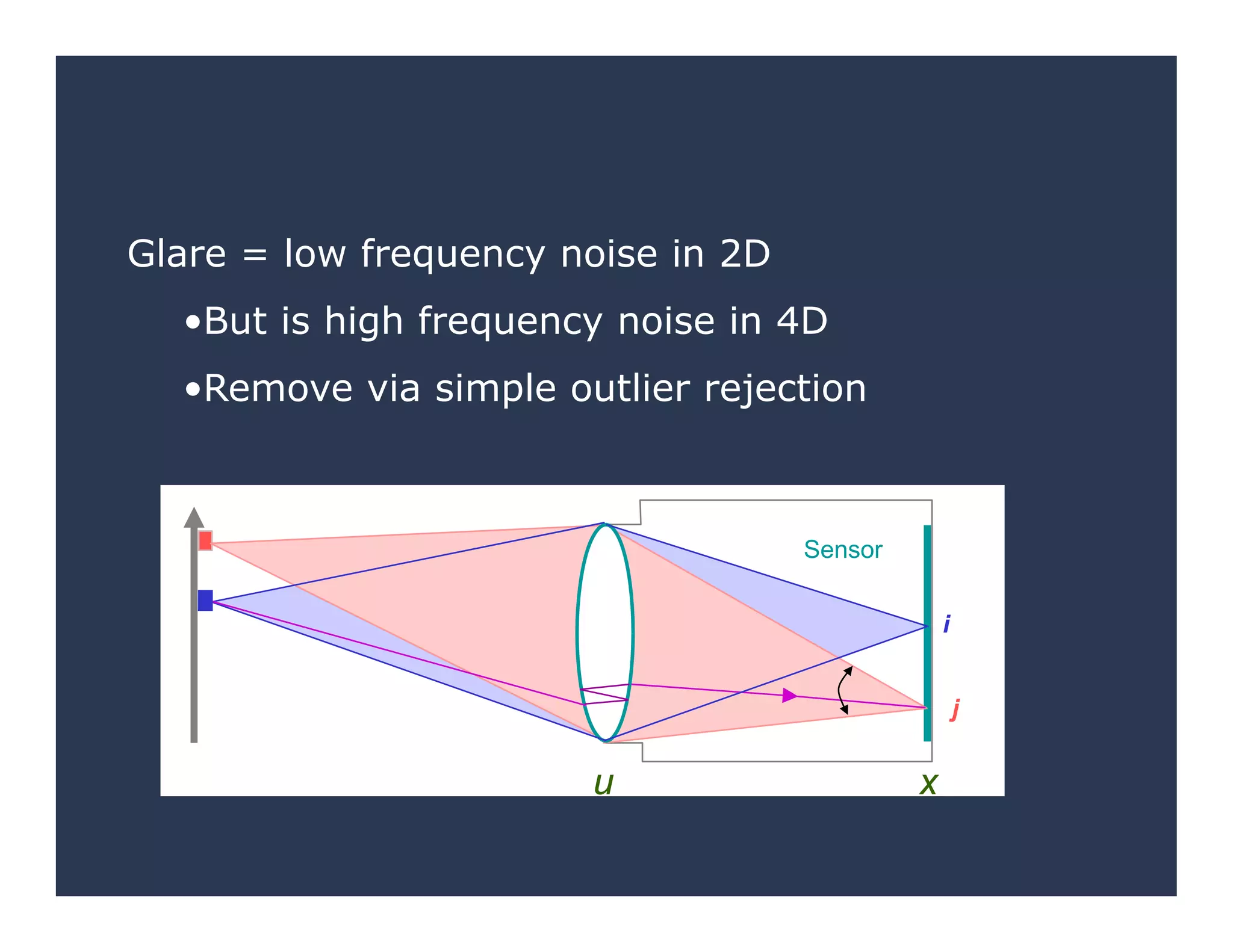

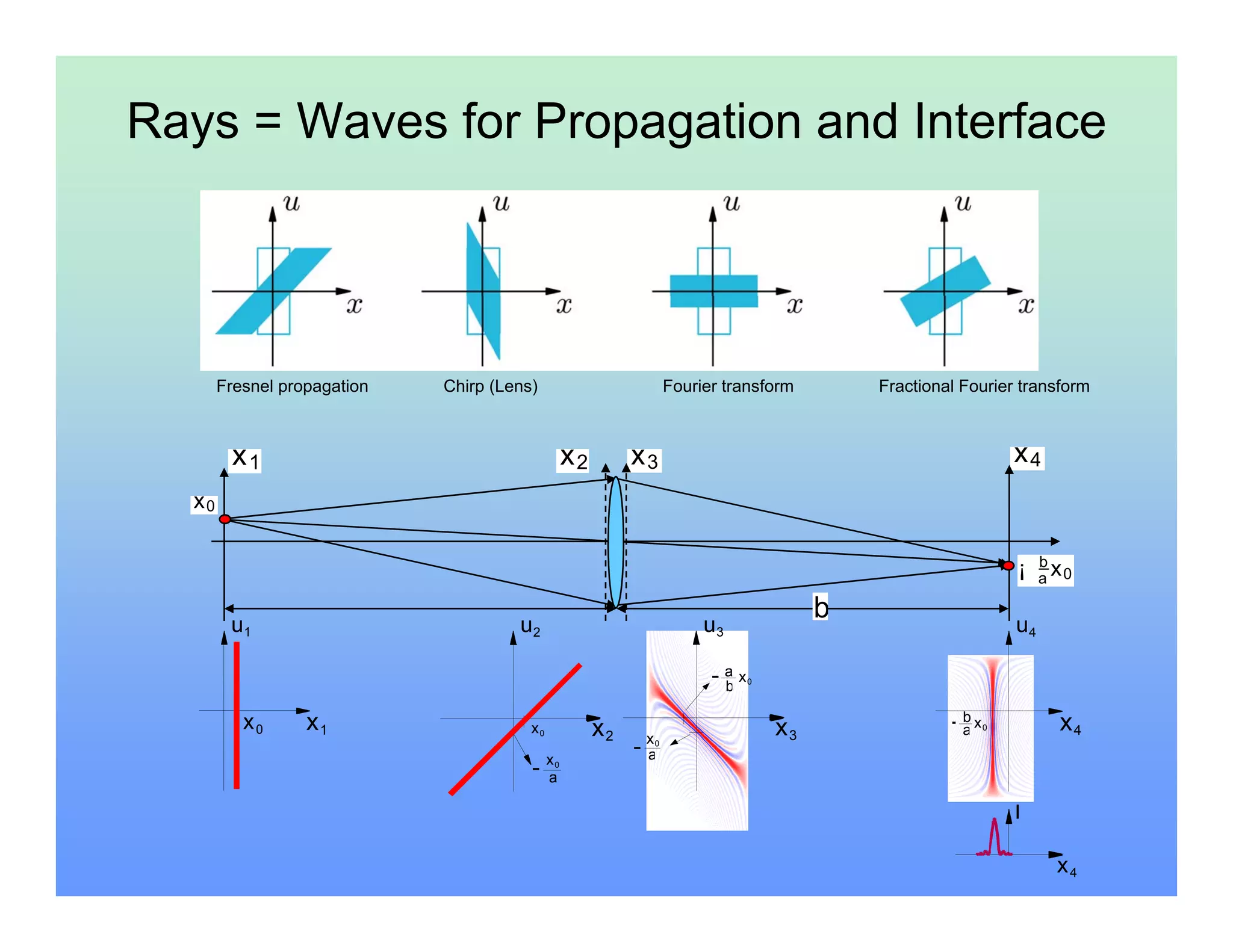

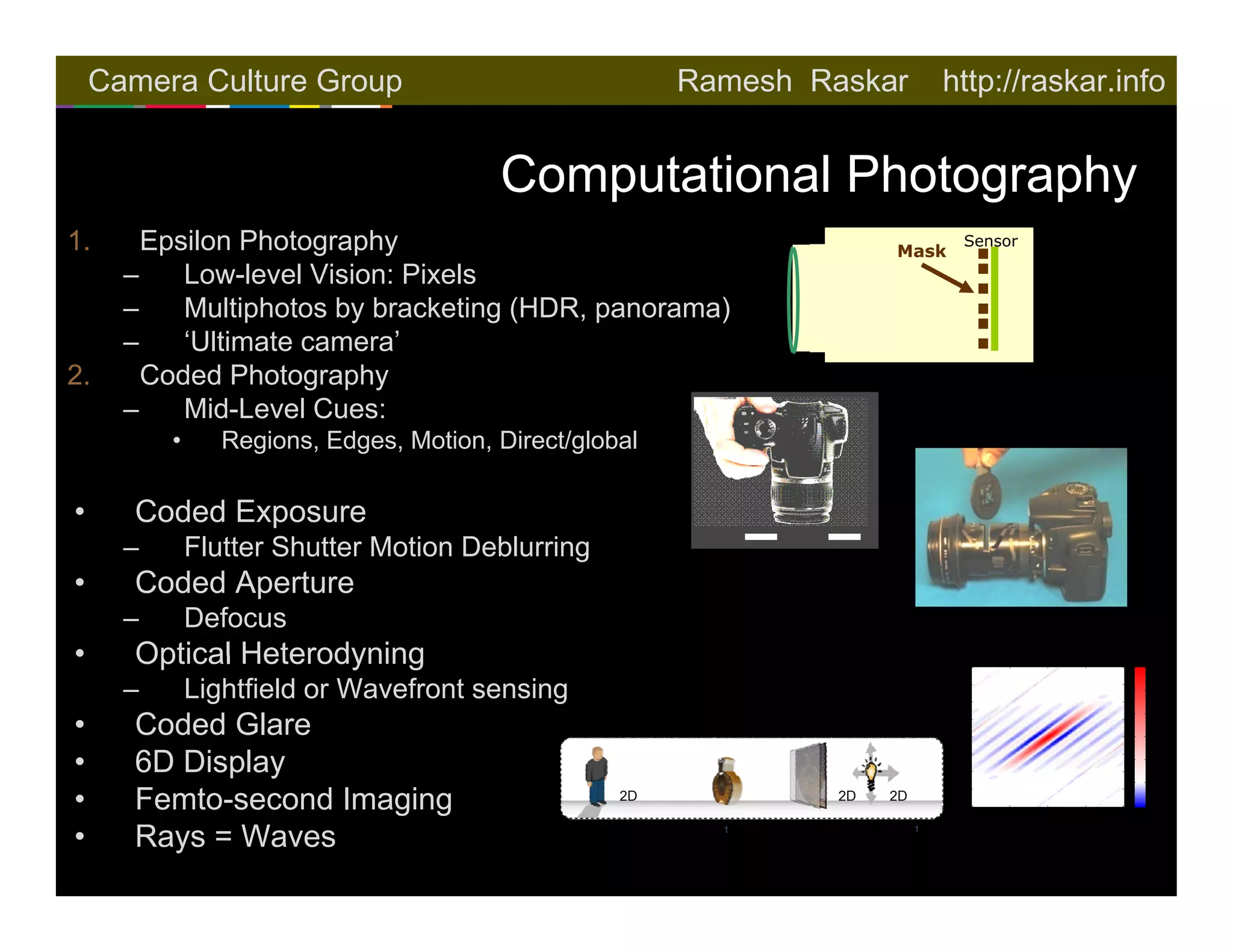

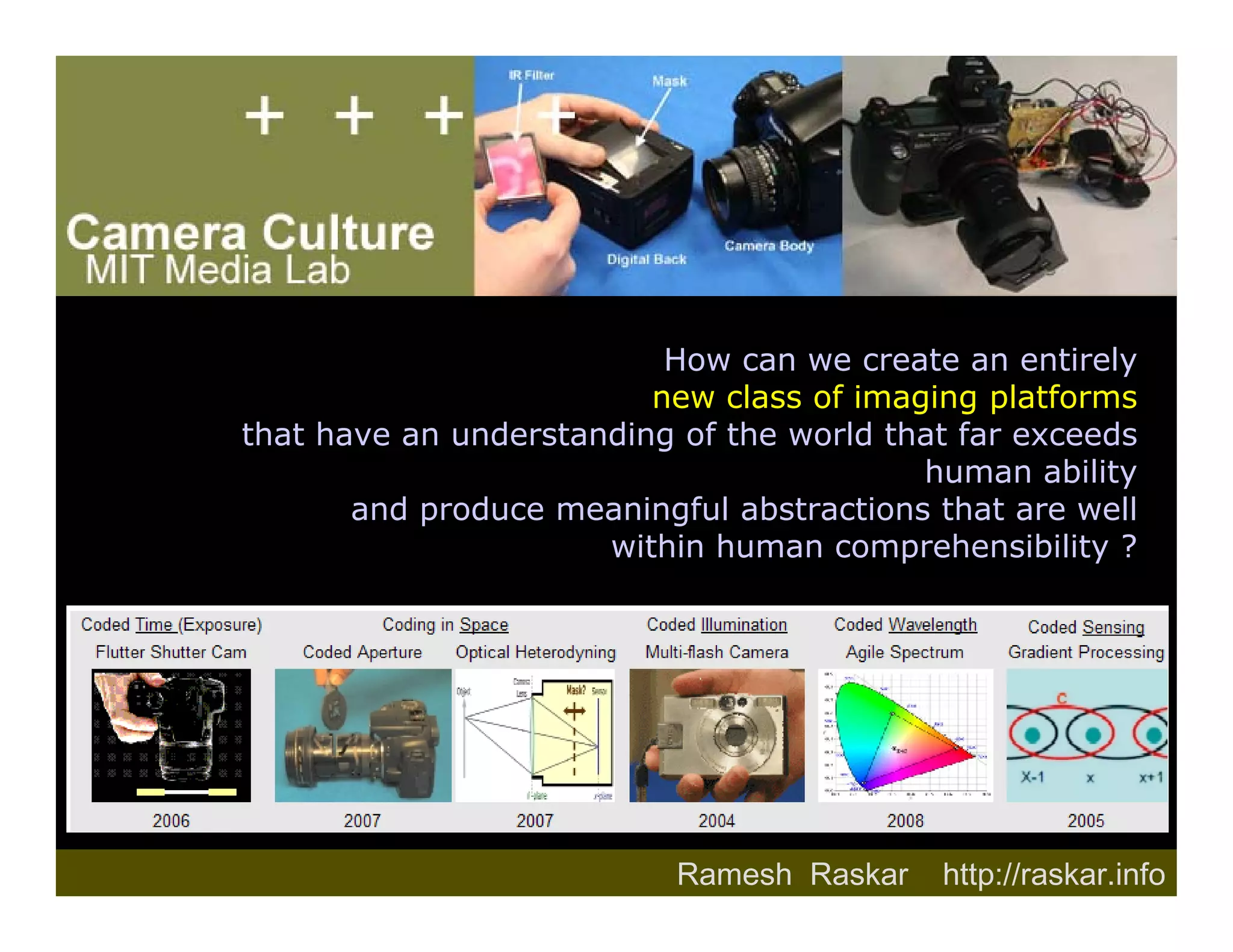

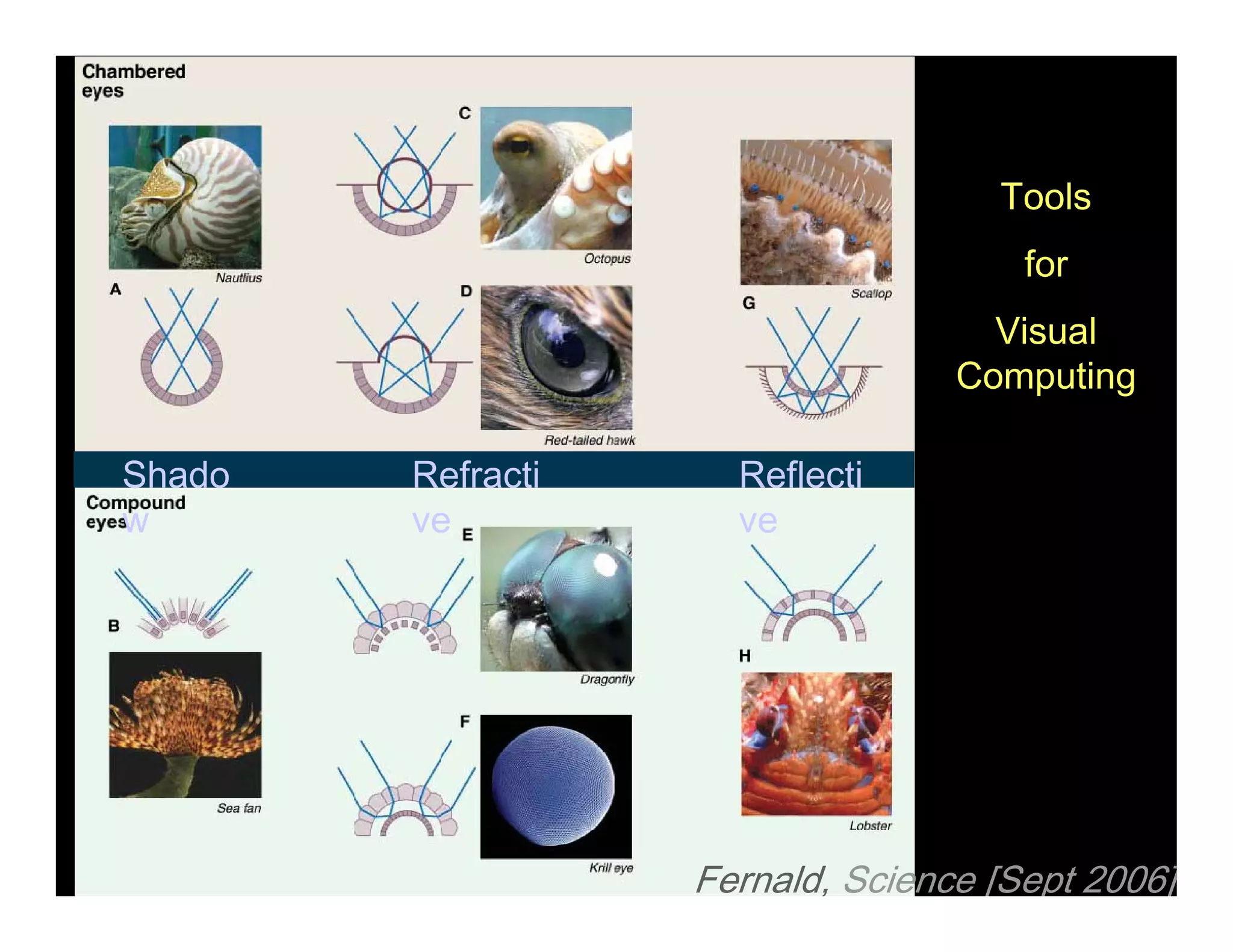

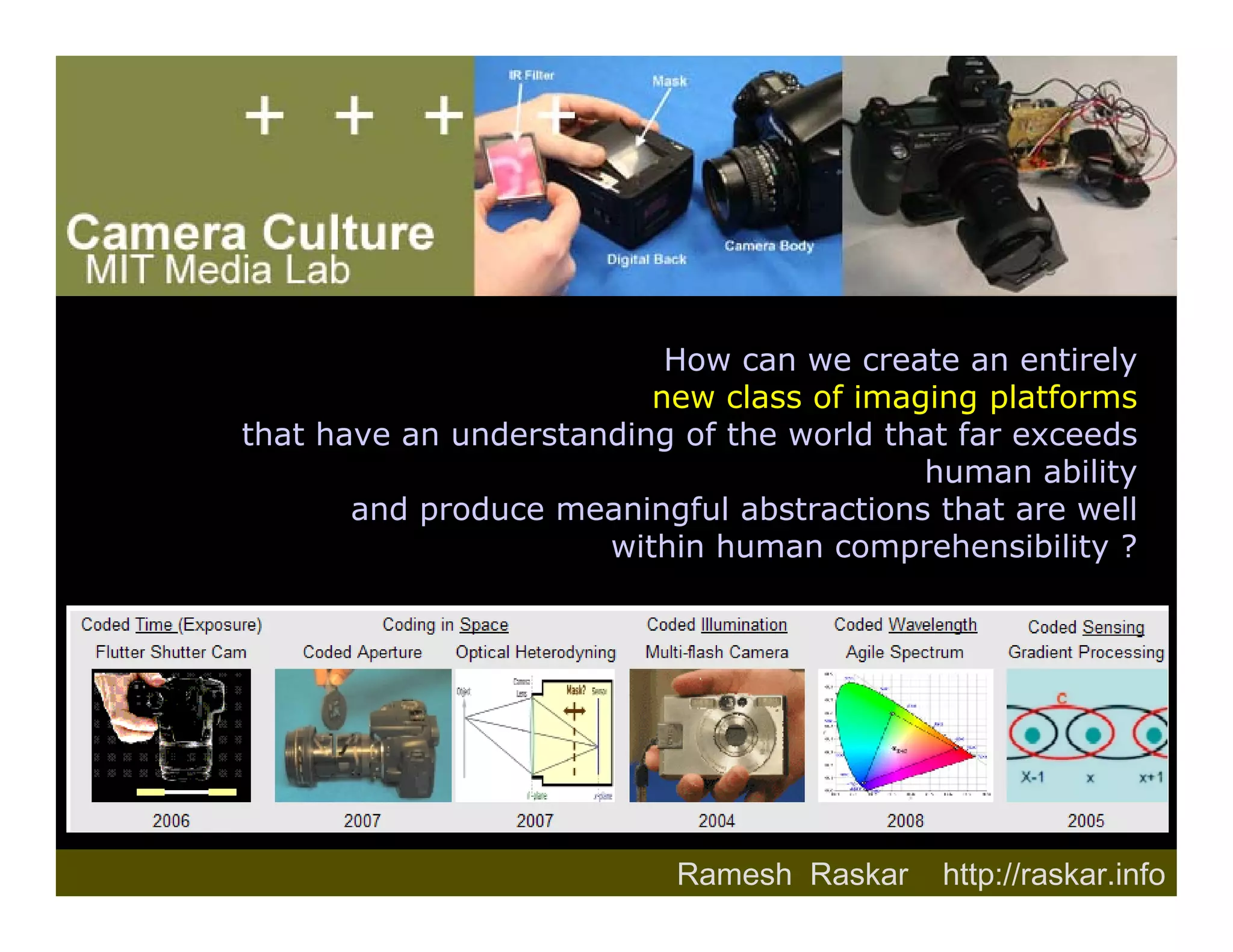

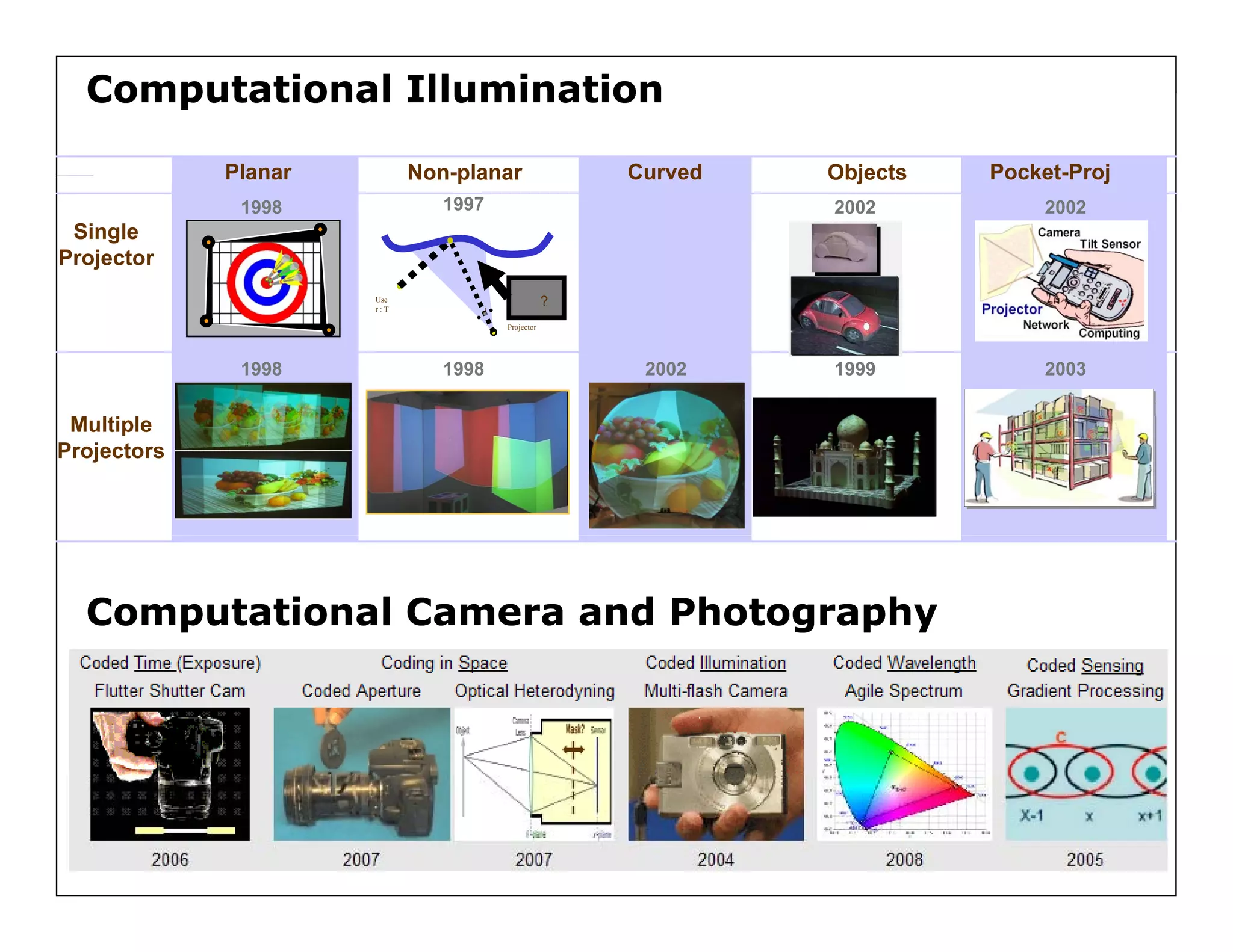

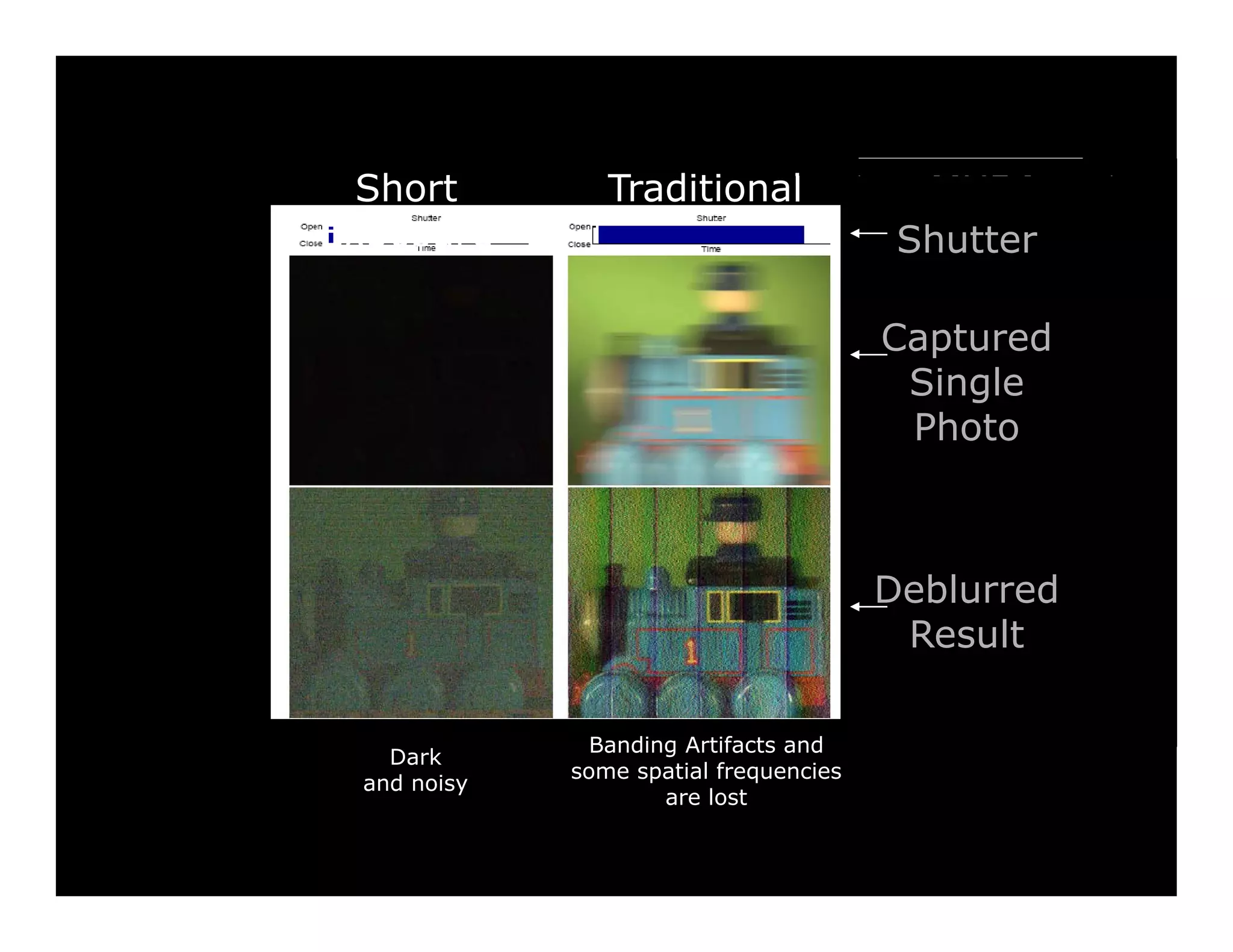

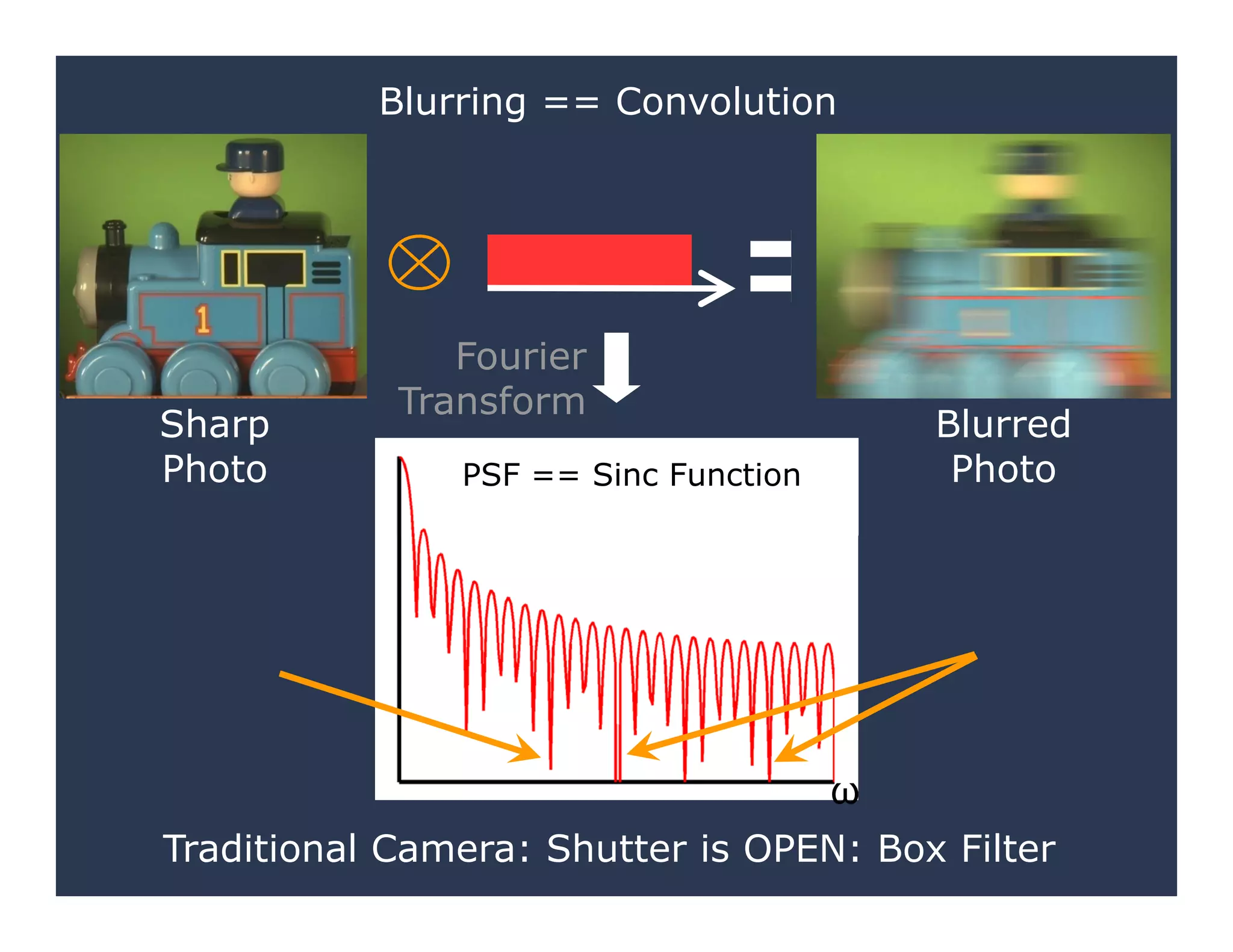

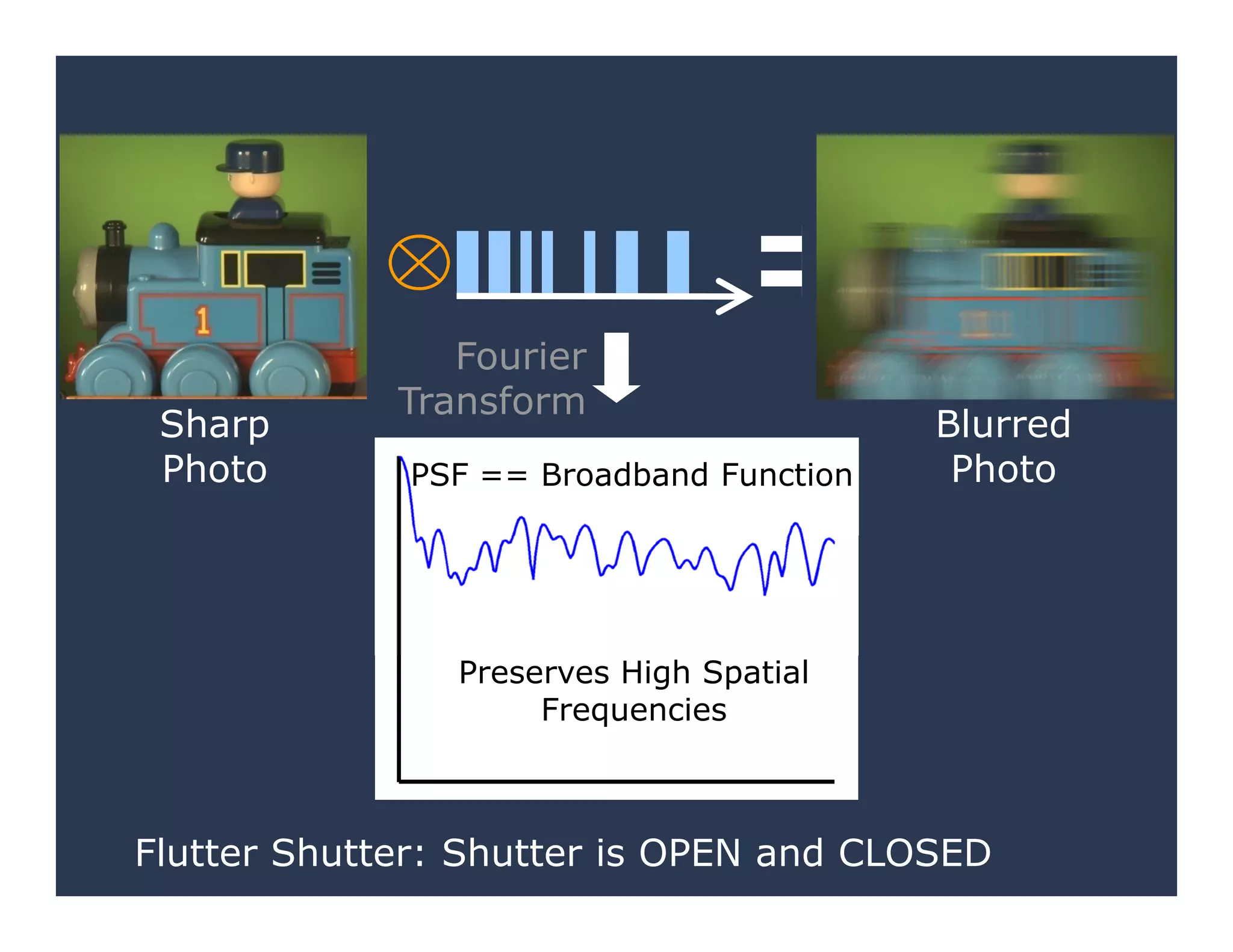

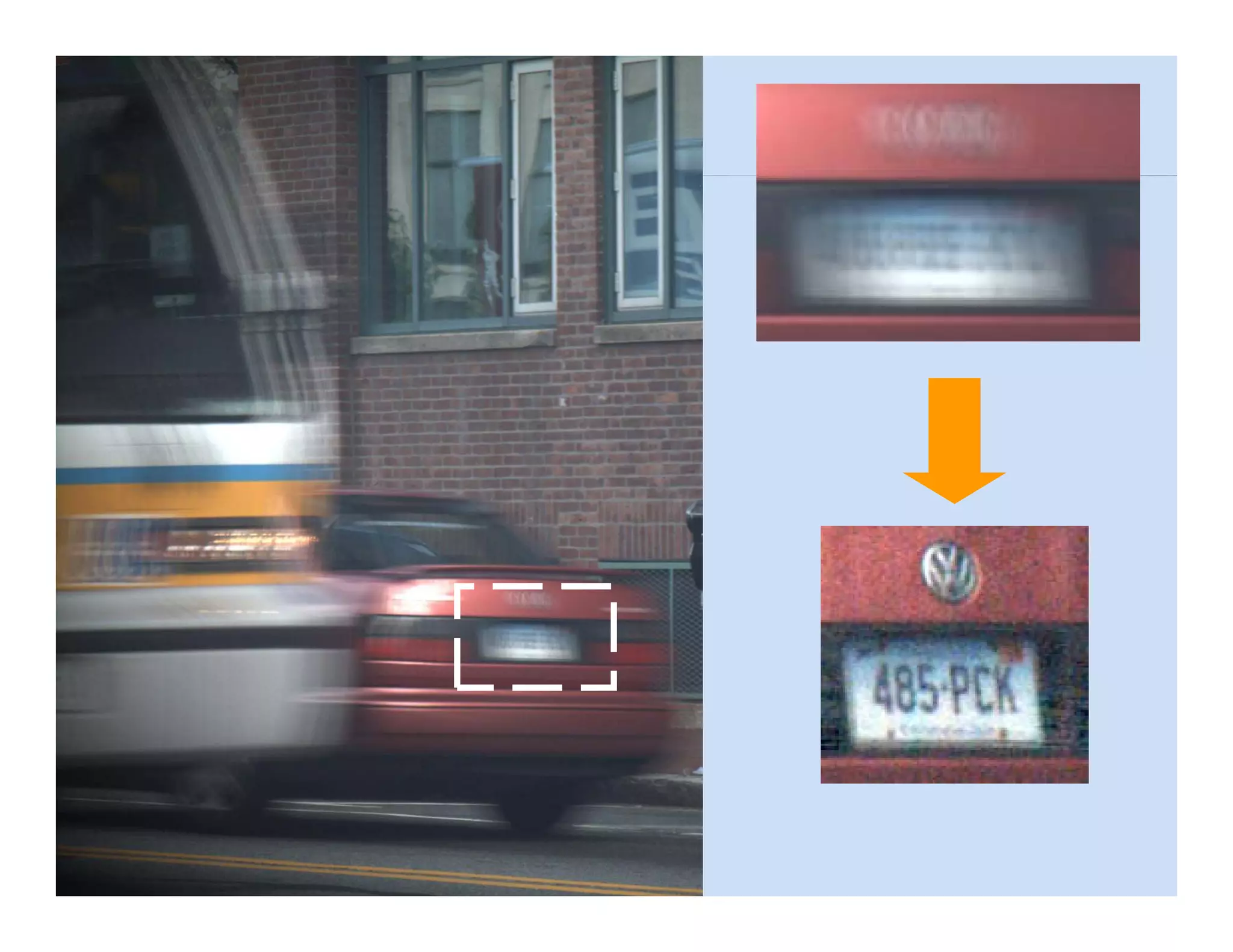

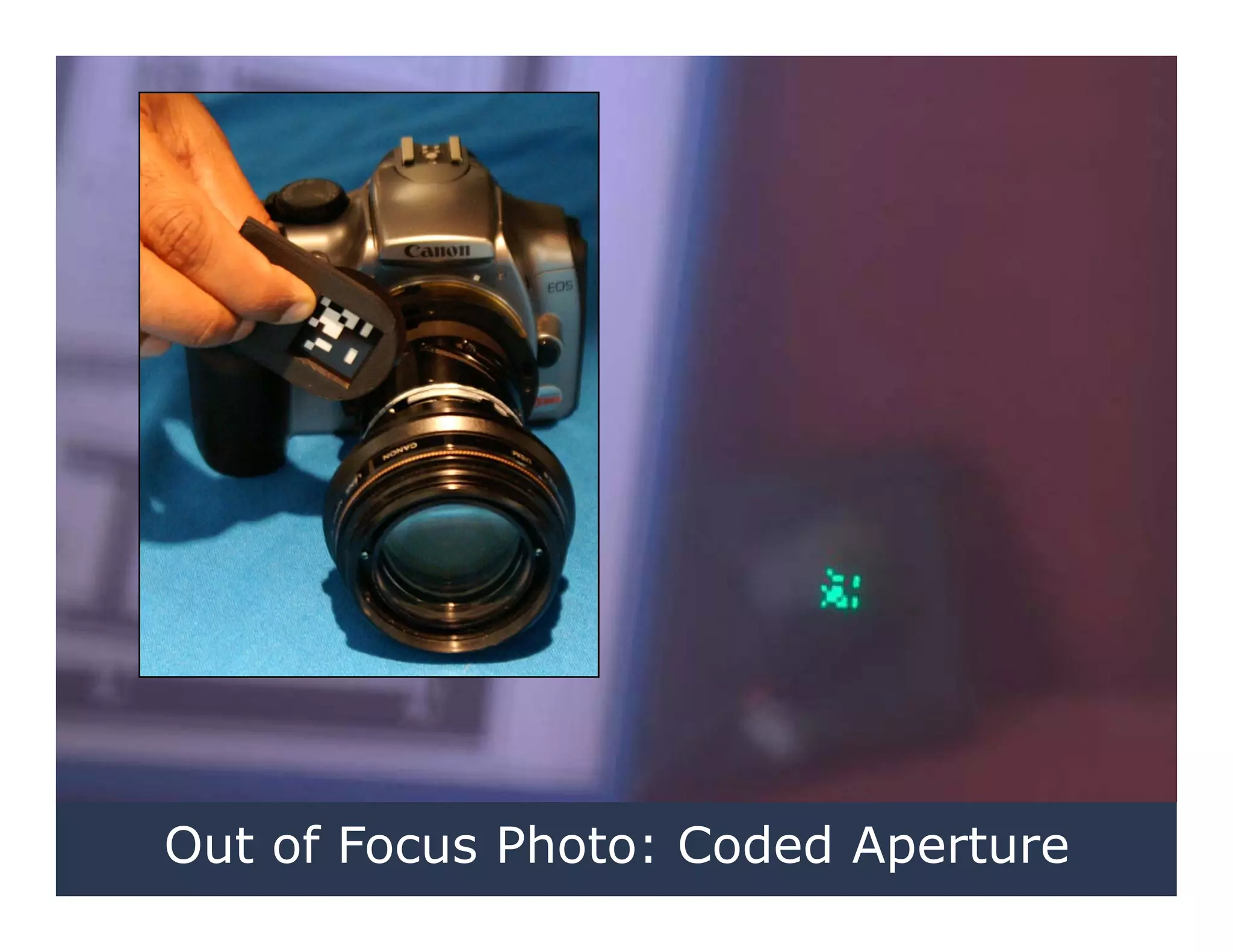

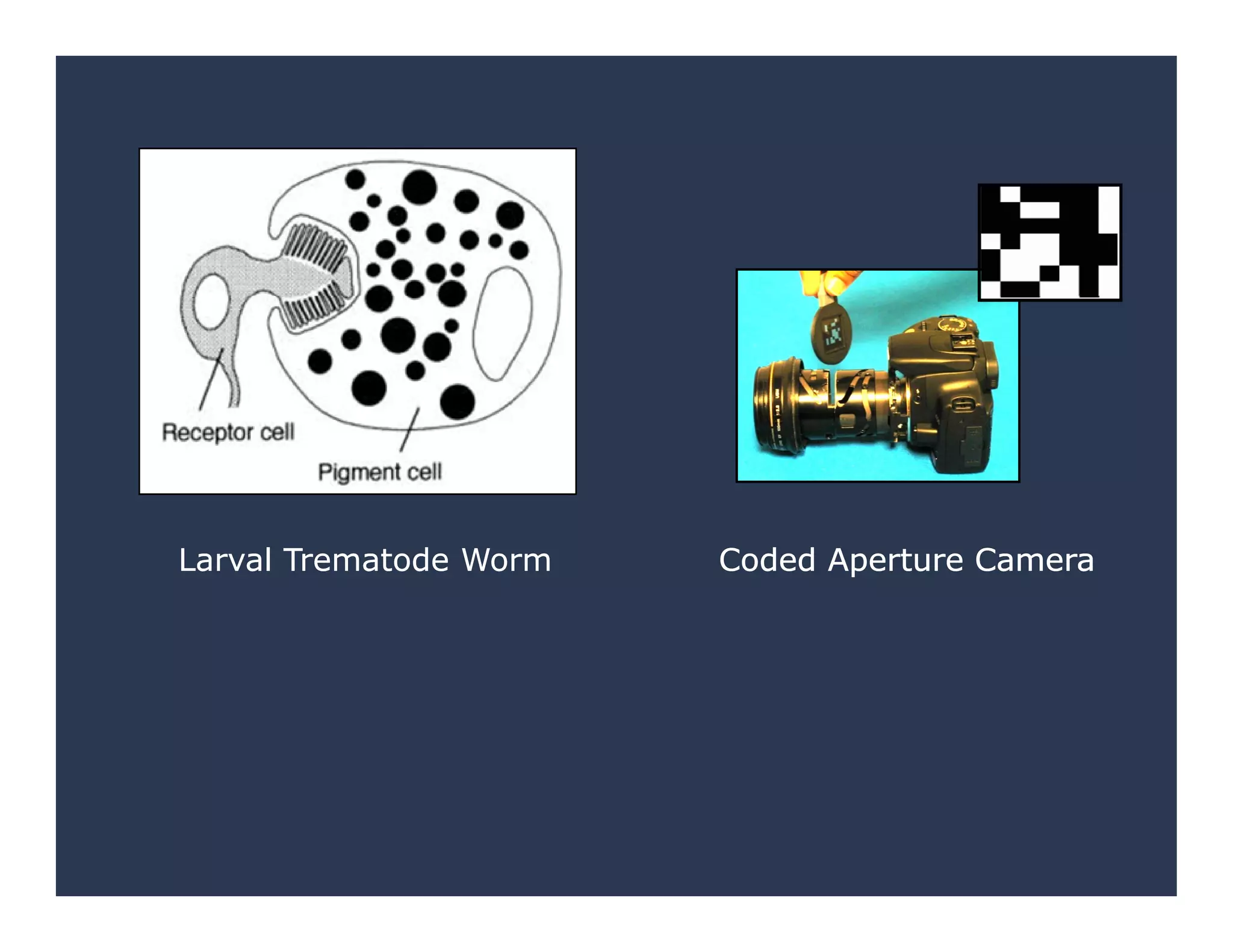

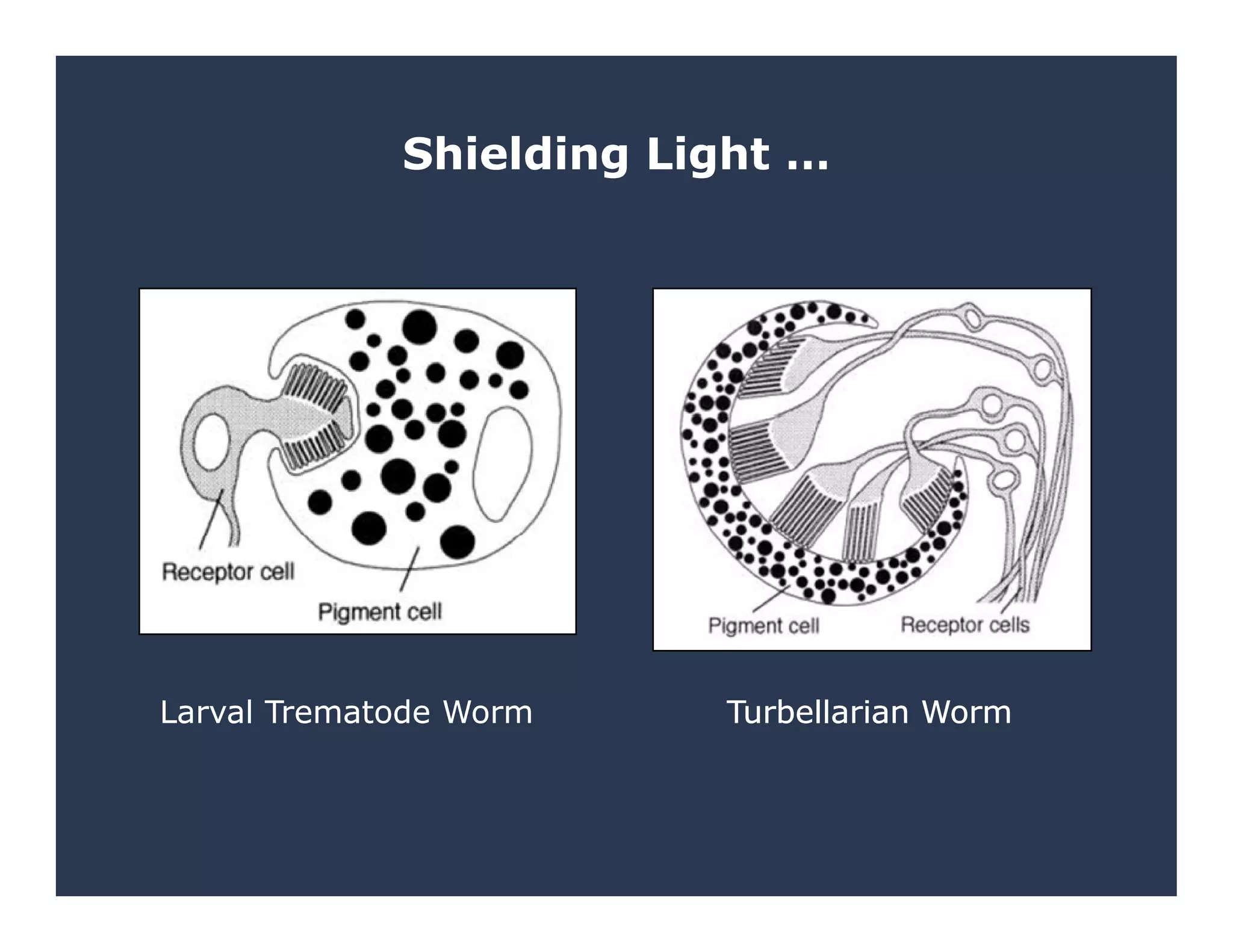

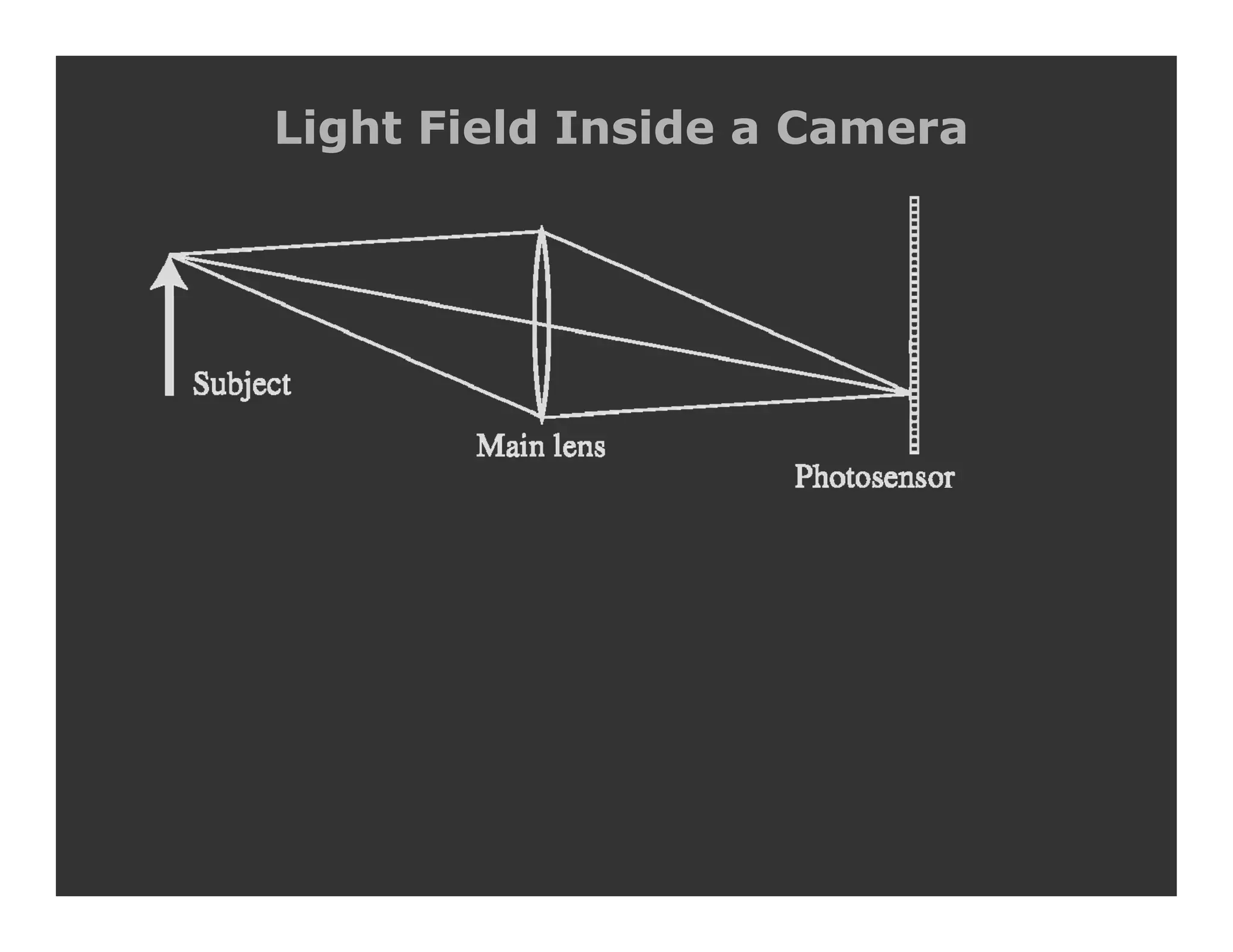

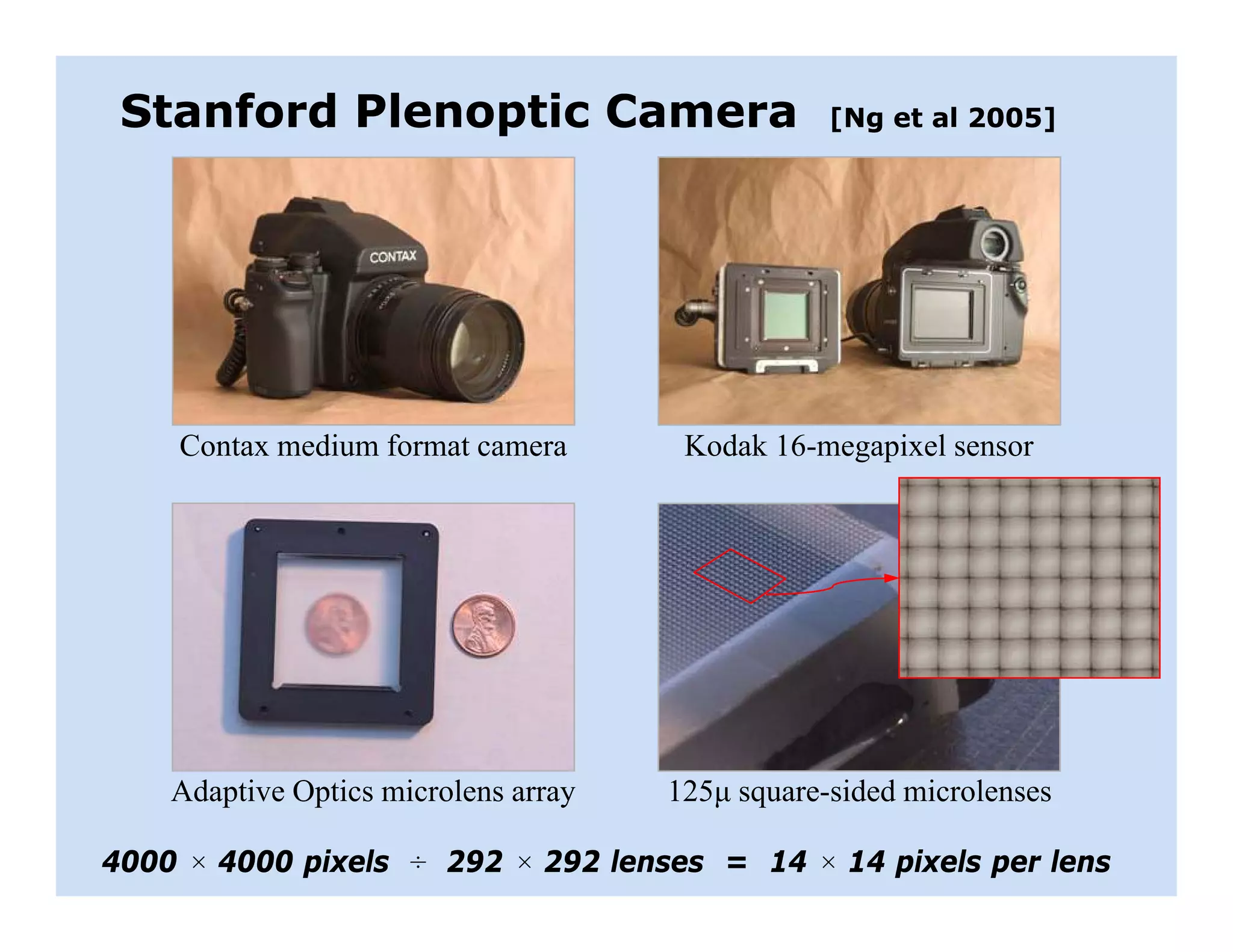

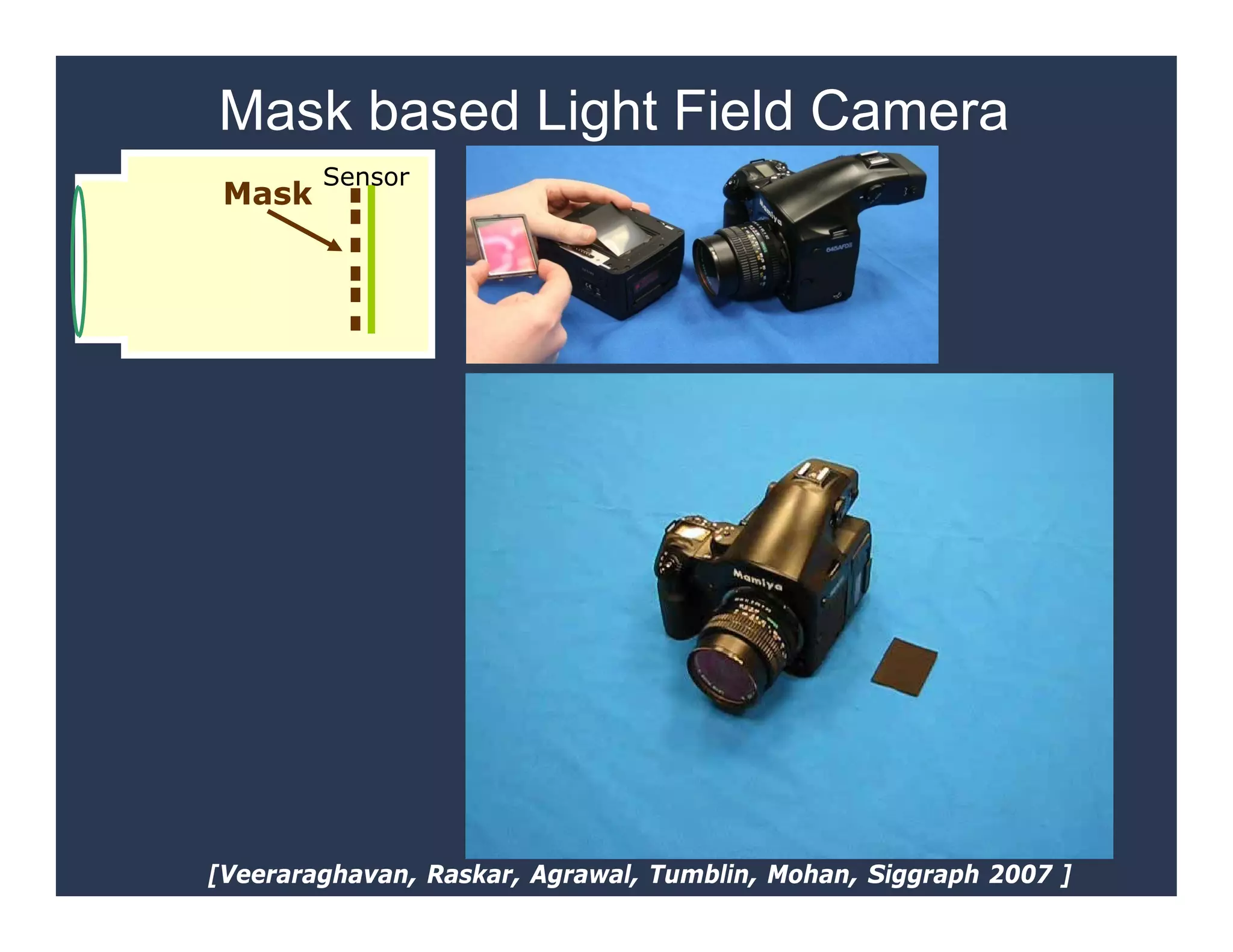

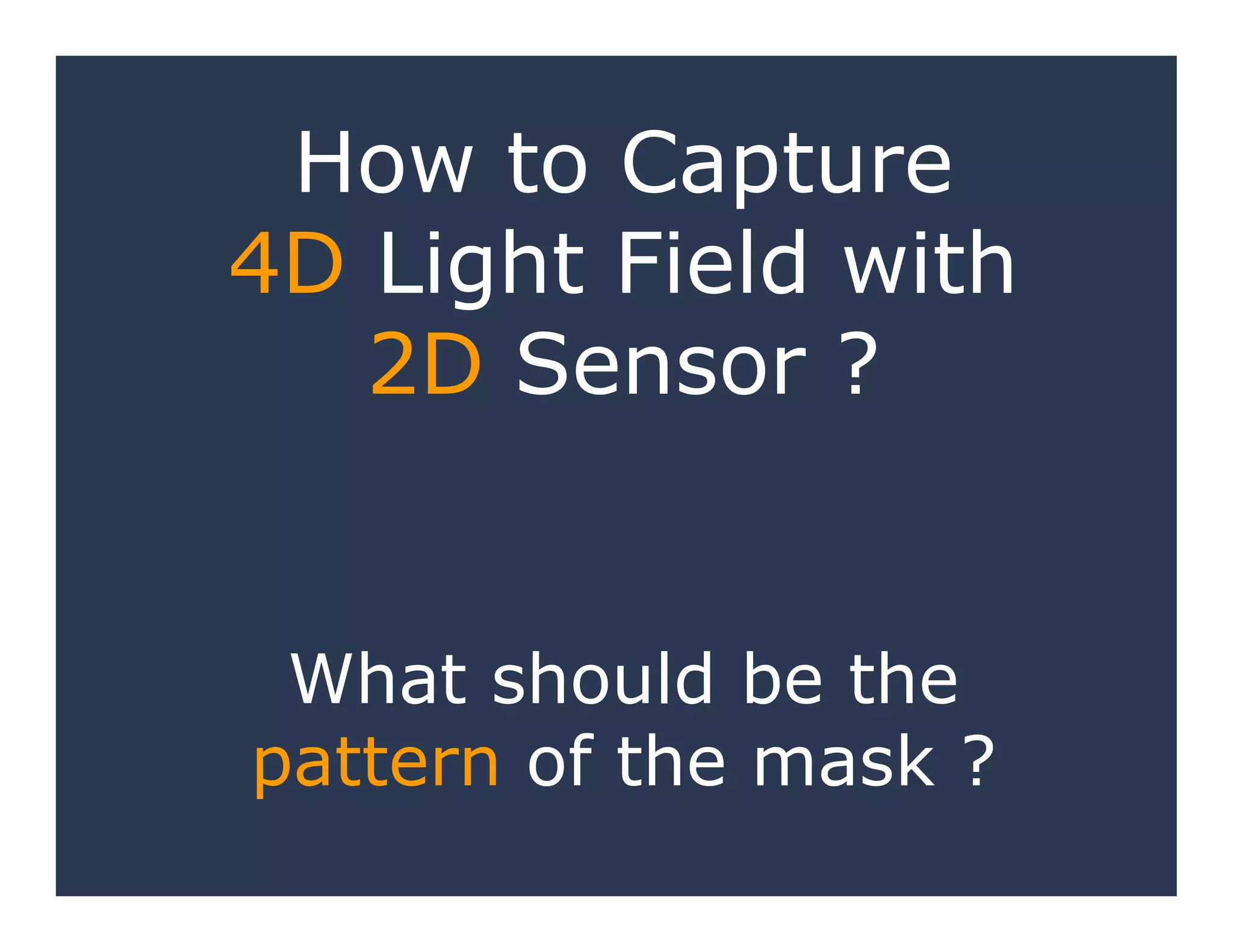

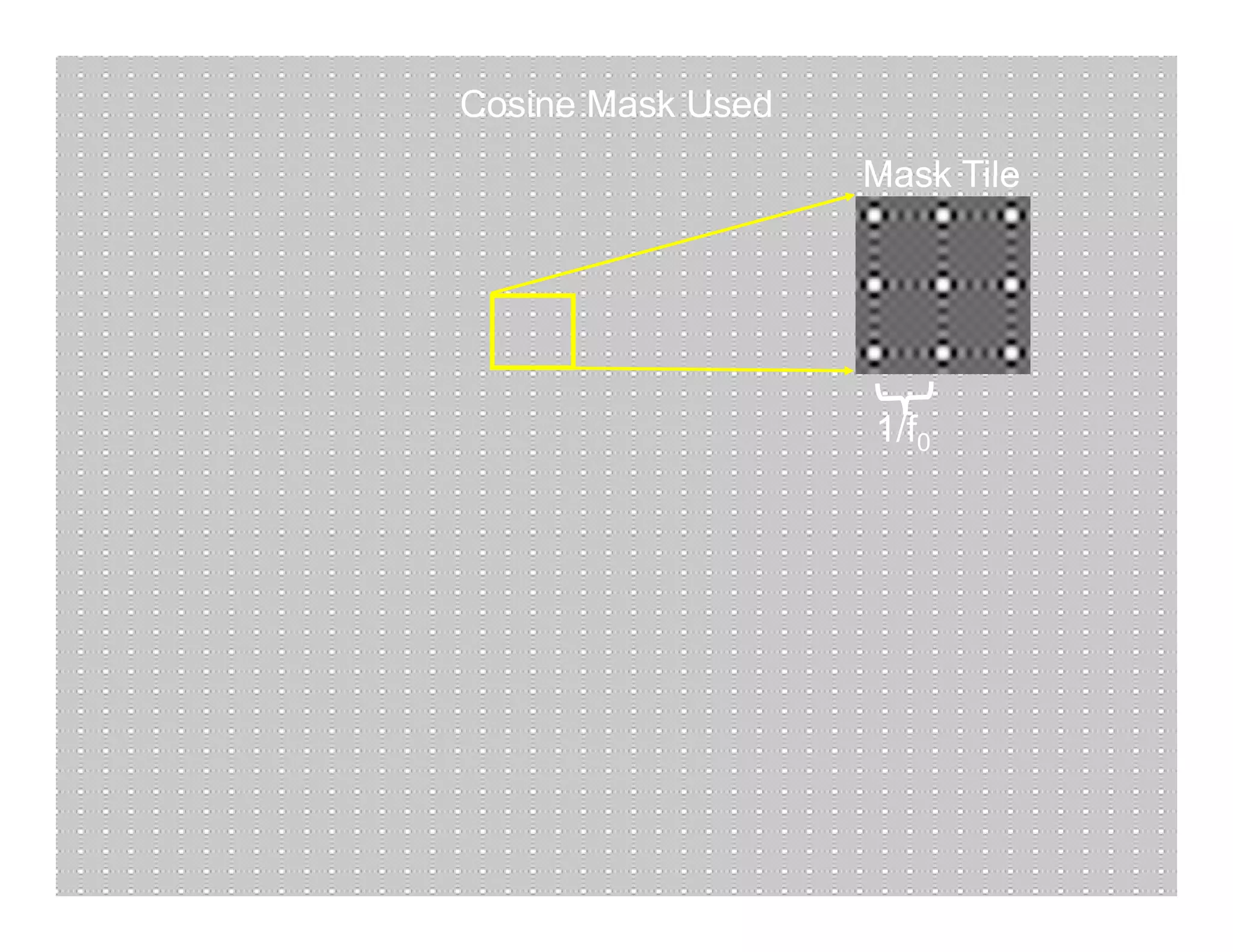

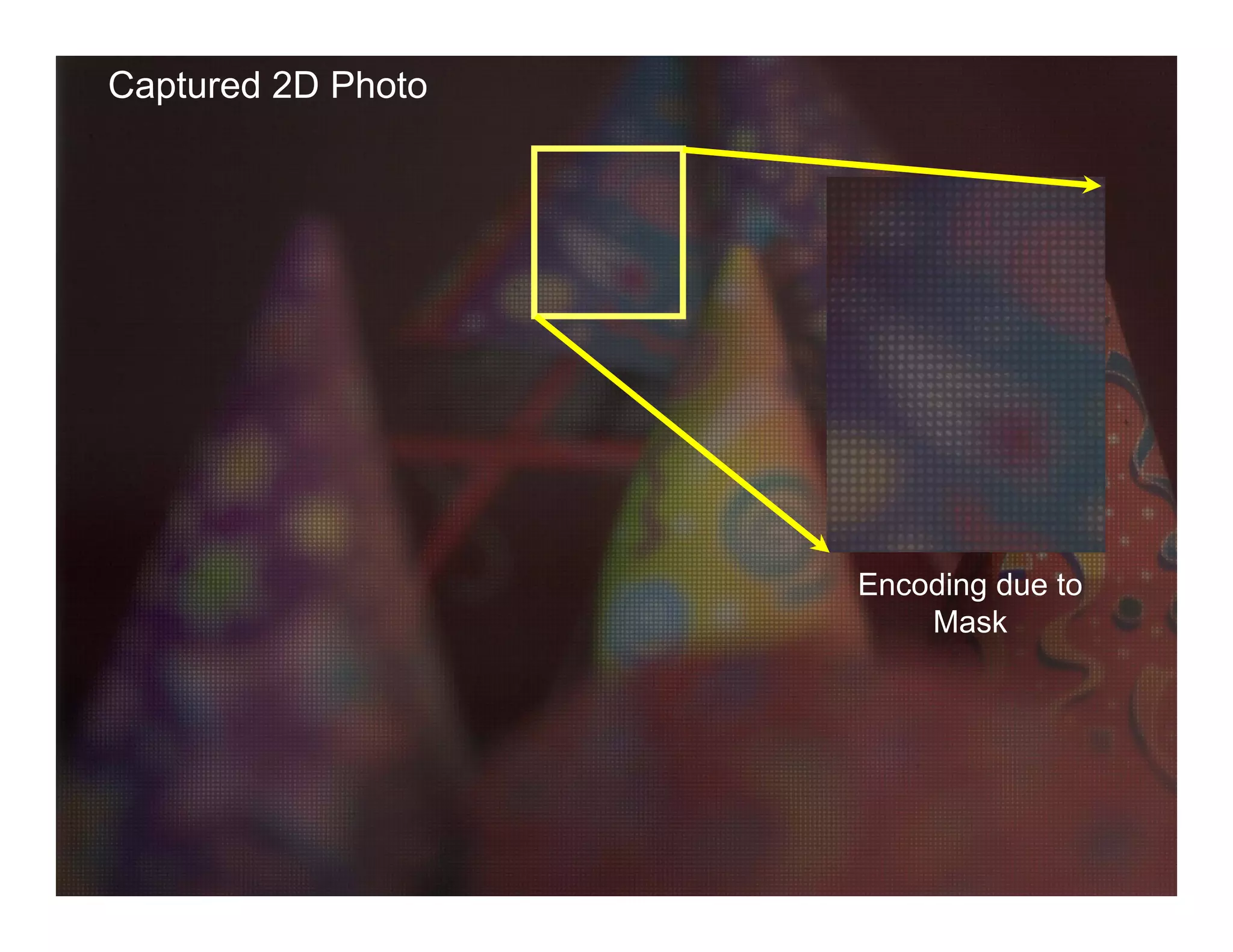

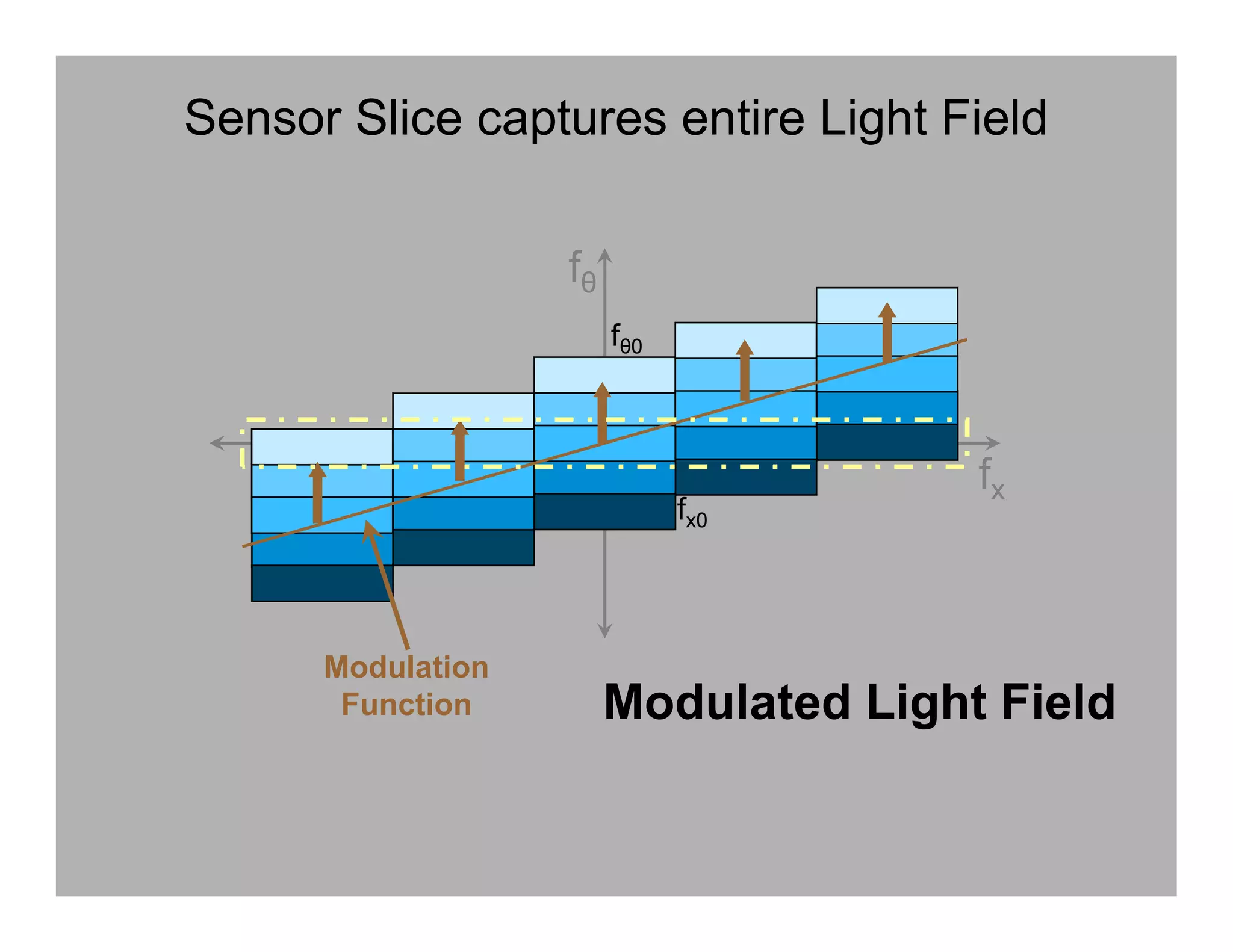

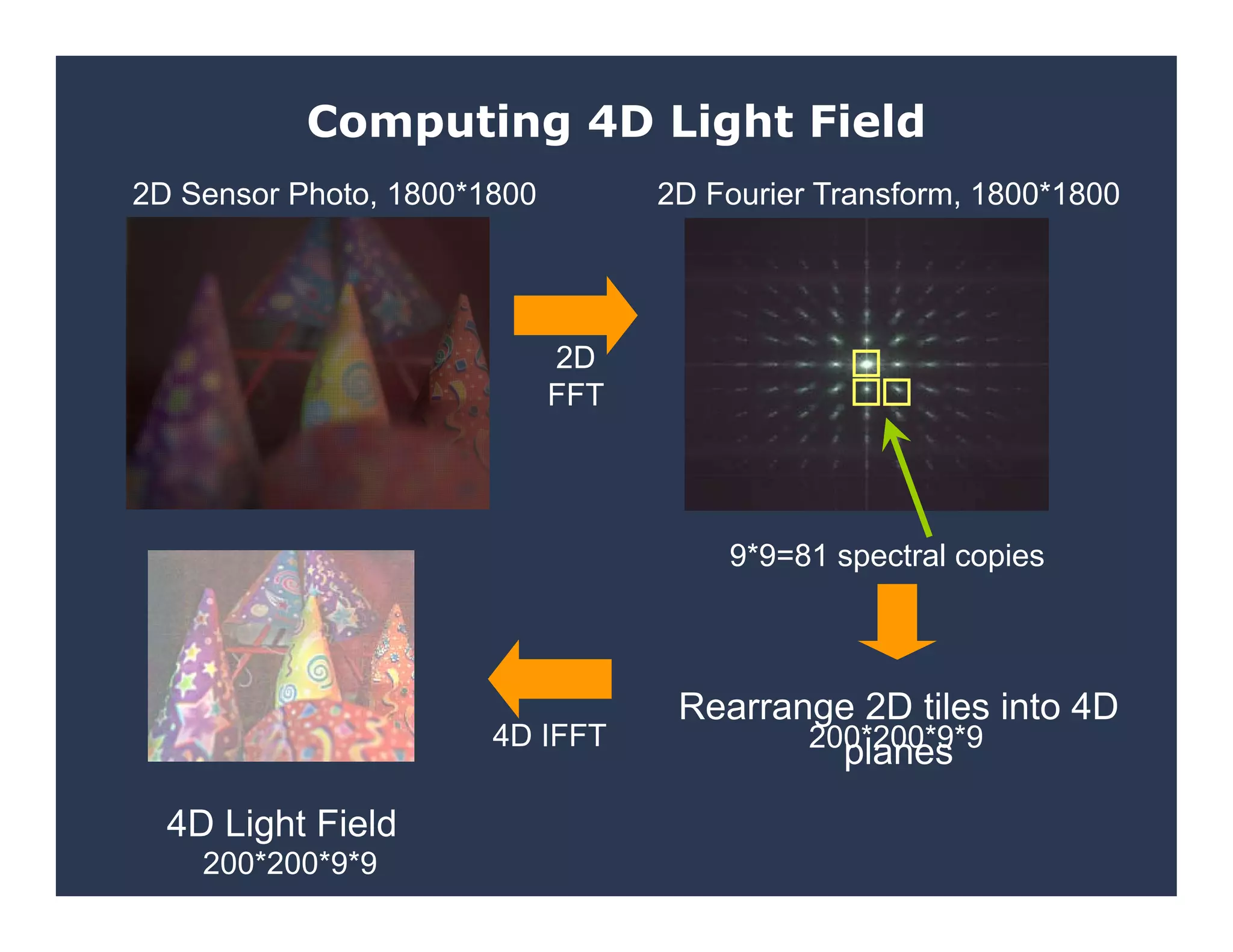

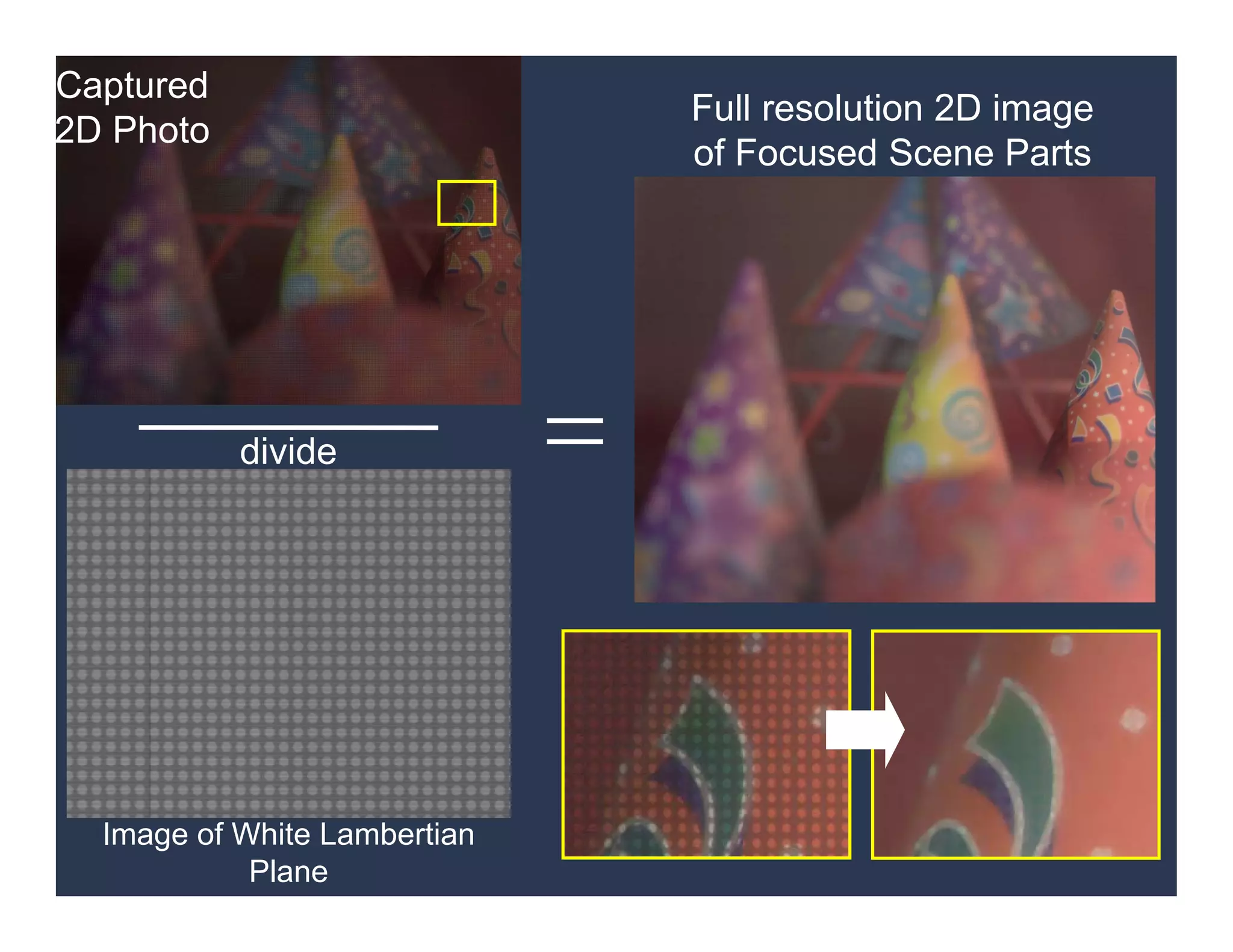

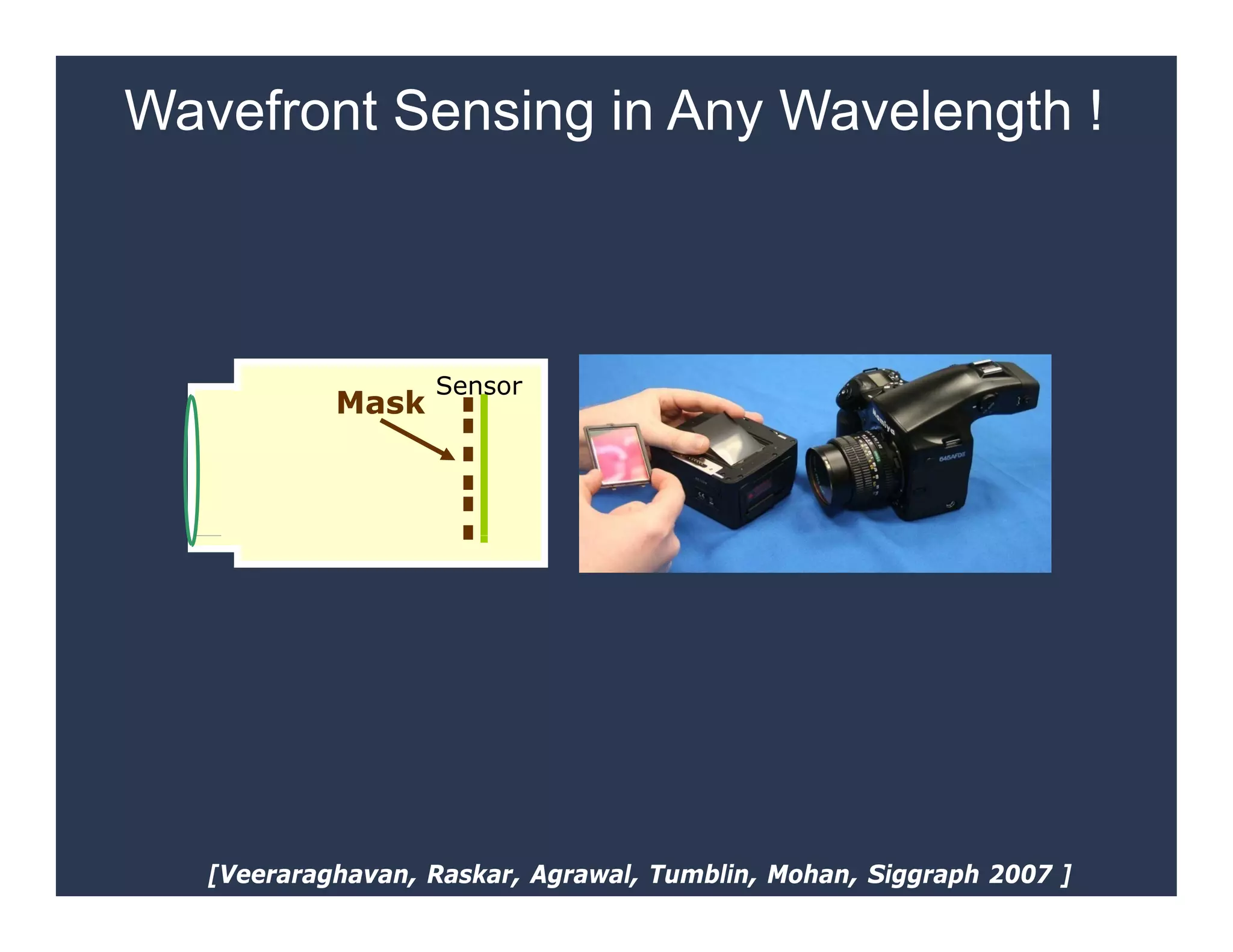

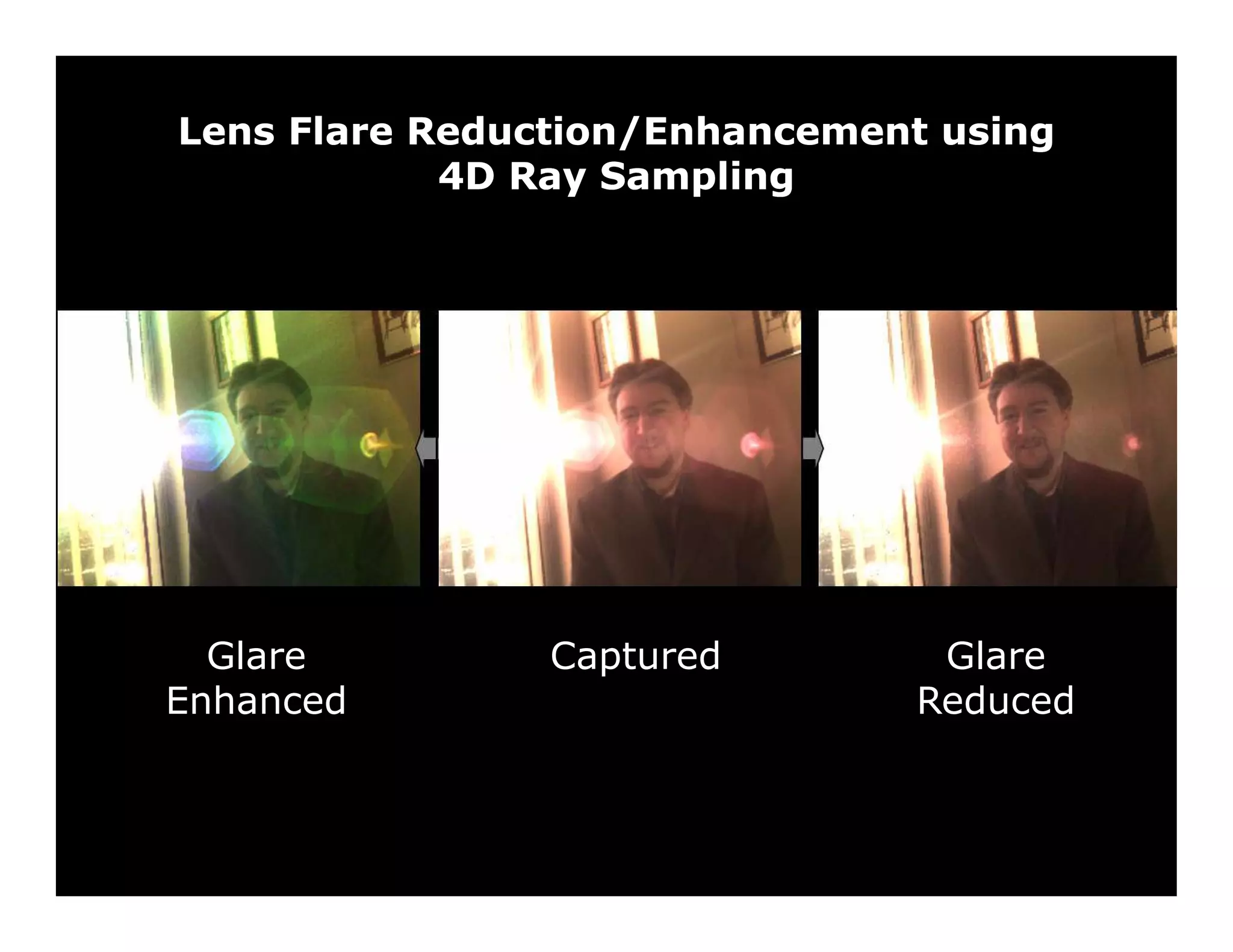

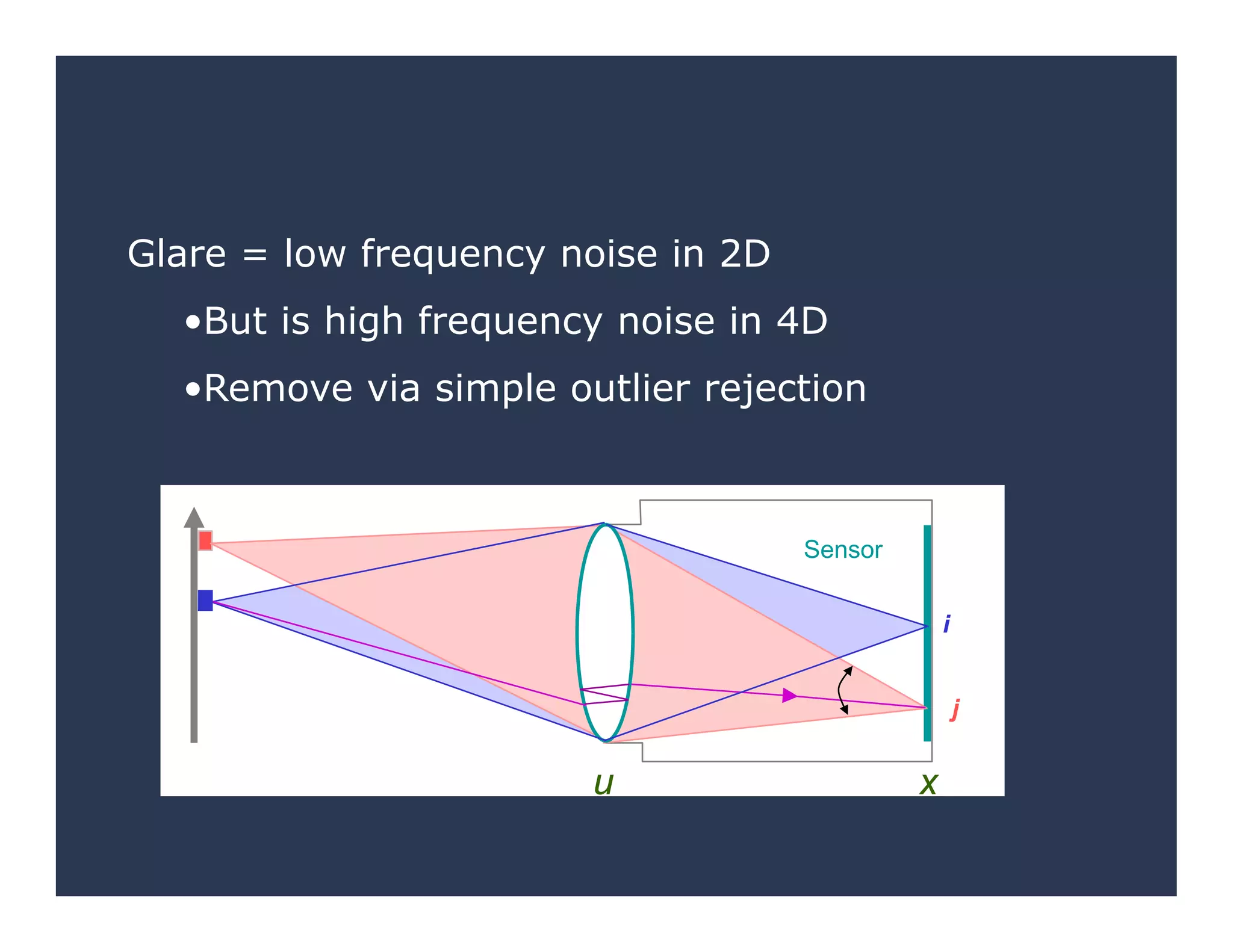

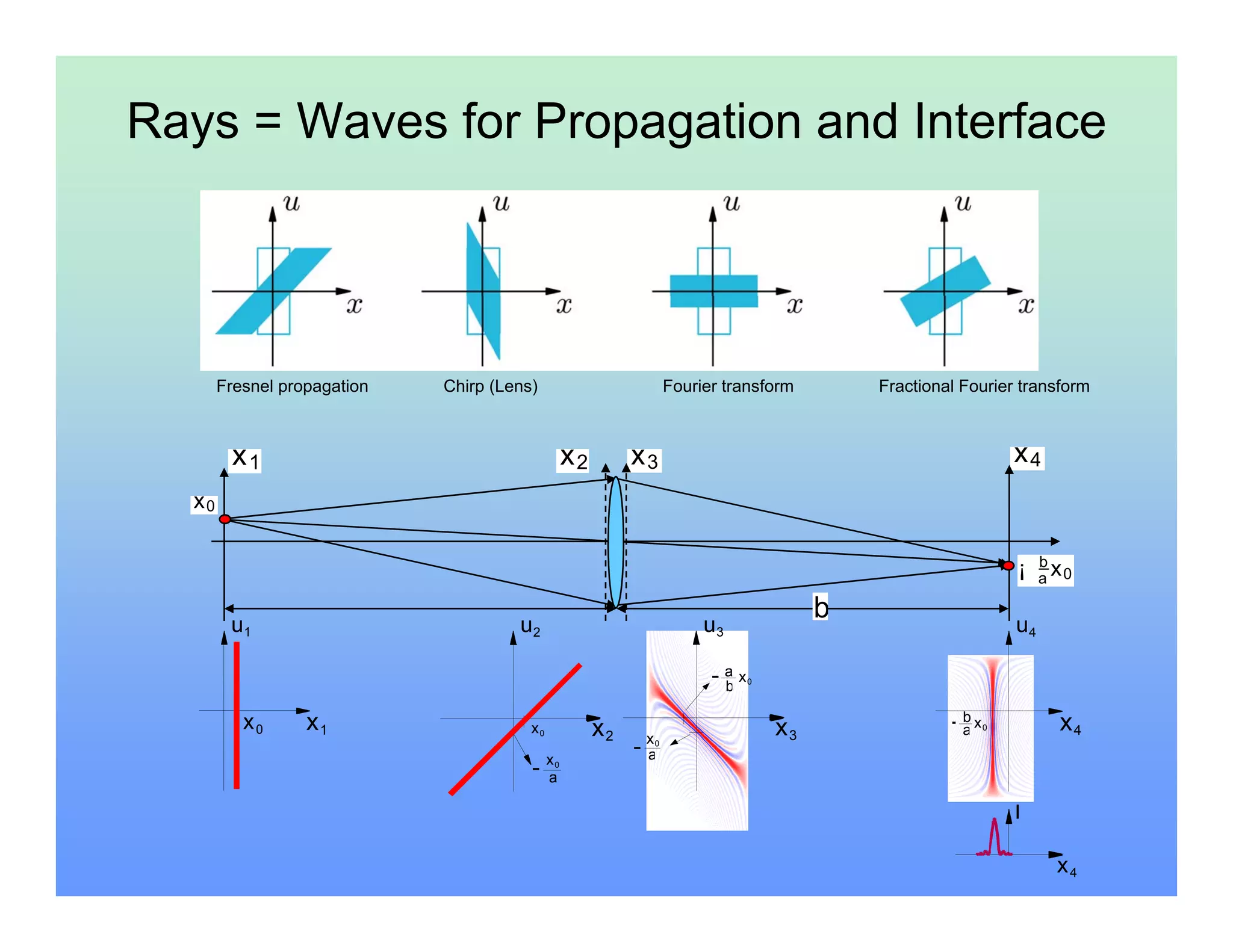

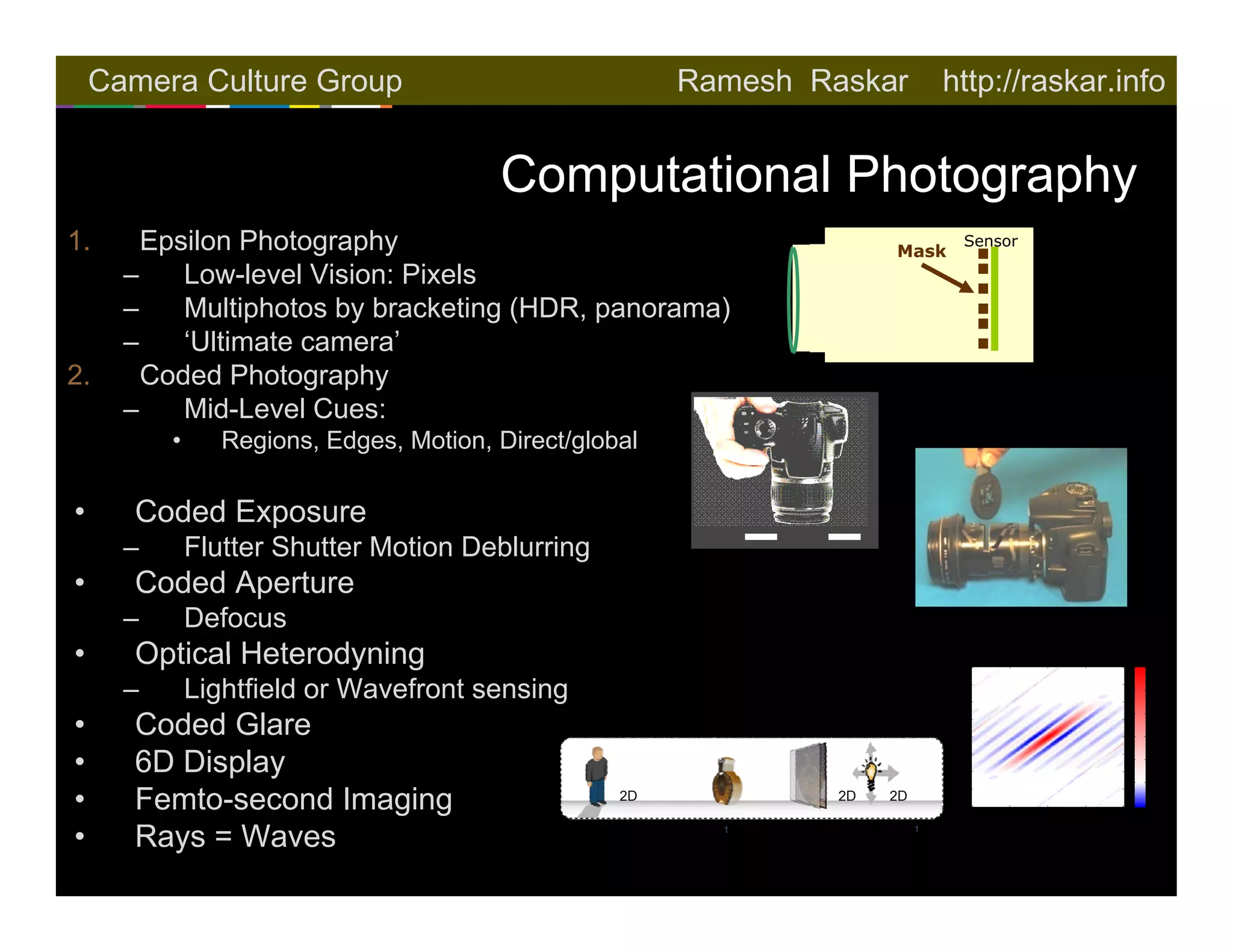

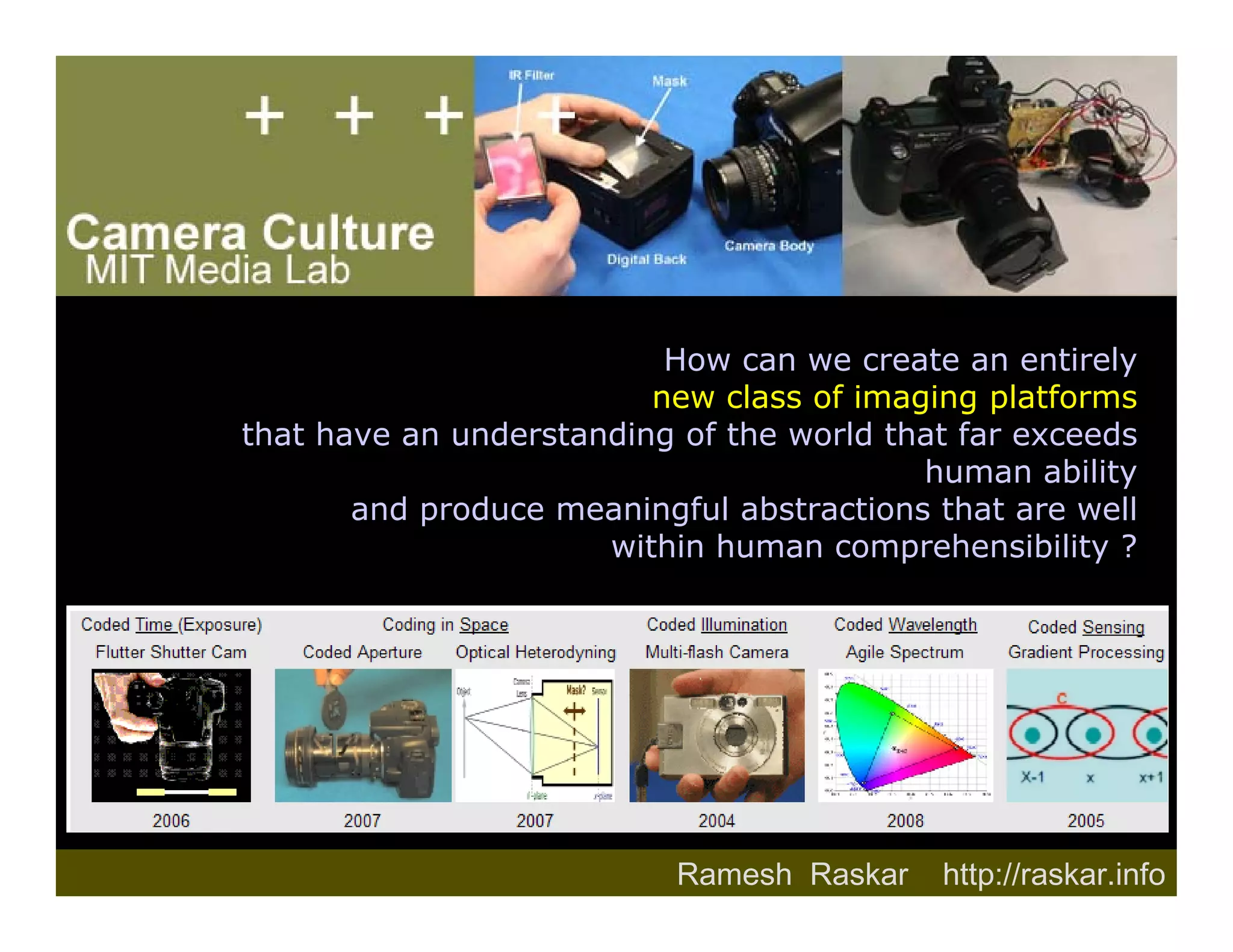

1. Ramesh Raskar is an associate professor at the MIT Media Lab who researches computational photography.
2. Raskar discusses three levels of computational photography: epsilon, coded, and essence photography. Coded photography uses a single snapshot or a few snapshots but introduces a reversible encoding of light through techniques such as coded exposure and coded apertures.
3. Examples of coded photography techniques presented include flutter shutter motion deblurring, coded aperture defocus, optical heterodyning for light field or wavefront sensing, and a coded glare mask. The goal is to create imaging capabilities beyond what is possible with traditional cameras.
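
To make the idea of a reversible encoding concrete, here is a minimal 1D sketch of coded exposure in the spirit of flutter shutter motion deblurring. It is an illustration under stated assumptions, not the method from the slides: the 8-chip binary code `CODED`, the 16-sample signal, and the helper names are all hypothetical, and the blur is modeled as a noise-free circular convolution. A conventional shutter (a box of ones) has exact zeros in its frequency response, so those frequencies are lost. A well-chosen binary code keeps every frequency nonzero, so a plain inverse filter can undo the blur.

```python
import numpy as np


def kernel_spectrum(code, n):
    """DFT of the exposure code, normalized to unit sum and zero-padded to n."""
    kernel = np.asarray(code, dtype=float)
    return np.fft.fft(kernel / kernel.sum(), n)


def min_frequency_gain(code, n):
    """Smallest magnitude in the blur kernel's frequency response."""
    return np.abs(kernel_spectrum(code, n)).min()


def blur(signal, code):
    """Circular motion blur: convolve the signal with the exposure code."""
    spectrum = kernel_spectrum(code, len(signal))
    return np.real(np.fft.ifft(np.fft.fft(signal) * spectrum))


def deblur(blurred, code):
    """Direct inverse filter; only meaningful if the spectrum has no zeros."""
    spectrum = kernel_spectrum(code, len(blurred))
    return np.real(np.fft.ifft(np.fft.fft(blurred) / spectrum))


N = 16                               # signal length (assumption)
BOX_CODE = [1] * 8                   # conventional shutter: open the whole time
CODED = [1, 1, 0, 1, 0, 0, 1, 1]     # hypothetical open/closed flutter pattern

box_gain = min_frequency_gain(BOX_CODE, N)
coded_gain = min_frequency_gain(CODED, N)

# A toy scene with two frequency components.
t = np.arange(N)
signal = np.sin(2 * np.pi * t / N) + 0.5 * np.cos(6 * np.pi * t / N)

# Blur with the coded shutter, then invert the known code.
recovered = deblur(blur(signal, CODED), CODED)

print("min gain, box shutter:  ", box_gain)
print("min gain, coded shutter:", coded_gain)
print("max reconstruction error:", np.max(np.abs(recovered - signal)))
```

In this toy setup the box shutter's minimum gain is numerically zero, while the coded shutter's stays well away from zero, which is what makes the encoding invertible. Real images add noise and non-circular boundaries, so a practical pipeline would likely use a regularized inverse and a much longer code.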