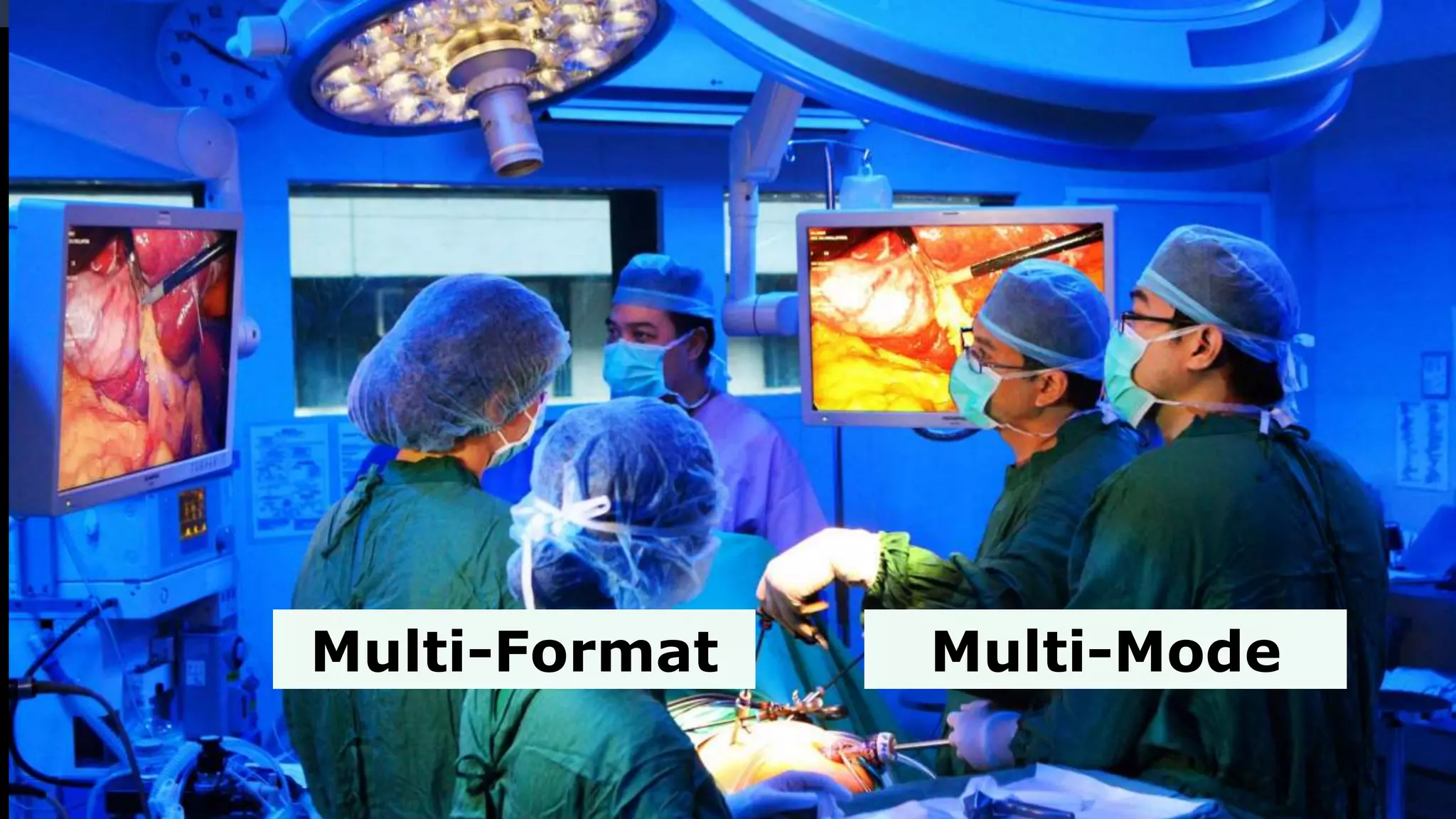

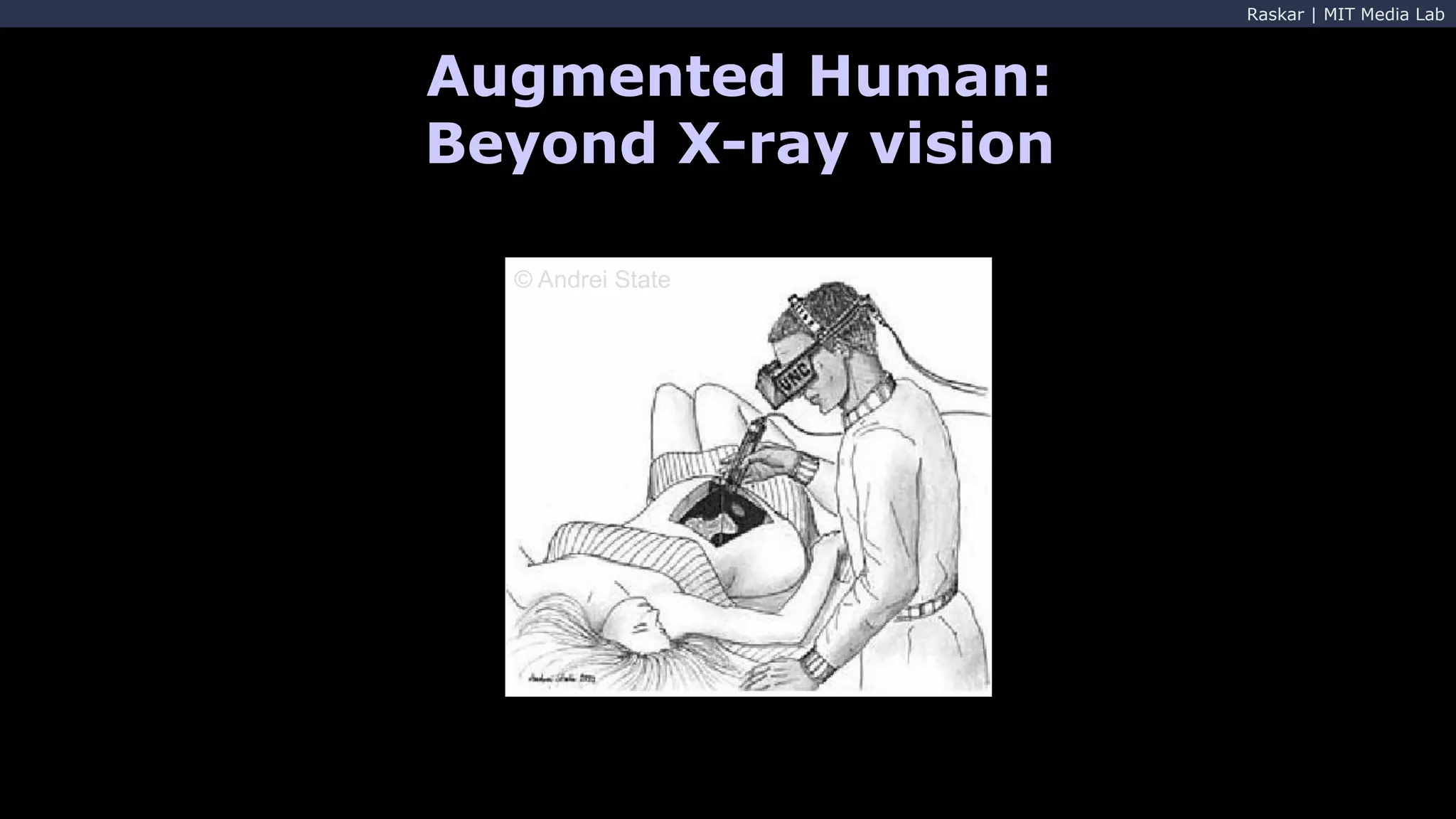

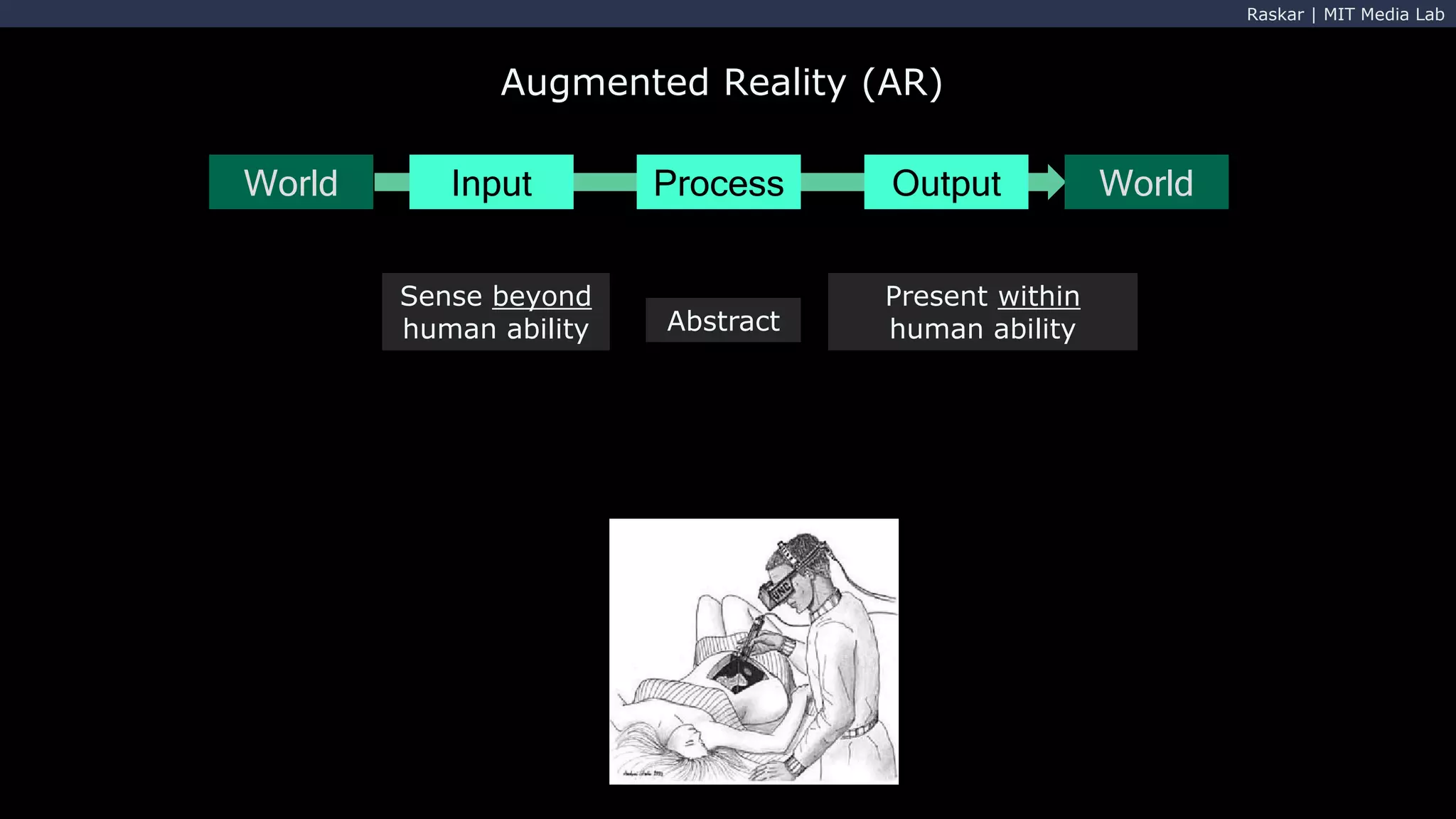

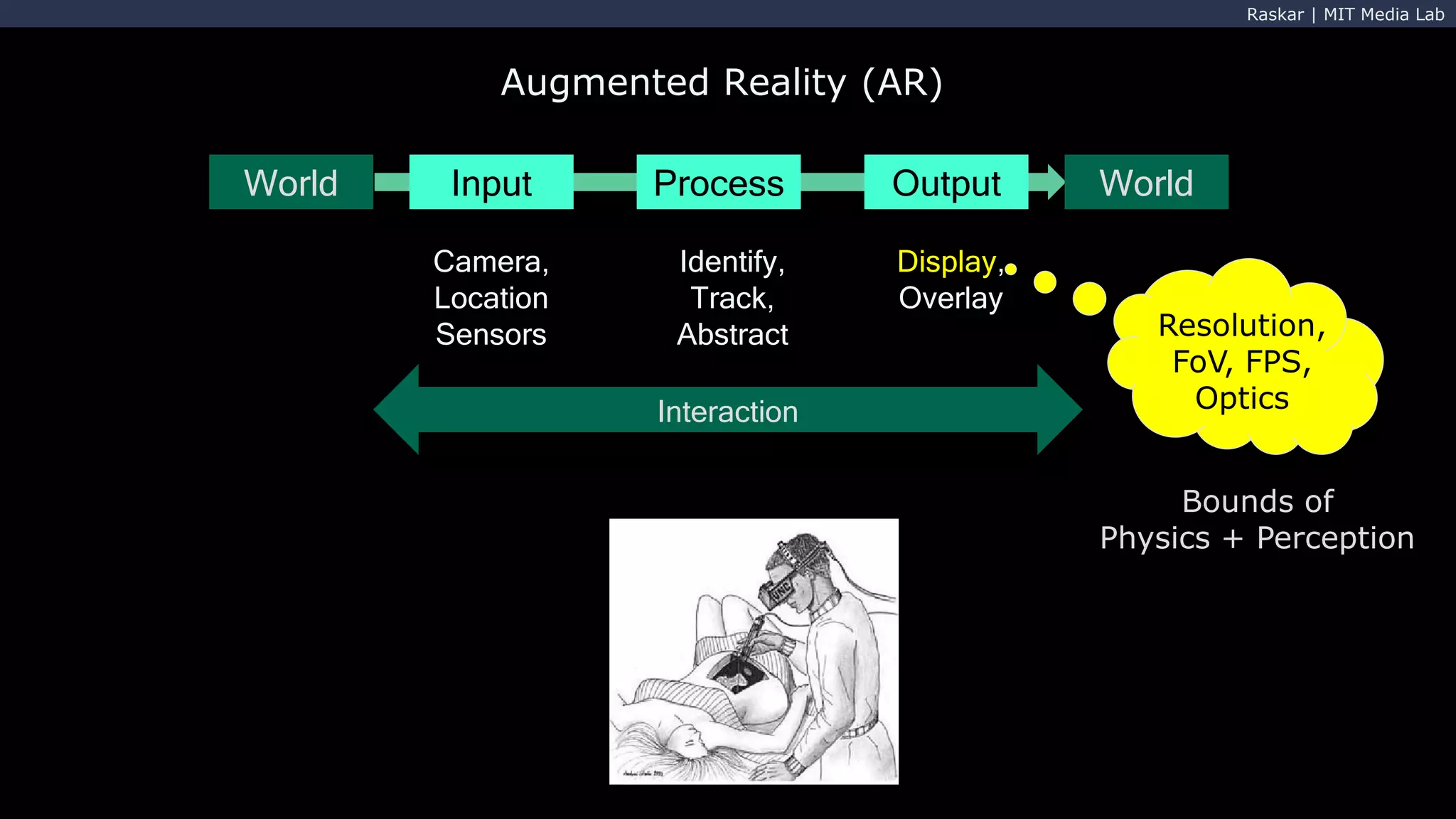

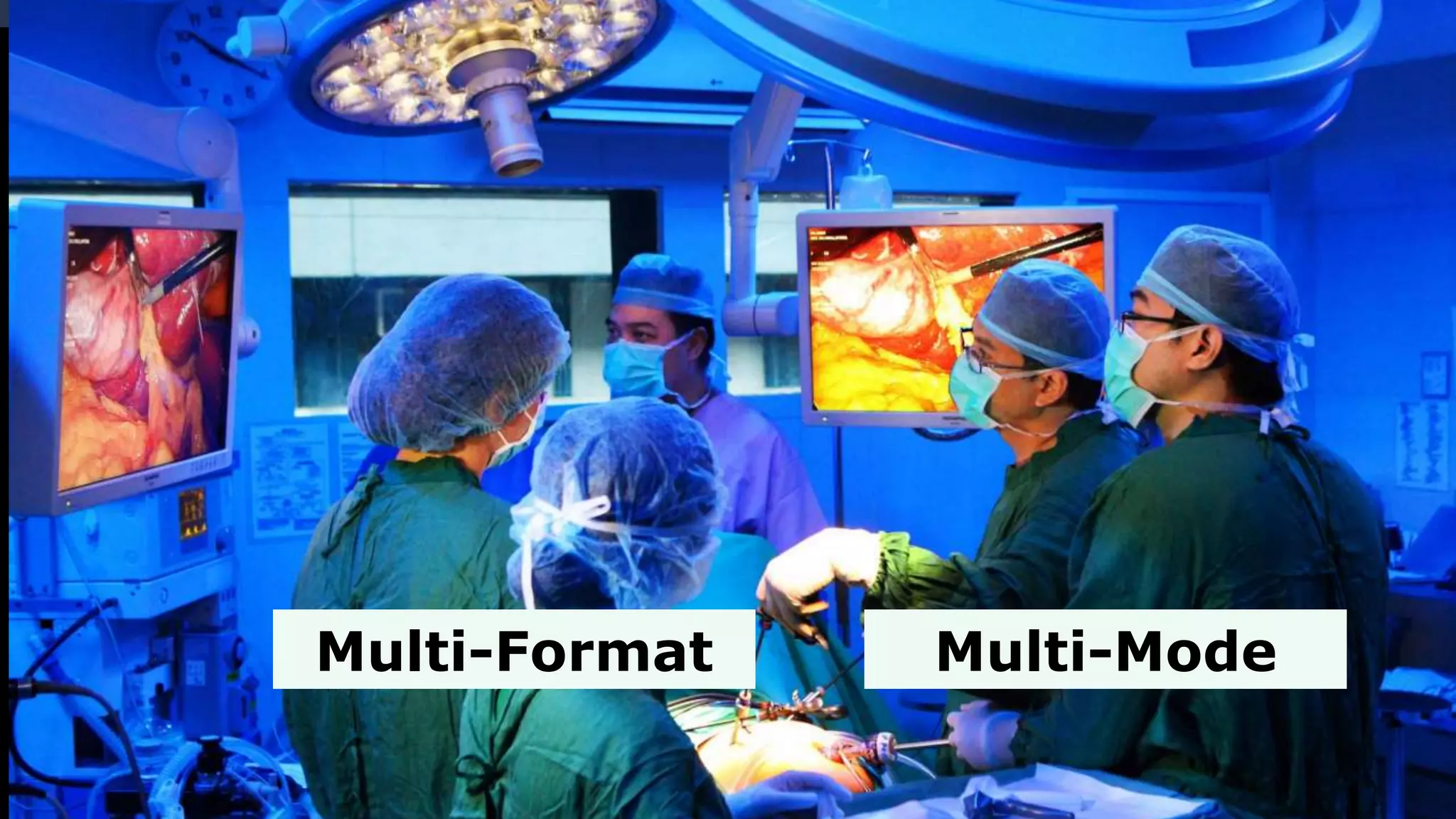

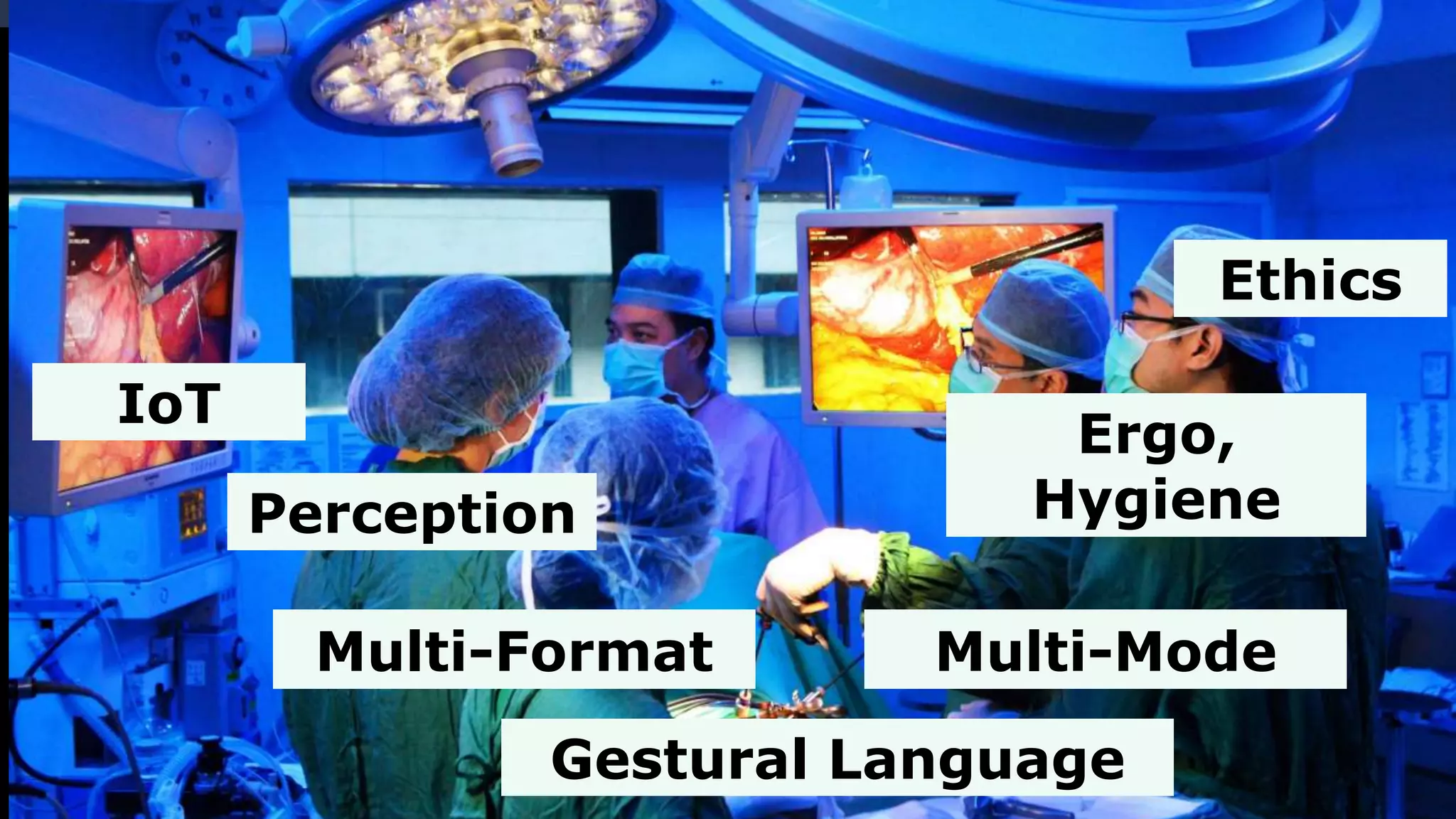

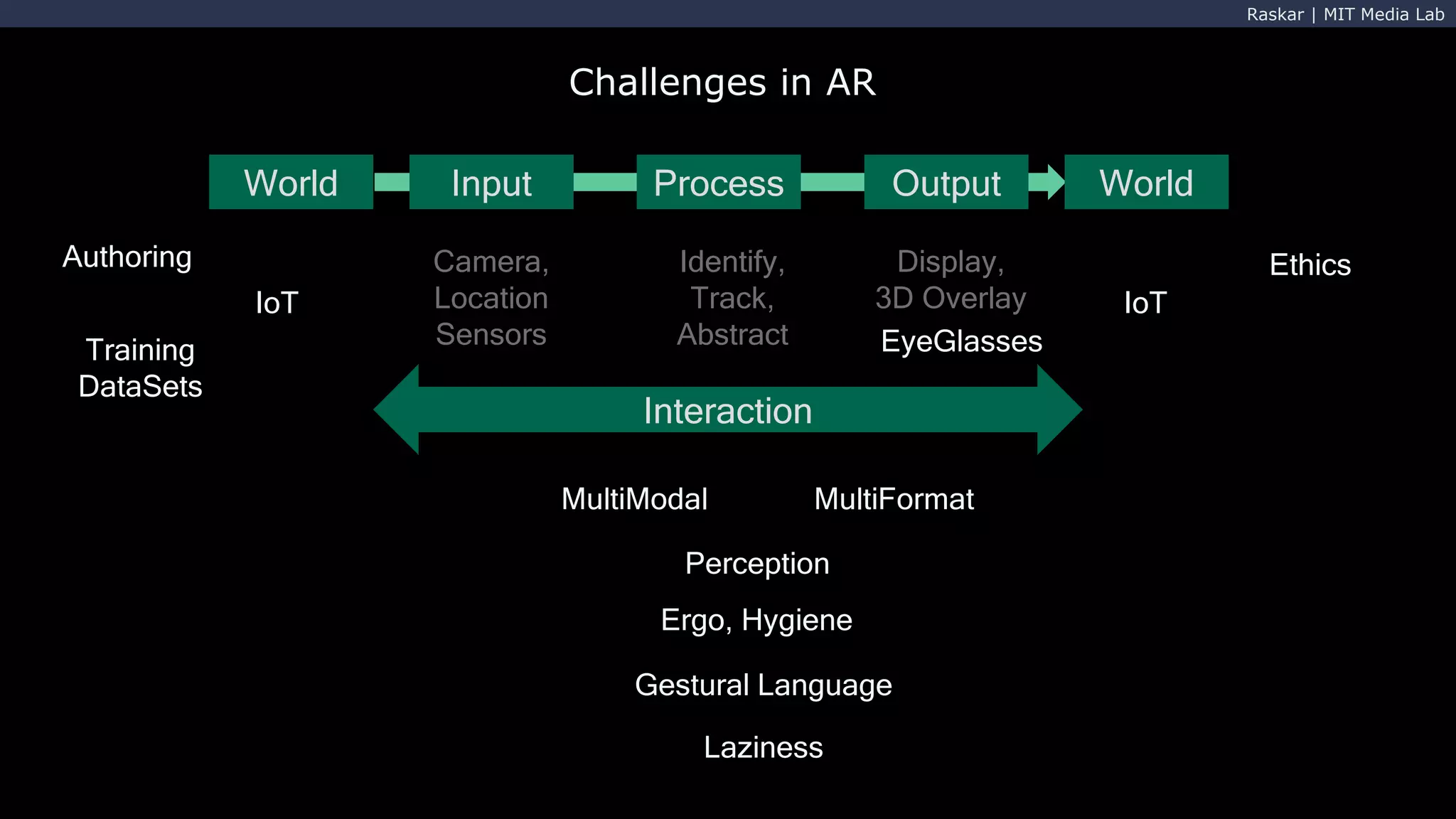

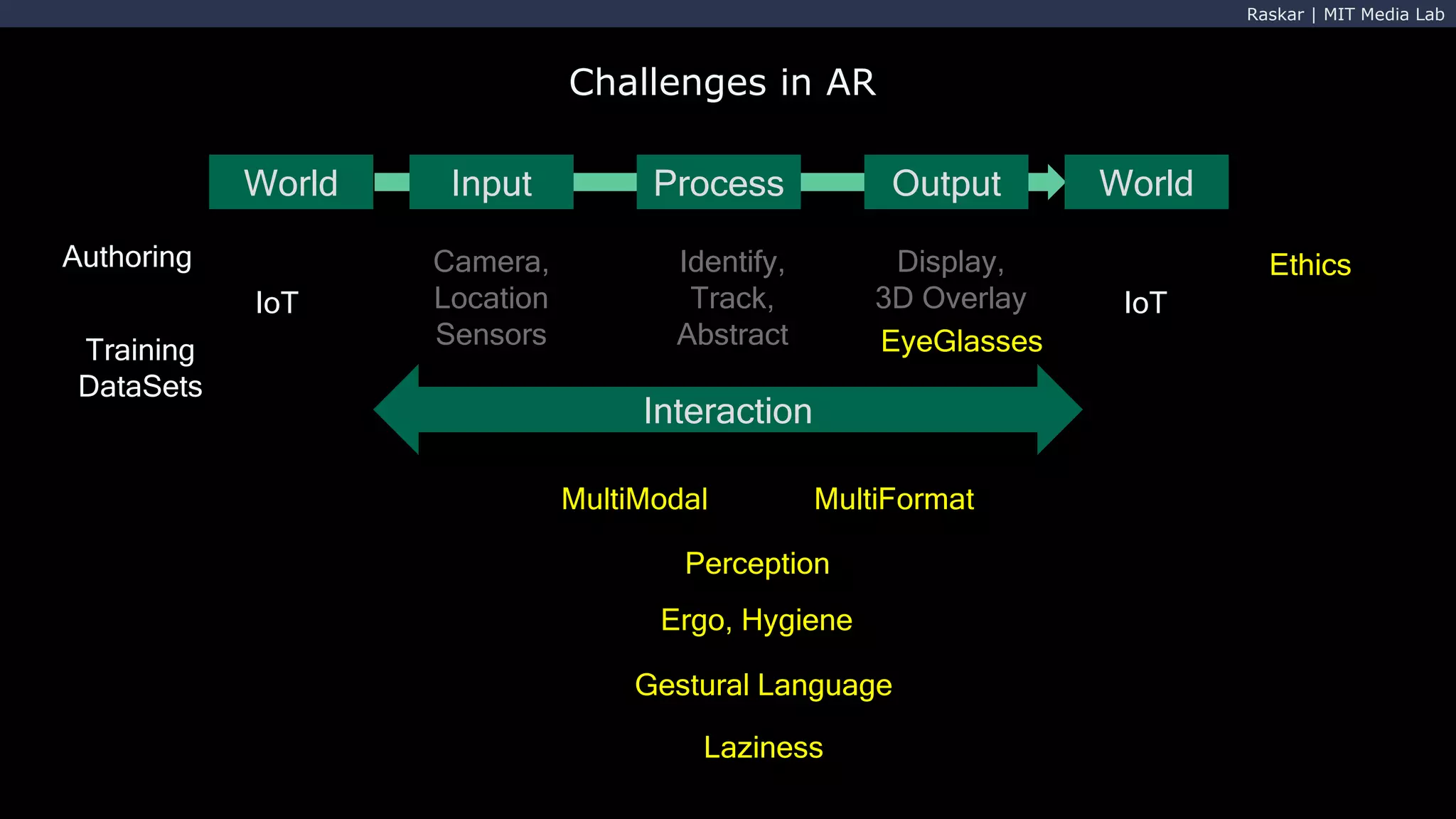

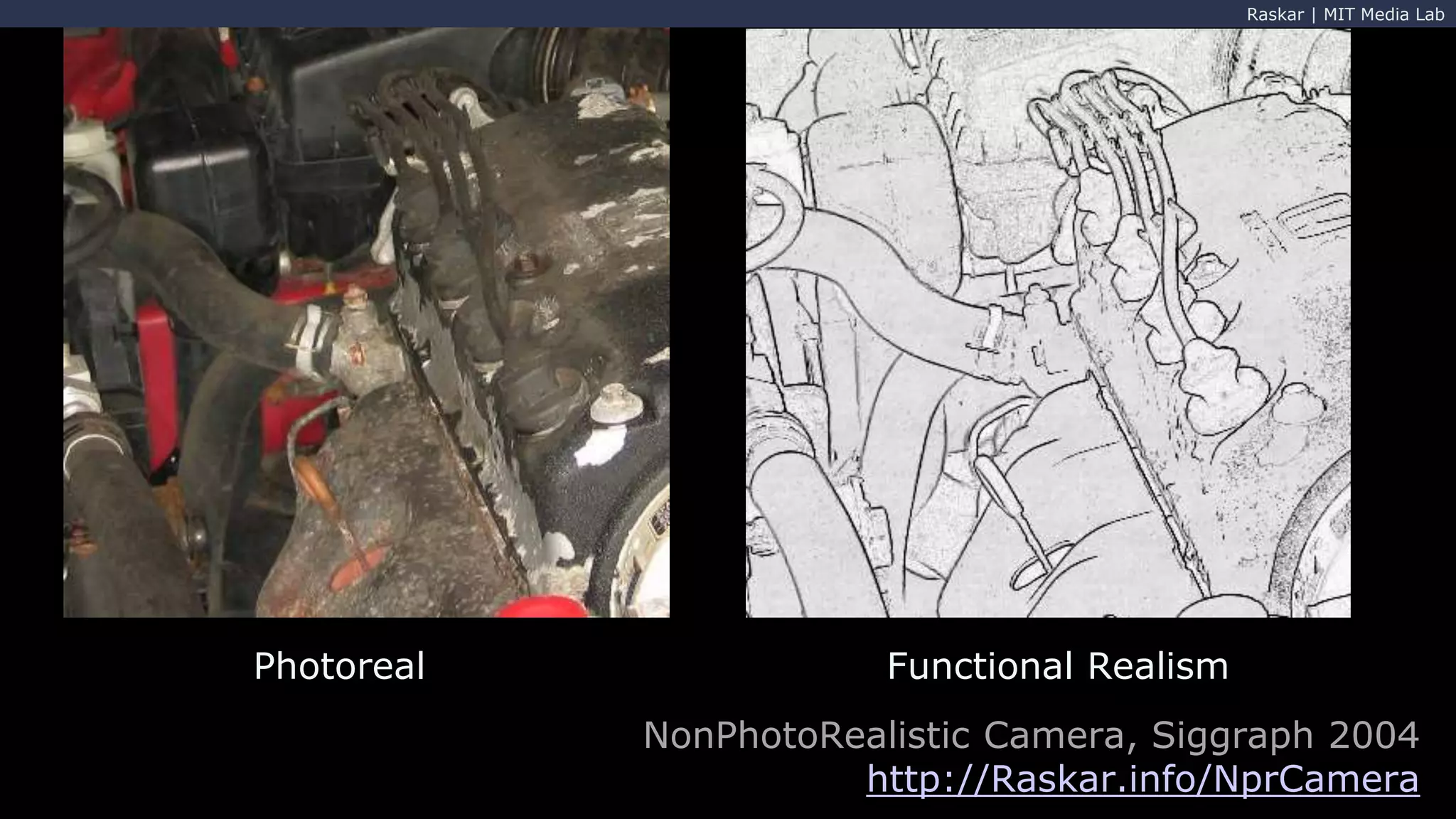

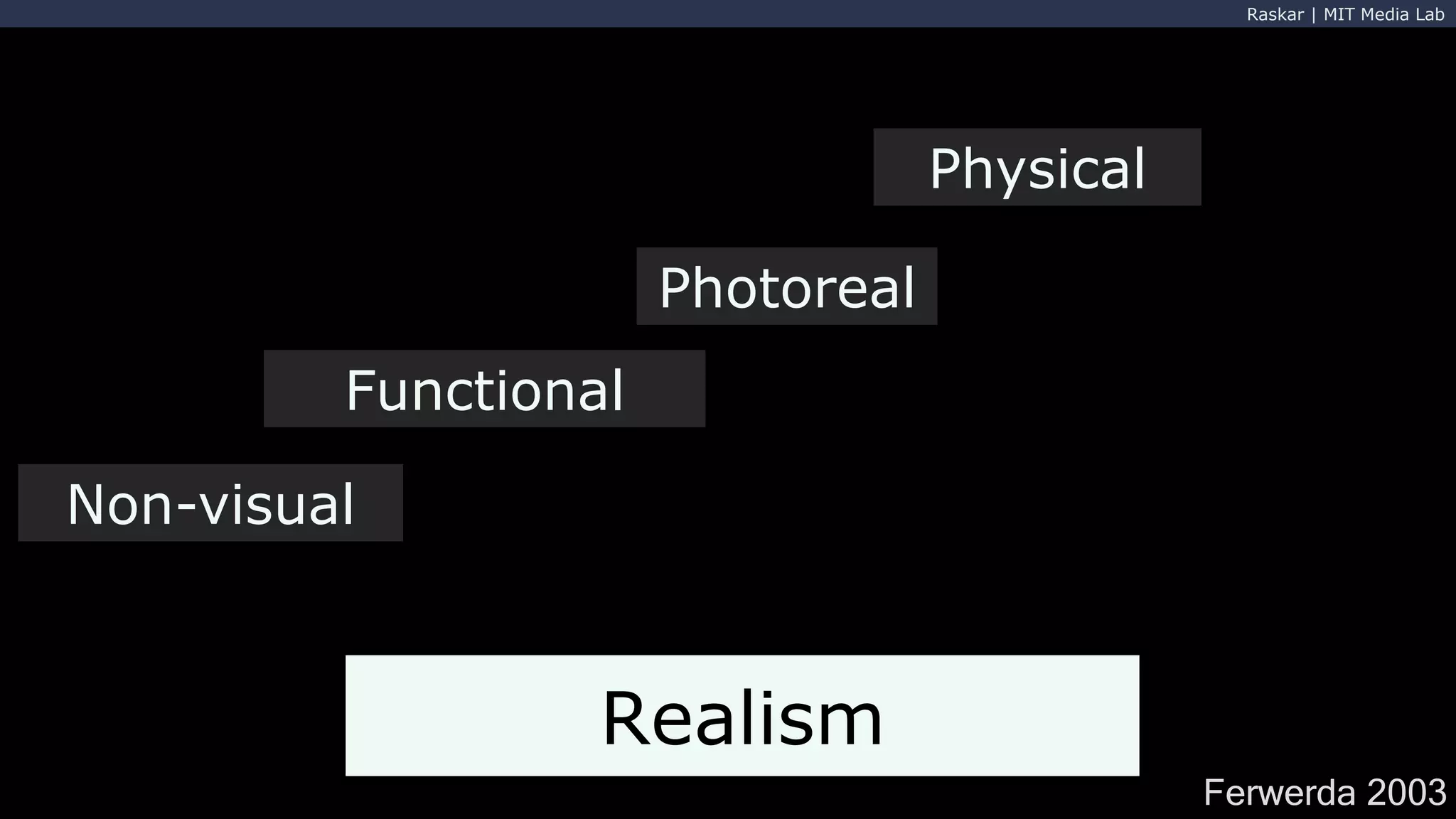

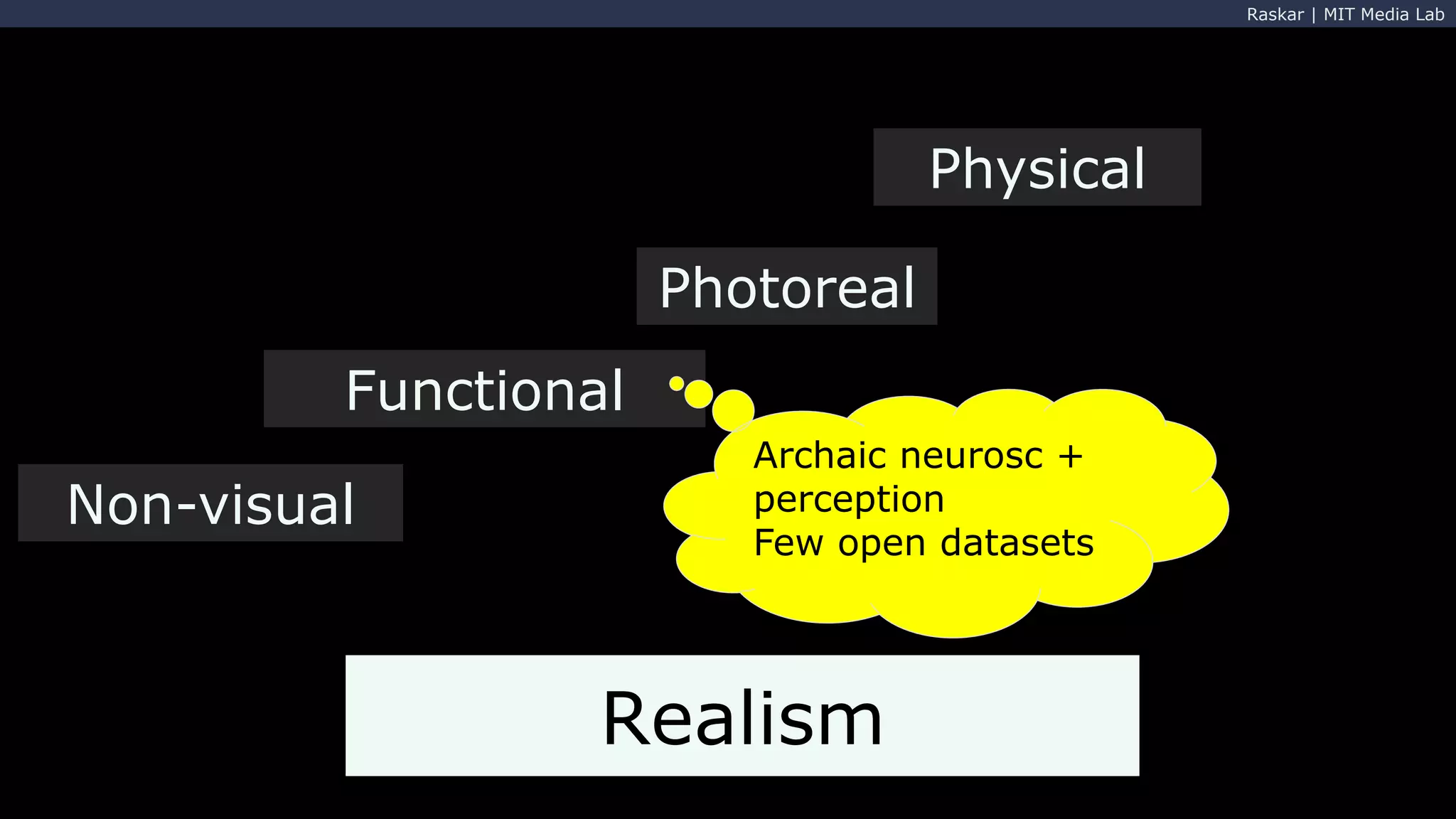

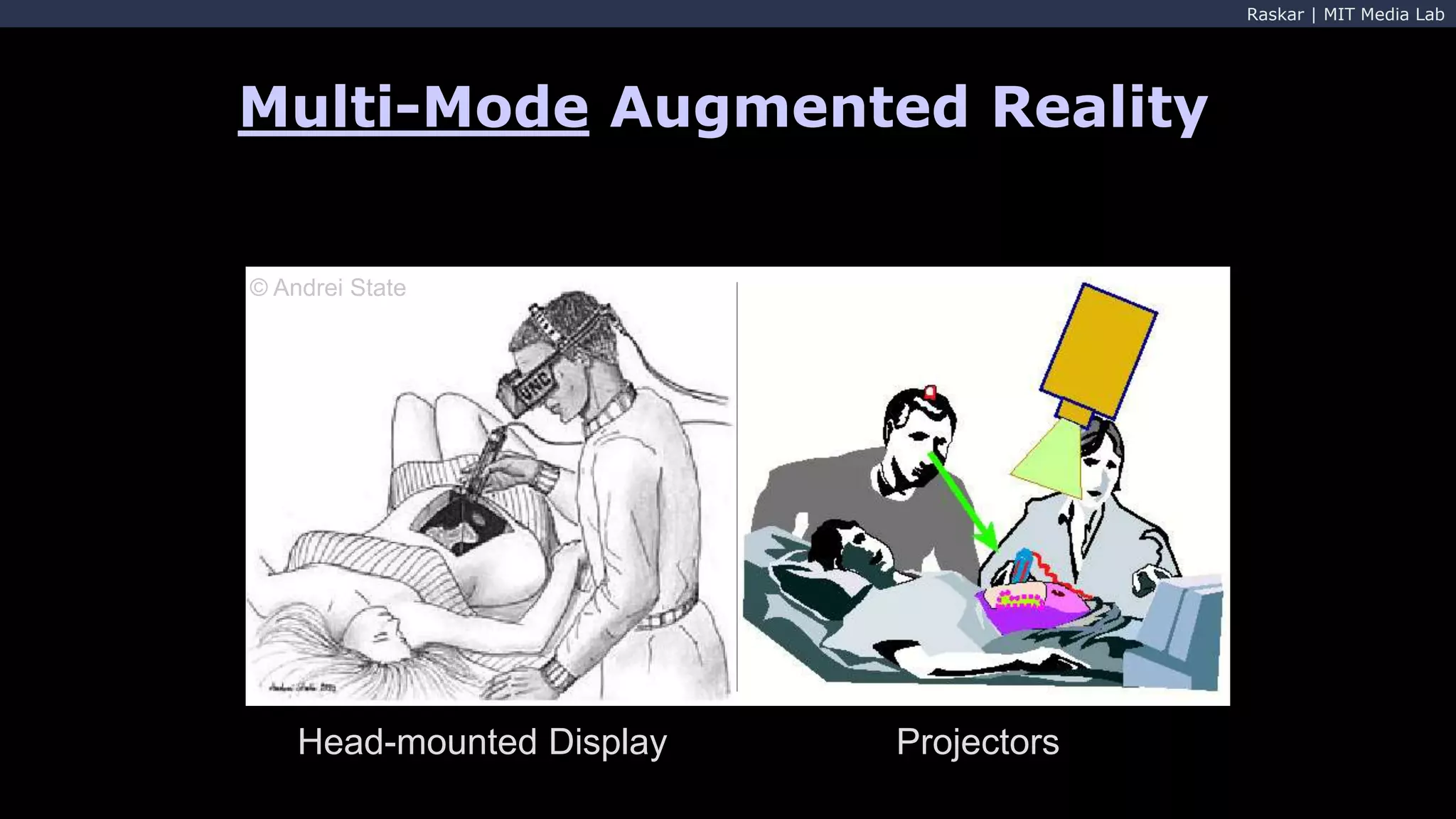

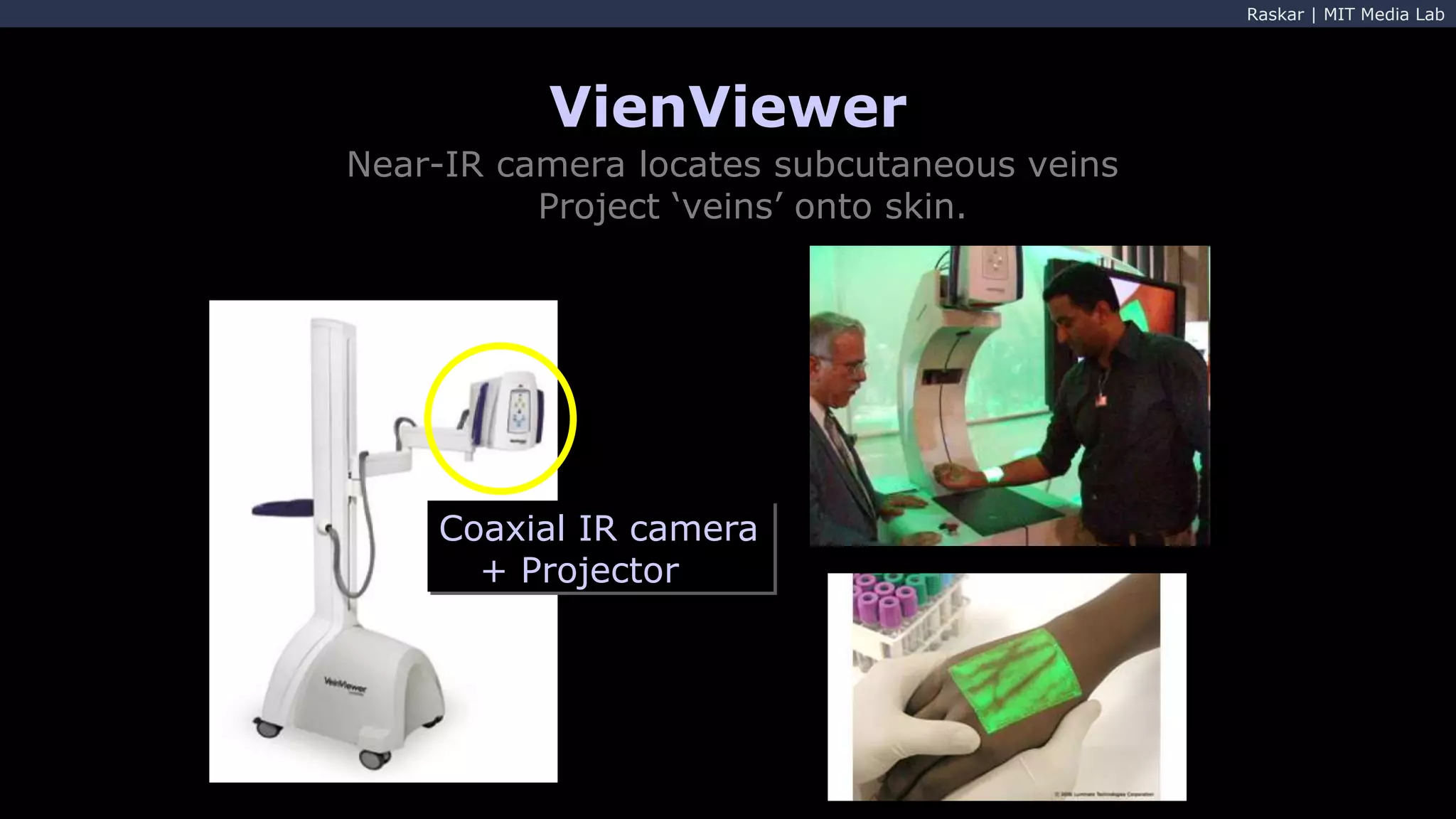

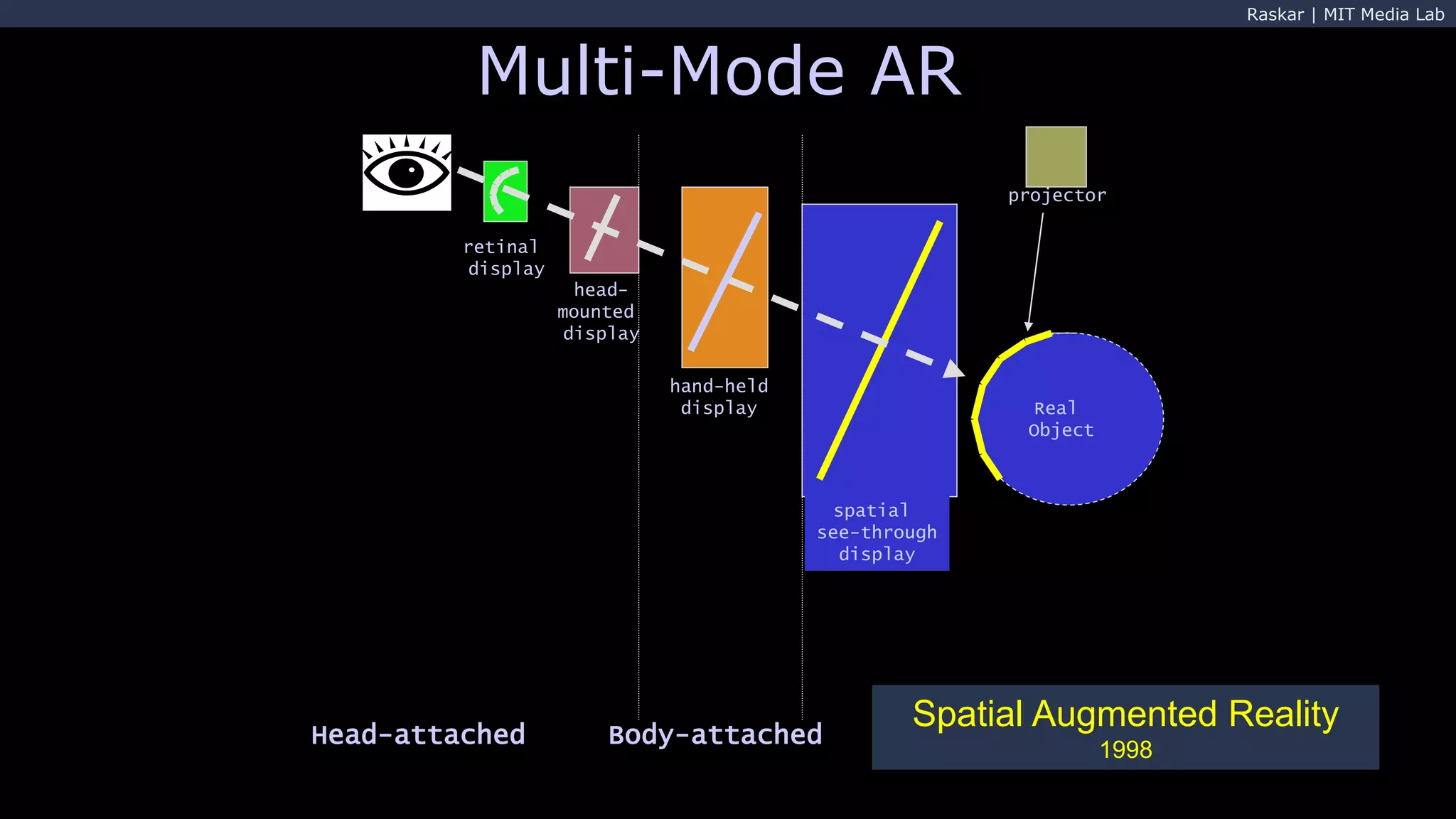

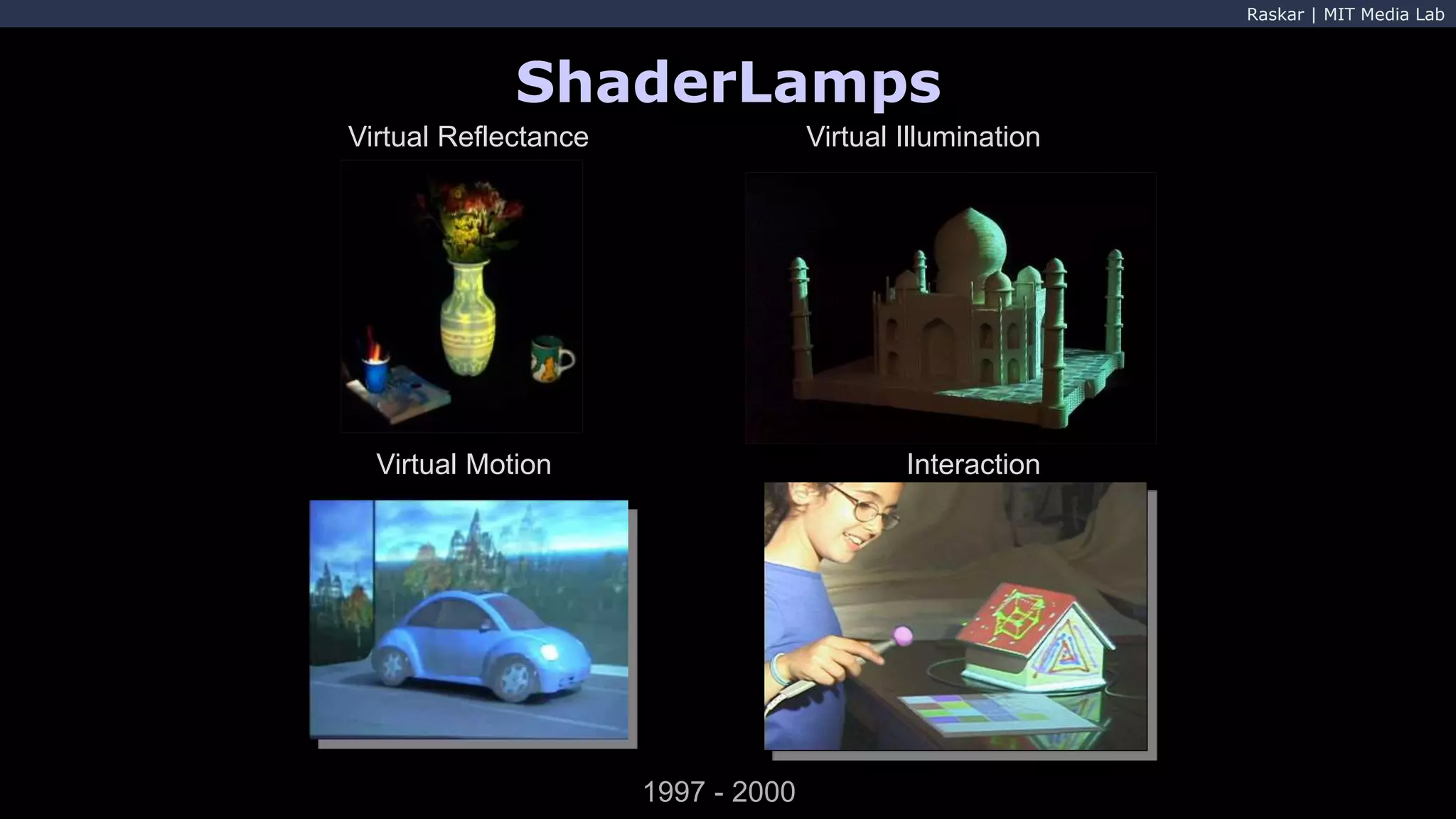

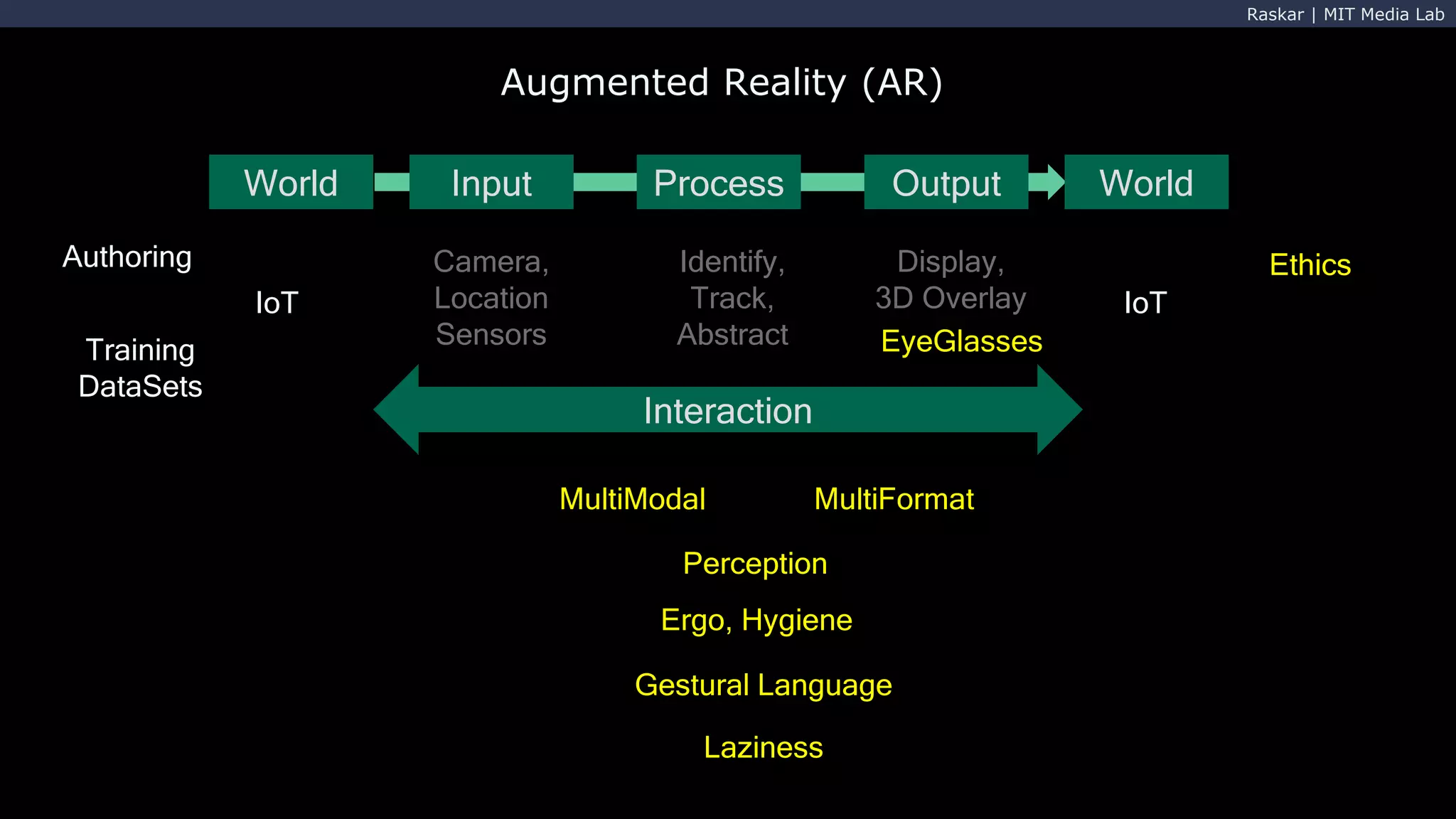

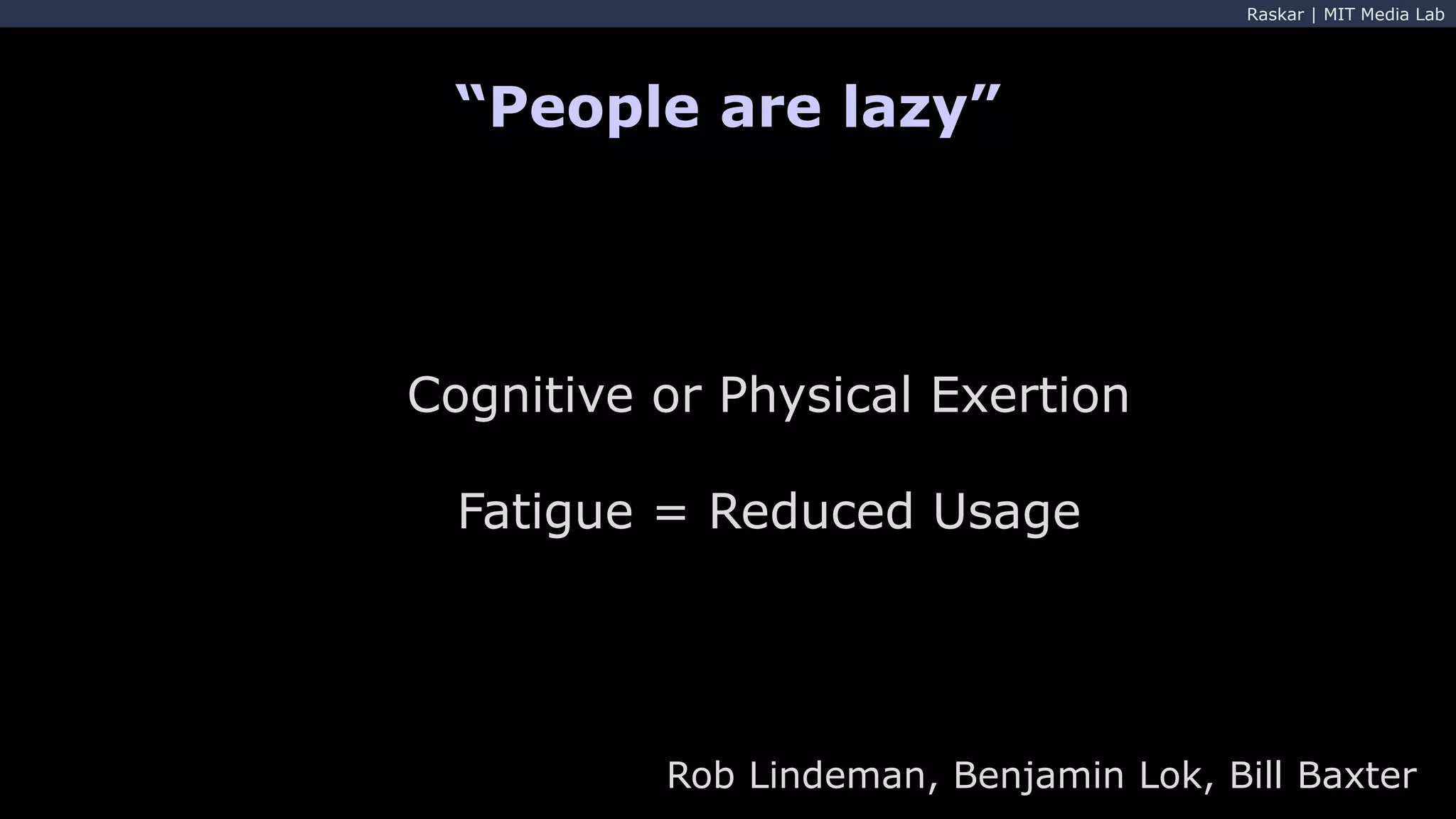

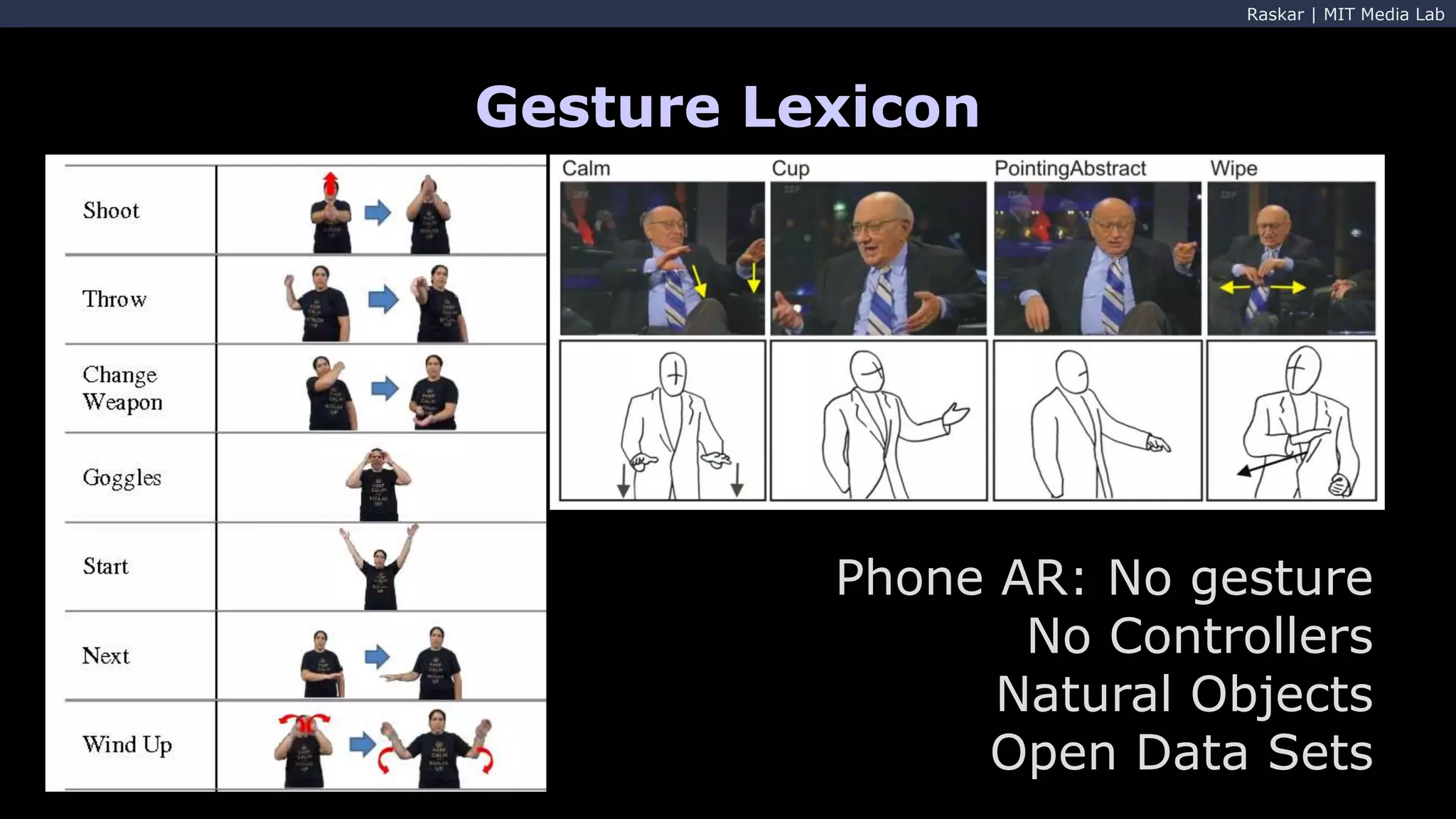

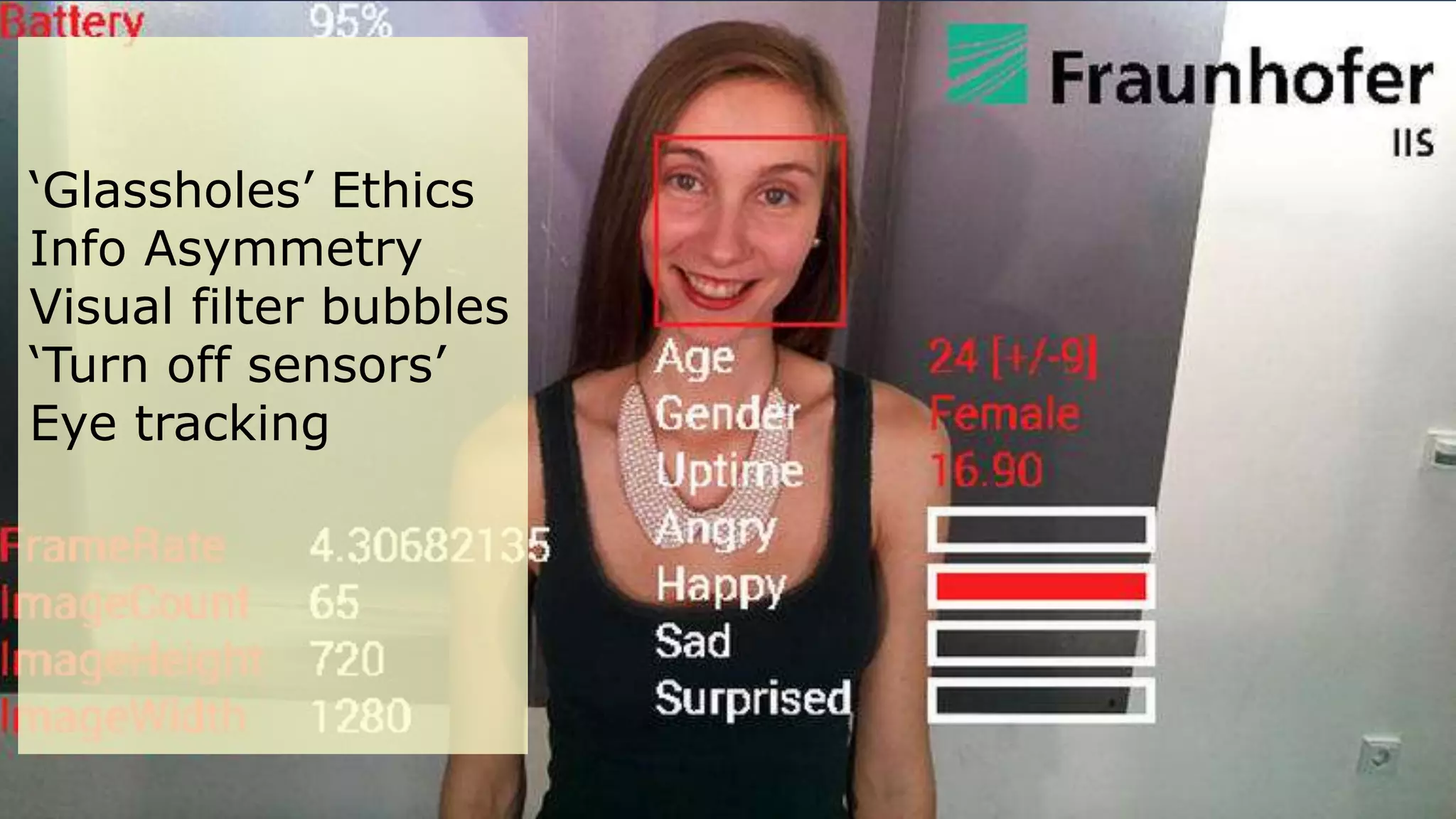

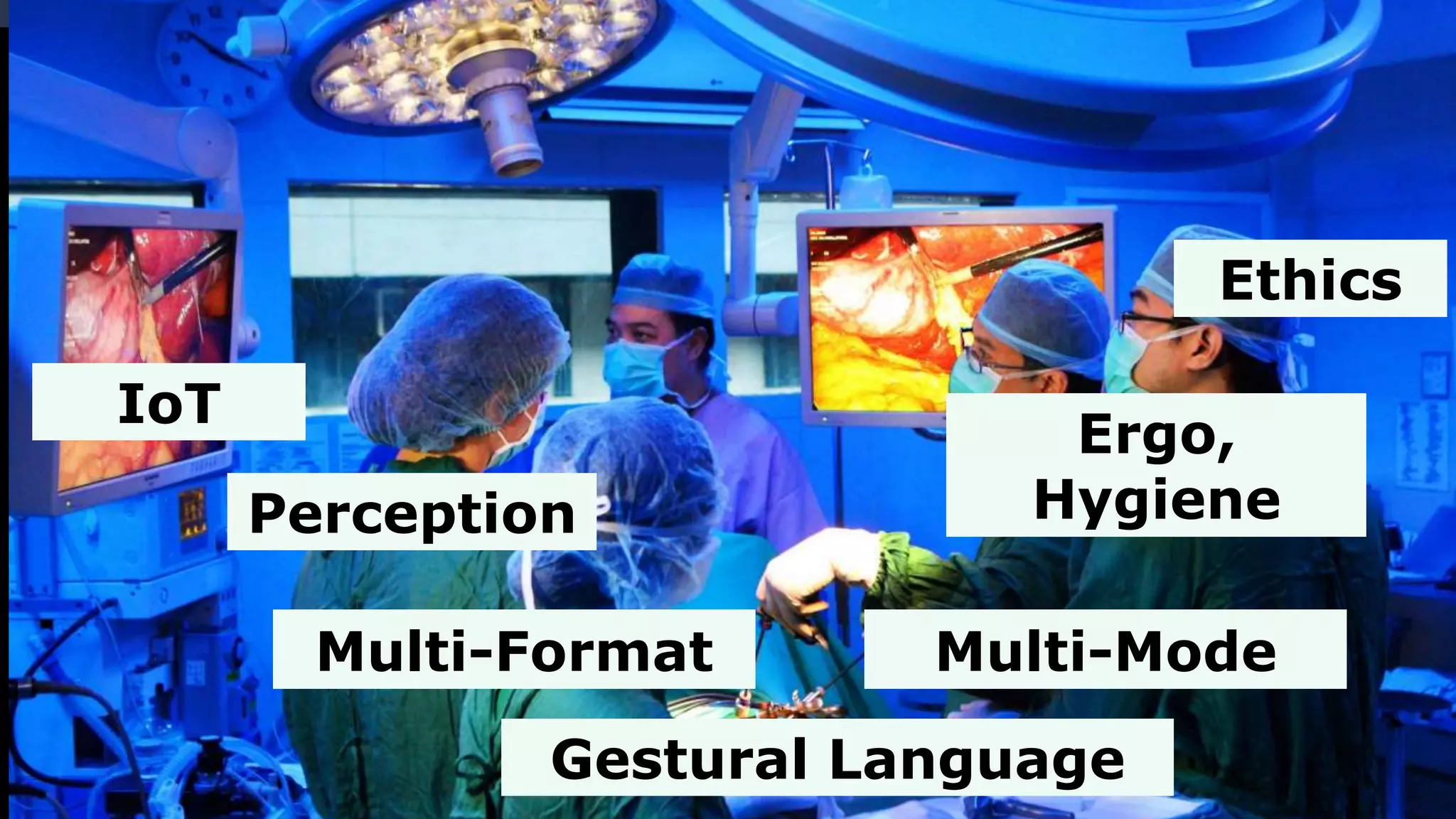

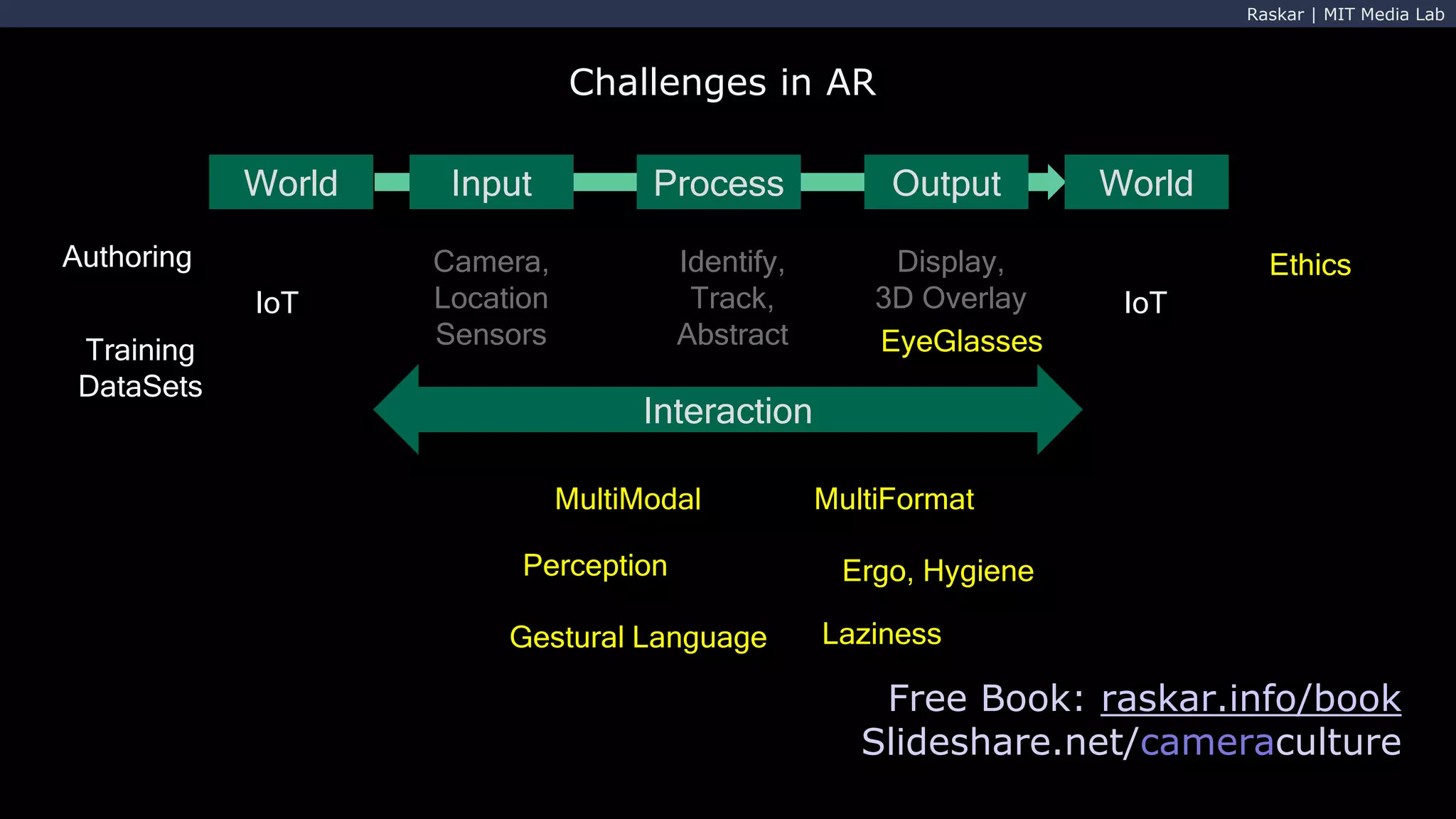

The document discusses challenges and advances in augmented reality (AR) and virtual reality (VR), focusing on input processes, perception, and interaction in AR environments. It addresses issues such as human laziness, ethical concerns, and technological limitations, and it explores various modalities and formats for AR applications. It also acknowledges contributions from individuals and groups in AR research and development.