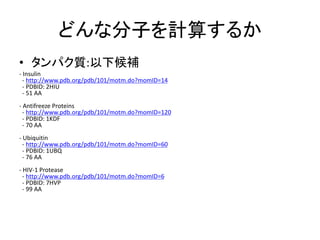

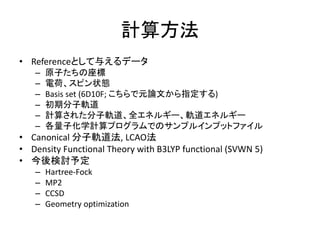

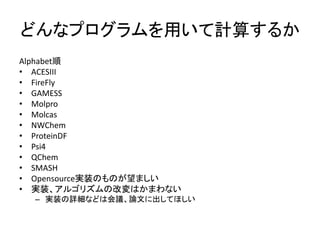

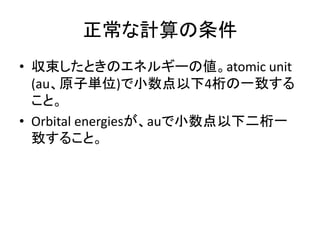

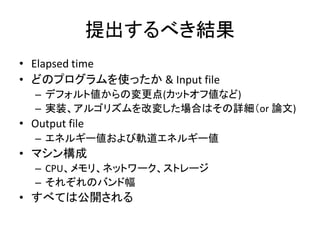

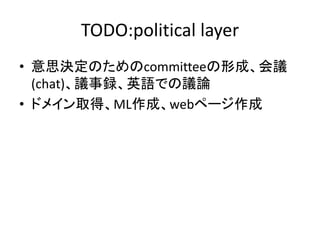

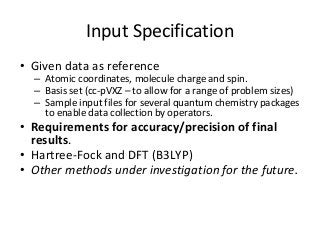

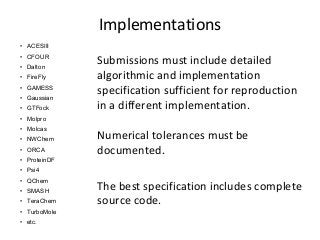

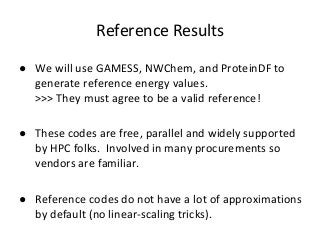

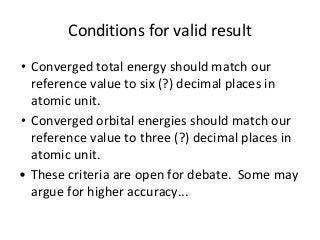

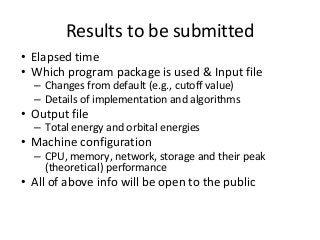

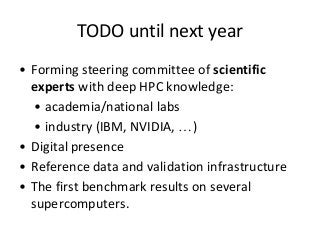

The document outlines the establishment and purpose of the QuantumChemistry500 benchmark. The benchmark targets quantum chemistry applications, with a specific focus on the Hartree-Fock method and its variants. The document reviews existing high-performance computing (HPC) benchmarks and describes what distinguishes QuantumChemistry500 from them. It also explains why submitted results must converge to the same answers as established reference codes: this requirement is what makes the performance comparisons meaningful. The initiative aims to encourage hardware optimization and the development of new software, while remaining science-driven and scale-invariant.
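The reference-code requirement can be pictured as a validation gate: a run's timing counts only if its converged Hartree-Fock energy agrees with the reference value. The Python sketch below illustrates this idea under stated assumptions. The `Submission` and `accepted_time` names, the molecule label `mol_A`, the reference energy, and the 1e-6 hartree tolerance are all hypothetical illustrations, not part of the QuantumChemistry500 rules.

```python
# Hypothetical sketch of a reference-agreement gate; names and tolerance are assumptions.
from dataclasses import dataclass
from typing import Dict, Optional


@dataclass
class Submission:
    molecule: str           # label of the benchmark system (hypothetical)
    energy_hartree: float   # converged total HF energy reported by the run
    wall_time_s: float      # measured time to solution, in seconds


def accepted_time(sub: Submission, reference: Dict[str, float],
                  tol: float = 1e-6) -> Optional[float]:
    """Return the run's wall time if its energy matches the reference within
    `tol` hartree; return None if it cannot be validated or disagrees."""
    ref = reference.get(sub.molecule)
    if ref is None:
        return None  # no reference value available, so the run cannot be validated
    if abs(sub.energy_hartree - ref) > tol:
        return None  # result did not reproduce the reference answer; timing is discarded
    return sub.wall_time_s


if __name__ == "__main__":
    refs = {"mol_A": -1.0}  # placeholder reference energy, not a real result
    print(accepted_time(Submission("mol_A", -1.0000004, 12.5), refs))  # within tolerance
    print(accepted_time(Submission("mol_A", -1.001, 9.0), refs))       # outside tolerance
```

The gate is placed before any ranking, so a fast run that converges to the wrong answer is rejected rather than penalized. This matches the stated goal of keeping the benchmark science-driven.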