These slides present an extension of graph neural networks (GNNs) to large and sparse graphs, developed during a summer internship project. Two techniques are highlighted for their memory and compute efficiency: scatter operations and sparse matrix multiplication. Experiments on chemical datasets (QM9, ZINC) and network datasets (Cora, Citeseer, Reddit) show that the sparse pattern is faster than the padding pattern in every case where both ran. The COO matrix pattern is not always the fastest on small graphs, but it is the only one of the three that trains on Reddit without running out of memory.

![Introduction to Graph Neural Network (GNN)](https://image.slidesharecdn.com/20190930pfninternship2019extensionofchainer-chemistryforlargesparsegraphkenshinabe-191001022344/75/PFN-Summer-Internship-2019-Kenshin-Abe-Extension-of-Chainer-Chemistry-for-Large-and-Sparse-Graph-2-2048.jpg)

Panels: 2D Convolution, Graph Convolution. Source: [2019 Zonghan+] https://arxiv.org/pdf/1901.00596.pdf

![Scatter Operation](https://image.slidesharecdn.com/20190930pfninternship2019extensionofchainer-chemistryforlargesparsegraphkenshinabe-191001022344/75/PFN-Summer-Internship-2019-Kenshin-Abe-Extension-of-Chainer-Chemistry-for-Large-and-Sparse-Graph-8-2048.jpg)

[PyTorch Scatter] https://pytorch-scatter.readthedocs.io/en/latest/functions/add.html

• Add each value of input to an element of output specified by index
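The scatter-add rule on this slide can be illustrated with a minimal pure-Python sketch. The function name `scatter_add`, its `dim_size` parameter, and the toy graph are assumptions made for illustration; this is not the PyTorch Scatter or Chainer Chemistry implementation, which run vectorised on arrays.

```python
def scatter_add(src, index, dim_size):
    """Add src[i] into out[index[i]] for every i; untouched slots stay 0."""
    if len(src) != len(index):
        raise ValueError("src and index must have the same length")
    out = [0.0] * dim_size
    for value, target in zip(src, index):
        out[target] += value
    return out


# Message passing on a tiny graph: each edge (u -> v) carries the feature
# of u, and scatter_add sums the incoming messages per destination node v.
node_feature = [1.0, 2.0, 3.0]
edges = [(0, 1), (2, 1), (1, 0)]  # (source, destination), hypothetical data
messages = [node_feature[u] for u, _ in edges]
destinations = [v for _, v in edges]
aggregated = scatter_add(messages, destinations, dim_size=len(node_feature))
print(aggregated)  # [2.0, 4.0, 0.0]
```

Node 1 receives messages from nodes 0 and 2, so its slot accumulates 1.0 + 3.0; node 2 has no incoming edge and keeps the initial 0. Because only existing edges are visited, the cost grows with the number of edges rather than with the square of the number of nodes.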

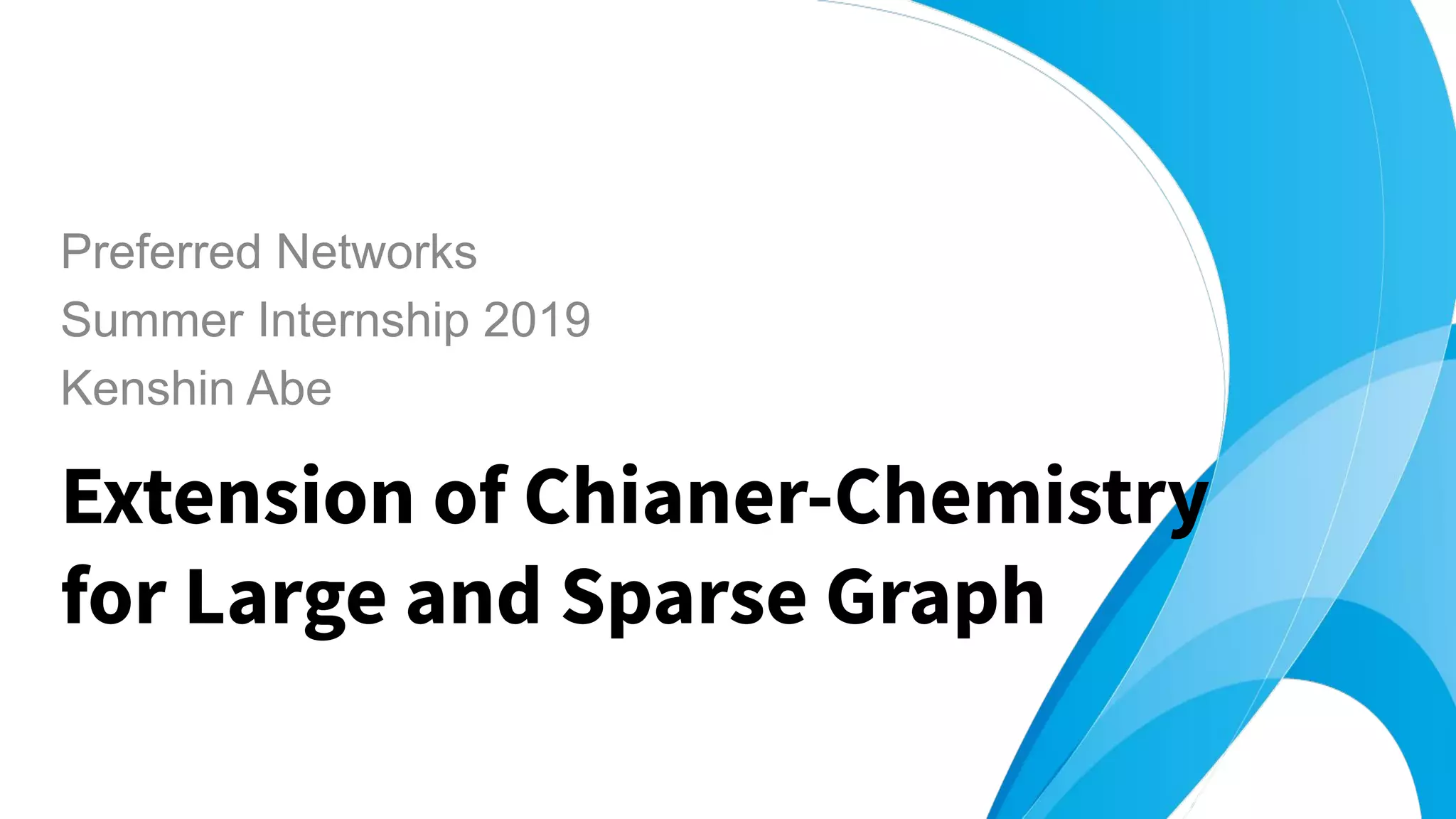

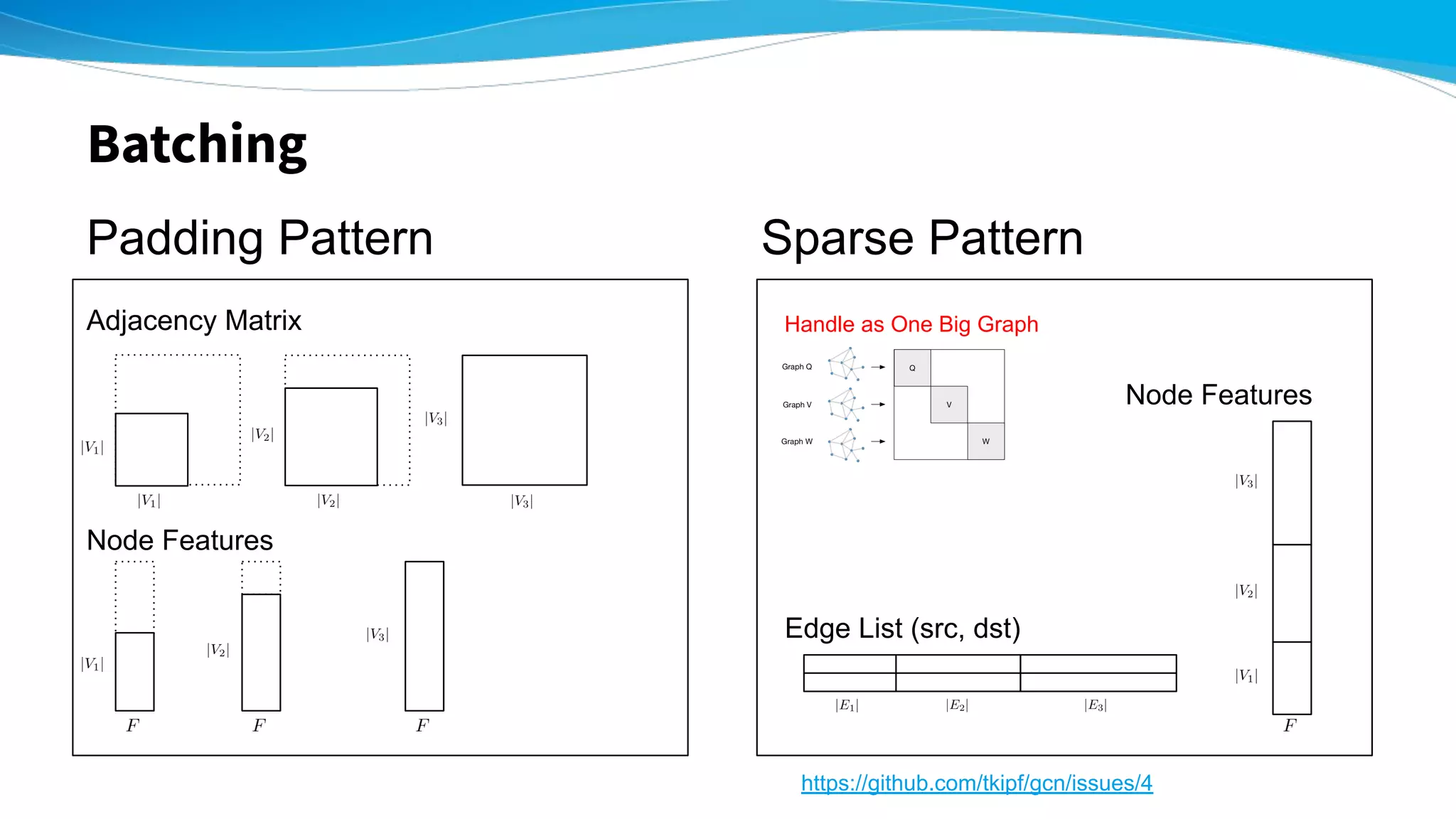

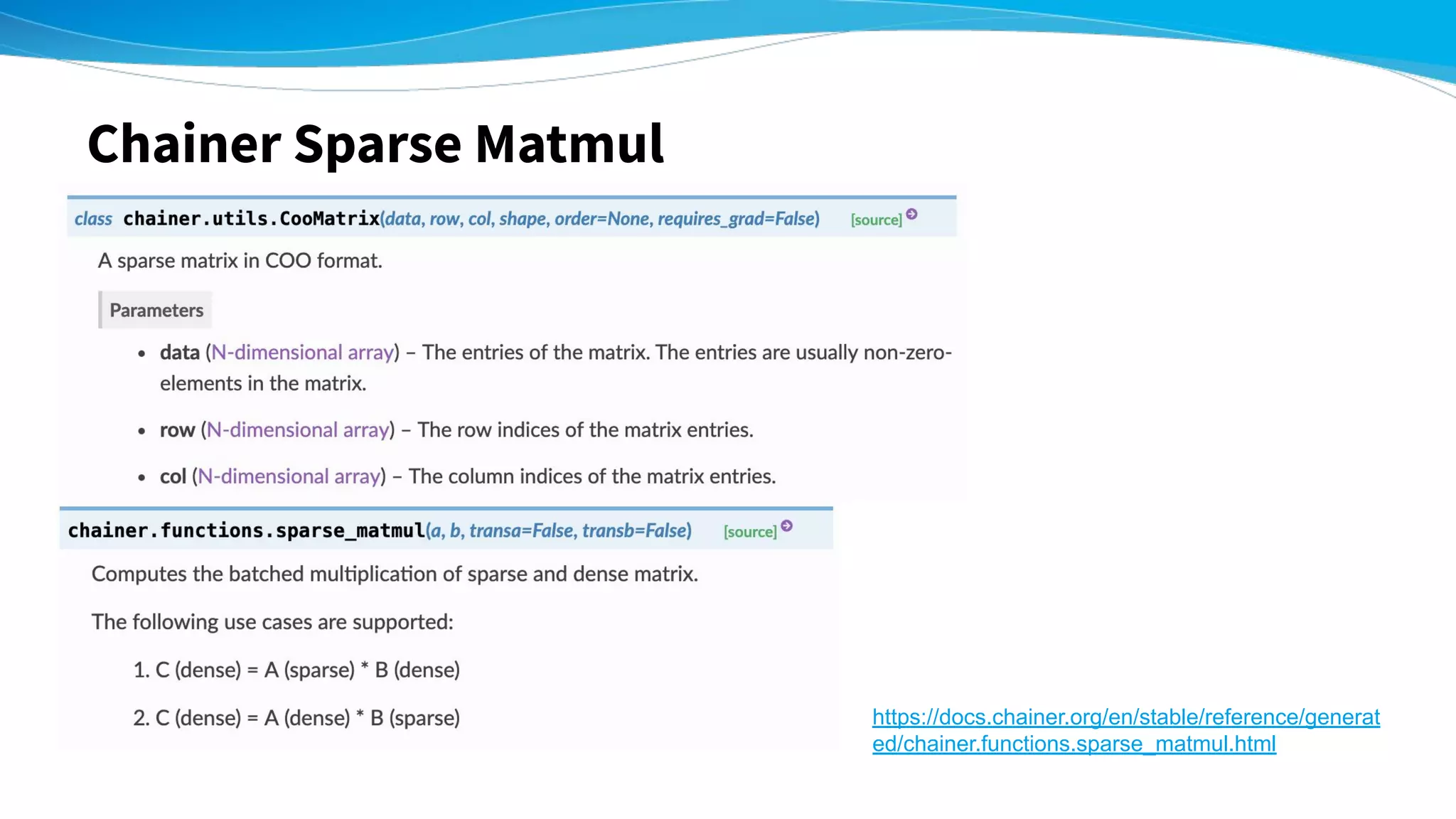

![Experiment - Chemical Dataset](https://image.slidesharecdn.com/20190930pfninternship2019extensionofchainer-chemistryforlargesparsegraphkenshinabe-191001022344/75/PFN-Summer-Internship-2019-Kenshin-Abe-Extension-of-Chainer-Chemistry-for-Large-and-Sparse-Graph-11-2048.jpg)

| Dataset | Graph size | Graphs | Padding Pattern (s / epoch) | Sparse Pattern (s / epoch) | Speedup |
| --- | --- | --- | --- | --- | --- |
| QM9 | V=1~9 | 133,885 | 6.92 | 5.56 | 1.24 times faster! |
| ZINC | V=~38 nodes | 249,455 | 16.67 | 11.47 | 1.45 times faster!! |

Training of RelGCN [2019 Schlichtkrull+], layer_num=2, feature_num=16, batchsize=256, on Intel(R) Xeon(R) Gold 6254 CPU @ 3.10GHz
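The difference between the two batching patterns compared here can be sketched as follows. This assumes that the padding pattern stores one dense adjacency matrix per graph, padded to the largest graph in the batch, and that the sparse pattern concatenates all graphs into one large disconnected graph with an edge list. The slides do not spell out the data layout, so both function names and the layouts are illustrative assumptions.

```python
def batch_sparse(graphs):
    """Concatenate graphs given as (num_nodes, edge_list) into one big graph.

    Returns (total_nodes, edges, graph_id), where graph_id[n] tells which
    input graph node n came from (needed later for per-graph readout).
    """
    edges, graph_id, offset = [], [], 0
    for gid, (num_nodes, edge_list) in enumerate(graphs):
        # Shift node ids so every graph occupies its own id range.
        edges.extend((u + offset, v + offset) for u, v in edge_list)
        graph_id.extend([gid] * num_nodes)
        offset += num_nodes
    return offset, edges, graph_id


def padded_adjacency_entries(graphs):
    """Number of adjacency entries stored when padding to the largest graph."""
    max_nodes = max(num_nodes for num_nodes, _ in graphs)
    return len(graphs) * max_nodes * max_nodes


# Two hypothetical molecules: one with 2 atoms, one with 4 atoms.
graphs = [
    (2, [(0, 1), (1, 0)]),
    (4, [(0, 1), (1, 2), (2, 3)]),
]
total_nodes, edges, graph_id = batch_sparse(graphs)
print(total_nodes, edges, graph_id)
print(padded_adjacency_entries(graphs), "padded entries vs",
      2 * len(edges), "edge-index entries")
```

In this toy batch the padded layout stores 32 adjacency entries, most of them zero, while the edge list stores 10 indices. The gap widens as graph sizes within a batch become more uneven.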

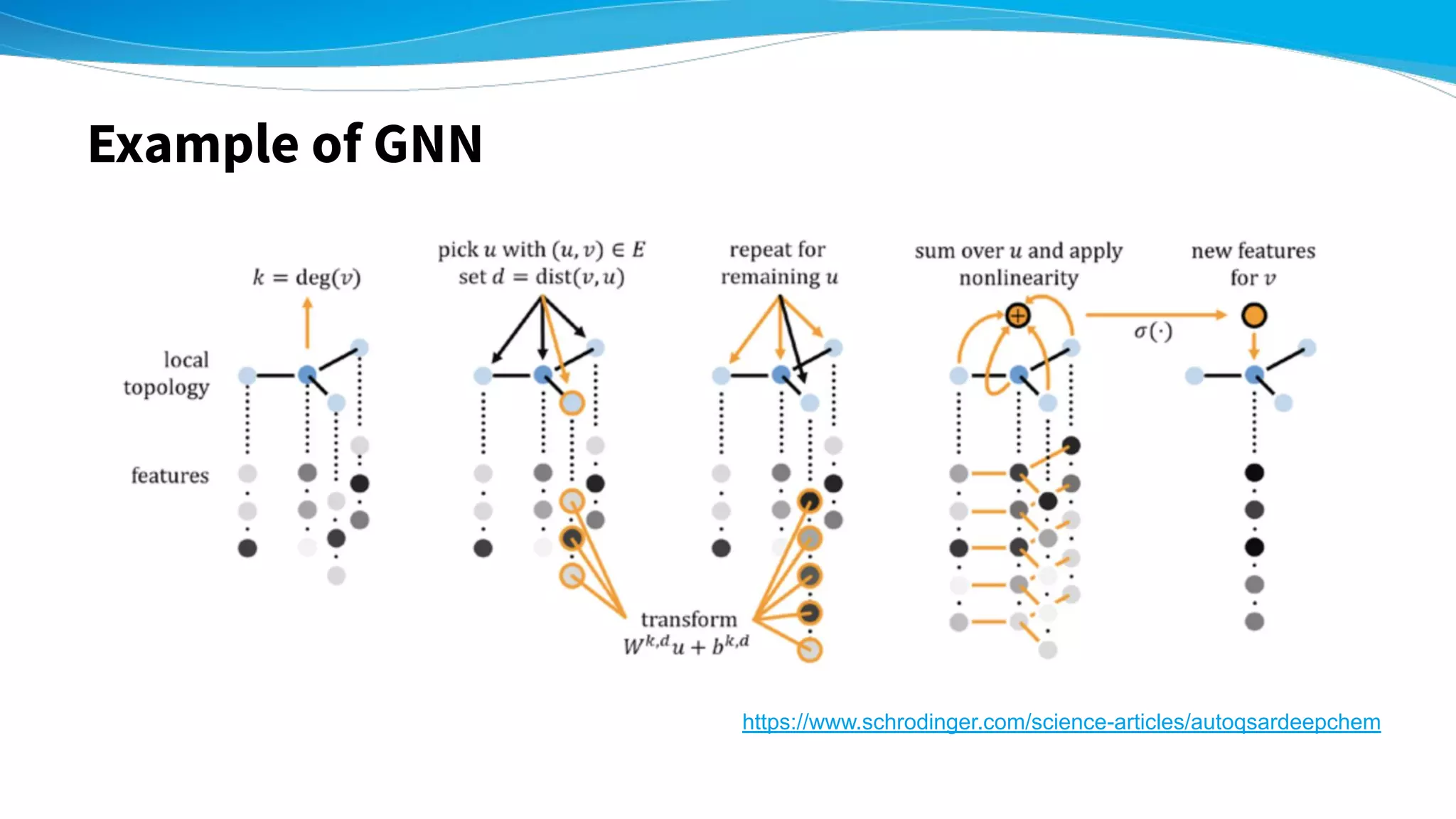

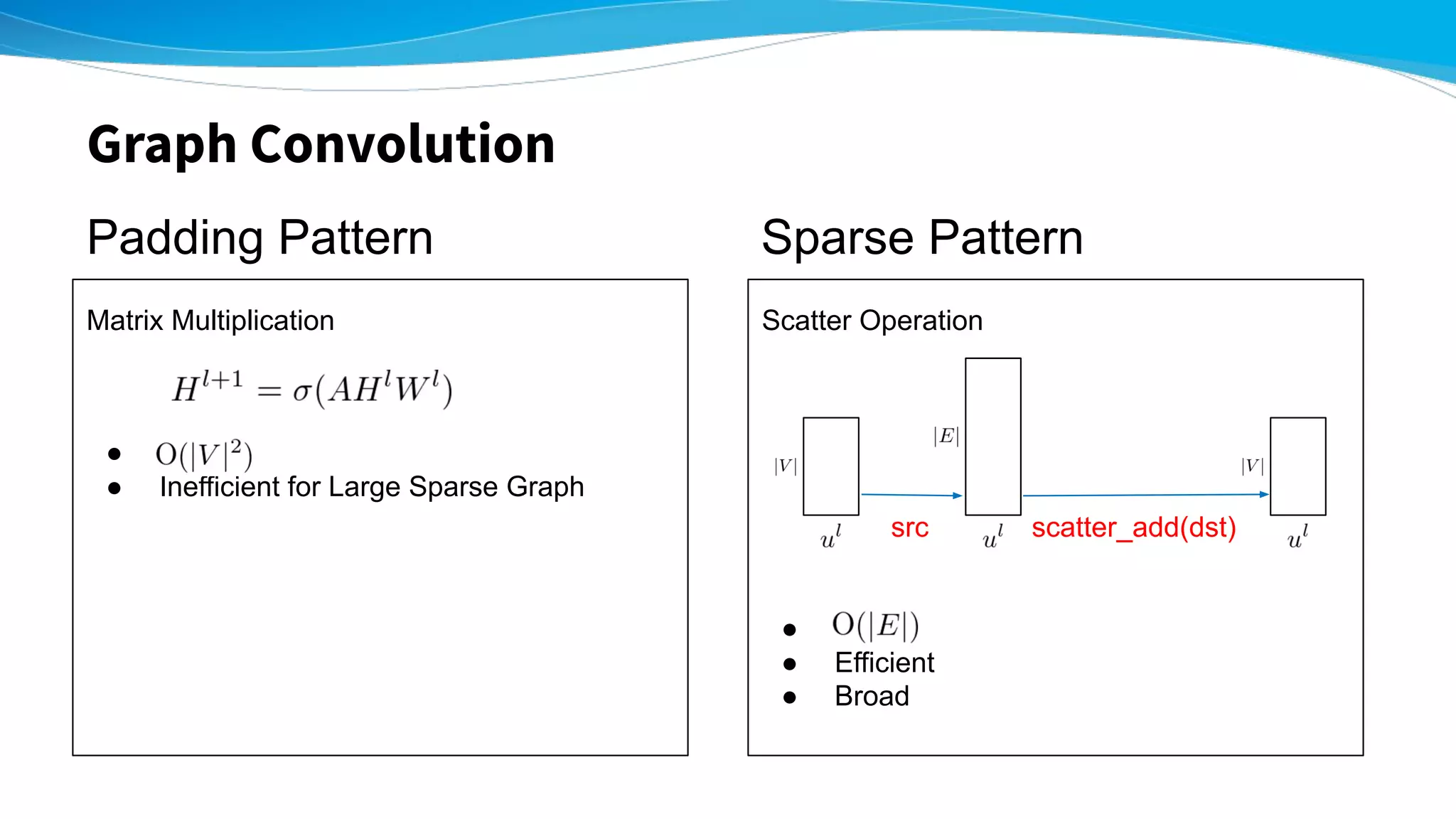

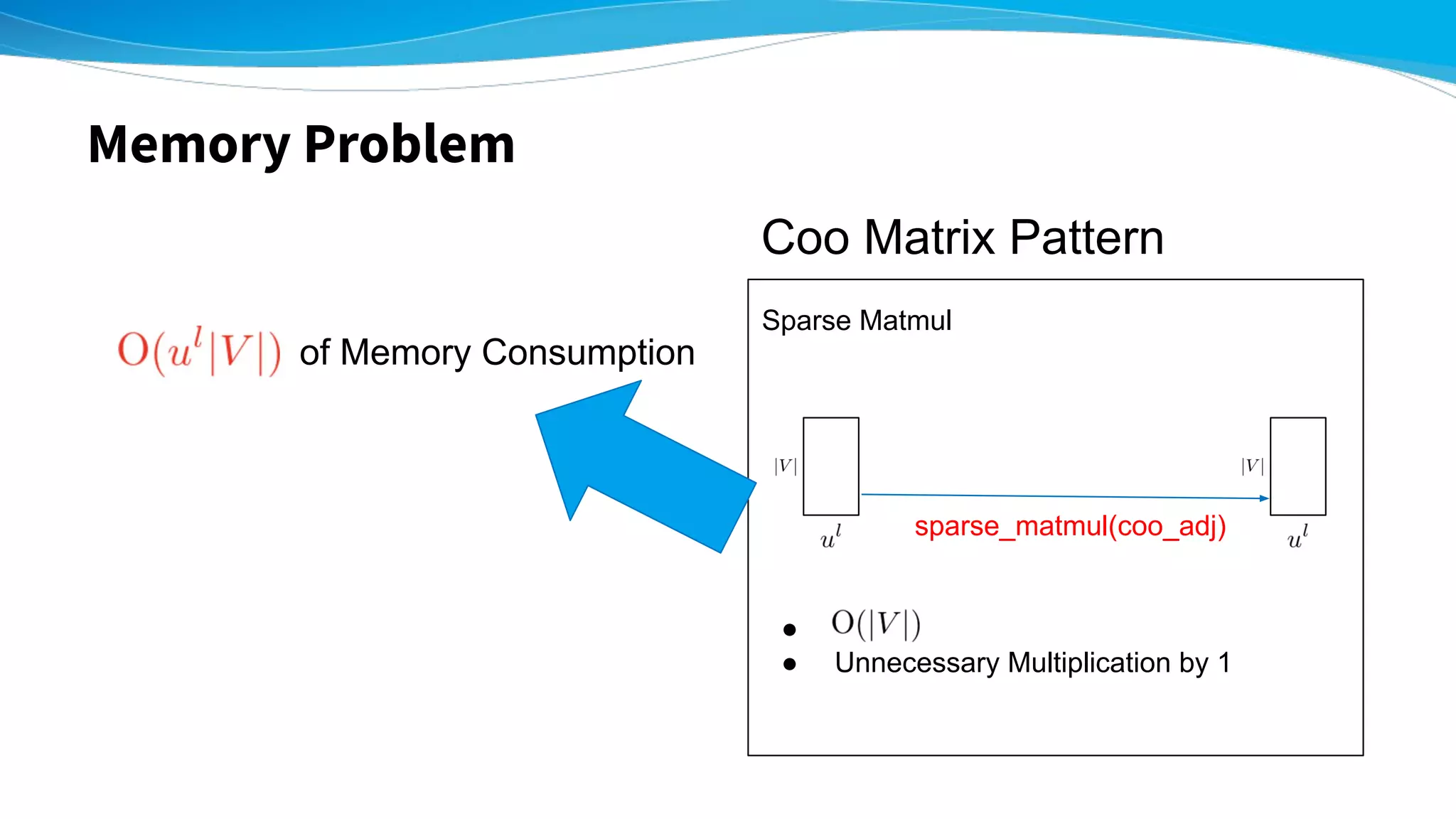

![Experiment - Network Dataset on GPU](https://image.slidesharecdn.com/20190930pfninternship2019extensionofchainer-chemistryforlargesparsegraphkenshinabe-191001022344/75/PFN-Summer-Internship-2019-Kenshin-Abe-Extension-of-Chainer-Chemistry-for-Large-and-Sparse-Graph-15-2048.jpg)

| Dataset | V | E | Padding Pattern (s / 100 epoch) | Sparse Pattern (s / 100 epoch) | COO Matrix Pattern (s / 100 epoch) |
| --- | --- | --- | --- | --- | --- |
| Cora | 2,708 | 5,278 | 3.3760 | 3.0190 | 3.5500 |
| Citeseer | 3,312 | 4,660 | 6.8128 | 3.3024 | 6.2707 |
| Reddit | 232,965 | 11,606,919 | Out of Memory | Out of Memory | 318.76 (5.452 GB) |

Reddit: 230,000 vertices and 11 million edges!!

Training of GIN [2019 Keyulu+], layer_num=2, feature_num=64, on a single Tesla V100-SXM2
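A COO (coordinate) matrix stores only the non-zero adjacency entries as parallel lists of row indices, column indices and values. The sketch below shows neighbour aggregation as a sparse-dense product `A @ H` in pure Python. The function `coo_spmm` and the toy path graph are assumptions for illustration, not the implementation benchmarked on this slide.

```python
def coo_spmm(rows, cols, vals, dense, num_rows):
    """Compute A @ dense, where A is given in COO form (rows, cols, vals)."""
    width = len(dense[0])
    out = [[0.0] * width for _ in range(num_rows)]
    for r, c, v in zip(rows, cols, vals):
        # Each stored entry A[r, c] adds v * dense[c] into output row r.
        for k in range(width):
            out[r][k] += v * dense[c][k]
    return out


# Undirected path graph 0 - 1 - 2, stored as both directions of each edge.
rows = [0, 1, 1, 2]
cols = [1, 0, 2, 1]
vals = [1.0, 1.0, 1.0, 1.0]
features = [[1.0, 0.0], [0.0, 1.0], [2.0, 2.0]]  # one feature row per node
summed = coo_spmm(rows, cols, vals, features, num_rows=3)
print(summed)  # [[0.0, 1.0], [3.0, 2.0], [0.0, 1.0]]
```

Each output row is the sum of the feature rows of that node's neighbours. Work and storage are proportional to the number of stored entries, so no V x V array is ever built.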

![Experiment - Network Dataset on CPU](https://image.slidesharecdn.com/20190930pfninternship2019extensionofchainer-chemistryforlargesparsegraphkenshinabe-191001022344/75/PFN-Summer-Internship-2019-Kenshin-Abe-Extension-of-Chainer-Chemistry-for-Large-and-Sparse-Graph-16-2048.jpg)

| Dataset | V | E | Padding Pattern (s / 100 epoch) | Sparse Pattern (s / 100 epoch) | COO Matrix Pattern (s / 100 epoch) |
| --- | --- | --- | --- | --- | --- |
| Cora | 2,708 | 5,278 | 224.439 | 22.8092 | 12.1168 |
| Citeseer | 3,312 | 4,660 | 1346.11 | 23.3707 | 39.8982 |
| Reddit | 232,965 | 11,606,919 | Out of Memory | Out of Memory | 28097.187 |

Reddit: 230,000 vertices and 11 million edges!!

Training of GIN [2019 Keyulu+], layer_num=2, feature_num=64, on Intel(R) Xeon(R) Gold 6254 CPU @ 3.10GHz
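A rough back-of-the-envelope estimate helps explain why a dense adjacency matrix cannot hold Reddit. The sketch assumes 4-byte values and 4-byte indices and counts each listed edge as one stored entry. These storage choices are assumptions, and the estimate covers only the adjacency structure, not features, activations or gradients, so it is not comparable to the memory figure reported on the slides.

```python
def dense_adjacency_bytes(num_nodes, bytes_per_value=4):
    """Bytes for a dense num_nodes x num_nodes adjacency matrix."""
    return num_nodes * num_nodes * bytes_per_value


def coo_adjacency_bytes(num_entries, bytes_per_index=4, bytes_per_value=4):
    """Bytes for COO storage: a row index, a column index and a value per entry."""
    return num_entries * (2 * bytes_per_index + bytes_per_value)


reddit_nodes, reddit_edges = 232_965, 11_606_919  # V and E from the table
dense_gb = dense_adjacency_bytes(reddit_nodes) / 1e9
coo_gb = coo_adjacency_bytes(reddit_edges) / 1e9
print(f"dense: {dense_gb:.1f} GB, COO: {coo_gb:.3f} GB")
# dense: 217.1 GB, COO: 0.139 GB
```

Under these assumptions the dense matrix alone needs roughly 217 GB, far beyond a single accelerator, while the COO form of the same adjacency fits in well under 1 GB.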