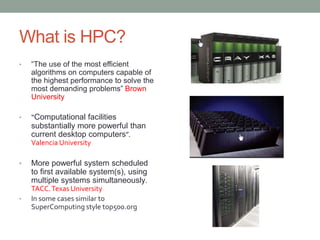

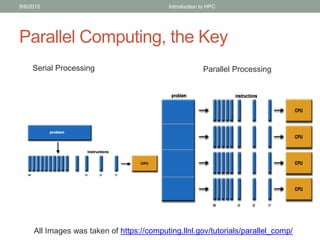

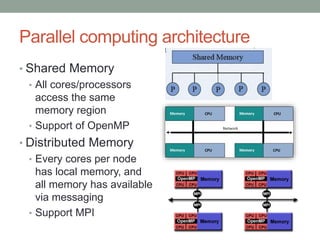

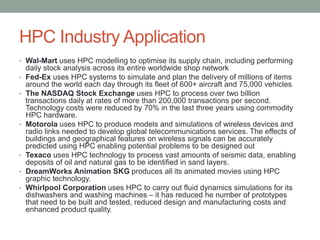

Esteban Hernandez is a PhD candidate researching heterogeneous parallel programming for weather forecasting. He has 12 years of experience in software architecture, including Linux clusters, distributed file systems, and high-performance computing (HPC). HPC means running the most efficient algorithms available on high-performance computers to solve computationally demanding problems. Typical applications include weather prediction, fluid dynamics simulation, protein folding, and bioinformatics. Performance is often measured in floating-point operations per second (FLOPS). Parallel computing is key to HPC, using programming models such as OpenMP and MPI and accelerators such as GPUs. HPC systems are used across industries for tasks such as supply chain optimization, seismic data processing, and drug development.
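To make the FLOPS metric concrete, the following is a minimal sketch of how an achieved rate can be estimated: count the floating-point operations a kernel performs and divide by the measured wall-clock time. The kernel (`saxpy`), the helper `measure_flops`, and the default sizes are illustrative assumptions and do not come from the slides. Pure Python on a single core will report a rate far below what compiled, parallel HPC code achieves.

```python
import time


def saxpy(a, x, y):
    """Return a*x + y elementwise: one multiply and one add per element."""
    return [a * xi + yi for xi, yi in zip(x, y)]


def measure_flops(n=200_000, repeats=5):
    """Estimate the achieved FLOP/s of saxpy on vectors of length n.

    The best (shortest) time over several repeats is used, which
    reduces the influence of one-off system noise.
    """
    x = [1.0] * n
    y = [2.0] * n
    best = float("inf")
    for _ in range(repeats):
        start = time.perf_counter()
        saxpy(3.0, x, y)
        best = min(best, time.perf_counter() - start)
    flop_count = 2 * n  # one multiply + one add per element
    return flop_count / best


if __name__ == "__main__":
    rate = measure_flops()
    print(f"approx. {rate / 1e6:.1f} MFLOP/s (pure Python, single core)")
```

The same counting approach applies to parallel code: the operation count stays fixed, so any reduction in elapsed time from OpenMP threads, MPI ranks, or a GPU shows up directly as a higher FLOP/s figure.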