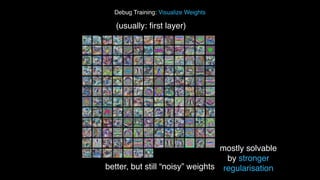

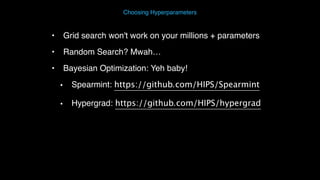

The document covers deep learning for image understanding with convolutional neural networks, whose main advantage is that they learn features from data rather than relying on manually designed ones. It walks through the deep learning workflow (data preprocessing, model architecture selection, training, optimization, and debugging) and stresses regularization methods such as dropout and batch normalization. It also points to resources for implementing deep learning in Python and cites related research studies.
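
To make the two regularization methods named above concrete, here is a minimal sketch in plain Python. It is not taken from the document: the function names `dropout` and `batch_norm`, the parameters `drop_prob`, `gamma`, `beta`, and `eps`, and the sample values are all assumptions chosen for illustration. The sketch uses the common "inverted" dropout formulation and normalizes a single feature across one mini-batch. In practice a framework's built-in layers would be used instead.

```python
import random
from statistics import fmean, pvariance


def dropout(activations, drop_prob, rng, training=True):
    """Inverted dropout (illustrative): zero each unit with probability
    drop_prob and rescale the survivors so the expected value is unchanged."""
    if not 0.0 <= drop_prob < 1.0:
        raise ValueError("drop_prob must be in [0, 1)")
    if not training or drop_prob == 0.0:
        # At inference time dropout is a no-op.
        return list(activations)
    keep_prob = 1.0 - drop_prob
    return [a / keep_prob if rng.random() < keep_prob else 0.0
            for a in activations]


def batch_norm(batch, gamma=1.0, beta=0.0, eps=1e-5):
    """Batch normalization (illustrative) for one feature: shift and scale
    the mini-batch to roughly zero mean and unit variance, then apply a
    scale (gamma) and shift (beta) that would normally be learned."""
    mean = fmean(batch)
    var = pvariance(batch, mu=mean)
    return [gamma * (x - mean) / (var + eps) ** 0.5 + beta for x in batch]


# Hypothetical mini-batch of four values for a single feature.
rng = random.Random(0)
feature = [1.0, 2.0, 3.0, 4.0]
normalized = batch_norm(feature)
dropped = dropout(normalized, drop_prob=0.5, rng=rng)
```

The rescaling by `1 / keep_prob` during training is what allows the layer to be skipped at inference without changing the expected activation. The `eps` term only guards against division by zero when a batch has no variance.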