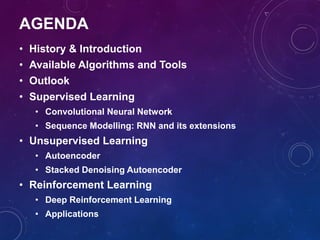

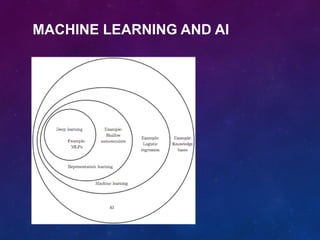

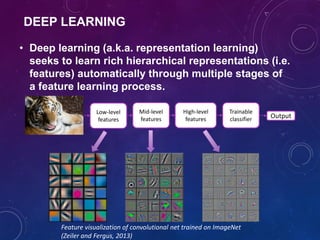

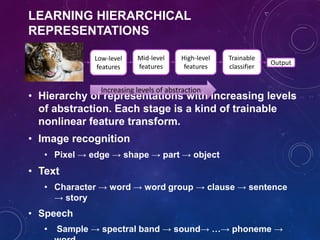

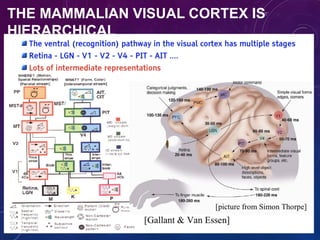

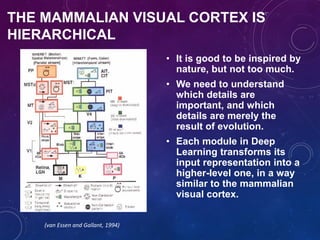

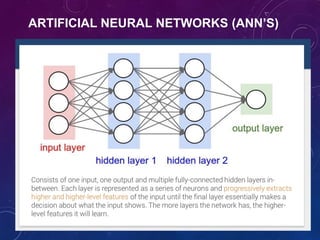

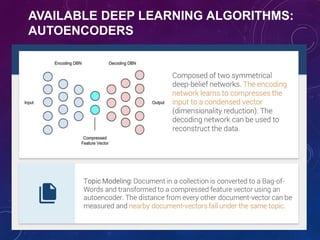

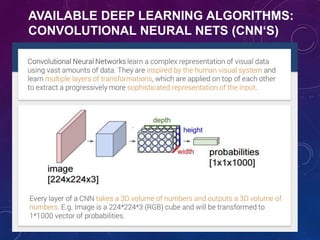

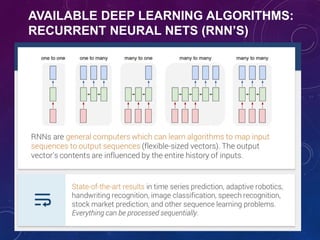

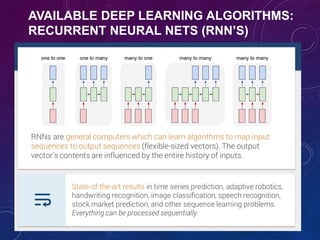

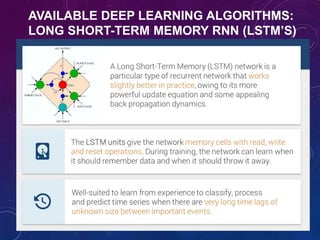

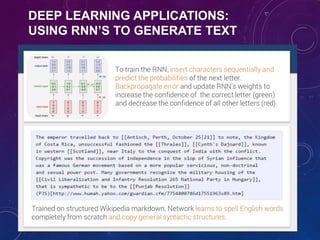

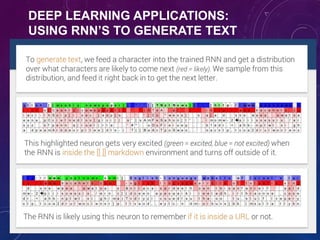

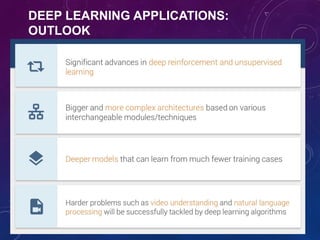

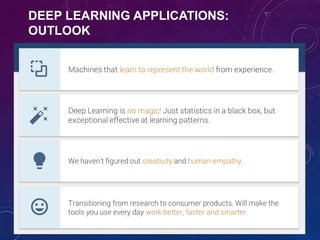

This document provides an overview of deep learning, including its history, algorithms, tools, and applications. It begins with the history and evolution of deep learning techniques. It then discusses popular deep learning algorithms such as convolutional neural networks, recurrent neural networks, autoencoders, and deep reinforcement learning. It also covers commonly used deep learning tools and highlights applications in areas such as computer vision, natural language processing, and games. It closes with the future outlook and opportunities for deep learning.

![Slide: Convolutional Neural Network](https://image.slidesharecdn.com/itrain-deeplearningtalk1-180212062234/85/Deep-Learning-Application-Opportunity-55-320.jpg)

CONVOLUTIONAL NEURAL NETWORK

• Inputs can have very high dimension. A fully connected neural network would need a very large number of parameters.

• Inspired by the neurophysiological experiments conducted by [Hubel & Wiesel 1962], CNNs are a special type of neural network whose hidden units are connected only to a local receptive field. CNNs need far fewer parameters.

Example: 200x200 image

a) Fully connected: 40,000 hidden units => 1.6 billion parameters (40,000 input pixels x 40,000 hidden units)

b) CNN: 5x5 kernel, 100 feature maps => 2,500 parameters (25 shared weights x 100 feature maps)
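The arithmetic behind the 200x200 example can be checked with a minimal sketch. The function names here are hypothetical, and the counts assume a single-channel input and ignore bias terms, which matches the figures quoted on the slide.

```python
def fully_connected_params(height, width, hidden_units):
    """Weight count when every pixel connects to every hidden unit (biases ignored)."""
    return height * width * hidden_units


def conv_params(kernel_size, feature_maps, in_channels=1):
    """Weight count for square kernels shared across all image positions (biases ignored)."""
    return kernel_size * kernel_size * in_channels * feature_maps


# 200x200 image, 40,000 hidden units: 40,000 * 40,000 weights
fc = fully_connected_params(200, 200, 40_000)

# 5x5 kernel, 100 feature maps: 25 * 100 weights, independent of image size
cnn = conv_params(5, 100)

print(f"fully connected: {fc:,} parameters")
print(f"CNN:             {cnn:,} parameters")
print(f"ratio:           {fc // cnn:,}x")
```

Note that the convolutional count does not depend on the image size at all, because the same kernel weights are reused at every position; the fully connected count grows with the number of pixels.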