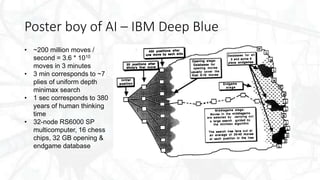

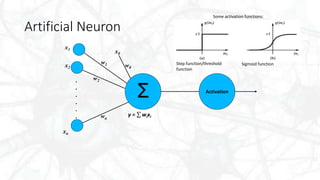

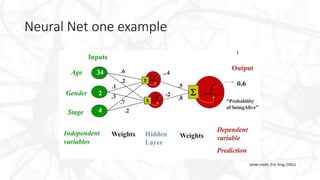

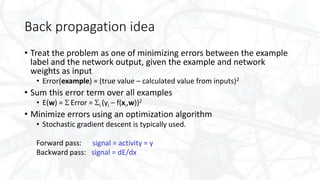

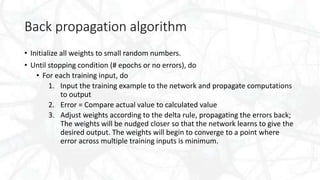

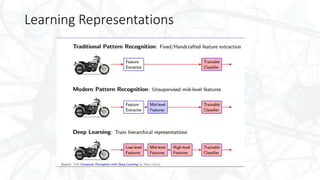

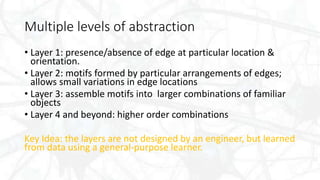

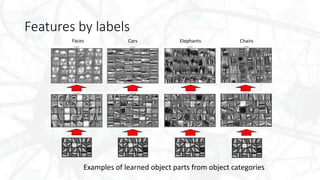

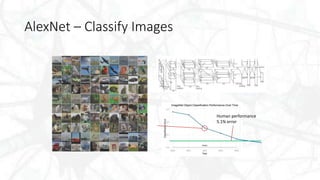

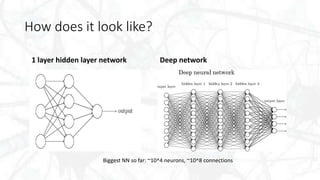

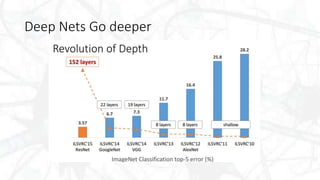

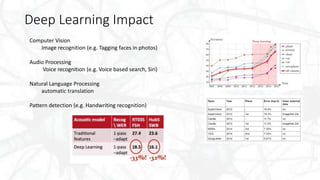

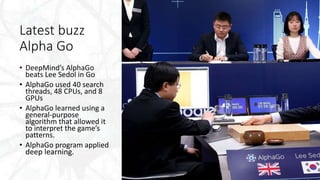

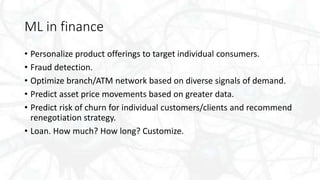

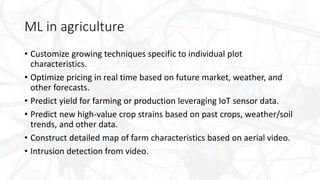

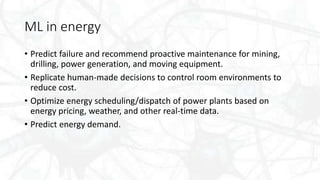

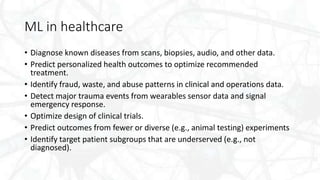

This document traces the history and evolution of deep learning and its place within artificial intelligence and machine learning, with applications in industries such as healthcare, finance, and autonomous driving. It explains key concepts, including neural networks, backpropagation, and feature extraction, and emphasizes the importance of large datasets and model tuning for effective algorithm performance. It also addresses the potential future impacts and challenges of deep learning and gives examples of successful real-world implementations.