Graph, Data-science, and Deep Learning


A few short pointers on graphs for deep learning, given at the Deep Learning Meetup Stockholm, 20 April 2015.

Graph, Data-science, and Deep Learning

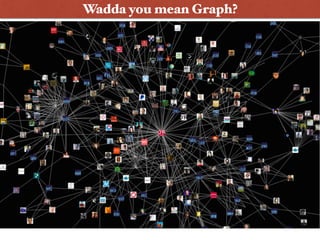

- 1. Wadda you mean, Graph?

- 5. A graph is a graph is a graph: what drugs will bind to protein X and not interact with drug Y?

- 6. Graph-based Deep Learning
  - Graphs + NLP = AWESOME
  - Novel possibilities: time is a fundamental factor in any analysis
  - Graphs (natural for NLP and other fancy affinities) vs. matrices (complicated, non-scalable algorithms/mathematics)
  - Whiteboard/visualization friendly
  - Thin application layer = focus on the graph, not the software layer

- 7. Graph-based Deep Learning*: Richard Socher, Alex Perelygin, Jean Wu, Jason Chuang, Chris Manning, Andrew Ng and Chris Potts. 2013. Recursive Deep Models for Semantic Compositionality Over a Sentiment Treebank. EMNLP 2013. Code & demo: http://nlp.stanford.edu/sentiment/index.html
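Slide 5's question ("what drugs will bind to protein X and not interact with drug Y?") is a natural graph query: follow one edge type, then filter on the absence of another. The sketch below assumes a tiny, entirely hypothetical graph stored as typed edges in plain Python; the drug and protein names, the relation labels `binds` and `interacts_with`, and the helper functions are made up for illustration and do not come from the talk. A real system would more likely use a graph database or a library such as NetworkX.

```python
from collections import defaultdict

# Hypothetical toy knowledge graph: every name and edge below is made up for illustration.
EDGES = [
    ("drugA", "binds", "proteinX"),
    ("drugB", "binds", "proteinX"),
    ("drugC", "binds", "proteinZ"),
    ("drugB", "interacts_with", "drugY"),
    ("drugD", "binds", "proteinX"),
    ("drugY", "interacts_with", "drugD"),
]

def build_graph(edges):
    """Index edges as graph[relation][node] -> set of neighbours."""
    graph = defaultdict(lambda: defaultdict(set))
    for src, rel, dst in edges:
        graph[rel][src].add(dst)
        if rel == "interacts_with":
            # Assumption: drug-drug interactions are symmetric, so store both directions.
            graph[rel][dst].add(src)
    return graph

def binders_without_interaction(graph, protein, other_drug):
    """Drugs with a 'binds' edge to `protein` and no 'interacts_with' edge to `other_drug`."""
    binders = {d for d, targets in graph["binds"].items() if protein in targets}
    return sorted(d for d in binders if other_drug not in graph["interacts_with"][d])

graph = build_graph(EDGES)
print(binders_without_interaction(graph, "proteinX", "drugY"))  # ['drugA']
```

Here drugB and drugD both bind proteinX but are filtered out because each has an interaction edge with drugY; the query is two hops over typed edges rather than a join over matrices.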
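Slide 7 cites recursive deep models, which compute a vector for each node of a parse tree by composing its children's vectors with shared weights. The sketch below shows only the plain recursive-network composition step, a parent vector p = tanh(W [left; right]), with the bias omitted. It is not the paper's RNTN tensor layer and has no sentiment classifier on top. The embedding size `DIM`, the vocabulary, and the random, untrained weights are all hypothetical placeholders.

```python
import math
import random

DIM = 4  # hypothetical embedding size
random.seed(0)

# Hypothetical, untrained parameters: word vectors and a composition matrix W of shape DIM x 2*DIM.
VOCAB = ["not", "very", "good"]
EMBED = {w: [random.uniform(-1, 1) for _ in range(DIM)] for w in VOCAB}
W = [[random.uniform(-0.5, 0.5) for _ in range(2 * DIM)] for _ in range(DIM)]

def compose(left, right):
    """Parent vector p = tanh(W [left; right]) for one binary tree node."""
    child = left + right  # list concatenation gives the 2*DIM input
    return [math.tanh(sum(w * x for w, x in zip(row, child))) for row in W]

def encode(tree):
    """Recursively encode a binary parse tree given as nested tuples of words."""
    if isinstance(tree, str):
        return EMBED[tree]  # leaf: look up the word vector
    left, right = tree
    return compose(encode(left), encode(right))

# ("not", ("very", "good")): the same W is reused at every internal node.
root = encode(("not", ("very", "good")))
print(len(root))  # 4
```

Because the same `W` is applied at every internal node, a sentence of any length maps to a fixed-size vector that follows the tree's structure; in the cited work, a classifier is then trained on these node vectors.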