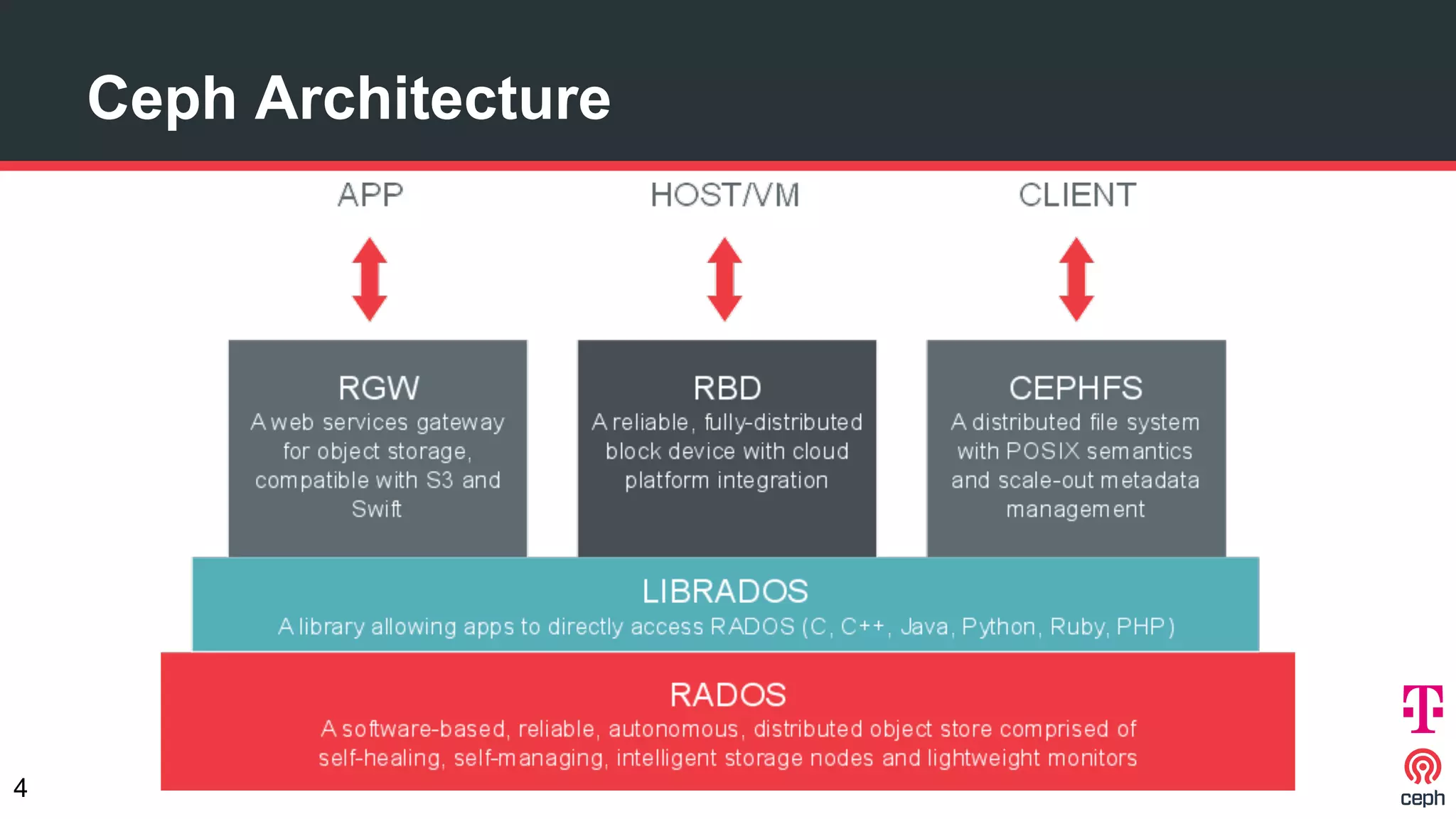

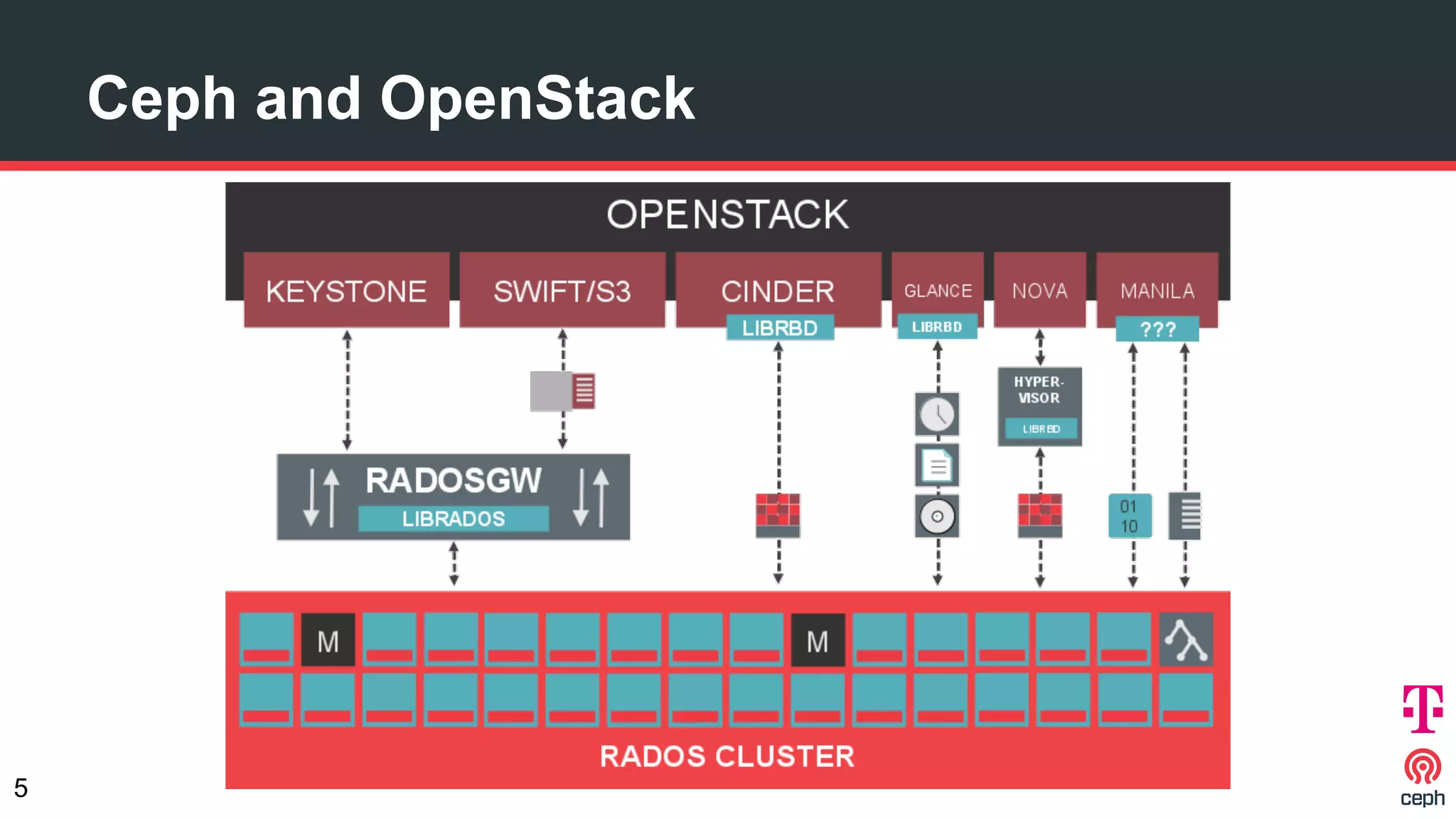

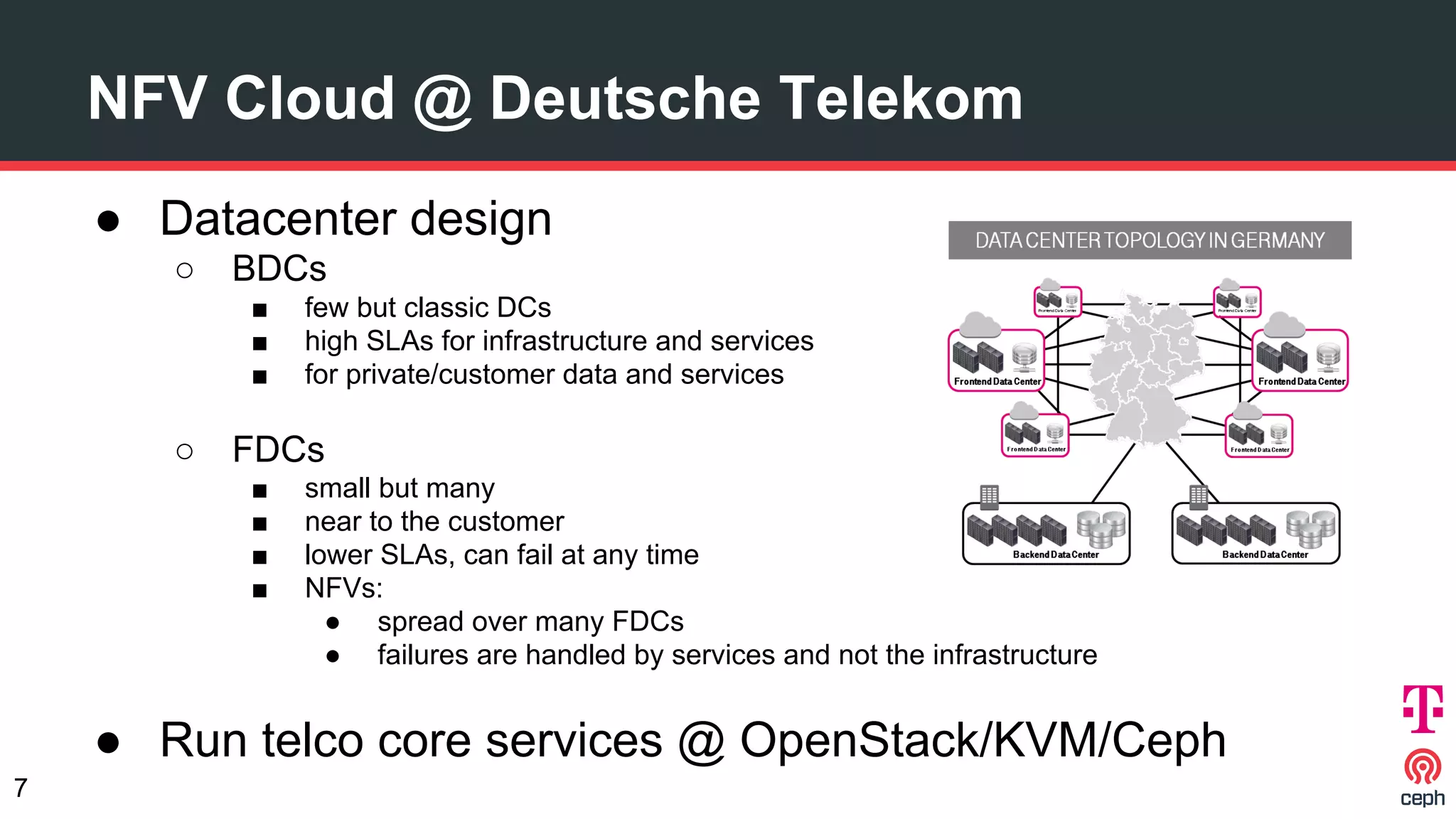

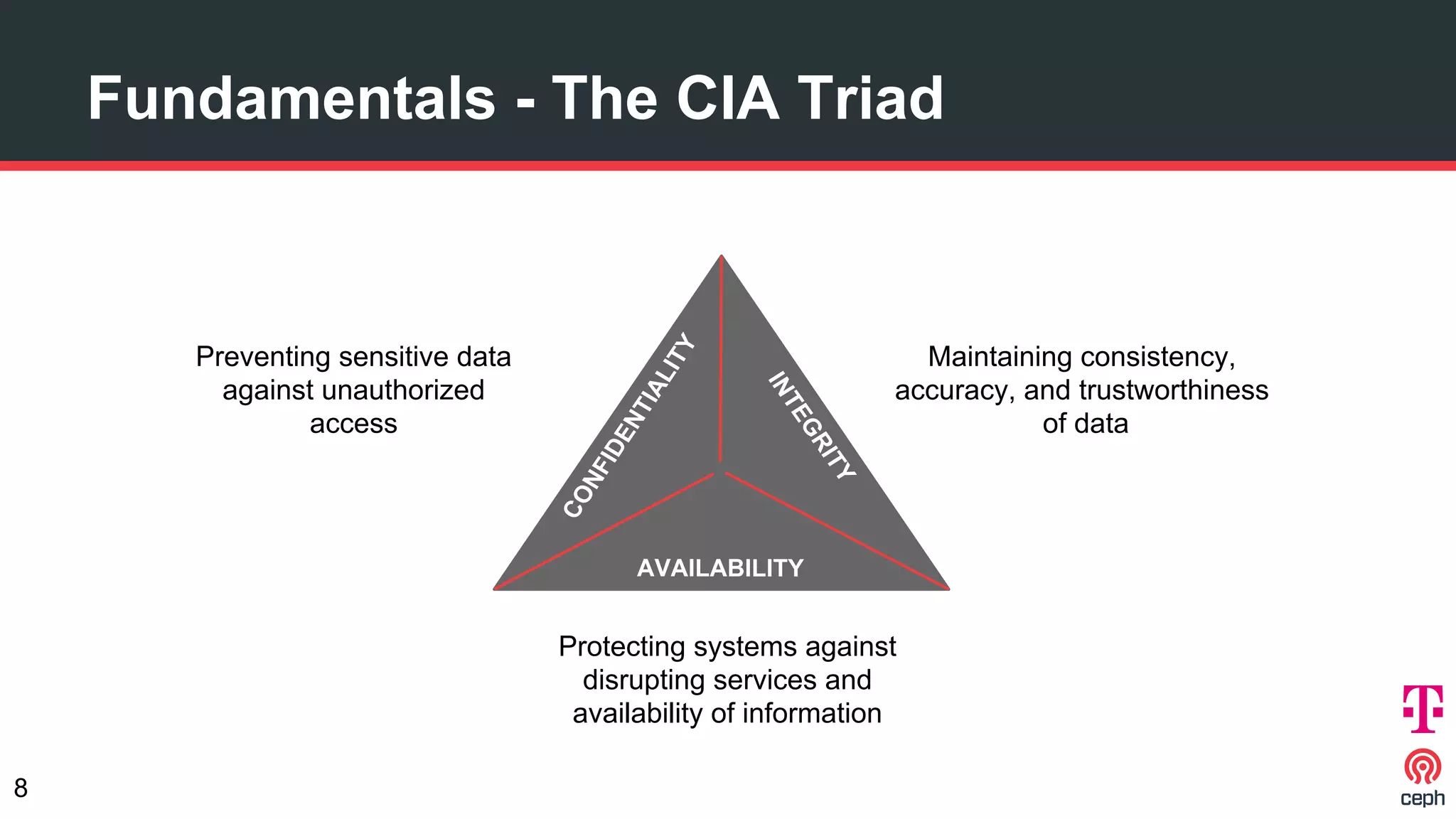

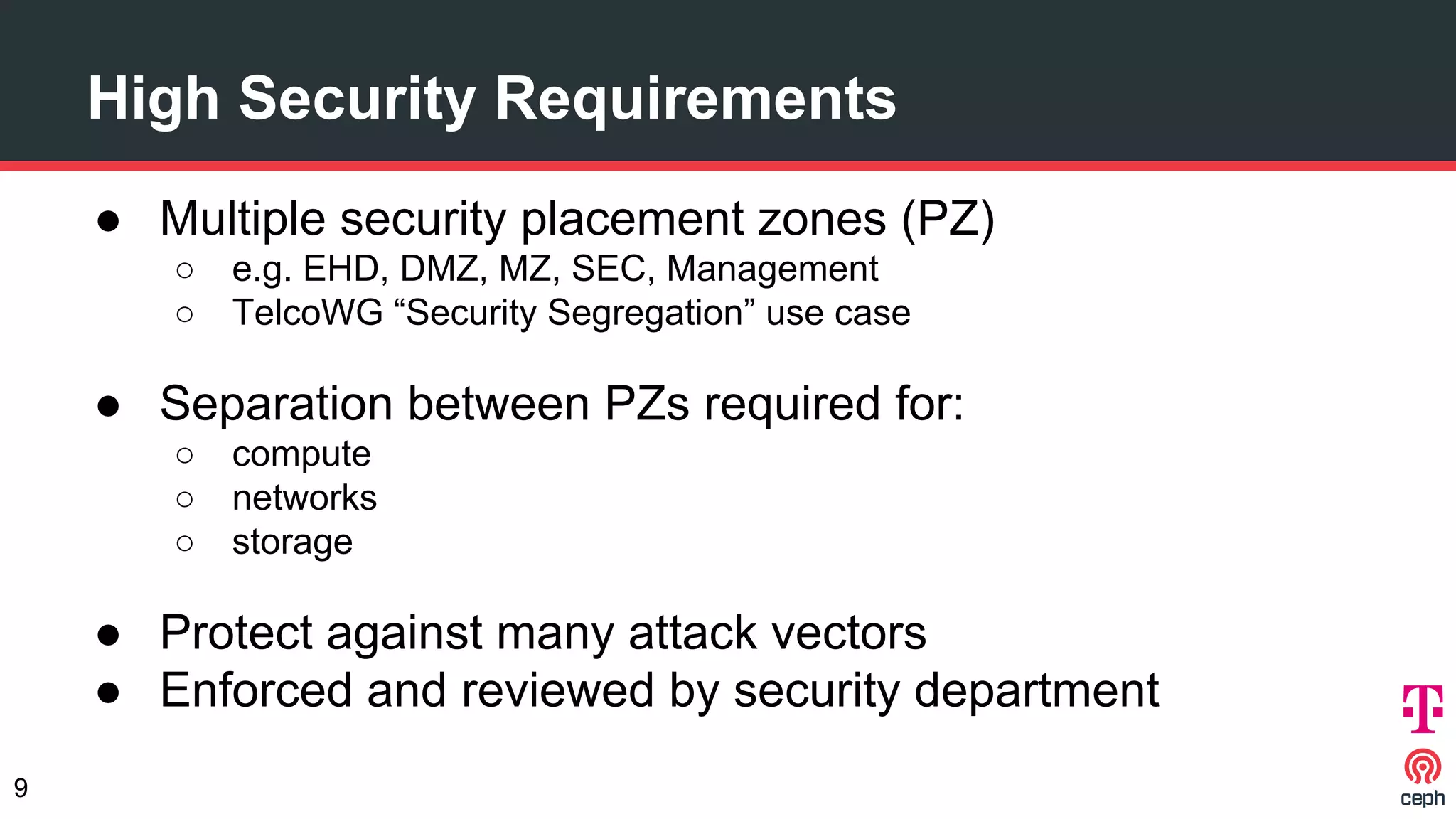

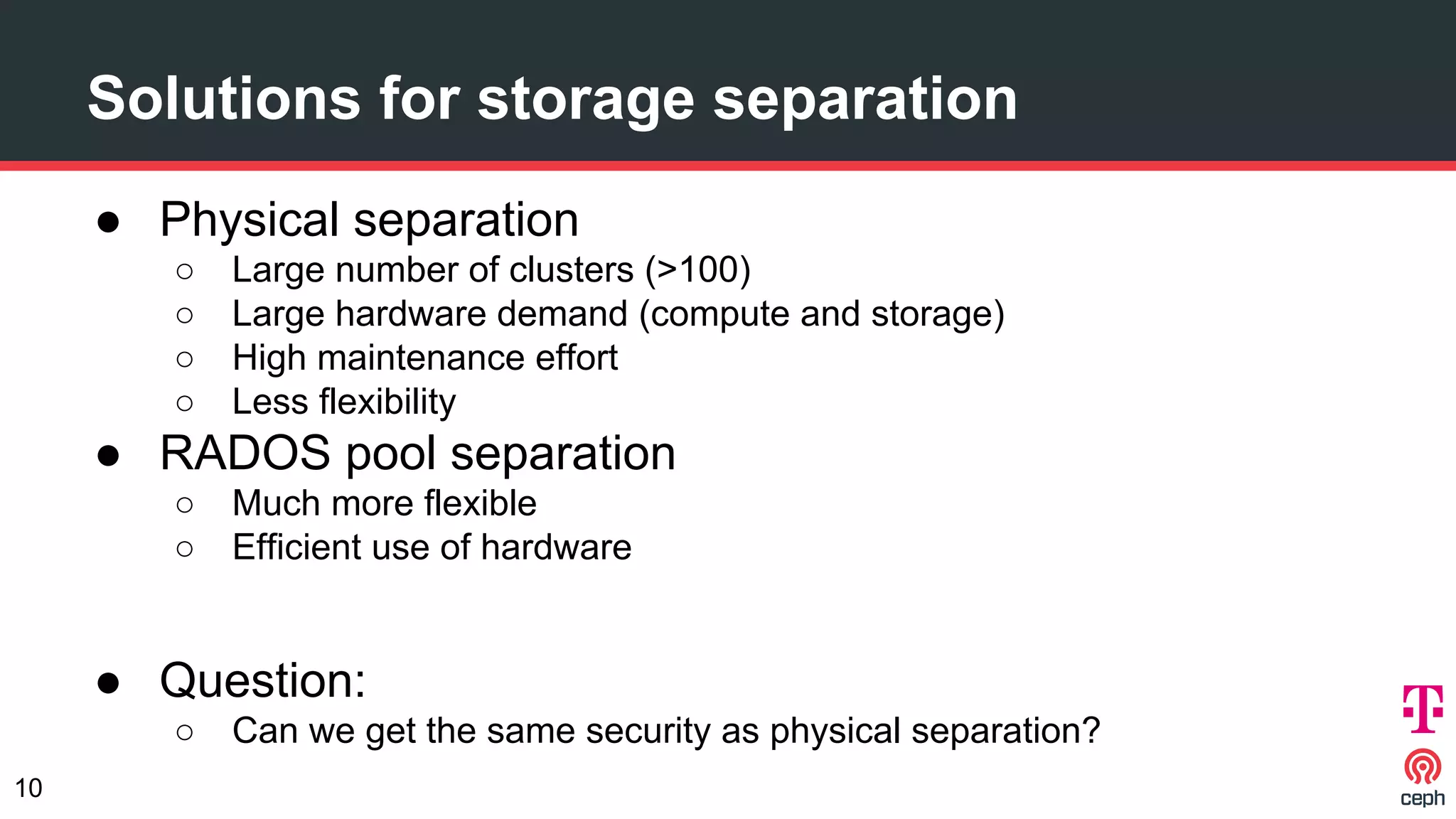

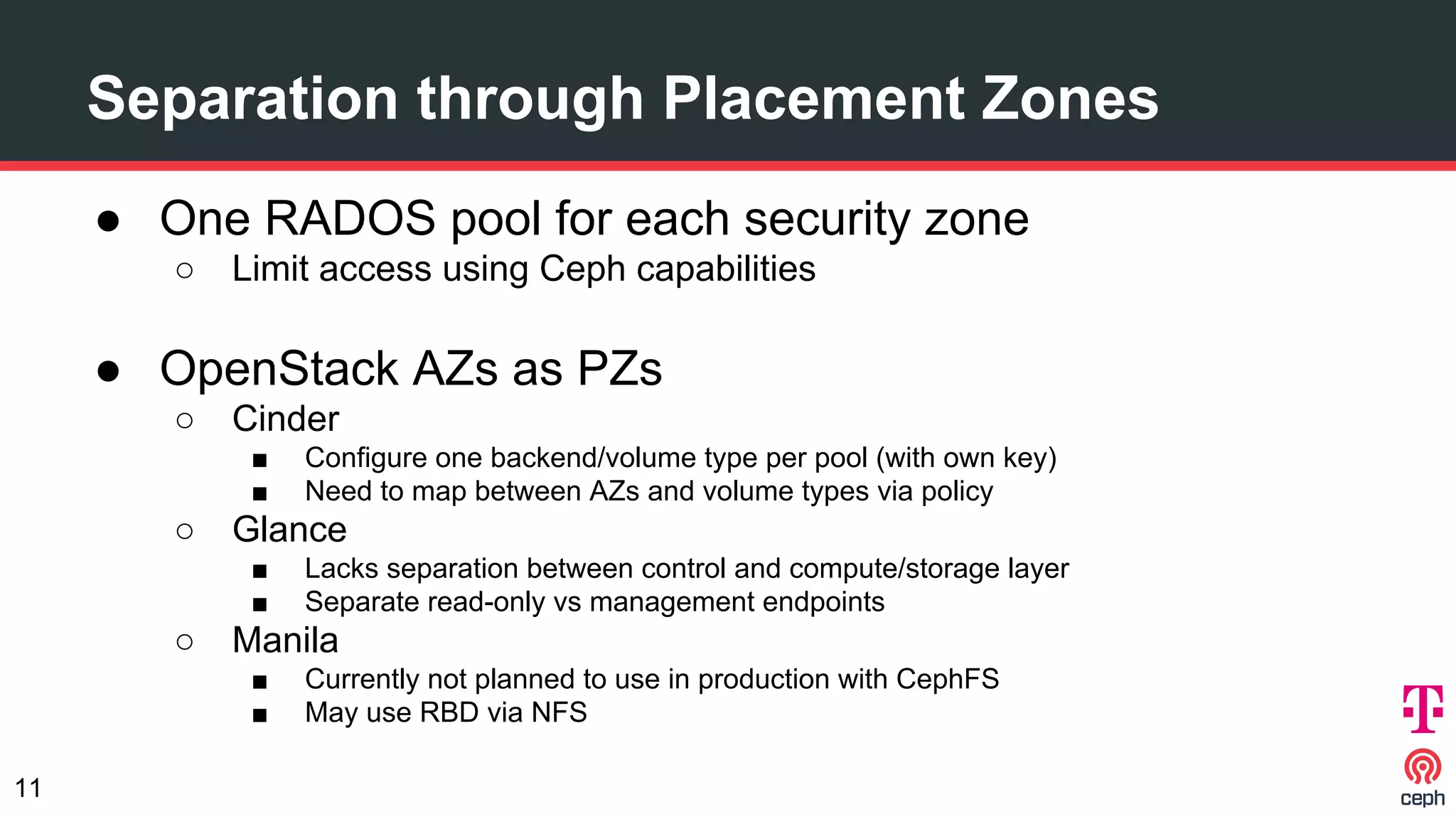

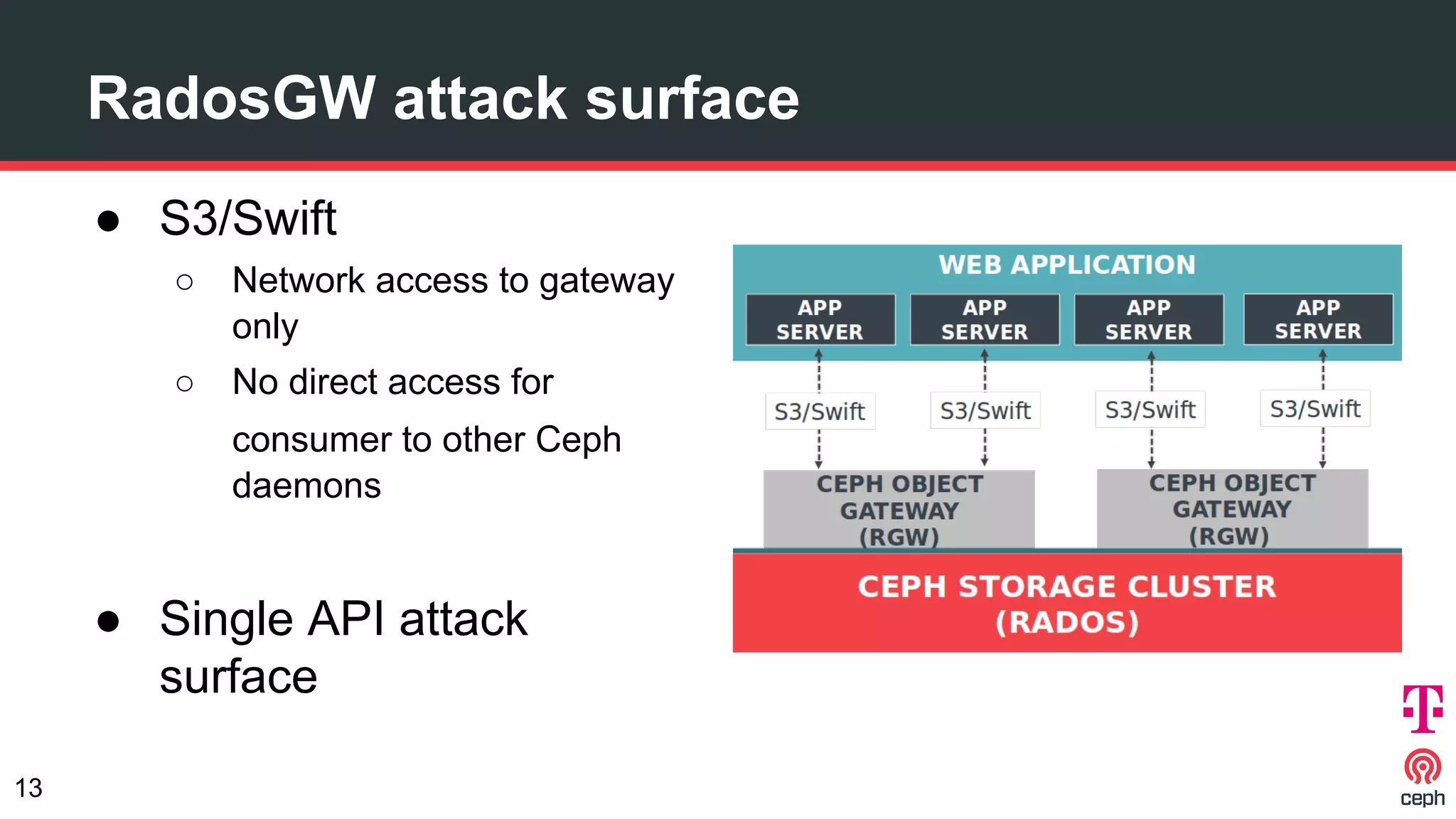

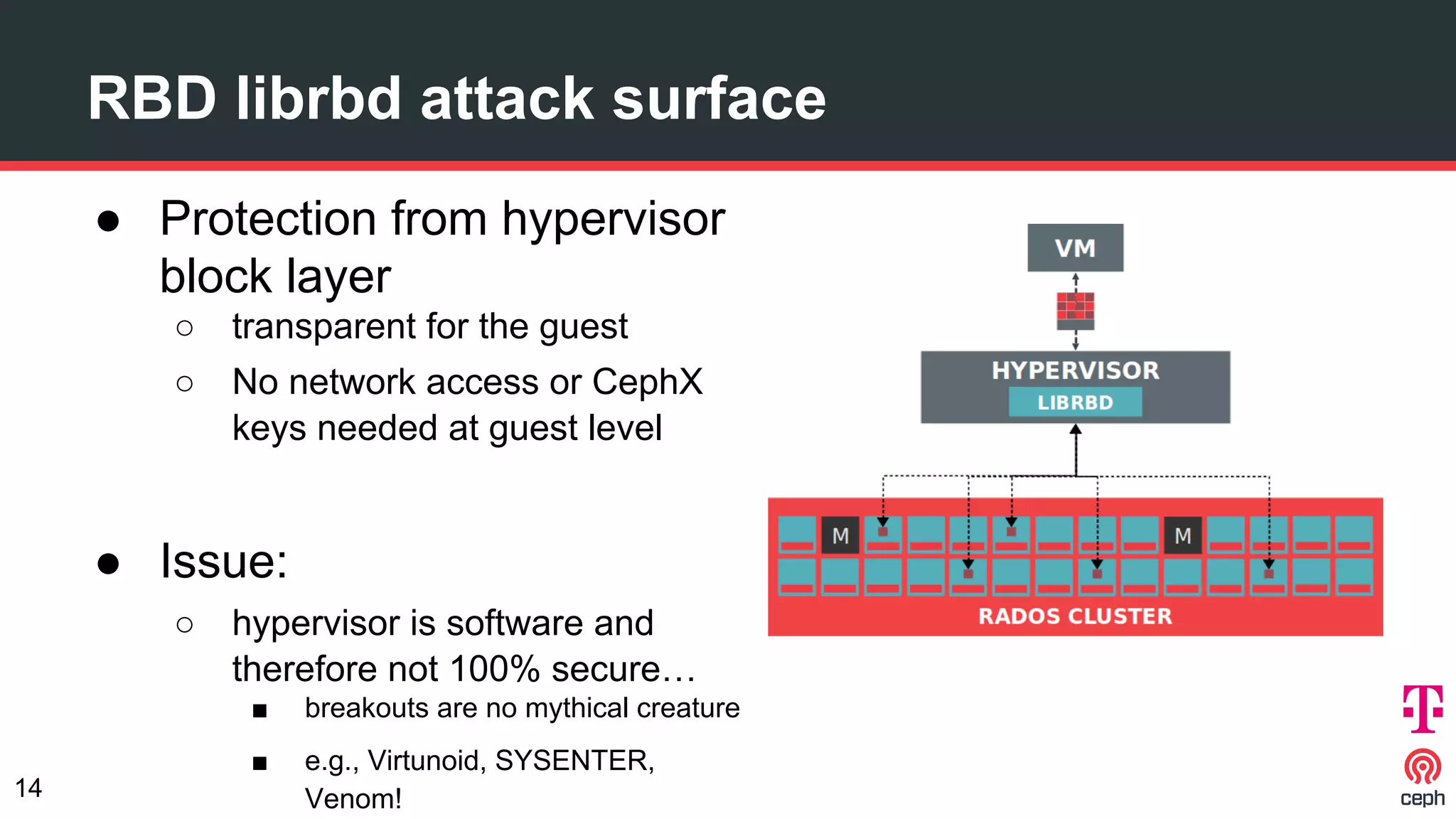

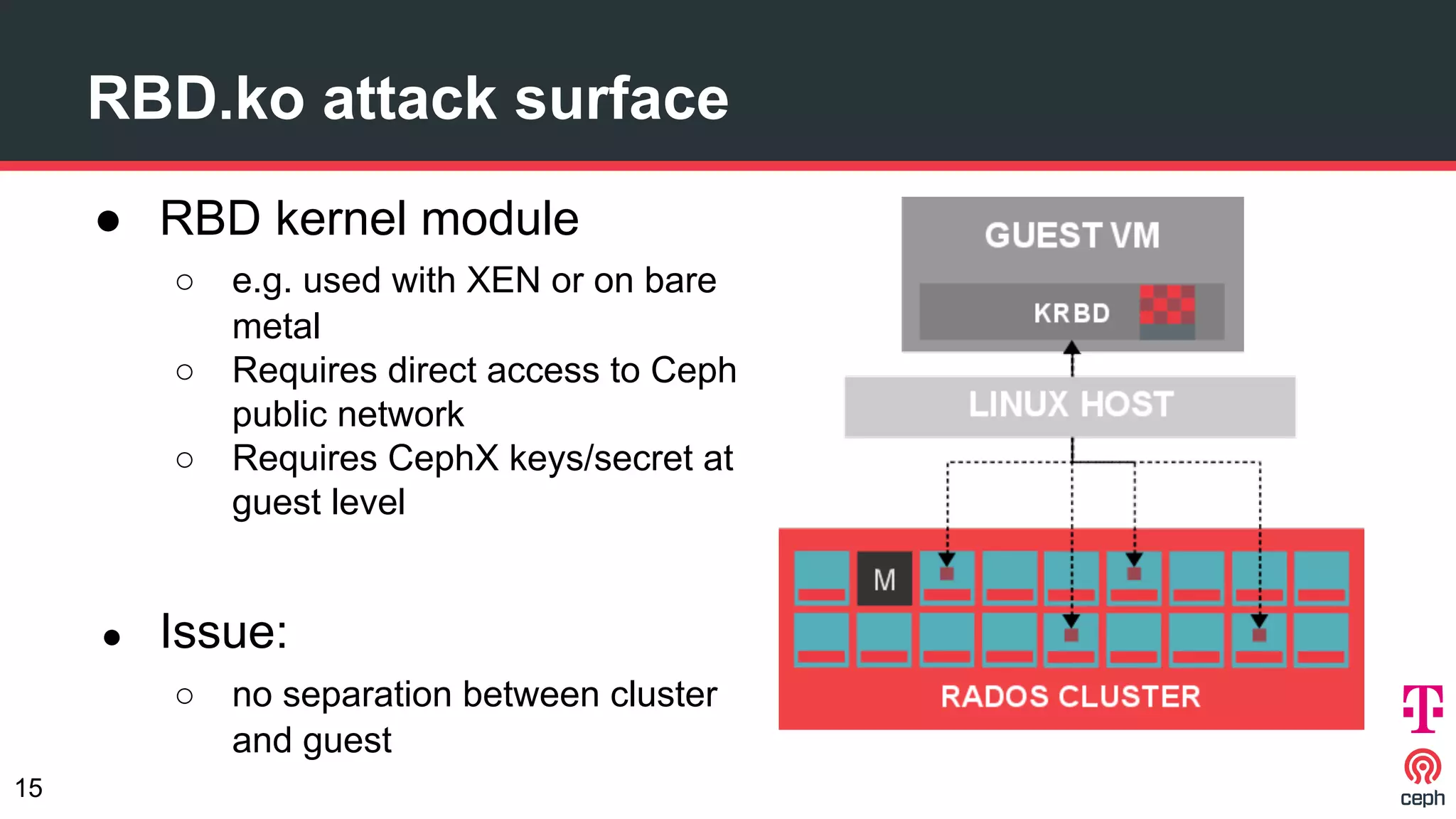

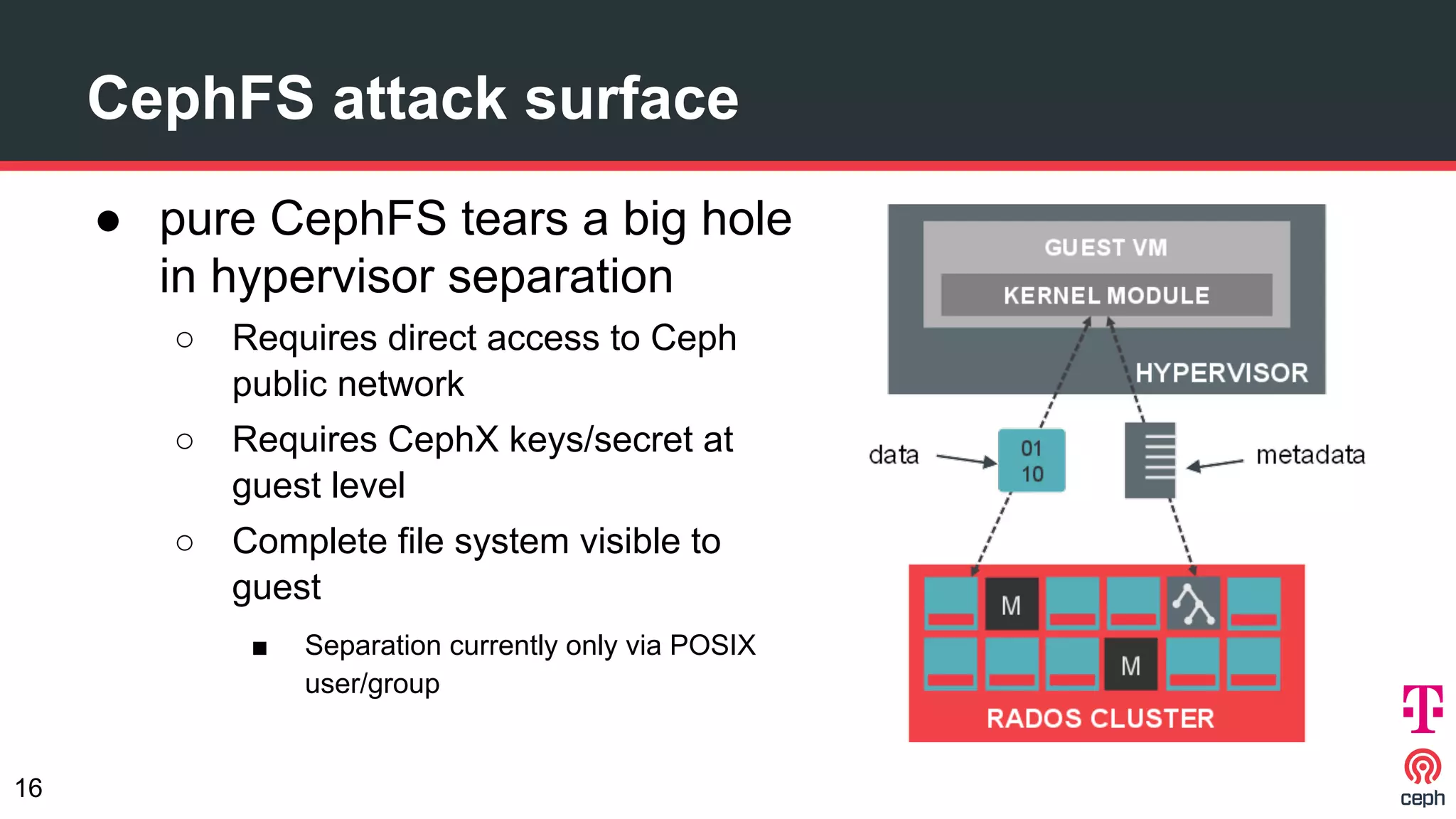

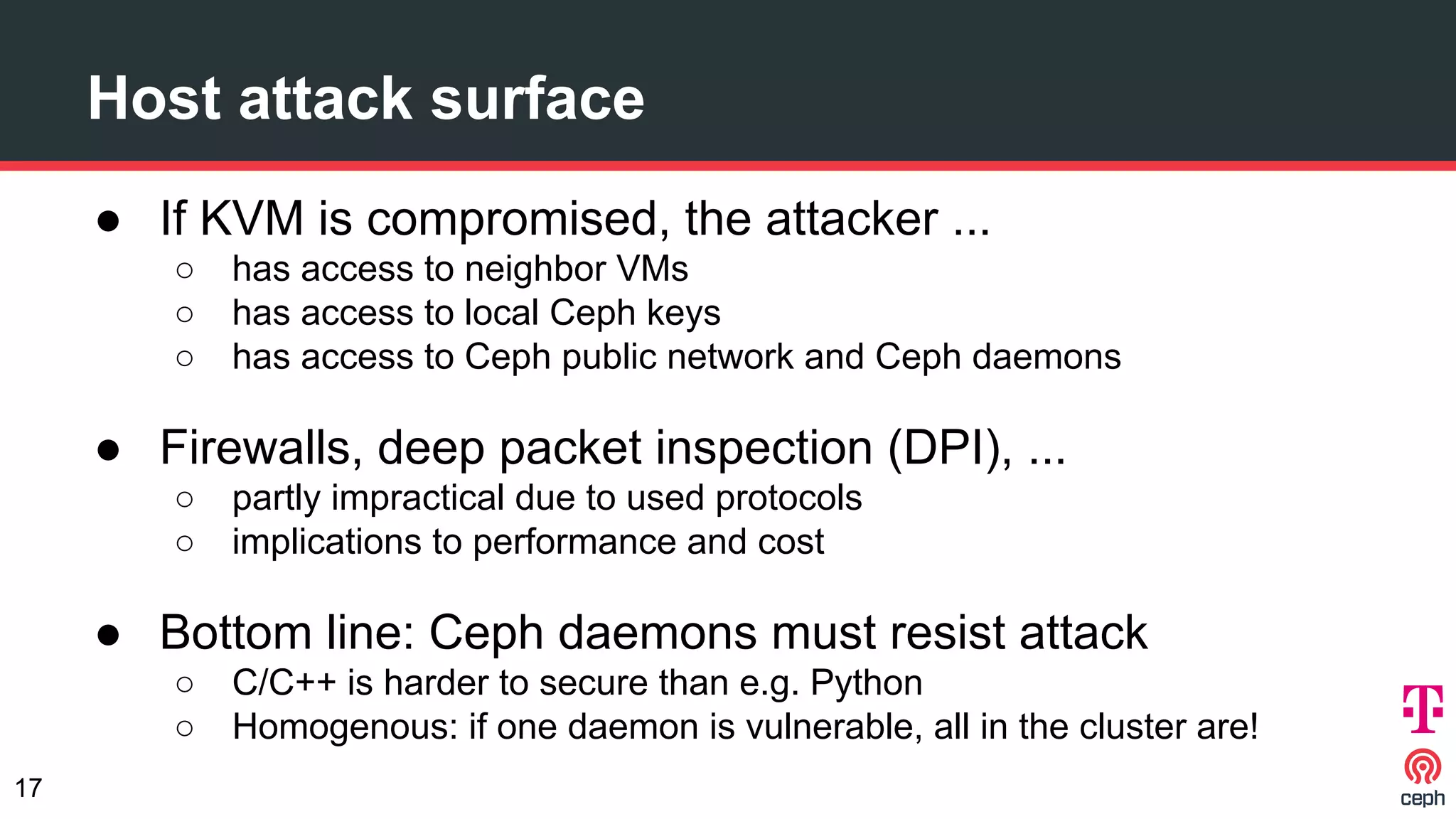

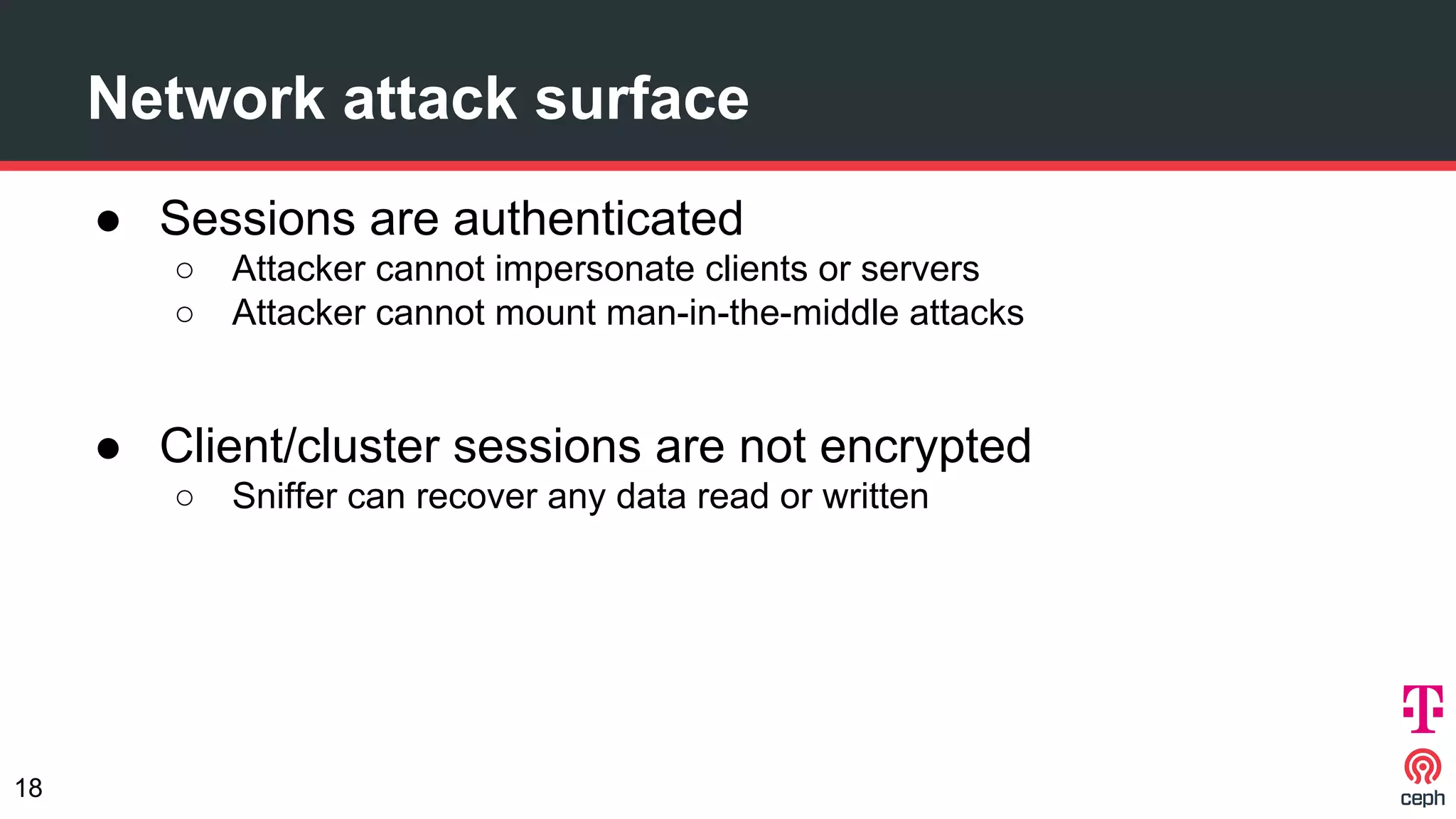

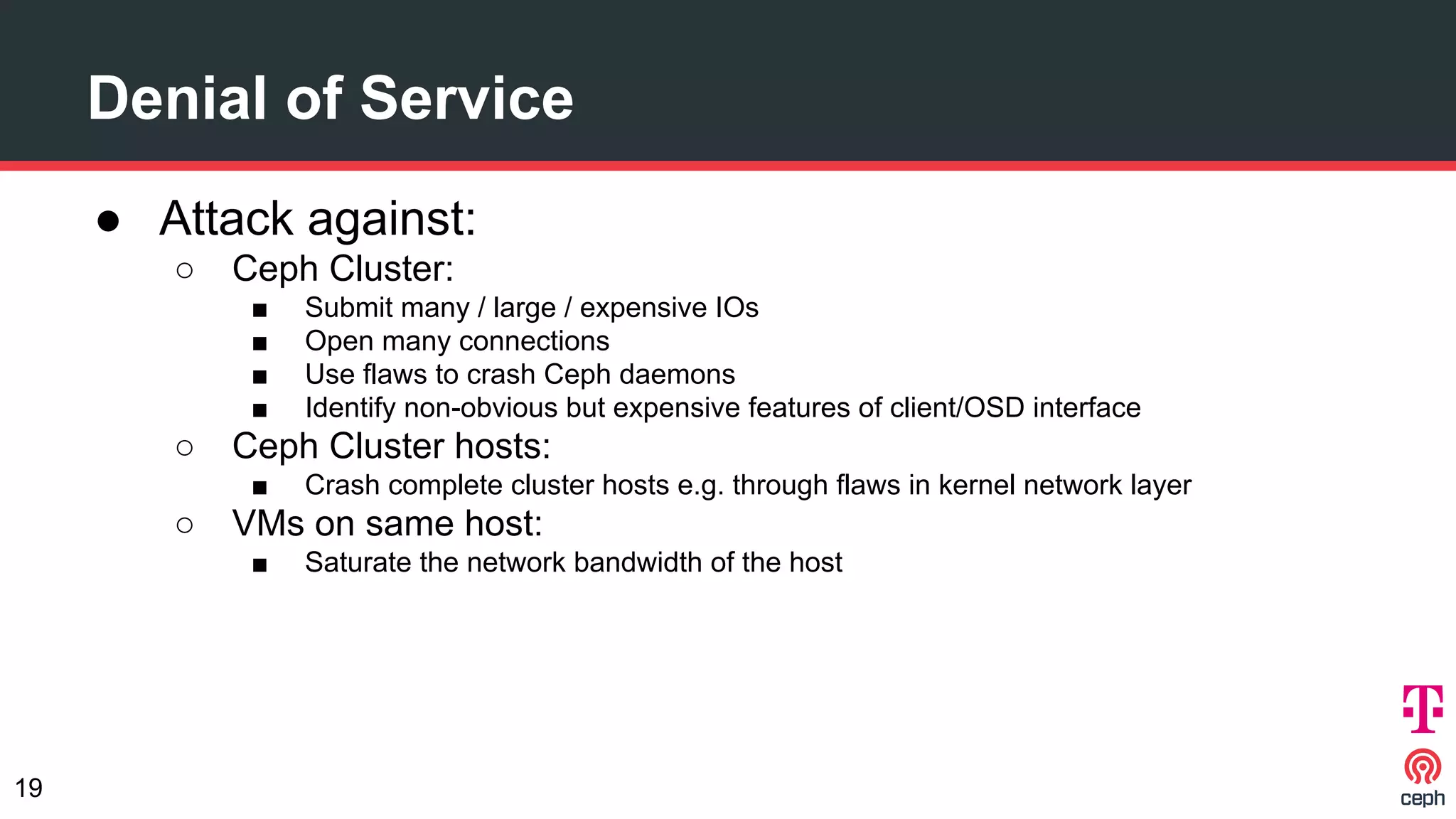

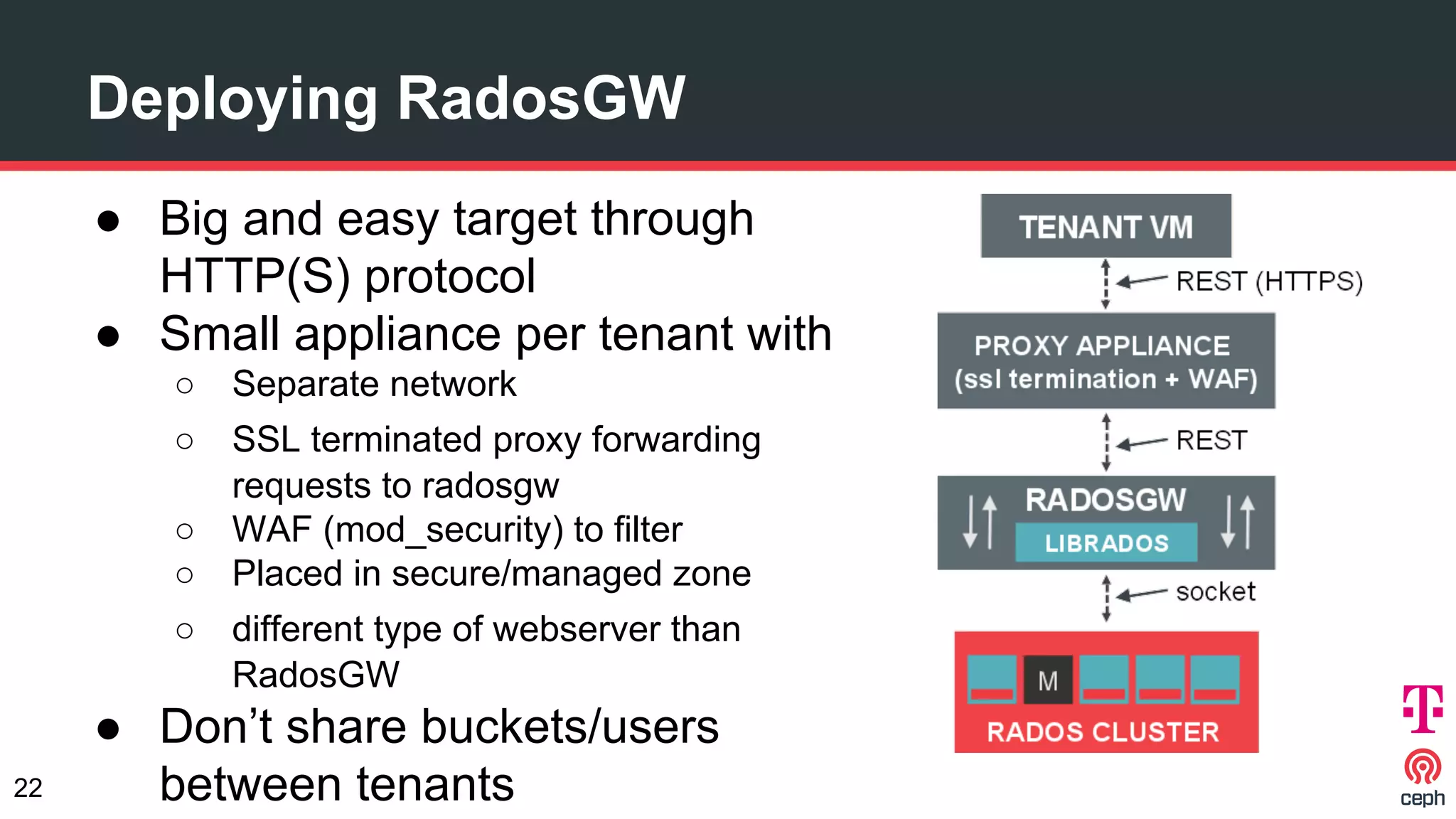

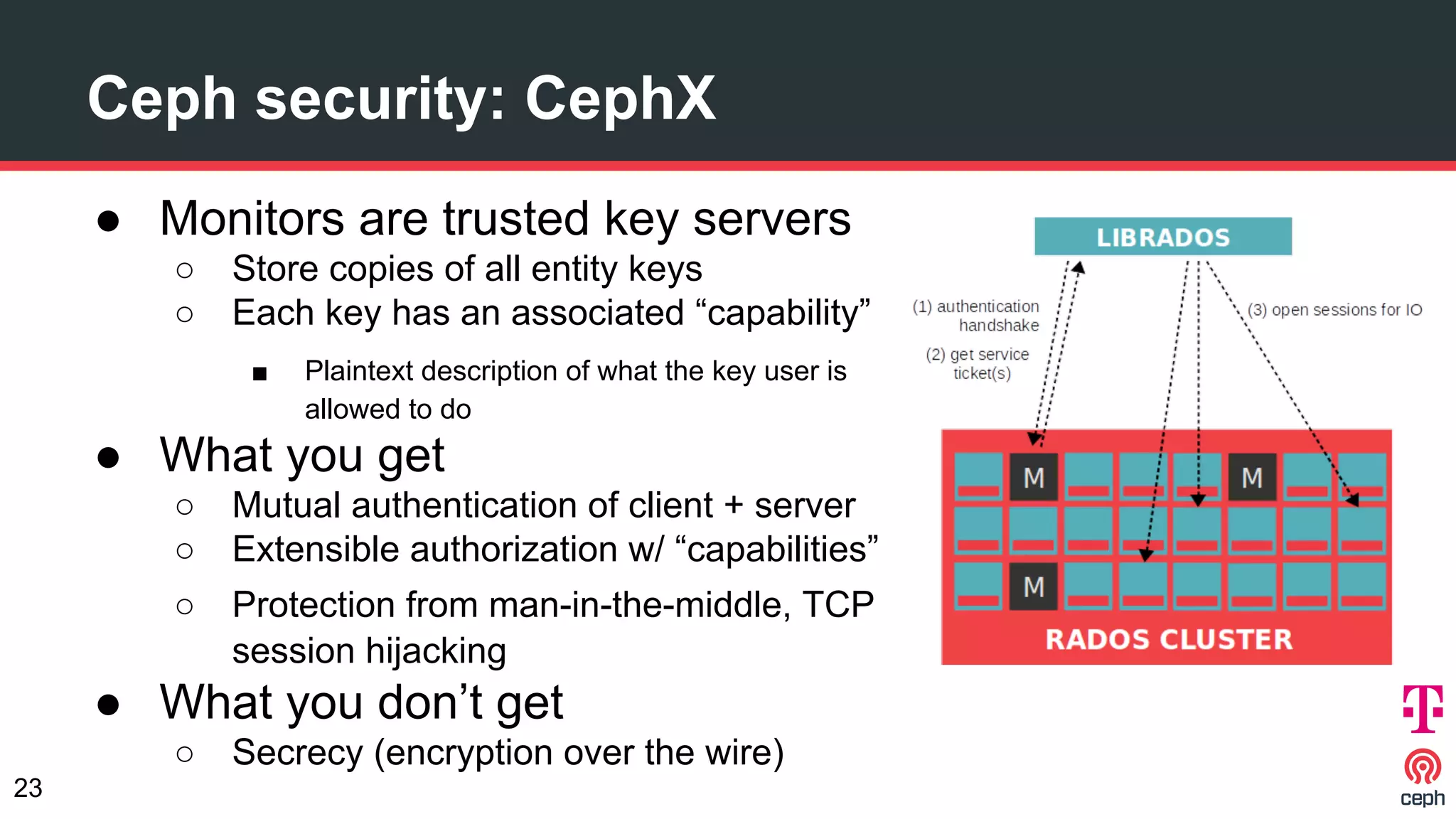

The document discusses implementing Ceph within a secure OpenStack cloud environment, focusing on Deutsche Telekom's NFV cloud design and its security measures. It outlines proactive and reactive countermeasures against various attack surfaces and emphasizes the separation of security zones and robust key management. It also highlights ongoing efforts to improve security practices, including encryption and vulnerability assessment processes.