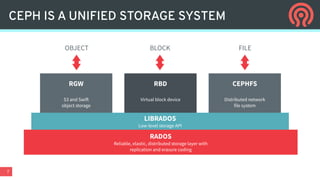

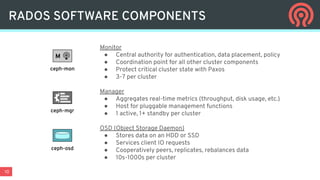

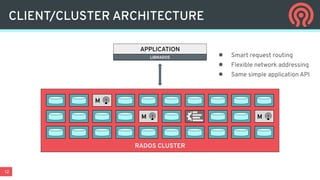

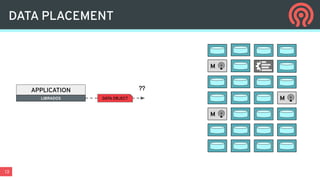

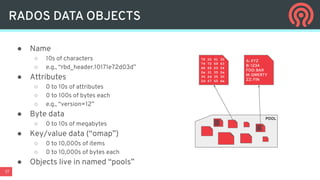

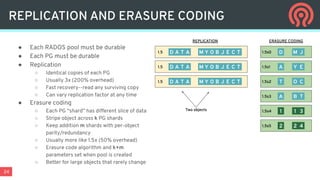

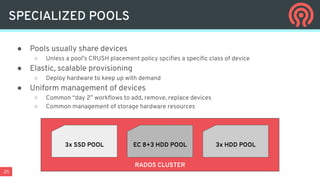

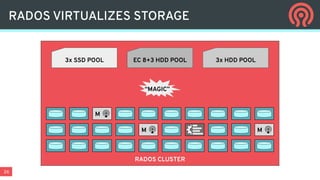

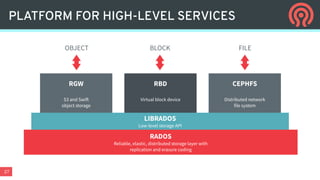

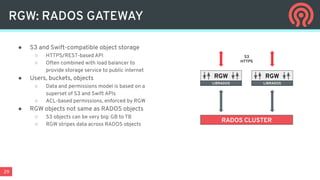

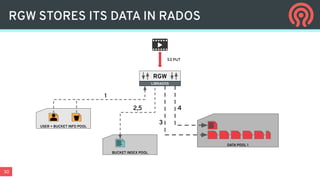

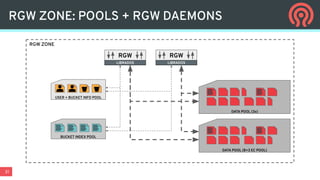

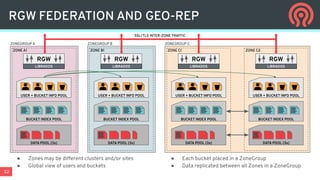

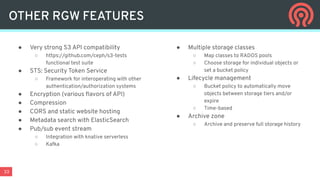

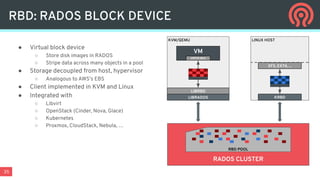

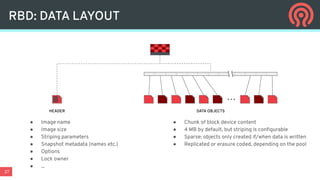

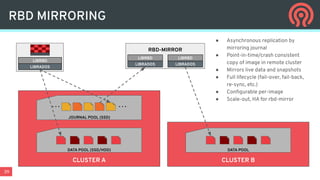

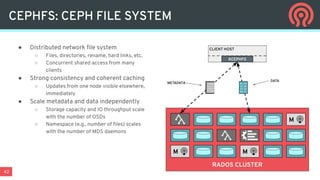

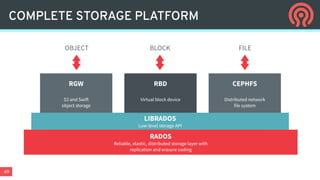

Ceph is an open-source distributed storage platform that provides file, block, and object storage in a single unified system. Its foundation is RADOS (Reliable Autonomic Distributed Object Store), which stores data reliably and scalably on commodity hardware by protecting it with either replication or erasure coding. Higher-level services are built on top of RADOS: RBD provides virtual block devices, RGW provides S3-compatible object storage, and CephFS provides a distributed file system.
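To make the trade-off between replication and erasure coding concrete, the following minimal sketch compares how much usable capacity each scheme leaves from the same raw capacity. The function names, the 120 TB figure, and the `k=4, m=2` profile are illustrative assumptions, not values from this document. The sketch also ignores real-world overheads such as metadata and free-space headroom.

```python
def usable_replicated(raw_tb: float, replicas: int) -> float:
    """Usable capacity when every object is stored `replicas` times."""
    return raw_tb / replicas


def usable_erasure_coded(raw_tb: float, k: int, m: int) -> float:
    """Usable capacity when each object is split into k data chunks
    plus m coding chunks (only k of every k + m chunks hold user data)."""
    return raw_tb * k / (k + m)


raw_tb = 120.0  # hypothetical raw cluster capacity in TB

# 3-way replication: survives the loss of 2 copies of an object.
print(usable_replicated(raw_tb, replicas=3))   # 40.0

# Erasure coding with k=4, m=2: survives the loss of any 2 chunks.
print(usable_erasure_coded(raw_tb, k=4, m=2))  # 80.0
```

Under these assumptions both schemes tolerate two simultaneous failures, but erasure coding yields twice the usable capacity. The cost is extra computation and more network traffic during writes and recovery.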