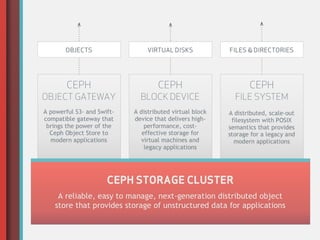

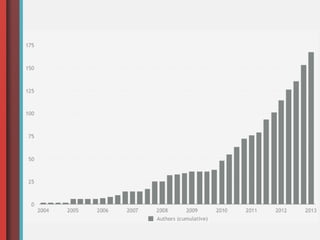

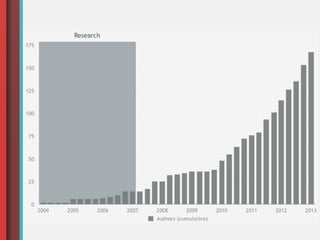

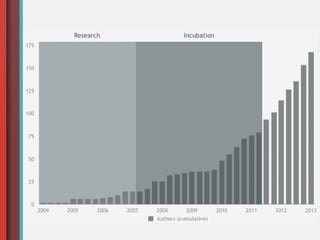

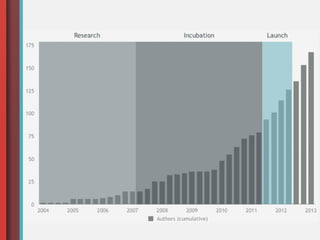

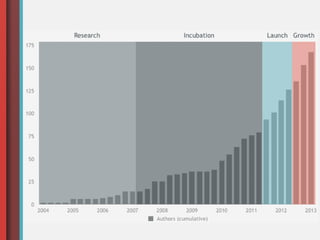

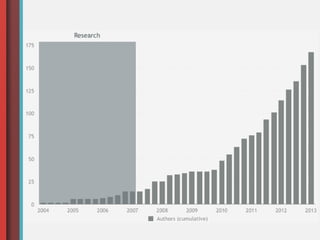

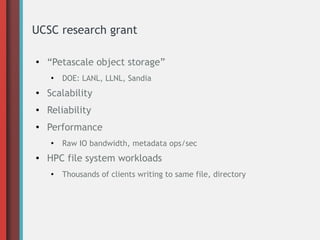

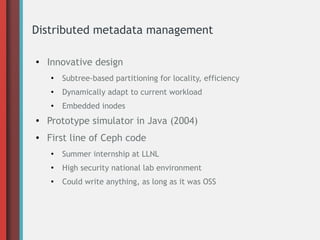

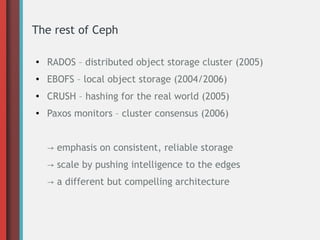

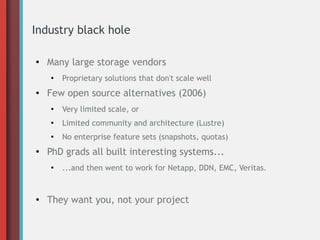

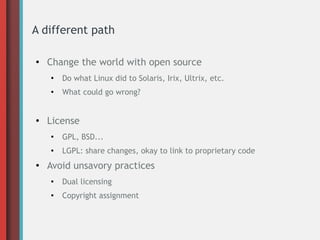

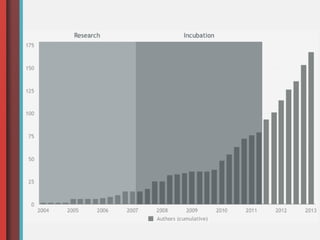

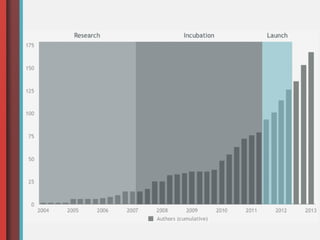

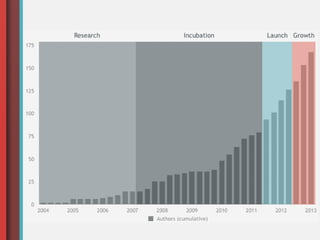

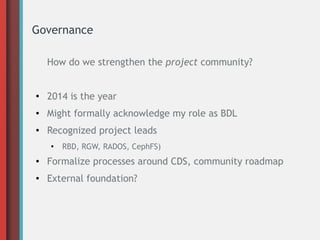

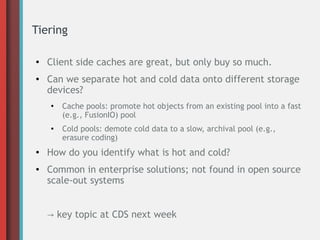

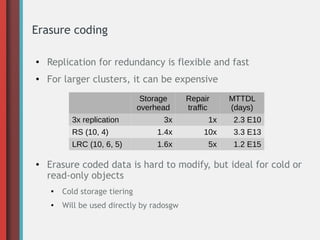

This document provides an overview of the history and development of Ceph, an open-source distributed storage system. It covers Sage Weil's initial research that led to Ceph's creation, Ceph's incubation at DreamHost, the launch of Inktank to support Ceph's development and adoption, and the current state of Ceph, including its growing community and its use in production deployments. It also outlines Weil's vision for Ceph's future: improving governance, adding new technologies such as tiering and erasure coding, and expanding Ceph's role in areas such as big data and the enterprise storage market.