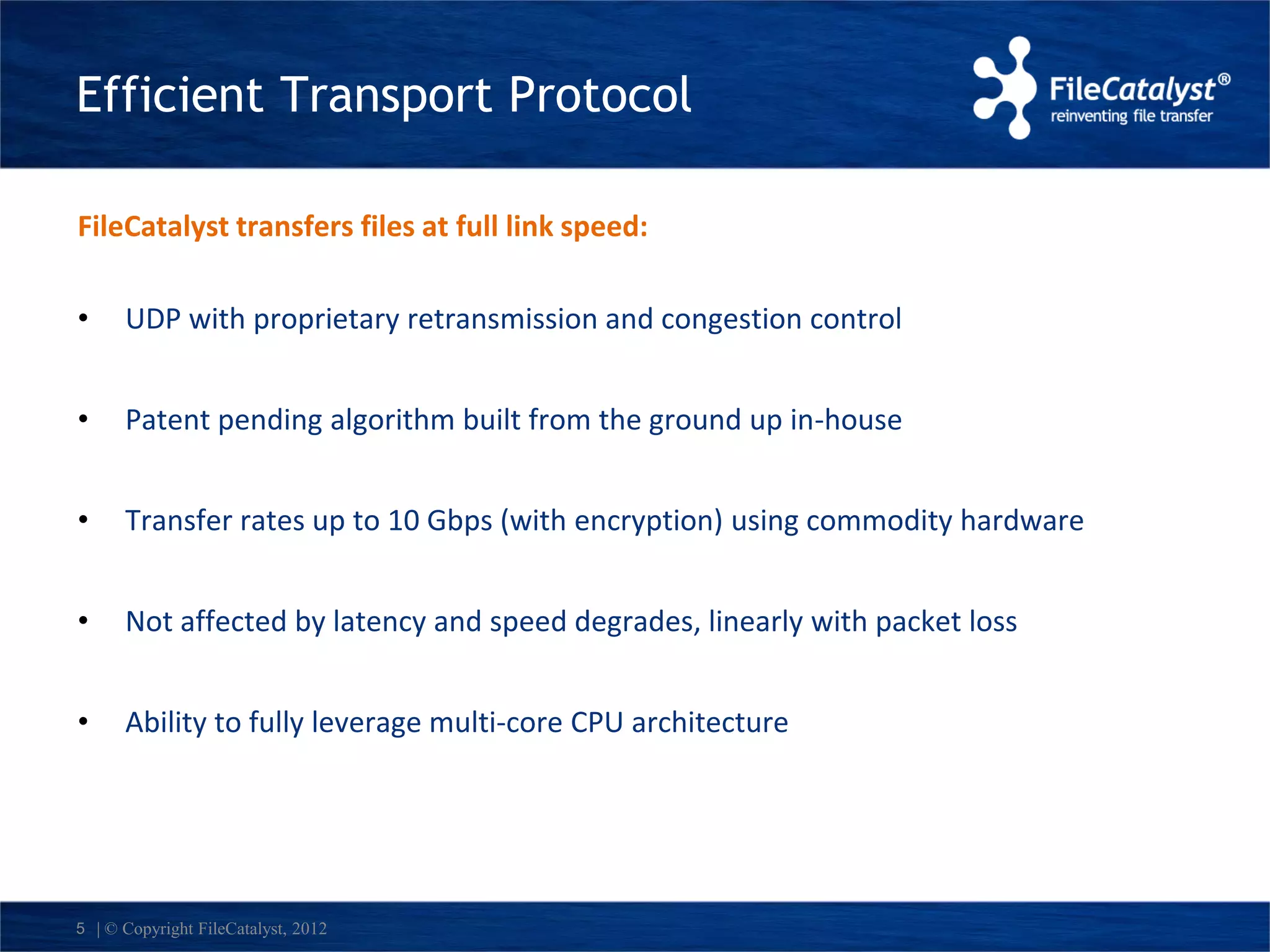

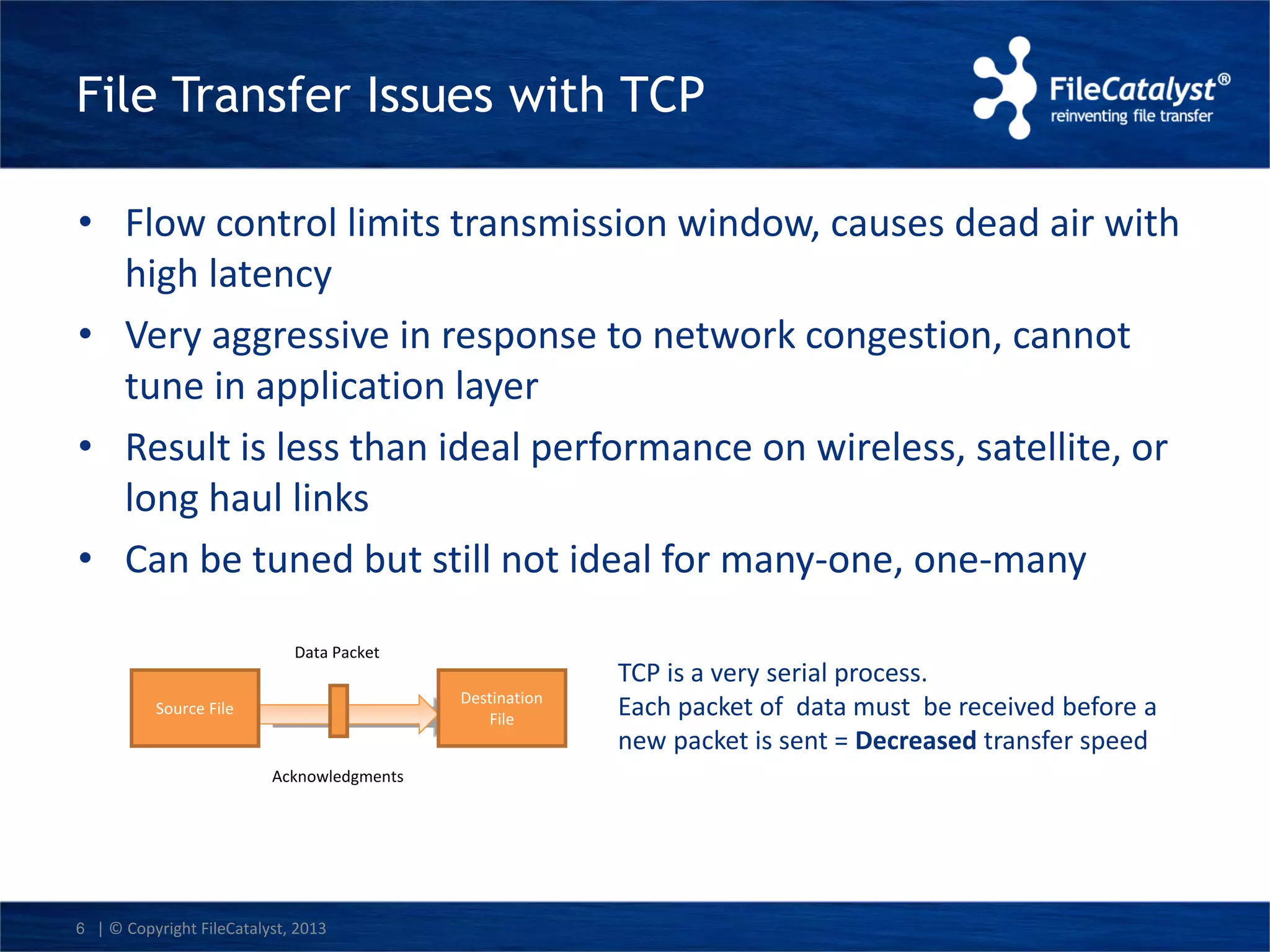

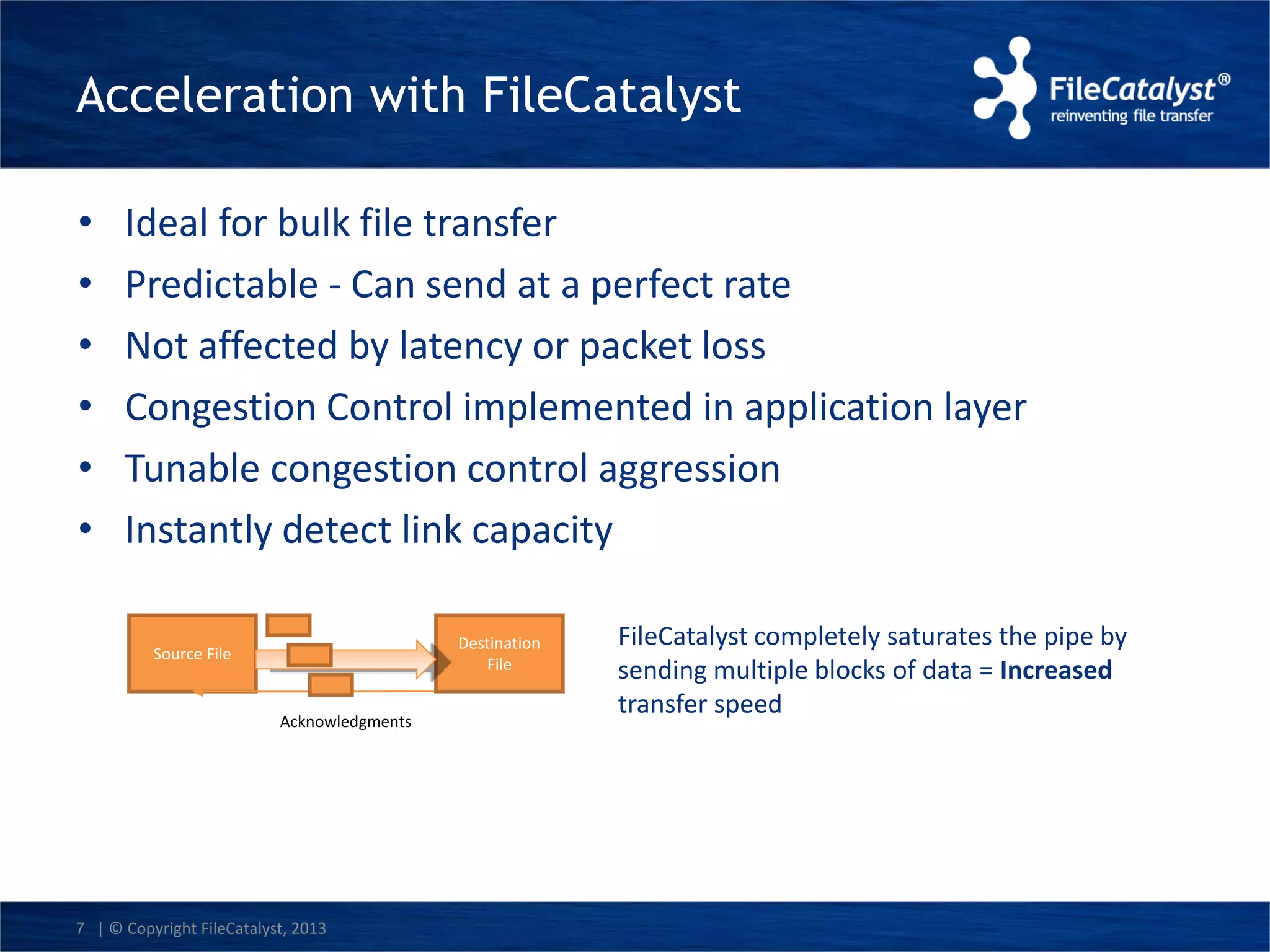

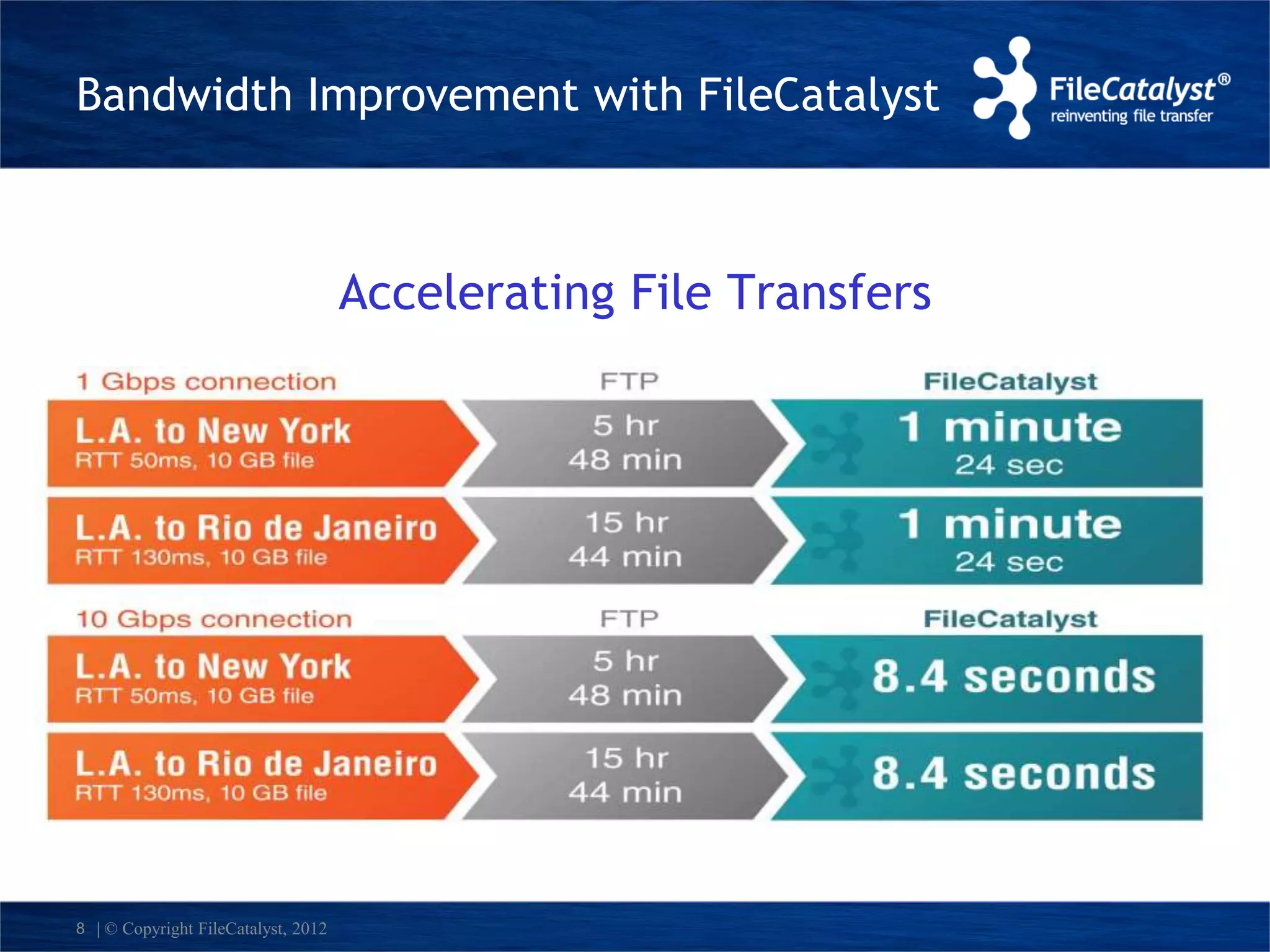

This document describes FileCatalyst's solution for accelerating LAN file transfers using a unique UDP-based approach, which achieves higher transfer speeds than traditional TCP methods. It outlines common problems with TCP, such as flow control limitations and the way it reacts to network congestion, and presents FileCatalyst's reliability and security benefits for large data transfers. It also includes a demonstration of the FileCatalyst server and its advantages over existing technologies.
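
To make the TCP flow control limitation concrete, the sketch below computes the standard upper bound on the throughput of a single TCP connection: at most one window of data can be in flight per round trip, so throughput cannot exceed window size divided by round-trip time. The function name and the sample window and latency values are assumptions chosen for illustration; they are not taken from the FileCatalyst material.

```python
def max_tcp_throughput_mbps(window_bytes: int, rtt_ms: float) -> float:
    """Upper bound on single-connection TCP throughput in Mbit/s.

    A sender may have at most one window of unacknowledged data in
    flight per round trip, so throughput <= window / RTT.
    """
    if window_bytes <= 0 or rtt_ms <= 0:
        raise ValueError("window_bytes and rtt_ms must be positive")
    bits_per_window = window_bytes * 8
    rtt_seconds = rtt_ms / 1000.0
    return bits_per_window / rtt_seconds / 1_000_000


if __name__ == "__main__":
    # Hypothetical values: a 64 KiB window (the maximum without window
    # scaling) at several round-trip times.
    window = 64 * 1024
    for rtt in (1, 10, 100):
        bound = max_tcp_throughput_mbps(window, rtt)
        print(f"RTT {rtt:>3} ms: at most {bound:.2f} Mbit/s")
```

With a fixed window, the bound falls in proportion to latency regardless of link capacity, which is the kind of limit a UDP-based transfer protocol with its own flow control is intended to avoid.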