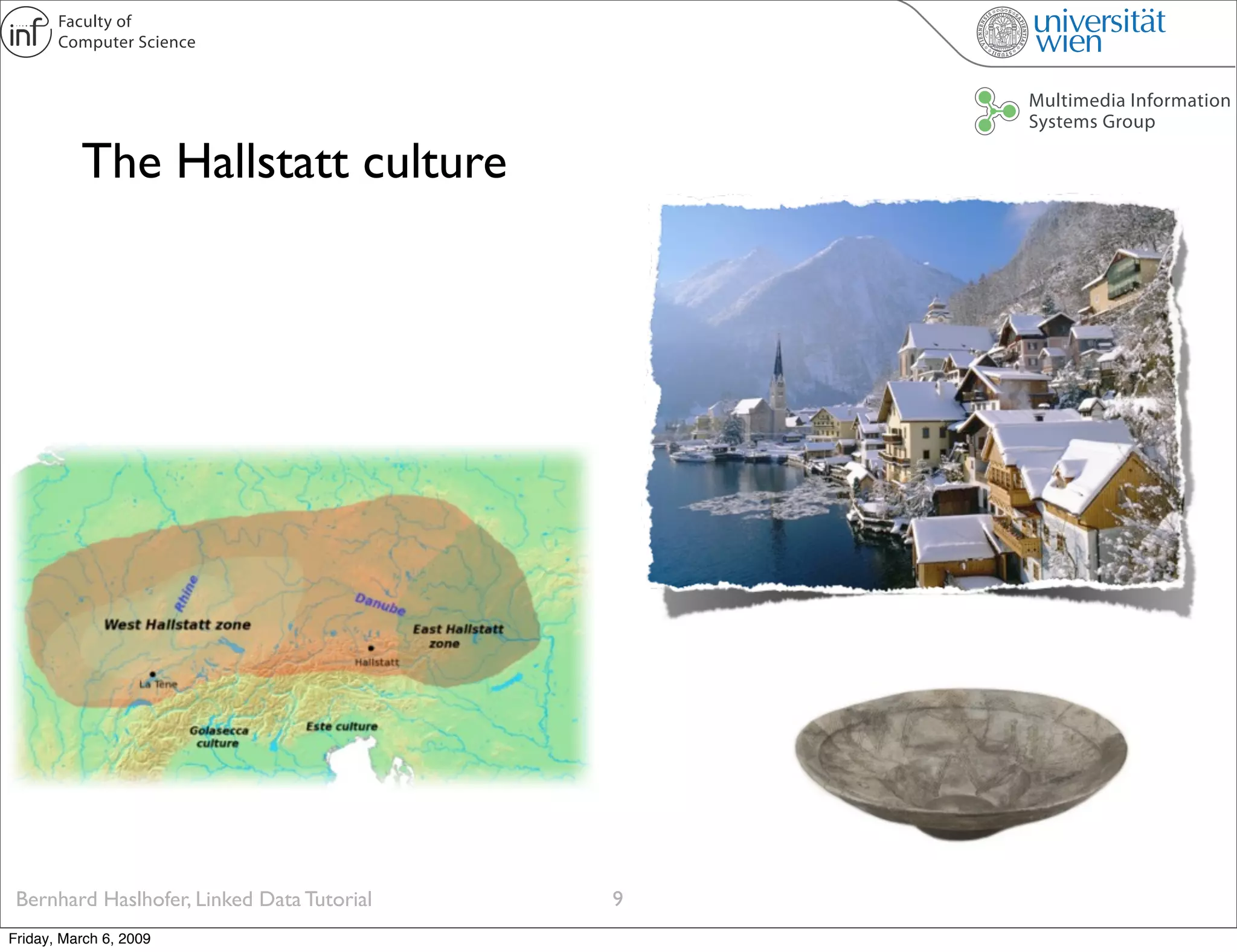

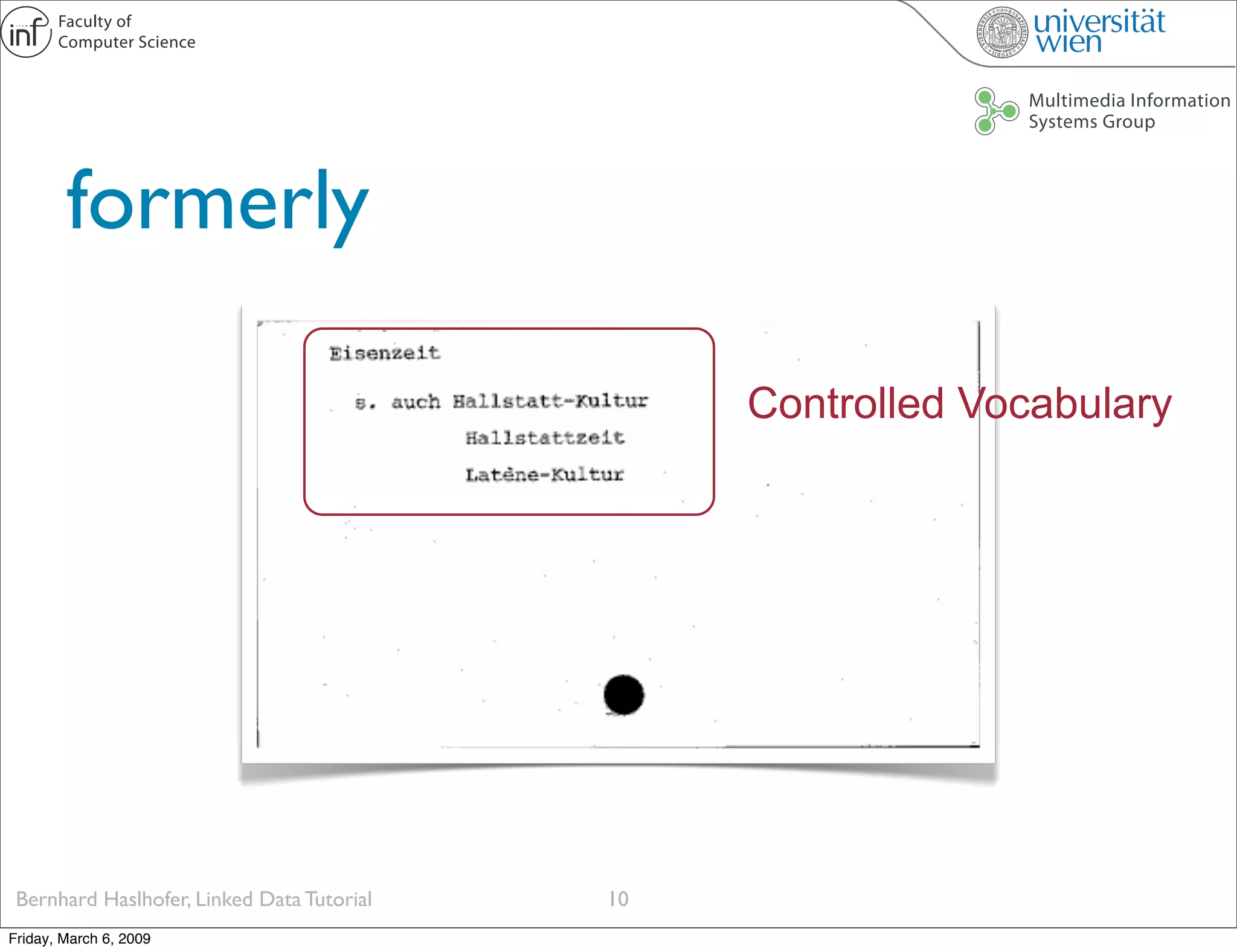

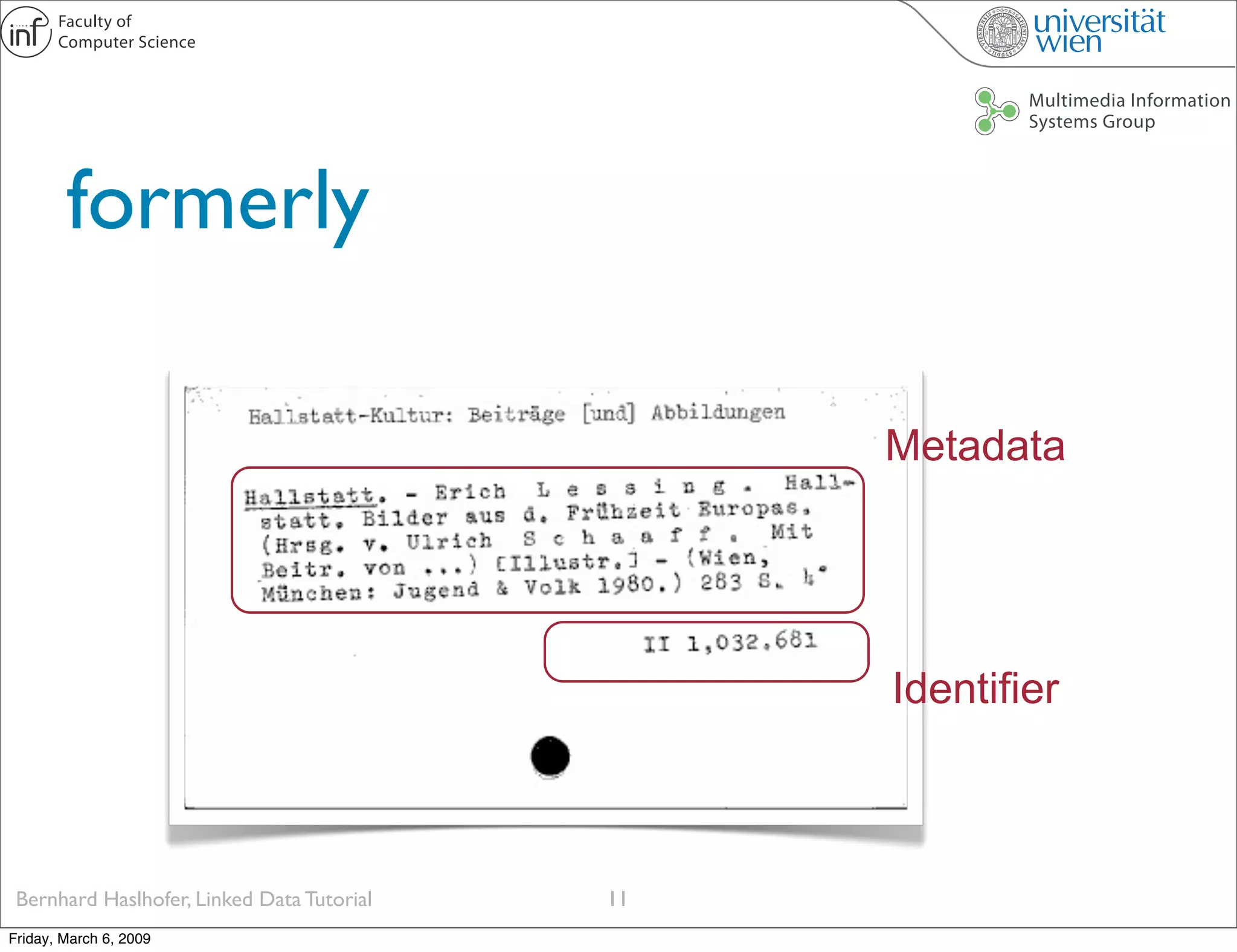

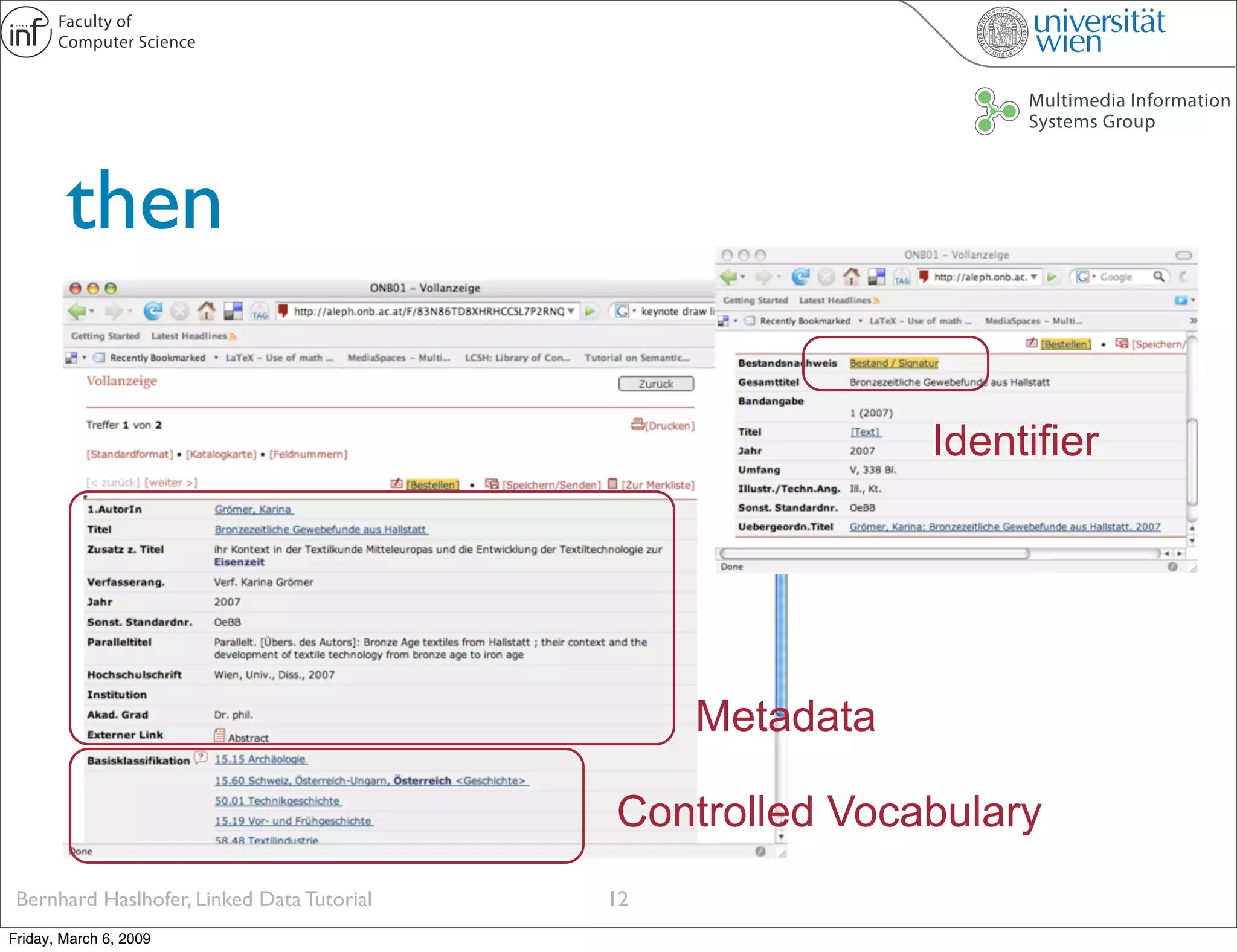

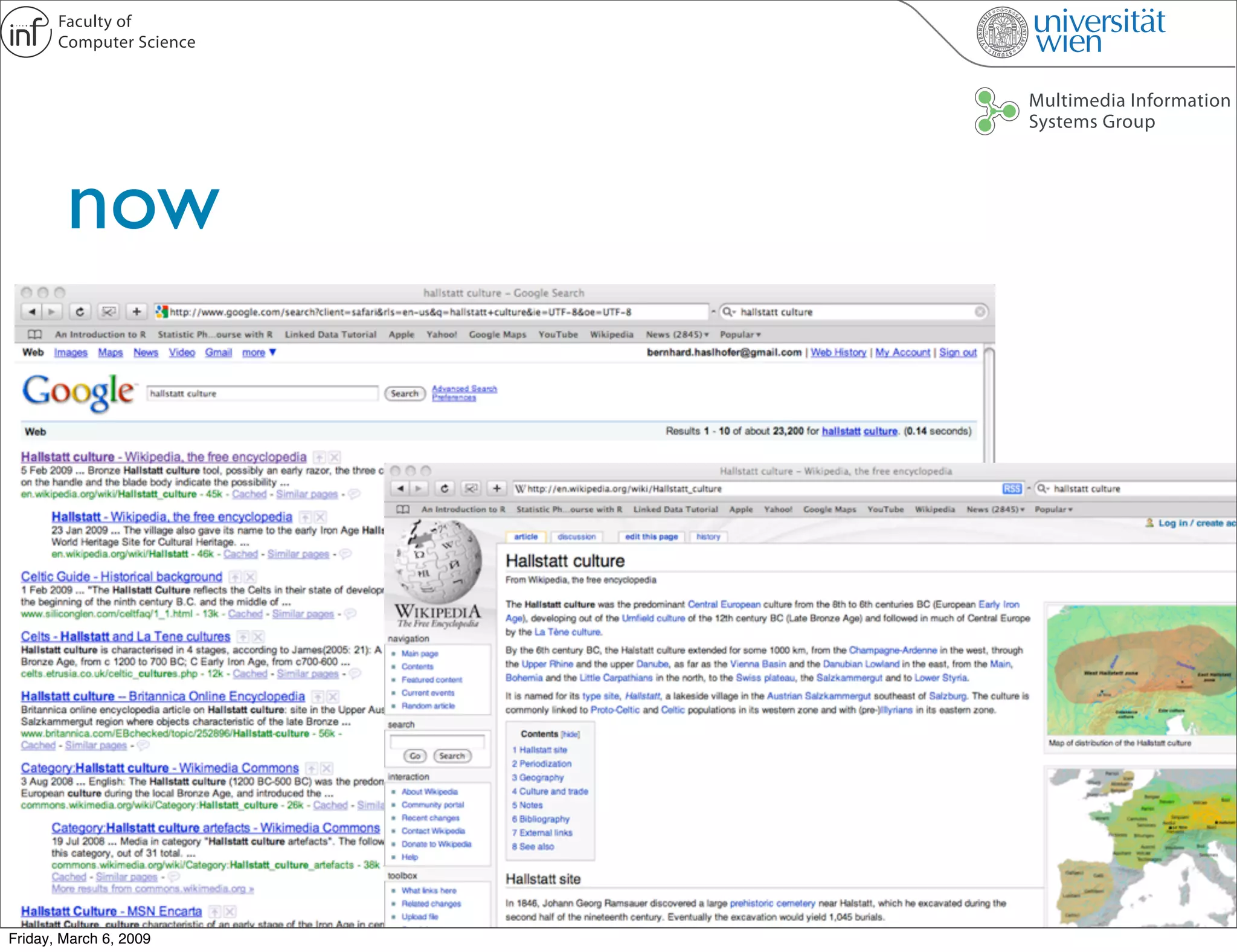

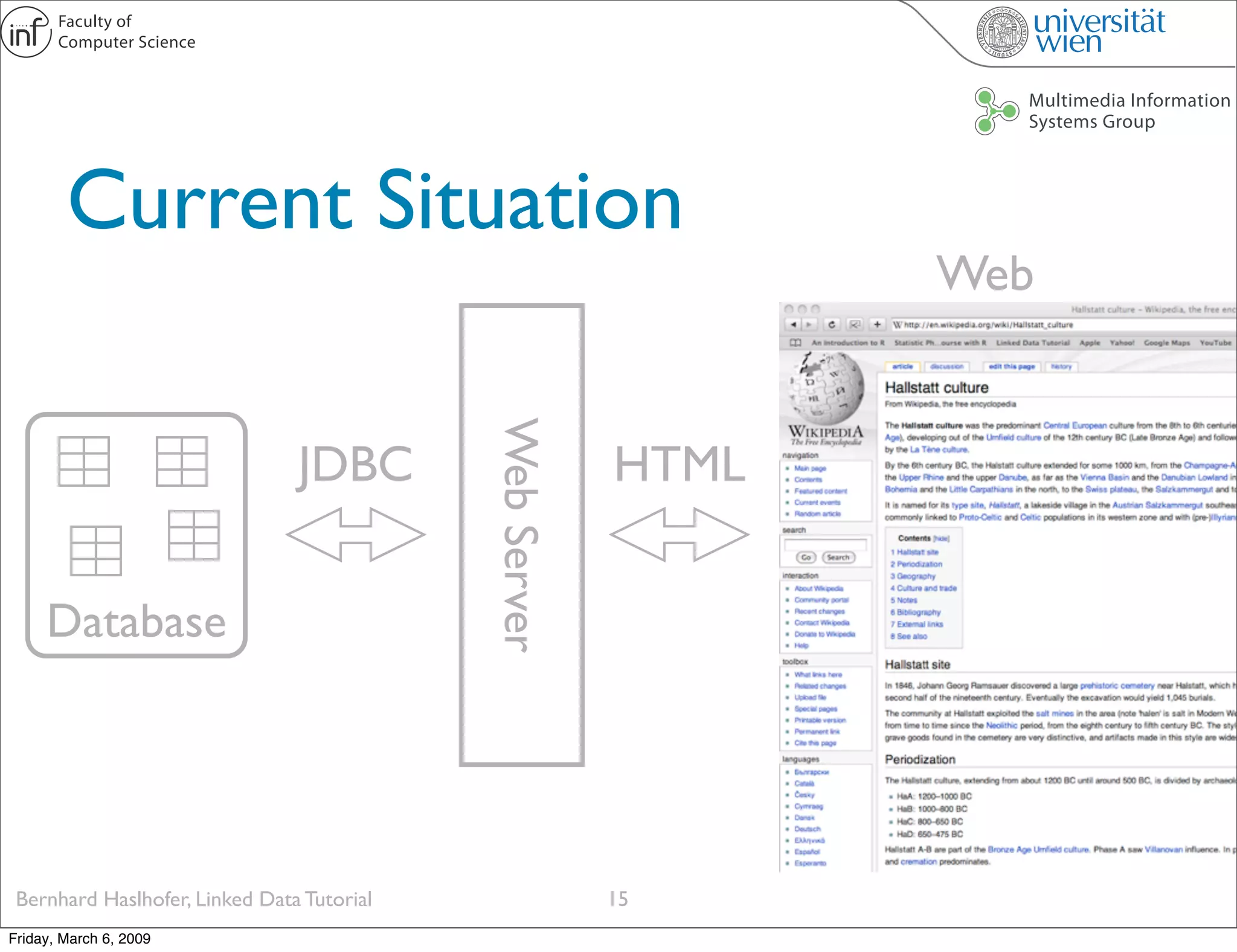

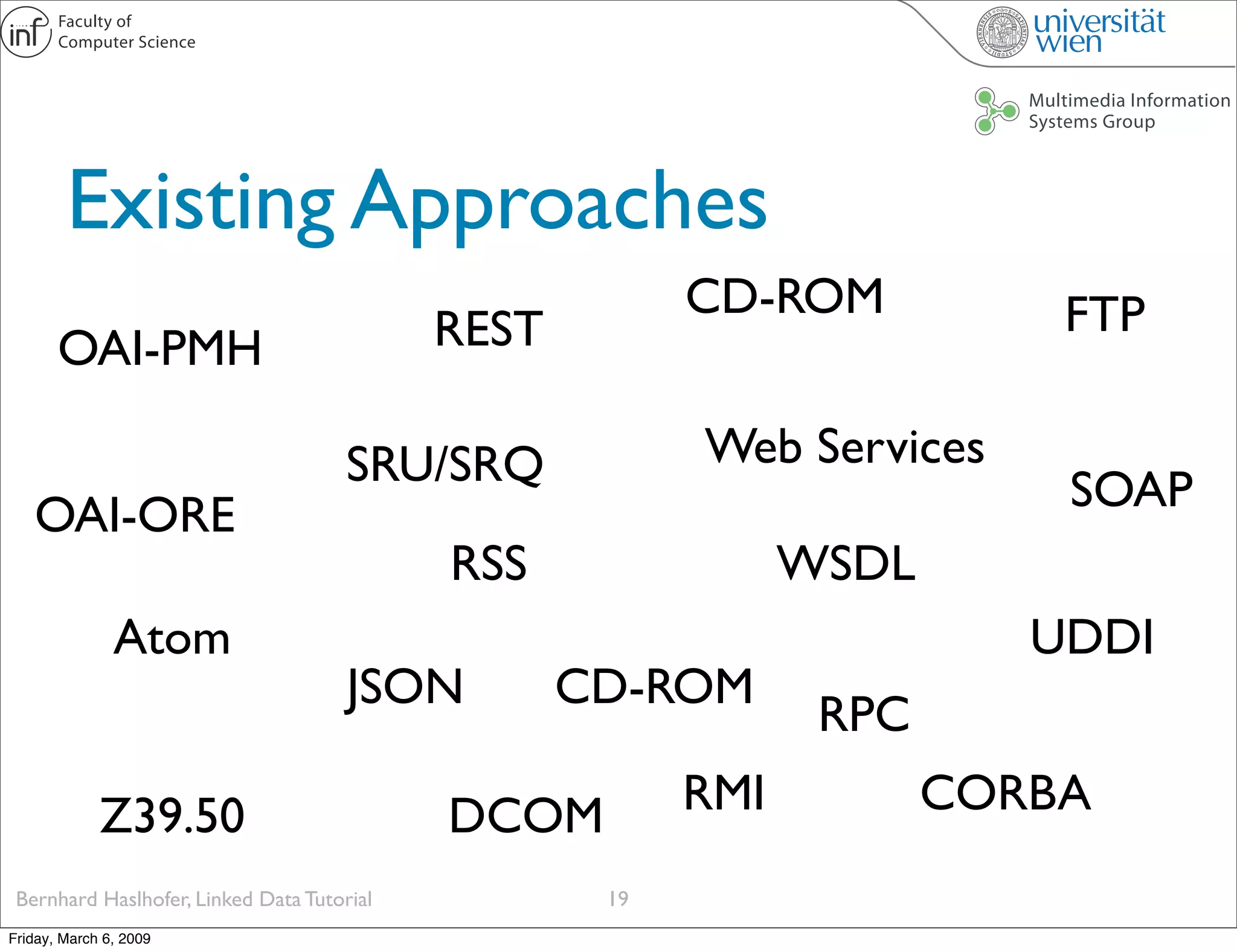

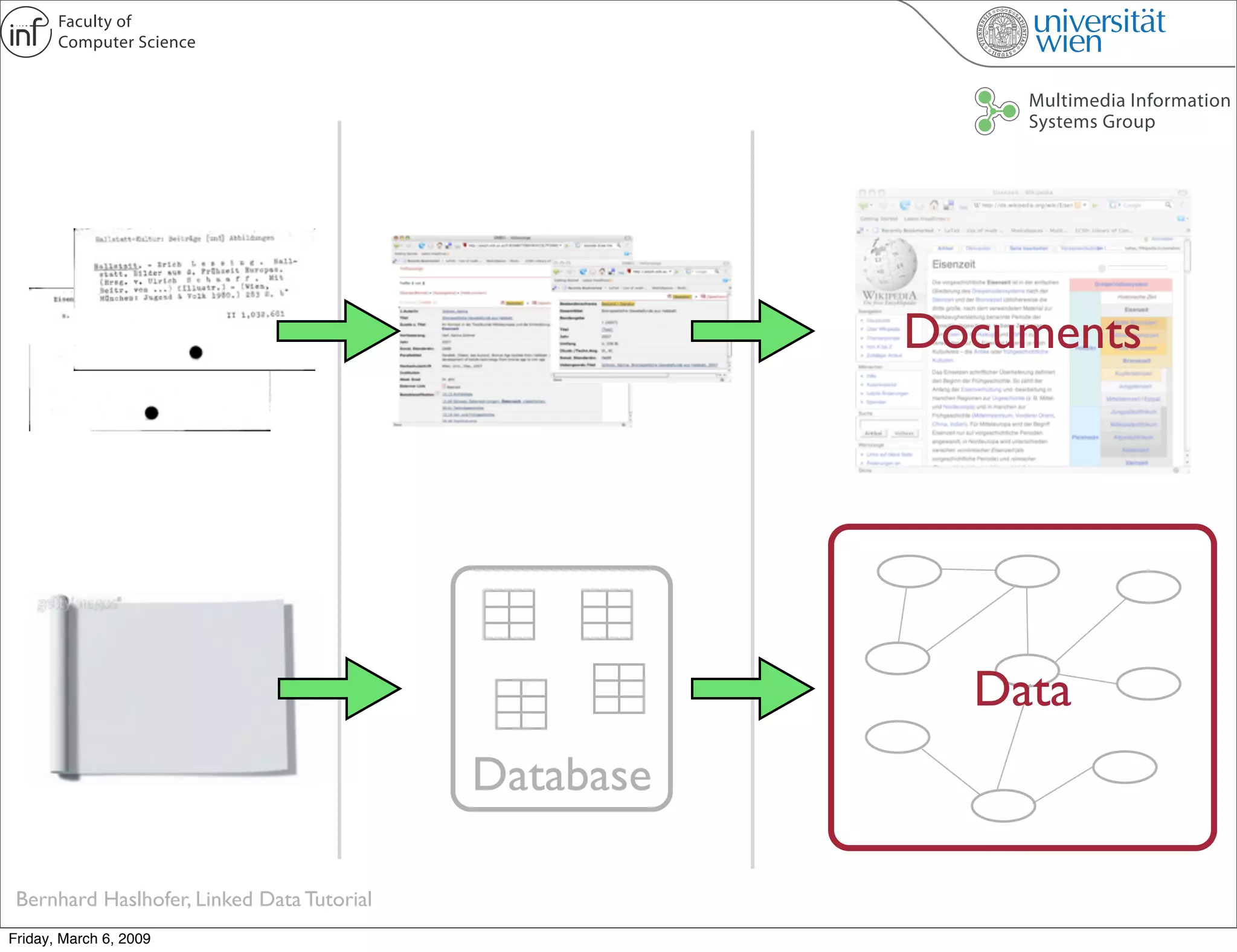

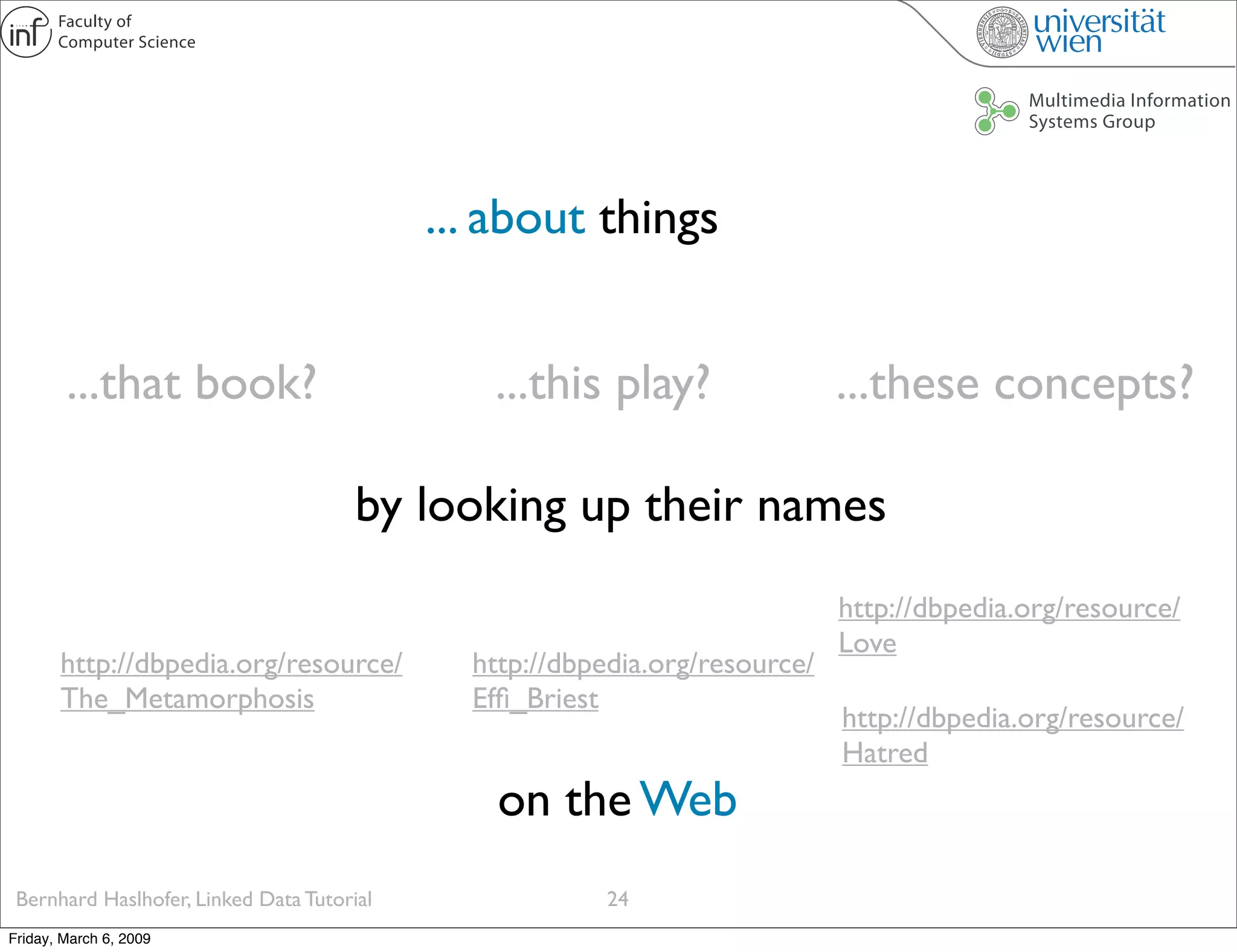

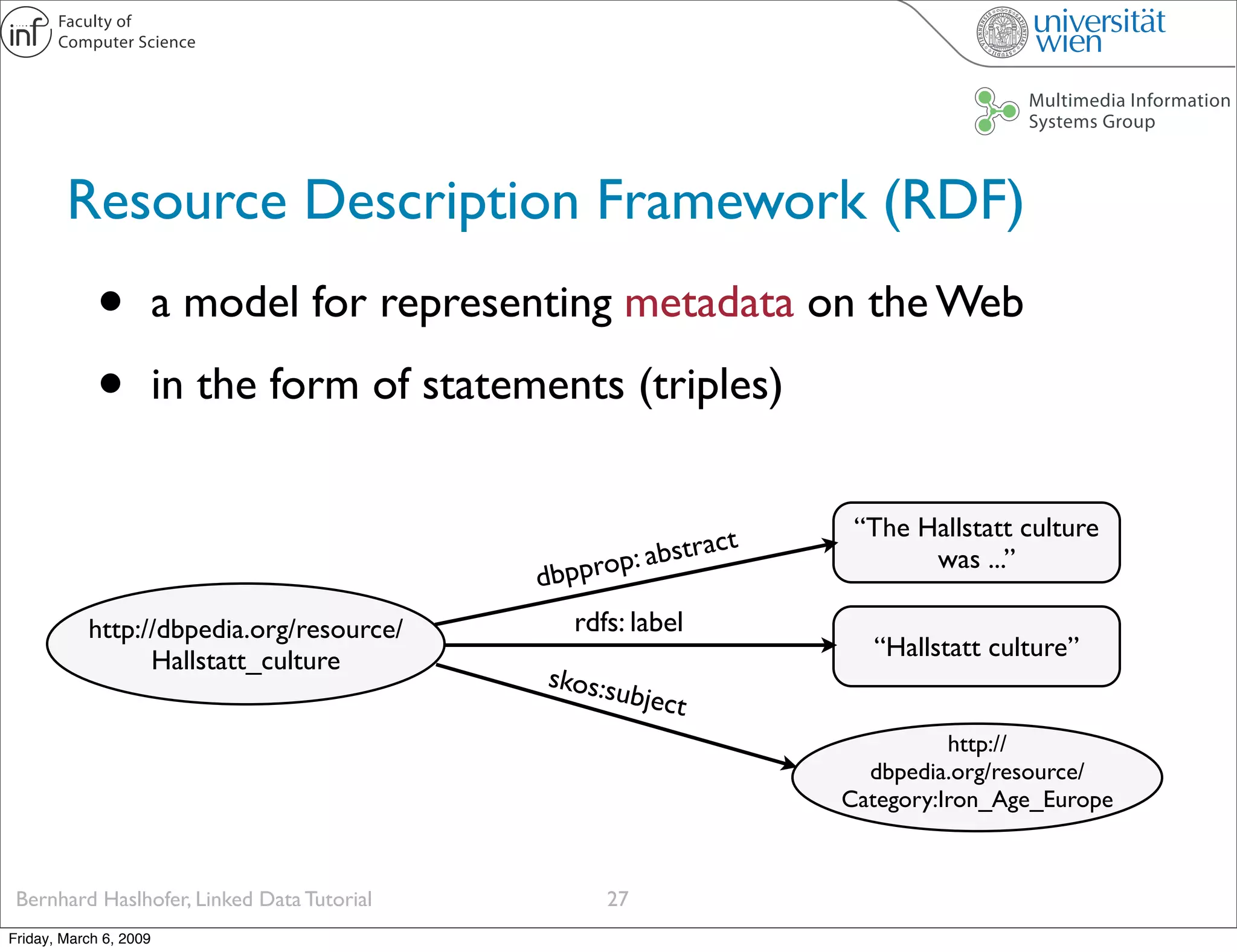

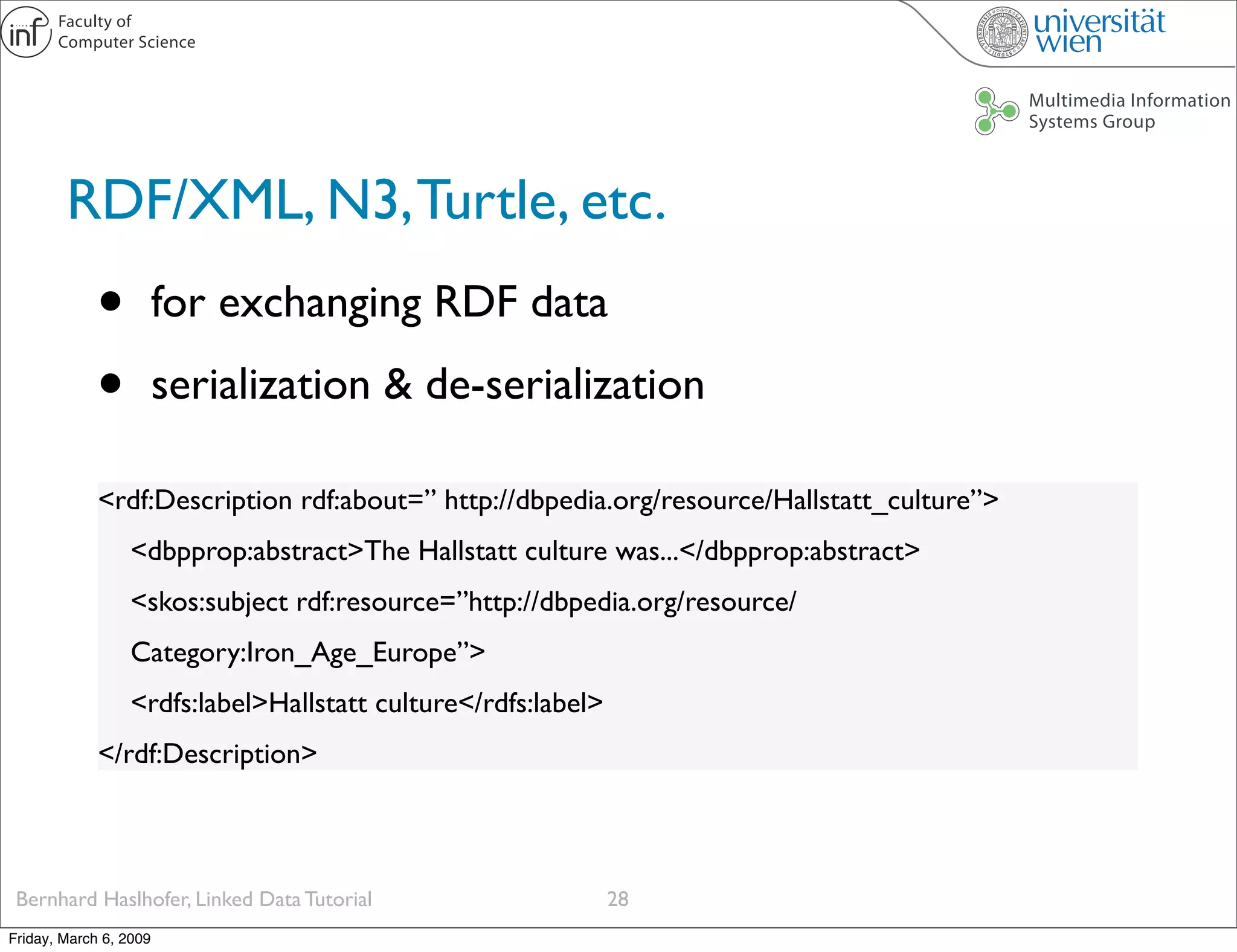

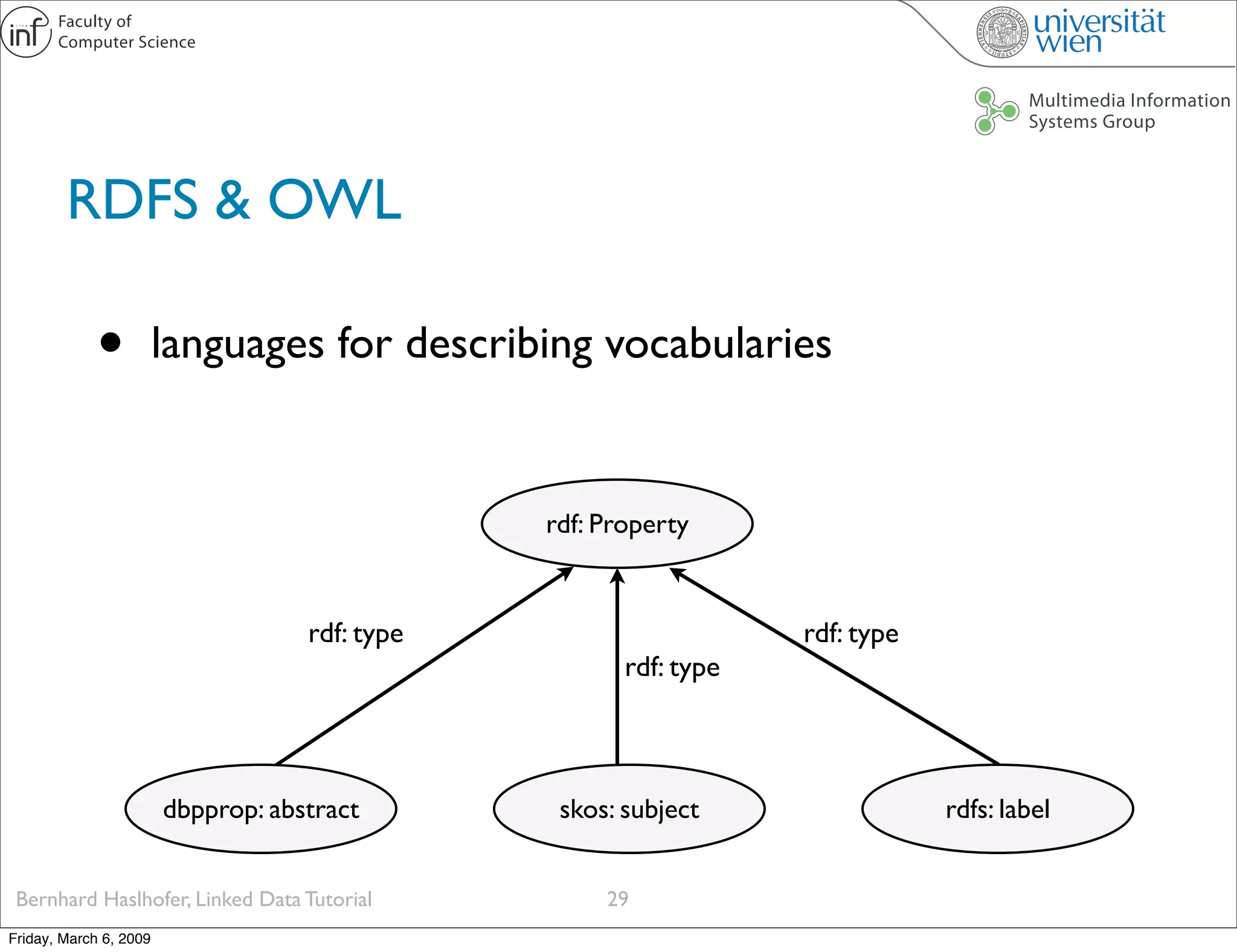

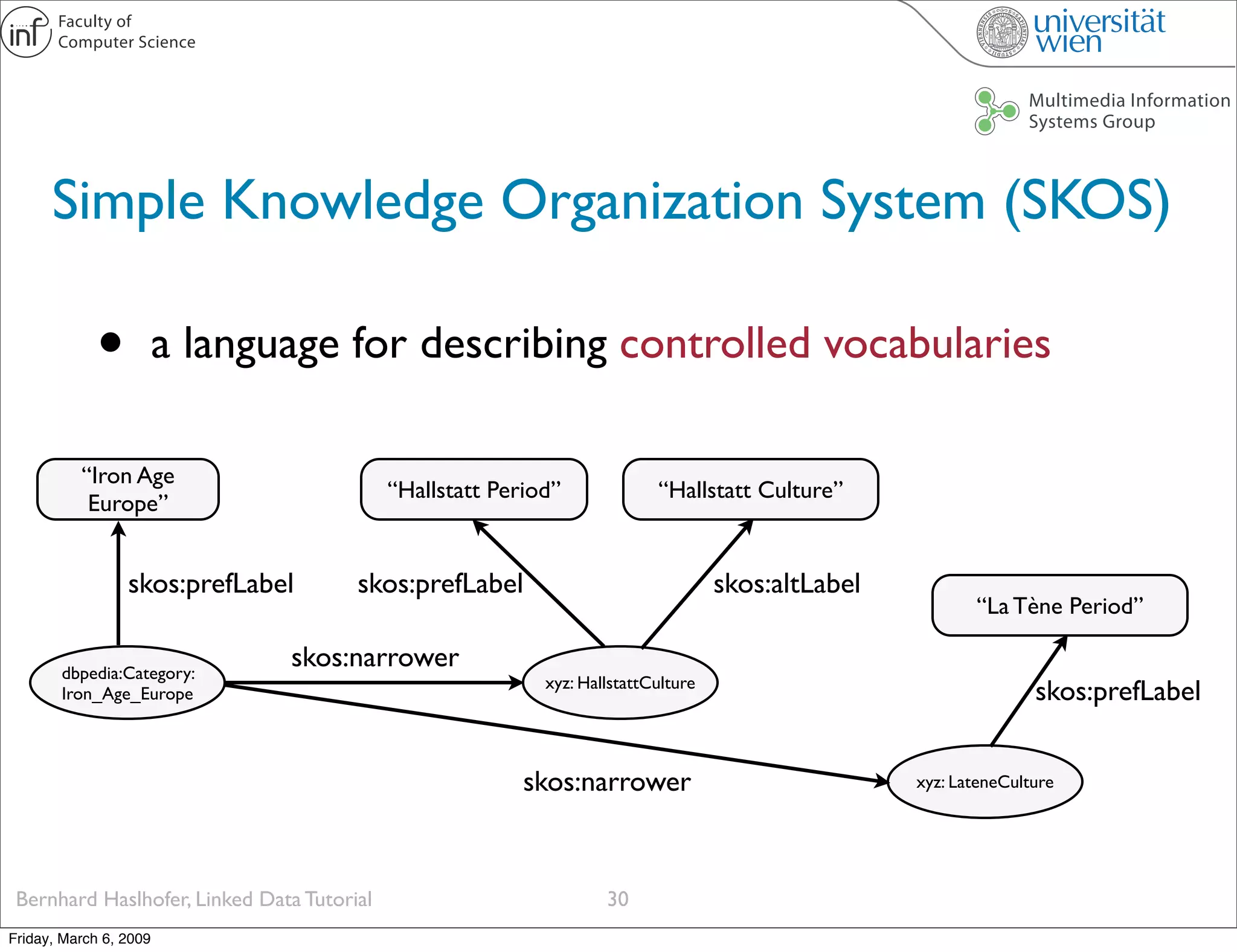

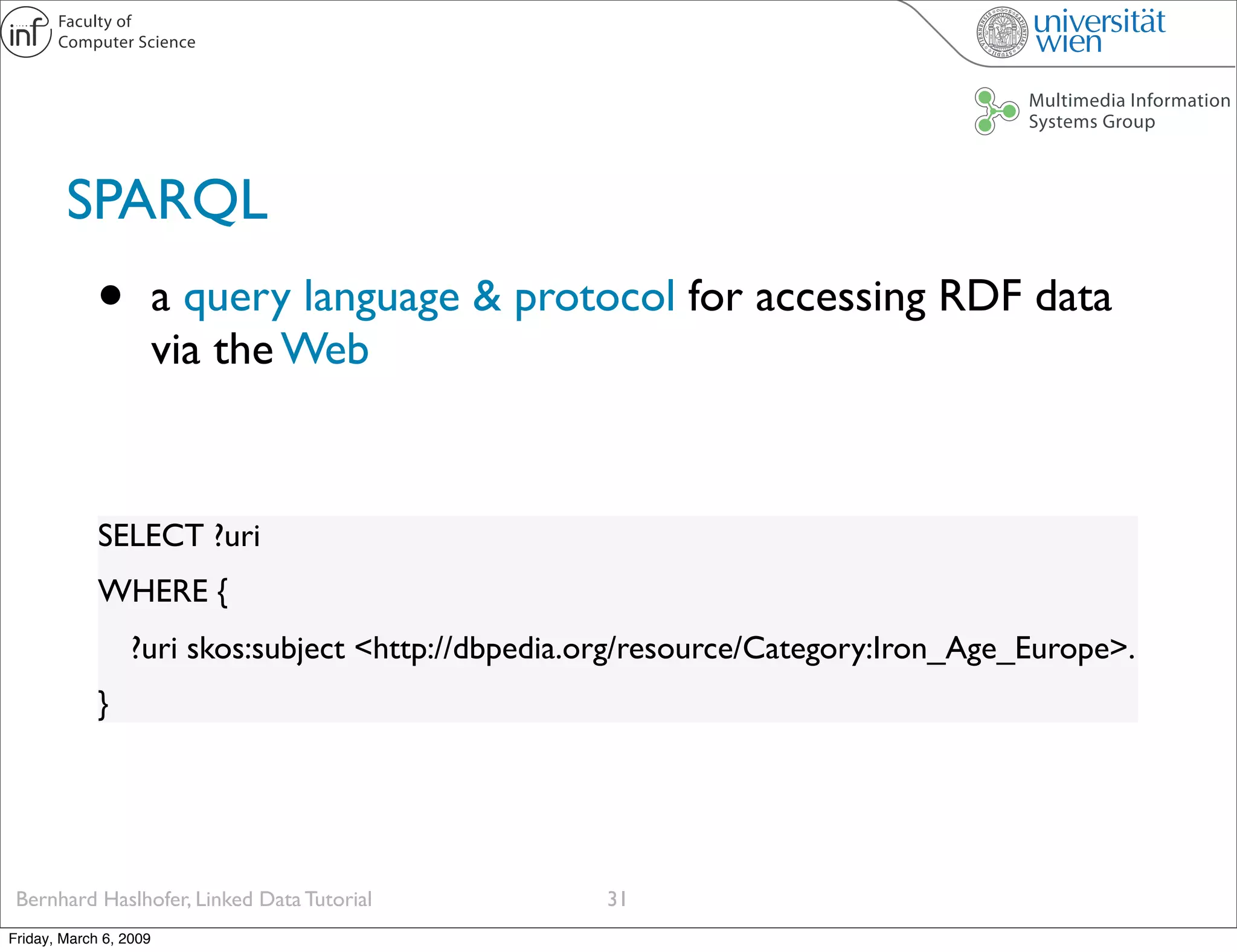

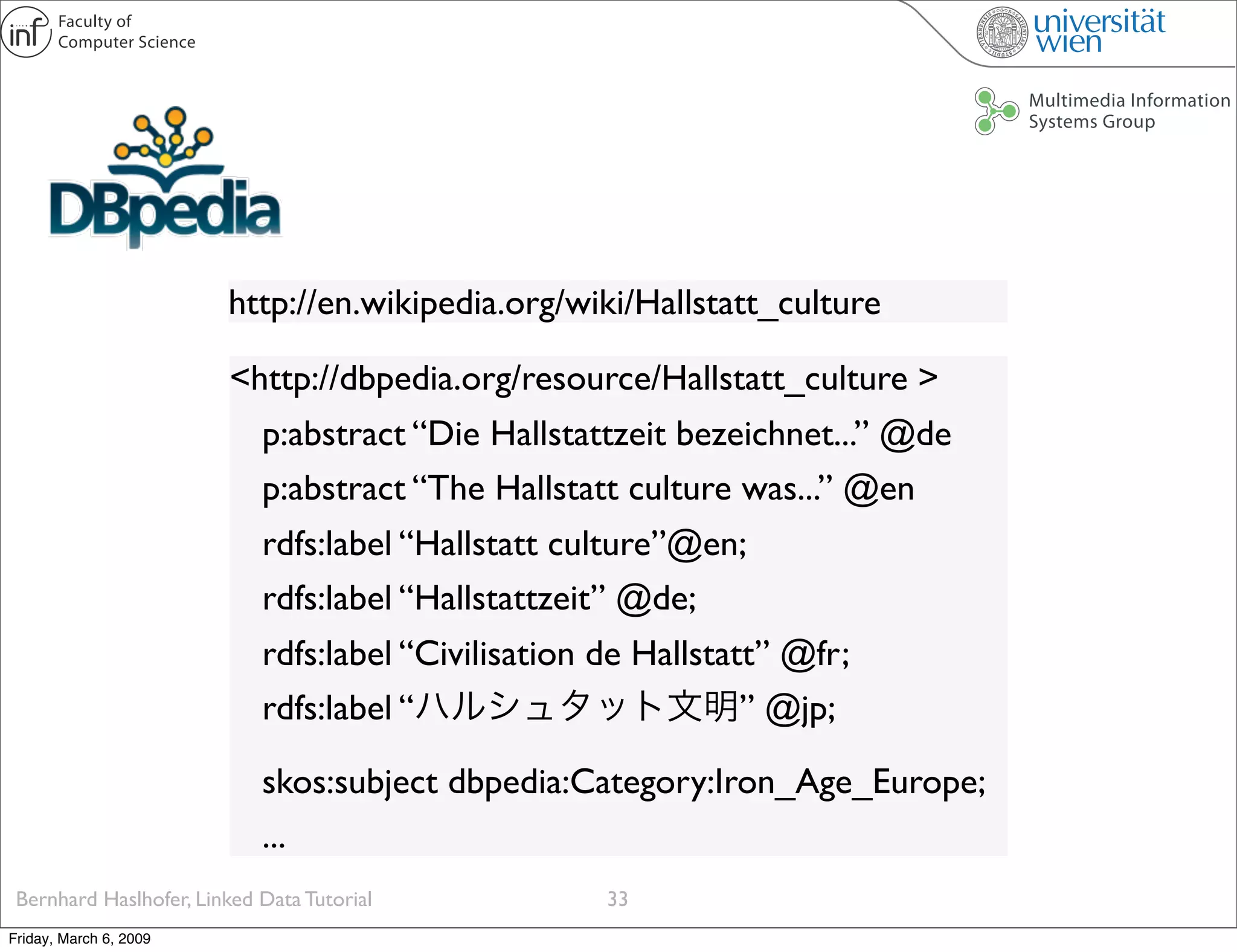

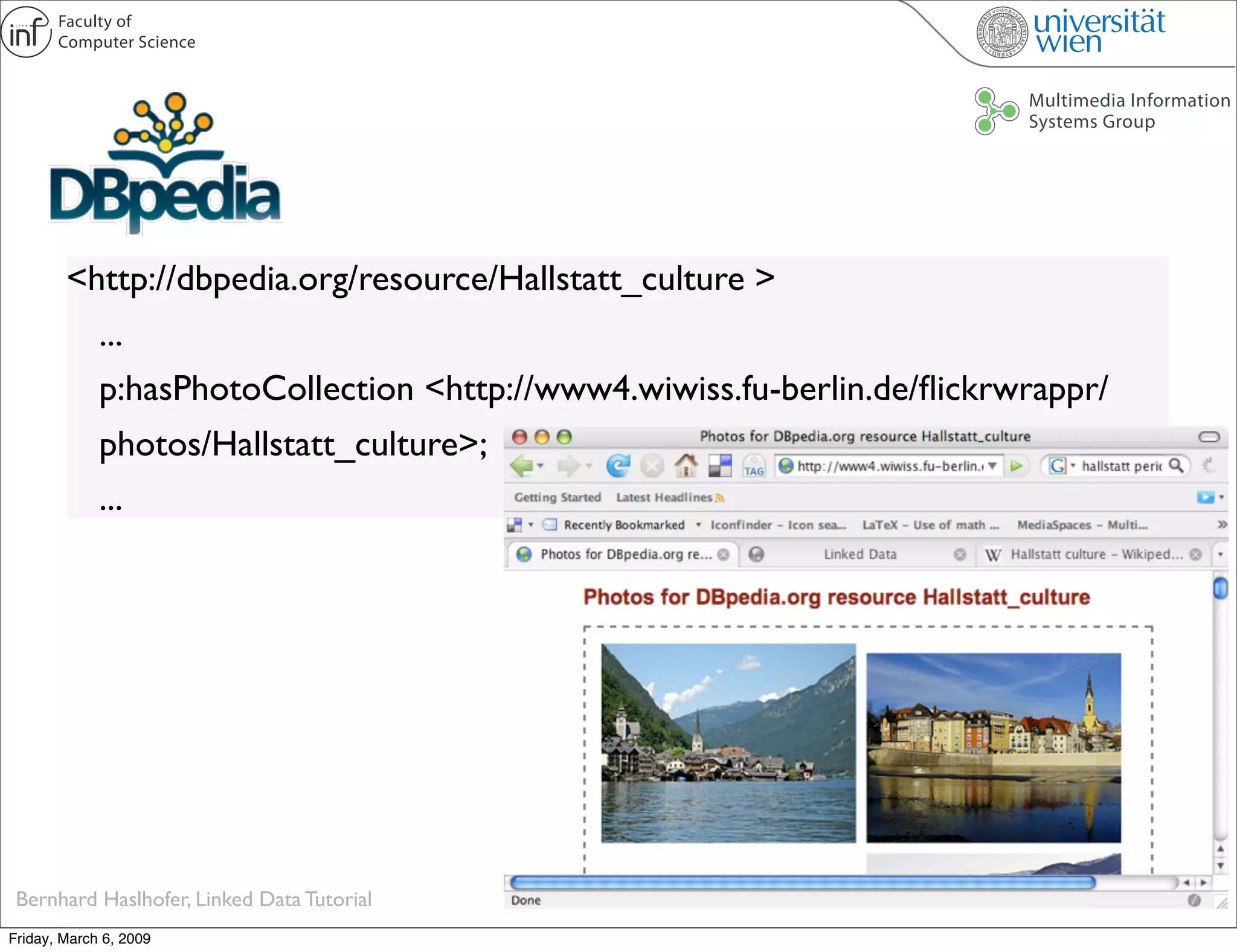

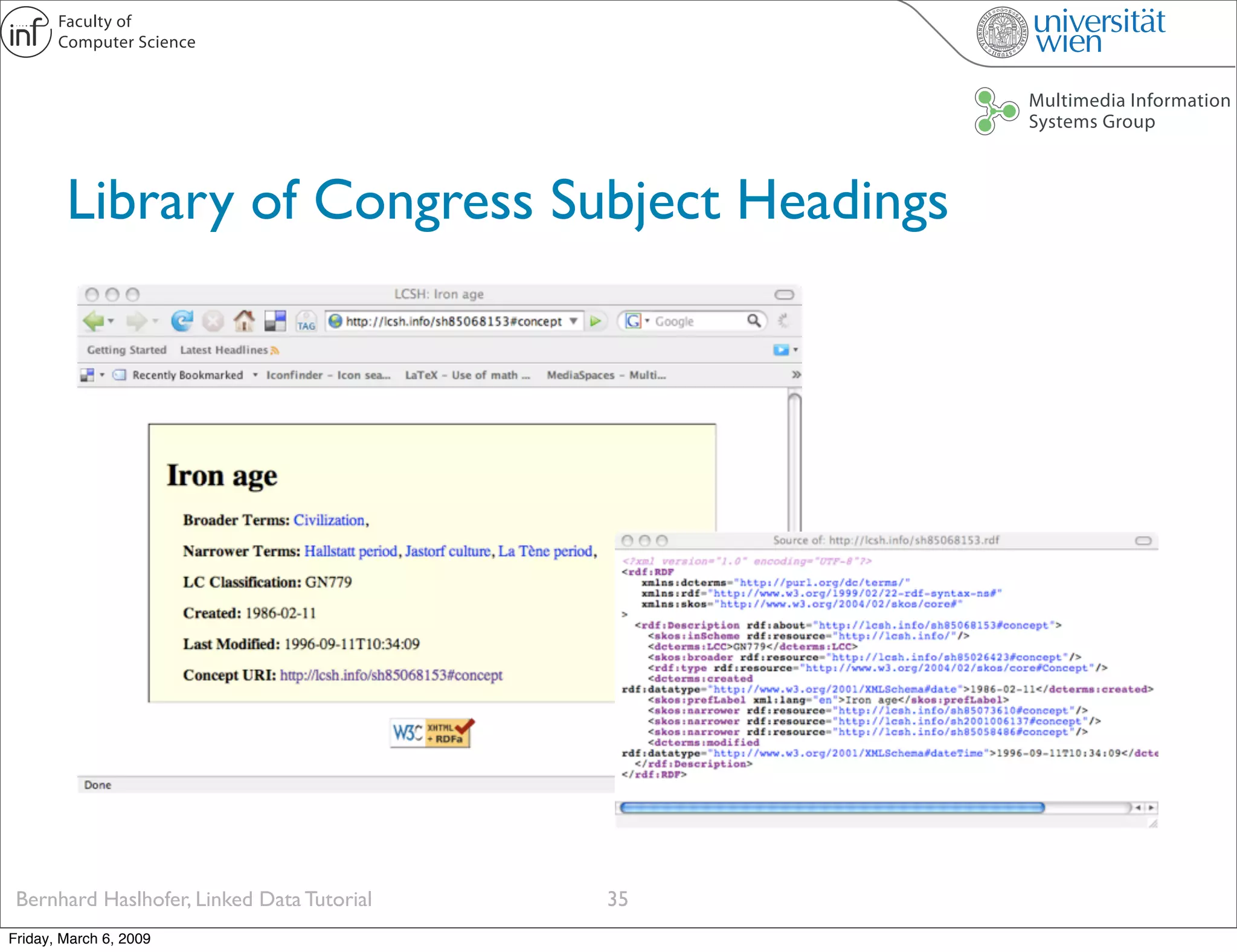

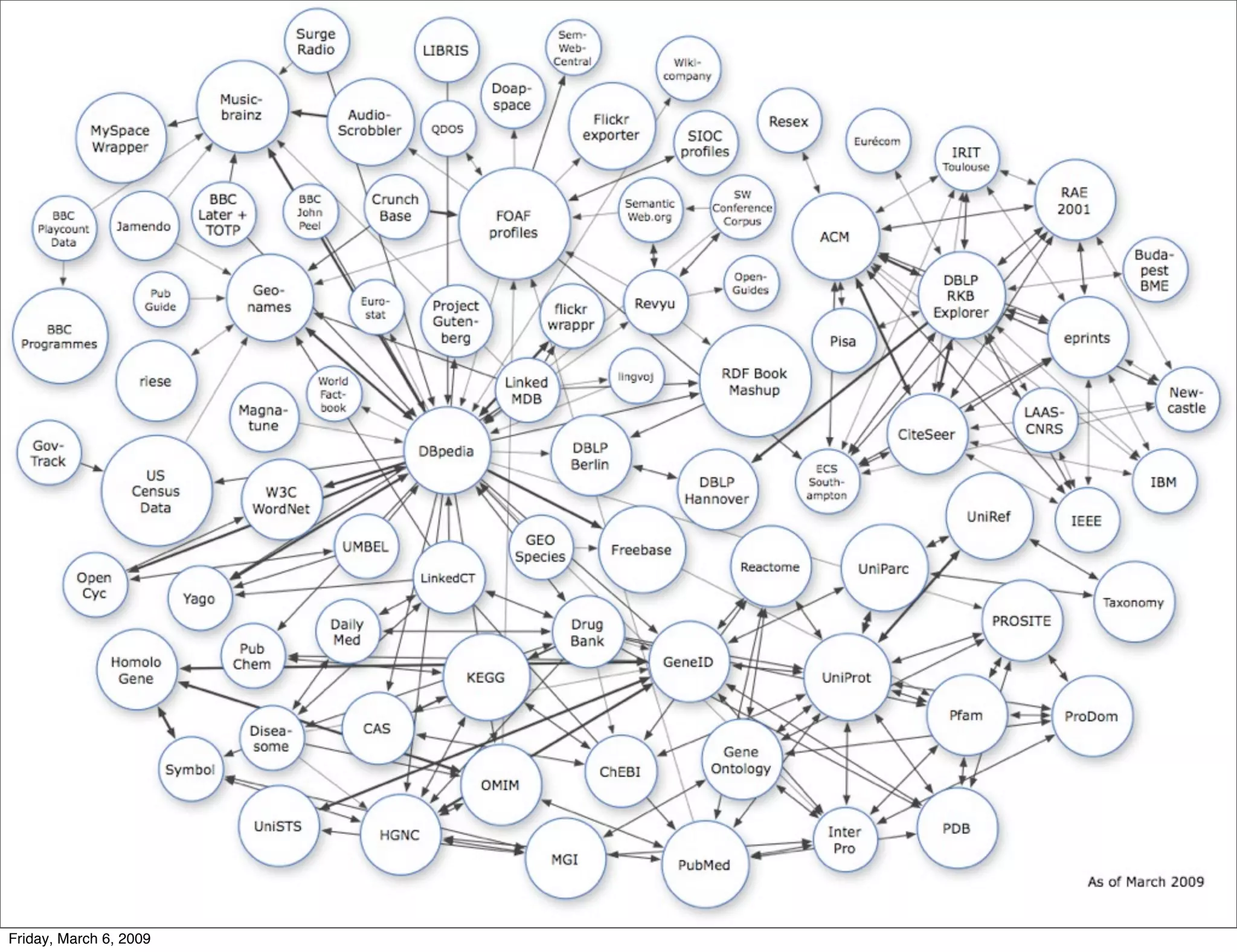

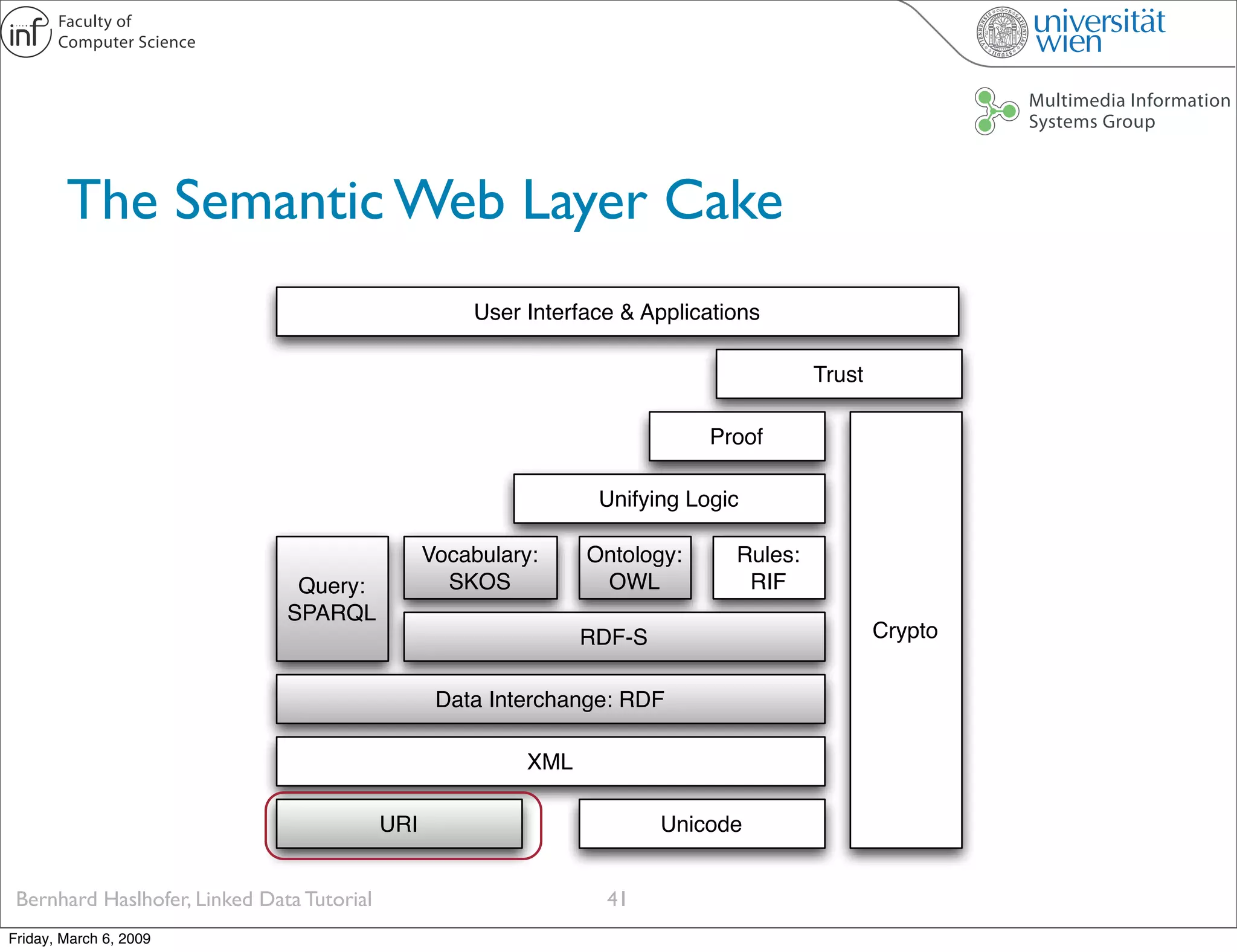

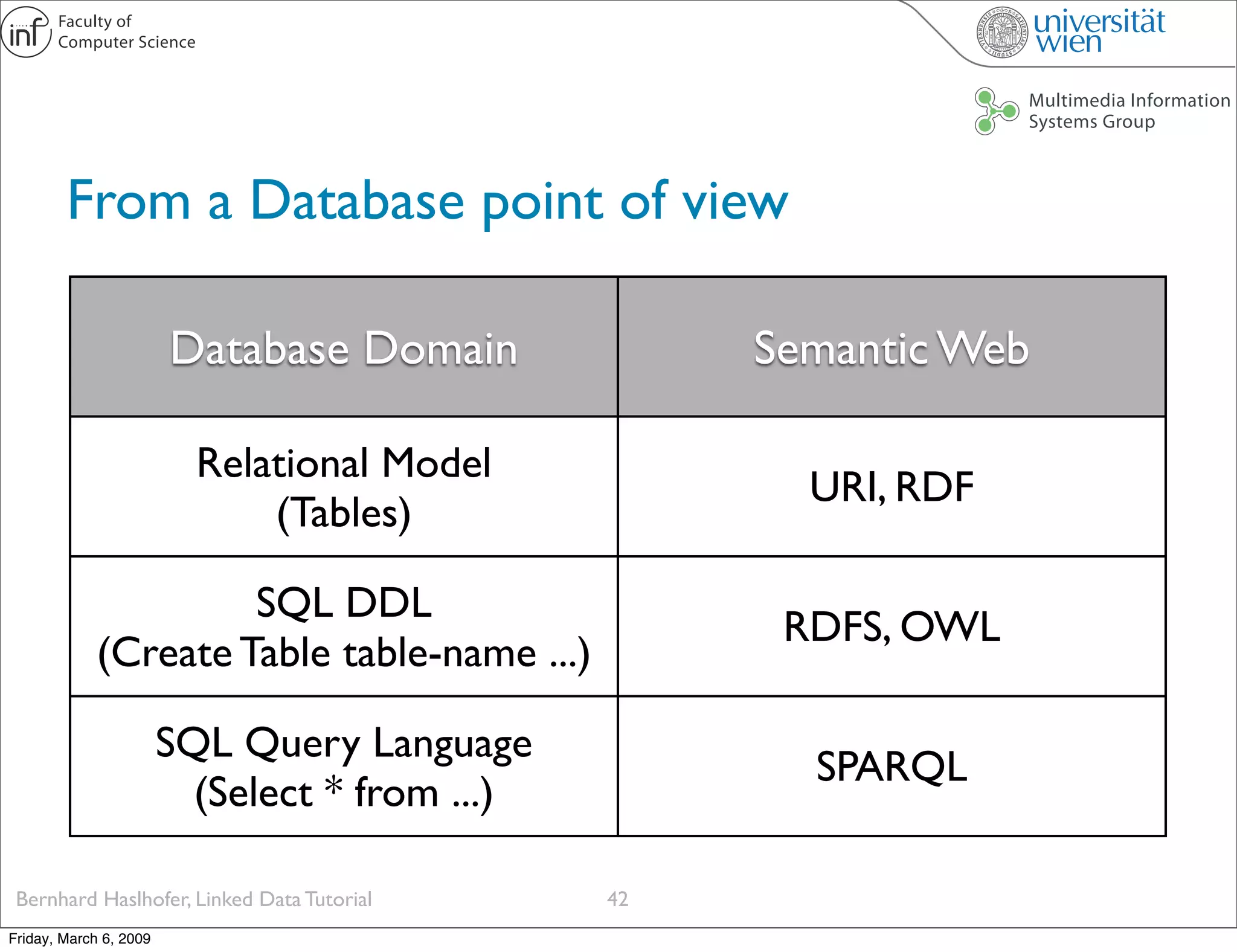

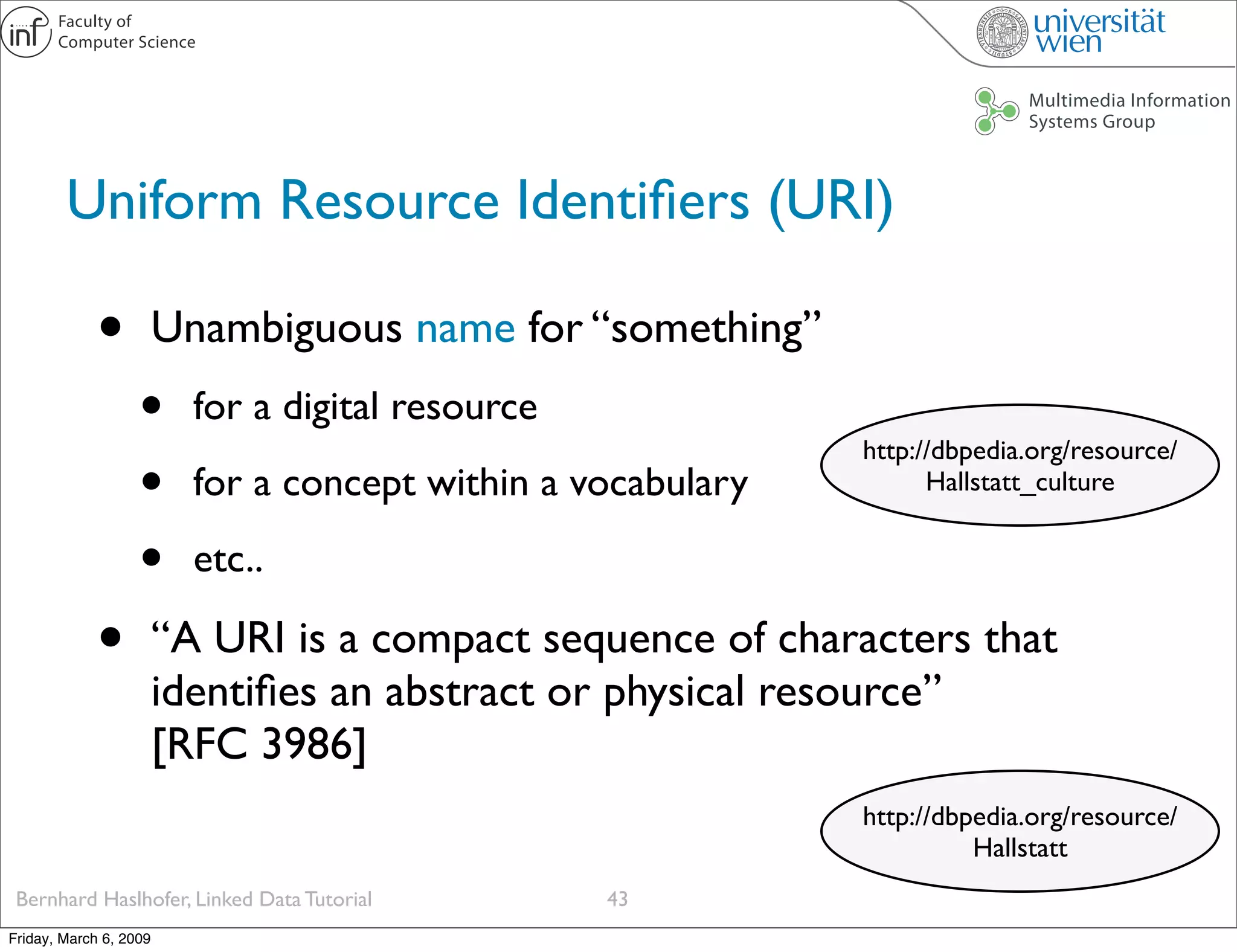

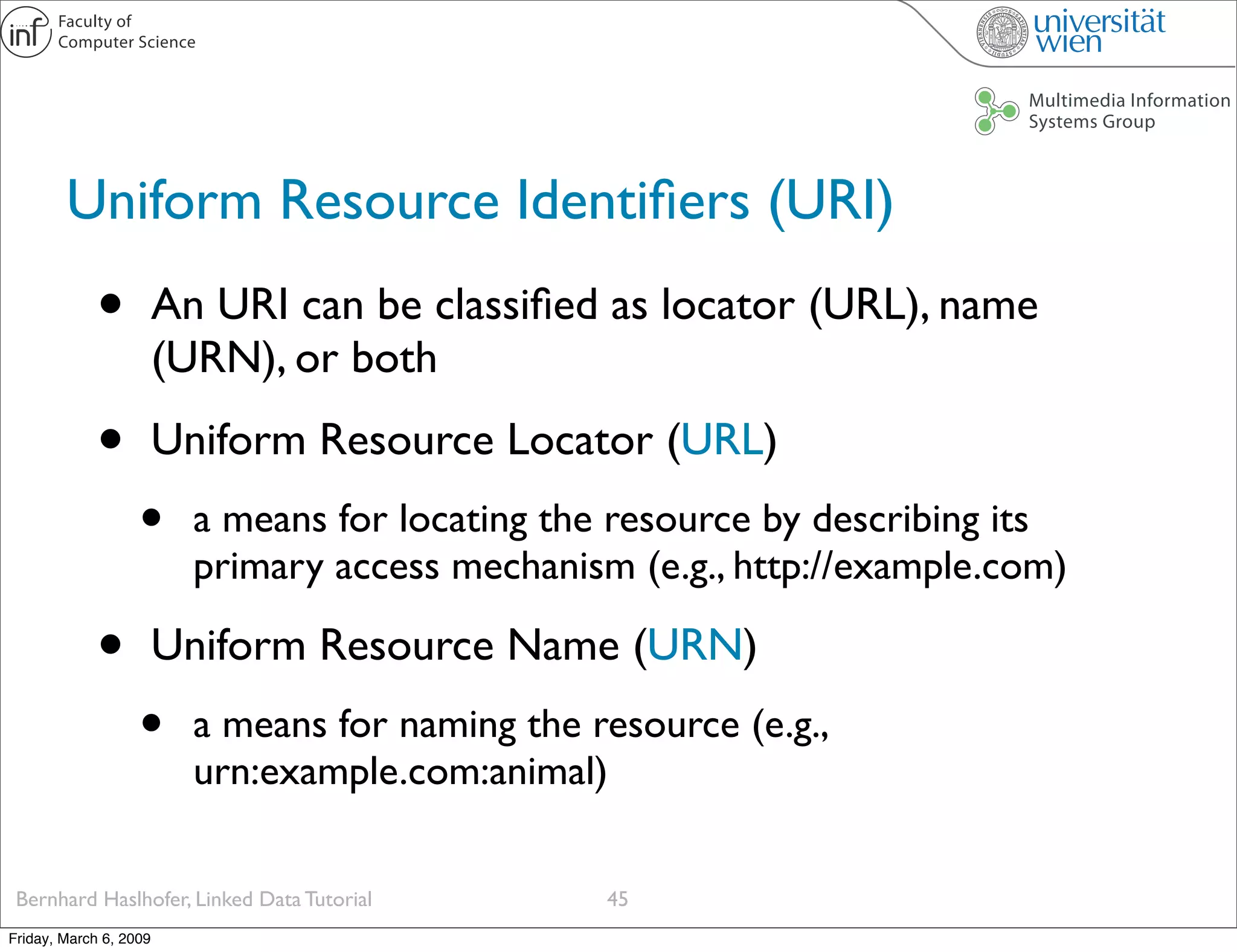

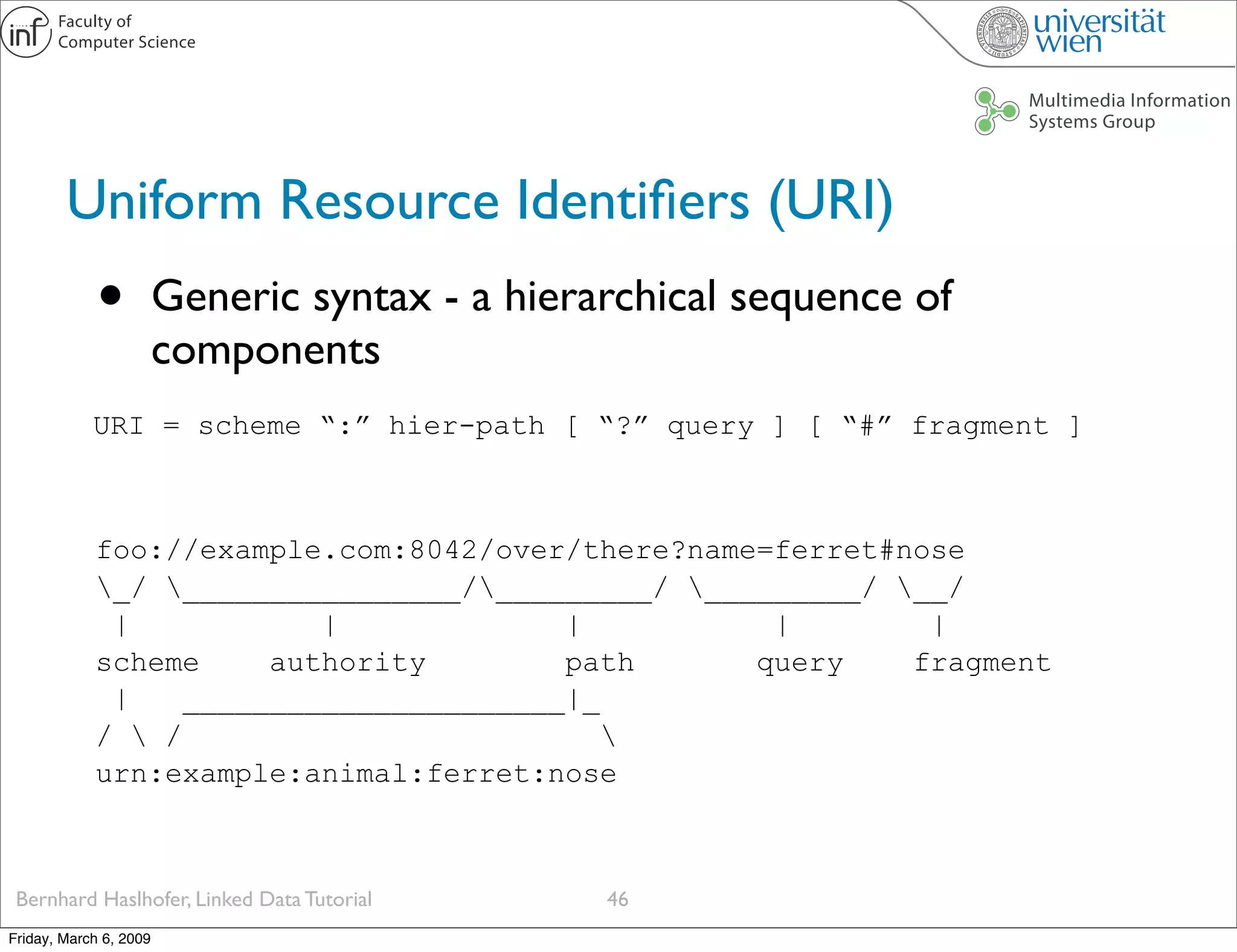

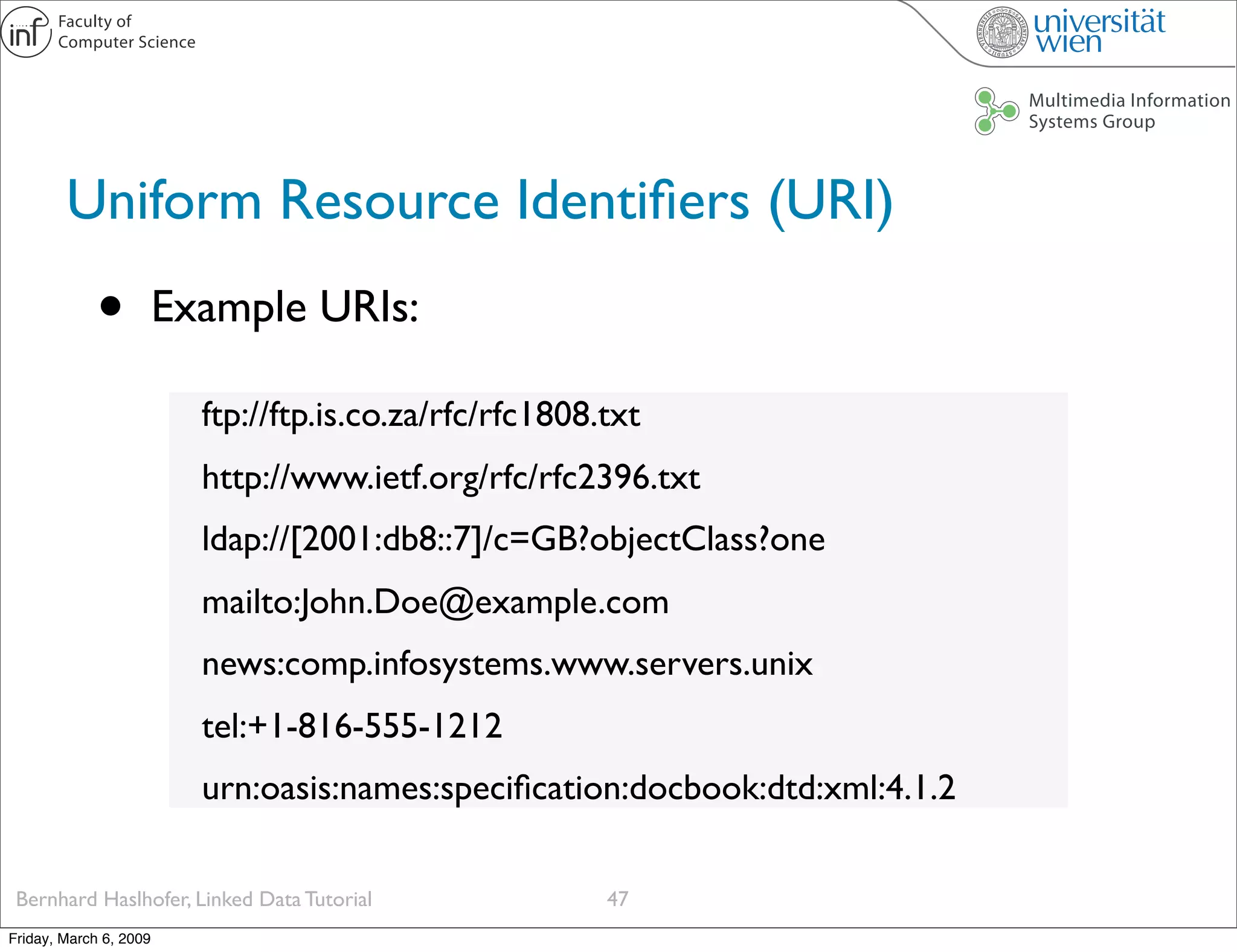

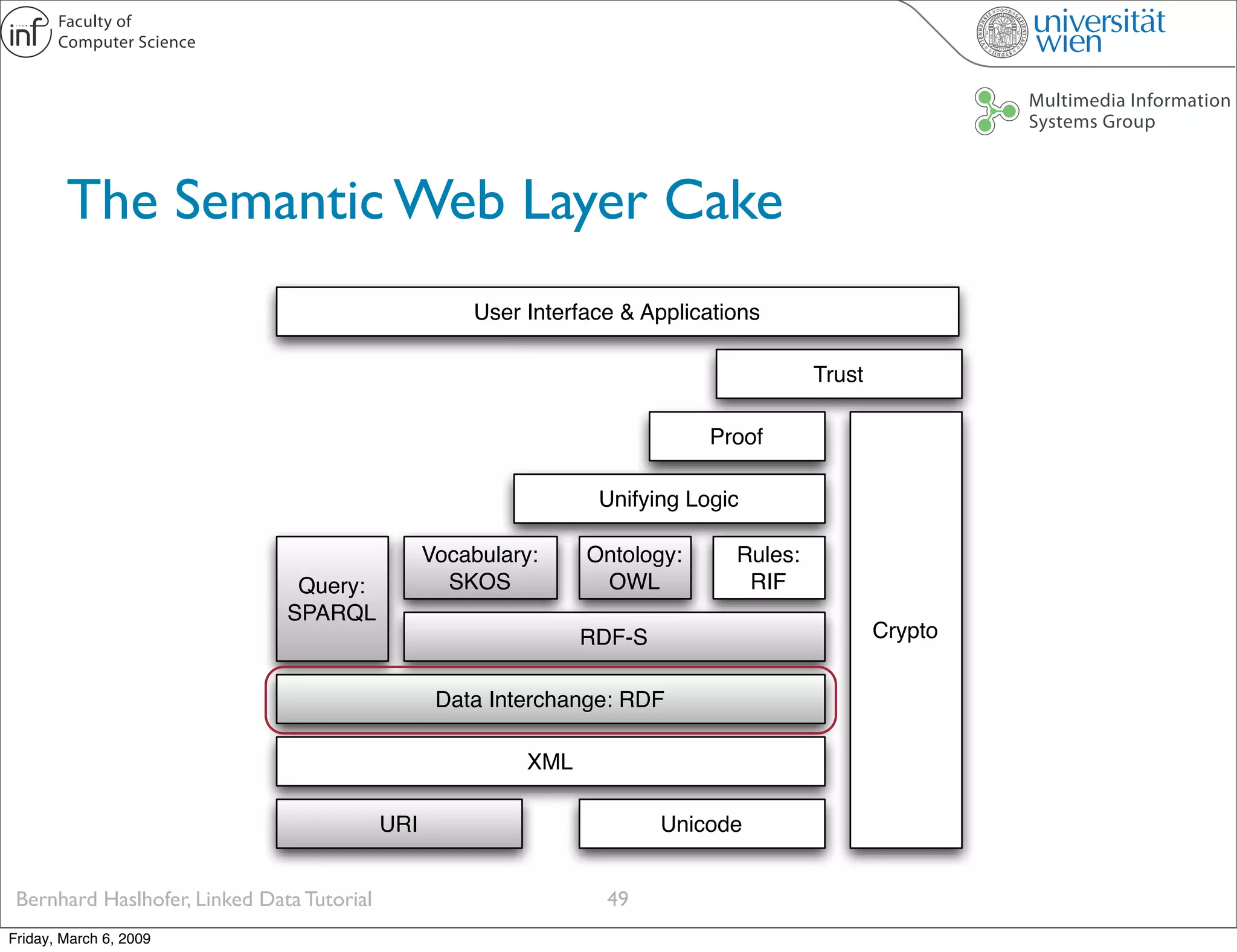

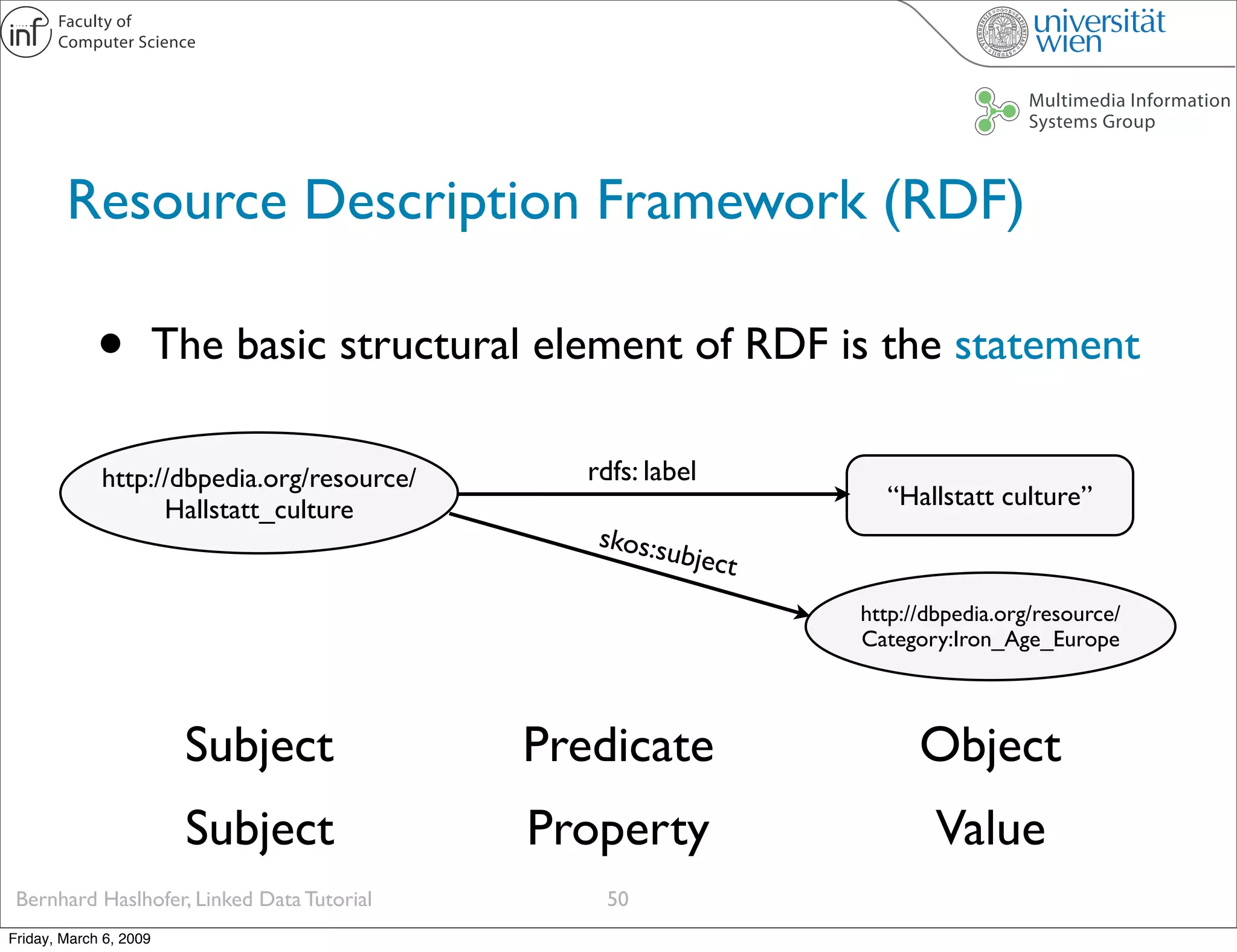

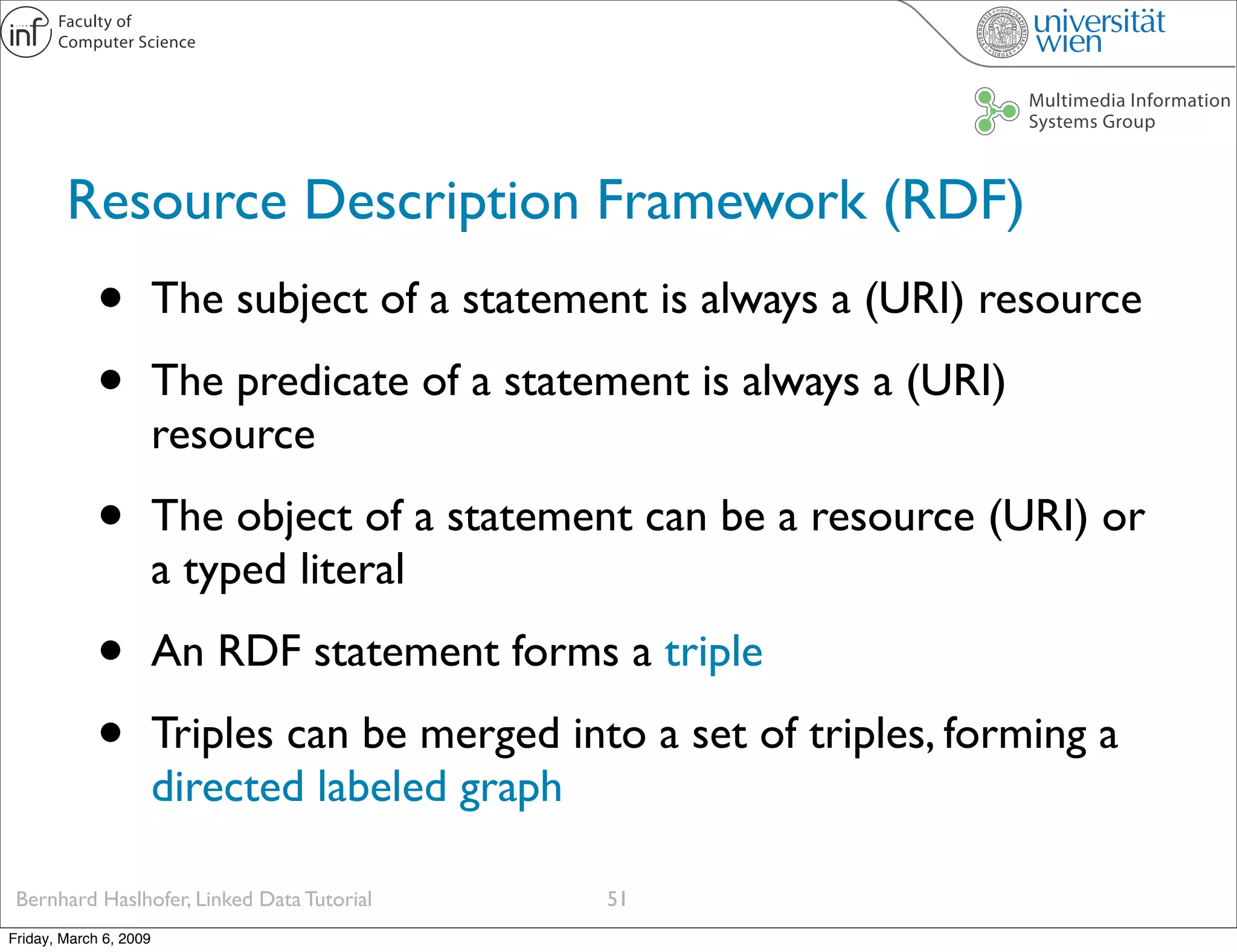

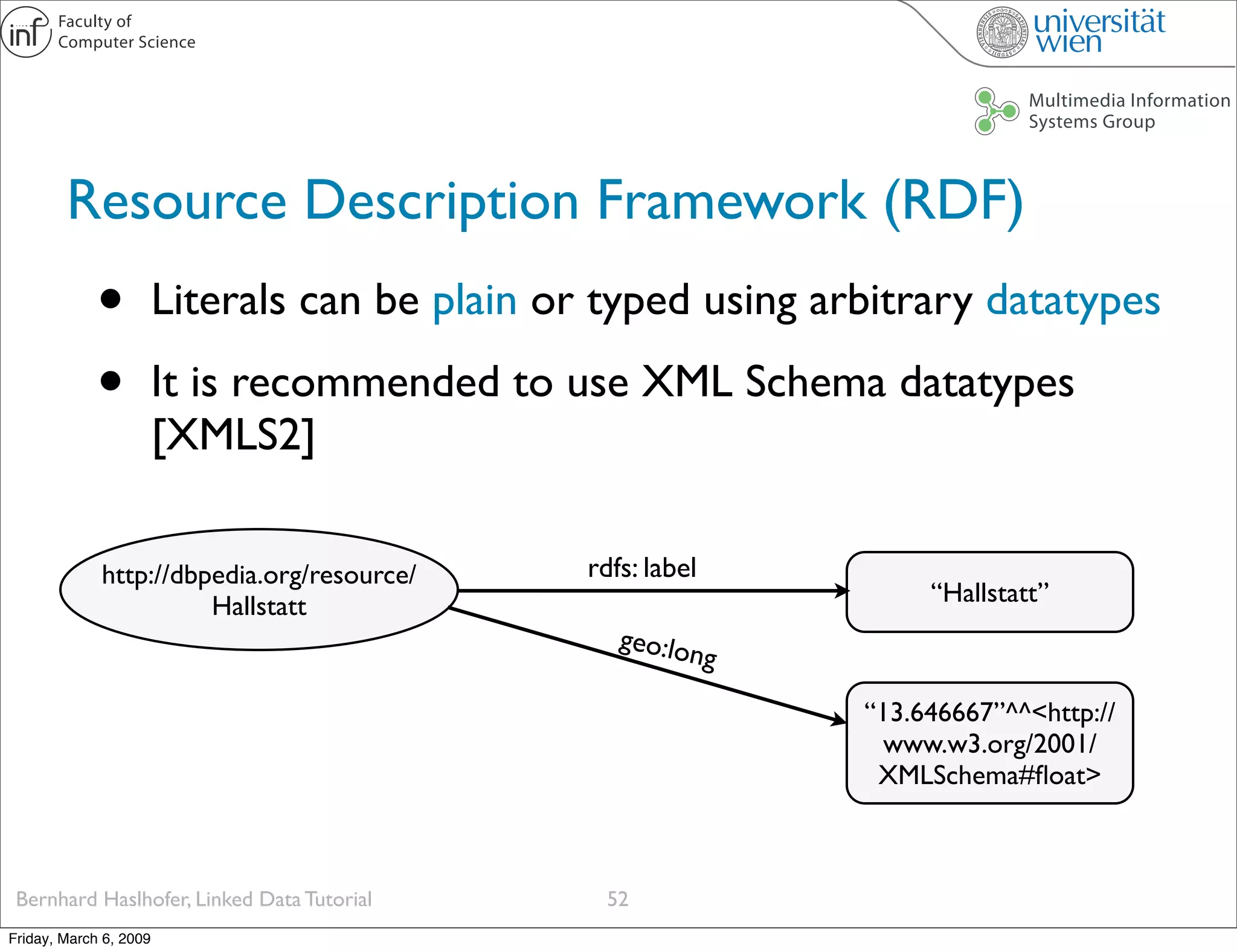

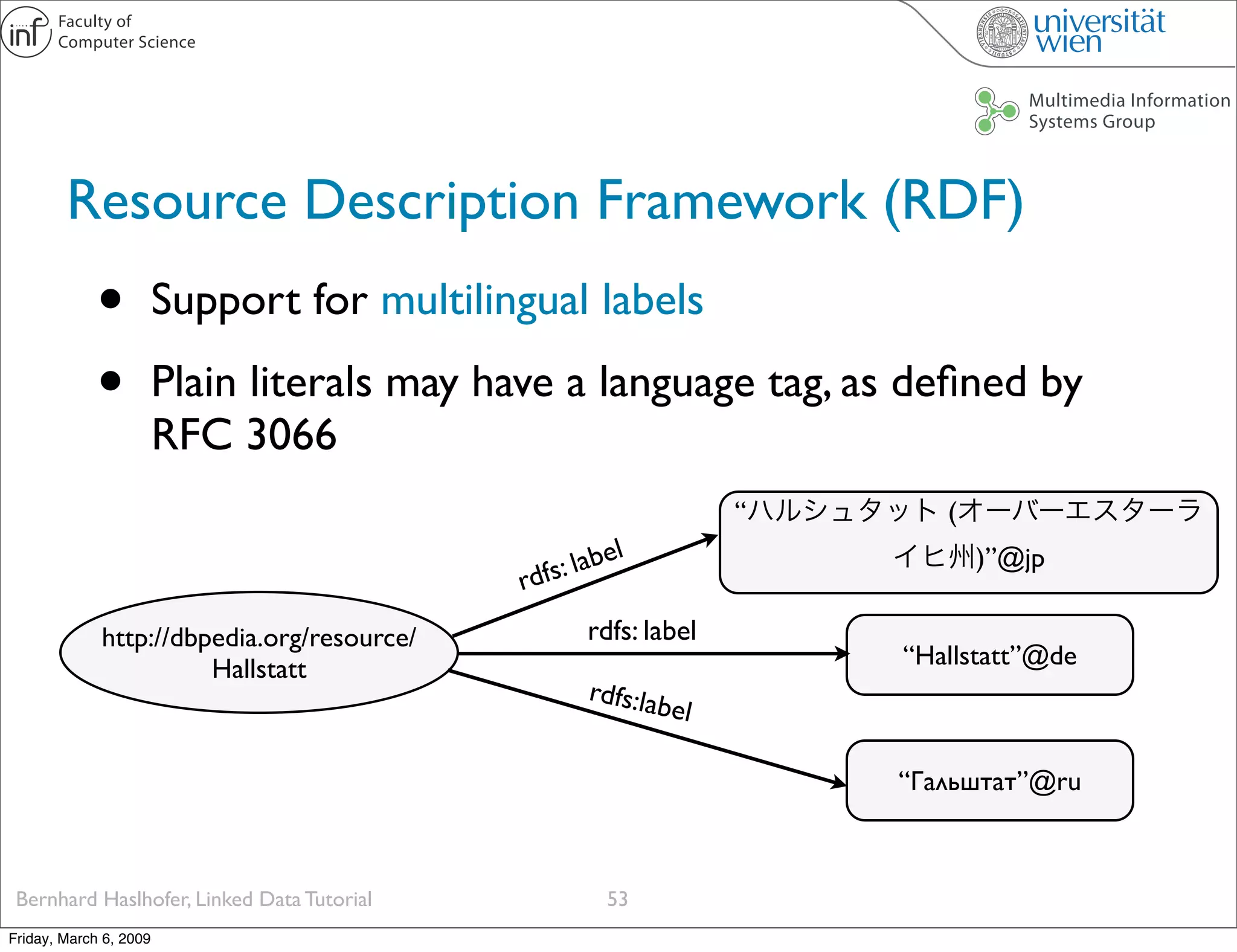

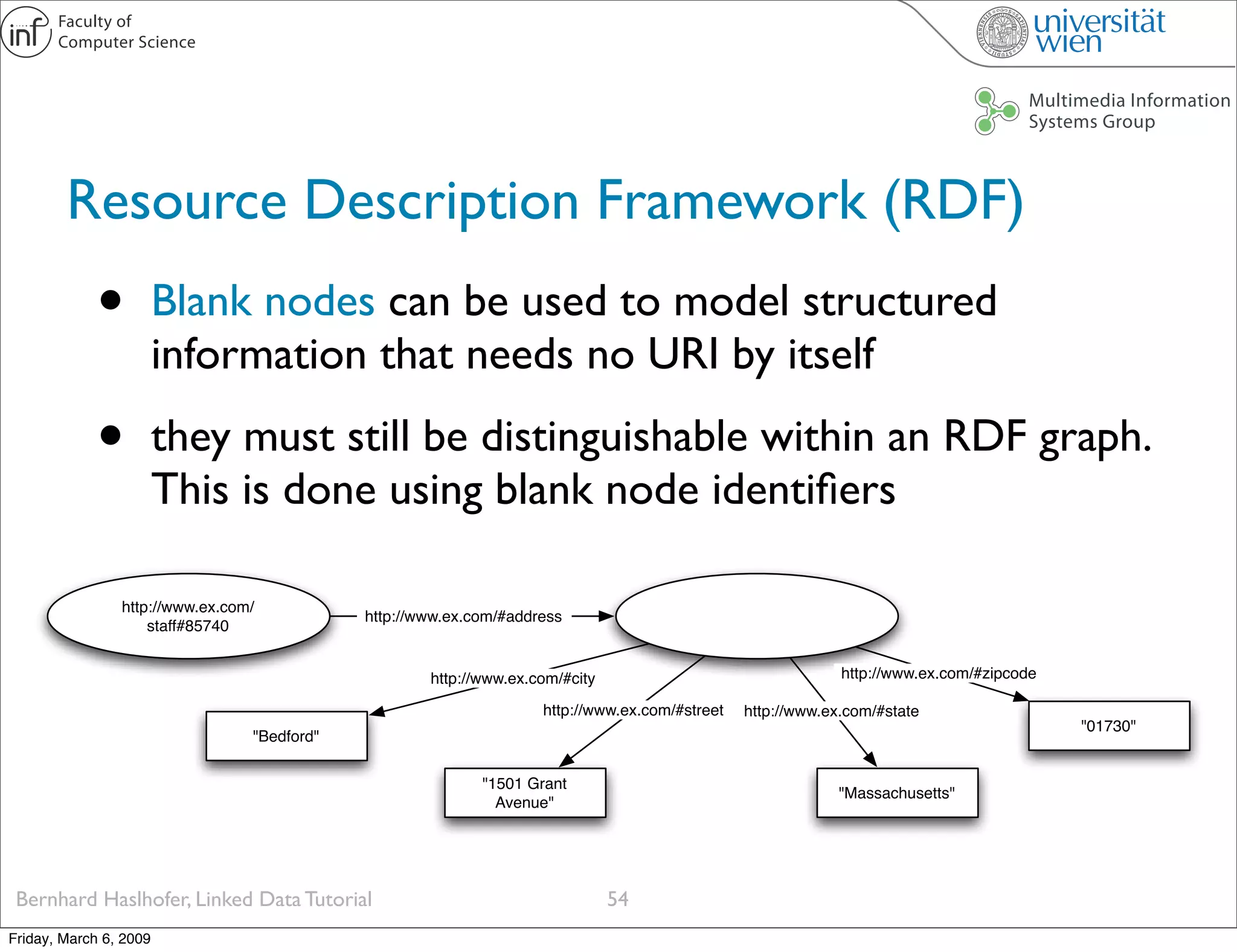

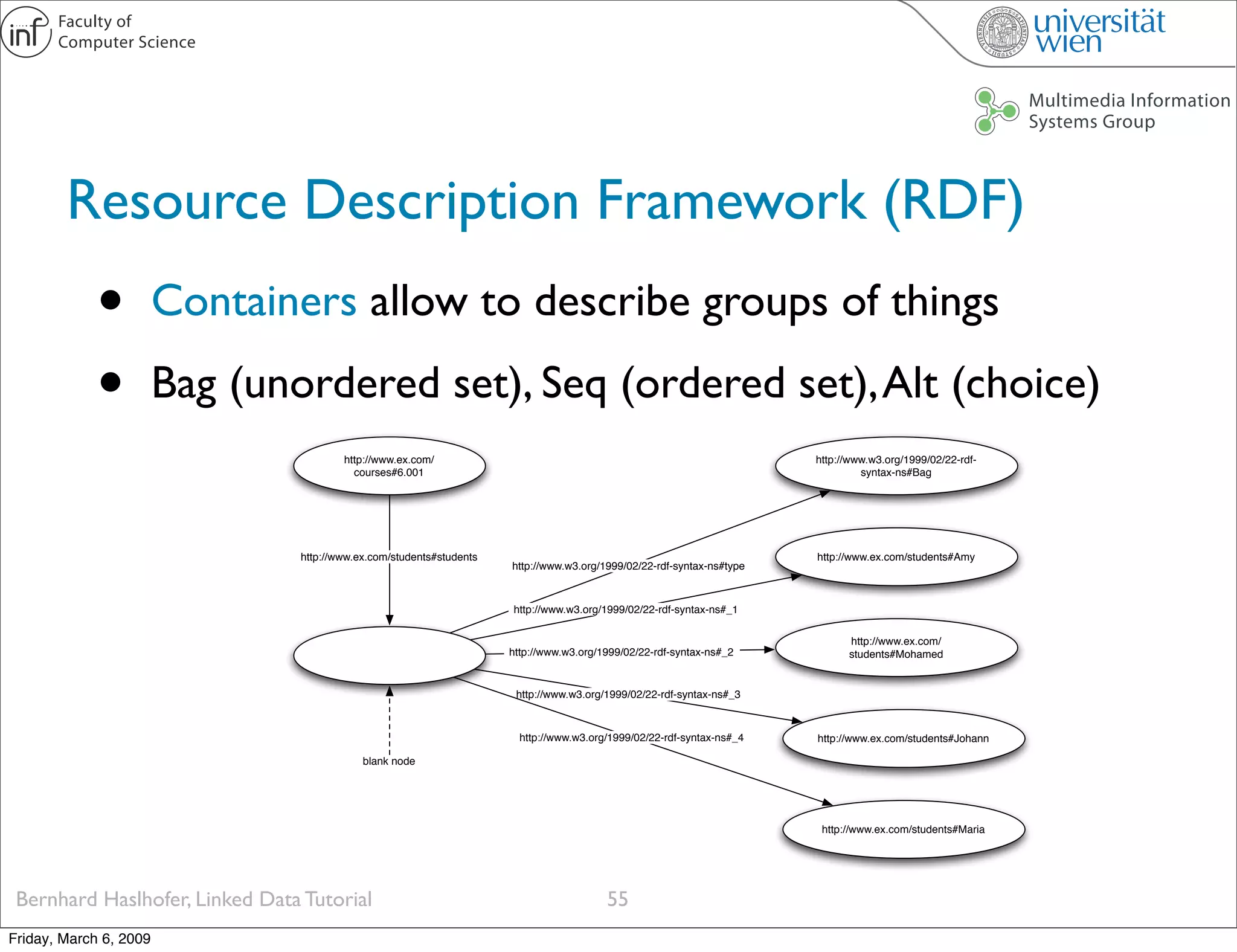

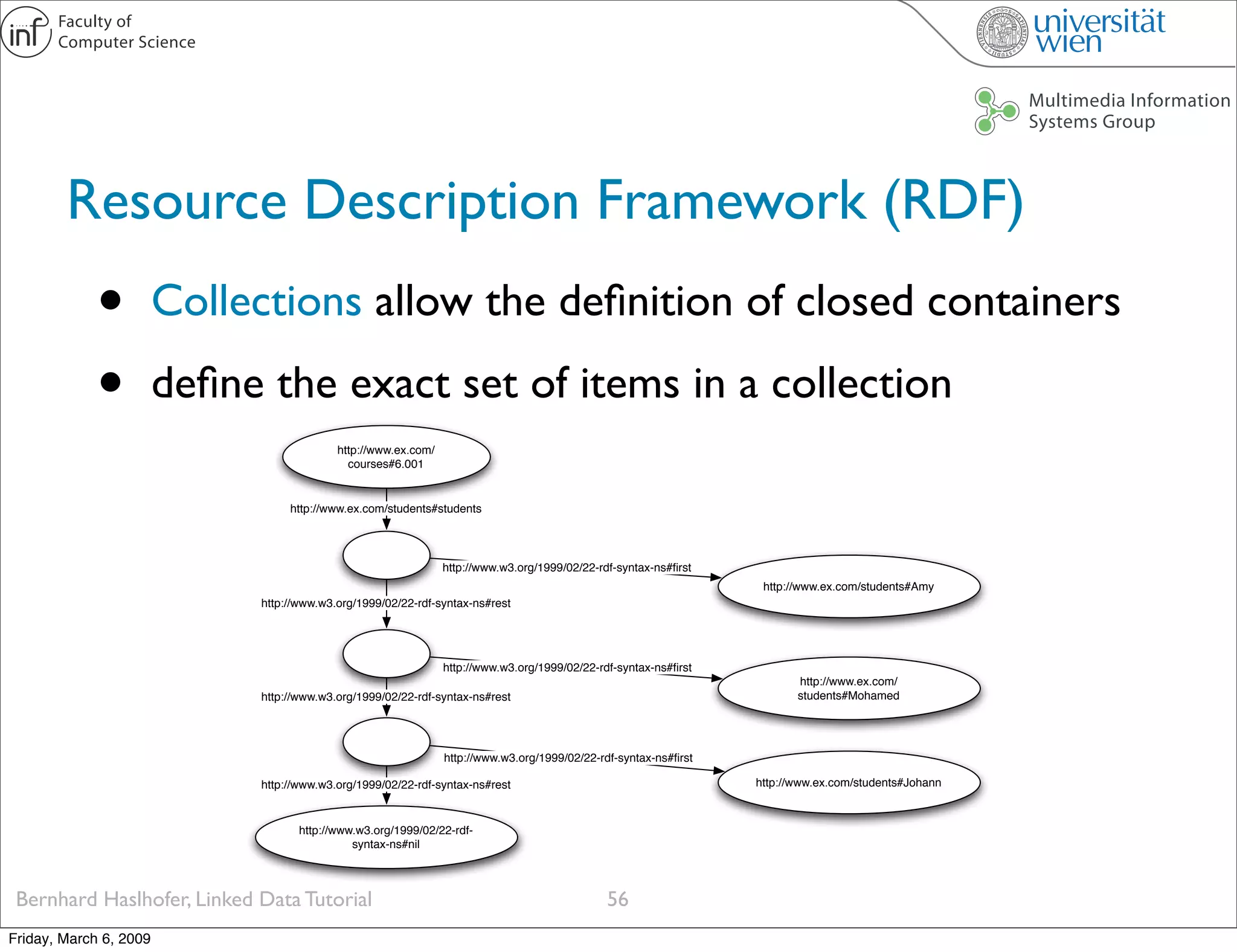

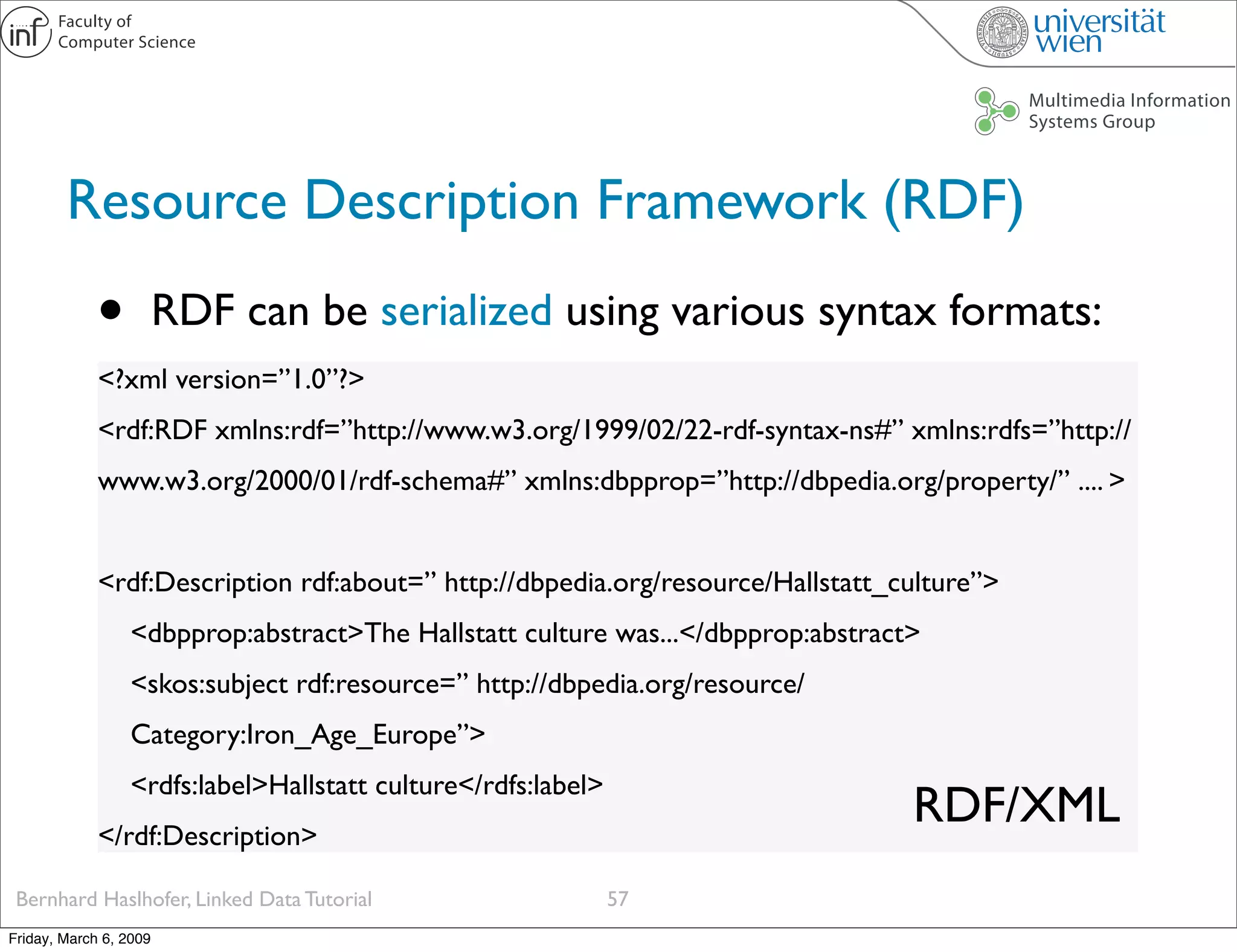

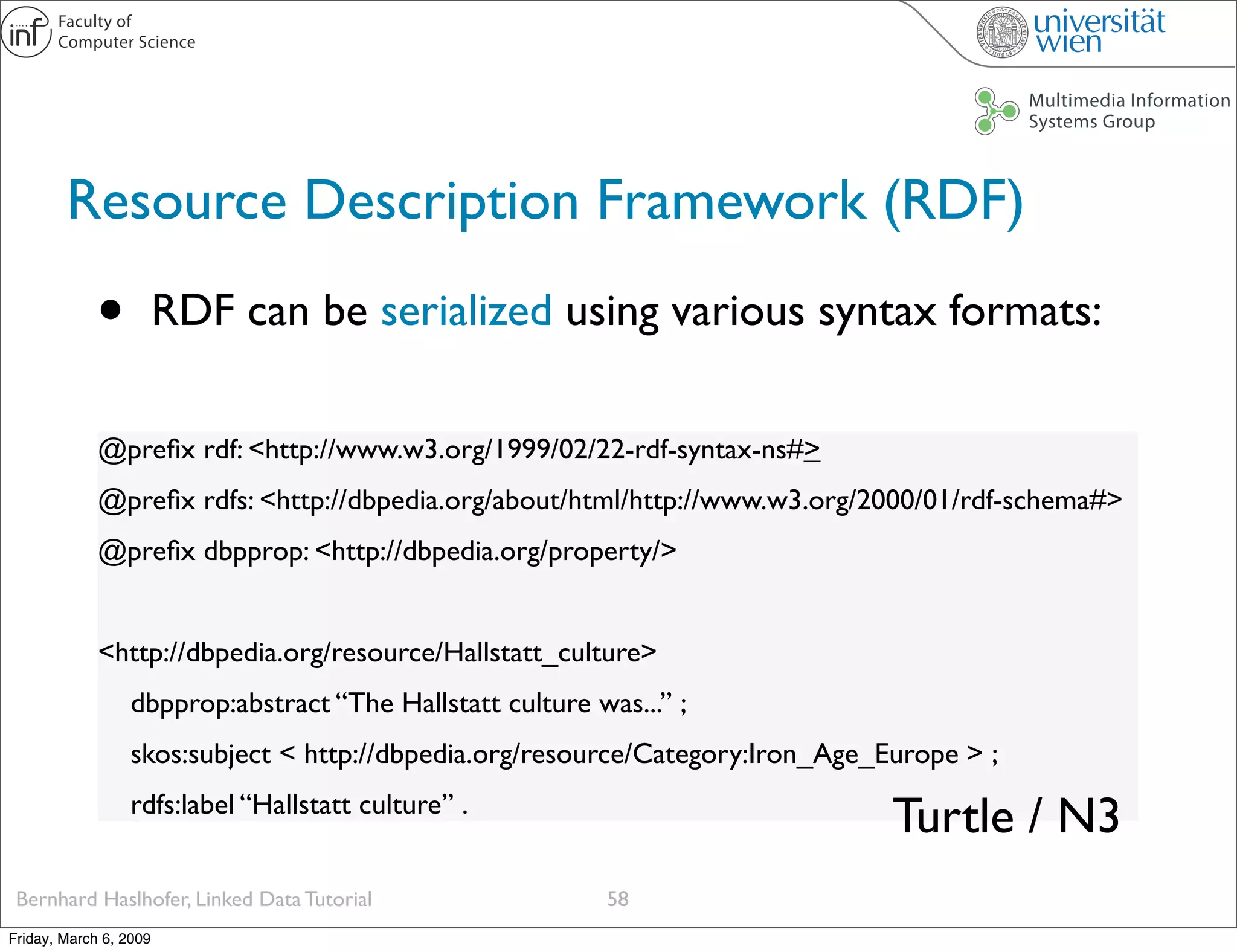

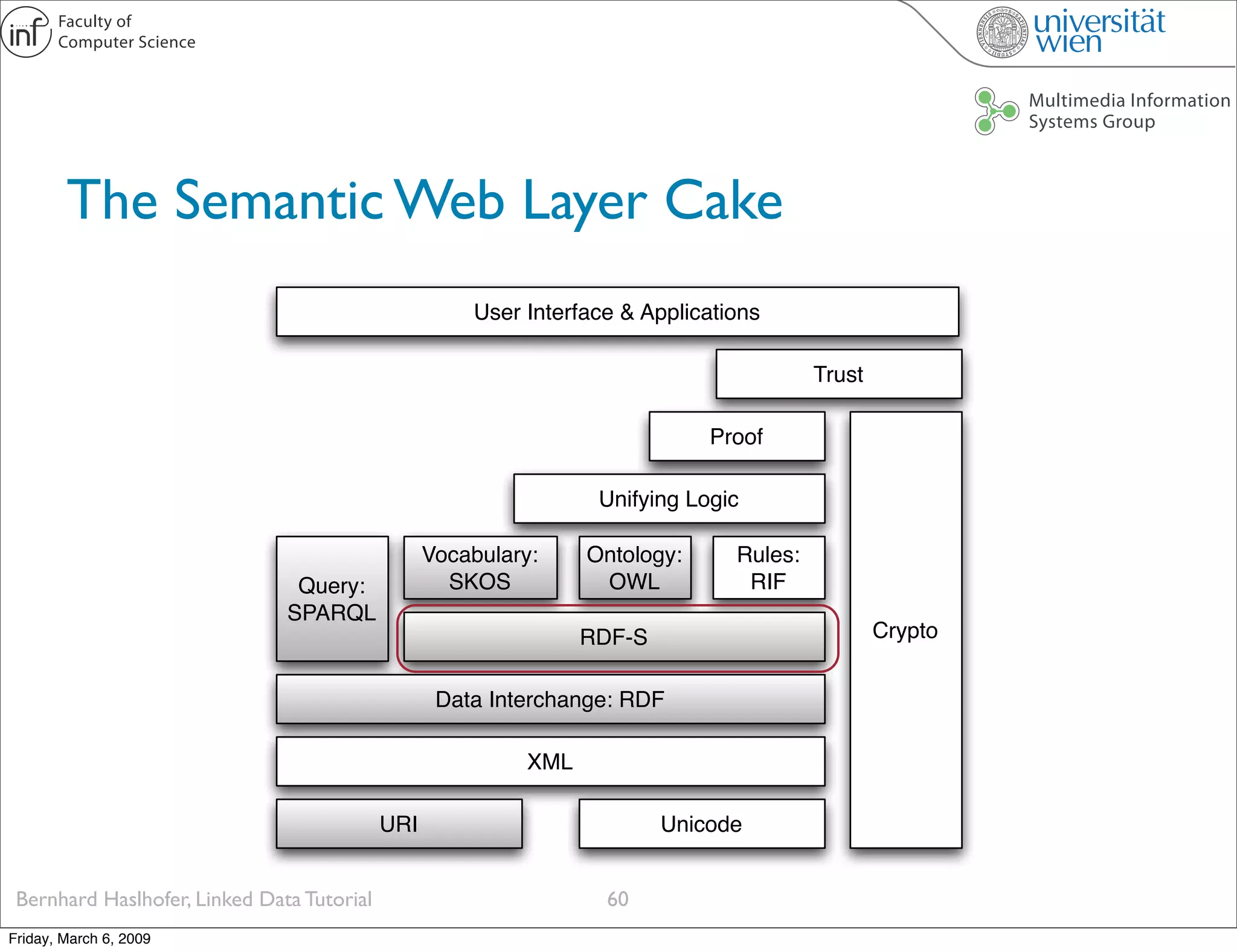

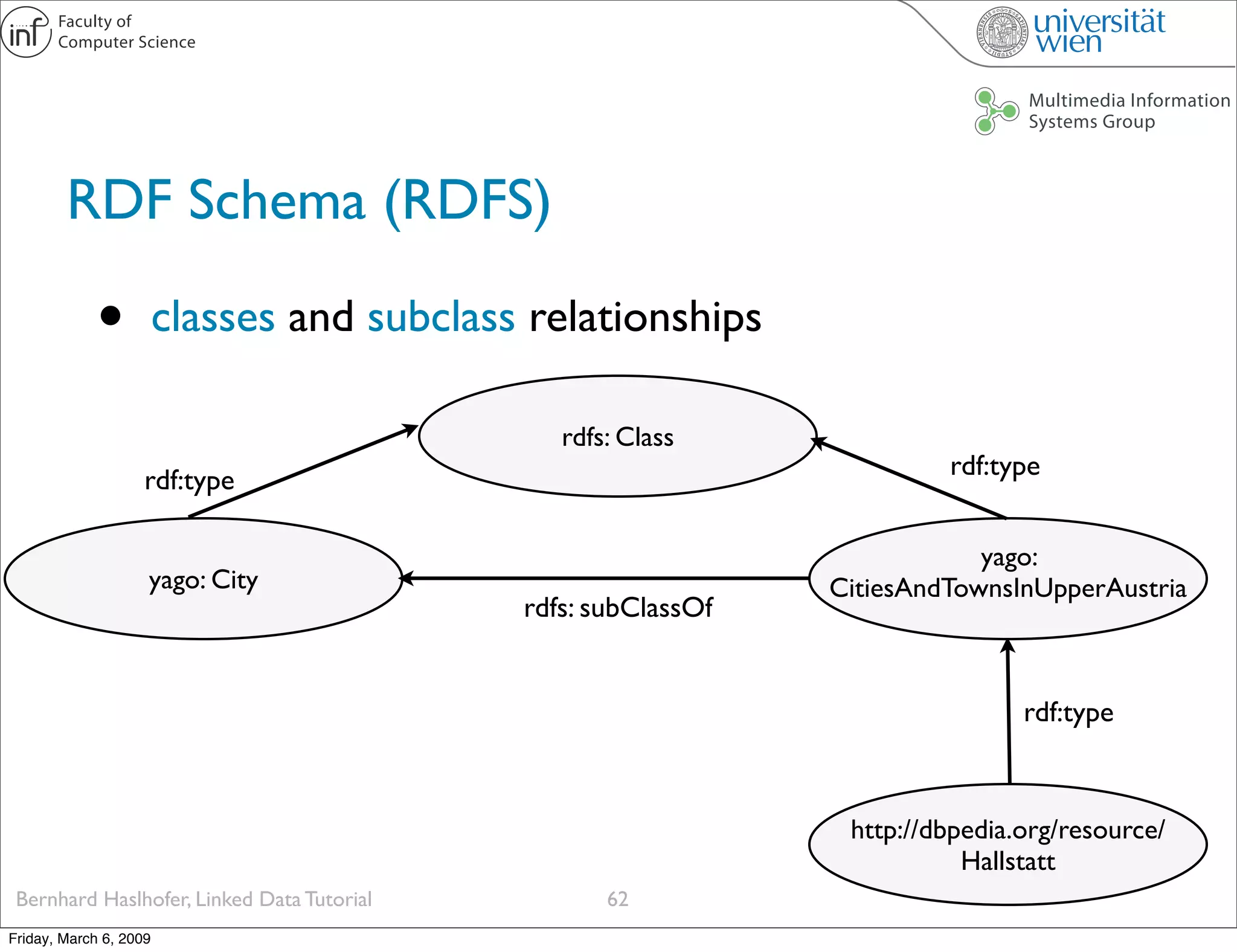

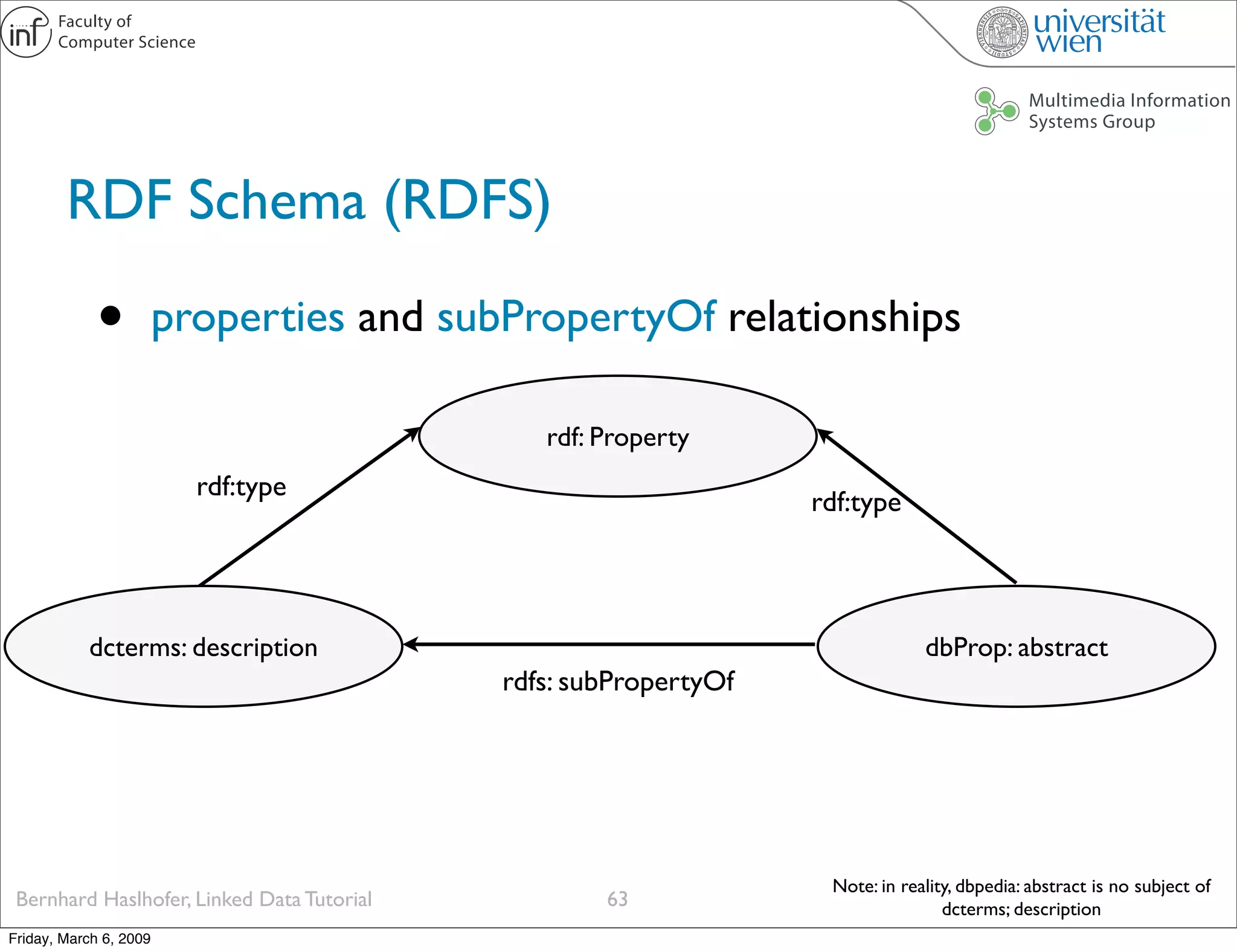

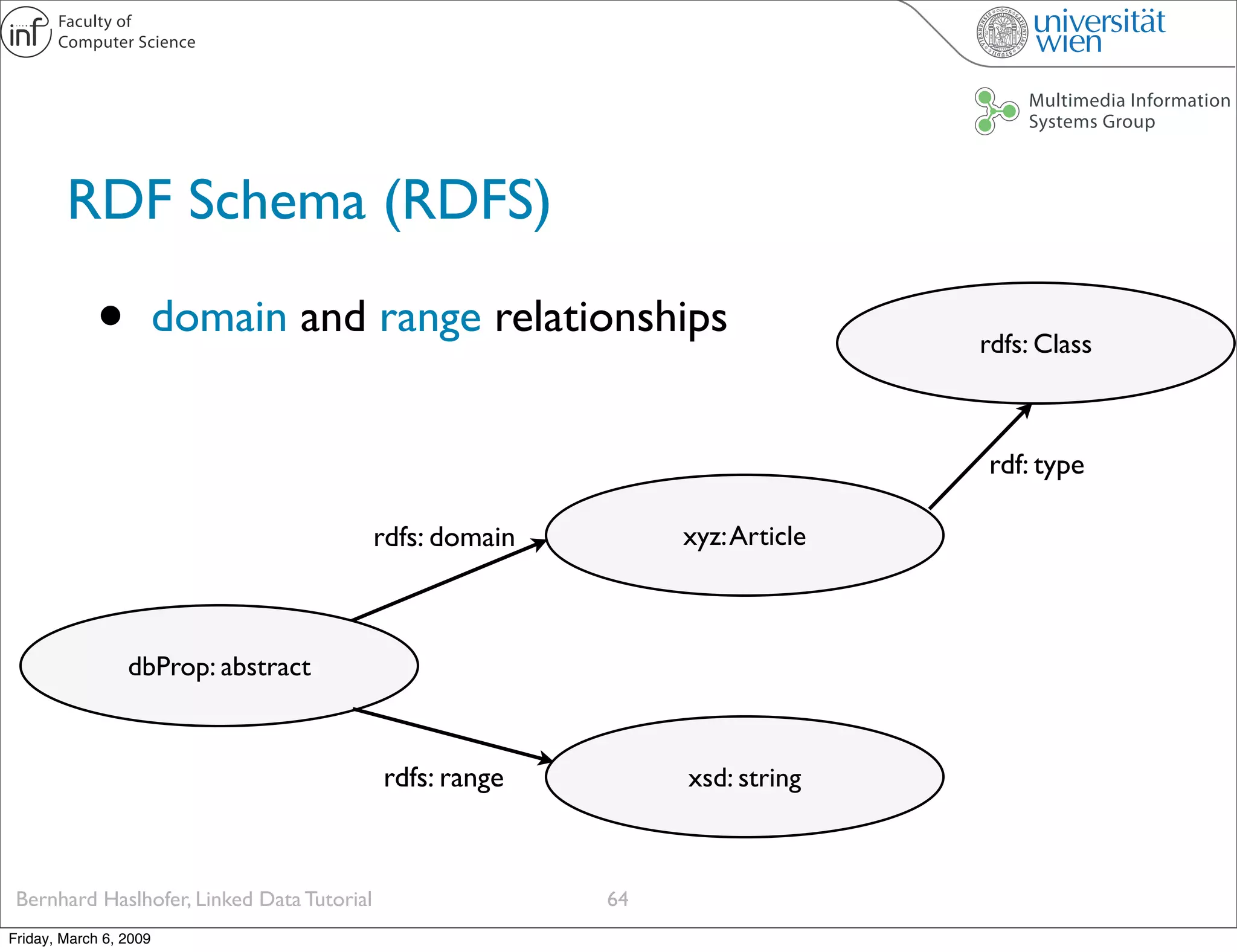

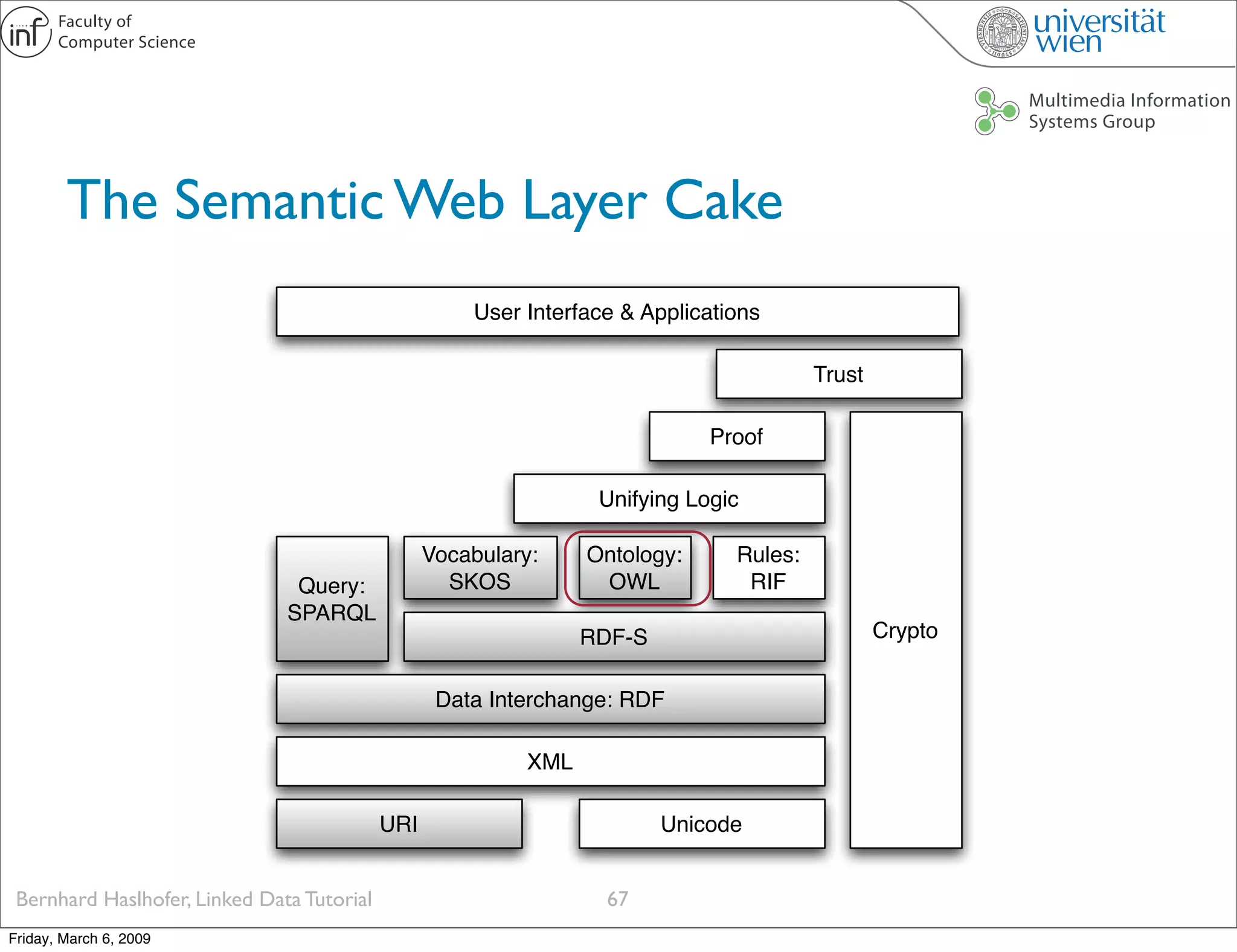

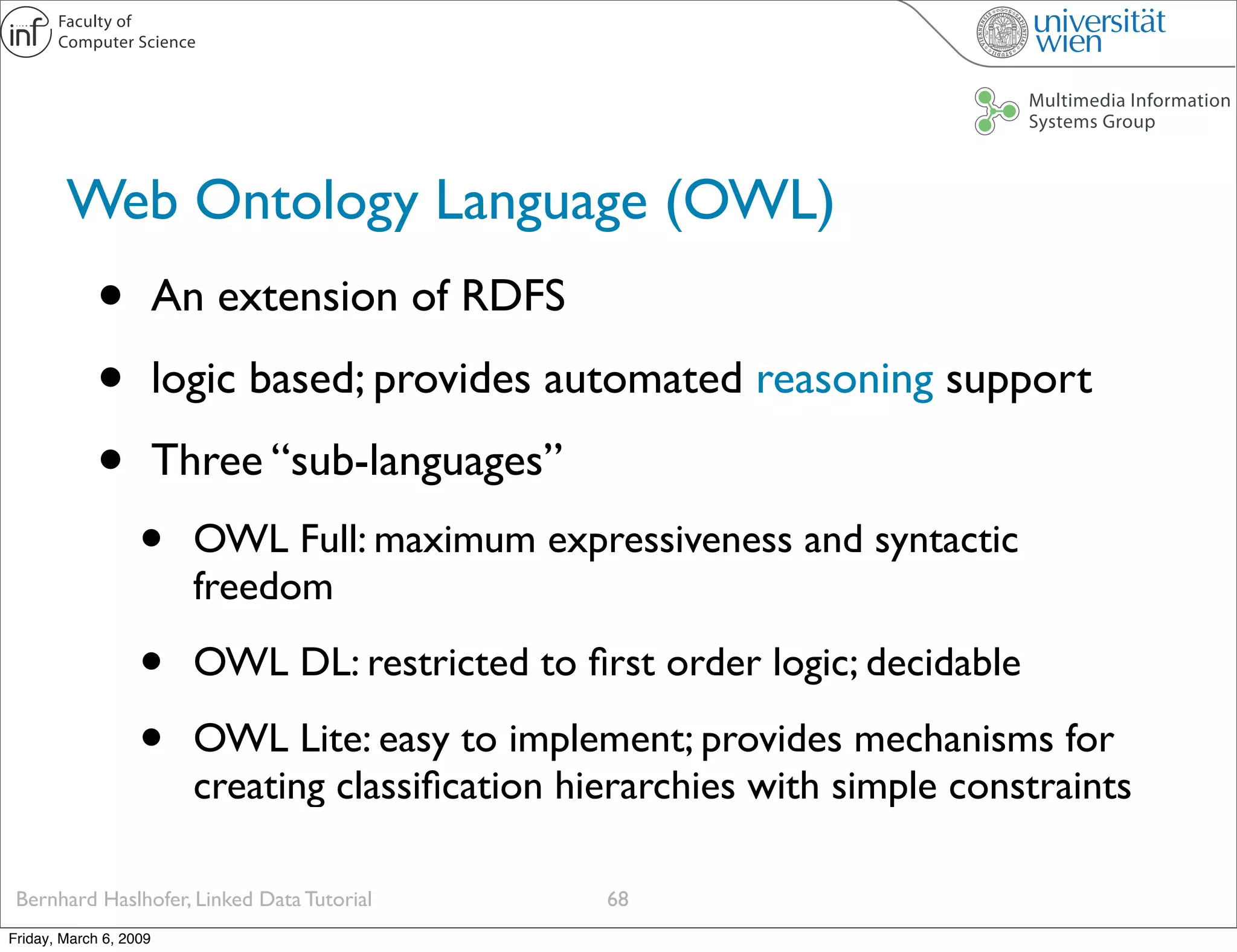

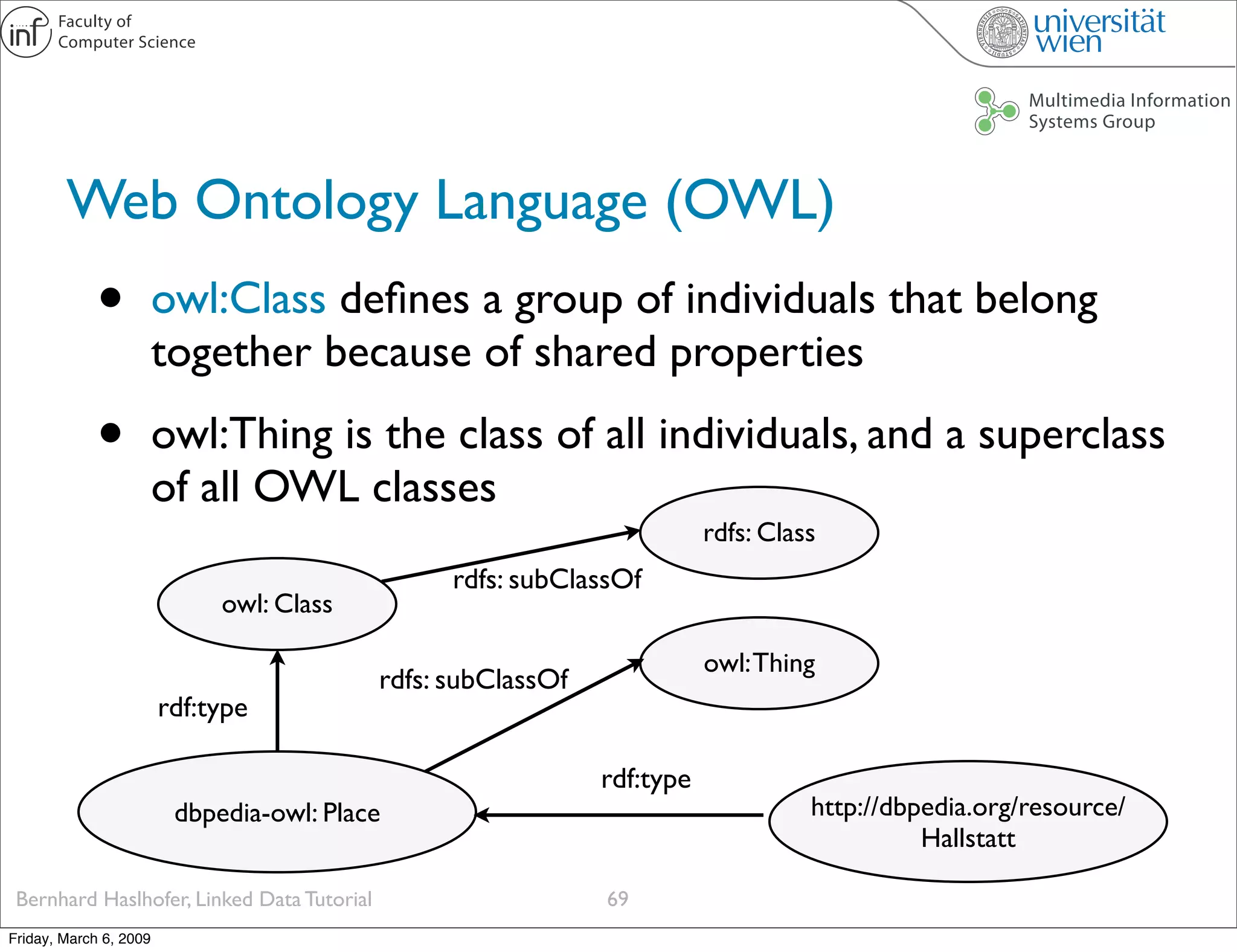

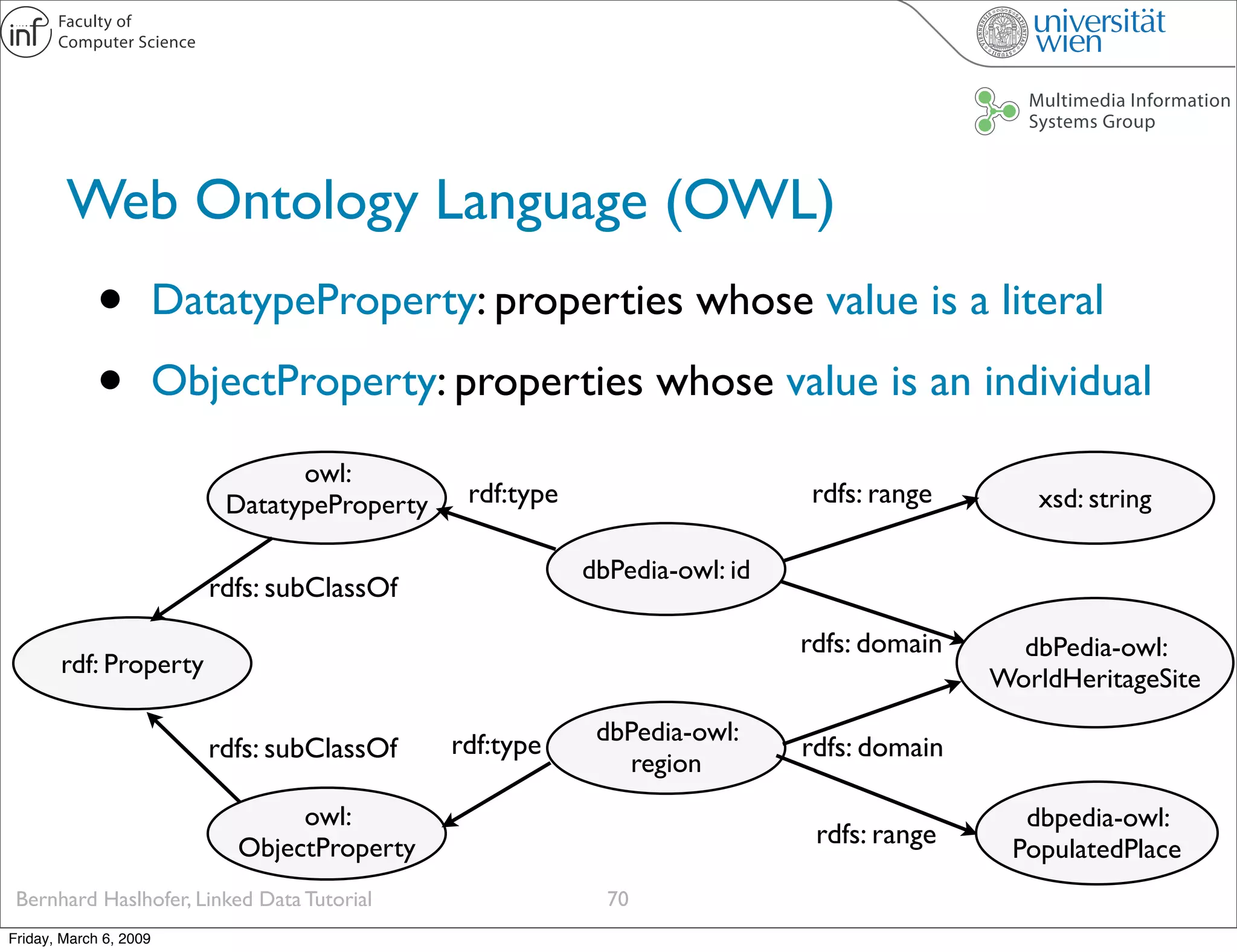

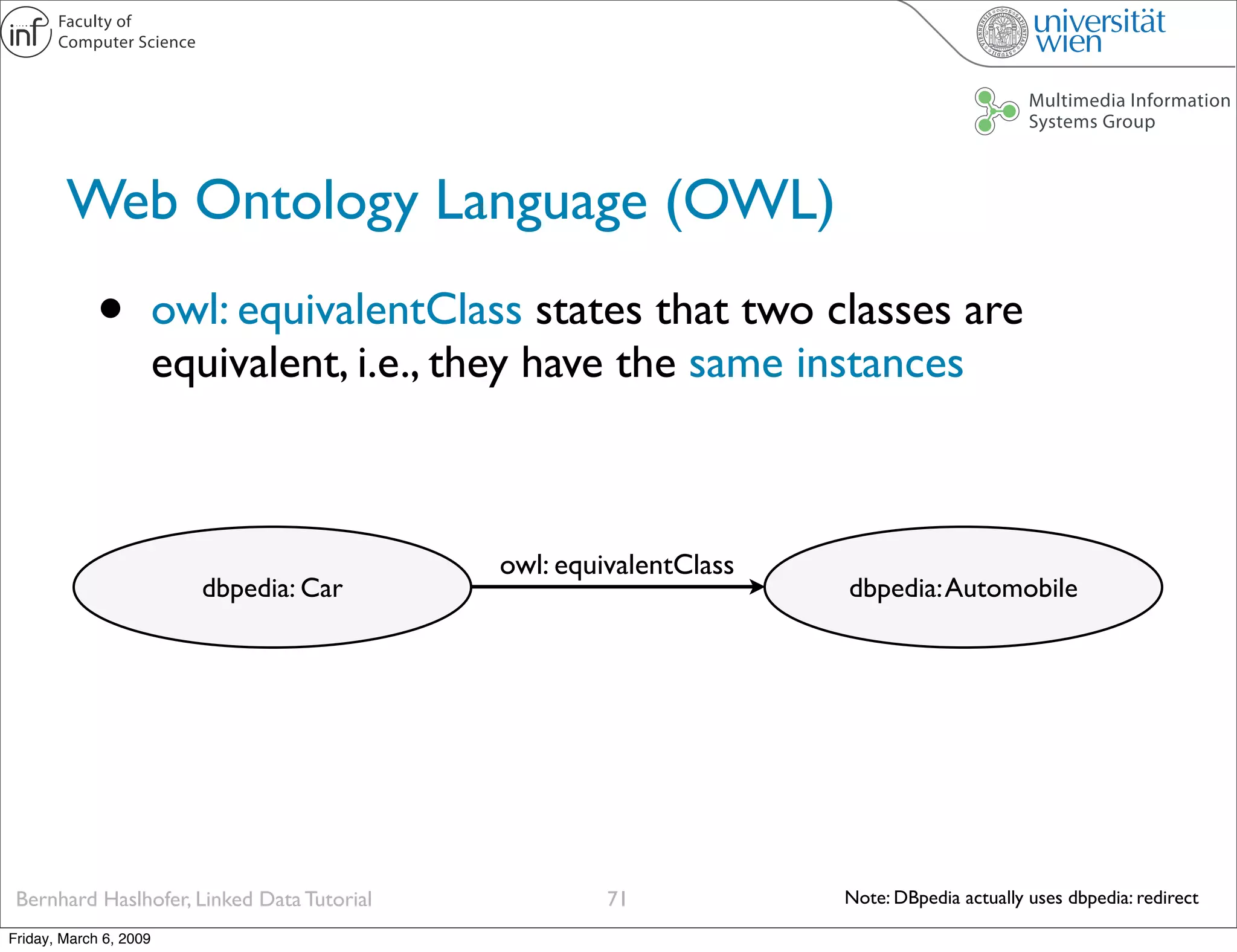

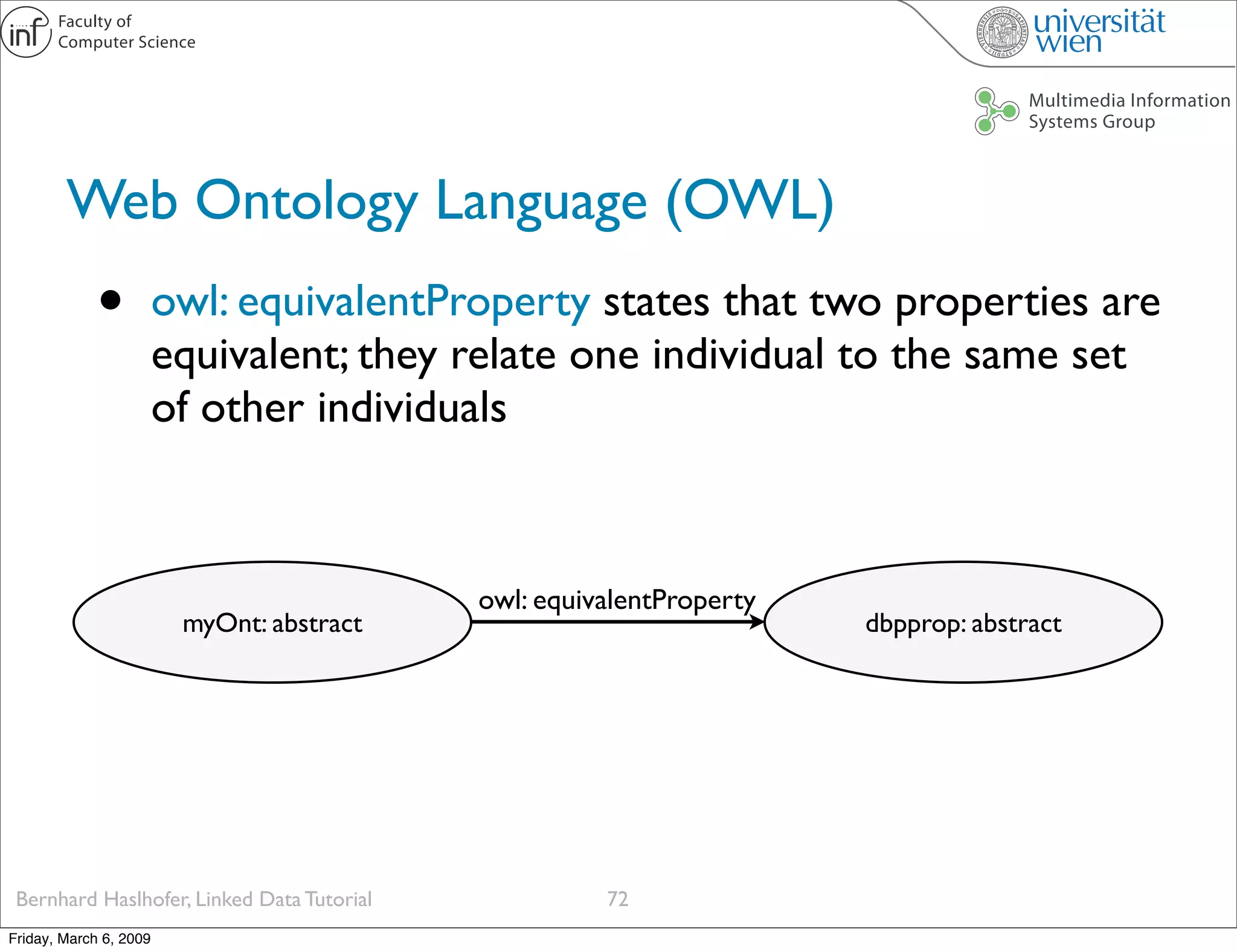

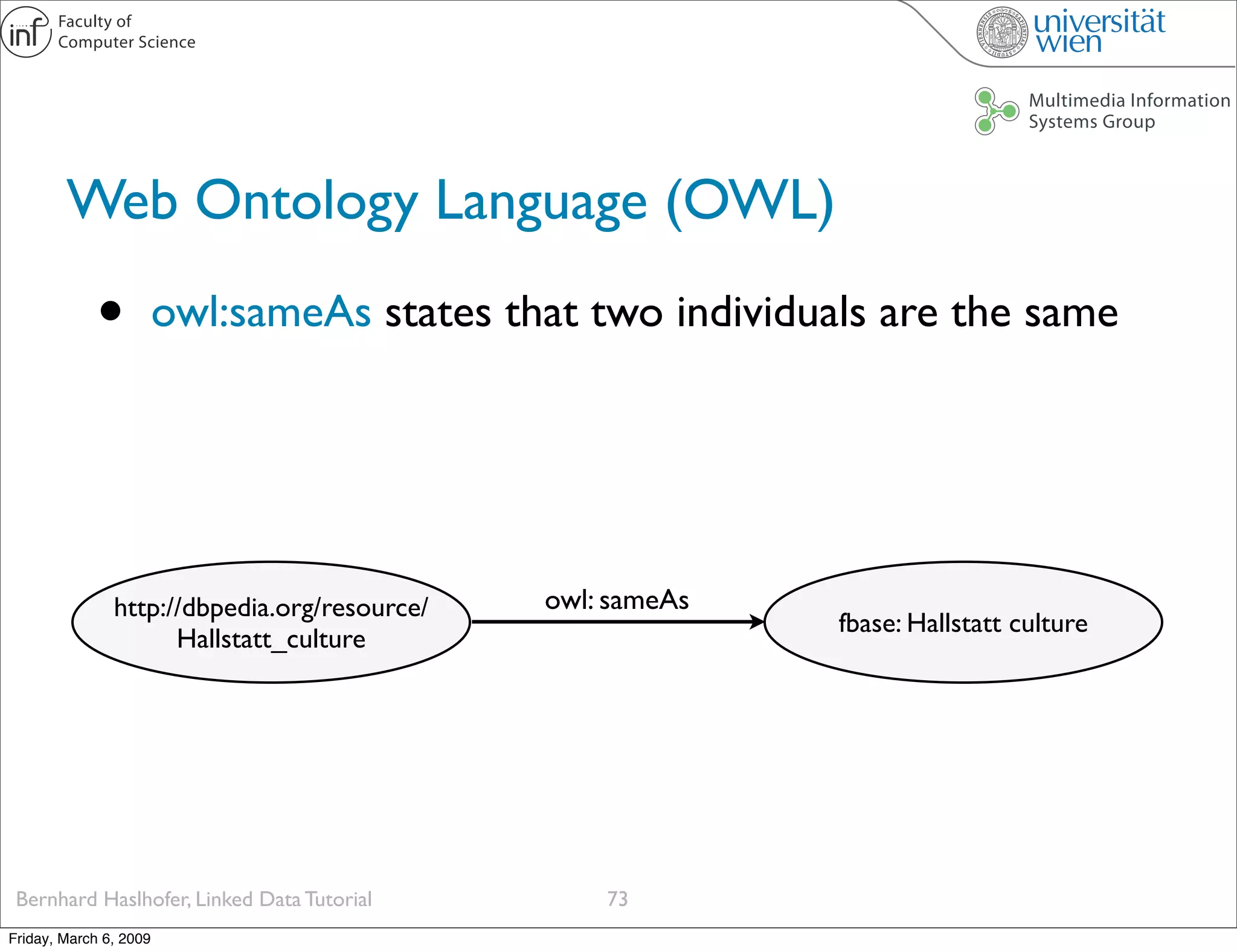

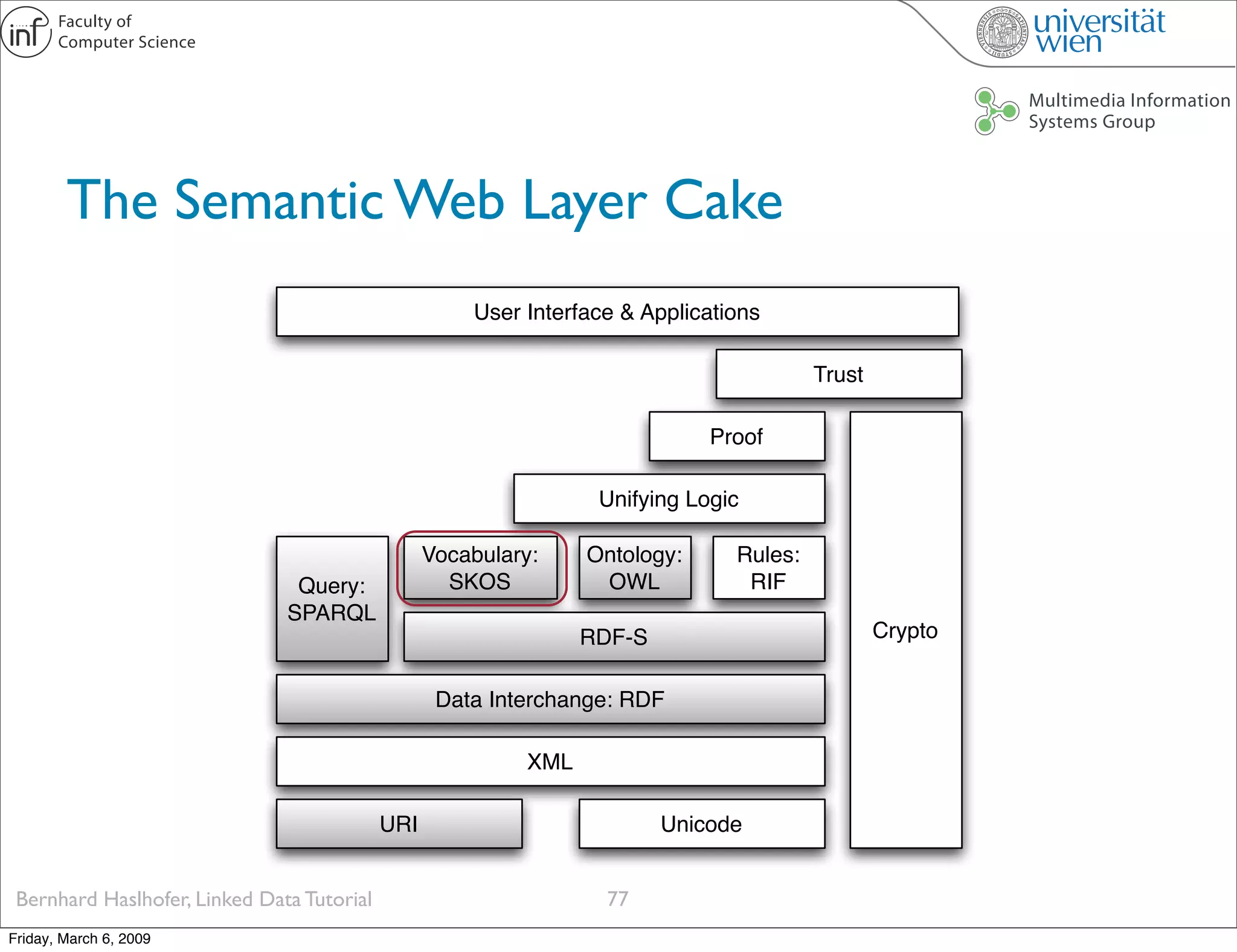

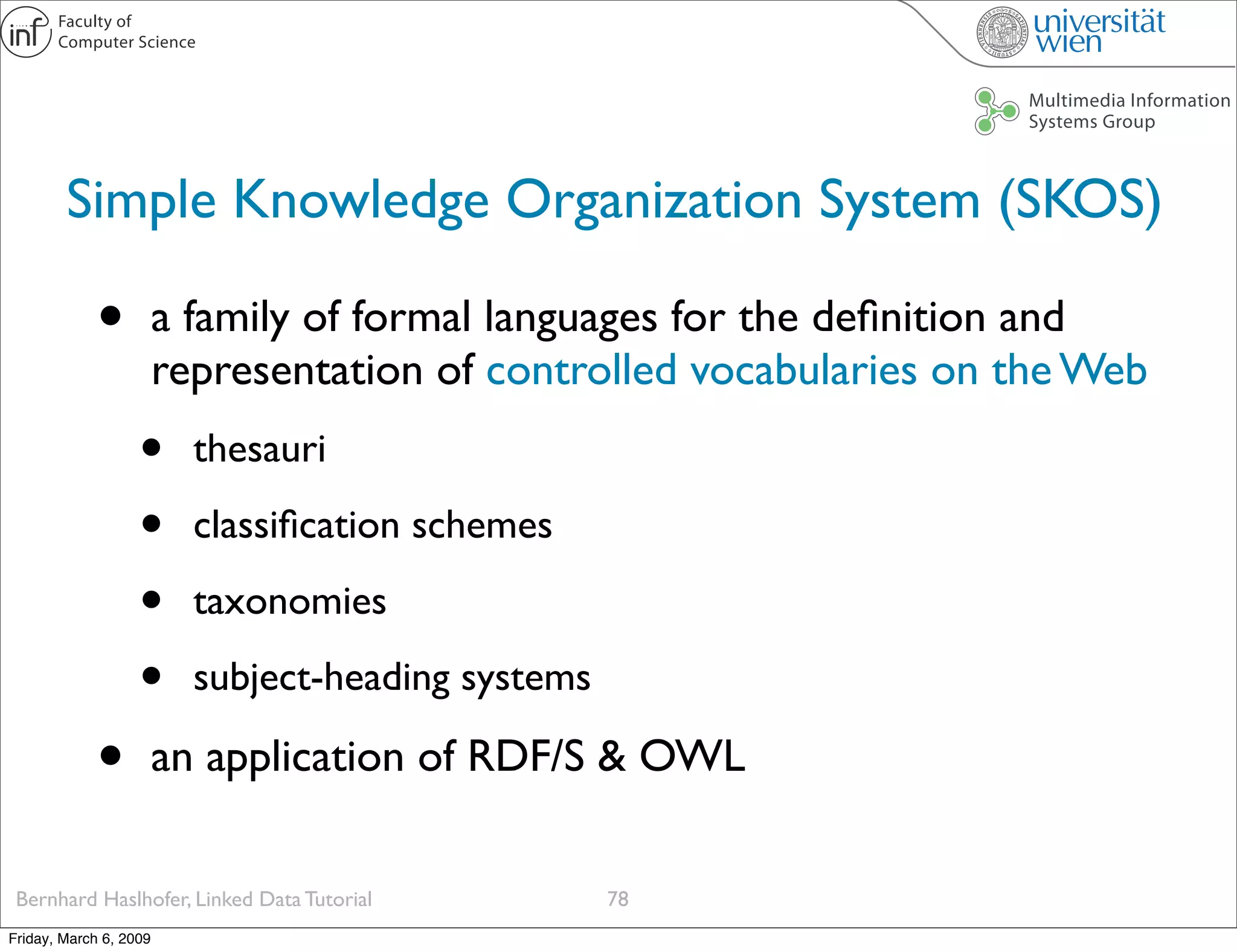

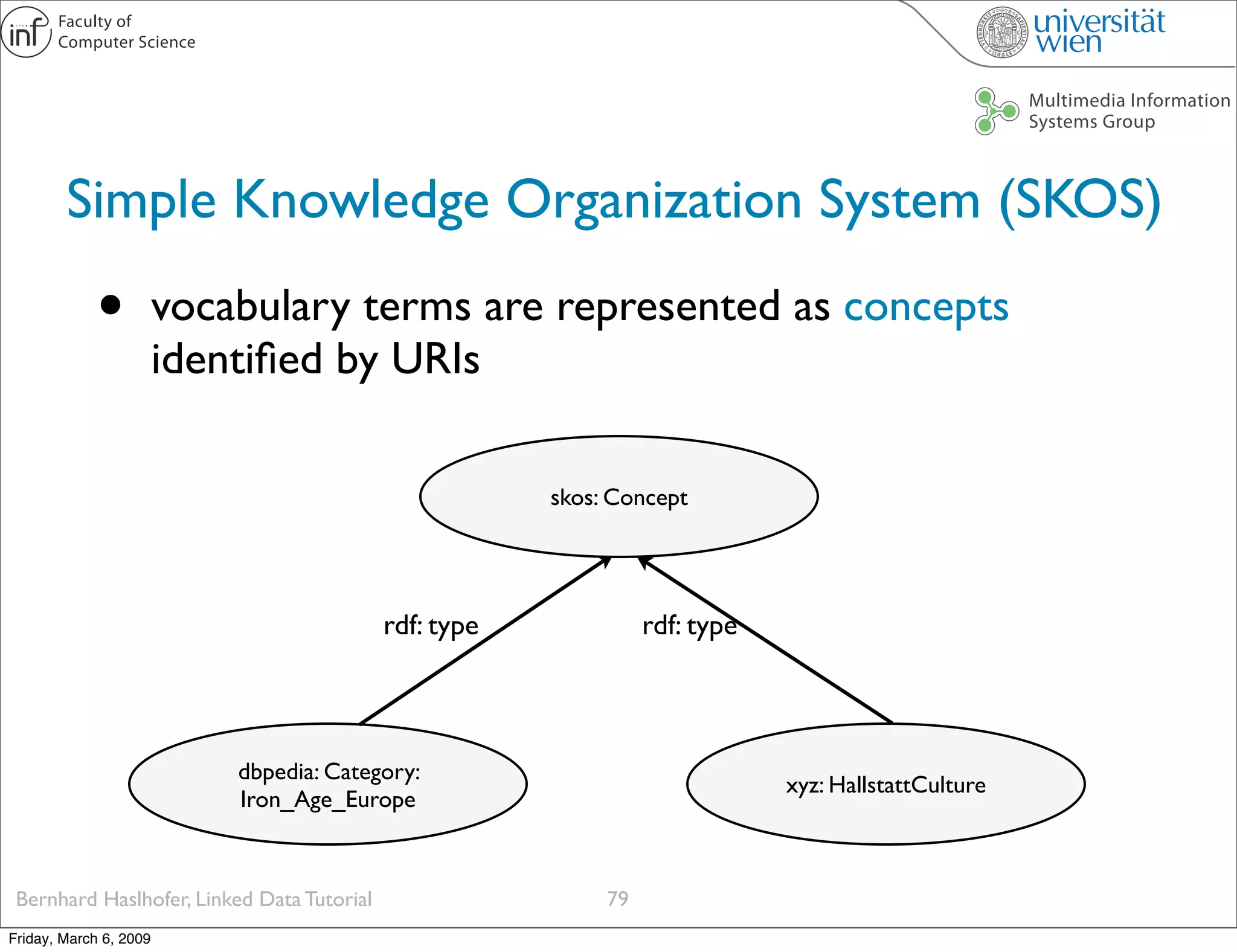

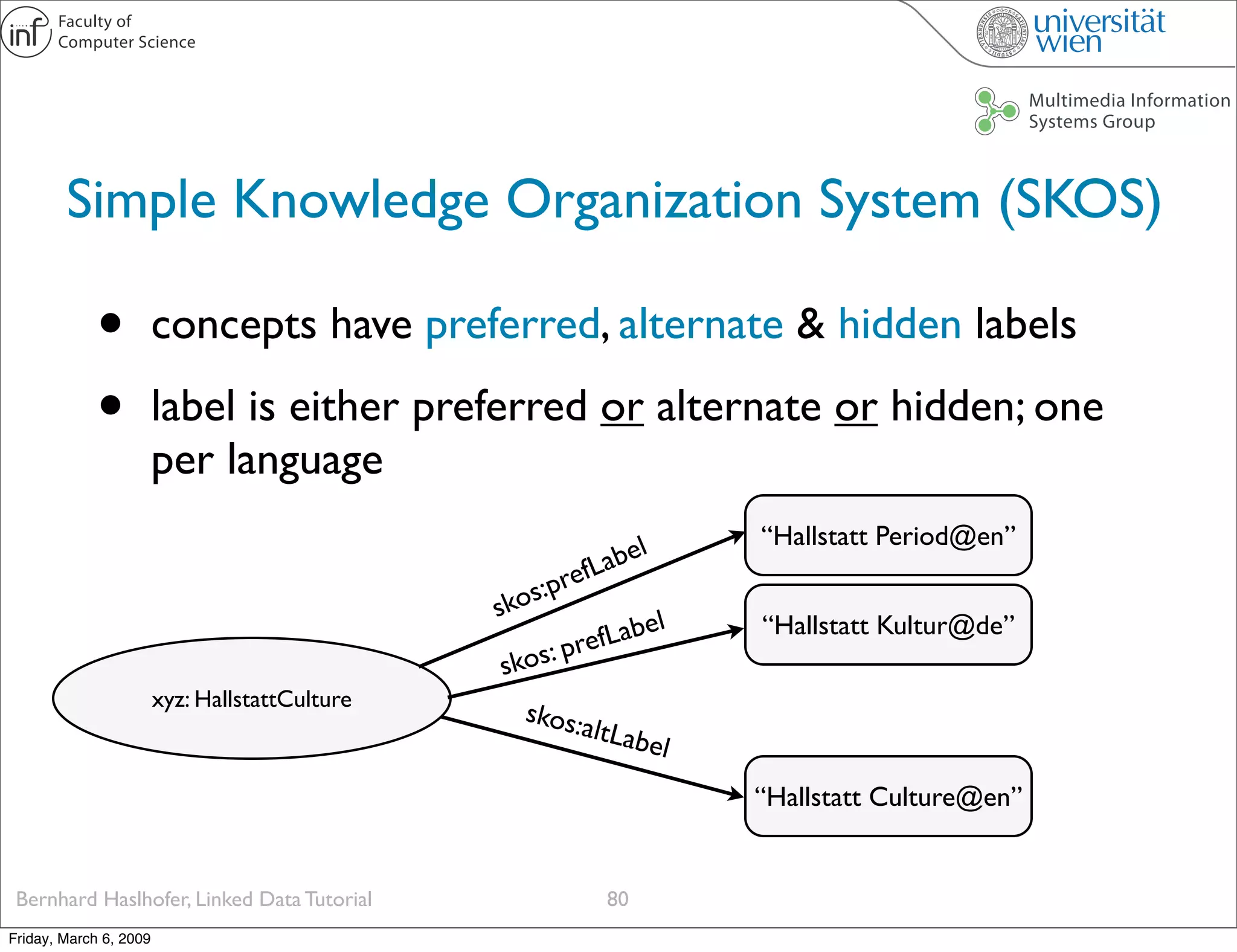

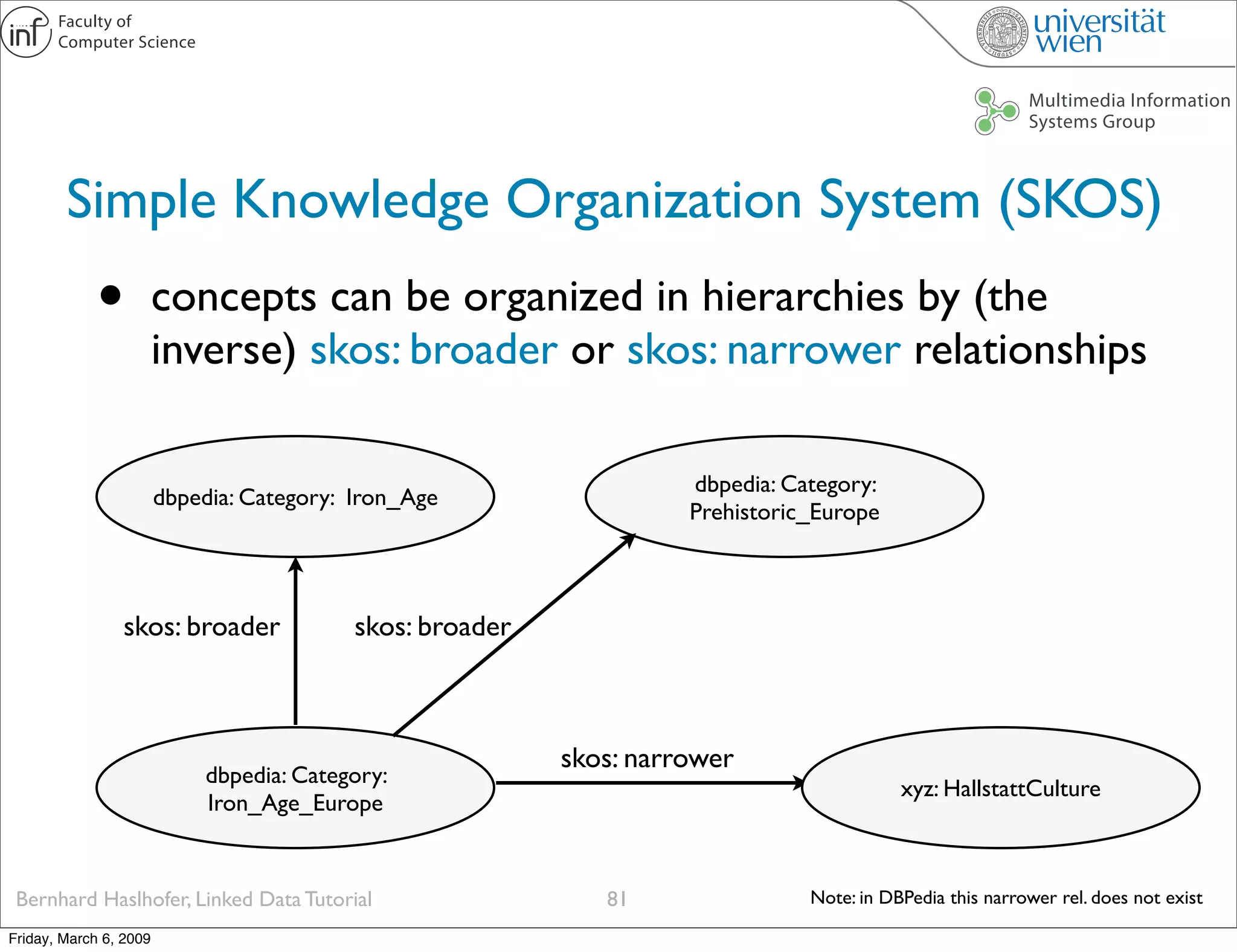

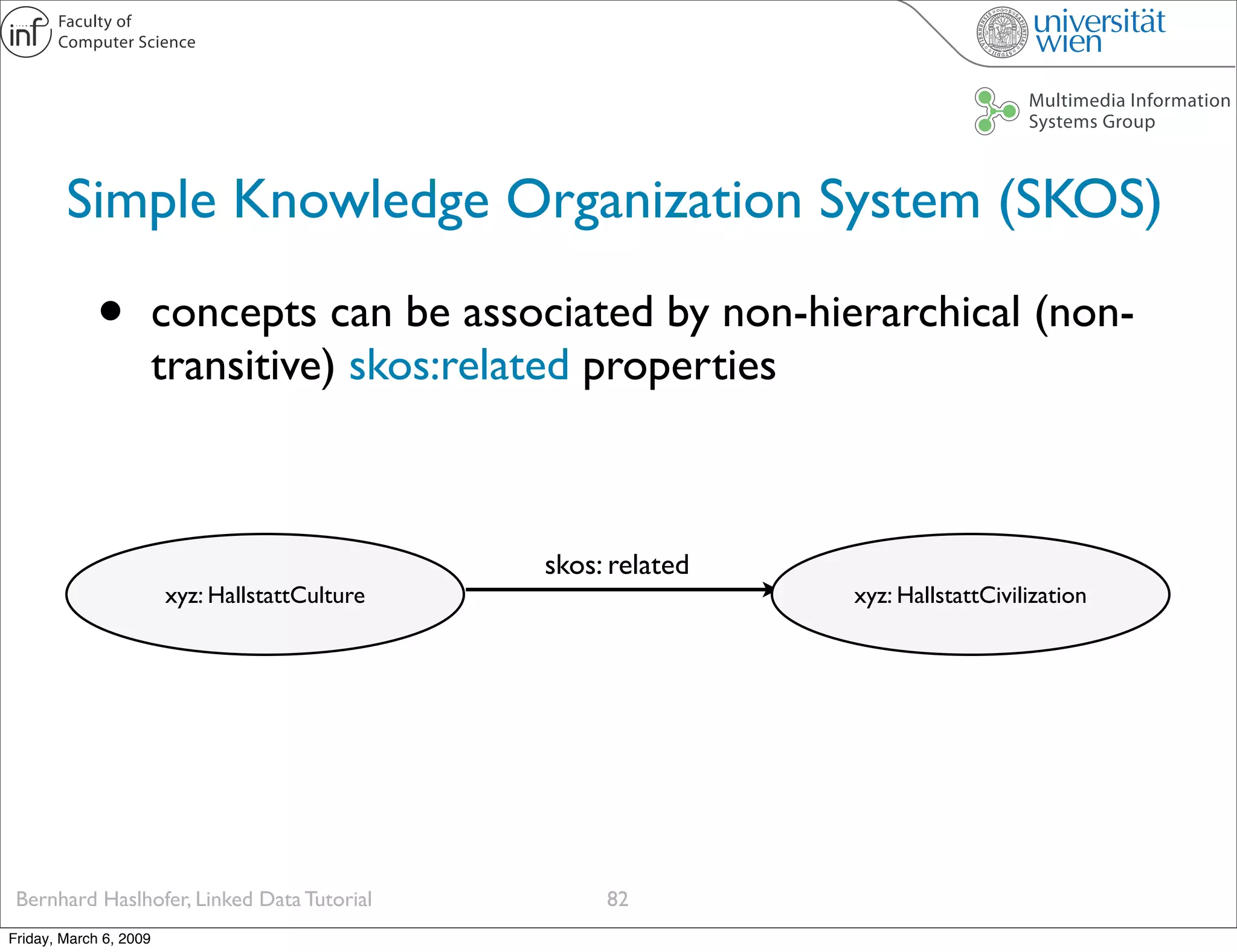

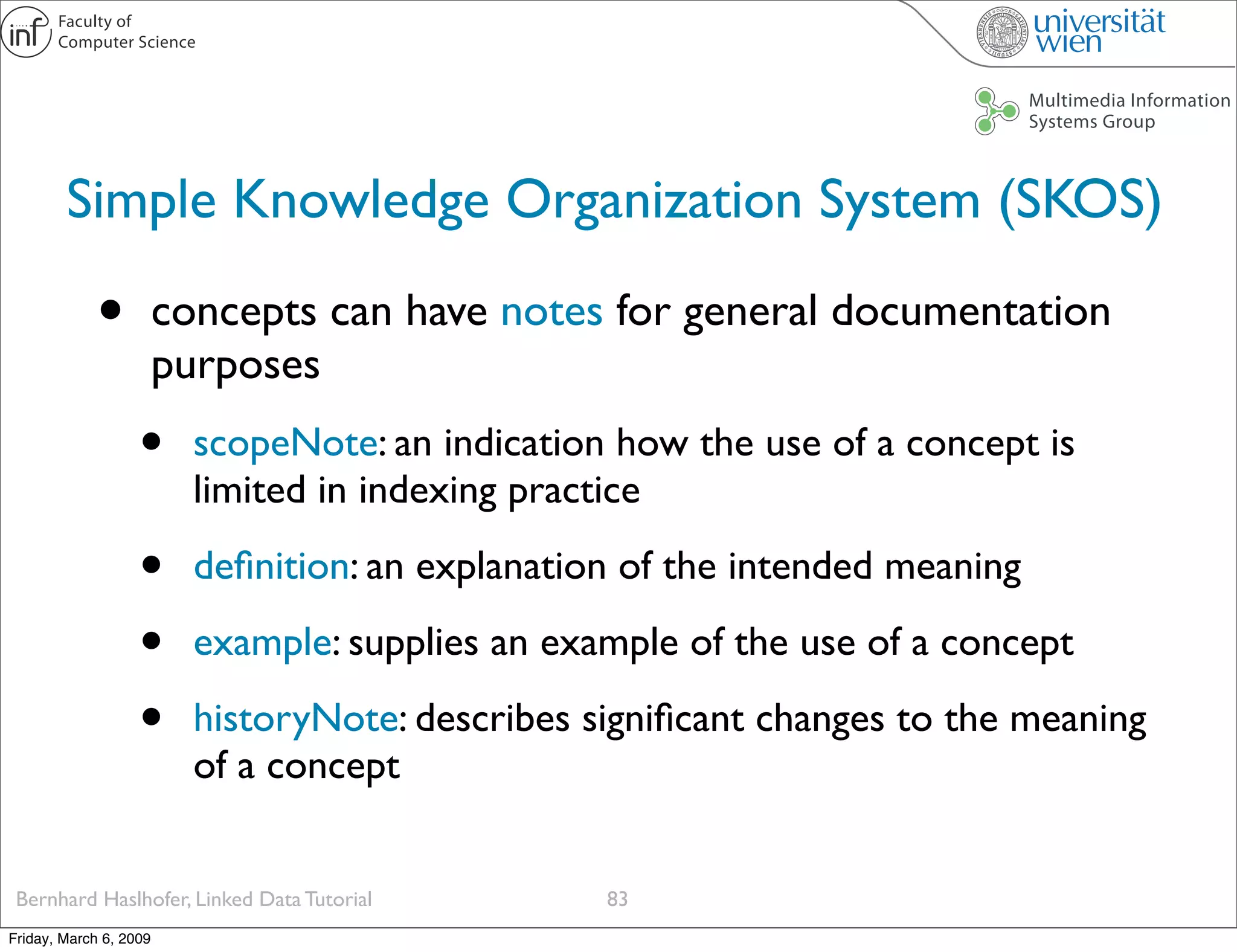

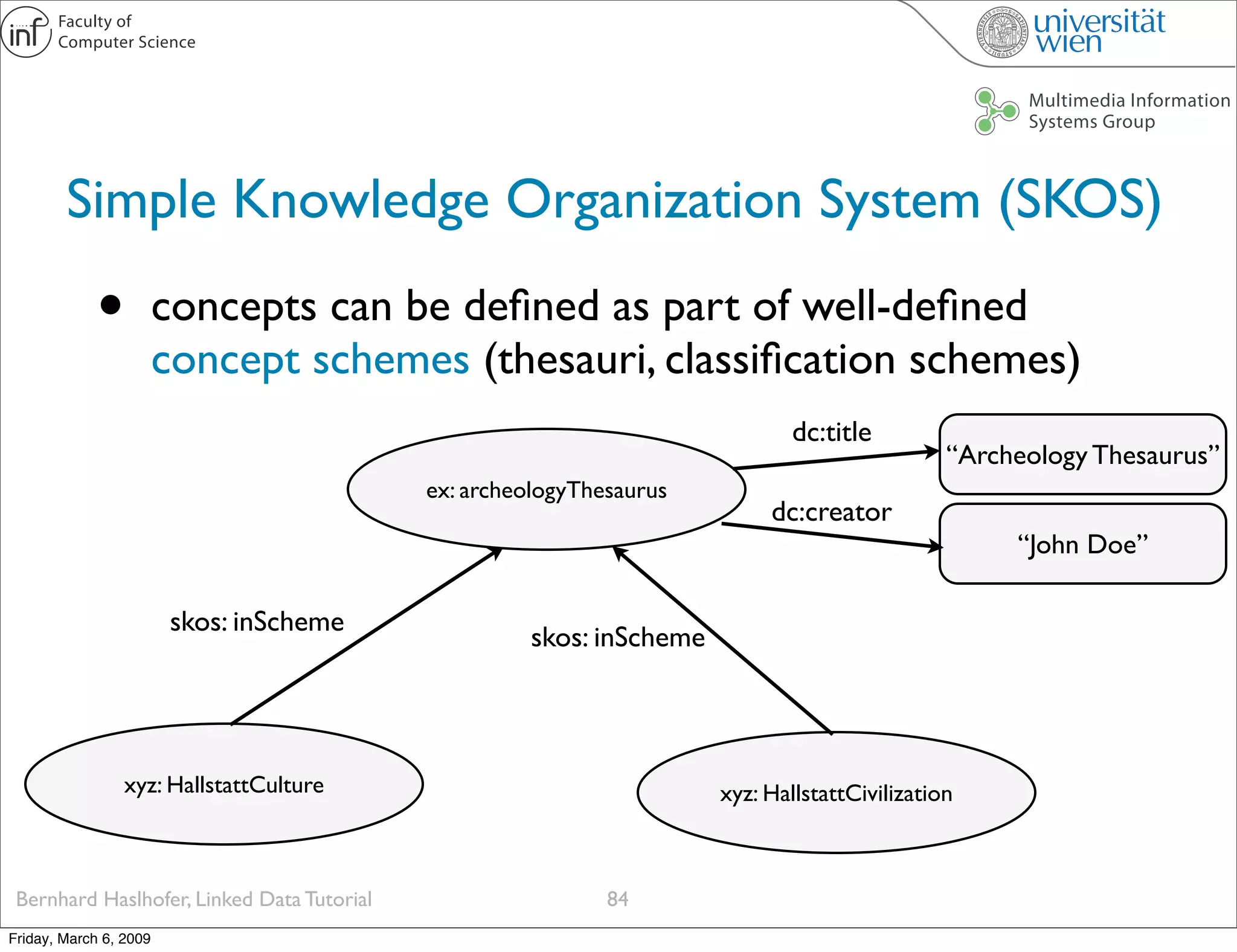

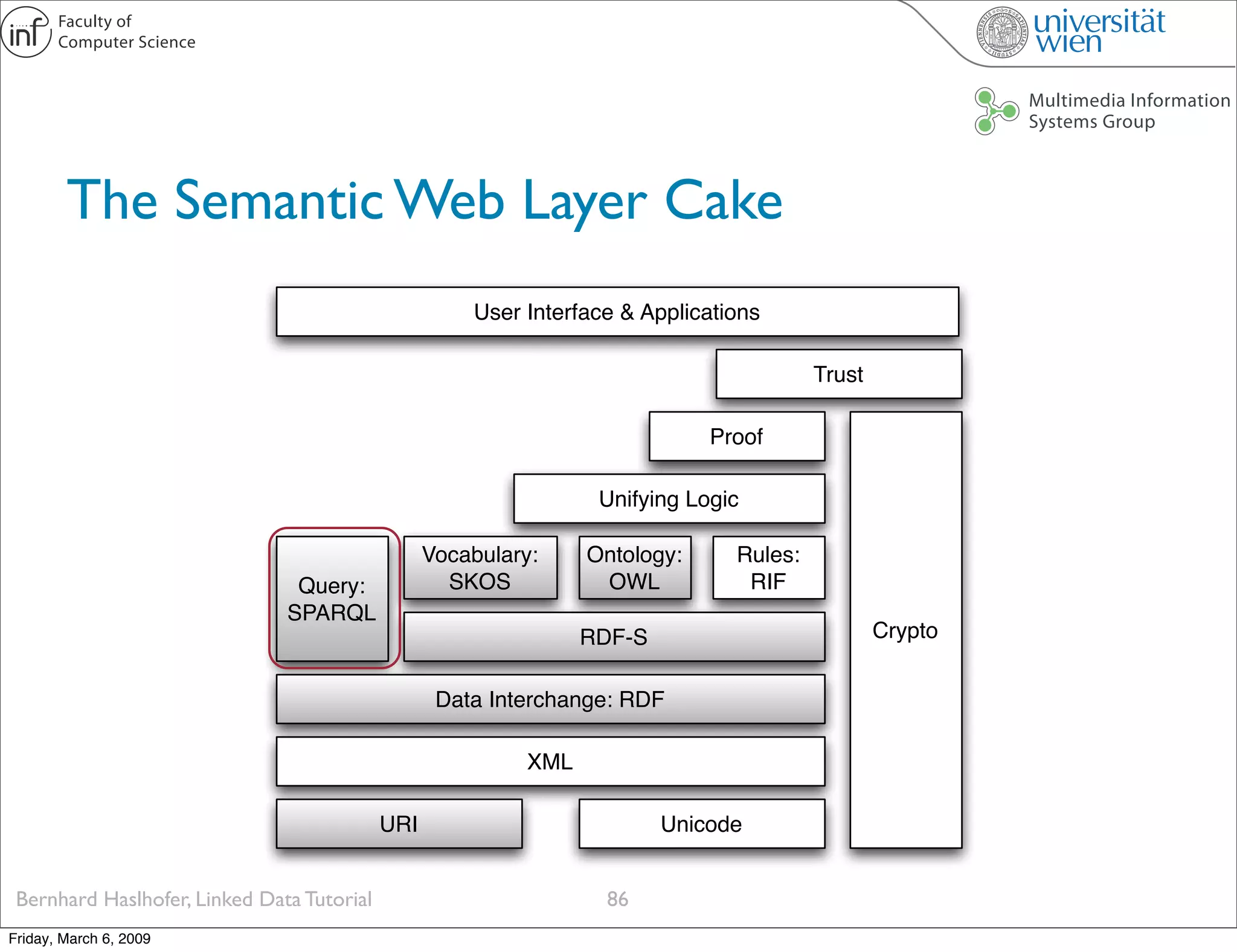

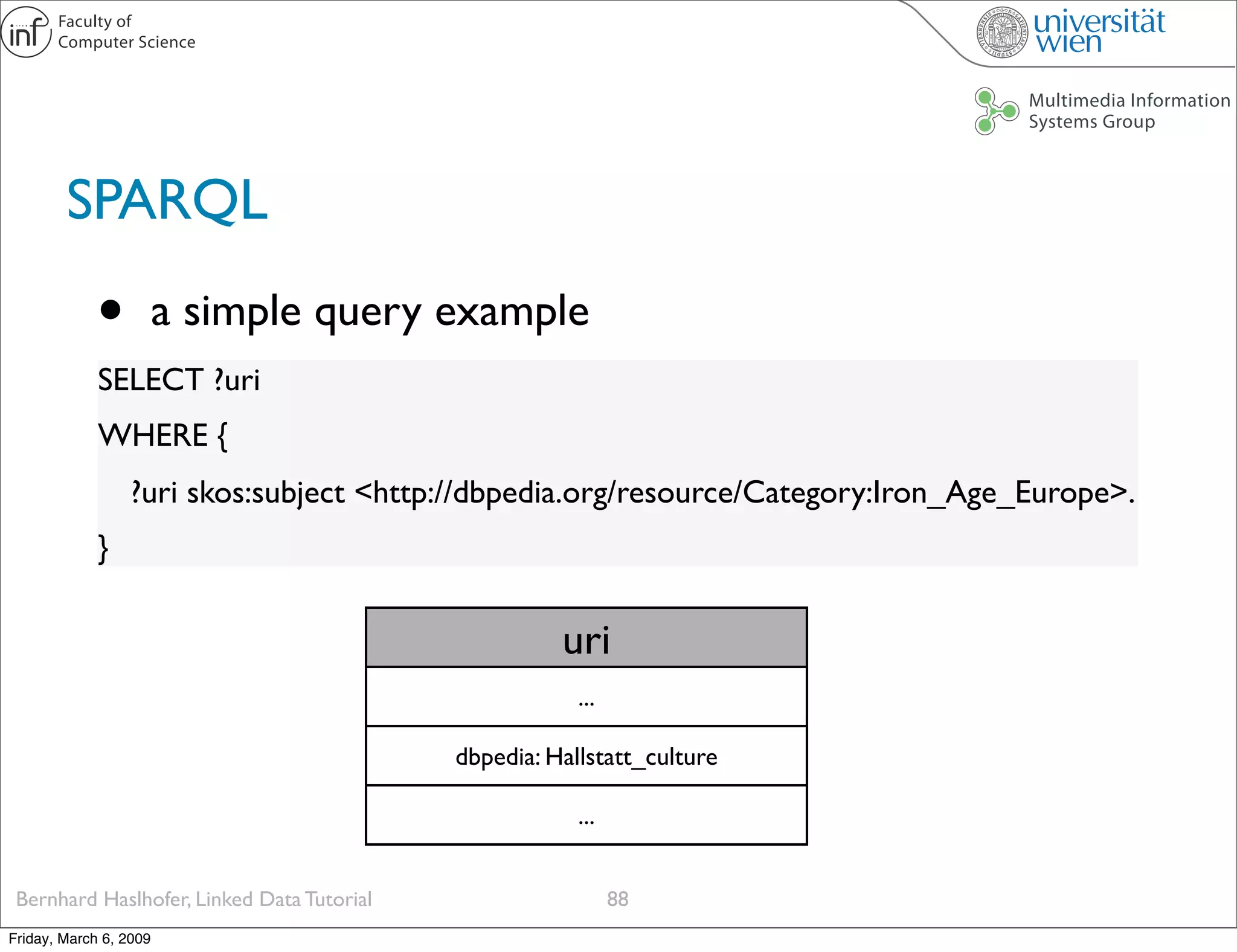

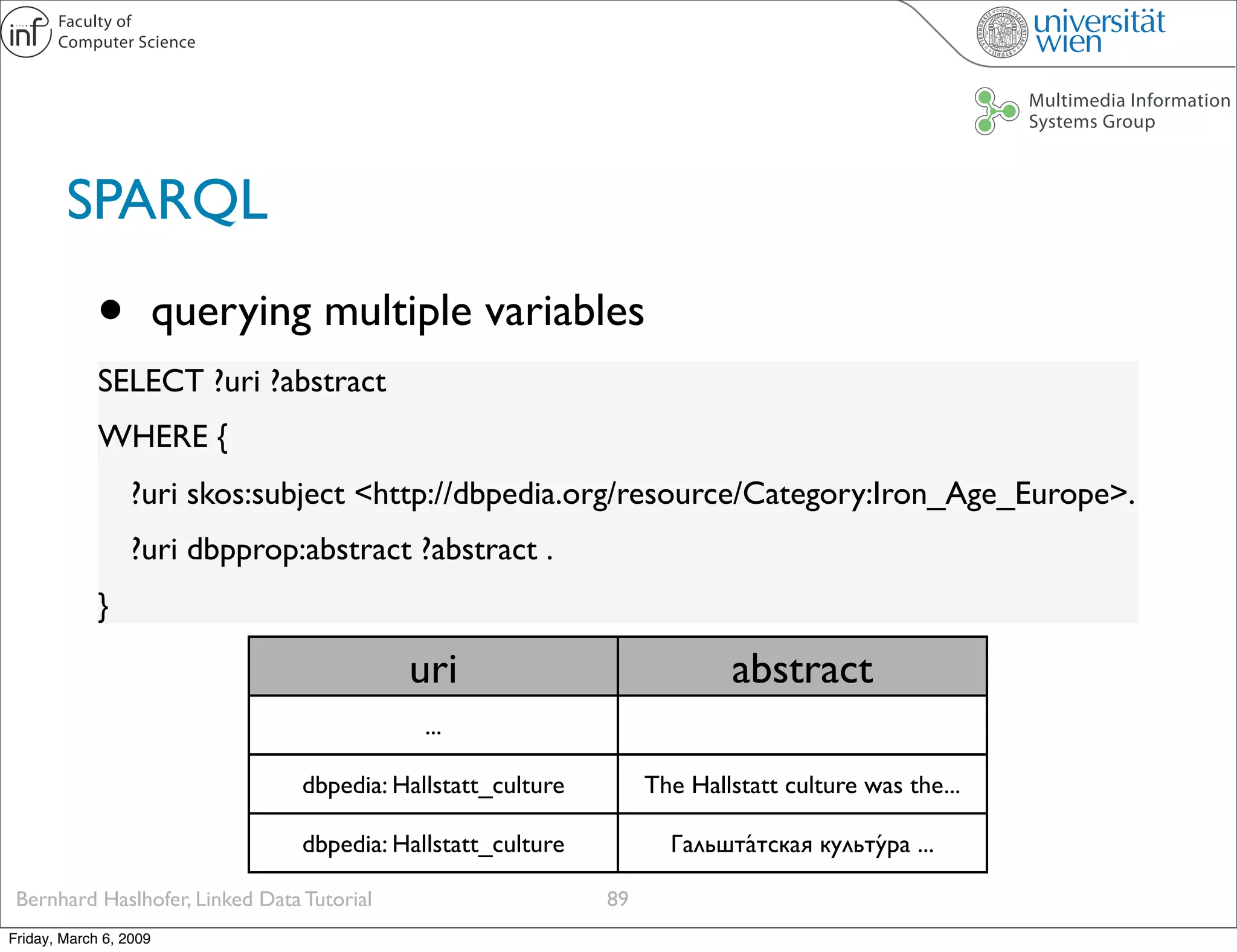

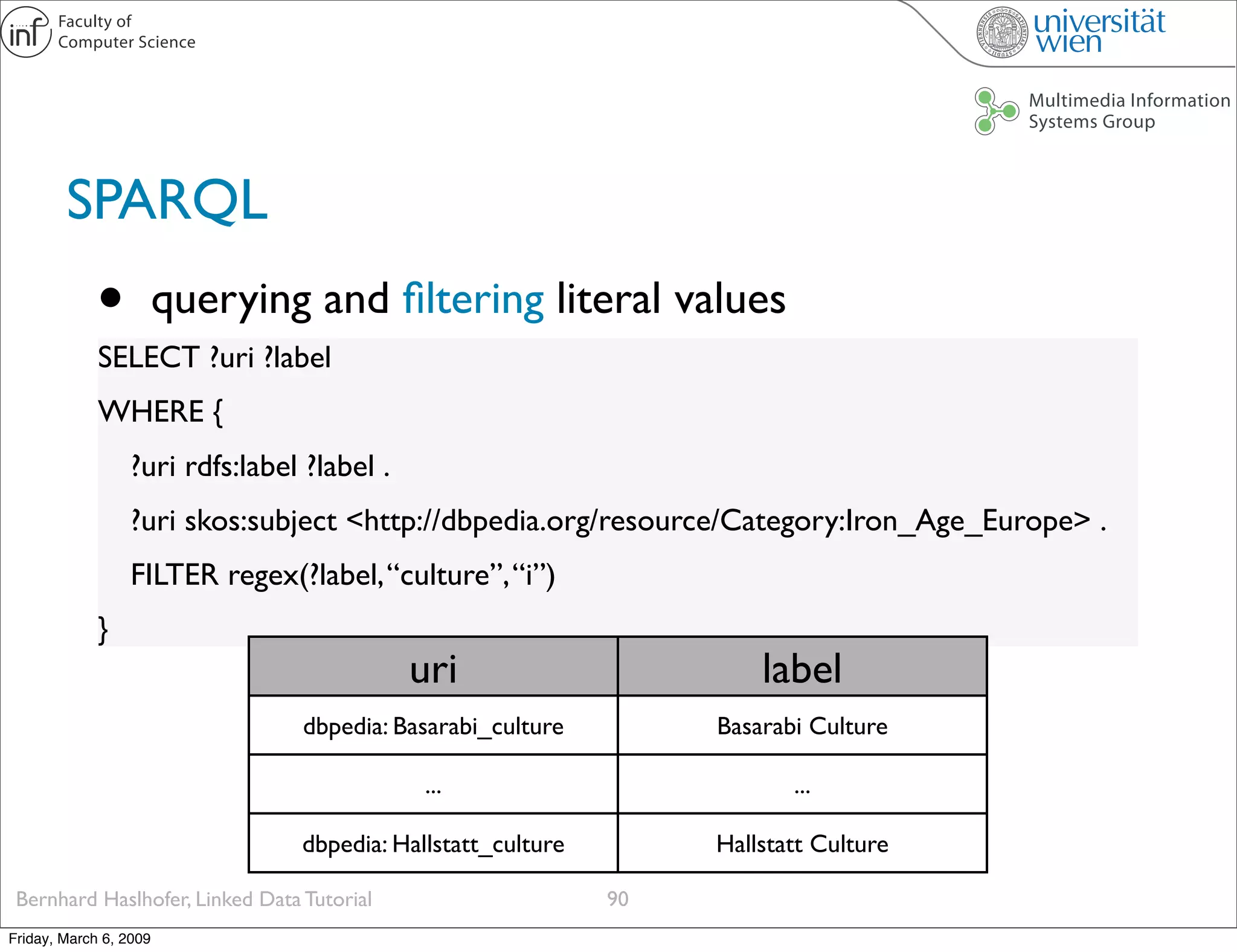

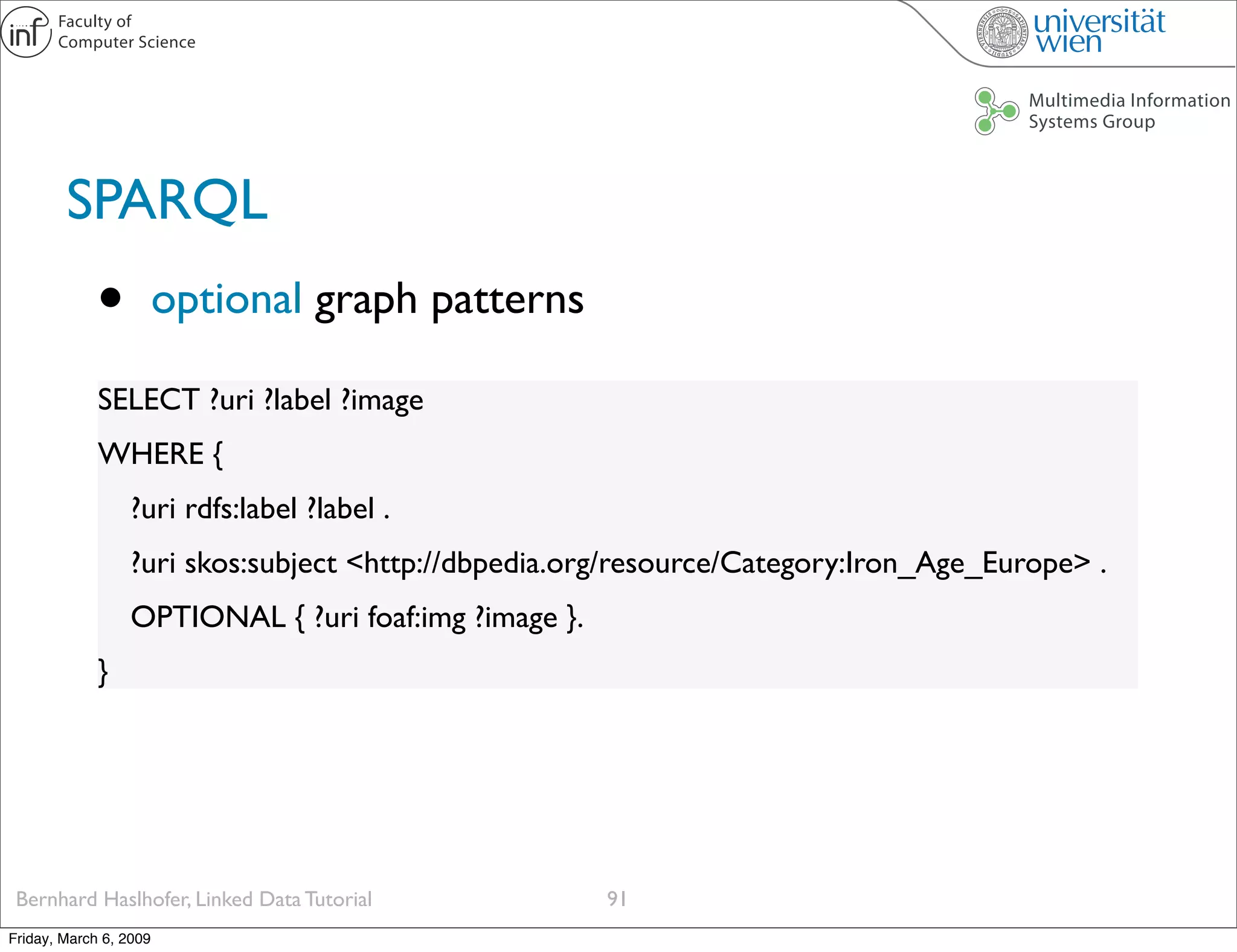

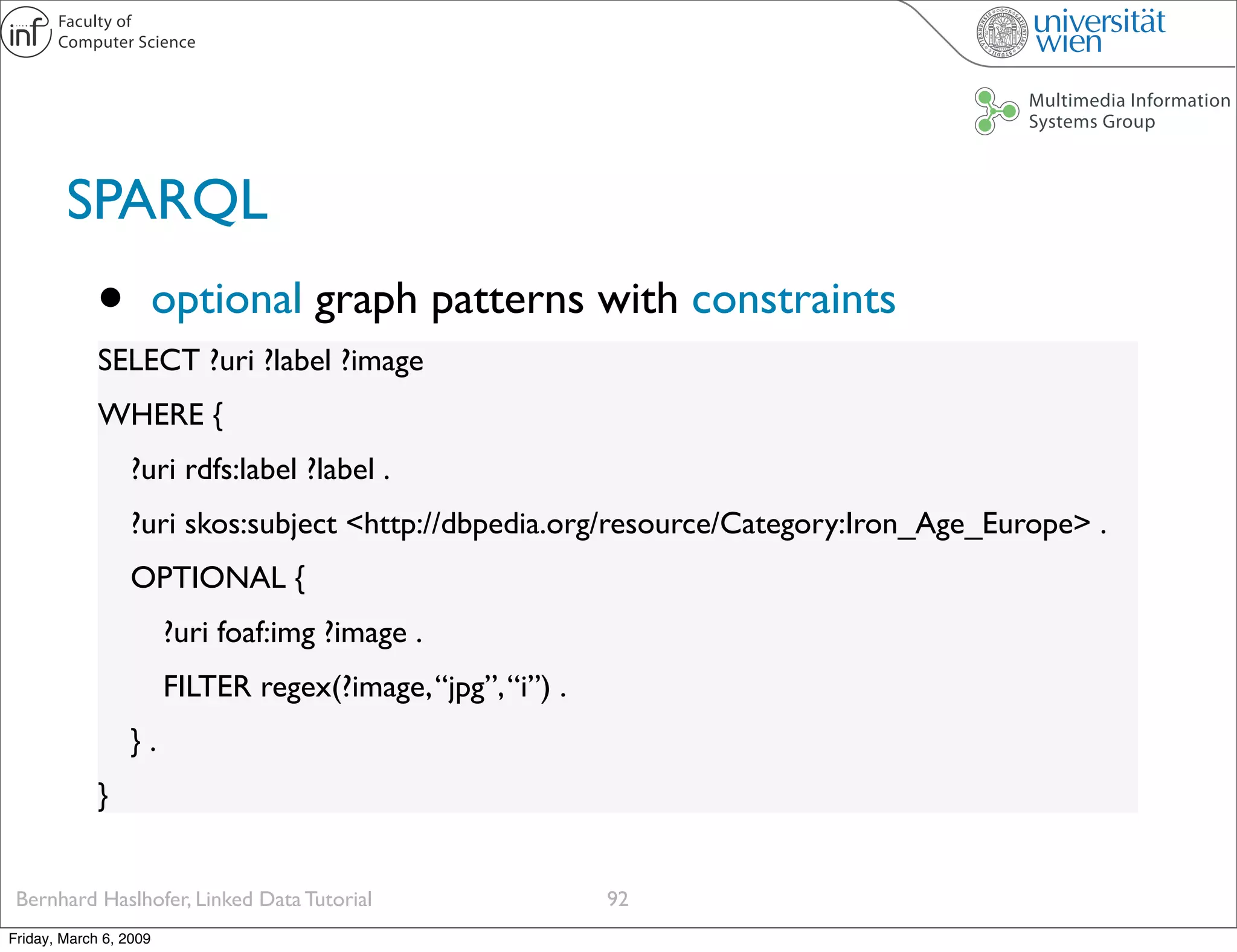

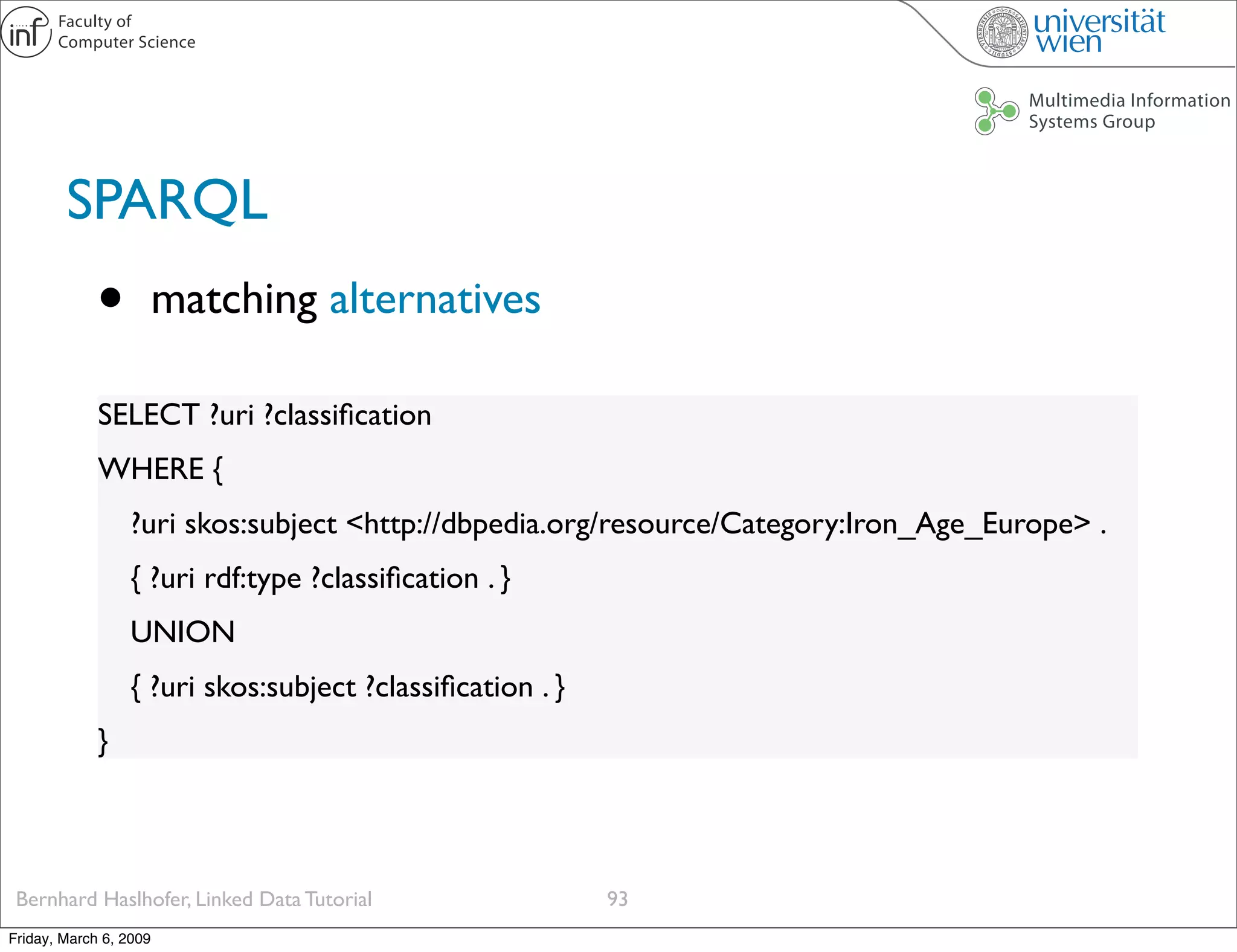

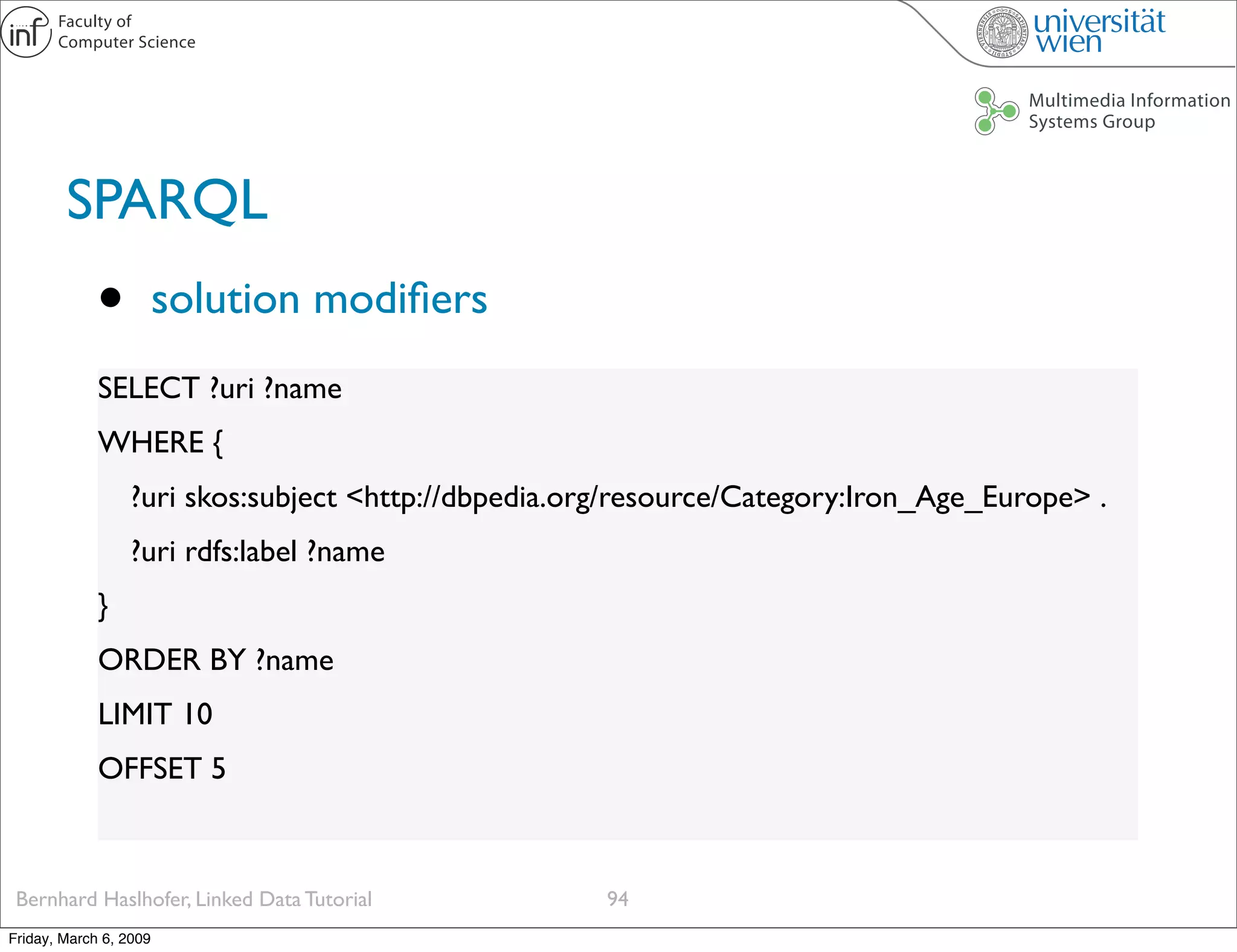

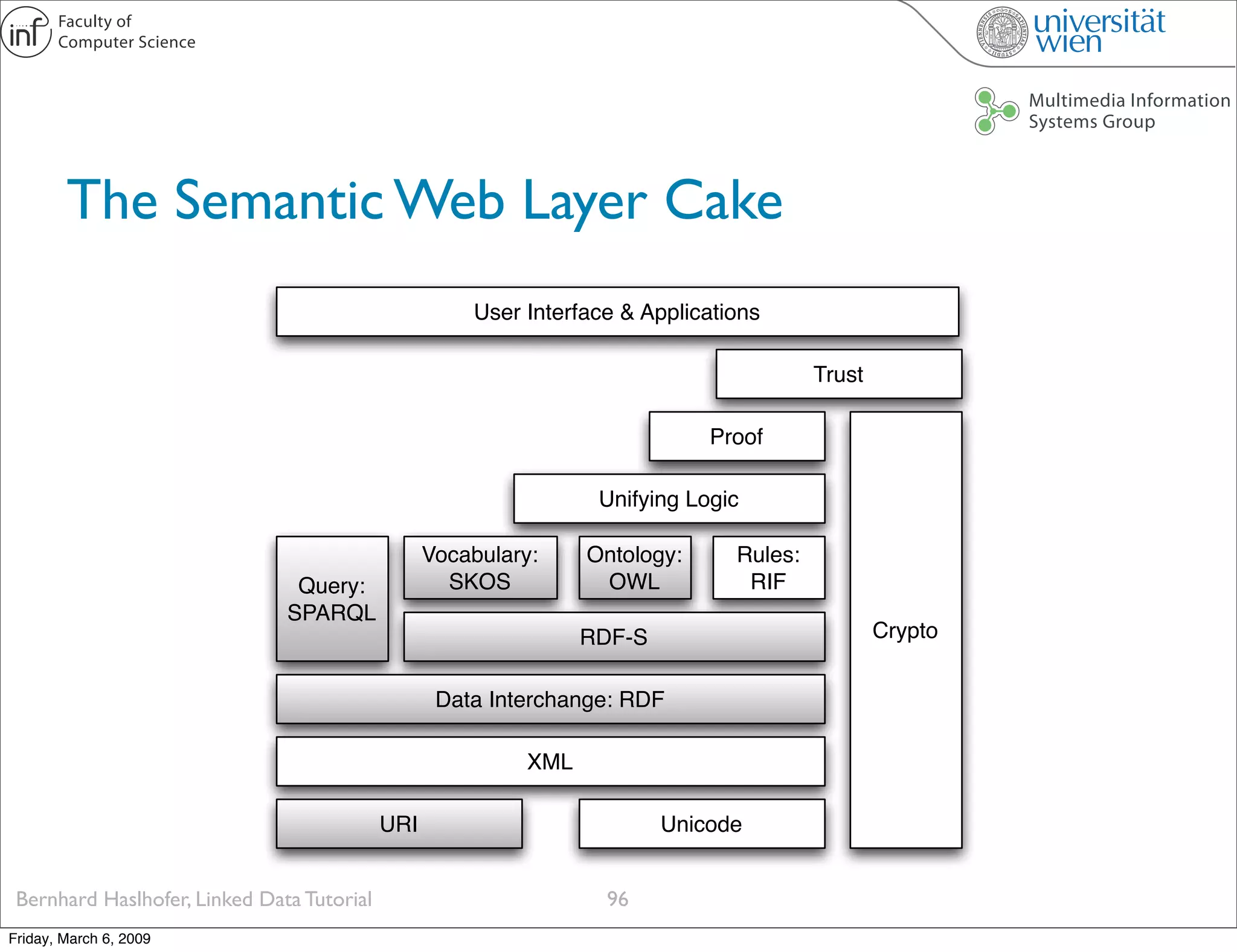

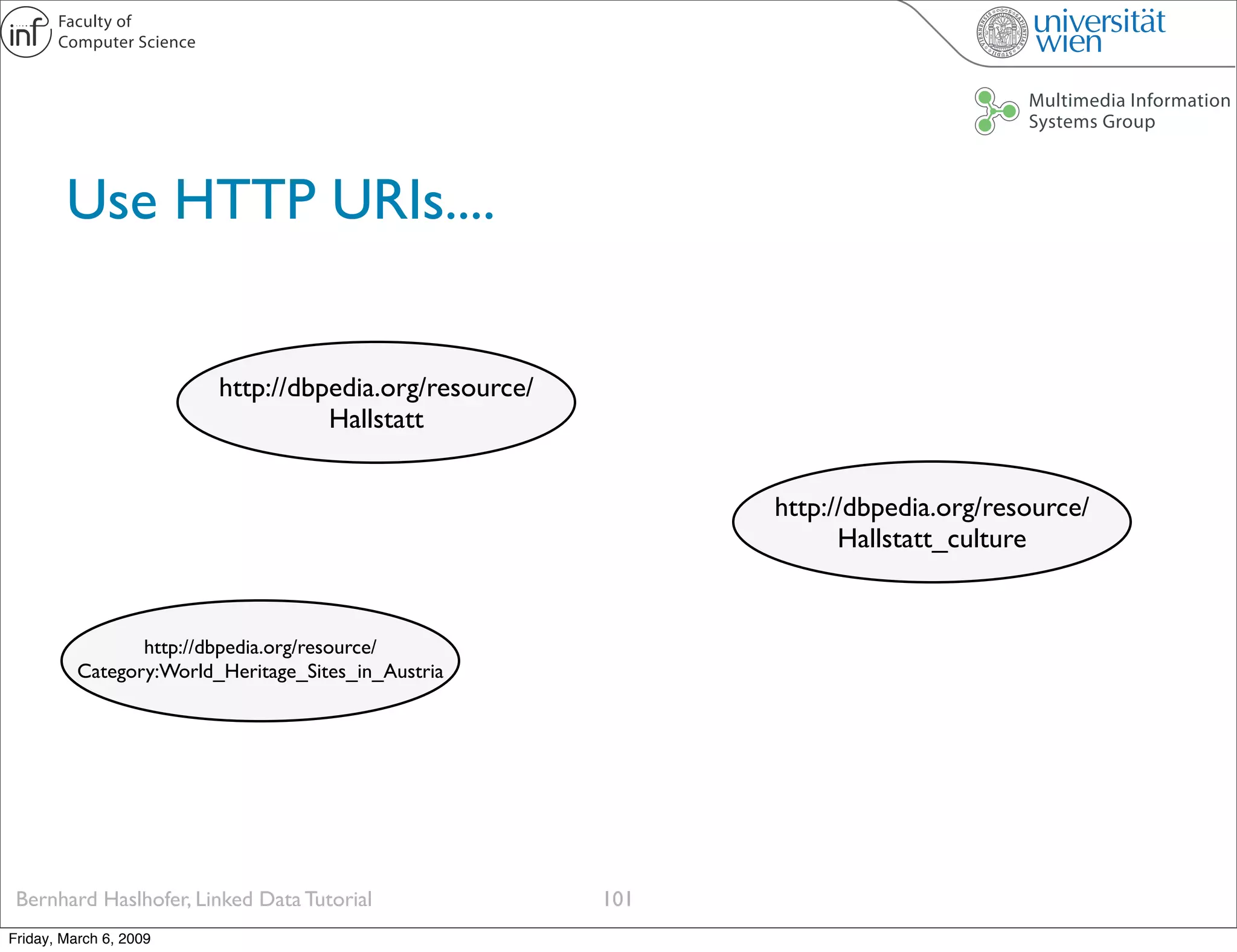

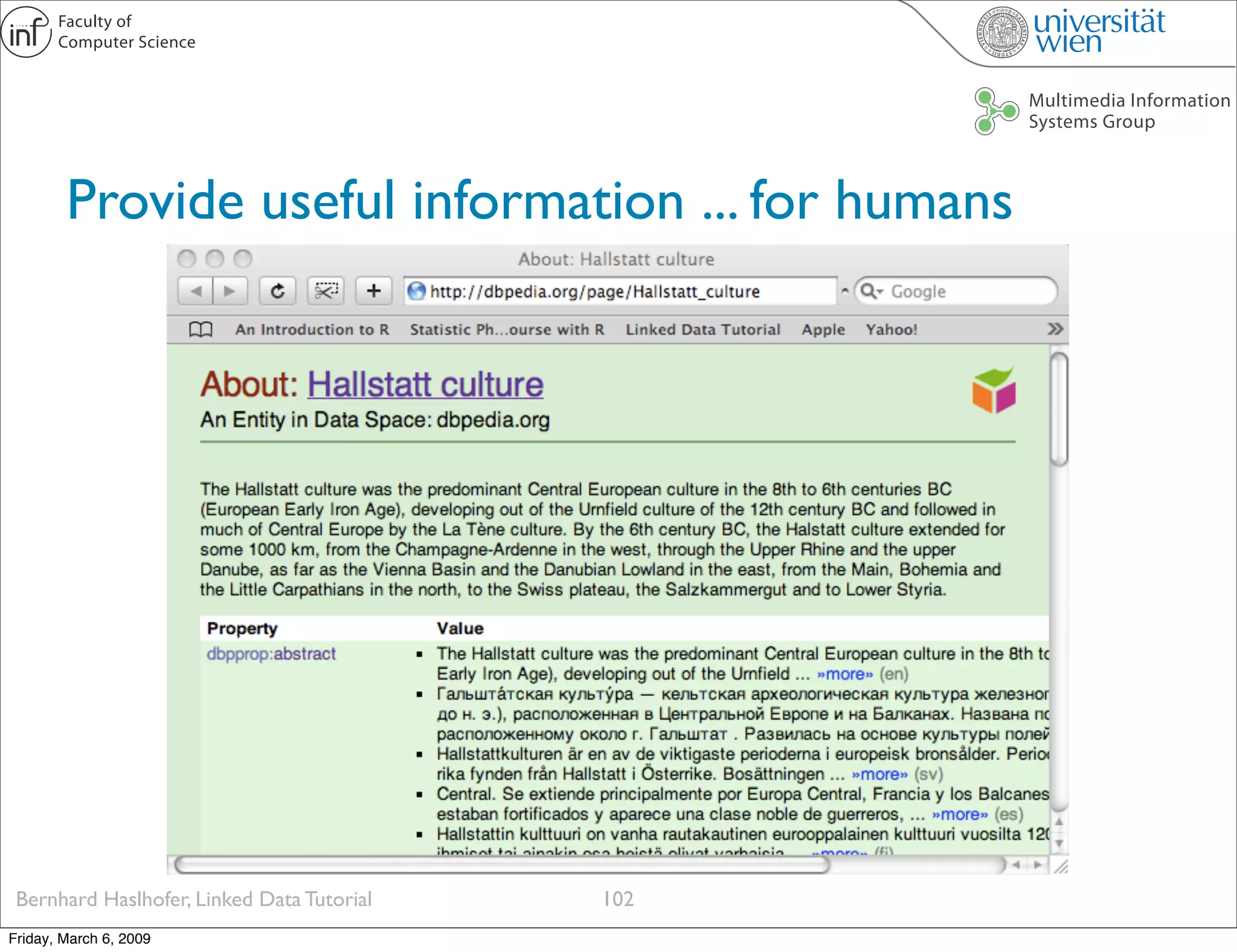

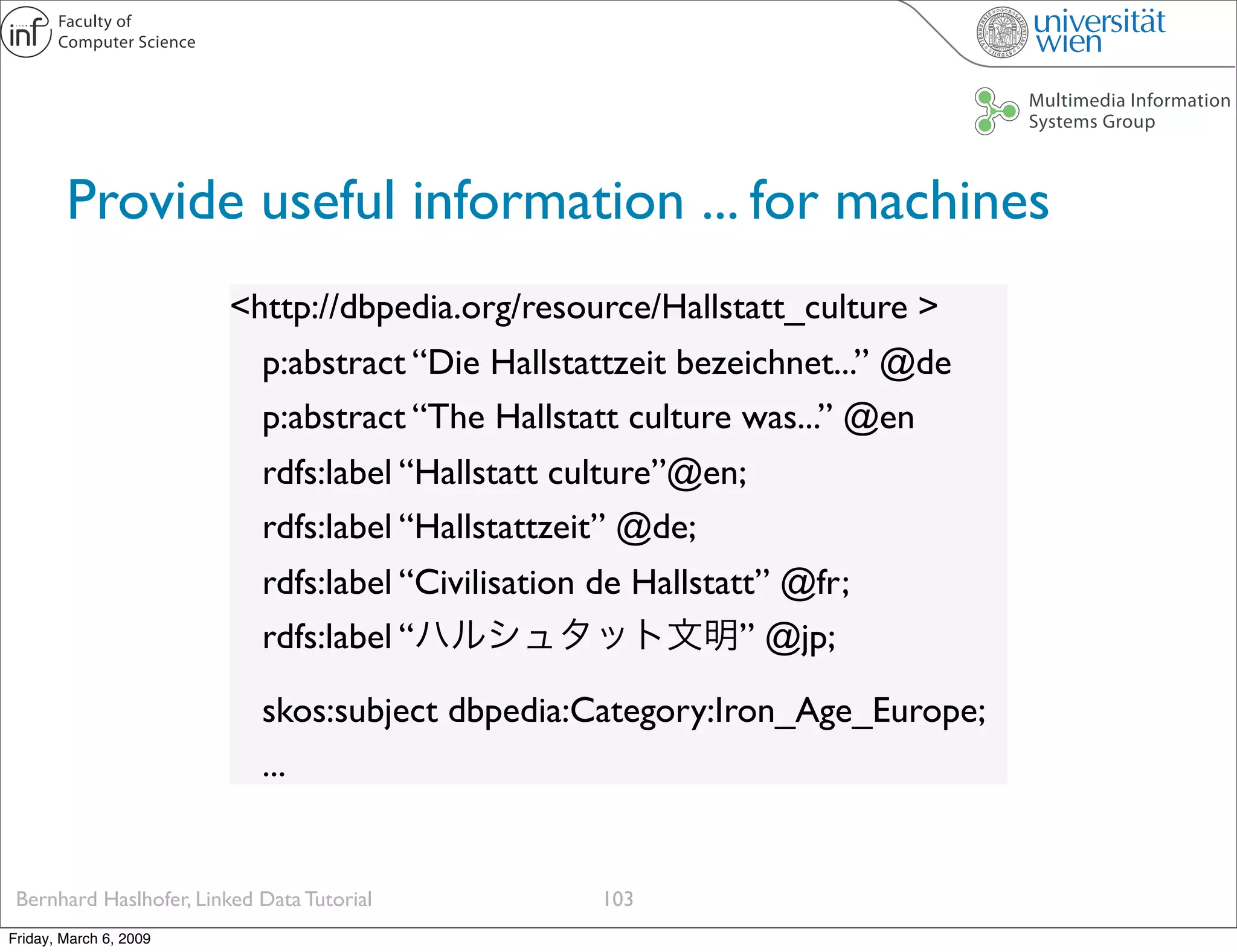

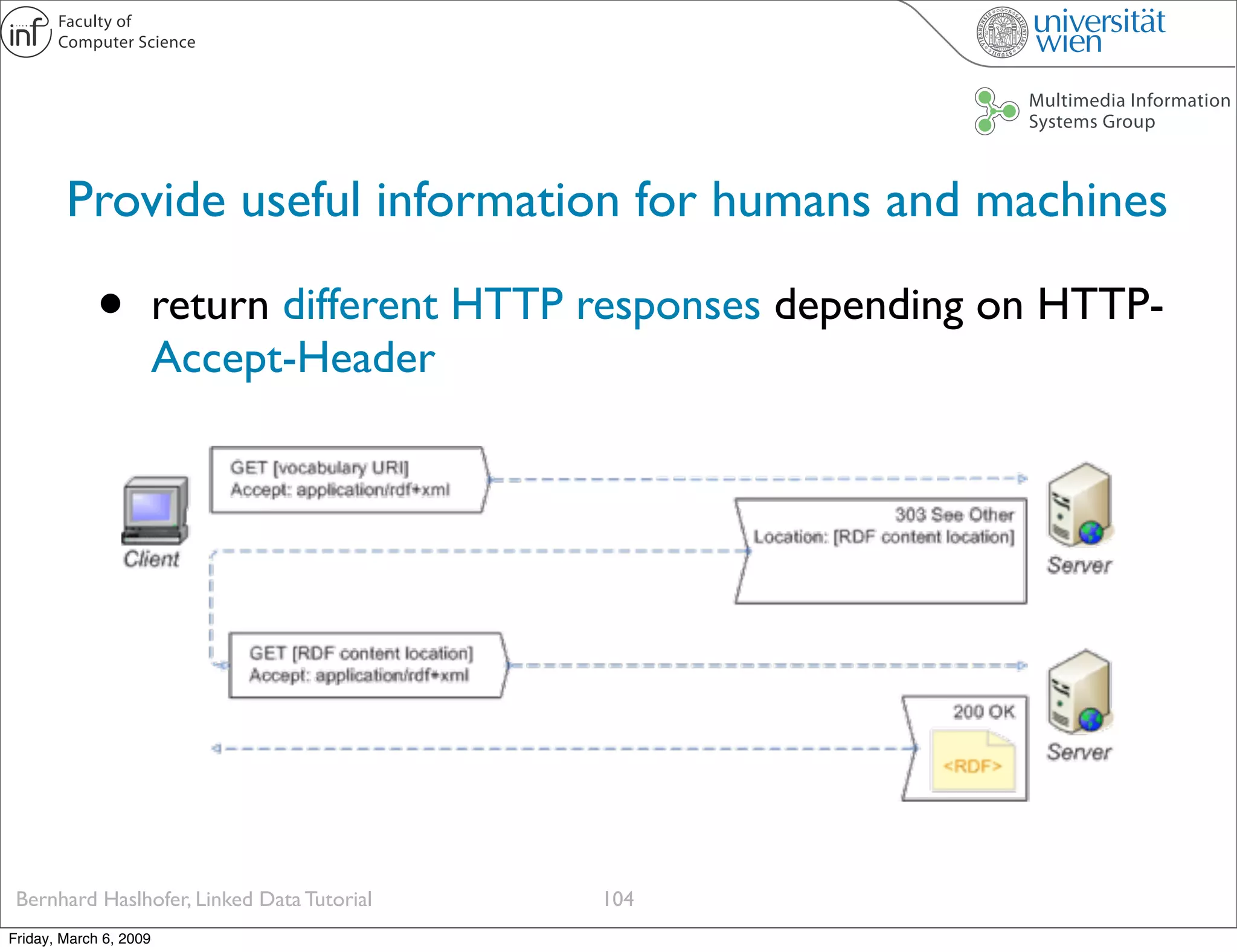

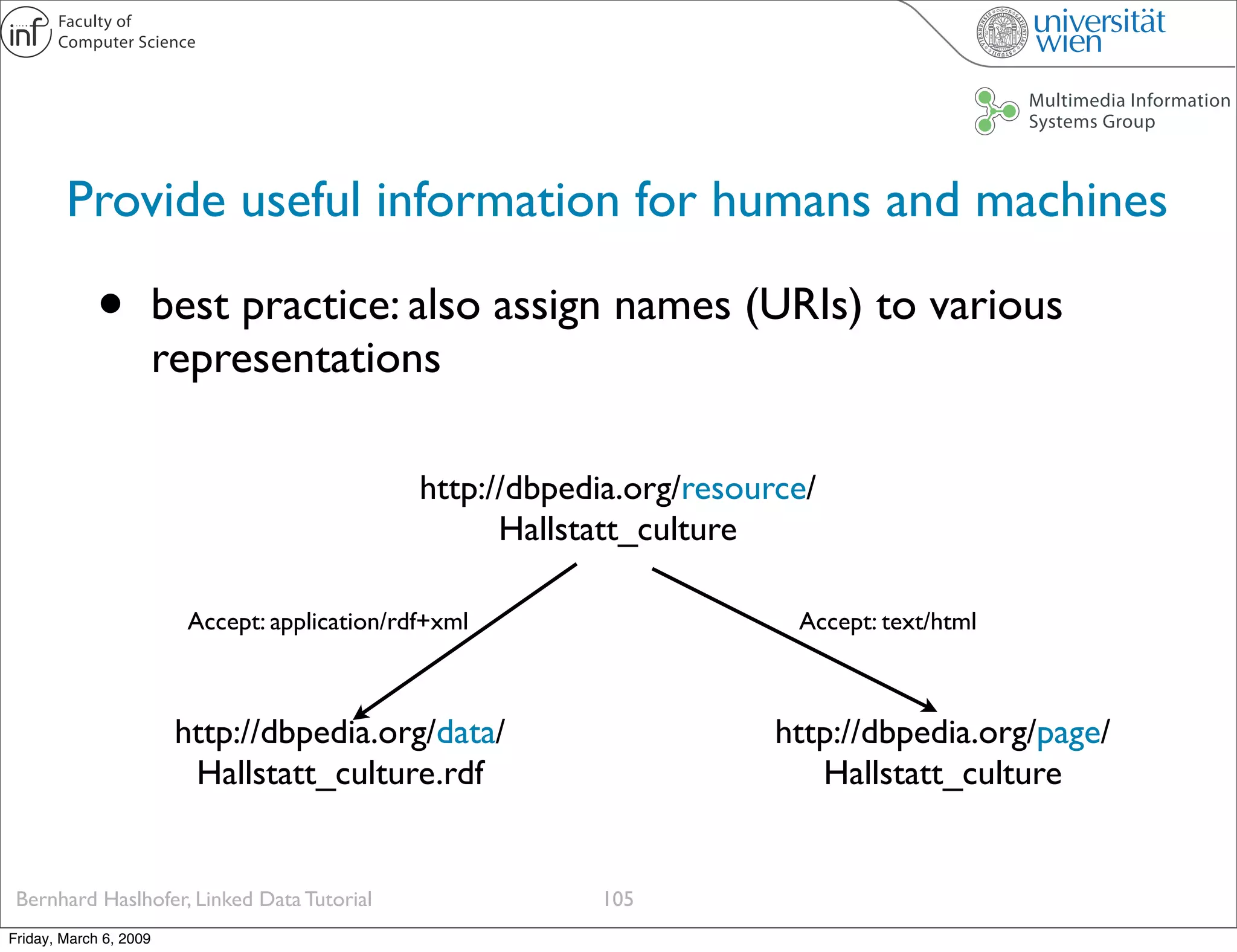

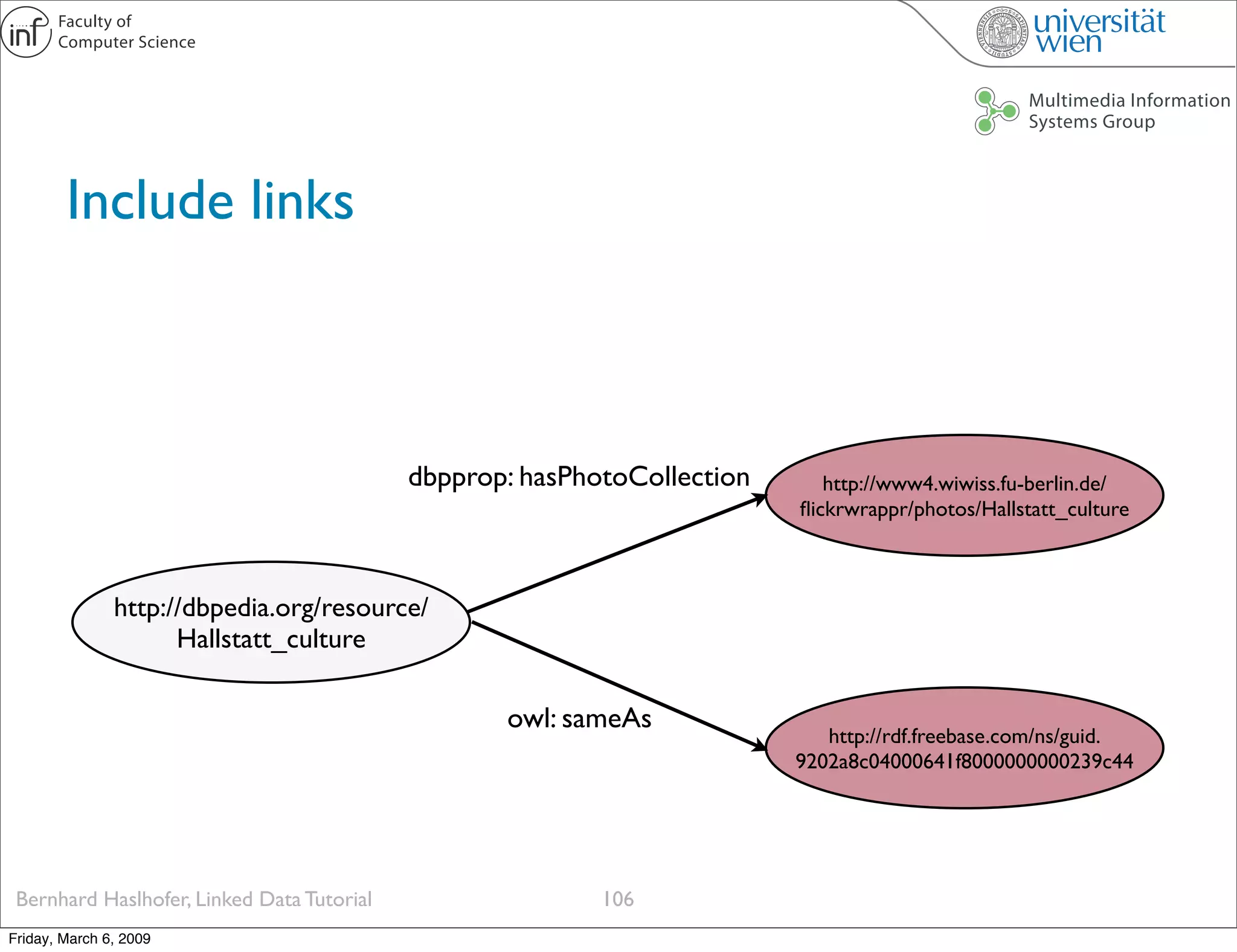

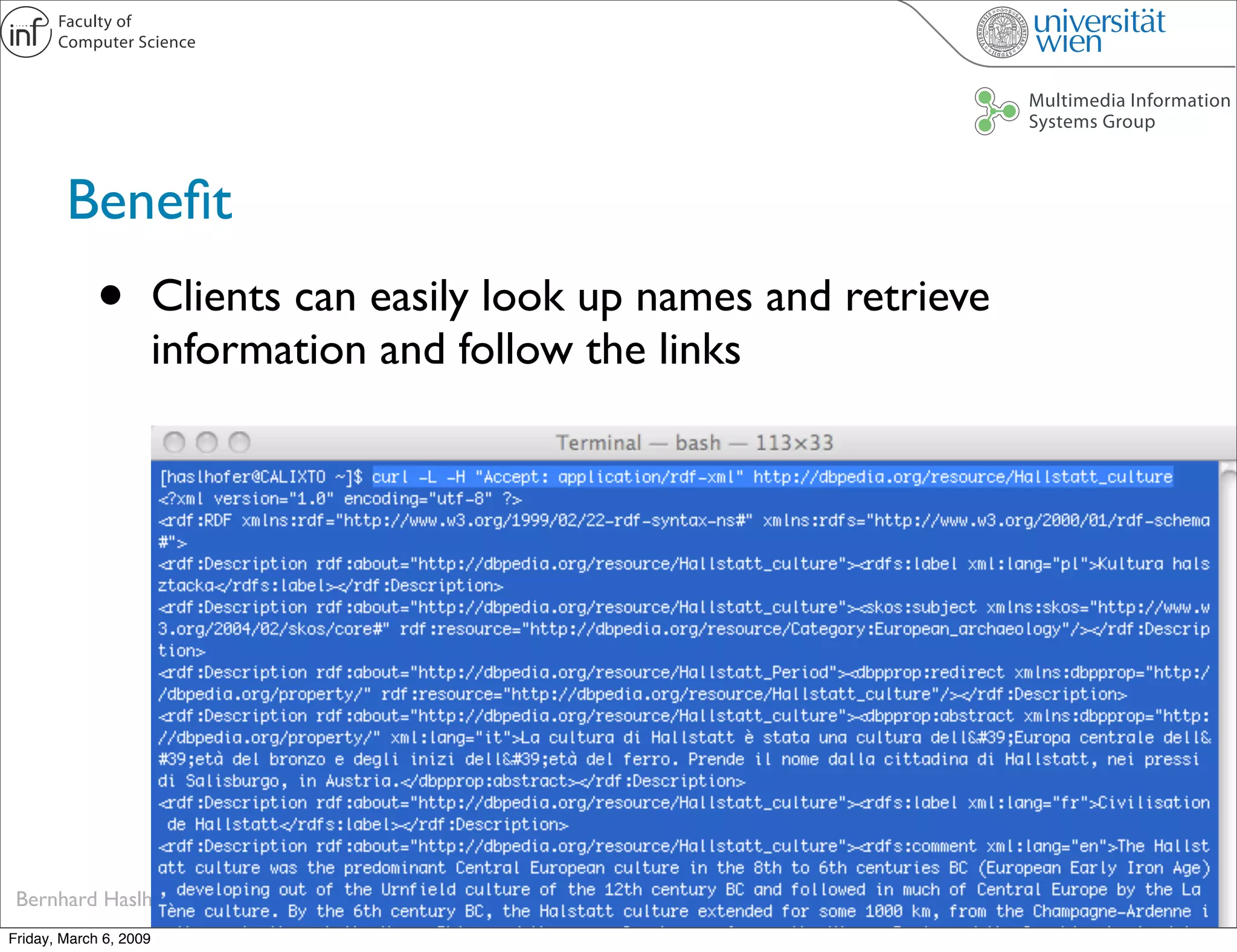

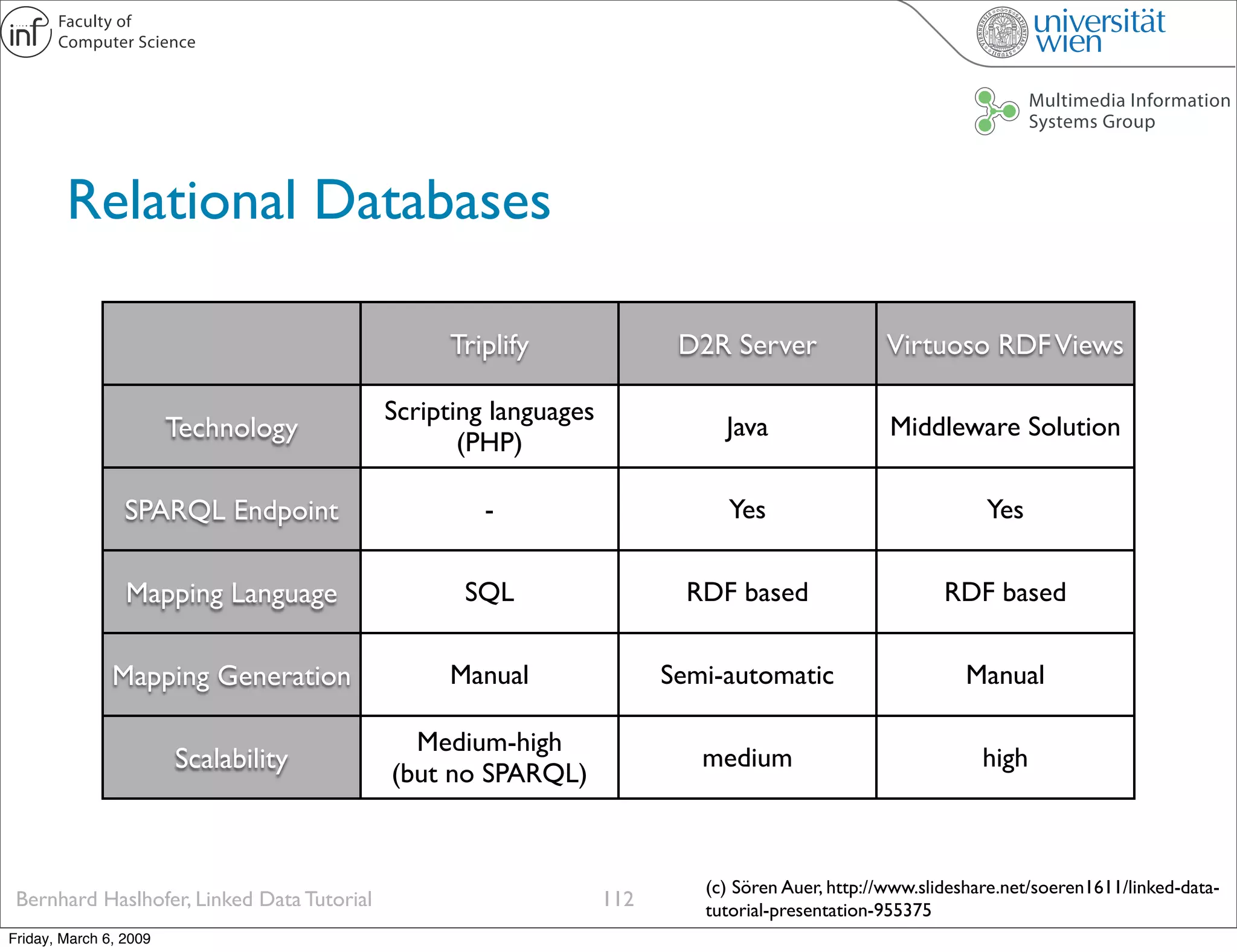

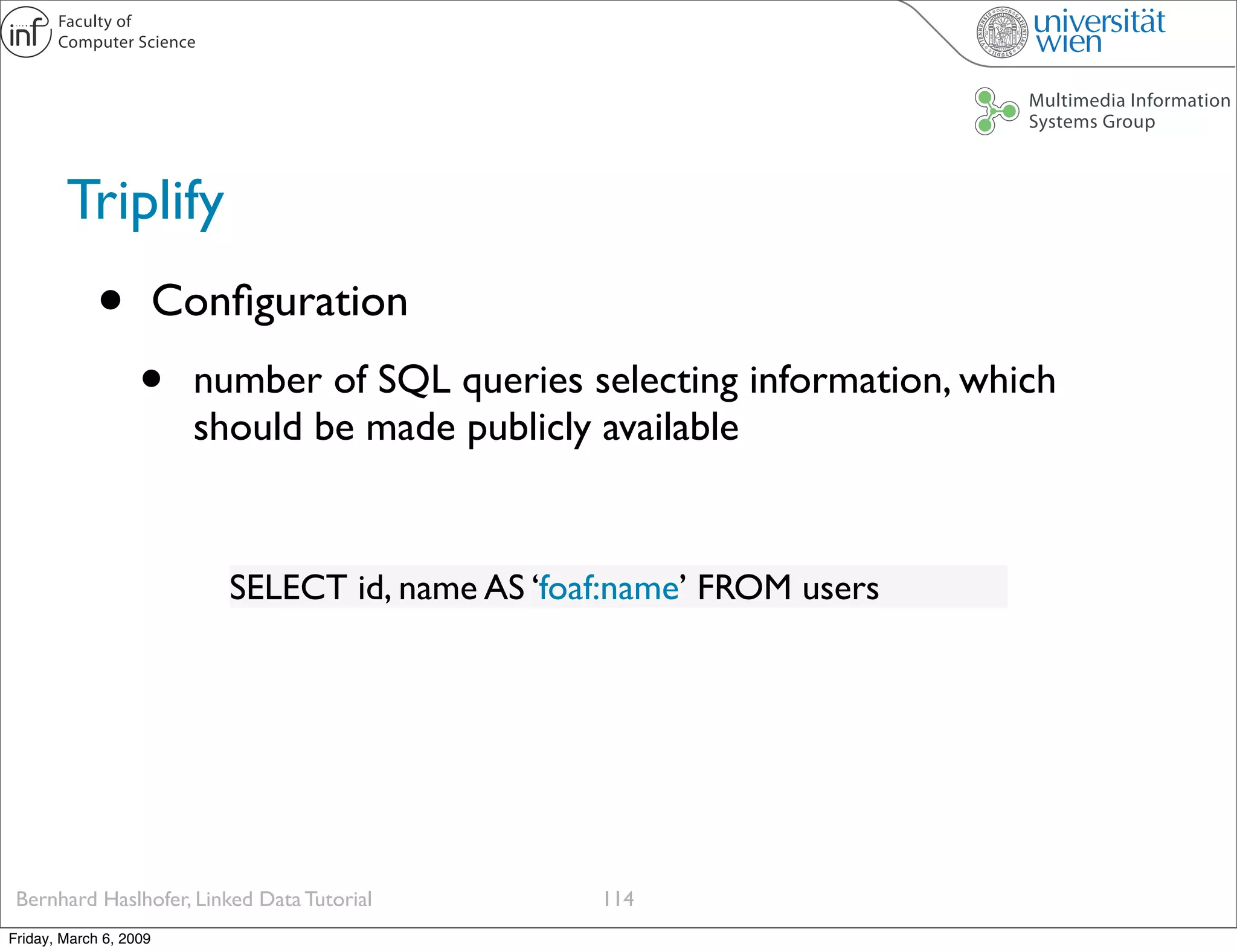

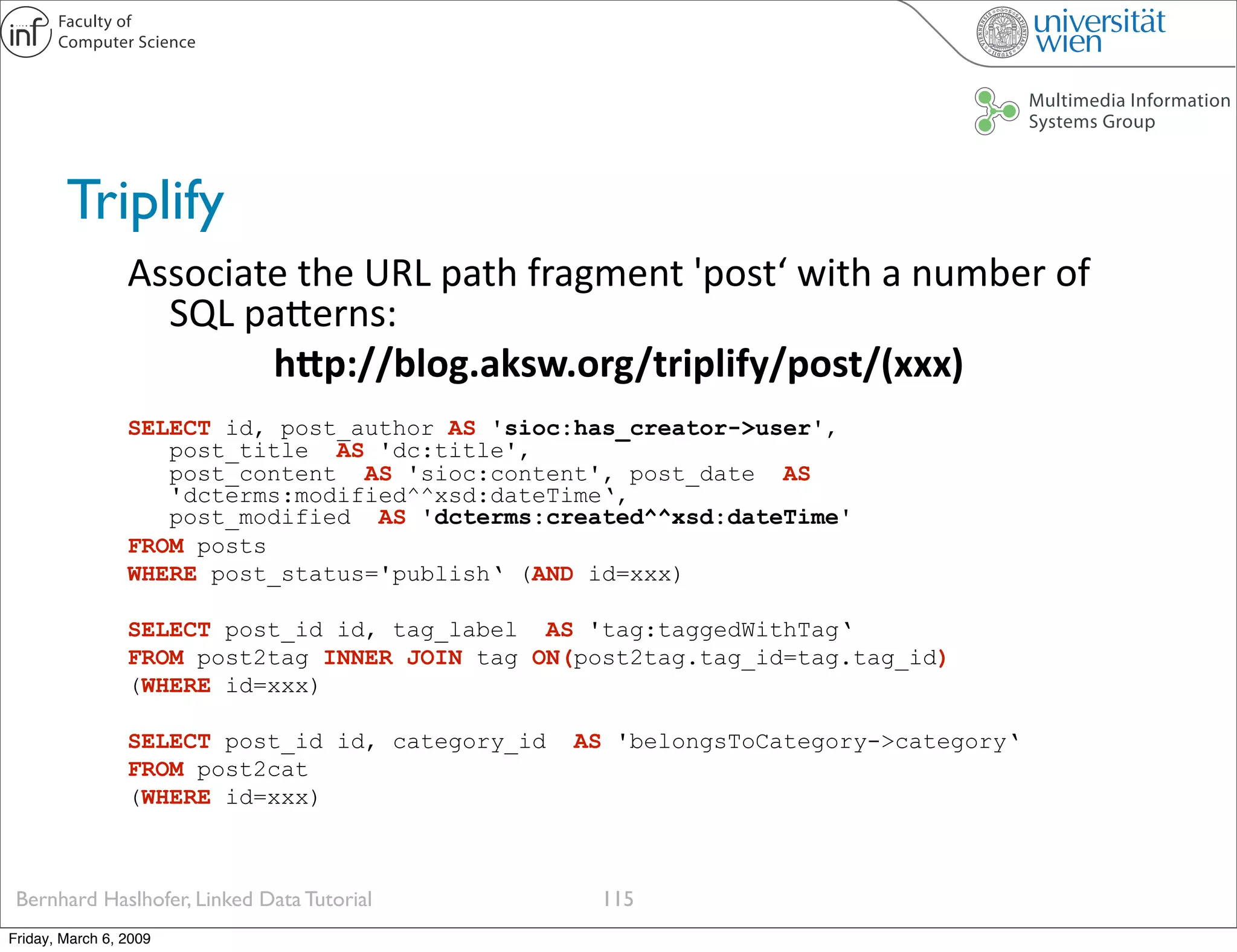

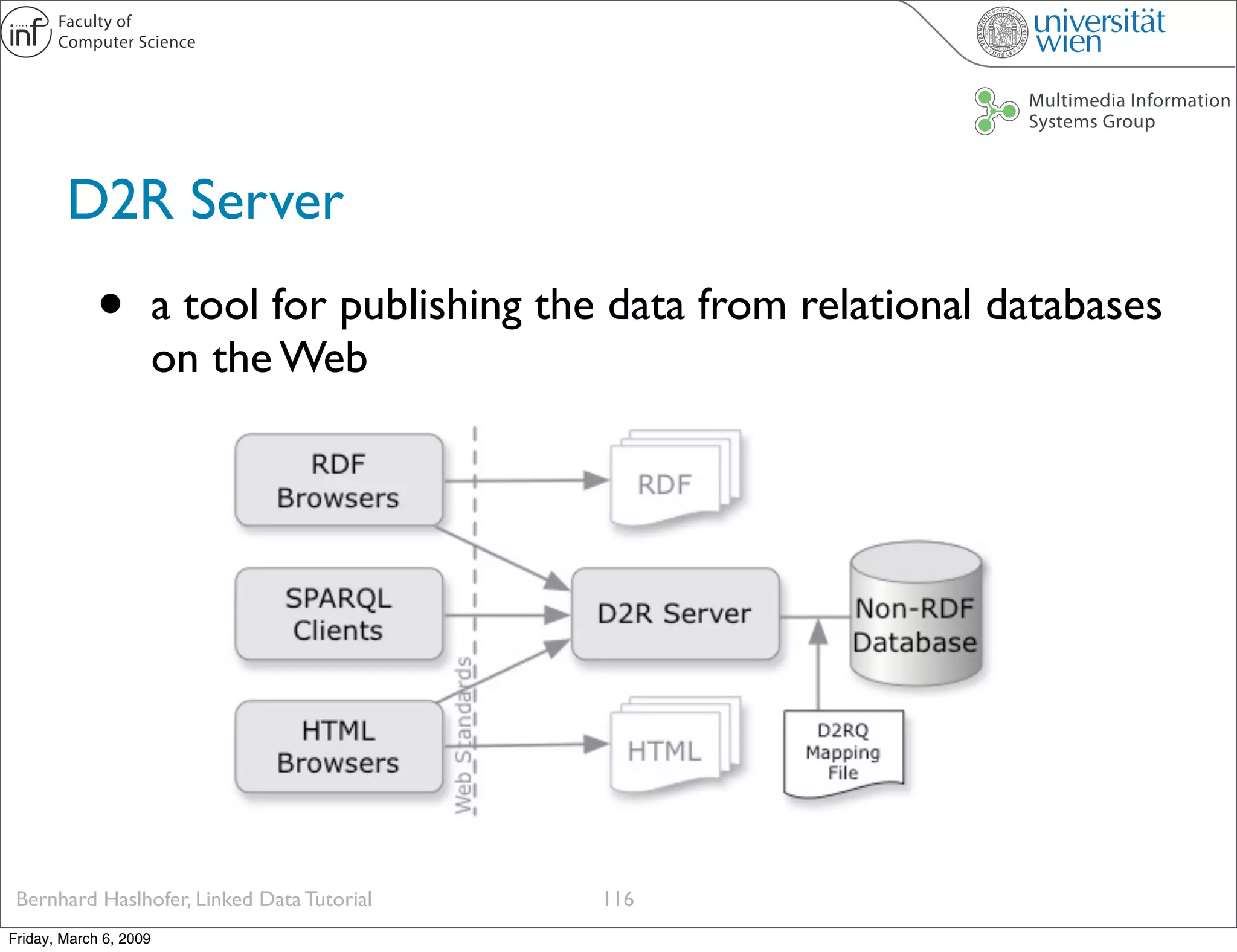

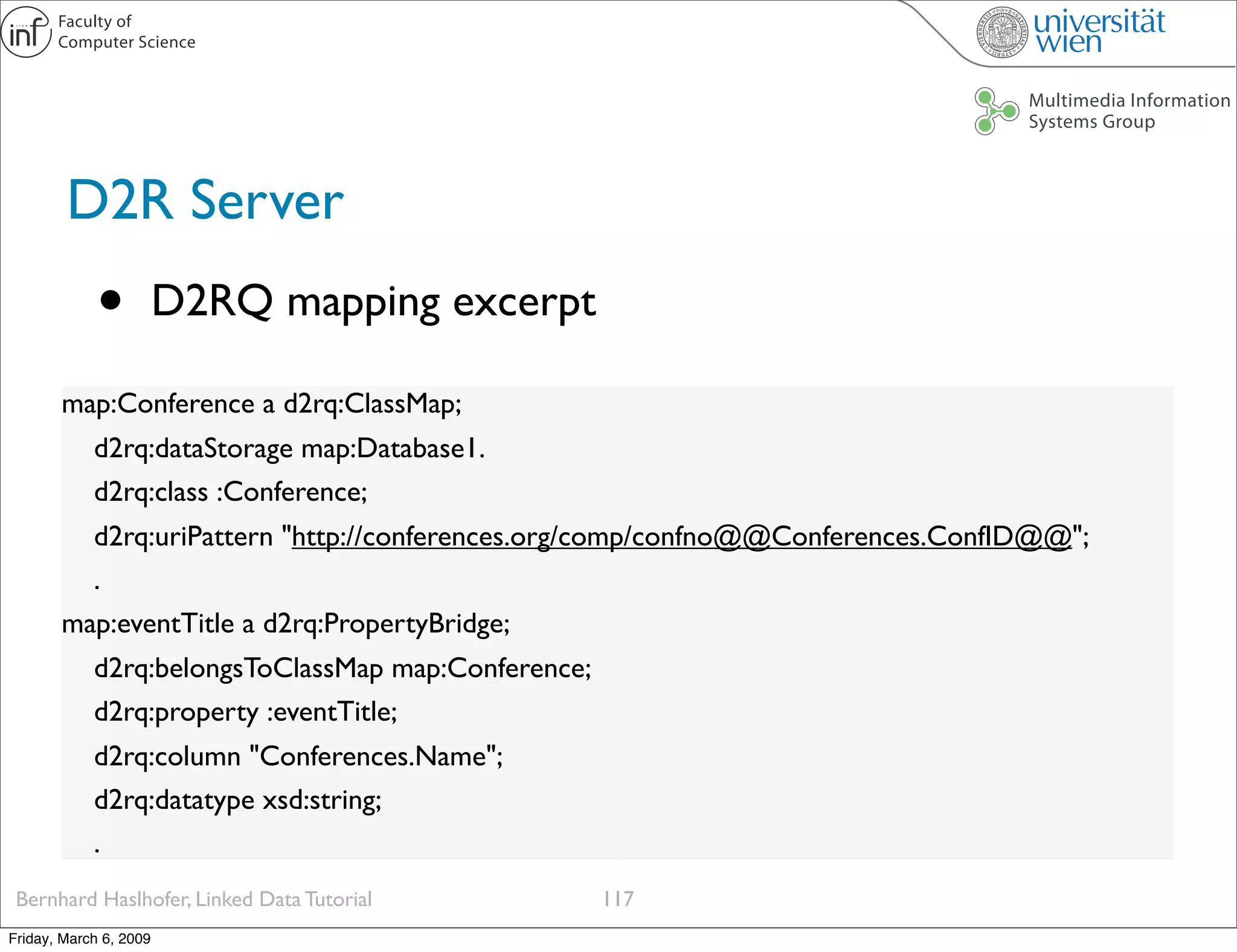

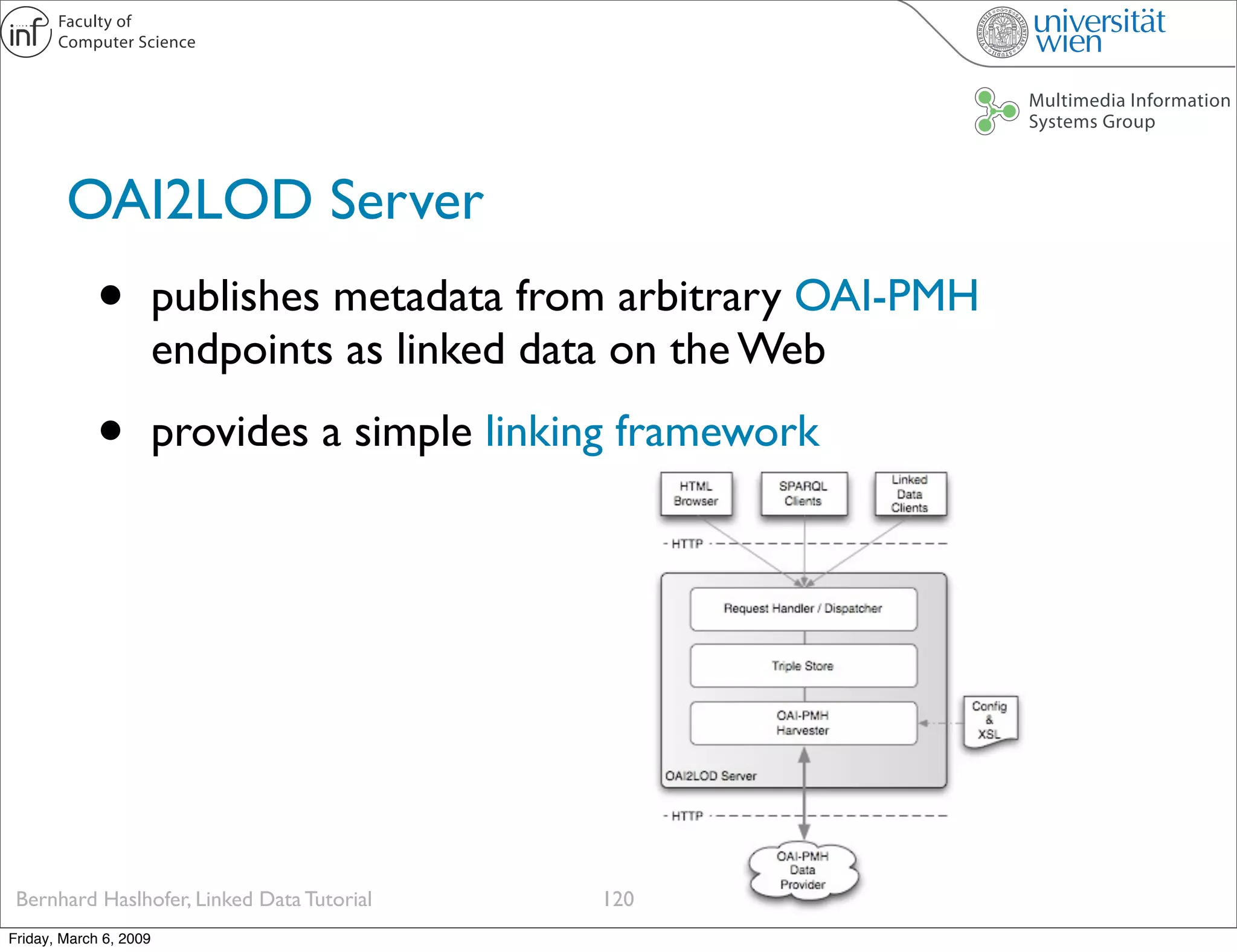

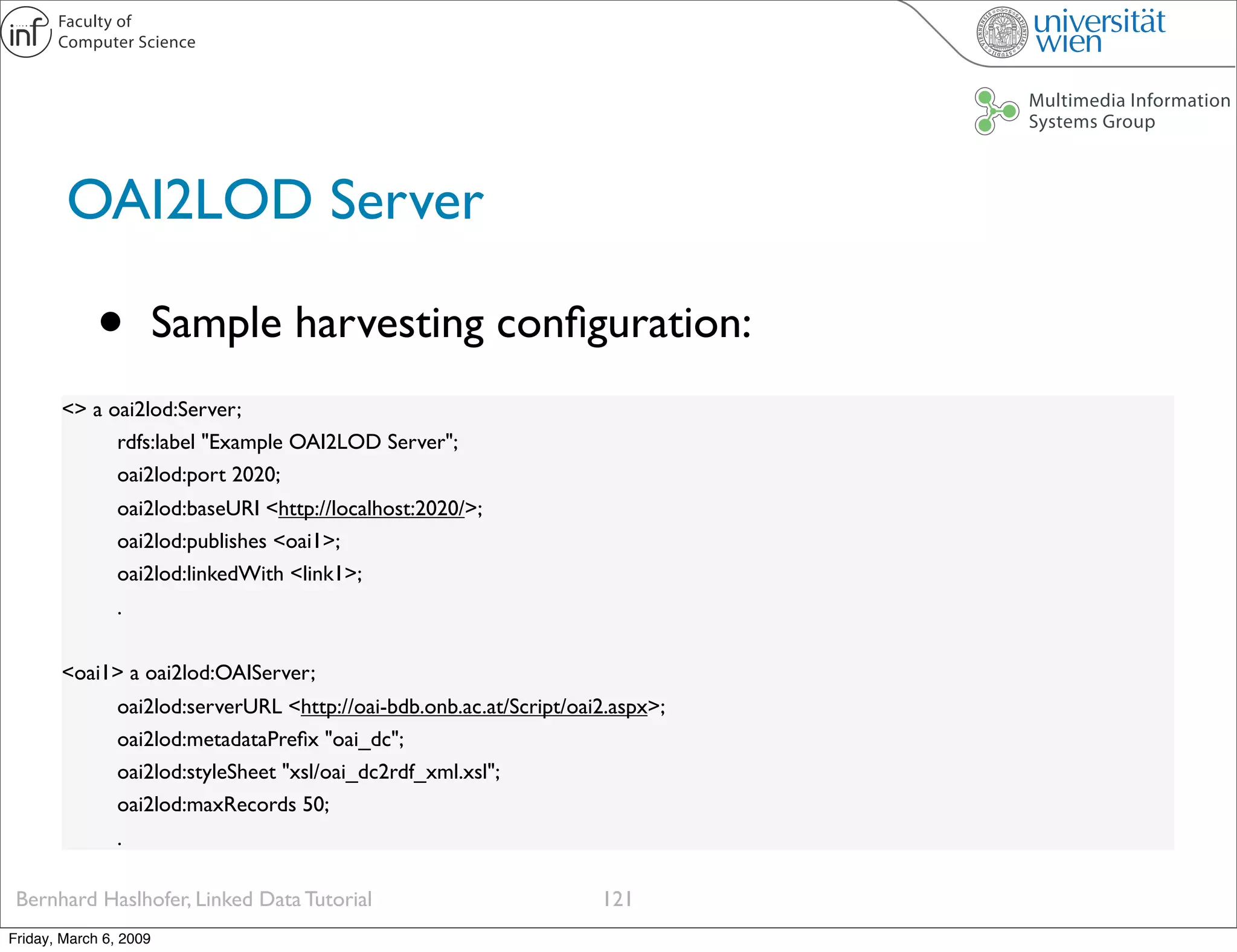

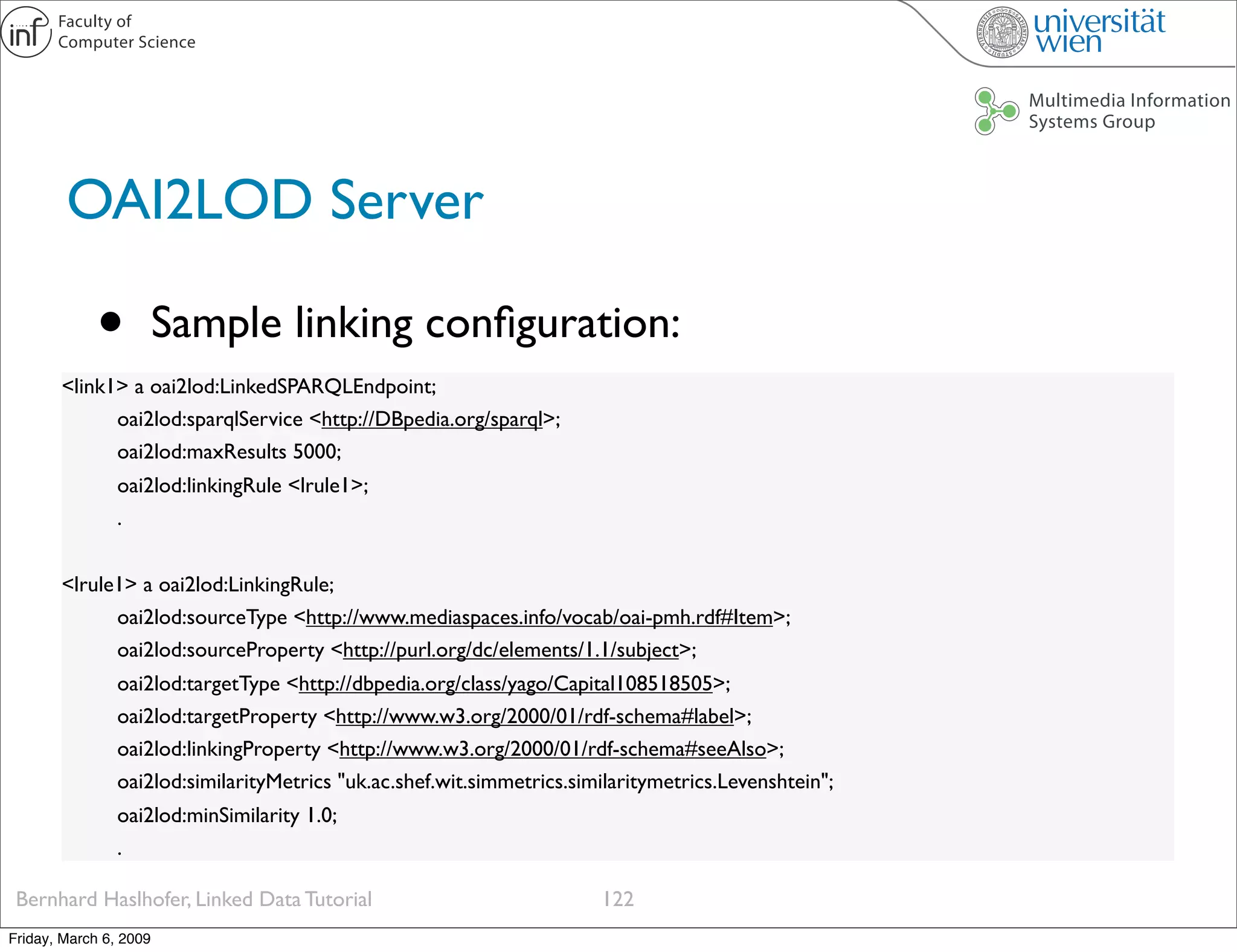

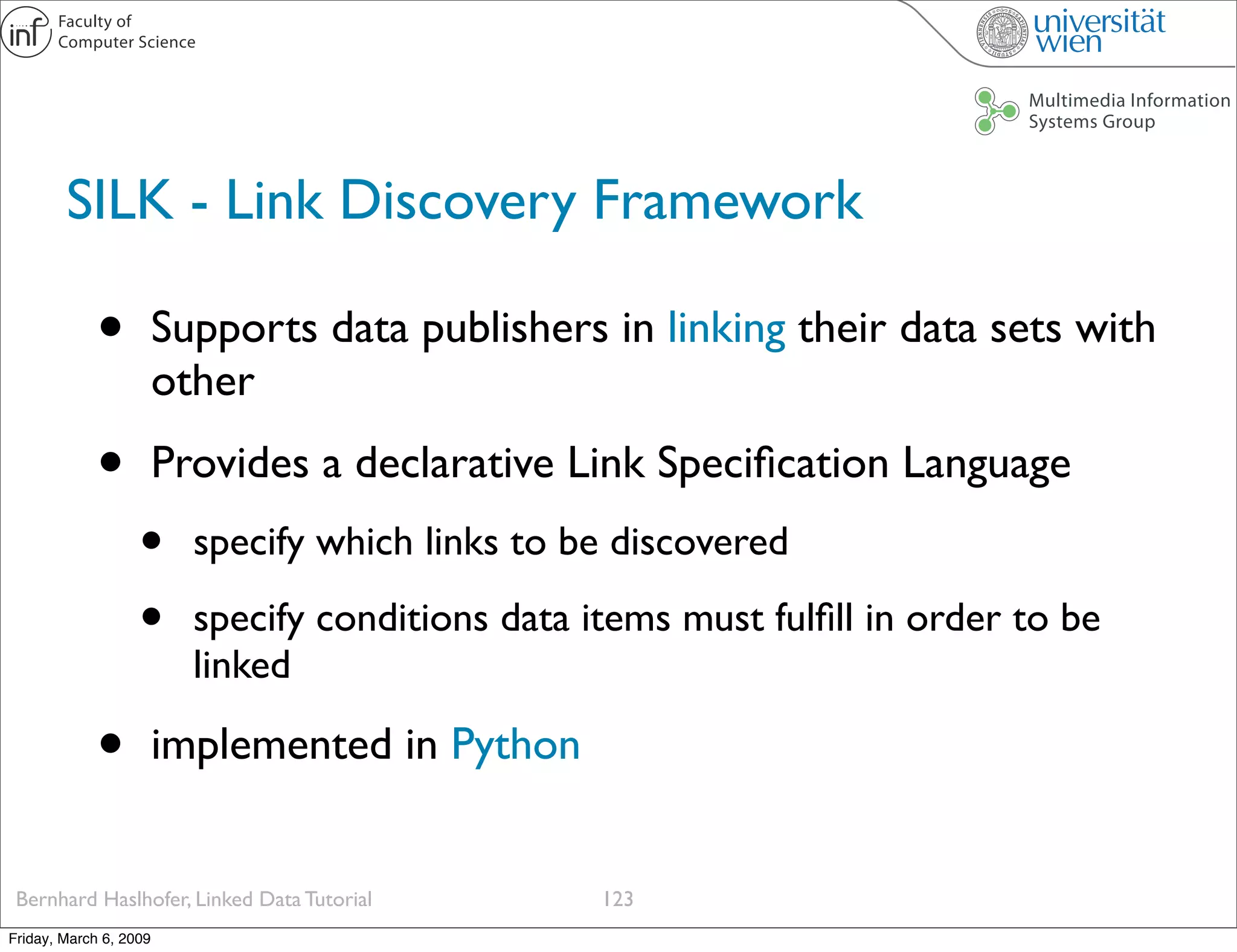

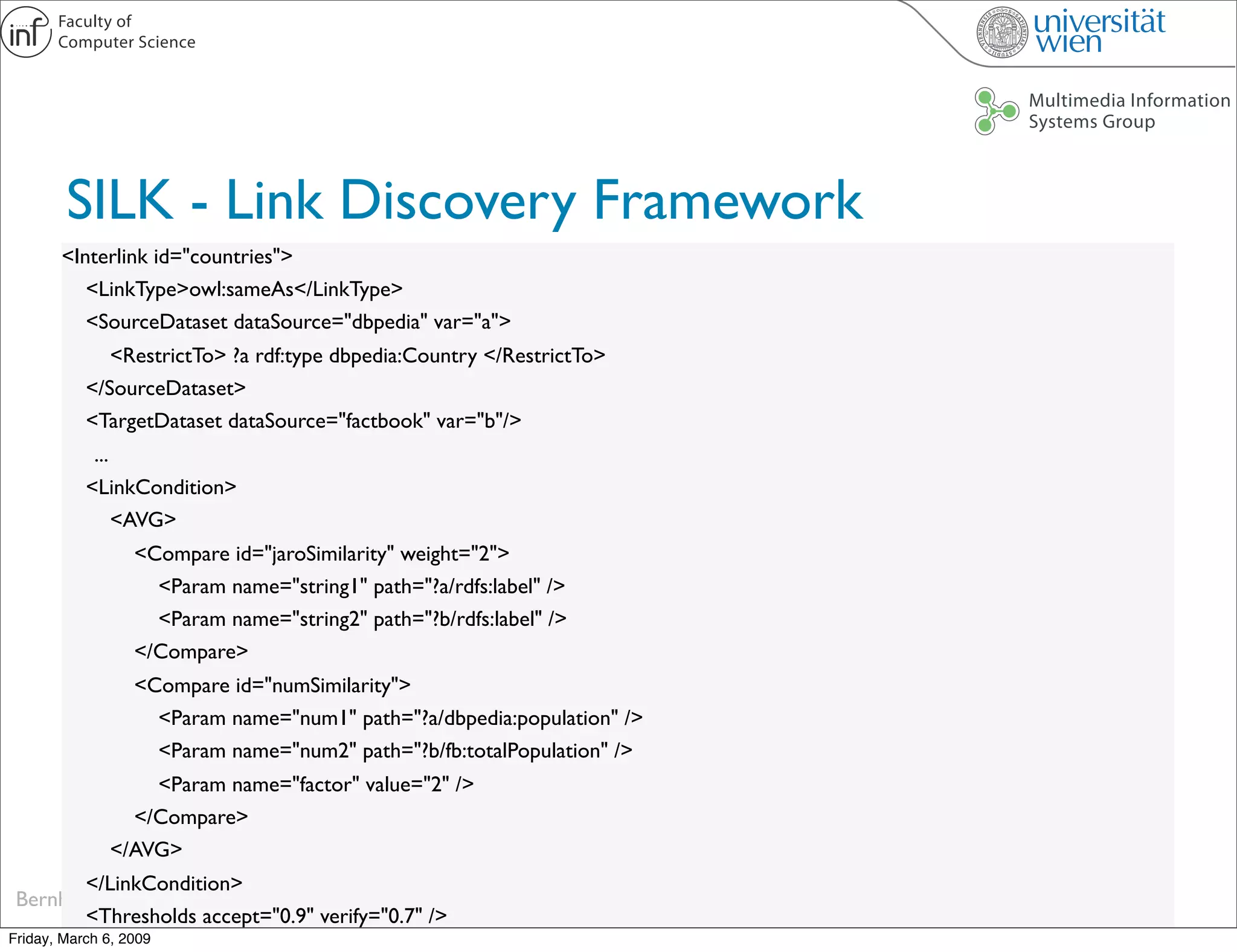

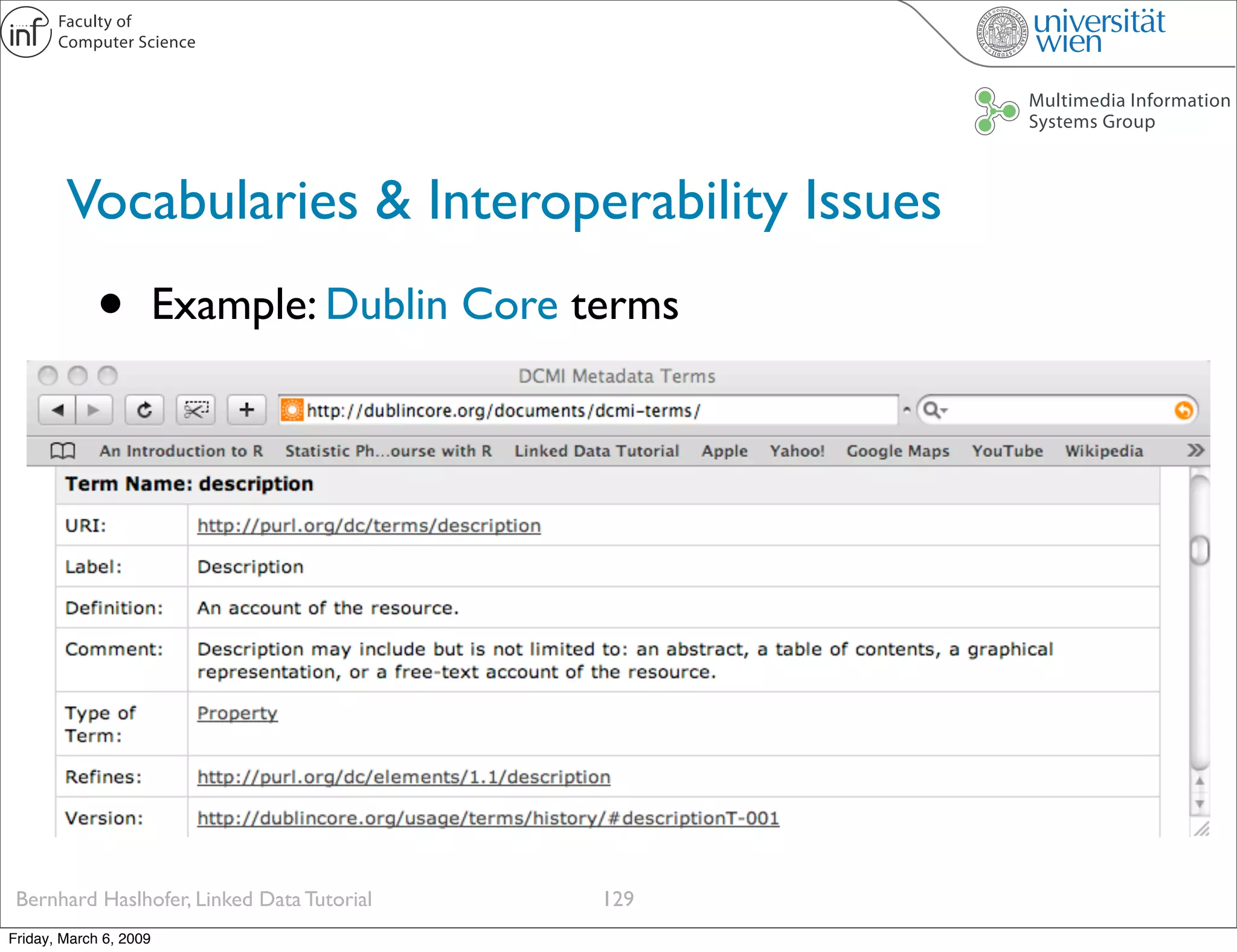

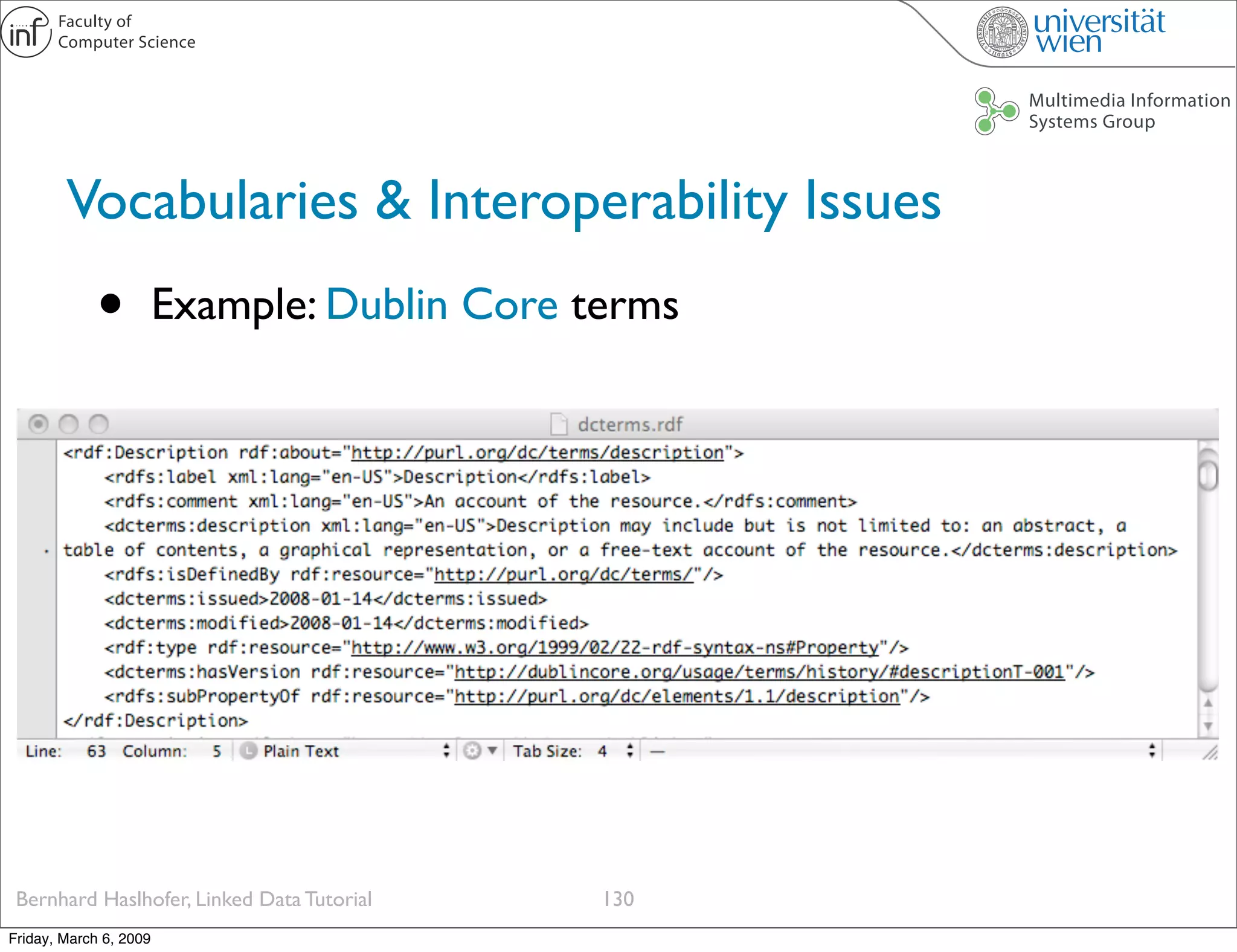

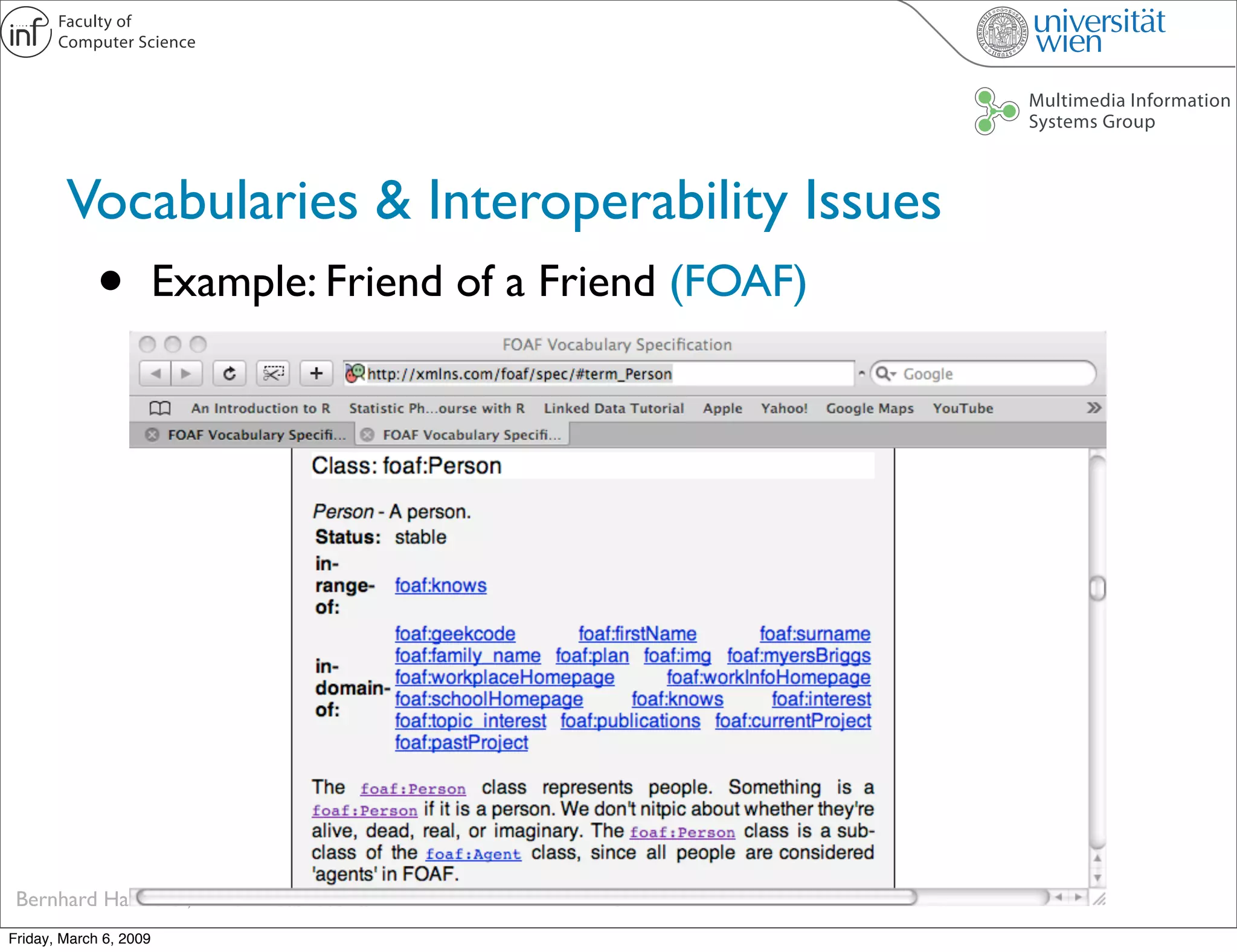

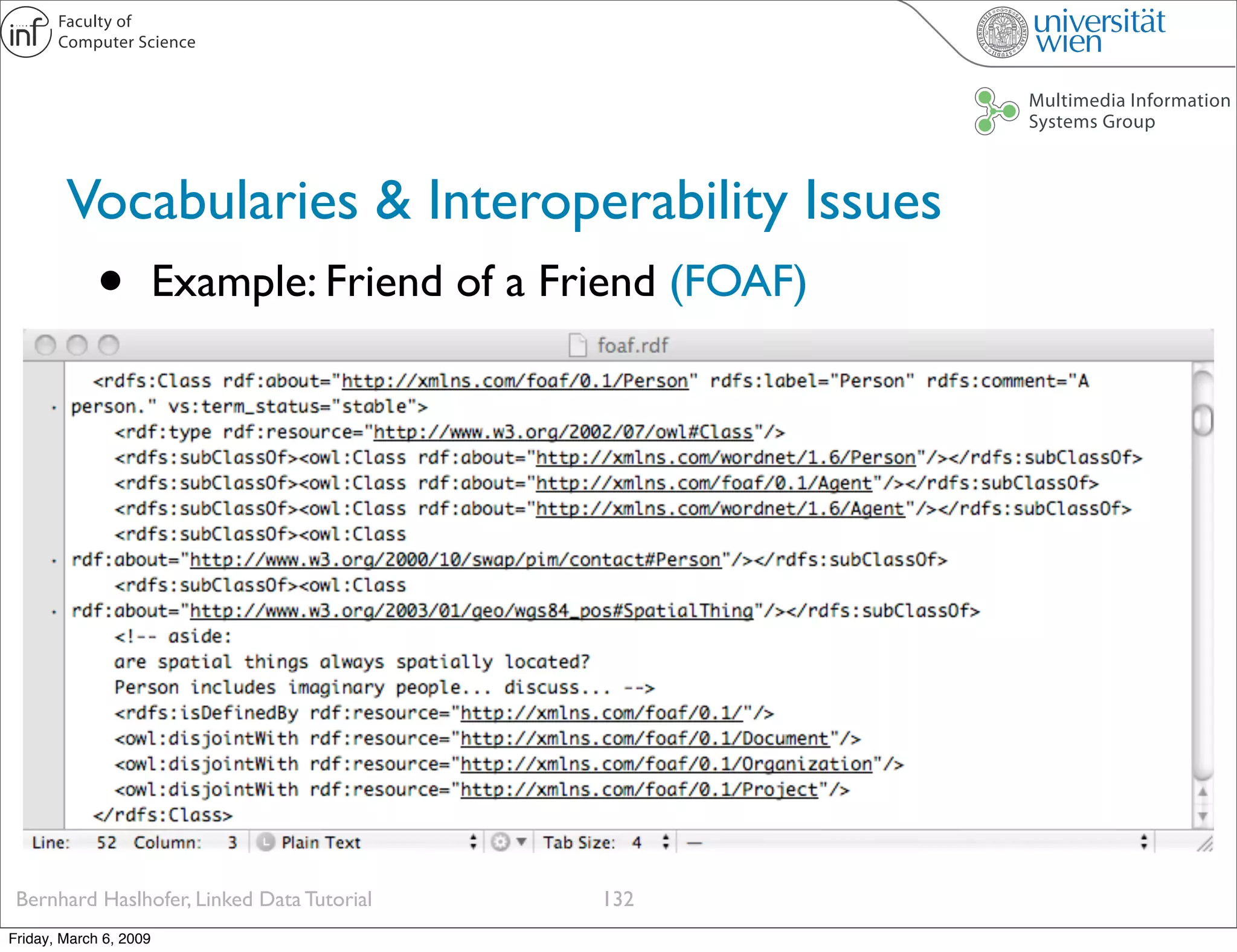

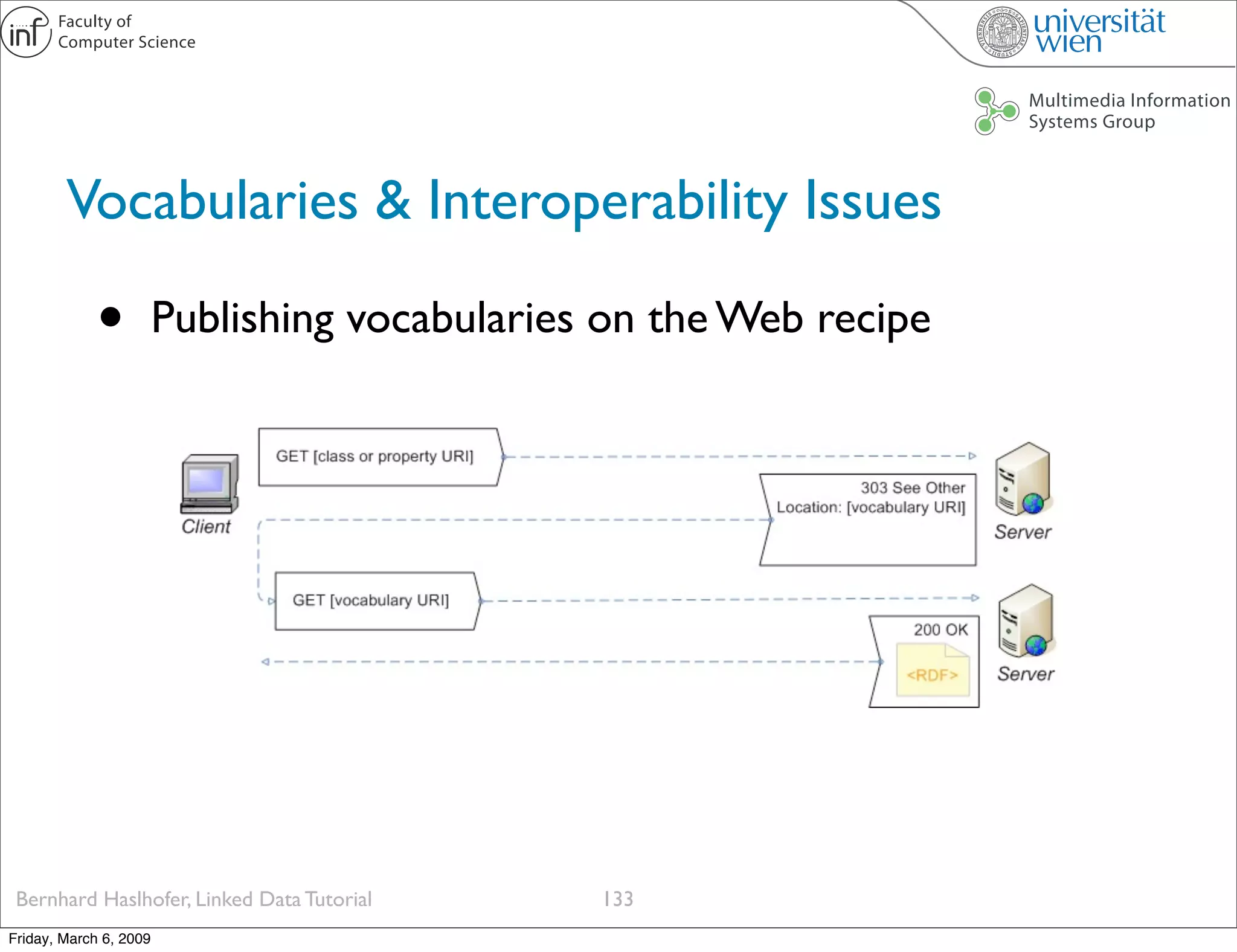

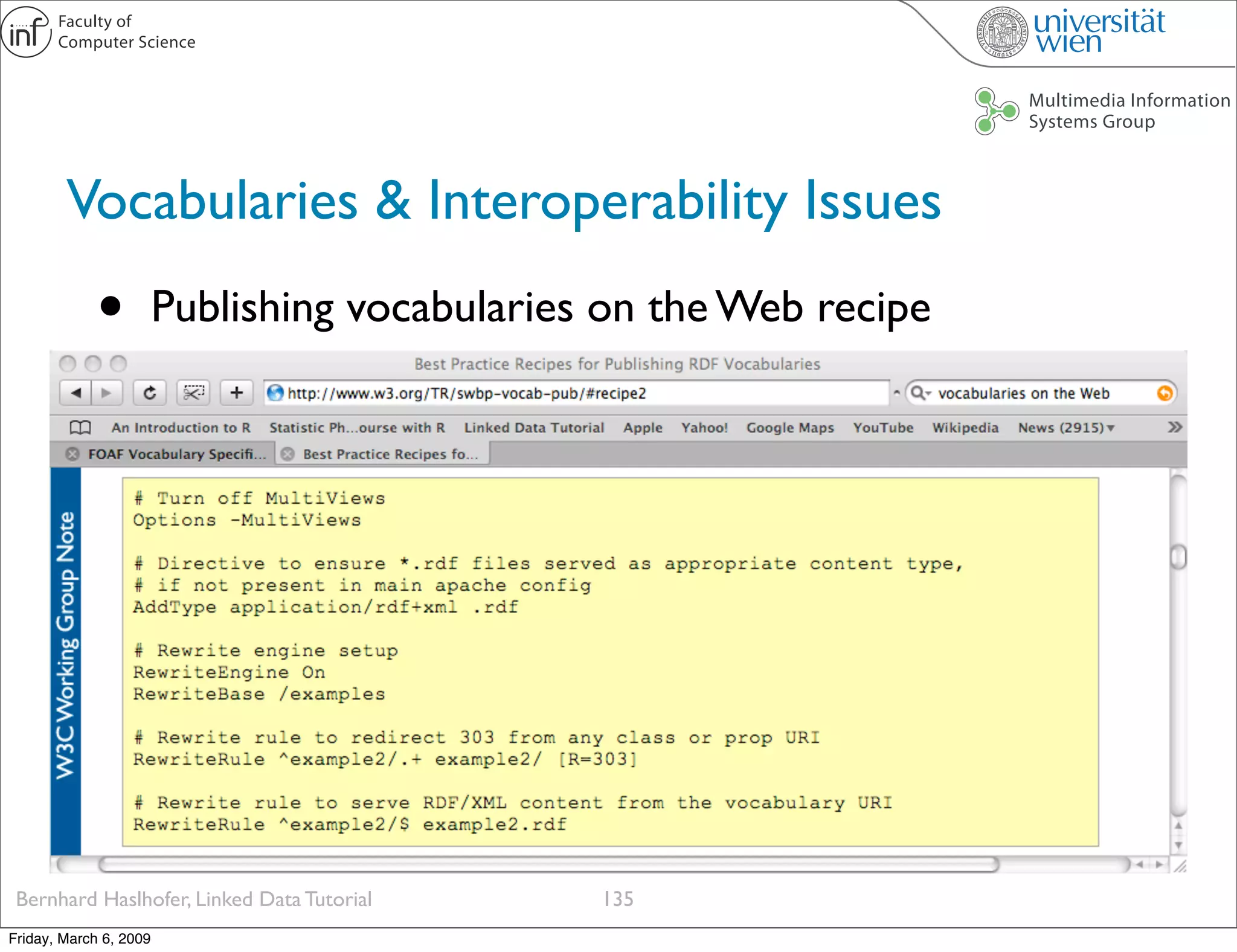

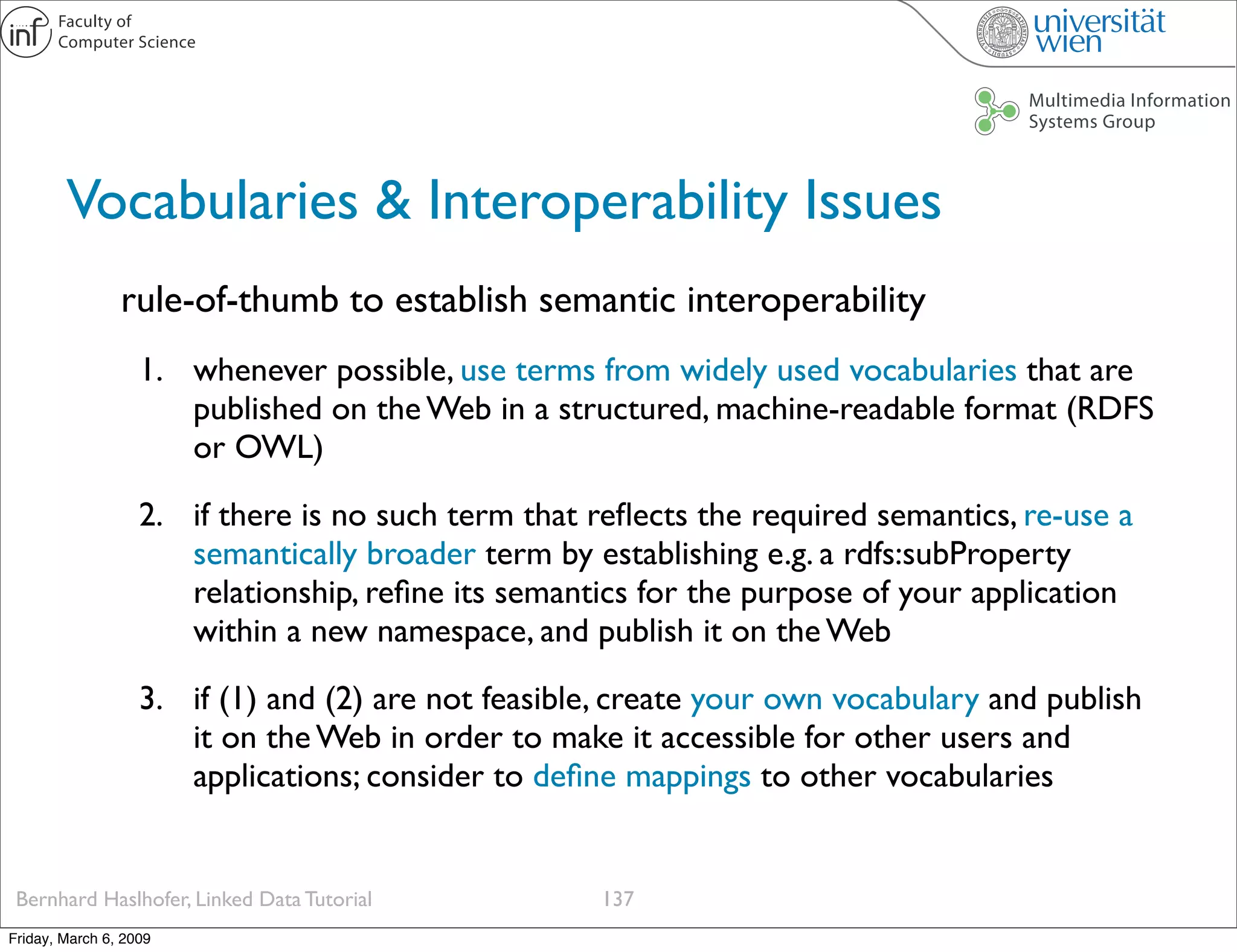

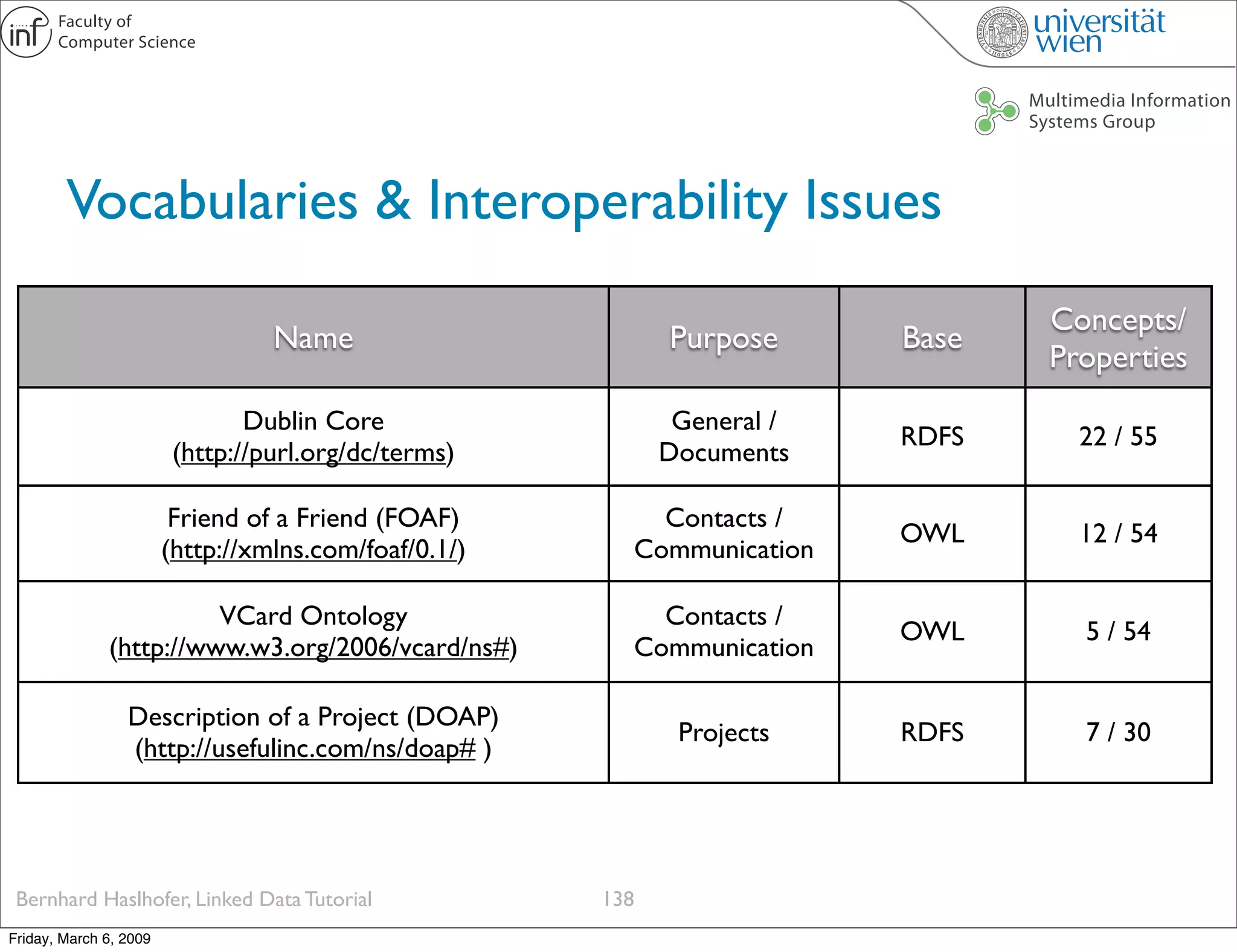

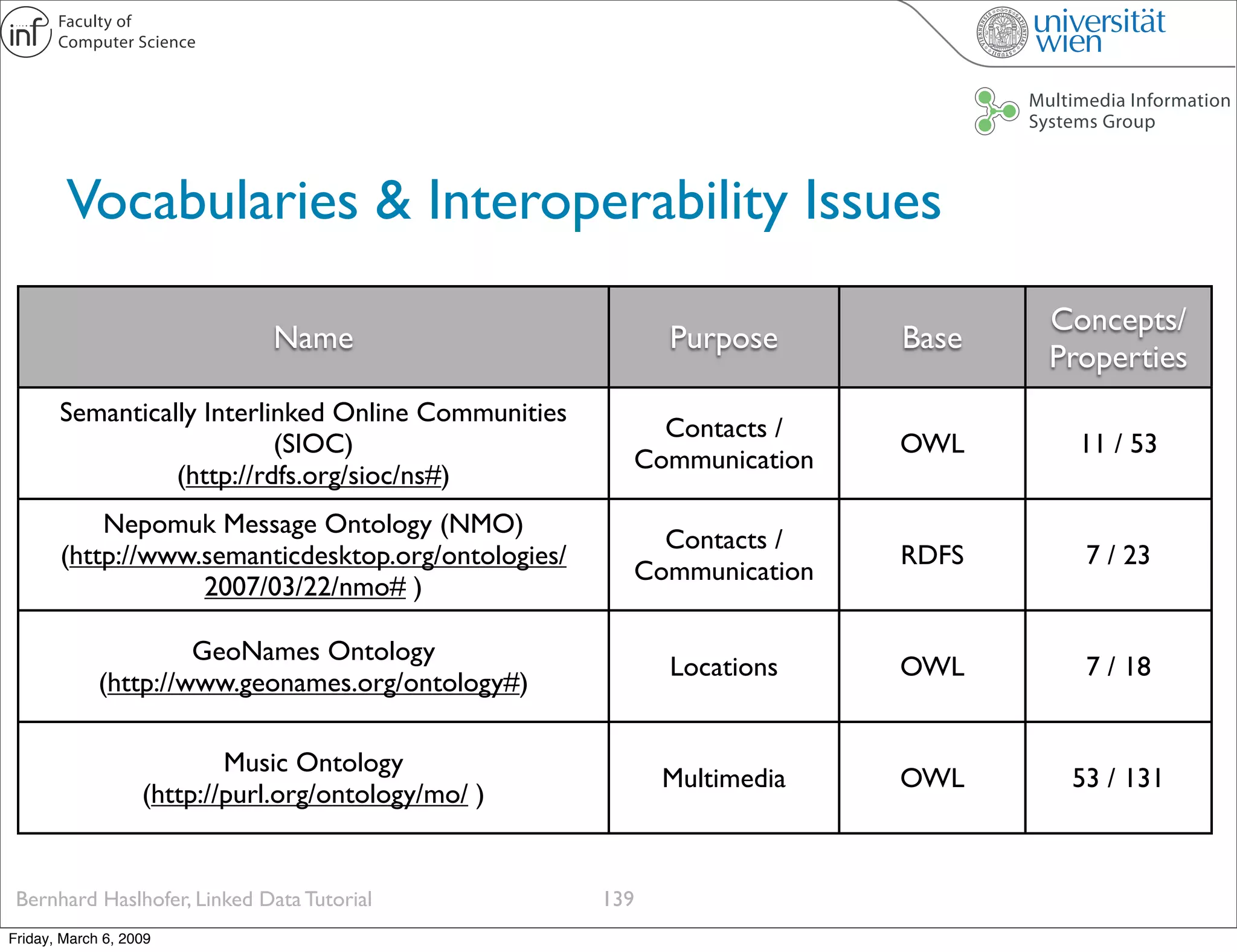

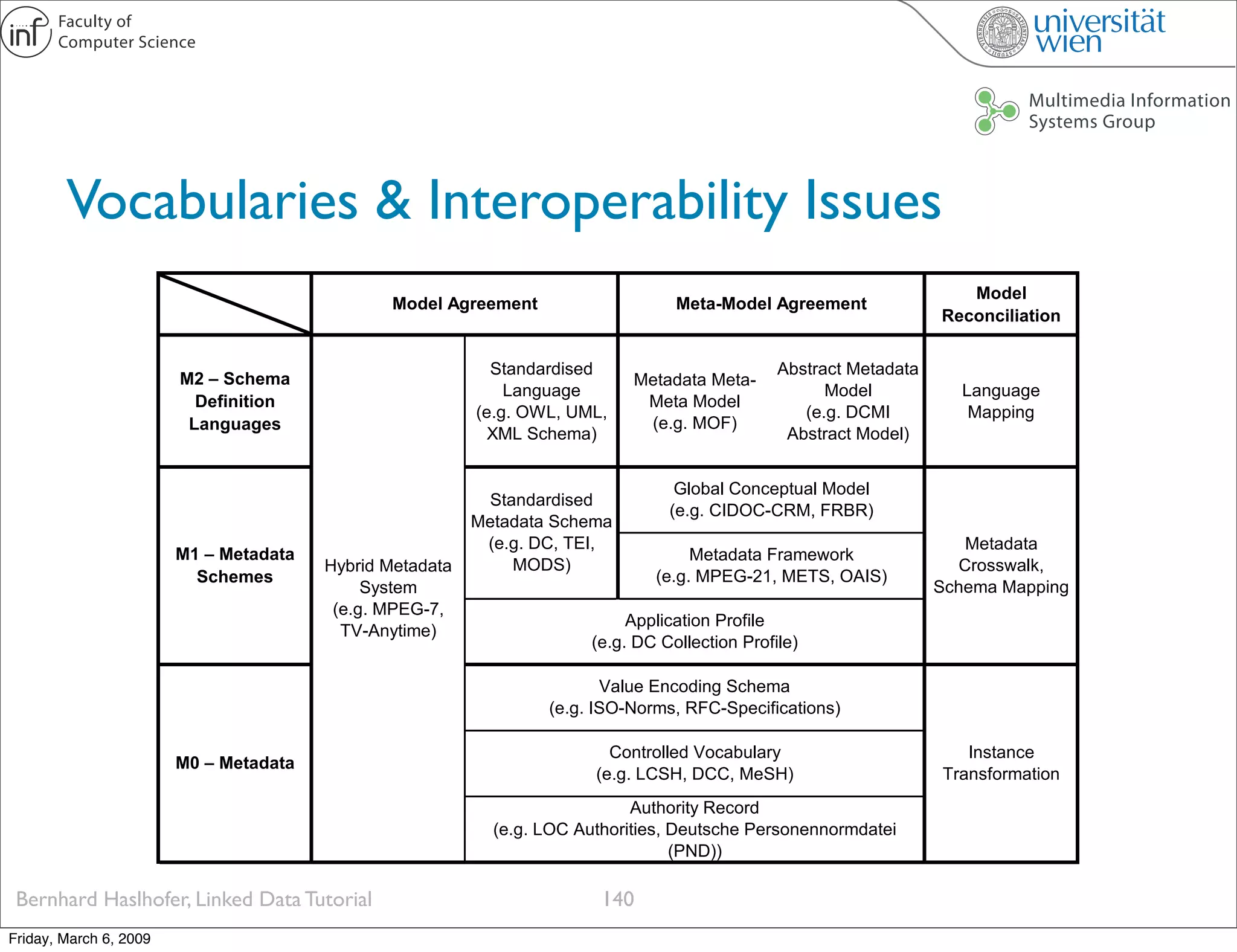

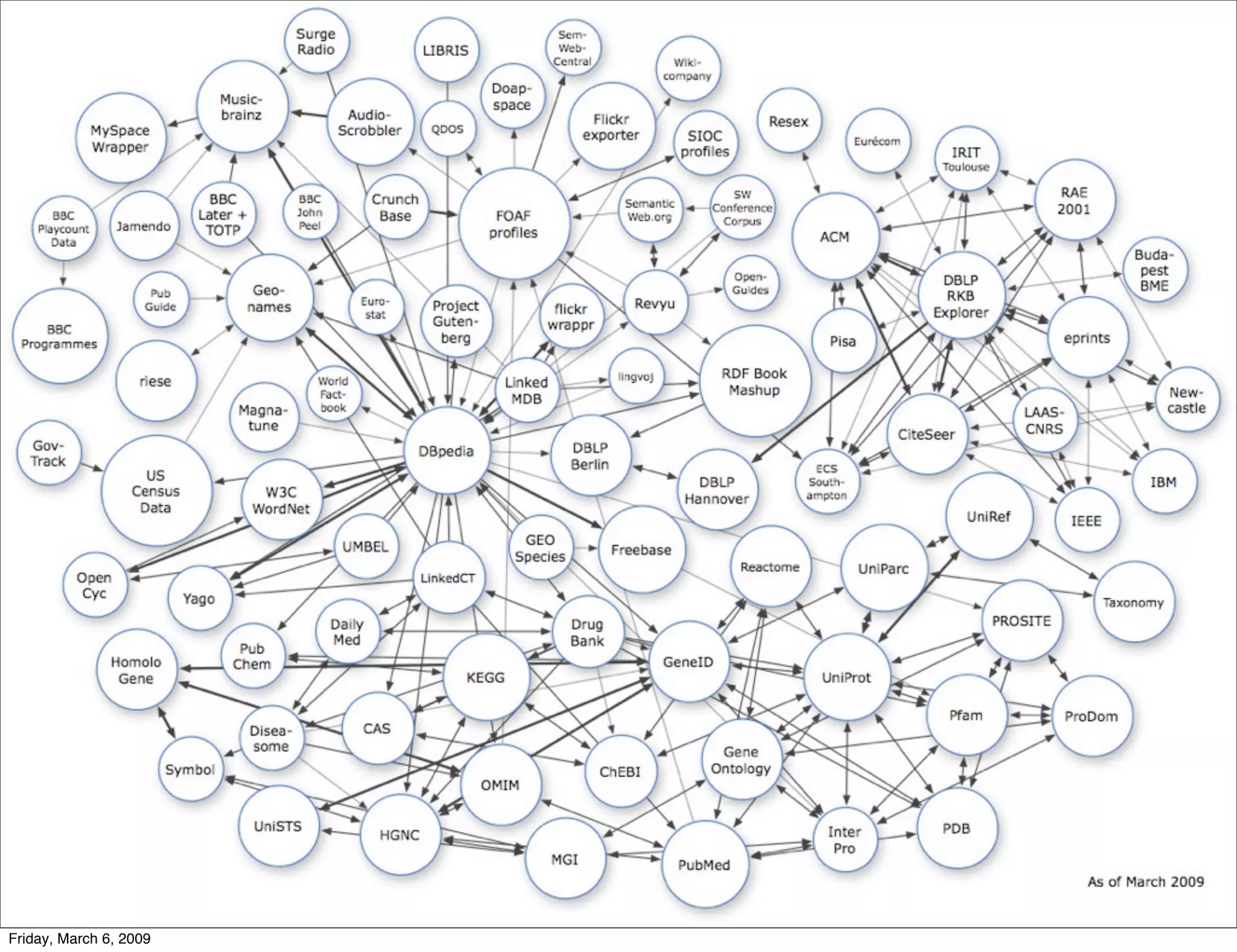

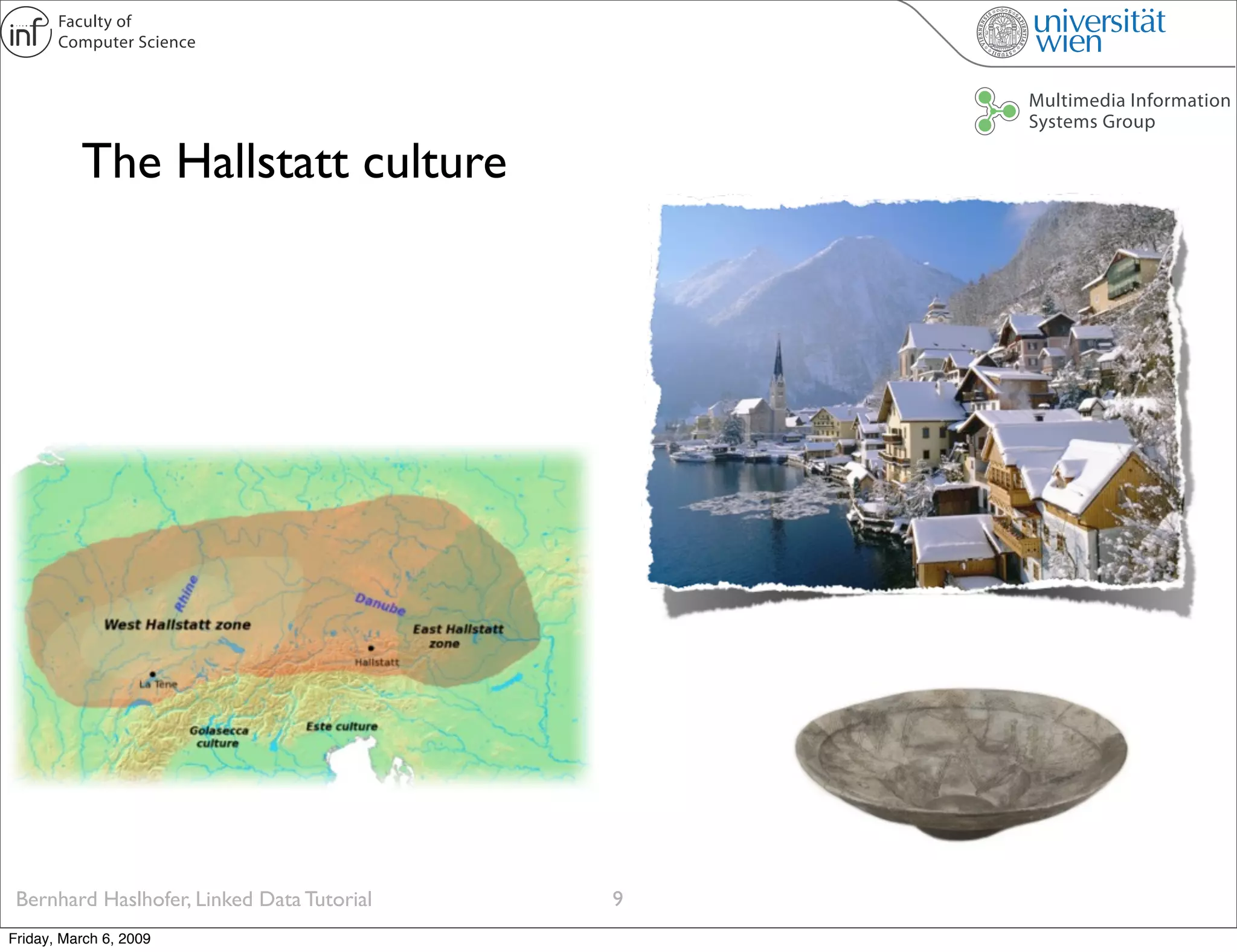

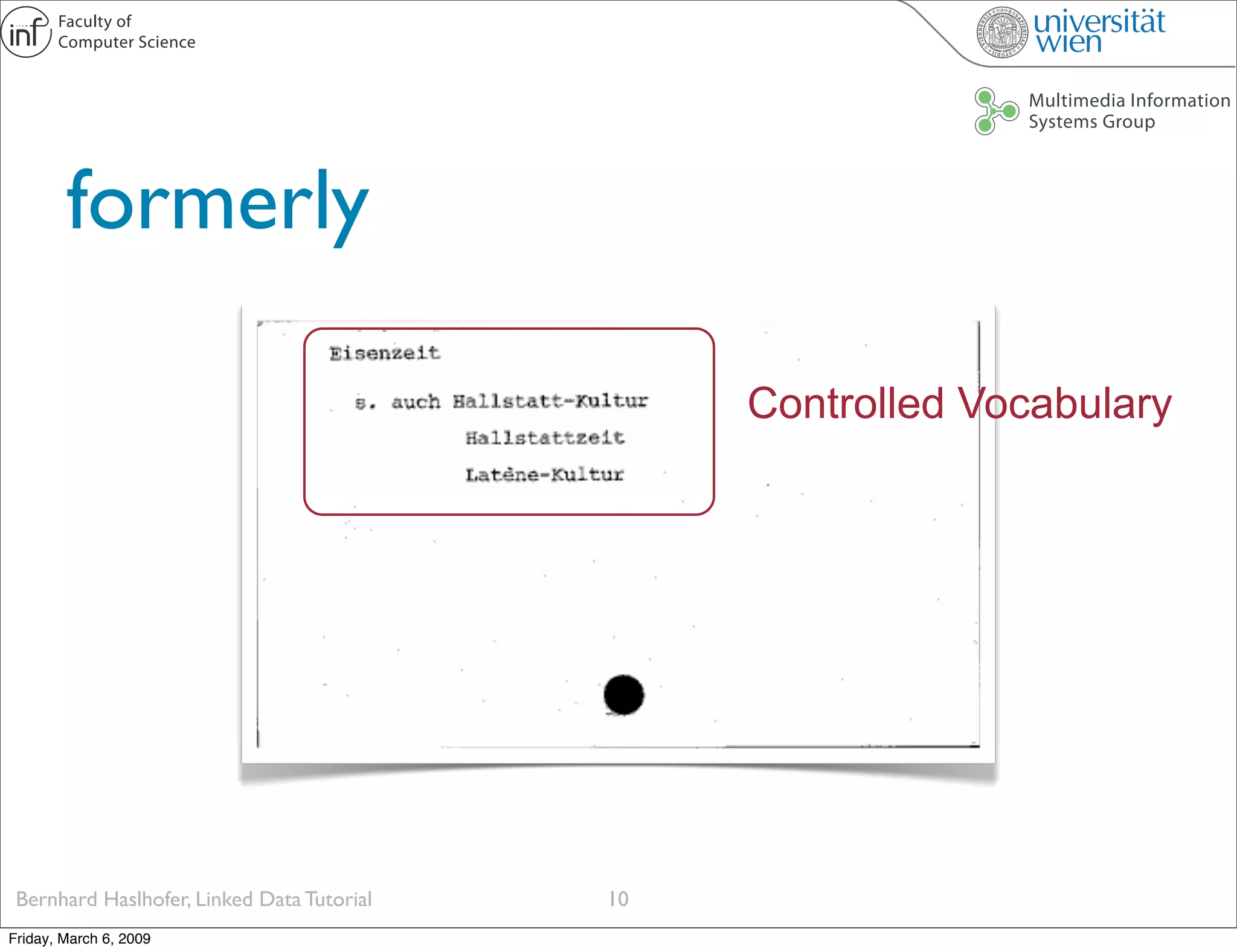

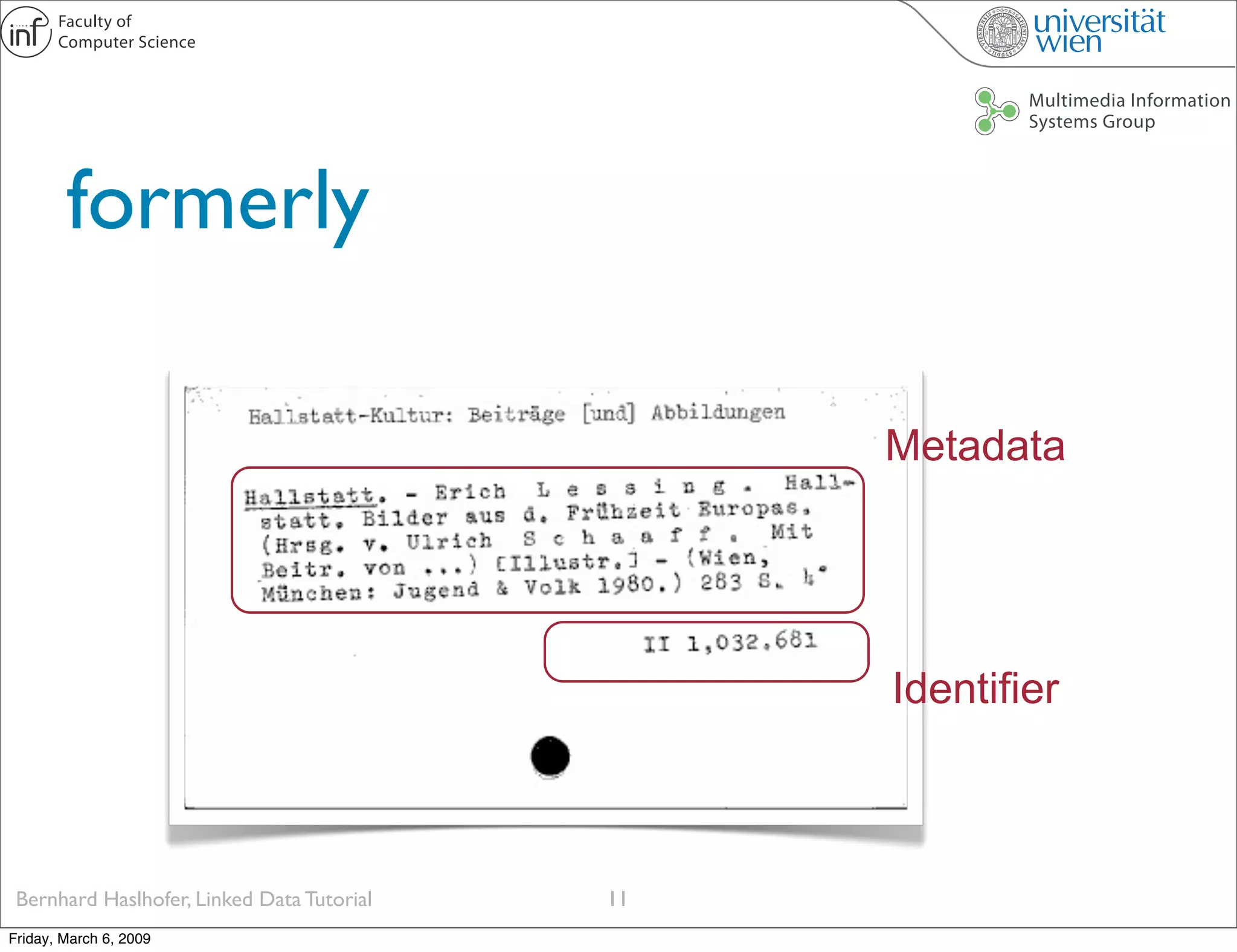

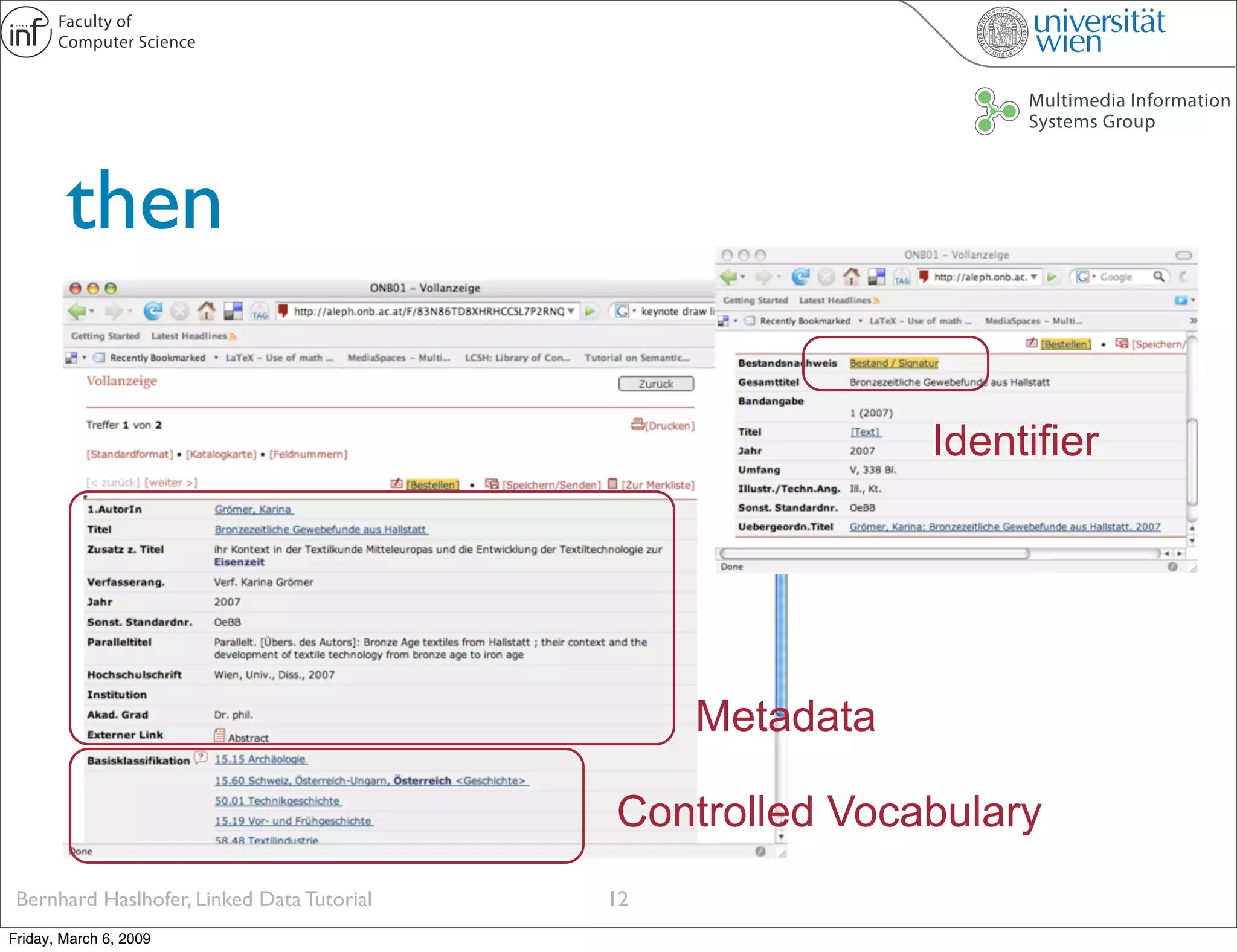

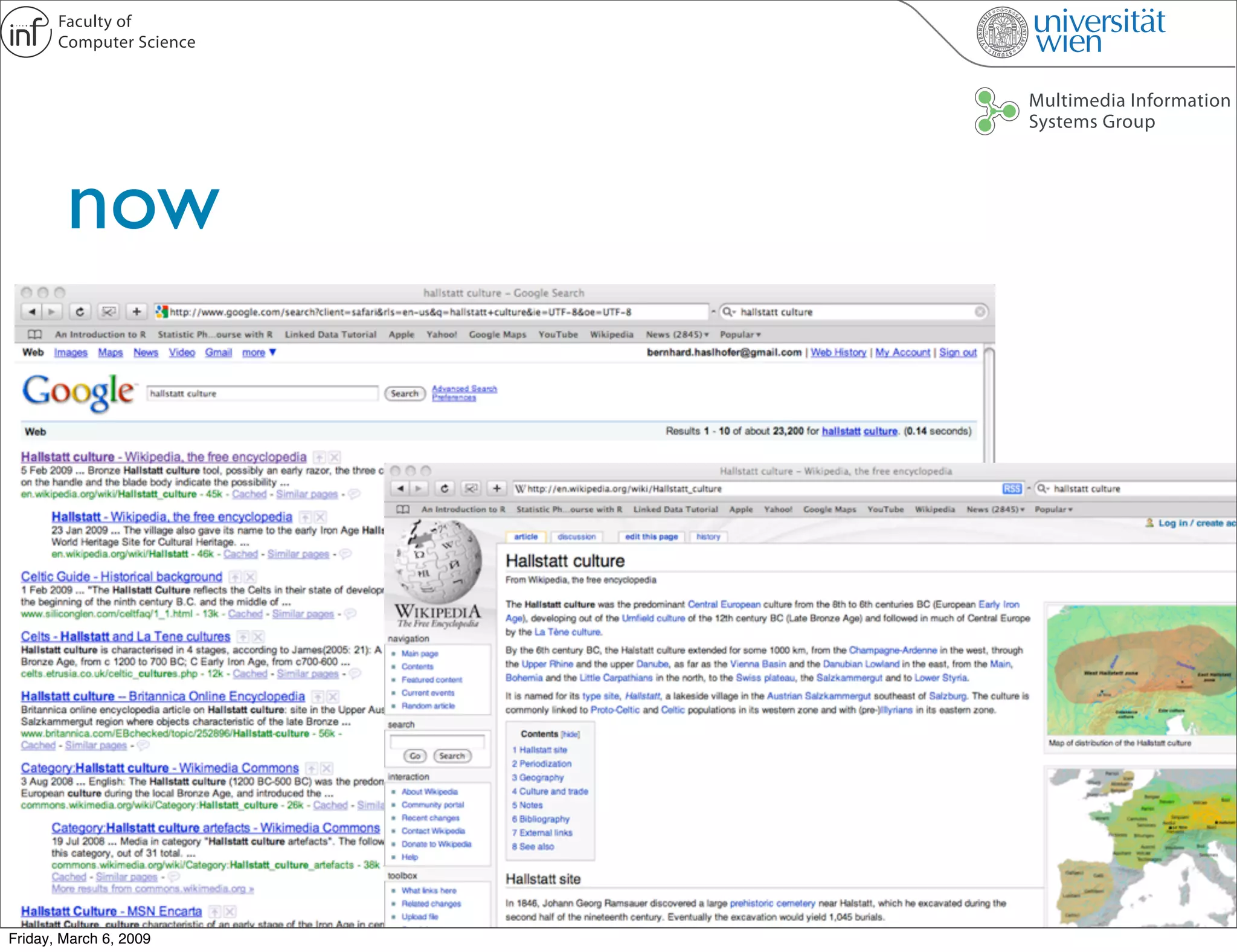

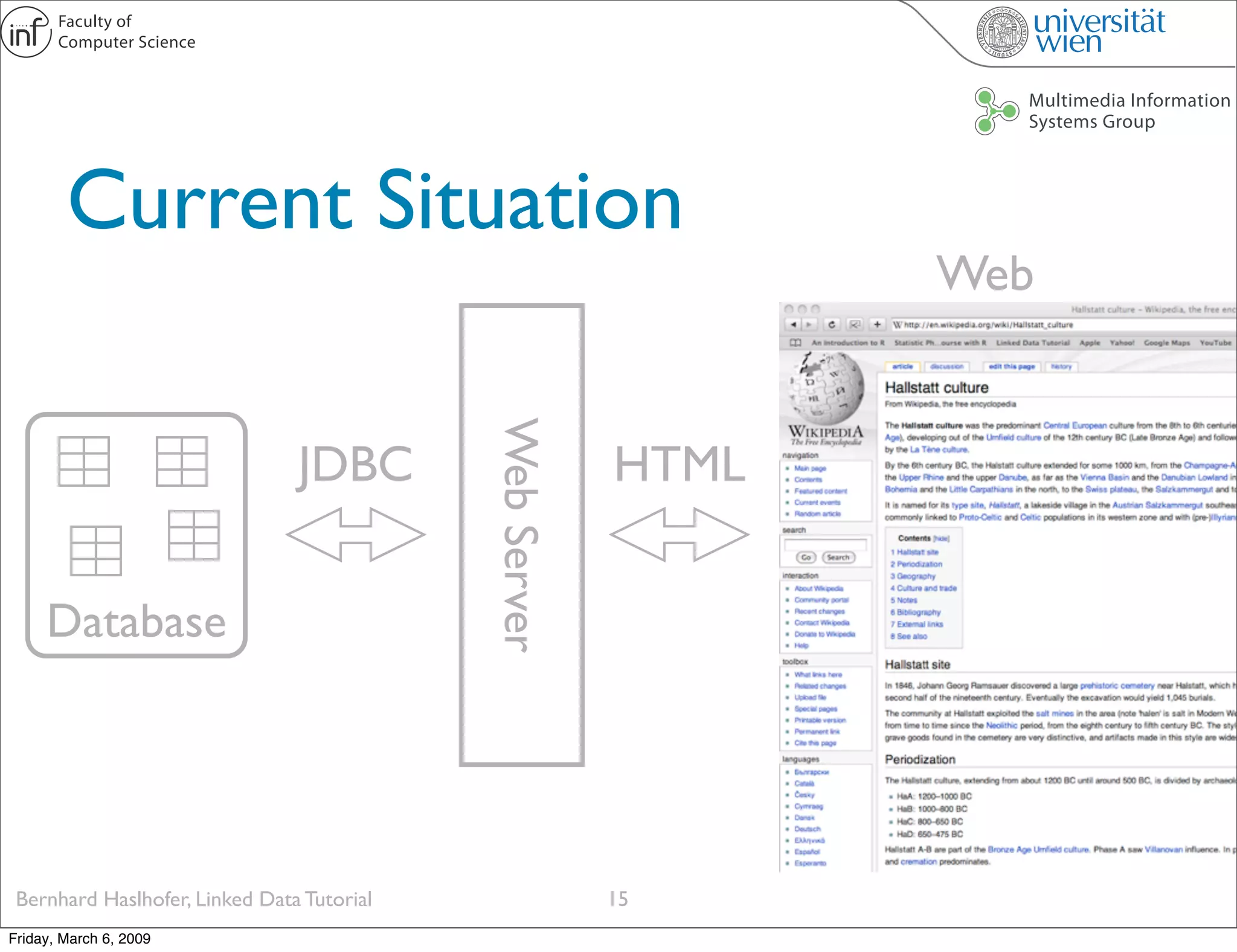

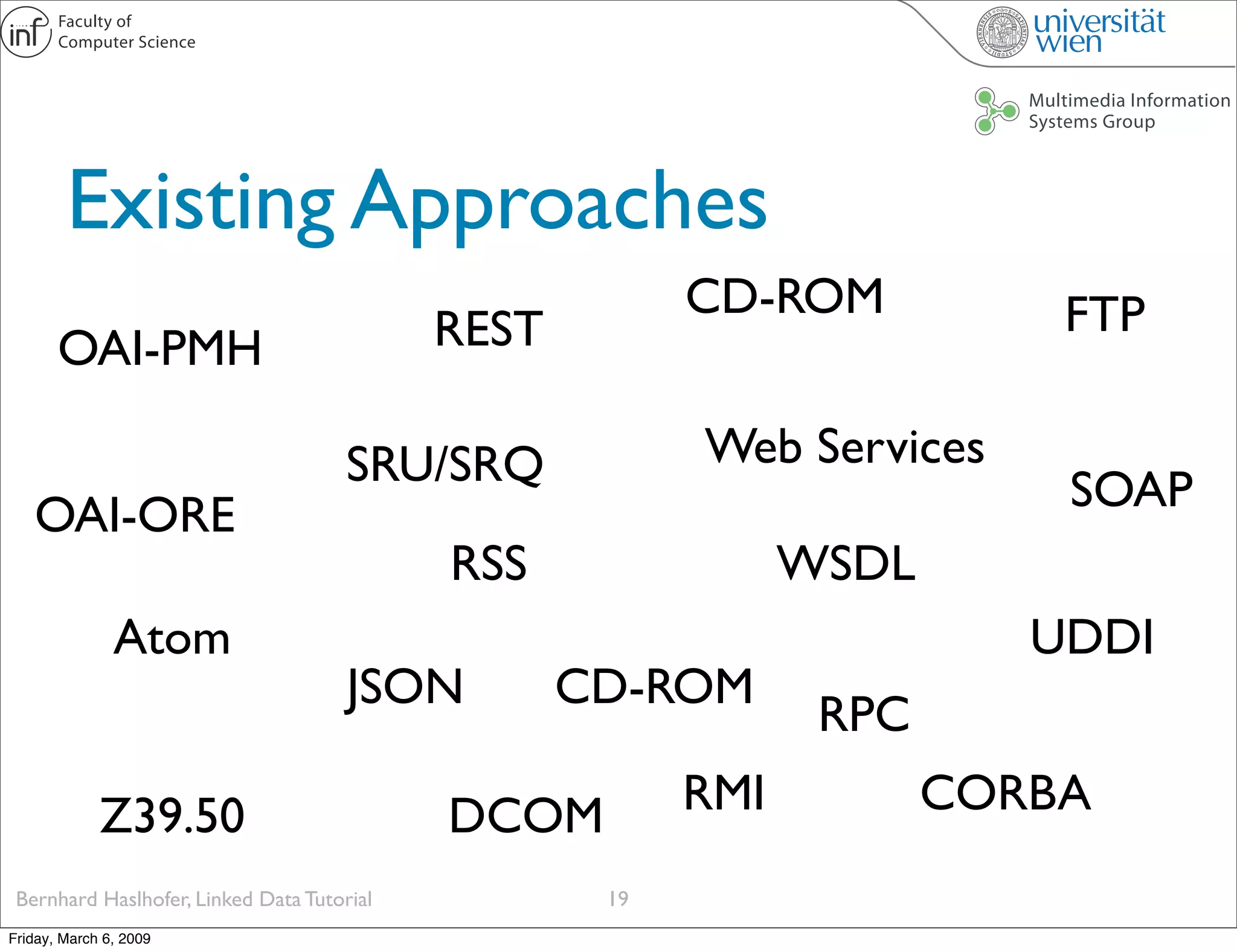

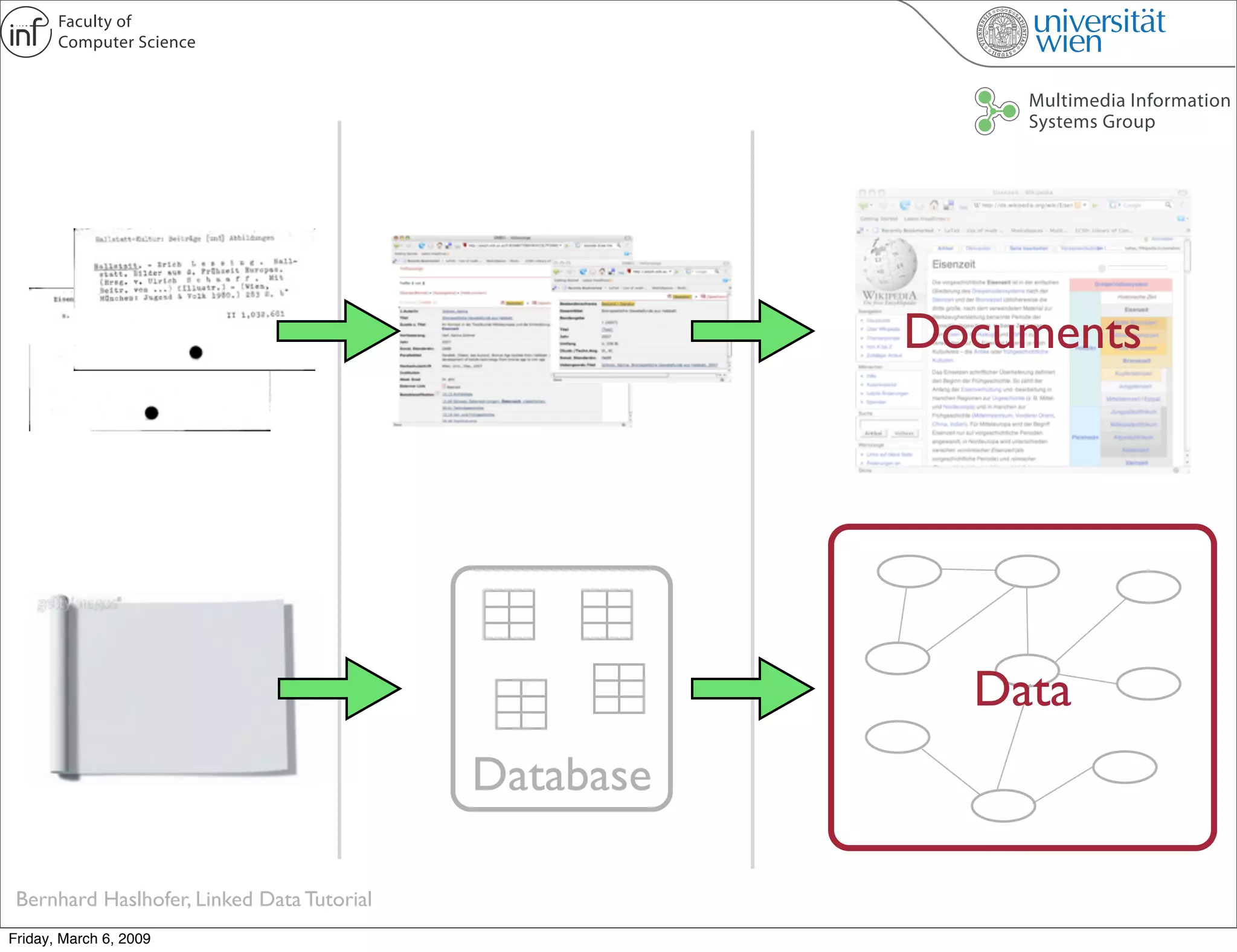

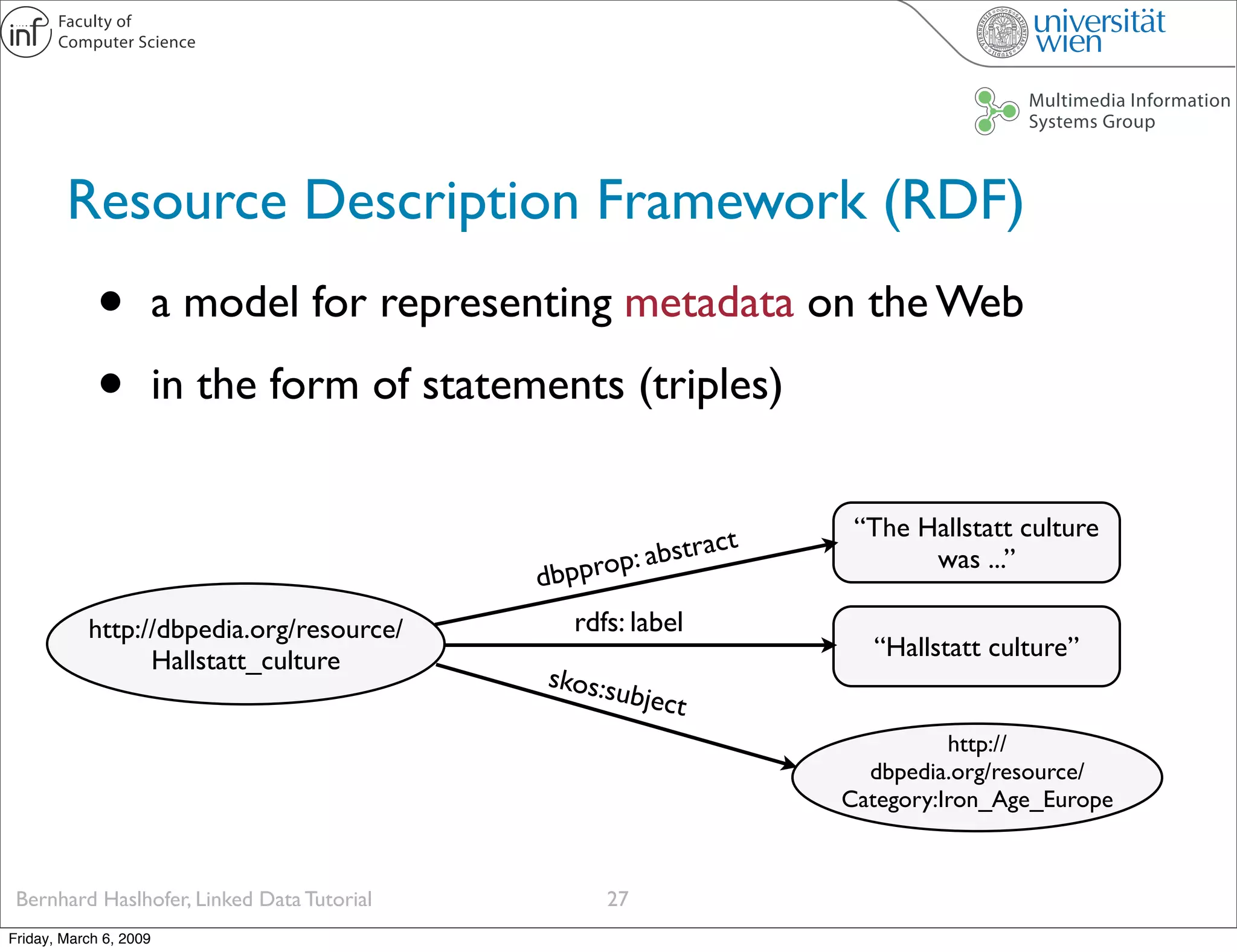

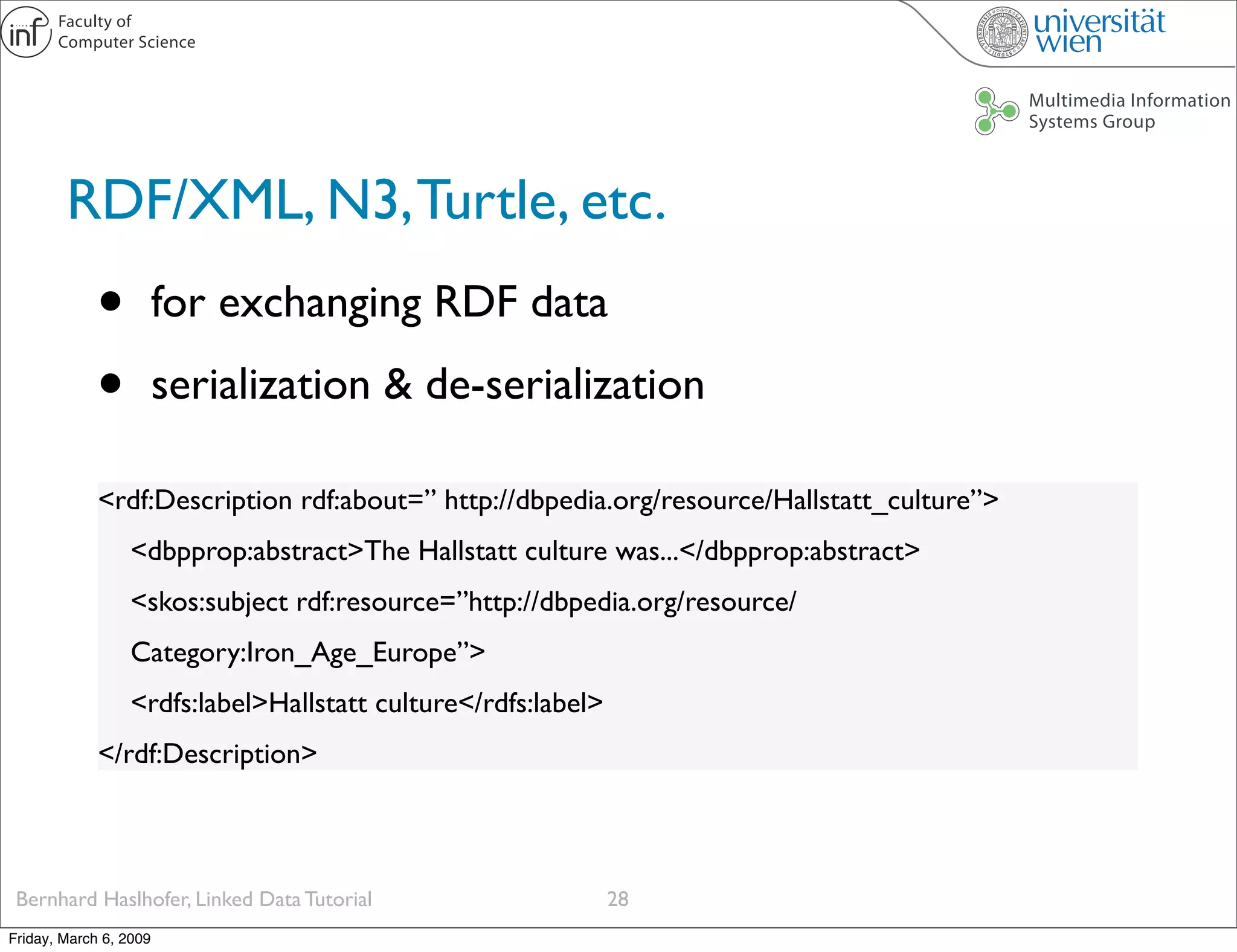

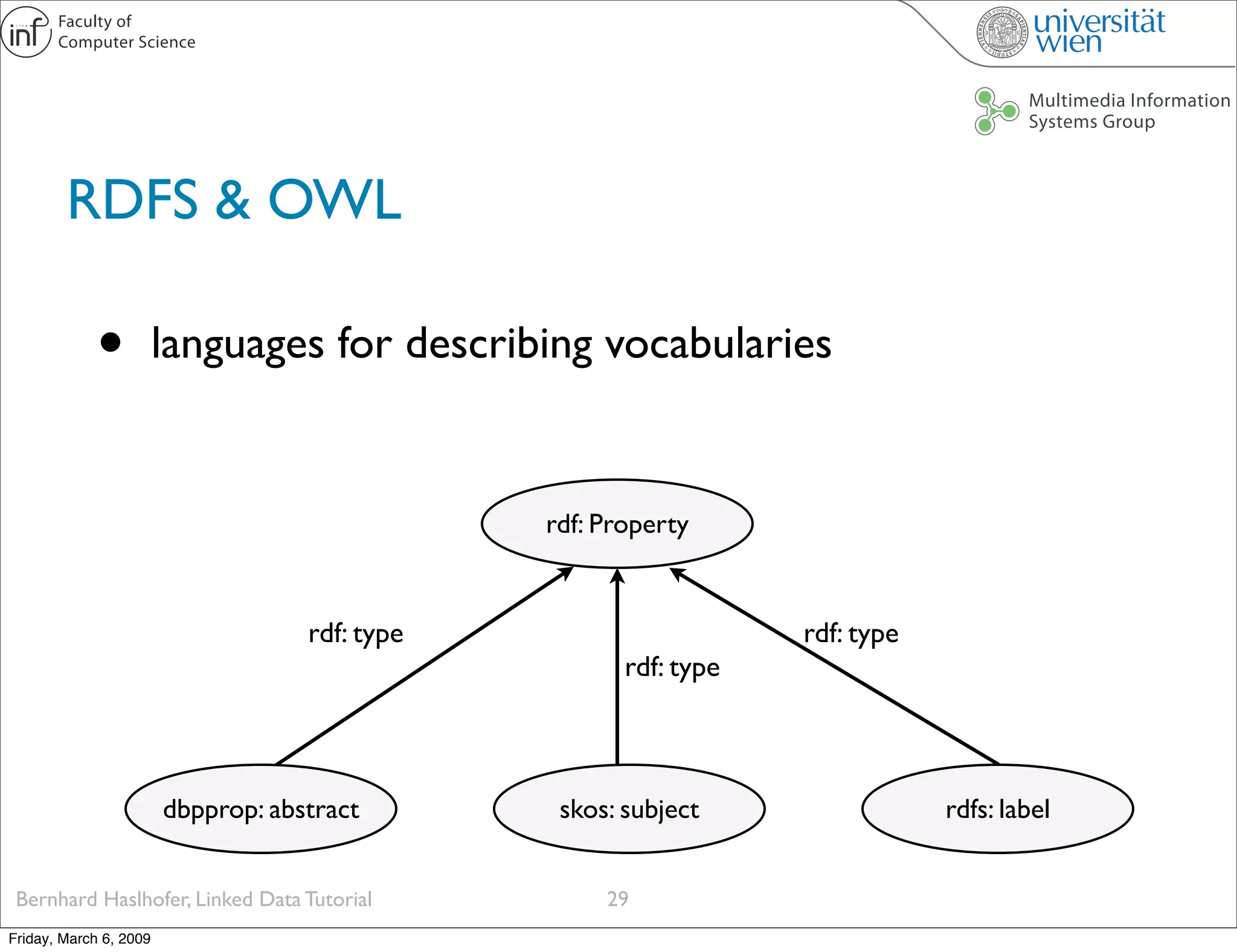

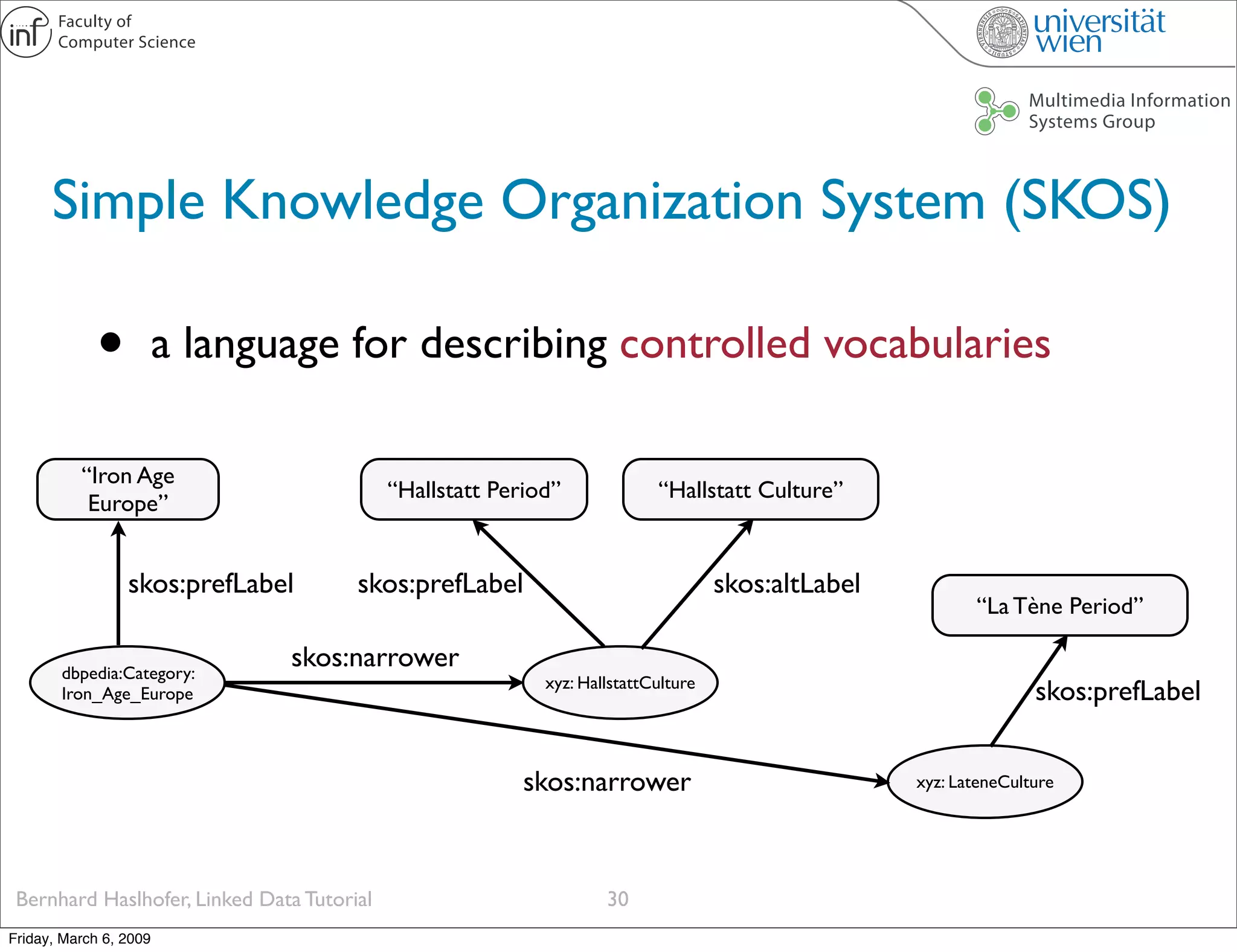

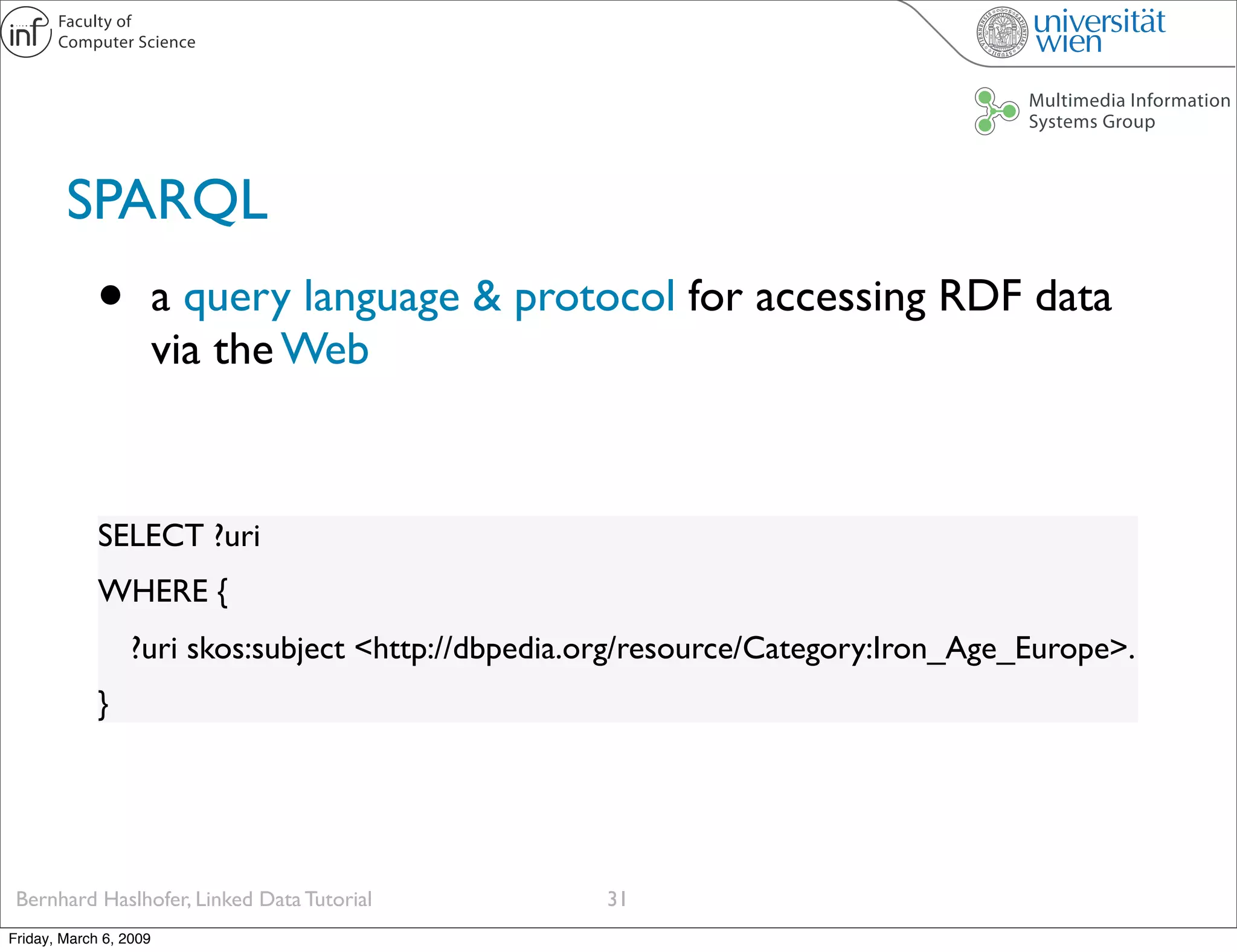

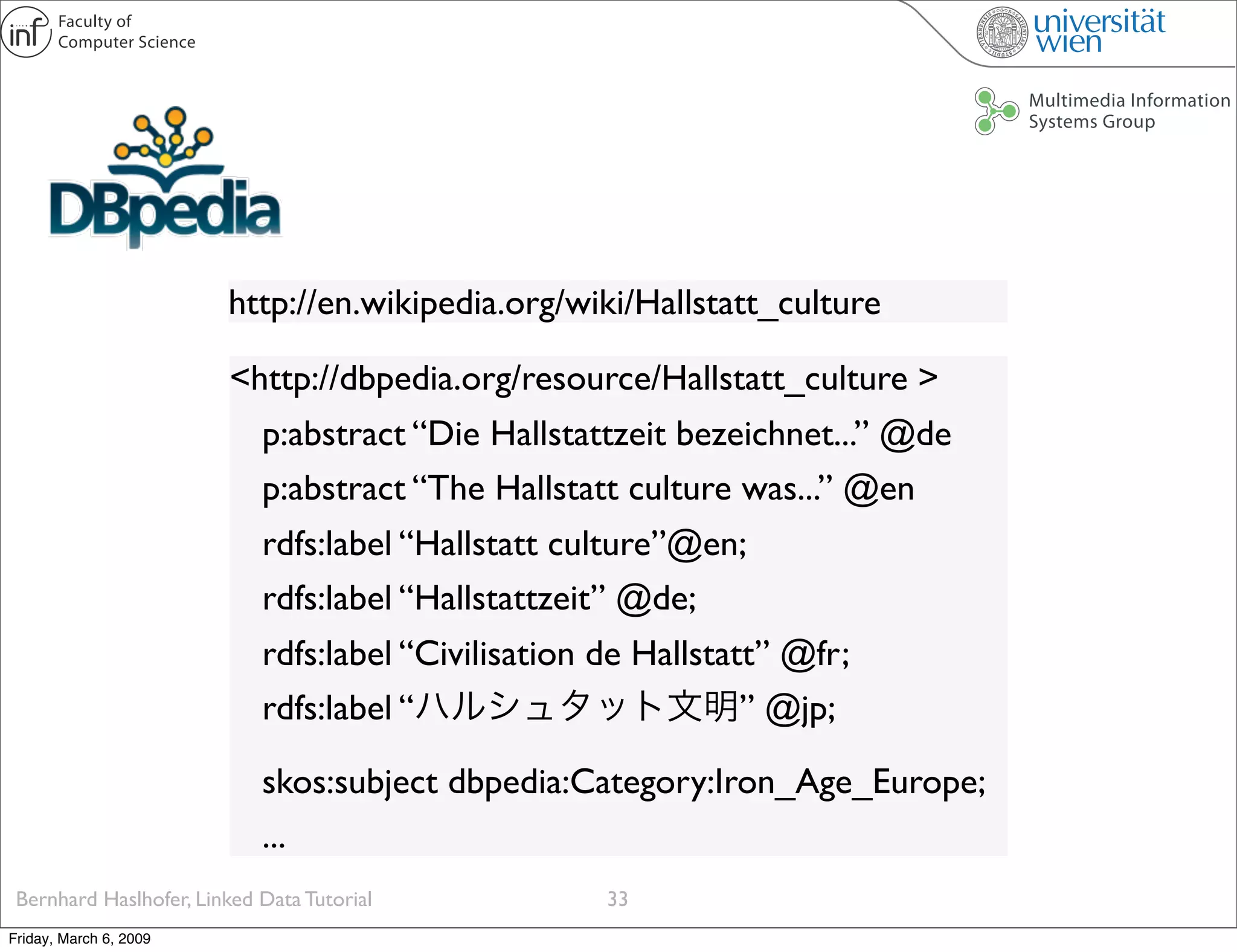

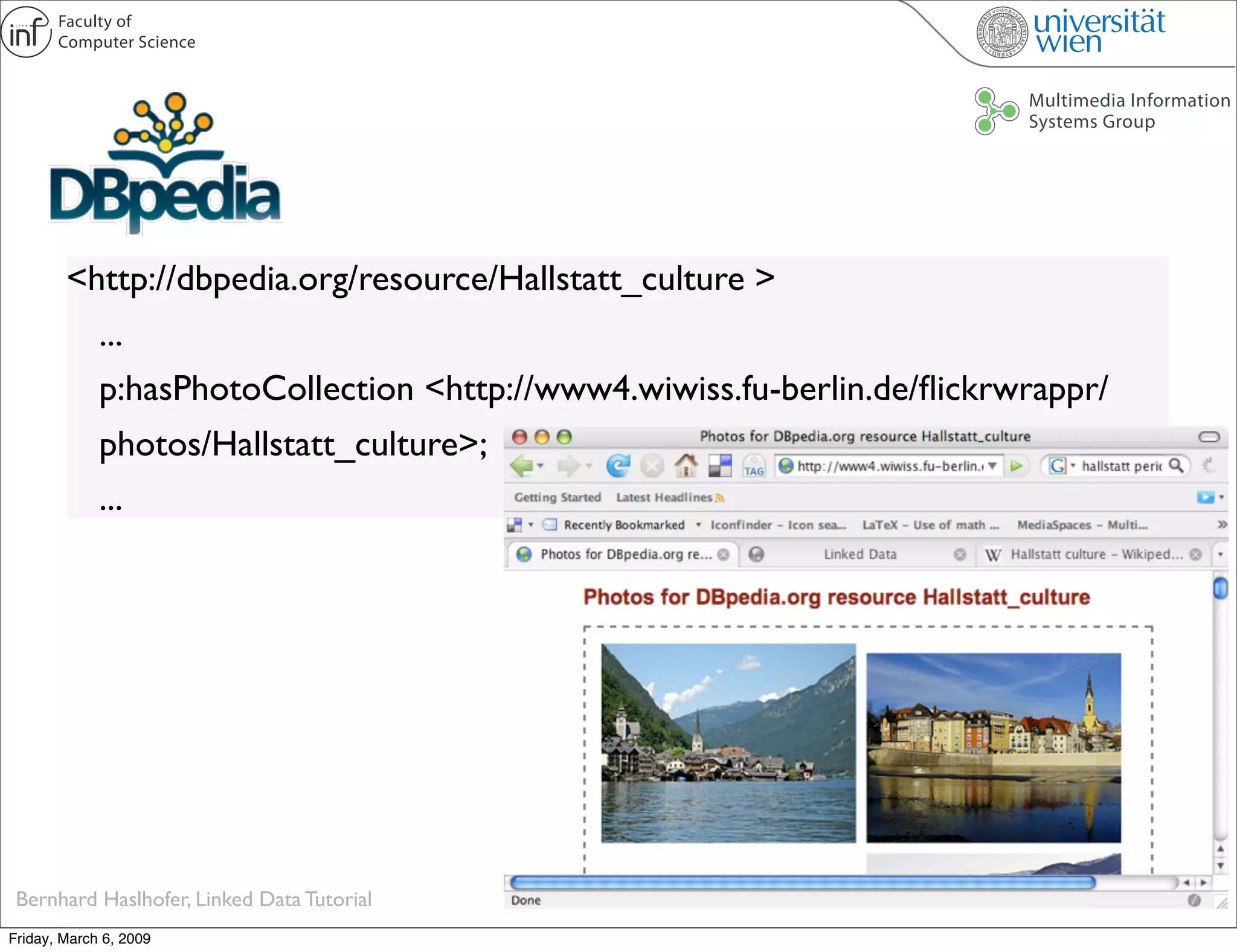

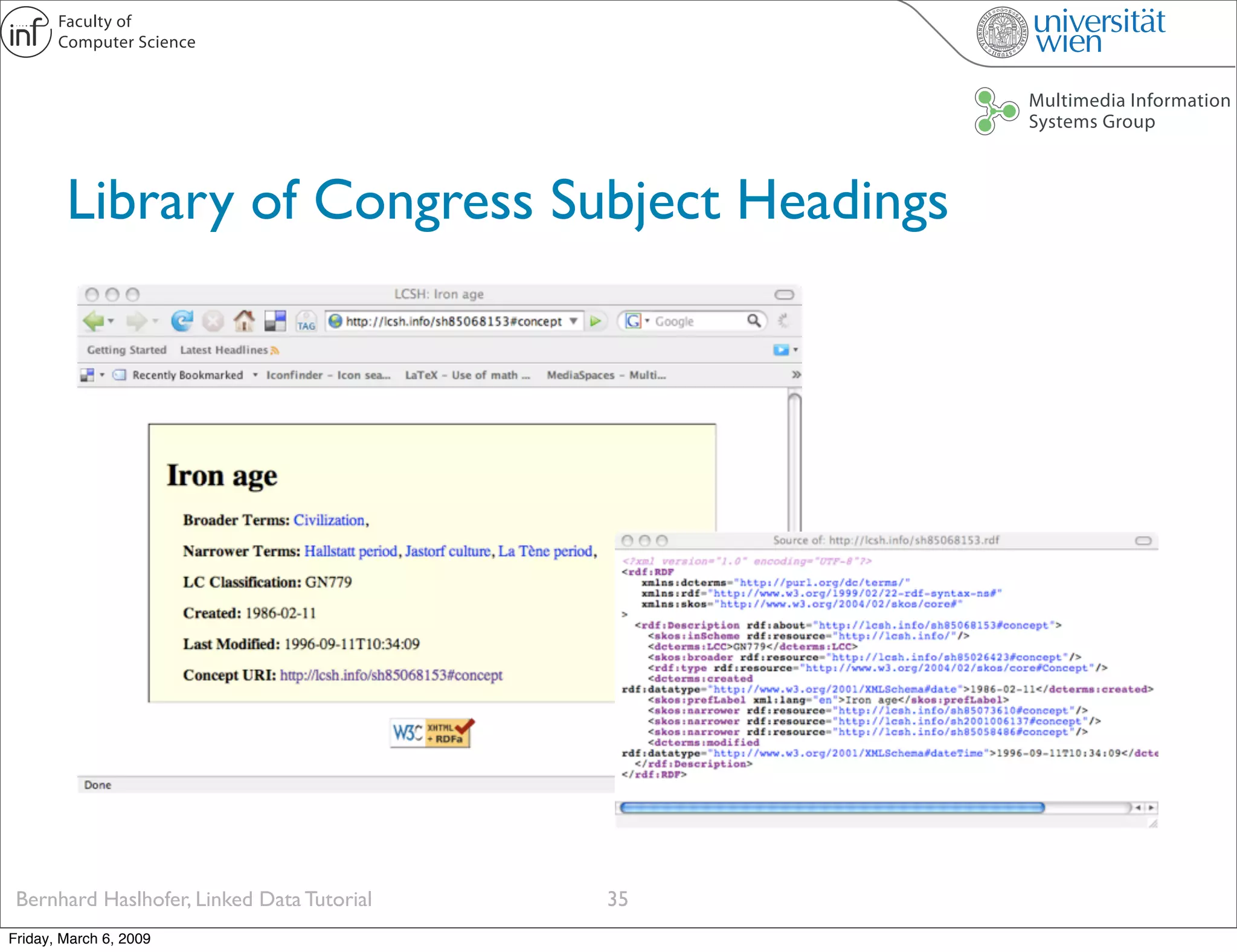

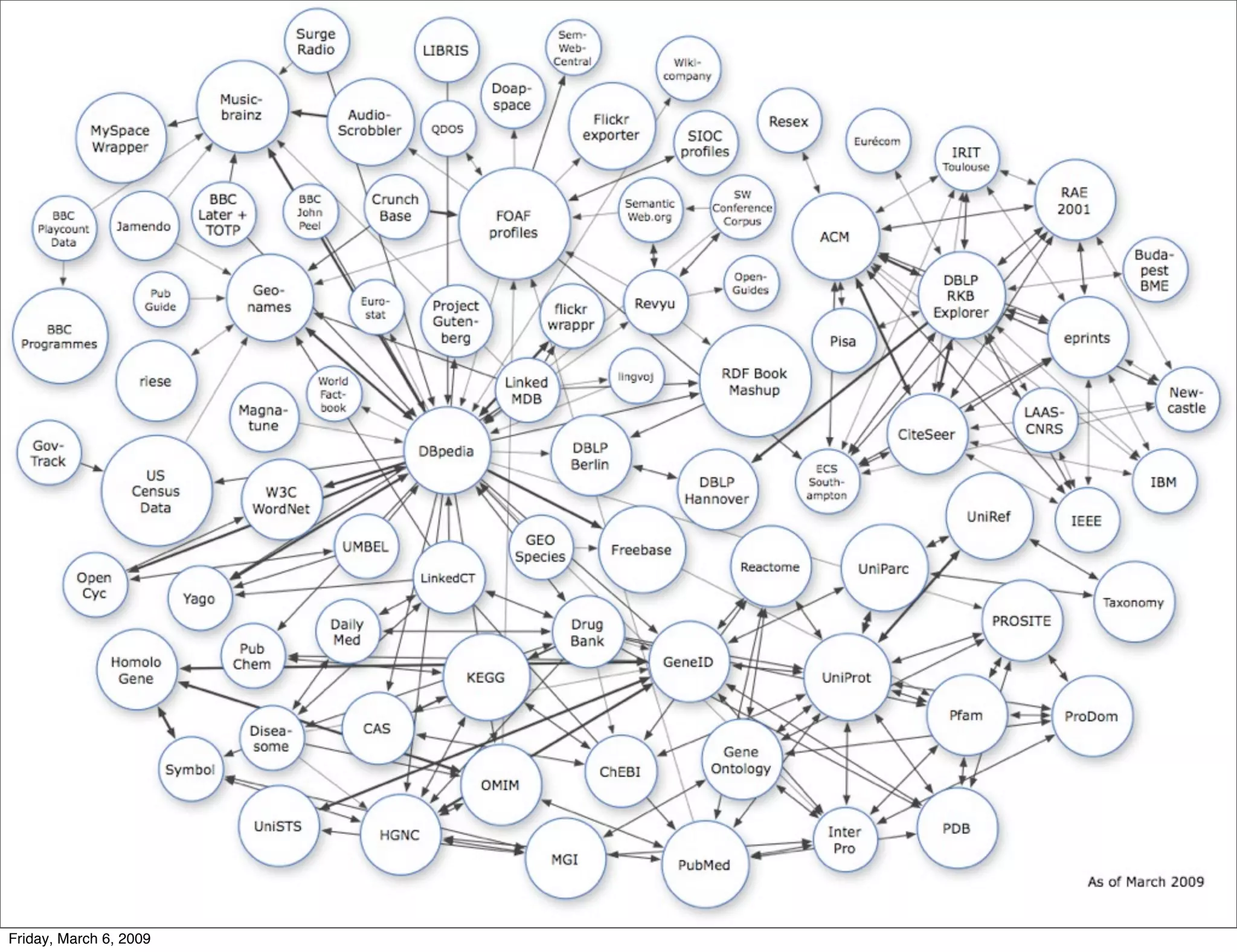

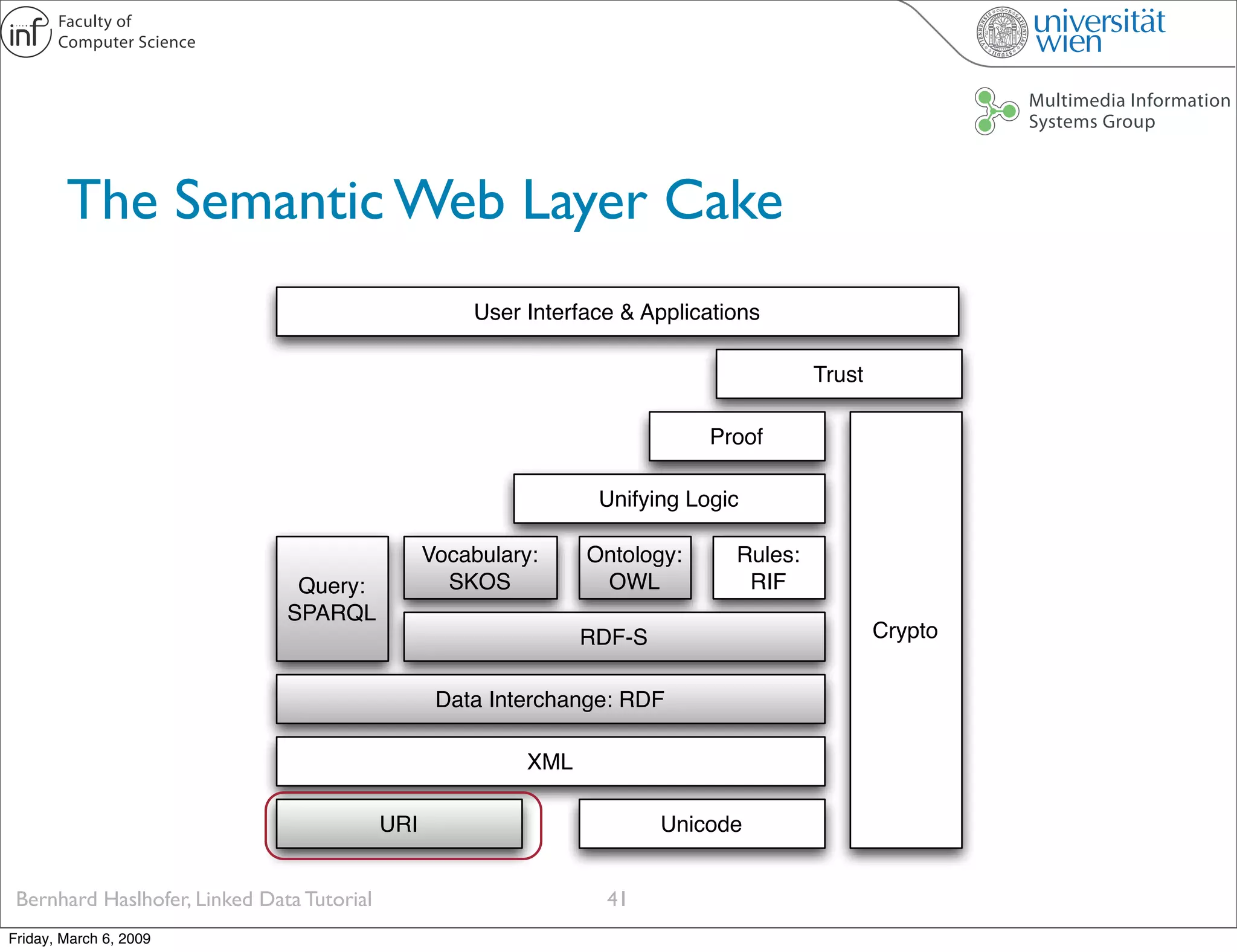

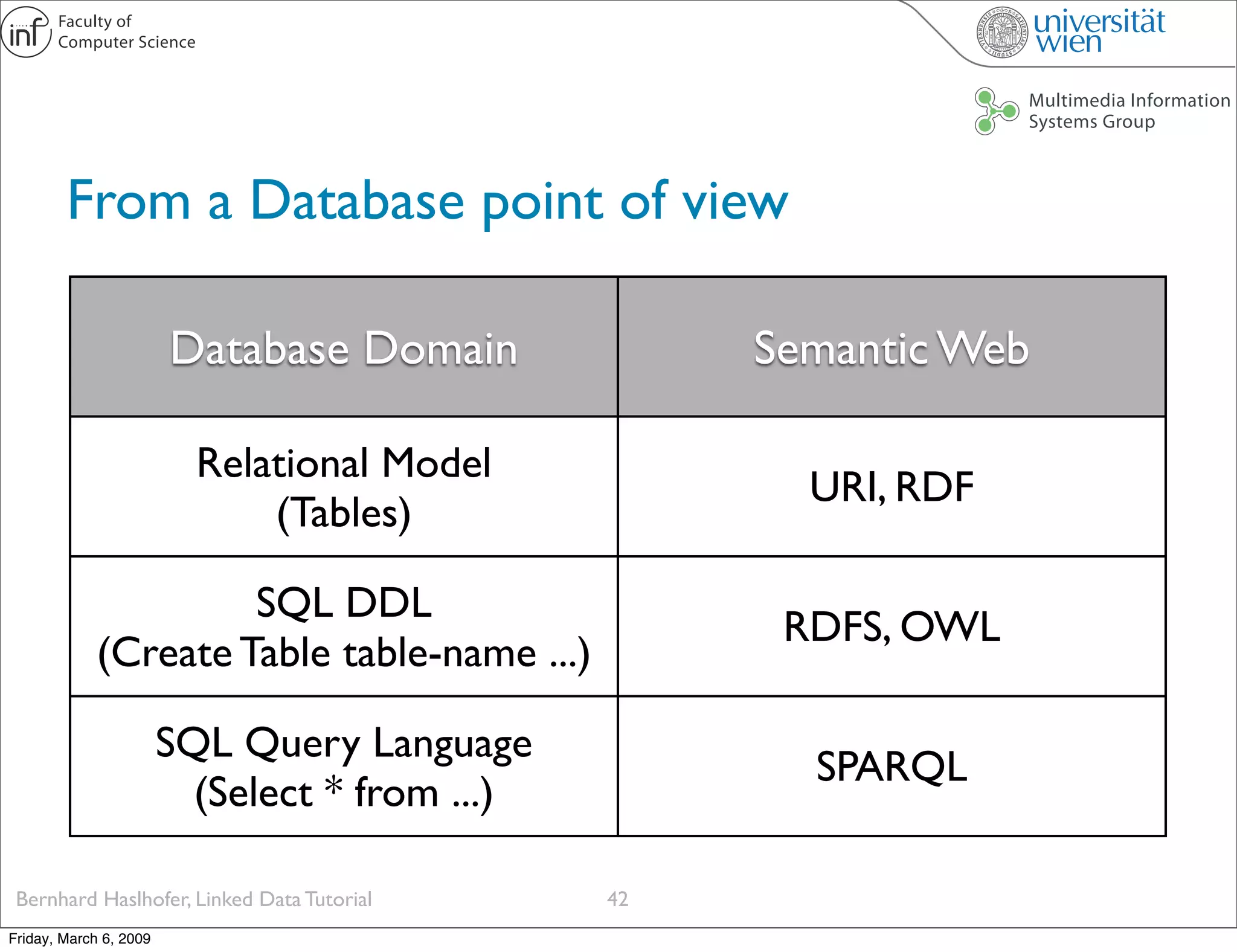

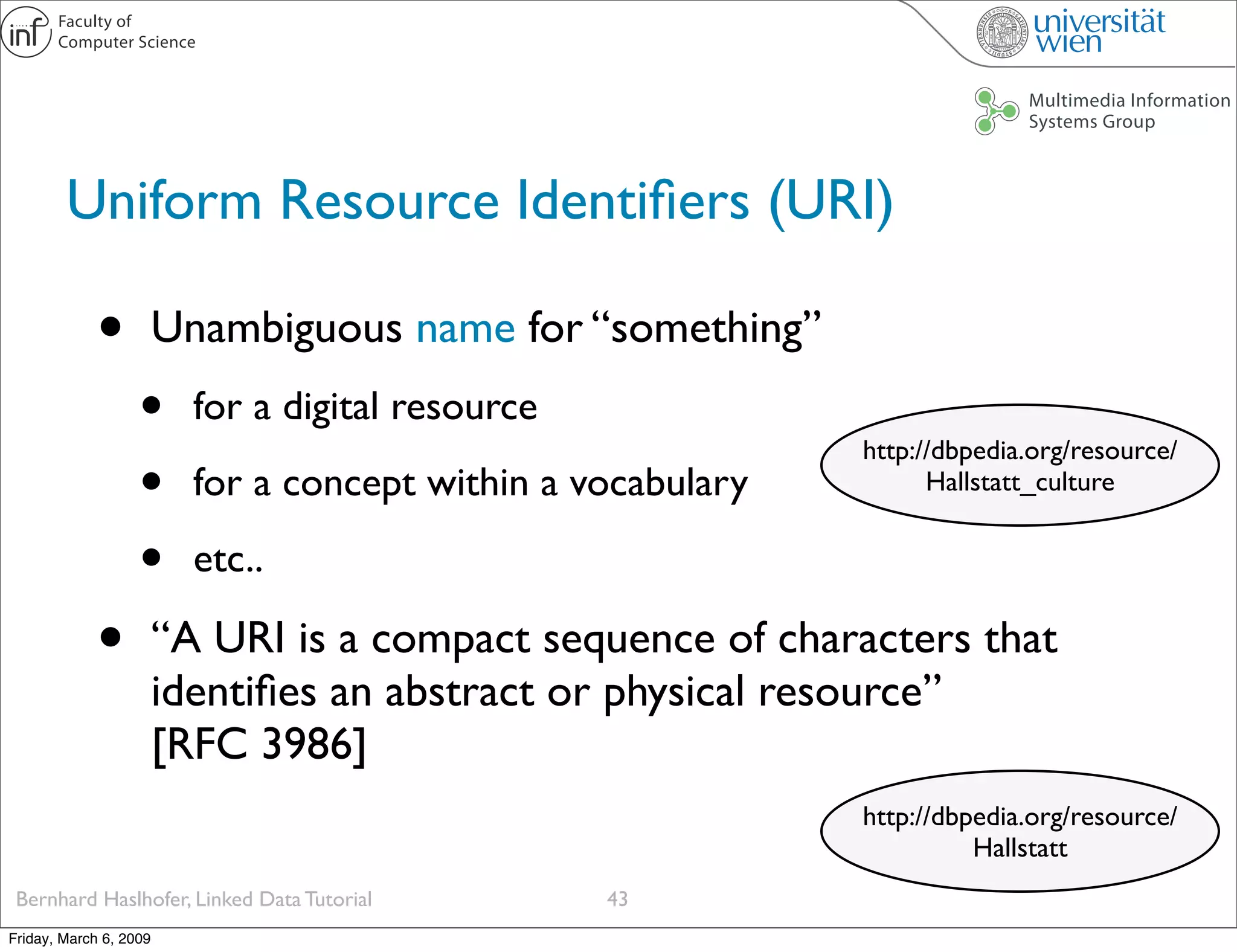

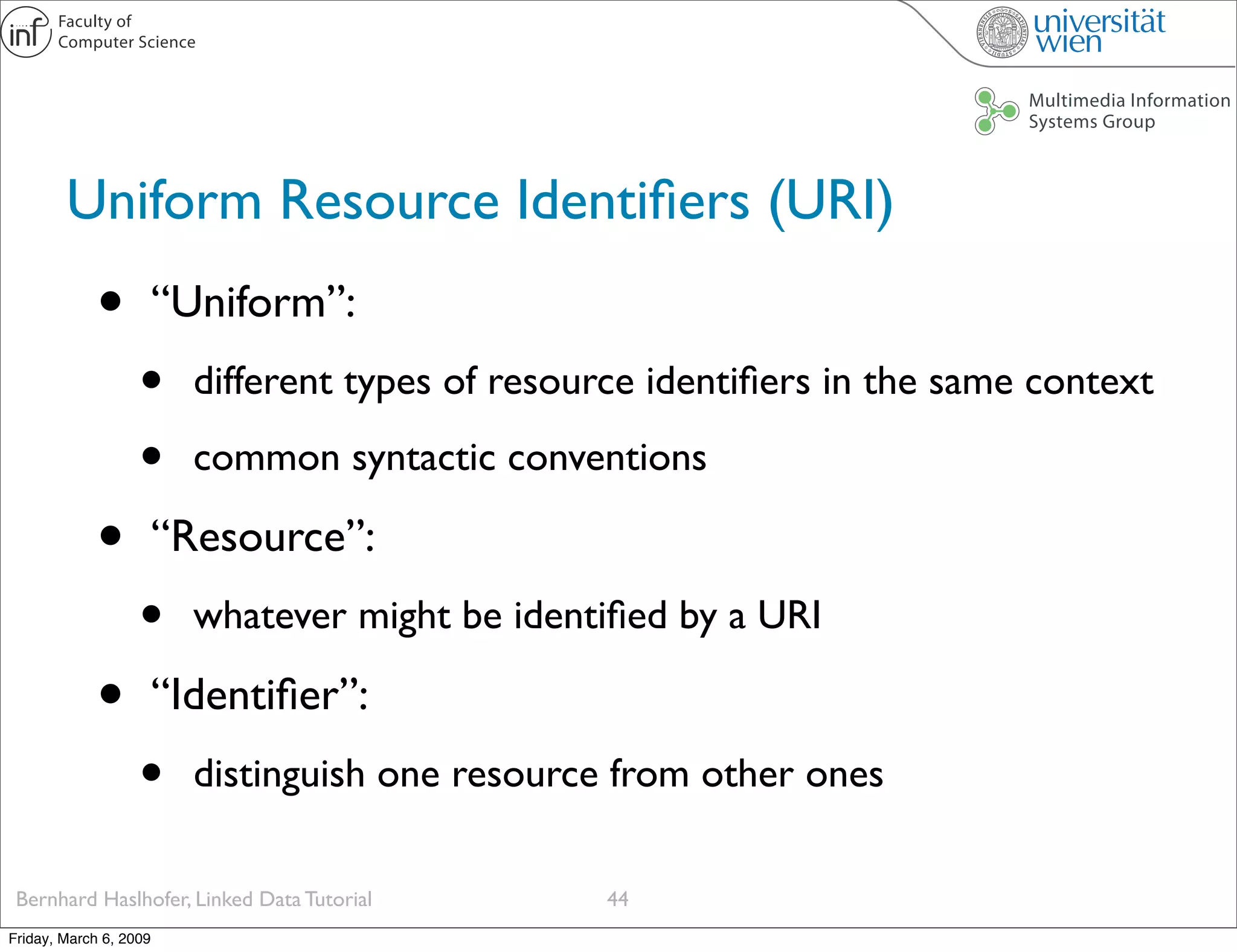

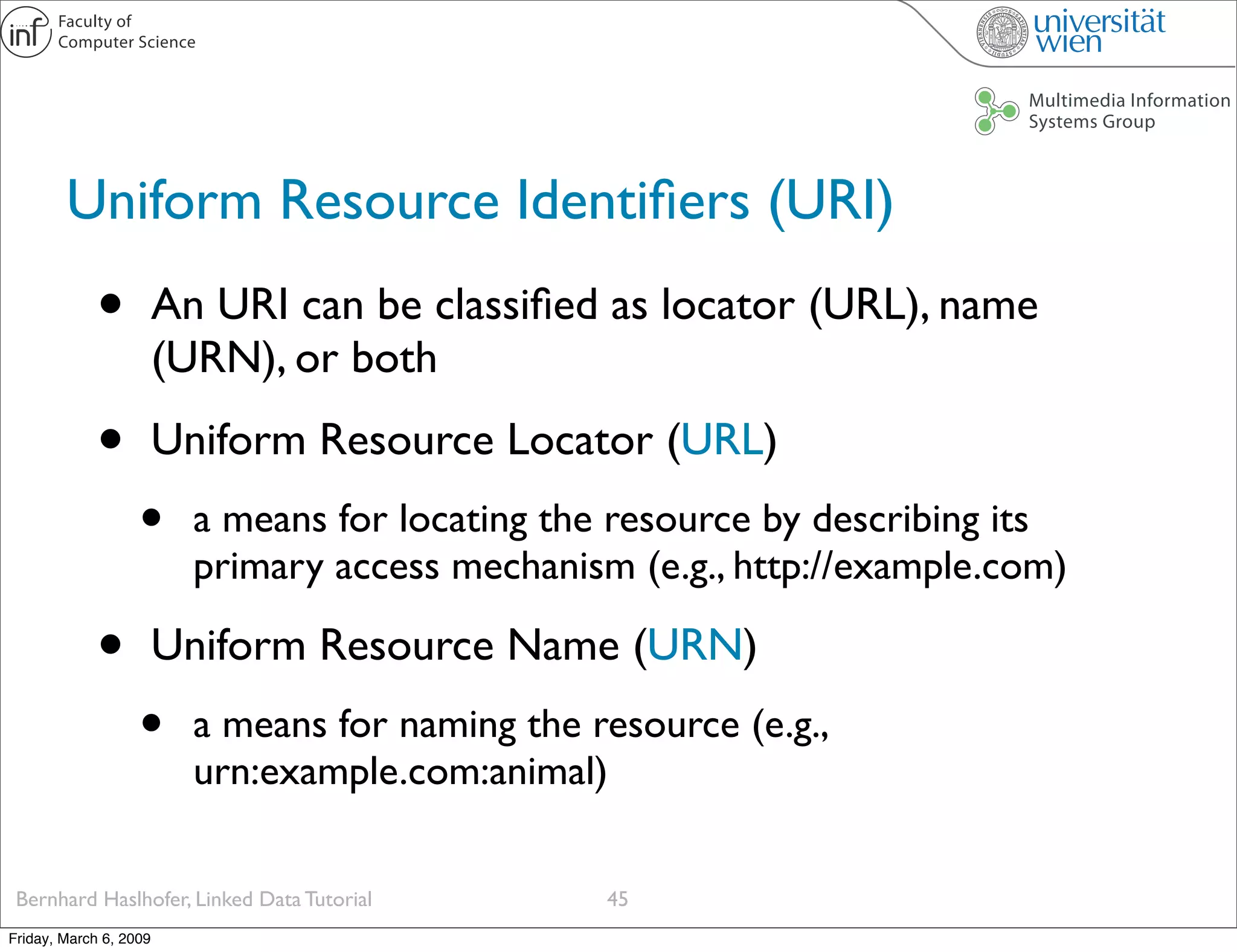

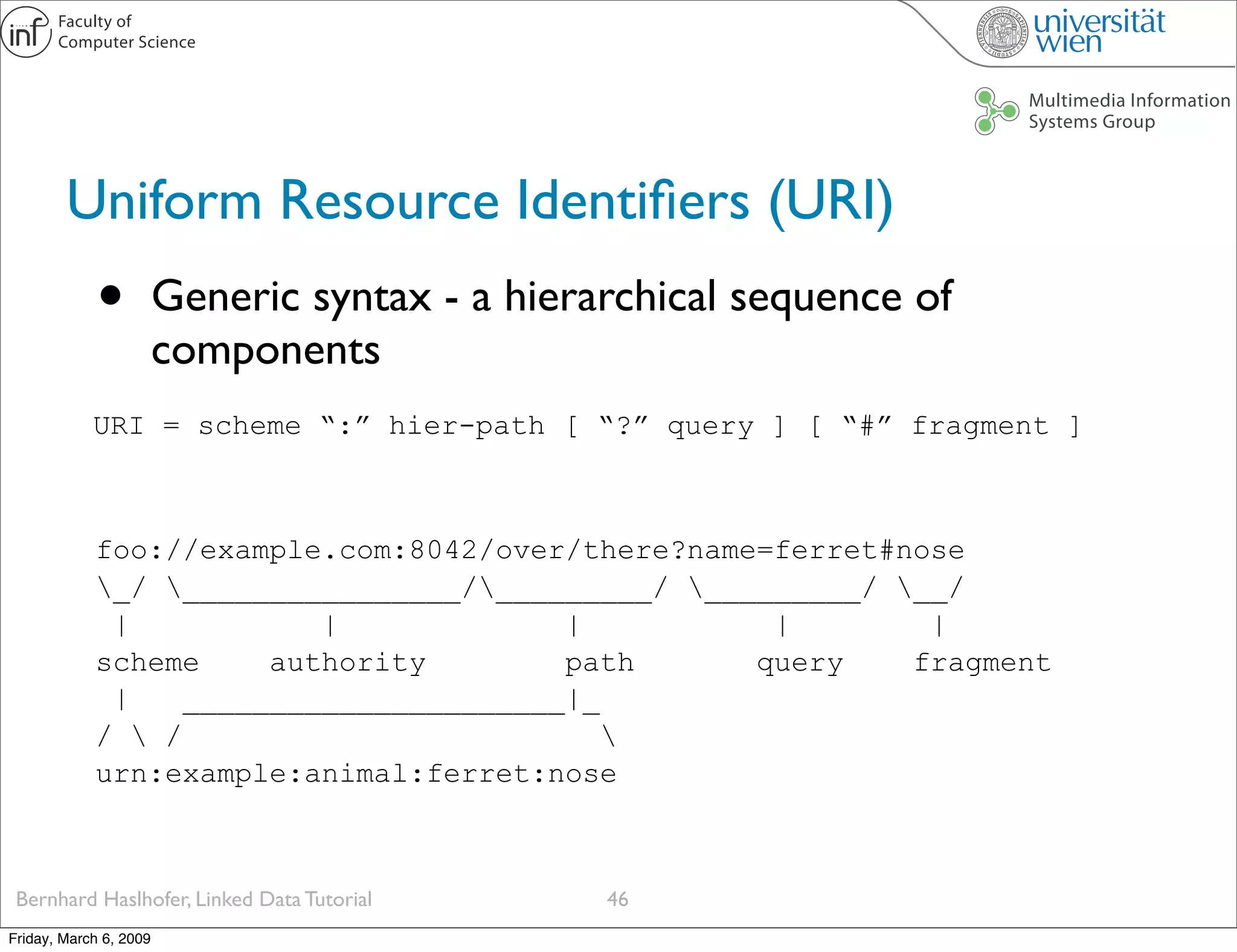

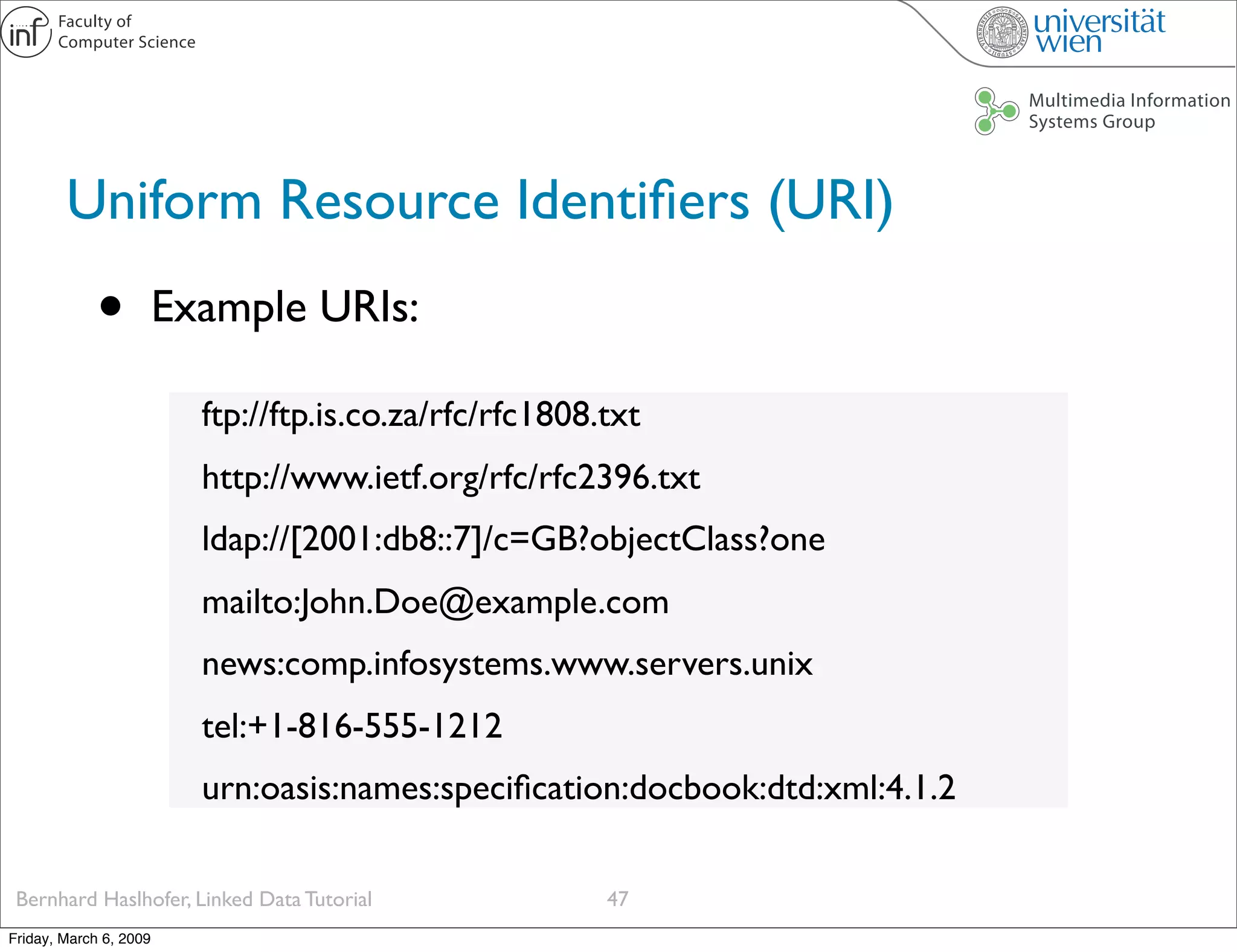

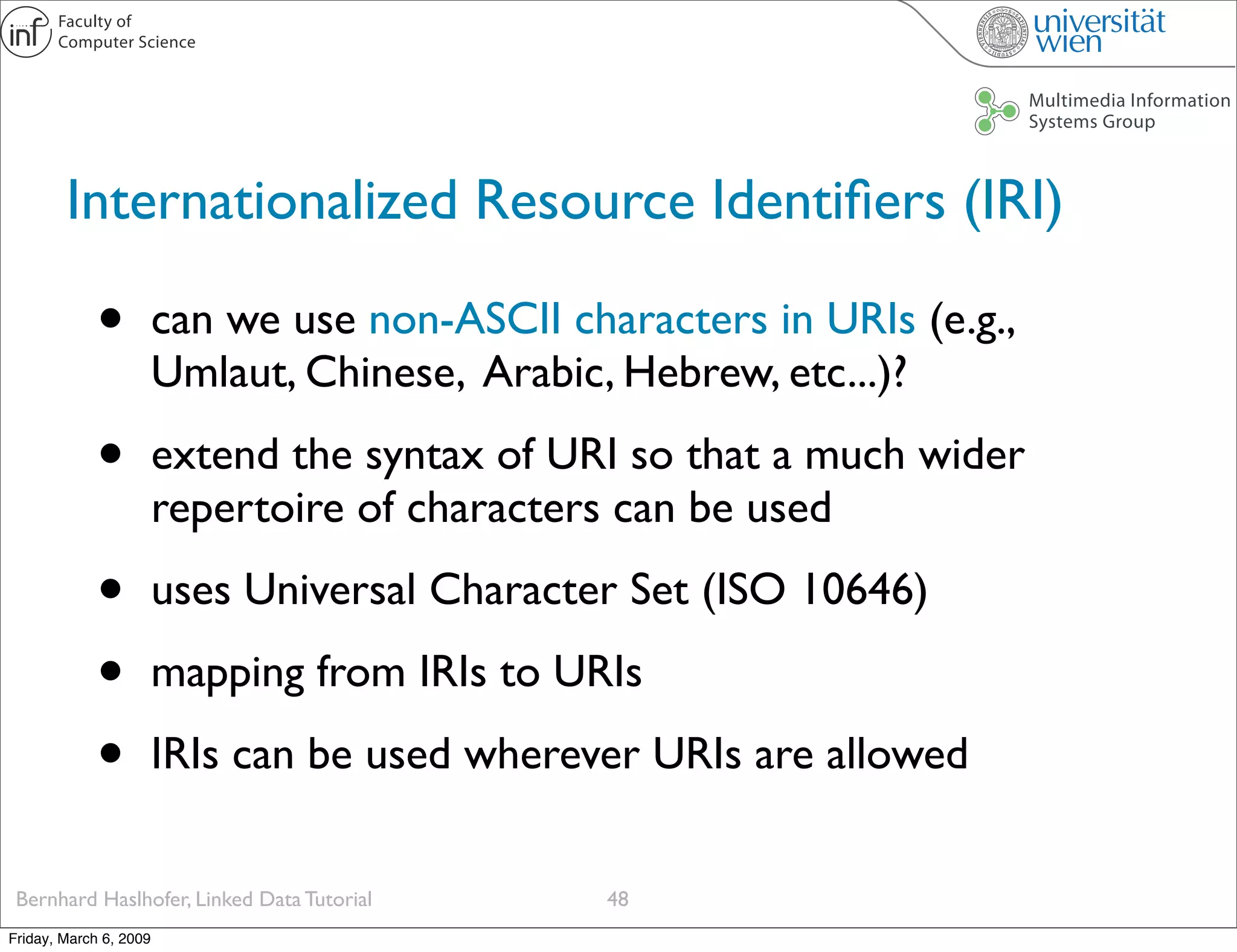

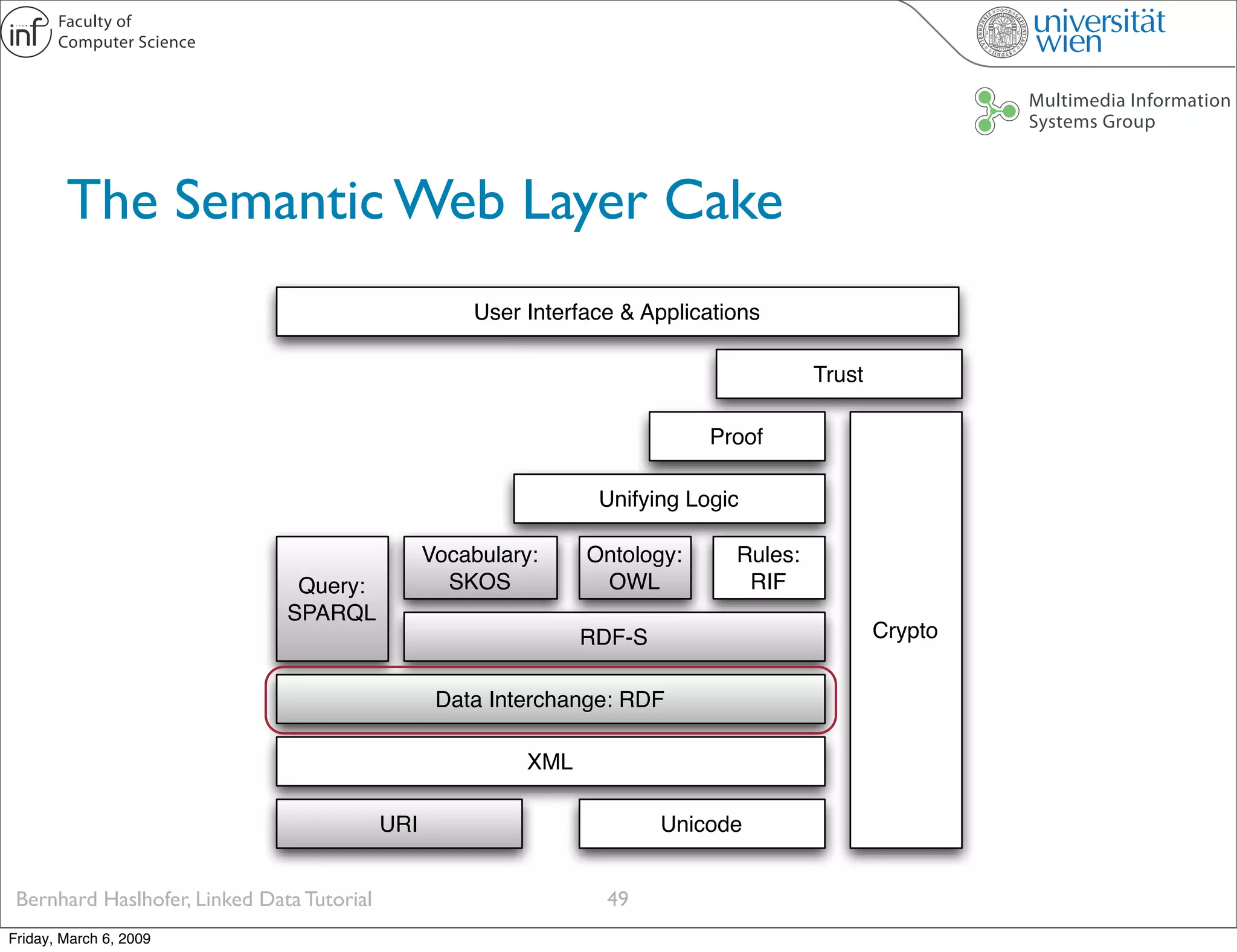

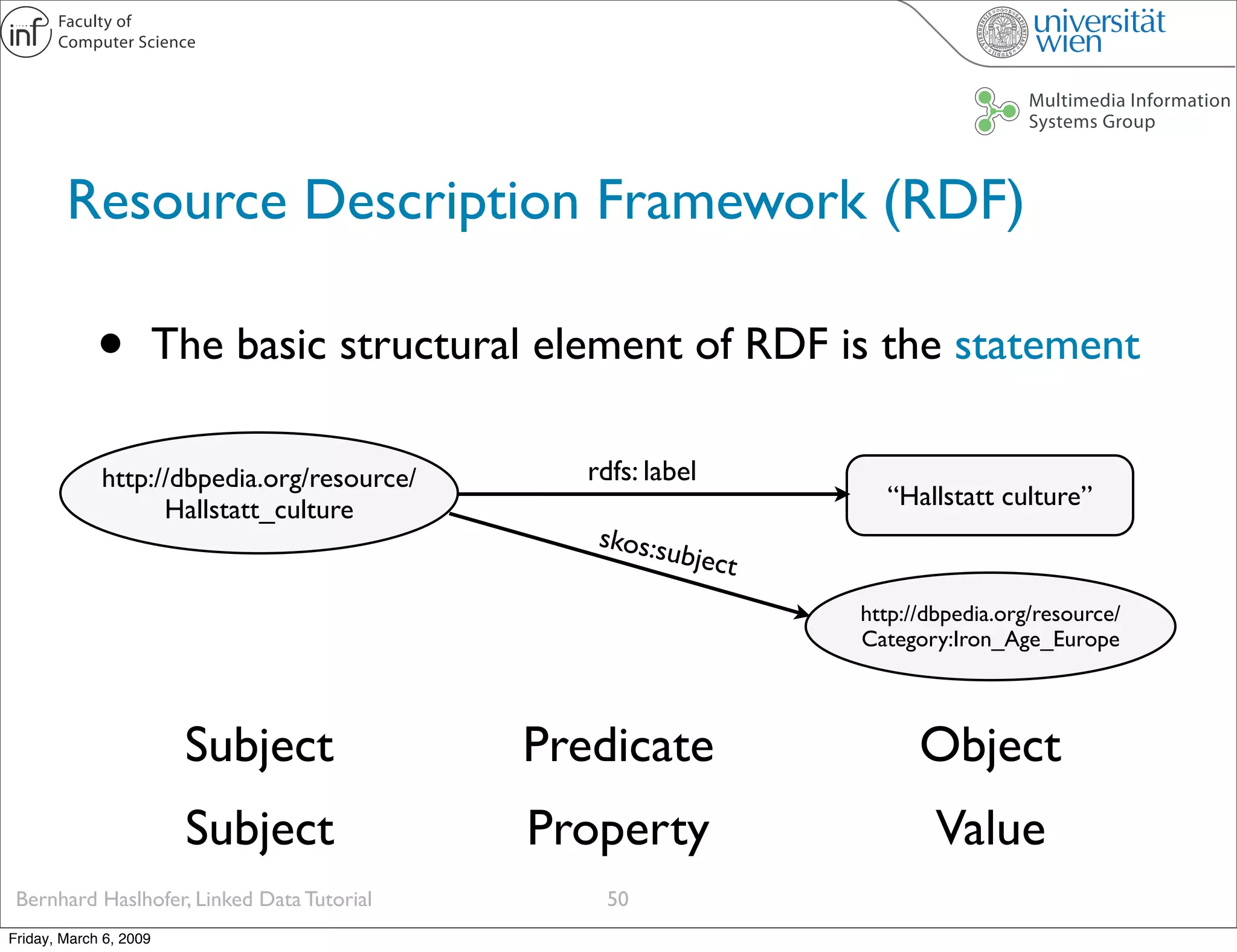

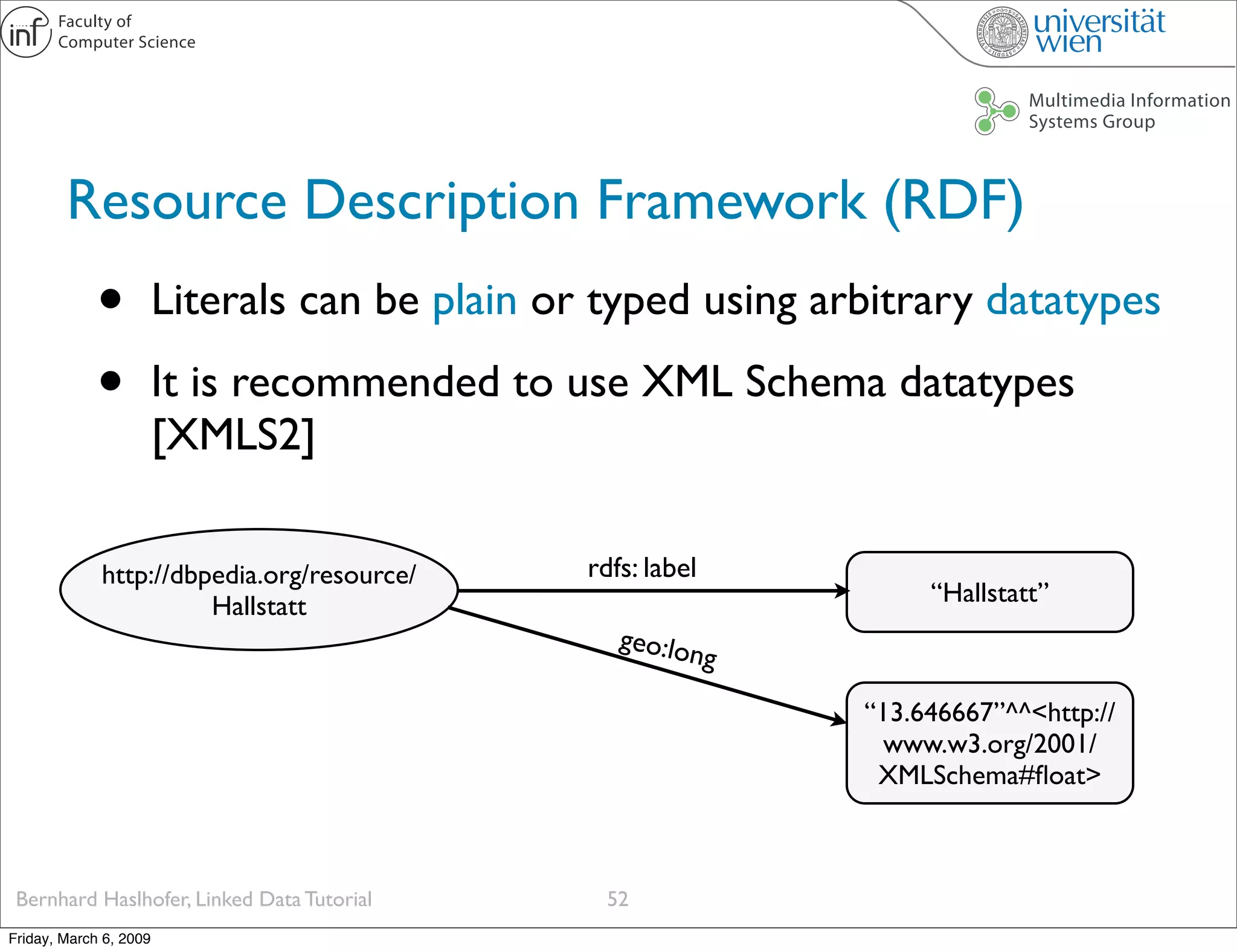

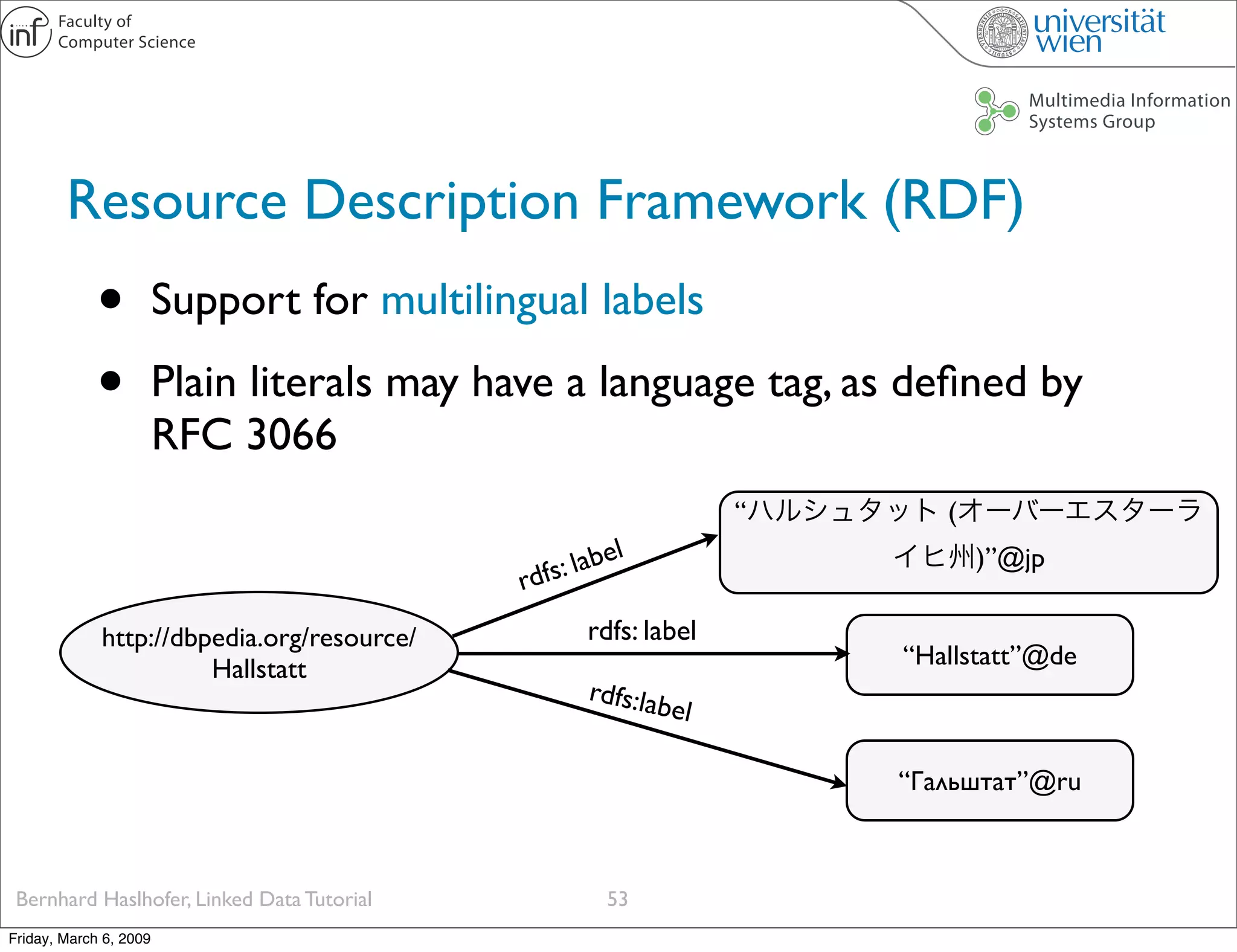

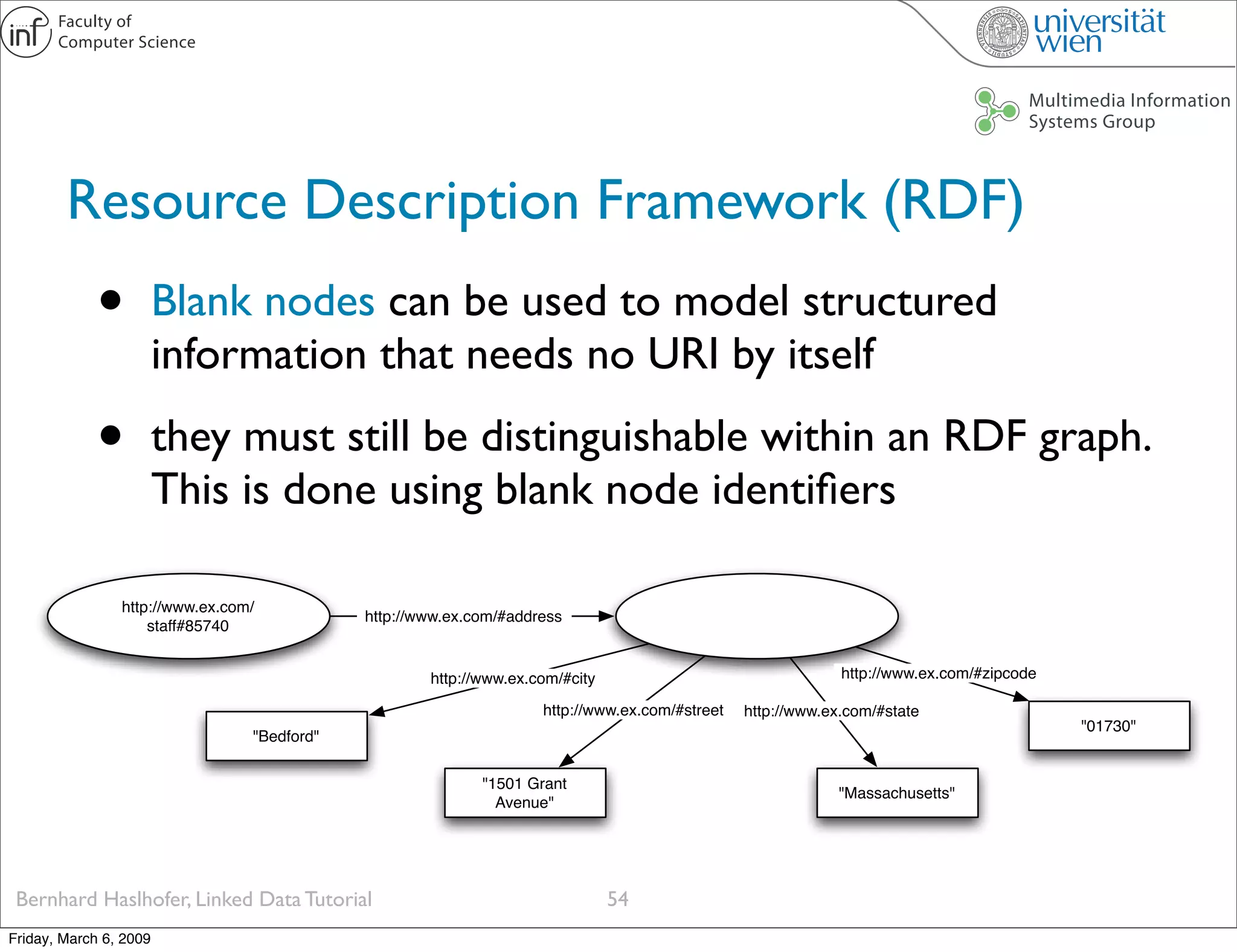

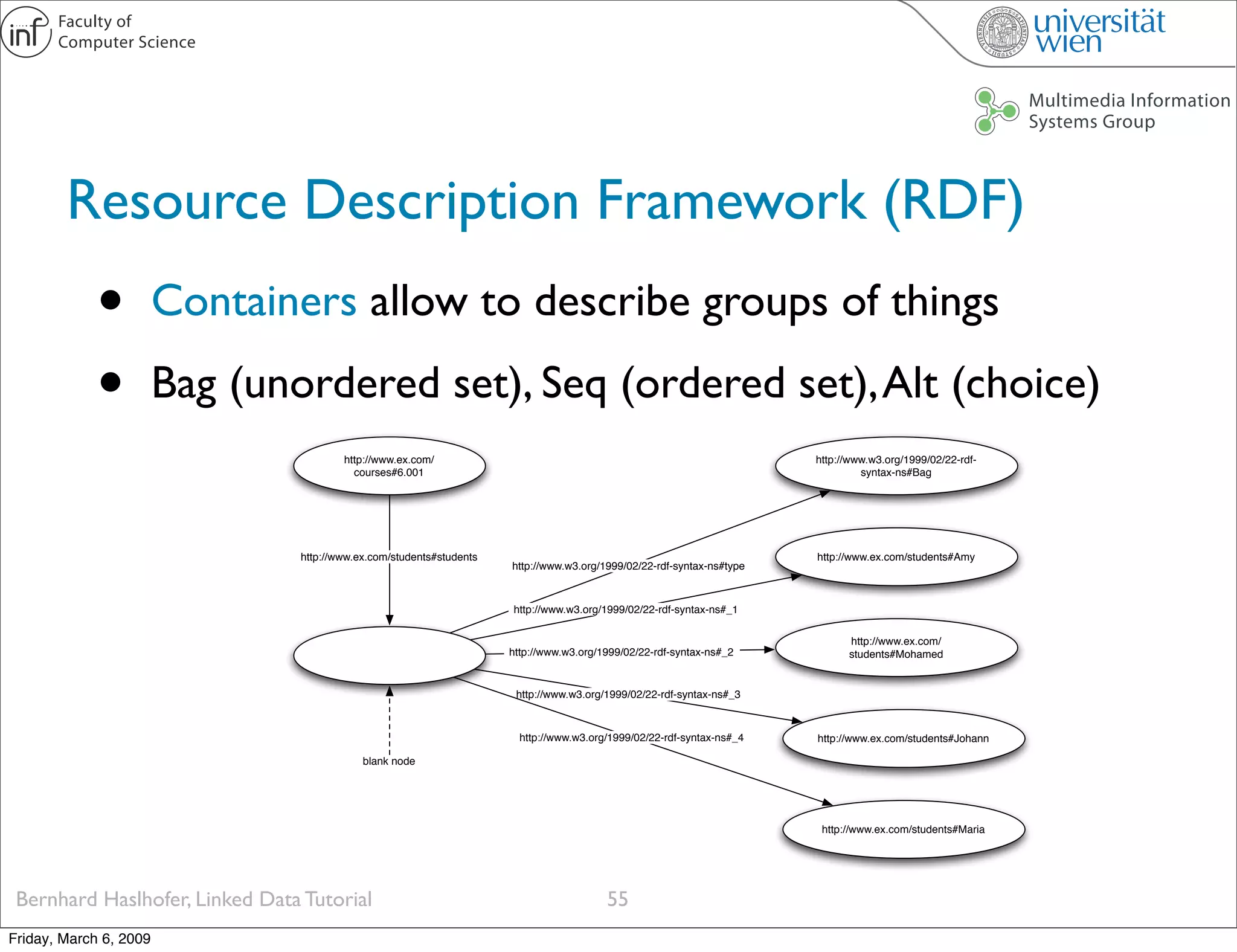

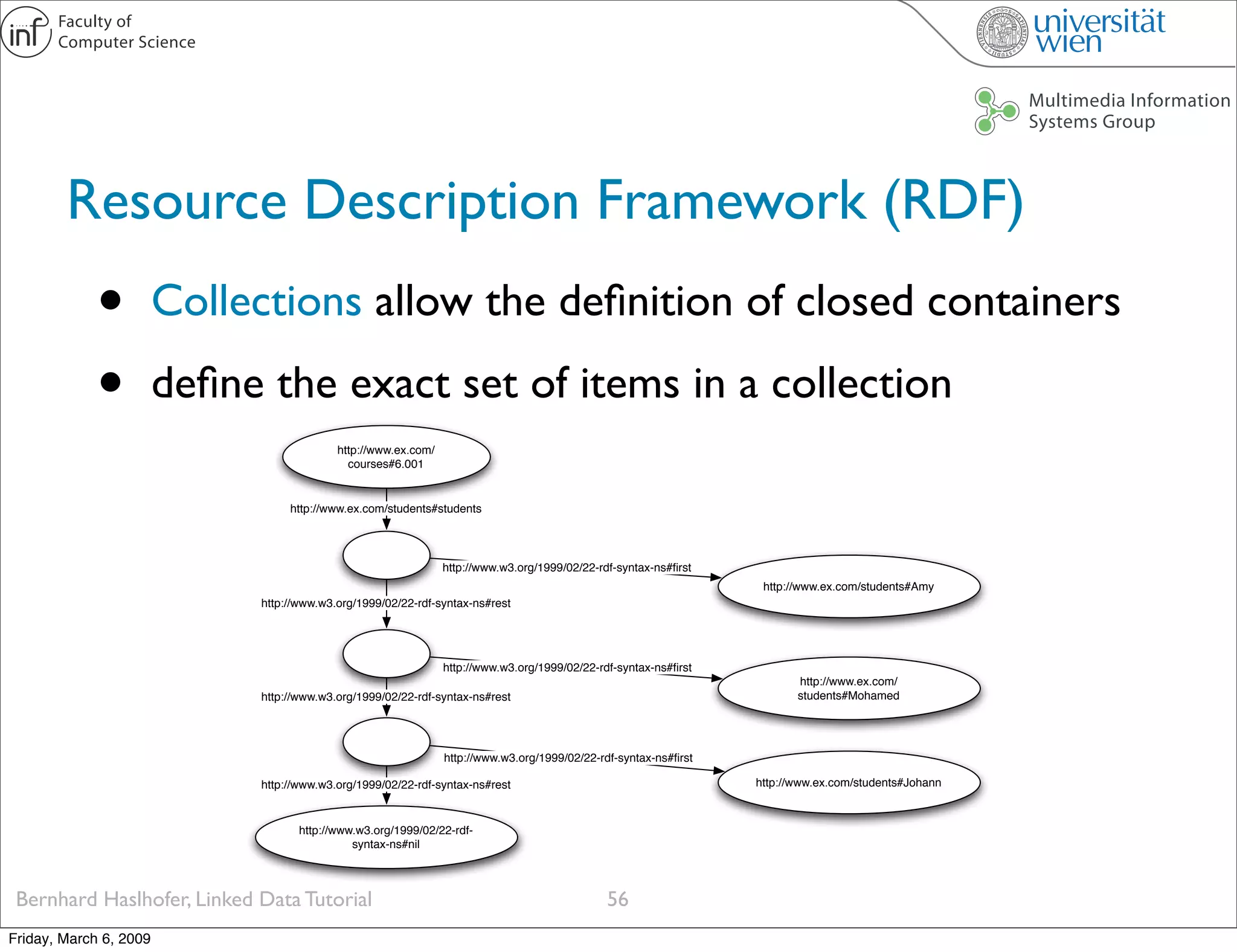

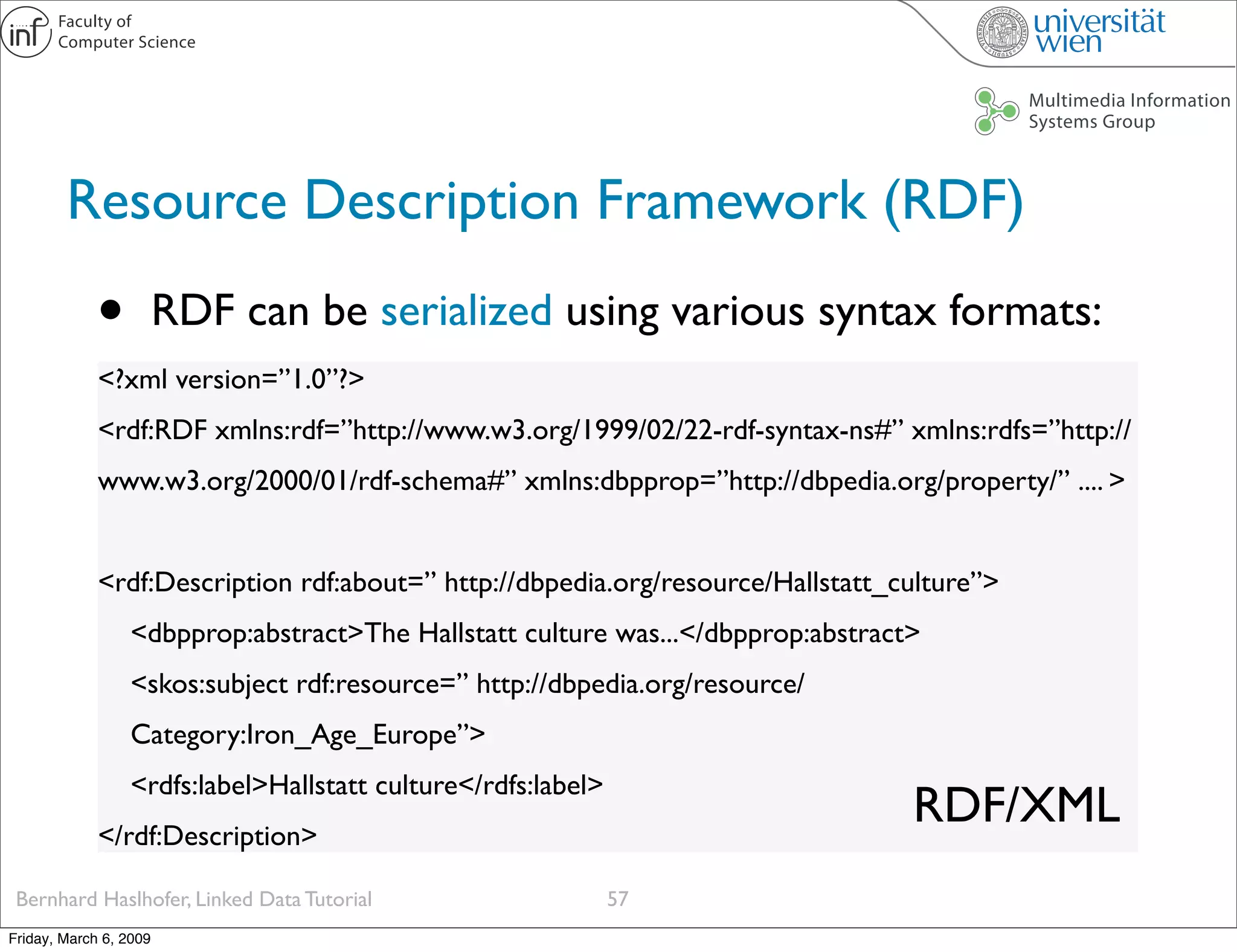

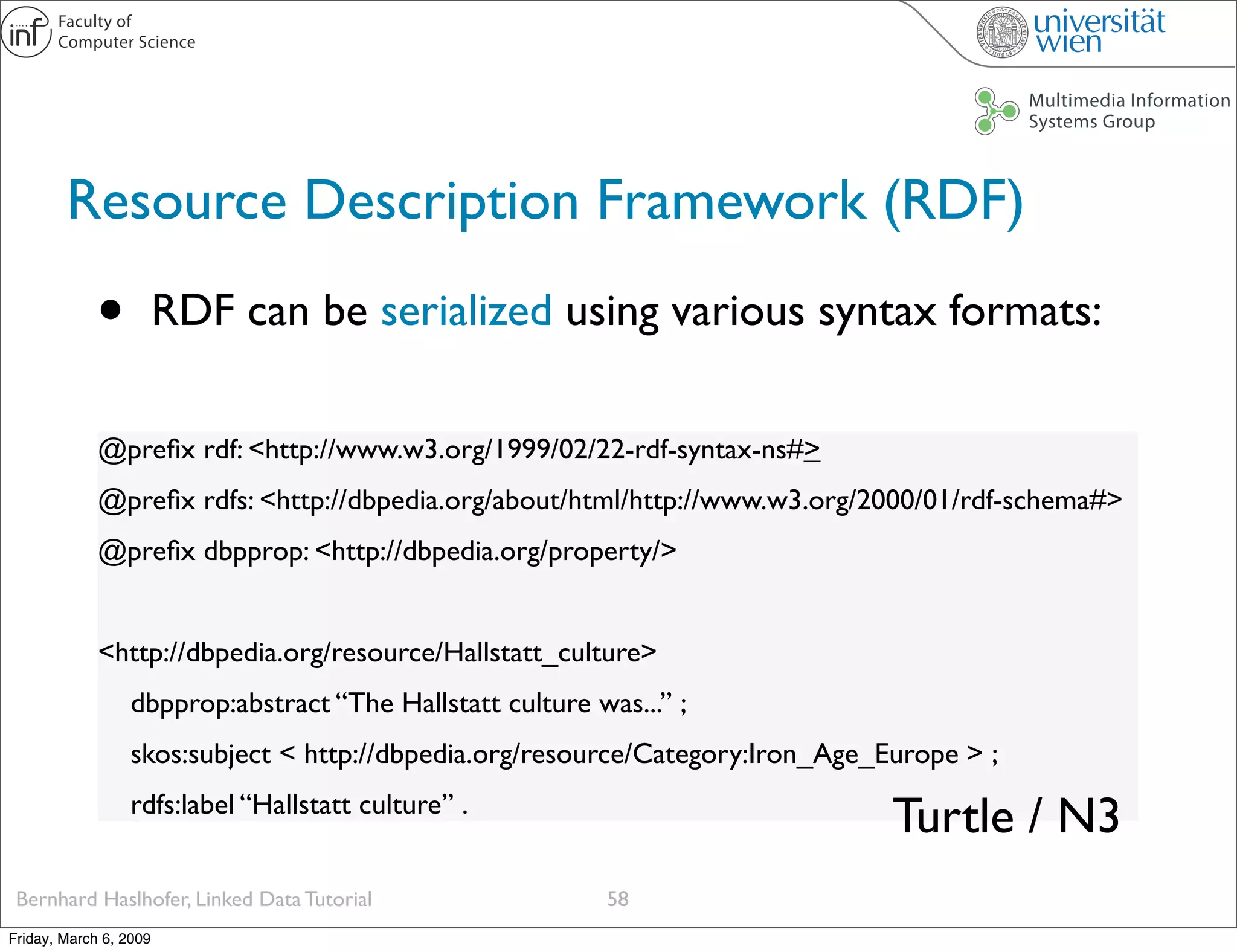

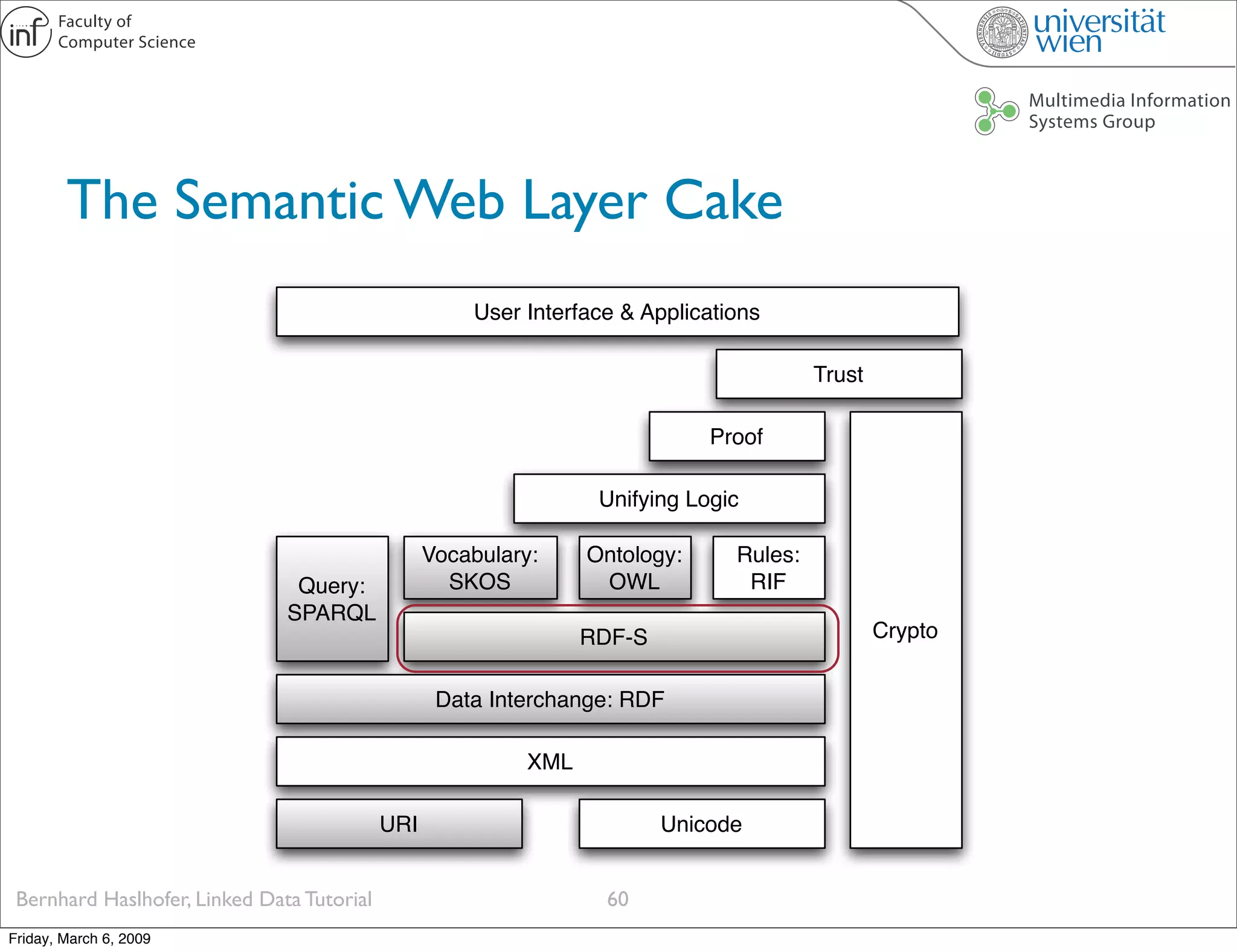

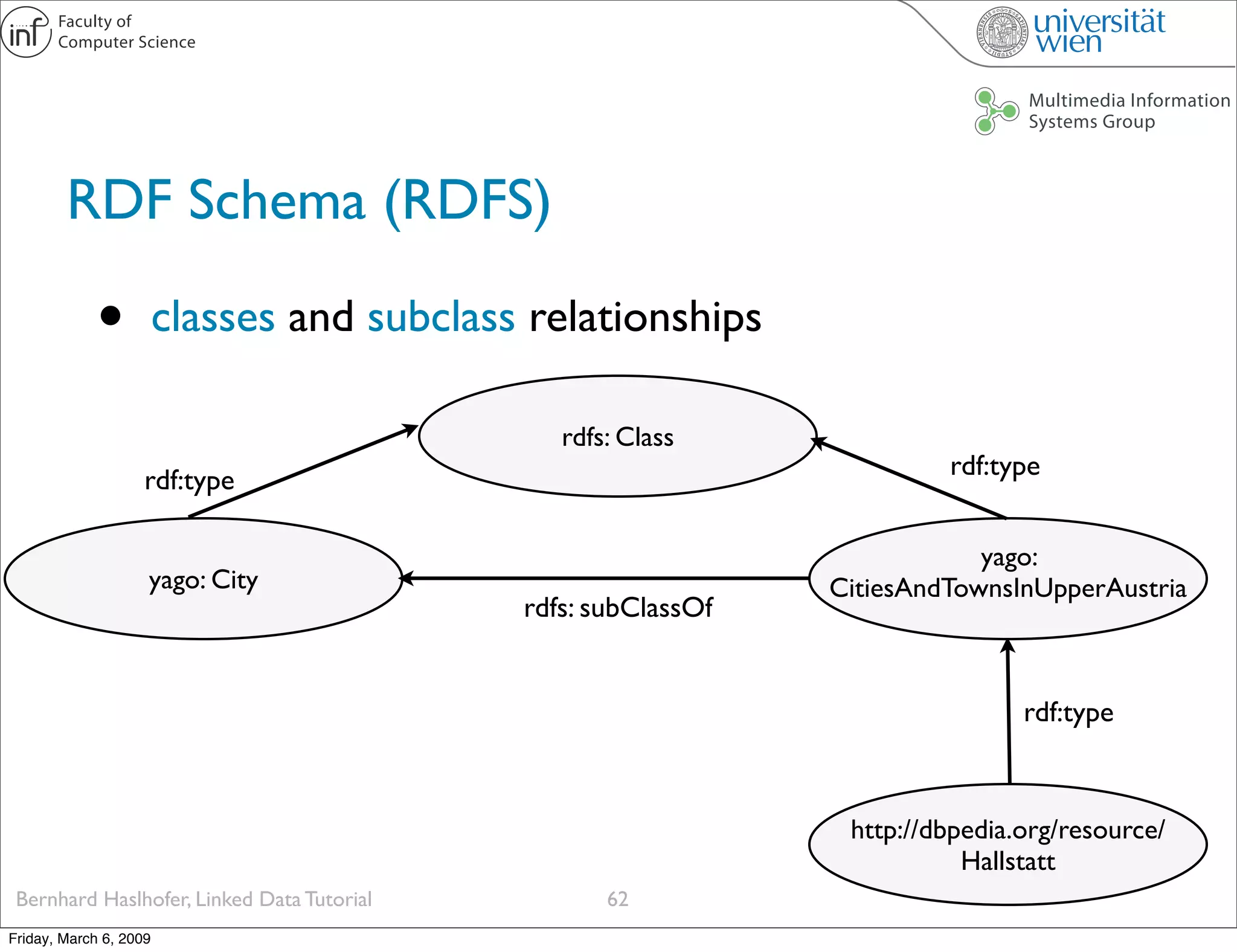

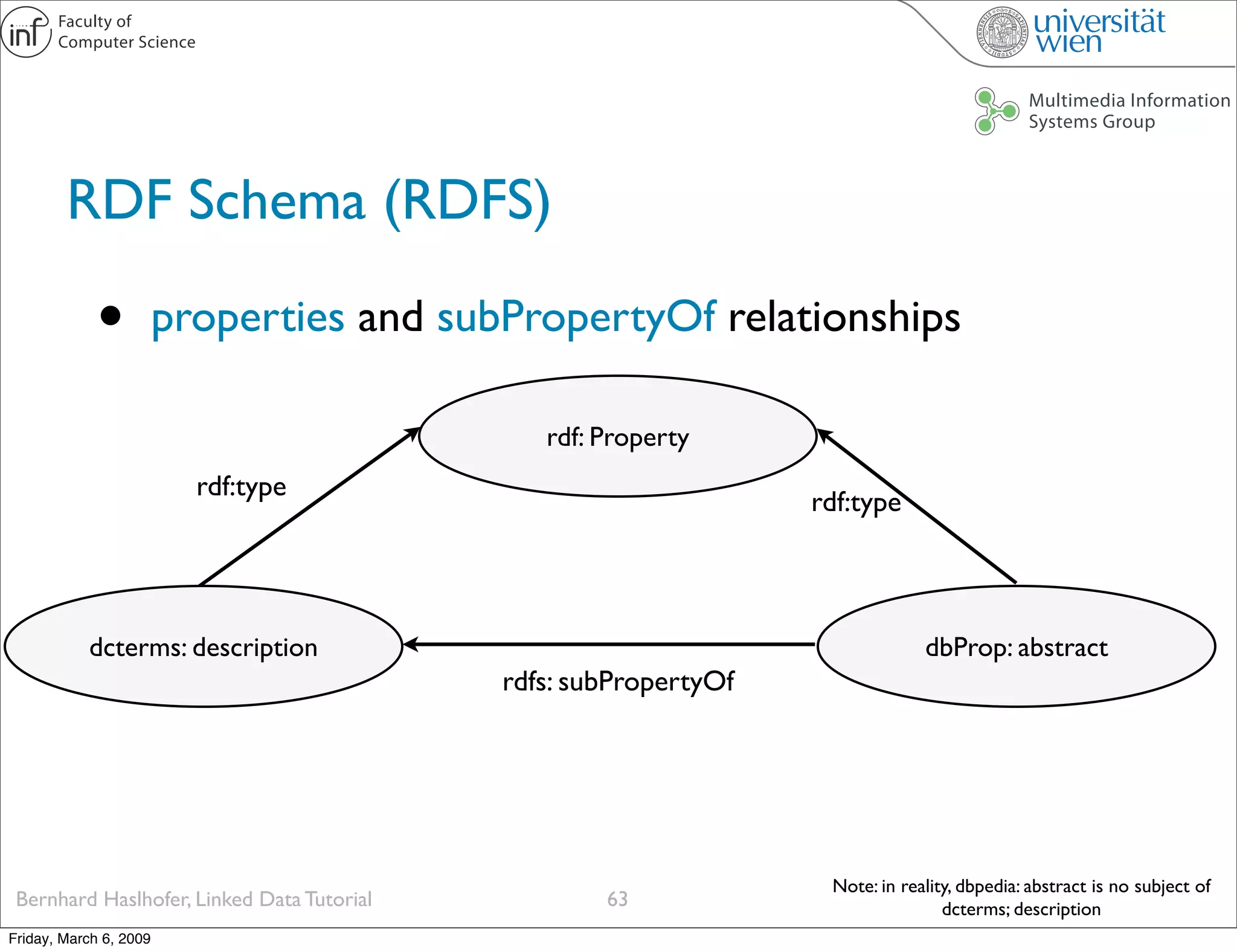

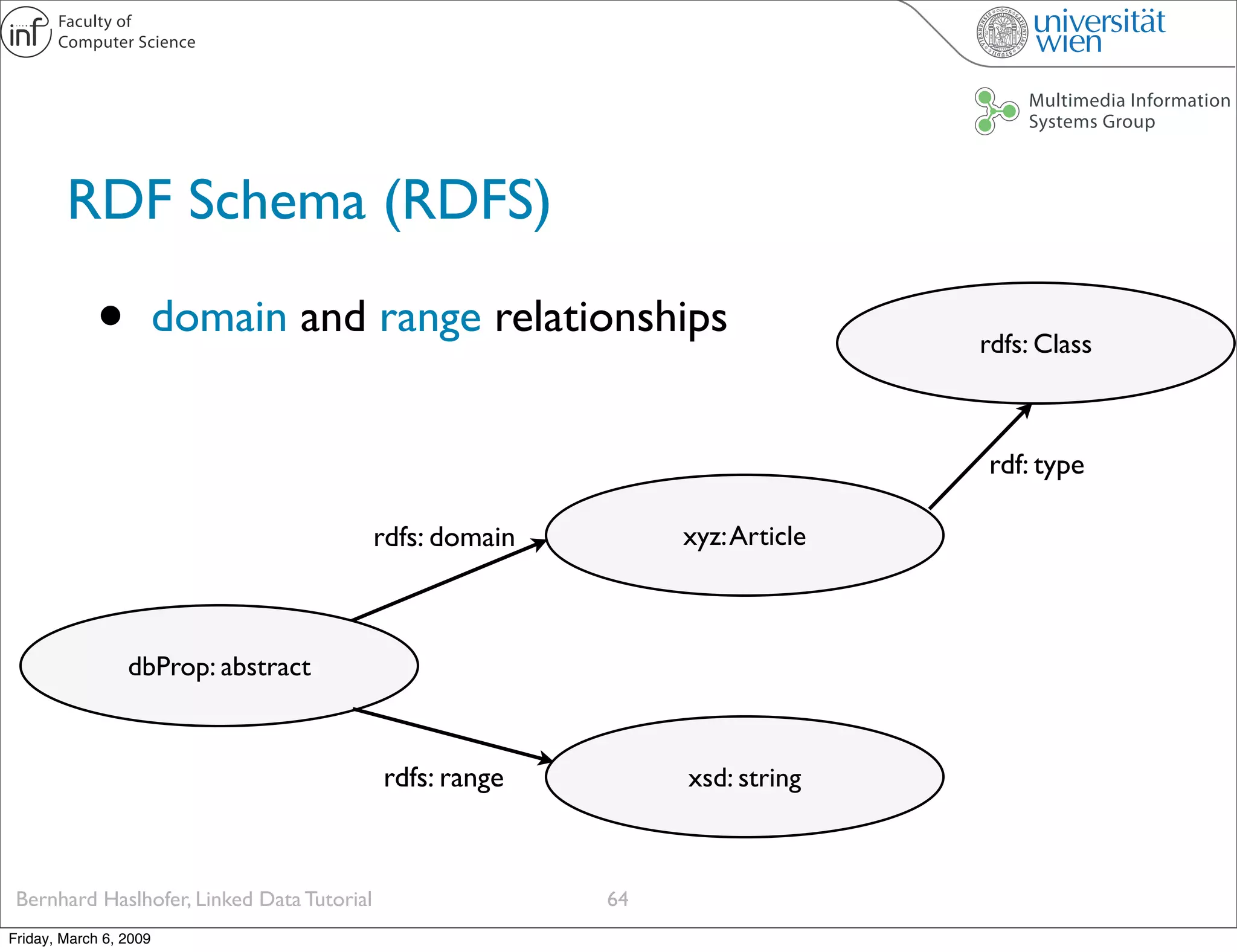

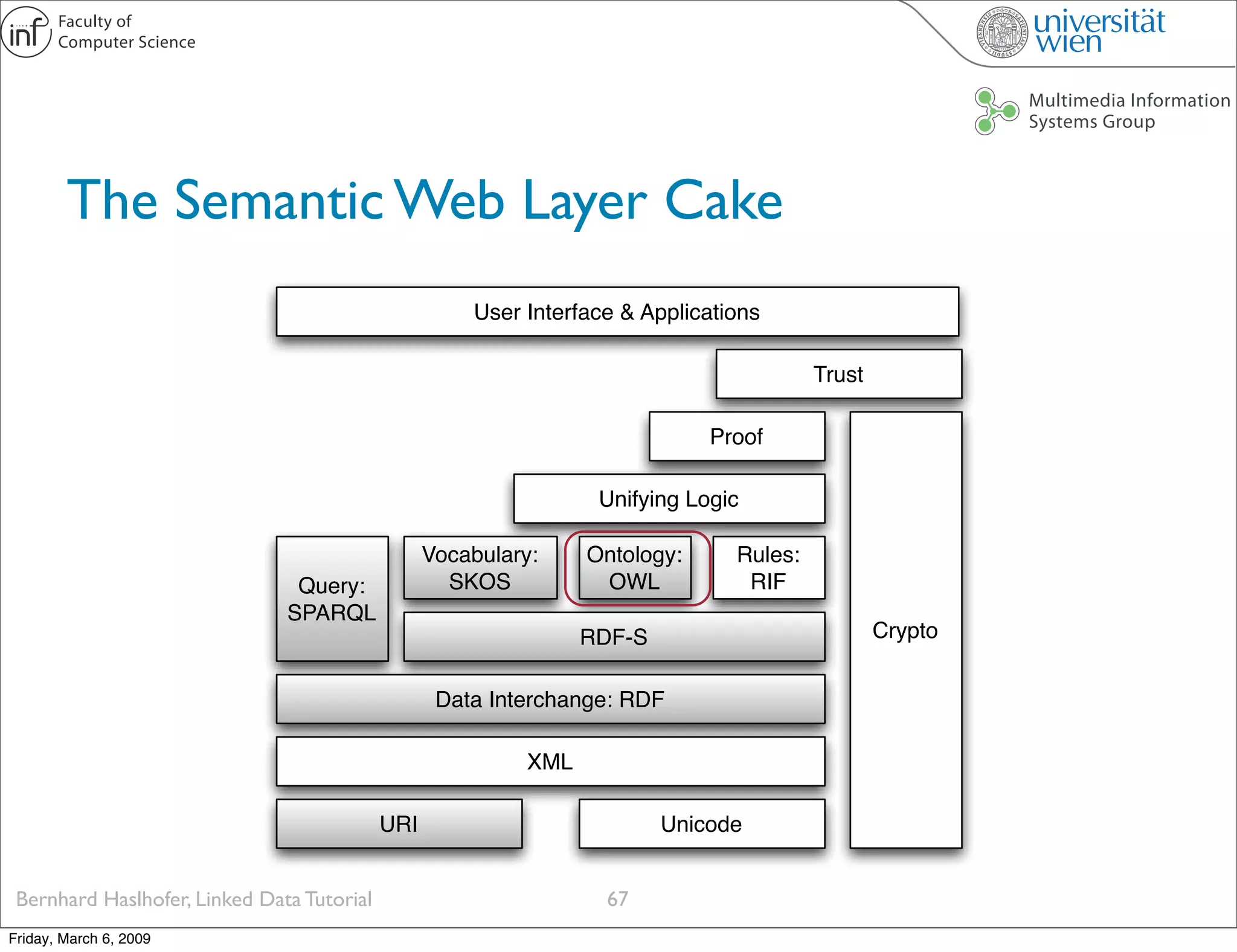

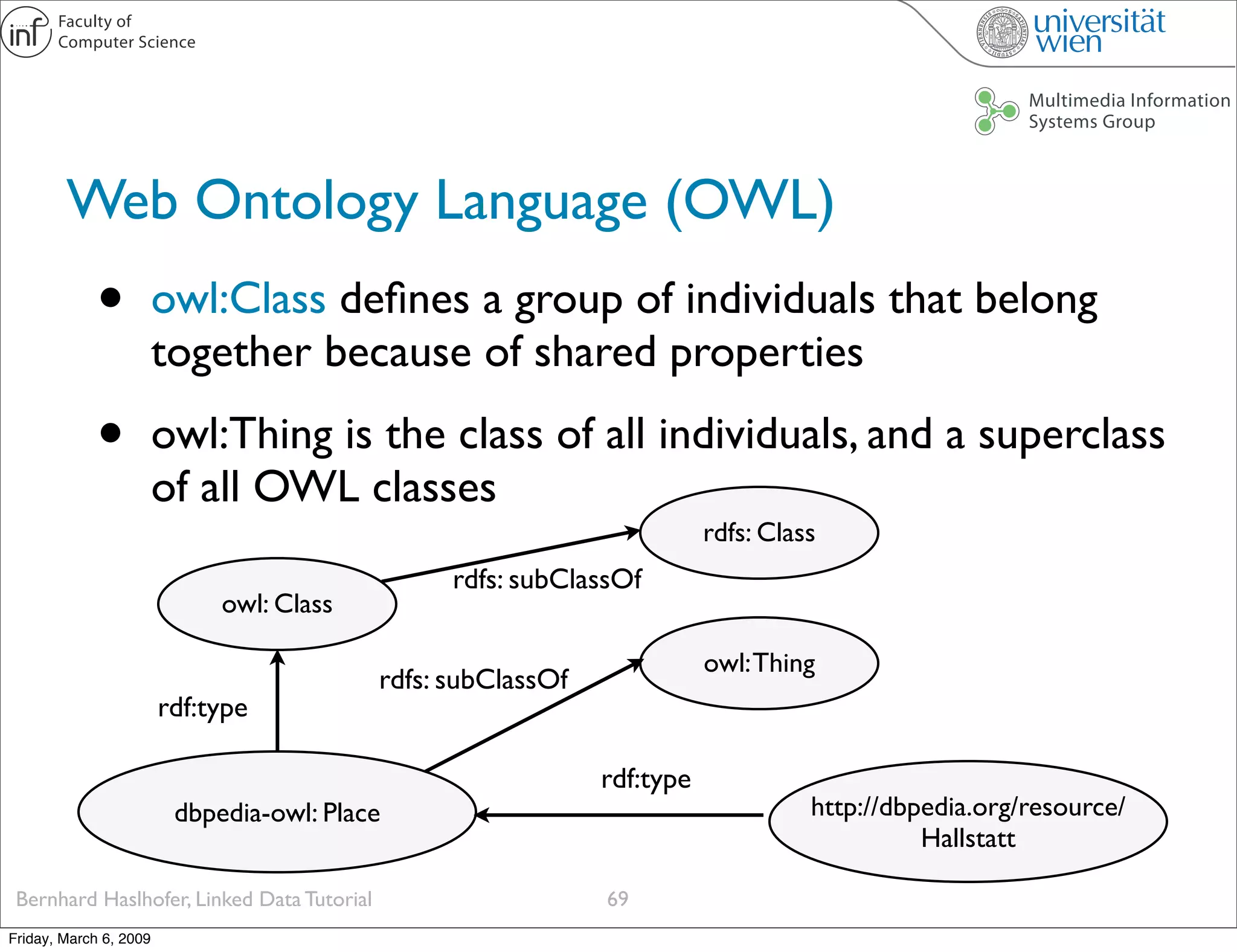

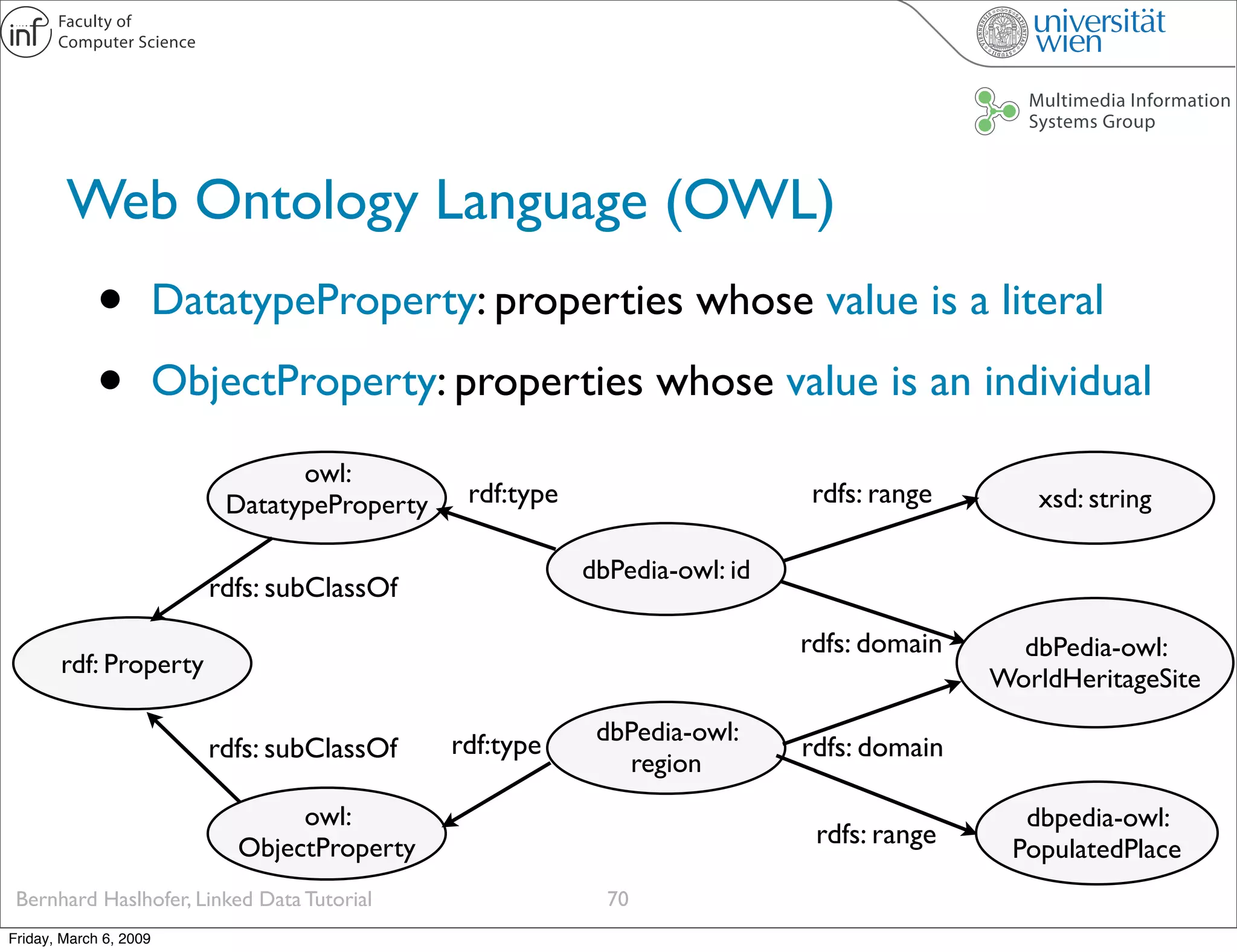

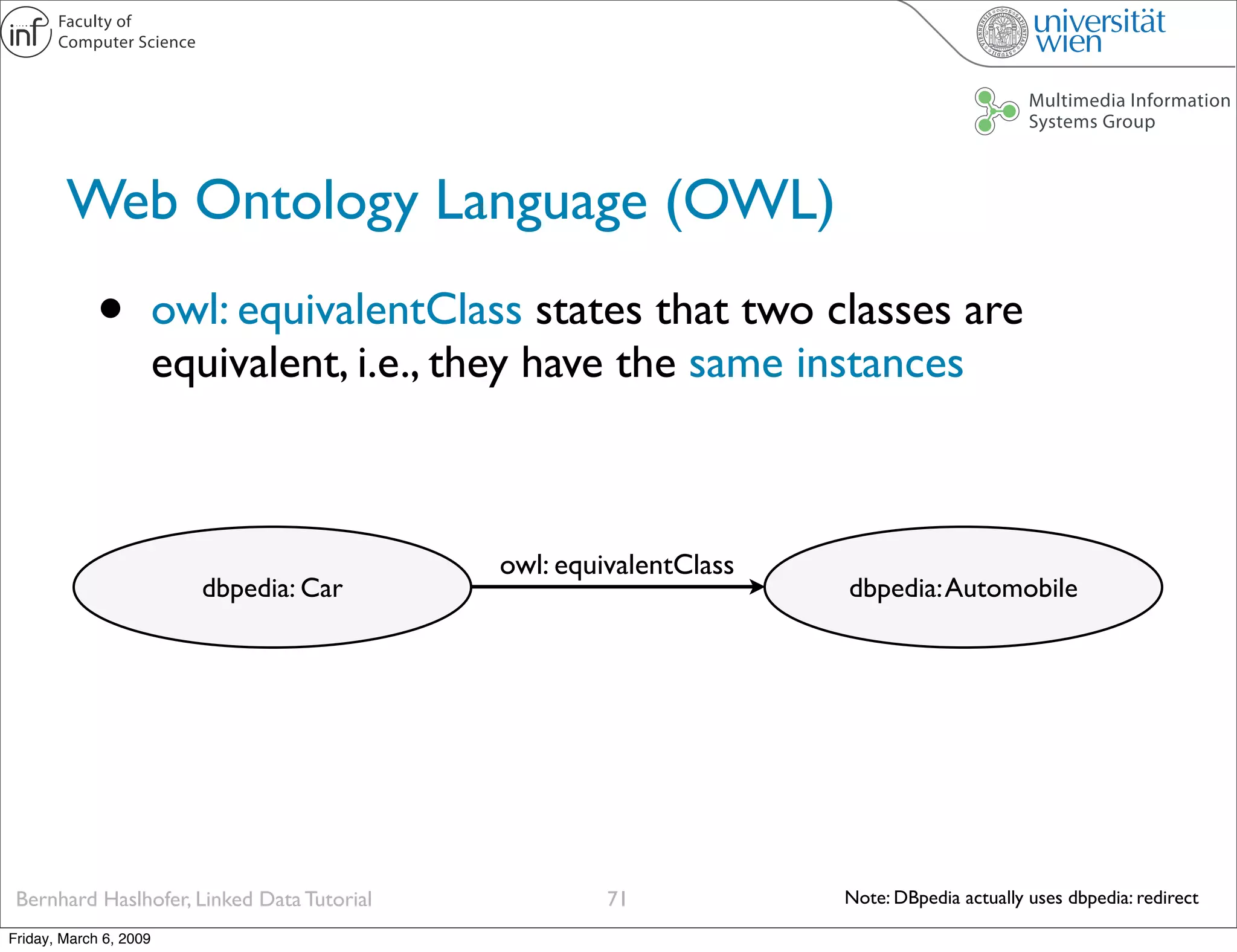

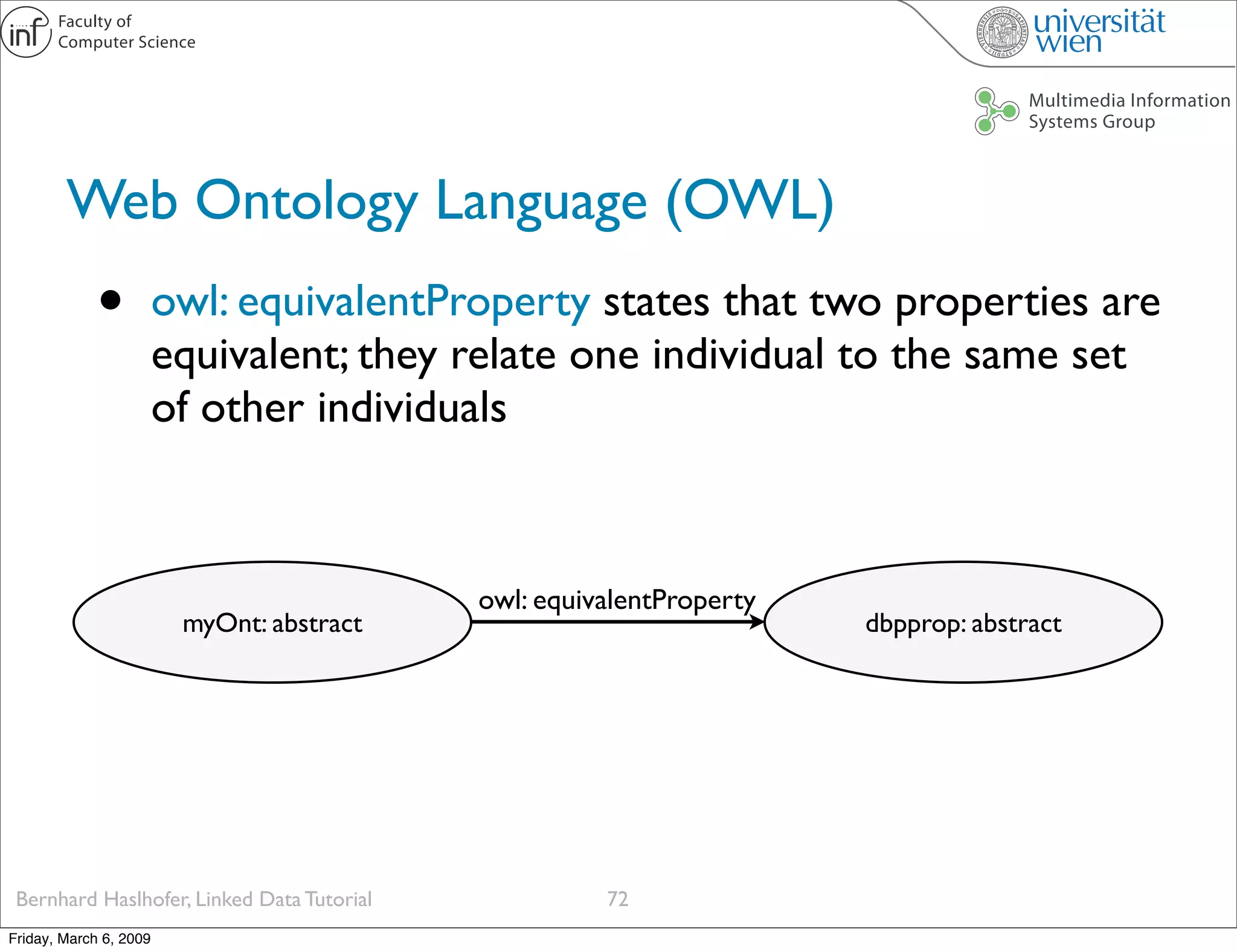

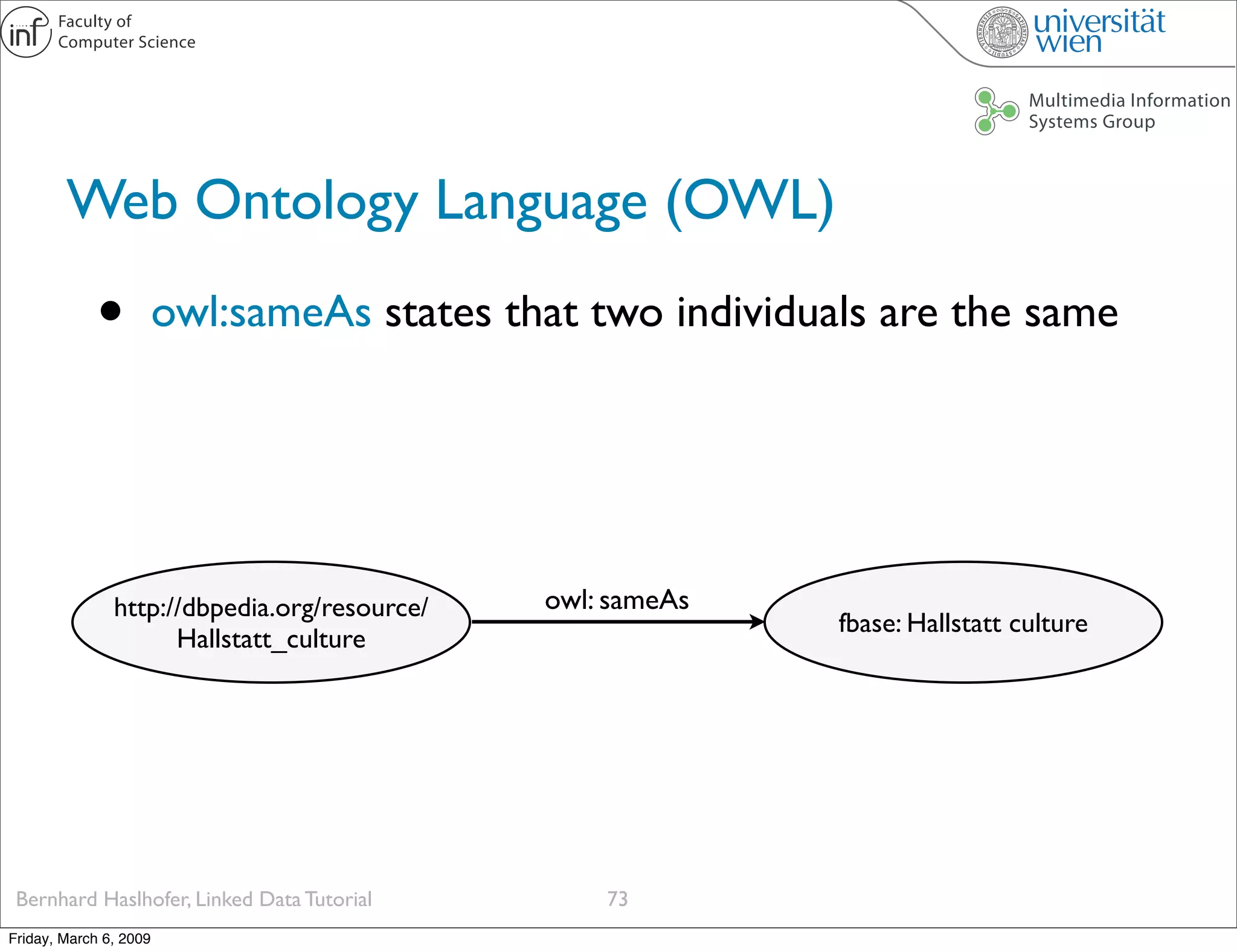

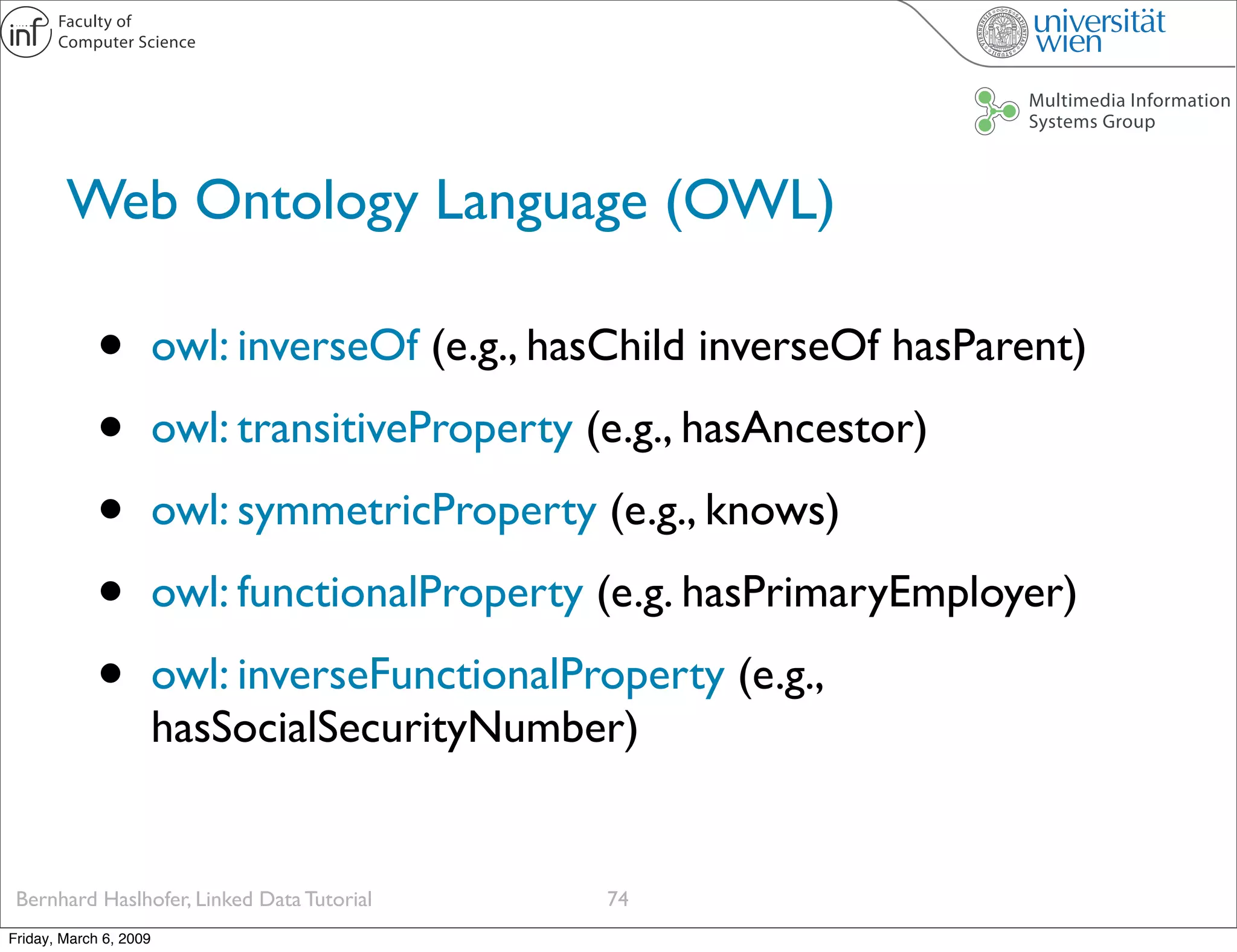

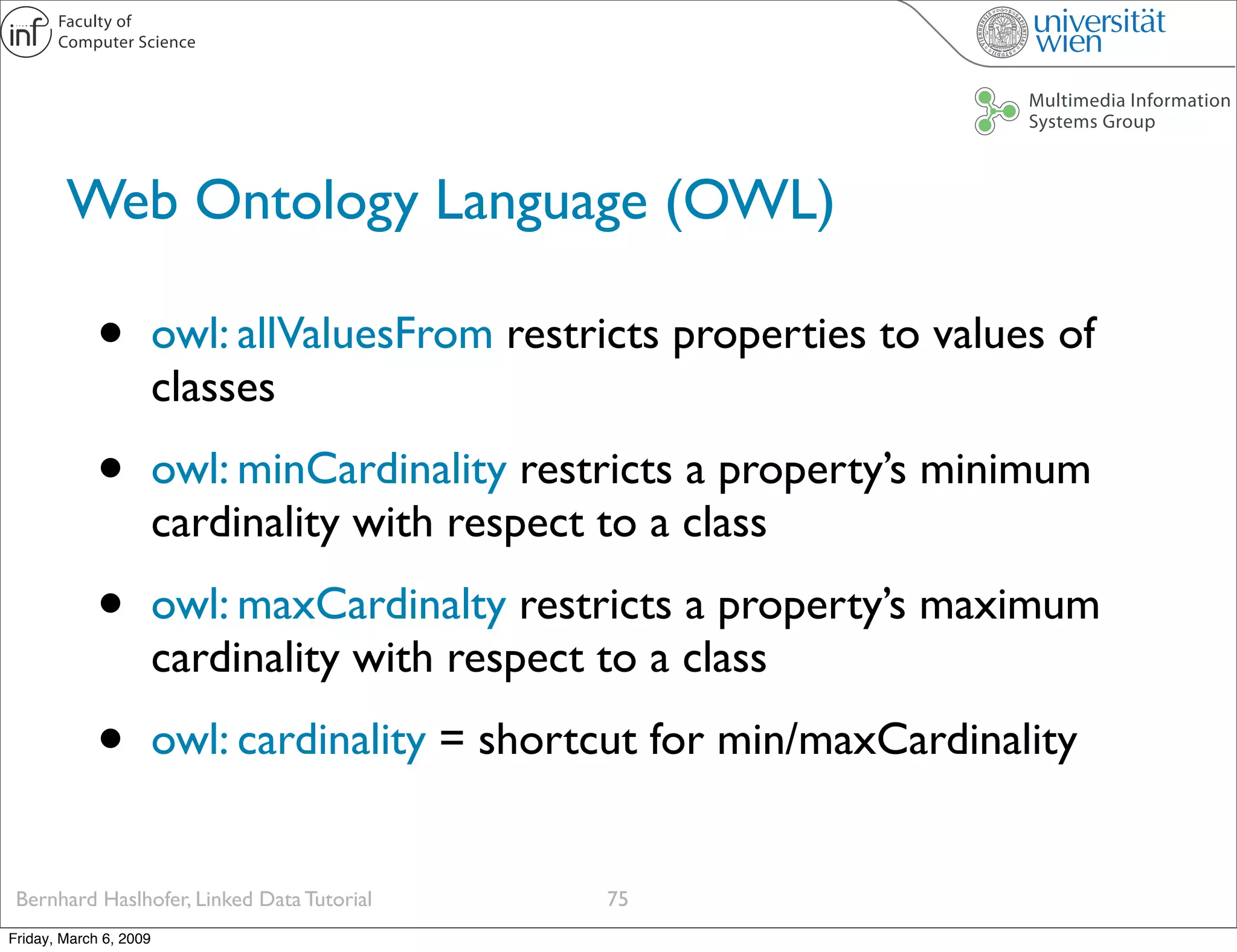

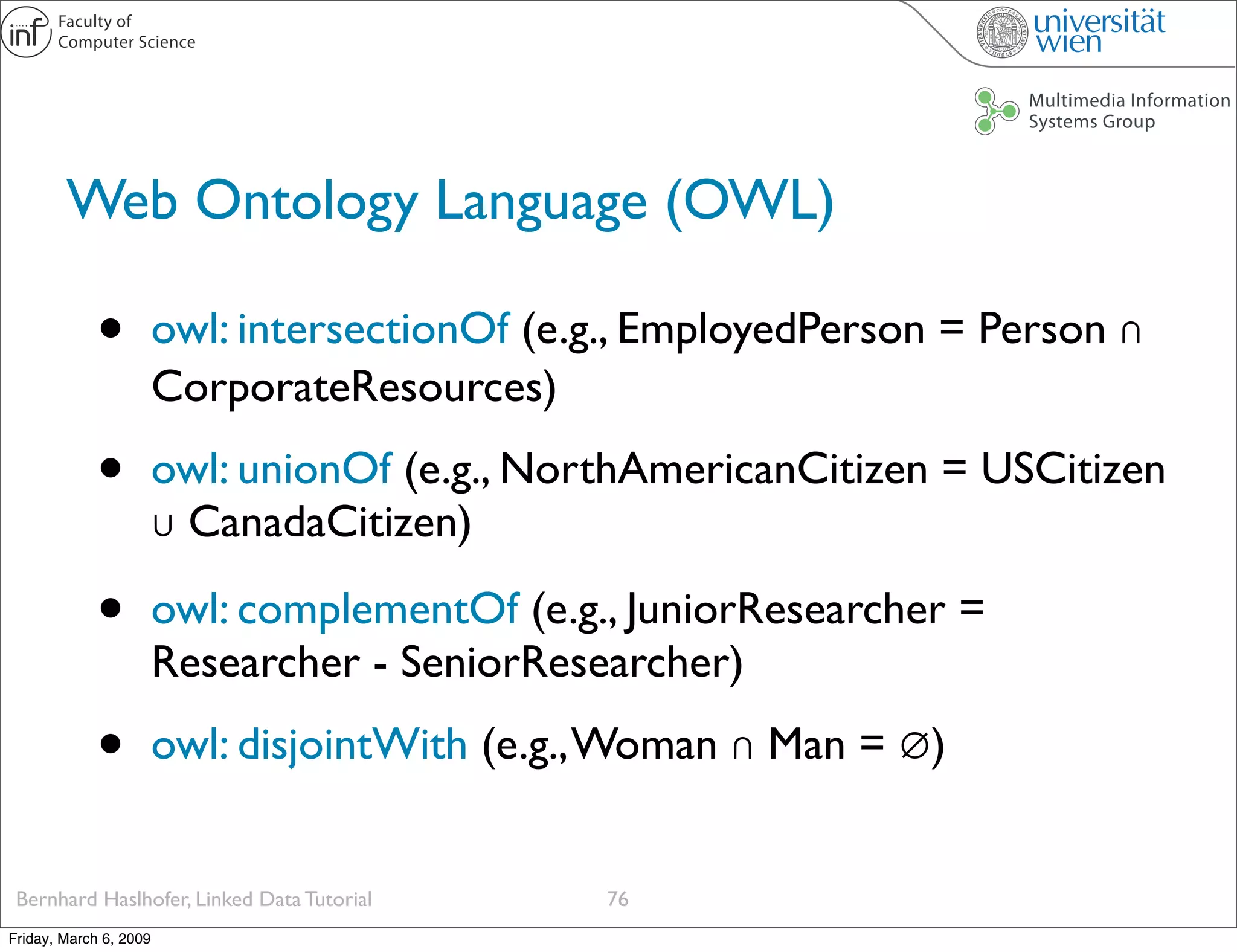

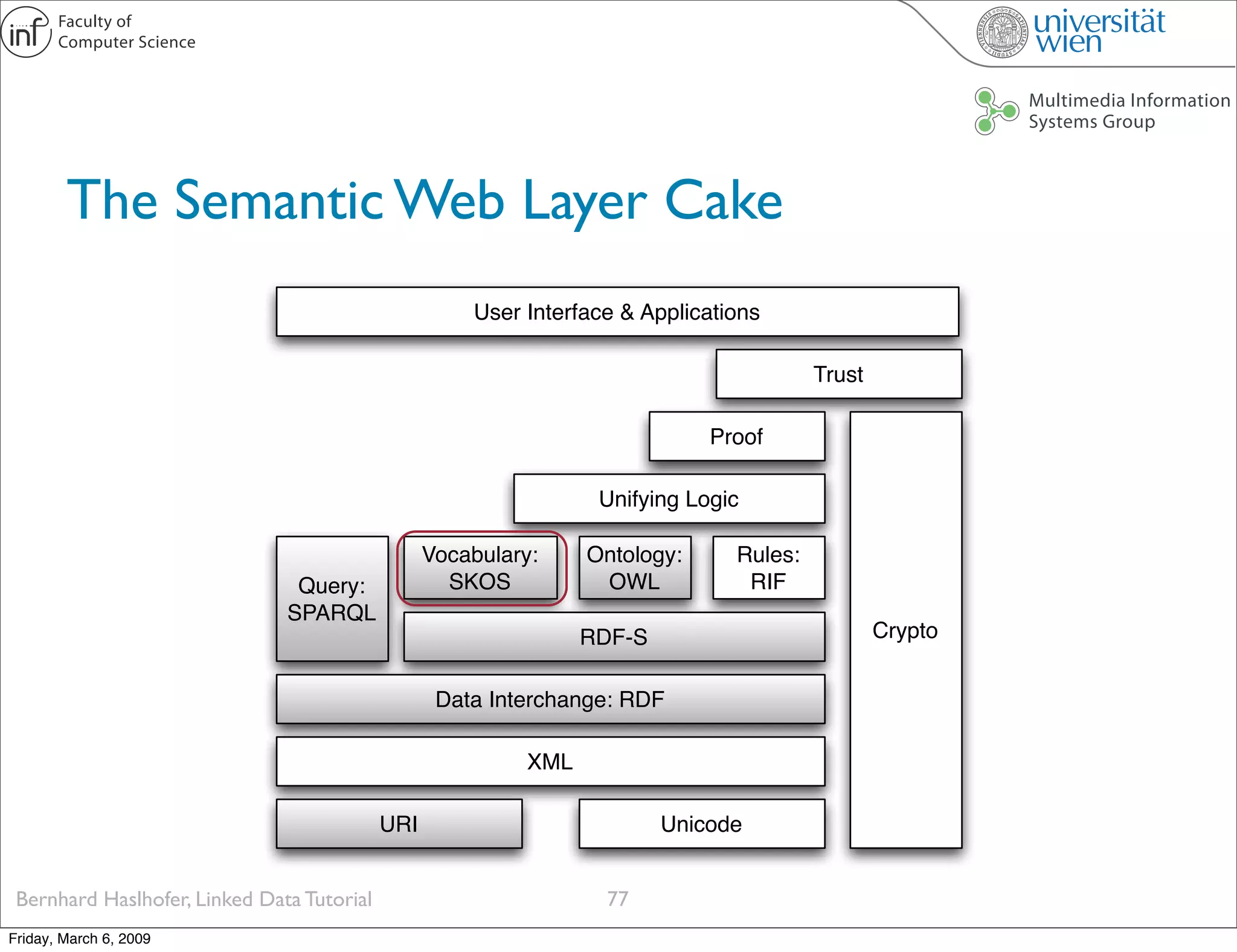

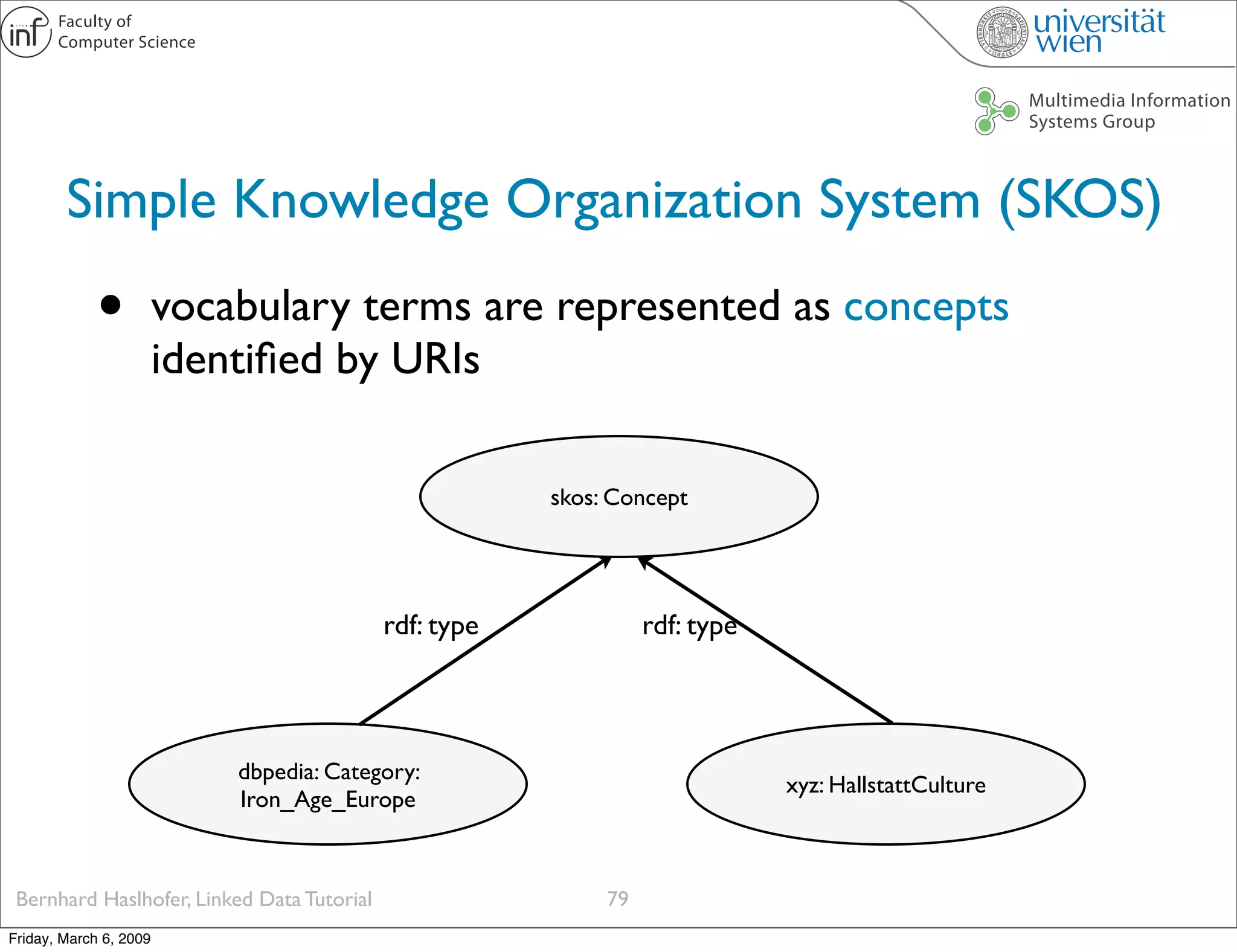

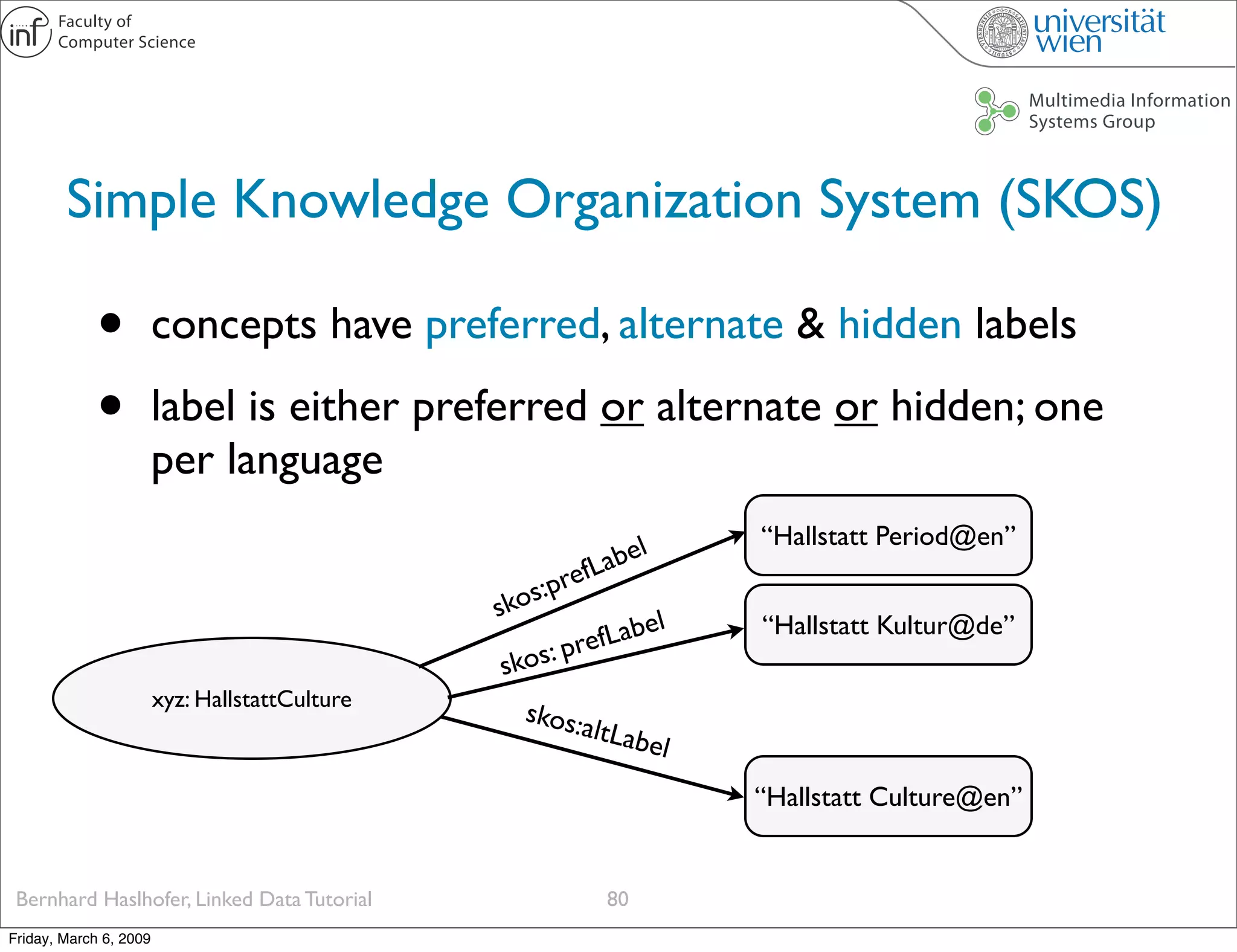

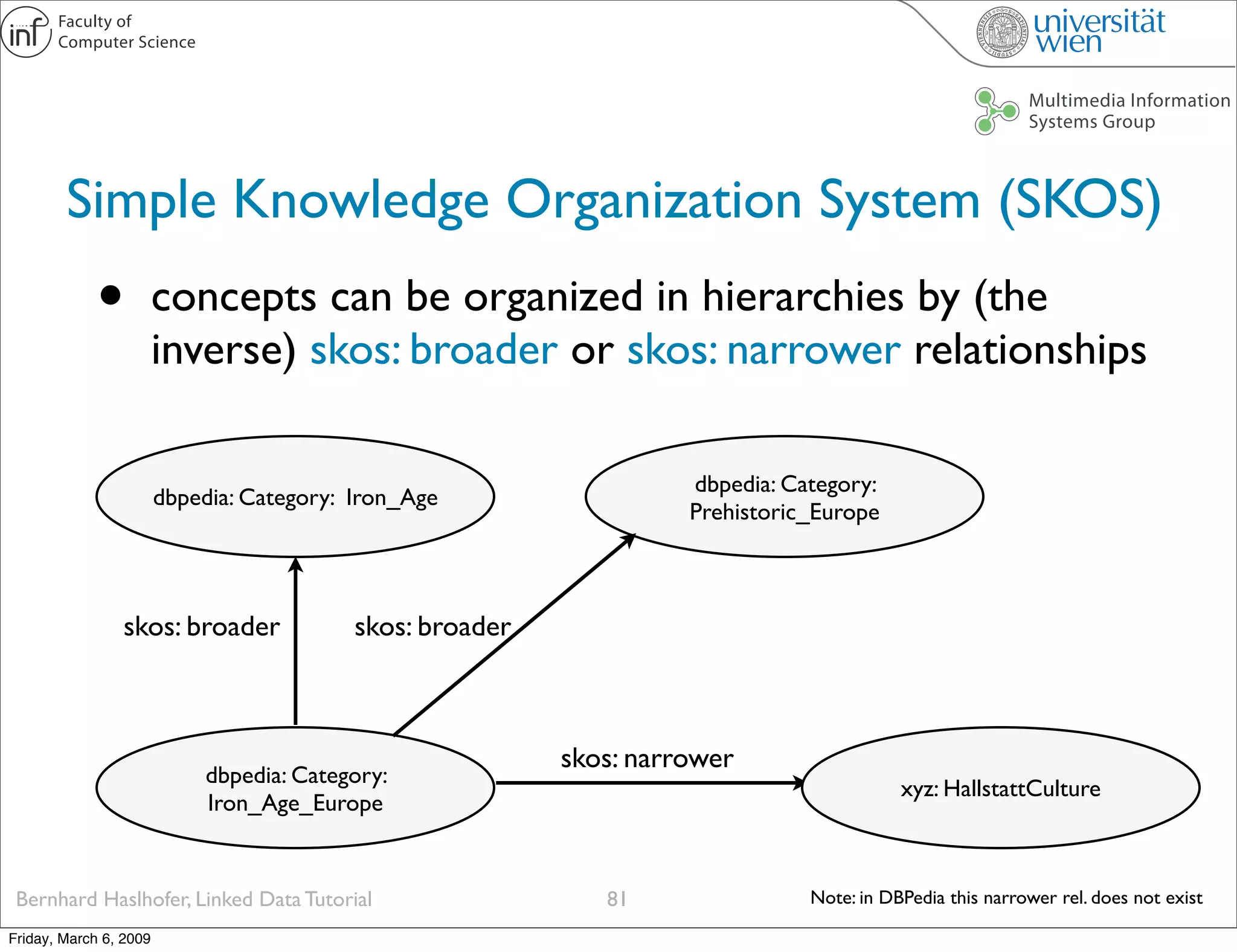

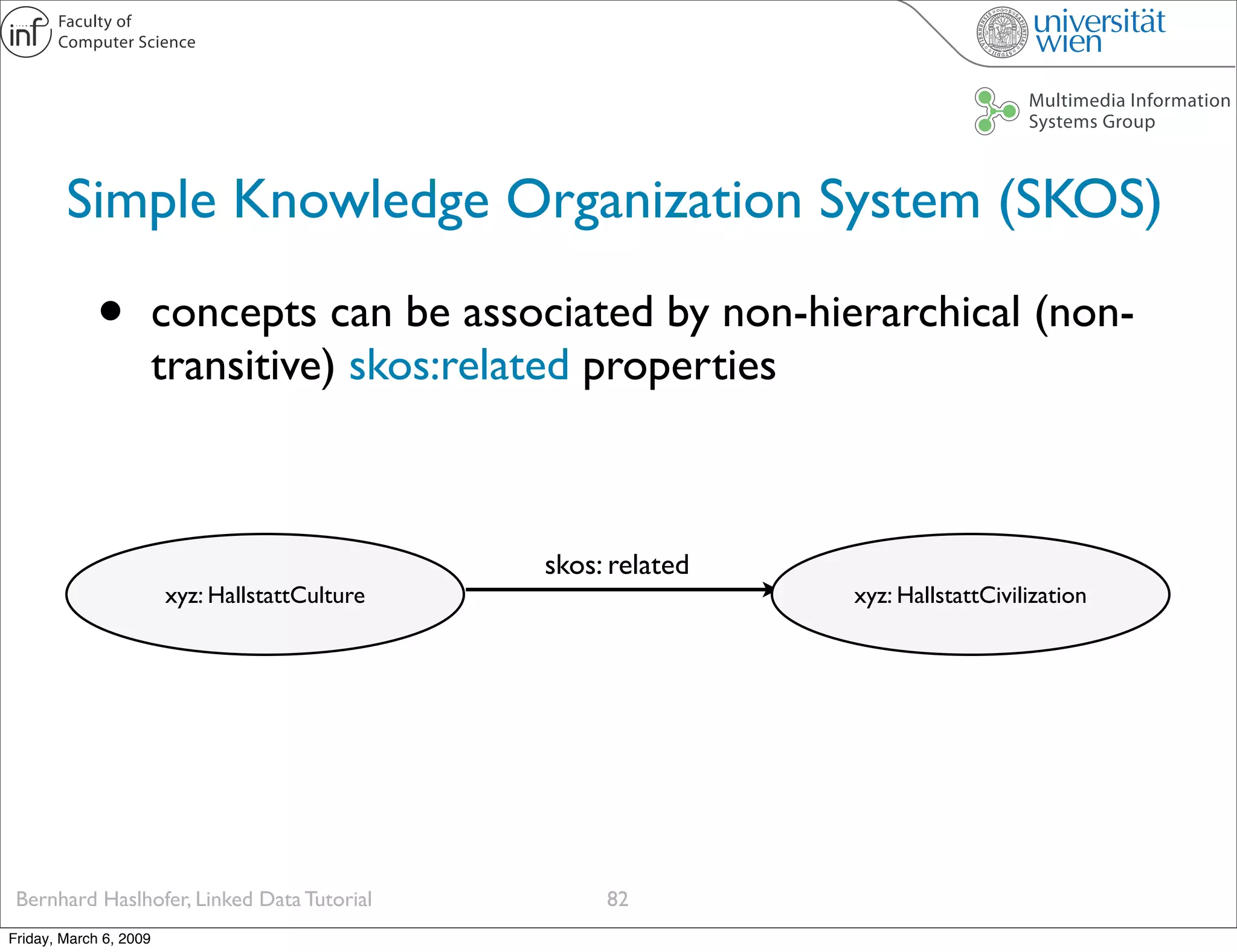

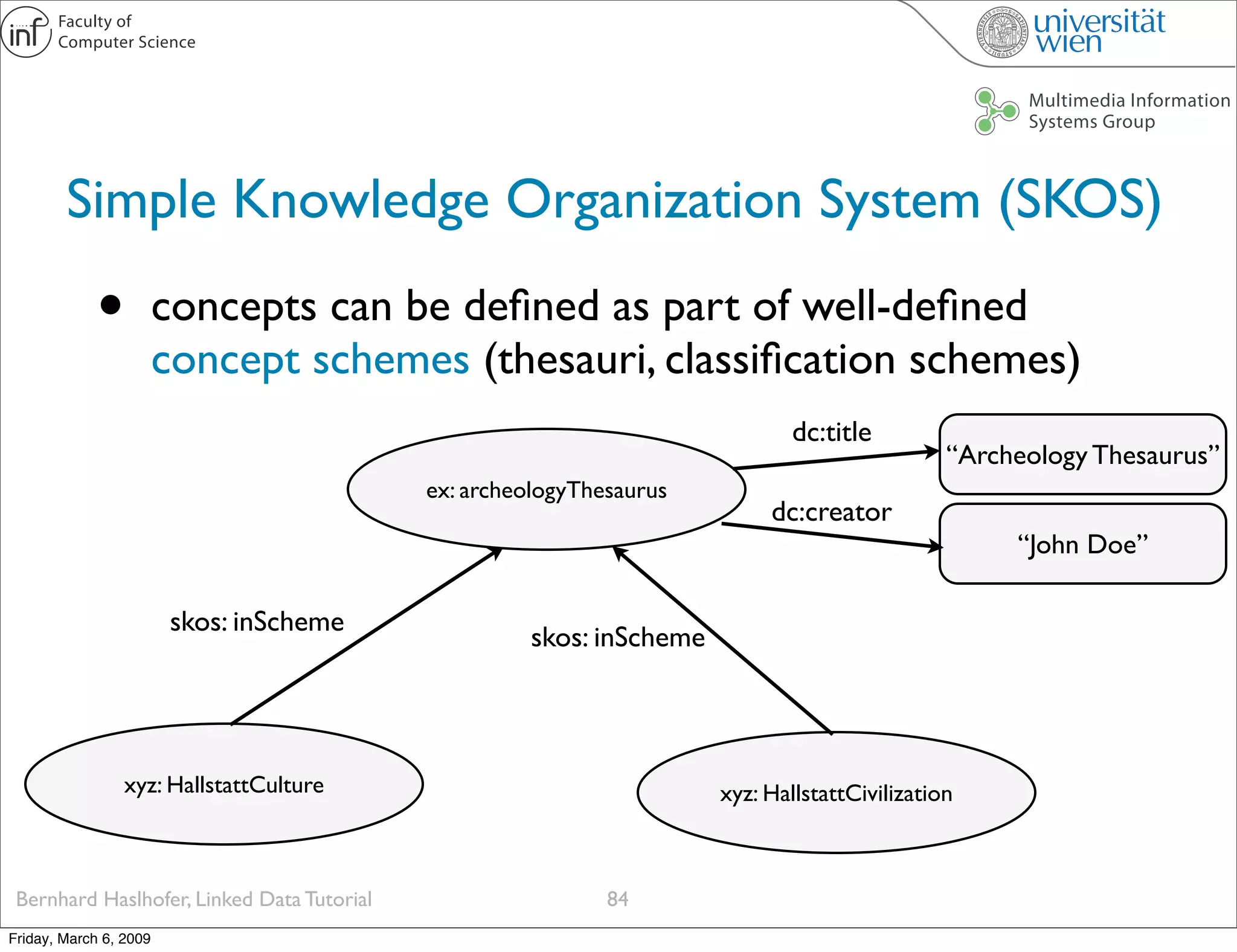

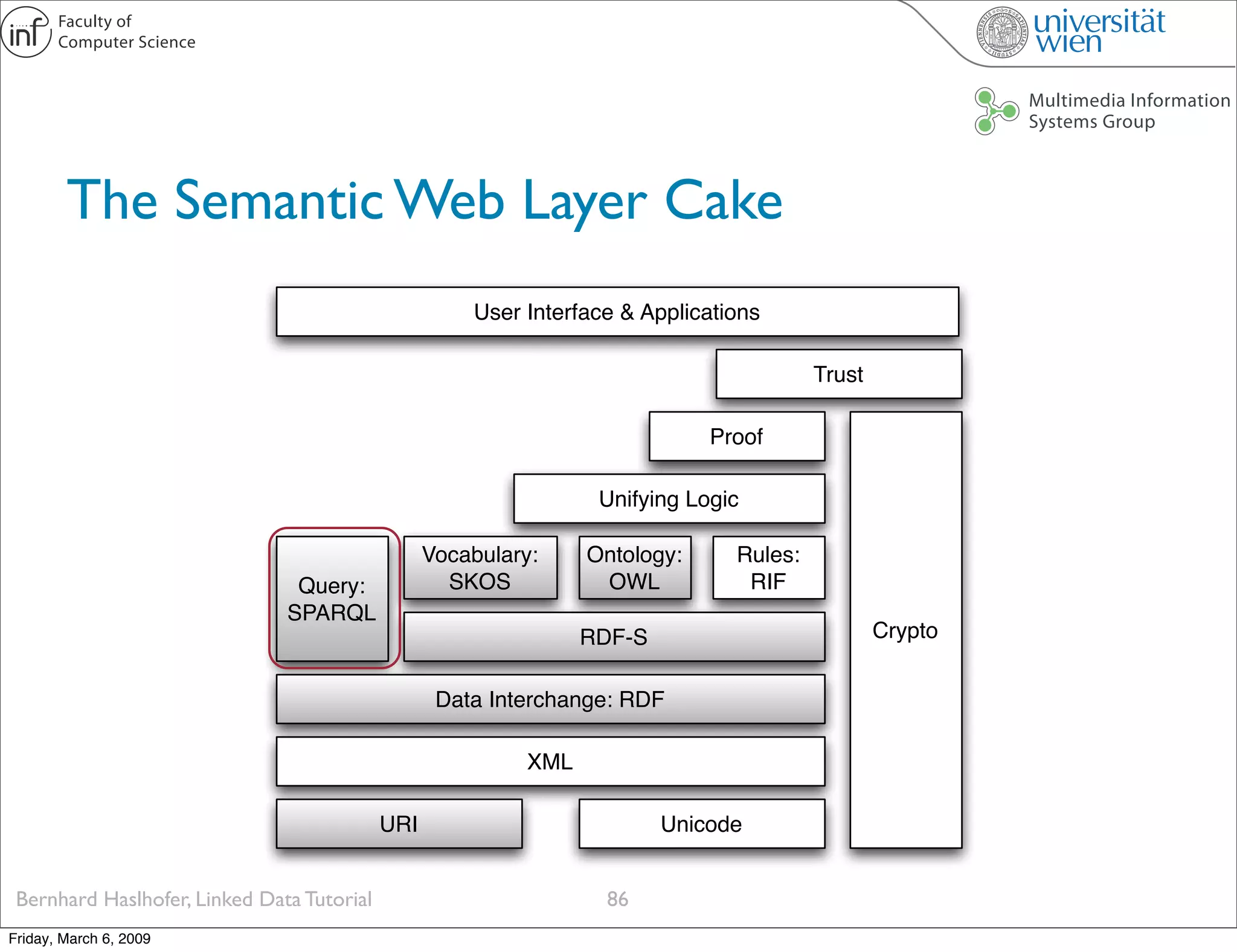

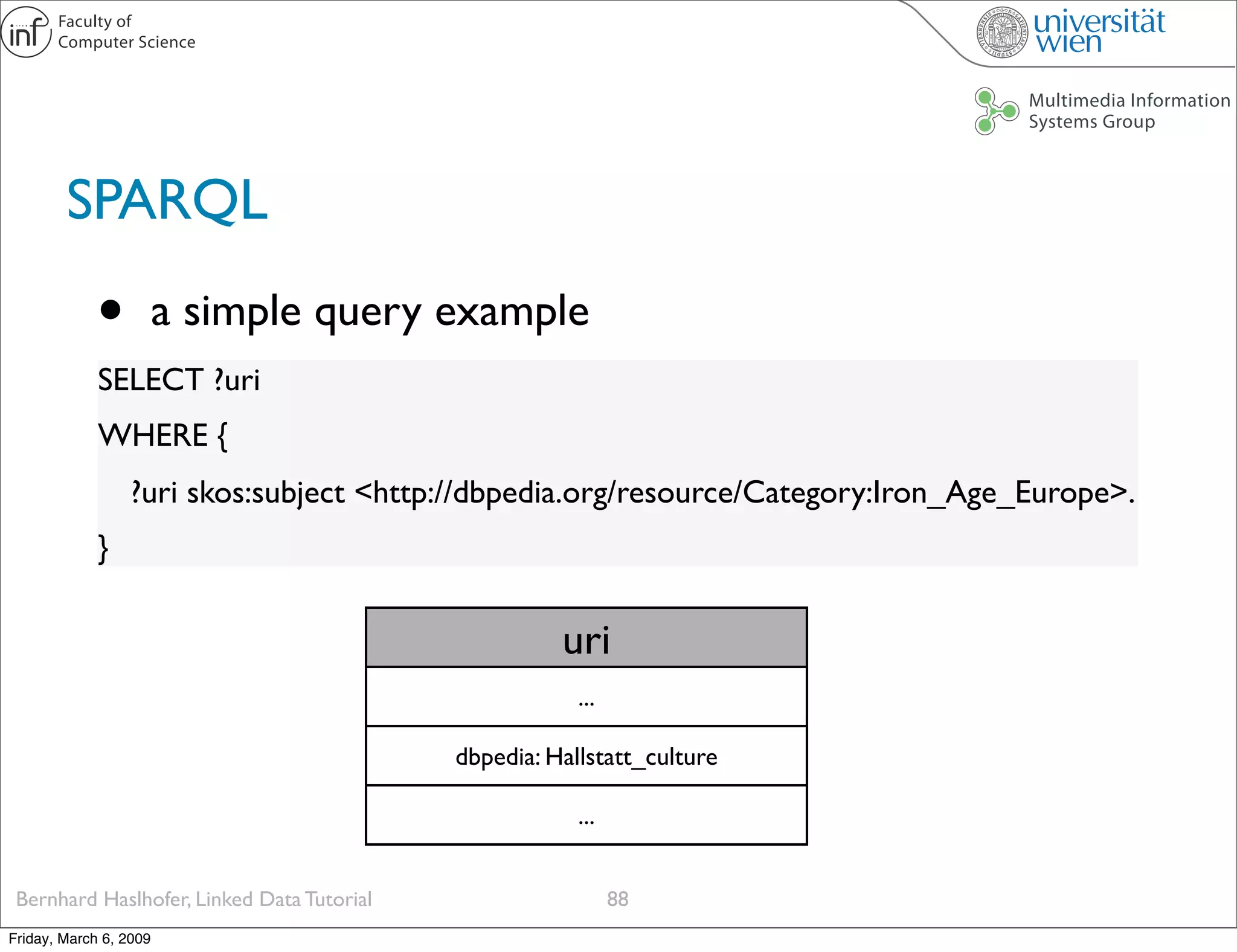

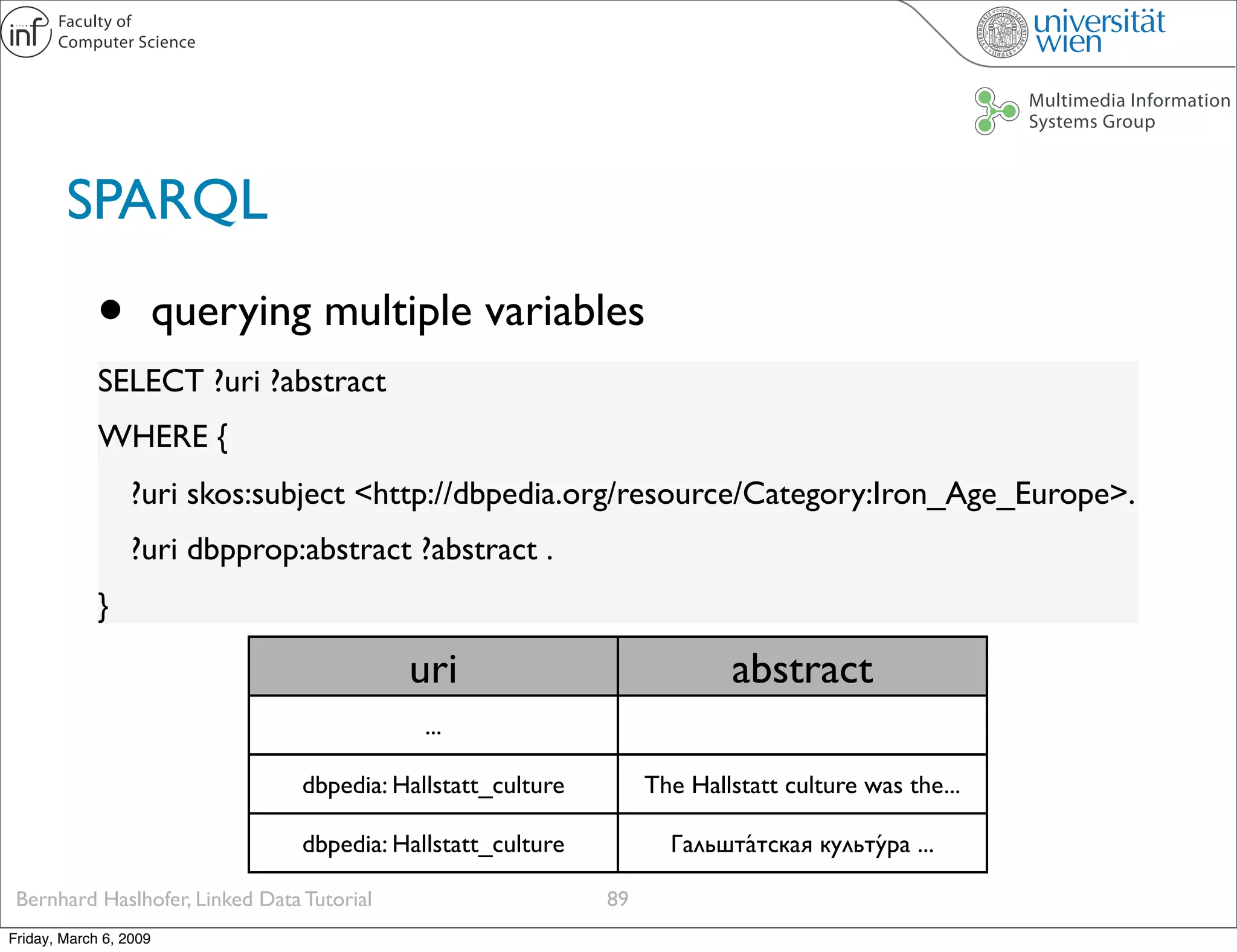

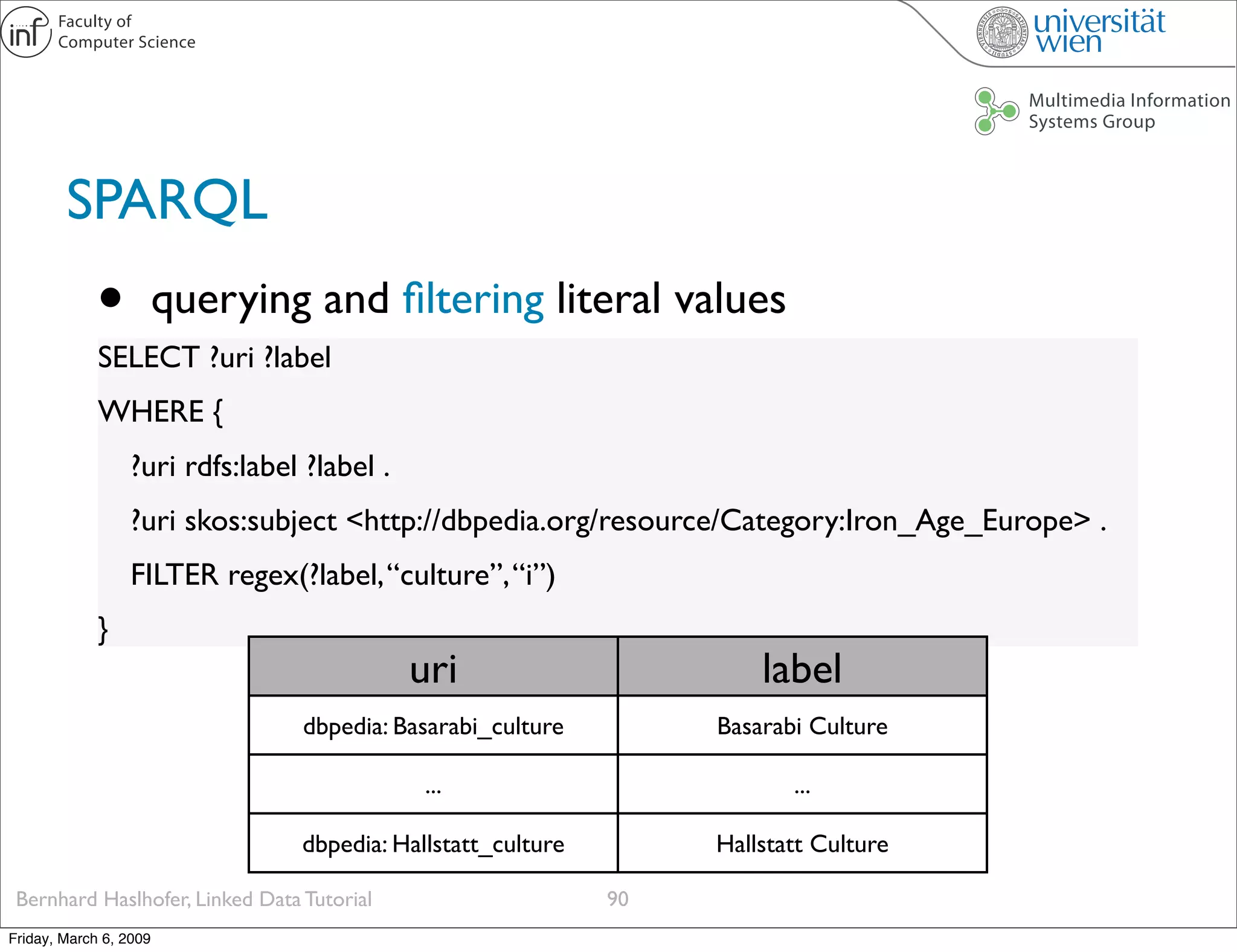

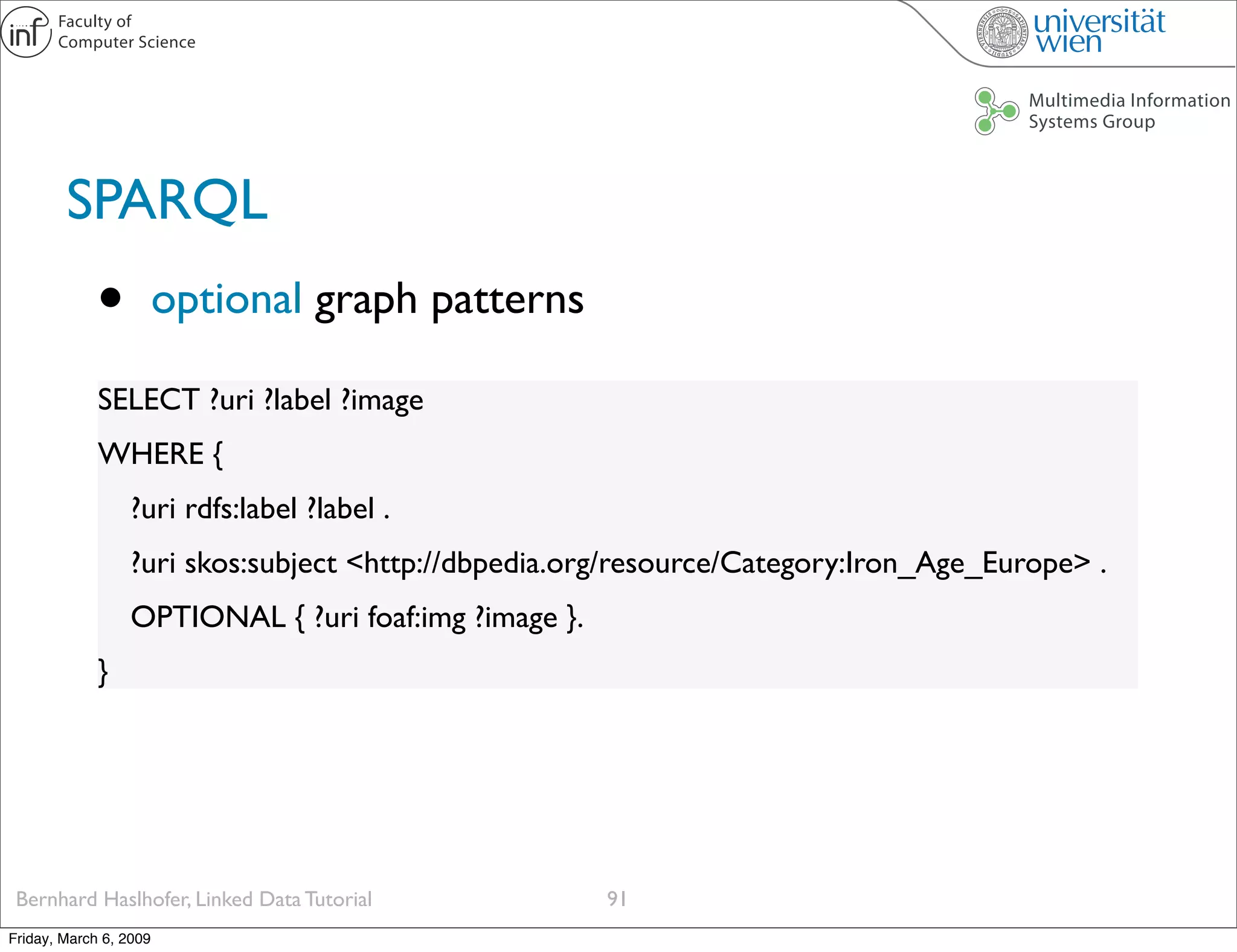

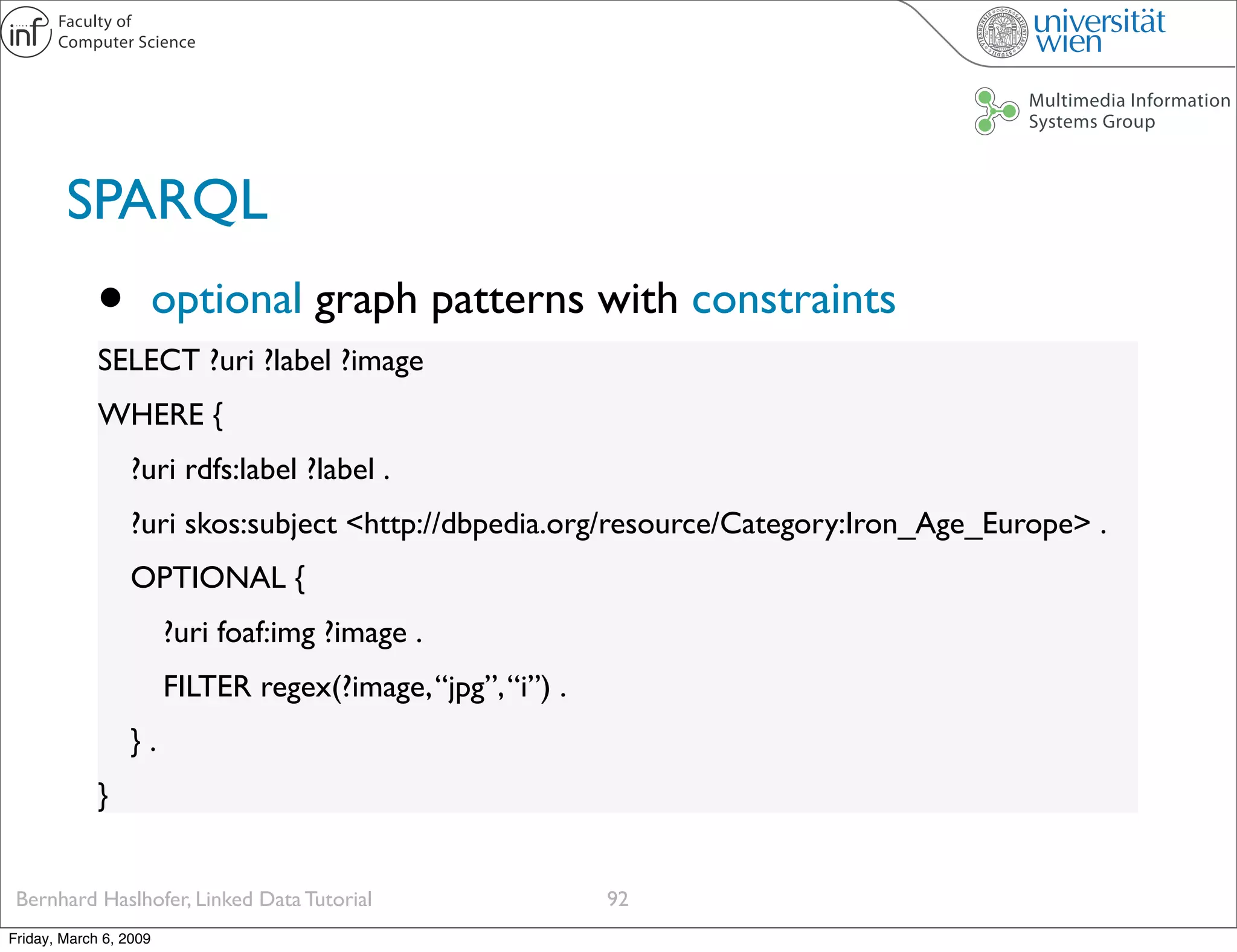

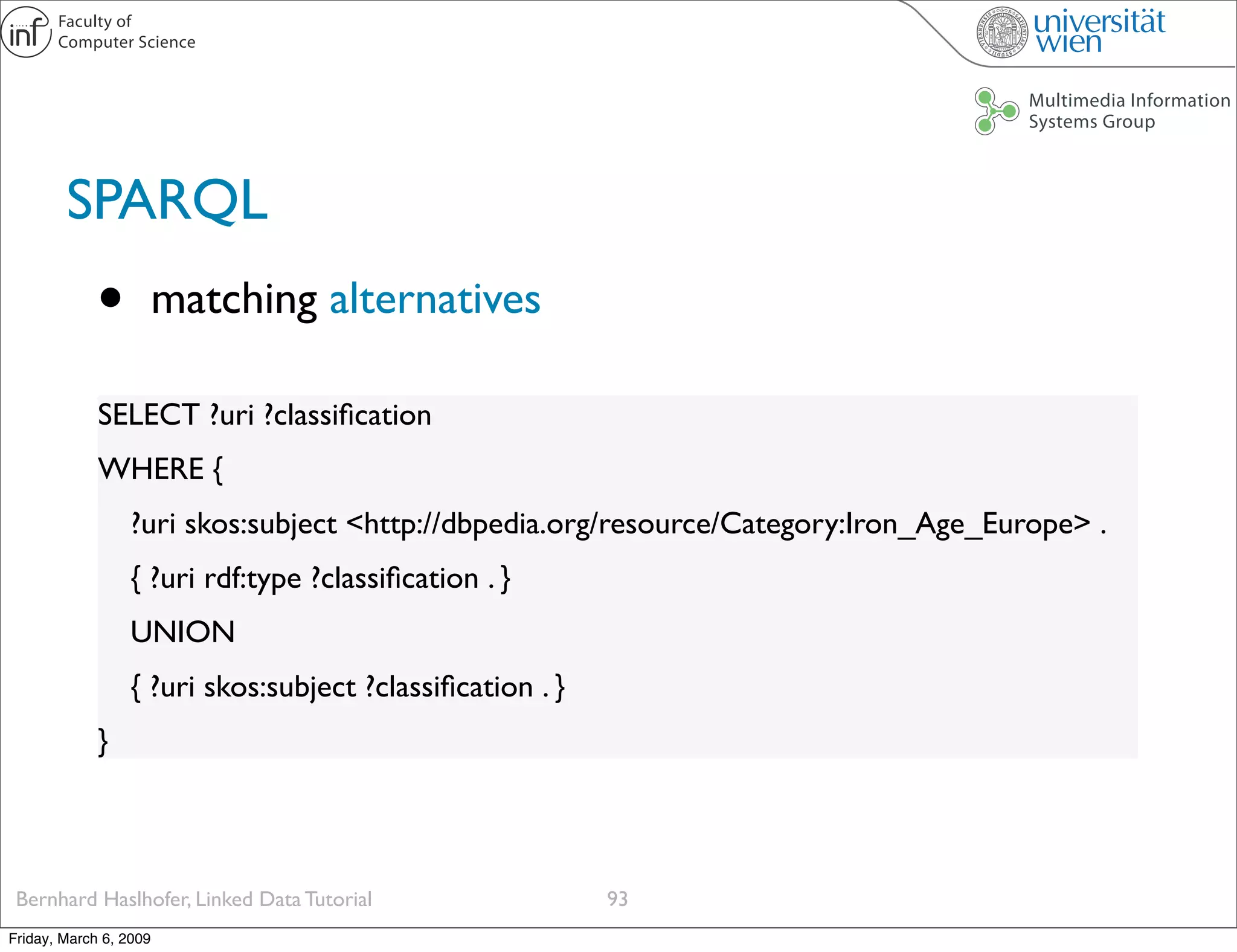

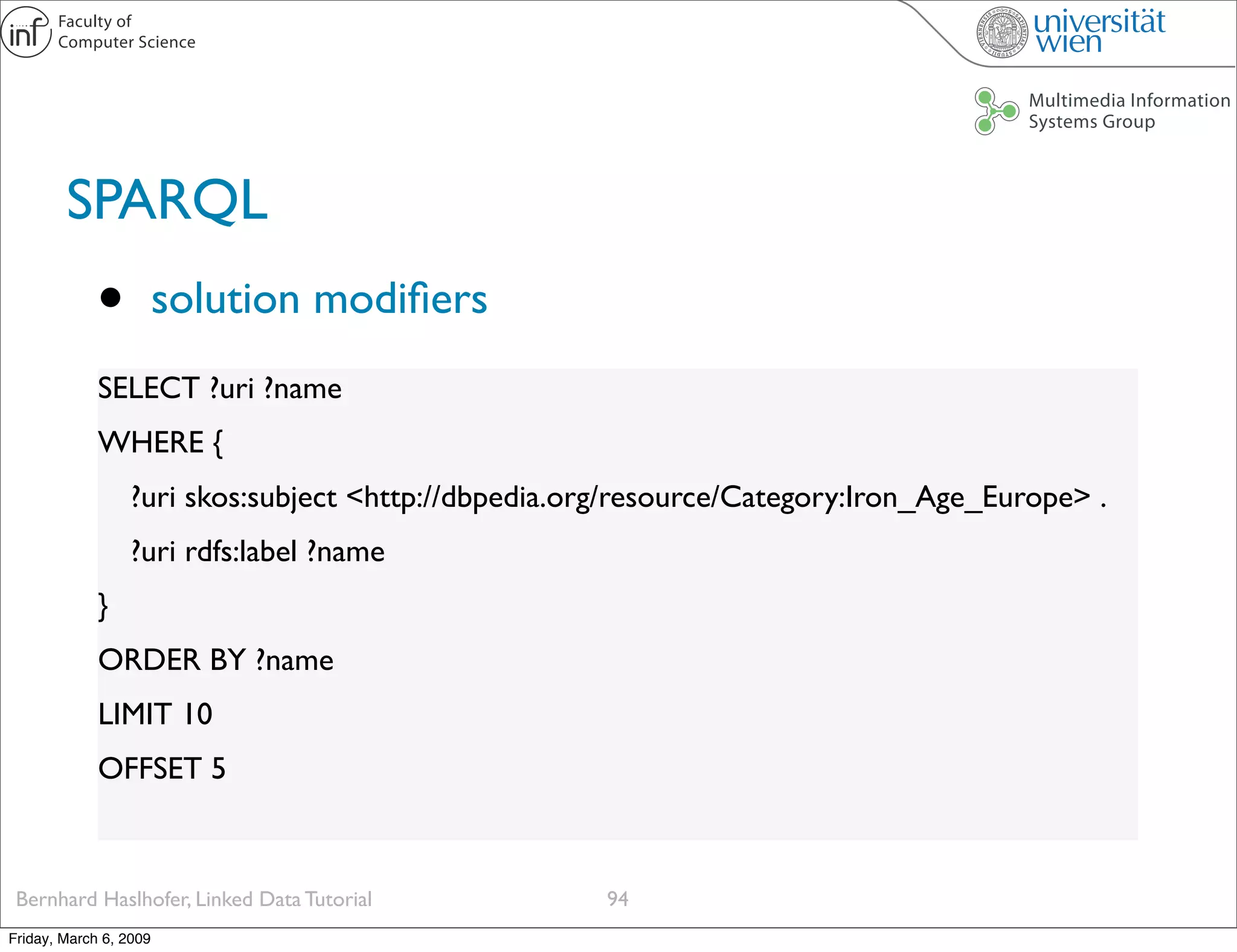

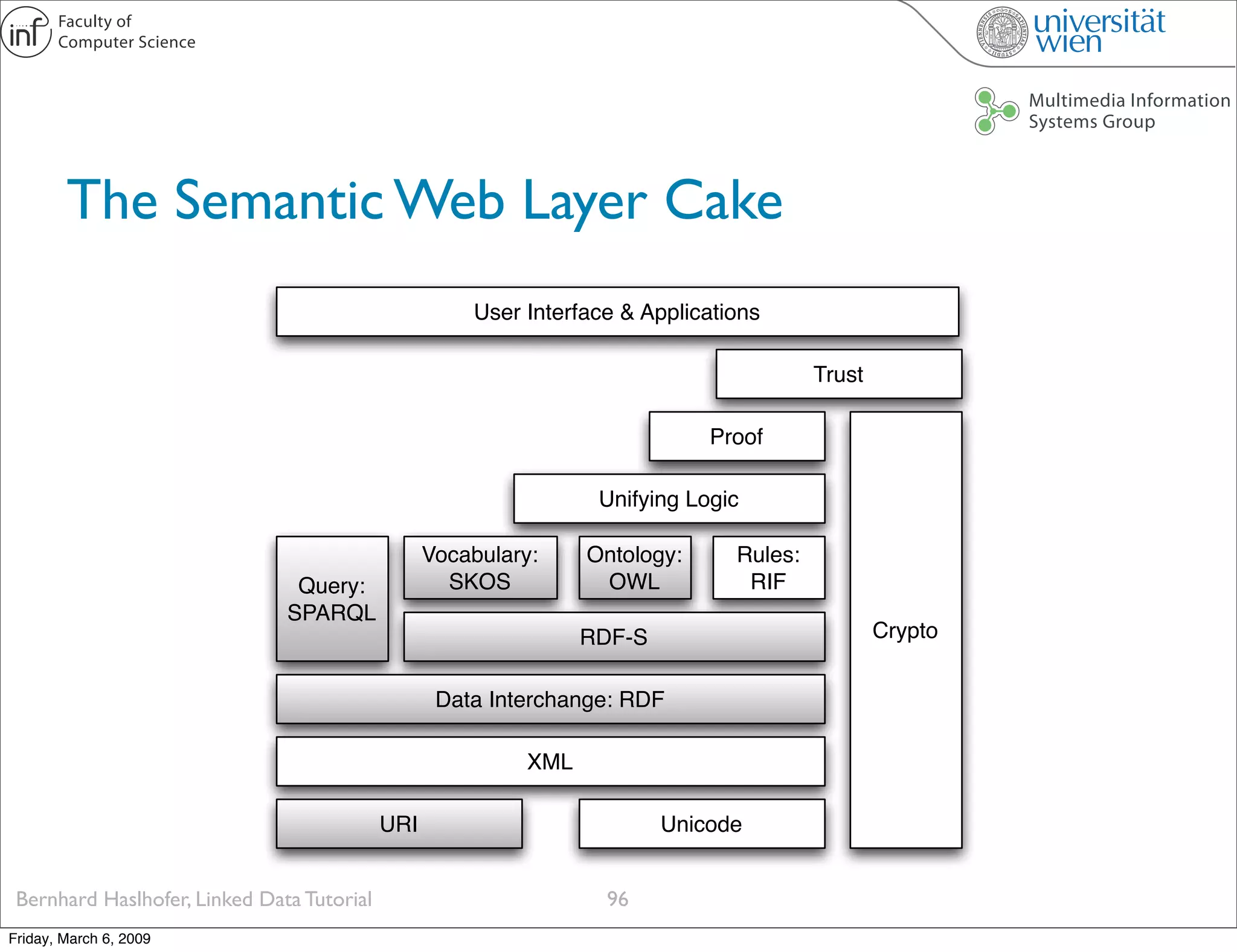

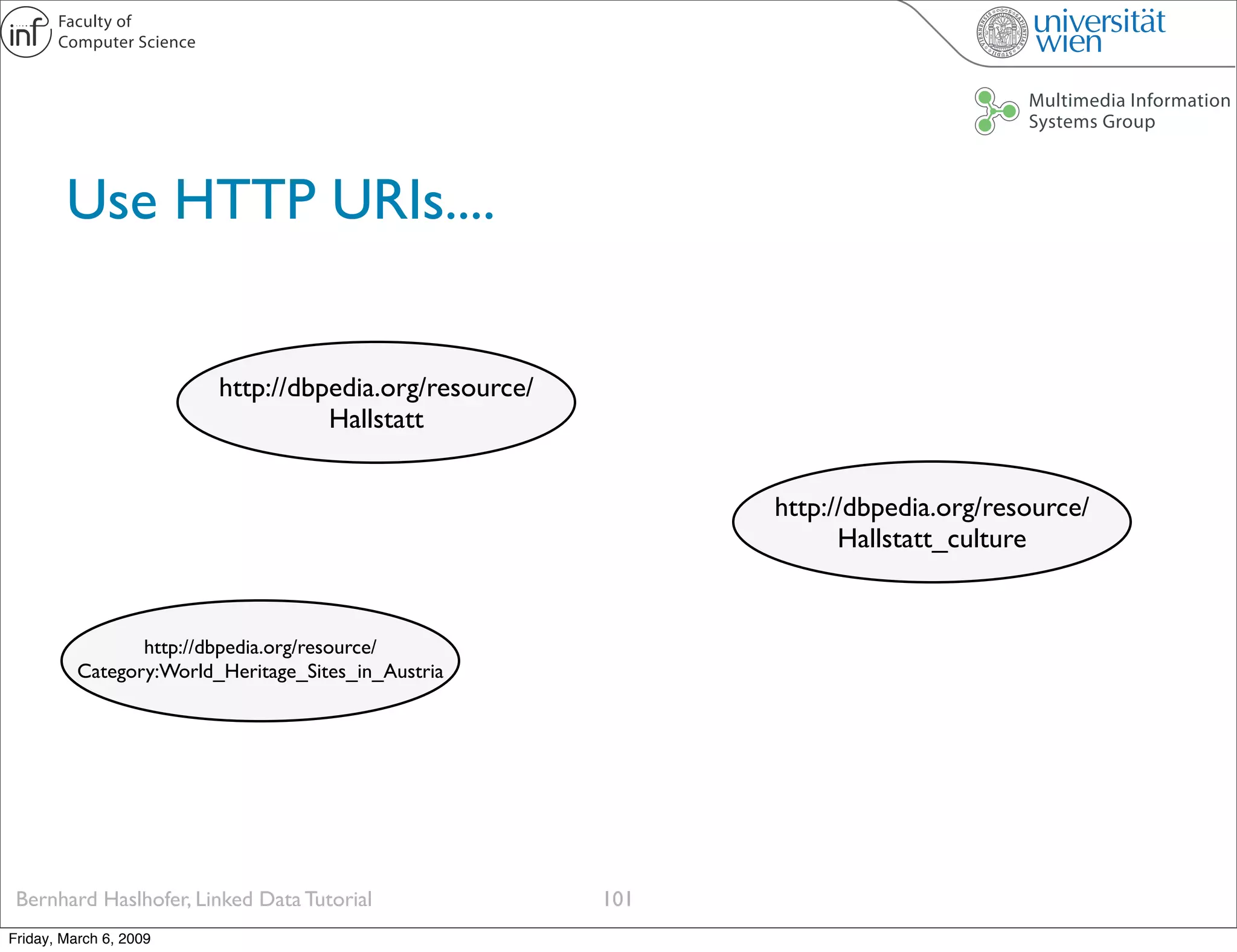

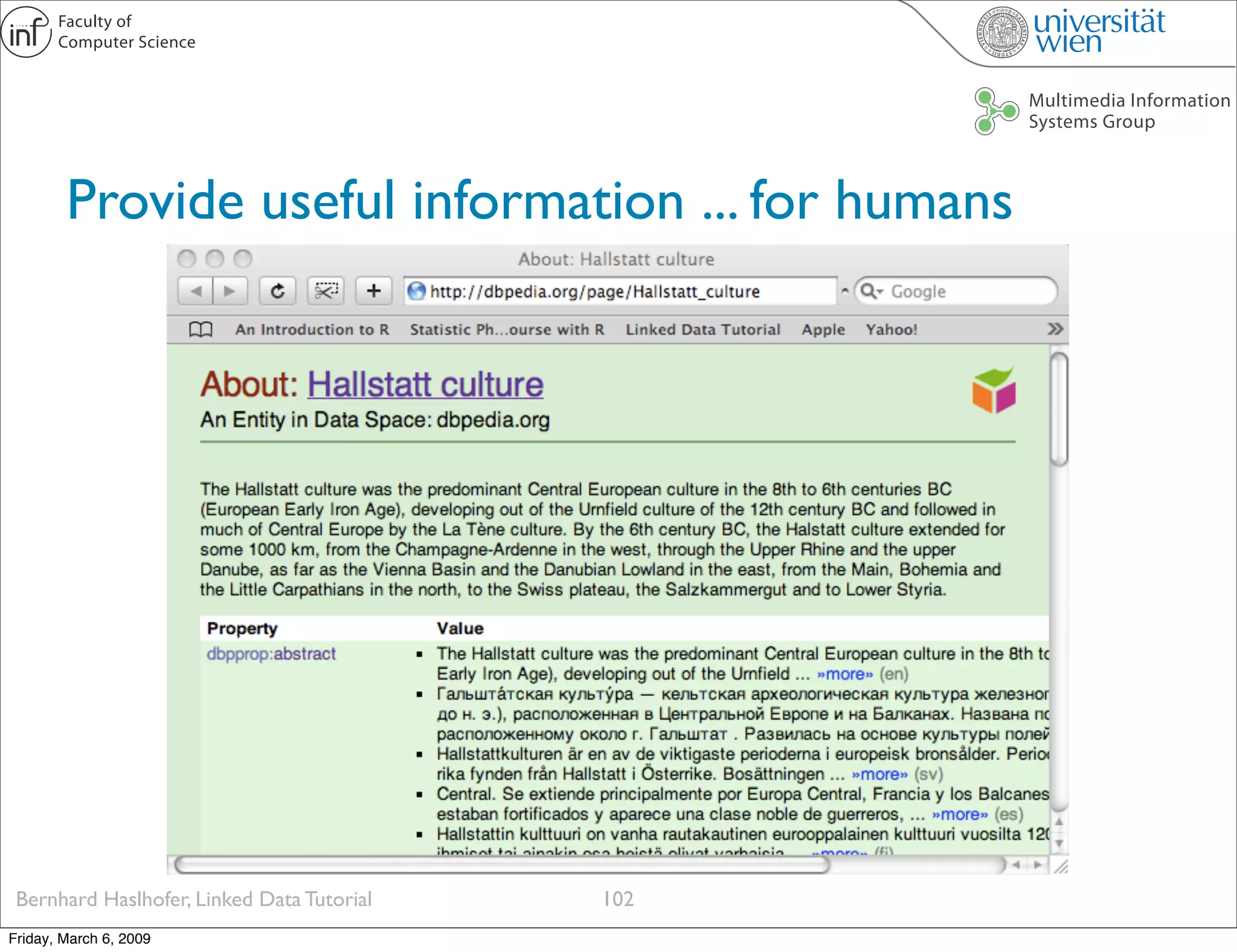

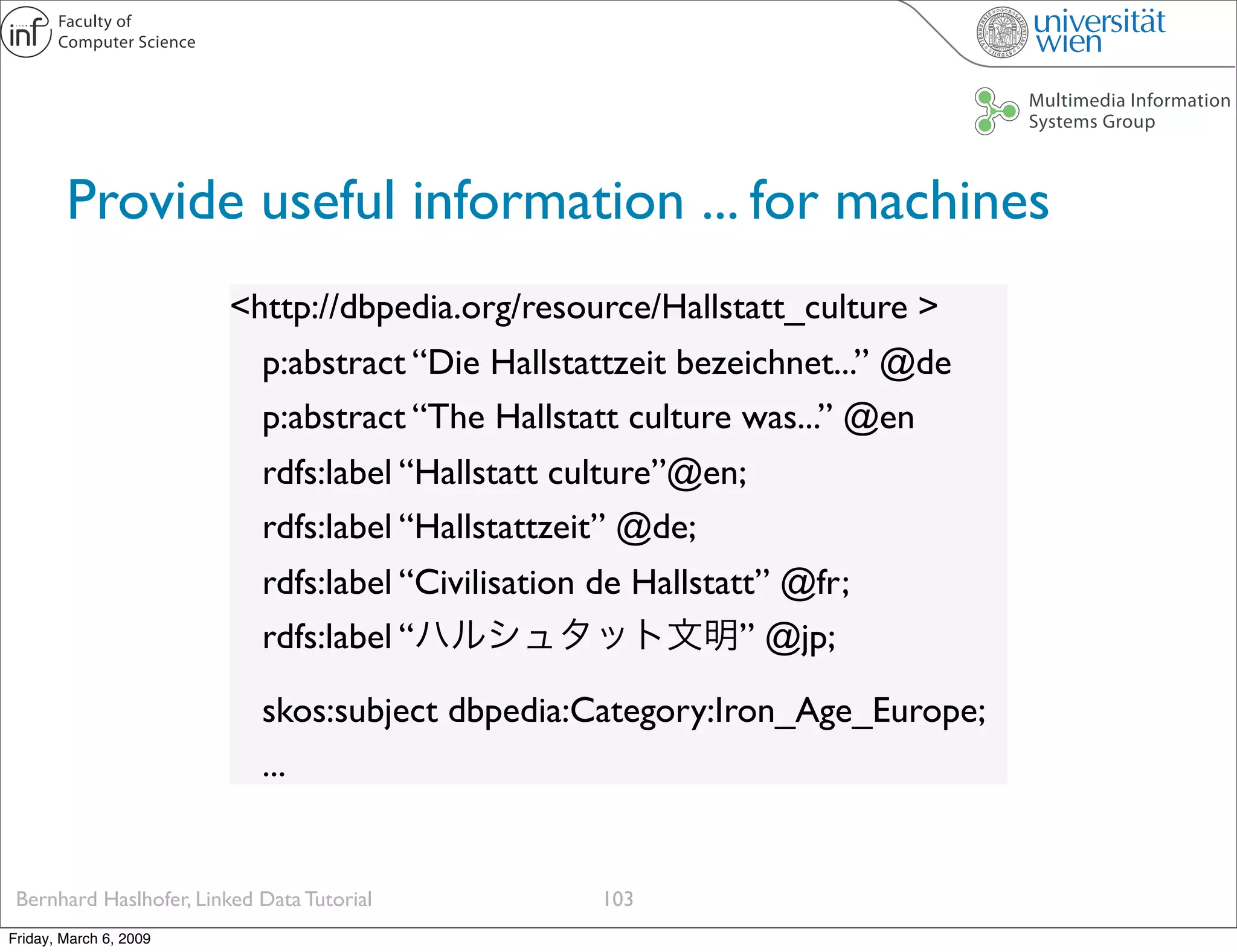

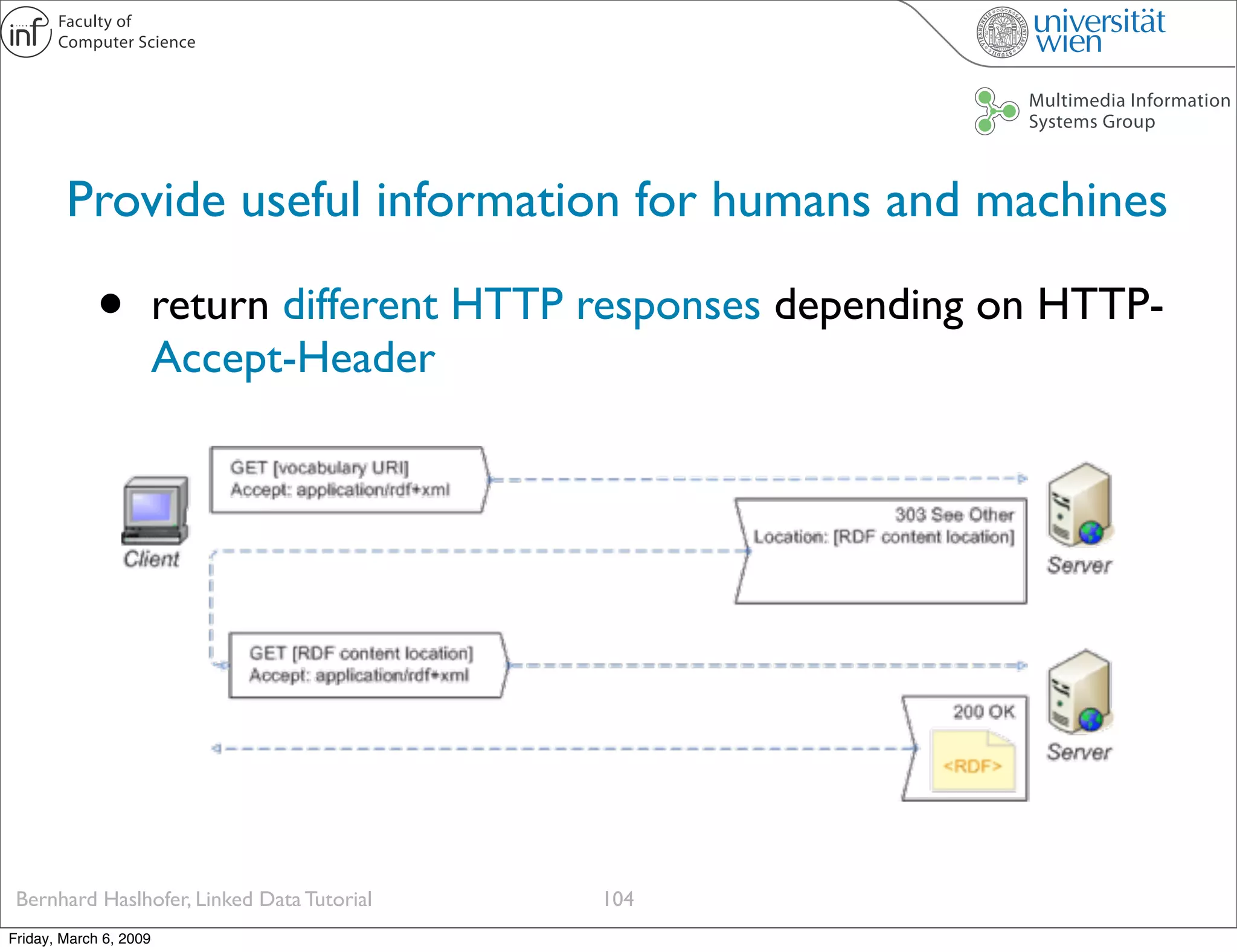

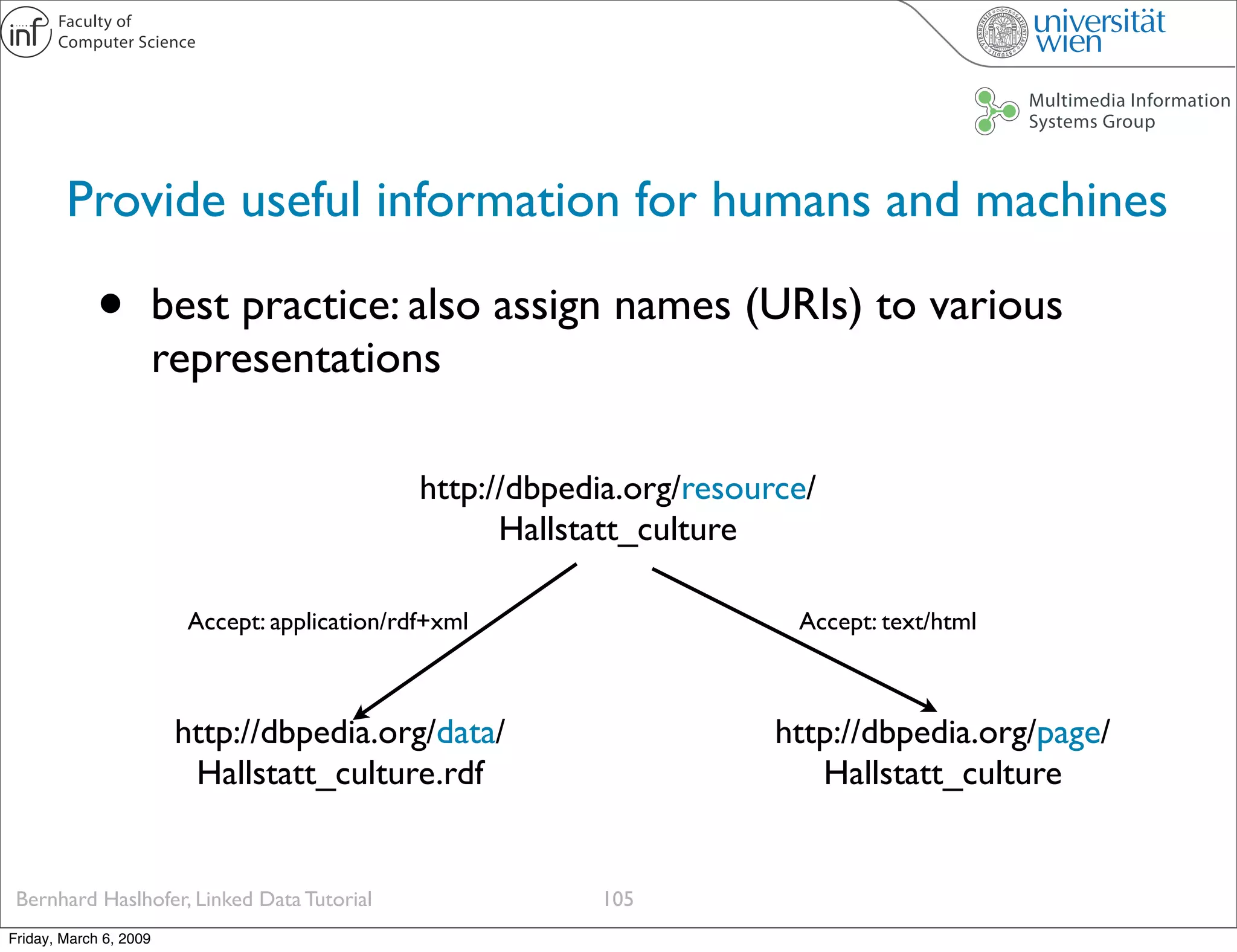

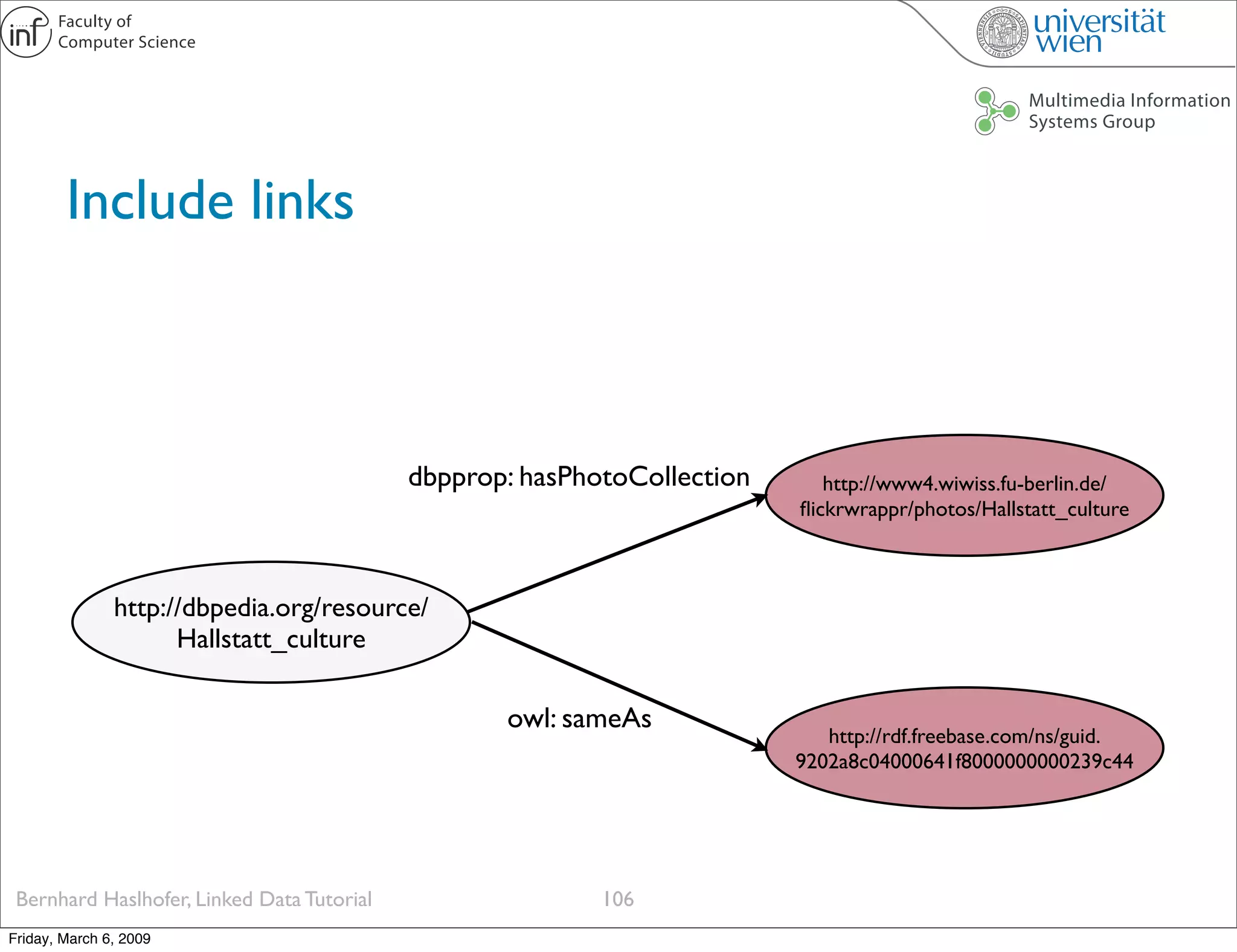

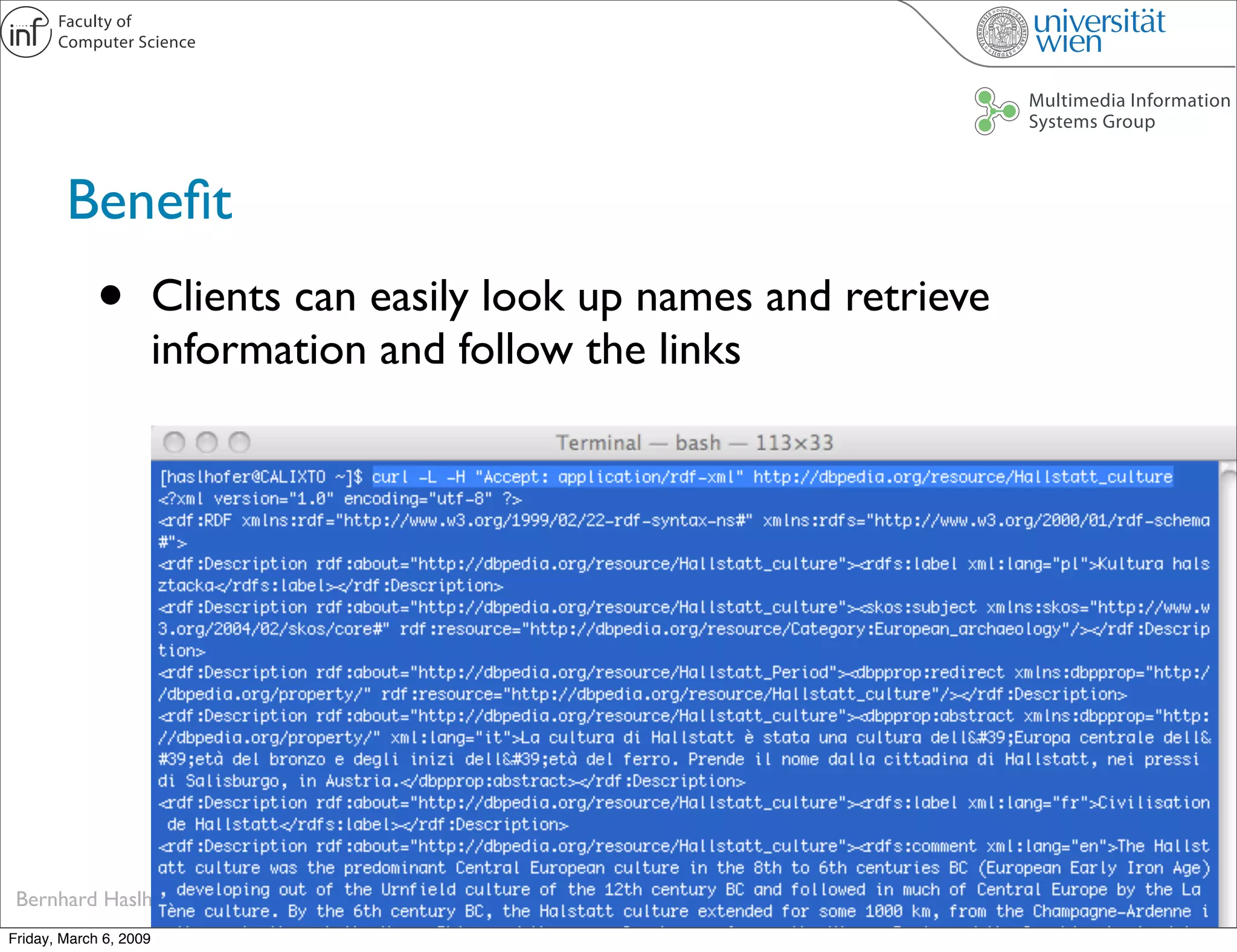

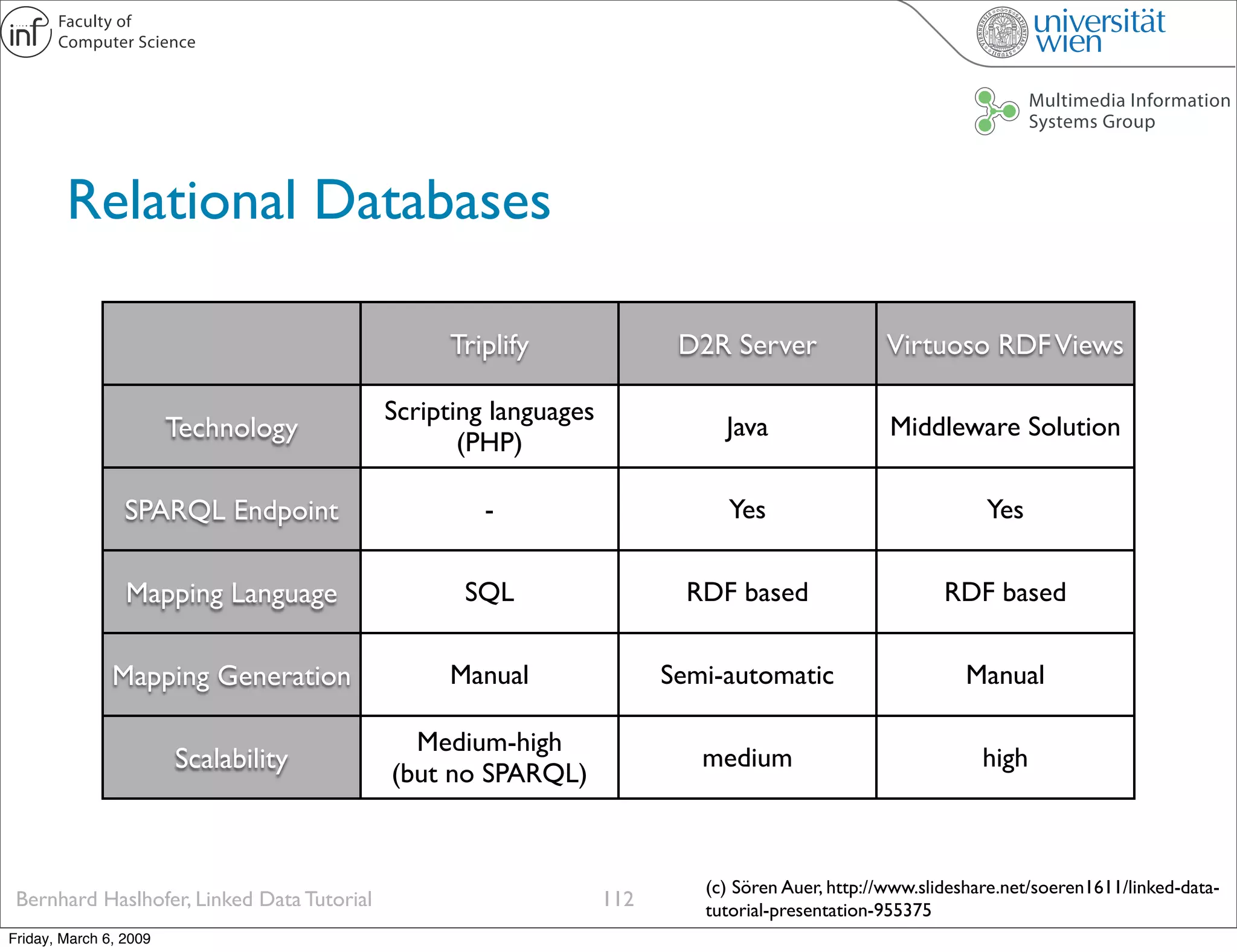

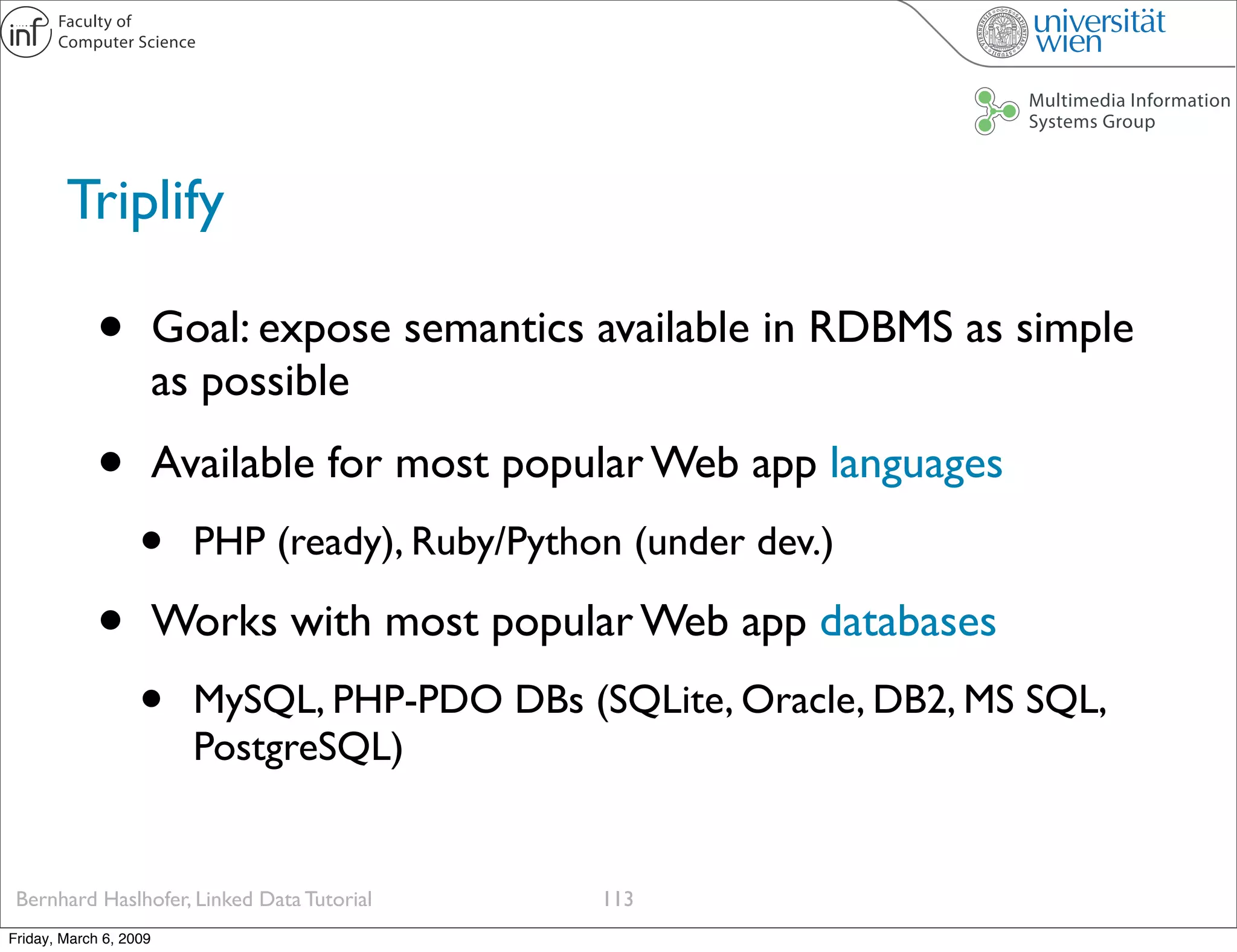

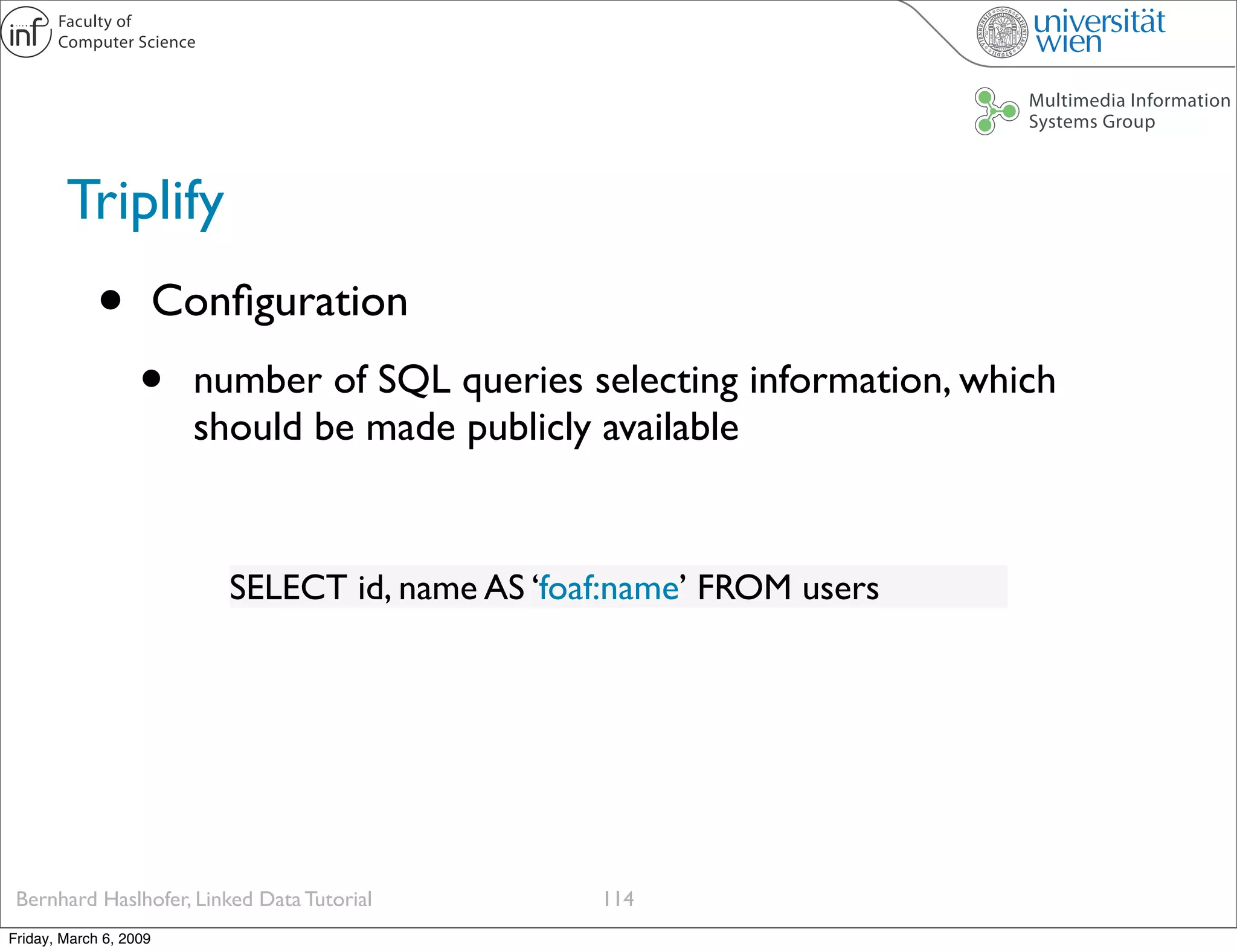

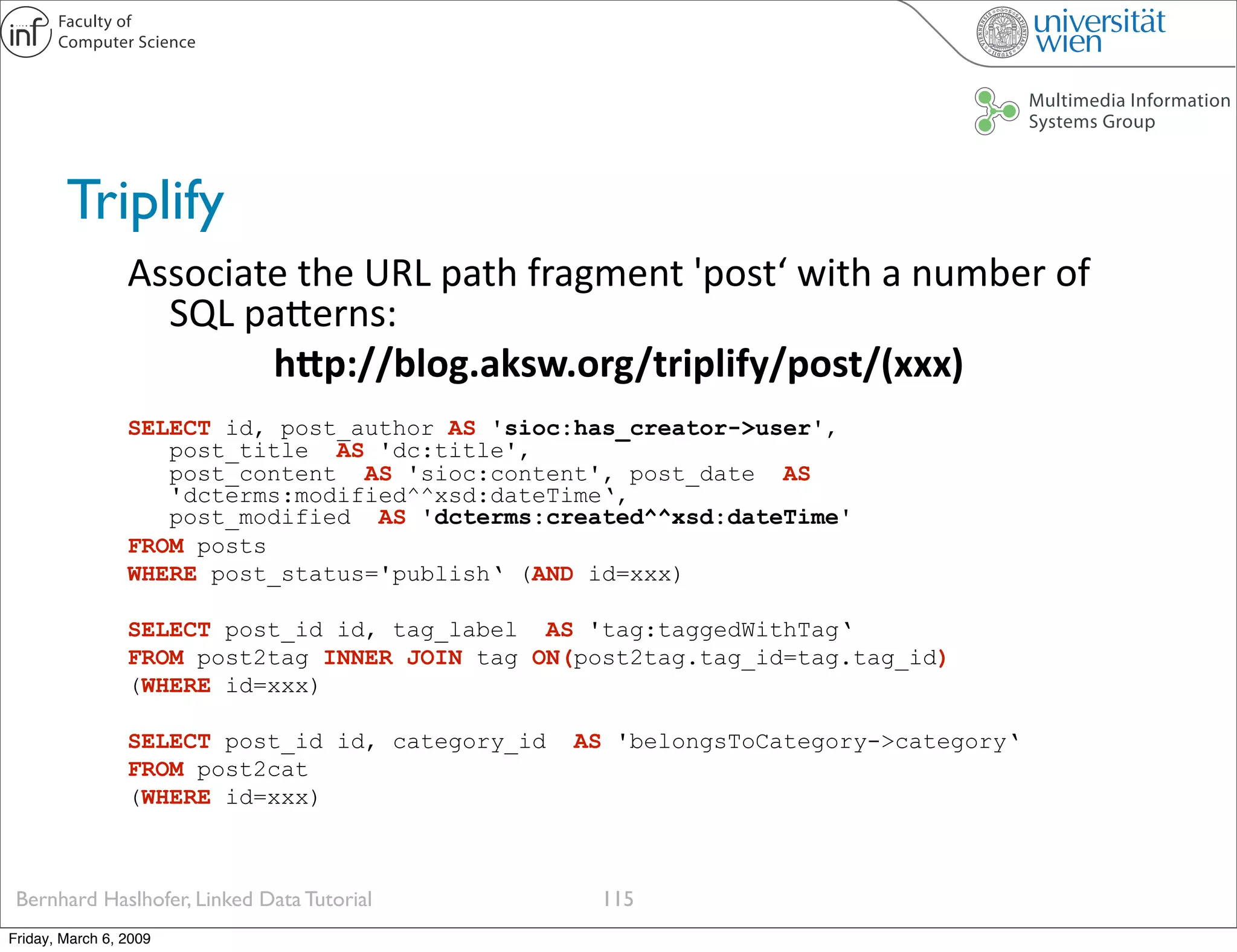

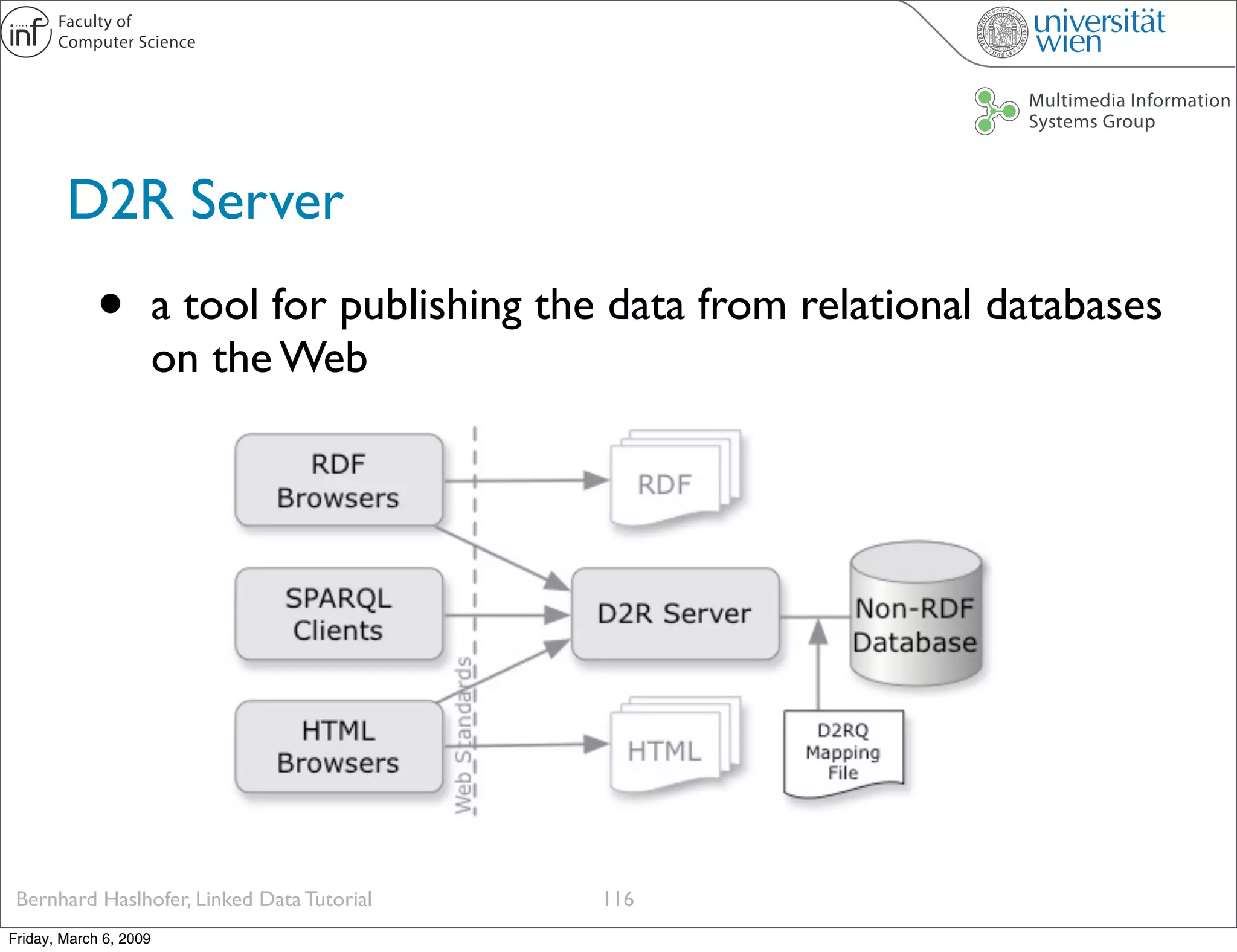

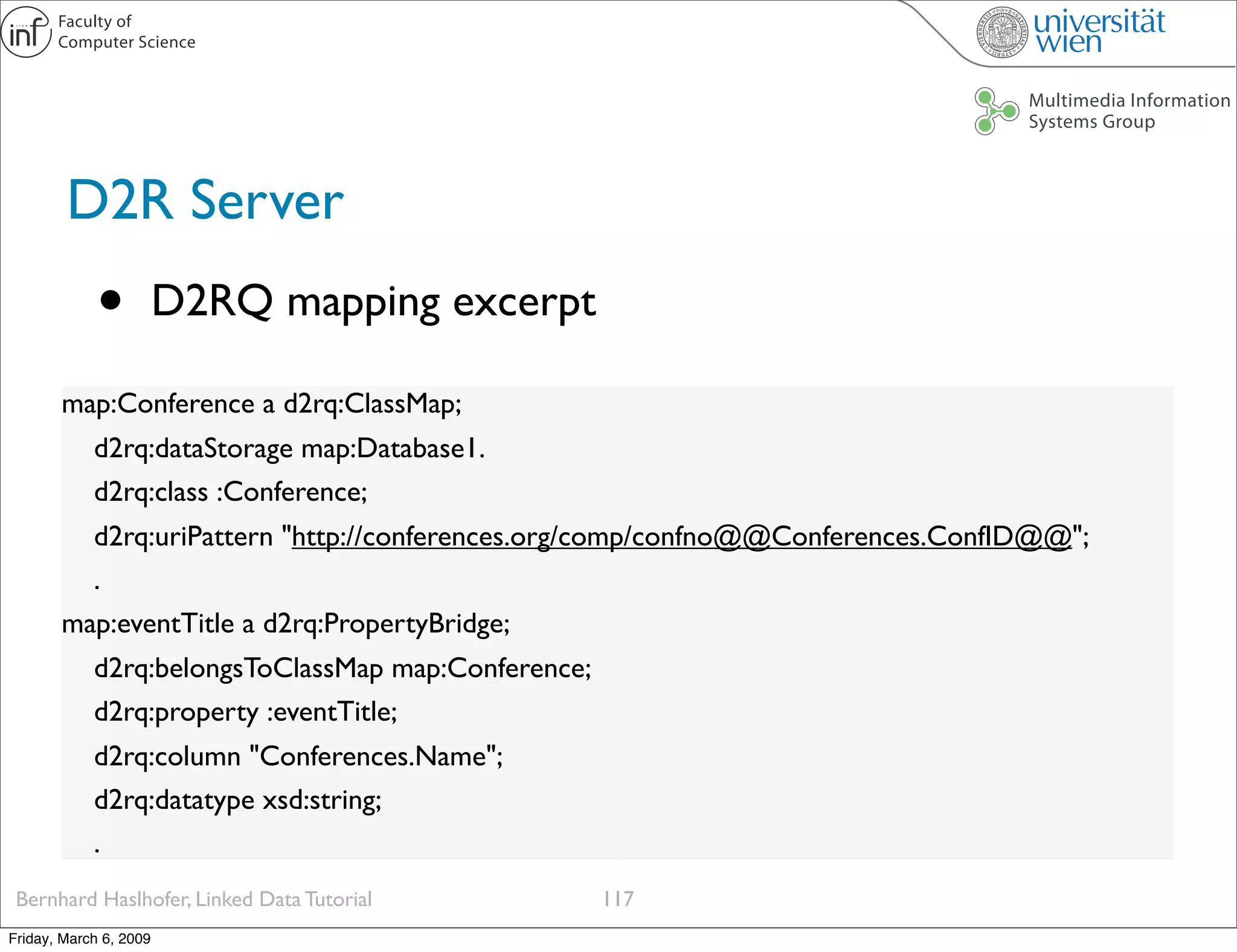

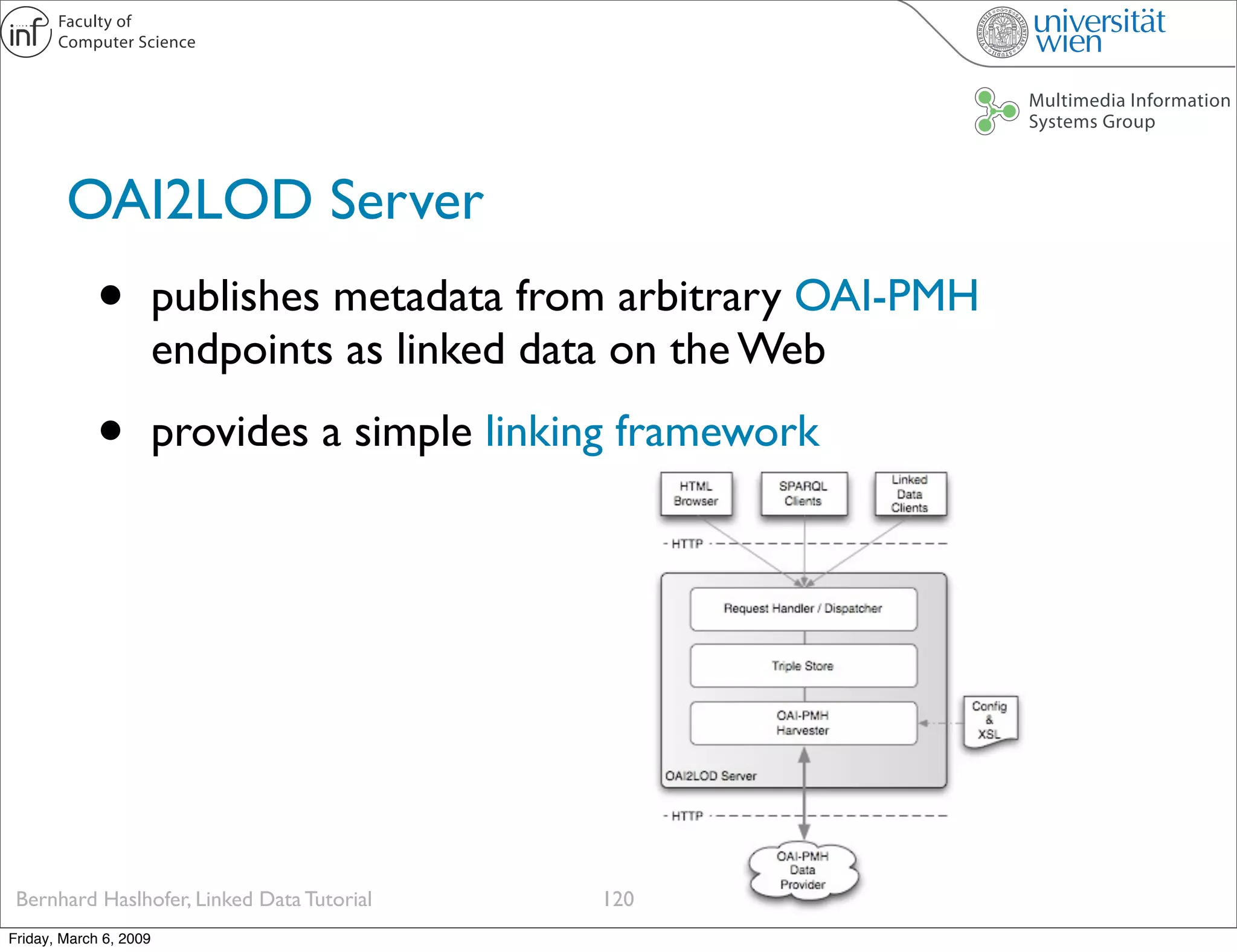

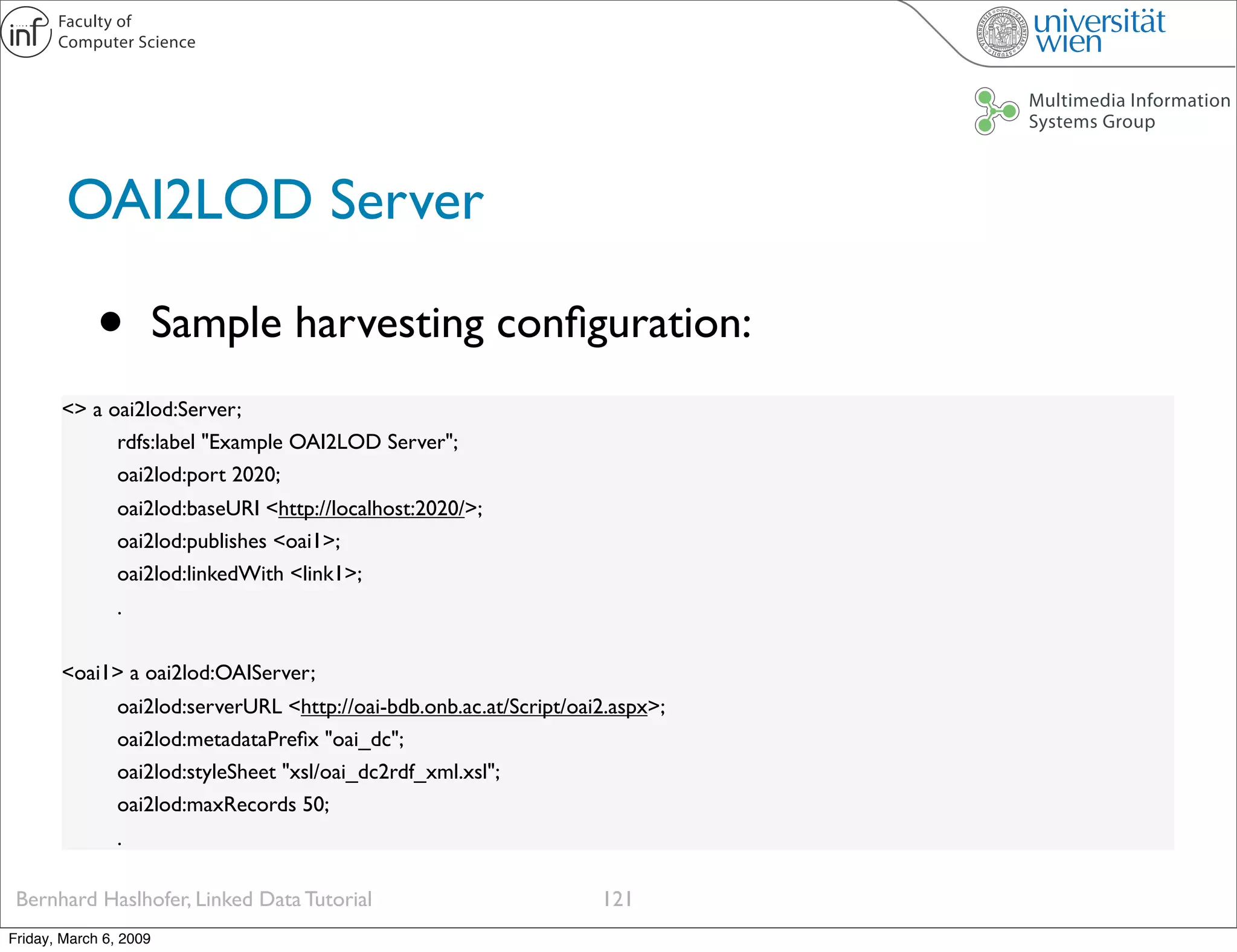

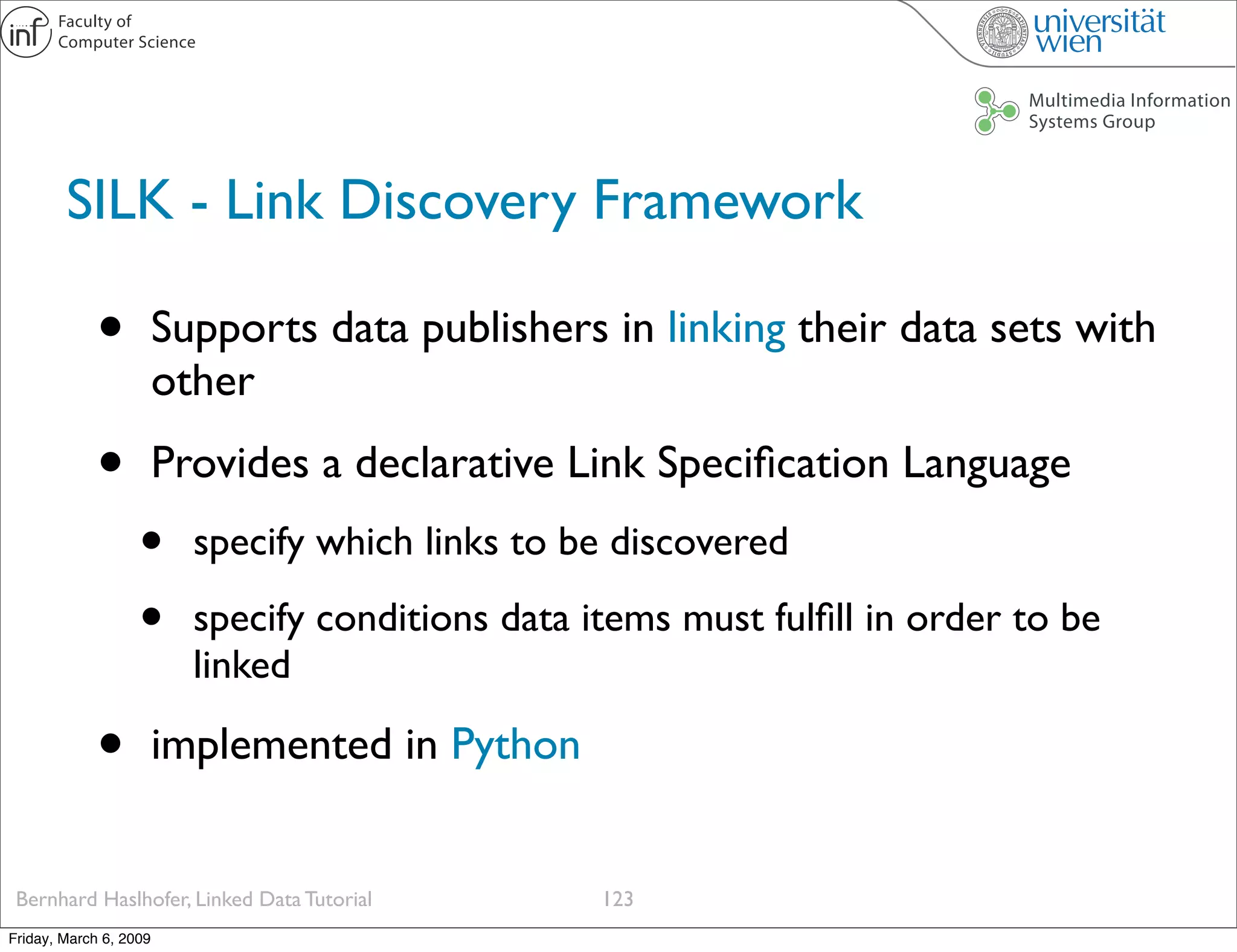

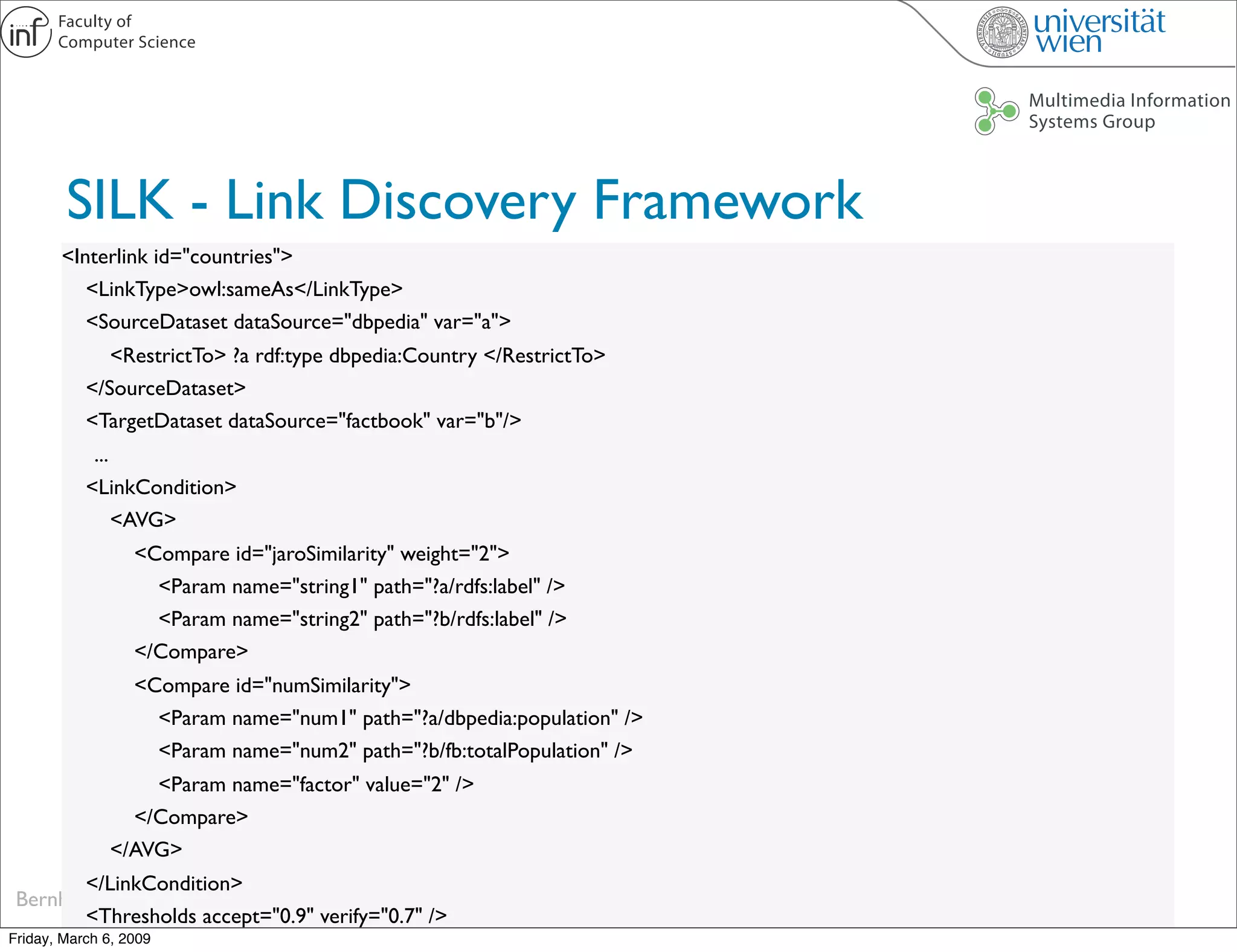

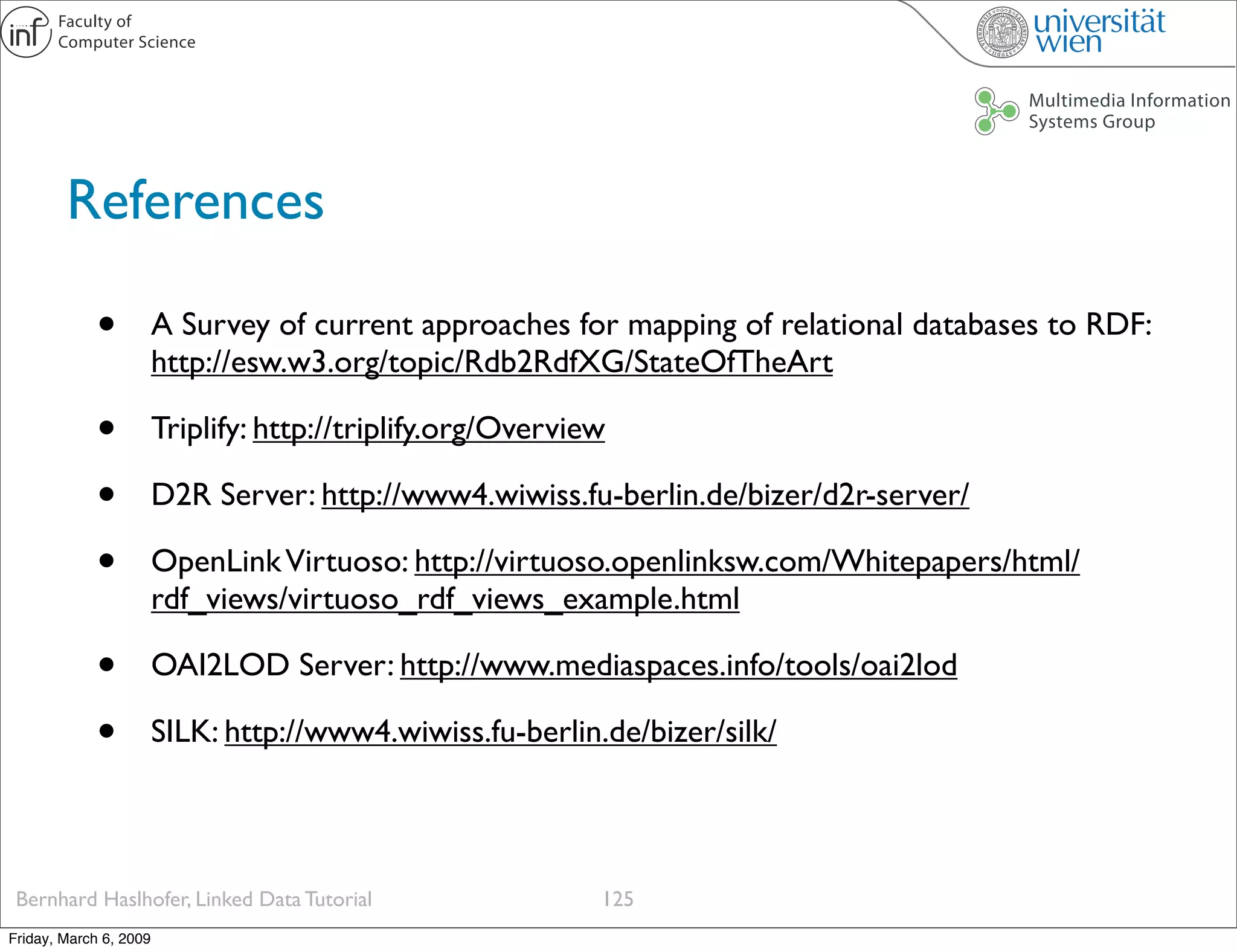

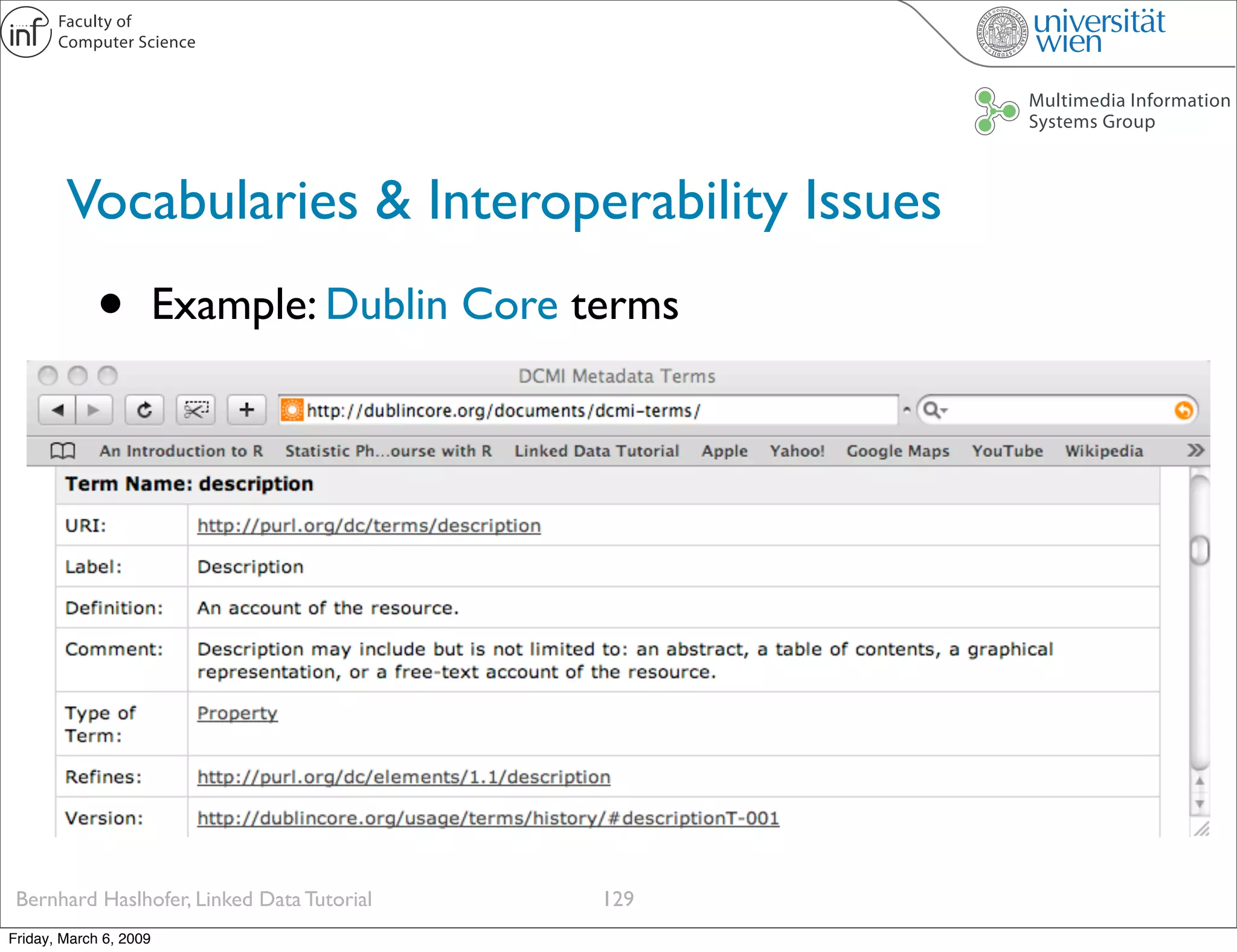

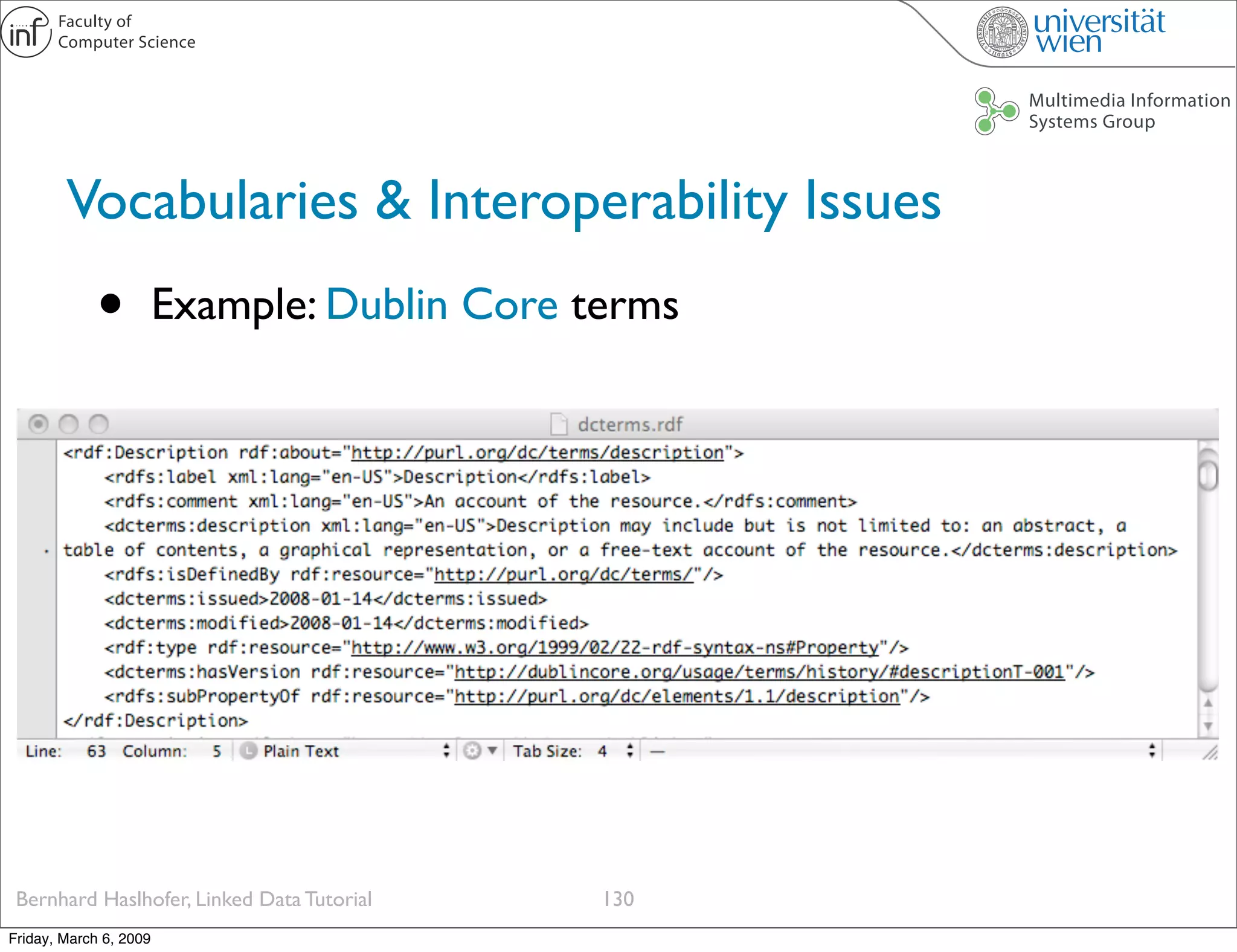

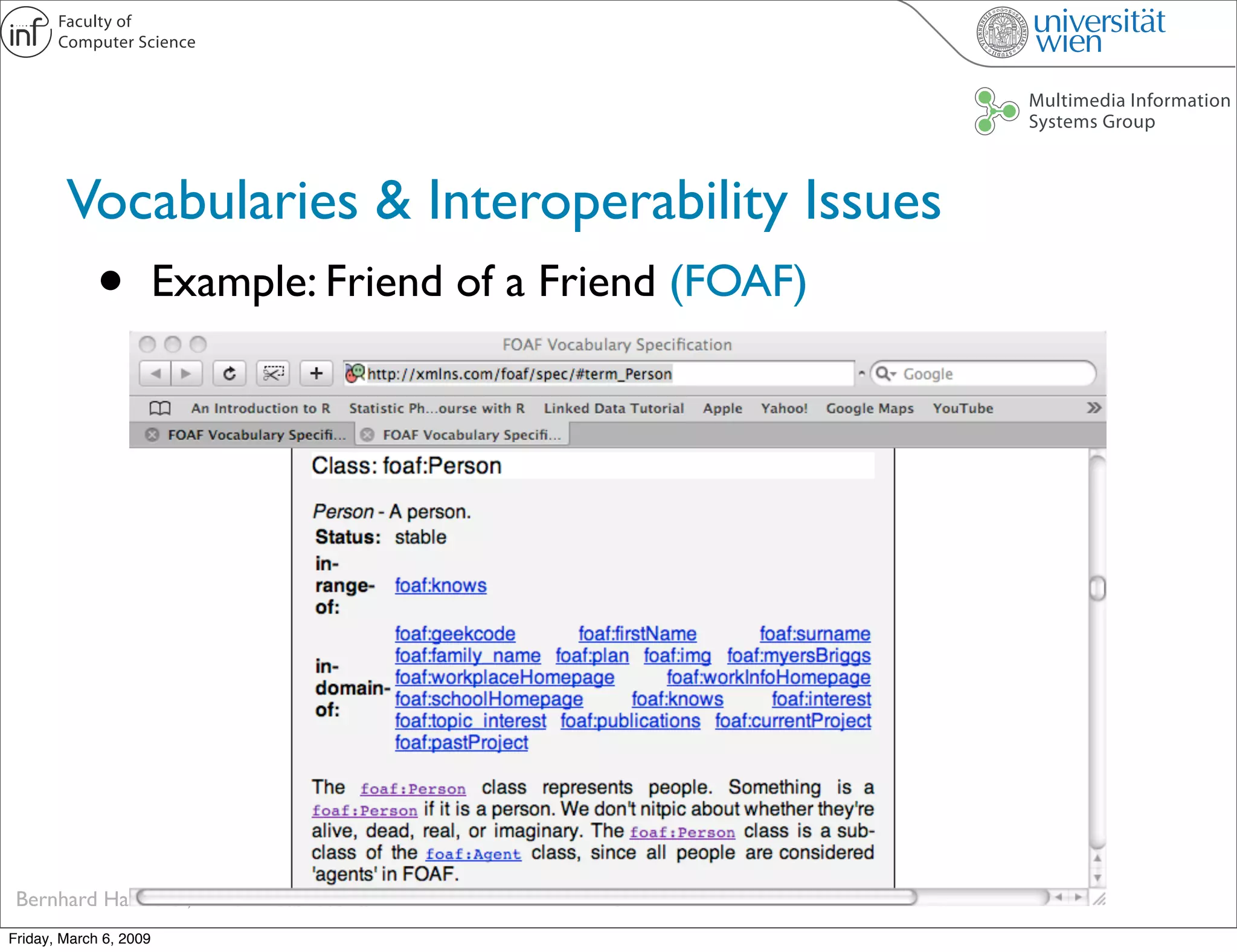

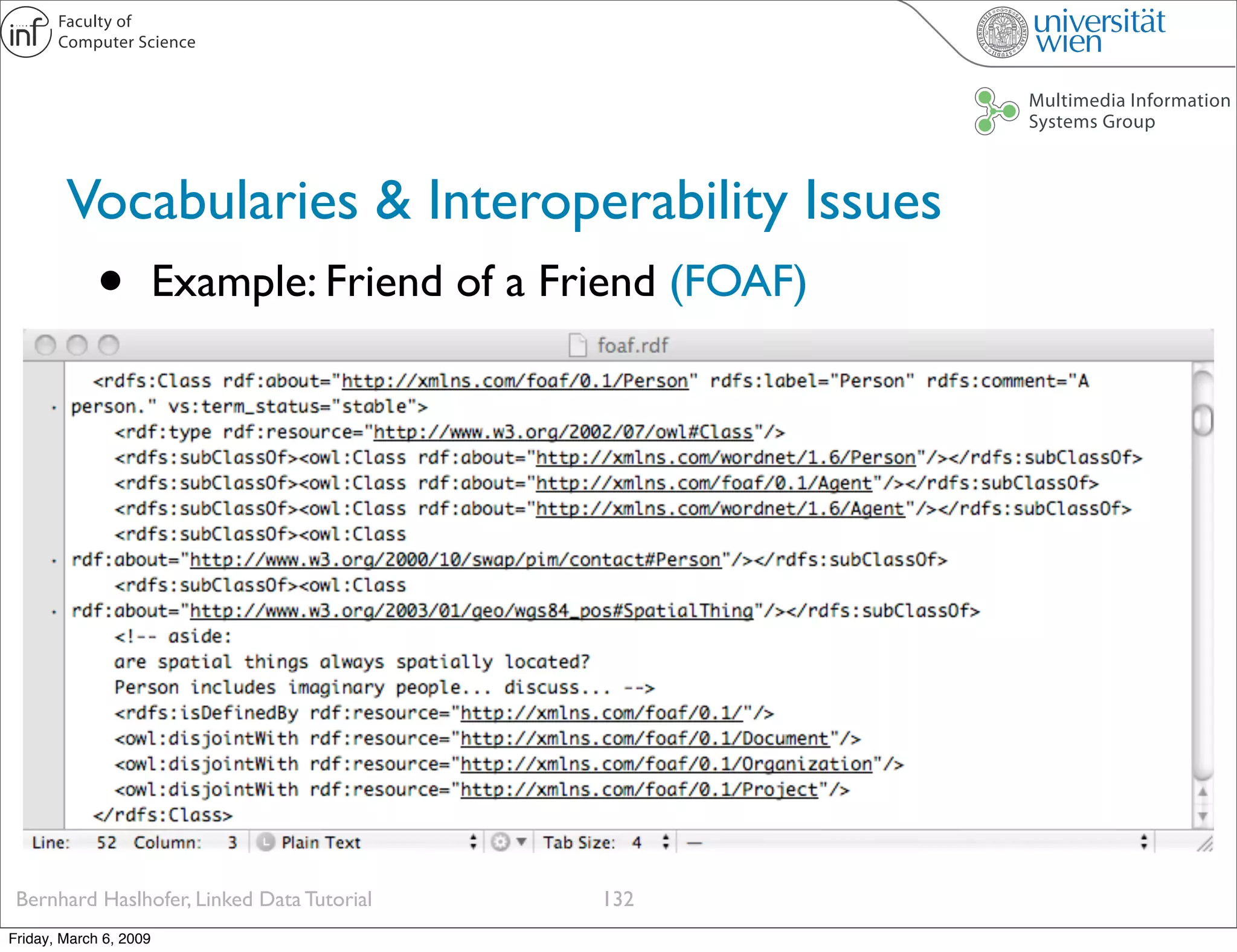

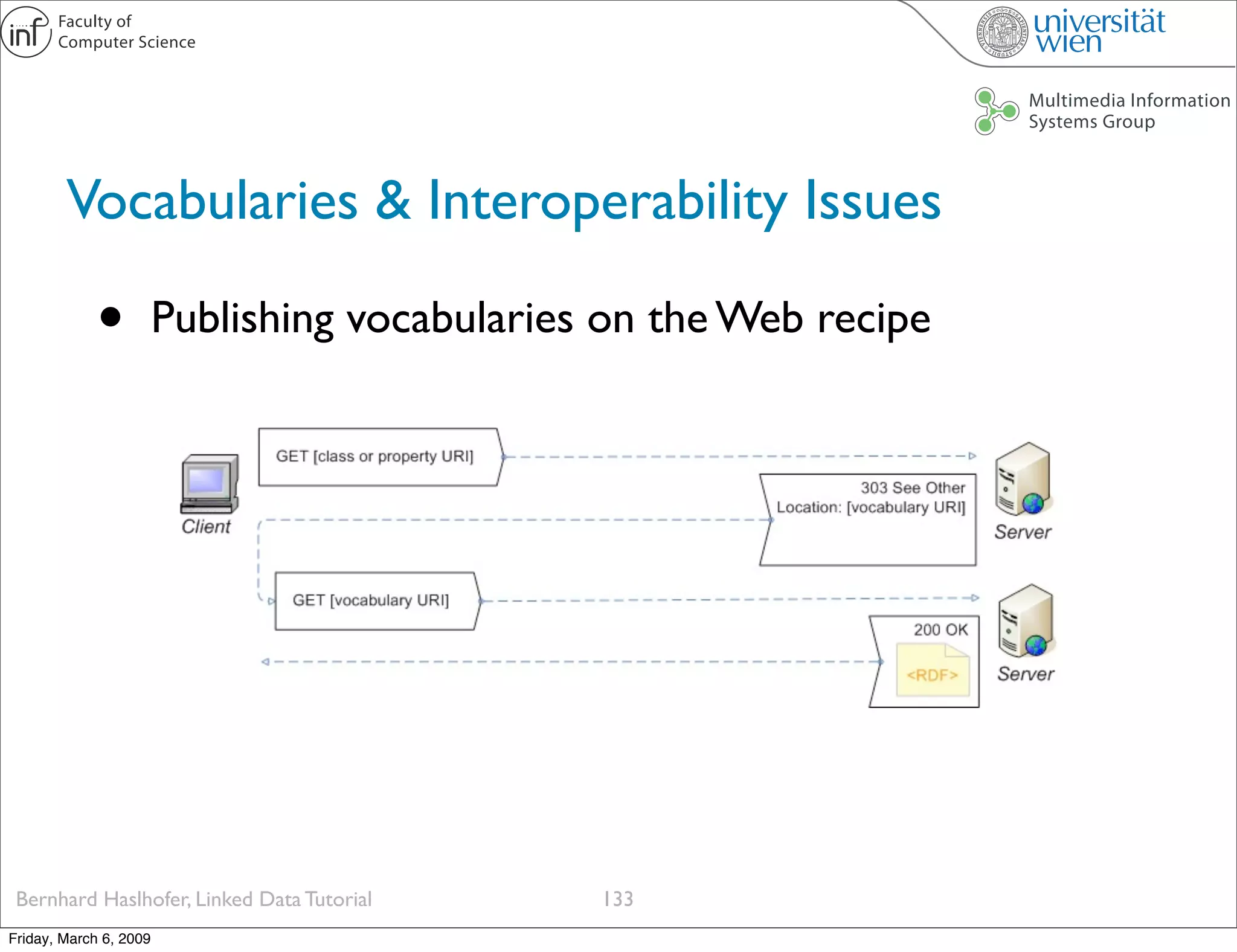

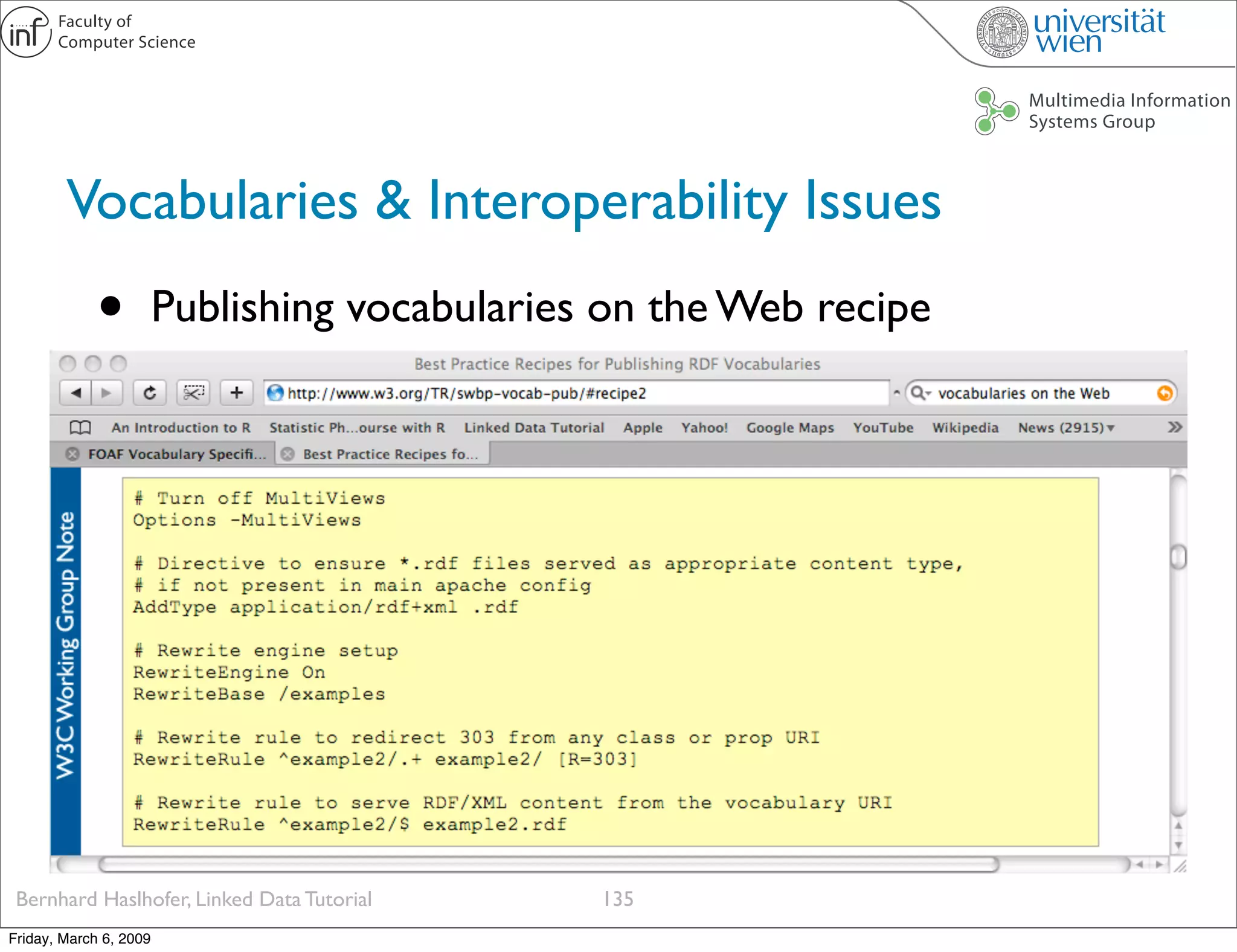

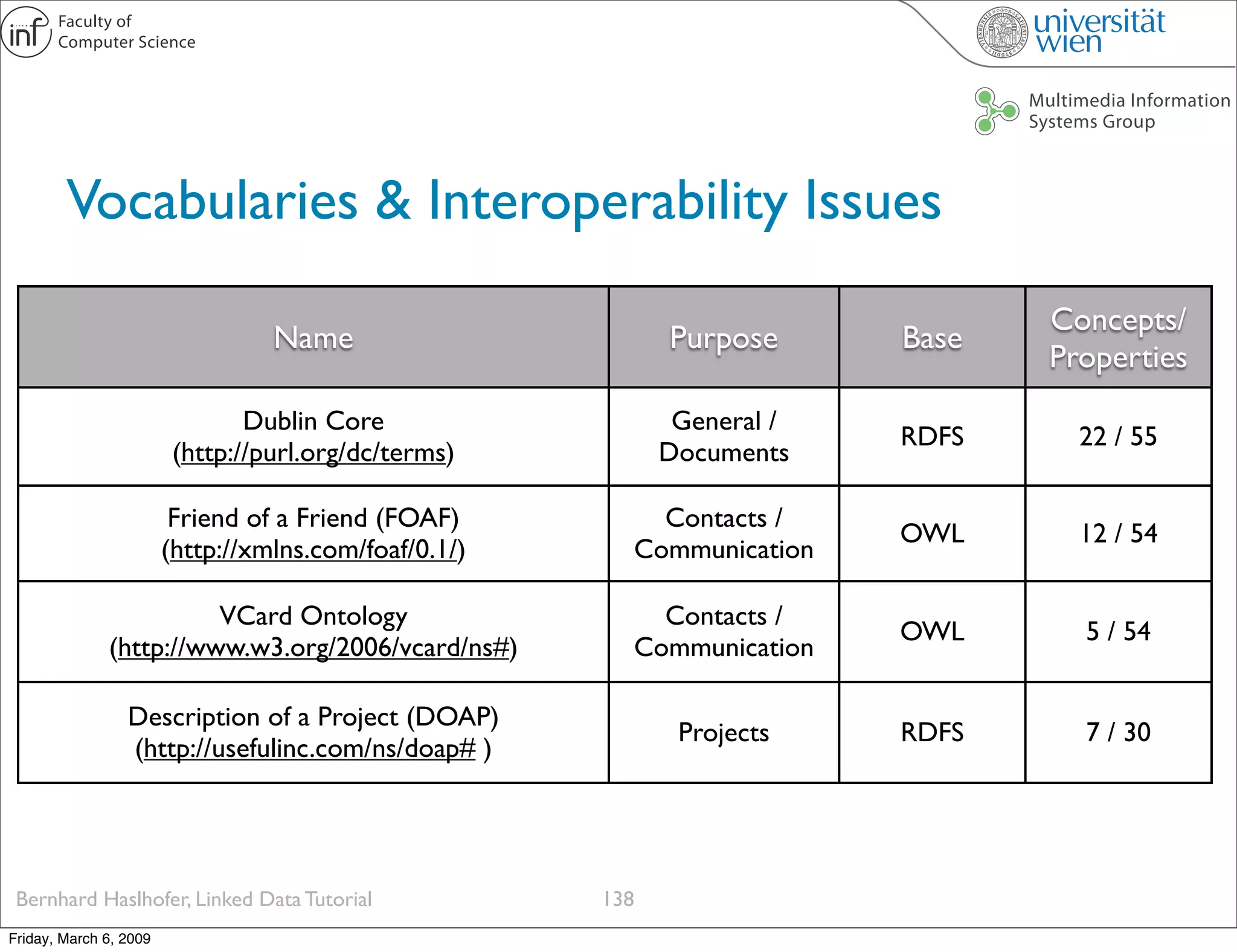

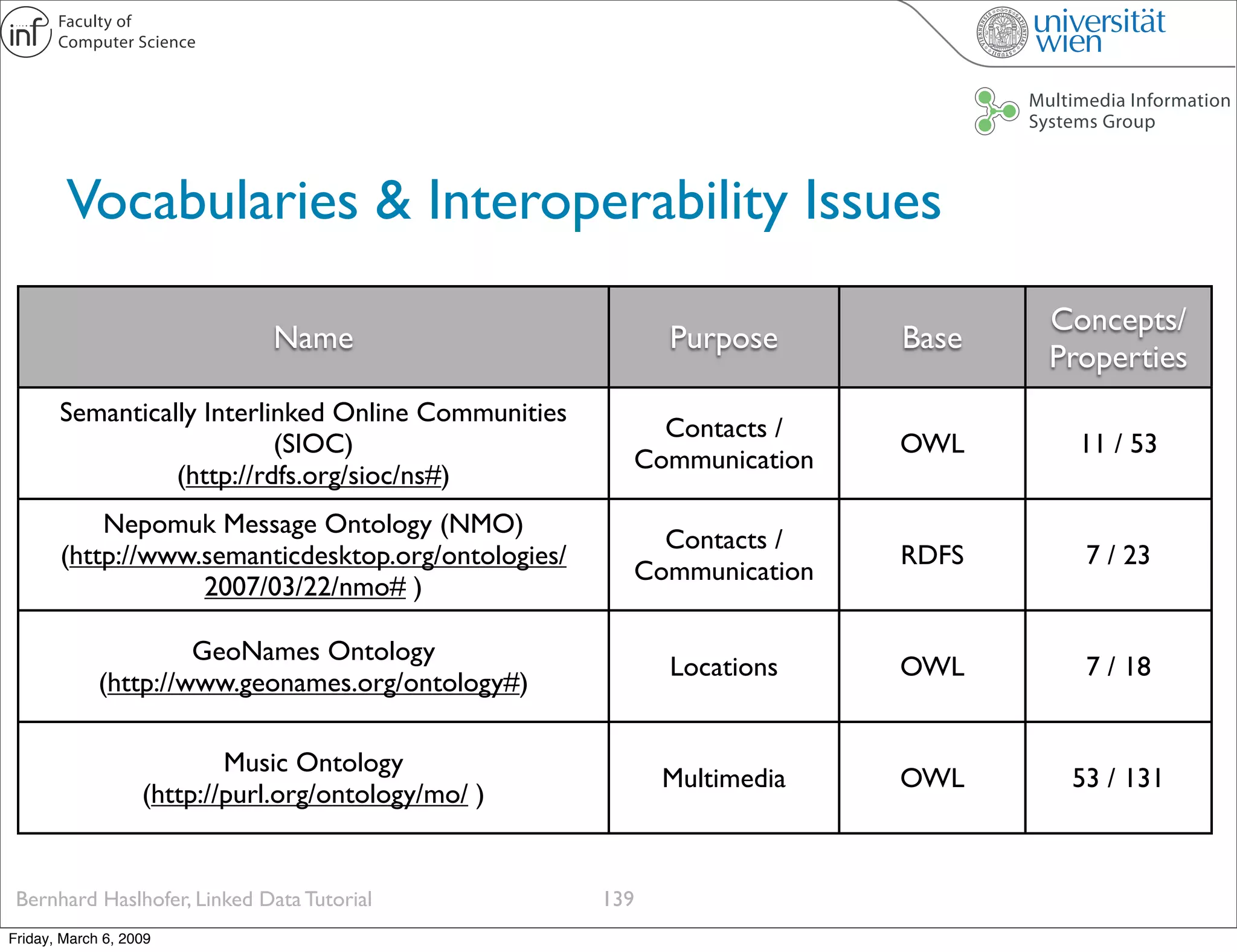

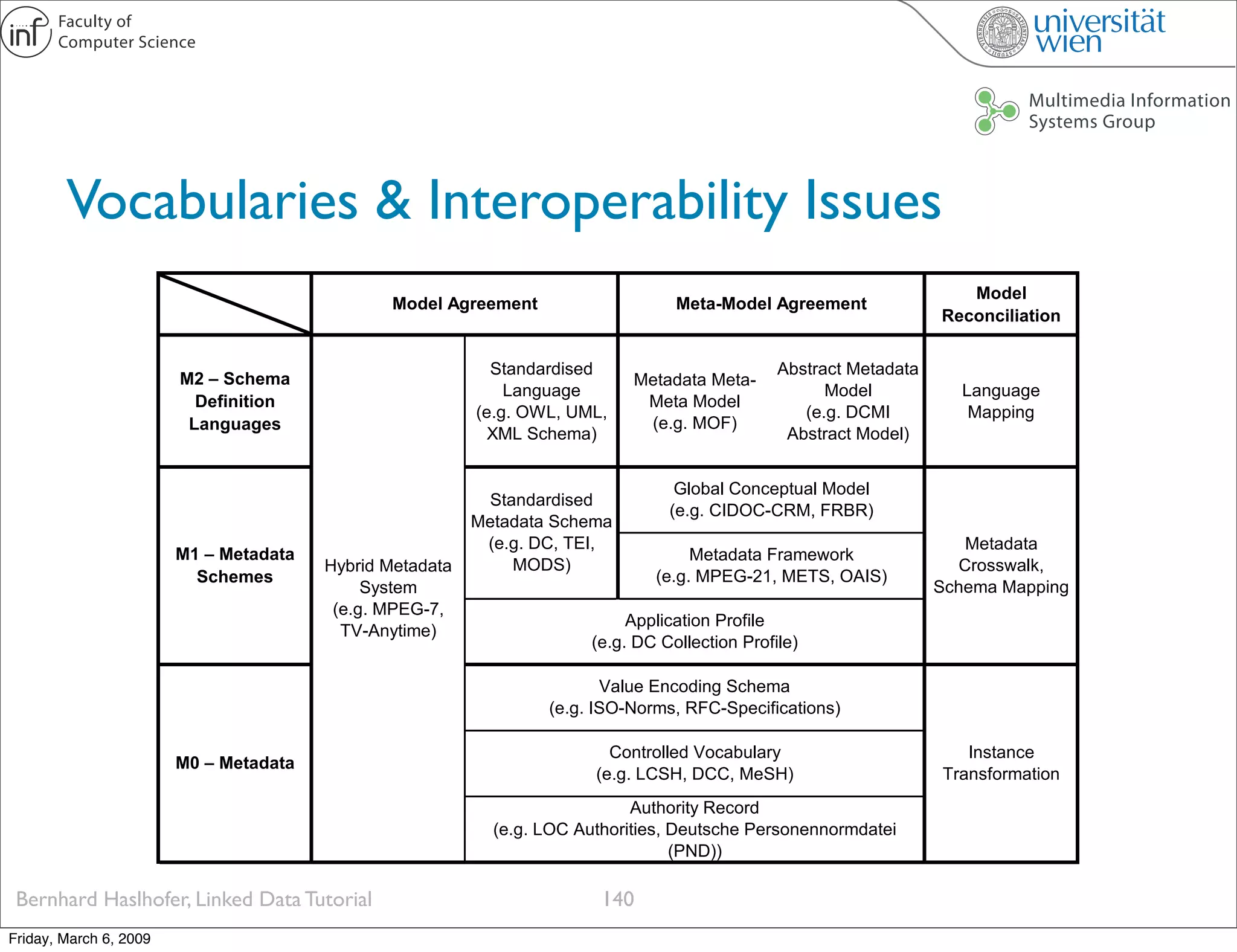

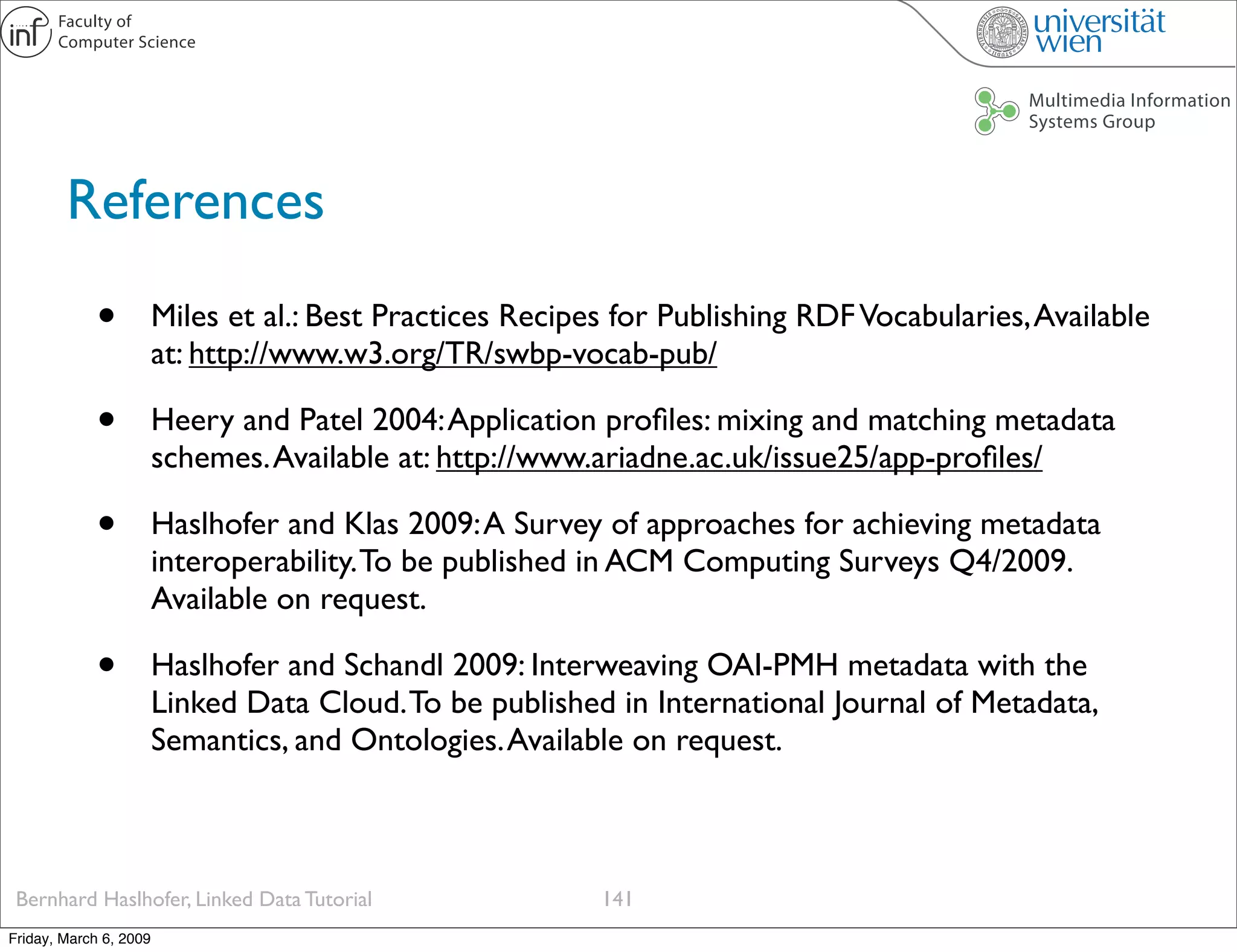

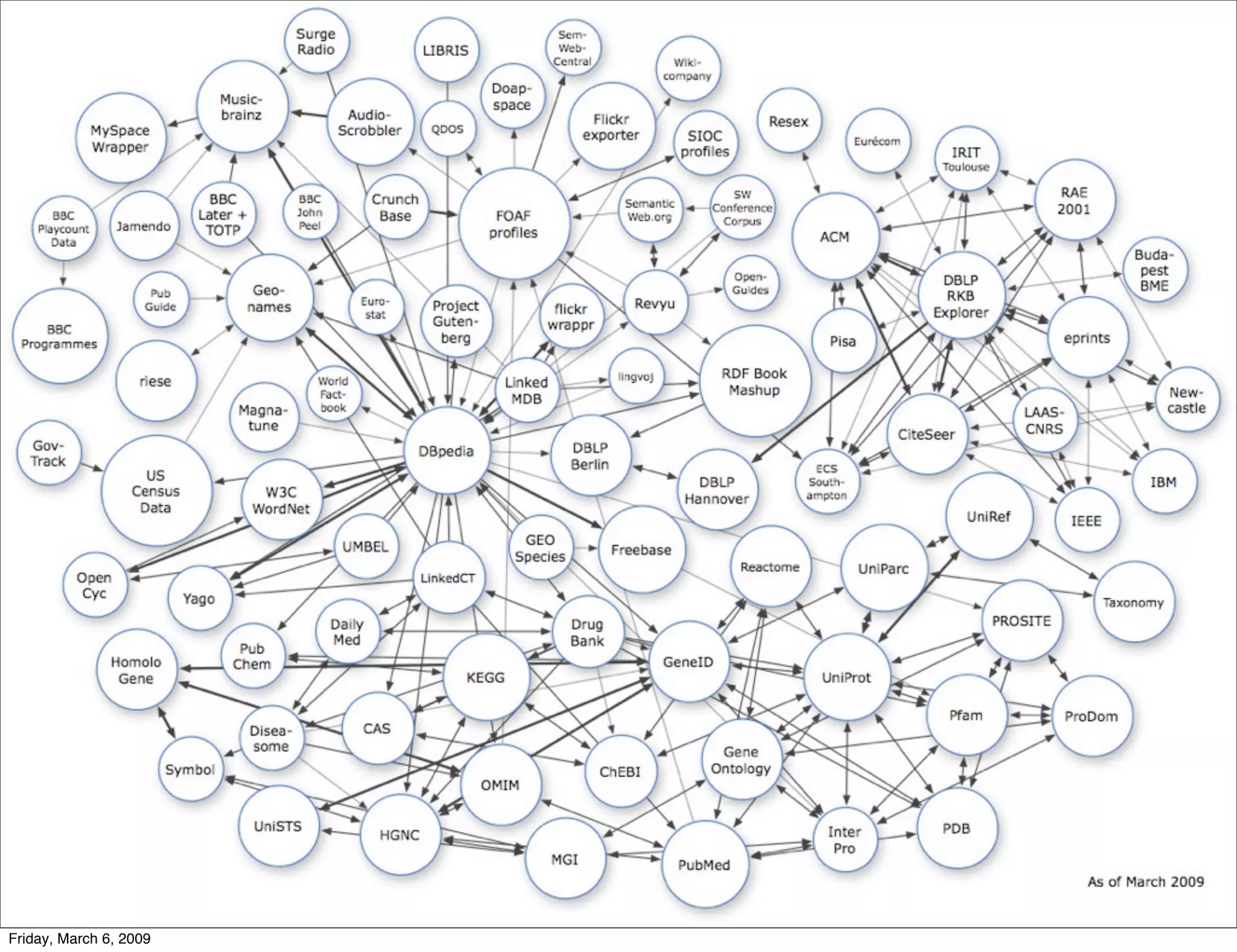

This document provides an overview of a Linked Data tutorial presented on March 6, 2009. The tutorial covered topics such as the motivation for Linked Open Data, relevant technologies like URIs, RDF, and SPARQL, and principles for publishing and interlinking data on the web in a way that is accessible to both humans and machines. The goal of Linked Data is to open up data silos and make public data available on the web in a standardized format.
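As a rough illustration of how URIs, RDF, and SPARQL fit together, the sketch below models RDF statements as (subject, predicate, object) triples whose names are URIs, and answers a SPARQL-like triple pattern with a small matching function. It is a minimal sketch and not taken from the tutorial slides: the `http://example.org/` resources, the `match` helper, and the sample data are all hypothetical, and a real deployment would use an RDF store and a SPARQL endpoint rather than a Python list.

```python
from typing import List, Optional, Tuple

# An RDF statement: (subject, predicate, object).
Triple = Tuple[str, str, str]

# Hypothetical namespace for the example resources (an assumption).
EX = "http://example.org/"
# Predicates from the FOAF vocabulary.
FOAF_NAME = "http://xmlns.com/foaf/0.1/name"
FOAF_KNOWS = "http://xmlns.com/foaf/0.1/knows"

# Made-up sample data: two people and one link between them.
triples: List[Triple] = [
    (EX + "alice", FOAF_NAME, "Alice"),
    (EX + "bob", FOAF_NAME, "Bob"),
    (EX + "alice", FOAF_KNOWS, EX + "bob"),
]


def match(
    store: List[Triple],
    s: Optional[str] = None,
    p: Optional[str] = None,
    o: Optional[str] = None,
) -> List[Triple]:
    """Return triples matching the pattern; None acts like a SPARQL variable."""
    return [
        t
        for t in store
        if (s is None or t[0] == s)
        and (p is None or t[1] == p)
        and (o is None or t[2] == o)
    ]


# Roughly: SELECT ?who WHERE { ex:alice foaf:knows ?who }
known = [obj for _, _, obj in match(triples, s=EX + "alice", p=FOAF_KNOWS)]

# Follow the link: look up the name of each resource Alice knows.
names = [obj for uri in known for _, _, obj in match(triples, s=uri, p=FOAF_NAME)]
```

Because the object of one triple is itself a URI, following it to further triples is what makes the data "linked": the second lookup dereferences the resource found by the first.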