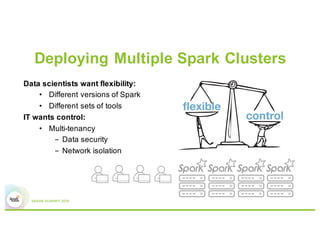

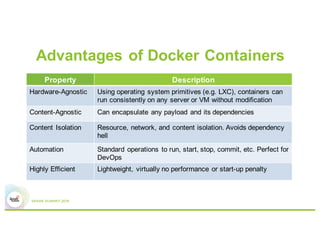

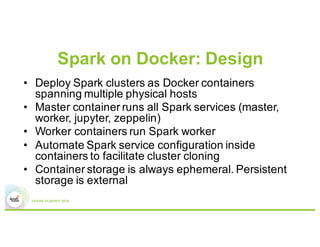

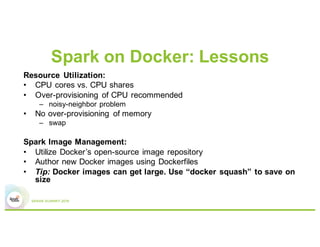

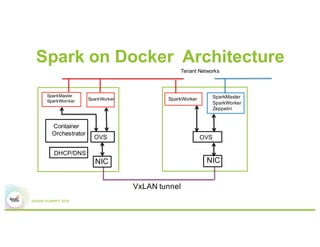

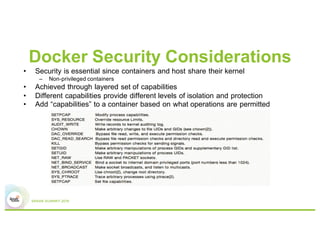

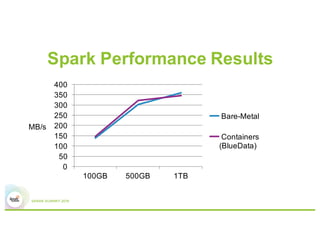

Running Spark on Docker containers provides flexibility for data scientists and control for IT. Key lessons learned include tuning CPU and memory allocation to avoid noisy-neighbor problems, managing Docker images efficiently, using network plugins for multi-host connectivity, and addressing storage and security considerations. Performance testing showed that Spark on Docker containers can achieve performance comparable to bare-metal deployments for large-scale data processing workloads.
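
As an illustration of the CPU and memory point, the sketch below shows one way to cap a container's resources and keep the Spark executor settings inside that cap. It is a minimal example under stated assumptions, not the configuration used in the deck: the helper name `plan_executor_container`, the image name, and the 10% / 384 MB overhead rule (loosely modeled on Spark's default executor memory overhead) are all hypothetical choices. The `--cpus` and `--memory` flags of `docker run` and the `spark.executor.cores` / `spark.executor.memory` properties are the only real interfaces it relies on.

```python
def plan_executor_container(image, cpus, memory_gb, overhead_fraction=0.10):
    """Return (docker_args, spark_conf) for one resource-capped executor.

    Hypothetical helper: the sizing rule below is an assumption for
    illustration, not a setting taken from the original slides.
    """
    if cpus < 1 or memory_gb < 1:
        raise ValueError("cpus and memory_gb must be at least 1")

    total_mb = memory_gb * 1024
    # Leave headroom for off-heap usage so the JVM heap stays below the
    # container's hard memory limit (assumed rule: max(384 MB, 10%)).
    overhead_mb = max(384, int(total_mb * overhead_fraction))
    heap_mb = total_mb - overhead_mb

    # Hard limits enforced by Docker; these keep one container from
    # starving its neighbors on the same host.
    docker_args = [
        "docker", "run", "--rm",
        f"--cpus={cpus}",
        f"--memory={memory_gb}g",
        image,
    ]

    # Matching Spark settings so the executor does not request more
    # than the container is allowed to use.
    spark_conf = {
        "spark.executor.cores": str(cpus),
        "spark.executor.memory": f"{heap_mb}m",
    }
    return docker_args, spark_conf


if __name__ == "__main__":
    # "example/spark-executor:latest" is a placeholder image name.
    args, conf = plan_executor_container("example/spark-executor:latest", 4, 16)
    print(" ".join(args))
    print(conf)
```

The idea is simply that the container limit and the executor settings are derived from the same numbers, so the two cannot drift apart; the actual ratios would need tuning for a given workload.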