Introduction to Hypothesis Testing Explained in Detail

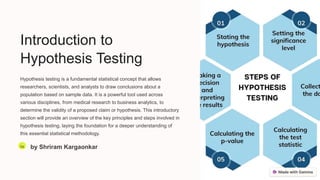

- 1. Introduction to Hypothesis Testing

  Hypothesis testing is a fundamental statistical method that allows researchers, scientists, and analysts to draw conclusions about a population from sample data. It is used across disciplines, from medical research to business analytics, to assess whether the data support a proposed claim or hypothesis. This introductory section gives an overview of the key principles and steps involved in hypothesis testing, laying the foundation for a deeper understanding of the methodology.

  by Shriram Kargaonkar

- 2. Null Hypothesis and Alternative Hypothesis
  - Null Hypothesis (H0): The null hypothesis is a statistical statement that there is no difference or relationship between the variables being studied. It represents the status quo or default position that researchers look for evidence against. It is denoted H0 and is the hypothesis that is directly tested.
  - Alternative Hypothesis (H1): The alternative hypothesis contradicts the null hypothesis and proposes that there is a difference or relationship between the variables. It usually represents the research hypothesis the investigator expects to be true. It is denoted H1 and is the hypothesis supported when the null hypothesis is rejected on the basis of the statistical analysis.
  - Relationship Between H0 and H1: The null and alternative hypotheses are mutually exclusive: if one is true, the other must be false. The goal of hypothesis testing is to determine whether the data provide enough evidence to reject the null hypothesis in favor of the alternative. How the two hypotheses are framed has important implications for the conclusions drawn from the study and the decisions based on them.
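
As an illustration only (the two-group setting and the symbols $\mu_1$ and $\mu_2$ for the population means are assumptions, not taken from the slides), a test comparing the means of two groups could state its hypotheses as:

$$
H_0 : \mu_1 = \mu_2 \qquad \text{versus} \qquad H_1 : \mu_1 \neq \mu_2
$$

Exactly one of the two statements holds for any pair of population means, which is the mutual exclusivity described above.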

- 3. Types of Errors in Hypothesis Testing

  Two types of error can occur in hypothesis testing: Type I errors and Type II errors. Understanding them is crucial for interpreting the results of a hypothesis test and making informed decisions.

  1. Type I Error: A Type I error, also known as a false positive, occurs when the null hypothesis is true but is incorrectly rejected. In other words, the test concludes that there is a difference or effect when, in reality, there is none. The probability of committing a Type I error is the significance level, denoted α. A common significance level in research is 0.05, which means that when the null hypothesis is true there is a 5% chance of rejecting it.
  2. Type II Error: A Type II error, also known as a false negative, occurs when the null hypothesis is false but is not rejected. In this case, the test fails to detect a difference or effect that is actually present. The probability of committing a Type II error is denoted β, and the complementary probability (1 - β) is known as the power of the test. Researchers aim to reduce the probability of Type II errors by increasing the power of the test, often by increasing the sample size or using more sensitive measurement techniques.

  The trade-off between Type I and Type II errors is an important consideration in hypothesis testing. For a fixed sample size, decreasing the significance level (α) to reduce the risk of a Type I error increases the risk of a Type II error, and vice versa. Researchers must balance the two based on the specific context and the relative consequences of each type of error in their research or decision-making process.
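
The two error rates can be estimated by simulation. The sketch below is a minimal illustration, not part of the original slides: it assumes a two-sided one-sample z-test with known standard deviation, and the sample size, true effect of 0.5, number of trials, and function names are all hypothetical choices.

```python
import math
import random
from statistics import NormalDist

ALPHA = 0.05  # significance level chosen before looking at any data


def z_test_rejects(sample, mu0, sigma, alpha=ALPHA):
    """Two-sided one-sample z-test with known sigma. Returns True if H0 is rejected."""
    n = len(sample)
    sample_mean = sum(sample) / n
    z = (sample_mean - mu0) / (sigma / math.sqrt(n))
    p_value = 2 * (1 - NormalDist().cdf(abs(z)))
    return p_value < alpha


def rejection_rate(true_mean, mu0=0.0, sigma=1.0, n=30, trials=5000, seed=0):
    """Fraction of simulated studies in which H0: mean == mu0 is rejected."""
    rng = random.Random(seed)
    rejections = 0
    for _ in range(trials):
        sample = [rng.gauss(true_mean, sigma) for _ in range(n)]
        if z_test_rejects(sample, mu0, sigma):
            rejections += 1
    return rejections / trials


# H0 is true here, so every rejection is a Type I error (rate should be near ALPHA).
type_i_rate = rejection_rate(true_mean=0.0)

# H0 is false here, so every rejection is a correct detection (this rate is the power).
power = rejection_rate(true_mean=0.5)
type_ii_rate = 1 - power  # beta

print(f"Type I error rate: {type_i_rate:.3f}")
print(f"Power: {power:.3f}, Type II error rate: {type_ii_rate:.3f}")
```

Lowering `ALPHA` in this sketch should reduce the Type I rate and also reduce the power, while raising `n` should increase the power at the same `ALPHA`.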

- 4. Level of Significance and p-value

  In hypothesis testing, the level of significance, denoted α, is the probability of rejecting the null hypothesis when it is actually true. This is also known as the Type I error rate. The researcher must choose the level of significance before conducting the statistical test. Common levels of significance are 1% (0.01), 5% (0.05), and 10% (0.10), with 5% being the most widely used.

  The p-value, on the other hand, is the probability of obtaining a test statistic at least as extreme as the one observed, assuming the null hypothesis is true. If the p-value is less than the chosen level of significance, the null hypothesis is rejected, and the result is considered statistically significant. The smaller the p-value, the stronger the evidence against the null hypothesis.

  The level of significance and the p-value are related but distinct concepts. The level of significance is a pre-determined threshold, while the p-value is a probability calculated from the data. Researchers must choose an appropriate level of significance and interpret the p-value in the context of their research question and the risks associated with making incorrect decisions.
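
The comparison between a p-value and the three common thresholds can be sketched as follows. This is an illustrative example under assumptions: the test statistic is taken to be standard normal under the null hypothesis, and the observed value 2.2 and the helper name `two_sided_p_value` are hypothetical.

```python
from statistics import NormalDist


def two_sided_p_value(z):
    """P(|Z| >= |z|) for a standard normal Z, i.e. the two-sided p-value."""
    return 2 * (1 - NormalDist().cdf(abs(z)))


z_observed = 2.2  # hypothetical test statistic
p_value = two_sided_p_value(z_observed)

# The same p-value can lead to different decisions at different thresholds.
decisions = {alpha: p_value < alpha for alpha in (0.01, 0.05, 0.10)}

print(f"p-value = {p_value:.4f}")
for alpha, reject in decisions.items():
    print(f"alpha = {alpha:.2f}: {'reject H0' if reject else 'fail to reject H0'}")
```

Here the p-value is about 0.028, so the null hypothesis is rejected at the 5% and 10% levels but not at the 1% level, which is why the threshold has to be fixed before the data are examined.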

- 5. One-Tailed and Two-Tailed Tests
  - One-Tailed Test: A one-tailed test is used when the hypothesis focuses on a specific direction of the effect, either greater than or less than a specified value. This type of test is appropriate when there is a clear directional prediction about the population parameter based on prior knowledge or theory. For example, a researcher might hypothesize that a new drug will increase the average lifespan of patients compared to a placebo. In this case, a one-tailed test would be used to determine whether the new drug has a positive effect.
  - Two-Tailed Test: A two-tailed test is used when the hypothesis does not specify a direction of the effect, but tests whether the population parameter differs from a specified value in either direction. This type of test is appropriate when there is no clear directional prediction or when the researcher wants to detect any difference, whether positive or negative. For example, a researcher might hypothesize that a new teaching method will affect student test scores, without specifying whether the effect will be an increase or a decrease.
  - Choosing Between One-Tailed and Two-Tailed Tests: The choice depends on the research question and the researcher's prior knowledge or expectations. At the same significance level, a one-tailed test has more statistical power to detect an effect in the predicted direction, because the entire rejection region lies in one tail. The Type I error rate is α in both cases; the cost of a one-tailed test is that it cannot detect an effect in the opposite direction, and choosing the direction after seeing the data inflates the Type I error rate. Two-tailed tests are more conservative: they detect differences in either direction but require a larger sample to detect the same effect size with the same power. Researchers should weigh these trade-offs and choose the test based on their research objectives and the available evidence.
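
The difference between the two kinds of test shows up directly in how the p-value is computed from the same statistic. The sketch below assumes a z statistic that is standard normal under the null hypothesis; the value 1.8 and the function name `p_value` are hypothetical.

```python
from statistics import NormalDist

STD_NORMAL = NormalDist()


def p_value(z, tail):
    """p-value of a z statistic for tail in {'upper', 'lower', 'two-sided'}."""
    if tail == "upper":       # H1: parameter is greater than the null value
        return 1 - STD_NORMAL.cdf(z)
    if tail == "lower":       # H1: parameter is less than the null value
        return STD_NORMAL.cdf(z)
    if tail == "two-sided":   # H1: parameter differs from the null value
        return 2 * (1 - STD_NORMAL.cdf(abs(z)))
    raise ValueError(f"unknown tail: {tail!r}")


alpha = 0.05
z = 1.8  # hypothetical statistic, effect in the predicted (positive) direction

print(p_value(z, "upper") < alpha)      # one-tailed test rejects H0
print(p_value(z, "two-sided") < alpha)  # two-tailed test does not
print(p_value(-z, "upper") < alpha)     # opposite-direction effect is missed
```

With these numbers the upper-tailed p-value is about 0.036 and the two-sided p-value is about 0.072, so only the one-tailed test rejects at the 5% level, and an equally large effect in the opposite direction goes undetected by the upper-tailed test.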

- 6. Test Statistic and Sampling Distribution

  In hypothesis testing, the test statistic is a numerical value calculated from the sample data that is used to decide whether to reject or fail to reject the null hypothesis. The test statistic is compared to its sampling distribution under the null hypothesis, which describes the values the statistic could take, and how likely each is, if the null hypothesis is true.

  The sampling distribution depends on the type of hypothesis test being performed, the characteristics of the population, and the size of the sample. Common sampling distributions used in hypothesis testing include the z-distribution (standard normal), t-distribution, chi-square distribution, and F-distribution.

  The p-value of the test is the probability of obtaining a test statistic at least as extreme as the one observed, assuming the null hypothesis is true. If the p-value is less than the chosen significance level, the null hypothesis is rejected, indicating that the sample data provide sufficient evidence against the null hypothesis and in favor of the alternative.
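
A worked example may make the definition concrete. The one-sample z statistic and the numbers used here (sample mean $\bar{x} = 52$, null value $\mu_0 = 50$, known standard deviation $\sigma = 6$, sample size $n = 36$) are assumed for illustration and do not come from the slides.

$$
z = \frac{\bar{x} - \mu_0}{\sigma / \sqrt{n}} = \frac{52 - 50}{6 / \sqrt{36}} = \frac{2}{1} = 2
$$

Under the null hypothesis this statistic follows the standard normal distribution, so the two-sided p-value is $2\,(1 - \Phi(2)) \approx 0.046$, just below a 0.05 significance level.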

- 7. Parametric and Non-Parametric Tests
  - Parametric Tests: Parametric tests are a class of statistical tests that make assumptions about the parameters (such as mean and standard deviation) of the underlying probability distribution of the data. These tests are appropriate when the data follow a specific probability distribution, such as the normal distribution. Examples of parametric tests include the t-test, ANOVA, and regression analysis.
  - Non-Parametric Tests: Non-parametric tests, on the other hand, do not assume a specific underlying probability distribution for the data. These tests are more flexible and can be used when the data do not follow a specific distribution or when the assumptions for parametric tests are not met. Examples of non-parametric tests include the Mann-Whitney U test, Kruskal-Wallis test, and Wilcoxon signed-rank test.
  - Choosing the Right Test: The choice between parametric and non-parametric tests depends on the characteristics of the data and the research question. Parametric tests are generally more powerful when their assumptions are met, but non-parametric tests can be more appropriate when the assumptions are violated. It is important to check the assumptions and choose the appropriate test to ensure accurate and meaningful results.
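
One way to see why rank-based tests need fewer distributional assumptions is to compute the statistic behind the Mann-Whitney U test by hand. The sketch below computes only the U statistic (not a p-value), counting ties as one half; the data and the helper names are hypothetical.

```python
import math


def mann_whitney_u(a, b):
    """U statistic for sample a: number of (x, y) pairs with x > y, ties counted as 0.5."""
    u = 0.0
    for x in a:
        for y in b:
            if x > y:
                u += 1.0
            elif x == y:
                u += 0.5
    return u


def mean_difference(a, b):
    """Difference in sample means, the quantity a two-sample t-test is built on."""
    return sum(a) / len(a) - sum(b) / len(b)


# Hypothetical measurements for two independent groups.
group_a = [1.1, 2.3, 3.8, 5.0]
group_b = [0.5, 1.9, 2.0, 4.2]

# A monotone transform (here exp) changes the values but not their ordering.
exp_a = [math.exp(x) for x in group_a]
exp_b = [math.exp(x) for x in group_b]

print(mann_whitney_u(group_a, group_b), mann_whitney_u(exp_a, exp_b))    # identical
print(mean_difference(group_a, group_b), mean_difference(exp_a, exp_b))  # differ
```

Because U depends only on the ordering of the observations, it is unchanged by the transformation, whereas the difference in means, and therefore any test built on it, changes substantially.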

- 8. Assumptions for Hypothesis Testing

  A hypothesis test rests on several key assumptions that must be met for the test to be valid and its conclusions reliable. Failure to meet these assumptions can lead to incorrect inferences and faulty decision-making. The primary assumptions for hypothesis testing include:

  - Normality: For many common statistical tests, such as the t-test and ANOVA, the underlying population distribution must be normal or approximately normal. This assumption ensures that the sampling distribution of the test statistic follows a known probability distribution, which is essential for calculating p-values and making inferences.
  - Independence: The observations in the sample must be independent of one another. This means that the value of one observation does not depend on the value of any other observation. Violations of independence, such as in the case of repeated measures or clustered data, require specialized statistical techniques.
  - Homogeneity of Variance: For many tests, the variances of the populations being compared must be equal (or approximately equal). This assumption ensures that the test statistic follows the expected probability distribution and that the Type I error rate is maintained at the desired level.
  - Absence of Multicollinearity: In multiple regression analysis, the independent variables must not be highly correlated with one another. Multicollinearity can lead to unstable and unreliable estimates of the regression coefficients, making it difficult to interpret the effects of individual predictors.

  Careful consideration of these assumptions is crucial for ensuring the validity and reliability of hypothesis testing results. Violations may require alternative statistical methods or transformations of the data to meet the necessary conditions.

- 9. Interpreting the Results of a Hypothesis Test

  Interpreting the results of a hypothesis test is a crucial step in the statistical analysis process. Once the test statistic has been calculated and the p-value has been determined, the researcher must decide whether to reject or fail to reject the null hypothesis. This decision has important implications for the conclusions that can be drawn from the data.

  Key metrics for the example: 95% confidence level, 5% significance level, p-value of 0.017.

  The level of significance, or alpha value, is typically set at 5% (0.05) in social science research, meaning that the researcher is willing to accept a 5% chance of making a Type I error (rejecting the null hypothesis when it is true). The p-value represents the probability of obtaining the observed test statistic (or one more extreme) under the assumption that the null hypothesis is true. If the p-value is less than the significance level, the null hypothesis is rejected, indicating that the observed effect is statistically significant.

  In the example above, the p-value of 0.017 is less than the 5% significance level, so the null hypothesis would be rejected. An effect this large would be unlikely to arise from chance alone if the null hypothesis were true, so the data provide evidence in support of the alternative hypothesis. The 95% confidence interval around the effect size provides additional information about the magnitude and precision of the effect.

- 10. Conclusion and Key Takeaways
  - Embrace Hypothesis Testing: Hypothesis testing is a fundamental statistical tool that allows researchers and analysts to draw meaningful conclusions from data. By understanding the concepts of null and alternative hypotheses, as well as the different types of errors and significance levels, you can design and interpret hypothesis tests with confidence, leading to more informed decision-making.
  - Choose the Appropriate Test: Selecting the right hypothesis test is crucial, as it depends on the data characteristics, research goals, and underlying assumptions. Familiarize yourself with the various parametric and non-parametric tests, and learn how to identify the appropriate test for your specific scenario. This will help ensure the validity and reliability of your findings.
  - Interpret Results Carefully: When interpreting the results of a hypothesis test, pay close attention to the test statistic, the p-value, and the ultimate decision to either reject or fail to reject the null hypothesis. Understanding both the practical and the statistical significance of your findings will help you draw meaningful conclusions and make informed decisions based on the available evidence.
  - Continuous Learning: Hypothesis testing is a dynamic field that continues to evolve, with new techniques and advancements emerging regularly. Stay up to date with the latest developments, attend relevant workshops and conferences, and engage with the research community. Continuous learning will keep your knowledge and skills sharp, enabling you to adapt to changing research environments and contribute to the advancement of your field.