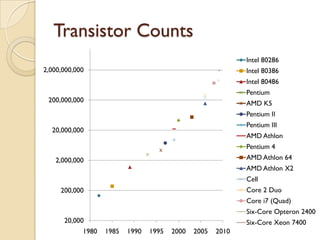

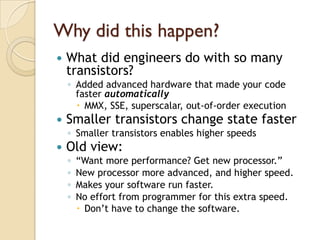

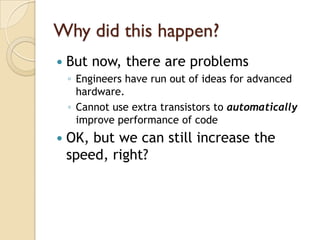

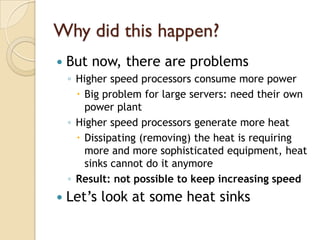

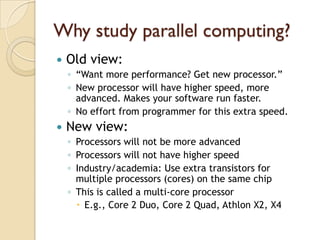

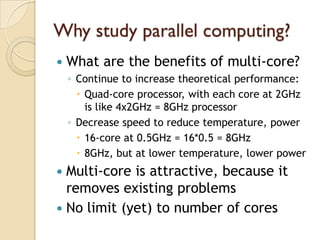

This document describes a course on parallel and distributed computing systems. It explains why parallel computing matters now that processor design has shifted toward multi-core architectures. The course covers the foundations of parallel algorithms and programming and gives students hands-on experience with parallel hardware. Students need basic knowledge of computer architecture and programming to succeed in the course.